1c9dd78fdac9fe25dc5ca0f0ff858ce6.ppt

- Number of slides: 47

Evidence-based Application of Evidence-based Treatments

Peter S. Jensen, M.D., President & CEO, The REACH Institute (REsource for Advancing Children’s Health), New York, NY

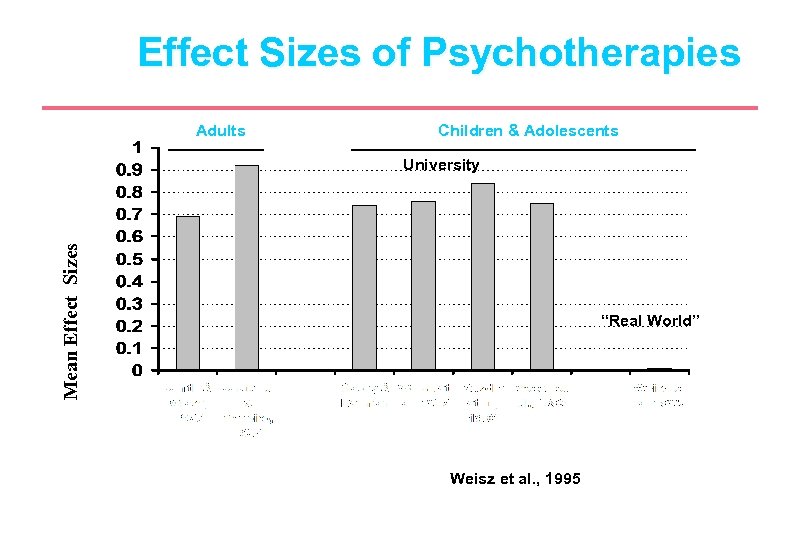

Effect Sizes of Psychotherapies (Weisz et al., 1995)

Chart labels: Adults; Children & Adolescents; University; “Real World”. Axis: Mean Effect Sizes.

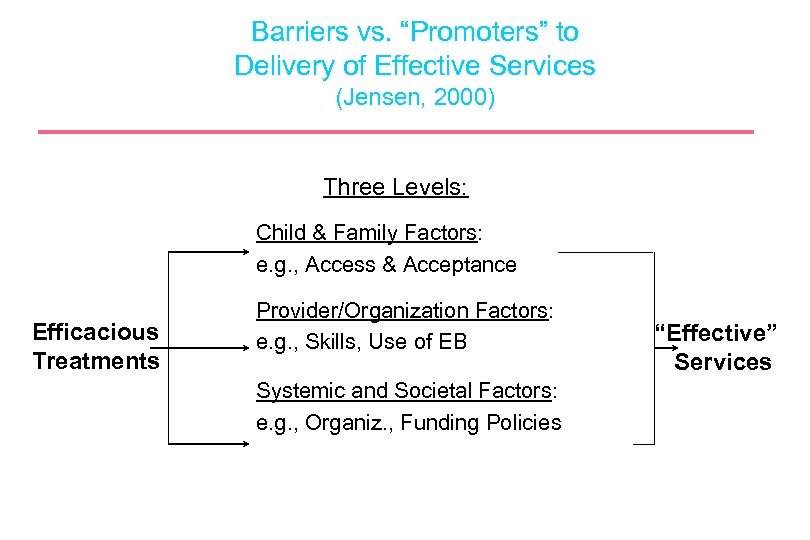

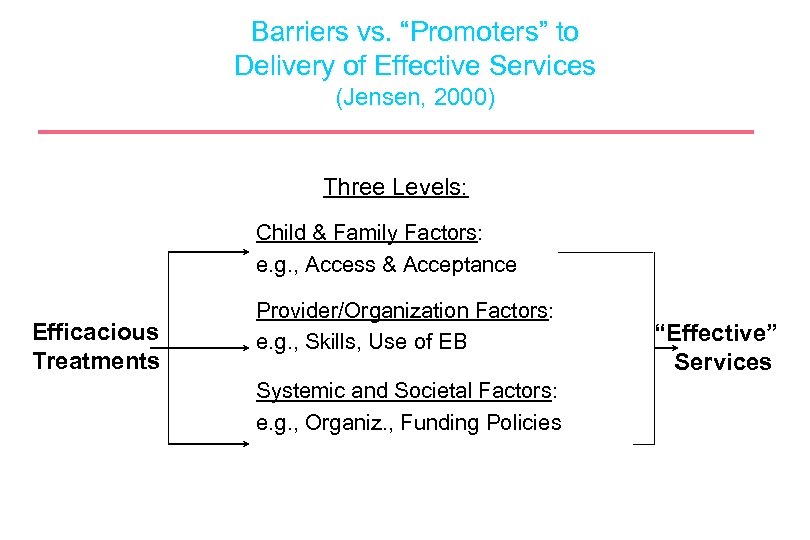

Barriers vs. “Promoters” to Delivery of Effective Services (Jensen, 2000)

Efficacious Treatments

Three Levels:
- Child & Family Factors: e.g., Access & Acceptance
- Provider/Organization Factors: e.g., Skills, Use of EB
- Systemic and Societal Factors: e.g., Organiz., Funding Policies

“Effective” Services

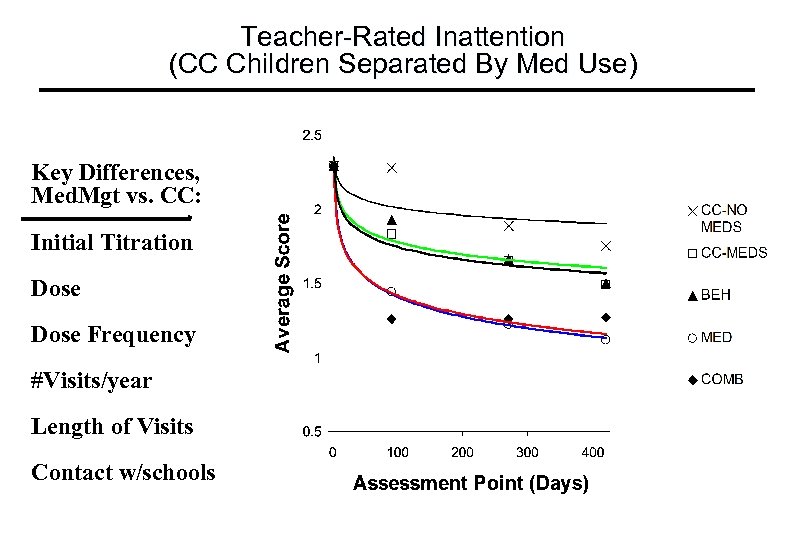

Teacher-Rated Inattention (CC Children Separated By Med Use) Key Differences, Med. Mgt vs. CC: Initial Titration Dose Frequency #Visits/year Length of Visits Contact w/schools

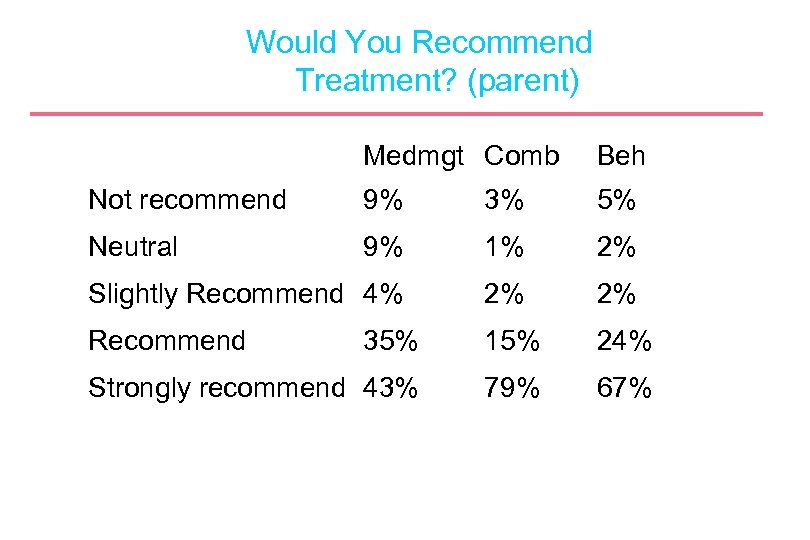

Would You Recommend Treatment? (parent)

| | Medmgt | Comb | Beh |
|---|---|---|---|
| Not recommend | 9% | 3% | 5% |
| Neutral | 9% | 1% | 2% |
| Slightly Recommend | 4% | 2% | 2% |
| Recommend | 35% | 15% | 24% |
| Strongly recommend | 43% | 79% | 67% |
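As a rough illustration of how the parent ratings above can be summarized, the sketch below adds the “Recommend” and “Strongly recommend” percentages for each arm. The dictionary layout and the name `favorable_share` are assumptions made for this example and do not come from the original analysis.

```python
# Parent ratings (percent of parents per treatment arm), transcribed from the slide.
# The data layout and function name are illustrative assumptions.
ratings = {
    "Medmgt": {"Not recommend": 9, "Neutral": 9, "Slightly recommend": 4,
               "Recommend": 35, "Strongly recommend": 43},
    "Comb": {"Not recommend": 3, "Neutral": 1, "Slightly recommend": 2,
             "Recommend": 15, "Strongly recommend": 79},
    "Beh": {"Not recommend": 5, "Neutral": 2, "Slightly recommend": 2,
            "Recommend": 24, "Strongly recommend": 67},
}


def favorable_share(arm_ratings):
    """Percent of parents choosing 'Recommend' or 'Strongly recommend'."""
    return arm_ratings["Recommend"] + arm_ratings["Strongly recommend"]


favorable = {arm: favorable_share(r) for arm, r in ratings.items()}
for arm, pct in favorable.items():
    print(f"{arm}: {pct}% recommend or strongly recommend")
```

On these figures the combined share is 78% for Medmgt, 94% for Comb, and 91% for Beh; this is a descriptive regrouping of the slide's numbers only.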

Key Challenges
- Policy makers and practitioners hesitant to implement change
- Vested interests in the status quo
- Researchers often not interested in promoting findings beyond academic settings
- Manualized interventions perceived as difficult to implement or too costly
- Obstacles and disincentives actively interfere with implementation

Key Challenges
- Interventions implemented but “titrate the dose”, reducing effectiveness
- “Clients too difficult”, “resources inadequate” used to justify bad outcomes
- Research population “not the same” as youth being cared for at their clinical site
- Having data and “being right” neither necessary nor sufficient to influence policy makers

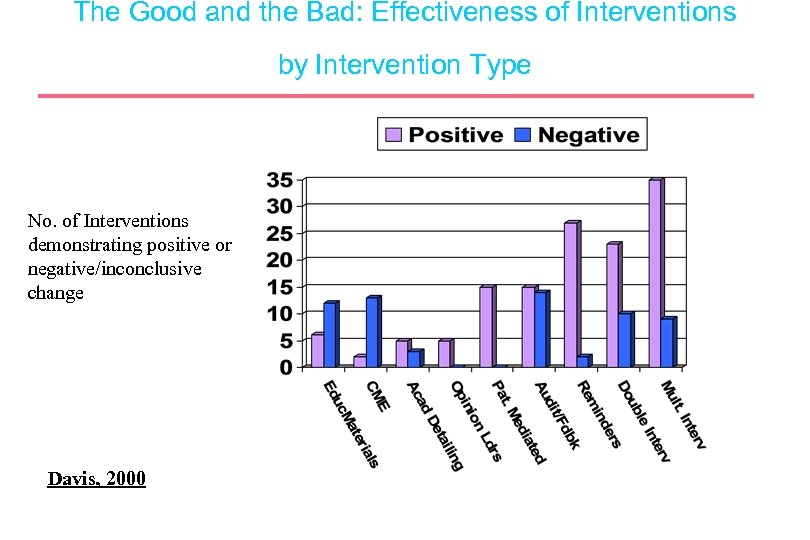

The Good and the Bad: Effectiveness of Interventions by Intervention Type (Davis, 2000)

Chart axis: No. of interventions demonstrating positive or negative/inconclusive change

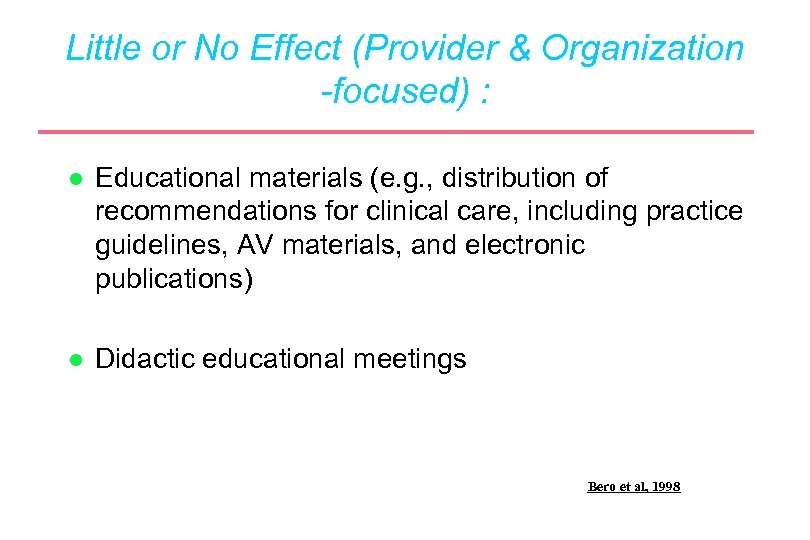

Little or No Effect (Provider & Organization-focused):
- Educational materials (e.g., distribution of recommendations for clinical care, including practice guidelines, AV materials, and electronic publications)
- Didactic educational meetings

Bero et al., 1998

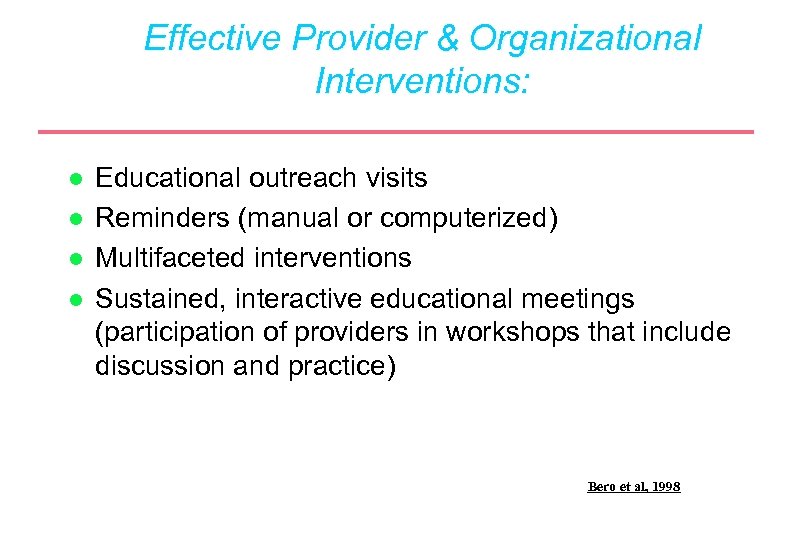

Effective Provider & Organizational Interventions:
- Educational outreach visits
- Reminders (manual or computerized)
- Multifaceted interventions
- Sustained, interactive educational meetings (participation of providers in workshops that include discussion and practice)

Bero et al., 1998

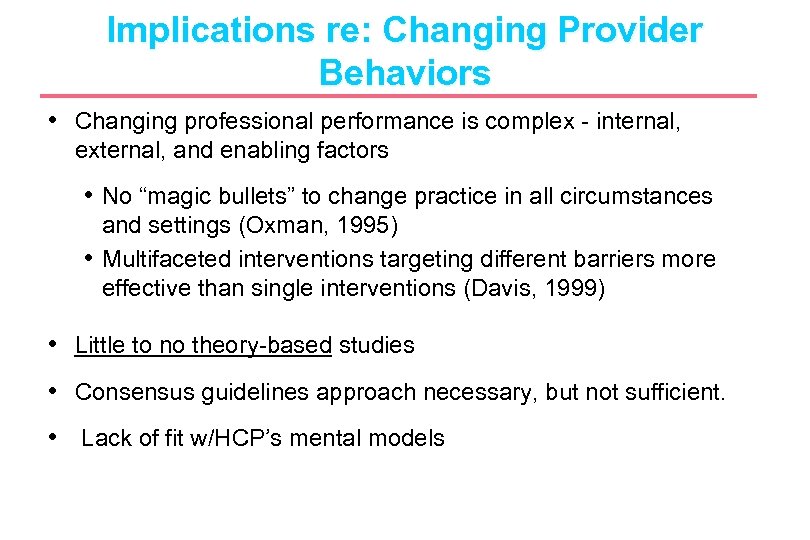

Implications re: Changing Provider Behaviors
- Changing professional performance is complex: internal, external, and enabling factors
- No “magic bullets” to change practice in all circumstances and settings (Oxman, 1995)
- Multifaceted interventions targeting different barriers more effective than single interventions (Davis, 1999)
- Little to no theory-based studies
- Consensus guidelines approach necessary, but not sufficient
- Lack of fit w/HCP’s mental models

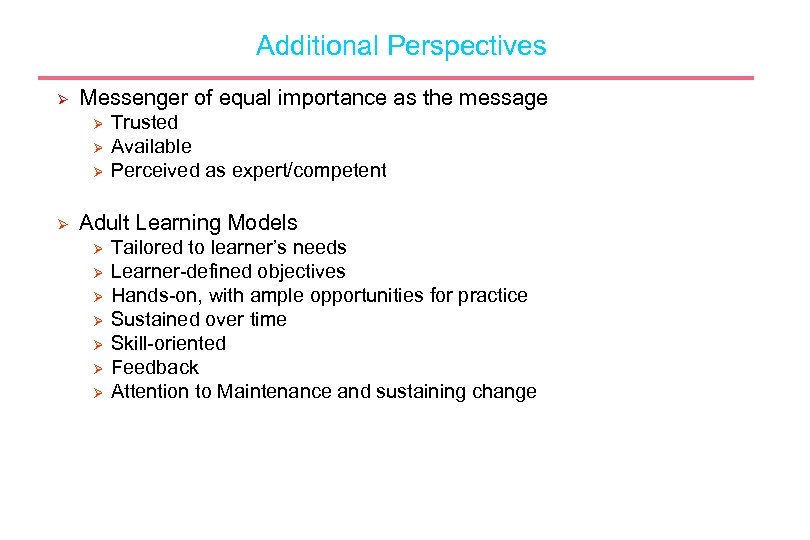

Additional Perspectives
- Messenger of equal importance as the message
  - Trusted
  - Available
  - Perceived as expert/competent
- Adult Learning Models
  - Tailored to learner’s needs
  - Learner-defined objectives
  - Hands-on, with ample opportunities for practice
  - Sustained over time
  - Skill-oriented
  - Feedback
- Attention to Maintenance and sustaining change

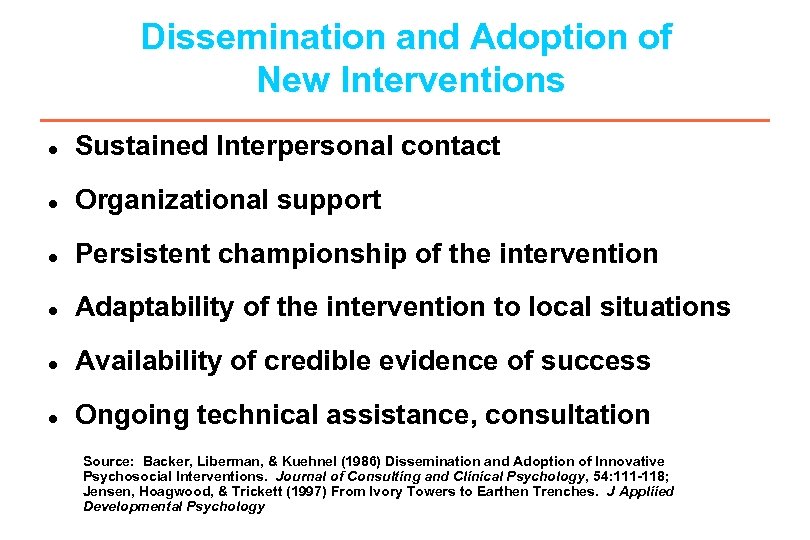

Dissemination and Adoption of New Interventions
- Sustained interpersonal contact
- Organizational support
- Persistent championship of the intervention
- Adaptability of the intervention to local situations
- Availability of credible evidence of success
- Ongoing technical assistance, consultation

Source: Backer, Liberman, & Kuehnel (1986) Dissemination and Adoption of Innovative Psychosocial Interventions. Journal of Consulting and Clinical Psychology, 54: 111-118; Jensen, Hoagwood, & Trickett (1997) From Ivory Towers to Earthen Trenches. J Applied Developmental Psychology

Science-based Plus Necessary “-abilities”
- Palatable
- Affordable
- Transportable
- Trainable
- Adaptable, Flexible
- Evaluable
- Feasible
- Sustainable

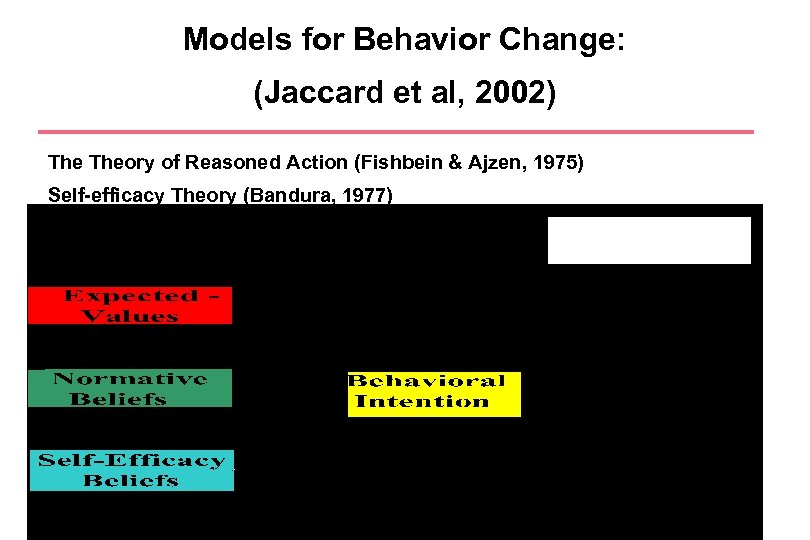

Models for Behavior Change (Jaccard et al., 2002):
- Theory of Reasoned Action (Fishbein & Ajzen, 1975)
- Self-efficacy Theory (Bandura, 1977)
- Theory of Planned Behavior (Ajzen, 1981)
- Diffusion of Innovations (Rogers, 1995)

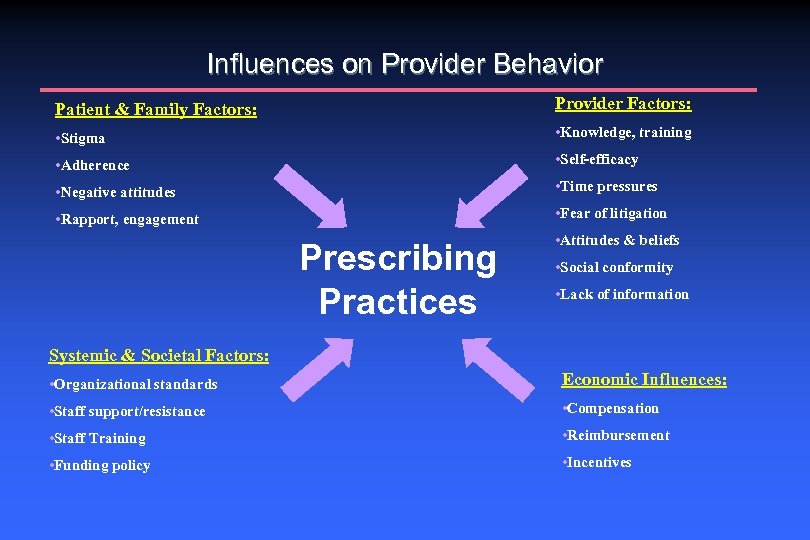

Influences on Provider Behavior Patient & Family Factors: Provider Factors: • Stigma • Knowledge, training • Adherence • Self-efficacy • Negative attitudes • Time pressures • Rapport, engagement • Fear of litigation Prescribing Practices • Attitudes & beliefs • Social conformity • Lack of information Systemic & Societal Factors: • Organizational standards Economic Influences: • Staff support/resistance • Compensation • Staff Training • Reimbursement • Funding policy • Incentives

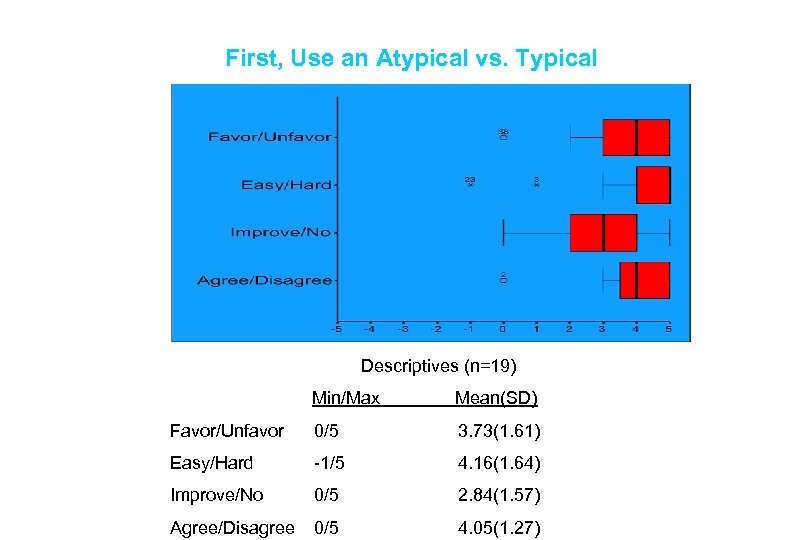

First, Use an Atypical vs. Typical

Descriptives (n=19)

| | Min/Max | Mean (SD) |
|---|---|---|
| Favor/Unfavor | 0/5 | 3.73 (1.61) |
| Easy/Hard | -1/5 | 4.16 (1.64) |
| Improve/No | 0/5 | 2.84 (1.57) |
| Agree/Disagree | 0/5 | 4.05 (1.27) |

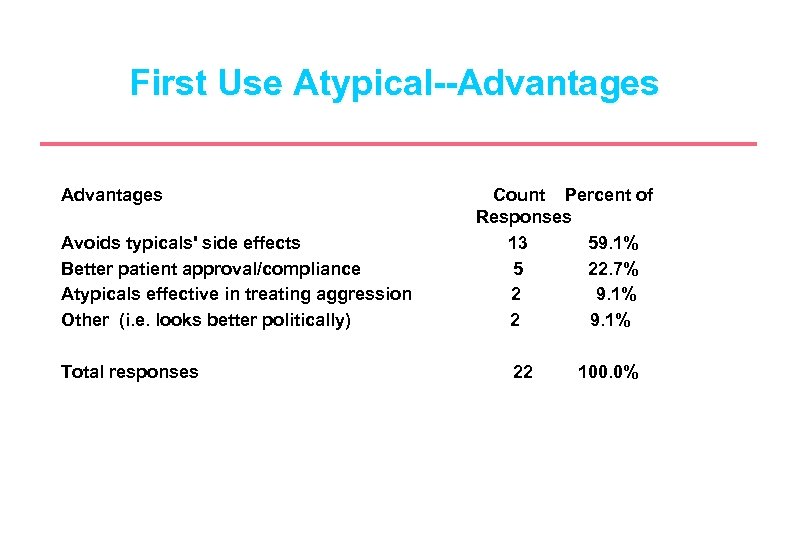

First Use Atypical -- Advantages
- Avoids typicals' side effects
- Better patient approval/compliance
- Atypicals effective in treating aggression
- Other (i.e. looks better politically)

Count (percent of responses): 13 (59.1%); 5 (22.7%); 2 (9.1%). Total responses: 22 (100.0%).

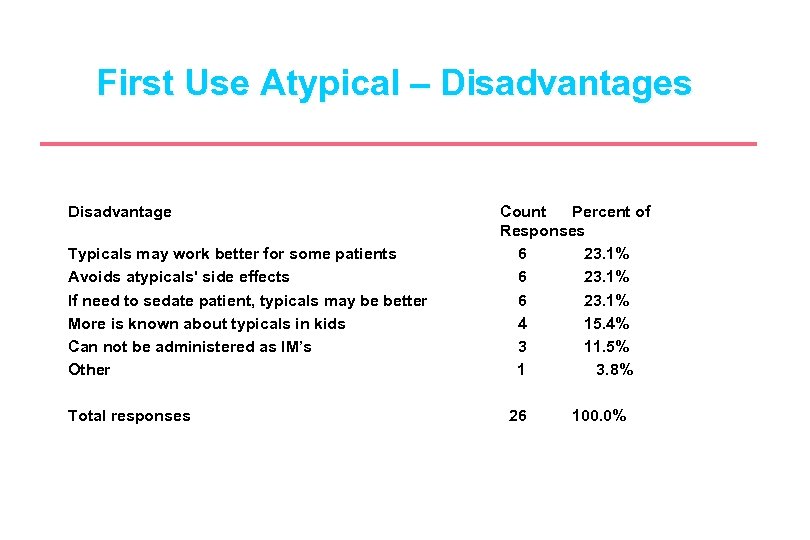

First Use Atypical -- Disadvantages
- Typicals may work better for some patients
- Avoids atypicals' side effects
- If need to sedate patient, typicals may be better
- More is known about typicals in kids
- Cannot be administered as IM’s
- Other

Count (percent of responses): 6 (23.1%); 4 (15.4%); 3 (11.5%); 1 (3.8%). Total responses: 26 (100.0%).

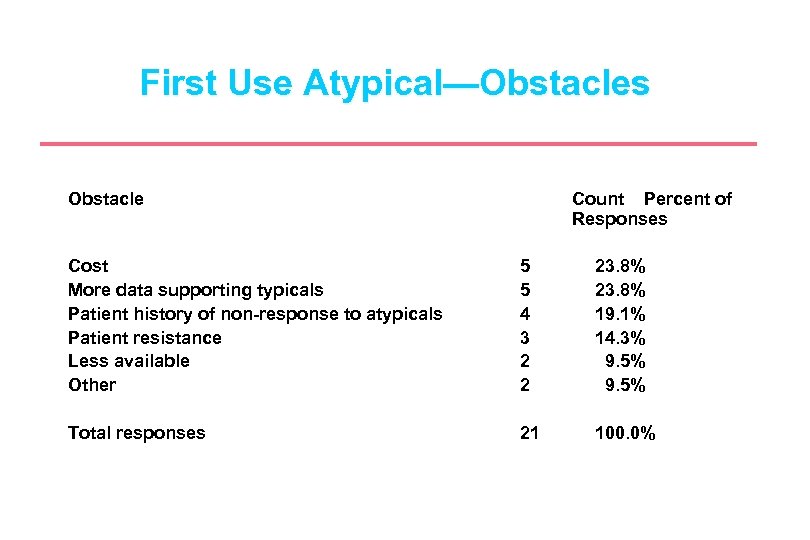

First Use Atypical -- Obstacles

| Obstacle | Count | Percent of Responses |
|---|---|---|
| Cost | 5 | 23.8% |
| More data supporting typicals | 5 | 23.8% |
| Patient history of non-response to atypicals | 4 | 19.1% |
| Patient resistance | 3 | 14.3% |
| Less available | 2 | 9.5% |
| Other | 2 | 9.5% |
| Total responses | 21 | 100.0% |

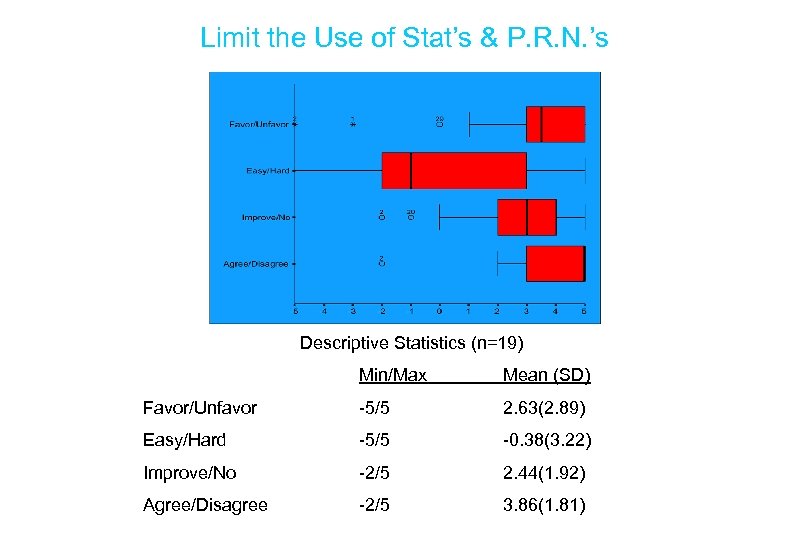

Limit the Use of Stat’s & P.R.N.’s

Descriptive Statistics (n=19)

| | Min/Max | Mean (SD) |
|---|---|---|
| Favor/Unfavor | -5/5 | 2.63 (2.89) |
| Easy/Hard | -5/5 | -0.38 (3.22) |
| Improve/No | -2/5 | 2.44 (1.92) |
| Agree/Disagree | -2/5 | 3.86 (1.81) |

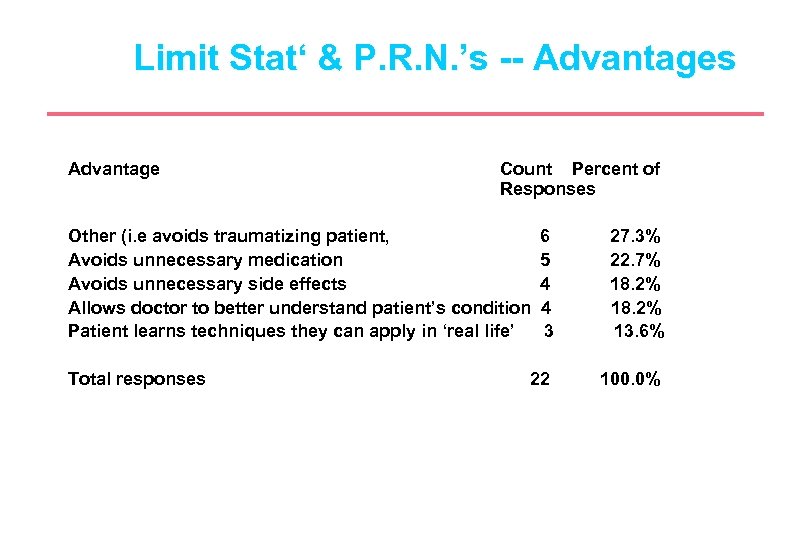

Limit Stat’s & P.R.N.’s -- Advantages

| Advantage | Count | Percent of Responses |
|---|---|---|
| Other (i.e. avoids traumatizing patient, | 6 | 27.3% |
| Avoids unnecessary medication | 5 | 22.7% |
| Avoids unnecessary side effects | 4 | 18.2% |
| Allows doctor to better understand patient’s condition | 4 | 18.2% |
| Patient learns techniques they can apply in ‘real life’ | 3 | 13.6% |
| Total responses | 22 | 100.0% |

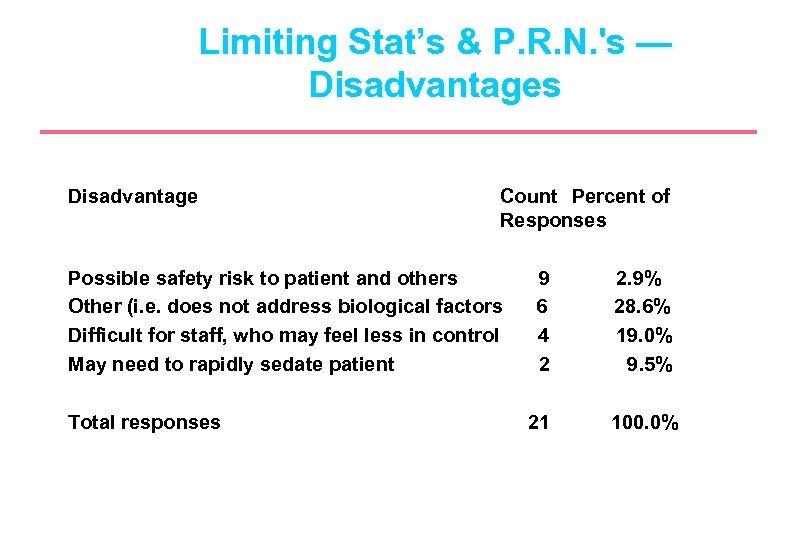

Limiting Stat’s & P.R.N.’s -- Disadvantages

| Disadvantage | Count | Percent of Responses |
|---|---|---|
| Possible safety risk to patient and others | 9 | 42.9% |
| Other (i.e. does not address biological factors | 6 | 28.6% |
| Difficult for staff, who may feel less in control | 4 | 19.0% |
| May need to rapidly sedate patient | 2 | 9.5% |
| Total responses | 21 | 100.0% |

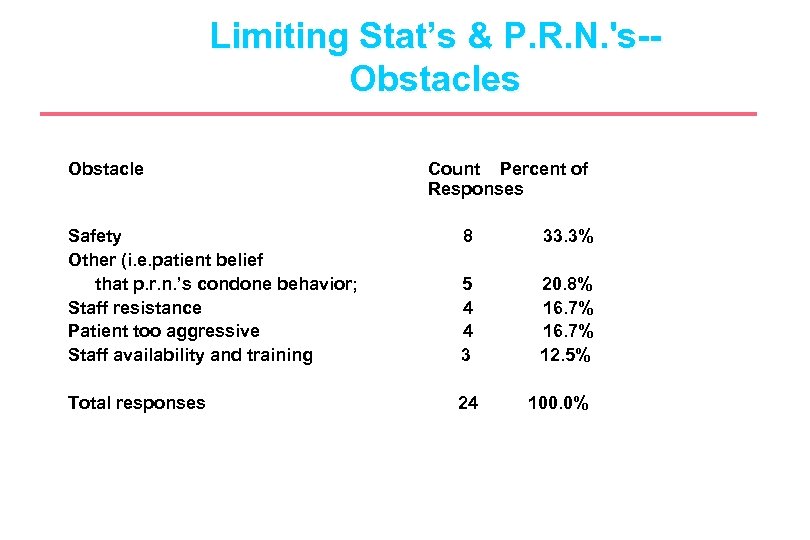

Limiting Stat’s & P.R.N.’s -- Obstacles

| Obstacle | Count | Percent of Responses |
|---|---|---|
| Safety | 8 | 33.3% |
| Other (i.e. patient belief that p.r.n.’s condone behavior; | 5 | 20.8% |
| Staff resistance | 4 | 16.7% |
| Patient too aggressive | 4 | 16.7% |
| Staff availability and training | 3 | 12.5% |
| Total responses | 24 | 100.0% |

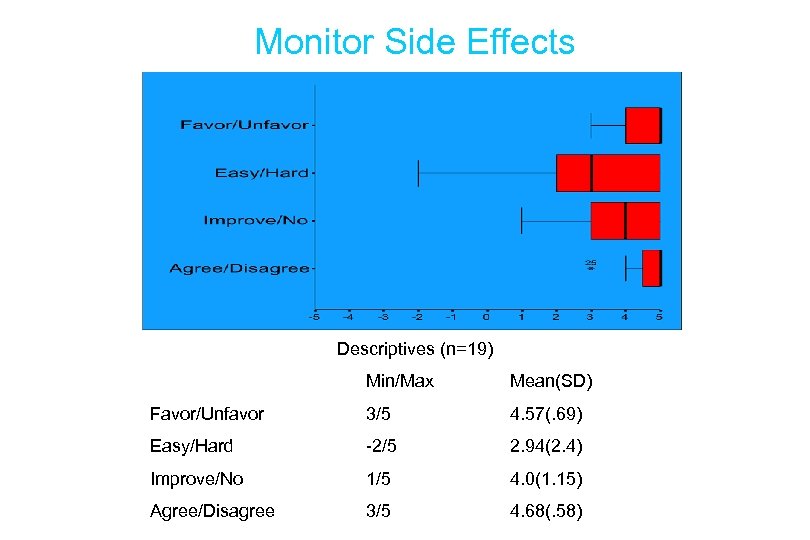

Monitor Side Effects

Descriptives (n=19)

| | Min/Max | Mean (SD) |
|---|---|---|
| Favor/Unfavor | 3/5 | 4.57 (.69) |
| Easy/Hard | -2/5 | 2.94 (2.4) |
| Improve/No | 1/5 | 4.0 (1.15) |
| Agree/Disagree | 3/5 | 4.68 (.58) |

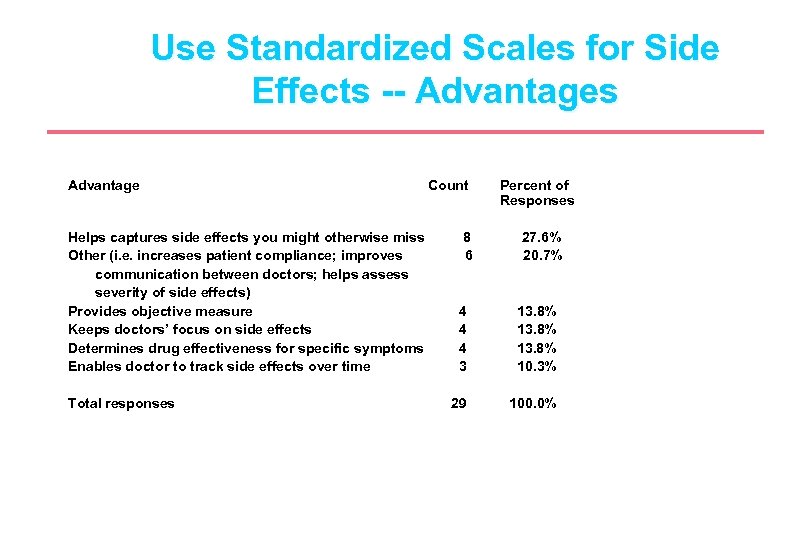

Use Standardized Scales for Side Effects -- Advantages

| Advantage | Count | Percent of Responses |
|---|---|---|
| Helps capture side effects you might otherwise miss | 8 | 27.6% |
| Other (i.e. increases patient compliance; improves communication between doctors; helps assess severity of side effects) | 6 | 20.7% |
| Provides objective measure | 4 | 13.8% |
| Keeps doctors’ focus on side effects | 4 | 13.8% |
| Determines drug effectiveness for specific symptoms | 4 | 13.8% |
| Enables doctor to track side effects over time | 3 | 10.3% |
| Total responses | 29 | 100.0% |

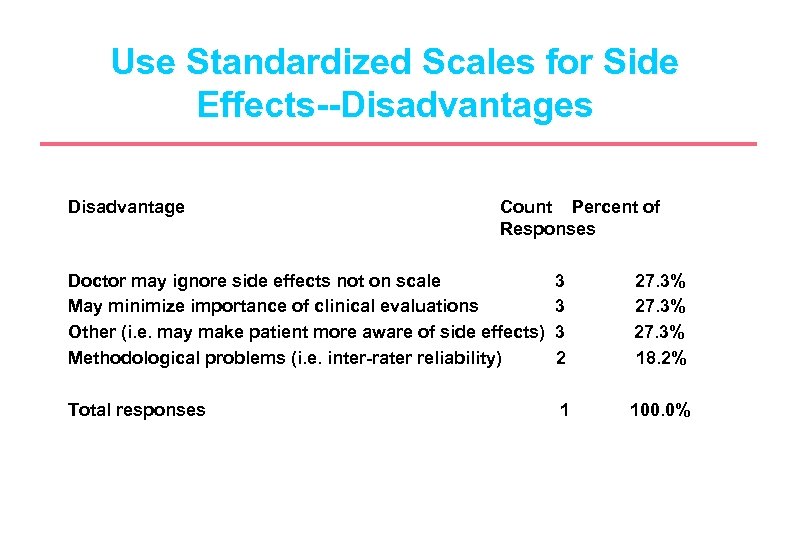

Use Standardized Scales for Side Effects -- Disadvantages

| Disadvantage | Count | Percent of Responses |
|---|---|---|
| Doctor may ignore side effects not on scale | 3 | 27.3% |
| May minimize importance of clinical evaluations | 3 | 27.3% |
| Other (i.e. may make patient more aware of side effects) | 3 | 27.3% |
| Methodological problems (i.e. inter-rater reliability) | 2 | 18.2% |
| Total responses | 11 | 100.0% |

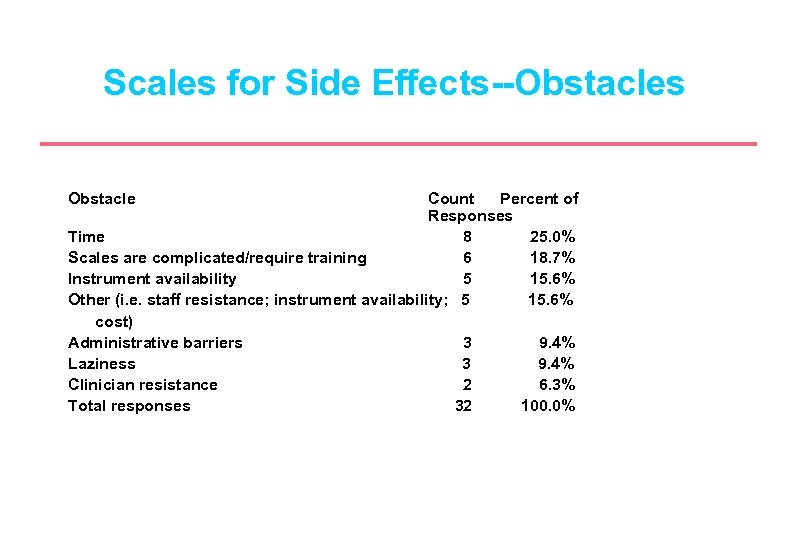

Scales for Side Effects -- Obstacles

| Obstacle | Count | Percent of Responses |
|---|---|---|
| Time | 8 | 25.0% |
| Scales are complicated/require training | 6 | 18.7% |
| Instrument availability | 5 | 15.6% |
| Other (i.e. staff resistance; instrument availability; cost) | 5 | 15.6% |
| Administrative barriers | 3 | 9.4% |
| Laziness | 3 | 9.4% |
| Clinician resistance | 2 | 6.3% |
| Total responses | 32 | 100.0% |
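The “Percent of Responses” column on these slides appears to be each count divided by the total number of responses. A minimal sketch of that arithmetic, using the obstacle counts from the slide above, is shown below; the variable names are illustrative assumptions, and the calculation rule is inferred from the numbers rather than stated in the source.

```python
# Obstacle counts transcribed from the slide; the names are illustrative.
obstacle_counts = {
    "Time": 8,
    "Scales are complicated/require training": 6,
    "Instrument availability": 5,
    "Other": 5,
    "Administrative barriers": 3,
    "Laziness": 3,
    "Clinician resistance": 2,
}

# Total number of responses (one respondent may give several responses).
total = sum(obstacle_counts.values())

# Assumed rule: percent of responses = 100 * count / total responses.
percents = {name: 100 * n / total for name, n in obstacle_counts.items()}

for name, pct in percents.items():
    print(f"{name}: {obstacle_counts[name]} ({pct:.1f}%)")
```

Unrounded values can differ slightly from the slide in the last digit: 6 of 32 responses is 18.75%, which the slide reports as 18.7%.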

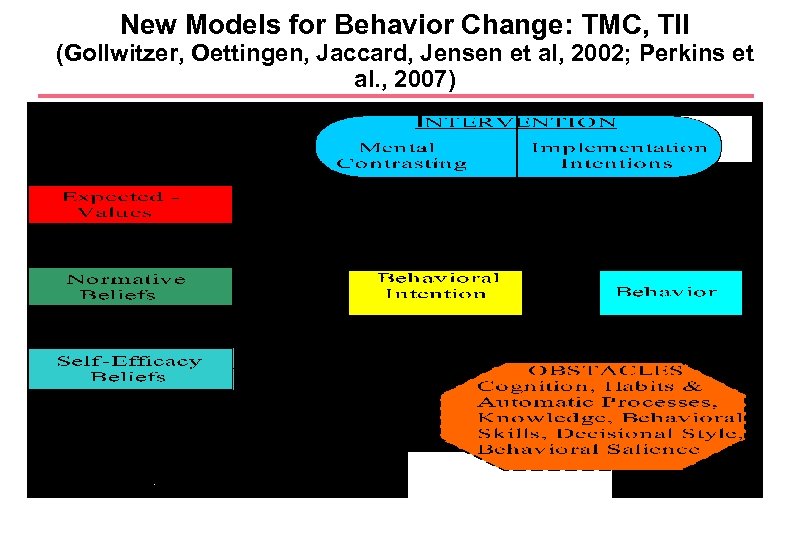

New Models for Behavior Change: TMC, TII (Gollwitzer, Oettingen, Jaccard, Jensen et al., 2002; Perkins et al., 2007)

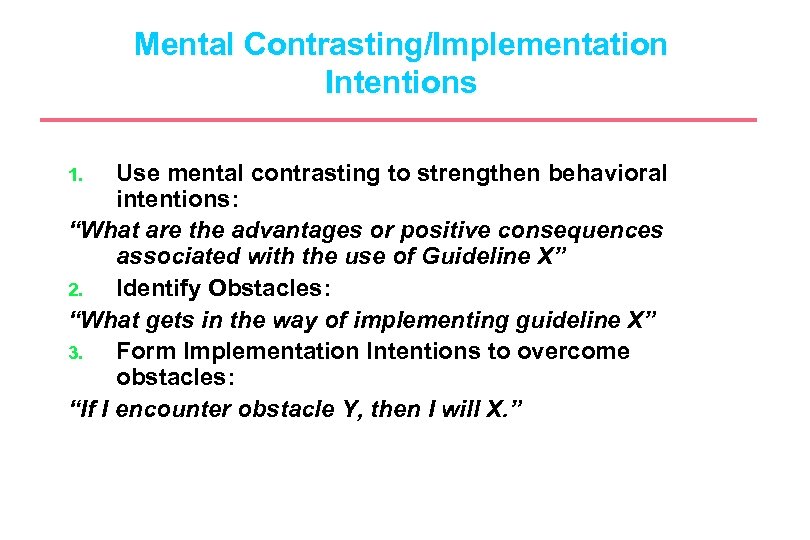

Mental Contrasting/Implementation Intentions

1. Use mental contrasting to strengthen behavioral intentions: “What are the advantages or positive consequences associated with the use of Guideline X?”
2. Identify Obstacles: “What gets in the way of implementing guideline X?”
3. Form Implementation Intentions to overcome obstacles: “If I encounter obstacle Y, then I will X.”
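The three steps above can be sketched as a small record per guideline: advantages (step 1), obstacles (step 2), and one if-then plan per obstacle (step 3). The class `GuidelinePlan`, its methods, and the example response text are hypothetical and are not part of the cited intervention materials.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class GuidelinePlan:
    """Hypothetical record of one clinician's plan for one guideline."""
    guideline: str
    advantages: List[str] = field(default_factory=list)          # step 1
    if_then_plans: Dict[str, str] = field(default_factory=dict)  # step 3: obstacle -> response

    def add_plan(self, obstacle: str, response: str) -> None:
        """Attach an if-then response to an identified obstacle."""
        self.if_then_plans[obstacle] = response

    def unplanned(self, obstacles: List[str]) -> List[str]:
        """Obstacles from step 2 that still lack an if-then plan."""
        return [o for o in obstacles if o not in self.if_then_plans]

    def statements(self) -> List[str]:
        """Render each plan in the 'If ..., then I will ...' form."""
        return [f"If I encounter {o}, then I will {r}."
                for o, r in self.if_then_plans.items()]


plan = GuidelinePlan(
    guideline="Use standardized scales for side effects",
    advantages=["Provides objective measure"],
)
obstacles = ["time pressure", "staff resistance"]  # step 2
# The response below is invented for illustration only.
plan.add_plan("time pressure", "complete the scale during the visit")

print(plan.statements())
print(plan.unplanned(obstacles))
```

Keeping obstacles and responses paired makes it easy to see which obstacles still have no implementation intention attached.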

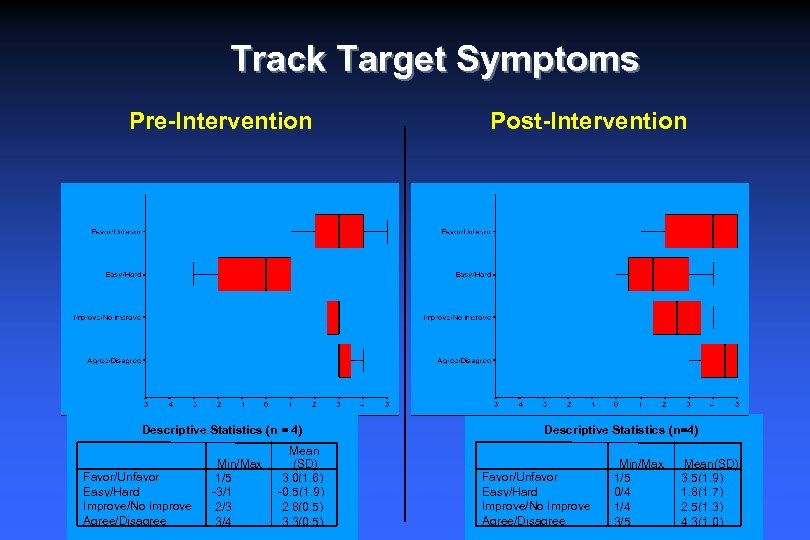

Track Target Symptoms

Pre-Intervention Descriptive Statistics (n = 4)

| | Favor/Unfavor | Easy/Hard | Improve/No Improve | Agree/Disagree |
|---|---|---|---|---|
| Min/Max | 1/5 | -3/1 | 2/3 | 3/4 |
| Mean (SD) | 3.0 (1.6) | -0.5 (1.9) | 2.8 (0.5) | 3.3 (0.5) |

Post-Intervention Descriptive Statistics (n = 4)

| | Favor/Unfavor | Easy/Hard | Improve/No Improve | Agree/Disagree |
|---|---|---|---|---|
| Min/Max | 1/5 | 0/4 | 1/4 | 3/5 |
| Mean (SD) | 3.5 (1.9) | 1.8 (1.7) | 2.5 (1.3) | 4.3 (1.0) |

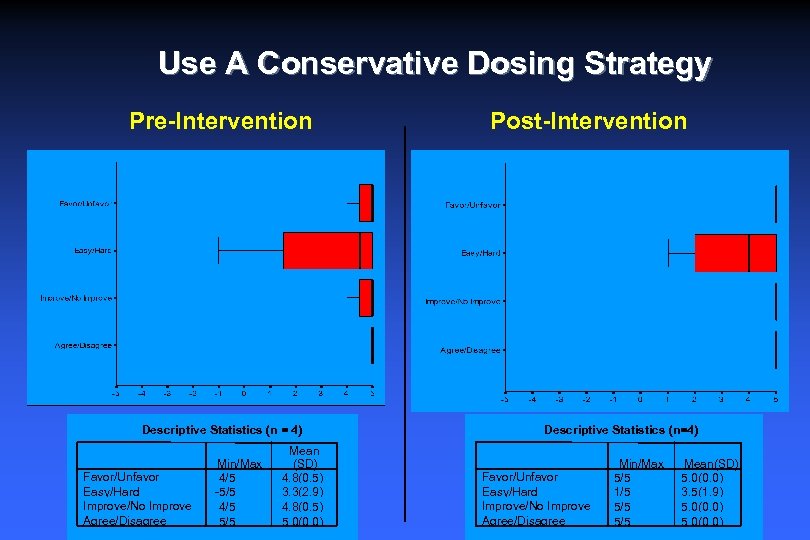

Use A Conservative Dosing Strategy

Pre-Intervention Descriptive Statistics (n = 4)

| | Favor/Unfavor | Easy/Hard | Improve/No Improve | Agree/Disagree |
|---|---|---|---|---|
| Min/Max | 4/5 | -5/5 | 4/5 | 5/5 |
| Mean (SD) | 4.8 (0.5) | 3.3 (2.9) | 4.8 (0.5) | 5.0 (0.0) |

Post-Intervention Descriptive Statistics (n = 4)

Columns: Favor/Unfavor, Easy/Hard, Improve/No Improve, Agree/Disagree

Min/Max: 5/5, 1/5, 5/5

Mean (SD): 5.0 (0.0), 3.5 (1.9), 5.0 (0.0)

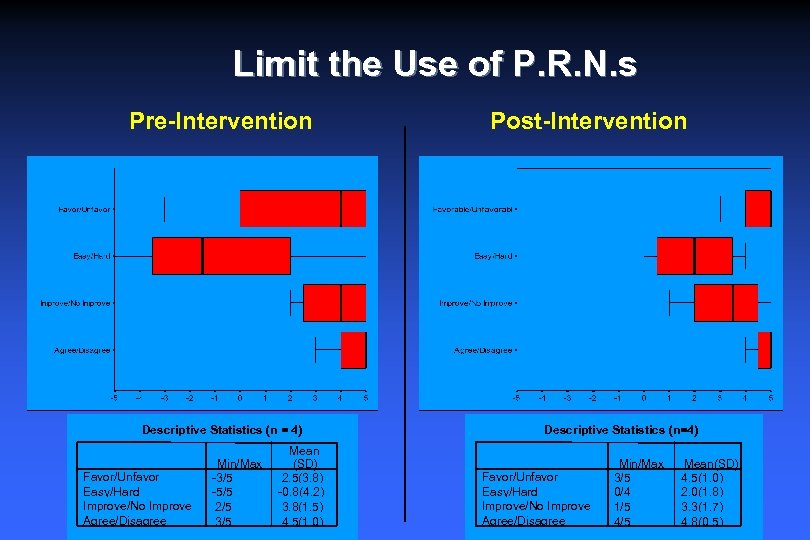

Limit the Use of P.R.N.s

Pre-Intervention Descriptive Statistics (n = 4)

| | Favor/Unfavor | Easy/Hard | Improve/No Improve | Agree/Disagree |
|---|---|---|---|---|
| Min/Max | -3/5 | -5/5 | 2/5 | 3/5 |
| Mean (SD) | 2.5 (3.8) | -0.8 (4.2) | 3.8 (1.5) | 4.5 (1.0) |

Post-Intervention Descriptive Statistics (n = 4)

| | Favor/Unfavor | Easy/Hard | Improve/No Improve | Agree/Disagree |
|---|---|---|---|---|
| Min/Max | 3/5 | 0/4 | 1/5 | 4/5 |
| Mean (SD) | 4.5 (1.0) | 2.0 (1.8) | 3.3 (1.7) | 4.8 (0.5) |

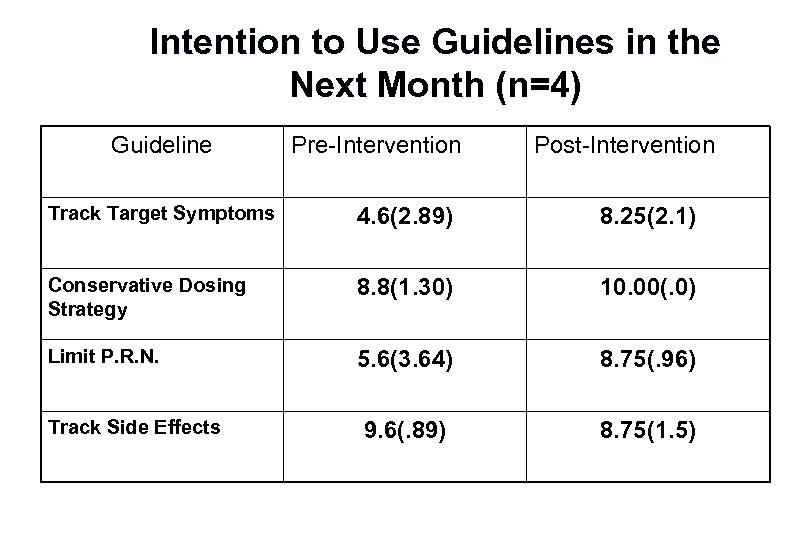

Intention to Use Guidelines in the Next Month (n=4)

| Guideline | Pre-Intervention | Post-Intervention |
|---|---|---|
| Track Target Symptoms | 4.6 (2.89) | 8.25 (2.1) |
| Conservative Dosing Strategy | 8.8 (1.30) | 10.00 (.0) |
| Limit P.R.N. | 5.6 (3.64) | 8.75 (.96) |
| Track Side Effects | 9.6 (.89) | 8.75 (1.5) |
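To make the pre/post comparison concrete, the sketch below computes the change in mean intention for each guideline from the figures on the slide. It is a descriptive illustration only: with n=4 it supports no statistical inference, and the variable names are assumptions made for this example.

```python
# Mean intention scores (pre, post), transcribed from the slide.
# Standard deviations are omitted; the names are illustrative.
intention = {
    "Track Target Symptoms": (4.6, 8.25),
    "Conservative Dosing Strategy": (8.8, 10.0),
    "Limit P.R.N.": (5.6, 8.75),
    "Track Side Effects": (9.6, 8.75),
}

# Change in mean = post - pre, rounded to two decimals for display.
changes = {g: round(post - pre, 2) for g, (pre, post) in intention.items()}

for guideline, delta in changes.items():
    direction = "up" if delta > 0 else "down"
    print(f"{guideline}: {direction} {abs(delta):.2f}")
```

Three of the four means rise after the intervention, while Track Side Effects, which started highest, falls by 0.85.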

Barriers vs. “Promoters” to Delivery of Effective Services (Jensen, 2000)

Efficacious Treatments

Three Levels:
- Child & Family Factors: e.g., Access & Acceptance
- Provider/Organization Factors: e.g., Skills, Use of EB
- Systemic and Societal Factors: e.g., Organiz., Funding Policies

“Effective” Services

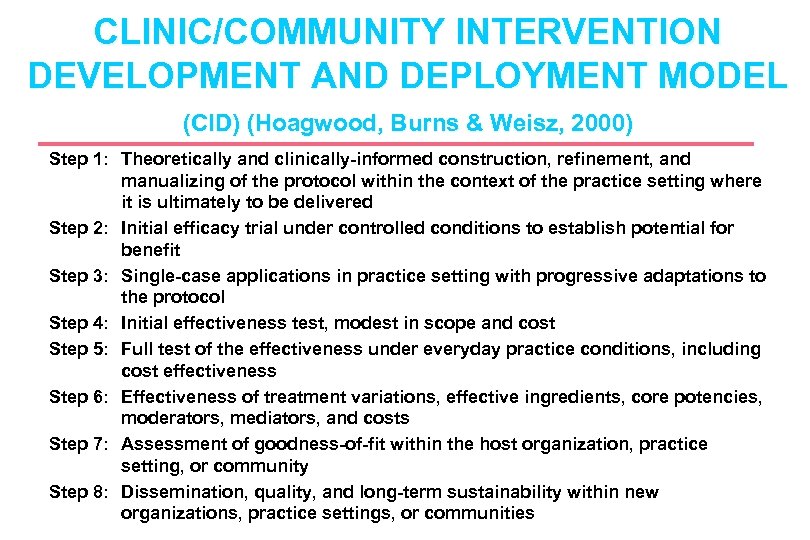

CLINIC/COMMUNITY INTERVENTION DEVELOPMENT AND DEPLOYMENT MODEL (CID) (Hoagwood, Burns & Weisz, 2000)

- Step 1: Theoretically and clinically-informed construction, refinement, and manualizing of the protocol within the context of the practice setting where it is ultimately to be delivered
- Step 2: Initial efficacy trial under controlled conditions to establish potential for benefit
- Step 3: Single-case applications in practice setting with progressive adaptations to the protocol
- Step 4: Initial effectiveness test, modest in scope and cost
- Step 5: Full test of the effectiveness under everyday practice conditions, including cost effectiveness
- Step 6: Effectiveness of treatment variations, effective ingredients, core potencies, moderators, mediators, and costs
- Step 7: Assessment of goodness-of-fit within the host organization, practice setting, or community
- Step 8: Dissemination, quality, and long-term sustainability within new organizations, practice settings, or communities

Partnerships & Collaborations in Community-Based Research
- Why Partnerships?
  - partnerships -- not with other scientists per se, but with experts of a different type -- experts from families, neighborhoods, schools, in communities.
  - Only from these experts can we learn what is palatable, feasible, durable, affordable, and sustainable for children and adolescents at risk or in need of mental health services
  - “Partnership” - changes in typical university investigator - research subject relationship
- Practice-based Research Networks
- Bi-directional learning

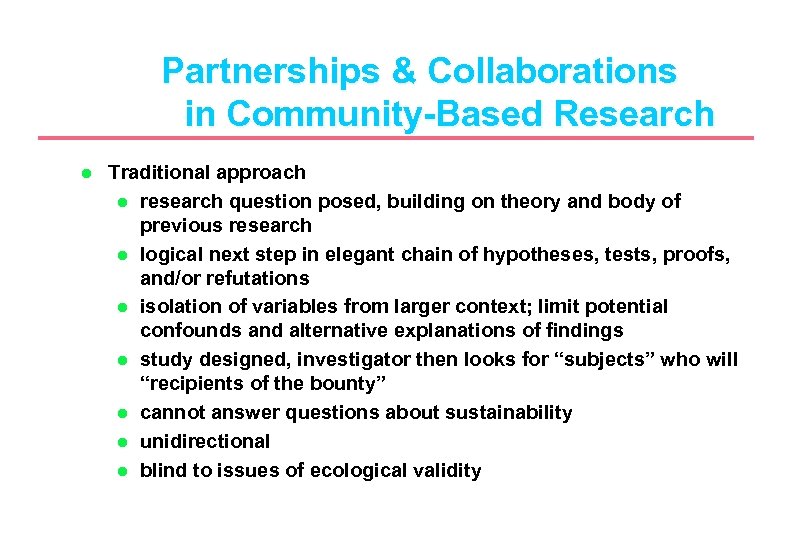

Partnerships & Collaborations in Community-Based Research
- Traditional approach
  - research question posed, building on theory and body of previous research
  - logical next step in elegant chain of hypotheses, tests, proofs, and/or refutations
  - isolation of variables from larger context; limit potential confounds and alternative explanations of findings
  - study designed, investigator then looks for “subjects” who will be “recipients of the bounty”
  - cannot answer questions about sustainability
  - unidirectional
  - blind to issues of ecological validity

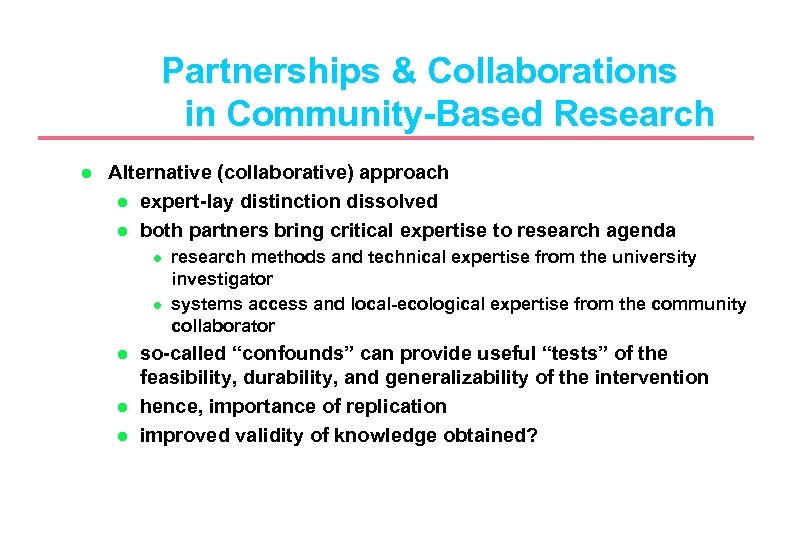

Partnerships & Collaborations in Community-Based Research
- Alternative (collaborative) approach
  - expert-lay distinction dissolved
  - both partners bring critical expertise to research agenda
    - research methods and technical expertise from the university investigator
    - systems access and local-ecological expertise from the community collaborator
  - so-called “confounds” can provide useful “tests” of the feasibility, durability, and generalizability of the intervention
  - hence, importance of replication
  - improved validity of knowledge obtained?

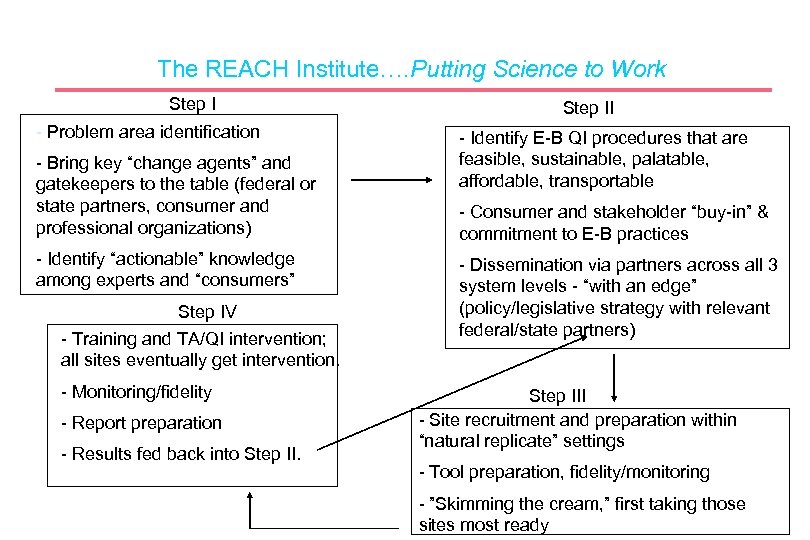

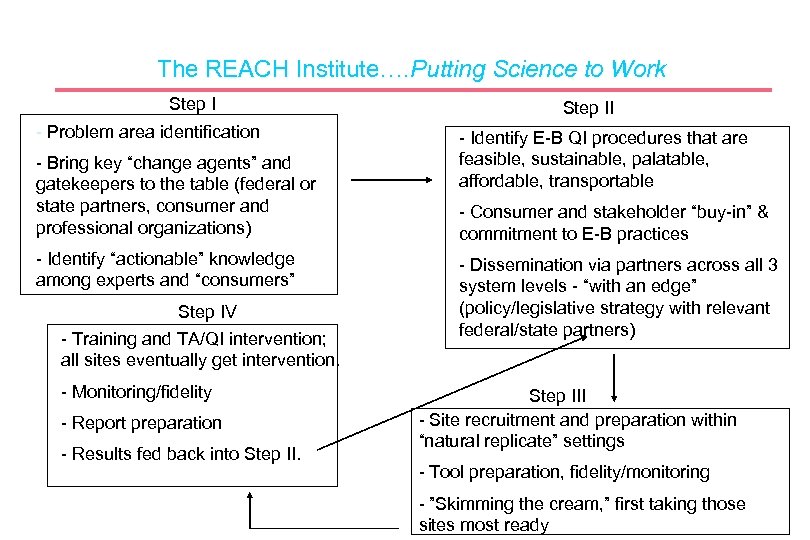

The REACH Institute…. Putting Science to Work Step I - Problem area identification - Bring key “change agents” and gatekeepers to the table (federal or state partners, consumer and professional organizations) - Identify “actionable” knowledge among experts and “consumers” Step IV - Training and TA/QI intervention; all sites eventually get intervention. - Monitoring/fidelity - Report preparation - Results fed back into Step II - Identify E-B QI procedures that are feasible, sustainable, palatable, affordable, transportable - Consumer and stakeholder “buy-in” & commitment to E-B practices - Dissemination via partners across all 3 system levels - “with an edge” (policy/legislative strategy with relevant federal/state partners) Step III - Site recruitment and preparation within “natural replicate” settings - Tool preparation, fidelity/monitoring - ”Skimming the cream, ” first taking those sites most ready

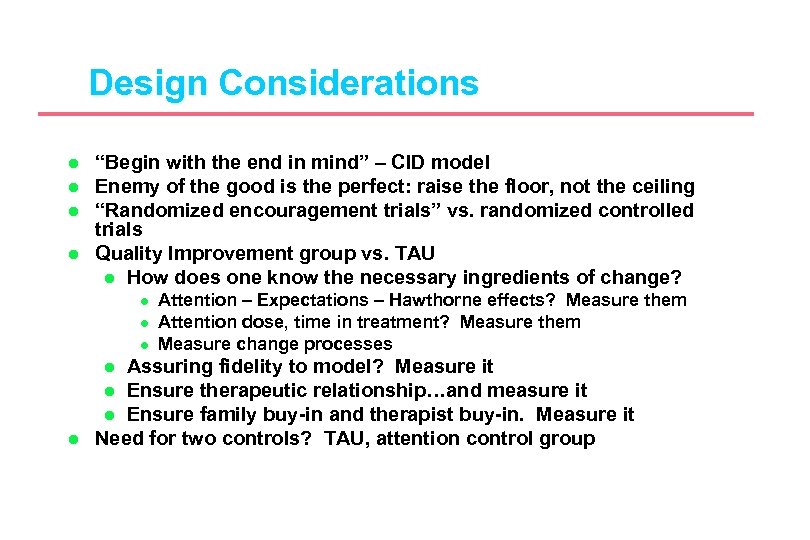

Design Considerations
- “Begin with the end in mind” – CID model
- Enemy of the good is the perfect: raise the floor, not the ceiling
- “Randomized encouragement trials” vs. randomized controlled trials
- Quality Improvement group vs. TAU
- How does one know the necessary ingredients of change?
  - Assuring fidelity to model? Measure it
  - Ensure therapeutic relationship…and measure it
  - Ensure family buy-in and therapist buy-in. Measure it
- Need for two controls? TAU, attention control group
  - Attention – Expectations – Hawthorne effects? Measure them
  - Attention dose, time in treatment? Measure them
- Measure change processes

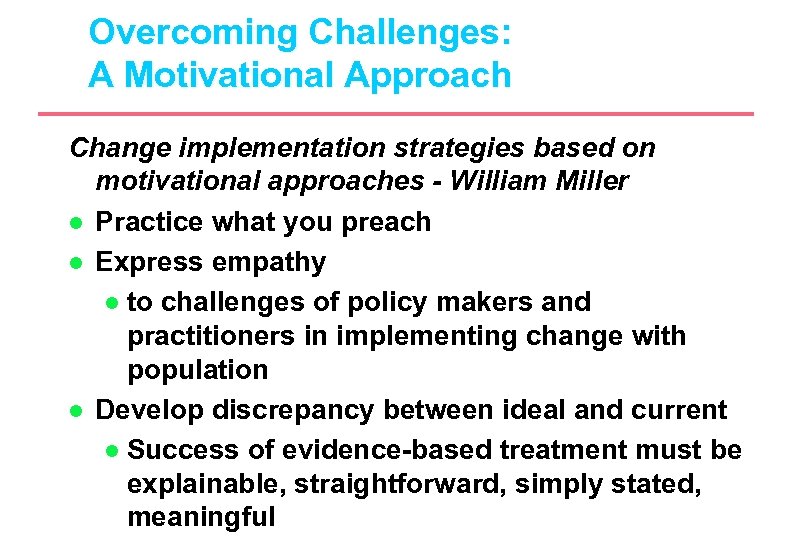

Overcoming Challenges: A Motivational Approach

Change implementation strategies based on motivational approaches - William Miller
- Practice what you preach
- Express empathy
  - to challenges of policy makers and practitioners in implementing change with population
- Develop discrepancy between ideal and current
  - Success of evidence-based treatment must be explainable, straightforward, simply stated, meaningful

Overcoming Challenges: A Motivational Approach
- Avoid argumentation
  - Clinician scientists credible to policy makers and community-based practitioners
  - Avoid overstating the case and “poisoning the well”
- Roll with resistance
  - Develop strategies for engagement, prepare for possible resistance

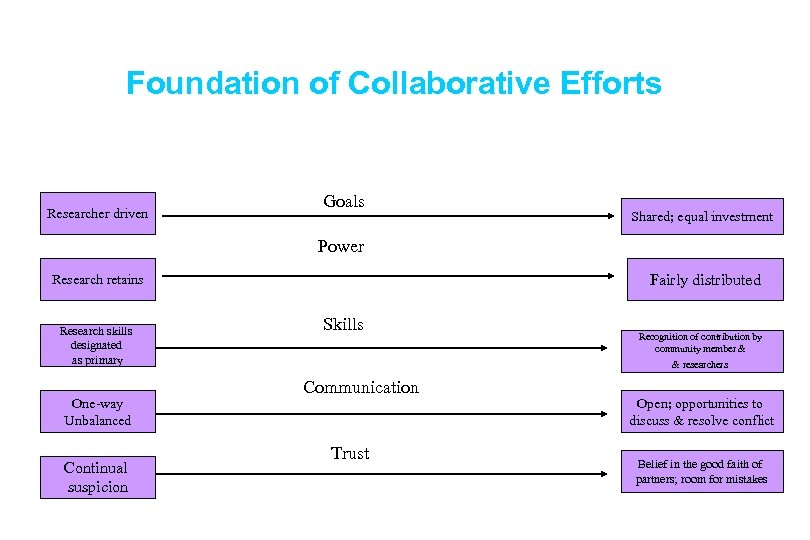

Foundation of Collaborative Efforts Researcher driven Goals Shared; equal investment Power Fairly distributed Research retains Research skills designated as primary One-way Unbalanced Continual suspicion Skills Recognition of contribution by community member & & researchers Communication Trust Open; opportunities to discuss & resolve conflict Belief in the good faith of partners; room for mistakes

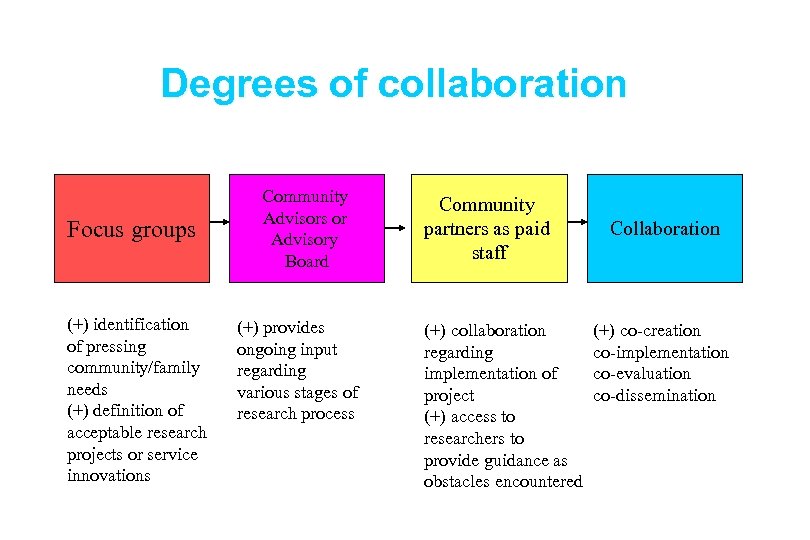

Degrees of collaboration
- Focus groups
  - (+) identification of pressing community/family needs
  - (+) definition of acceptable research projects or service innovations
- Community Advisors or Advisory Board
  - (+) provides ongoing input regarding various stages of research process
- Community partners as paid staff
  - (+) collaboration regarding implementation of project
  - (+) access to researchers to provide guidance as obstacles encountered
- Collaboration
  - (+) co-creation, co-implementation, co-evaluation, co-dissemination

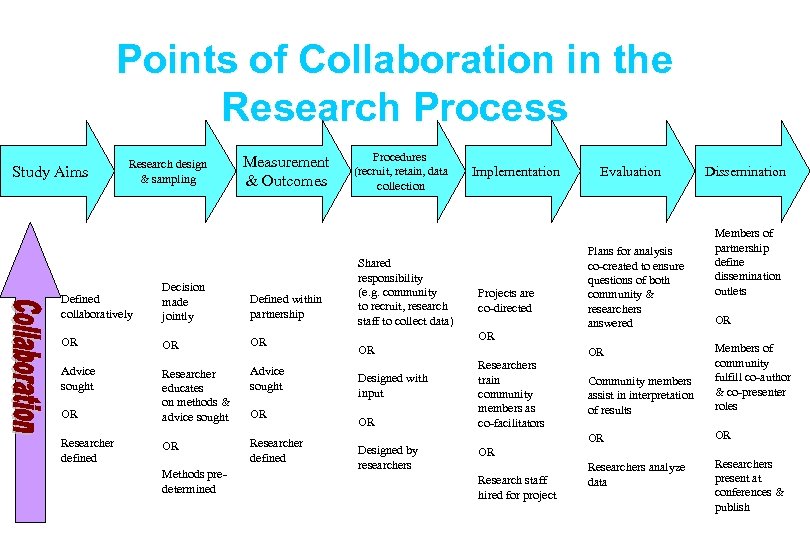

Points of Collaboration in the Research Process

- Study Aims: Defined collaboratively OR Advice sought OR Researcher defined
- Research design & sampling: Decision made jointly OR Researcher educates on methods & advice sought OR Methods predetermined
- Measurement & Outcomes: Defined within partnership OR Advice sought OR
- Procedures (recruit, retain, data collection): Shared responsibility (e.g. community to recruit, research staff to collect data) OR Designed with input OR Designed by researchers
- Implementation: Projects are co-directed OR Researchers train community members as co-facilitators OR Research staff hired for project
- Evaluation: Plans for analysis co-created to ensure questions of both community & researchers answered OR Community members assist in interpretation of results OR Researchers analyze data
- Dissemination: Members of partnership define dissemination outlets OR Members of community fulfill co-author & co-presenter roles OR Researchers present at conferences & publish

The REACH Institute…. Putting Science to Work Step I - Problem area identification - Bring key “change agents” and gatekeepers to the table (federal or state partners, consumer and professional organizations) - Identify “actionable” knowledge among experts and “consumers” Step IV - Training and TA/QI intervention; all sites eventually get intervention. - Monitoring/fidelity - Report preparation - Results fed back into Step II - Identify E-B QI procedures that are feasible, sustainable, palatable, affordable, transportable - Consumer and stakeholder “buy-in” & commitment to E-B practices - Dissemination via partners across all 3 system levels - “with an edge” (policy/legislative strategy with relevant federal/state partners) Step III - Site recruitment and preparation within “natural replicate” settings - Tool preparation, fidelity/monitoring - ”Skimming the cream, ” first taking those sites most ready
