9b53c7b1acbe7ea34c6de79331c51d58.ppt

- Number of slides: 111

ESnet Status Update
ESCC, July 2008
William E. Johnston, ESnet Department Head and Senior Scientist
Joe Burrescia, General Manager
Mike Collins, Chin Guok, and Eli Dart, Engineering
Jim Gagliardi, Operations and Deployment
Stan Kluz, Infrastructure and ECS
Mike Helm, Federated Trust
Dan Peterson, Security Officer
Gizella Kapus, Business Manager
and the rest of the ESnet Team
Energy Sciences Network, Lawrence Berkeley National Laboratory
wej@es.net, www.es.net
This talk is available at www.es.net/ESnet4
Networking for the Future of Science

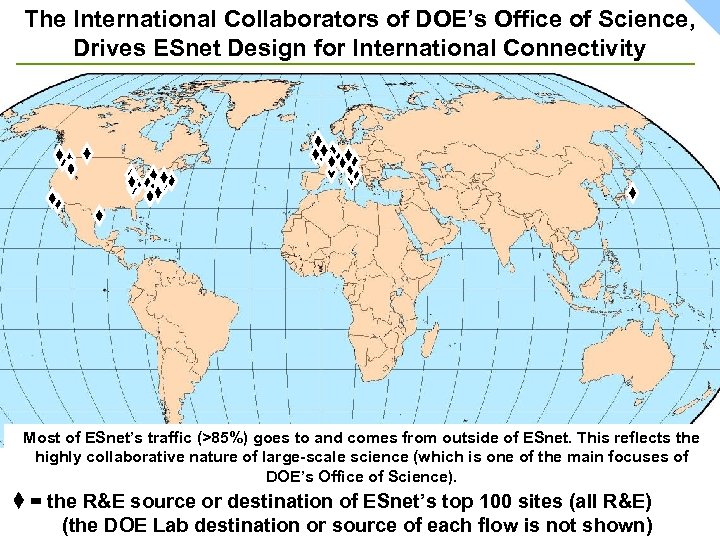

DOE Office of Science and ESnet – the ESnet Mission
• ESnet’s primary mission is to enable the large-scale science that is the mission of the Office of Science (SC) and that depends on:
  – Sharing of massive amounts of data
  – Supporting thousands of collaborators world-wide
  – Distributed data processing
  – Distributed data management
  – Distributed simulation, visualization, and computational steering
  – Collaboration with the US and International Research and Education community
• ESnet provides network and collaboration services to Office of Science laboratories and many other DOE programs in order to accomplish its mission

ESnet Stakeholders and their Role in ESnet
• DOE Office of Science Oversight (“SC”) of ESnet
  – The SC provides high-level oversight through the budgeting process
  – Near term input is provided by weekly teleconferences between SC and ESnet
  – Indirect long term input is through the process of ESnet observing and projecting network utilization of its large-scale users
  – Direct long term input is through the SC Program Offices Requirements Workshops (more later)
• SC Labs input to ESnet
  – Short term input through many daily (mostly) email interactions
  – Long term input through ESCC

ESnet Stakeholders and their Role in ESnet
• SC science collaborators input
  – Through numerous meetings, primarily with the networks that serve the science collaborators

New in ESnet – Advanced Technologies Group / Coordinator
• Up to this point individual ESnet engineers have worked in their “spare” time to do the R&D, or to evaluate R&D done by others, and coordinate the implementation and/or introduction of the new services into the production network environment – and they will continue to do so
• In addition to this – looking to the future – ESnet has implemented a more formal approach to investigating and coordinating the R&D for the new services needed by science
  – An ESnet Advanced Technologies Group / Coordinator has been established with a twofold purpose:
    1) To provide a unified view to the world of the several engineering development projects that are on-going in ESnet in order to publicize a coherent catalogue of advanced development work going on in ESnet.
    2) To develop a portfolio of exploratory new projects, some involving technology developed by others, and some of which will be developed within the context of ESnet.
• A highly qualified Advanced Technologies lead – Brian Tierney – has been hired and funded from current ESnet operational funding, and by next year a second staff person will be added. Beyond this, growth of the effort will be driven by new funding obtained specifically for that purpose.

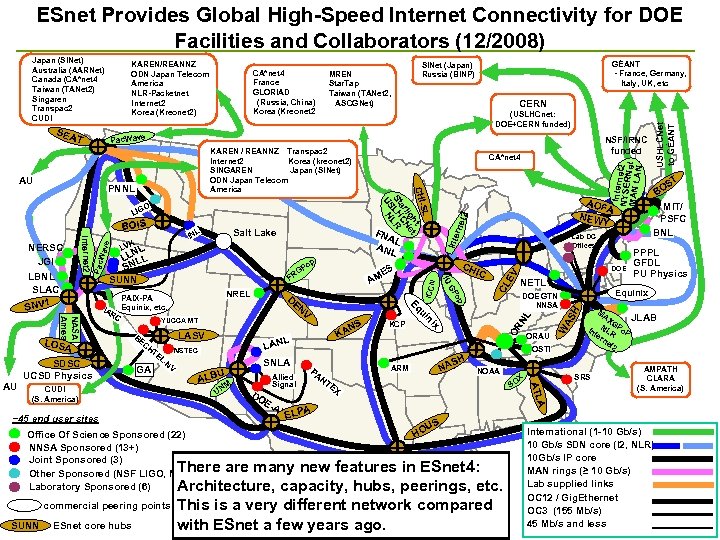

ESnet Provides Global High-Speed Internet Connectivity for DOE Facilities and Collaborators (12/2008)
[Figure: map of ESnet sites, core hubs, and peerings; geography is only representational.]
• ~45 end user sites: Office of Science Sponsored (22), NNSA Sponsored (13+), Joint Sponsored (3), Other Sponsored (NSF LIGO, NOAA), Laboratory Sponsored (6)
• Map symbols: ESnet core hubs, commercial peering points, specific R&E network peers, other R&E peering points
• Link types: International (1-10 Gb/s), 10 Gb/s SDN core (I2, NLR), 10 Gb/s IP core, MAN rings (≥ 10 Gb/s), Lab supplied links, OC12 / GigEthernet, OC3 (155 Mb/s), 45 Mb/s and less
• International and R&E peers shown include GÉANT (France, Germany, Italy, UK, etc.), CERN (USLHCnet: DOE+CERN funded), SINet (Japan), AARNet (Australia), CA*net4 (Canada), TANet2 and ASCGNet (Taiwan), Kreonet2 (Korea), GLORIAD (Russia, China), SINGAREN, Transpac2, KAREN/REANNZ, AMPATH, CLARA, and CUDI
There are many new features in ESnet4: architecture, capacity, hubs, peerings, etc. This is a very different network compared with ESnet a few years ago.

Talk Outline
I. ESnet4
  » Ia. Building ESnet4
  Ib. Network Services – Virtual Circuits
  Ic. Network Services – Network Monitoring
  Id. Network Services – IPv6
II. SC Program Requirements and ESnet Response
  » IIa. Re-evaluating the Strategy
III. Science Collaboration Services
  IIIa. Federated Trust
  IIIb. Audio, Video, Data Teleconferencing
  IIIc. Enhanced Collaboration Services

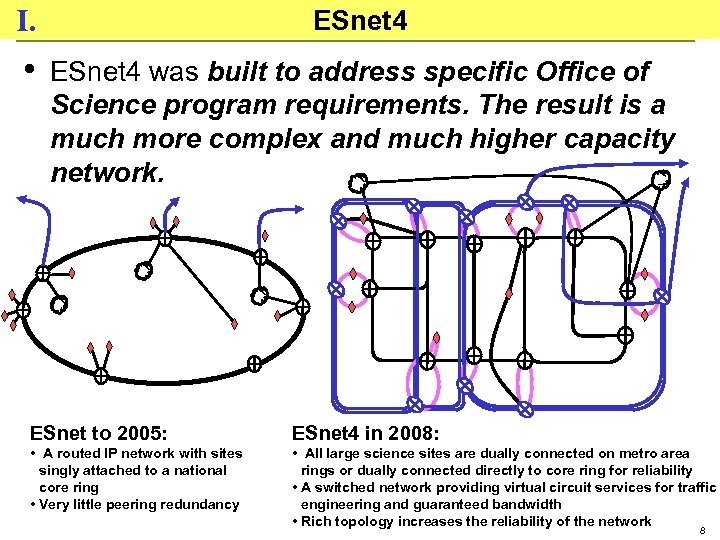

I. ESnet4
• ESnet4 was built to address specific Office of Science program requirements. The result is a much more complex and much higher capacity network.
ESnet to 2005:
• A routed IP network with sites singly attached to a national core ring
• Very little peering redundancy
ESnet4 in 2008:
• All large science sites are dually connected on metro area rings or dually connected directly to core ring for reliability
• A switched network providing virtual circuit services for traffic engineering and guaranteed bandwidth
• Rich topology increases the reliability of the network

Ia. Building ESnet4 - SDN
State of SDN as of mid-June
(Actually, not quite, as Jim's crew had already deployed Chicago and maybe one other hub, and we were still waiting on a few Juniper deliveries.)

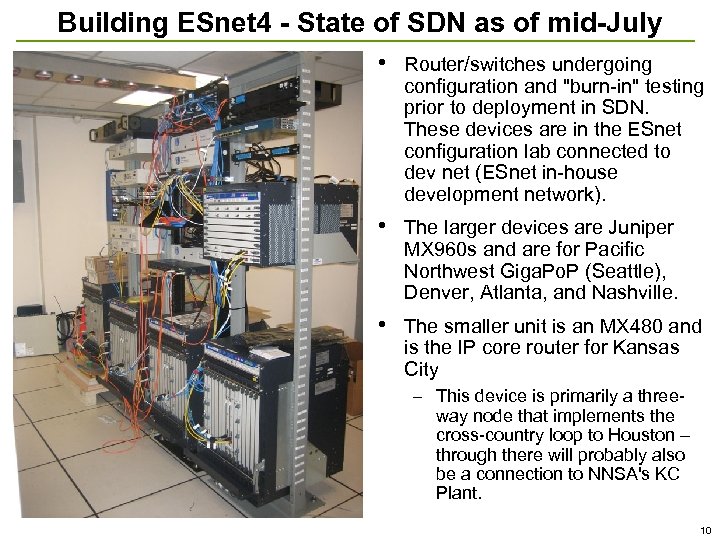

Building ESnet4 - State of SDN as of mid-July
• Router/switches undergoing configuration and "burn-in" testing prior to deployment in SDN. These devices are in the ESnet configuration lab connected to dev net (ESnet in-house development network).
• The larger devices are Juniper MX960s and are for Pacific Northwest GigaPoP (Seattle), Denver, Atlanta, and Nashville.
• The smaller unit is an MX480 and is the IP core router for Kansas City
  – This device is primarily a three-way node that implements the cross-country loop to Houston – though there will probably also be a connection to NNSA's KC Plant.

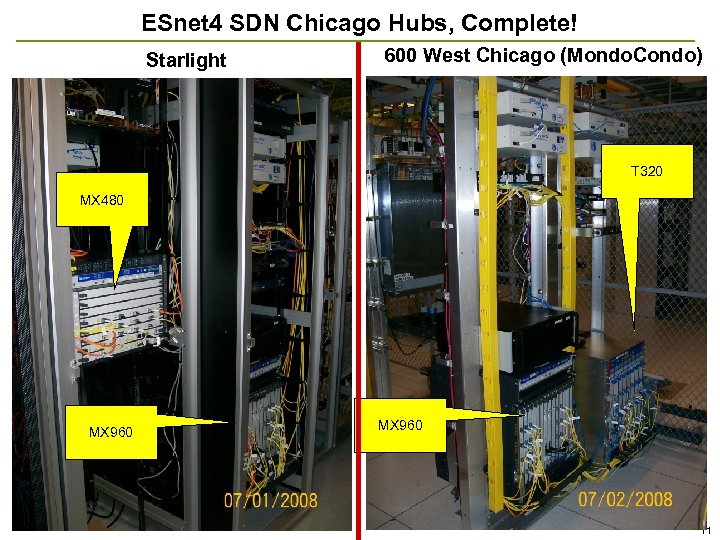

ESnet4 SDN Chicago Hubs, Complete!
[Photos: the Starlight and 600 West Chicago (MondoCondo) hubs; equipment labels: T320, MX480, MX960]

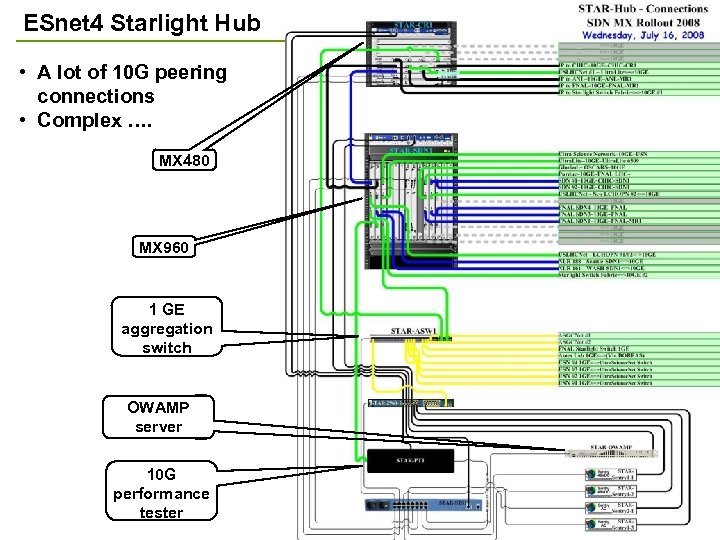

ESnet4 Starlight Hub
• A lot of 10G peering connections
• Complex …
[Photo labels: MX480, MX960, 1GE aggregation switch, OWAMP server, 10G performance tester]

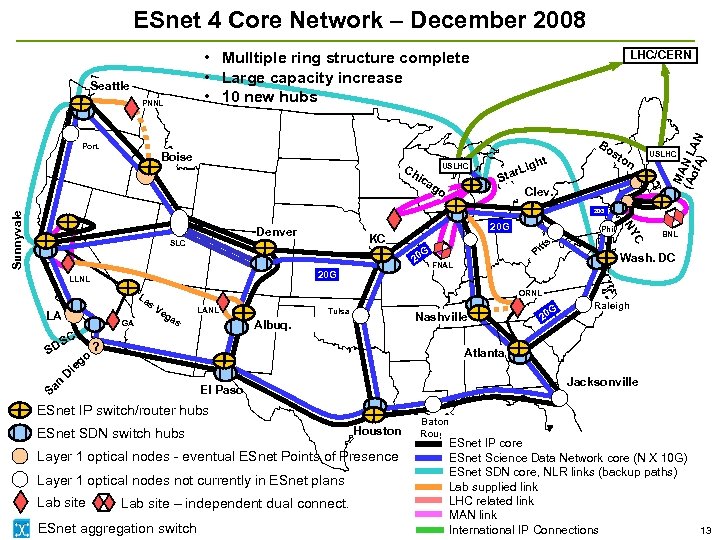

ESnet4 Core Network – December 2008
• Multiple ring structure complete
• Large capacity increase
• 10 new hubs
[Figure: map of the ESnet4 core. Legend: ESnet IP switch/router hubs; ESnet SDN switch hubs; Layer 1 optical nodes - eventual ESnet Points of Presence; Layer 1 optical nodes not currently in ESnet plans; Lab site; Lab site – independent dual connect.; ESnet aggregation switch; ESnet IP core; ESnet Science Data Network core (N X 10G); ESnet SDN core, NLR links (backup paths); Lab supplied link; LHC related link; MAN link; International IP Connections]

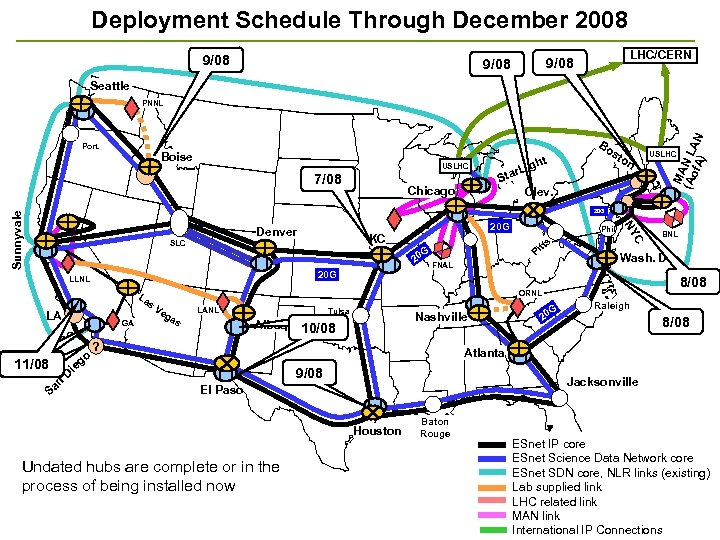

Deployment Schedule Through December 2008
[Figure: map of the ESnet4 core with hub installation dates ranging from 7/08 to 11/08; the pairing of dates with hubs is not recoverable from the extracted text. Legend: ESnet IP core; ESnet Science Data Network core; ESnet SDN core, NLR links (existing); Lab supplied link; LHC related link; MAN link; International IP Connections]
Undated hubs are complete or in the process of being installed now

ESnet4 Metro Area Rings, Projected for December 2008
[Figure: map of the ESnet4 core with insets for the Long Island MAN, West Chicago MAN, San Francisco Bay Area MAN, and the Newport News - Elite ring. Legend: ESnet IP core; ESnet Science Data Network core; ESnet SDN core, NLR links (existing); Lab supplied link; LHC related link; MAN link; International IP Connections]
• Upgrade SFBAMAN switches – 12/08-1/09
• LI MAN expansion, BNL diverse entry – 7-8/08
• FNAL and BNL dual ESnet connection - ?/08
• Dual connections for large data centers (FNAL, BNL)

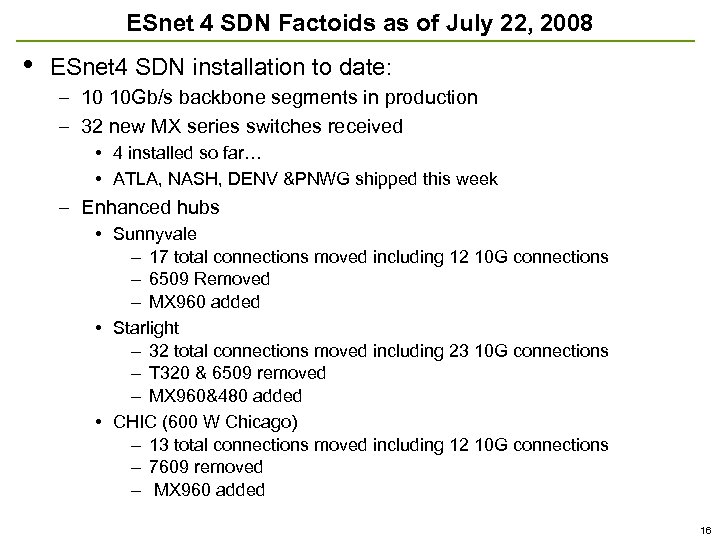

ESnet4 SDN Factoids as of July 22, 2008
• ESnet4 SDN installation to date:
  – Ten 10 Gb/s backbone segments in production
  – 32 new MX series switches received
    • 4 installed so far…
    • ATLA, NASH, DENV & PNWG shipped this week
  – Enhanced hubs
    • Sunnyvale
      – 17 total connections moved including 12 10G connections
      – 6509 removed
      – MX960 added
    • Starlight
      – 32 total connections moved including 23 10G connections
      – T320 & 6509 removed
      – MX960 & MX480 added
    • CHIC (600 W Chicago)
      – 13 total connections moved including 12 10G connections
      – 7609 removed
      – MX960 added

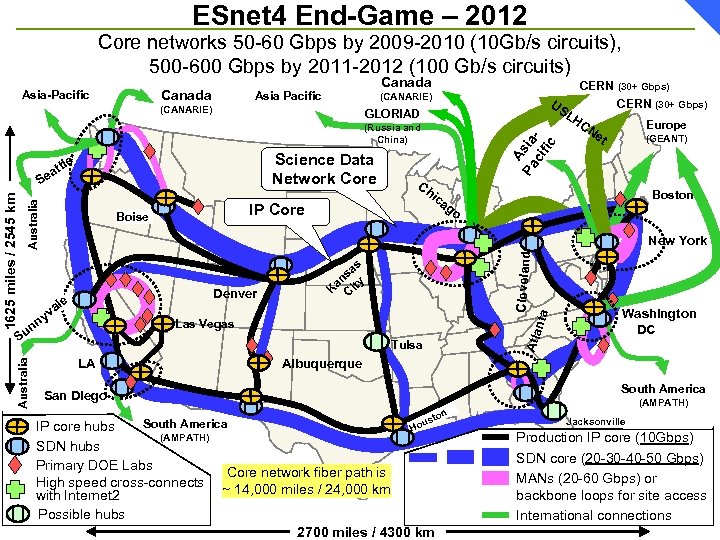

ESnet4 End-Game – 2012
Core networks 50-60 Gbps by 2009-2010 (10 Gb/s circuits), 500-600 Gbps by 2011-2012 (100 Gb/s circuits)
[Figure: map of the planned IP core and Science Data Network core with international connections to Canada (CANARIE), Asia-Pacific, Australia, GLORIAD (Russia and China), Europe (GEANT), CERN (30+ Gbps), and South America (AMPATH). Legend: IP core hubs; SDN hubs; Primary DOE Labs; High speed cross-connects with Internet2; Possible hubs; Production IP core (10 Gbps); SDN core (20-30-40-50 Gbps); MANs (20-60 Gbps) or backbone loops for site access; International connections. Scale annotations: 1625 miles / 2545 km and 2700 miles / 4300 km.]
Core network fiber path is ~14,000 miles / 24,000 km
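The two capacity targets in this slide's title imply about the same number of parallel circuits in each period; only the per-circuit rate changes. A minimal sketch of that arithmetic follows. The function name is illustrative and not taken from the talk.

```python
import math

def circuits_needed(target_gbps, circuit_gbps):
    """Smallest number of parallel circuits whose combined rate meets the target."""
    return math.ceil(target_gbps / circuit_gbps)

# (low, high) aggregate targets in Gb/s and the per-circuit rate quoted on the slide
targets = {
    "2009-2010": ((50, 60), 10),
    "2011-2012": ((500, 600), 100),
}

for period, ((low, high), circuit) in targets.items():
    print(period, circuits_needed(low, circuit), "to",
          circuits_needed(high, circuit), "circuits of", circuit, "Gb/s")
```

Under these figures both periods work out to five to six circuits per core path.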

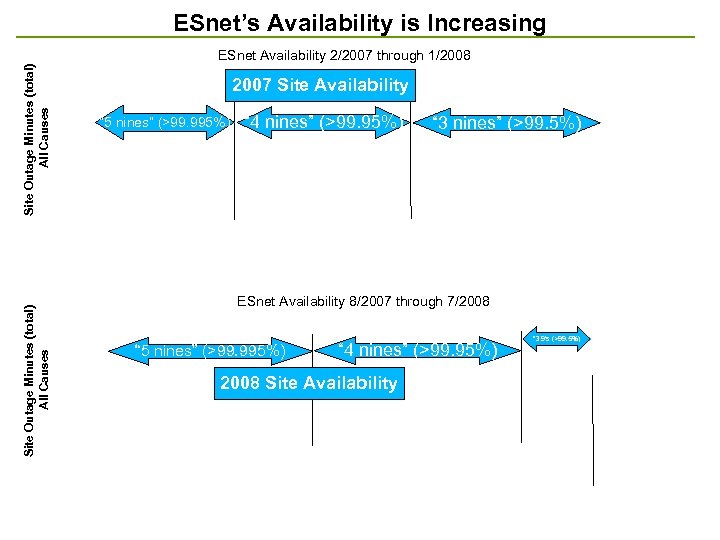

ESnet’s Availability is Increasing
[Figure: two charts of Site Outage Minutes (total), All Causes. The first covers ESnet Availability 2/2007 through 1/2008 (2007 Site Availability); the second covers ESnet Availability 8/2007 through 7/2008 (2008 Site Availability). In both, sites are grouped into bands: “5 nines” (>99.995%), “4 nines” (>99.95%), “3 nines” (>99.5%)]
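To relate the availability bands on this slide to outage minutes, the sketch below converts each threshold into the largest total outage it allows. It assumes a 12-month reporting window of 365 days, matching the windows in the chart titles. The names `max_outage_minutes` and `classify` are illustrative and not part of any ESnet tool.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes; leap years ignored (assumption)

# Band thresholds as printed on the slide, strictest first
BANDS = [("5 nines", 99.995), ("4 nines", 99.95), ("3 nines", 99.5)]

def max_outage_minutes(availability_percent, period_minutes=MINUTES_PER_YEAR):
    """Total outage minutes at exactly the given availability over the period."""
    return (1.0 - availability_percent / 100.0) * period_minutes

def classify(outage_minutes, period_minutes=MINUTES_PER_YEAR):
    """Return the strictest band whose threshold the site exceeds."""
    availability = 100.0 * (1.0 - outage_minutes / period_minutes)
    for label, threshold in BANDS:
        if availability > threshold:
            return label
    return "below 3 nines"

for label, threshold in BANDS:
    print(label, "allows under", round(max_outage_minutes(threshold), 1), "minutes per year")
```

With these assumptions the bands correspond to roughly 26, 263, and 2,628 outage minutes per year.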

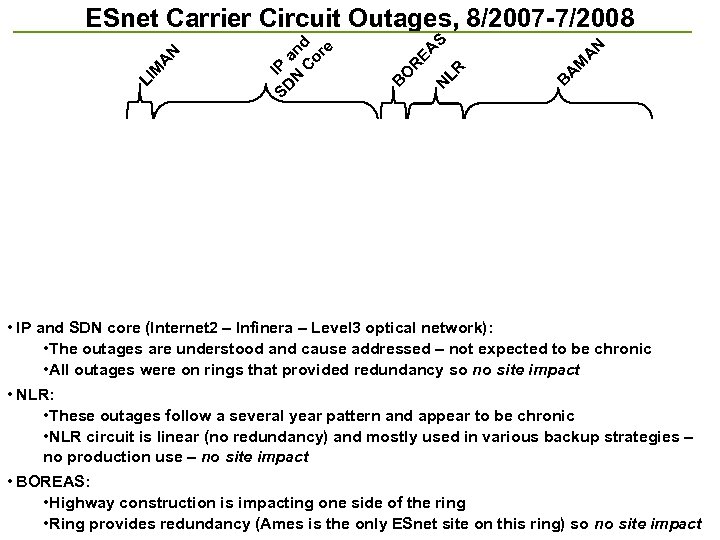

ESnet Carrier Circuit Outages, 8/2007-7/2008
[Figure: chart of carrier circuit outages by circuit group; recoverable axis labels: IP and SDN Core, NLR, BOREAS, LI MAN, BA MAN]
• IP and SDN core (Internet2 – Infinera – Level3 optical network):
  • The outages are understood and cause addressed – not expected to be chronic
  • All outages were on rings that provided redundancy so no site impact
• NLR:
  • These outages follow a several year pattern and appear to be chronic
  • NLR circuit is linear (no redundancy) and mostly used in various backup strategies – no production use – no site impact
• BOREAS:
  • Highway construction is impacting one side of the ring
  • Ring provides redundancy (Ames is the only ESnet site on this ring) so no site impact

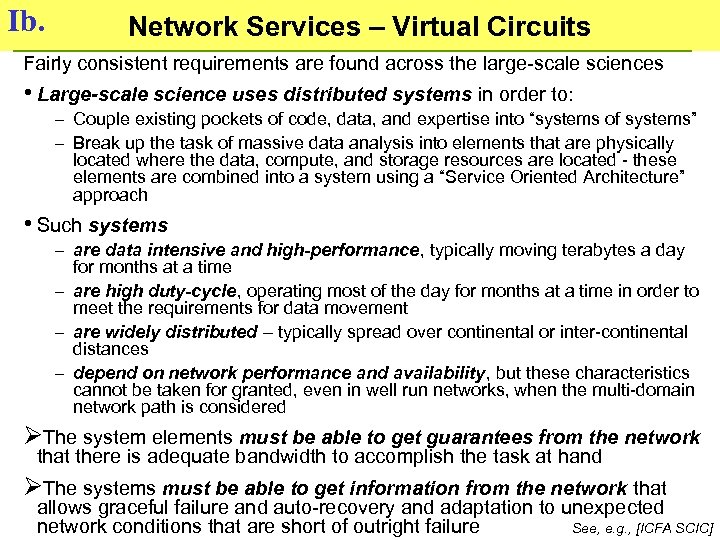

Ib. Network Services – Virtual Circuits
Fairly consistent requirements are found across the large-scale sciences
• Large-scale science uses distributed systems in order to:
  – Couple existing pockets of code, data, and expertise into “systems of systems”
  – Break up the task of massive data analysis into elements that are physically located where the data, compute, and storage resources are located - these elements are combined into a system using a “Service Oriented Architecture” approach
• Such systems
  – are data intensive and high-performance, typically moving terabytes a day for months at a time
  – are high duty-cycle, operating most of the day for months at a time in order to meet the requirements for data movement
  – are widely distributed – typically spread over continental or inter-continental distances
  – depend on network performance and availability, but these characteristics cannot be taken for granted, even in well run networks, when the multi-domain network path is considered
➢ The system elements must be able to get guarantees from the network that there is adequate bandwidth to accomplish the task at hand
➢ The systems must be able to get information from the network that allows graceful failure and auto-recovery and adaptation to unexpected network conditions that are short of outright failure
See, e.g., [ICFA SCIC]
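To give a sense of scale for "terabytes a day", the sketch below converts a daily data volume into the sustained network rate it requires. It assumes decimal terabytes (10^12 bytes). The `duty_cycle` parameter, the fraction of the day the transfer actually runs, is an illustrative addition and not a figure from the talk.

```python
SECONDS_PER_DAY = 24 * 60 * 60

def sustained_gbps(terabytes_per_day, duty_cycle=1.0):
    """Average rate in Gb/s needed to move the given volume each day.

    duty_cycle is the fraction of the day the transfer is running;
    a lower duty cycle means a higher rate while it is running.
    """
    if not 0.0 < duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be in (0, 1]")
    bits_per_day = terabytes_per_day * 1e12 * 8  # decimal terabytes (assumption)
    return bits_per_day / (SECONDS_PER_DAY * duty_cycle) / 1e9

for tb in (1, 10, 100):
    print(f"{tb} TB/day needs about {sustained_gbps(tb):.2f} Gb/s sustained")
```

Under these assumptions 1 TB/day is about 0.09 Gb/s around the clock, so 100 TB/day already approaches the full capacity of a single 10 Gb/s circuit.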

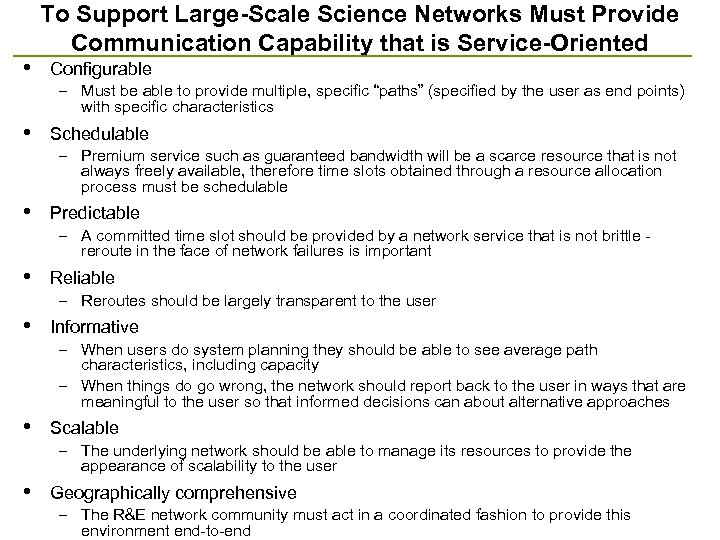

To Support Large-Scale Science Networks Must Provide Communication Capability that is Service-Oriented
• Configurable
  – Must be able to provide multiple, specific “paths” (specified by the user as end points) with specific characteristics
• Schedulable
  – Premium service such as guaranteed bandwidth will be a scarce resource that is not always freely available, therefore time slots obtained through a resource allocation process must be schedulable
• Predictable
  – A committed time slot should be provided by a network service that is not brittle - reroute in the face of network failures is important
• Reliable
  – Reroutes should be largely transparent to the user
• Informative
  – When users do system planning they should be able to see average path characteristics, including capacity
  – When things do go wrong, the network should report back to the user in ways that are meaningful to the user so that informed decisions can be made about alternative approaches
• Scalable
  – The underlying network should be able to manage its resources to provide the appearance of scalability to the user
• Geographically comprehensive
  – The R&E network community must act in a coordinated fashion to provide this environment end-to-end

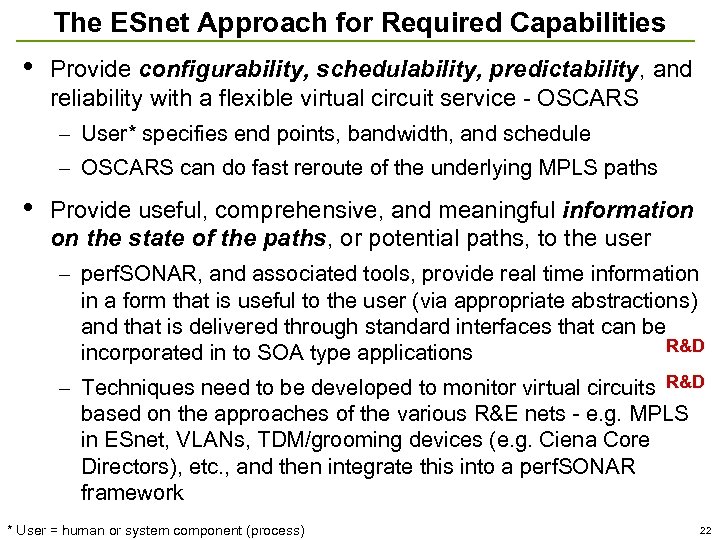

The ESnet Approach for Required Capabilities
• Provide configurability, schedulability, predictability, and reliability with a flexible virtual circuit service - OSCARS
  – User* specifies end points, bandwidth, and schedule
  – OSCARS can do fast reroute of the underlying MPLS paths
• Provide useful, comprehensive, and meaningful information on the state of the paths, or potential paths, to the user
  – perfSONAR, and associated tools, provide real time information in a form that is useful to the user (via appropriate abstractions) and that is delivered through standard interfaces that can be incorporated into SOA type applications (R&D)
  – Techniques need to be developed to monitor virtual circuits based on the approaches of the various R&E nets - e.g. MPLS in ESnet, VLANs, TDM/grooming devices (e.g. Ciena Core Directors), etc., and then integrate this into a perfSONAR framework (R&D)
* User = human or system component (process)
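The request described above (end points, bandwidth, and schedule) can be pictured as a small record plus an admission check. The sketch below is a hypothetical model for illustration only: it is not the OSCARS API, and it simplifies the network to one link of fixed capacity, whereas the real service computes paths over a topology. All names (`Reservation`, `peak_committed`, `can_admit`) and the sample end points are assumptions.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Reservation:
    src: str             # source end point (hypothetical identifier)
    dst: str             # destination end point
    bandwidth_mbps: int  # guaranteed bandwidth requested
    start: int           # start time in seconds, inclusive
    end: int             # end time in seconds, exclusive

def peak_committed(existing, start, end):
    """Peak total bandwidth already committed at any instant in [start, end)."""
    events = []
    for r in existing:
        lo, hi = max(r.start, start), min(r.end, end)
        if lo < hi:  # reservation overlaps the window
            events.append((lo, r.bandwidth_mbps))
            events.append((hi, -r.bandwidth_mbps))
    # At equal times, releases (negative deltas) sort before new commitments,
    # which matches the half-open interval convention.
    events.sort()
    peak = current = 0
    for _, delta in events:
        current += delta
        peak = max(peak, current)
    return peak

def can_admit(existing, request, capacity_mbps):
    """True if the request fits on the link for its whole time slot."""
    peak = peak_committed(existing, request.start, request.end)
    return peak + request.bandwidth_mbps <= capacity_mbps

booked = [
    Reservation("site-a", "site-b", 4000, 0, 100),
    Reservation("site-a", "site-b", 5000, 50, 150),
]
print(can_admit(booked, Reservation("site-a", "site-b", 2000, 60, 90), 10000))
print(can_admit(booked, Reservation("site-a", "site-b", 2000, 100, 200), 10000))
```

The first request overlaps both existing reservations and is refused; the second overlaps only the later one and fits. Checking the peak over the whole slot, not just the load at the start time, is what lets a reservation be guaranteed in advance.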

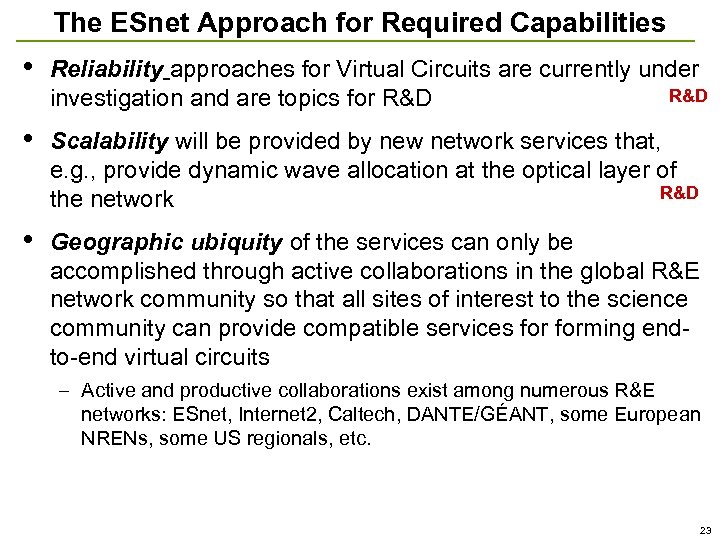

The ESnet Approach for Required Capabilities
• Reliability approaches for Virtual Circuits are currently under investigation and are topics for R&D
• Scalability will be provided by new network services that, e.g., provide dynamic wave allocation at the optical layer of the network (R&D)
• Geographic ubiquity of the services can only be accomplished through active collaborations in the global R&E network community so that all sites of interest to the science community can provide compatible services forming end-to-end virtual circuits
  – Active and productive collaborations exist among numerous R&E networks: ESnet, Internet2, Caltech, DANTE/GÉANT, some European NRENs, some US regionals, etc.


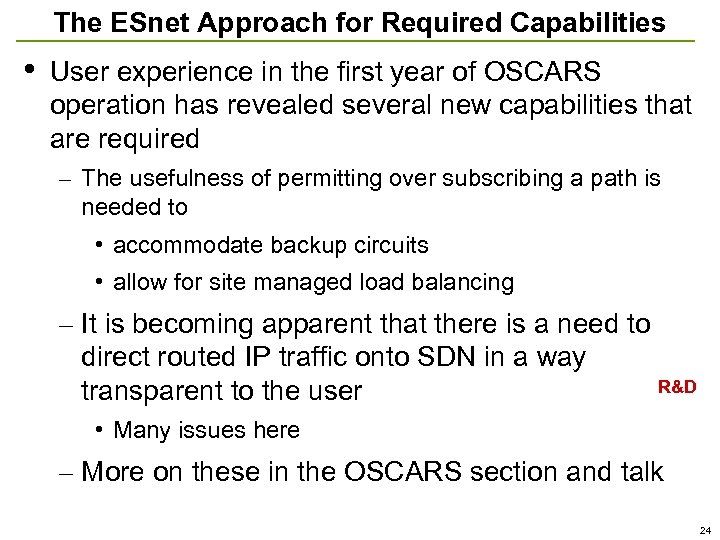

The ESnet Approach for Required Capabilities
• User experience in the first year of OSCARS operation has revealed several new capabilities that are required
  – Permitting over-subscription of a path is needed to
    • accommodate backup circuits
    • allow for site-managed load balancing
  – It is becoming apparent that there is a need to direct routed IP traffic onto SDN in a way transparent to the user (R&D)
    • Many issues here
  – More on these in the OSCARS section and talk


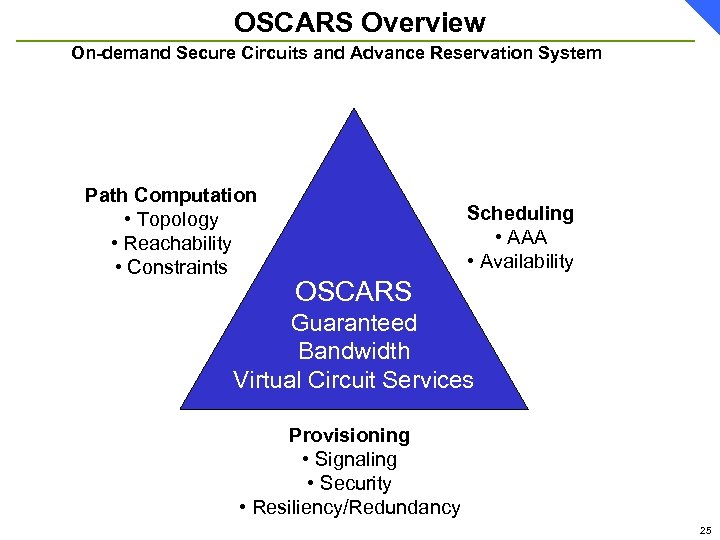

OSCARS Overview
On-demand Secure Circuits and Advance Reservation System
OSCARS Guaranteed Bandwidth Virtual Circuit Services
• Path Computation
  – Topology
  – Reachability
  – Constraints
• Scheduling
  – AAA
  – Availability
• Provisioning
  – Signaling
  – Security
  – Resiliency/Redundancy


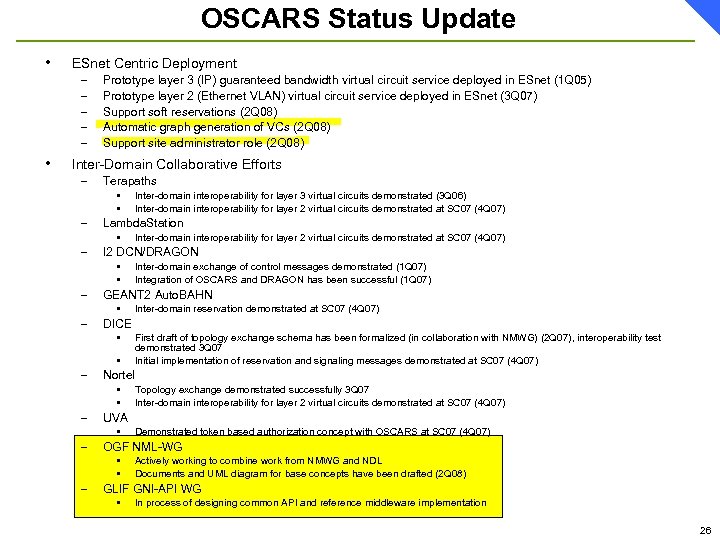
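
The path computation element above names topology, reachability, and constraints. One common way to combine the three, shown here only as a minimal sketch and not as the OSCARS implementation, is to prune every link that cannot satisfy the bandwidth constraint and then search the remaining topology for a path. The function name, hub names, and capacities below are hypothetical.

```python
from collections import deque


def find_path(links, src, dst, needed_gbps):
    """Return a fewest-hop path from src to dst that uses only links with
    at least needed_gbps of free capacity, or None if no such path exists."""
    # Constraint step: keep only links that can carry the request.
    adjacency = {}
    for (a, b), free_gbps in links.items():
        if free_gbps >= needed_gbps:
            adjacency.setdefault(a, []).append(b)
            adjacency.setdefault(b, []).append(a)

    # Reachability step: breadth-first search over the pruned topology.
    previous = {src: None}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            path = []
            while node is not None:
                path.append(node)
                node = previous[node]
            return path[::-1]
        for neighbor in adjacency.get(node, []):
            if neighbor not in previous:
                previous[neighbor] = node
                queue.append(neighbor)
    return None


# Hypothetical topology: free capacity in Gb/s per bidirectional link.
LINKS = {
    ("A", "B"): 2.0,
    ("B", "D"): 10.0,
    ("A", "C"): 10.0,
    ("C", "E"): 10.0,
    ("E", "D"): 10.0,
}
```

A 1 Gb/s request can take the short route through B, while a 5 Gb/s request is pushed onto the longer route because the A-B link is pruned before the search starts.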

OSCARS Status Update
• ESnet Centric Deployment
  – Prototype layer 3 (IP) guaranteed bandwidth virtual circuit service deployed in ESnet (1Q05)
  – Prototype layer 2 (Ethernet VLAN) virtual circuit service deployed in ESnet (3Q07)
  – Support soft reservations (2Q08)
  – Automatic graph generation of VCs (2Q08)
  – Support site administrator role (2Q08)
• Inter-Domain Collaborative Efforts
  – Collaborators: Terapaths, LambdaStation, I2 DCN/DRAGON, GEANT2 AutoBAHN, DICE, OGF NML-WG, UVA, Nortel, GLIF GNI-API WG
  – Milestones
    • Inter-domain interoperability for layer 3 virtual circuits demonstrated (3Q06)
    • Inter-domain interoperability for layer 2 virtual circuits demonstrated at SC07 (4Q07)
    • Inter-domain exchange of control messages demonstrated (1Q07)
    • Integration of OSCARS and DRAGON has been successful (1Q07)
    • Demonstrated token based authorization concept with OSCARS at SC07 (4Q07)
    • Topology exchange demonstrated successfully (3Q07)
    • Inter-domain interoperability for layer 2 virtual circuits demonstrated at SC07 (4Q07)
    • First draft of topology exchange schema has been formalized (in collaboration with NMWG) (2Q07), interoperability test demonstrated (3Q07)
    • Initial implementation of reservation and signaling messages demonstrated at SC07 (4Q07)
    • Inter-domain reservation demonstrated at SC07 (4Q07)
    • Actively working to combine work from NMWG and NDL
    • Documents and UML diagram for base concepts have been drafted (2Q08)
    • In process of designing common API and reference middleware implementation


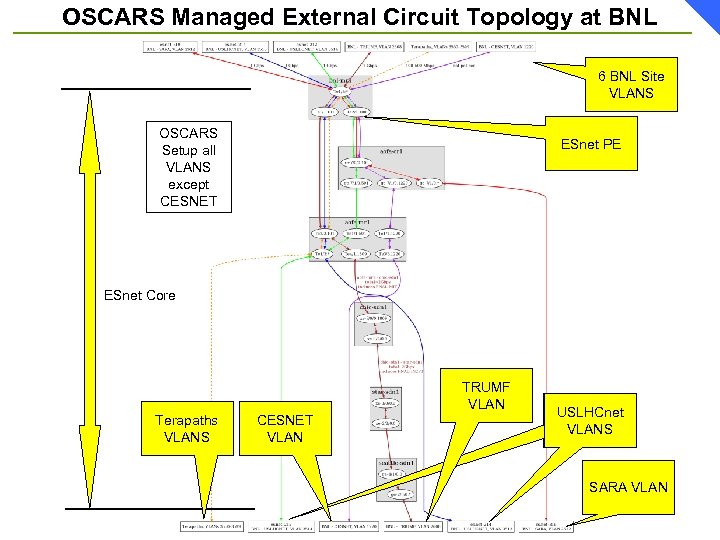

OSCARS Managed External Circuit Topology at BNL
[Diagram labels: 6 BNL site VLANs; ESnet PE; ESnet core; TRIUMF VLAN; Terapaths VLANs; CESNET VLAN; USLHCnet VLANs; SARA VLAN. OSCARS sets up all VLANs except CESNET.]


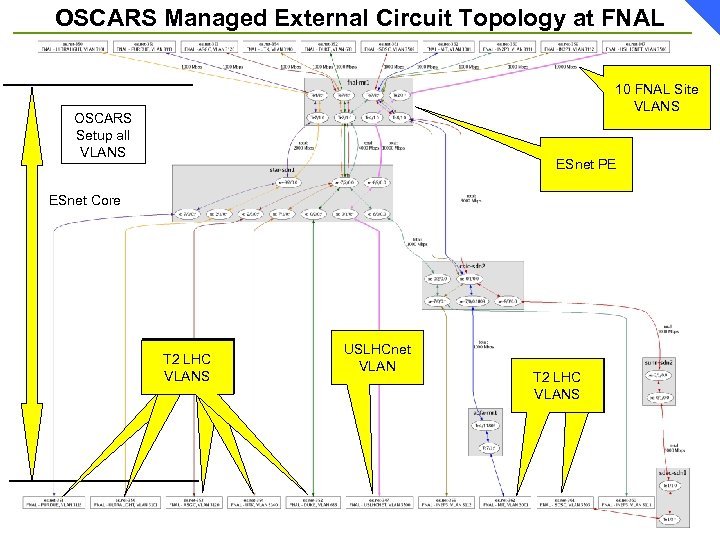

OSCARS Managed External Circuit Topology at FNAL
[Diagram labels: 10 FNAL site VLANs; ESnet PE; ESnet core; USLHCnet VLANs; T2 LHC VLANs. OSCARS sets up all VLANs.]


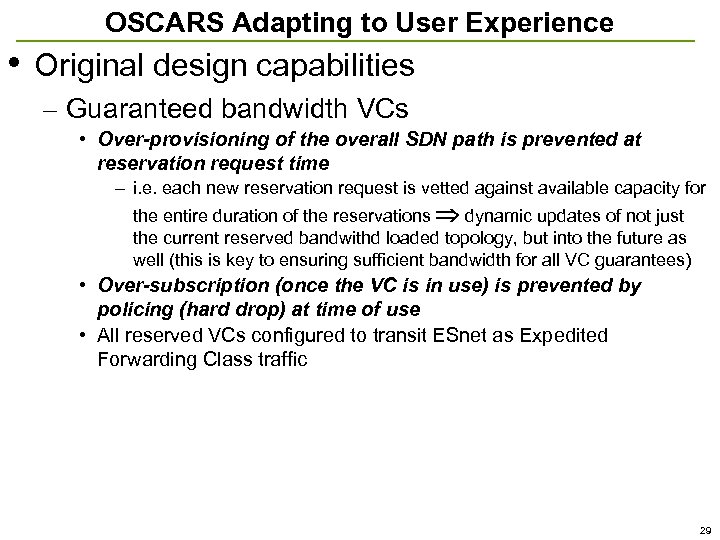

OSCARS Adapting to User Experience
• Original design capabilities
  – Guaranteed bandwidth VCs
    • Over-provisioning of the overall SDN path is prevented at reservation request time
      – i.e. each new reservation request is vetted against available capacity for the entire duration of the reservation, which requires dynamic updates of not just the current reserved-bandwidth-loaded topology, but of that topology into the future as well (this is key to ensuring sufficient bandwidth for all VC guarantees)
    • Over-subscription (once the VC is in use) is prevented by policing (hard drop) at time of use
    • All reserved VCs configured to transit ESnet as Expedited Forwarding Class traffic


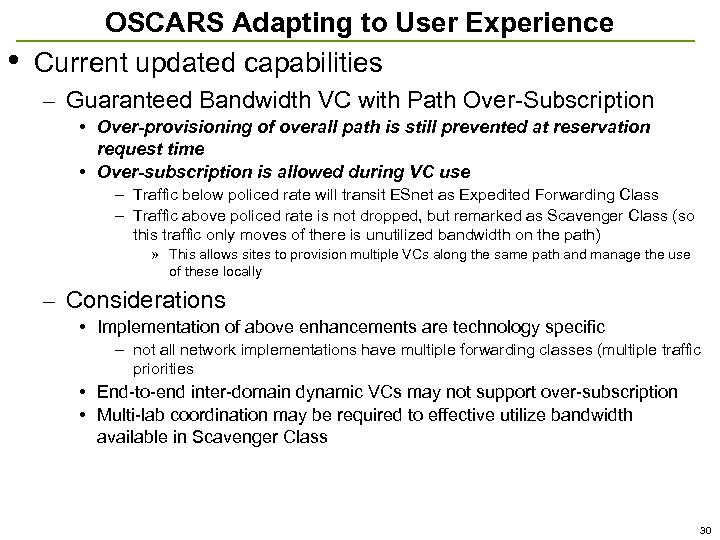
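
The request-time check described above (vet each new reservation against available capacity for its entire duration, including reservations that start in the future) can be sketched for a single link as follows. This is an illustrative sketch under stated assumptions, not the OSCARS code: the function names are hypothetical, times are arbitrary integer units, and a real path check would repeat the test on every link of the path.

```python
def peak_reserved(reservations, start, end):
    """Peak total bandwidth already reserved at any instant in [start, end).

    reservations is a list of (start, end, bandwidth) tuples.
    """
    # Reserved bandwidth only rises at reservation start times, so it is
    # enough to check the window start and every start inside the window.
    checkpoints = {start} | {
        r_start for r_start, _, _ in reservations if start <= r_start < end
    }
    peak = 0.0
    for t in checkpoints:
        load = sum(bw for r_start, r_end, bw in reservations
                   if r_start <= t < r_end)
        peak = max(peak, load)
    return peak


def admit(reservations, capacity, start, end, bandwidth):
    """Accept a request only if capacity holds for its entire duration."""
    if start >= end or bandwidth <= 0:
        raise ValueError("need start < end and a positive bandwidth")
    if peak_reserved(reservations, start, end) + bandwidth > capacity:
        return False  # would over-provision the link at some instant
    reservations.append((start, end, bandwidth))
    return True
```

On a 10 Gb/s link holding a 6 Gb/s reservation for [0, 10), a second 6 Gb/s request for [5, 15) is refused because the two overlap, while the same request for [10, 20) is accepted.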

OSCARS Adapting to User Experience
• Current updated capabilities
  – Guaranteed Bandwidth VC with Path Over-Subscription
    • Over-provisioning of overall path is still prevented at reservation request time
    • Over-subscription is allowed during VC use
      – Traffic below policed rate will transit ESnet as Expedited Forwarding Class
      – Traffic above policed rate is not dropped, but remarked as Scavenger Class (so this traffic only moves if there is unutilized bandwidth on the path)
        » This allows sites to provision multiple VCs along the same path and manage the use of these locally
  – Considerations
    • Implementation of the above enhancements is technology specific: not all network implementations have multiple forwarding classes (multiple traffic priorities)
    • End-to-end inter-domain dynamic VCs may not support over-subscription
    • Multi-lab coordination may be required to effectively utilize bandwidth available in Scavenger Class


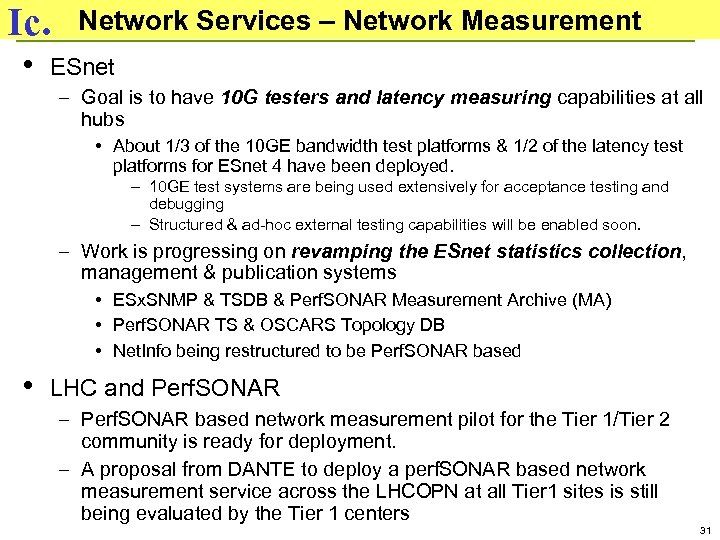
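
The difference between the original behavior (hard drop above the policed rate) and the updated behavior (remark to Scavenger Class) can be illustrated with a token-bucket policer. The slides do not say how the policing is implemented on the routers, so the token bucket, the class name, and the string labels below are assumptions chosen for illustration only.

```python
class RemarkingPolicer:
    """Token-bucket policer: in-profile packets are marked "EF"; excess
    packets are remarked "scavenger" (or dropped if exceed_action="drop")."""

    def __init__(self, rate_bps, burst_bytes, exceed_action="remark"):
        if exceed_action not in ("remark", "drop"):
            raise ValueError("exceed_action must be 'remark' or 'drop'")
        self.rate_bytes_per_s = rate_bps / 8.0
        self.burst_bytes = float(burst_bytes)
        self.tokens = float(burst_bytes)  # bucket starts full
        self.last_time = 0.0
        self.exceed_action = exceed_action

    def classify(self, now, packet_bytes):
        # Refill tokens for the elapsed time, capped at the burst size.
        elapsed = now - self.last_time
        self.last_time = now
        self.tokens = min(self.burst_bytes,
                          self.tokens + elapsed * self.rate_bytes_per_s)
        if packet_bytes <= self.tokens:
            self.tokens -= packet_bytes
            return "EF"  # within the policed rate
        # Above the policed rate: the packet consumes no tokens.
        return "scavenger" if self.exceed_action == "remark" else "drop"
```

With `exceed_action="remark"`, a site that over-subscribes its VC still gets the excess traffic delivered whenever the path has unused bandwidth, which is what lets several VCs share one path under local management.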

Ic. Network Services – Network Measurement
• ESnet
  – Goal is to have 10G testers and latency measuring capabilities at all hubs
    • About 1/3 of the 10GE bandwidth test platforms and 1/2 of the latency test platforms for ESnet4 have been deployed
  – 10GE test systems are being used extensively for acceptance testing and debugging
  – Structured and ad-hoc external testing capabilities will be enabled soon
  – Work is progressing on revamping the ESnet statistics collection, management, and publication systems
    • ESxSNMP & TSDB & perfSONAR Measurement Archive (MA)
    • perfSONAR TS & OSCARS Topology DB
    • NetInfo being restructured to be perfSONAR based
• LHC and perfSONAR
  – perfSONAR-based network measurement pilot for the Tier 1/Tier 2 community is ready for deployment
  – A proposal from DANTE to deploy a perfSONAR-based network measurement service across the LHCOPN at all Tier 1 sites is still being evaluated by the Tier 1 centers


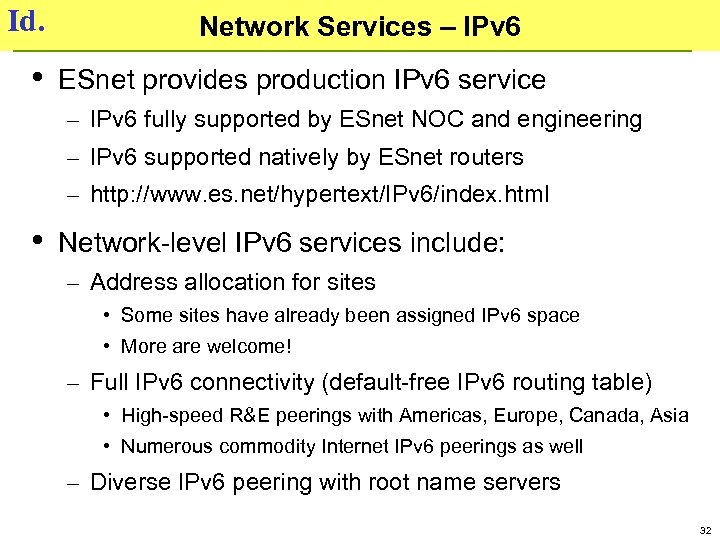

Id. Network Services – IPv6
• ESnet provides production IPv6 service
  – IPv6 fully supported by ESnet NOC and engineering
  – IPv6 supported natively by ESnet routers
  – http://www.es.net/hypertext/IPv6/index.html
• Network-level IPv6 services include:
  – Address allocation for sites
    • Some sites have already been assigned IPv6 space
    • More are welcome!
  – Full IPv6 connectivity (default-free IPv6 routing table)
    • High-speed R&E peerings with Americas, Europe, Canada, Asia
    • Numerous commodity Internet IPv6 peerings as well
  – Diverse IPv6 peering with root name servers


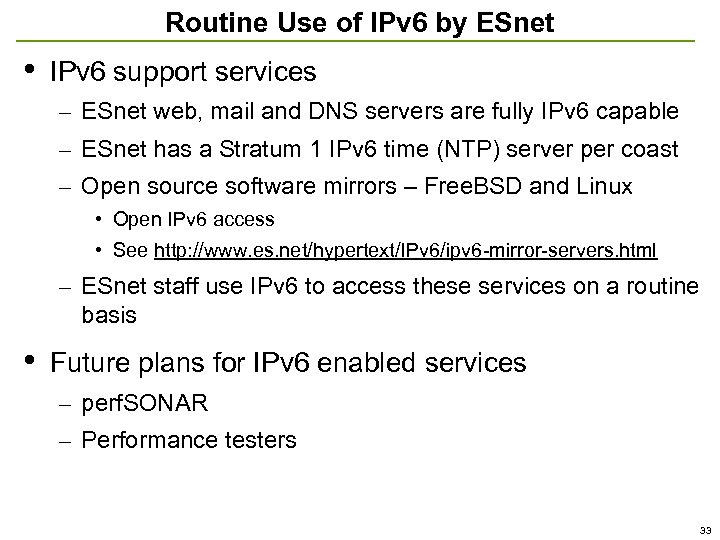

Routine Use of IPv6 by ESnet
• IPv6 support services
  – ESnet web, mail and DNS servers are fully IPv6 capable
  – ESnet has a Stratum 1 IPv6 time (NTP) server per coast
  – Open source software mirrors: FreeBSD and Linux
    • Open IPv6 access
    • See http://www.es.net/hypertext/IPv6/ipv6-mirror-servers.html
  – ESnet staff use IPv6 to access these services on a routine basis
• Future plans for IPv6 enabled services
  – perfSONAR
  – Performance testers


New: see www.es.net, “network services” tab, IPv6 link


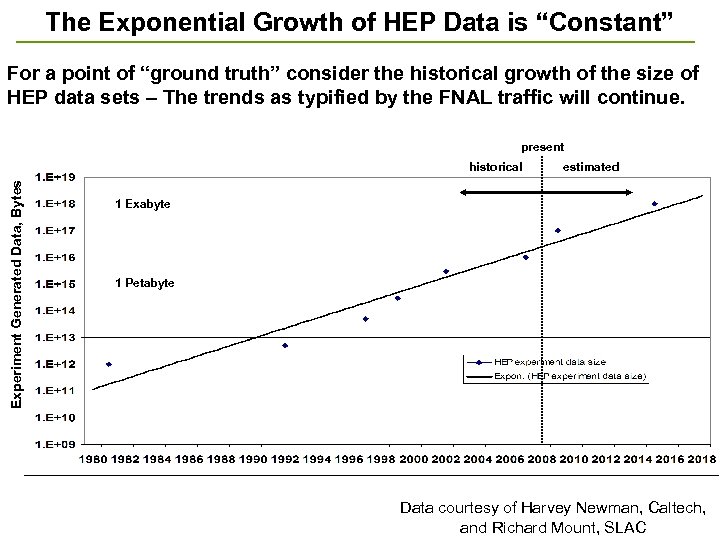

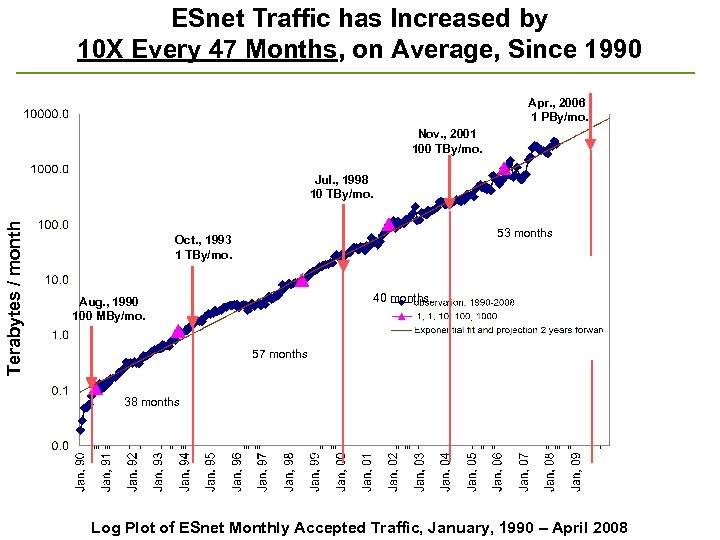

II. SC Program Requirements and ESnet Response
Recall the Planning Process
• Requirements are determined by
  1) Exploring the plans of the major stakeholders:
    • 1a) Data characteristics of instruments and facilities
      – What data will be generated by instruments coming on-line over the next 5-10 years (including supercomputers)?
    • 1b) Examining the future process of science
      – How and where will the new data be analyzed and used, that is, how will the process of doing science change over 5-10 years?
  2) Observing traffic patterns
    • What do the trends in network patterns predict for future network needs?
• The assumption has been that you had to add 1a) and 1b) (future plans) to 2) (observation) in order to account for unpredictable events, e.g. the turn-on of major data generators like the LHC


(1a) Requirements from Instruments and Facilities
Network Requirements Workshops
• Collect requirements from two DOE/SC program offices per year
• ESnet requirements workshop reports: http://www.es.net/hypertext/requirements.html
• Workshop schedule
  – BES (2007 – published)
  – BER (2007 – published)
  – FES (2008 – published)
  – NP (2008 – published)
  – ASCR (Spring 2009)
  – HEP (Summer 2009)
• Future workshops: ongoing cycle
  – BES, BER – 2010
  – FES, NP – 2011
  – ASCR, HEP – 2012
  – (and so on...)


Requirements from Instruments and Facilities
• Typical DOE large-scale facilities are the Tevatron accelerator (FNAL), RHIC accelerator (BNL), SNS accelerator (ORNL), ALS accelerator (LBNL), and the supercomputer centers: NERSC, NLCF (ORNL), Blue Gene (ANL)
• These are representative of the ‘hardware infrastructure’ of DOE science
• Requirements from these can be characterized as
  – Bandwidth: quantity of data produced, requirements for timely movement
  – Connectivity: geographic reach, i.e. location of instruments, facilities, and users plus network infrastructure involved (e.g. ESnet, Abilene, GEANT)
  – Services: guaranteed bandwidth, traffic isolation, etc.; IP multicast


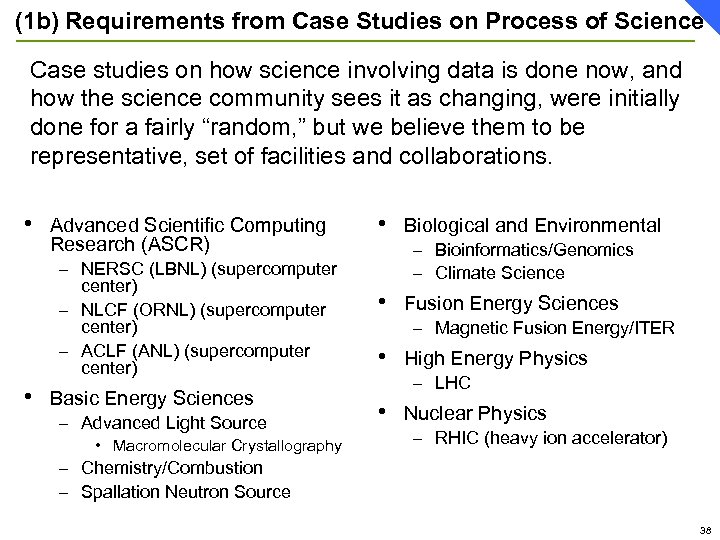

(1b) Requirements from Case Studies on Process of Science
Case studies on how science involving data is done now, and how the science community sees it as changing, were initially done for a fairly “random,” but we believe representative, set of facilities and collaborations.
• Advanced Scientific Computing Research (ASCR)
  – NERSC (LBNL) (supercomputer center)
  – NLCF (ORNL) (supercomputer center)
  – ALCF (ANL) (supercomputer center)
• Basic Energy Sciences
  – Advanced Light Source
    • Macromolecular Crystallography
  – Chemistry/Combustion
  – Spallation Neutron Source
• Biological and Environmental
  – Bioinformatics/Genomics
  – Climate Science
• Fusion Energy Sciences
  – Magnetic Fusion Energy/ITER
• High Energy Physics
  – LHC
• Nuclear Physics
  – RHIC (heavy ion accelerator)


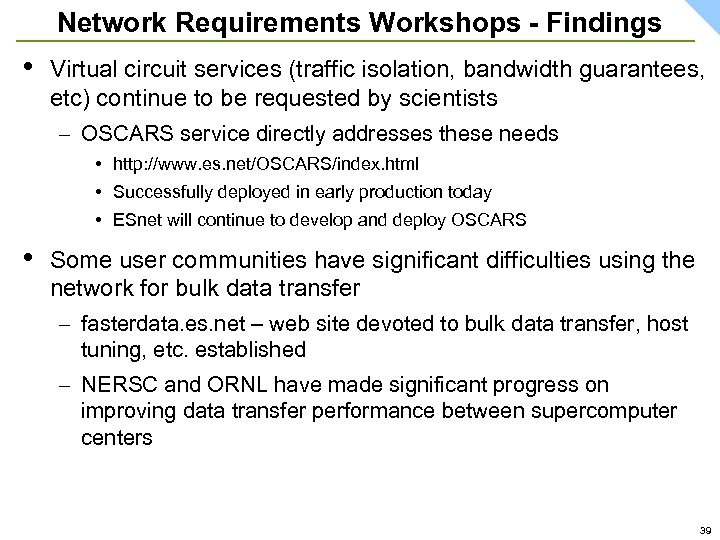

Network Requirements Workshops - Findings
• Virtual circuit services (traffic isolation, bandwidth guarantees, etc.) continue to be requested by scientists
  – OSCARS service directly addresses these needs
    • http://www.es.net/OSCARS/index.html
    • Successfully deployed in early production today
    • ESnet will continue to develop and deploy OSCARS
• Some user communities have significant difficulties using the network for bulk data transfer
  – fasterdata.es.net: web site devoted to bulk data transfer, host tuning, etc. established
  – NERSC and ORNL have made significant progress on improving data transfer performance between supercomputer centers


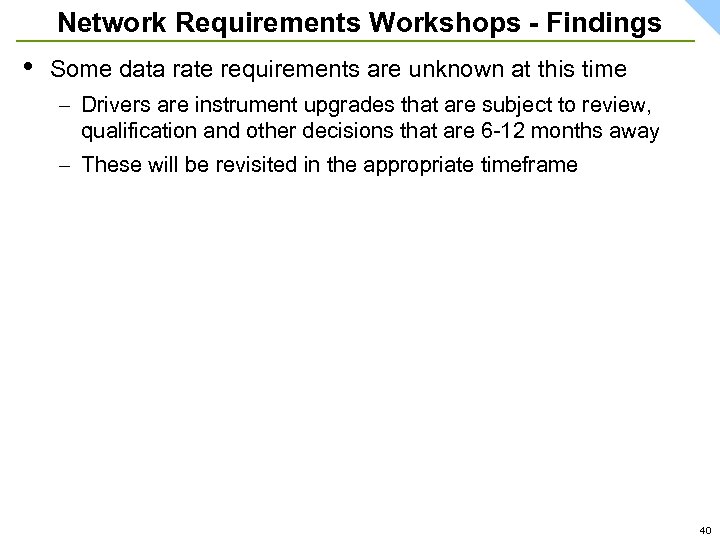

Network Requirements Workshops - Findings
• Some data rate requirements are unknown at this time
  – Drivers are instrument upgrades that are subject to review, qualification and other decisions that are 6-12 months away
  – These will be revisited in the appropriate timeframe


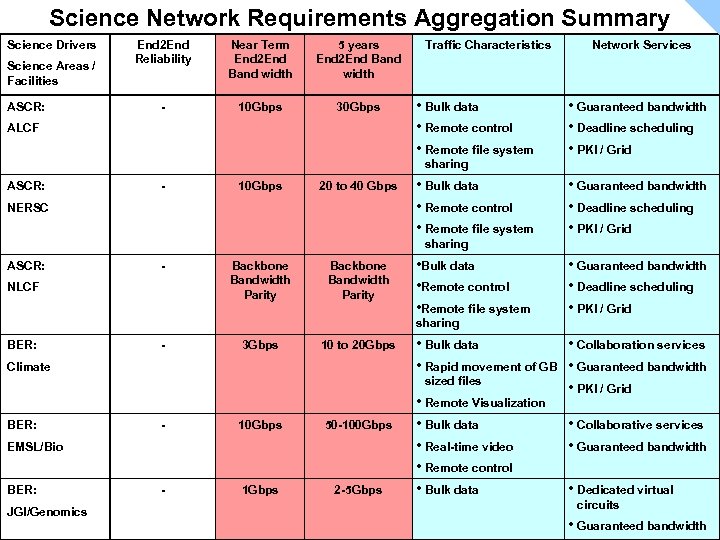

Science Network Requirements Aggregation Summary

| Science Drivers: Science Areas / Facilities | End2End Reliability | Near Term End2End Bandwidth | 5 years End2End Bandwidth | Traffic Characteristics | Network Services |
|---|---|---|---|---|---|
| ASCR: ALCF | - | 10 Gbps | 30 Gbps | Bulk data; Remote control; Remote file system sharing | Guaranteed bandwidth; Deadline scheduling; PKI / Grid |
| ASCR: NERSC | - | 10 Gbps | 20 to 40 Gbps | Bulk data; Remote control; Remote file system sharing | Guaranteed bandwidth; Deadline scheduling; PKI / Grid |
| ASCR: NLCF | - | Backbone Bandwidth Parity | | Bulk data; Remote control; Remote file system sharing | Guaranteed bandwidth; Deadline scheduling; PKI / Grid |
| BER: Climate | - | 3 Gbps | 10 to 20 Gbps | Bulk data; Rapid movement of GB sized files; Remote Visualization | Collaboration services; Guaranteed bandwidth; PKI / Grid |
| BER: EMSL/Bio | - | 10 Gbps | 50-100 Gbps | Bulk data; Real-time video; Remote control | Collaborative services; Guaranteed bandwidth |
| BER: JGI/Genomics | - | 1 Gbps | 2-5 Gbps | Bulk data | Dedicated virtual circuits; Guaranteed bandwidth |


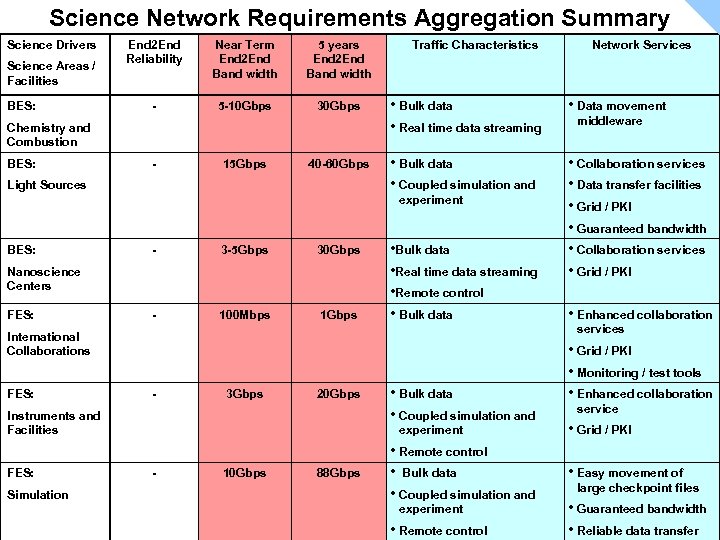

Science Network Requirements Aggregation Summary

| Science Drivers: Science Areas / Facilities | End2End Reliability | Near Term End2End Bandwidth | 5 years End2End Bandwidth | Traffic Characteristics | Network Services |
|---|---|---|---|---|---|
| BES: Chemistry and Combustion | - | 5-10 Gbps | 30 Gbps | Bulk data; Real time data streaming | Data movement middleware |
| BES: Light Sources | - | 15 Gbps | 40-60 Gbps | Bulk data; Coupled simulation and experiment | Collaboration services; Data transfer facilities; Grid / PKI; Guaranteed bandwidth |
| BES: Nanoscience Centers | - | 3-5 Gbps | 30 Gbps | Bulk data; Real time data streaming; Remote control | Collaboration services; Grid / PKI |
| FES: International Collaborations | - | 100 Mbps | 1 Gbps | Bulk data | Enhanced collaboration services; Grid / PKI; Monitoring / test tools |
| FES: Instruments and Facilities | - | 3 Gbps | 20 Gbps | Bulk data; Coupled simulation and experiment; Remote control | Enhanced collaboration service; Grid / PKI |
| FES: Simulation | - | 10 Gbps | 88 Gbps | Bulk data; Coupled simulation and experiment; Remote control | Easy movement of large checkpoint files; Guaranteed bandwidth; Reliable data transfer |


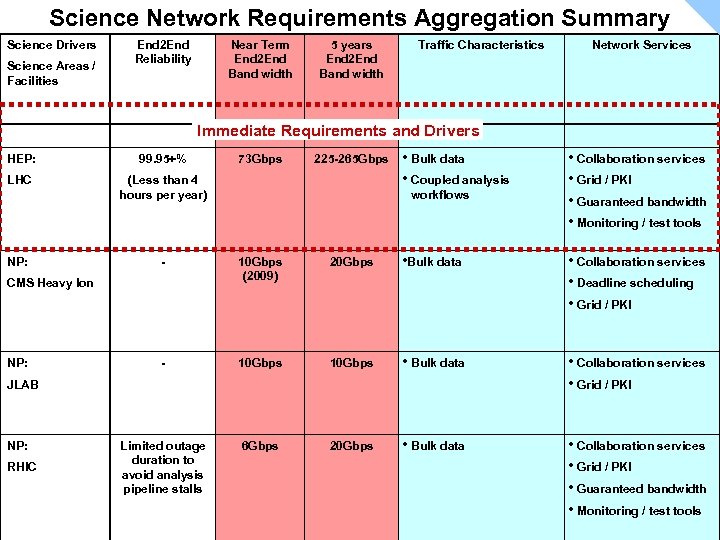

Science Network Requirements Aggregation Summary
Immediate Requirements and Drivers

| Science Drivers: Science Areas / Facilities | End2End Reliability | Near Term End2End Bandwidth | 5 years End2End Bandwidth | Traffic Characteristics | Network Services |
|---|---|---|---|---|---|
| HEP: LHC | 99.95+% (less than 4 hours per year) | 73 Gbps | 225-265 Gbps | Bulk data; Coupled analysis workflows | Collaboration services; Grid / PKI; Guaranteed bandwidth; Monitoring / test tools |
| NP: CMS Heavy Ion | - | 10 Gbps (2009) | 20 Gbps | Bulk data | Collaboration services; Deadline scheduling; Grid / PKI |
| NP: JLAB | - | 10 Gbps | | Bulk data | Collaboration services; Grid / PKI |
| NP: RHIC | Limited outage duration to avoid analysis pipeline stalls | 6 Gbps | 20 Gbps | Bulk data | Collaboration services; Grid / PKI; Guaranteed bandwidth; Monitoring / test tools |


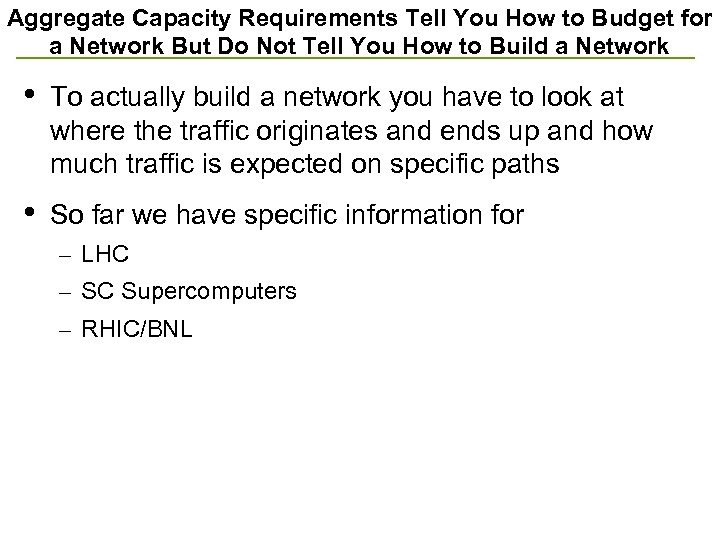
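
The LHC reliability entry above pairs "99.95+%" with "less than 4 hours per year". The conversion between an availability percentage and an annual outage budget is simple arithmetic; the sketch below assumes a non-leap year of 8760 hours, and the function names are illustrative.

```python
HOURS_PER_YEAR = 365 * 24  # 8760, ignoring leap years


def max_downtime_hours(availability_percent):
    """Annual outage budget implied by an availability percentage."""
    return (1 - availability_percent / 100.0) * HOURS_PER_YEAR


def availability_percent(downtime_hours):
    """Availability percentage implied by an annual outage total."""
    return (1 - downtime_hours / HOURS_PER_YEAR) * 100.0
```

Exactly 99.95% allows about 4.38 hours of outage per year, while a 4-hour budget corresponds to roughly 99.954%, which is consistent with the "+" in "99.95+%".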

Aggregate Capacity Requirements Tell You How to Budget for a Network But Do Not Tell You How to Build a Network
• To actually build a network you have to look at where the traffic originates and ends up and how much traffic is expected on specific paths
• So far we have specific information for
  – LHC
  – SC Supercomputers
  – RHIC/BNL


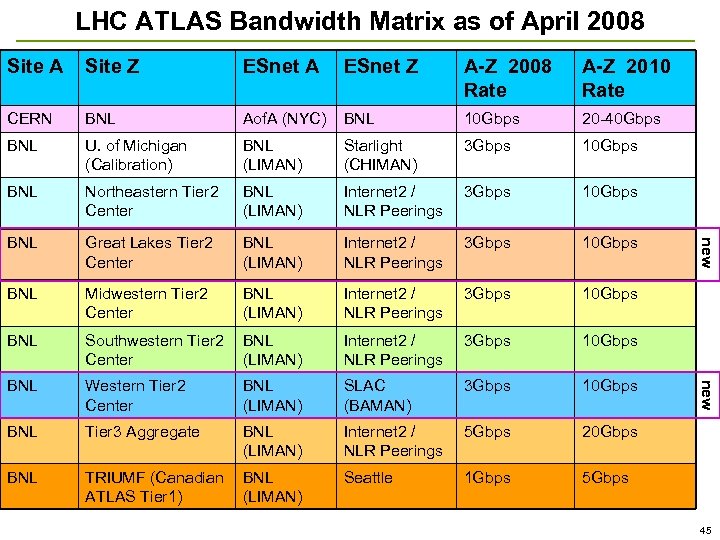

LHC ATLAS Bandwidth Matrix as of April 2008

| Site A | Site Z | ESnet A | ESnet Z | A-Z 2008 Rate | A-Z 2010 Rate |
|---|---|---|---|---|---|
| CERN | BNL | AofA (NYC) | BNL | 10 Gbps | 20-40 Gbps |
| BNL | U. of Michigan (Calibration) | BNL (LIMAN) | Starlight (CHIMAN) | 3 Gbps | 10 Gbps |
| BNL | Northeastern Tier 2 Center | BNL (LIMAN) | Internet2 / NLR Peerings | 3 Gbps | 10 Gbps |
| BNL | Great Lakes Tier 2 Center | BNL (LIMAN) | Internet2 / NLR Peerings | 3 Gbps | 10 Gbps |
| BNL | Midwestern Tier 2 Center | BNL (LIMAN) | Internet2 / NLR Peerings | 3 Gbps | 10 Gbps |
| BNL | Southwestern Tier 2 Center | BNL (LIMAN) | Internet2 / NLR Peerings | 3 Gbps | 10 Gbps |
| BNL | Western Tier 2 Center | BNL (LIMAN) | SLAC (BAMAN) | 3 Gbps | 10 Gbps |
| BNL | Tier 3 Aggregate | BNL (LIMAN) | Internet2 / NLR Peerings | 5 Gbps | 20 Gbps |
| BNL | TRIUMF (Canadian ATLAS Tier 1) | BNL (LIMAN) | Seattle | 1 Gbps | 5 Gbps |


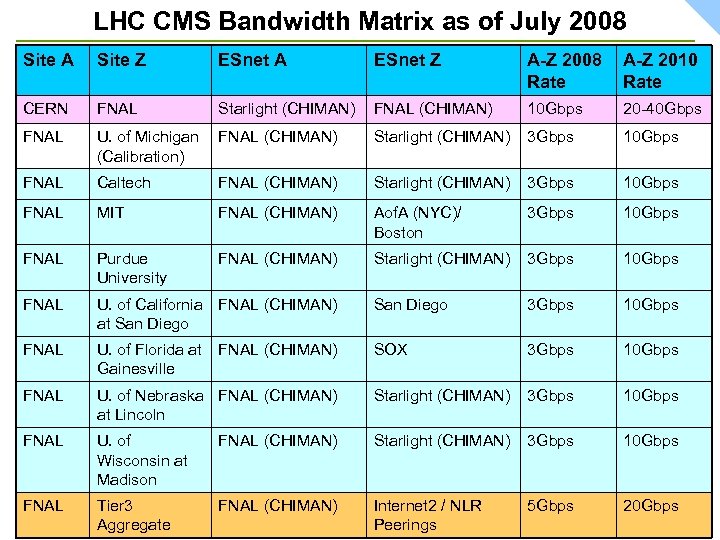
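
A path-specific matrix like the ATLAS one above can be rolled up per site to see how much capacity must terminate there. The sketch below sums the 2008 and 2010 rates for every flow that touches a given site, keeping ranges such as 20-40 Gb/s as (low, high) pairs. The rates are taken from the matrix; the function name and data layout are illustrative, and the sums assume all flows peak at the same time, which is a worst-case assumption rather than something the slides state.

```python
# (site A, site Z, 2008 rate, 2010 rate); rates are (low, high) in Gb/s.
ATLAS_FLOWS = [
    ("CERN", "BNL", (10, 10), (20, 40)),
    ("BNL", "U. of Michigan (Calibration)", (3, 3), (10, 10)),
    ("BNL", "Northeastern Tier 2 Center", (3, 3), (10, 10)),
    ("BNL", "Great Lakes Tier 2 Center", (3, 3), (10, 10)),
    ("BNL", "Midwestern Tier 2 Center", (3, 3), (10, 10)),
    ("BNL", "Southwestern Tier 2 Center", (3, 3), (10, 10)),
    ("BNL", "Western Tier 2 Center", (3, 3), (10, 10)),
    ("BNL", "Tier 3 Aggregate", (5, 5), (20, 20)),
    ("BNL", "TRIUMF (Canadian ATLAS Tier 1)", (1, 1), (5, 5)),
]


def aggregate_at(site, flows):
    """Sum the (low, high) rates of every flow that starts or ends at site."""
    totals = {"2008": [0, 0], "2010": [0, 0]}
    for site_a, site_z, rate_2008, rate_2010 in flows:
        if site in (site_a, site_z):
            for year, (low, high) in (("2008", rate_2008), ("2010", rate_2010)):
                totals[year][0] += low
                totals[year][1] += high
    return {year: tuple(bounds) for year, bounds in totals.items()}
```

For BNL this gives 34 Gb/s in 2008 and 105 to 125 Gb/s in 2010 across all listed paths, which is the kind of per-site figure that path planning has to accommodate.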

LHC CMS Bandwidth Matrix as of July 2008

| Site A | Site Z | ESnet A | ESnet Z | A-Z 2008 Rate | A-Z 2010 Rate |
|---|---|---|---|---|---|
| CERN | FNAL | Starlight (CHIMAN) | FNAL (CHIMAN) | 10 Gbps | 20-40 Gbps |
| FNAL | U. of Michigan (Calibration) | FNAL (CHIMAN) | Starlight (CHIMAN) | 3 Gbps | 10 Gbps |
| FNAL | Caltech | FNAL (CHIMAN) | Starlight (CHIMAN) | 3 Gbps | 10 Gbps |
| FNAL | MIT | FNAL (CHIMAN) | AofA (NYC) / Boston | 3 Gbps | 10 Gbps |
| FNAL | Purdue University | FNAL (CHIMAN) | Starlight (CHIMAN) | 3 Gbps | 10 Gbps |
| FNAL | U. of California at San Diego | FNAL (CHIMAN) | | 3 Gbps | 10 Gbps |
| FNAL | U. of Florida at Gainesville | FNAL (CHIMAN) | SOX | 3 Gbps | 10 Gbps |
| FNAL | U. of Nebraska at Lincoln | FNAL (CHIMAN) | Starlight (CHIMAN) | 3 Gbps | 10 Gbps |
| FNAL | U. of Wisconsin at Madison | FNAL (CHIMAN) | Starlight (CHIMAN) | 3 Gbps | 10 Gbps |
| FNAL | Tier 3 Aggregate | FNAL (CHIMAN) | Internet2 / NLR Peerings | 5 Gbps | 20 Gbps |


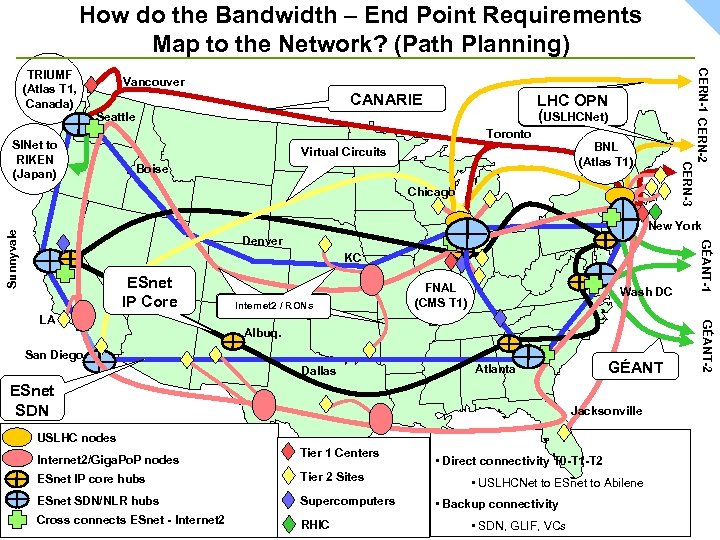

How do the Bandwidth – End Point Requirements Map to the Network? (Path Planning)
[Map of the ESnet IP core and ESnet SDN showing virtual circuits. Labeled sites and networks: CERN-1, CERN-2, CERN-3; LHC OPN (USLHCNet); TRIUMF (ATLAS T1, Canada); CANARIE; SINet to RIKEN (Japan); BNL (ATLAS T1); FNAL (CMS T1); GÉANT, GÉANT-1, GÉANT-2; Internet2 / RONs. Hubs shown: Vancouver, Seattle, Toronto, Boise, Chicago, Denver, KC, Wash. DC, Albuq., San Diego, Dallas, Atlanta, Jacksonville, LA, Sunnyvale, New York. Legend: USLHC nodes; Internet2/GigaPoP nodes; Tier 1 centers; ESnet IP core hubs; Tier 2 sites; ESnet SDN/NLR hubs; supercomputers; ESnet - Internet2 cross connects; RHIC.]
• Direct connectivity T0-T1-T2
• USLHCNet to ESnet to Abilene
• Backup connectivity
• SDN, GLIF, VCs


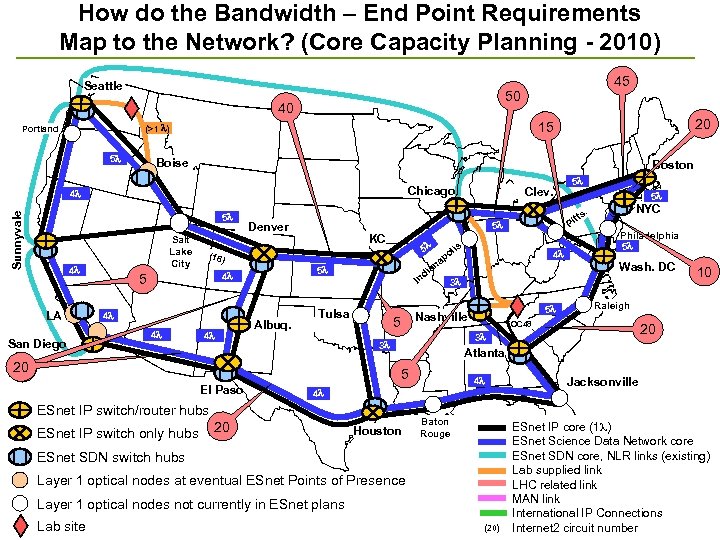

How do the Bandwidth – End Point Requirements Map to the Network? (Core Capacity Planning - 2010)

[Map: planned 2010 ESnet core capacity, with per-link capacities and Internet2 circuit numbers marked on the links between hubs (Seattle, Portland, Boise, Sunnyvale, Salt Lake City, LA, San Diego, Denver, Albuq., El Paso, KC, Tulsa, Houston, Baton Rouge, Chicago, Indianapolis, Nashville, Atlanta, Jacksonville, Clev., Pitts., Wash. DC, Raleigh, Philadelphia, NYC, Boston). Legend: ESnet IP switch/router hubs; ESnet IP switch only hubs; ESnet SDN switch hubs; Layer 1 optical nodes at eventual ESnet Points of Presence; Layer 1 optical nodes not currently in ESnet plans; Lab site; ESnet IP core; ESnet Science Data Network core; ESnet SDN core, NLR links (existing); Lab supplied link; LHC related link; MAN link; International IP Connections; Internet2 circuit number.]

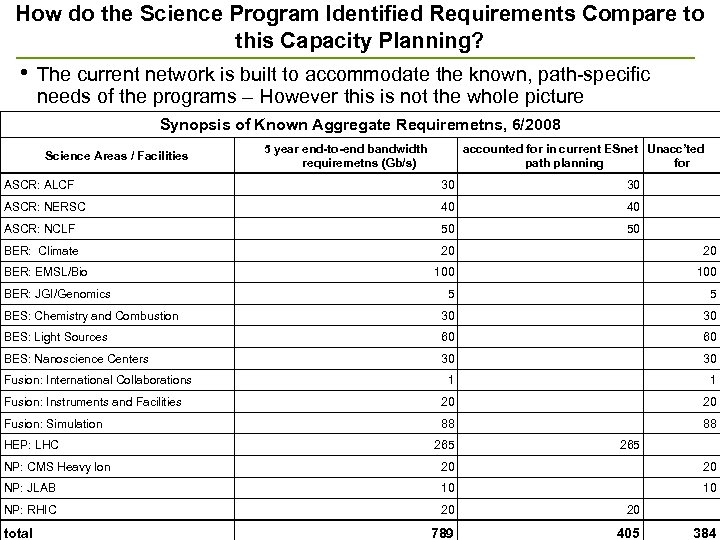

How do the Science Program Identified Requirements Compare to this Capacity Planning?

• The current network is built to accommodate the known, path-specific needs of the programs
  – However this is not the whole picture

Synopsis of Known Aggregate Requirements, 6/2008

| Science Areas / Facilities | 5 year end-to-end bandwidth requirements (Gb/s) |
|---|---|
| ASCR: ALCF | 30 |
| ASCR: NERSC | 40 |
| ASCR: NCLF | 50 |
| BER: Climate | 20 |
| BER: EMSL/Bio | 100 |
| BER: JGI/Genomics | 5 |
| BES: Chemistry and Combustion | 30 |
| BES: Light Sources | 60 |
| BES: Nanoscience Centers | 30 |
| Fusion: International Collaborations | 1 |
| Fusion: Instruments and Facilities | 20 |
| Fusion: Simulation | 88 |
| HEP: LHC | 265 |
| NP: CMS Heavy Ion | 20 |
| NP: JLAB | 10 |
| NP: RHIC | 20 |
| Total | 789 |

Of the 789 Gb/s total, 405 Gb/s is accounted for in current ESnet path planning and 384 Gb/s is unaccounted for.

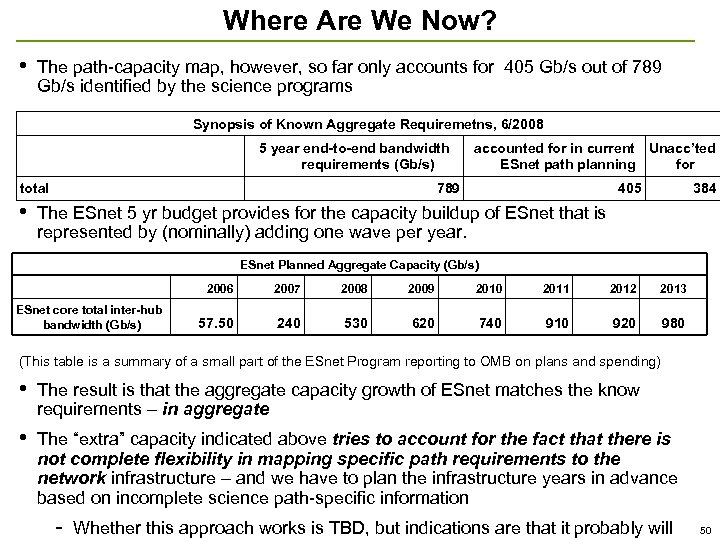
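The totals in the requirements synopsis can be checked with a few lines of arithmetic. This is a sketch rather than anything from the talk itself: the dictionary name is illustrative, and the pairing of the values 100, 5, 1 and 265 with BER: EMSL/Bio, BER: JGI/Genomics, Fusion: International Collaborations and HEP: LHC is an assumption based on the order in which they appear on the slide. That pairing is consistent with the stated 789 Gb/s total.

```python
# 5 year end-to-end bandwidth requirements in Gb/s, per the 6/2008 synopsis.
# Facility names are copied as they appear on the slide. The assignment of
# 100, 5, 1 and 265 to their rows is an assumption (see the text above).
REQUIREMENTS_GBPS = {
    "ASCR: ALCF": 30,
    "ASCR: NERSC": 40,
    "ASCR: NCLF": 50,
    "BER: Climate": 20,
    "BER: EMSL/Bio": 100,
    "BER: JGI/Genomics": 5,
    "BES: Chemistry and Combustion": 30,
    "BES: Light Sources": 60,
    "BES: Nanoscience Centers": 30,
    "Fusion: International Collaborations": 1,
    "Fusion: Instruments and Facilities": 20,
    "Fusion: Simulation": 88,
    "HEP: LHC": 265,
    "NP: CMS Heavy Ion": 20,
    "NP: JLAB": 10,
    "NP: RHIC": 20,
}

# Figure stated on the slide for what current path planning covers.
ACCOUNTED_GBPS = 405

total_gbps = sum(REQUIREMENTS_GBPS.values())
unaccounted_gbps = total_gbps - ACCOUNTED_GBPS
accounted_fraction = ACCOUNTED_GBPS / total_gbps

if __name__ == "__main__":
    print(f"total requirement: {total_gbps} Gb/s")
    print(f"unaccounted for:   {unaccounted_gbps} Gb/s")
    print(f"accounted share:   {accounted_fraction:.0%}")
```

The sum reproduces the slide's 789 Gb/s, and subtracting the 405 Gb/s already planned for gives the 384 Gb/s shown as unaccounted for, so roughly half of the identified requirement has a planned path.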

Where Are We Now?

• The path-capacity map, however, so far accounts for only 405 Gb/s of the 789 Gb/s identified by the science programs

Synopsis of Known Aggregate Requirements, 6/2008

| | 5 year end-to-end bandwidth requirements (Gb/s) | Accounted for in current ESnet path planning | Unaccounted for |
|---|---|---|---|
| Total | 789 | 405 | 384 |

• The ESnet 5 yr budget provides for the capacity buildup of ESnet that is represented by (nominally) adding one wave per year.

ESnet Planned Aggregate Capacity (Gb/s)

| | 2006 | 2007 | 2008 | 2009 | 2010 | 2011 | 2012 | 2013 |
|---|---|---|---|---|---|---|---|---|
| ESnet core total inter-hub bandwidth (Gb/s) | 57.50 | 240 | 530 | 620 | 740 | 910 | 920 | 980 |

(This table is a summary of a small part of the ESnet Program reporting to OMB on plans and spending)

• The result is that the aggregate capacity growth of ESnet matches the known requirements – in aggregate
• The “extra” capacity indicated above tries to account for the fact that there is not complete flexibility in mapping specific path requirements to the network infrastructure – and we have to plan the infrastructure years in advance based on incomplete science path-specific information
  - Whether this approach works is TBD, but indications are that it probably will

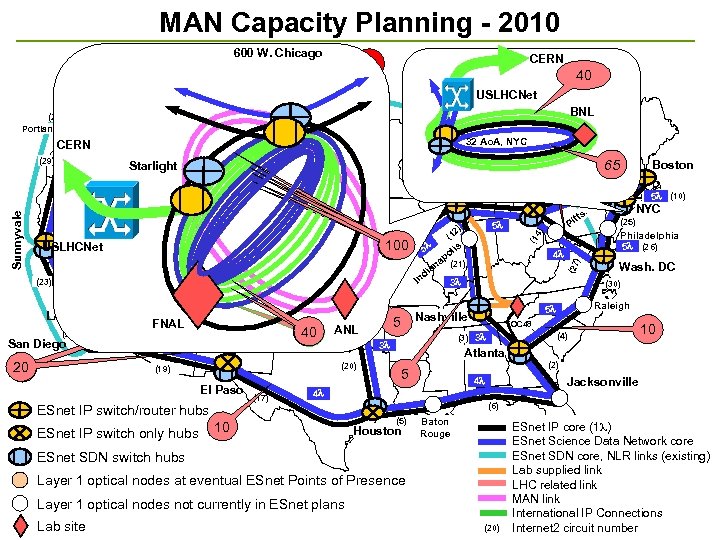
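The comparison between planned aggregate capacity and the 789 Gb/s aggregate requirement can be sketched as follows. This is an illustration only: the function names are hypothetical, and comparing summed inter-hub bandwidth directly against summed end-to-end requirements is a deliberate simplification that says nothing about whether capacity sits on the paths that need it.

```python
# Planned ESnet core total inter-hub bandwidth in Gb/s, by year, from the slide.
PLANNED_CAPACITY_GBPS = {
    2006: 57.5,
    2007: 240,
    2008: 530,
    2009: 620,
    2010: 740,
    2011: 910,
    2012: 920,
    2013: 980,
}

# Aggregate 5 year requirement identified by the science programs (Gb/s).
REQUIRED_GBPS = 789


def first_year_meeting(capacity_by_year, required):
    """Return the first year whose planned capacity is at least `required`."""
    for year in sorted(capacity_by_year):
        if capacity_by_year[year] >= required:
            return year
    return None  # the plan never reaches the requirement


def headroom(capacity_by_year, required, year):
    """Planned capacity minus requirement for a given year (Gb/s)."""
    return capacity_by_year[year] - required


if __name__ == "__main__":
    print("first year at or above requirement:",
          first_year_meeting(PLANNED_CAPACITY_GBPS, REQUIRED_GBPS))
    print("headroom in 2013 (Gb/s):",
          headroom(PLANNED_CAPACITY_GBPS, REQUIRED_GBPS, 2013))
```

With the slide's figures the planned aggregate first exceeds 789 Gb/s in 2011 and leaves 191 Gb/s of headroom in 2013, which corresponds to the “extra” capacity the slide mentions.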

MAN Capacity Planning - 2010

[Map: planned 2010 capacities for the metropolitan area networks and site connections, overlaid on the ESnet core map with per-link capacities and Internet2 circuit numbers. Labeled sites and connections: 600 W. Chicago, Starlight, FNAL, ANL, BNL, AoA (NYC), CERN, USLHCNet. Legend: ESnet IP switch/router hubs; ESnet IP switch only hubs; ESnet SDN switch hubs; Layer 1 optical nodes at eventual ESnet Points of Presence; Layer 1 optical nodes not currently in ESnet plans; Lab site; ESnet IP core; ESnet Science Data Network core; ESnet SDN core, NLR links (existing); Lab supplied link; LHC related link; MAN link; International IP Connections; Internet2 circuit number.]

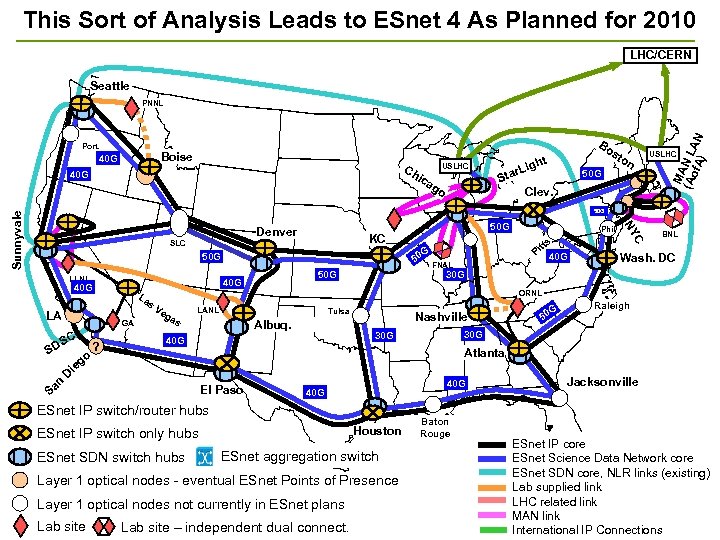

This Sort of Analysis Leads to ESnet4 As Planned for 2010

[Map: ESnet4 as planned for 2010, with core links labeled from 30G to 50G. Labeled sites and connections: LHC/CERN, USLHC, StarLight, MAN LAN (AofA), PNNL, LLNL, GA, FNAL, BNL, ORNL, LANL. Hubs shown: Seattle, Portland, Boise, Sunnyvale, SLC, LA, San Diego, Las Vegas, Denver, Albuq., El Paso, KC, Tulsa, Houston, Baton Rouge, Chicago, Nashville, Atlanta, Jacksonville, Clev., Pitts., Wash. DC, Raleigh, Phil., NYC, Boston. Legend: ESnet IP switch/router hubs; ESnet IP switch only hubs; ESnet SDN switch hubs; ESnet aggregation switch; Layer 1 optical nodes - eventual ESnet Points of Presence; Layer 1 optical nodes not currently in ESnet plans; Lab site; Lab site – independent dual connect.; ESnet IP core; ESnet Science Data Network core; ESnet SDN core, NLR links (existing); Lab supplied link; LHC related link; MAN link; International IP Connections.]

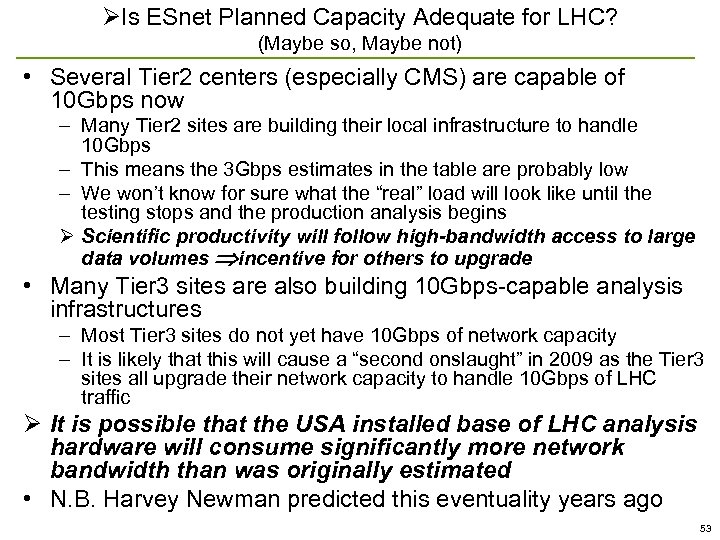

Is ESnet Planned Capacity Adequate for LHC? (Maybe so, Maybe not)

• Several Tier 2 centers (especially CMS) are capable of 10 Gbps now
  – Many Tier 2 sites are building their local infrastructure to handle 10 Gbps
  – This means the 3 Gbps estimates in the table are probably low
  – We won’t know for sure what the “real” load will look like until the testing stops and the production analysis begins
  ➢ Scientific productivity will follow high-bandwidth access to large data volumes → incentive for others to upgrade
• Many Tier 3 sites are also building 10 Gbps-capable analysis infrastructures
  – Most Tier 3 sites do not yet have 10 Gbps of network capacity
  – It is likely that this will cause a “second onslaught” in 2009 as the Tier 3 sites all upgrade their network capacity to handle 10 Gbps of LHC traffic
  ➢ It is possible that the USA installed base of LHC analysis hardware will consume significantly more network bandwidth than was originally estimated
• N.B. Harvey Newman predicted this eventuality years ago

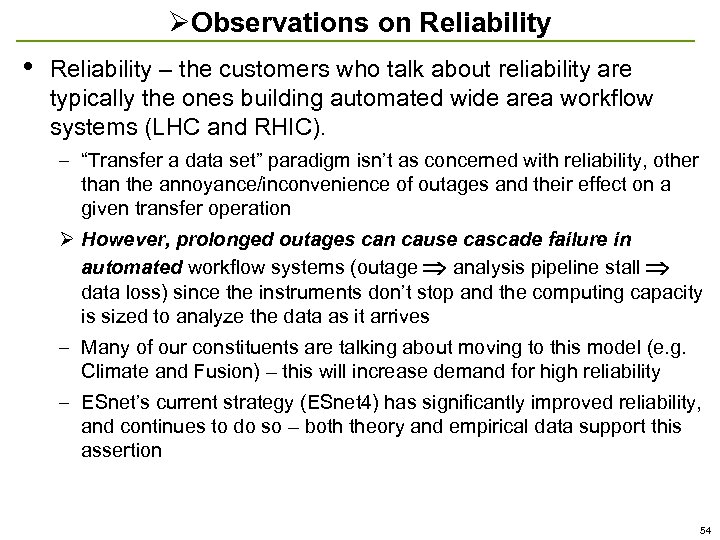

Observations on Reliability

• Reliability – the customers who talk about reliability are typically the ones building automated wide area workflow systems (LHC and RHIC).
  – The “transfer a data set” paradigm isn’t as concerned with reliability, other than the annoyance/inconvenience of outages and their effect on a given transfer operation
  ➢ However, prolonged outages can cause cascade failure in automated workflow systems (outage → analysis pipeline stall → data loss) since the instruments don’t stop and the computing capacity is sized to analyze the data as it arrives
  – Many of our constituents are talking about moving to this model (e.g. Climate and Fusion) – this will increase demand for high reliability
  – ESnet’s current strategy (ESnet4) has significantly improved reliability, and continues to do so – both theory and empirical data support this assertion
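The cascade described on this slide (outage, then pipeline stall, then data loss) can be illustrated with a very small buffer model. Everything in it is an assumption made for illustration: the `outage_impact` function, the single fixed-size buffer at the instrument site, and the constant rates are hypothetical and do not describe any actual LHC or RHIC workflow.

```python
import math


def outage_impact(ingest_gbps, drain_gbps, buffer_gb, outage_s):
    """Estimate the effect of a network outage on a streaming workflow.

    Hypothetical model: the instrument writes at `ingest_gbps` into a local
    buffer of `buffer_gb` gigabytes; the network drains at `drain_gbps` when
    it is up and at zero during the outage. Rates are in gigabits per second.

    Returns (backlog_gb, lost_gb, catch_up_s):
      backlog_gb  data held in the buffer when the outage ends
      lost_gb     data dropped because the buffer was already full
      catch_up_s  time to clear the backlog afterwards, or infinity if the
                  drain rate has no margin over the ingest rate
    """
    ingest_gb_per_s = ingest_gbps / 8.0   # gigabits -> gigabytes
    drain_gb_per_s = drain_gbps / 8.0

    produced_gb = ingest_gb_per_s * outage_s
    backlog_gb = min(produced_gb, buffer_gb)
    lost_gb = max(0.0, produced_gb - buffer_gb)

    # Only the margin above the arrival rate is available for catching up.
    spare_gb_per_s = drain_gb_per_s - ingest_gb_per_s
    catch_up_s = backlog_gb / spare_gb_per_s if spare_gb_per_s > 0 else math.inf
    return backlog_gb, lost_gb, catch_up_s


if __name__ == "__main__":
    # Illustrative numbers only: 8 Gbps instrument, 10 Gbps path,
    # 3600 GB of buffer, 2 hour outage.
    print(outage_impact(8, 10, 3600, 7200))
```

The point of the model is the last return value: when capacity is sized to match the arrival rate, the spare margin is zero and a backlog never clears, which is why prolonged outages matter more for streaming workflows than for one-off data set transfers.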

IIa. Re-evaluating the Strategy

• The current strategy (that led to the ESnet4, 2012 plans) was developed primarily as a result of the information gathered in the 2002 and 2003 network workshops, and their updates in 2005-6 (including LHC, climate, RHIC, SNS, Fusion, the supercomputers, and a few others) [workshops]
• So far the more formal requirements workshops have largely reaffirmed the ESnet4 strategy developed earlier
• However – is this the whole story?

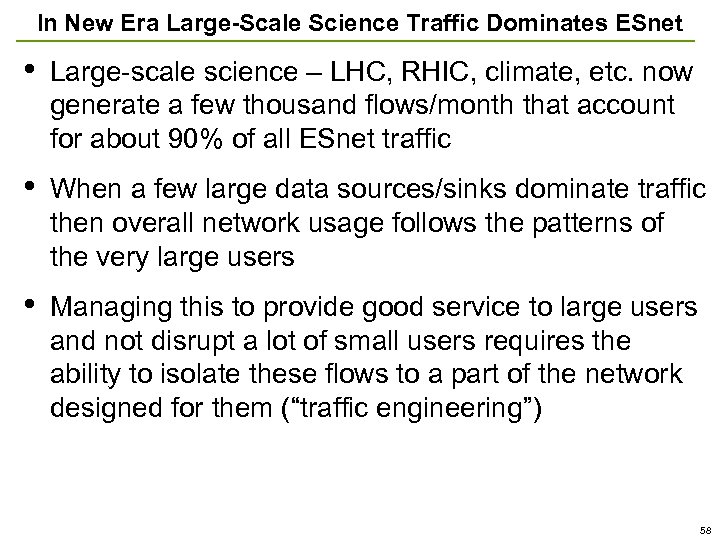

“Philosophical” Issues for the Future Network

• One can qualitatively divide the networking issues into what I will call “old era” and “new era”
• In the old era (to about mid-2005) data from scientific instruments did grow exponentially, but the bandwidths actually used did not really tax network technology
• In the old era there were few, if any, dominant traffic flows – all the traffic could be treated together as a “well behaved” aggregate.

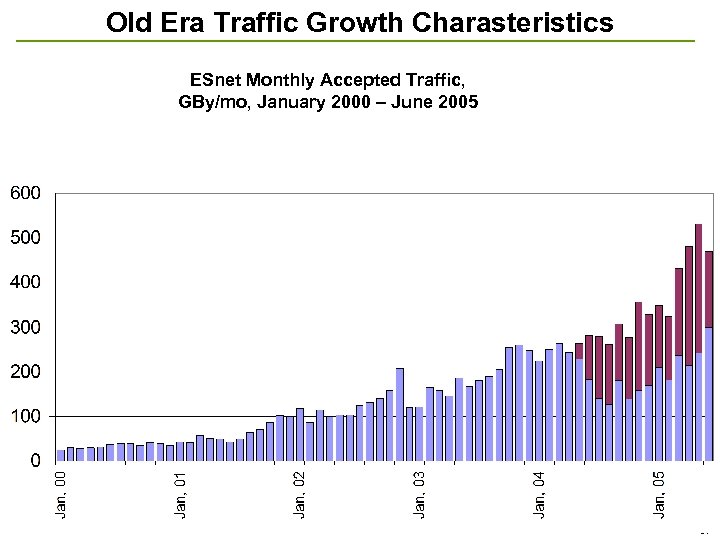

Old Era Traffic Growth Characteristics

ESnet Monthly Accepted Traffic, GBy/mo, January 2000 – June 2005

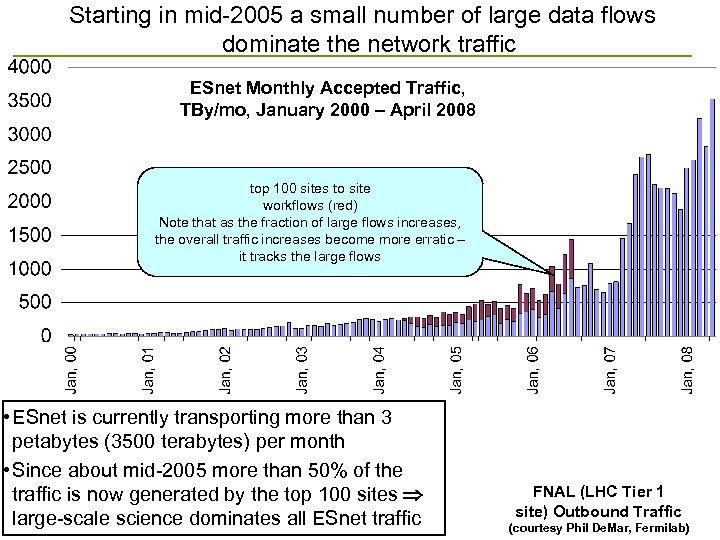

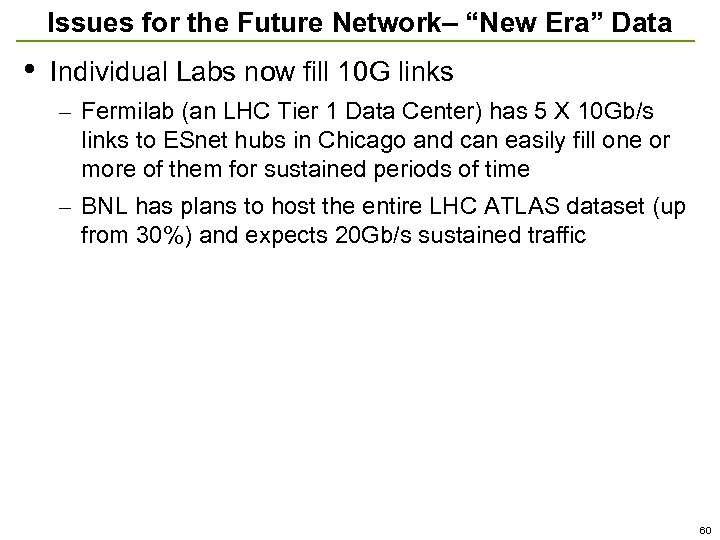

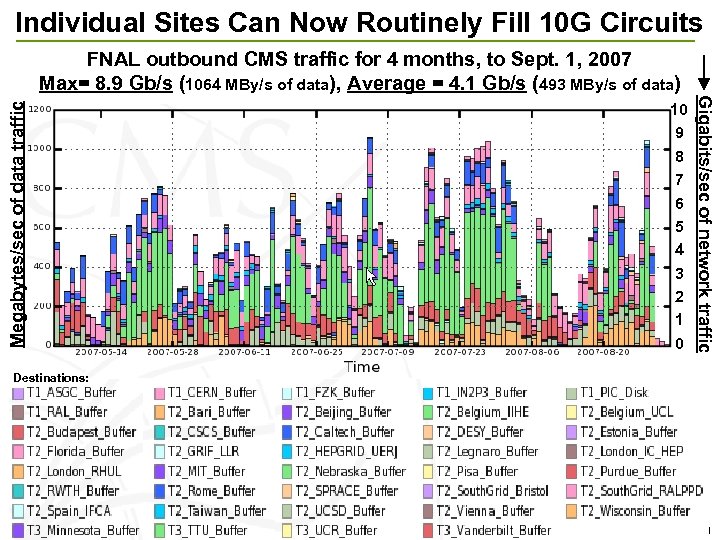

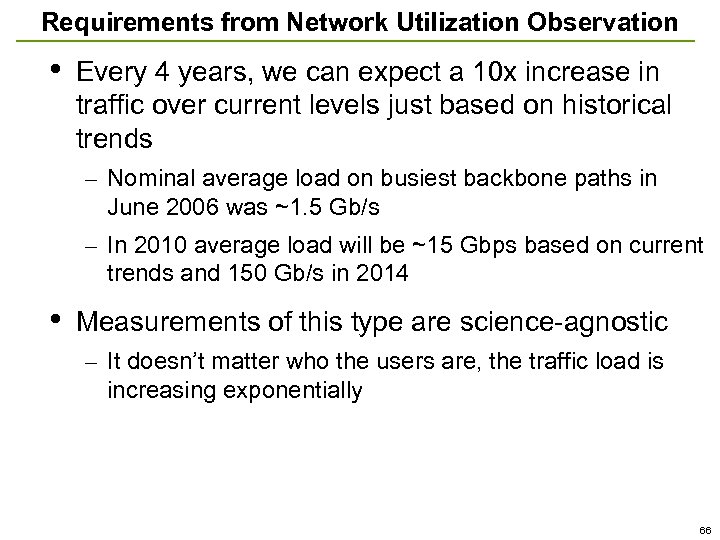

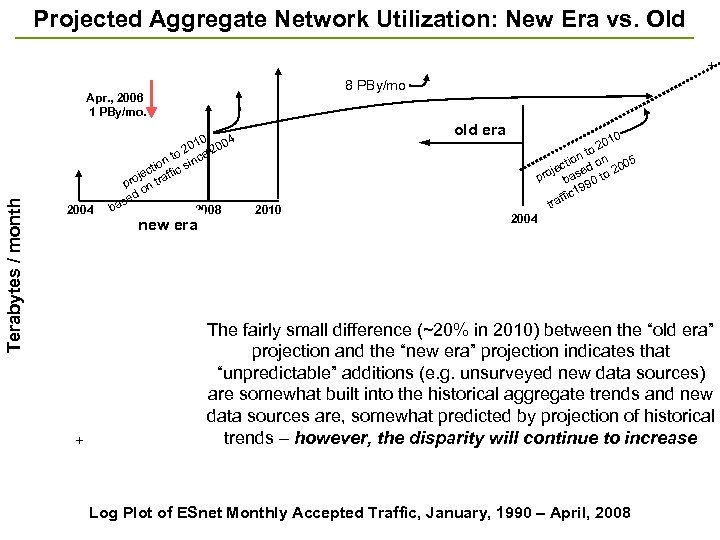

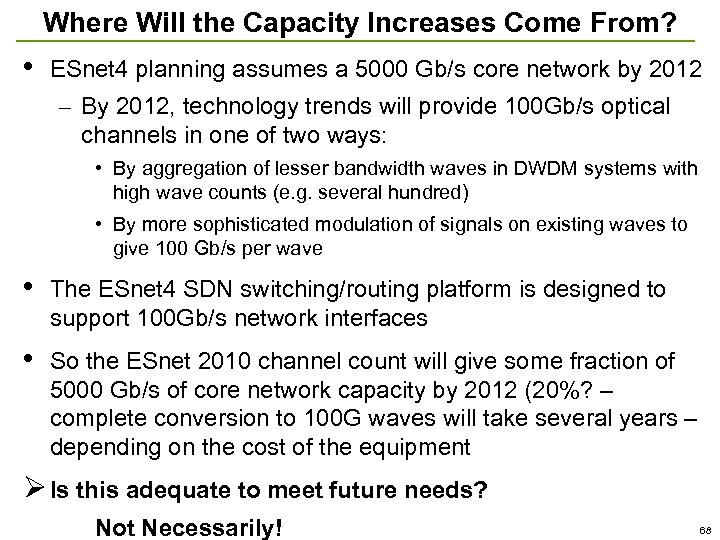

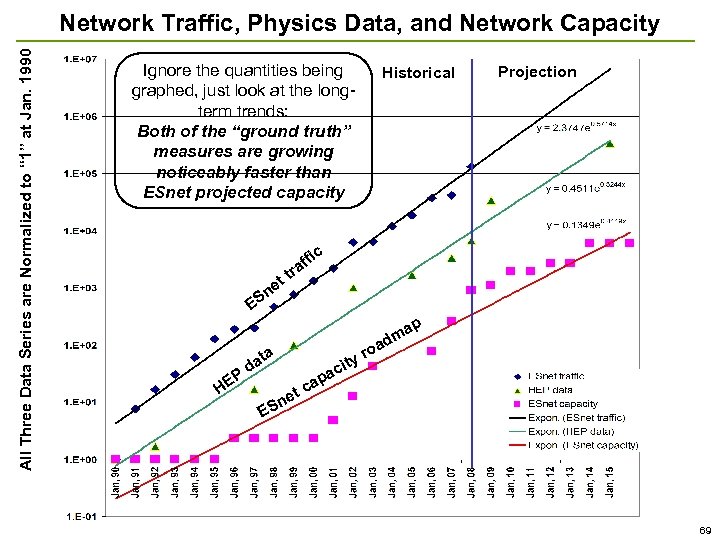

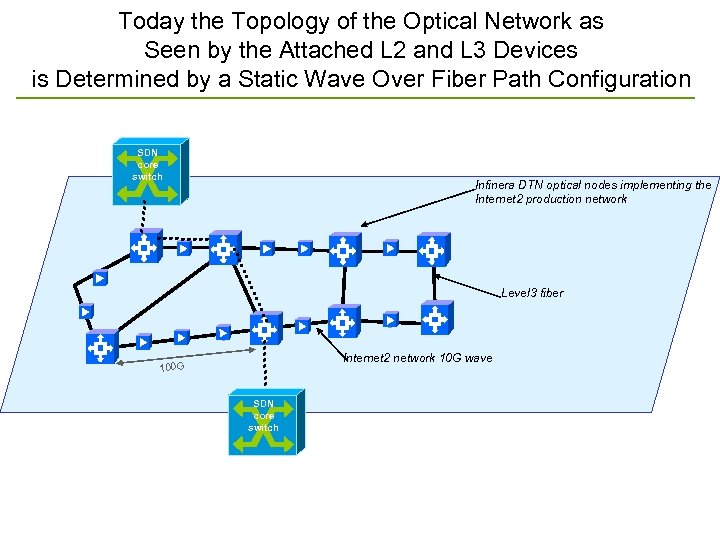

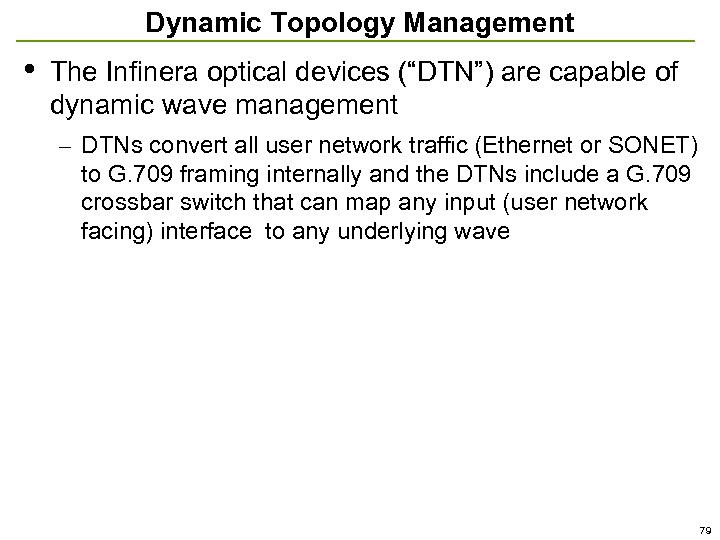

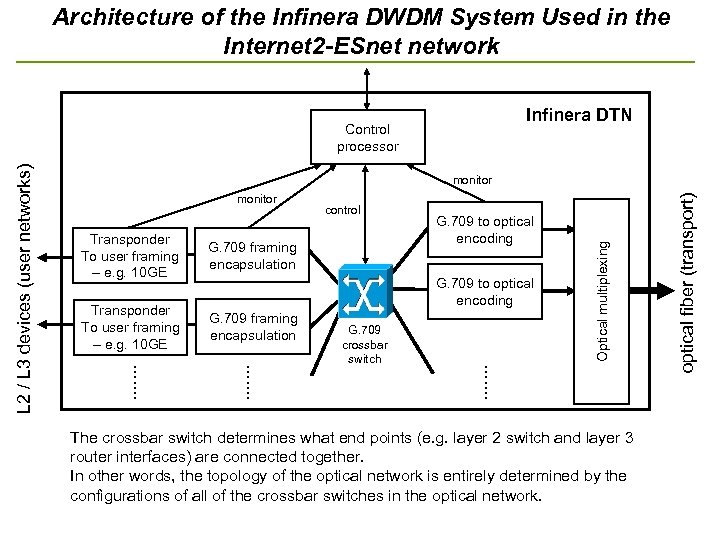

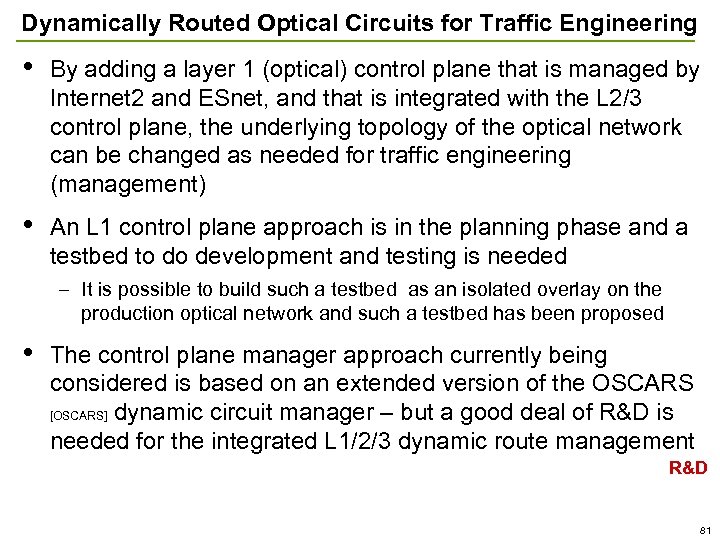

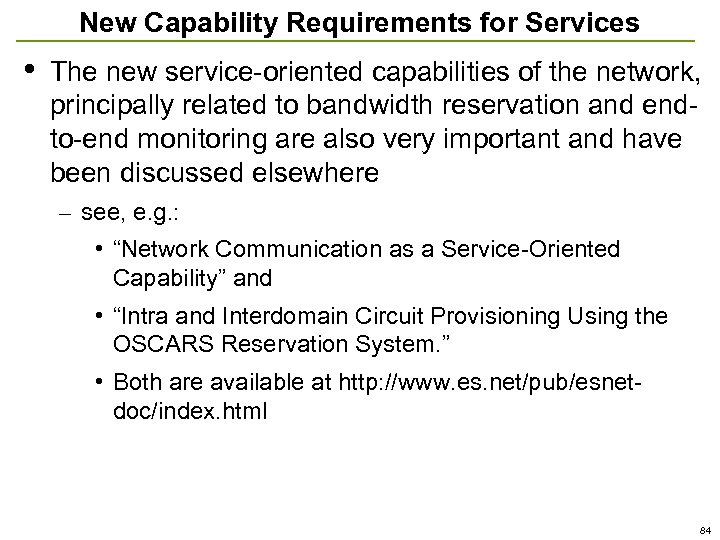

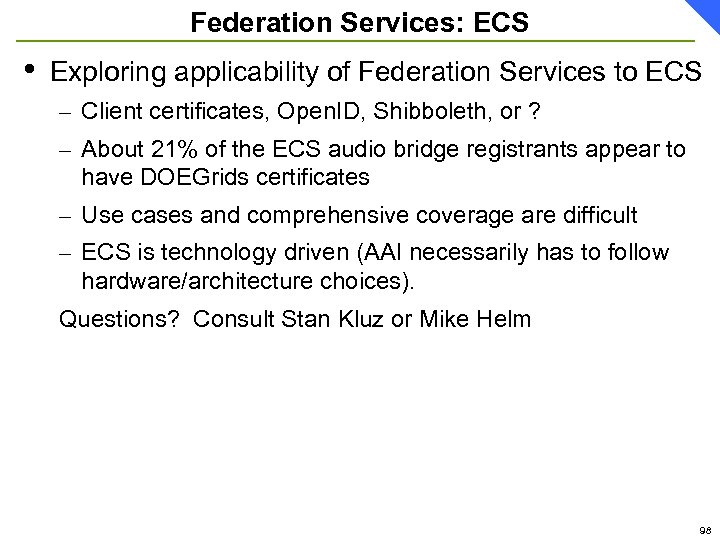

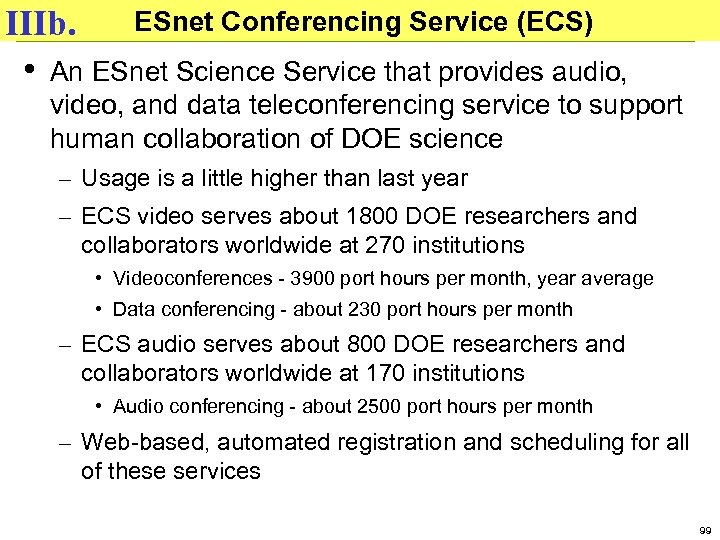

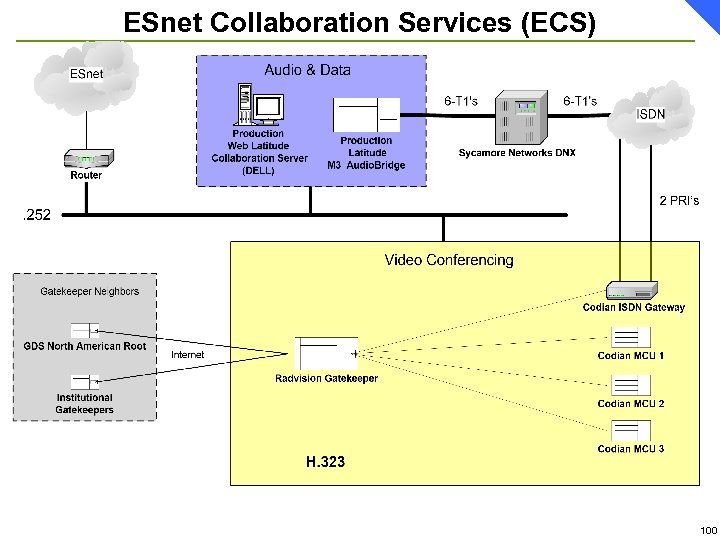

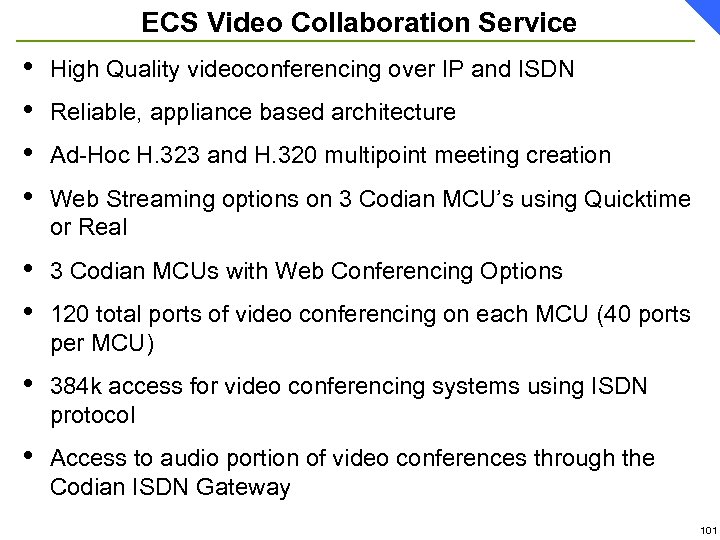
