- Number of slides: 25

Empirical Evaluation of Pronoun Resolution and Clausal Structure
Joel Tetreault and James Allen
University of Rochester, Department of Computer Science

RST and pronoun resolution

- Previous work suggests that breaking utterances apart into clauses (Kameyama 1998), or assigning a hierarchical structure (Grosz and Sidner, 1986; Webber 1988), can aid in the resolution of pronouns:
  1. Make search more efficient (fewer entities to consider)
  2. Make search more successful (block competing antecedents)
- Empirical work has focused on using segmentation to limit the accessibility space of antecedents
- Test claim by performing an automated study on a corpus (1241 sentence subsection of Penn Treebank; 454 3rd person pronouns)

Rhetorical Structure Theory

- A way of organizing and describing natural text (Mann and Thompson, 1988)
- It identifies a hierarchical structure
- Describes binary relations between text parts

Experiment

- Create coref corpus that includes PT syntactic trees and RST information
- Run pronoun algorithms over this merged data set to determine baseline score
  - LRC (Tetreault, 1999)
  - S-list (Strube, 1998)
  - BFP (Brennan et al., 1987)
- Develop algorithms that use clausal information to compare with baseline

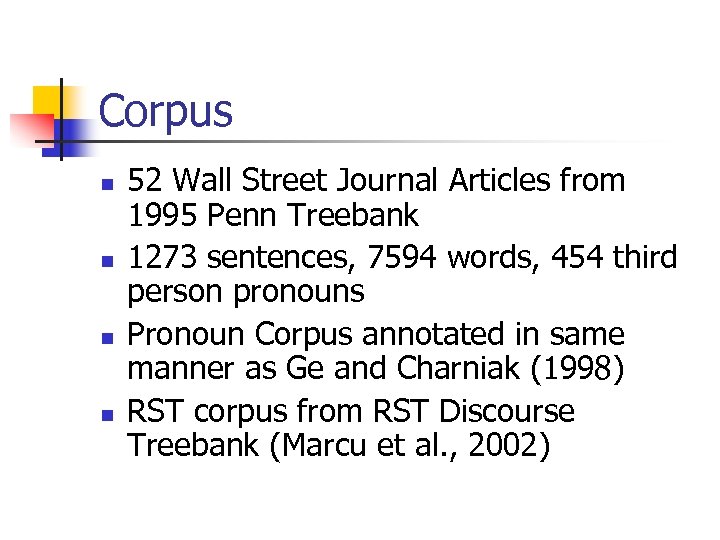

Corpus

- 52 Wall Street Journal articles from 1995 Penn Treebank
  - 1273 sentences, 7594 words, 454 third person pronouns
- Pronoun Corpus annotated in same manner as Ge and Charniak (1998)
- RST corpus from RST Discourse Treebank (Marcu et al., 2002)

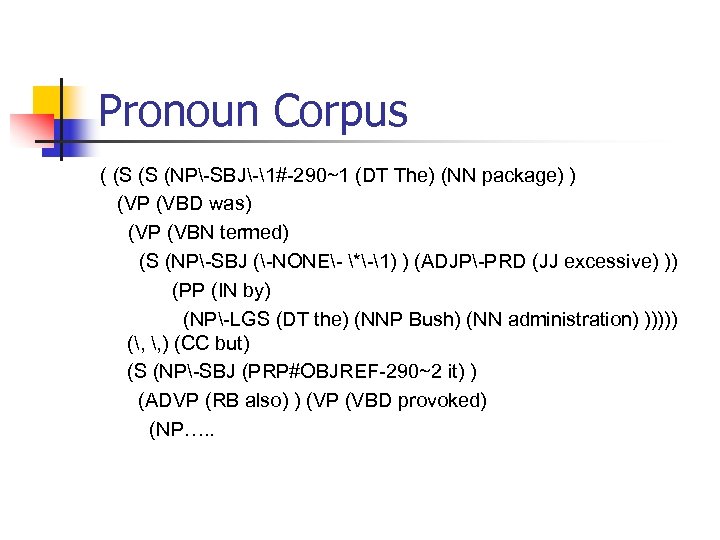

Pronoun Corpus

( (S (S (NP-SBJ-1#-290~1 (DT The) (NN package))
        (VP (VBD was)
            (VP (VBN termed)
                (S (NP-SBJ (-NONE- *-1))
                   (ADJP-PRD (JJ excessive)))
                (PP (IN by)
                    (NP-LGS (DT the) (NNP Bush) (NN administration))))))
     (, ,)
     (CC but)
     (S (NP-SBJ (PRP#OBJREF-290~2 it))
        (ADVP (RB also))
        (VP (VBD provoked)
            (NP…..

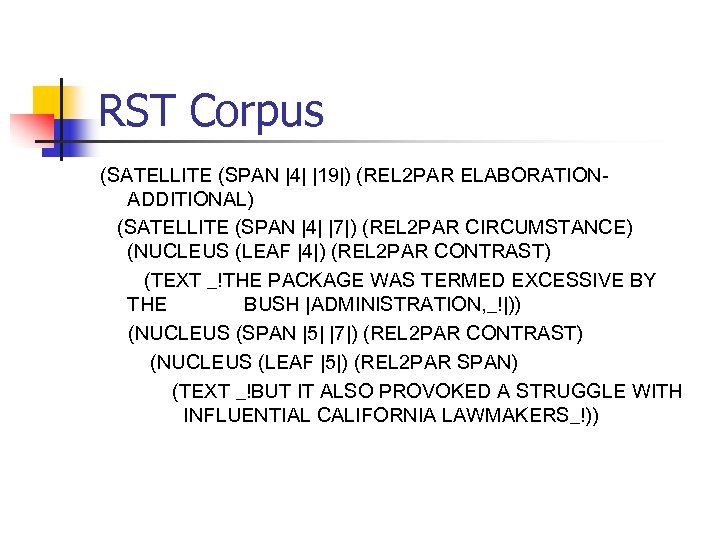
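The coreference tags in the sample above can be pulled out of the bracketed tree with a short script. This is a minimal sketch, not the authors' tooling: it assumes each tag has the shape `#`, an optional label such as `OBJREF`, `-`, an entity id, `~`, and a mention index, which is only a reading of the one sample shown. The names `COREF_TAG` and `coref_mentions` are hypothetical.

```python
import re

# Assumed tag shape, inferred from the sample only:
#   '#' + optional label + '-' + entity id + '~' + mention index
COREF_TAG = re.compile(r"#(?P<label>[A-Z]*)-(?P<entity>\d+)~(?P<mention>\d+)")

# A node label is everything between '(' and the next space or parenthesis.
NODE_LABEL = re.compile(r"\(([^\s()]+)")


def coref_mentions(tree_text):
    """Return (syntactic_label, entity_id, mention_index) for each tagged node."""
    mentions = []
    for node in NODE_LABEL.finditer(tree_text):
        label = node.group(1)
        tag = COREF_TAG.search(label)
        if tag:
            syntactic = label[: tag.start()]  # part of the label before '#'
            mentions.append((syntactic, int(tag["entity"]), int(tag["mention"])))
    return mentions


sample = (
    "(S (NP-SBJ-1#-290~1 (DT The) (NN package)) "
    "(S (NP-SBJ (PRP#OBJREF-290~2 it))))"
)
print(coref_mentions(sample))
```

Under this reading, "The package" and "it" share entity id 290, so the pronoun's annotated antecedent is the subject noun phrase.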

RST Corpus

(SATELLITE (SPAN |4| |19|) (REL2PAR ELABORATION-ADDITIONAL)
  (SATELLITE (SPAN |4| |7|) (REL2PAR CIRCUMSTANCE)
    (NUCLEUS (LEAF |4|) (REL2PAR CONTRAST)
      (TEXT _!THE PACKAGE WAS TERMED EXCESSIVE BY THE BUSH |ADMINISTRATION, _!|))
    (NUCLEUS (SPAN |5| |7|) (REL2PAR CONTRAST)
      (NUCLEUS (LEAF |5|) (REL2PAR SPAN)
        (TEXT _!BUT IT ALSO PROVOKED A STRUGGLE WITH INFLUENTIAL CALIFORNIA LAWMAKERS_!))

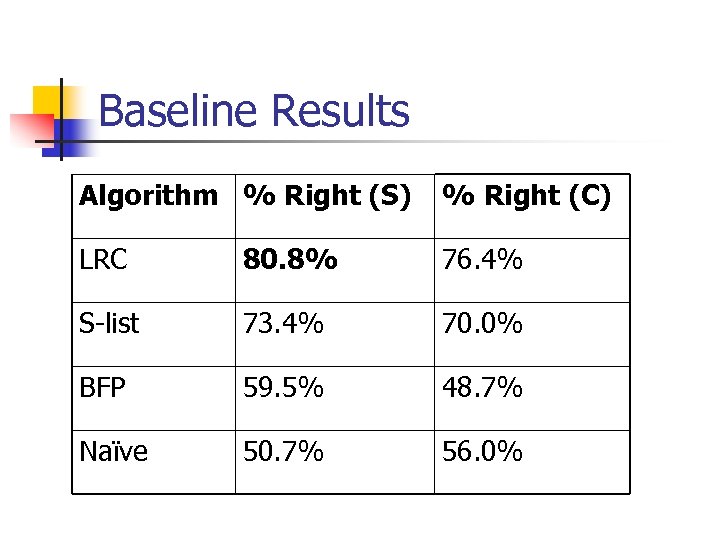

Baseline Results

| Algorithm | % Right (S) | % Right (C) |
| --- | --- | --- |
| LRC | 80.8% | 76.4% |
| S-list | 73.4% | 70.0% |
| BFP | 59.5% | 48.7% |
| Naïve | 50.7% | 56.0% |

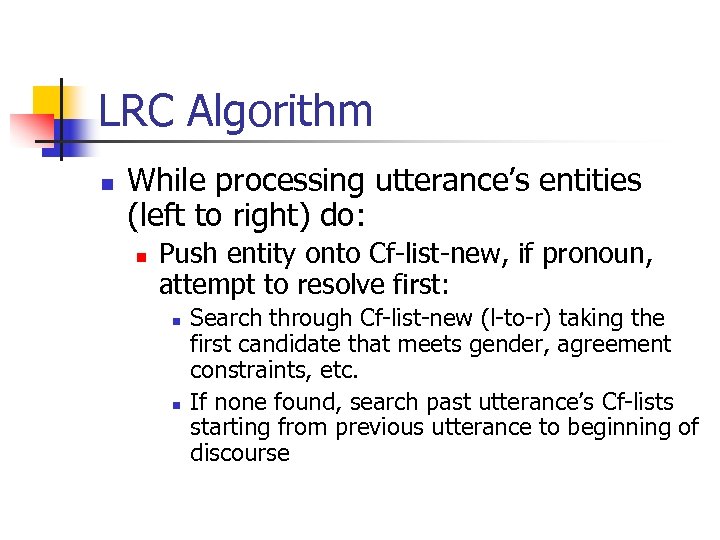

LRC Algorithm

- While processing utterance's entities (left to right) do:
  - Push entity onto Cf-list-new; if pronoun, attempt to resolve first:
    - Search through Cf-list-new (l-to-r), taking the first candidate that meets gender, agreement constraints, etc.
    - If none found, search past utterances' Cf-lists, starting from previous utterance to beginning of discourse

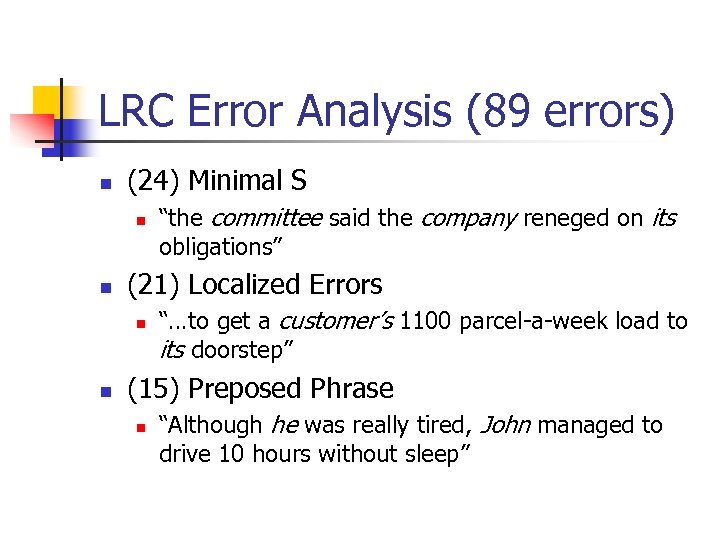
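The LRC steps above can be sketched as follows. This is an illustrative simplification, not the published implementation: the `Entity` class, the `agrees` filter (gender and number only), and the assumption that past Cf-lists are also scanned left to right are all choices made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Entity:
    text: str
    gender: str            # hypothetical coding: "m", "f", "n"
    number: str            # hypothetical coding: "sg", "pl"
    is_pronoun: bool = False


def agrees(pronoun, candidate):
    """Simplified constraint check: gender and number agreement only."""
    return pronoun.gender == candidate.gender and pronoun.number == candidate.number


def first_match(pronoun, cf_list):
    """Scan one Cf-list left to right and return the first agreeing entity."""
    for candidate in cf_list:
        if agrees(pronoun, candidate):
            return candidate
    return None


def lrc_resolve(utterances):
    """Return (pronoun, antecedent) text pairs in order of processing."""
    history = []   # Cf-lists of earlier utterances, oldest first
    links = []
    for utterance in utterances:
        cf_new = []
        for entity in utterance:
            if entity.is_pronoun:
                # 1. Look inside the current utterance first.
                antecedent = first_match(entity, cf_new)
                # 2. Otherwise walk back through earlier utterances.
                if antecedent is None:
                    for cf_old in reversed(history):
                        antecedent = first_match(entity, cf_old)
                        if antecedent is not None:
                            break
                links.append((entity.text, antecedent.text if antecedent else None))
            cf_new.append(entity)   # push only after the resolution attempt
        history.append(cf_new)
    return links


utterances = [
    [Entity("The package", "n", "sg"), Entity("the Bush administration", "n", "sg")],
    [Entity("it", "n", "sg", is_pronoun=True), Entity("a struggle", "n", "sg")],
]
print(lrc_resolve(utterances))
```

Because the pronoun is resolved before it is pushed, "it" finds nothing in its own utterance and falls back to the previous Cf-list, where "The package" is the first agreeing entity.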

LRC Error Analysis (89 errors)

- (24) Minimal S
  - “the committee said the company reneged on its obligations”
- (21) Localized Errors
  - “…to get a customer’s 1100 parcel-a-week load to its doorstep”
- (15) Preposed Phrase
  - “Although he was really tired, John managed to drive 10 hours without sleep”

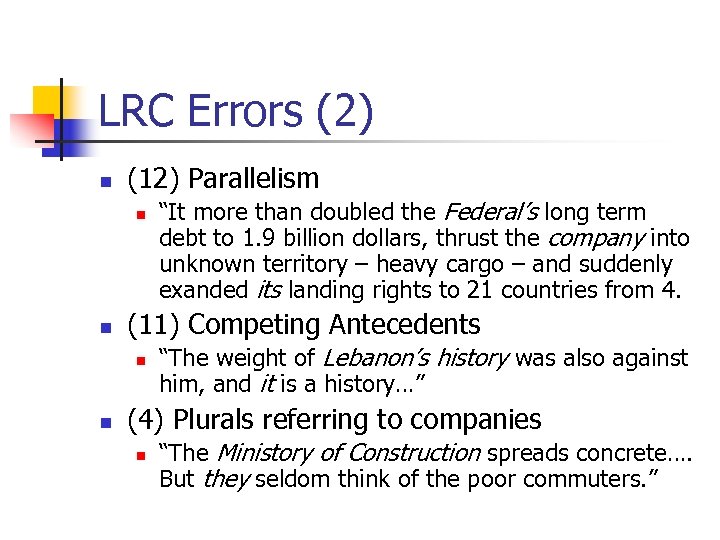

LRC Errors (2)

- (12) Parallelism
  - “It more than doubled the Federal’s long term debt to 1.9 billion dollars, thrust the company into unknown territory – heavy cargo – and suddenly expanded its landing rights to 21 countries from 4.”
- (11) Competing Antecedents
  - “The weight of Lebanon’s history was also against him, and it is a history…”
- (4) Plurals referring to companies
  - “The Ministry of Construction spreads concrete…. But they seldom think of the poor commuters.”

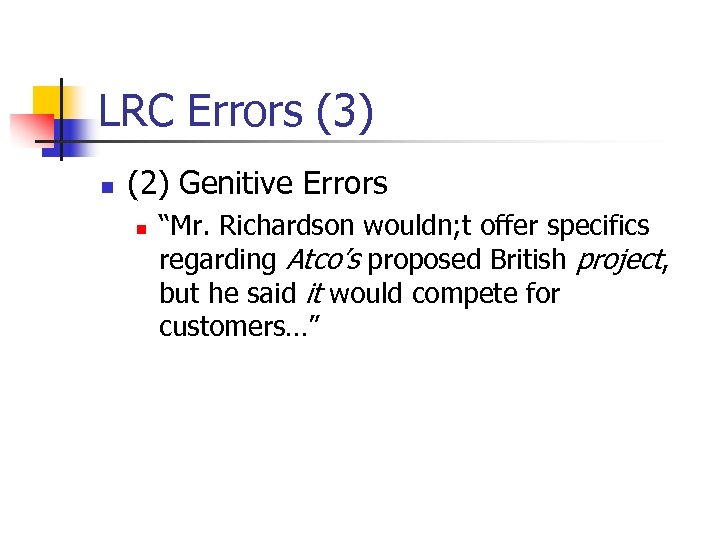

LRC Errors (3)

- (2) Genitive Errors
  - “Mr. Richardson wouldn’t offer specifics regarding Atco’s proposed British project, but he said it would compete for customers…”

Advanced Approaches

- Grosz and Sidner (1986): discourse structure is dependent on intentional structure. Attentional state is modeled as a stack that pushes and pops the current state with changes in intentional structure
- Veins Theory (Ide and Cristea, 2000): the position of nuclei and satellites in an RST tree determines the DRA (domain of referential accessibility) for each clause

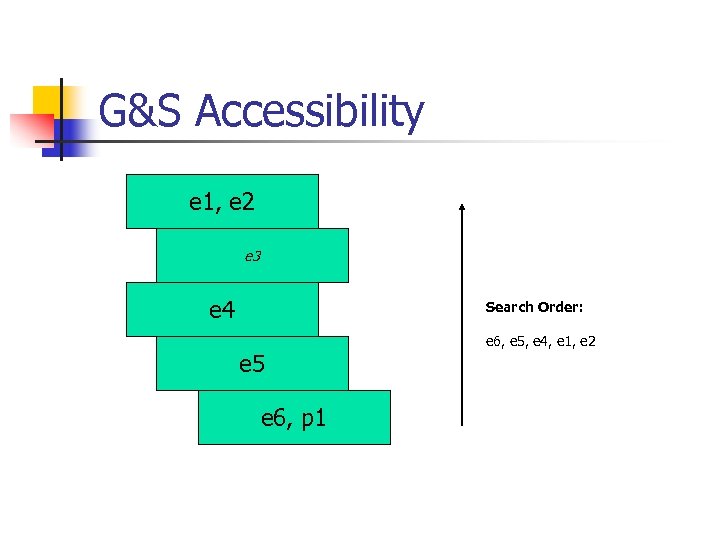
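The stack model of attentional state described above can be sketched as a small class. This is a toy illustration under stated assumptions: the class and method names are hypothetical, segments are opened and closed by explicit calls rather than derived from intentional structure, and entities within a focus space are listed left to right.

```python
class AttentionalStack:
    """Toy stack of focus spaces; each space holds the entities of one open segment."""

    def __init__(self):
        self._spaces = []

    def push_segment(self):
        """Open a new discourse segment with an empty focus space."""
        self._spaces.append([])

    def pop_segment(self):
        """Close the current segment; its entities become inaccessible."""
        return self._spaces.pop()

    def add_entity(self, entity):
        """Record an entity in the focus space of the current segment."""
        self._spaces[-1].append(entity)

    def search_order(self):
        """Accessible entities: most recent space first, left to right within a space."""
        order = []
        for space in reversed(self._spaces):
            order.extend(space)
        return order


stack = AttentionalStack()
stack.push_segment()          # outer segment
stack.add_entity("e1")
stack.add_entity("e2")
stack.push_segment()          # embedded segment
stack.add_entity("e3")
stack.pop_segment()           # embedded segment closes, e3 is popped
stack.push_segment()          # new embedded segment
stack.add_entity("e4")
print(stack.search_order())
```

In this hypothetical layout, a pronoun in the last segment would consider e4, then e1 and e2, while e3 is blocked because its segment has already been popped.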

G&S Accessibility

[Figure: discourse segments holding entities e1, e2; e3; e4; e5; and e6, p1]

Search Order: e6, e5, e4, e1, e2

Veins Theory

- Each RST discourse unit (leaf) has an associated vein (Cristea et al., 1998; Ide and Cristea, 2000)
  - Vein provides a “summary of the discourse fragment that contains that unit”
  - Contains salient parts of the RST tree: the preceding nuclei and surrounding satellites
- Veins determined by whether node is a nucleus or satellite and what its left and right children are

Veins Algorithm

- Use same data set augmented with head and veins information (automatically computed)
- Exception: RST data set has some multi-child nodes; assume all extra children are right children
- Bonus: areas to the left of the root are potentially accessible, which makes global topics introduced at the beginning accessible
- Implementation: search each unit in the entity's DRA, starting with the most recent and going left to right within a clause. If no antecedent is found, use LRC to search.

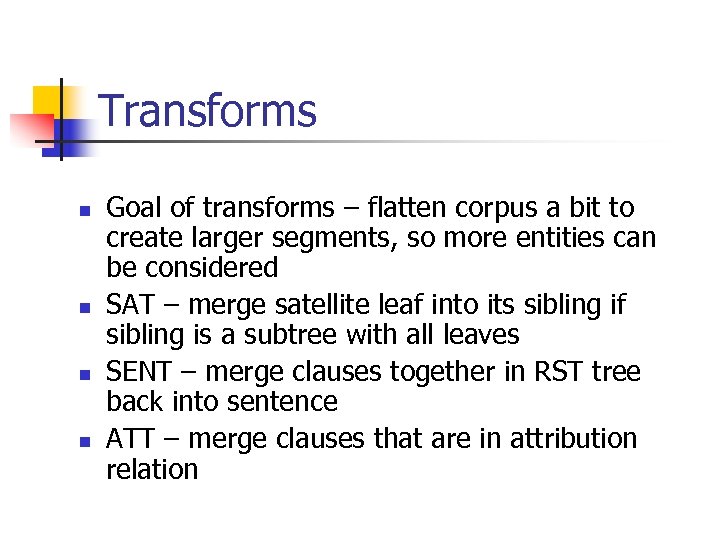
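The implementation step above (search the DRA first, then fall back) can be sketched as follows. This is a simplified illustration: the DRA is passed in precomputed rather than derived from heads and veins, the fallback is a plain backward scan over all preceding clauses standing in for LRC, and the names `resolve_with_dra`, `same_gender`, and the `GENDER` table are hypothetical.

```python
def resolve_with_dra(pronoun, dra_units, all_units, compatible):
    """Search the DRA first, then fall back to every preceding clause.

    dra_units and all_units are lists of clauses, oldest first; each clause
    is a list of entities in left-to-right order.
    """
    def search(units):
        for clause in reversed(units):        # most recent clause first
            for entity in clause:             # left to right within the clause
                if compatible(pronoun, entity):
                    return entity
        return None

    found = search(dra_units)
    if found is not None:
        return found
    return search(all_units)                  # stand-in for the LRC fallback


# Hypothetical gender table used as the only agreement constraint.
GENDER = {"he": "m", "it": "n", "Mr. Myers": "m", "the company": "n", "the offer": "n"}


def same_gender(pronoun, entity):
    return GENDER[pronoun] == GENDER[entity]


clauses = [["the company"], ["Mr. Myers"], ["the offer"]]
dra = [clauses[0], clauses[2]]   # hypothetical DRA that skips the middle clause

print(resolve_with_dra("it", dra, clauses, same_gender))
print(resolve_with_dra("he", dra, clauses, same_gender))
```

In this made-up example, "it" is resolved inside the DRA, while "he" finds no compatible entity there and is resolved only through the fallback search.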

Transforms

- Goal of transforms: flatten corpus a bit to create larger segments, so more entities can be considered
  - SAT: merge satellite leaf into its sibling if sibling is a subtree with all leaves
  - SENT: merge clauses together in RST tree back into sentence
  - ATT: merge clauses that are in attribution relation

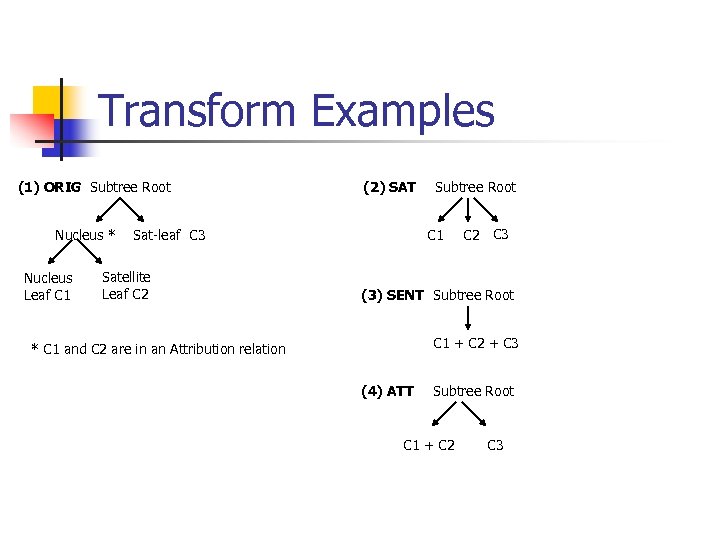
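As an illustration of the ATT transform listed above, the sketch below merges a node's leaf children into one leaf when one of them is an attribution satellite. It is a minimal reading of the one-line description, not the authors' code: the `Node` class, the requirement that all children be leaves, and the choice to keep the parent's role and relation on the merged leaf are assumptions made for this example.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Node:
    role: str                      # "nucleus" or "satellite"
    relation: str = ""             # relation label carried by this node
    text: str = ""                 # clause text, non-empty only for leaves
    children: List["Node"] = field(default_factory=list)

    @property
    def is_leaf(self):
        return not self.children


def att_transform(node):
    """Return a copy of the tree with attribution clauses merged into one leaf."""
    if node.is_leaf:
        return node
    kids = [att_transform(child) for child in node.children]
    all_leaves = all(kid.is_leaf for kid in kids)
    has_attribution = any(
        kid.role == "satellite" and kid.relation == "attribution" for kid in kids
    )
    if all_leaves and has_attribution:
        # Assumption: the merged leaf inherits the parent's role and relation.
        merged_text = " ".join(kid.text for kid in kids)
        return Node(node.role, node.relation, merged_text)
    return Node(node.role, node.relation, "", kids)


original = Node("satellite", "summary", children=[
    Node("nucleus", text="Lion Nathan has a concluded contract with Bond and Bell Resources,"),
    Node("satellite", "attribution", "said Douglas Myers, Chief Executive of Lion Nathan."),
])
merged = att_transform(original)
print(merged.role, merged.relation, merged.is_leaf)
print(merged.text)
```

After the merge, entities from both clauses sit in a single segment, which is the effect the transforms aim for.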

Transform Examples

[Figure: four tree diagrams over clauses C1, C2, and C3. Panels: (1) ORIG, (2) SAT, (3) SENT, (4) ATT. Node labels include Subtree Root, Nucleus, Nucleus Leaf, Satellite Leaf, and Sat-leaf.]

- (3) SENT: Subtree Root over the single merged leaf C1 + C2 + C3
- (4) ATT: Subtree Root over C1 + C2 and C3
- \* C1 and C2 are in an Attribution relation

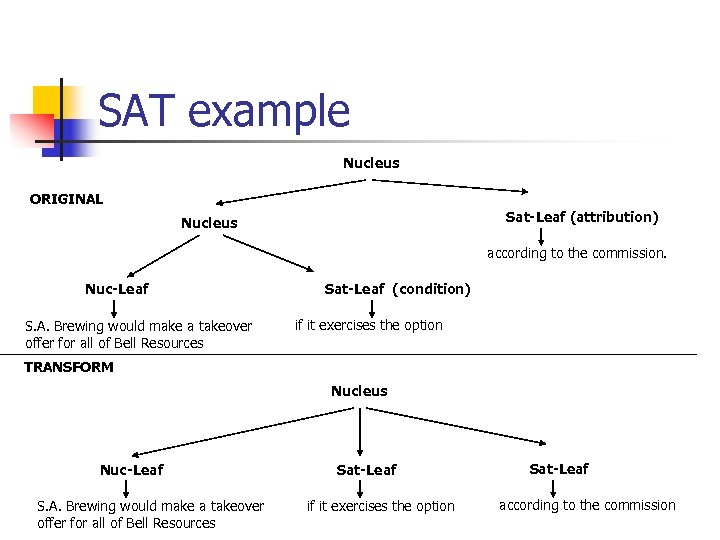

SAT example

- ORIGINAL
  - Nucleus
    - Sat-Leaf (attribution): according to the commission.
    - Nucleus
      - Nuc-Leaf: S.A. Brewing would make a takeover offer for all of Bell Resources
      - Sat-Leaf (condition): if it exercises the option
- TRANSFORM
  - Nucleus
    - Nuc-Leaf: S.A. Brewing would make a takeover offer for all of Bell Resources
    - Sat-Leaf: if it exercises the option
    - Sat-Leaf: according to the commission

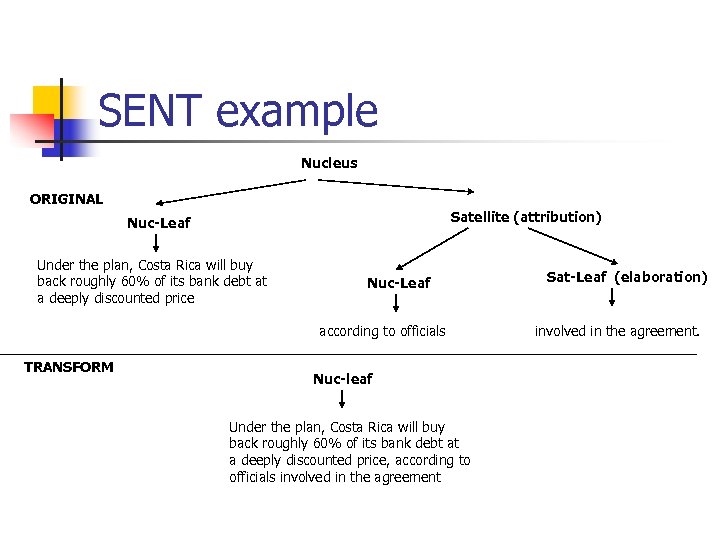

SENT example

- ORIGINAL
  - Nucleus
    - Nuc-Leaf: Under the plan, Costa Rica will buy back roughly 60% of its bank debt at a deeply discounted price
    - Satellite (attribution)
      - Nuc-Leaf: according to officials
      - Sat-Leaf (elaboration): involved in the agreement.
- TRANSFORM
  - Nuc-Leaf: Under the plan, Costa Rica will buy back roughly 60% of its bank debt at a deeply discounted price, according to officials involved in the agreement

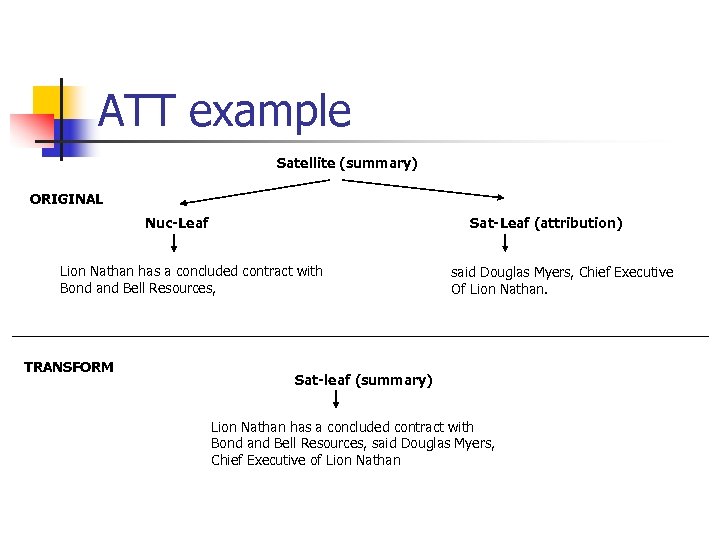

ATT example

- ORIGINAL
  - Satellite (summary)
    - Nuc-Leaf: Lion Nathan has a concluded contract with Bond and Bell Resources,
    - Sat-Leaf (attribution): said Douglas Myers, Chief Executive of Lion Nathan.
- TRANSFORM
  - Sat-Leaf (summary): Lion Nathan has a concluded contract with Bond and Bell Resources, said Douglas Myers, Chief Executive of Lion Nathan

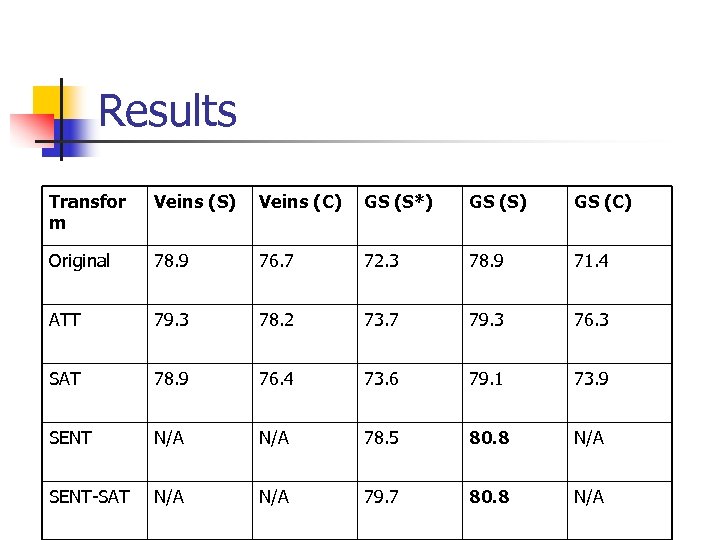

Results

| Transform | Veins (S) | Veins (C) | GS (S*) | GS (S) | GS (C) |
| --- | --- | --- | --- | --- | --- |
| Original | 78.9 | 76.7 | 72.3 | 78.9 | 71.4 |
| ATT | 79.3 | 78.2 | 73.7 | 79.3 | 76.3 |
| SAT | 78.9 | 76.4 | 73.6 | 79.1 | 73.9 |
| SENT | N/A | N/A | 78.5 | 80.8 | N/A |
| SENT-SAT | N/A | N/A | 79.7 | 80.8 | N/A |

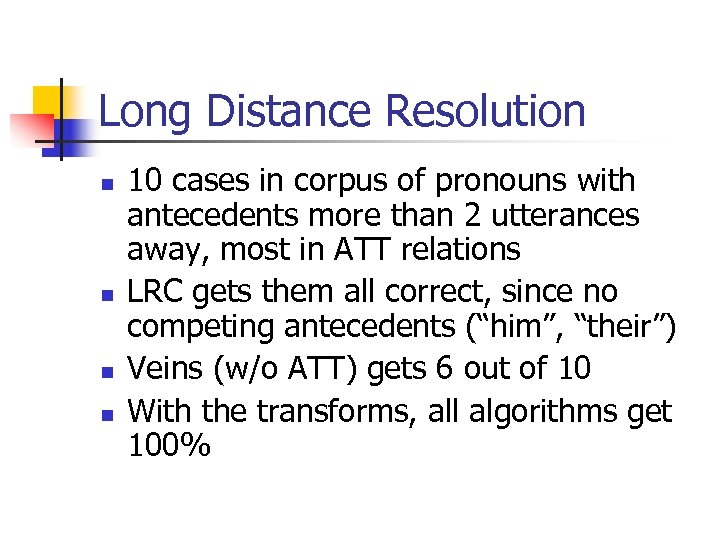

Long Distance Resolution

- 10 cases in corpus of pronouns with antecedents more than 2 utterances away, most in ATT relations
- LRC gets them all correct, since no competing antecedents (“him”, “their”)
- Veins (w/o ATT) gets 6 out of 10
- With the transforms, all algorithms get 100%

Conclusions

- Two ways to determine success of decomposition strategy: intrasentential and intersentential resolution
  - Intra: no improvement, better to use grammatical function
  - Inter: LDR’s…. Hard to draw concrete conclusions
- Need more data to determine if transforms give a good approximation of segmentation
- Using G&S accessibility of clauses doesn’t seem to work either

Future Work

- Error analysis shows determining coherence relations could account for several intrasentential cases
- Use rhetorical relations themselves to constrain accessibility of entities
- Annotating human-human dialogues in TRIPS 911 domain for reference; these have already been annotated for argumentation acts (Stent, 2001)
