- Number of slides: 39

EFFICIENCY AND EFFECTIVENESS IN ASSESSMENT

Strategies for Streamlining Assessment

Win Hornby, Teaching Fellow, The Robert Gordon University

Objectives

- To look at the changing environment within which assessment now takes place
- To look at a framework for evaluating efficiency and effectiveness
- To look at some empirical data based on a survey of assessment practice in my own university
- To propose a number of strategies

@ Win Hornby 2004

The Importance of assessment?

“Students can with difficulty escape from the effects of poor teaching ... they cannot (by definition if they wish to graduate) escape the effects of poor assessment” Boud (1995: 35)

Time spent on Assessment?

- RGU Annual Assessment Survey 2003
- 5-6 minutes per student-credit point per annum!
- 75-90 minutes per student per annum taking a standard 15 credit module
- Gross this number up: a university of 10,000 students, 4 year programmes, 120 credits p.a.
- Collect in all assessments in one year
- Get one member of staff to mark it, working 9 till 5, 365 days a year!
- It would take 35 years to mark!
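
The grossing-up on this slide can be checked with a few lines of arithmetic. The sketch below is only an illustration: the student numbers, credits and minutes per credit point come from the slide, while the 8-hour marking day is an assumption read into "9 till 5", and the function names are made up for this example.

```python
# Figures taken from the slide.
STUDENTS = 10_000
CREDITS_PER_STUDENT_PER_YEAR = 120
STANDARD_MODULE_CREDITS = 15

# Assumption (not on the slide): "9 till 5" means 8 marking hours a day, no breaks.
MARKING_HOURS_PER_DAY = 8
MARKING_DAYS_PER_YEAR = 365


def minutes_per_module(minutes_per_credit_point: float) -> float:
    """Marking minutes per student for one standard 15 credit module."""
    return minutes_per_credit_point * STANDARD_MODULE_CREDITS


def years_to_mark(minutes_per_credit_point: float) -> float:
    """Years a single marker would need for one year's worth of assessment."""
    total_minutes = STUDENTS * CREDITS_PER_STUDENT_PER_YEAR * minutes_per_credit_point
    total_hours = total_minutes / 60
    return total_hours / (MARKING_HOURS_PER_DAY * MARKING_DAYS_PER_YEAR)


for rate in (5, 6):
    print(
        f"{rate} min per credit point: "
        f"{minutes_per_module(rate):.0f} min per module, "
        f"{years_to_mark(rate):.1f} marker-years"
    )
```

Under these assumptions the lower survey figure (5 minutes) gives roughly 34 marker-years, in line with the 35 years quoted on the slide, and the upper figure (6 minutes) gives about 41.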

Aims of Assessment

Assessment has four main roles:

- formative, to provide support for future learning;
- summative, to provide information about performance at the end of a course;
- certification, selecting by means of qualification; and
- evaluative, a means by which stakeholders can judge the effectiveness of the system as a whole.

The Changing Environment

- Changing role of the stakeholders
- Changing resource base
- Changing course architecture

The Changing Environment

- How have we adapted to this changing environment?
- What changes in assessment practices?
- Research evidence?

Rust (2000)

“… although the prevailing orthodoxy in UK higher education is now to describe all courses in terms of learning outcomes, assessment systems have not changed. Under current arrangements, rather than students having to satisfactorily demonstrate each outcome (which is surely what should logically be the case …) marking still tends to be more subjective with the aggregation of positive and negative aspects of the work resulting in many cases in fairly meaningless marks being awarded with 40% still being sufficient to pass”

Research Evidence

- A 1999 survey of assessment practices in UK universities by Brown and Glasner found:
  - 90% of the assessment of a typical British degree consists of examinations plus tutor-marked reports or essays
- Recent evidence from the 2003 survey of assessment practices in my own university:
  - Shows the pattern changing, moving away from the “typical model”
  - More assessment done by coursework only

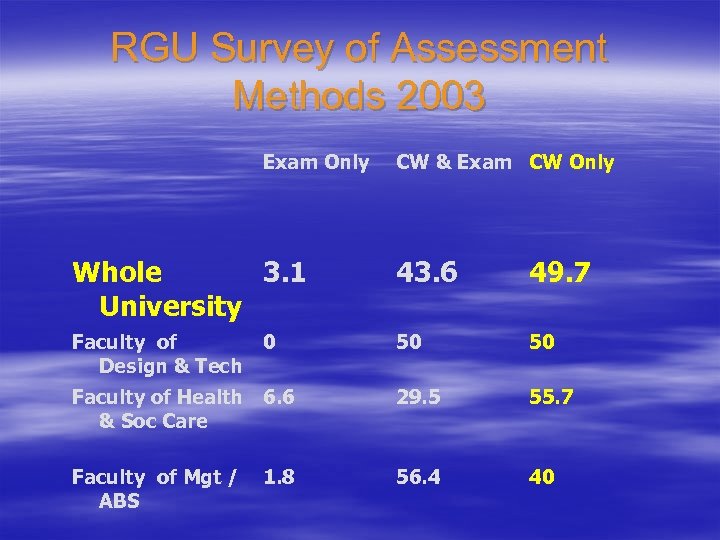

RGU Survey of Assessment Methods 2003

| | Exam Only | CW & Exam | CW Only |
|---|---|---|---|
| Whole University | 3.1 | 43.6 | 49.7 |
| Faculty of Design & Tech | 0 | 50 | 50 |
| Faculty of Health & Soc Care | 6.6 | 29.5 | 55.7 |
| Faculty of Mgt / ABS | 1.8 | 56.4 | 40 |

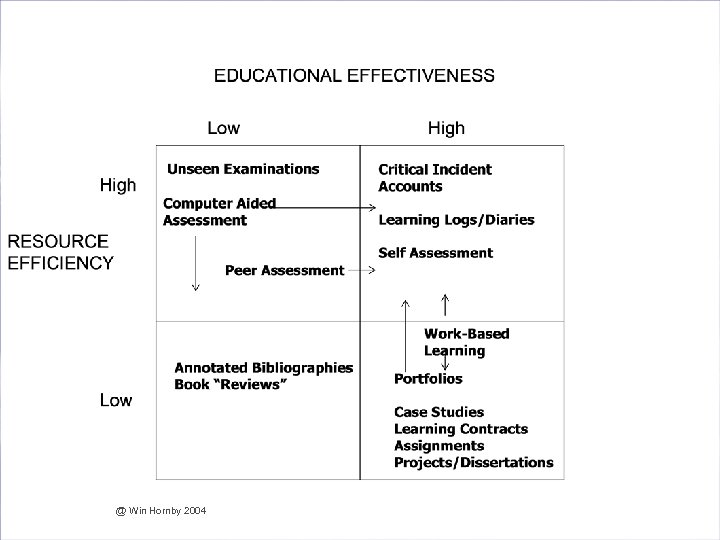

The Trade Off

- Effectiveness
  - Encouraging staff to experiment with alternative assessment modes
- Efficiency
  - Resource pressures
  - Coping with the changing environment

Effectiveness Defined

- To what extent are the methods used educationally valid?
- To what extent are the assessment methods used closely linked with desired skills and competences?
- Are the assessment methods “constructively aligned” to the stated outcomes, to use Biggs' (1997) phrase?
- Does the assessment method match the task and outcomes?

Effectiveness Defined

- Is there over-reliance on just one mode of assessment, such as formal unseen examinations?
- Are students overloaded, thus encouraging coping strategies which lead to what Entwistle has described as “surface” as opposed to “deep” learning? (Entwistle 1981)
- Do the various stakeholders understand the criteria employed in the assessment method and what they are designed to assess?

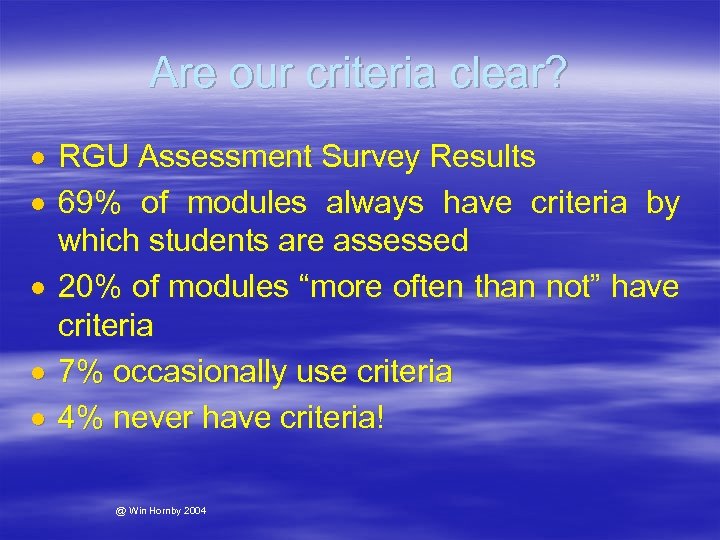

Are our criteria clear?

RGU Assessment Survey Results

- 69% of modules always have criteria by which students are assessed
- 20% of modules “more often than not” have criteria
- 7% occasionally use criteria
- 4% never have criteria!

Efficiency Defined

- What are the educational and opportunity costs of each method in terms of staff time, resources etc.?
- What are the costs of systems to ensure fidelity in the assessment method used?
- What are the administrative costs of different methods?
- What are the costs to ensure assessment is reliable and free from bias?
- What are the costs of complying with various stakeholder demands on transparency?

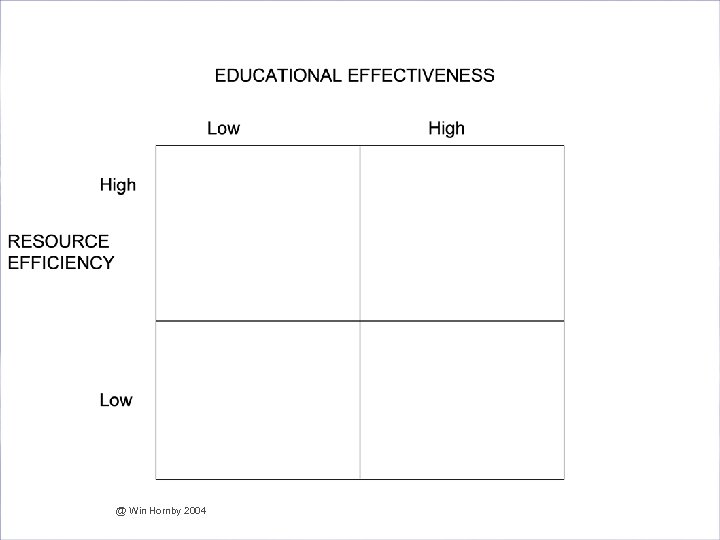

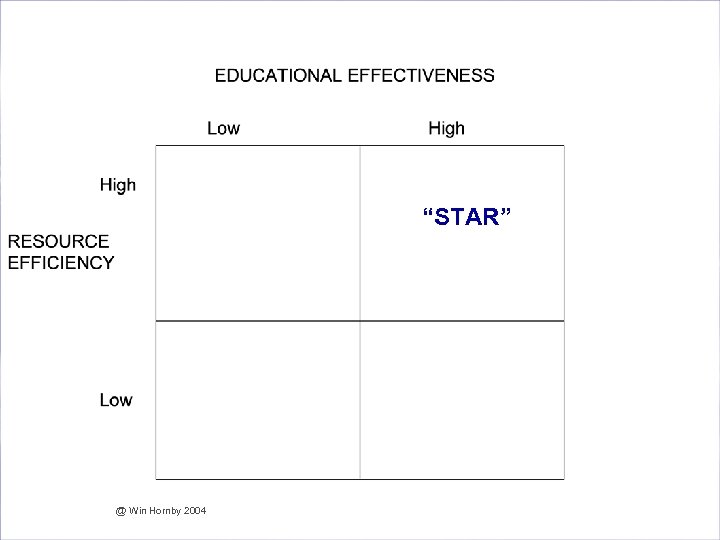

Are we Effective and Efficient in our Assessment?

Are we Effective and Efficient in our Assessment?

- “STAR”

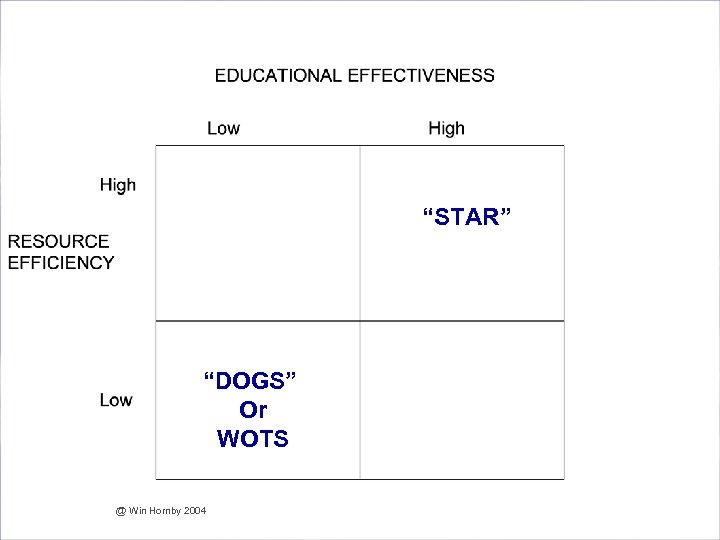

Are we Effective and Efficient in our Assessment?

- “STAR”
- “DOGS” or WOTS

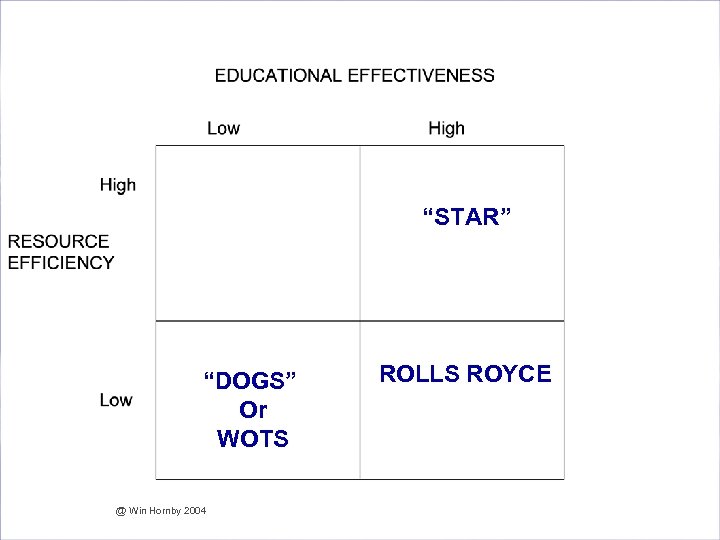

Are we Effective and Efficient in our Assessment?

- “STAR”
- “DOGS” or WOTS
- ROLLS ROYCE

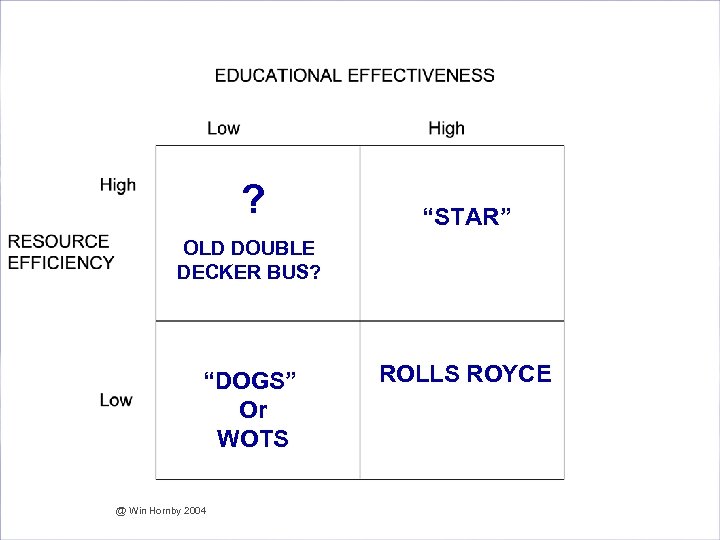

Are we Effective and Efficient in our Assessment?

- “STAR”
- OLD DOUBLE DECKER BUS?
- “DOGS” or WOTS
- ROLLS ROYCE

Consequences of overassessment

- Poor feedback
  - “When it comes to giving feedback some staff stop at nothing!”
- Late feedback
  - “Feedback is like fish it goes off after a week!”
- Formative assessment sacrificed
- Students cut classes/tutorials
- Students work strategically
- Students look for “short cuts”, including plagiarising work and personating
- Little meaningful learning takes place

Strategies for Streamlining Assessment

1. Strategic reduction of summative assessment
2. Front-end loading
3. In class assessment
4. Self and peer assessment
5. Group assessment
6. Automated assessment and feedback

Option 1. Strategic Reduction of Summative Assessment

- Reduce the instances of assessment?
- Exemptions from exams on basis of coursework performance?
- Assess learning outcomes once only?
- Combine assessments across modules?
- Abolish resit examinations and reassess differently?
- Mechanisms for balancing types of assessment between modules?
- Timetabling assessments?

Option 2. Front-end loading

- Coursework briefing sessions?
- Discuss, explain and “unpack” criteria?
- Get students to “engage” with the criteria?
- Get students to assess previous cohort’s work?
- Allow students input into deciding the criteria?
- Rust, Price and O’Donovan study (2003)

Option 3. In Class assessment

- Marked/graded in class by students?
- Build in several of these for coursework?
- Gow (quoted in Hornby (2003)), first year Engineering students @RGU, reported:
  - Improved attendance rates
  - Improved motivation to learn
  - Higher retention rates
  - Higher pass rates in the final examination, hence fewer resits to mark
  - Improved second year performance because underpinning first year knowledge was better

Option 4. Self and Peer assessment

- Some scepticism that students can be trusted to do this for themselves
- Reliability of assessment?
- Survey of research evidence?
- Tutor/student correlations low (r = 0.21)
- High variability between tutor ratings and student ratings
- Students over-rate themselves?
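
The two reliability questions raised here (how strongly tutor and student marks correlate, and whether students over-rate themselves) can be checked on any paired set of marks. The sketch below is a minimal illustration, assuming Pearson's r is the correlation meant on the slide; the marks are hypothetical and are not data from the research cited.

```python
from math import sqrt


def pearson_r(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient between two equal-length lists of marks."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length lists with at least two marks")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when all marks are identical")
    return cov / sqrt(var_x * var_y)


def mean_over_rating(student_marks: list[float], tutor_marks: list[float]) -> float:
    """Average of (student mark - tutor mark); positive means students over-rate."""
    if len(student_marks) != len(tutor_marks) or not tutor_marks:
        raise ValueError("need two equal-length, non-empty lists of marks")
    diffs = [s - t for s, t in zip(student_marks, tutor_marks)]
    return sum(diffs) / len(diffs)


# Hypothetical marks for six students (illustration only).
tutor = [52, 61, 48, 70, 65, 58]
student = [60, 58, 66, 62, 71, 55]

print(f"r = {pearson_r(tutor, student):.2f}")
print(f"mean over-rating = {mean_over_rating(student, tutor):.1f} marks")
```

A low r with a positive mean difference would match the sceptical picture on this slide; a high r with a difference near zero would not.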

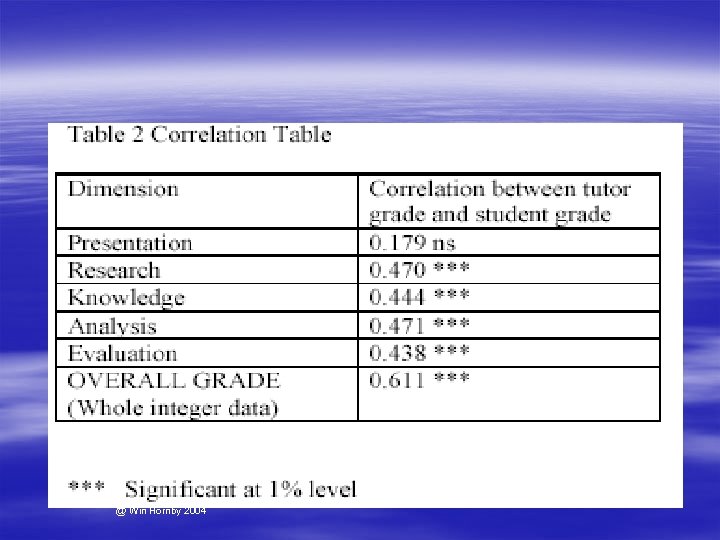

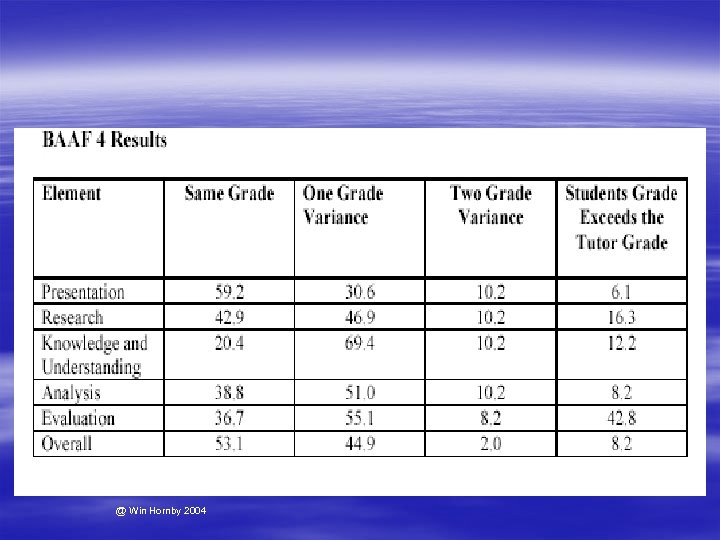

Self and Peer assessment

- Self assessment of 48 honours year Accounting students
- Using Grade Related Criteria (GRC)
- Dimensions
  - Presentation
  - Research
  - Knowledge and Understanding
  - Analysis
  - Evaluation
- “Unpacked” these with briefing and online discussion forum
- Results?
- “Tutors, Who Needs Them?”

Self and Peer assessment

Using GRC and some “front end loading” we found:

- High correlation between tutor and student ratings
- High agreement between student ratings and tutor ratings
- No evidence of over-rating by students
- Evidence that students found the experience educationally valuable

Self and Peer assessment

- The educational value of self assessment
- Student feedback indicated benefits from the self assessment process but, to paraphrase:
  - “Why was this not done at an earlier stage in the degree programme! Why wait until my final year to do this? We should be doing this kind of thing from Day 1”

Option 5. Group Assessment

- Potential to be very effective and efficient
- But is it reliable?
- What about the “hassle” factor?
- The free rider problem?

Group Assessment

- Barnes (quoted in Hornby (2003)): third year Hospitality Management students
- Reports on using an evaluation instrument for both self and peer assessment
- Administered electronically via Questionmark Perception
- Assesses a number of different dimensions of individual and group work, such as attendance, ideas generation, contribution to group report, knowledge and skill acquisition, and effort
- Also an anonymous assessment of the work of others, which was fed back to each student
- Not only did each student get a grade, but they also got a score on each of the identified dimensions and a report

Option 6. Automated Assessment and Feedback

- Automated assessment

Automated Feedback

- Checklists and statement banks
- RGU case studies in streamlining assessment
  - Checklists in Applied Sciences
  - Statement banks in the Economics of Tax module
  - Grade related statements on each of the dimensions
  - Cut and paste + a bit of personalisation
  - E-mail feedback in real time
  - “Quick and dirty” v “Clean and slow”?
- Whole class feedback online via i.NET
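
A statement bank of the kind described here can be sketched as a lookup from (dimension, grade) to a stock comment, with a short personal note appended. This is a hypothetical illustration, not the RGU implementation: the statements, grade labels and function name are invented, and only the dimension names are borrowed from the grade related criteria used elsewhere in this talk.

```python
# Hypothetical statement bank: (dimension, grade) -> stock feedback statement.
STATEMENT_BANK = {
    ("Analysis", "A"): "The analysis is rigorous and well supported by evidence.",
    ("Analysis", "C"): "The analysis is mostly descriptive; push the argument further.",
    ("Research", "A"): "A wide range of relevant sources has been used.",
    ("Research", "C"): "The range of sources is narrow; read beyond the core texts.",
}


def build_feedback(student_name: str, grades: dict[str, str], personal_note: str = "") -> str:
    """Assemble feedback text from stock statements plus an optional personal note."""
    lines = [f"Feedback for {student_name}"]
    for dimension, grade in grades.items():
        statement = STATEMENT_BANK.get((dimension, grade))
        if statement is None:
            raise KeyError(f"no statement for {dimension!r} at grade {grade!r}")
        lines.append(f"{dimension} ({grade}): {statement}")
    if personal_note:
        # The "bit of personalisation" mentioned on the slide.
        lines.append(f"Personal comment: {personal_note}")
    return "\n".join(lines)


print(build_feedback(
    "Student 17",
    {"Analysis": "C", "Research": "A"},
    personal_note="Your section on tax incidence was the strongest part.",
))
```

The resulting text could then be pasted into an e-mail, which is where the "quick and dirty" versus "clean and slow" trade-off on the slide comes in.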

Automated Assessment

- Cooper (quoted in Hornby (2003))
- BSc (Hons) Sports Science students in the School of Health Sciences
- Self assessment linked to video clips of particular movements of the body to identify key muscles and joints
- Required to identify muscles producing the movements and the type of muscle work involved
- Stop/Start/Replay facility
- Multiple choice questions with feedback on each response
- i.NET discussion forum to ask questions about the various parts of the syllabus and self assessment assignments
- Transferability of the idea to science and engineering areas

How will all this save me time?

- Some strategies do not involve extra costs (e.g. strategic reduction)
- Some strategies do involve some initial set-up costs
- View these like an investment appraisal project
  - Initial capital outlay
  - Reap benefits over time
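
The investment-appraisal view can be made concrete with a simple payback calculation in staff hours. The sketch below is illustrative only: the set-up and saving figures are hypothetical, and a plain undiscounted payback period is an assumption, since the slide does not name a particular appraisal method.

```python
import math
from typing import Optional


def break_even_year(setup_hours: float, hours_saved_per_year: float) -> Optional[int]:
    """First year in which cumulative savings cover the set-up time, or None if never."""
    if hours_saved_per_year <= 0:
        return None
    return math.ceil(setup_hours / hours_saved_per_year)


def cumulative_net_saving(setup_hours: float, hours_saved_per_year: float, years: int) -> float:
    """Staff hours saved after `years` of use, net of the initial set-up time."""
    return hours_saved_per_year * years - setup_hours


# Hypothetical figures: 40 hours to build a statement bank, 15 hours saved per year.
print(break_even_year(40, 15))
print(cumulative_net_saving(40, 15, 5))
```

With these made-up figures the set-up time is recovered during the third year of use, and everything after that is a net saving.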

References

- Quality Enhancement in Teaching, Learning and Assessment
- Assessment @RGU
- http://www.rgu.ac.uk/celt/quality/page.cfm?pge=6201
