- Number of slides: 43

EE515/IS523 Think Like an Adversary
Lecture 7: UI and Psychological Failures
Yongdae Kim

Recap
- http://syssec.kaist.ac.kr/courses/ee515
- E-mail policy
  - Include [ee515] or [is523] in the subject of your e-mail
- Class presentation assignments are made
- Always check the calendar
- Text-only posting, email!
- Preproposal meeting this week
  - Group leader sends me three 30-min time windows between Wednesday and Friday (evening is OK)

Recap
- Access Control Matrix
  - ACL, Capabilities, Role-based ACL
- User Interface
  - Too many warnings…
  - Password authentication
    - Text, graphical, hardware token, biometrics, …
  - Phishing: psychological failure!

Policy and Usability

Cost of Reading Policy (Cranor et al.)
- $T_R = p \times R \times n$
  - $p$ is the population of all Internet users
  - $R$ is the average time to read one policy
  - $n$ is the average number of unique sites Internet users visit annually
- $p$ = 221 million Americans online (Nielsen, May 2008)
- $R$ = average time to read a policy = (number of words in policy) / (reading rate)
  - To estimate words per policy:
    - Measured the policy length of the 75 most visited websites
    - Reflects policies people are most likely to visit
- Reading rate = 250 WPM
- Mid estimate: 2,514 words / 250 WPM ≈ 10 minutes

- $n$ = number of unique sites visited per year
  - Nielsen estimates Americans visit 185 unique sites in a month,
  - but that doesn’t quite scale ×12, so 1,462 unique sites per year.
- $T_R = p \times R \times n$ = 221 million × 10 minutes × 1,462 sites
- $R \times n$ ≈ 244 hours per year per person
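The arithmetic on these two slides can be checked with a few lines of Python. This is only a sketch that plugs in the figures quoted above; the variable names are illustrative, and rounding R to 10 minutes follows the slide rather than any stated rule.

```python
# Figures quoted on the slides; variable names are illustrative only.
population = 221_000_000      # p: Americans online (Nielsen, May 2008)
words_per_policy = 2_514      # mid estimate of policy length
reading_rate_wpm = 250        # reading rate in words per minute
sites_per_year = 1_462        # n: unique sites visited per year

minutes_per_policy = words_per_policy / reading_rate_wpm   # R, a little over 10
rounded_minutes = round(minutes_per_policy)                 # the slide uses 10

hours_per_person = rounded_minutes * sites_per_year / 60    # R x n, in hours
total_hours = population * hours_per_person                 # T_R, in hours

print(f"R = {minutes_per_policy:.2f} minutes per policy")
print(f"R x n = {hours_per_person:.0f} hours per person per year")
print(f"T_R = {total_hours:.3e} hours per year")
```

With these inputs, R x n comes out at roughly 244 hours per person per year, matching the slide.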

P3P: Platform for Privacy Preferences
- A framework for automated privacy discussions
  - Web sites disclose their privacy practices in standard machine-readable formats
  - Web browsers automatically retrieve P3P privacy policies and compare them to users’ privacy preferences
  - Sites and browsers can then negotiate about privacy terms
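The "compare policy to preferences" step can be illustrated with a toy model. The sketch below is not the P3P format (real P3P policies are XML documents); the dictionaries, category names, and the `policy_violations` helper are all hypothetical, chosen only to show the idea of an automated comparison.

```python
# Hypothetical, simplified stand-in for a machine-readable privacy policy.
site_policy = {
    "purposes": {"current", "admin", "telemarketing"},
    "recipients": {"ours", "unrelated"},
}

# Hypothetical user preferences: the practices the user is willing to accept.
user_preferences = {
    "purposes": {"current", "admin"},
    "recipients": {"ours"},
}

def policy_violations(policy, preferences):
    """Return, per category, the declared practices the user has not allowed."""
    violations = {}
    for category, allowed in preferences.items():
        extra = policy.get(category, set()) - allowed
        if extra:
            violations[category] = extra
    return violations

violations = policy_violations(site_policy, user_preferences)
print(violations)
```

A browser following this model could warn the user, or block cookies, whenever the returned dictionary is non-empty.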

Why Johnny Can’t Encrypt: A Usability Evaluation of PGP 5.0
Alma Whitten and J. D. Tygar, Usenix Sec ’99
Presented by Yongdae Kim
Some of the slides borrowed from Jeremy Hyland

Defining Usable Security Software
- Security software is usable if the people who are expected to use it:
  - are reliably made aware of the security tasks they need to perform
  - are able to figure out how to successfully perform those tasks
  - don't make dangerous errors
  - are sufficiently comfortable with the interface to continue using it

Why is usable security hard?
1. The unmotivated user
   - “Security is usually a secondary goal”
2. Policy abstraction
   - Programmers understand the representation, but normal users have no background knowledge.
3. The lack of feedback
   - We can’t predict every situation.
4. The proverbial “barn door”
   - Need to focus on error prevention.
5. The weakest link
   - Attacker only needs to find one vulnerability

Why Johnny can’t encrypt?
- PGP 5.0
  - Pretty Good Privacy
  - Software for encrypting and signing data
  - Plug-in provides “easy” use with email clients
  - Modern GUI, well designed by most standards
- Usability evaluation following their definition
  - “If an average user of email feels the need for privacy and authentication, and acquires PGP with that purpose in mind, will PGP's current design allow that person to realize what needs to be done, figure out how to do it, and avoid dangerous errors, without becoming so frustrated that he or she decides to give up on using PGP after all?”

Usability Evaluation Methods
- Cognitive walkthrough
  - Mentally step through the software as if we were a new user. Attempt to identify the usability pitfalls.
  - Focus on interface learnability.
- Results

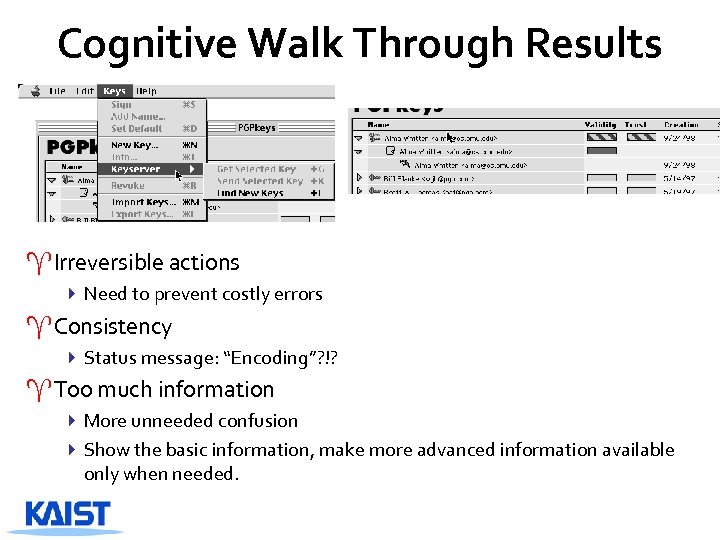

Cognitive Walkthrough Results
- Irreversible actions
  - Need to prevent costly errors
- Consistency
  - Status message: “Encoding”?!?
- Too much information
  - More unneeded confusion
  - Show the basic information; make more advanced information available only when needed.

User Test
- PGP 5.0 with Eudora
- 12 participants, all with at least some college and none with advanced knowledge of encryption
- Participants were given a scenario with tasks to complete within 90 min
- Tasks built on each other
- Participants could ask some questions through email

User Test Results
- 3 users accidentally sent the message in clear text
- 7 users used their own public key to encrypt, and only 2 of the 7 figured out how to correct the problem
- Only 2 users were able to decrypt without problems
- Only 1 user figured out how to deal with RSA keys correctly
- A total of 3 users were able to successfully complete the basic process of sending and receiving encrypted emails
- One user was not able to encrypt at all

Conclusion
- Reminder
  - “If an average user of email feels the need for privacy and authentication, and acquires PGP with that purpose in mind, will PGP's current design allow that person to realize what needs to be done, figure out how to do it, and avoid dangerous errors, without becoming so frustrated that he or she decides to give up on using PGP after all?”
- Is this a failure in the design of the PGP 5.0 interface, or is it a function of the problem of traditional usable design vs. design for usable secure systems?
- What other issues? What kind of similar security issues? What do we learn from this paper?

Why (Special Agent) Johnny (Still) Can’t Encrypt: A Security Analysis of the APCO Project 25 Two-Way Radio System
S. Clark, T. Goodspeed, P. Metzger, Z. Wasserman, K. Xu, M. Blaze, Usenix Sec ’11
Presented by Yongdae Kim
Slides borrowed from Matt Blaze

APCO Project 25 (“P25”)
- Standard (in the US and elsewhere) for digital two-way radio (voice and low-speed text)
  - Widely fielded by government: local police & fire departments, federal law enforcement & security services, DoD
  - Standard under ongoing development since the early ’90s
  - P25 products increasingly available since the early 2000s
- Drop-in replacement for analog FM systems
  - Uses narrow-band channels, limited infrastructure
  - Can use simplex, repeaters, or trunked infrastructure
- Cryptographic security options
  - Content confidentiality (encryption)

P25 Equipment
- Wide range of COTS subscriber radios available
  - Mobile, portable, base and infrastructure
- Several US vendors
  - Motorola dominates in federal law enforcement sector
- Equipment features and user interfaces (somewhat) standardized across vendors

P25: Deployed Examples

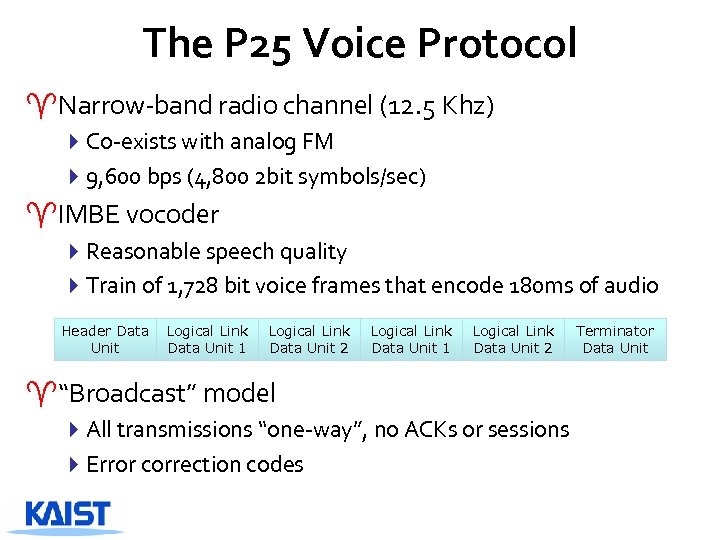

The P25 Voice Protocol
- Narrow-band radio channel (12.5 kHz)
  - Co-exists with analog FM
  - 9,600 bps (4,800 2-bit symbols/sec)
- IMBE vocoder
  - Reasonable speech quality
  - Train of 1,728-bit voice frames that encode 180 ms of audio
- “Broadcast” model
  - All transmissions “one-way”, no ACKs or sessions
  - Error correction codes
- Transmission structure (diagram labels): Header Data Unit, Logical Link Data Unit 1, Logical Link Data Unit 2, Terminator Data Unit
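The channel figures on this slide are mutually consistent, which a short calculation can confirm. The sketch below uses only the numbers quoted above; the variable names are illustrative.

```python
# Channel parameters quoted on the slide.
bit_rate_bps = 9_600     # channel bit rate
bits_per_symbol = 2      # each symbol carries 2 bits
frame_bits = 1_728       # size of one voice frame

symbol_rate = bit_rate_bps // bits_per_symbol        # symbols per second
frame_symbols = frame_bits // bits_per_symbol        # symbols per voice frame
frame_duration_ms = frame_bits * 1000 / bit_rate_bps # air time of one frame

print(symbol_rate, "symbols/sec")
print(frame_symbols, "symbols per frame")
print(frame_duration_ms, "ms per frame")
```

One 1,728-bit frame at 9,600 bps occupies 180 ms of air time, the same as the 180 ms of audio it encodes, so the frames form a continuous train.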

P25 Optional Security Features
- Symmetric key encryption
  - Unclassified: AES, DES, …
  - Classified: various Type I
- Traffic keys must be loaded into radios in advance
  - Via keyloader device or over-the-air rekeying
  - Keys can expire, self-destruct
- No “sessions”
  - Sender radio selects crypto mode & key
  - Up to receiver to decrypt
- Received cleartext always demodulated & played
- Received ciphertext decrypted & played if correct key available
- No authentication
- Sender’s radio makes all security decisions
  - Radios can be configured for always clear, always encrypted, or user selected
  - User-selected is standard configuration
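The receive-side rule described on this slide can be modelled in a few lines. This is a toy model, not radio firmware: the `Transmission` class, the `key_id` field, and the `receiver_plays_audio` function are hypothetical names, and the `switch_secure` argument is deliberately ignored to illustrate that the receiver's own crypto switch plays no part in the decision.

```python
from dataclasses import dataclass
from typing import Optional, Set

@dataclass
class Transmission:
    encrypted: bool
    key_id: Optional[int] = None  # hypothetical identifier of the traffic key used

def receiver_plays_audio(tx: Transmission, loaded_keys: Set[int], switch_secure: bool) -> bool:
    """Toy model of the receive rule; switch_secure is intentionally unused."""
    if not tx.encrypted:
        return True                   # cleartext is always demodulated and played
    return tx.key_id in loaded_keys   # ciphertext is played only with the right key

# A clear transmission is heard even by a radio whose switch is set to secure.
heard_clear = receiver_plays_audio(Transmission(encrypted=False), {7}, switch_secure=True)
# An encrypted transmission under an unknown key is not heard.
heard_unknown = receiver_plays_audio(Transmission(True, key_id=9), {7}, switch_secure=True)
print(heard_clear, heard_unknown)
```

Because the sender alone chooses the mode, a listener gets no signal from this logic that a teammate has slipped into the clear.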

Highlights
- Apparently ad hoc design
  - No formal (or informal) security requirements specified in P25 standard
  - But traffic encryption itself isn’t obviously broken
- But does suffer significant protocol weaknesses
  - No authentication
  - Susceptible to (active and passive) traffic analysis
    - Radio unit IDs sent in clear even when encryption enabled
  - Vulnerable to very efficient denial of service
    - 13 dB energy advantage to attacker
- Serious crypto-usability weaknesses

Passive and Active Traffic Analysis
- Subscriber radio’s unit ID, TalkGroup ID, NAC sent with every transmission
  - 24-bit unit ID is typically unique to each radio
  - Effectively identifies individual radio + agency it belongs to
- Standard supports encryption of unit ID
  - But they found the UID always sent in clear, even when crypto was enabled
- Radios typically respond to pings automatically
  - Active adversary can easily discover idle radios
  - Transparent to pinged radio

Scenario
- Ping response is sufficient to allow automated direction finding of targeted radios
  - Requires two base stations at fixed locations with phased directional antennas
- Adversary can thus create a real-time map of selected radios, even when they are “idle”
- Significant potential threat in military environment
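Why two fixed stations suffice can be seen from basic geometry: each station measures a bearing to the responding radio, and the two bearing lines intersect at its position. The sketch below is a flat-plane idealisation with noise-free bearings; the `locate` function and its coordinate convention (degrees counter-clockwise from the +x axis) are assumptions made for illustration, not taken from the paper.

```python
import math

def locate(station_a, bearing_a_deg, station_b, bearing_b_deg):
    """Intersect two bearing lines measured from two known positions.

    Bearings are in degrees, counter-clockwise from the +x axis.
    Returns (x, y), or None when the bearings are parallel.
    """
    ax, ay = station_a
    bx, by = station_b
    dax = math.cos(math.radians(bearing_a_deg))
    day = math.sin(math.radians(bearing_a_deg))
    dbx = math.cos(math.radians(bearing_b_deg))
    dby = math.sin(math.radians(bearing_b_deg))

    denom = dax * dby - day * dbx          # cross product of the two directions
    if abs(denom) < 1e-12:
        return None                        # parallel lines give no unique fix

    # Solve station_a + t * dir_a == station_b + s * dir_b for t.
    t = ((bx - ax) * dby - (by - ay) * dbx) / denom
    return (ax + t * dax, ay + t * day)

# Two stations 10 units apart, both hearing the same ping response.
fix = locate((0.0, 0.0), 45.0, (10.0, 0.0), 135.0)
print(fix)
```

Repeating the ping and the fix at intervals is what turns a single position into the real-time map mentioned on the slide.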

Denial of Service (in theory)
- P25 uses aggressive error correction codes
  - But individual subfields of a transmission are error-corrected separately
- Adversary can select a single subfield to jam within a frame
  - Pattern at start of transmission makes synchronization easy
- Voice frame is 1,728 bits, including the critical 64-bit NID subfield that IDs the frame type
  - Jamming 64 bits renders the entire 1,728-bit frame useless
  - 32 symbols of jamming per 864 symbols
- Jammer needs 14 dB less energy than the transmitter
  - Compare: analog FM requires (about) equal energy to jam
  - Jamming digital spread spectrum requires much more energy
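One way to read the 14 dB figure is as the ratio of frame length to jammed length. The sketch below assumes the jammer transmits at the same power as the legitimate sender but only during the 32-symbol NID subfield, so the energy ratio equals the time ratio; that equal-power assumption is ours, made to keep the arithmetic simple.

```python
import math

frame_symbols = 864    # symbols in one 1,728-bit voice frame
jammed_symbols = 32    # symbols in the 64-bit NID subfield

# Fraction of the frame during which the jammer must transmit.
duty_fraction = jammed_symbols / frame_symbols

# Energy advantage in decibels, assuming equal transmit power.
advantage_db = 10 * math.log10(frame_symbols / jammed_symbols)

print(f"jammer on-air fraction: {duty_fraction:.3f}")
print(f"energy advantage: {advantage_db:.1f} dB")
```

The ratio is 27 to 1, which is a little over 14 dB.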

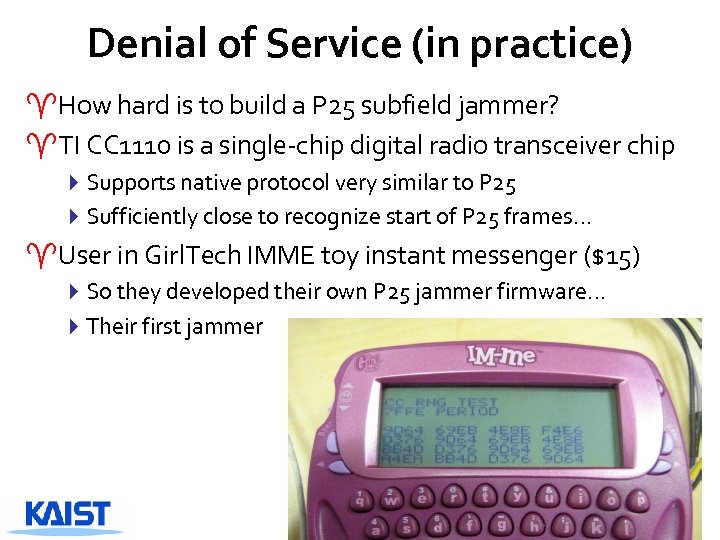

Denial of Service (in practice)
- How hard is it to build a P25 subfield jammer?
- TI CC1110 is a single-chip digital radio transceiver
  - Supports native protocol very similar to P25
  - Sufficiently close to recognize start of P25 frames…
- Used in GirlTech IMME toy instant messenger ($15)
  - So they developed their own P25 jammer firmware…
  - Their first jammer

Scenario: Selective Jamming
- Need not jam every P25 transmission
- Jammer is low duty cycle
  - Spends most of its time in receiving mode
  - Can be programmed to recognize certain types of transmissions and interfere only with them
- Easy to configure a jammer that recognizes and disables only encrypted P25 signals
  - Forces users to switch to clear in order for communication to work

Potential Usability Problems
- Poor feedback about crypto state
  - Transmit crypto is controlled by an obscurely marked toggle switch
  - Switch’s state has no effect on received audio
    - Clear always accepted in encrypted mode
    - Encrypted accepted in clear mode (if keyed)
- Frequent rekeying + unreliable rekeying
  - Many agencies use short-lived keys
  - But re-keying is difficult and unreliable

Poor Crypto Feedback
- Remember “Why Johnny can’t encrypt?”
- Radios are typically configured to control outbound crypto with a two-position switch
  - Often obscurely marked, out of view
- Little feedback to user about crypto state other than the switch itself
  - “Encrypted” icon on display
  - Configurable “clear” beep warning
    - But the same beep is used to indicate other things
- Little chance for other users to notice or help
  - Received cleartext always accepted, even when their own switch is in the “secure” position

Motorola XTS 5000: Clear Mode

Motorola XTS 5000: Secure Mode

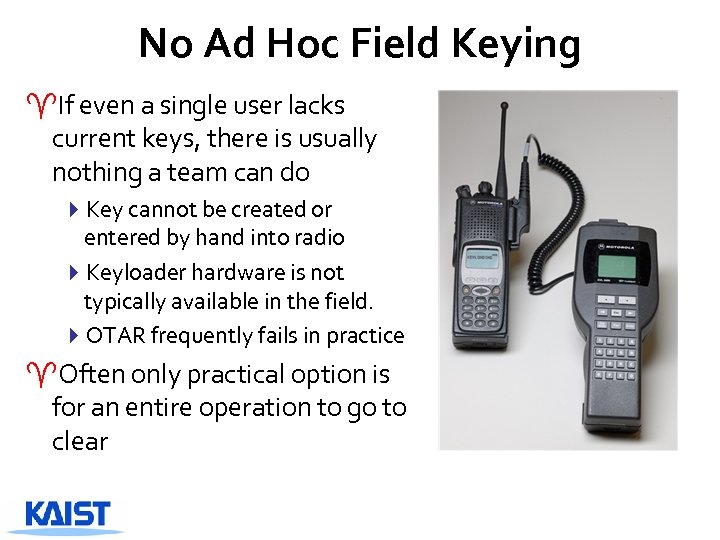

No Ad Hoc Field Keying
- If even a single user lacks current keys, there is usually nothing a team can do
  - Key cannot be created or entered by hand into radio
  - Keyloader hardware is not typically available in the field
  - OTAR frequently fails in practice
- Often the only practical option is for an entire operation to go to clear

P25 COMSEC in practice
- The P25 traffic analysis and DoS attacks they found are potentially serious, but require some expertise and resources on the part of the adversary
  - Current off-the-shelf equipment can’t easily implement most of the protocol-level attacks they found without modification
    - Inexpensive software-defined radio will soon change this, however
  - Not much can be done to mitigate these vulnerabilities without changing P25 protocols in any case
- More serious are usability weaknesses that can be easily exploited by anyone, today: a significant volume of law-enforcement-sensitive cleartext regularly goes over the air without users being aware of it.

Unintended Sensitive P25 Cleartext
- They accidentally misconfigured a P25 radio in their lab, and were surprised to hear chatter from a federal tactical surveillance operation
  - This turned out not to have been a fluke event
- They subsequently collected statistics about unintended over-the-air sensitive cleartext in several metropolitan areas
  - Focused on confidential tactical law-enforcement traffic
    - Omitted local agencies, non-covert operations (e.g. interop networks, uniformed FPS patrols), etc.
    - No encrypted traffic captured
    - Used only readily available, unmodified consumer-grade equipment
    - Live-monitored samples of traffic, recorded traffic statistics

Intercepting the Federal Spectrum
- 2000 discrete VHF and UHF voice channels allocated to Federal government
  - 24 MHz of spectrum
  - 12.5 kHz channels
  - Law enforcement mixed in among less sensitive users
    - Some agency channels are widely known, others not
- Easy to identify the channels used locally for covert tactical LE activities
  - They are the ones with encrypted traffic on them
- Many P25 receivers on market
- Icom R-2500
  - Aimed at hobby “scanner” market, includes P25 options
  - Legally available to anyone

Results
- Searched the Federal VHF and UHF spectrum for the frequencies used for sensitive tactical networks
  - Likely candidate frequencies are easy to identify: they carry mostly encrypted traffic
- Configured a small network of R-2500 receivers in several metropolitan areas with software to systematically scan these networks and log incidence of cleartext
  - Periodically “live monitored” samples of cleartext audio
  - Did not retain identifiable information about agencies or targets
- In each metropolitan area:
  - Most tactical traffic was apparently successfully encrypted
  - But still > 20 min (mean) sensitive cleartext per city per day
    - High variance; lower volume on weekends and holidays

How Sensitive is Sensitive?
- The P25 unintended cleartext they live-sampled included some of the most sensitive investigative data
  - Names and/or identifying features of targets and confidential informants, their locations, descriptions of undercover agents
  - Information relayed by Title III wiretap plants
  - Plans for forthcoming takedowns and operations
  - Wide range of crimes, some involving targets that appeared to employ reasonably sophisticated countermeasures
  - Sensitive cleartext captured from virtually every DoJ & DHS LE agency
- Mostly law enforcement / criminal, but they were not looking for military or intelligence traffic.

What is going wrong?
- Three categories of unintended cleartext:
  - Single user error: one user transmitting in clear, but communicating with an encrypted team
  - Group error: everyone in clear; they indicated they were encrypted, and no one noticed they weren’t
  - Keying failure: one member of the group did not have the key, so everyone went to clear
- Cleartext they sampled was roughly evenly split between single/group error and keying failure.

Observations
- P25 tactical radio crypto capability is now widely deployed by federal law enforcement
- Yet Federal P25 networks still carry quite a bit of easily intercepted LE-sensitive cleartext
- Two dominant causes, each requiring different mitigating approaches
  - Accidental cleartext (about half the time)
  - Keying failure (about half the time)
- Mitigations
  - P25 protocols and products require a top-to-bottom redesign for security
  - Should not be considered reliably secure until then
  - Authors suggested some short-term solutions
