- Number of slides: 38

eScience – Grid Computing
Graduate Lecture, 9th November 2011
Robin Middleton – PPD/RAL/STFC
I am indebted to the EGEE, EGI, LCG and GridPP projects and to colleagues therein for much of the material presented here.

eScience Graduate Lecture
A high-level look at some aspects of computing for particle physics today
- What is eScience, what is the Grid?
- Essential grid components
- Grids in HEP
- The wider picture
- Summary

What is eScience?
- ...also: e-Infrastructure, cyberinfrastructure, e-Research, ...
- Includes
  - grid computing (e.g. WLCG, EGEE, EGI, OSG, TeraGrid, NGS...)
    - computationally and/or data intensive; highly distributed over wide area
  - digital curation
  - digital libraries
  - collaborative tools (e.g. Access Grid)
  - ...other areas
- Most UK Research Councils active in e-Science
  - BBSRC
  - NERC (e.g. climate studies, NERC DataGrid - http://ndg.nerc.ac.uk/)
  - ESRC (e.g. NCeSS - http://www.merc.ac.uk/)
  - AHRC (e.g. studies in collaborative performing arts)
  - EPSRC (e.g. MyGrid - http://www.mygrid.org.uk/)
  - STFC (e.g. GridPP - http://www.gridpp.ac.uk/)

eScience – year ~2000
- Professor Sir John Taylor, former (1999-2003) Director General of the UK Research Councils, defined eScience thus:
  - ‘science increasingly done through distributed global collaborations enabled by the internet, using very large data collections, terascale computing resources and high performance visualisation’.
- Further quotes from Professor Taylor:
  - ‘e-Science is about global collaboration in key areas of science, and the next generation of infrastructure that will enable it.’
  - ‘e-Science will change the dynamic of the way science is undertaken.’

What is Grid Computing?
- Grid Computing
  - term invented in the 1990s as a metaphor for making computer power as easy to access as the electric power grid (Foster & Kesselman, "The Grid: Blueprint for a New Computing Infrastructure")
  - combines computing resources from multiple administrative domains
    - CPU and storage... loosely coupled
  - serves the needs of one or more virtual organisations (e.g. LHC experiments)
  - different from
    - Cloud Computing (e.g. Amazon Elastic Compute Cloud - http://aws.amazon.com/ec2/)
    - Volunteer Computing (SETI@home, LHC@home - http://boinc.berkeley.edu/projects.php)

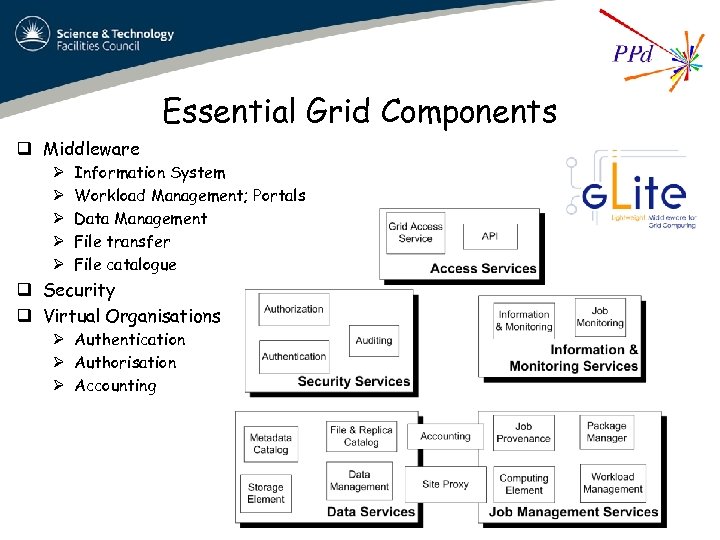

Essential Grid Components
- Middleware
  - Information System
  - Workload Management; Portals
  - Data Management
    - File transfer
    - File catalogue
- Security
  - Authentication
  - Authorisation
  - Accounting
- Virtual Organisations

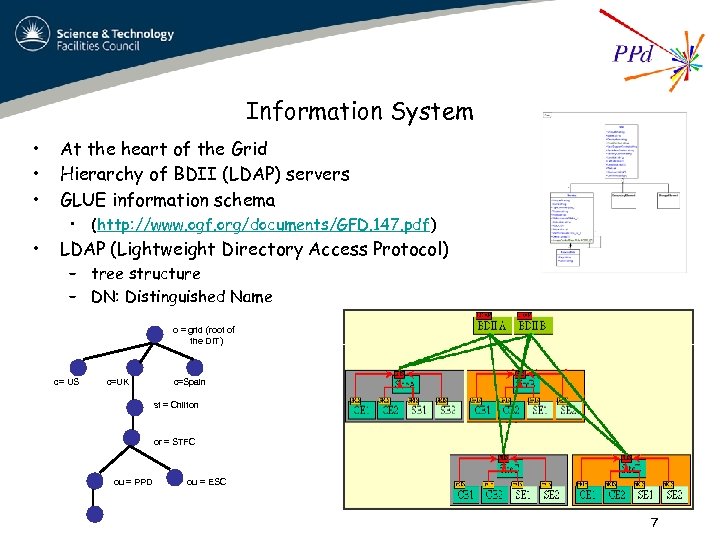

Information System
- At the heart of the Grid
- Hierarchy of BDII (LDAP) servers
- GLUE information schema (http://www.ogf.org/documents/GFD.147.pdf)
- LDAP (Lightweight Directory Access Protocol)
  - tree structure
  - DN: Distinguished Name
- Example tree
  - o = grid (root of the DIT)
    - c = US
    - c = UK
      - st = Chilton
        - or = STFC
          - ou = PPD
          - ou = ESC
    - c = Spain
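
The relationship between the tree and a Distinguished Name can be illustrated with a short sketch. It does not query a real BDII; it only shows how a DN is read off the tree by walking from an entry up to the root. The `PARENT` table and the `distinguished_name` helper are hypothetical names, the nesting of the entries is assumed from the slide's example, and treating every RDN as unique across the tree is a simplification.

```python
# Hypothetical in-memory copy of the example tree: each RDN maps to its parent RDN.
# The attribute names are written as they appear on the slide.
PARENT = {
    "o=grid": None,          # root of the DIT
    "c=US": "o=grid",
    "c=UK": "o=grid",
    "c=Spain": "o=grid",
    "st=Chilton": "c=UK",
    "or=STFC": "st=Chilton",
    "ou=PPD": "or=STFC",
    "ou=ESC": "or=STFC",
}


def distinguished_name(rdn):
    """Build a DN by walking from the entry up to the root (leaf first)."""
    parts = []
    while rdn is not None:
        parts.append(rdn)
        rdn = PARENT[rdn]
    return ",".join(parts)


print(distinguished_name("ou=PPD"))  # ou=PPD,or=STFC,st=Chilton,c=UK,o=grid
```

The DN is written leaf first, so the most specific component comes at the left and the root of the tree at the right.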

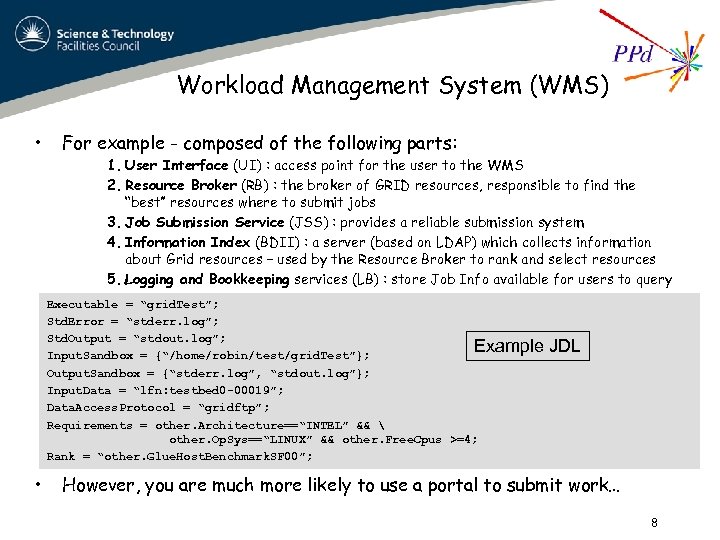

Workload Management System (WMS)
- For example, composed of the following parts:
  1. User Interface (UI): access point for the user to the WMS
  2. Resource Broker (RB): the broker of Grid resources, responsible for finding the "best" resources to which jobs are submitted
  3. Job Submission Service (JSS): provides a reliable submission system
  4. Information Index (BDII): a server (based on LDAP) which collects information about Grid resources; used by the Resource Broker to rank and select resources
  5. Logging and Bookkeeping services (LB): store job information, available for users to query
- Example JDL (Job Description Language):

    Executable = "gridTest";
    StdError = "stderr.log";
    StdOutput = "stdout.log";
    InputSandbox = {"/home/robin/test/gridTest"};
    OutputSandbox = {"stderr.log", "stdout.log"};
    InputData = "lfn:testbed0-00019";
    DataAccessProtocol = "gridftp";
    Requirements = other.Architecture=="INTEL" && other.OpSys=="LINUX" && other.FreeCpus >=4;
    Rank = "other.GlueHostBenchmarkSF00";

- However, you are much more likely to use a portal to submit work...
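
How a broker might use the `Requirements` and `Rank` expressions can be sketched in a few lines of Python. This is an illustration of the matchmaking idea only, not the WMS implementation: the resource records, their names (`ce-a` and so on) and the `match` helper are all hypothetical, and the attribute values are made up.

```python
# Hypothetical resource records, as a broker might read them from the information index.
resources = [
    {"name": "ce-a", "Architecture": "INTEL", "OpSys": "LINUX",
     "FreeCpus": 8, "GlueHostBenchmarkSF00": 1200},
    {"name": "ce-b", "Architecture": "INTEL", "OpSys": "LINUX",
     "FreeCpus": 2, "GlueHostBenchmarkSF00": 2000},
    {"name": "ce-c", "Architecture": "INTEL", "OpSys": "LINUX",
     "FreeCpus": 16, "GlueHostBenchmarkSF00": 1500},
    {"name": "ce-d", "Architecture": "SPARC", "OpSys": "SOLARIS",
     "FreeCpus": 32, "GlueHostBenchmarkSF00": 900},
]


def requirements(other):
    """Python rendering of the Requirements expression in the example JDL."""
    return (other["Architecture"] == "INTEL"
            and other["OpSys"] == "LINUX"
            and other["FreeCpus"] >= 4)


def rank(other):
    """Python rendering of the Rank expression: prefer the higher benchmark value."""
    return other["GlueHostBenchmarkSF00"]


def match(candidates):
    """Drop resources that fail the requirements, then order the rest best first."""
    suitable = [r for r in candidates if requirements(r)]
    return sorted(suitable, key=rank, reverse=True)


print([r["name"] for r in match(resources)])  # ['ce-c', 'ce-a']
```

Here `ce-b` has the best benchmark but too few free CPUs, and `ce-d` has the wrong architecture, so only two resources survive the filter before ranking.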

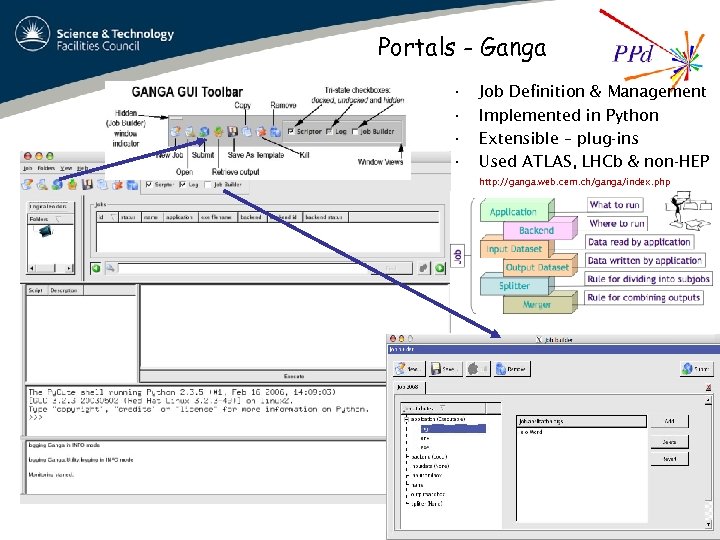

Portals - Ganga
- Job Definition & Management
- Implemented in Python
- Extensible: plug-ins
- Used by ATLAS, LHCb & non-HEP
- http://ganga.web.cern.ch/ganga/index.php

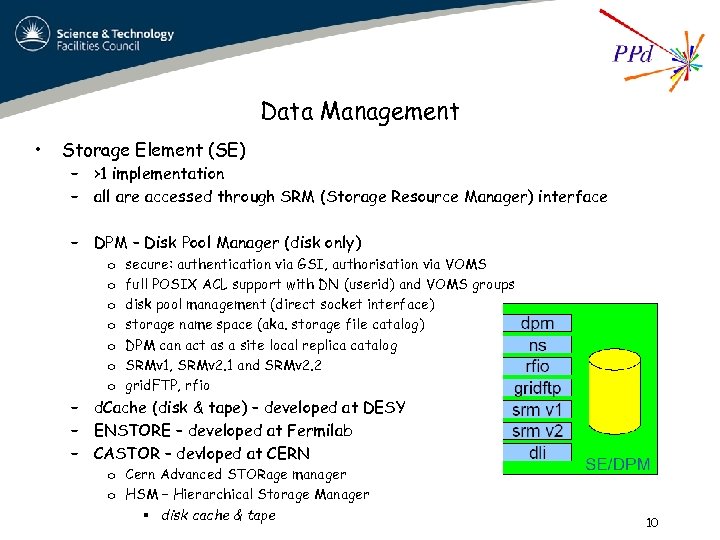

Data Management
- Storage Element (SE)
  - more than one implementation
  - all are accessed through the SRM (Storage Resource Manager) interface
  - DPM: Disk Pool Manager (disk only)
    - secure: authentication via GSI, authorisation via VOMS
    - full POSIX ACL support with DN (userid) and VOMS groups
    - disk pool management (direct socket interface)
    - storage name space (aka storage file catalog)
    - DPM can act as a site local replica catalog
    - SRMv1, SRMv2.1 and SRMv2.2
    - gridFTP, rfio
  - dCache (disk & tape): developed at DESY
  - ENSTORE: developed at Fermilab
  - CASTOR: developed at CERN
    - CERN Advanced STORage manager
    - HSM: Hierarchical Storage Manager
      - disk cache & tape

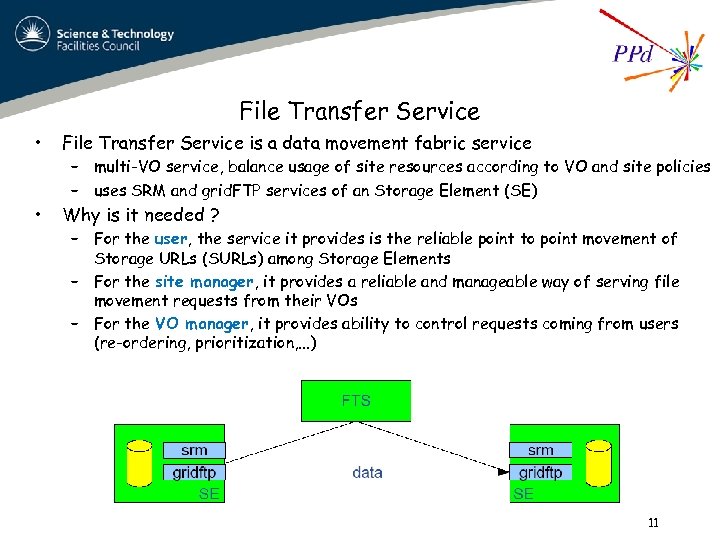

File Transfer Service
- File Transfer Service is a data movement fabric service
  - multi-VO service, balances usage of site resources according to VO and site policies
  - uses the SRM and gridFTP services of a Storage Element (SE)
- Why is it needed?
  - For the user, the service it provides is the reliable point to point movement of Storage URLs (SURLs) among Storage Elements
  - For the site manager, it provides a reliable and manageable way of serving file movement requests from their VOs
  - For the VO manager, it provides the ability to control requests coming from users (re-ordering, prioritization, ...)
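
The idea of balancing transfer slots between VOs according to a policy can be sketched as follows. This is a toy share-based scheduler and not the algorithm used by the real File Transfer Service; the `schedule` function, the share values and the transfer names are all hypothetical.

```python
from collections import deque


def schedule(queues, shares, slots):
    """Hand out up to `slots` transfers, favouring the VO furthest below its share."""
    served = {vo: 0 for vo in queues}
    order = []
    for _ in range(slots):
        waiting = [vo for vo in queues if queues[vo]]
        if not waiting:
            break
        # Lowest served/share ratio goes first; ties are broken by VO name.
        vo = min(waiting, key=lambda v: (served[v] / shares[v], v))
        order.append((vo, queues[vo].popleft()))
        served[vo] += 1
    return order


# Hypothetical per-VO queues of pending transfers and a 2:1 site policy.
queues = {"atlas": deque(["a1", "a2", "a3"]), "lhcb": deque(["l1", "l2"])}
shares = {"atlas": 2, "lhcb": 1}
print(schedule(queues, shares, 4))
# [('atlas', 'a1'), ('lhcb', 'l1'), ('atlas', 'a2'), ('atlas', 'a3')]
```

Within each VO the queue is served in order here; a VO manager's re-ordering or prioritisation would correspond to changing the order of that VO's own queue before scheduling.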

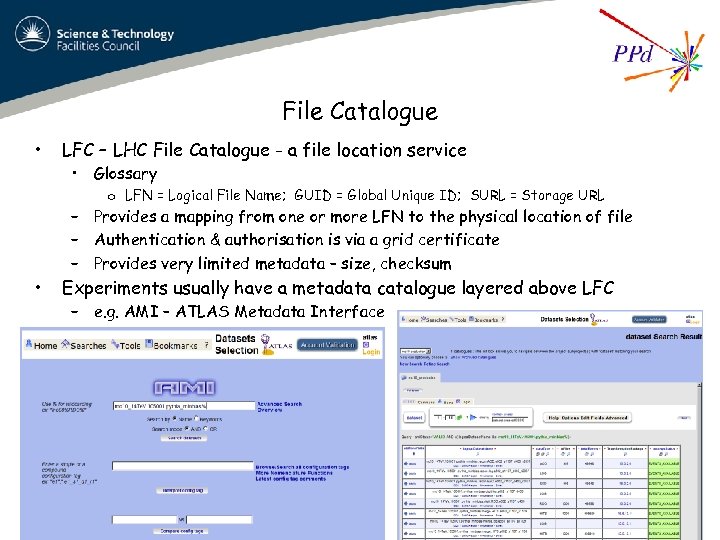

File Catalogue
- LFC: LCG File Catalogue, a file location service
  - Provides a mapping from one or more LFNs to the physical location of a file
  - Authentication & authorisation is via a grid certificate
  - Provides very limited metadata: size, checksum
- Glossary
  - LFN = Logical File Name; GUID = Global Unique ID; SURL = Storage URL
- Experiments usually have a metadata catalogue layered above LFC
  - e.g. AMI: ATLAS Metadata Interface
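
The mapping described above (several LFNs pointing to one GUID, and one GUID pointing to one or more SURLs) can be sketched with two dictionaries. This is a data-structure illustration only and does not use the LFC client API; the file names, the GUID, the SURLs and the `list_replicas` helper are all made up for the example.

```python
# Hypothetical catalogue contents.
lfn_to_guid = {
    "lfn:/grid/demo/run1.root": "guid-0001",
    "lfn:/grid/demo/alias-run1.root": "guid-0001",  # a second LFN for the same file
}

guid_to_entry = {
    "guid-0001": {
        # The only metadata kept at this level: size and checksum.
        "size": 1024,
        "checksum": "0a1b2c3d",
        # Physical replicas of the file on different Storage Elements.
        "surls": [
            "srm://se.site-a.example/demo/run1.root",
            "srm://se.site-b.example/demo/run1.root",
        ],
    },
}


def list_replicas(lfn):
    """Resolve a logical file name to the storage URLs of its replicas."""
    guid = lfn_to_guid[lfn]
    return list(guid_to_entry[guid]["surls"])


print(list_replicas("lfn:/grid/demo/alias-run1.root"))
```

Both logical names resolve to the same two replicas, which is the sense in which the catalogue is a file location service rather than a metadata store.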

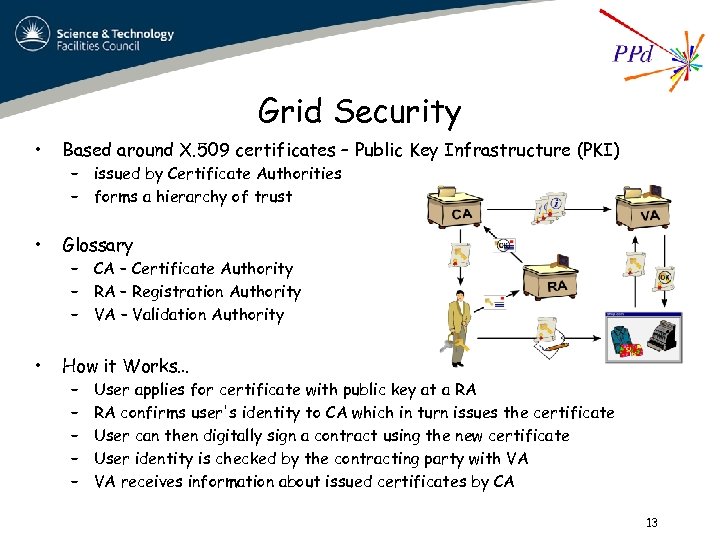

Grid Security
- Based around X.509 certificates – Public Key Infrastructure (PKI)
  - issued by Certificate Authorities
  - forms a hierarchy of trust
- Glossary
  - CA: Certificate Authority
  - RA: Registration Authority
  - VA: Validation Authority
- How it works...
  - User applies for a certificate with public key at an RA
  - RA confirms user's identity to CA, which in turn issues the certificate
  - User can then digitally sign a contract using the new certificate
  - User identity is checked by the contracting party with VA
  - VA receives information about issued certificates from the CA
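
The "hierarchy of trust" can be illustrated by following issuer links from a user certificate up to a trusted root. The sketch below deliberately omits the cryptographic signature and revocation checks that real X.509 validation performs; it only walks the chain of names. The subject names and the `trust_path` helper are hypothetical.

```python
def trust_path(subject, issued_by, trusted_roots):
    """Return the names from `subject` up to a trusted root, or None if none is reached."""
    path = [subject]
    while path[-1] not in trusted_roots:
        issuer = issued_by.get(path[-1])
        if issuer is None or issuer in path:
            return None  # unknown issuer, or a loop that never reaches a trusted root
        path.append(issuer)
    return path


# Hypothetical certificates: who issued whom (the root is self-signed).
issued_by = {
    "CN=Example User": "CN=Example Grid CA",
    "CN=Example Grid CA": "CN=Example Root CA",
    "CN=Example Root CA": "CN=Example Root CA",
}
trusted_roots = {"CN=Example Root CA"}

print(trust_path("CN=Example User", issued_by, trusted_roots))
print(trust_path("CN=Unknown User", issued_by, trusted_roots))  # None
```

A relying party accepts the user certificate only if such a path exists; in a real deployment every link would also be checked by verifying the issuer's signature.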

Virtual Organisations
- Aggregation of groups (& individuals) sharing use of (distributed) resources to a common end under an agreed set of policies
  - a semi-informal structure orthogonal to normal institutional allegiances
  - e.g. a HEP experiment
- Grid Policies
  - Acceptable use; Grid Security; New VO registration
  - http://proj-lcg-security.web.cern.ch/proj-lcg-security/security_policy.html
- VO specific environment
  - experiment libraries, databases, ...
  - resource sites declare which VOs they will support

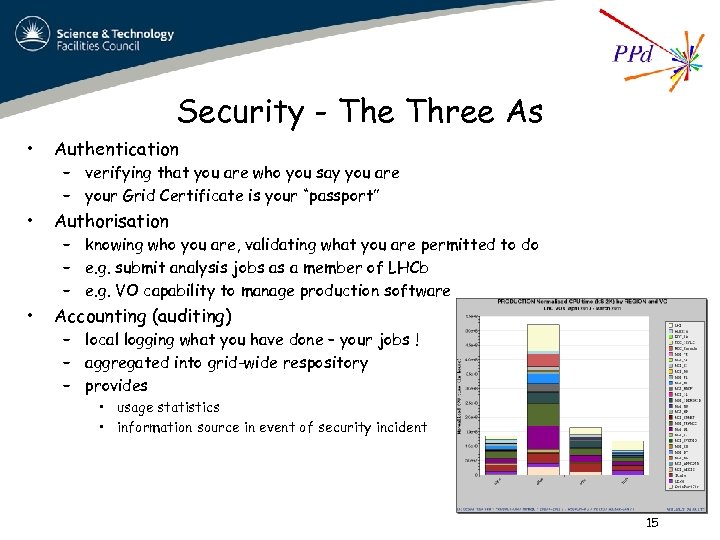

Security - The Three As
- Authentication
  - verifying that you are who you say you are
  - your Grid Certificate is your "passport"
- Authorisation
  - knowing who you are, validating what you are permitted to do
  - e.g. submit analysis jobs as a member of LHCb
  - e.g. VO capability to manage production software
- Accounting (auditing)
  - local logging of what you have done: your jobs!
  - aggregated into grid-wide repository
  - provides
    - usage statistics
    - information source in event of security incident
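
The order in which the three checks apply to a request can be sketched as a single function. This is a toy model under stated assumptions, not grid middleware: identities are plain strings rather than verified certificates, and `known_identities`, `vo_rights`, `accounting_log` and `handle_request` are hypothetical names.

```python
known_identities = {"CN=Example User"}  # hypothetical certificate subject

# Hypothetical rights granted to a subject within a VO.
vo_rights = {
    ("lhcb", "CN=Example User"): {"submit-analysis-job"},
}

accounting_log = []  # local record of accepted work


def handle_request(subject, vo, action):
    # Authentication: are you who you say you are?
    if subject not in known_identities:
        return "rejected: not authenticated"
    # Authorisation: are you permitted to do this as a member of this VO?
    if action not in vo_rights.get((vo, subject), set()):
        return "rejected: not authorised"
    # Accounting: record what was done.
    accounting_log.append((subject, vo, action))
    return "accepted"


print(handle_request("CN=Example User", "lhcb", "submit-analysis-job"))
print(handle_request("CN=Example User", "lhcb", "manage-production-software"))
print(handle_request("CN=Someone Else", "lhcb", "submit-analysis-job"))
```

Only the first request is accepted and logged; the second fails authorisation and the third fails authentication, so neither reaches the accounting step.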

Grids in HEP
- LCG; EGEE & EGI Projects
- GridPP
- The LHC Computing Grid
  - Tiers 0, 1, 2
  - The LHC OPN
  - Experiment Computing Models
  - Typical data access patterns
- Monitoring
  - Resource provider's view
  - VO view
  - End-user view

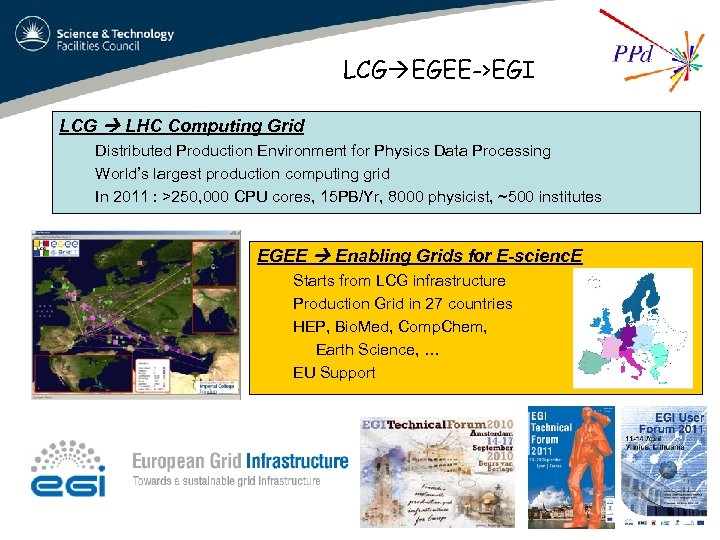

LCG, EGEE -> EGI
- LCG: LHC Computing Grid
  - Distributed Production Environment for Physics Data Processing
  - World's largest production computing grid
  - In 2011: >250,000 CPU cores, 15 PB/yr, 8000 physicists, ~500 institutes
- EGEE: Enabling Grids for E-sciencE
  - Starts from LCG infrastructure
  - Production Grid in 27 countries
  - HEP, BioMed, CompChem, Earth Science, ...
  - EU Support

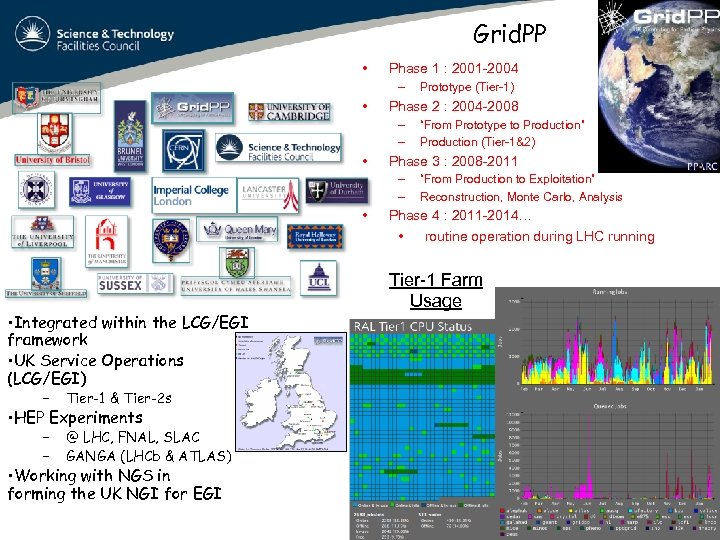

GridPP
- Phase 1: 2001-2004
  - Prototype (Tier-1)
- Phase 2: 2004-2008
  - "From Prototype to Production"
  - Production (Tier-1&2)
- Phase 3: 2008-2011
  - "From Production to Exploitation"
  - Reconstruction, Monte Carlo, Analysis
- Phase 4: 2011-2014...
  - routine operation during LHC running
- Tier-1 Farm Usage
- Tier-1 & Tier-2s
- Integrated within the LCG/EGI framework
- UK Service Operations (LCG/EGI)
- HEP Experiments @ LHC, FNAL, SLAC
- GANGA (LHCb & ATLAS)
- Working with NGS in forming the UK NGI for EGI

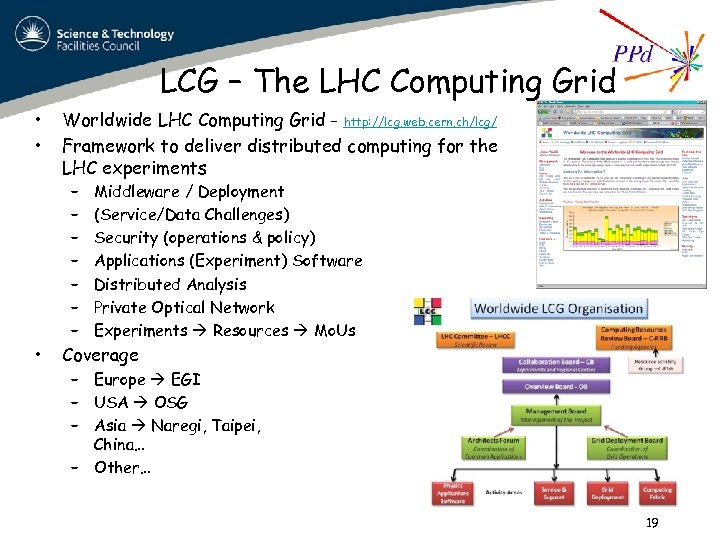

LCG – The LHC Computing Grid
- Worldwide LHC Computing Grid - http://lcg.web.cern.ch/lcg/
- Framework to deliver distributed computing for the LHC experiments
  - Middleware / Deployment
  - (Service/Data Challenges)
  - Security (operations & policy)
  - Applications (Experiment) Software
  - Distributed Analysis
  - Private Optical Network
  - Experiments Resources MoUs
- Coverage
  - Europe: EGI
  - USA: OSG
  - Asia: Naregi, Taipei, China...
  - Other...

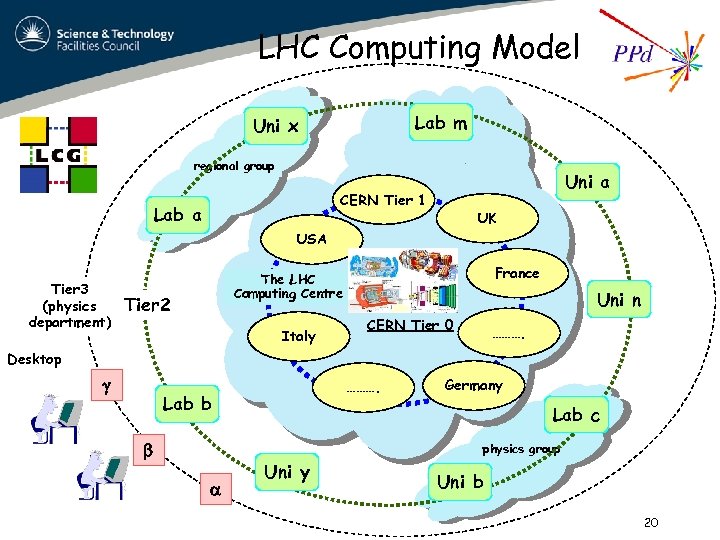

LHC Computing Model
[Figure: tiered model, "The LHC Computing Centre". Labels: CERN Tier 0; CERN Tier 1; Tier 1 (UK, USA, France, Italy, Germany, ...); Tier 2 (Lab a, Lab b, Lab c, Lab m, Uni a, Uni b, Uni n, Uni x, Uni y; regional group); Tier 3 (physics department); physics group; Desktop.]

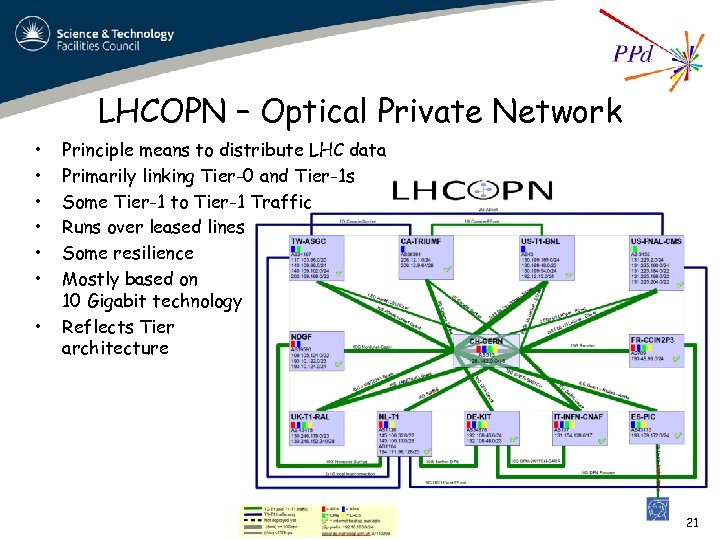

LHCOPN – Optical Private Network
- Principal means to distribute LHC data
- Primarily linking Tier-0 and Tier-1s
- Some Tier-1 to Tier-1 traffic
- Runs over leased lines
- Some resilience
- Mostly based on 10 Gigabit technology
- Reflects Tier architecture
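
A rough feel for what 10 Gigabit links mean for LHC-scale data comes from a simple transfer-time estimate. The sketch below assumes decimal units (1 PB = 10^15 bytes, 10 Gbit/s = 10^10 bits/s) and, by default, a perfectly utilised link with no protocol overhead, which real transfers will not achieve; the `transfer_days` helper and its `efficiency` parameter are hypothetical.

```python
def transfer_days(volume_bytes, link_bits_per_s, efficiency=1.0):
    """Days needed to move `volume_bytes` over one link at the given utilisation."""
    seconds = volume_bytes * 8 / (link_bits_per_s * efficiency)
    return seconds / 86400


PB = 1e15         # bytes, decimal petabyte (assumption)
TEN_GBIT = 10e9   # bits per second

print(round(transfer_days(1 * PB, TEN_GBIT), 2))   # 9.26
print(round(transfer_days(15 * PB, TEN_GBIT), 1))  # 138.9
```

Under these idealised assumptions one petabyte takes a little over nine days on a single link, and the 15 PB/yr quoted for LCG in 2011 would occupy one such link for about 139 days.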

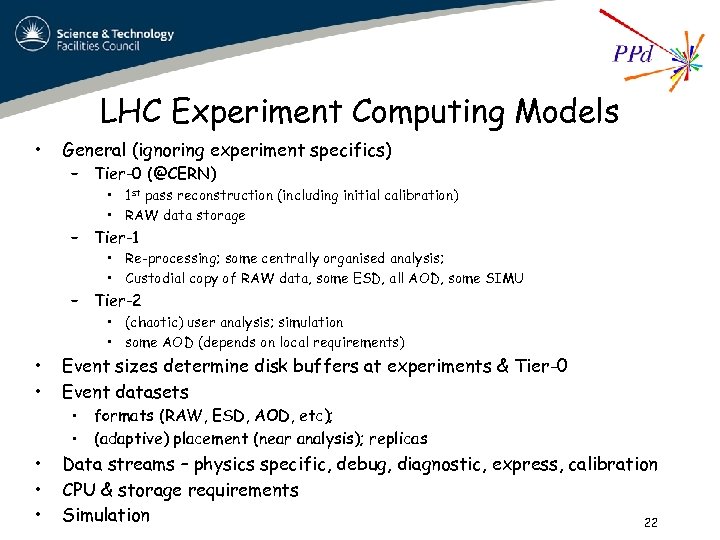

LHC Experiment Computing Models
- General (ignoring experiment specifics)
  - Tier-0 (@CERN)
    - 1st pass reconstruction (including initial calibration)
    - RAW data storage
  - Tier-1
    - Re-processing; some centrally organised analysis
    - Custodial copy of RAW data, some ESD, all AOD, some SIMU
  - Tier-2
    - (chaotic) user analysis; simulation
    - some AOD (depends on local requirements)
- Event sizes determine disk buffers at experiments & Tier-0
- Event datasets
  - formats (RAW, ESD, AOD, etc.)
  - (adaptive) placement (near analysis); replicas
- Data streams: physics specific, debug, diagnostic, express, calibration
- CPU & storage requirements
- Simulation

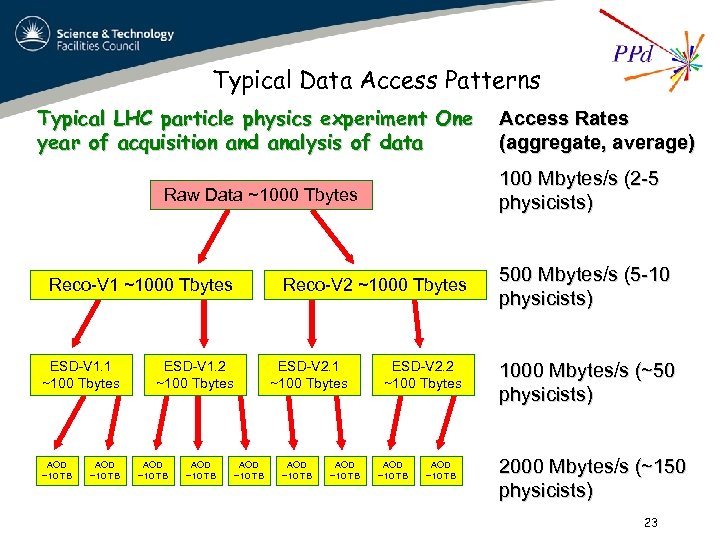

Typical Data Access Patterns
Typical LHC particle physics experiment: one year of acquisition and analysis of data
- Raw Data: ~1000 Tbytes
- Reco-V1, Reco-V2: ~1000 Tbytes each
- ESD-V1.1, ESD-V1.2, ESD-V2.1, ESD-V2.2: ~100 Tbytes each
- AOD (four instances): ~10 TB each
- Access rates (aggregate, average)
  - 100 Mbytes/s (2-5 physicists)
  - 500 Mbytes/s (5-10 physicists)
  - 1000 Mbytes/s (~50 physicists)
  - 2000 Mbytes/s (~150 physicists)
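
The figures on this slide lend themselves to a quick back-of-the-envelope check. The sketch below adds up the dataset volumes listed and estimates how long one pass over a ~1000 Tbyte dataset would take at 100 Mbytes/s. Decimal units are assumed, and pairing that particular rate with that particular dataset is an illustrative choice rather than something the flattened figure states; `datasets` and `days_to_read` are hypothetical names.

```python
TB = 1e12  # bytes per Tbyte, decimal units (assumption)
MB = 1e6   # bytes per Mbyte, decimal units (assumption)

# (number of versions, size of each in Tbytes), as listed on the slide
datasets = {
    "RAW": (1, 1000),
    "Reco": (2, 1000),
    "ESD": (4, 100),
    "AOD": (4, 10),
}

total_tb = sum(count * size for count, size in datasets.values())


def days_to_read(volume_tb, rate_mb_per_s):
    """Days needed for one sequential pass over the data at a sustained rate."""
    seconds = volume_tb * TB / (rate_mb_per_s * MB)
    return seconds / 86400


print(total_tb)                            # 3440
print(round(days_to_read(1000, 100), 1))   # 115.7
```

One year of data therefore amounts to roughly 3.4 Pbytes across all formats, and a single pass over 1000 Tbytes at 100 Mbytes/s takes about a third of a year, which is one reason most analysis runs on the much smaller ESD and AOD formats.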

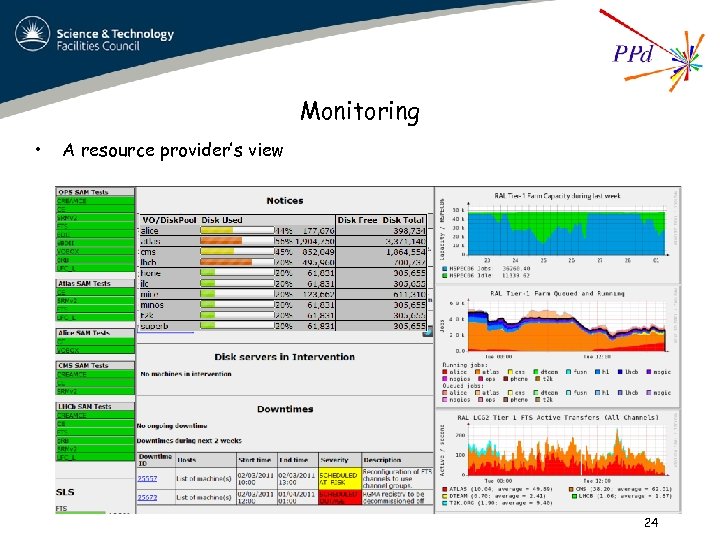

Monitoring
- A resource provider's view

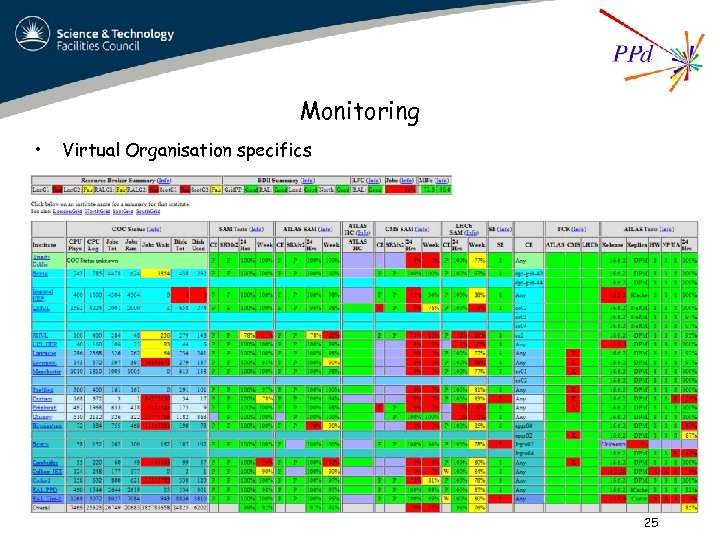

Monitoring
- Virtual Organisation specifics

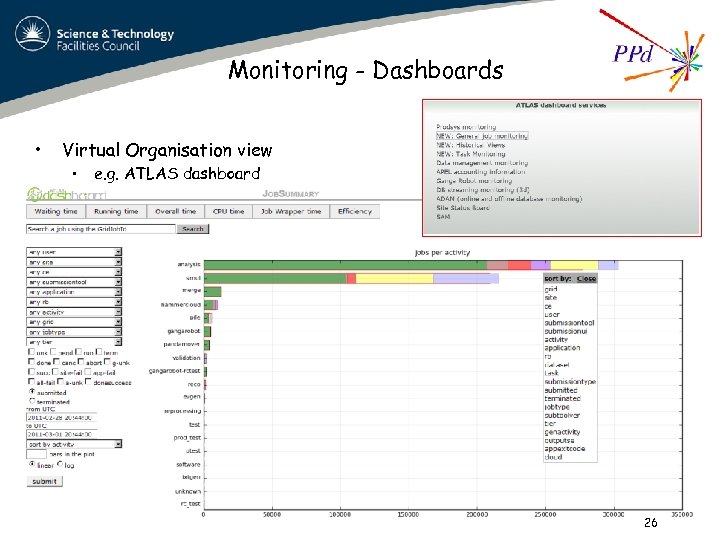

Monitoring - Dashboards
- Virtual Organisation view
- e.g. ATLAS dashboard

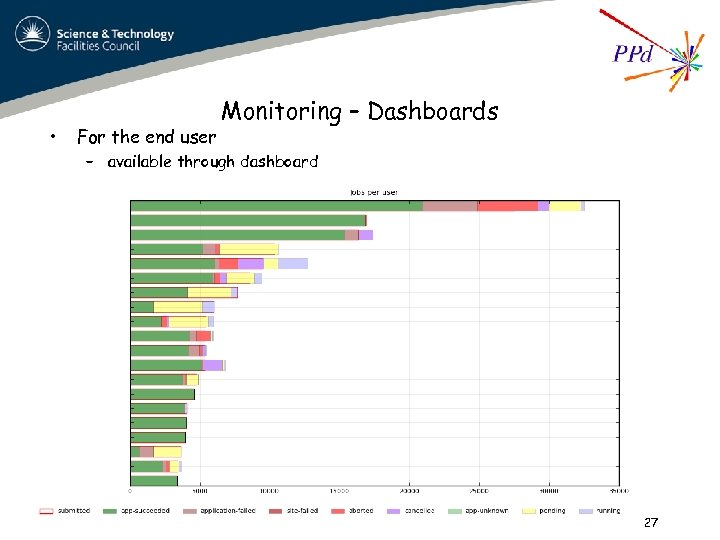

Monitoring - Dashboards
- For the end user
  - available through dashboard

The wider Picture
- What some other communities do with Grids
- The ESFRI projects
- Virtual Instruments
- Digital Curation
- Clouds
- Volunteer Computing
- Virtualisation

What are other communities doing with grids?
- Astronomy & Astrophysics
  - large-scale data acquisition, simulation, data storage/retrieval
- Computational Chemistry
  - use of software packages (incl. commercial) on EGEE
- Earth Sciences
  - Seismology, Atmospheric modeling, Meteorology, Flood forecasting, Pollution
- Fusion (build up to ITER)
  - Ion Kinetic Transport, Massive Ray Tracing, Stellarator Optimization
- Computer Science
  - collect data on Grid behaviour (Grid Observatory)
- High Energy Physics
  - four LHC experiments, BaBar, D0, CDF, Lattice QCD, Geant4, SixTrack, ...
- Life Sciences
  - Medical Imaging, Bioinformatics, Drug discovery
  - WISDOM: drug discovery for neglected / emergent diseases (malaria, H5N1, ...)

ESFRI Projects (European Strategy Forum on Research Infrastructures)
- Many are starting to look at their e-Science needs
  - some at a similar scale to the LHC (petascale)
  - project design study stage
  - http://cordis.europa.eu/esfri/
- Example: Cherenkov Telescope Array

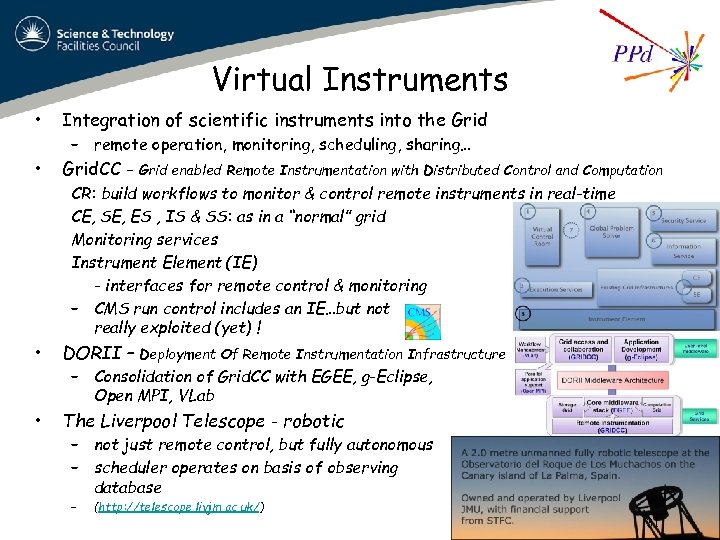

Virtual Instruments
- Integration of scientific instruments into the Grid
  - remote operation, monitoring, scheduling, sharing...
- GridCC: Grid enabled Remote Instrumentation with Distributed Control and Computation
  - CR: build workflows to monitor & control remote instruments in real-time
  - CE, SE, ES, IS & SS: as in a "normal" grid
  - Monitoring services
  - Instrument Element (IE): interfaces for remote control & monitoring
  - CMS run control includes an IE... but not really exploited (yet)!
- DORII: Deployment Of Remote Instrumentation Infrastructure
  - Consolidation of GridCC with EGEE, g-Eclipse, Open MPI, VLab
- The Liverpool Telescope - robotic
  - not just remote control, but fully autonomous
  - scheduler operates on basis of observing database
  - (http://telescope.livjm.ac.uk/)

Digital Curation
- Preservation of digital research data for future use
- Issues
  - media; data formats; metadata; data management tools; reading (FORTRAN); ...
  - digital curation lifecycle - http://www.dcc.ac.uk/digital-curation/what-digital-curation
- Digital Curation Centre - http://www.dcc.ac.uk/
  - NOT a repository!
  - strategic leadership
  - influence national (international) policy
  - expert advice for both users and funders
  - maintains suite of resources and tools
  - raise levels of awareness and expertise

JADE (1978-86)
- New results from old data
  - new & improved theoretical calculations & MC models; optimised observables
  - better understanding of Standard Model (top, W, Z)
  - re-do measurements: better precision, better systematics
  - new measurements, but at (lower) energies not available today
  - new phenomena: check at lower energies
- Challenges
  - rescue data from (very) old media; resurrect old software; data management; implement modern analysis techniques
  - but, luminosity files lost; recovered from ASCII printout in an office cleanup
- Since 1996
  - ~10 publications (as recent as 2009)
  - ~10 conference contributions
  - a few PhD theses
- (ack S. Bethke)

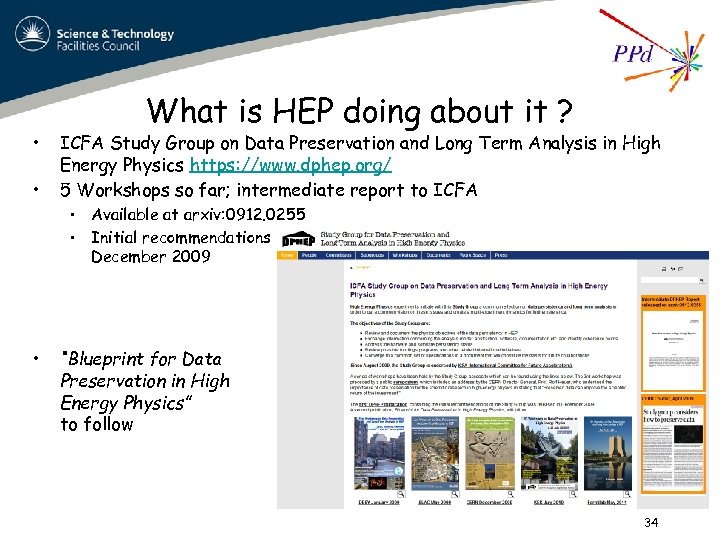

What is HEP doing about it?
- ICFA Study Group on Data Preservation and Long Term Analysis in High Energy Physics
- https://www.dphep.org/
- 5 workshops so far; intermediate report to ICFA
  - Available at arXiv:0912.0255
  - Initial recommendations December 2009
- "Blueprint for Data Preservation in High Energy Physics" to follow

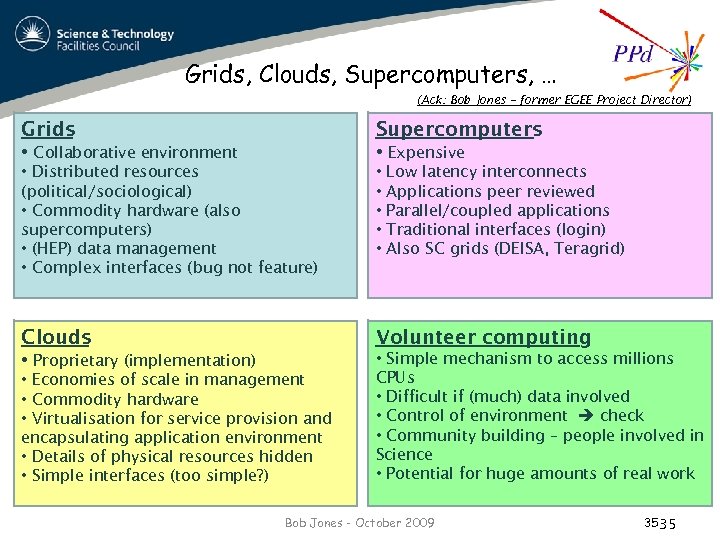

Grids, Clouds, Supercomputers, ...
(Ack: Bob Jones, former EGEE Project Director; October 2009)
- Grids
  - Collaborative environment
  - Distributed resources (political/sociological)
  - Commodity hardware (also supercomputers)
  - (HEP) data management
  - Complex interfaces (bug not feature)
- Supercomputers
  - Expensive
  - Low latency interconnects
  - Applications peer reviewed
  - Parallel/coupled applications
  - Traditional interfaces (login)
  - Also SC grids (DEISA, Teragrid)
- Clouds
  - Proprietary (implementation)
  - Economies of scale in management
  - Commodity hardware
  - Virtualisation for service provision and encapsulating application environment
  - Details of physical resources hidden
  - Simple interfaces (too simple?)
- Volunteer computing
  - Simple mechanism to access millions of CPUs
  - Difficult if (much) data involved
  - Control of environment check
  - Community building: people involved in Science
  - Potential for huge amounts of real work

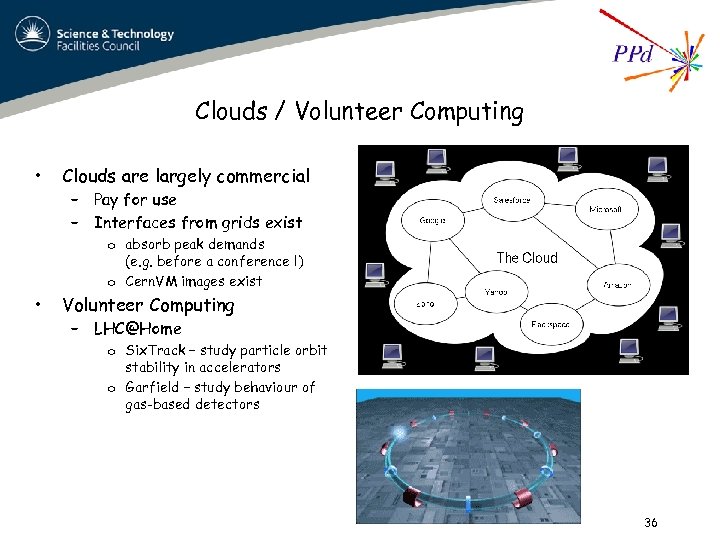

Clouds / Volunteer Computing
- Clouds are largely commercial
  - Pay for use
  - Interfaces from grids exist
    - absorb peak demands (e.g. before a conference!)
    - CernVM images exist
- Volunteer Computing
  - LHC@Home
    - SixTrack: study particle orbit stability in accelerators
    - Garfield: study behaviour of gas-based detectors

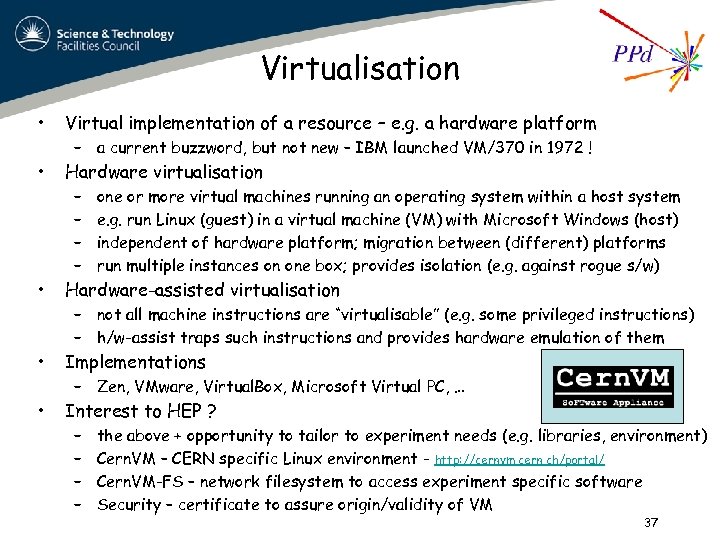

Virtualisation
- Virtual implementation of a resource, e.g. a hardware platform
  - a current buzzword, but not new: IBM launched VM/370 in 1972!
- Hardware virtualisation
  - one or more virtual machines running an operating system within a host system
  - e.g. run Linux (guest) in a virtual machine (VM) with Microsoft Windows (host)
  - independent of hardware platform; migration between (different) platforms
  - run multiple instances on one box; provides isolation (e.g. against rogue s/w)
- Hardware-assisted virtualisation
  - not all machine instructions are "virtualisable" (e.g. some privileged instructions)
  - h/w-assist traps such instructions and provides hardware emulation of them
- Implementations
  - Xen, VMware, VirtualBox, Microsoft Virtual PC, ...
- Interest to HEP?
  - the above + opportunity to tailor to experiment needs (e.g. libraries, environment)
  - CernVM: CERN specific Linux environment - http://cernvm.cern.ch/portal/
  - CernVM-FS: network filesystem to access experiment specific software
  - Security: certificate to assure origin/validity of VM

Summary
- What is eScience about and what are Grids
- Essential components of a Grid
  - middleware
  - virtual organisations
- Grids in HEP
  - LHC Computing Grid
- A look outside HEP
  - examples of what others are doing
