
- Number of slides: 20

CC-IN2P3, a Tier-1 for W-LCG

Dominique Boutigny, December 12, 2006

1st Chinese-French Workshop on LHC Physics and associated Grid Computing, IHEP, Beijing

CC-IN2P3 presentation (1)

CC-IN2P3 provides computing resources for the whole French community working on:

- Particle Physics
- Nuclear Physics
- Astro-Particle Physics

CC-IN2P3 is located in Villeurbanne, near Lyon.

D. Boutigny 12/12/2006

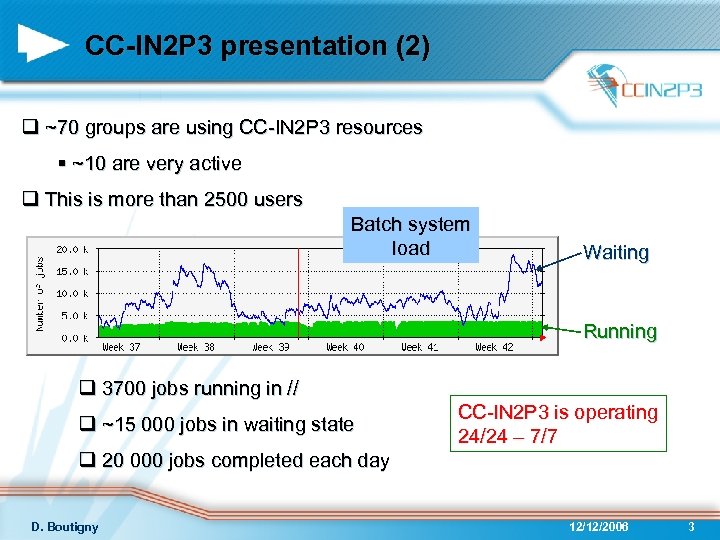

CC-IN2P3 presentation (2)

- ~70 groups use CC-IN2P3 resources
  - ~10 are very active
- This represents more than 2500 users

Batch system load (plot of waiting and running jobs):

- 3700 jobs running in parallel
- ~15 000 jobs in waiting state
- 20 000 jobs completed each day

CC-IN2P3 operates 24 hours a day, 7 days a week.

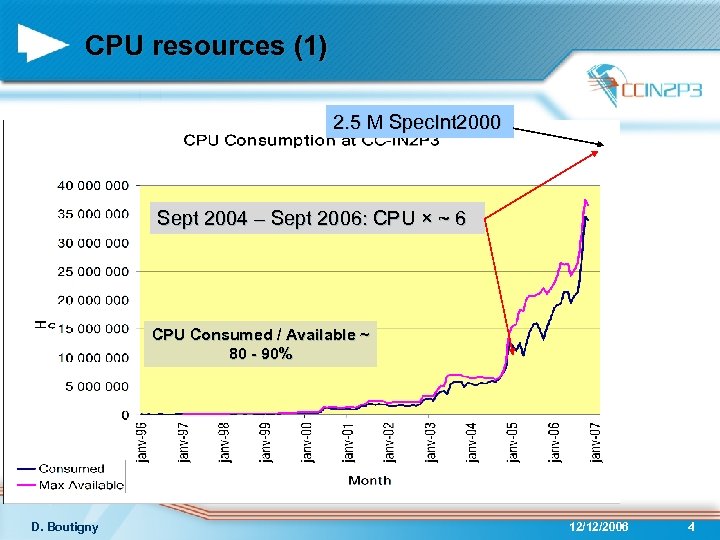
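The batch figures on the "CC-IN2P3 presentation (2)" slide can be turned into rough time scales. The sketch below applies Little's law and assumes a steady state in which jobs arrive as fast as they complete; that assumption and the variable names are ours, not the author's, so the results are order-of-magnitude estimates only.

```python
# Figures quoted on the slide.
running_jobs = 3700          # jobs running in parallel
waiting_jobs = 15_000        # jobs in the waiting state (approximate)
completed_per_day = 20_000   # jobs completed each day

# Little's law: (jobs in a stage) = (throughput) * (mean time in that stage).
# Assumption (ours): the system is in steady state, so throughput equals
# the completion rate for both the running and the waiting stage.
mean_run_hours = running_jobs / completed_per_day * 24
mean_wait_hours = waiting_jobs / completed_per_day * 24

print(f"mean run time  ~ {mean_run_hours:.1f} h")
print(f"mean wait time ~ {mean_wait_hours:.1f} h")
```

Under these assumptions a typical job runs for a few hours and waits for the better part of a day.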

CPU resources (1)

- 2.5 M SpecInt2000
- Sept 2004 to Sept 2006: CPU × ~6
- CPU consumed / available: ~80-90%

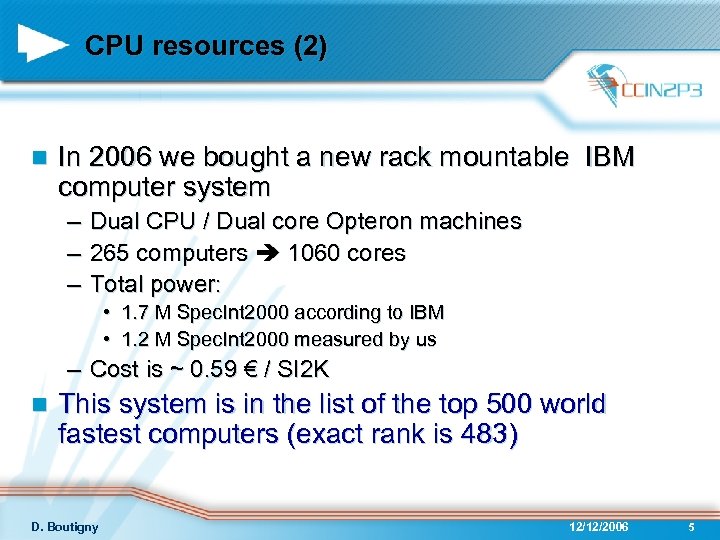
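The "CPU × ~6" figure over two years can be restated as a yearly growth factor and a doubling time. This is a minimal sketch that assumes constant exponential growth between September 2004 and September 2006, which the slide does not state.

```python
import math

growth_factor = 6.0   # CPU capacity multiplier quoted on the slide (approximate)
period_months = 24    # Sept 2004 to Sept 2006

# Assumption (ours): growth was exponential at a constant rate over the period.
annual_factor = growth_factor ** (12 / period_months)
doubling_months = period_months * math.log(2) / math.log(growth_factor)

print(f"growth per year : x{annual_factor:.2f}")
print(f"doubling time   : {doubling_months:.1f} months")
```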

CPU resources (2)

- In 2006 we bought a new rack-mountable IBM computer system
  - Dual-CPU / dual-core Opteron machines
  - 265 computers, 1060 cores
  - Total power:
    - 1.7 M SpecInt2000 according to IBM
    - 1.2 M SpecInt2000 measured by us
  - Cost is ~0.59 € / SI2K
- This system is in the TOP500 list of the world's fastest computers (exact rank: 483)

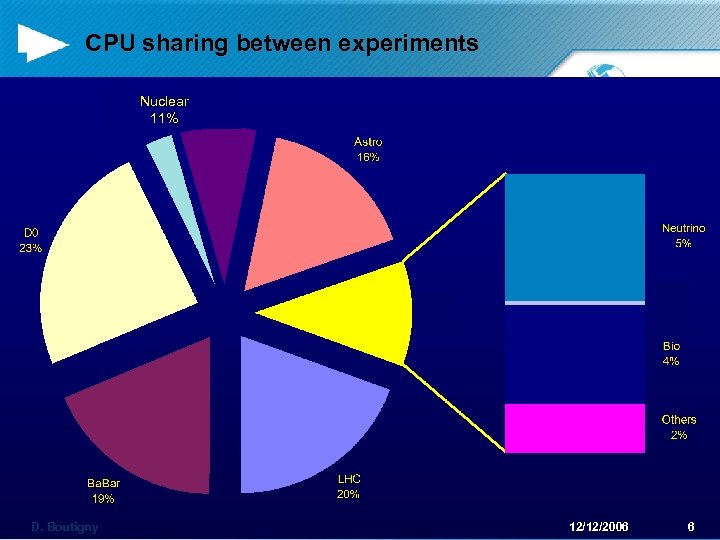
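The numbers on the "CPU resources (2)" slide are easy to cross-check. The sketch below recomputes the core count from the machine configuration and derives the measured power per core and the ratio between the measured and the vendor figures; the variable names are illustrative.

```python
machines = 265
cpus_per_machine = 2   # dual CPU
cores_per_cpu = 2      # dual core

cores = machines * cpus_per_machine * cores_per_cpu

vendor_si2k = 1.7e6    # SpecInt2000 according to IBM
measured_si2k = 1.2e6  # SpecInt2000 measured at CC-IN2P3

measured_per_core = measured_si2k / cores
measured_over_vendor = measured_si2k / vendor_si2k

print(f"cores                 : {cores}")
print(f"measured SI2K per core: {measured_per_core:.0f}")
print(f"measured / vendor     : {measured_over_vendor:.2f}")
```

The slide does not say whether the ~0.59 € / SI2K cost refers to the vendor or the measured figure, so no total price is derived here.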

CPU sharing between experiments (chart; recovered label: Nuclear 11%)

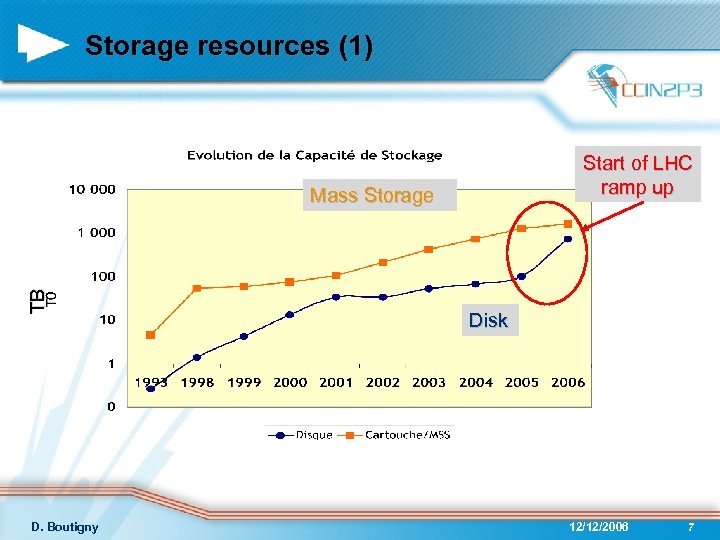

Storage resources (1)

Plot of mass storage and disk capacity in TB, with the start of the LHC ramp-up marked.

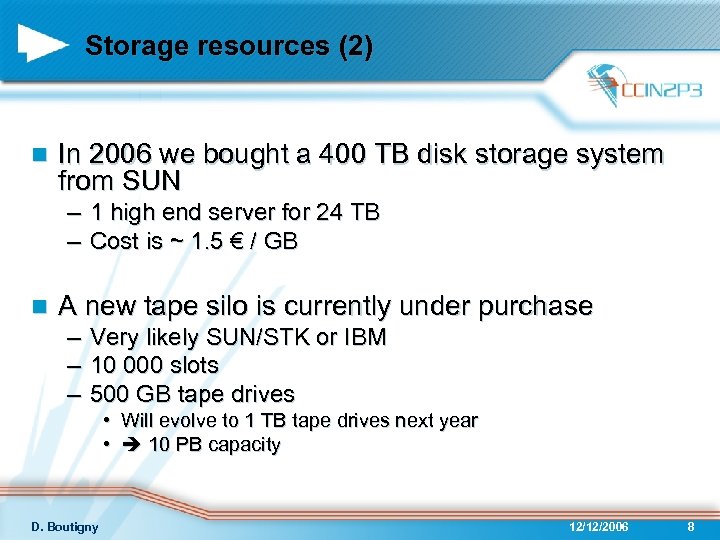

Storage resources (2)

- In 2006 we bought a 400 TB disk storage system from SUN
  - 1 high-end server for 24 TB
  - Cost is ~1.5 € / GB
- A new tape silo is currently being purchased
  - Very likely SUN/STK or IBM
  - 10 000 slots
  - 500 GB tape drives
    - Will evolve to 1 TB tape drives next year
    - 10 PB capacity

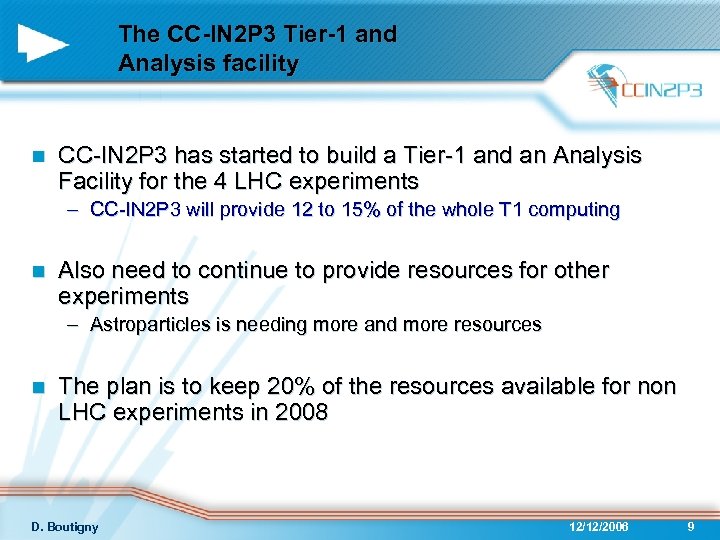
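The storage figures on the "Storage resources (2)" slide are consistent with each other, as the following sketch shows. It assumes decimal units (1 TB = 1000 GB, 1 PB = 1000 TB) and one cartridge per silo slot, with the cartridge capacity equal to the quoted 500 GB or 1 TB figure; these assumptions are ours.

```python
GB_PER_TB = 1000   # decimal units (assumption)
TB_PER_PB = 1000

slots = 10_000     # tape silo slots

def silo_capacity_pb(cartridge_gb: float) -> float:
    """Capacity of a full silo in PB, assuming one cartridge per slot."""
    return slots * cartridge_gb / GB_PER_TB / TB_PER_PB

capacity_now_pb = silo_capacity_pb(500)     # 500 GB generation
capacity_next_pb = silo_capacity_pb(1000)   # 1 TB generation

disk_tb = 400
disk_cost_per_gb_eur = 1.5                  # approximate figure from the slide
disk_cost_eur = disk_tb * GB_PER_TB * disk_cost_per_gb_eur

print(f"silo capacity at 500 GB: {capacity_now_pb:.0f} PB")
print(f"silo capacity at 1 TB  : {capacity_next_pb:.0f} PB")
print(f"disk system cost       : ~{disk_cost_eur:,.0f} EUR")
```

The quoted 10 PB capacity therefore corresponds to the 1 TB generation, and the disk system comes to roughly 600 k€ at the quoted unit cost.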

The CC-IN2P3 Tier-1 and Analysis Facility

- CC-IN2P3 has started to build a Tier-1 and an Analysis Facility for the 4 LHC experiments
  - CC-IN2P3 will provide 12 to 15% of the whole T1 computing
- We also need to continue providing resources for other experiments
  - Astroparticle physics needs more and more resources
- The plan is to keep 20% of the resources available for non-LHC experiments in 2008

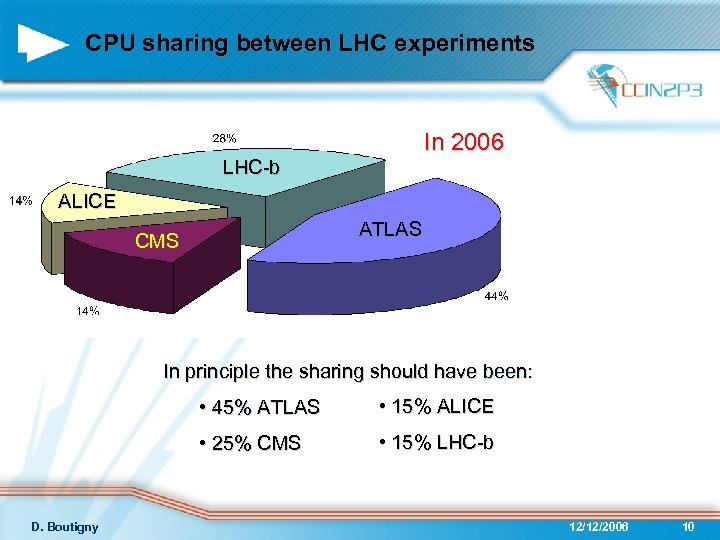

CPU sharing between LHC experiments

In 2006 (chart of actual usage by LHC-b, ALICE, ATLAS and CMS).

In principle the sharing should have been:

- 45% ATLAS
- 25% CMS
- 15% ALICE
- 15% LHC-b

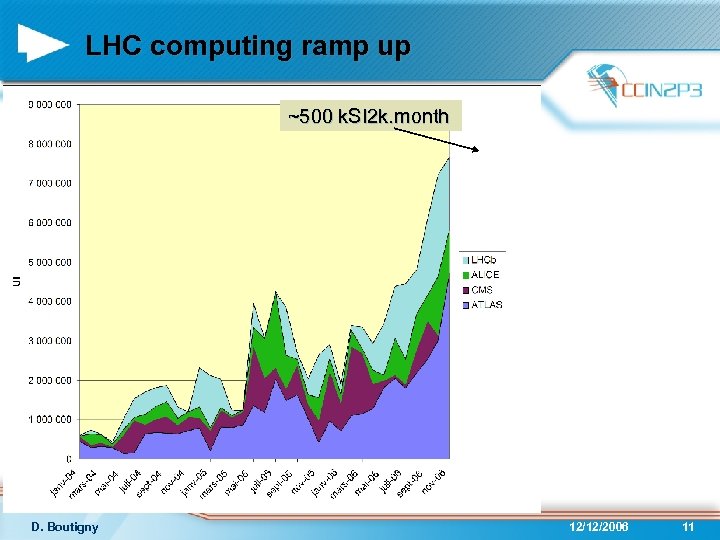

LHC computing ramp up

~500 kSI2k.month

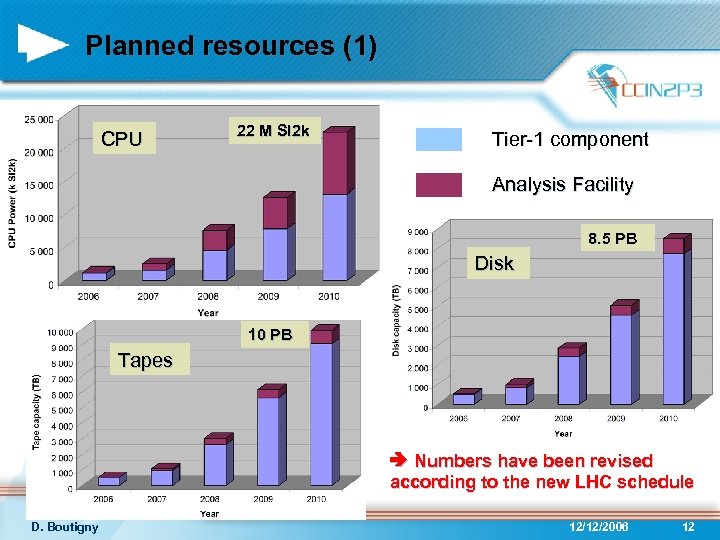

Planned resources (1)

Charts split into a Tier-1 component and an Analysis Facility component:

- CPU: 22 M SI2k
- Disk: 8.5 PB
- Tapes: 10 PB

Numbers have been revised according to the new LHC schedule.

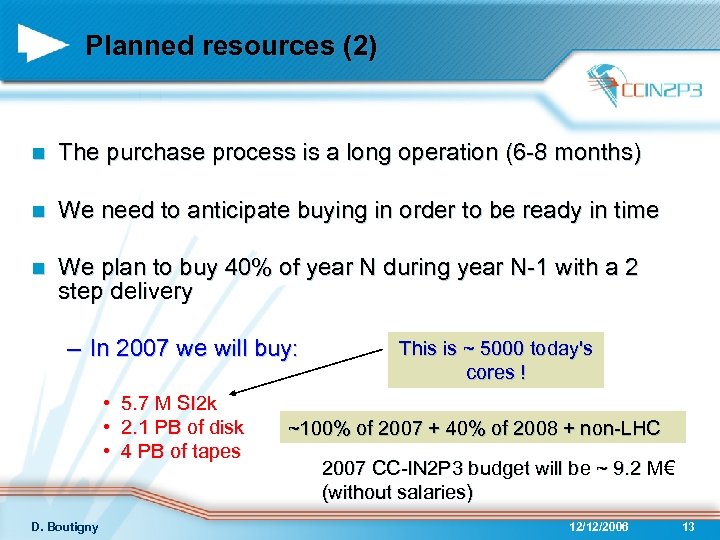

Planned resources (2)

- The purchase process is a long operation (6-8 months)
- We need to anticipate purchases in order to be ready in time
- We plan to buy 40% of year N during year N-1, with a 2-step delivery
  - In 2007 we will buy:
    - 5.7 M SI2k
    - 2.1 PB of disk
    - 4 PB of tapes

This is ~5000 of today's cores!

The 2007 purchase covers ~100% of 2007 + 40% of 2008 + non-LHC.

The 2007 CC-IN2P3 budget will be ~9.2 M€ (without salaries).

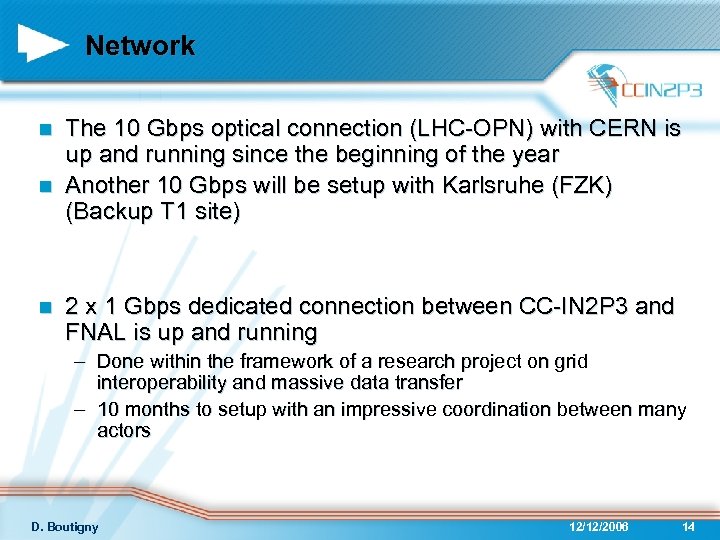
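The "~5000 of today's cores" remark can be checked against the IBM system described on the "CPU resources (2)" slide. The sketch below assumes that "today's cores" means the cores of that 2006 system, rated with the figure measured at CC-IN2P3 (1.2 M SI2K over 1060 cores); the slide does not say which reference was used.

```python
# Reference system from the "CPU resources (2)" slide.
reference_si2k = 1.2e6   # measured SpecInt2000 of the 2006 IBM system
reference_cores = 1060

# CPU purchase planned for 2007.
purchase_si2k = 5.7e6

# Assumption (ours): a "today's core" delivers the measured per-core power
# of the 2006 system.
si2k_per_core = reference_si2k / reference_cores
equivalent_cores = purchase_si2k / si2k_per_core

print(f"equivalent 2006 cores: {equivalent_cores:.0f}")
```

With the vendor rating (1.7 M SI2K) instead of the measured one, the equivalent would drop to roughly 3550 cores, so the slide's figure appears to match the measured rating.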

Network

- The 10 Gbps optical connection (LHC-OPN) with CERN has been up and running since the beginning of the year
- Another 10 Gbps link will be set up with Karlsruhe (FZK), the backup T1 site
- A 2 × 1 Gbps dedicated connection between CC-IN2P3 and FNAL is up and running
  - Done within the framework of a research project on grid interoperability and massive data transfer
  - 10 months to set up, with impressive coordination between many actors

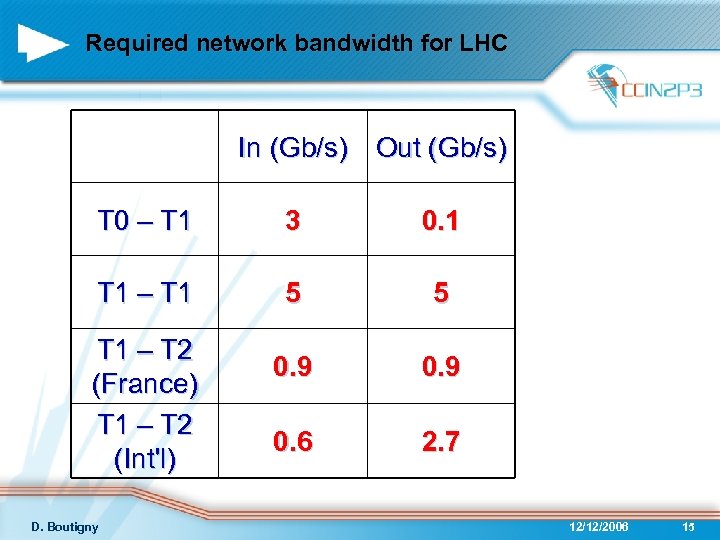

Required network bandwidth for LHC

- T0-T1: 3 Gb/s in, 0.1 Gb/s out
- T1-T1: 5 Gb/s in, 5 Gb/s out
- T1-T2 (France) and T1-T2 (Int'l): 0.9, 0.6 and 2.7 Gb/s (the assignment of these three values to rows and directions is not recoverable from the slide text)

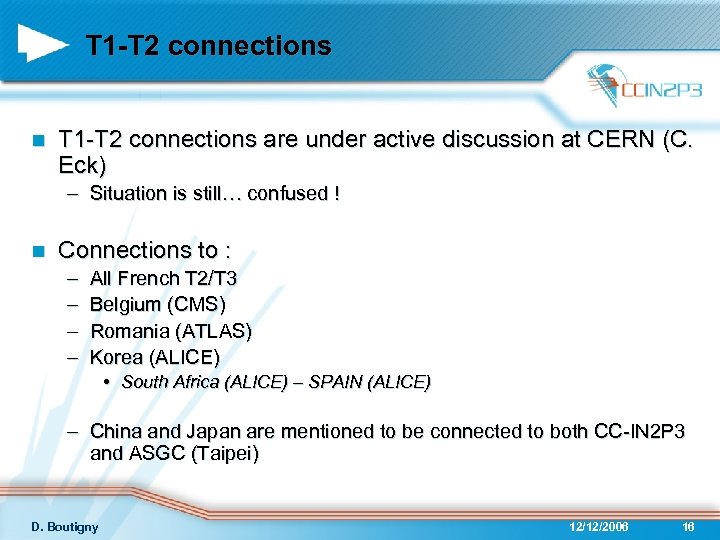
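To relate the required bandwidth to storage figures, sustained rates in Gb/s can be converted to data volume per day. The sketch below does this for the T0-T1 and T1-T1 rows only, reading the table as "in" followed by "out" for each row; it assumes decimal units and a link used at the quoted rate around the clock. The helper name `gbps_to_tb_per_day` is illustrative.

```python
SECONDS_PER_DAY = 86_400

def gbps_to_tb_per_day(gbps: float) -> float:
    """Convert a sustained rate in Gb/s to decimal terabytes per day."""
    bytes_per_second = gbps * 1e9 / 8
    return bytes_per_second * SECONDS_PER_DAY / 1e12

# Rates in Gb/s for the rows that can be read unambiguously.
rates = {
    "T0-T1 in": 3.0,
    "T0-T1 out": 0.1,
    "T1-T1 in": 5.0,
    "T1-T1 out": 5.0,
}

daily_tb = {name: gbps_to_tb_per_day(rate) for name, rate in rates.items()}

for name, volume in daily_tb.items():
    print(f"{name:10s}: {volume:6.2f} TB/day")
```

At 3 Gb/s, the inbound T0-T1 flow alone amounts to about 32 TB per day.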

T1-T2 connections

- T1-T2 connections are under active discussion at CERN (C. Eck)
  - The situation is still… confused!
- Connections to:
  - All French T2/T3
  - Belgium (CMS)
  - Romania (ATLAS)
  - Korea (ALICE)
    - South Africa (ALICE)
  - Spain (ALICE)
  - China and Japan are mentioned as connecting to both CC-IN2P3 and ASGC (Taipei)

Manpower and Operation

- Running a Tier-1 requires manpower and a strong organization
  - In 2007 large chunks of new computing equipment will arrive every 2-3 months
- Manpower
  - Total CC-IN2P3 manpower is 65 FTE
  - 3 computing engineers hired in 2006
  - Will continue to hire 3 to 4 engineers / year up to 2008
- Operation
  - Grid operation is mainly done by people hired under EGEE contracts
    - 12 EGEE people at CC-IN2P3
    - ~5 FTE dedicated to Grid operation
  - Very strong involvement in the LCG worldwide operation framework
- User support is also crucial
  - We will put 1 engineer in support for each LHC experiment
  - At the moment we have 2.5 FTE

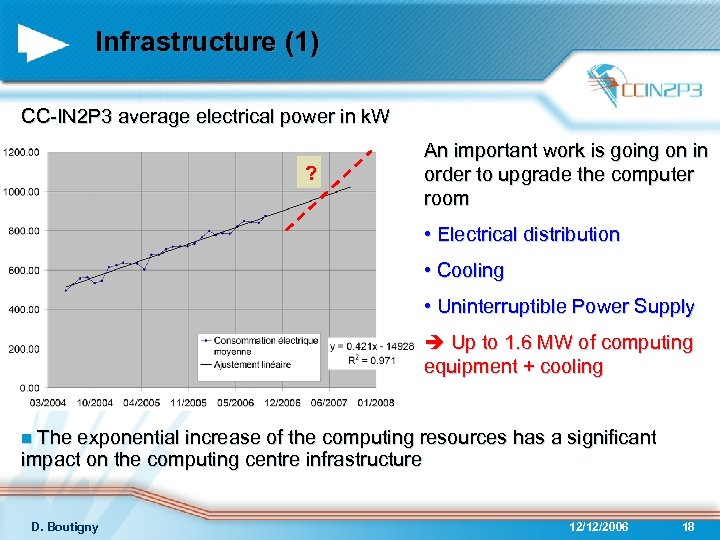

Infrastructure (1)

- The exponential increase of the computing resources has a significant impact on the computing centre infrastructure

Plot: CC-IN2P3 average electrical power in kW (with a "?" annotation).

Significant work is under way to upgrade the computer room:

- Electrical distribution
- Cooling
- Uninterruptible Power Supply

Up to 1.6 MW of computing equipment + cooling.

Infrastructure (2)

- The computer room upgrade is not enough to host all the computing hardware
- A project to construct a new building with a new computer room has already started
  - 800 m² new computer room
  - Up to 2.5 MW of computing equipment (on top of the existing 1 MW)

Conclusions

- CC-IN2P3 is building up its Tier-1 + Analysis Facility
- A substantial budget has been allocated for 2007
  - Clearly a strong priority for IN2P3 and CEA/DAPNIA
- The impact on infrastructure is huge
- Efficient collaboration between China and CC-IN2P3 on computing matters requires setting up a good network connection now
