6ad92acd576ea9d6811f23d390dd8988.ppt

- Number of slides: 30

Distributed Computing and Data Analysis for CMS in view of the LHC startup

Peter Kreuzer, RWTH-Aachen IIIa

International Symposium on Grid Computing (ISGC), Taipei, April 9, 2008

Peter Kreuzer - CMS Computing & Analysis

Outline

- Brief overview of the Worldwide LHC Grid: WLCG
- Distributed Computing Challenges at CMS
  - Simulation
  - Reconstruction
  - Analysis
- The physicist view
- The road to the LHC startup

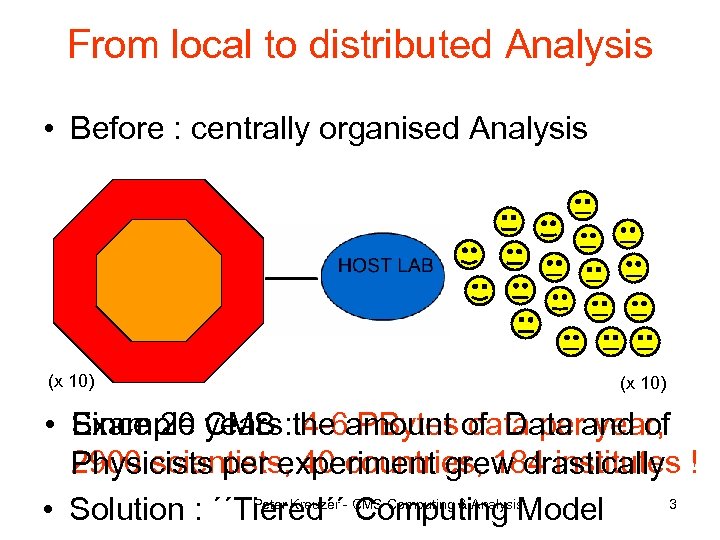

From local to distributed Analysis

- Before: centrally organised Analysis
- Over the last 20 years the amount of Data and of Physicists per experiment grew drastically (x 10)
- Example CMS: 4-6 PBytes data per year, 2900 scientists, 40 countries, 184 institutes!
- Solution: "Tiered" Computing Model

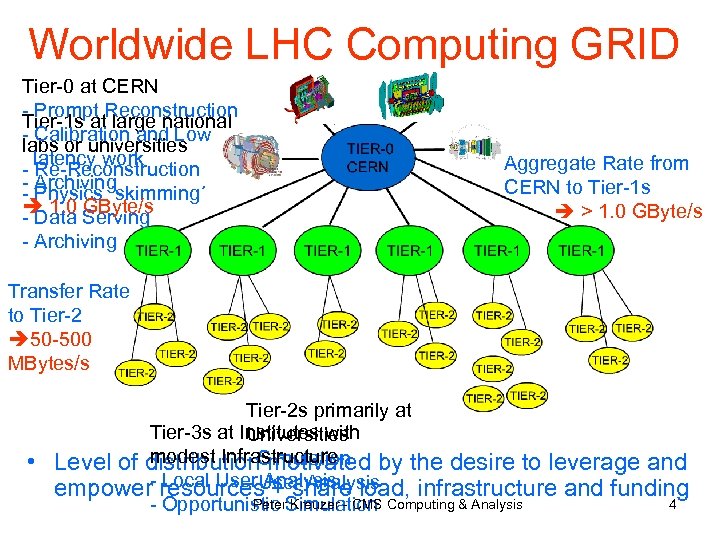

Worldwide LHC Computing GRID

- Tier-0 at CERN
  - Prompt Reconstruction
  - Calibration and low-latency work
  - Archiving
- Tier-1s at large national labs or universities
  - Re-Reconstruction
  - Physics 'skimming'
  - Data Serving
  - Archiving
- Aggregate Rate from CERN to Tier-1s: > 1.0 GByte/s
- Transfer Rate to Tier-2: 50-500 MBytes/s
- Tier-2s primarily at Universities
  - Simulation
  - Analysis
- Tier-3s at Institutes with modest Infrastructure
  - Local User Analysis
  - Opportunistic Simulation
- Level of distribution motivated by the desire to leverage and empower resources + share load, infrastructure and funding
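To give a feel for the transfer rates quoted on this slide, the following sketch converts a sustained rate into the time needed to move a dataset. The 1 TB dataset size and the function name `transfer_hours` are illustrative assumptions, not figures from the talk; decimal units (1 TB = 10^6 MB) are assumed.

```python
def transfer_hours(dataset_tb: float, rate_mb_per_s: float) -> float:
    """Hours needed to move dataset_tb terabytes at a sustained rate (decimal units)."""
    megabytes = dataset_tb * 1_000_000  # assumption: 1 TB = 10^6 MB
    return megabytes / rate_mb_per_s / 3600


# Rates from the slide: 50 and 500 MB/s to a Tier-2, 1000 MB/s aggregate to Tier-1s.
# The 1 TB dataset is a hypothetical example size.
for rate in (50, 500, 1000):
    print(f"{rate:>5} MB/s: {transfer_hours(1.0, rate):.2f} h per TB")
```

At the lower end of the Tier-2 range a terabyte takes several hours, which is one reason data placement matters for analysis turnaround.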

WLCG Infrastructure

- EGEE: Enabling Grids for E-sciencE
- OSG: Open Science Grid
- WLCG: 1 Tier-0 + 11 Tier-1 + 67 Tier-2
- CMS: 1 Tier-0 + 7 Tier-1 + 35 Tier-2
- Tier-0 to Tier-1: dedicated 10 Gb/s Optical Network

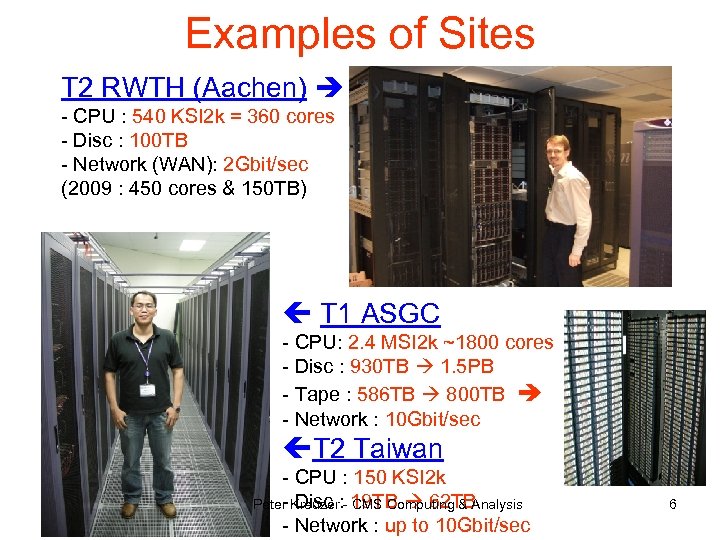

Examples of Sites

- T2 RWTH (Aachen)
  - CPU: 540 kSI2k = 360 cores
  - Disc: 100 TB
  - Network (WAN): 2 Gbit/sec
  - (2009: 450 cores & 150 TB)
- T1 ASGC
  - CPU: 2.4 MSI2k ~ 1800 cores
  - Disc: 930 TB 1.5 PB
  - Tape: 586 TB 800 TB
  - Network: 10 Gbit/sec
- T2 Taiwan
  - CPU: 150 kSI2k
  - Disc: 19 TB 62 TB
  - Network: up to 10 Gbit/sec

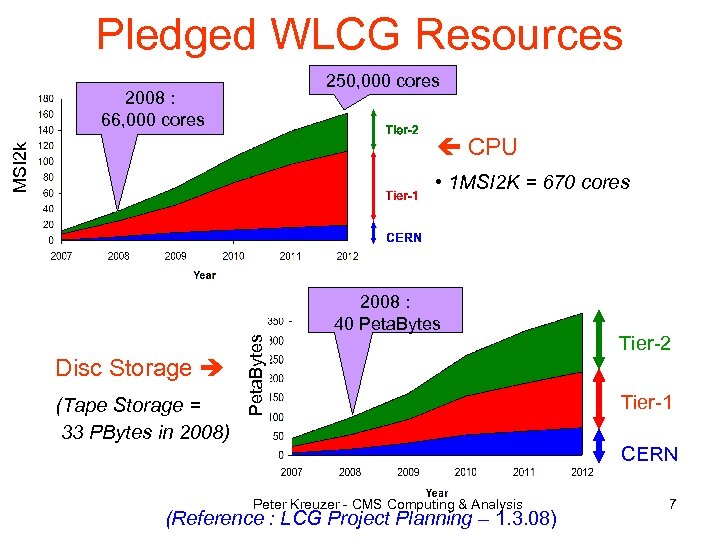

Pledged WLCG Resources

- CPU (MSI2k)
  - 2008: 66,000 cores
  - Plot annotation: 250,000 cores
  - 1 MSI2k = 670 cores
- Disc Storage (PetaBytes)
  - 2008: 40 PetaBytes
  - (Tape Storage = 33 PBytes in 2008)
- Plot legend: Tier-2, Tier-1, CERN
- (Reference: LCG Project Planning, 1.3.08)
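The conversion quoted on this slide (1 MSI2k = 670 cores) can be wrapped in two small helpers. This is a minimal sketch; the function names are illustrative and the factor is an average, so individual sites may quote a somewhat different ratio.

```python
CORES_PER_MSI2K = 670  # conversion factor quoted on the slide


def msi2k_to_cores(msi2k: float) -> float:
    """Convert a CPU pledge in MSI2k into an approximate number of cores."""
    return msi2k * CORES_PER_MSI2K


def cores_to_msi2k(cores: float) -> float:
    """Convert a number of cores into an approximate CPU pledge in MSI2k."""
    return cores / CORES_PER_MSI2K


# The 2008 pledge of 66,000 cores expressed in MSI2k (roughly 98.5).
pledged_2008_msi2k = cores_to_msi2k(66_000)
print(f"2008 pledge: {pledged_2008_msi2k:.1f} MSI2k")
```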

Challenges for Experiments: Example CMS

- Scale-up and test distributed Computing Infrastructure
  - Mass Storage Systems and Computing Elements
  - Data Transfer
  - Calibration and Reconstruction
  - Event 'skimming'
  - Simulation
  - Distributed Data Analysis
- Test CMS Software Analysis Framework
- Operate in quasi-real data taking conditions and simultaneously at various Tier levels
- Result: Computing & Software Analysis (CSA) Challenge

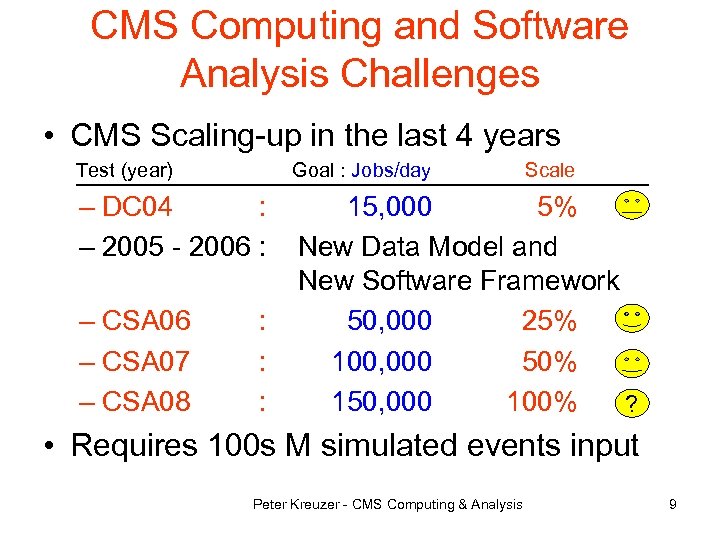

CMS Computing and Software Analysis Challenges

- CMS Scaling-up in the last 4 years

| Test (year) | Goal: Jobs/day | Scale |
|---|---|---|
| DC04 | 15,000 | 5% |
| 2005 - 2006 | New Data Model and New Software Framework | |
| CSA06 | 50,000 | 25% |
| CSA07 | 100,000 | 50% |
| CSA08 | 150,000 | 100% ? |

- Requires 100s of millions of simulated events as input

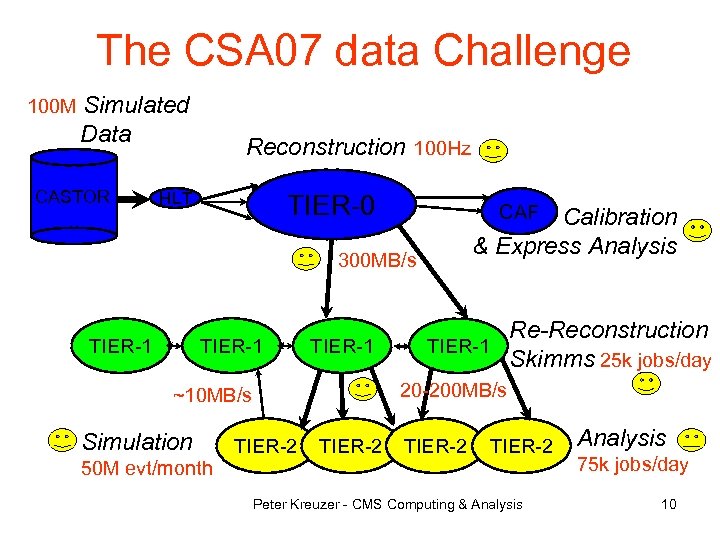

The CSA07 data Challenge

Diagram (labels recovered from the slide):

- Input: 100 M Simulated Data
- TIER-0: HLT, Reconstruction at 100 Hz, CASTOR
- CAF: Calibration & Express Analysis
- TIER-1: Re-Reconstruction, Skims, 25 k jobs/day
- TIER-2: Simulation at 50 M evt/month, 75 k jobs/day
- Transfer rates on the arrows: 300 MB/s, 20-200 MB/s, ~10 MB/s

In this presentation

- Mainly covering CMS Simulation, Reconstruction and Analysis challenges
- Data transfer challenges covered in talk by Daniele Bonacorsi during this session

CMS Simulation System

Diagram (labels recovered from the slide):

- CMS Physicist: "Please simulate new physics" / "Where are my data?"
- ProdRequest, Production Manager, Global Data Bookkeeping (DBS)
- ProdAgent (two instances shown), GRID
- Sites: Tier-1 and several Tier-2s

ProdAgent workflows

Diagram (labels recovered from the slide):

- 1) Processing: ProdAgent with Local DBS, Grid WMS, Processing jobs at Tier-1 and Tier-2; small output file from Processing job stored on the SE
- 2) Merging: ProdAgent with Local DBS, Grid WMS, Merging jobs at Tier-1 and Tier-2; large output file from Merge job stored on the SE; PhEDEx

Notes:

- Data processing / bookkeeping / tracking / monitoring in local-scope
- Output promoted to global-scope DBS & Data transfer system PhEDEx
- Scaling achieved by running in parallel multiple ProdAgent instances
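The merge step combines many small Processing outputs into fewer large files. The sketch below illustrates that idea with a simple greedy grouping. It is not ProdAgent code: the function name `group_for_merge`, the size threshold and the greedy strategy are all assumptions chosen for illustration.

```python
from typing import List, Tuple


def group_for_merge(files: List[Tuple[str, int]], target_bytes: int) -> List[List[str]]:
    """Greedily group (name, size) pairs until each group reaches target_bytes.

    The final group may stay below the target if the inputs run out.
    """
    groups: List[List[str]] = []
    current: List[str] = []
    current_size = 0
    for name, size in files:
        current.append(name)
        current_size += size
        if current_size >= target_bytes:
            groups.append(current)
            current = []
            current_size = 0
    if current:
        groups.append(current)
    return groups


# Hypothetical example: seven small outputs of 300 bytes each, merge target 1000 bytes.
small_outputs = [(f"proc_{i}.root", 300) for i in range(7)]
merge_jobs = group_for_merge(small_outputs, target_bytes=1000)
print([len(group) for group in merge_jobs])
```

Each resulting group would correspond to one Merge job producing one large output file.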

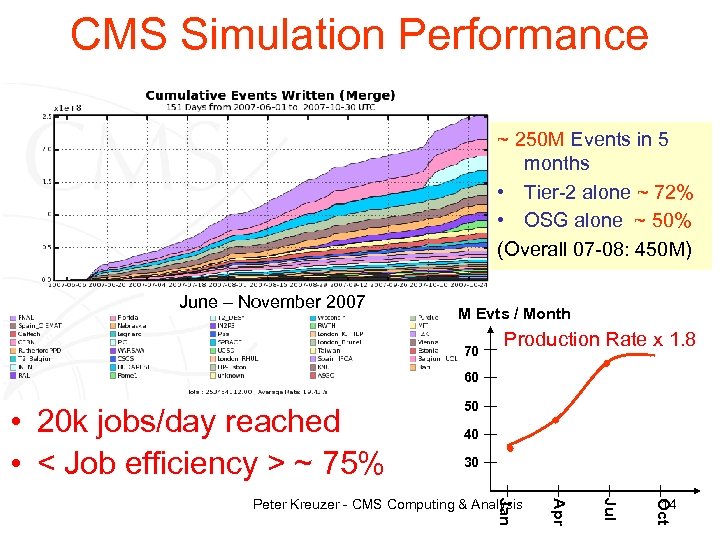

CMS Simulation Performance

- June - November 2007: ~250 M Events in 5 months (Overall 07-08: 450 M)
- Tier-2 alone ~72%
- OSG alone ~50%
- Production Rate x 1.8
- 20 k jobs/day reached
- Average job efficiency ~75%

Plot: M Evts / Month versus month (Jan, Apr, Jul, Oct).

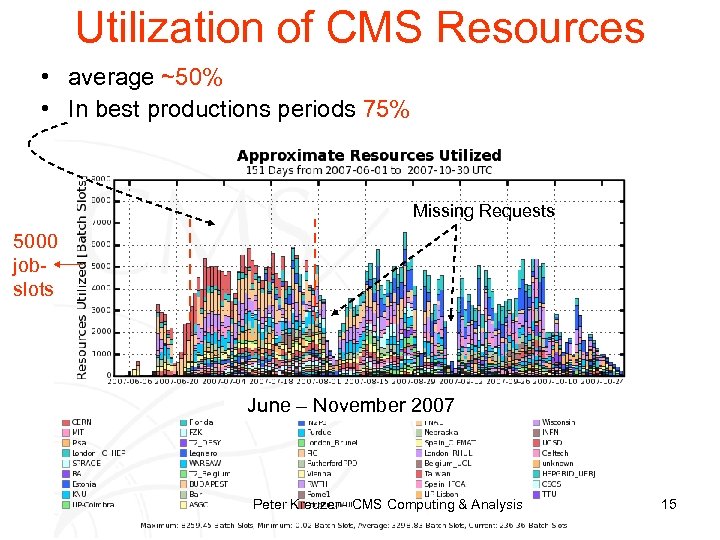

Utilization of CMS Resources

- Average ~50%
- In best production periods 75%

Plot (June - November 2007), labels: 5000 jobslots; Missing Requests.

CSA07 Simulation lessons

- Major boost in scale and reliability of production machinery
- Still too many manual operations. From 2008 on:
  - Deploy ProdManager component (in CSA07 was 'human'!)
  - Deploy Resource Monitor
  - Deploy CleanUpSchedule component
- Further improvements in scale and reliability
  - gLite WMS bulk submission: 20 k jobs/day with 1 WMS server
  - Condor-G JobRouter + bulk submission: 100 k jobs/day and can saturate all OSG resources in ~1 hour
  - Threaded JobTracking and Central Job Log Archival
- Introduced task-force for CMS Site Commissioning
  - help detect site issues via stress-test tool (enforce metrics)
  - couple site-state to production and analysis machinery
- Regular CMS Site Availability Monitoring (SAM) checks

CMS Site Availability Monitoring

- Availability Ranking (ARDA 'Dashboard'), 03/22/08 to 04/03/08, scale 0% to 100%
- Important tool to protect CMS use cases at sites

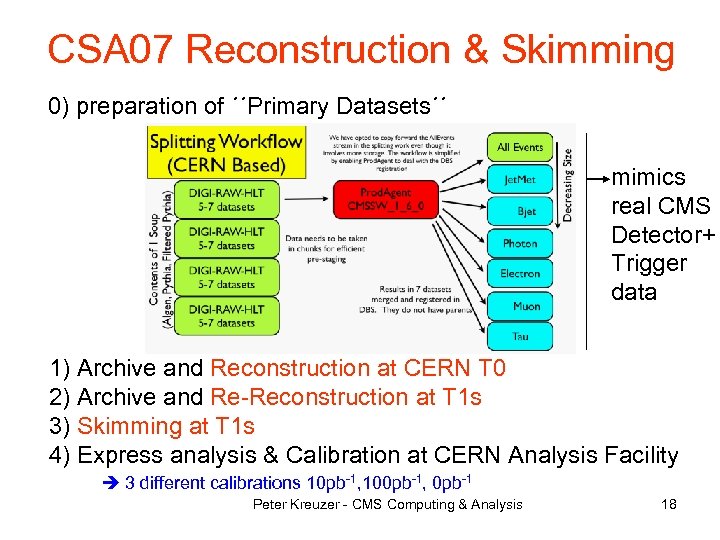

CSA07 Reconstruction & Skimming

- 0) Preparation of "Primary Datasets": mimics real CMS Detector + Trigger data
- 1) Archive and Reconstruction at CERN T0
- 2) Archive and Re-Reconstruction at T1s
- 3) Skimming at T1s
- 4) Express analysis & Calibration at CERN Analysis Facility

3 different calibrations: 10 pb⁻¹, 100 pb⁻¹, 0 pb⁻¹

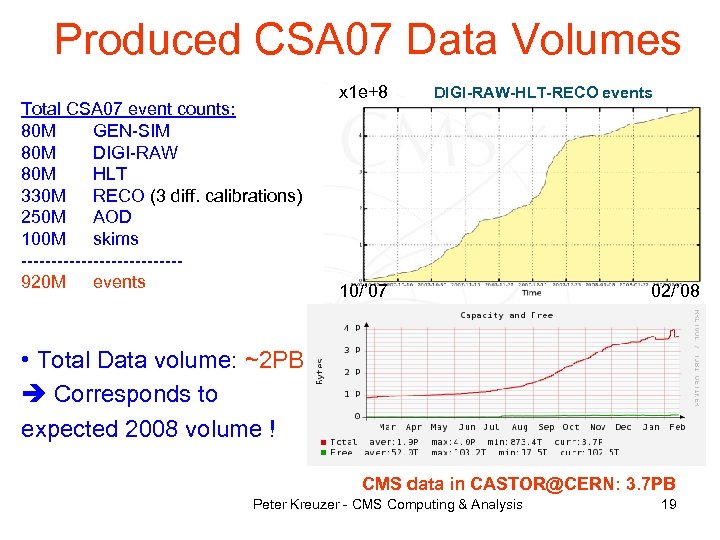

Produced CSA07 Data Volumes

Total CSA07 event counts:

- 80 M GEN-SIM
- 80 M DIGI-RAW
- 80 M HLT
- 330 M RECO (3 diff. calibrations)
- 250 M AOD
- 100 M skims
- Total: 920 M events

Plot: DIGI-RAW-HLT-RECO events (x 1e+8), 10/'07 to 02/'08.

- Total Data volume: ~2 PB. Corresponds to expected 2008 volume!
- CMS data in CASTOR@CERN: 3.7 PB
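The event counts on this slide can be cross-checked with a few lines of arithmetic. The sketch below sums the per-tier counts and derives a rough average size per event from the ~2 PB total. That average is only an assumption-laden estimate: it takes 1 PB = 10^15 bytes, assumes the ~2 PB refers to these 920 M events, and ignores the large size differences between data tiers.

```python
# Event counts per data tier, in millions, as listed on the slide.
csa07_events_millions = {
    "GEN-SIM": 80,
    "DIGI-RAW": 80,
    "HLT": 80,
    "RECO": 330,
    "AOD": 250,
    "skims": 100,
}

total_millions = sum(csa07_events_millions.values())

# Rough average, assuming ~2 PB covers all listed events and 1 PB = 1e15 bytes.
total_bytes = 2e15
avg_mb_per_event = total_bytes / (total_millions * 1e6) / 1e6

print(f"Total: {total_millions} M events")
print(f"Average size: {avg_mb_per_event:.2f} MB/event")
```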

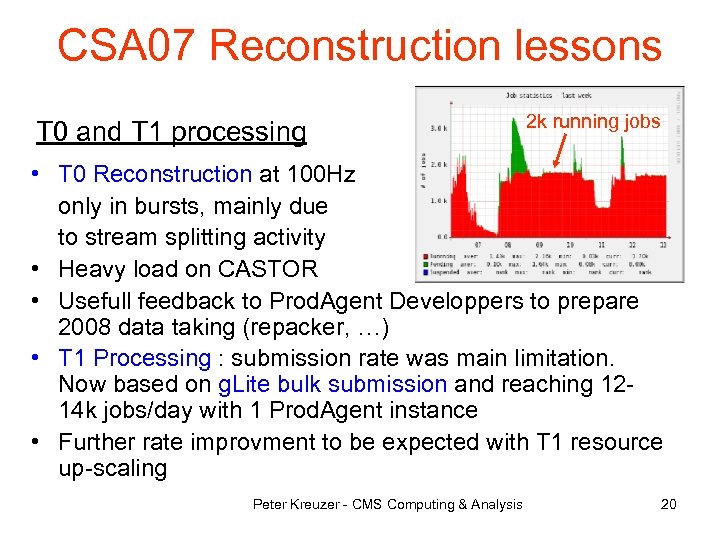

CSA07 Reconstruction lessons

- T0 Reconstruction at 100 Hz only in bursts, mainly due to stream splitting activity
- Heavy load on CASTOR
- Useful feedback to ProdAgent Developers to prepare 2008 data taking (repacker, …)
- T1 Processing: submission rate was main limitation. Now based on gLite bulk submission and reaching 12-14 k jobs/day with 1 ProdAgent instance
- Further rate improvement to be expected with T1 resource up-scaling

Plot: T0 and T1 processing, 2 k running jobs.

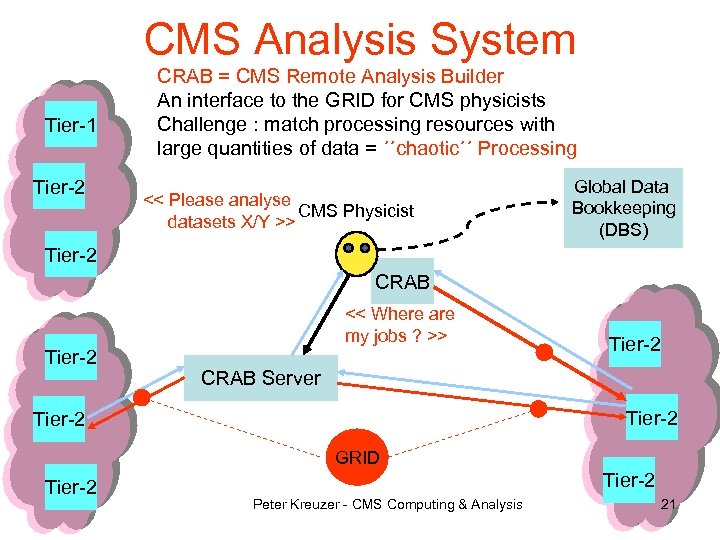

CMS Analysis System

- CRAB = CMS Remote Analysis Builder: an interface to the GRID for CMS physicists
- Challenge: match processing resources with large quantities of data = "chaotic" Processing

Diagram (labels recovered from the slide):

- CMS Physicist: "Please analyse datasets X/Y" / "Where are my jobs?"
- CRAB, CRAB Server, Global Data Bookkeeping (DBS), GRID
- Sites: Tier-1 and several Tier-2s

CRAB Architecture

- Easy and transparent means for CMS users to submit analysis jobs via the GRID (LCG RB, gLite WMS, Condor-G)
- CSA07 analysis: direct submission by user to GRID. Simple, but lacking automation and scalability
- 2008: CRAB server
- Other new feature: local DBS for "private" users

CSA07 Analysis

- 100 k jobs/day not achieved
  - mainly due to lacking data during the challenge
  - still limited by data distribution: 55% jobs at 3 largest Tier-1s
  - and failure rate too high
- Job outcomes: 53% Successful Jobs, 20% failed Jobs, 27% Unknown
- Main causes:
  - data-access
  - remote stage out
  - manual user settings
- 20 k jobs/day achieved + regularly ~30 k/day JobRobot submissions

Plot: Number of jobs.

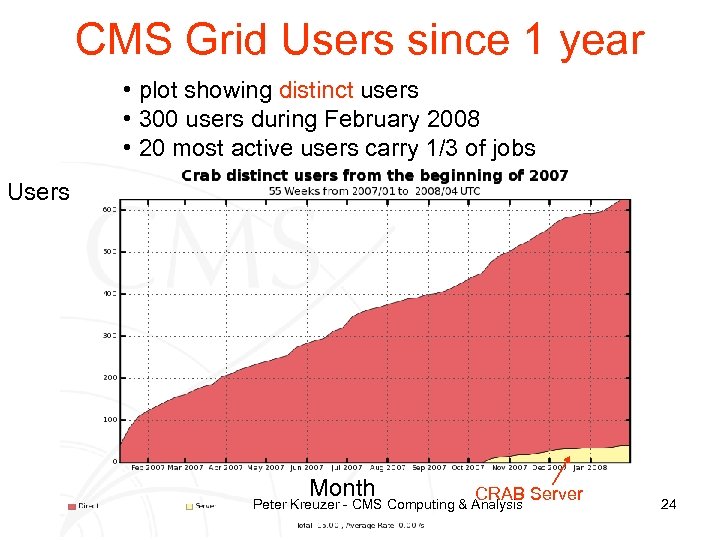

CMS Grid Users over the last year

- plot showing distinct users
- 300 users during February 2008
- 20 most active users carry 1/3 of jobs

Plot: Users versus Month, with the CRAB Server marked.

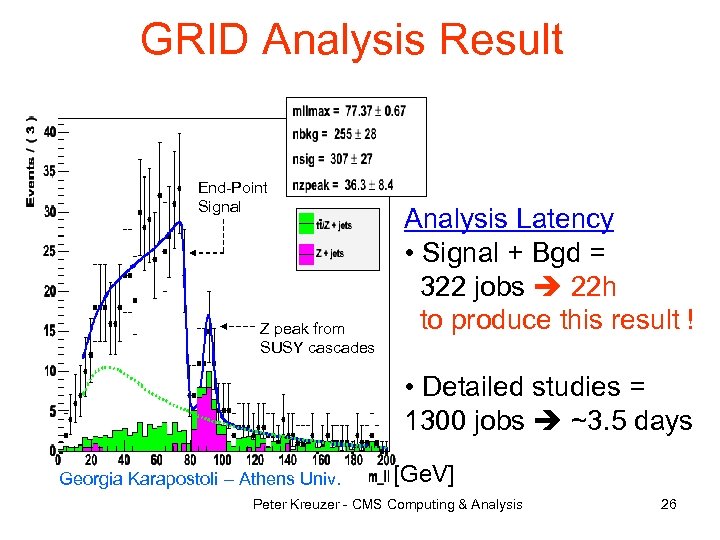

The Physicist View

- SUSY Search in di-lepton + jets + MET
- Goal: Simulate excess over Standard Model ('LM1' at 1 fb⁻¹)
- Infrastructure
  - 1 desktop PC
  - CMS Software Environment ('CMSSW', 'CRAB', 'Discovery' GUI, …)
  - GRID Certificate + member of a Virtual Organisation (CMS)
- Input data (CSA07 simulation/production)
  - Signal (RECO): 120 k events = 360 GB
  - Skimmed Background (AOD): 3.3 M events = 721 GB
    - WW / WZ / ZZ / single top
    - ttbar / Z / W + jets
  - Signal + Skimmed Background: ~1.1 TB
  - Unskimmed Background: 27 M events = 4 TB (for detailed studies only)
- Location of input data
  - T0/T1: CERN (CH), FNAL (US), FZK (Germany)
  - T2: Legnaro (Italy), UCSD (US), IFCA (Spain)
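The input sizes on this slide imply rather different per-event footprints for RECO and AOD data. The sketch below derives them and checks the quoted ~1.1 TB total; decimal units (1 GB = 1000 MB) are assumed, and the helper name `mb_per_event` is illustrative.

```python
# (number of events, size in GB) for each input sample quoted on the slide.
samples = {
    "signal (RECO)": (120_000, 360.0),
    "skimmed background (AOD)": (3_300_000, 721.0),
    "unskimmed background": (27_000_000, 4000.0),
}


def mb_per_event(n_events: int, size_gb: float) -> float:
    """Average event size in MB, assuming 1 GB = 1000 MB."""
    return size_gb * 1000 / n_events


for name, (n_events, size_gb) in samples.items():
    print(f"{name}: {mb_per_event(n_events, size_gb):.2f} MB/event")

# Signal plus skimmed background, the input to the main analysis pass.
main_input_gb = samples["signal (RECO)"][1] + samples["skimmed background (AOD)"][1]
print(f"Main analysis input: {main_input_gb / 1000:.2f} TB")
```

The RECO signal sample comes out at about 3 MB per event, while the AOD background is roughly an order of magnitude smaller per event.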

GRID Analysis Result

Plot (Georgia Karapostoli, Athens Univ.), labels: End-Point Signal; Z peak from SUSY cascades; axis in [GeV].

Analysis Latency

- Signal + Bgd = 322 jobs: 22 h to produce this result!
- Detailed studies = 1300 jobs: ~3.5 days
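The two latency figures above can be compared by converting them into an effective job throughput. This is only a back-of-the-envelope sketch: it treats the quoted wall-clock times as covering all jobs of each pass and takes 3.5 days as 84 hours.

```python
def jobs_per_hour(n_jobs: int, wall_hours: float) -> float:
    """Effective throughput: completed jobs divided by elapsed wall-clock hours."""
    return n_jobs / wall_hours


main_pass = jobs_per_hour(322, 22)             # Signal + Bgd
detailed_pass = jobs_per_hour(1300, 3.5 * 24)  # Detailed studies, ~3.5 days

print(f"Signal + Bgd:     {main_pass:.1f} jobs/h")
print(f"Detailed studies: {detailed_pass:.1f} jobs/h")
```

Both passes come out at roughly 15 jobs per hour, so under these assumptions the latency scales approximately with the number of jobs.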

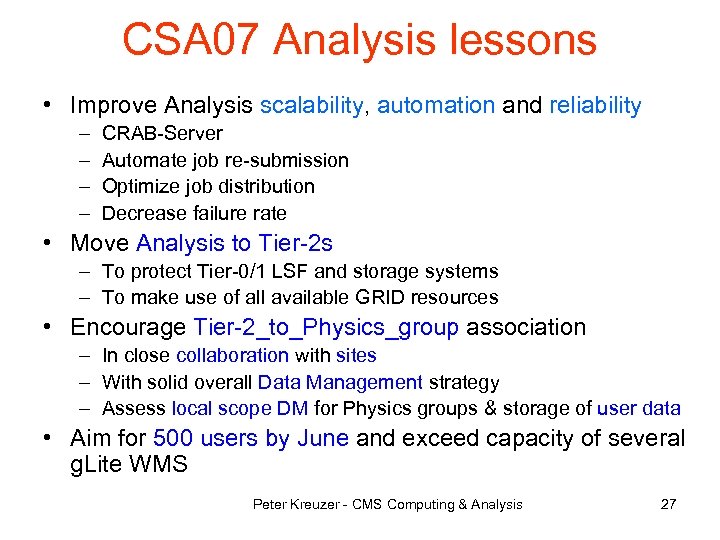

CSA07 Analysis lessons

- Improve Analysis scalability, automation and reliability
  - CRAB-Server
  - Automate job re-submission
  - Optimize job distribution
  - Decrease failure rate
- Move Analysis to Tier-2s
  - To protect Tier-0/1 LSF and storage systems
  - To make use of all available GRID resources
- Encourage Tier-2_to_Physics_group association
  - In close collaboration with sites
  - With solid overall Data Management strategy
  - Assess local scope DM for Physics groups & storage of user data
- Aim for 500 users by June and exceed capacity of several gLite WMS

Goals for CSA08 (May '08)

- "Play through" first 3 months of data taking
- Simulation
  - 150 M events at 1 pb⁻¹ ("S43")
  - 150 M events at 10 pb⁻¹ ("S156")
- Tier-0: Prompt reconstruction
  - S43 with startup-calibration
  - S156 with improved calibration
- CERN Analysis Facility (CAF)
  - Demonstrate low turn-around Alignment & Calibration workflows
  - Coordinated and time-critical physics analyses
  - Proof-of-principle of CAF Data and Workflow Management Systems
- Tier-1: Re-Reconstruction with new calibration constants
  - S43: with improved constants based on 1 pb⁻¹
  - S156: with improved constants based on 10 pb⁻¹
- Tier-2:
  - iCSA08 simulation (GEN-SIM-DIGI-RAW-HLT)
  - repeat CAF-based Physics analyses with Re-Reco data ?

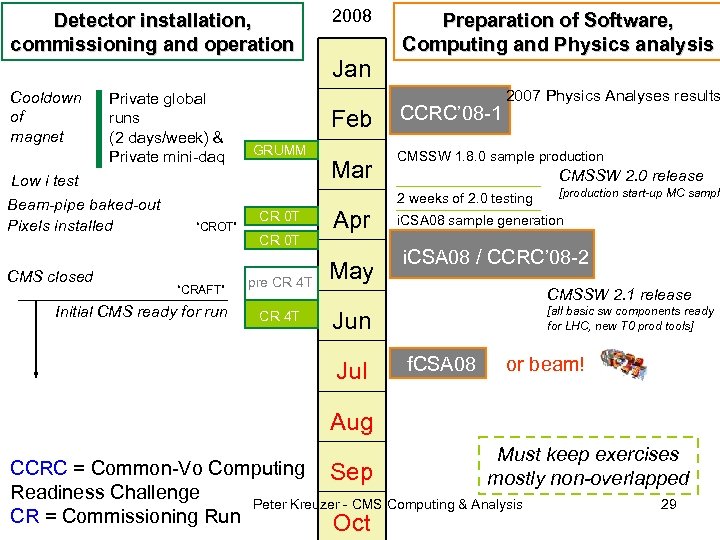

Timeline for 2008 (Jan to Oct), labels recovered from the slide:

- Detector installation, commissioning and operation: Cooldown of magnet; Private global runs (2 days/week) & Private mini-daq; CMS closed; GRUMM; Low i test; Beam-pipe baked-out; Pixels installed; "CROT"; "CRAFT"; CR0T; pre CR4T; Initial CMS ready for run
- Preparation of Software, Computing and Physics analysis: 2007 Physics Analyses results; CMSSW 1.8.0 sample production; CCRC'08-1; CMSSW 2.0 release; 2 weeks of 2.0 testing [production start-up MC sample]; iCSA08 sample generation; iCSA08 / CCRC'08-2; CMSSW 2.1 release [all basic sw components ready for LHC, new T0 prod tools]; fCSA08 or beam!
- Must keep exercises mostly non-overlapped
- CCRC = Common-VO Computing Readiness Challenge
- CR = Commissioning Run

Where do we stand?

- WLCG: major up-scaling over the last 2 years!
- CMS: impressive results and valuable lessons from CSA07
  - Major boost in Simulation
  - Produced ~2 PBytes data in T0/T1 Reconstruction and Skimming
  - Analysis: number of CMS Grid-users ramping up fast!
  - Software: addressed memory footprint and data size issues
- Further Challenges for CMS: scale from 50% to 100%
  - Simultaneous and continuous operations at all Tier levels
  - Analysis distribution and automation
  - Transfer rates (see talk by D. Bonacorsi)
  - Upscale and commission the CERN Analysis Facility (CAF)
  - To be exercised in: CSA08, CCRC08, Commissioning Runs
- Challenging and motivating goals in view of Day-1 LHC!
