- Number of slides: 33

Dependency structure and cognition Richard Hudson Depling 2013, Prague

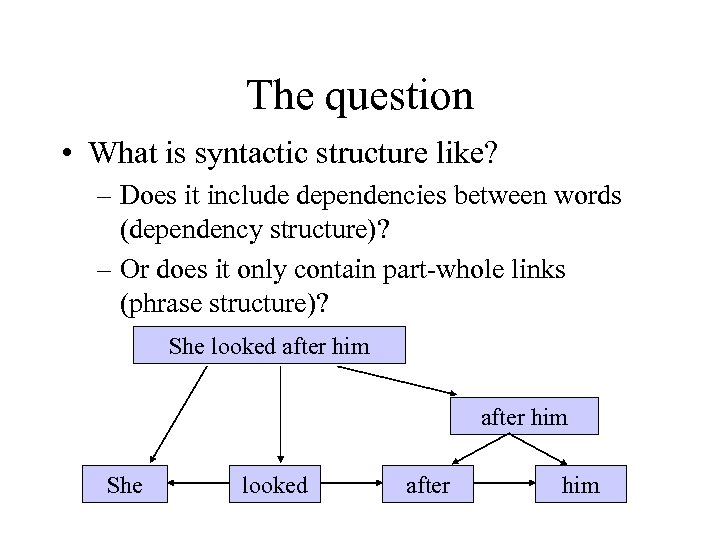

The question

- What is syntactic structure like?
  - Does it include dependencies between words (dependency structure)?
  - Or does it only contain part-whole links (phrase structure)?

Example sentence: She looked after him

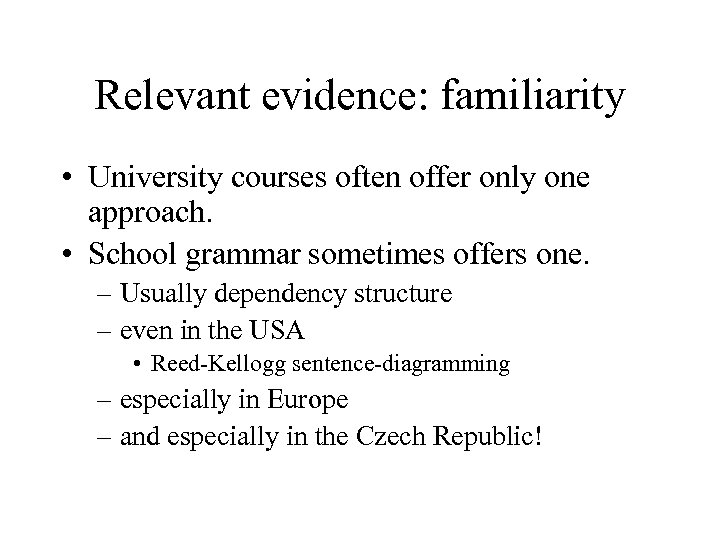

Relevant evidence: familiarity

- University courses often offer only one approach.
- School grammar sometimes offers one.
  - Usually dependency structure
  - even in the USA
    - Reed-Kellogg sentence-diagramming
  - especially in Europe
  - and especially in the Czech Republic!

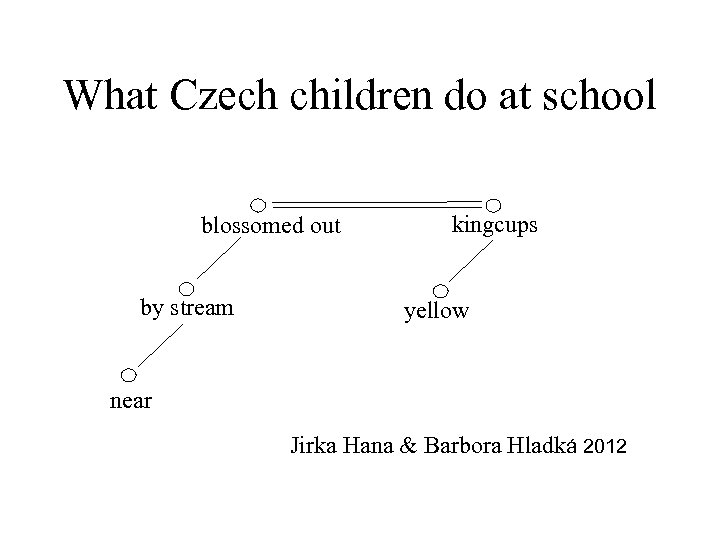

What Czech children do at school

(Diagram word labels, English glosses in extraction order: blossomed out by stream kingcups yellow near)

Jirka Hana & Barbora Hladká 2012

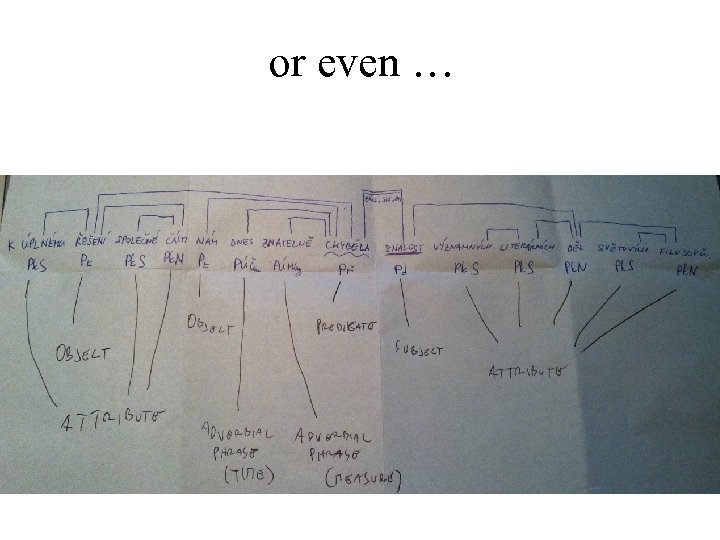

or even …

Relevant evidence: convenience

- Dependency structure is popular in computational linguistics.
- Maybe because of its simplicity:
  - few nodes
  - little but orthographic words
- Good for lexical cooccurrence relations

Relevant evidence: cognition

- Language competence is memory.
- Language processing is thinking.
- Memory and thinking are part of cognition.

So what do we know about cognition?

A. Very generally, cognition is not simple, so maybe syntactic structures aren't in fact simple?

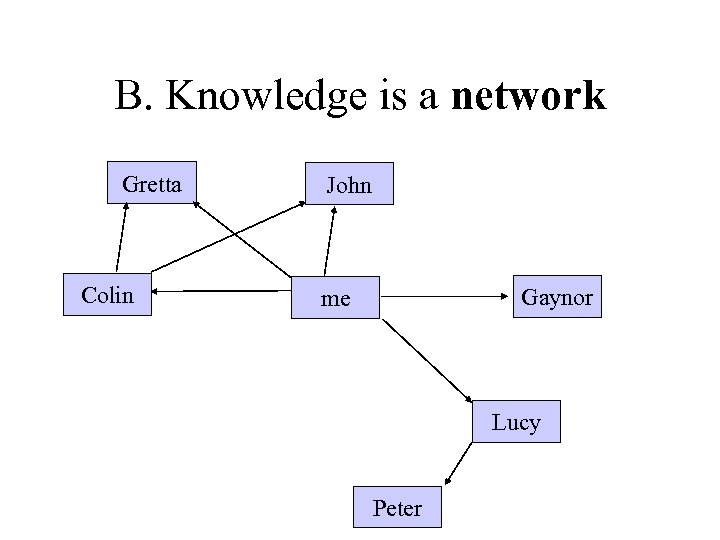

B. Knowledge is a network

(Diagram: a network linking the people Gretta, Colin, John, Gaynor, me, Lucy and Peter.)

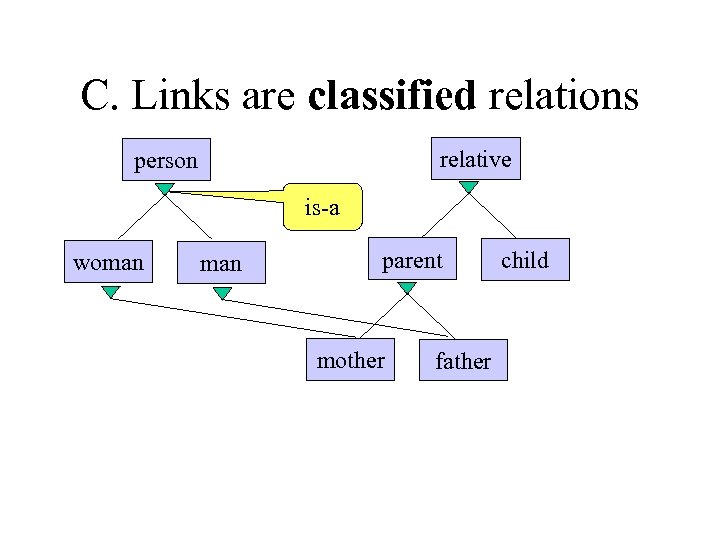

C. Links are classified relations

(Diagram labels: relative, person, is-a, woman, parent, mother, father, child.)

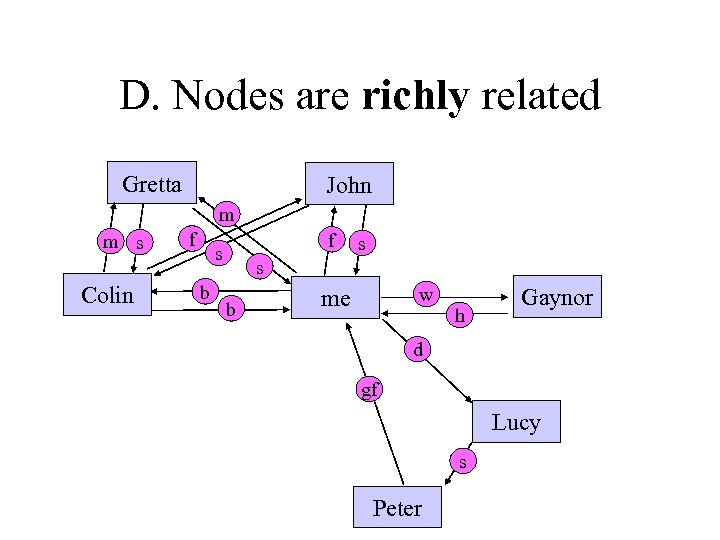

D. Nodes are richly related

(Diagram: the people Gretta, John, Colin, me, Gaynor, Lucy and Peter, joined by links labelled with the abbreviations m, f, s, b, w, h, d and gf.)

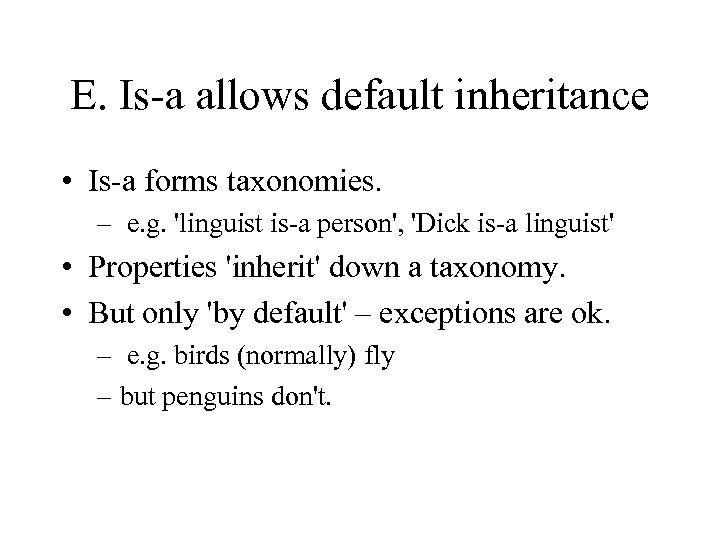

E. Is-a allows default inheritance

- Is-a forms taxonomies.
  - e.g. 'linguist is-a person', 'Dick is-a linguist'
- Properties 'inherit' down a taxonomy.
- But only 'by default': exceptions are ok.
  - e.g. birds (normally) fly
  - but penguins don't.

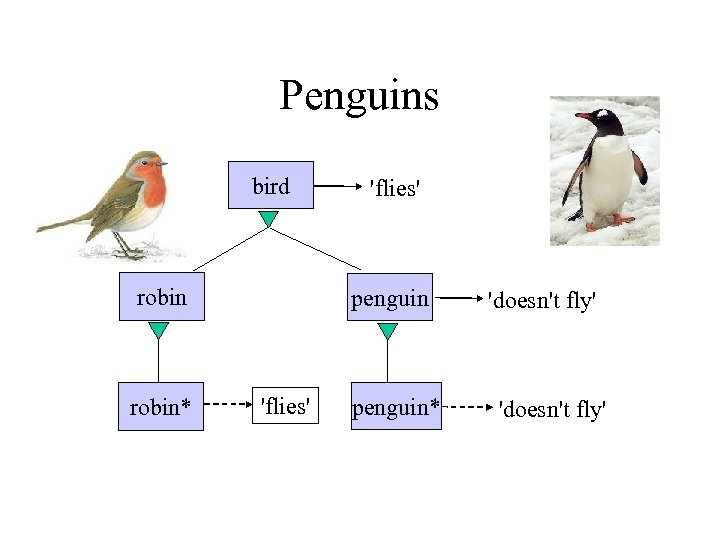

Penguins

(Diagram labels: bird; robin* 'flies'; penguin* 'doesn't fly'.)
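
Default inheritance over an is-a taxonomy can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Concept` class, its `get` method and the `has_feathers` property name are hypothetical and do not come from the slides; the bird, robin and penguin facts do.

```python
class Concept:
    """A node in an is-a taxonomy with locally stored properties (hypothetical)."""

    def __init__(self, name, isa=None, **properties):
        self.name = name
        self.isa = isa                # the more general concept, if any
        self.properties = properties  # properties stated directly on this node

    def get(self, prop):
        """Return the nearest value for prop, searching up the is-a chain."""
        node = self
        while node is not None:
            if prop in node.properties:
                return node.properties[prop]
            node = node.isa
        raise KeyError(prop)


bird = Concept("bird", flies=True, has_feathers=True)
robin = Concept("robin", isa=bird)                   # states nothing of its own
penguin = Concept("penguin", isa=bird, flies=False)  # exception overrides the default

print(robin.get("flies"))           # True: inherited by default
print(penguin.get("flies"))         # False: the local exception wins
print(penguin.get("has_feathers"))  # True: other defaults still inherit
```

The lookup stops at the first node that states the property, which is why an exception stored on a more specific concept overrides the default without having to change the general concept.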

Cognitivism

- 'Cognitivism': 'Language is an example of ordinary cognition'
- So all our general cognitive abilities are available for language
  - and we have no special language abilities.
- Cognitivism matters for linguistic theory.

Some consequences of cognitivism

1. Word-word dependencies are real.
2. 'Deep' and 'surface' properties combine.
3. Mutual dependency is ok.
4. Dependents create new word tokens.
5. Extra word tokens allow raising.
6. But lowering may be ok too.

1. Word-word dependencies are real

- Do word-word dependencies exist (in our minds)?
  - Why not?
  - Compare social relations between individuals.
- What about phrases?
  - Why not?
  - But maybe only their boundaries are relevant?
  - They're not classified, so no unary branching.

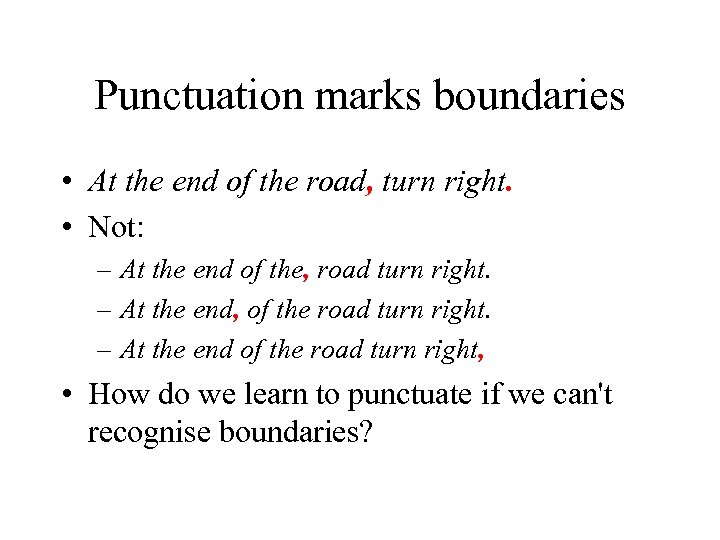

Punctuation marks boundaries

- At the end of the road, turn right.
- Not:
  - At the end of the, road turn right.
  - At the end, of the road turn right.
  - At the end of the road turn right,
- How do we learn to punctuate if we can't recognise boundaries?

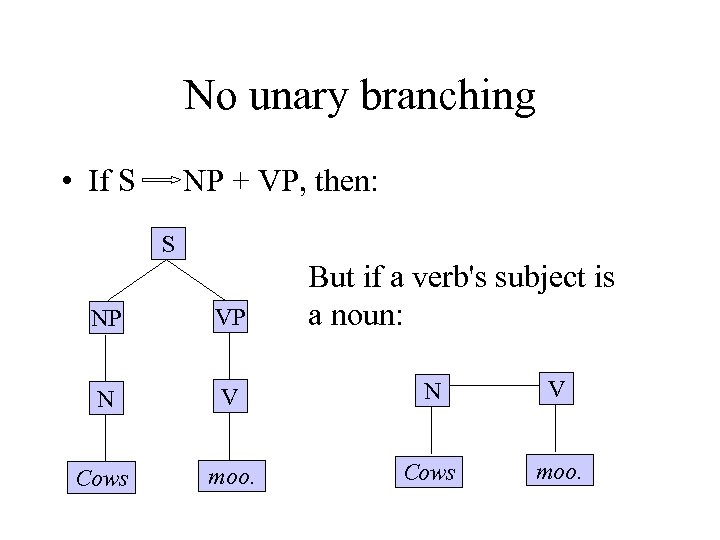

No unary branching

- If S → NP + VP, then: (tree: S branching into NP and VP)
- But if a verb's subject is a noun: (tree: S over NP and VP, NP over N, VP over V, for the sentence "Cows moo.")
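
The contrast for "Cows moo" can be made concrete by counting nodes. The following is a minimal sketch, assuming a nested-tuple encoding for the phrase-structure tree and a word-to-head mapping for the dependency analysis; both encodings and the helper names `count_nodes` and `unary_phrases` are hypothetical, chosen only for illustration.

```python
# Phrase structure for "Cows moo": nested (label, child, ...) tuples.
phrase_structure = ("S", ("NP", ("N", "Cows")), ("VP", ("V", "moo")))

# Dependency structure: each word maps to the word it depends on (None = root).
# Assumption: the subject "Cows" depends on the verb "moo".
dependency_structure = {"Cows": "moo", "moo": None}


def count_nodes(tree):
    """Count labelled nodes in a phrase-structure tree; words are plain strings."""
    if isinstance(tree, str):
        return 0
    return 1 + sum(count_nodes(child) for child in tree[1:])


def unary_phrases(tree):
    """List phrasal nodes (labels ending in 'P') that have exactly one daughter."""
    if isinstance(tree, str):
        return []
    label, children = tree[0], tree[1:]
    found = [label] if label.endswith("P") and len(children) == 1 else []
    for child in children:
        found.extend(unary_phrases(child))
    return found


print(count_nodes(phrase_structure))    # 5 labelled nodes: S, NP, N, VP, V
print(len(dependency_structure))        # 2 nodes: one per word
print(unary_phrases(phrase_structure))  # ['NP', 'VP']
```

In this encoding the two unary phrases add nodes without adding any grouping, which is the redundancy the slide points at; the dependency analysis needs only the two words.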

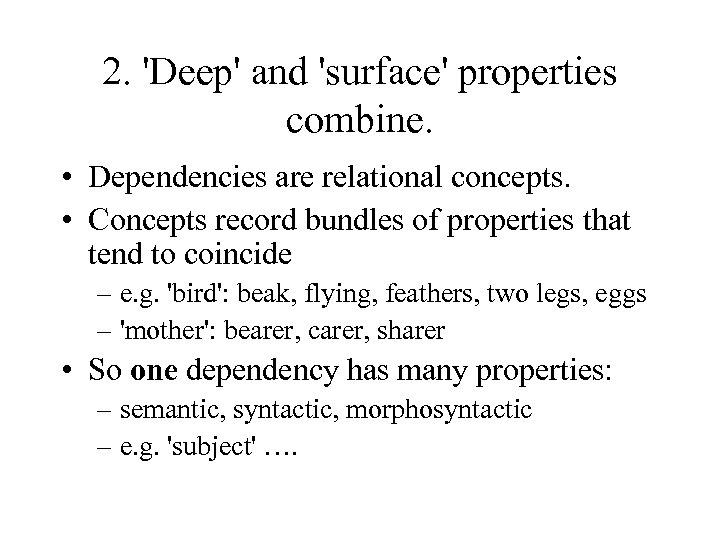

2. 'Deep' and 'surface' properties combine.

- Dependencies are relational concepts.
- Concepts record bundles of properties that tend to coincide
  - e.g. 'bird': beak, flying, feathers, two legs, eggs
  - 'mother': bearer, carer, sharer
- So one dependency has many properties:
  - semantic, syntactic, morphosyntactic
  - e.g. 'subject' ….

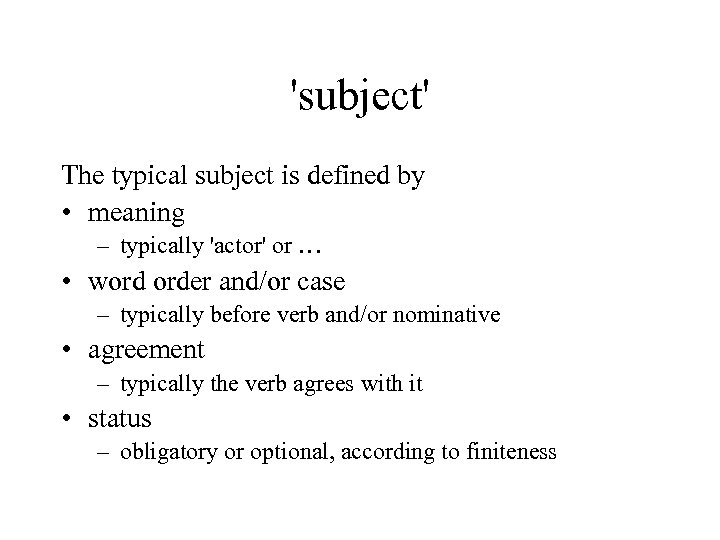

'subject'

The typical subject is defined by

- meaning
  - typically 'actor' or …
- word order and/or case
  - typically before verb and/or nominative
- agreement
  - typically the verb agrees with it
- status
  - obligatory or optional, according to finiteness

So …

- Cognition suggests that 'deep' and 'surface' properties should be combined
  - not separated
- They are in harmony by default
  - but exceptionally they may be out of harmony
  - this is allowed by default inheritance

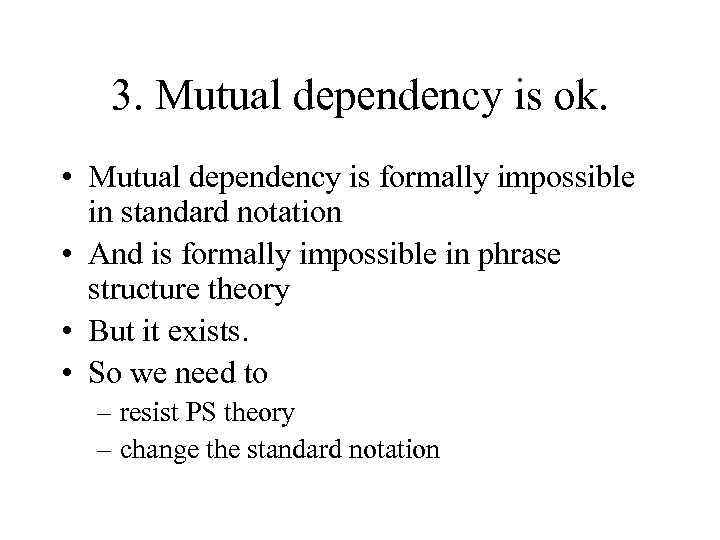

3. Mutual dependency is ok.

- Mutual dependency is formally impossible in standard notation
- And is formally impossible in phrase structure theory
- But it exists.
- So we need to
  - resist PS theory
  - change the standard notation

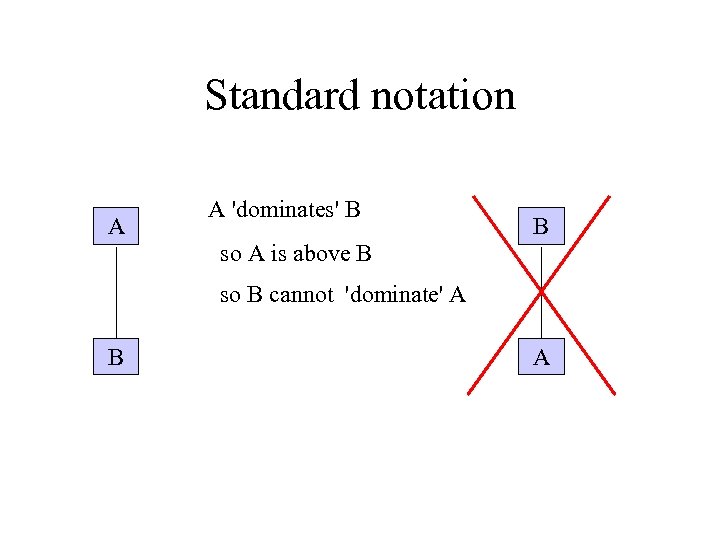

Standard notation

- A 'dominates' B, so A is above B
- so B cannot 'dominate' A

(Diagrams: nodes A and B, with the dominating node drawn above the other.)
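
Why a notation based on vertical position rules out mutual dependency can be shown with a small layout check. This is a sketch under assumptions: dependencies are given as hypothetical `(head, dependent)` pairs, and the function `levels` is an illustrative helper, not part of any existing tool. It tries to give every word a height such that each head sits above its dependent.

```python
def levels(arcs):
    """Try to place every head above its dependent.

    arcs is a list of (head, dependent) pairs. Returns a dict mapping each
    word to a depth (0 = top), or None when no such layout exists because
    the dependencies form a cycle.
    """
    words = {word for arc in arcs for word in arc}
    depth = {word: 0 for word in words}
    # An acyclic structure settles within len(words) passes;
    # if changes are still happening after that, there is a cycle.
    for _ in range(len(words)):
        changed = False
        for head, dependent in arcs:
            if depth[dependent] <= depth[head]:
                depth[dependent] = depth[head] + 1
                changed = True
        if not changed:
            return depth
    return None


print(levels([("A", "B")]))              # A at depth 0, B at depth 1
print(levels([("A", "B"), ("B", "A")]))  # None: no vertical layout exists
```

With arcs drawn as labelled arrows rather than encoded by height, the second structure is unproblematic, which is the sense in which the notation, and not the structure, is the obstacle.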

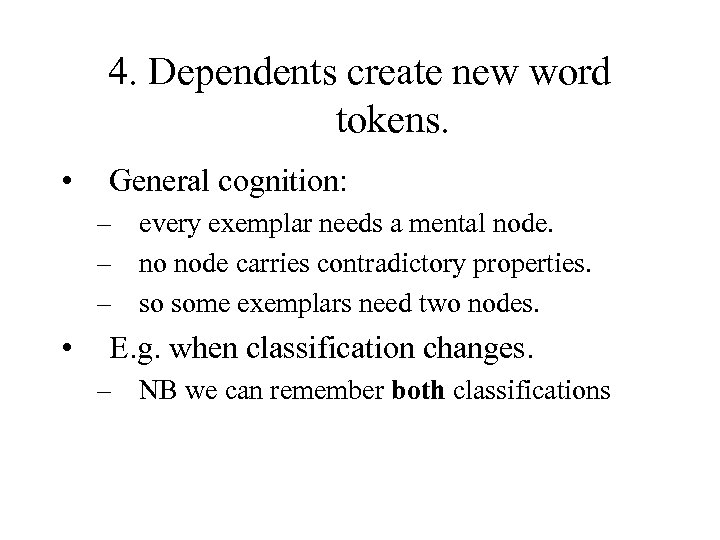

4. Dependents create new word tokens.

- General cognition:
  - every exemplar needs a mental node.
  - no node carries contradictory properties.
  - so some exemplars need two nodes.
- E.g. when classification changes.
  - NB we can remember both classifications

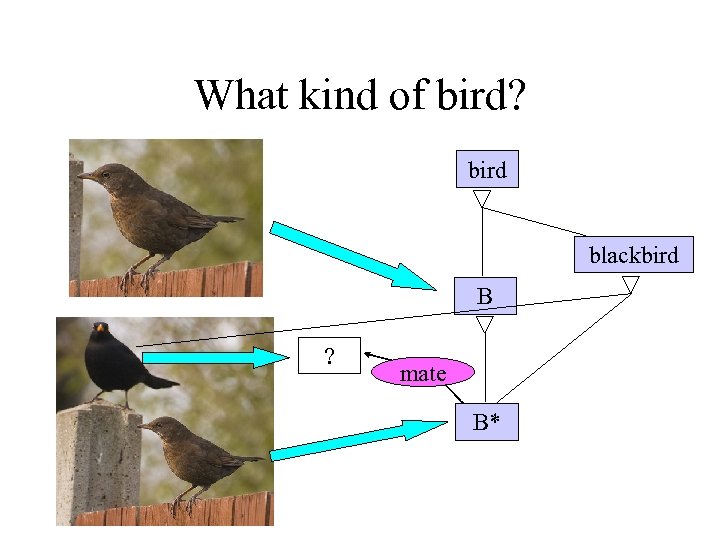

What kind of bird?

(Diagram labels: bird, blackbird, B, ?, mate, B*.)

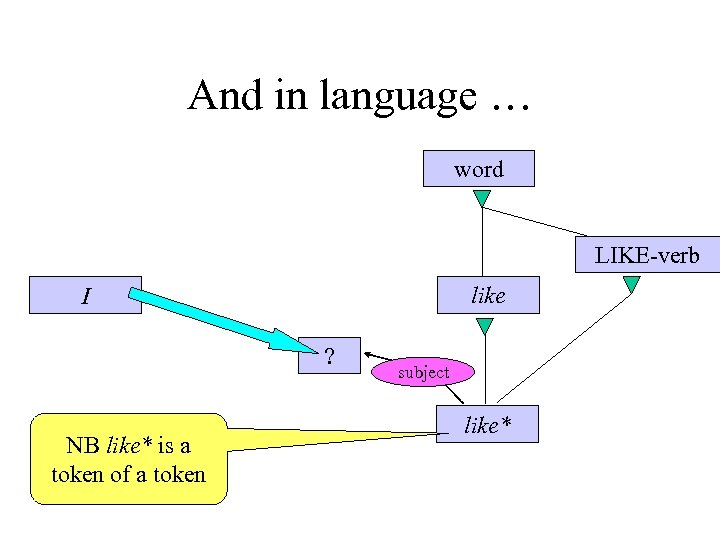

And in language …

(Diagram labels: word, LIKE-verb, like, I, ?, subject, like*.)

NB like* is a token of a token

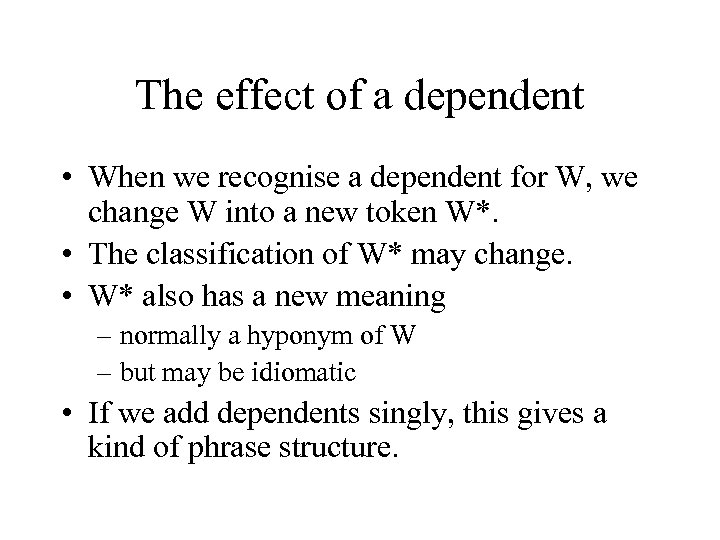

The effect of a dependent

- When we recognise a dependent for W, we change W into a new token W*.
- The classification of W* may change.
- W* also has a new meaning
  - normally a hyponym of W
  - but may be idiomatic
- If we add dependents singly, this gives a kind of phrase structure.

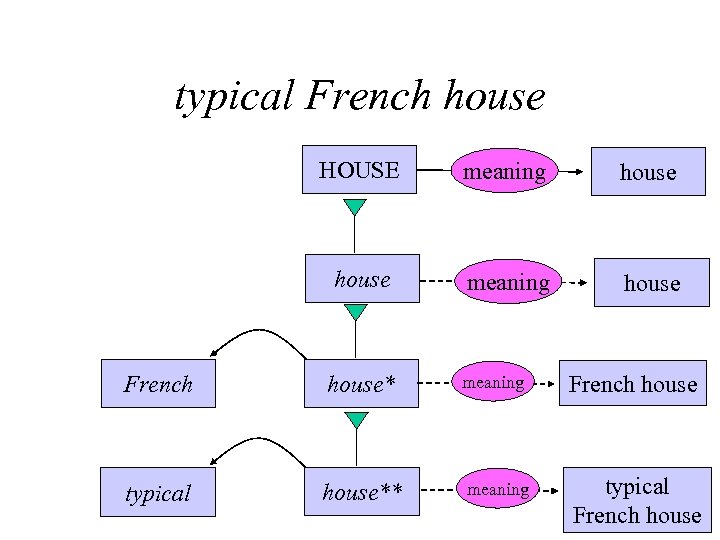

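typical French house

(Diagram: the lexeme HOUSE and three tokens.)

- house, meaning 'house'
- house* (with the dependent French), meaning 'French house'
- house** (with the dependent typical), meaning 'typical French house'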
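
The step-by-step creation of tokens can be sketched as follows. This is a minimal illustration, not the author's formalism: the `Token` dataclass and the `add_dependent` helper are hypothetical, and building the meaning by string concatenation only covers the normal hyponym case, not idiomatic meanings.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Token:
    """One mental node for a word; isa points to the node it was derived from."""
    label: str
    meaning: str
    isa: Optional["Token"] = None
    dependent: Optional[str] = None


def add_dependent(head: Token, dependent: str) -> Token:
    """Return a new token head* whose meaning is narrowed by the dependent."""
    return Token(
        label=head.label + "*",
        meaning=f"{dependent} {head.meaning}",
        isa=head,                # the new token is-a the old one
        dependent=dependent,
    )


house = Token(label="house", meaning="house")
house_star = add_dependent(house, "French")          # house*
house_2star = add_dependent(house_star, "typical")   # house**

print(house_2star.label)    # house**
print(house_2star.meaning)  # typical French house
```

Because each token is immutable and keeps a link to the one it was built from, the earlier tokens stay available alongside the new one, and adding dependents one at a time yields a chain that behaves like a kind of phrase structure.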

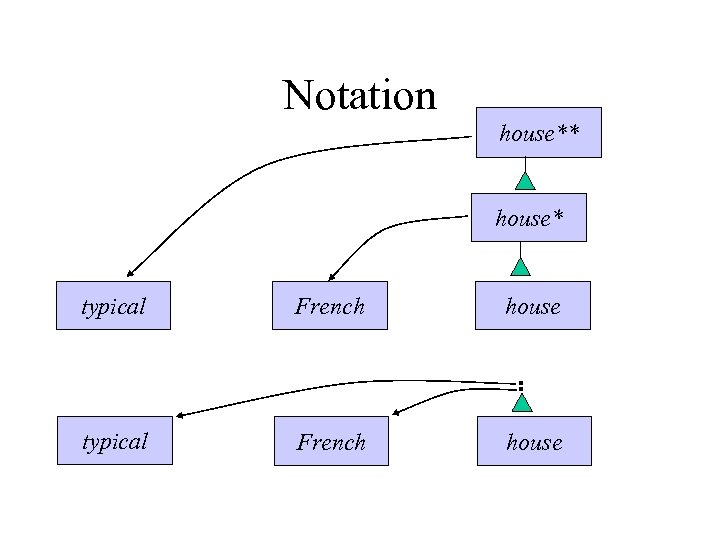

Notation

(Diagram labels: house**, house*, typical, French, house.)

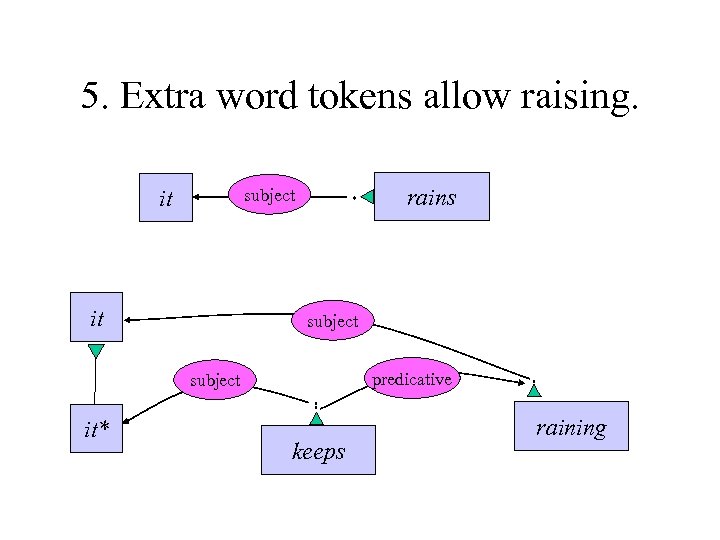

5. Extra word tokens allow raising.

(Diagram: the words "it rains" and "it* keeps raining", with word tokens it and it*; dependency labels: subject (three times), predicative.)

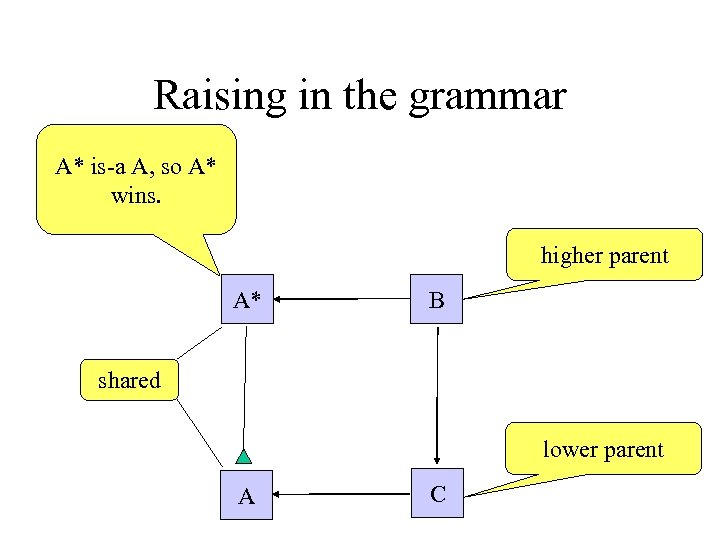

Raising in the grammar

A* is-a A, so A* wins.

(Diagram labels: higher parent, lower parent, shared, A, A*, B, C.)

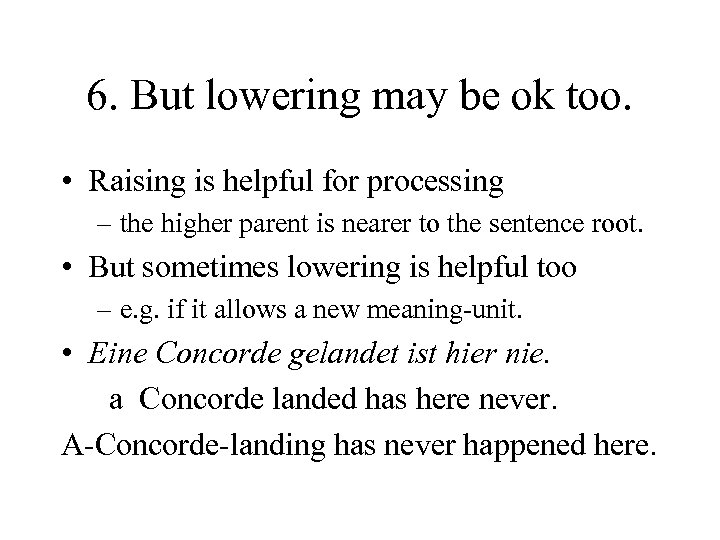

6. But lowering may be ok too.

- Raising is helpful for processing
  - the higher parent is nearer to the sentence root.
- But sometimes lowering is helpful too
  - e.g. if it allows a new meaning-unit.
- Eine Concorde gelandet ist hier nie.
  - Gloss: a Concorde landed has here never.
  - 'A-Concorde-landing has never happened here.'

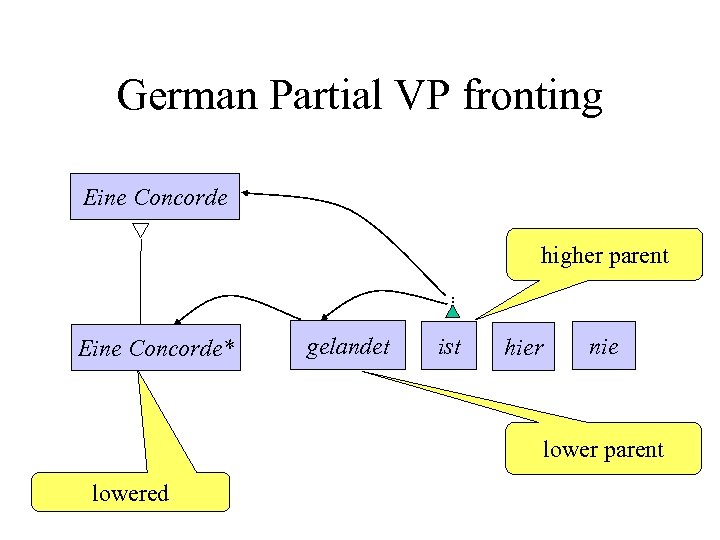

German Partial VP fronting

(Diagram: the sentence "Eine Concorde gelandet ist hier nie", with tokens Eine Concorde and Eine Concorde*; labels: higher parent, lower parent, lowered.)

Conclusions

- Language is part of cognition.
- It is no less complex than general cognition.
- So syntactic structure allows:
  - direct word-word dependencies
  - mutual dependencies
  - multiple dependencies (raising and lowering)
  - multiple tokens
