- Number of slides: 25

Delivering Patient Safety 1. FACING THE FACTS Professor James Reason

These discussion materials do not follow the exact running order of the video programme. They are intended to extend the reach of the project by facilitating exploration of error management in greater depth and the application of these principles to local issues. - Professor James Reason

Overview

- The error problem
- High-level concerns about patient safety
- Lessons from other domains: some cautions
- The need for a radical change of culture

Error and health care

- Health care (HC) has come relatively late to the realisation that errors dominate the risks to patient safety.
- Errors in other domains (aviation, etc.) can cause very visible disasters.
- In HC, however, adverse events (AEs) usually happen to isolated individuals in many different locations.

Error problem

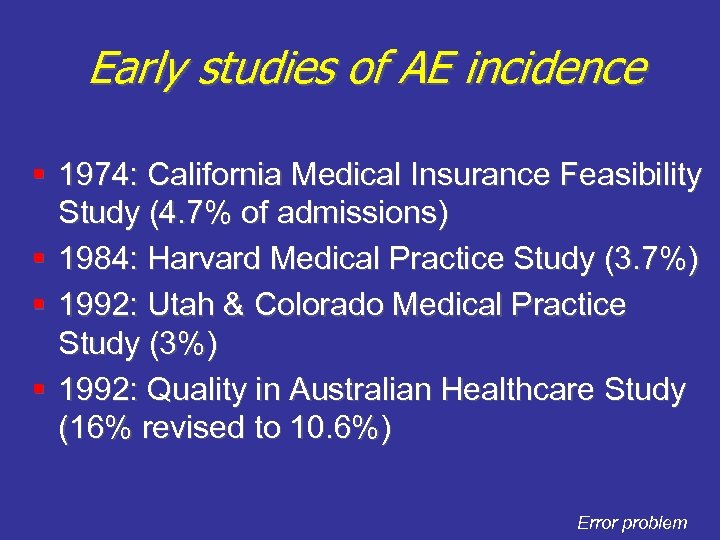

Early studies of AE incidence

- 1974: California Medical Insurance Feasibility Study (4.7% of admissions)
- 1984: Harvard Medical Practice Study (3.7%)
- 1992: Utah & Colorado Medical Practice Study (3%)
- 1992: Quality in Australian Healthcare Study (16% revised to 10.6%)

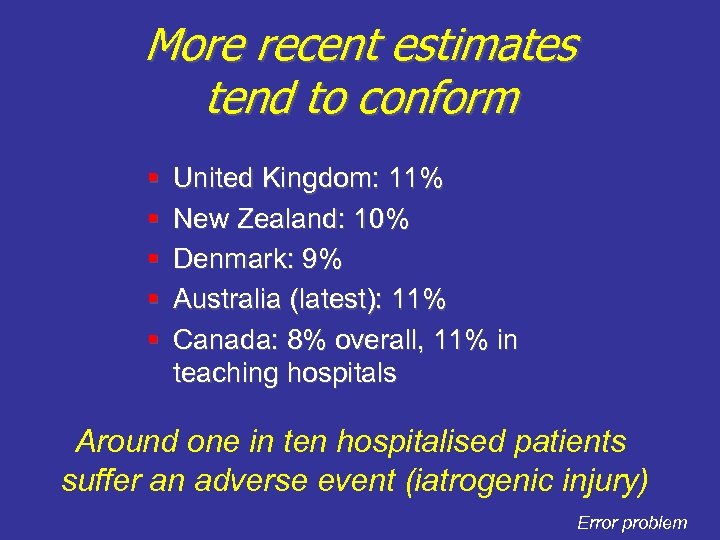

More recent estimates tend to conform

- United Kingdom: 11%
- New Zealand: 10%
- Denmark: 9%
- Australia (latest): 11%
- Canada: 8% overall, 11% in teaching hospitals

Around one in ten hospitalised patients suffer an adverse event (iatrogenic injury).

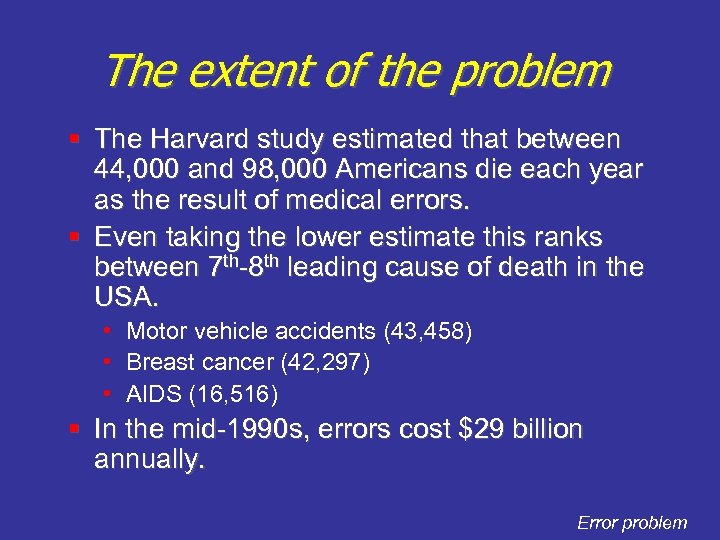

The extent of the problem

- The Harvard study estimated that between 44,000 and 98,000 Americans die each year as the result of medical errors.
- Even taking the lower estimate, this ranks as the 7th or 8th leading cause of death in the USA, ahead of:
  - Motor vehicle accidents (43,458)
  - Breast cancer (42,297)
  - AIDS (16,516)
- In the mid-1990s, errors cost $29 billion annually.
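
As a quick arithmetic check on the comparison in this slide, the Python sketch below subtracts each listed cause-of-death count from the lower estimate of 44,000. The figures are those quoted on the slide. The constant and function names are illustrative assumptions and not part of the original materials.

```python
# Figures as quoted on the slide (annual deaths in the USA).
LOWER_ESTIMATE = 44_000  # lower bound for deaths attributed to medical errors

OTHER_CAUSES = {
    "Motor vehicle accidents": 43_458,
    "Breast cancer": 42_297,
    "AIDS": 16_516,
}


def margins_over(estimate, causes):
    """Return how many more deaths the estimate represents than each cause."""
    return {cause: estimate - deaths for cause, deaths in causes.items()}


if __name__ == "__main__":
    for cause, margin in margins_over(LOWER_ESTIMATE, OTHER_CAUSES).items():
        print(f"{cause}: lower estimate is higher by {margin:,}")
```

Even the lower estimate is higher than each of the three quoted figures, although the margin over motor vehicle accidents is only a few hundred deaths.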

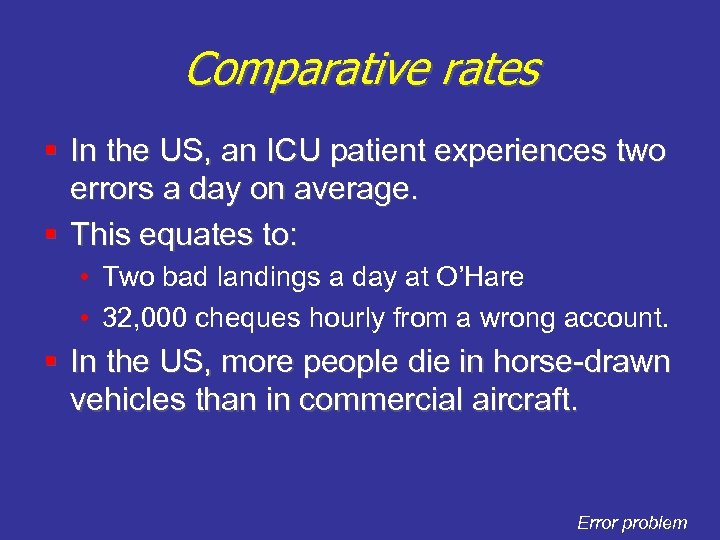

Comparative rates

- In the US, an ICU patient experiences two errors a day on average.
- This equates to:
  - Two bad landings a day at O’Hare
  - 32,000 cheques hourly from a wrong account.
- In the US, more people die in horse-drawn vehicles than in commercial aircraft.

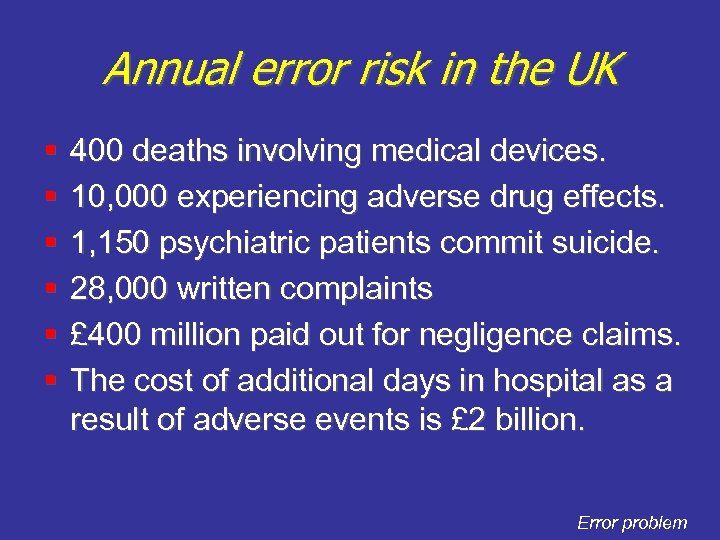

Annual error risk in the UK

- 400 deaths involving medical devices.
- 10,000 experiencing adverse drug effects.
- 1,150 psychiatric patients commit suicide.
- 28,000 written complaints.
- £400 million paid out for negligence claims.
- The cost of additional days in hospital as a result of adverse events is £2 billion.

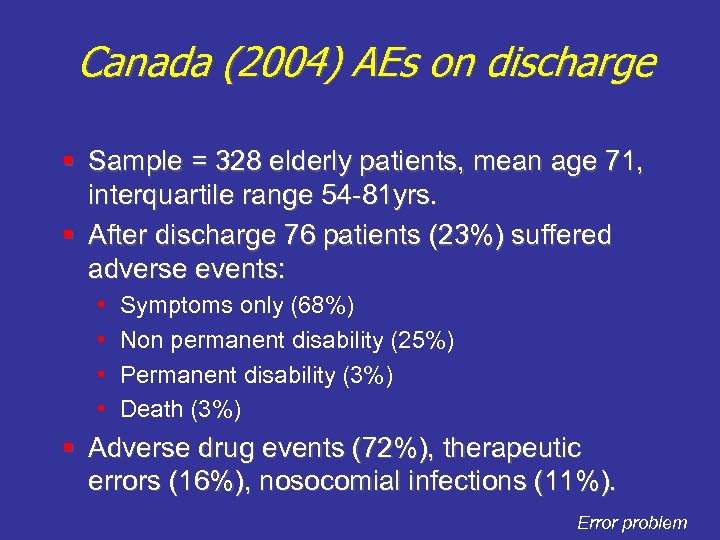

Canada (2004) AEs on discharge

- Sample = 328 elderly patients, mean age 71, interquartile range 54-81 yrs.
- After discharge 76 patients (23%) suffered adverse events:
  - Symptoms only (68%)
  - Non-permanent disability (25%)
  - Permanent disability (3%)
  - Death (3%)
- Adverse drug events (72%), therapeutic errors (16%), nosocomial infections (11%).
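
The percentages on this slide can be checked with a few lines of Python. The sketch below recomputes the adverse event rate from the quoted counts and sums the two breakdowns. All numbers come from the slide. The names used are hypothetical and chosen only for illustration.

```python
# Counts and percentages as quoted on the slide.
SAMPLE_SIZE = 328       # patients followed after discharge
PATIENTS_WITH_AE = 76   # patients who suffered an adverse event

SEVERITY_PCT = {
    "Symptoms only": 68,
    "Non-permanent disability": 25,
    "Permanent disability": 3,
    "Death": 3,
}

TYPE_PCT = {
    "Adverse drug events": 72,
    "Therapeutic errors": 16,
    "Nosocomial infections": 11,
}


def percentage(part, whole):
    """Return part as a percentage of whole."""
    return 100 * part / whole


if __name__ == "__main__":
    print(f"AE rate: {percentage(PATIENTS_WITH_AE, SAMPLE_SIZE):.1f}%")
    print(f"Severity breakdown total: {sum(SEVERITY_PCT.values())}%")
    print(f"Type breakdown total: {sum(TYPE_PCT.values())}%")
```

The recomputed rate rounds to the 23% quoted. Each breakdown sums to 99%, which would be consistent with rounding of the individual figures, though the slide does not say so.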

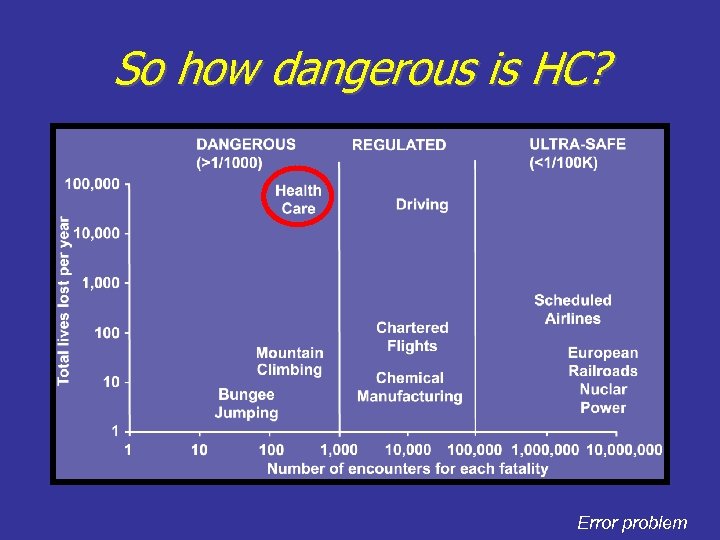

So how dangerous is HC?

No ‘Big Bang’ wake-up call, just a flurry of high-level reports

- U.S. National Academy IOM reports
  - To Err is Human (2000)
  - Crossing the Quality Chasm (2001)
  - Keeping Patients Safe (2004)
- U.K. Chief Medical Officer’s reports
  - An Organisation with a Memory (2000)
  - Building a Safer NHS for Patients (2001)
- Bristol Royal Infirmary & Shipman Inquiries
- NPSA formed (2001)

High-level concerns

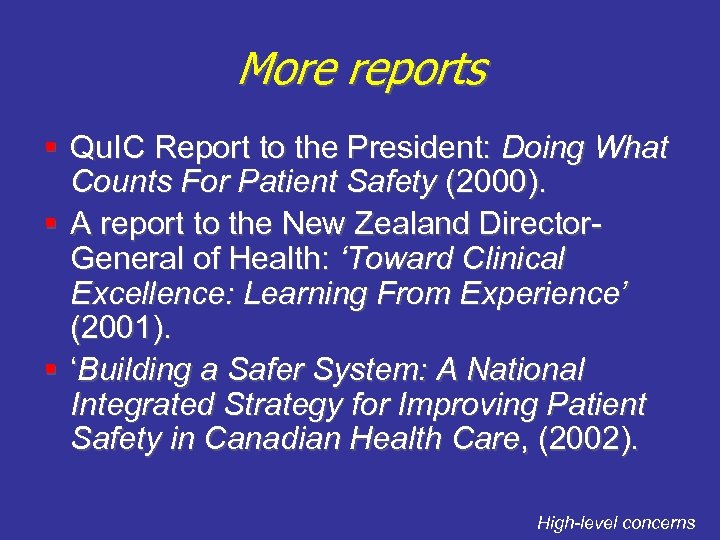

More reports

- QuIC Report to the President: Doing What Counts For Patient Safety (2000).
- A report to the New Zealand Director-General of Health: ‘Toward Clinical Excellence: Learning From Experience’ (2001).
- ‘Building a Safer System: A National Integrated Strategy for Improving Patient Safety in Canadian Health Care’ (2002).

Common ground

- There is a huge problem, regardless of the precise numbers, and it exists everywhere.
- Need agencies to collect, analyse and disseminate data on adverse events (in a way that allows cross-institutional learning).
- Fund research into patient safety.
- Focus on systems rather than fallible people.
- Recognise the significance of a safety culture.
- Identify recurrent error traps in clinical practice.

A crucial point

- The term ‘medical error’ could be taken to mean errors unique to health carers.
- But this is not the case.
- They are no different from the human factors problems that exist everywhere.
- Only the context is specific to HC, not the errors themselves.
- Error management principles are universal.

Importing from other domains: Some cautions

- It is natural and proper to look to other complex hazardous systems for safety culture and human factors models.
- Other domains: aviation, nuclear power generation, chemical process, etc.
- But it should be appreciated that health care is distinct in a number of important respects.

Lessons from other domains

Health care: Distinctive features

- Diverse activities and equipment
- ‘Hands-on’ work: high error opportunity, small margins of safety
- Uncertainty and incomplete knowledge
- Patients are vulnerable
- Local event investigation
- One-to-one or few-to-one delivery

Even if...

- HC professionals knew all there was to know, they would still make errors.
- Like the rest of humankind, they are fallible.
- But the fact that they are not trained to understand, expect, detect and manage their errors lies at the heart of the patient safety problem.

A huge cultural difference

- HC professionals undertake long, arduous and expensive training in the reasonable expectation of getting it right.
- HC culture does not encourage sharing or discussing fallibility.
- By contrast, aviation was predicated from the outset on the expectation of error.
- Was it Wilbur or was it Orville who came up with the first checklist?

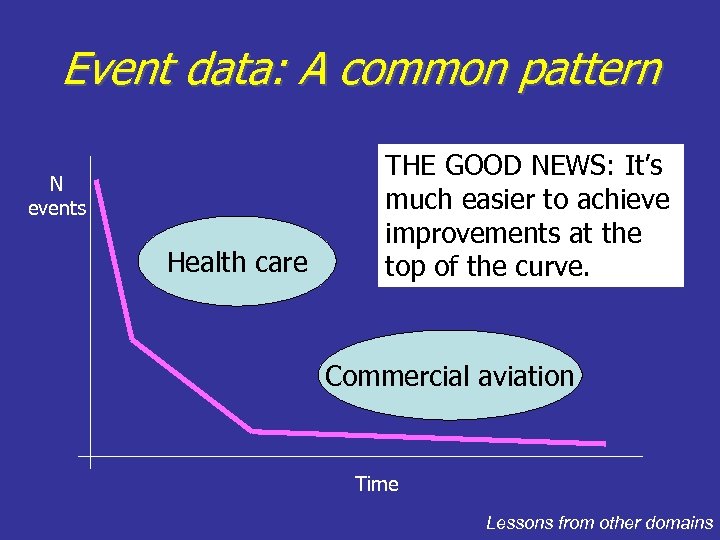

Event data: A common pattern

[Figure: number of events (N events) plotted against time, with health care and commercial aviation labelled on the curve.]

THE GOOD NEWS: It’s much easier to achieve improvements at the top of the curve.

BAD NEWS: The awakening may be short-lived

- In most hazardous domains, safety only becomes a high priority after some bad event, and it doesn’t last.
- Health care has had no ‘big bang’, just a flurry of top-level reports, so there is no reason to suppose that their impact will last.
- Health care has only a limited window of opportunity to put its house in order.

A radical change of culture is needed

- Almost every HC professional carries a private burden of guilt.
- He or she works in a culture that tolerates, even condones:
  - ‘Collateral damage’ to patients
  - Poor working conditions
  - Constant need for ‘workarounds’
  - A code of silence about patient harm

Need for culture change

Although...

- Many hi-tech tools are making HC safer: automated dispensing systems, computerised order entry, bar coding, diagnostic decision support, simulators for training, etc.
- But the underlying problems of patient safety will not be solved by technological fixes.
- We need to engineer a radical change in culture, away from the myth of infallibility and towards an acceptance that errors are here to stay.
- They can’t be eliminated, but they can be managed.

The most important thing...

- Error management (EM) has three parts:
  - Error reduction
  - Error containment
  - Making them work
- And the hardest of these is managing EM.
- EM tools can only make a sustained improvement if they are supported by a safety culture.

Conclusions: Where do we go from here?

- The first stage is to confront the facts.
- We have to change the culture that has permitted these adverse events to be tolerated for so long.
- We want to understand the nature of error and the conditions that provoke it.
- We need to seek out the right tools, such as NPSA’s 7 Steps.
