
Monitoring in CMS
Daniele Bonacorsi, Andrea Sciabà
DB ES Experiment Support, CERN IT Department, CH-1211 Geneva 23, Switzerland, www.cern.ch/it
19/5/2011, Workshop CCR INFN Grid 2011

Outline
• Introduction
• Service monitoring
• Site monitoring
• Dashboard monitoring
• Transfer monitoring
• Data popularity
• Other monitoring
• Conclusions

Introduction
• CMS uses a large variety of monitoring sources and tools
• Main providers are
  – WLCG (SAM/Nagios, Gridview, etc.)
  – CERN IT (Lemon, SLS, Hammercloud, Dashboard, Data popularity, etc.)
  – Caltech (MonALISA)
  – CMS (PhEDEx monitoring, Overview, etc.)
  – KIT+DESY+… (HappyFaces)
• This is not meant to be an exhaustive review of every monitoring system!
  – Mainly focused on computing rather than data quality, software release validation, etc.

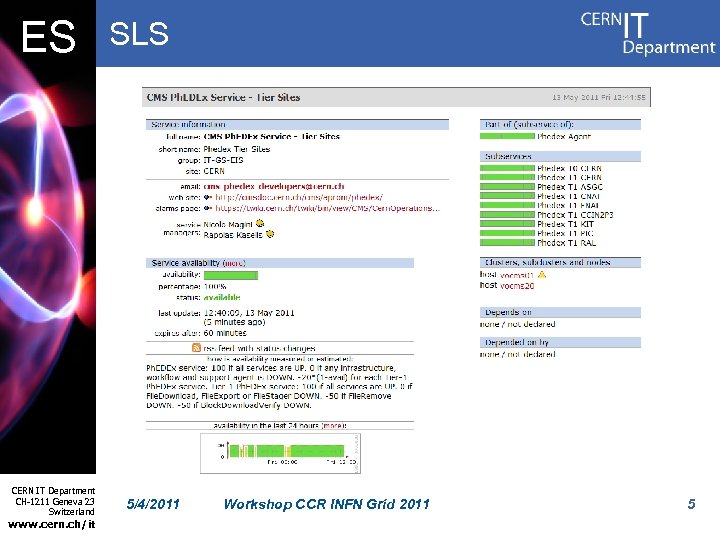

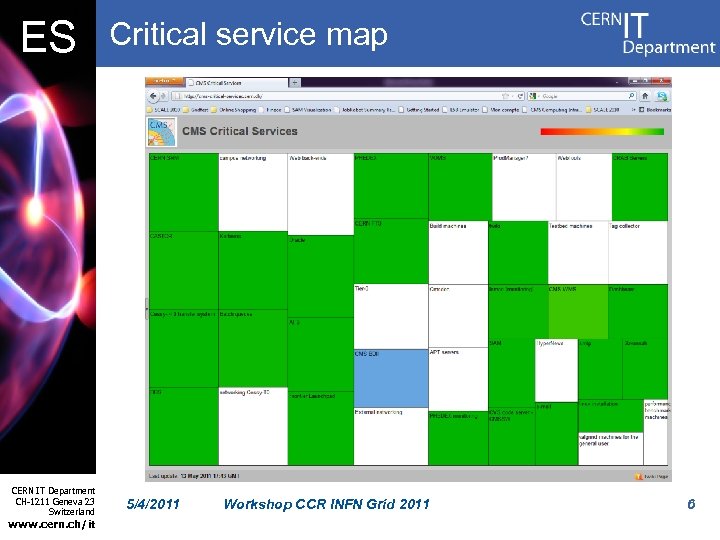

Service monitoring
• Most CMS services (and IT services used by CMS) are monitored using Lemon and SLS
  – Lemon: node-centric, standard + custom metrics, provides alarms and actuators
  – SLS: service-centric, produces one estimator (“availability”) plus arbitrary metrics, provides alarms
• Both are widely used in CERN IT and the LHC experiments
• The Critical Service map (developed by the Dashboard team) gives an overview of the status of CMS services

SLS

Critical service map

Site monitoring
• SAM/Nagios framework
  – Functional tests run on remote services (computing and storage elements)
  – Used in WLCG and EGEE/EGI for several years
  – CMS-specific tests are run with a CMS certificate
• CMS Job Robot
  – “Fake” analysis jobs automatically sent to all sites
  – The jobs read a dataset replicated everywhere
  – Job success rate is measured
• Transfer link quality
  – Count how many “good” links the site has, looking at the rate of transfer failures
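The link-quality count in the last bullet can be sketched as follows. This is only an illustration: it assumes a link is “good” when its transfer failure rate stays at or below a threshold. The 50% threshold, the function name `count_good_links`, the link names and the input layout are all hypothetical and are not the CMS definition.

```python
def count_good_links(links, max_failure_rate=0.5):
    """Count links whose transfer failure rate is at or below the threshold.

    `links` maps a link name to a (successful, failed) pair of transfer counts.
    Links with no transfer attempts are not counted as good.
    The threshold is an assumed value, not the one used by CMS.
    """
    good = 0
    for successful, failed in links.values():
        attempts = successful + failed
        if attempts == 0:
            continue  # nothing measured on this link
        if failed / attempts <= max_failure_rate:
            good += 1
    return good


# Hypothetical links into one site
links = {
    "T1_A->T2_X": (90, 10),  # 10% failures
    "T1_B->T2_X": (20, 80),  # 80% failures
    "T1_C->T2_X": (0, 0),    # no attempts
}
print(count_good_links(links))
```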

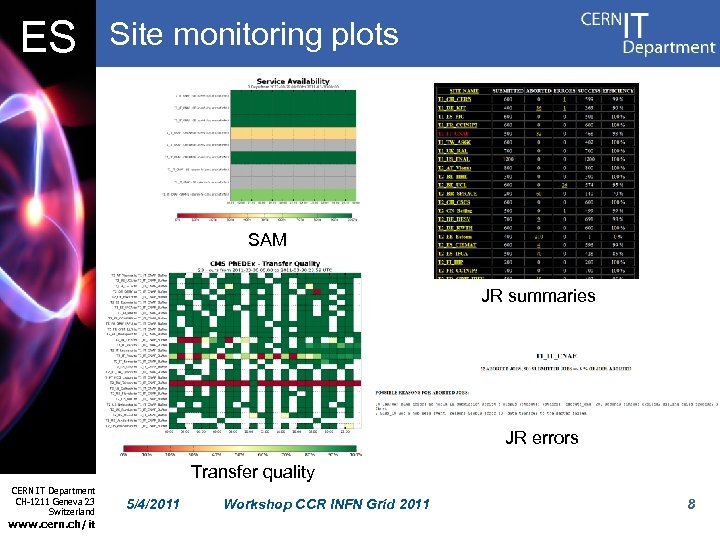

Site monitoring plots: SAM, JR summaries, JR errors, transfer quality

Gridview?
• The portal is not actively used in CMS
• Site availability is calculated by the Dashboard using more critical tests than those considered by Gridview
• LCG-CE and CREAM-CE are already “ORed” in the Dashboard
  – Soon possible also in Gridview
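One way to read the “ORed” computing elements is sketched below: the computing service counts as available if at least one CE flavour passes all of its critical tests. This is an assumption about the logic, not the Dashboard implementation; the function name and the test names are hypothetical.

```python
def ce_available(lcg_ce_results, cream_ce_results):
    """Return True if at least one CE flavour passes all of its critical tests.

    Each argument maps a critical test name to True (passed) or False (failed).
    A flavour with no test results is treated as not available.
    """
    def flavour_ok(results):
        return bool(results) and all(results.values())

    return flavour_ok(lcg_ce_results) or flavour_ok(cream_ce_results)


# Hypothetical test names: the LCG-CE fails one test, the CREAM-CE passes all
lcg = {"job_submit": False, "sw_install": True}
cream = {"job_submit": True, "sw_install": True}
print(ce_available(lcg, cream))
```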

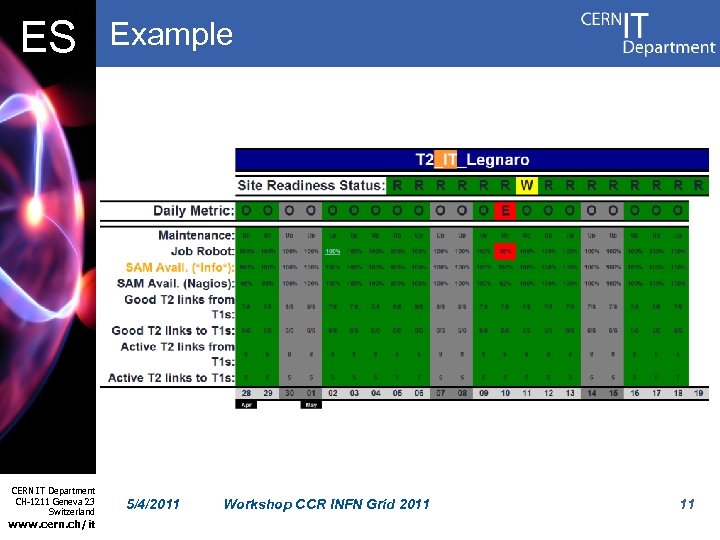

CMS Site Readiness
• An aggregator of site monitoring information to express whether a site is “working” or not
• Possible states: READY / NOT-READY / WARNING / SCHEDULED DOWNTIME
  – Uses the recent history of the tests rather than a simple “AND” combination of the latest results (e.g. READY if all metrics are OK for ≥ 5 of the last 7 days)
• Combines SAM/Nagios, JR and link quality to answer questions like
  – Do jobs run? Is the CMS software properly installed? Can local data be read? Can output be copied to local storage? Can data be remotely read and written? Can the site transfer to/from other sites?
• Uses GOCDB to find downtimes
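The history-based rule can be sketched as below. This minimal example implements only what the slide states: a scheduled downtime takes precedence, and otherwise the site is READY when all metrics were OK on at least 5 of the last 7 days. The rule for WARNING is not given in the slides, so it is left out; the function name, metric names and input layout are hypothetical.

```python
def site_readiness(days, in_scheduled_downtime=False, min_good_days=5, window=7):
    """Classify a site from its recent daily test history.

    `days` is a list ordered oldest to newest; each entry maps a metric name
    to True (OK) or False (not OK) for that day. A day counts as good only if
    every metric is OK. The WARNING state is deliberately not modelled.
    """
    if in_scheduled_downtime:
        return "SCHEDULED DOWNTIME"
    recent = days[-window:]
    good_days = sum(1 for metrics in recent if metrics and all(metrics.values()))
    return "READY" if good_days >= min_good_days else "NOT-READY"


# Hypothetical metric names
good = {"sam": True, "job_robot": True, "links": True}
bad = {"sam": True, "job_robot": False, "links": True}
history = [good, good, bad, good, good, bad, good]  # 5 good days out of 7
print(site_readiness(history))
```

Looking at a window of days rather than only the latest result keeps a single transient failure from flipping the site status.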

Example

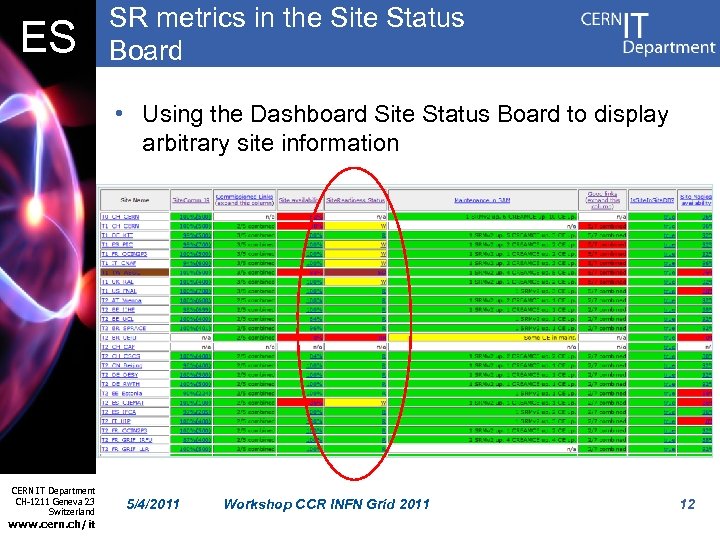

SR metrics in the Site Status Board
• Using the Dashboard Site Status Board to display arbitrary site information

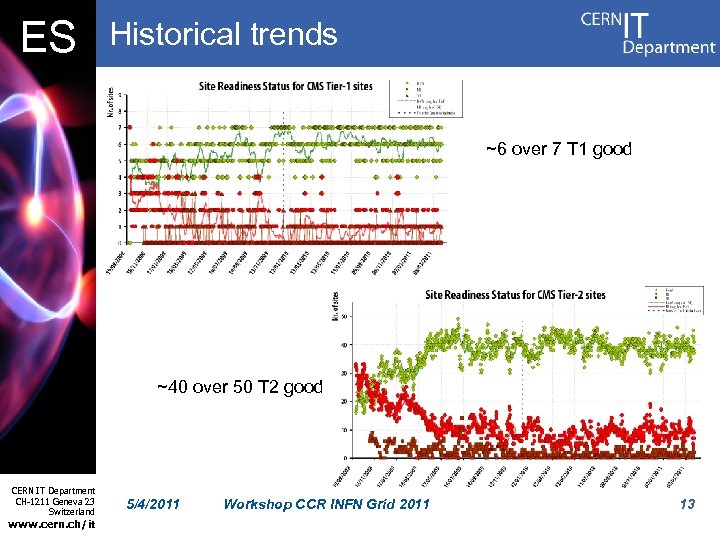

Historical trends
• ~6 out of 7 T1 sites good
• ~40 out of 50 T2 sites good

Hammercloud
• A distributed analysis testing system used in ATLAS, CMS and LHCb, serving two use cases:
  – Robot-like functional testing: frequent “ping” jobs to all sites to perform basic site validation
  – Stress testing: on-demand large-scale stress tests using real analysis jobs to test one or many sites, in order to:
    • Help commission new sites
    • Evaluate changes to site infrastructure
    • Evaluate SW changes
    • Compare site performances

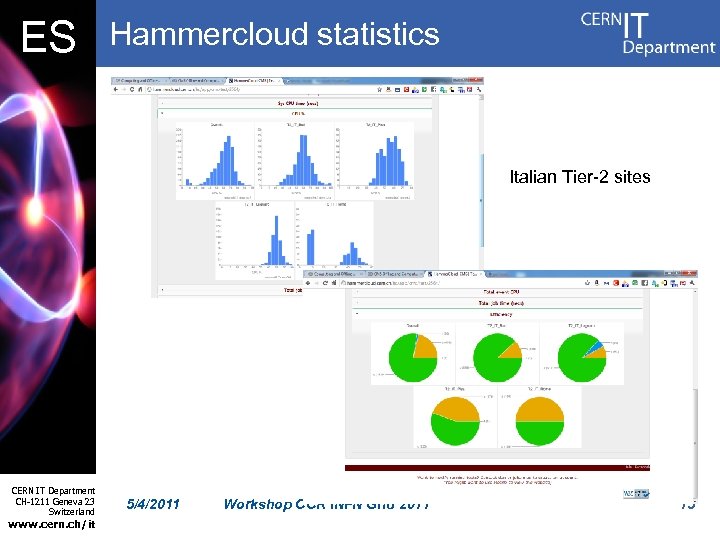

Hammercloud statistics: Italian Tier-2 sites

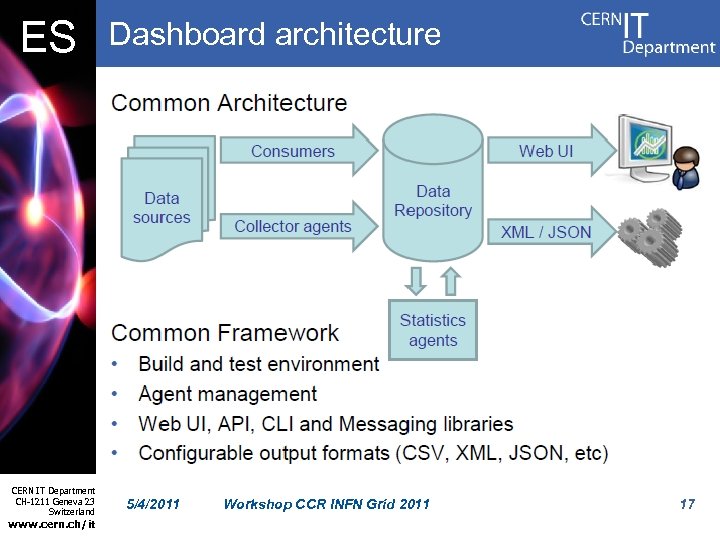

The Dashboard framework
• Initially developed in IT for CMS, later extended to the other LHC experiments
• Covers job monitoring and site/service status monitoring
• Provides user/VO monitoring views
• Information is sent by job submission tools and by running jobs to a MonALISA server as UDP messages
  – Planned to start using the WLCG MSG system
• Information is stored in an Oracle database
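The idea of reporting job information as UDP messages can be illustrated with a plain datagram sender. This is a generic sketch using only the Python standard library: the JSON payload, the field names and the function name are assumptions for illustration and are not the MonALISA wire format or client API.

```python
import json
import socket


def send_job_status(host, port, job_id, status):
    """Send one job status report as a single UDP datagram.

    Returns the number of bytes sent. UDP is fire-and-forget: the sender
    gets no acknowledgement, so a lost message is simply not recorded.
    The JSON payload layout here is hypothetical.
    """
    payload = json.dumps({"job_id": job_id, "status": status}).encode("utf-8")
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        return sock.sendto(payload, (host, port))
```

A job wrapper would call something like `send_job_status(collector_host, collector_port, "job-1", "RUNNING")` at each state change, with the collector address taken from its configuration. Because no reply is awaited, reporting adds very little overhead to the job itself.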

Dashboard architecture

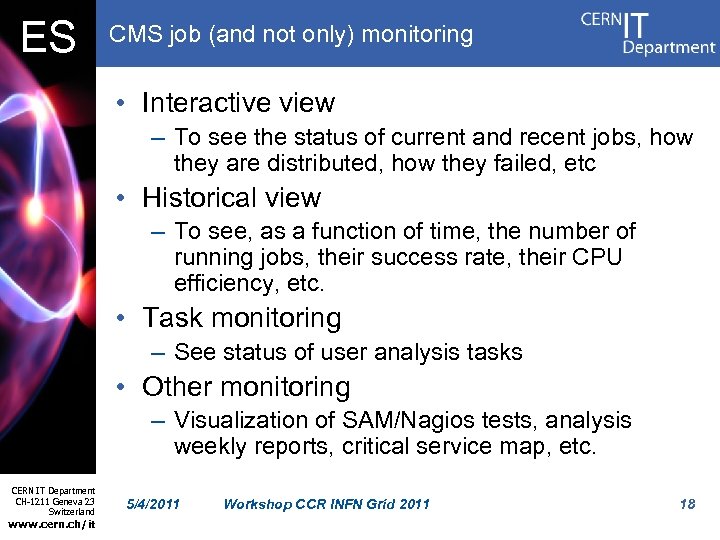

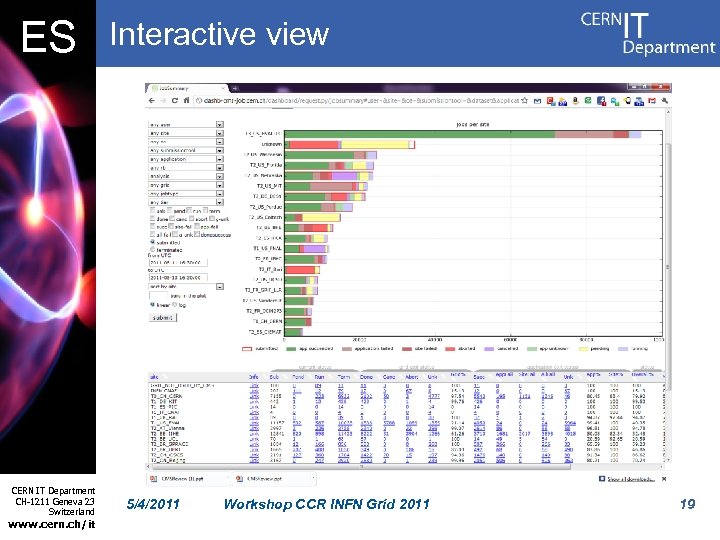

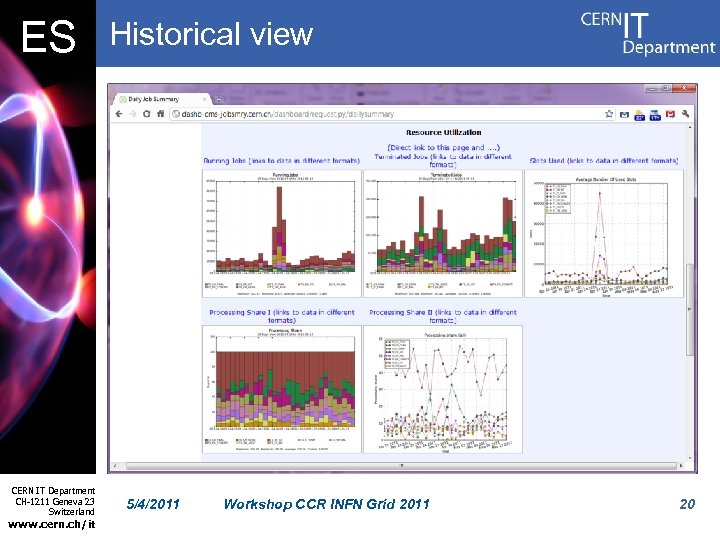

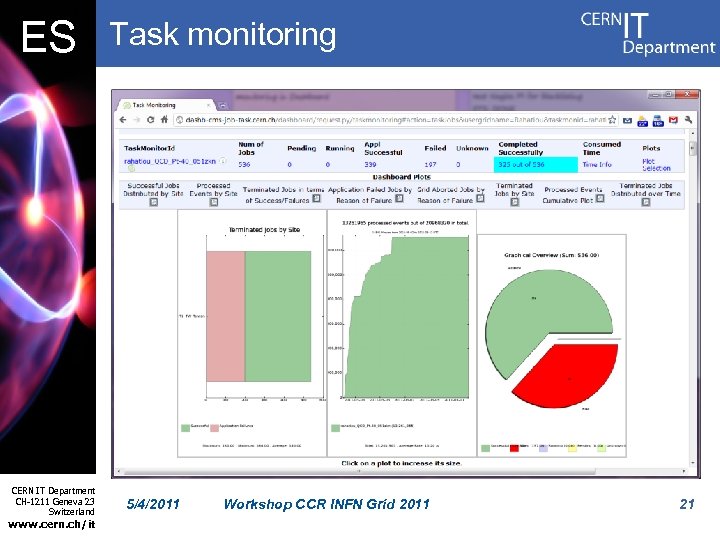

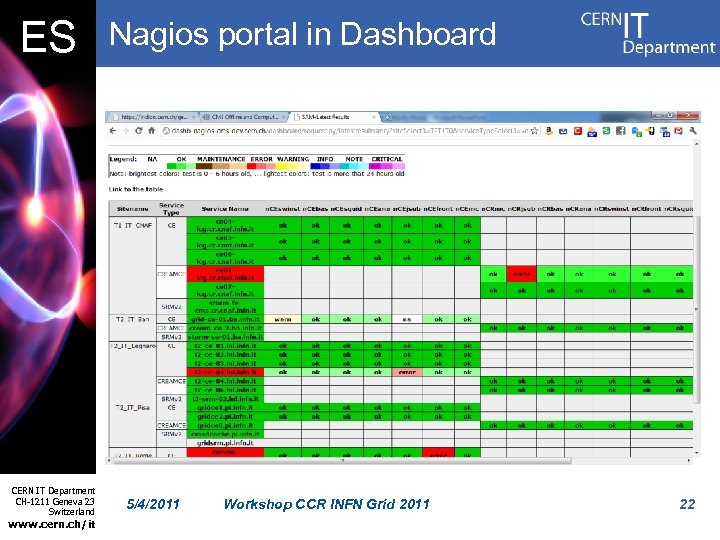

CMS job (and not only) monitoring
• Interactive view
  – To see the status of current and recent jobs, how they are distributed, how they failed, etc.
• Historical view
  – To see, as a function of time, the number of running jobs, their success rate, their CPU efficiency, etc.
• Task monitoring
  – See the status of user analysis tasks
• Other monitoring
  – Visualization of SAM/Nagios tests, analysis weekly reports, critical service map, etc.

Interactive view

Historical view

Task monitoring

Nagios portal in Dashboard

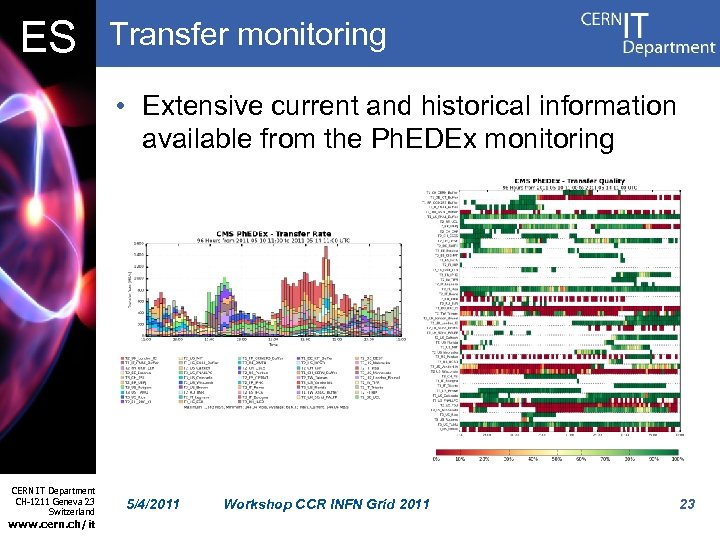

Transfer monitoring
• Extensive current and historical information is available from the PhEDEx monitoring

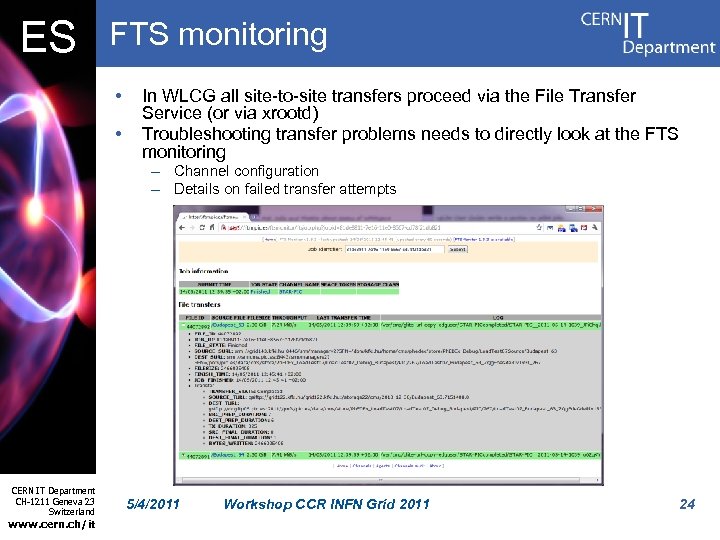

FTS monitoring
• In WLCG all site-to-site transfers proceed via the File Transfer Service (or via xrootd)
• Troubleshooting transfer problems requires looking directly at the FTS monitoring
  – Channel configuration
  – Details on failed transfer attempts

FTS monitor parser
• Being developed in CMS but usable by anybody
• Full statistics about successful transfers from FTS monitors worldwide
  – Average transfer rates per file/stream and their historical evolution
• Useful to
  – Find general issues with endpoints and links
  – Check network performance (e.g. for LHCONE)
  – Optimize FTS channels
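The per-file and per-stream averages could be computed along the following lines. This is a sketch under stated assumptions: each successful transfer is described by its size, duration and number of parallel streams, and the per-stream rate is taken as the file rate divided by the stream count. The record layout and function name are hypothetical and do not reflect the actual parser.

```python
def average_rates(transfers):
    """Return (mean rate per file, mean rate per stream) in MB/s.

    `transfers` is an iterable of dicts with the keys "bytes", "seconds"
    and "streams". Records with a non-positive duration or stream count
    are skipped. Returns (None, None) if no usable record is found.
    """
    per_file = []
    per_stream = []
    for t in transfers:
        if t["seconds"] <= 0 or t["streams"] <= 0:
            continue
        rate = t["bytes"] / t["seconds"] / 1e6  # MB/s for the whole file
        per_file.append(rate)
        per_stream.append(rate / t["streams"])
    if not per_file:
        return None, None
    return sum(per_file) / len(per_file), sum(per_stream) / len(per_stream)


# Hypothetical transfer records
transfers = [
    {"bytes": 100e6, "seconds": 10, "streams": 5},   # 10 MB/s, 2 MB/s per stream
    {"bytes": 300e6, "seconds": 10, "streams": 10},  # 30 MB/s, 3 MB/s per stream
]
print(average_rates(transfers))
```

Grouping such records by link and by day before averaging would give the historical evolution mentioned above.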

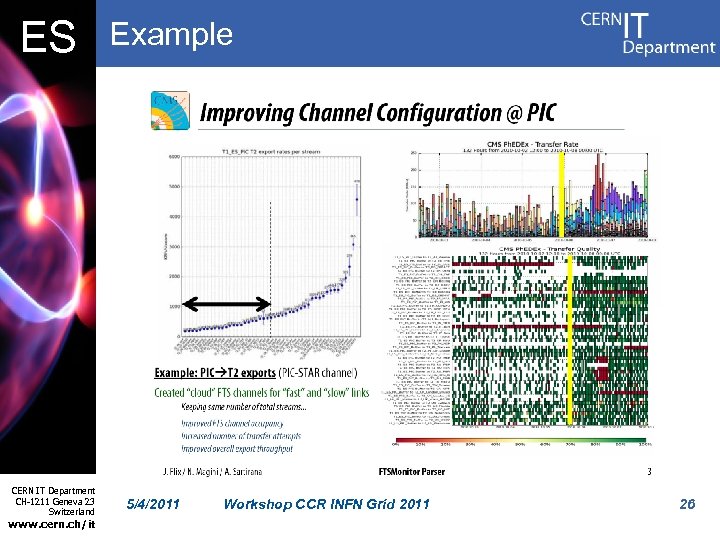

Example

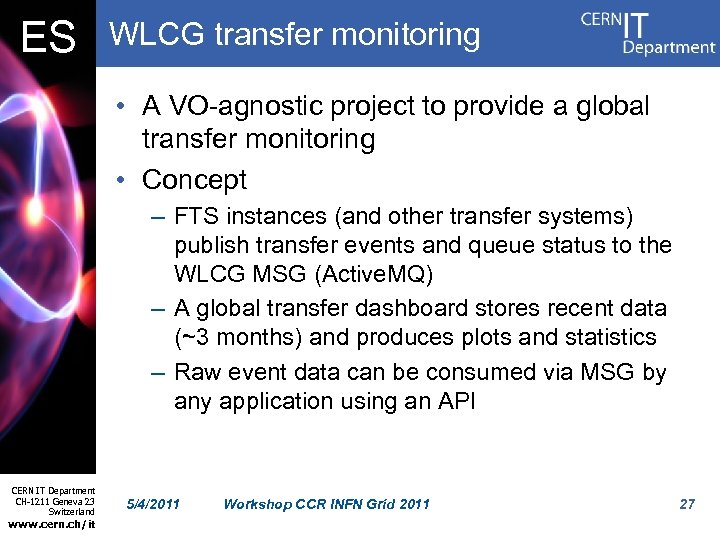

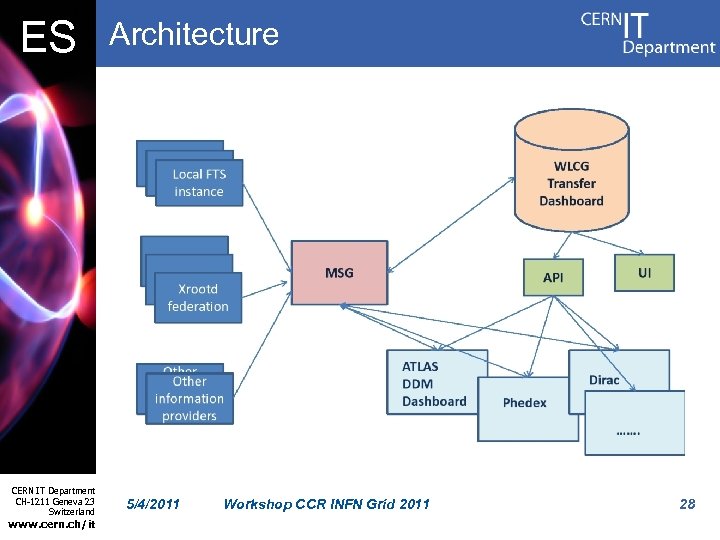

WLCG transfer monitoring
• A VO-agnostic project to provide global transfer monitoring
• Concept
  – FTS instances (and other transfer systems) publish transfer events and queue status to the WLCG MSG (ActiveMQ)
  – A global transfer dashboard stores recent data (~3 months) and produces plots and statistics
  – Raw event data can be consumed via MSG by any application using an API

Architecture

Advantages and plans
• Advantages
  – Decouple from local FTS monitoring
  – Cross-technology interface (FTS, xrootd, etc.)
  – More details on transfers
  – Correlations among VOs
• Plans
  – Defined message format
  – Implemented prototype of FTS publisher (IT-GT)
  – Web interface development starts this summer

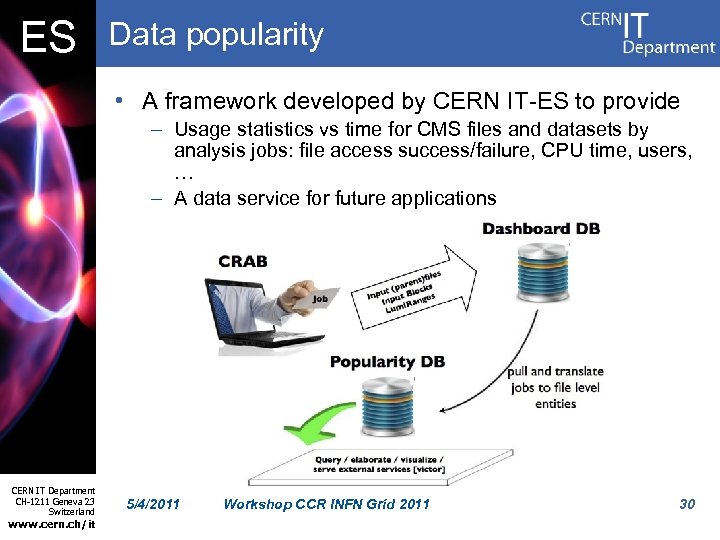

Data popularity
• A framework developed by CERN IT-ES to provide
  – Usage statistics vs time for CMS files and datasets by analysis jobs: file access success/failure, CPU time, users, …
  – A data service for future applications
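The kind of aggregation behind such usage statistics can be sketched as follows: counting accesses per dataset and computing the fraction of failed file opens per site. The record fields, dataset and site names, and the function name are hypothetical; the actual framework's data model is not described in these slides.

```python
from collections import Counter, defaultdict


def popularity(records):
    """Aggregate file access records.

    Each record is a dict with the keys "dataset", "site" and "opened_ok".
    Returns (accesses per dataset, fraction of failed opens per site).
    """
    accesses = Counter()
    opens = defaultdict(lambda: [0, 0])  # site -> [failed opens, total opens]
    for r in records:
        accesses[r["dataset"]] += 1
        opens[r["site"]][1] += 1
        if not r["opened_ok"]:
            opens[r["site"]][0] += 1
    failure_fraction = {site: failed / total for site, (failed, total) in opens.items()}
    return accesses, failure_fraction


# Hypothetical access records
records = [
    {"dataset": "/A/B/RECO", "site": "T2_X", "opened_ok": True},
    {"dataset": "/A/B/RECO", "site": "T2_X", "opened_ok": False},
    {"dataset": "/A/B/RECO", "site": "T2_Y", "opened_ok": True},
    {"dataset": "/C/D/AOD", "site": "T2_Y", "opened_ok": True},
]
accesses, failure_fraction = popularity(records)
print(accesses.most_common(1), failure_fraction)
```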

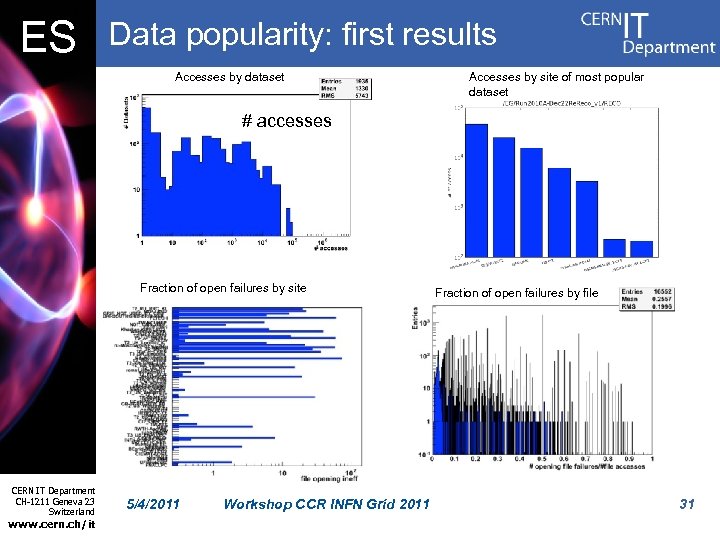

Data popularity: first results
• Accesses by dataset
• Accesses by site of the most popular dataset
• Fraction of open failures by site
• Fraction of open failures by file

MonALISA
• Used “behind the curtains”:
  – Dashboard
  – CRAB server monitoring
  – Xrootd monitoring
• Very stable and reliable

Other monitoring
• Storage accounting
  – Using the Site Status Board to publish the amount of used and free space on sites
  – Needs work, as BDII information is not reliable
• xrootd monitoring
  – Developed for the CMS xrootd global redirector project
• Data operations monitoring
  – T0 operations: detailed info on T0 workflows
  – Local Tier-1 batch monitoring via HappyFaces
• Information sent as standard XML files

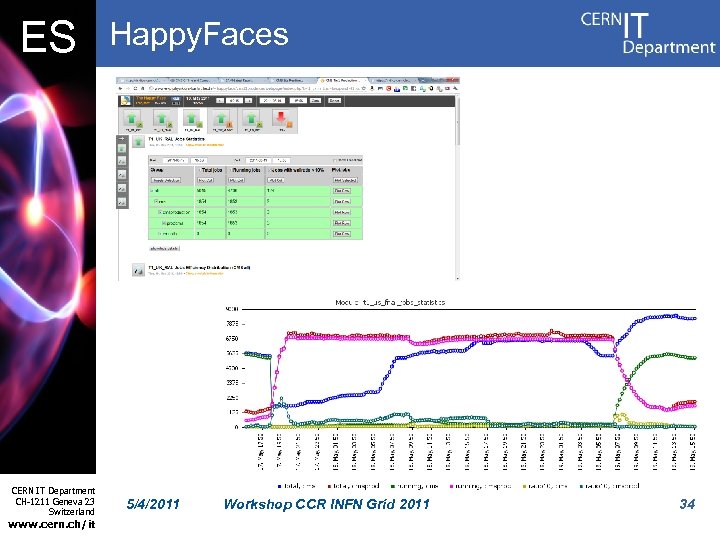

HappyFaces

Main goals for the future
• Move towards a coherent framework for alarms and notifications
• Reorganize views to make them more convenient and converge on fewer aggregator technologies
• Provide more powerful monitoring for operators of the workflow management tools, both for production and analysis
• “Clean up” and further improve the performance of available tools
