07065a7308bdff632e091f0207da27be.ppt

- Количество слайдов: 42

Data Warehouse DATA TRANSFORMATION

Data Warehouse DATA TRANSFORMATION

Extract Transform Insert n n Extract data from operational system, transform and insert into data warehouse Why ETI? n n Will your warehouse produce correct information with the current data? How ho w can I ensure warehouse credibility?

Extract Transform Insert n n Extract data from operational system, transform and insert into data warehouse Why ETI? n n Will your warehouse produce correct information with the current data? How ho w can I ensure warehouse credibility?

Excuses for NOT Transforming Legacy Data n n n Old data works fine, new will work as well. Data will be fixed at point of entry through GUI. If needed, data will be cleaned after new system populated; After proof-of-concept pilot. Keys join the data most of the time. Users will not agree to modifying or standardizing their data.

Excuses for NOT Transforming Legacy Data n n n Old data works fine, new will work as well. Data will be fixed at point of entry through GUI. If needed, data will be cleaned after new system populated; After proof-of-concept pilot. Keys join the data most of the time. Users will not agree to modifying or standardizing their data.

Levels of Migration Problem n Existing metadata is insufficient and unreliable n n n Data values incorrectly typed and accessible n n n Metadata must hold for all occurrences Metadata must represent business and technical attributes Values form extracted from storage Values meaning inferred from its content Entity keys unreliable or unavailable n Inferred from related values

Levels of Migration Problem n Existing metadata is insufficient and unreliable n n n Data values incorrectly typed and accessible n n n Metadata must hold for all occurrences Metadata must represent business and technical attributes Values form extracted from storage Values meaning inferred from its content Entity keys unreliable or unavailable n Inferred from related values

Metadata Challenge n Metadata gets out of synch with details it summarizes n n Not at the right level of detail n n Business grows faster than systems designed to capture business info Multiple values in a single field Multiple meanings to a single field No fixed format for value Expressed in awkward of limited terms n Program/compiler view rather than business view

Metadata Challenge n Metadata gets out of synch with details it summarizes n n Not at the right level of detail n n Business grows faster than systems designed to capture business info Multiple values in a single field Multiple meanings to a single field No fixed format for value Expressed in awkward of limited terms n Program/compiler view rather than business view

Character-level Challenge n Value instance level n n Named type level n n n Spelling, aliases Abbreviations, truncations, transpositions Inconsistent storage formats Multiple meanings, contextual meanings Synonyms, homonyms Entity level n n No common keys or representation No integrated view across records, files, systems

Character-level Challenge n Value instance level n n Named type level n n n Spelling, aliases Abbreviations, truncations, transpositions Inconsistent storage formats Multiple meanings, contextual meanings Synonyms, homonyms Entity level n n No common keys or representation No integrated view across records, files, systems

Some Data Quality examples n The magic shrinking vendor file n 127 ways to spell. . . n Data surprises in individual fields n Cowbirds and Data Fields n Magic numbers and embedded intelligence

Some Data Quality examples n The magic shrinking vendor file n 127 ways to spell. . . n Data surprises in individual fields n Cowbirds and Data Fields n Magic numbers and embedded intelligence

The Magic Shrinking Vendor File A Medical claims processor was having trouble with their Insurance Vendor file. They thought they had 300, 000 Insurance Vendors. When they cleaned up their data, they discovered they had only 27, 000 unique Insurance Vendors.

The Magic Shrinking Vendor File A Medical claims processor was having trouble with their Insurance Vendor file. They thought they had 300, 000 Insurance Vendors. When they cleaned up their data, they discovered they had only 27, 000 unique Insurance Vendors.

127 ways to spell. . . n Have over 127 different ways to spell AT&T n Have over 1000 ways to spell du. Pont

127 ways to spell. . . n Have over 127 different ways to spell AT&T n Have over 1000 ways to spell du. Pont

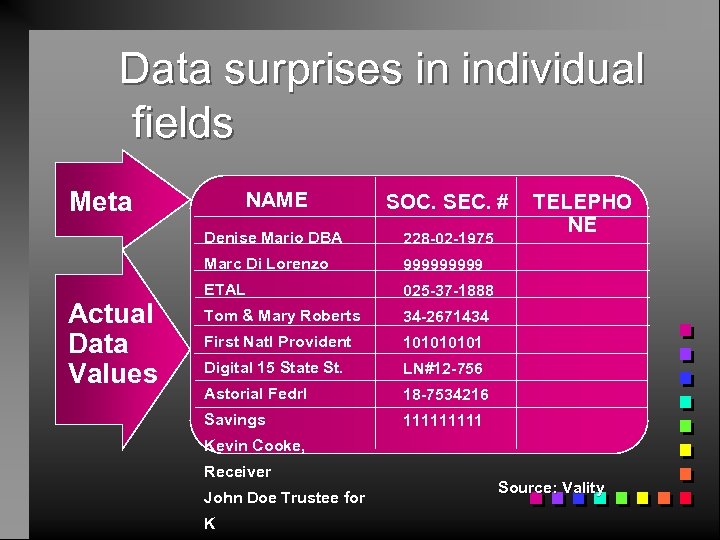

Data surprises in individual fields NAME SOC. SEC. # TELEPHO NE Source: Vality

Data surprises in individual fields NAME SOC. SEC. # TELEPHO NE Source: Vality

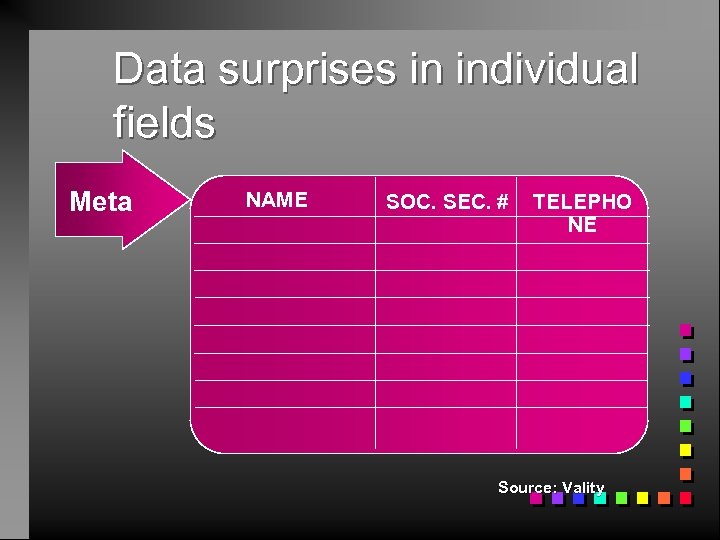

Data surprises in individual fields Meta NAME SOC. SEC. # TELEPHO NE Source: Vality

Data surprises in individual fields Meta NAME SOC. SEC. # TELEPHO NE Source: Vality

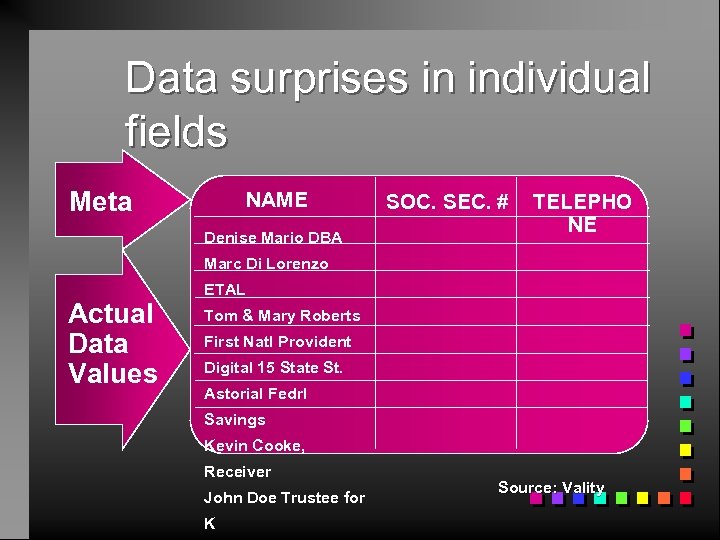

Data surprises in individual fields Meta NAME Denise Mario DBA SOC. SEC. # TELEPHO NE Marc Di Lorenzo Actual Data Values ETAL Tom & Mary Roberts First Natl Provident Digital 15 State St. Astorial Fedrl Savings Kevin Cooke, Receiver John Doe Trustee for K Source: Vality

Data surprises in individual fields Meta NAME Denise Mario DBA SOC. SEC. # TELEPHO NE Marc Di Lorenzo Actual Data Values ETAL Tom & Mary Roberts First Natl Provident Digital 15 State St. Astorial Fedrl Savings Kevin Cooke, Receiver John Doe Trustee for K Source: Vality

Data surprises in individual fields Meta SOC. SEC. # Denise Mario DBA 228 -02 -1975 Marc Di Lorenzo Actual Data Values NAME 99999 ETAL 025 -37 -1888 Tom & Mary Roberts 34 -2671434 First Natl Provident 10101 Digital 15 State St. LN#12 -756 Astorial Fedrl 18 -7534216 Savings 11111 TELEPHO NE Kevin Cooke, Receiver John Doe Trustee for K Source: Vality

Data surprises in individual fields Meta SOC. SEC. # Denise Mario DBA 228 -02 -1975 Marc Di Lorenzo Actual Data Values NAME 99999 ETAL 025 -37 -1888 Tom & Mary Roberts 34 -2671434 First Natl Provident 10101 Digital 15 State St. LN#12 -756 Astorial Fedrl 18 -7534216 Savings 11111 TELEPHO NE Kevin Cooke, Receiver John Doe Trustee for K Source: Vality

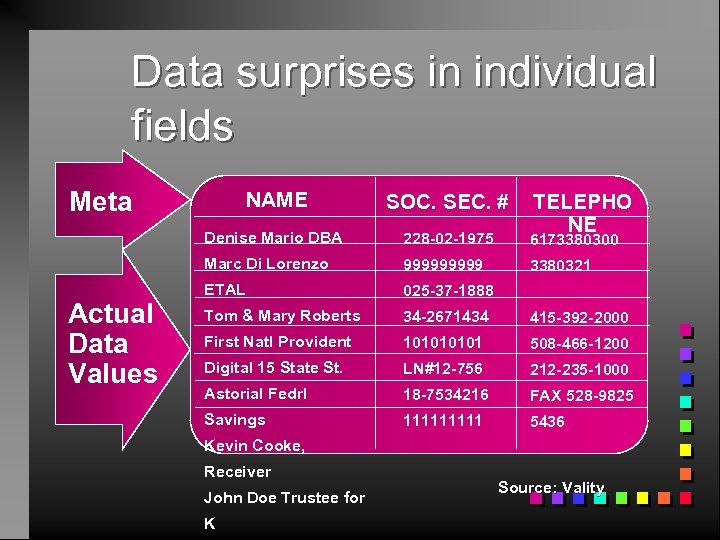

Data surprises in individual fields Meta SOC. SEC. # Denise Mario DBA 228 -02 -1975 6173380300 Marc Di Lorenzo Actual Data Values NAME 99999 3380321 ETAL 025 -37 -1888 Tom & Mary Roberts 34 -2671434 415 -392 -2000 First Natl Provident 10101 508 -466 -1200 Digital 15 State St. LN#12 -756 212 -235 -1000 Astorial Fedrl 18 -7534216 FAX 528 -9825 Savings 11111 5436 TELEPHO NE Kevin Cooke, Receiver John Doe Trustee for K Source: Vality

Data surprises in individual fields Meta SOC. SEC. # Denise Mario DBA 228 -02 -1975 6173380300 Marc Di Lorenzo Actual Data Values NAME 99999 3380321 ETAL 025 -37 -1888 Tom & Mary Roberts 34 -2671434 415 -392 -2000 First Natl Provident 10101 508 -466 -1200 Digital 15 State St. LN#12 -756 212 -235 -1000 Astorial Fedrl 18 -7534216 FAX 528 -9825 Savings 11111 5436 TELEPHO NE Kevin Cooke, Receiver John Doe Trustee for K Source: Vality

Cowbirds and Data Fields n Cowbirds lay their eggs in other birds nets n Users use data fields that are not used for other purposes

Cowbirds and Data Fields n Cowbirds lay their eggs in other birds nets n Users use data fields that are not used for other purposes

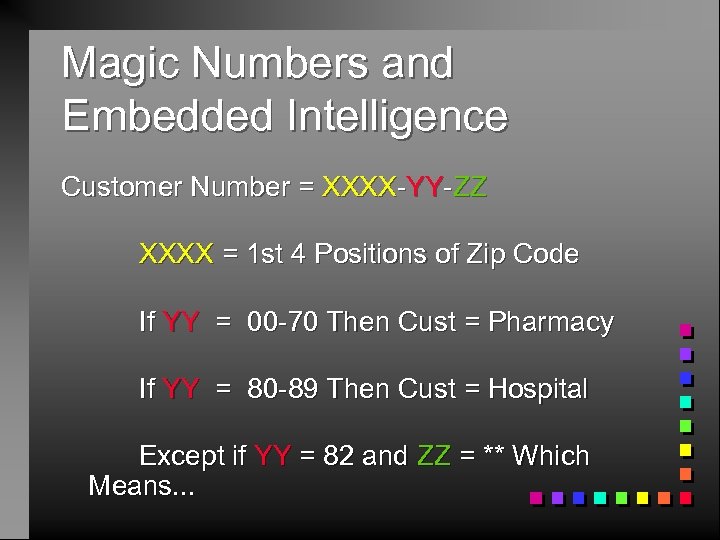

Magic Numbers and Embedded Intelligence Customer Number = XXXX-YY-ZZ XXXX = 1 st 4 Positions of Zip Code If YY = 00 -70 Then Cust = Pharmacy If YY = 80 -89 Then Cust = Hospital Except if YY = 82 and ZZ = ** Which Means. . .

Magic Numbers and Embedded Intelligence Customer Number = XXXX-YY-ZZ XXXX = 1 st 4 Positions of Zip Code If YY = 00 -70 Then Cust = Pharmacy If YY = 80 -89 Then Cust = Hospital Except if YY = 82 and ZZ = ** Which Means. . .

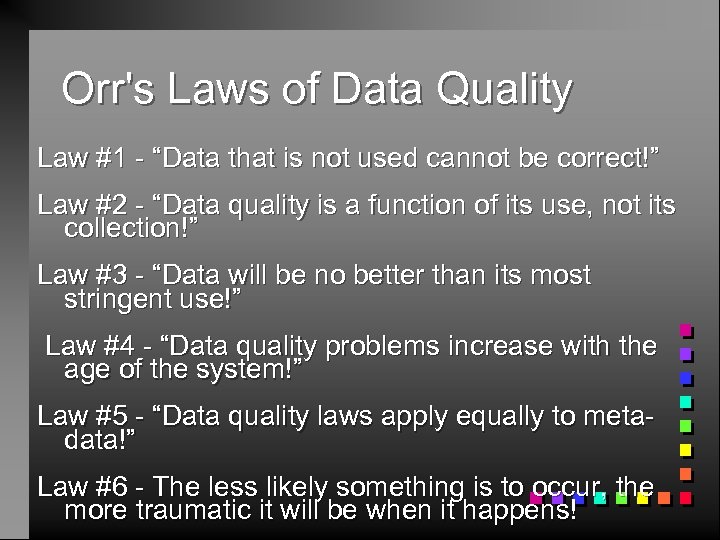

Orr's Laws of Data Quality Law #1 - “Data that is not used cannot be correct!” Law #2 - “Data quality is a function of its use, not its collection!” Law #3 - “Data will be no better than its most stringent use!” Law #4 - “Data quality problems increase with the age of the system!” Law #5 - “Data quality laws apply equally to metadata!” Law #6 - The less likely something is to occur, the more traumatic it will be when it happens!

Orr's Laws of Data Quality Law #1 - “Data that is not used cannot be correct!” Law #2 - “Data quality is a function of its use, not its collection!” Law #3 - “Data will be no better than its most stringent use!” Law #4 - “Data quality problems increase with the age of the system!” Law #5 - “Data quality laws apply equally to metadata!” Law #6 - The less likely something is to occur, the more traumatic it will be when it happens!

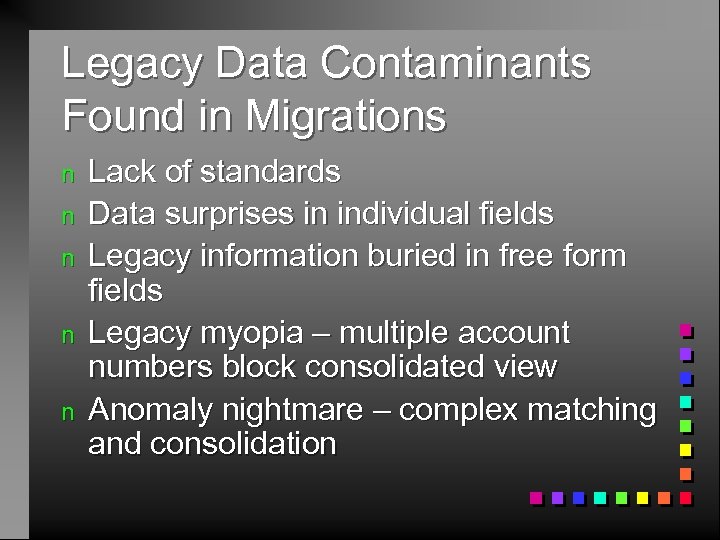

Legacy Data Contaminants Found in Migrations n n n Lack of standards Data surprises in individual fields Legacy information buried in free form fields Legacy myopia – multiple account numbers block consolidated view Anomaly nightmare – complex matching and consolidation

Legacy Data Contaminants Found in Migrations n n n Lack of standards Data surprises in individual fields Legacy information buried in free form fields Legacy myopia – multiple account numbers block consolidated view Anomaly nightmare – complex matching and consolidation

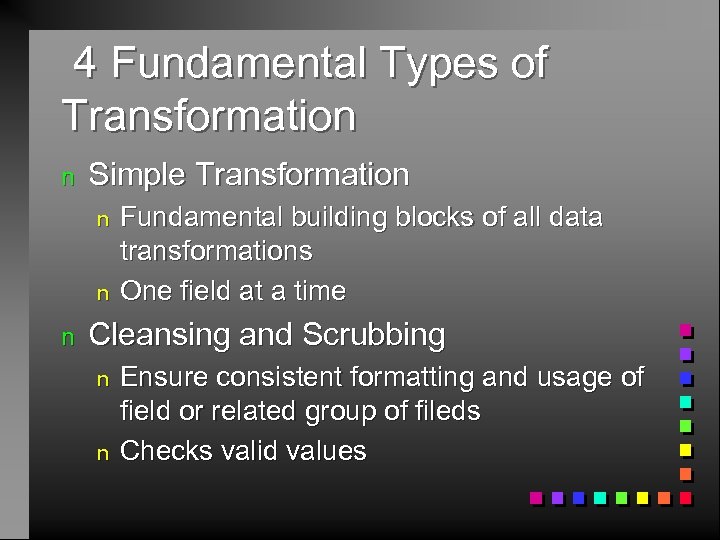

4 Fundamental Types of Transformation n Simple Transformation n Fundamental building blocks of all data transformations One field at a time Cleansing and Scrubbing n n Ensure consistent formatting and usage of field or related group of fileds Checks valid values

4 Fundamental Types of Transformation n Simple Transformation n Fundamental building blocks of all data transformations One field at a time Cleansing and Scrubbing n n Ensure consistent formatting and usage of field or related group of fileds Checks valid values

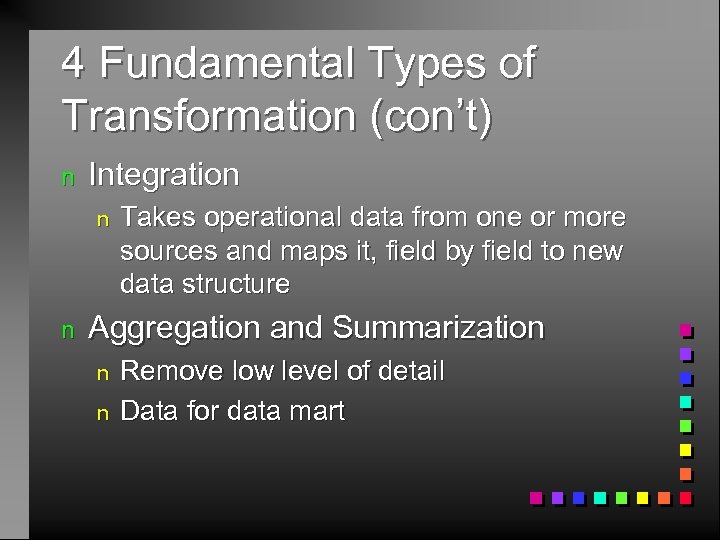

4 Fundamental Types of Transformation (con’t) n Integration n n Takes operational data from one or more sources and maps it, field by field to new data structure Aggregation and Summarization n n Remove low level of detail Data for data mart

4 Fundamental Types of Transformation (con’t) n Integration n n Takes operational data from one or more sources and maps it, field by field to new data structure Aggregation and Summarization n n Remove low level of detail Data for data mart

Simple Transformation n Convert data element from one type to another n n n Date time conversion n n semantic value same rename elements standard warehouse format Decode encoded fields n M F vs C S MM

Simple Transformation n Convert data element from one type to another n n n Date time conversion n n semantic value same rename elements standard warehouse format Decode encoded fields n M F vs C S MM

Cleansing and Scrubbing n Actual content examined n n Range checking, enumerated lists, dependency checking Uniform representation for dw n address information n parse to components

Cleansing and Scrubbing n Actual content examined n n Range checking, enumerated lists, dependency checking Uniform representation for dw n address information n parse to components

Integration n n Simple field level mappings -80 -90% Complex integration n No common identifier n n n Multiple sources for same target element n n n probable matches 2 -stage process, isolation/reconciliation contradictory Missing data Derived/calculated data n redundant?

Integration n n Simple field level mappings -80 -90% Complex integration n No common identifier n n n Multiple sources for same target element n n n probable matches 2 -stage process, isolation/reconciliation contradictory Missing data Derived/calculated data n redundant?

Aggregation and Summarization n Summarization is the addition of like values along one or more business dimensions n n Aggregation is the addition of different business elements into common total n n add daily sales by stores for monthly sales by region daily product sales plus monthly consulting sales give monthly combined sales amount Details of process available in metadata

Aggregation and Summarization n Summarization is the addition of like values along one or more business dimensions n n Aggregation is the addition of different business elements into common total n n add daily sales by stores for monthly sales by region daily product sales plus monthly consulting sales give monthly combined sales amount Details of process available in metadata

Data Re-engineering Problem n Programming for the unknown n n Programming for noise and uncertainty n n Unanticipated values, structures and patterns Conflicting and missing values Programming for productivity and efficiency Changing data values, changing user requirements n High volumes, non-linear searches Conventional data transformation methods do not solve the metadata and data value challenges – need data re-engineering Stephen Brown, Vality Corp. n

Data Re-engineering Problem n Programming for the unknown n n Programming for noise and uncertainty n n Unanticipated values, structures and patterns Conflicting and missing values Programming for productivity and efficiency Changing data values, changing user requirements n High volumes, non-linear searches Conventional data transformation methods do not solve the metadata and data value challenges – need data re-engineering Stephen Brown, Vality Corp. n

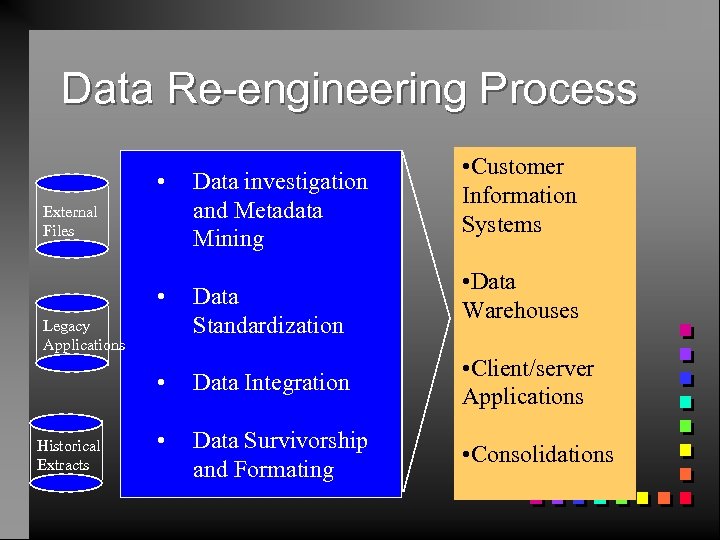

Data Re-engineering Process • Data investigation and Metadata Mining • Data Standardization External Files Legacy Applications • Customer Information Systems • Data Warehouses • Historical Extracts Data Integration • Client/server Applications • Data Survivorship and Formating • Consolidations

Data Re-engineering Process • Data investigation and Metadata Mining • Data Standardization External Files Legacy Applications • Customer Information Systems • Data Warehouses • Historical Extracts Data Integration • Client/server Applications • Data Survivorship and Formating • Consolidations

Natural Laws of Data Re-engineering n n n n n Data has no standard You can’t predict or legislate format or content Data will evolve faster than its capture and storage systems You can’t write rules for what you don’t know and can’t see Instructions for handling data are within the data Don’t trust the metadata, make the data reveal itself Revealed metadata is knowledge about the business Revealed metadata validates warehouse design Revealed metadata supports conversion project management Revealed metadata is insurance against misinformation

Natural Laws of Data Re-engineering n n n n n Data has no standard You can’t predict or legislate format or content Data will evolve faster than its capture and storage systems You can’t write rules for what you don’t know and can’t see Instructions for handling data are within the data Don’t trust the metadata, make the data reveal itself Revealed metadata is knowledge about the business Revealed metadata validates warehouse design Revealed metadata supports conversion project management Revealed metadata is insurance against misinformation

Buy tool or manually code programs ?

Buy tool or manually code programs ?

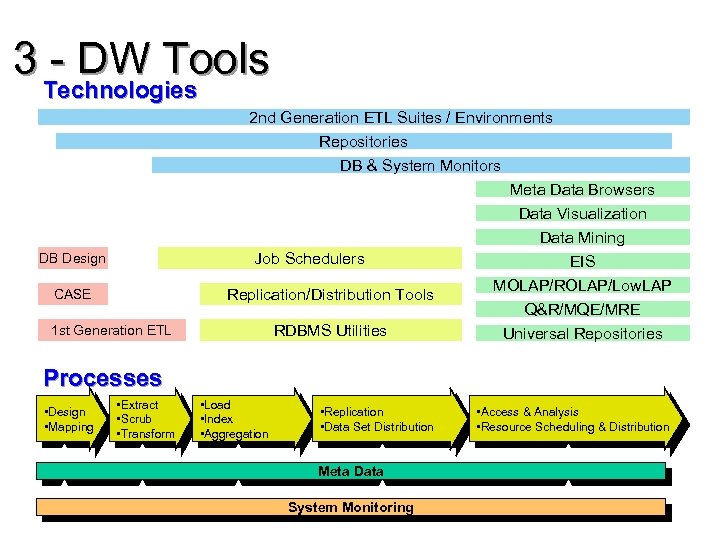

3 - DW Tools Technologies 2 nd Generation ETL Suites / Environments Repositories DB & System Monitors Job Schedulers DB Design Replication/Distribution Tools CASE RDBMS Utilities 1 st Generation ETL Meta Data Browsers Data Visualization Data Mining EIS MOLAP/ROLAP/Low. LAP Q&R/MQE/MRE Universal Repositories Processes • Design • Mapping • Extract • Scrub • Transform • Load • Index • Aggregation • Replication • Data Set Distribution Meta Data System Monitoring • Access & Analysis • Resource Scheduling & Distribution

3 - DW Tools Technologies 2 nd Generation ETL Suites / Environments Repositories DB & System Monitors Job Schedulers DB Design Replication/Distribution Tools CASE RDBMS Utilities 1 st Generation ETL Meta Data Browsers Data Visualization Data Mining EIS MOLAP/ROLAP/Low. LAP Q&R/MQE/MRE Universal Repositories Processes • Design • Mapping • Extract • Scrub • Transform • Load • Index • Aggregation • Replication • Data Set Distribution Meta Data System Monitoring • Access & Analysis • Resource Scheduling & Distribution

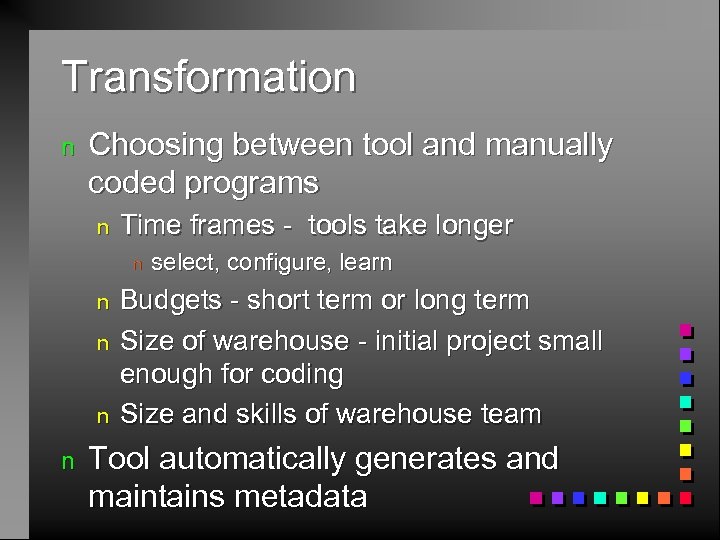

Transformation n Choosing between tool and manually coded programs n Time frames - tools take longer n n n select, configure, learn Budgets - short term or long term Size of warehouse - initial project small enough for coding Size and skills of warehouse team Tool automatically generates and maintains metadata

Transformation n Choosing between tool and manually coded programs n Time frames - tools take longer n n n select, configure, learn Budgets - short term or long term Size of warehouse - initial project small enough for coding Size and skills of warehouse team Tool automatically generates and maintains metadata

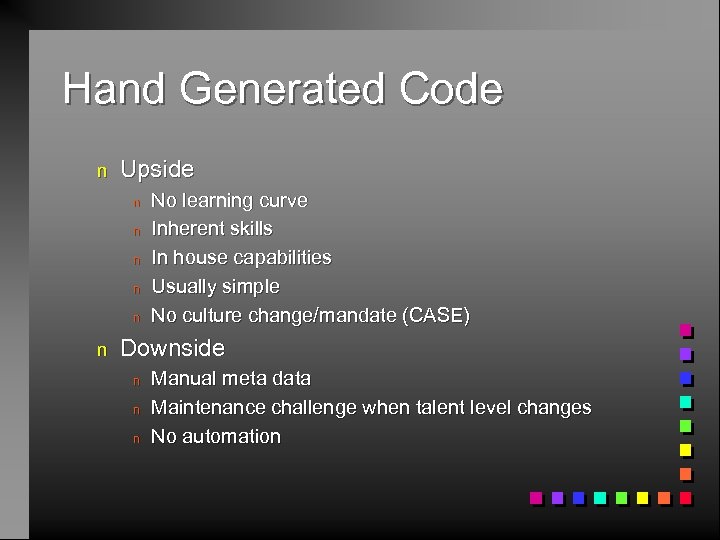

Hand Generated Code n Upside n n n No learning curve Inherent skills In house capabilities Usually simple No culture change/mandate (CASE) Downside n n n Manual meta data Maintenance challenge when talent level changes No automation

Hand Generated Code n Upside n n n No learning curve Inherent skills In house capabilities Usually simple No culture change/mandate (CASE) Downside n n n Manual meta data Maintenance challenge when talent level changes No automation

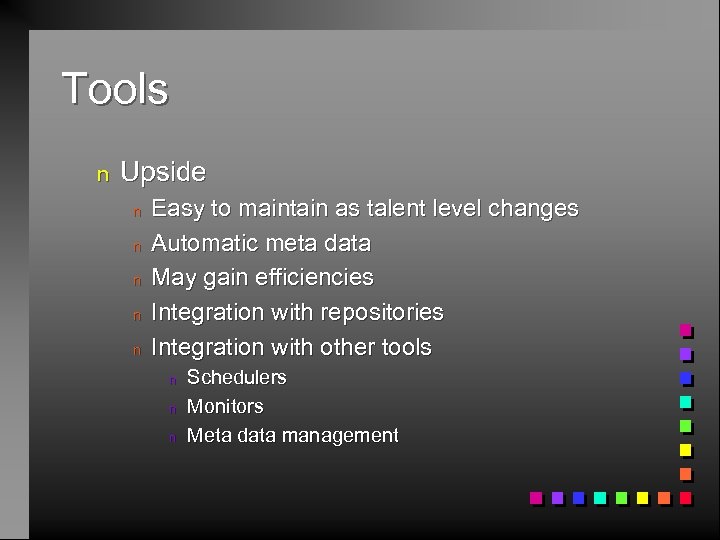

Tools n Upside n n n Easy to maintain as talent level changes Automatic meta data May gain efficiencies Integration with repositories Integration with other tools n n n Schedulers Monitors Meta data management

Tools n Upside n n n Easy to maintain as talent level changes Automatic meta data May gain efficiencies Integration with repositories Integration with other tools n n n Schedulers Monitors Meta data management

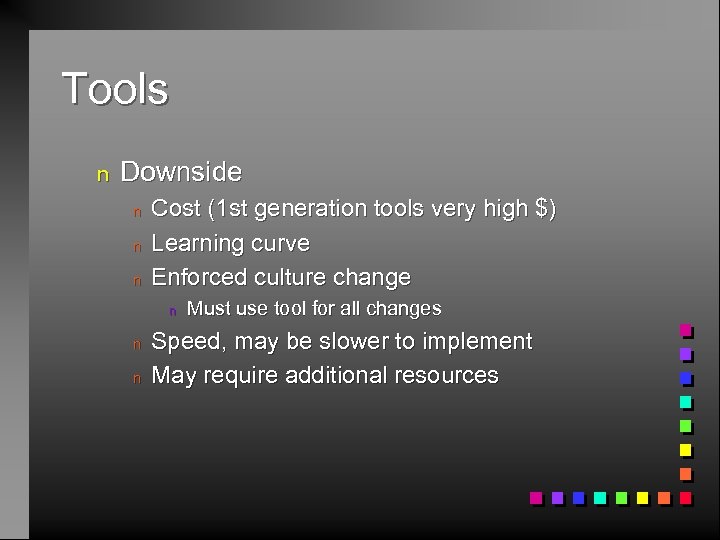

Tools n Downside n n n Cost (1 st generation tools very high $) Learning curve Enforced culture change n n n Must use tool for all changes Speed, may be slower to implement May require additional resources

Tools n Downside n n n Cost (1 st generation tools very high $) Learning curve Enforced culture change n n n Must use tool for all changes Speed, may be slower to implement May require additional resources

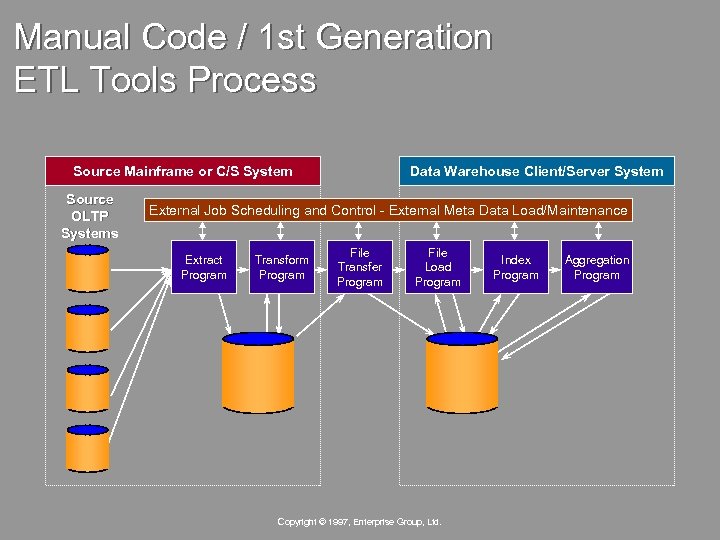

Manual Code / 1 st Generation ETL Tools Process Source Mainframe or C/S System Source OLTP Systems Data Warehouse Client/Server System External Job Scheduling and Control - External Meta Data Load/Maintenance Extract Program Transform Program File Transfer Program File Load Program Copyright © 1997, Enterprise Group, Ltd. Index Program Aggregation Program

Manual Code / 1 st Generation ETL Tools Process Source Mainframe or C/S System Source OLTP Systems Data Warehouse Client/Server System External Job Scheduling and Control - External Meta Data Load/Maintenance Extract Program Transform Program File Transfer Program File Load Program Copyright © 1997, Enterprise Group, Ltd. Index Program Aggregation Program

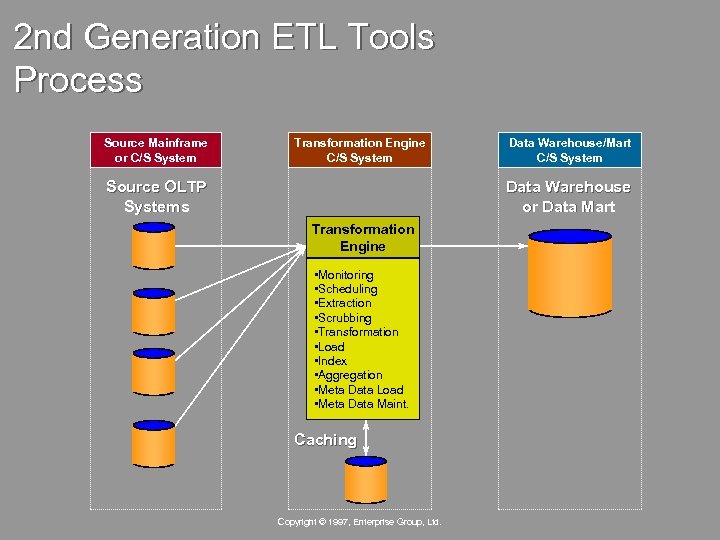

2 nd Generation ETL Tools Process Source Mainframe or C/S System Transformation Engine C/S System Source OLTP Systems Data Warehouse/Mart C/S System Data Warehouse or Data Mart Transformation Engine • Monitoring • Scheduling • Extraction • Scrubbing • Transformation • Load • Index • Aggregation • Meta Data Load • Meta Data Maint. Caching Copyright © 1997, Enterprise Group, Ltd.

2 nd Generation ETL Tools Process Source Mainframe or C/S System Transformation Engine C/S System Source OLTP Systems Data Warehouse/Mart C/S System Data Warehouse or Data Mart Transformation Engine • Monitoring • Scheduling • Extraction • Scrubbing • Transformation • Load • Index • Aggregation • Meta Data Load • Meta Data Maint. Caching Copyright © 1997, Enterprise Group, Ltd.

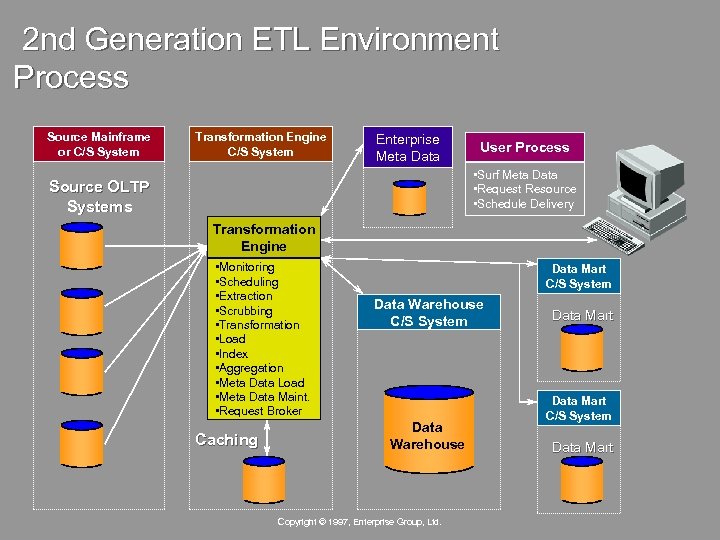

2 nd Generation ETL Environment Process Source Mainframe or C/S System Transformation Engine C/S System Enterprise Meta Data User Process • Surf Meta Data • Request Resource • Schedule Delivery Source OLTP Systems Transformation Engine • Monitoring • Scheduling • Extraction • Scrubbing • Transformation • Load • Index • Aggregation • Meta Data Load • Meta Data Maint. • Request Broker Caching Data Mart C/S System Data Warehouse Copyright © 1997, Enterprise Group, Ltd. Data Mart C/S System Data Mart

2 nd Generation ETL Environment Process Source Mainframe or C/S System Transformation Engine C/S System Enterprise Meta Data User Process • Surf Meta Data • Request Resource • Schedule Delivery Source OLTP Systems Transformation Engine • Monitoring • Scheduling • Extraction • Scrubbing • Transformation • Load • Index • Aggregation • Meta Data Load • Meta Data Maint. • Request Broker Caching Data Mart C/S System Data Warehouse Copyright © 1997, Enterprise Group, Ltd. Data Mart C/S System Data Mart

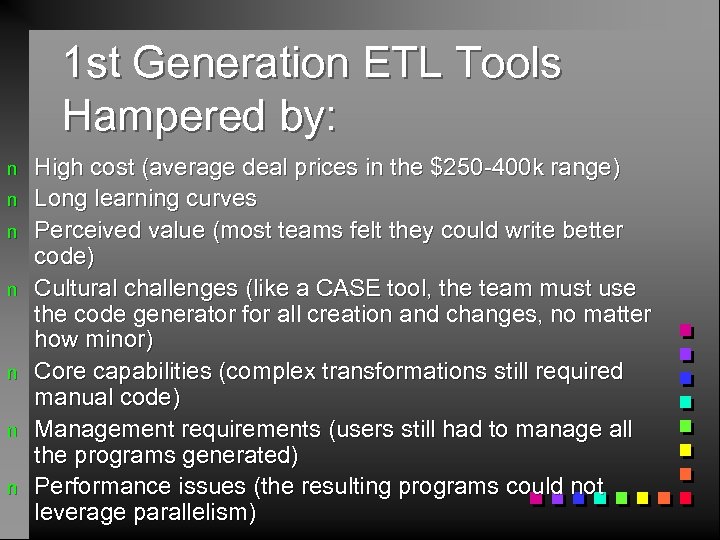

1 st Generation ETL Tools Hampered by: n n n n High cost (average deal prices in the $250 -400 k range) Long learning curves Perceived value (most teams felt they could write better code) Cultural challenges (like a CASE tool, the team must use the code generator for all creation and changes, no matter how minor) Core capabilities (complex transformations still required manual code) Management requirements (users still had to manage all the programs generated) Performance issues (the resulting programs could not leverage parallelism)

1 st Generation ETL Tools Hampered by: n n n n High cost (average deal prices in the $250 -400 k range) Long learning curves Perceived value (most teams felt they could write better code) Cultural challenges (like a CASE tool, the team must use the code generator for all creation and changes, no matter how minor) Core capabilities (complex transformations still required manual code) Management requirements (users still had to manage all the programs generated) Performance issues (the resulting programs could not leverage parallelism)

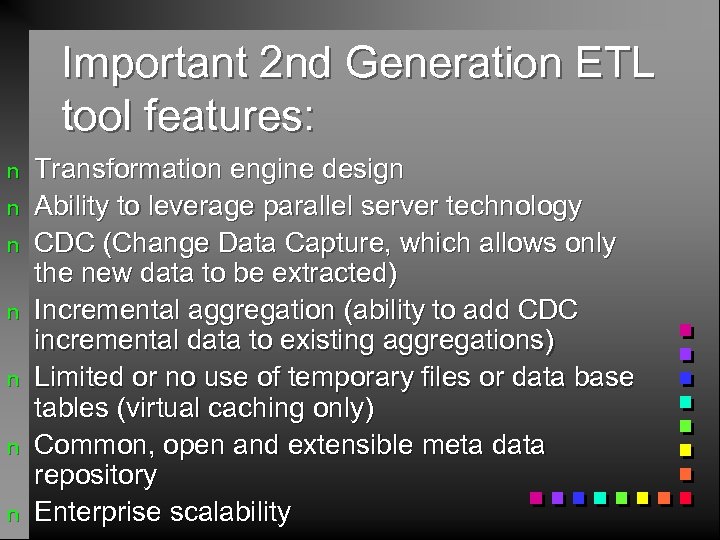

Important 2 nd Generation ETL tool features: n n n n Transformation engine design Ability to leverage parallel server technology CDC (Change Data Capture, which allows only the new data to be extracted) Incremental aggregation (ability to add CDC incremental data to existing aggregations) Limited or no use of temporary files or data base tables (virtual caching only) Common, open and extensible meta data repository Enterprise scalability

Important 2 nd Generation ETL tool features: n n n n Transformation engine design Ability to leverage parallel server technology CDC (Change Data Capture, which allows only the new data to be extracted) Incremental aggregation (ability to add CDC incremental data to existing aggregations) Limited or no use of temporary files or data base tables (virtual caching only) Common, open and extensible meta data repository Enterprise scalability

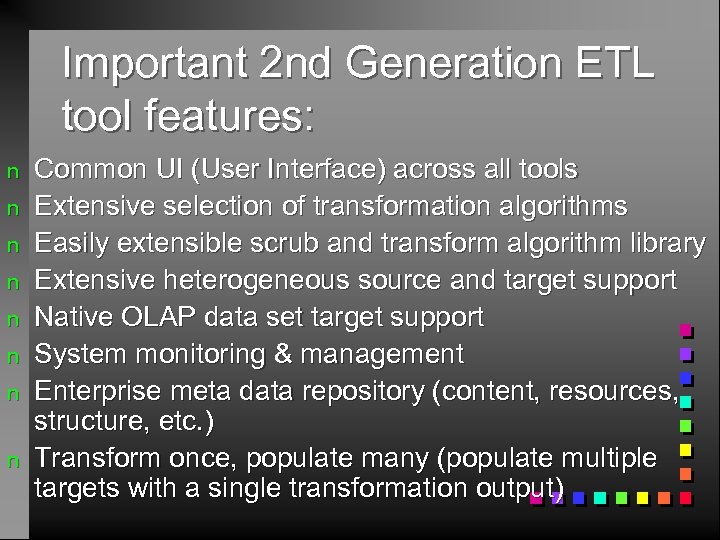

Important 2 nd Generation ETL tool features: n n n n Common UI (User Interface) across all tools Extensive selection of transformation algorithms Easily extensible scrub and transform algorithm library Extensive heterogeneous source and target support Native OLAP data set target support System monitoring & management Enterprise meta data repository (content, resources, structure, etc. ) Transform once, populate many (populate multiple targets with a single transformation output)

Important 2 nd Generation ETL tool features: n n n n Common UI (User Interface) across all tools Extensive selection of transformation algorithms Easily extensible scrub and transform algorithm library Extensive heterogeneous source and target support Native OLAP data set target support System monitoring & management Enterprise meta data repository (content, resources, structure, etc. ) Transform once, populate many (populate multiple targets with a single transformation output)

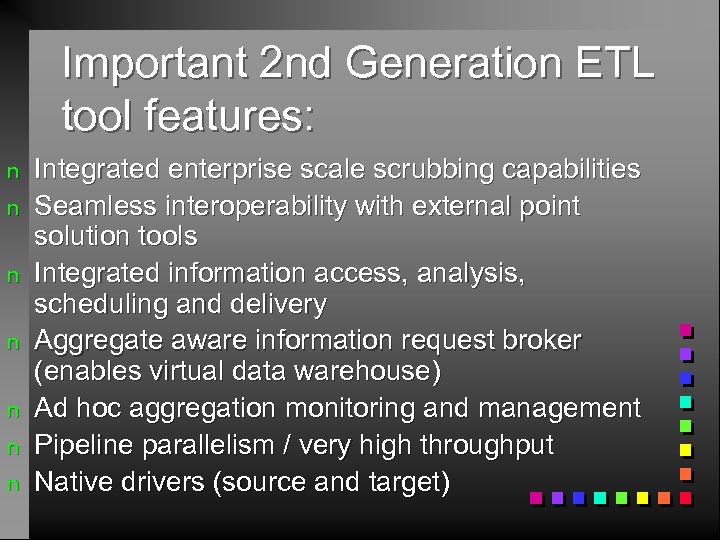

Important 2 nd Generation ETL tool features: n n n n Integrated enterprise scale scrubbing capabilities Seamless interoperability with external point solution tools Integrated information access, analysis, scheduling and delivery Aggregate aware information request broker (enables virtual data warehouse) Ad hoc aggregation monitoring and management Pipeline parallelism / very high throughput Native drivers (source and target)

Important 2 nd Generation ETL tool features: n n n n Integrated enterprise scale scrubbing capabilities Seamless interoperability with external point solution tools Integrated information access, analysis, scheduling and delivery Aggregate aware information request broker (enables virtual data warehouse) Ad hoc aggregation monitoring and management Pipeline parallelism / very high throughput Native drivers (source and target)

OLTP <> OLAP n OLTP n n normalized OLAP n n tools must provide multidimensional conceptual view of data ? ? ? Providing OLAP to User Analysts, E. F. Codd redundant data

OLTP <> OLAP n OLTP n n normalized OLAP n n tools must provide multidimensional conceptual view of data ? ? ? Providing OLAP to User Analysts, E. F. Codd redundant data

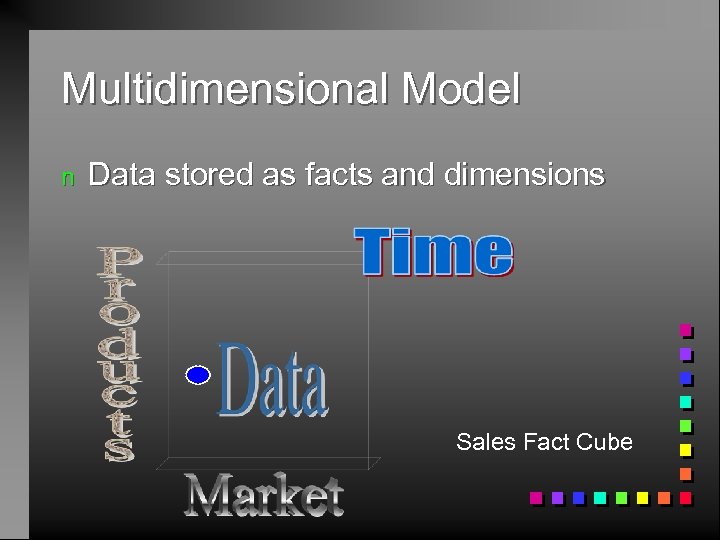

Multidimensional Model n Data stored as facts and dimensions Sales Fact Cube

Multidimensional Model n Data stored as facts and dimensions Sales Fact Cube