491c3e84204ed78cade15e8ae51a7419.ppt

- Number of slides: 97

CS 60057 Speech & Natural Language Processing, Autumn 2007
Lecture 16, 5 September 2007

Lecture 1, 7/21/2005 Natural Language Processing 1

A Brief History
- Machine translation was one of the first applications envisioned for computers.
- Warren Weaver (1949): "I have a text in front of me which is written in Russian but I am going to pretend that it is really written in English and that it has been coded in some strange symbols. All I need to do is strip off the code in order to retrieve the information contained in the text."
- First demonstrated by IBM in 1954 with a basic word-for-word translation system.

Rule-Based vs. Statistical MT
- Rule-based MT:
  - Hand-written transfer rules
  - Rules can be based on lexical or structural transfer
  - Pro: firm grip on complex translation phenomena
  - Con: often very labor-intensive, and the resulting rule sets tend to lack robustness
- Statistical MT:
  - Mainly word- or phrase-based translations
  - Translations are learned from actual data
  - Pro: translations are learned automatically
  - Con: difficult to model complex translation phenomena

Parallel Resources
- Newswire: DE-News (German-English), Hong Kong News, Xinhua News (Chinese-English)
- Government: Canadian Hansards (French-English), Europarl (Danish, Dutch, English, Finnish, French, German, Greek, Italian, Portuguese, Spanish, Swedish), UN Treaties (Russian, English, Arabic, ...)
- Manuals: PHP, KDE, OpenOffice (all from OPUS, many languages)
- Web pages: STRAND project (Philip Resnik)

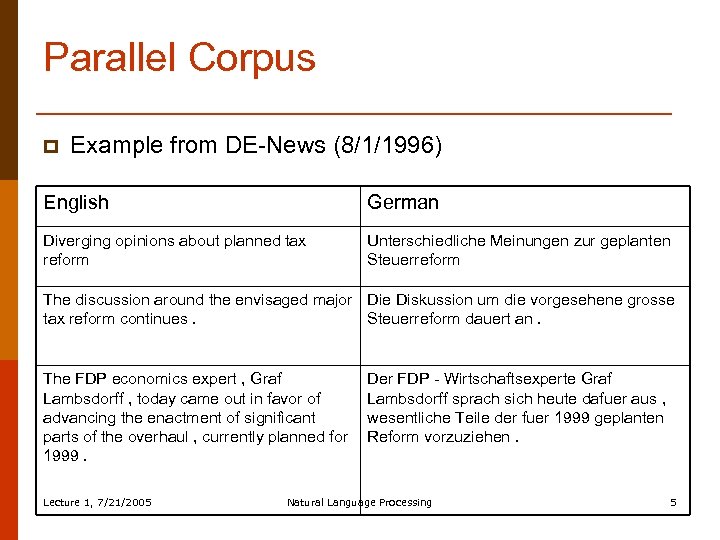

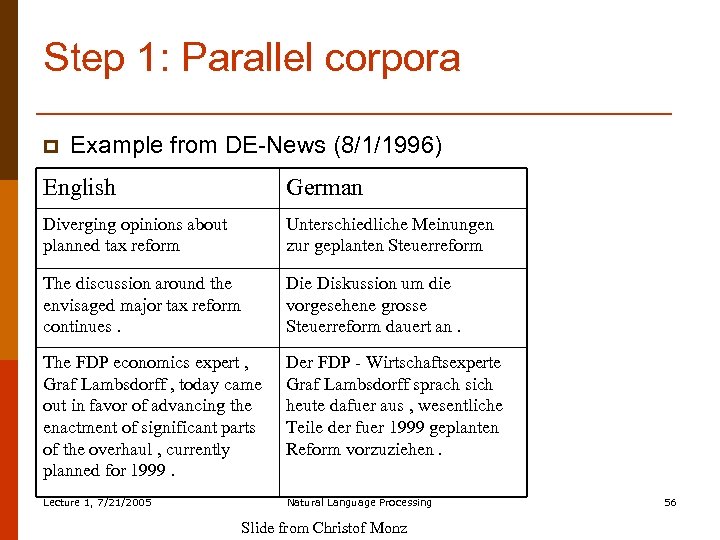

Parallel Corpus
Example from DE-News (8/1/1996):

| English | German |
|---|---|
| Diverging opinions about planned tax reform | Unterschiedliche Meinungen zur geplanten Steuerreform |
| The discussion around the envisaged major tax reform continues. | Die Diskussion um die vorgesehene grosse Steuerreform dauert an. |
| The FDP economics expert, Graf Lambsdorff, today came out in favor of advancing the enactment of significant parts of the overhaul, currently planned for 1999. | Der FDP-Wirtschaftsexperte Graf Lambsdorff sprach sich heute dafuer aus, wesentliche Teile der fuer 1999 geplanten Reform vorzuziehen. |

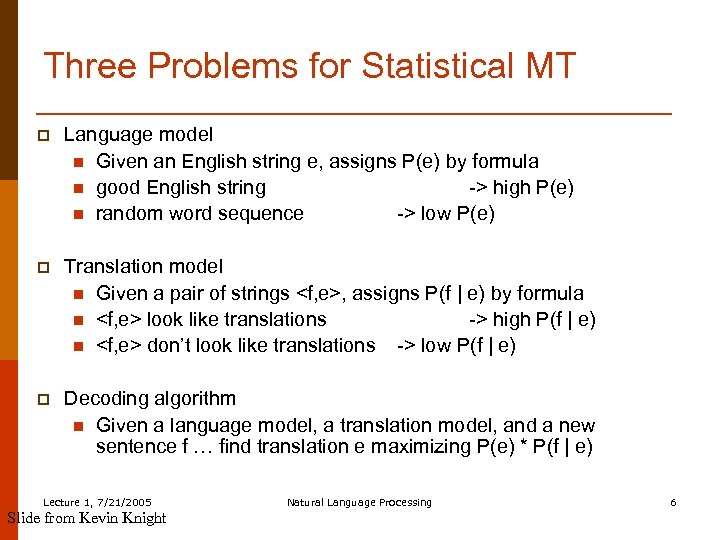

Three Problems for Statistical MT
- Language model
  - Given an English string e, assigns P(e) by formula
  - good English string -> high P(e)
  - random word sequence -> low P(e)
- Translation model
  - Given a pair of strings

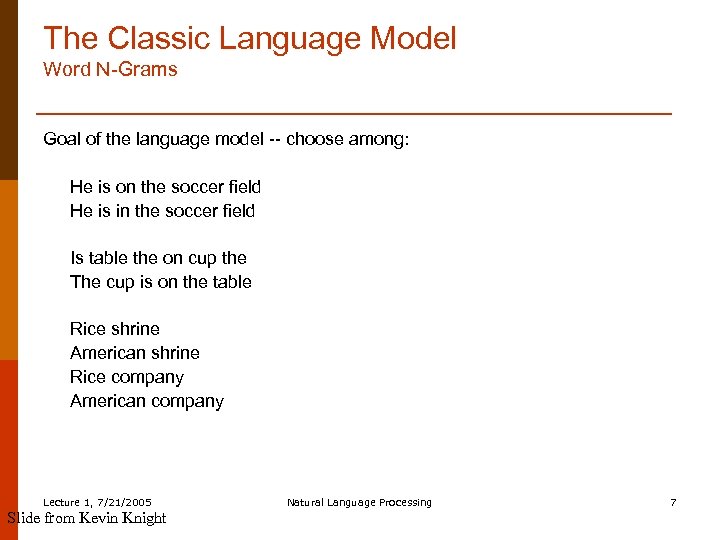

The Classic Language Model: Word N-Grams
Goal of the language model: choose among
- He is on the soccer field / He is in the soccer field
- Is table the on cup the / The cup is on the table
- Rice shrine / American shrine / Rice company / American company

(Slide from Kevin Knight)

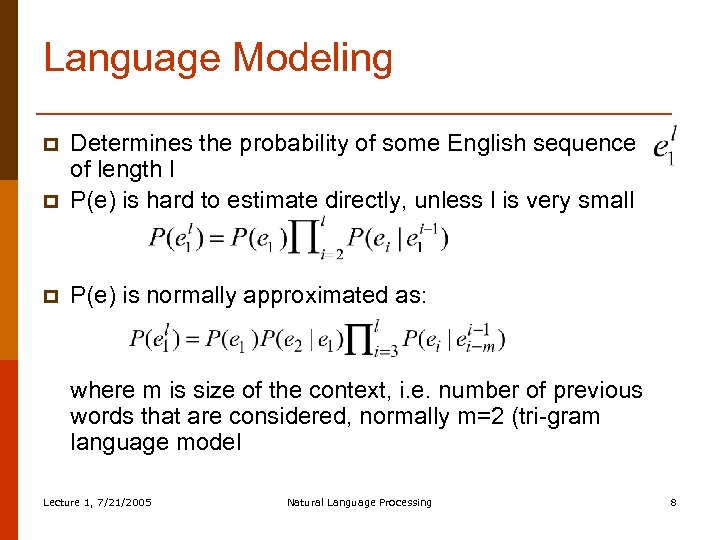

Language Modeling
- Determines the probability of some English sequence e = e_1 ... e_l of length l.
- P(e) is hard to estimate directly, unless l is very small.
- P(e) is normally approximated as:

$$P(e) \approx \prod_{i=1}^{l} P(e_i \mid e_{i-m}, \ldots, e_{i-1})$$

where m is the size of the context, i.e., the number of previous words that are considered; normally m = 2 (trigram language model).
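
The approximation above can be made concrete with a minimal, unsmoothed trigram model (m = 2) estimated by relative frequencies. This is a sketch under stated assumptions: the `<s>`/`</s>` padding tokens, the function names, and the three-sentence training corpus are all hypothetical, chosen to echo the word-order example from the previous slide.

```python
from collections import Counter

BOS, EOS = "<s>", "</s>"  # hypothetical sentence-boundary padding tokens


def train_trigram(sentences):
    """Count trigrams and their two-word histories (context size m = 2)."""
    trigrams, histories = Counter(), Counter()
    for words in sentences:
        padded = [BOS, BOS] + words + [EOS]
        for i in range(2, len(padded)):
            trigrams[tuple(padded[i - 2:i + 1])] += 1
            histories[tuple(padded[i - 2:i])] += 1
    return trigrams, histories


def sentence_prob(words, trigrams, histories):
    """P(e) ~ prod_i P(e_i | e_{i-2}, e_{i-1}) with unsmoothed MLE estimates."""
    padded = [BOS, BOS] + words + [EOS]
    p = 1.0
    for i in range(2, len(padded)):
        history = tuple(padded[i - 2:i])
        if histories[history] == 0:
            return 0.0  # unseen history: no MLE estimate, treated as zero here
        p *= trigrams[tuple(padded[i - 2:i + 1])] / histories[history]
    return p


corpus = [s.split() for s in ["the cup is on the table",
                              "he is on the soccer field",
                              "he is in the house"]]
tri, hist = train_trigram(corpus)
print(sentence_prob("the cup is on the table".split(), tri, hist))  # 1/3 * 1/2, about 0.1667
print(sentence_prob("is table the on cup the".split(), tri, hist))  # 0.0
```

The scrambled word order gets probability zero, while the fluent order is preferred. A practical model would add smoothing so that unseen but grammatical trigrams do not receive zero probability.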

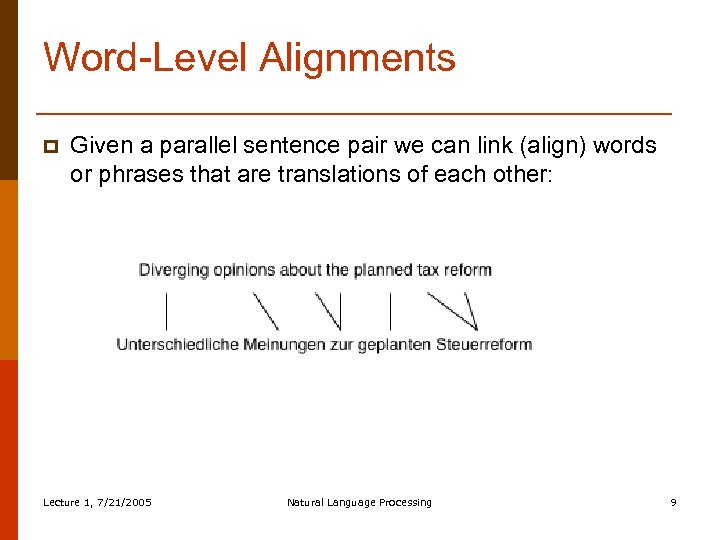

Word-Level Alignments
- Given a parallel sentence pair, we can link (align) words or phrases that are translations of each other:

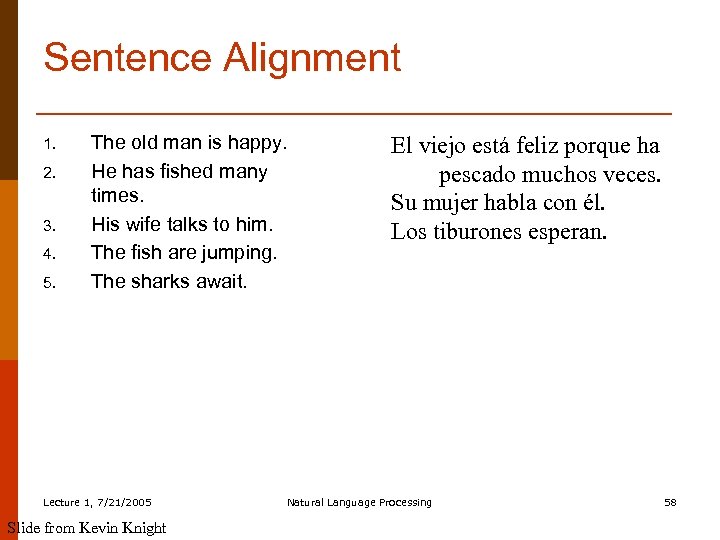

Sentence Alignment
- If document D_e is a translation of document D_f, how do we find the translation of each sentence?
- The n-th sentence in D_e is not necessarily the translation of the n-th sentence in D_f.
- In addition to 1:1 alignments, there are also 1:0, 0:1, 1:n, and n:1 alignments.
- Approximately 90% of the sentence alignments are 1:1.

Sentence Alignment (cont'd)
- There are several sentence alignment algorithms:
  - Align (Gale & Church): aligns sentences based on their character length (shorter sentences tend to have shorter translations than longer sentences). Works astonishingly well.
  - Char-align (Church): aligns based on shared character sequences. Works fine for similar languages or technical domains.
  - K-Vec (Fung & Church): induces a translation lexicon from the parallel texts based on the distribution of foreign-English word pairs.
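
The length-based idea behind Align can be sketched as a dynamic program over "beads" (1:1, 1:0, 0:1, 2:1, 1:2) that minimizes a cost combining a length-mismatch penalty with a prior for each bead type. This is a simplified sketch, not Gale & Church's published program: it assumes sentence lengths in characters as input, omits 2:2 beads, and takes c = 1, s² = 6.8 and the bead priors from values commonly quoted for the original paper; treat all of these numbers, and the function names, as assumptions.

```python
import math

C = 1.0    # assumed: expected foreign characters per English character
S2 = 6.8   # assumed: variance of that ratio, per character
# Assumed bead priors (2:2 omitted, so they need not sum to 1)
PRIORS = {(1, 1): 0.89, (1, 0): 0.0099, (0, 1): 0.0099,
          (2, 1): 0.089, (1, 2): 0.089}


def length_cost(len_e, len_f):
    """-log of the two-tailed probability of the observed length mismatch."""
    mean = (len_e + len_f / C) / 2.0  # averaged length, avoids sqrt(0) for 0:1 beads
    if mean == 0:
        return 0.0
    delta = (len_f - len_e * C) / math.sqrt(mean * S2)
    tail = 1.0 - 0.5 * (1.0 + math.erf(abs(delta) / math.sqrt(2.0)))
    return -math.log(max(2.0 * tail, 1e-300))


def align_sentences(e_lens, f_lens):
    """Return a list of beads (english_indices, foreign_indices) of minimal cost."""
    n, m = len(e_lens), len(f_lens)
    inf = float("inf")
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    back = [[None] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(n + 1):
        for j in range(m + 1):
            for (di, dj), prior in PRIORS.items():
                if di > i or dj > j or cost[i - di][j - dj] == inf:
                    continue
                c = (cost[i - di][j - dj]
                     + length_cost(sum(e_lens[i - di:i]), sum(f_lens[j - dj:j]))
                     - math.log(prior))
                if c < cost[i][j]:
                    cost[i][j], back[i][j] = c, (di, dj)
    # Follow back-pointers from the end of both documents to the start
    beads, i, j = [], n, m
    while i > 0 or j > 0:
        di, dj = back[i][j]
        beads.append((list(range(i - di, i)), list(range(j - dj, j))))
        i, j = i - di, j - dj
    return beads[::-1]


print(align_sentences([10, 12, 40], [23, 41]))
```

With these hypothetical lengths, the two short English sentences (10 + 12 characters) are merged into a 2:1 bead against the 23-character foreign sentence, and the remaining pair is aligned 1:1.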

Computing Translation Probabilities
- Given a parallel corpus, we can estimate P(e | f).
- The maximum likelihood estimate of P(e | f) is freq(e, f) / freq(f).
  - Way too specific to get any reasonable frequencies: the vast majority of unseen data will have zero counts!
- P(e | f) could be re-defined as:
- Problem: the English words maximizing P(e | f) might not result in a readable sentence.
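
The sparsity problem with the sentence-level estimate freq(e, f) / freq(f) is easy to see on a toy corpus. The sketch below assumes whole sentences as the units being counted; the function name and the two-pair corpus are hypothetical.

```python
from collections import Counter


def sentence_mle(pairs):
    """MLE P(e | f) = freq(e, f) / freq(f), where e and f are whole sentences."""
    joint = Counter(pairs)
    marginal = Counter(f for _, f in pairs)

    def prob(e, f):
        # A sentence f never seen in training has freq(f) = 0: no estimate at all
        return joint[(e, f)] / marginal[f] if marginal[f] else 0.0

    return prob


p = sentence_mle([("the dog", "le chien"), ("the cat", "le chat")])
print(p("the dog", "le chien"))              # 1.0
print(p("the small dog", "le petit chien"))  # 0.0
```

Any new input sentence that did not occur verbatim in training receives no probability mass, which is why the models below decompose sentences into words.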

Computing Translation Probabilities (cont'd)
- We can account for adequacy: each foreign word translates into its most likely English word.
- We cannot guarantee that this will result in a fluent English sentence.
- Solution: transform P(e | f) with Bayes' rule:

$$P(e \mid f) = \frac{P(e)\, P(f \mid e)}{P(f)}$$

- P(f | e) accounts for adequacy.
- P(e) accounts for fluency.

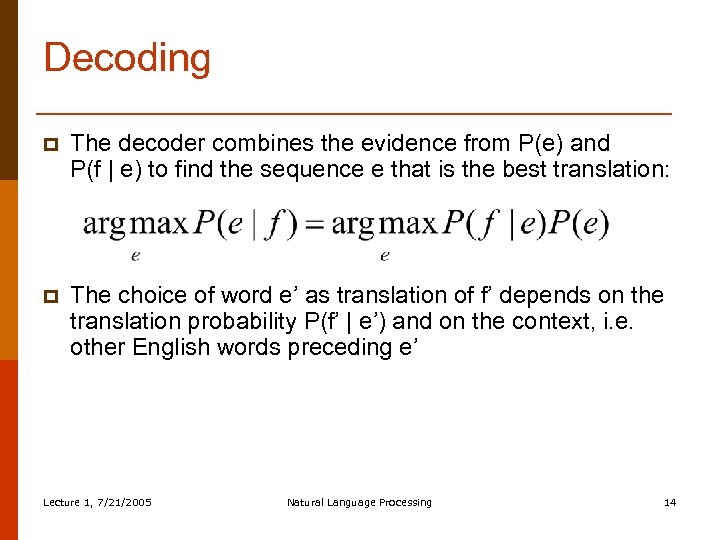

Decoding
- The decoder combines the evidence from P(e) and P(f | e) to find the sequence e that is the best translation (P(f) is constant for a given input f, so it can be dropped):

$$\hat{e} = \arg\max_{e} P(e \mid f) = \arg\max_{e} P(e)\, P(f \mid e)$$

- The choice of word e' as translation of f' depends on the translation probability P(f' | e') and on the context, i.e., the other English words preceding e'.
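
The argmax can be illustrated by scoring an explicit list of candidate translations. This is only a sketch: a real decoder searches an enormous space of English strings rather than a given list, and the French input, the candidates, and both probability tables here are hypothetical, chosen so that the translation model cannot tell the two word orders apart and the language model decides.

```python
import math


def log_prob(p):
    """Natural log, with log(0) mapped to -infinity."""
    return math.log(p) if p > 0 else float("-inf")


def decode(f, candidates, lm, tm):
    """Return argmax_e P(e) * P(f | e) over a finite list of candidate translations.

    lm maps e -> P(e); tm maps (f, e) -> P(f | e). Scores are added in log space.
    """
    return max(candidates,
               key=lambda e: log_prob(lm.get(e, 0.0)) + log_prob(tm.get((f, e), 0.0)))


f = "la tasse est sur la table"
candidates = ["is table the on cup the", "the cup is on the table"]
lm = {"the cup is on the table": 1e-4, "is table the on cup the": 1e-9}          # hypothetical
tm = {(f, "the cup is on the table"): 0.2, (f, "is table the on cup the"): 0.2}  # hypothetical
print(decode(f, candidates, lm, tm))  # the cup is on the table
```

Working in log space avoids numerical underflow when many small probabilities are multiplied.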

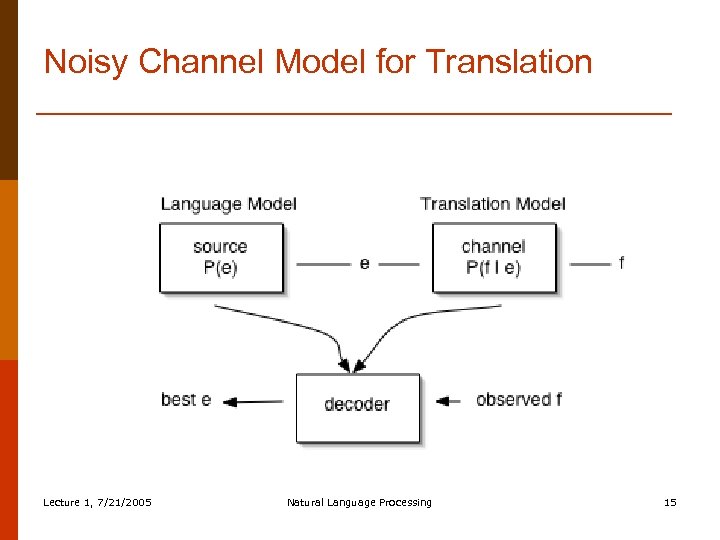

Noisy Channel Model for Translation

Translation Modeling
- Determines the probability that the foreign word f is a translation of the English word e.
- How do we compute P(f | e) from a parallel corpus?
- Statistical approaches rely on the co-occurrence of e and f in the parallel data: if e and f tend to co-occur in parallel sentence pairs, they are likely to be translations of one another.
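
One simple way to quantify "tend to co-occur" is the Dice coefficient over sentence pairs. Note that this is a swapped-in association heuristic, not the IBM translation model discussed below; the corpus and function name are hypothetical.

```python
from collections import Counter
from itertools import product


def dice_scores(corpus):
    """Dice coefficient 2 * C(e, f) / (C(e) + C(f)), counting sentence pairs."""
    c_e, c_f, c_ef = Counter(), Counter(), Counter()
    for english, foreign in corpus:
        es, fs = set(english), set(foreign)  # count each word once per sentence pair
        c_e.update(es)
        c_f.update(fs)
        c_ef.update(product(es, fs))
    return {(e, f): 2 * n / (c_e[e] + c_f[f]) for (e, f), n in c_ef.items()}


corpus = [("the dog".split(), "le chien".split()),
          ("the cat".split(), "le chat".split()),
          ("a dog".split(), "un chien".split())]
scores = dice_scores(corpus)
print(scores[("dog", "chien")], scores[("dog", "le")])  # 1.0 0.5
```

Words that always appear together score 1.0, while accidental co-occurrences score lower; unlike EM-based models, this heuristic does not account for competing explanations within a sentence.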

Finding Translations in a Parallel Corpus
- Into which foreign words f, ..., f' does e translate?
- Commonly, four factors are used:
  - How often do e and f co-occur? (translation)
  - How likely is a word occurring at position i to translate into a word occurring at position j? (distortion) For example, English is an SVO language, whereas German is verb-second in main clauses and verb-final in subordinate clauses.
  - How likely is e to translate into more than one word? (fertility) For example, "defeated" can translate into "eine Niederlage erleiden".
  - How likely is a foreign word to be spuriously generated? (null translation)
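
Given one word alignment, the fertility, distortion, and null-translation factors can be read off directly. The sketch assumes the alignment is stored as a vector with one English position per foreign word (0 meaning NULL); the sentence pair and its alignment are hypothetical illustrations, not taken from a corpus.

```python
def alignment_stats(l, a):
    """Fertility and distortion statistics of one alignment.

    a[j - 1] is the English position (1..l) that generates foreign word j,
    or 0 if the word is generated by NULL.
    """
    fertility = [0] * (l + 1)  # fertility[0] = number of NULL-generated words
    distortion = []            # offsets j - i for every non-NULL link
    for j, i in enumerate(a, start=1):
        fertility[i] += 1
        if i > 0:
            distortion.append(j - i)
    return fertility, distortion


english = ["they", "were", "defeated"]
foreign = ["sie", "haben", "eine", "Niederlage", "erlitten"]
a = [1, 0, 3, 3, 3]  # hypothetical: "haben" from NULL, "defeated" -> three words
assert len(a) == len(foreign)
fertility, distortion = alignment_stats(len(english), a)
print(dict(zip(["NULL"] + english, fertility)))  # {'NULL': 1, 'they': 1, 'were': 0, 'defeated': 3}
print(distortion)                                # [0, 0, 1, 2]
```

Collecting such counts over many aligned sentence pairs is what the fertility n(k | e) and distortion parameters of the later IBM models are estimated from.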

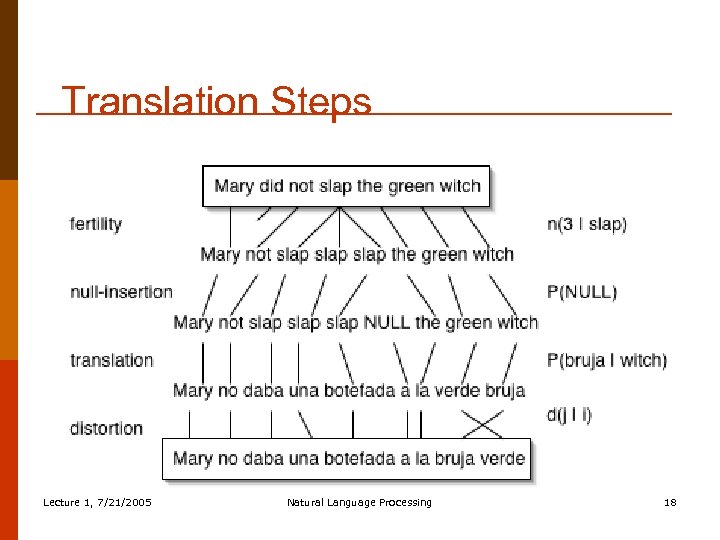

Translation Steps

IBM Models 1–5
- Model 1: Bag of words
  - Unique local maxima
  - Efficient EM algorithm (Model 1–2)
- Model 2: General alignment: a(i | j, l, m)
- Model 3: fertility: n(k | e)
  - No full EM, count only neighbors (Model 3–5)
  - Deficient (Model 3–4)
- Model 4: Relative distortion, word classes
- Model 5: Extra variables to avoid deficiency

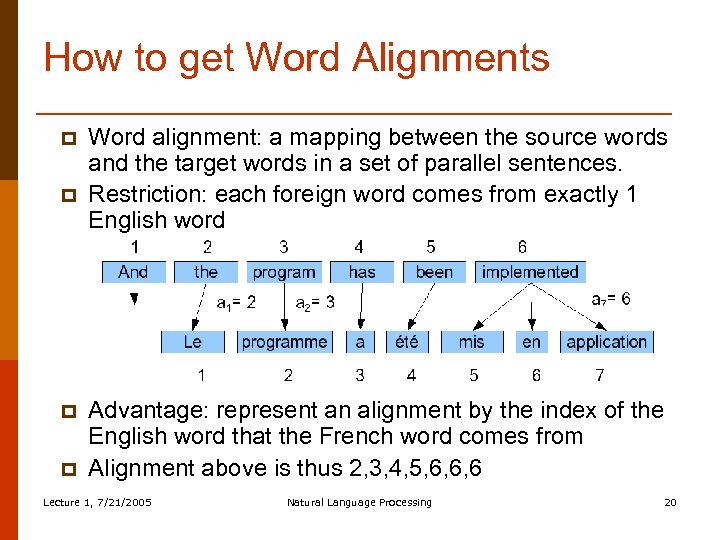

How to get Word Alignments
- Word alignment: a mapping between the source words and the target words in a set of parallel sentences.
- Restriction: each foreign word comes from exactly one English word.
- Advantage: we can represent an alignment by the index of the English word that each French word comes from.
- The alignment above is thus 2, 3, 4, 5, 6, 6, 6.
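
Because each foreign word has exactly one source, an alignment is just a list of English indices, one per foreign word. The sketch below decodes such a vector into word links, using index 0 for the NULL word. The original figure is not preserved, so the sentence pair is a hypothetical one chosen to fit the vector 2, 3, 4, 5, 6, 6, 6.

```python
def links(english, foreign, a):
    """Turn an alignment vector into (English word, foreign word) links.

    a[j] is the 1-based index of the English word that foreign word j comes
    from; 0 stands for the NULL word e_0.
    """
    if len(a) != len(foreign):
        raise ValueError("need exactly one English index per foreign word")
    source = ["NULL"] + english
    return [(source[i], f) for i, f in zip(a, foreign)]


english = "And the program has been implemented".split()
foreign = "Le programme a été mis en application".split()
for e, f in links(english, foreign, [2, 3, 4, 5, 6, 6, 6]):
    print(f"{f} <- {e}")
```

English word 1 ("And") never appears in the vector, so it generates no foreign word, while "implemented" generates three.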

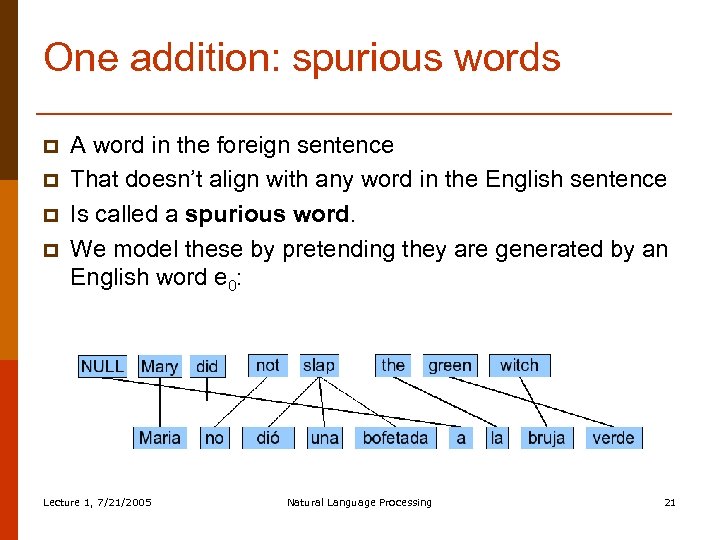

One addition: spurious words
- A word in the foreign sentence that doesn't align with any word in the English sentence is called a spurious word.
- We model these by pretending they are generated by an English word e_0 (NULL):

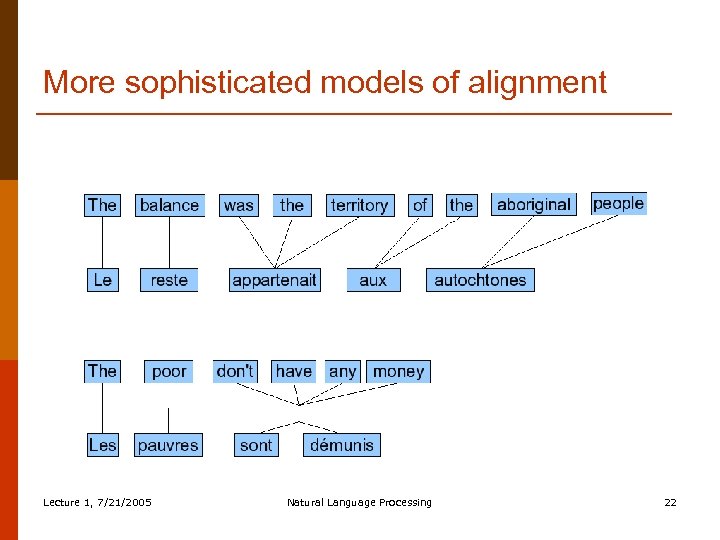

More sophisticated models of alignment

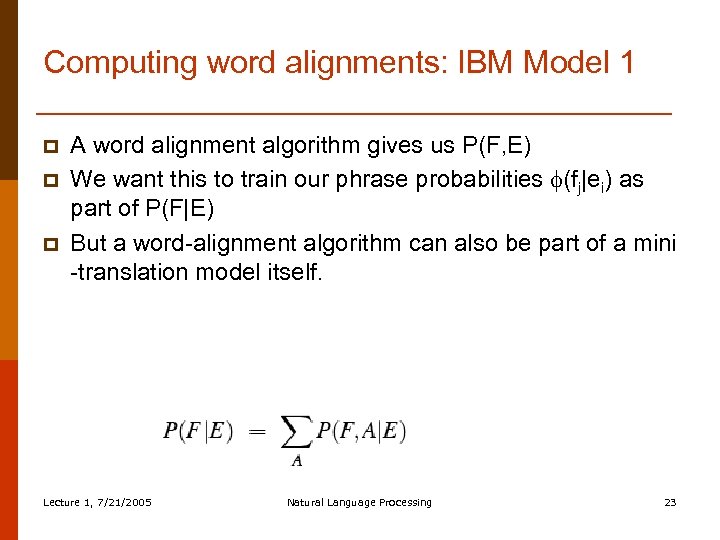

Computing word alignments: IBM Model 1
- A word alignment algorithm gives us P(F, E).
- We want this to train our phrase probabilities φ(f_j | e_i) as part of P(F | E).
- But a word-alignment algorithm can also be part of a mini-translation model itself.

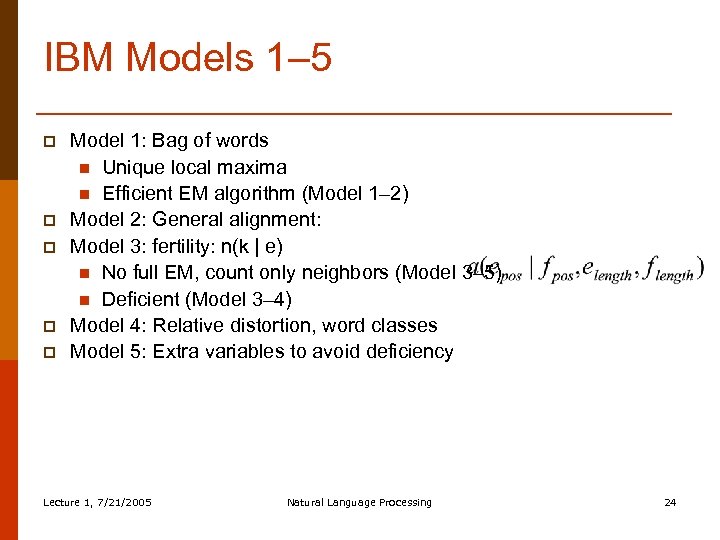

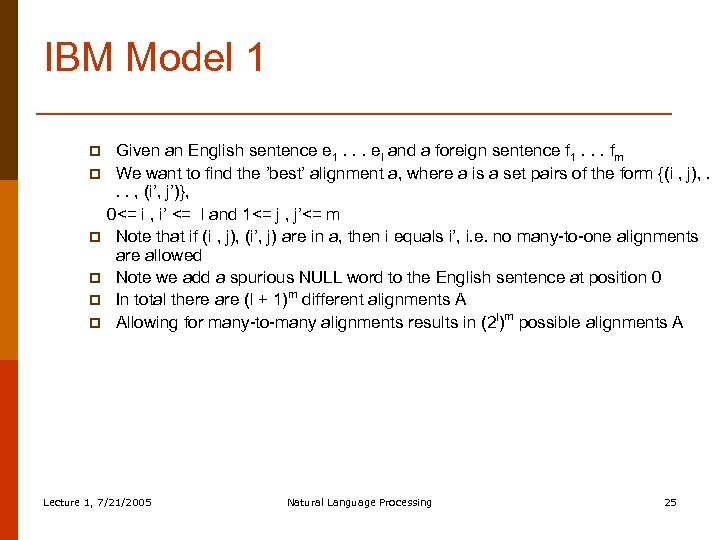

IBM Model 1
- Given an English sentence e_1 ... e_l and a foreign sentence f_1 ... f_m.
- We want to find the "best" alignment a, where a is a set of pairs of the form {(i, j), ..., (i', j')}, with 0 <= i, i' <= l and 1 <= j, j' <= m.
- Note that if (i, j) and (i', j) are both in a, then i equals i', i.e., each foreign word is aligned to only one English word (no many-to-one alignments are allowed).
- Note that we add a spurious NULL word to the English sentence at position 0.
- In total there are $(l + 1)^m$ different alignments A.
- Allowing for many-to-many alignments results in $(2^l)^m = 2^{lm}$ possible alignments A.
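
Both counts can be checked by brute-force enumeration for small sentence lengths. The sketch assumes the vector view (each foreign word picks one of the l + 1 English positions) for Model 1 and, for the many-to-many case, treats any subset of the l × m links as an alignment; function names are hypothetical.

```python
from itertools import product


def count_model1_alignments(l, m):
    """Each of the m foreign words picks one of the l + 1 English positions (0 = NULL)."""
    return sum(1 for _ in product(range(l + 1), repeat=m))


def count_many_to_many(l, m):
    """Any subset of the l * m possible (i, j) links is an alignment."""
    return sum(1 for _ in product((False, True), repeat=l * m))


for l, m in [(2, 3), (3, 3)]:
    print(l, m, count_model1_alignments(l, m), (l + 1) ** m,
          count_many_to_many(l, m), 2 ** (l * m))
```

Even for a 10-word sentence pair, (l + 1)^m is already about 2.6 × 10^10, which is why enumeration is not an option in practice.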

IBM Model 1
- Simplest of the IBM models
- Does not consider word order (bag-of-words approach)
- Does not explicitly model one-to-many alignments (no fertility model)
- Computationally inexpensive
- Useful for parameter estimates that are passed on to more elaborate models

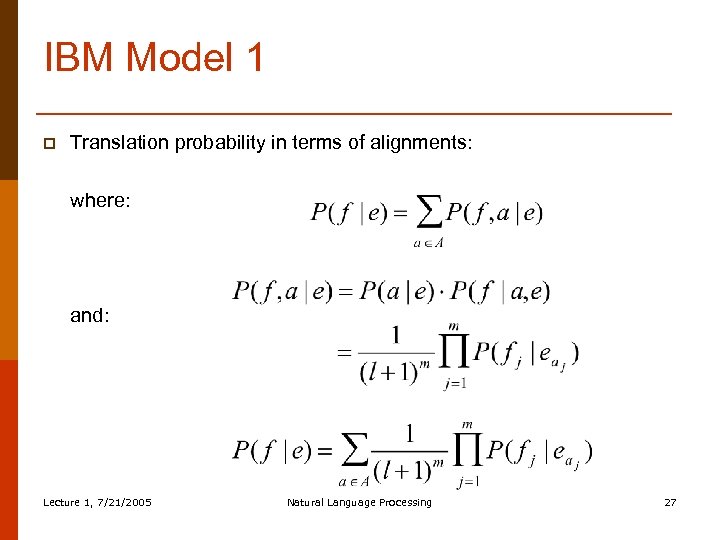

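IBM Model 1
- Translation probability in terms of alignments:

$$P(f \mid e) = \sum_{a \in A} P(f, a \mid e)$$

where:

$$P(f, a \mid e) = P(a \mid e)\, P(f \mid a, e) = \frac{1}{(l+1)^m} \prod_{j=1}^{m} P(f_j \mid e_{a_j})$$

and:

$$P(f \mid e) = \frac{1}{(l+1)^m} \sum_{a \in A} \prod_{j=1}^{m} P(f_j \mid e_{a_j})$$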
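
The sum over all (l + 1)^m alignments can be rearranged into a product of per-word sums, ∏_j Σ_i P(f_j | e_i) / (l + 1)^m, which is what makes Model 1 cheap to evaluate. The sketch checks the two forms against each other; the probability table reuses rounded values from the five-iteration example later in this lecture, and the function names are hypothetical.

```python
from itertools import product
from math import isclose, prod


def p_f_given_e_bruteforce(e, f, t):
    """Sum over every alignment vector a of prod_j P(f_j | e_{a_j}), divided by (l+1)^m."""
    src = ["NULL"] + e
    l, m = len(e), len(f)
    total = 0.0
    for a in product(range(l + 1), repeat=m):
        total += prod(t.get((f[j], src[a[j]]), 0.0) for j in range(m))
    return total / (l + 1) ** m


def p_f_given_e_factored(e, f, t):
    """Same quantity as prod_j sum_i P(f_j | e_i) / (l+1)^m: O(l * m) work."""
    src = ["NULL"] + e
    return prod(sum(t.get((fj, ei), 0.0) for ei in src) for fj in f) / (len(e) + 1) ** len(f)


t = {("le", "NULL"): 0.7556, ("chien", "NULL"): 0.1222, ("chat", "NULL"): 0.1222,
     ("le", "the"): 0.7556, ("chien", "the"): 0.1222, ("chat", "the"): 0.1222,
     ("le", "dog"): 0.1619, ("chien", "dog"): 0.8381,
     ("le", "cat"): 0.1619, ("chat", "cat"): 0.8381}
e, f = ["the", "dog"], ["le", "chien"]
print(p_f_given_e_bruteforce(e, f, t), p_f_given_e_factored(e, f, t))
```

Both functions return the same value up to floating-point rounding.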

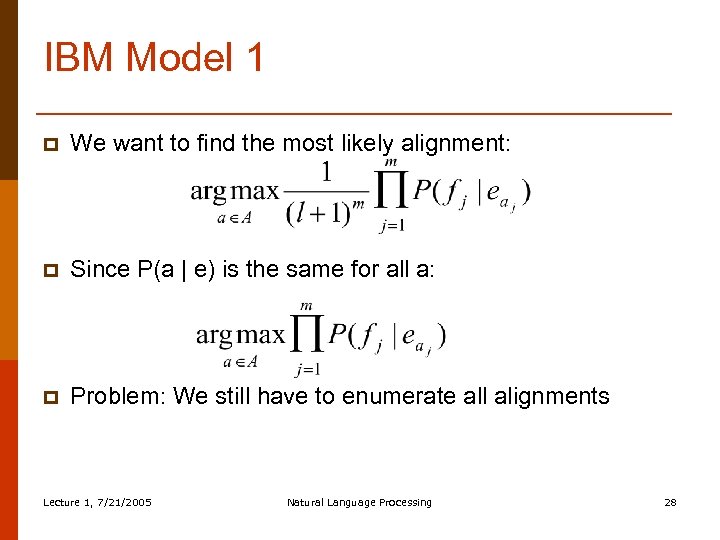

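IBM Model 1
- We want to find the most likely alignment:

$$\hat{a} = \arg\max_{a \in A} P(f, a \mid e) = \arg\max_{a \in A} P(a \mid e)\, P(f \mid a, e)$$

- Since P(a | e) is the same for all a:

$$\hat{a} = \arg\max_{a \in A} \prod_{j=1}^{m} P(f_j \mid e_{a_j})$$

- Problem: we still have to enumerate all alignments.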

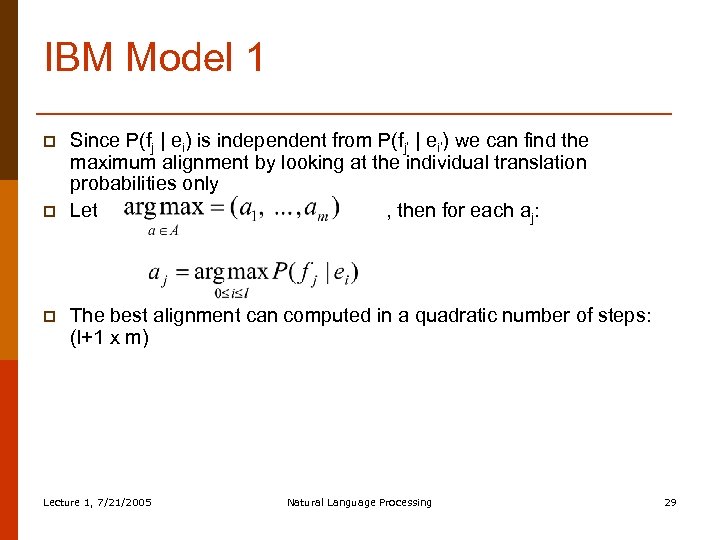

IBM Model 1
- Since P(f_j | e_i) is independent of P(f_j' | e_i'), we can find the maximum alignment by looking at the individual translation probabilities only.
- Let $\hat{a} = (\hat{a}_1, \ldots, \hat{a}_m)$; then for each $\hat{a}_j$:

$$\hat{a}_j = \arg\max_{0 \le i \le l} P(f_j \mid e_i)$$

- The best alignment can be computed in a quadratic number of steps: (l + 1) × m.
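
The per-word argmax translates directly into two nested loops of (l + 1) × m lookups. In this sketch the probability table is hypothetical, missing entries count as probability 0, and Python's `max` keeps the first maximum, so ties go to the lowest position (NULL).

```python
def viterbi_alignment(e, f, t):
    """Best Model 1 alignment: for each f_j pick argmax_i P(f_j | e_i), i = 0..l."""
    src = ["NULL"] + e
    return [max(range(len(src)), key=lambda i: t.get((fj, src[i]), 0.0)) for fj in f]


t = {("le", "NULL"): 0.2, ("le", "the"): 0.7, ("le", "dog"): 0.1,        # hypothetical
     ("chien", "NULL"): 0.1, ("chien", "the"): 0.1, ("chien", "dog"): 0.8}
print(viterbi_alignment(["the", "dog"], ["le", "chien"], t))  # [1, 2]
```

No enumeration of the (l + 1)^m alignments is needed, because each foreign word's choice does not affect the others.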

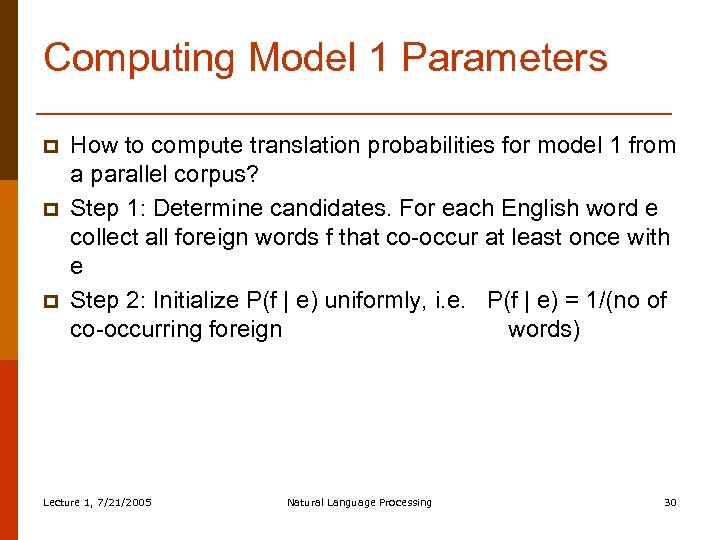

Computing Model 1 Parameters
- How do we compute translation probabilities for Model 1 from a parallel corpus?
- Step 1: Determine candidates. For each English word e, collect all foreign words f that co-occur at least once with e.
- Step 2: Initialize P(f | e) uniformly, i.e., P(f | e) = 1 / (number of co-occurring foreign words).

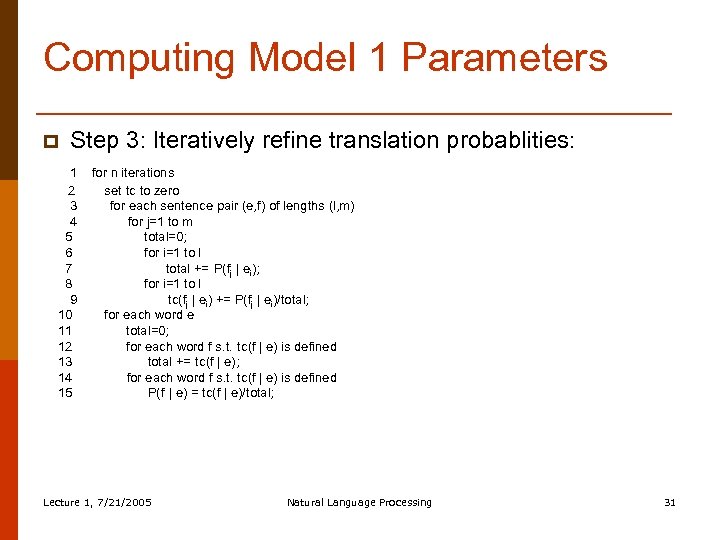

Computing Model 1 Parameters
- Step 3: Iteratively refine translation probabilities:

```
for n iterations:
    set tc to zero
    for each sentence pair (e, f) of lengths (l, m):
        for j = 1 to m:
            total = 0
            for i = 0 to l:              # i = 0 is the NULL word
                total += P(f_j | e_i)
            for i = 0 to l:
                tc(f_j | e_i) += P(f_j | e_i) / total
    for each word e:
        total = 0
        for each word f s.t. tc(f | e) is defined:
            total += tc(f | e)
        for each word f s.t. tc(f | e) is defined:
            P(f | e) = tc(f | e) / total
```
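
A runnable version of this loop, applied to the two-sentence corpus of the worked example, might look as follows. It is a sketch with assumed names; to reproduce the example's numbers it initializes every P(f | e) to 1 / |foreign vocabulary| (1/3 here) rather than per Step 2, and it assumes every word pair looked up after the first iteration co-occurred in training.

```python
from collections import defaultdict

NULL = "NULL"


def train_model1(corpus, iterations):
    """EM training of IBM Model 1 translation probabilities t[(f, e)] = P(f | e).

    corpus is a list of (english_words, foreign_words) pairs.
    """
    foreign_vocab = {f for _, fs in corpus for f in fs}
    start = 1.0 / len(foreign_vocab)
    t = defaultdict(lambda: start)  # uniform start, as in the worked example
    for _ in range(iterations):
        tc = defaultdict(float)  # expected counts tc(f | e), reset every iteration
        for english, foreign in corpus:
            source = [NULL] + english  # positions i = 0 .. l, 0 is NULL
            for f in foreign:
                total = sum(t[(f, e)] for e in source)
                for e in source:
                    tc[(f, e)] += t[(f, e)] / total
        # M-step: normalize the expected counts separately for each English word e
        totals = defaultdict(float)
        for (f, e), count in tc.items():
            totals[e] += count
        t = {(f, e): count / totals[e] for (f, e), count in tc.items()}
    return t


corpus = [(["the", "dog"], ["le", "chien"]), (["the", "cat"], ["le", "chat"])]
t = train_model1(corpus, 5)
for key in [("le", NULL), ("le", "the"), ("chien", "dog"), ("chat", "cat")]:
    print(key, round(t[key], 6))
```

Because the M-step rebuilds the table from the expected counts, pairs that never co-occur (such as chat/dog) simply drop out after the first iteration.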

IBM Model 1 Example
- Parallel "corpus": the dog :: le chien; the cat :: le chat
- Step 1+2 (collect candidates and initialize uniformly):
  - P(le | the) = P(chien | the) = P(chat | the) = 1/3
  - P(le | dog) = P(chien | dog) = P(chat | dog) = 1/3
  - P(le | cat) = P(chien | cat) = P(chat | cat) = 1/3
  - P(le | NULL) = P(chien | NULL) = P(chat | NULL) = 1/3
- In this example every probability starts at 1/3, i.e., uniformly over the whole foreign vocabulary rather than over co-occurring words only; the pairs dog/chat and cat/chien never co-occur, so their values are never used.

IBM Model 1 Example
- Step 3: Iterate
- NULL the dog :: le chien
  - j=1: total = P(le | NULL) + P(le | the) + P(le | dog) = 1
    - tc(le | NULL) += P(le | NULL)/1 = 0 + 0.333/1 = 0.333
    - tc(le | the) += P(le | the)/1 = 0 + 0.333/1 = 0.333
    - tc(le | dog) += P(le | dog)/1 = 0 + 0.333/1 = 0.333
  - j=2: total = P(chien | NULL) + P(chien | the) + P(chien | dog) = 1
    - tc(chien | NULL) += P(chien | NULL)/1 = 0 + 0.333/1 = 0.333
    - tc(chien | the) += P(chien | the)/1 = 0 + 0.333/1 = 0.333
    - tc(chien | dog) += P(chien | dog)/1 = 0 + 0.333/1 = 0.333

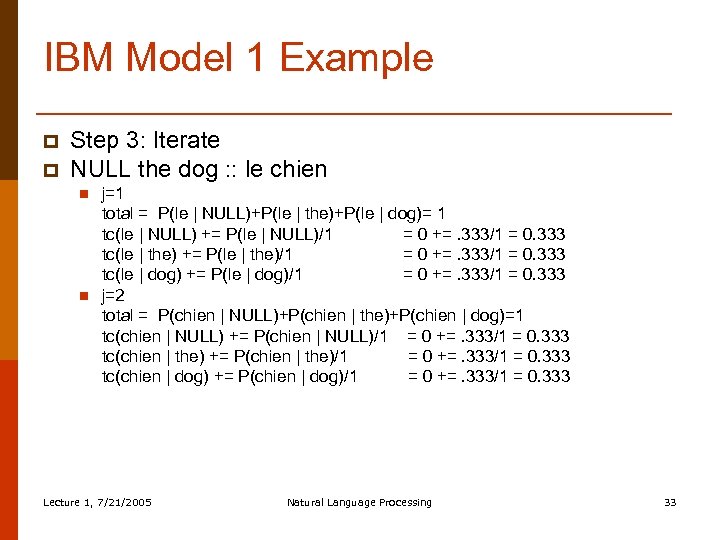

IBM Model 1 Example
- NULL the cat :: le chat
  - j=1: total = P(le | NULL) + P(le | the) + P(le | cat) = 1
    - tc(le | NULL) += P(le | NULL)/1 = 0.333 + 0.333/1 = 0.666
    - tc(le | the) += P(le | the)/1 = 0.333 + 0.333/1 = 0.666
    - tc(le | cat) += P(le | cat)/1 = 0 + 0.333/1 = 0.333
  - j=2: total = P(chat | NULL) + P(chat | the) + P(chat | cat) = 1
    - tc(chat | NULL) += P(chat | NULL)/1 = 0 + 0.333/1 = 0.333
    - tc(chat | the) += P(chat | the)/1 = 0 + 0.333/1 = 0.333
    - tc(chat | cat) += P(chat | cat)/1 = 0 + 0.333/1 = 0.333

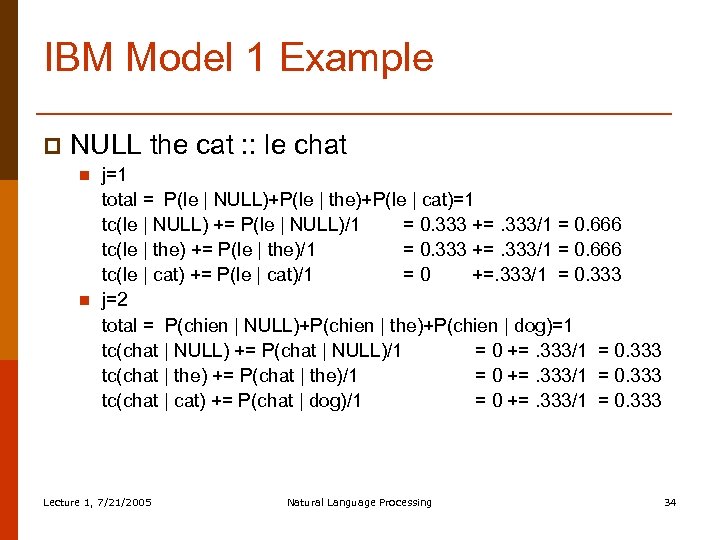

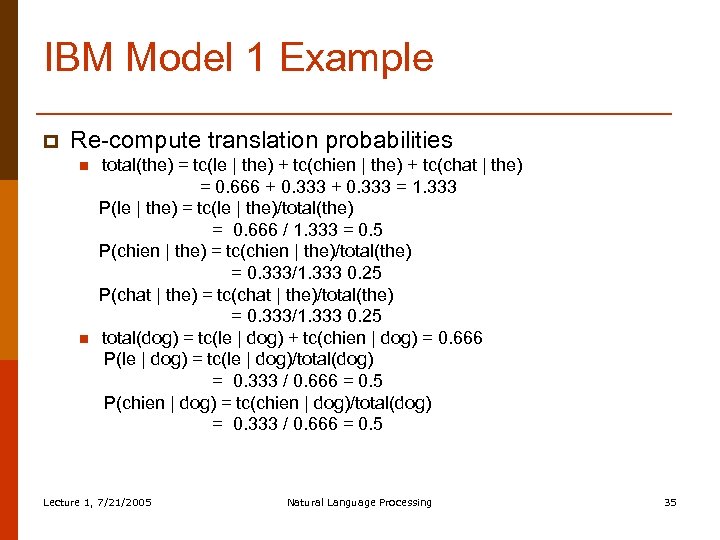

IBM Model 1 Example
- Re-compute translation probabilities:
  - total(the) = tc(le | the) + tc(chien | the) + tc(chat | the) = 0.666 + 0.333 + 0.333 = 1.333
    - P(le | the) = tc(le | the)/total(the) = 0.666/1.333 = 0.5
    - P(chien | the) = tc(chien | the)/total(the) = 0.333/1.333 = 0.25
    - P(chat | the) = tc(chat | the)/total(the) = 0.333/1.333 = 0.25
  - total(dog) = tc(le | dog) + tc(chien | dog) = 0.333 + 0.333 = 0.666
    - P(le | dog) = tc(le | dog)/total(dog) = 0.333/0.666 = 0.5
    - P(chien | dog) = tc(chien | dog)/total(dog) = 0.333/0.666 = 0.5

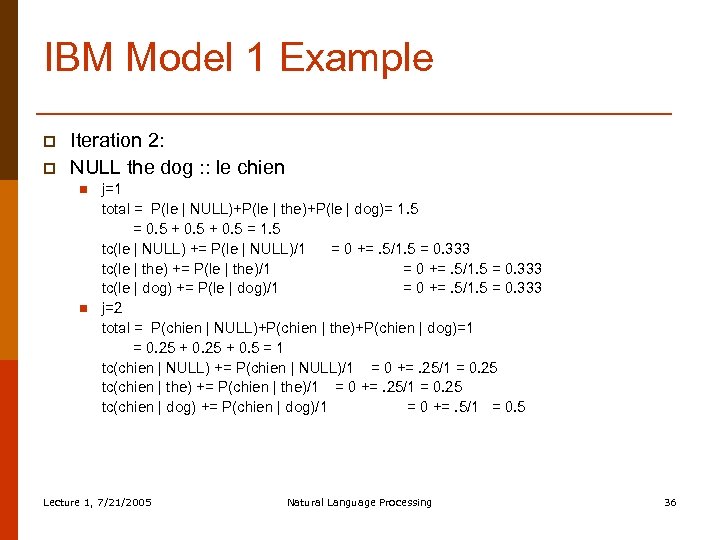

IBM Model 1 Example
- Iteration 2: NULL the dog :: le chien
  - j=1: total = P(le | NULL) + P(le | the) + P(le | dog) = 0.5 + 0.5 + 0.5 = 1.5
    - tc(le | NULL) += P(le | NULL)/1.5 = 0 + 0.5/1.5 = 0.333
    - tc(le | the) += P(le | the)/1.5 = 0 + 0.5/1.5 = 0.333
    - tc(le | dog) += P(le | dog)/1.5 = 0 + 0.5/1.5 = 0.333
  - j=2: total = P(chien | NULL) + P(chien | the) + P(chien | dog) = 0.25 + 0.25 + 0.5 = 1
    - tc(chien | NULL) += P(chien | NULL)/1 = 0 + 0.25/1 = 0.25
    - tc(chien | the) += P(chien | the)/1 = 0 + 0.25/1 = 0.25
    - tc(chien | dog) += P(chien | dog)/1 = 0 + 0.5/1 = 0.5

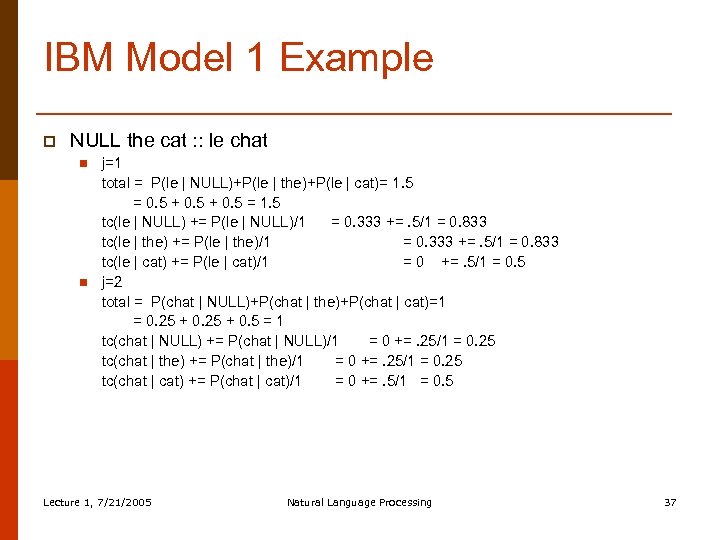

IBM Model 1 Example
- NULL the cat :: le chat
  - j=1: total = P(le | NULL) + P(le | the) + P(le | cat) = 0.5 + 0.5 + 0.5 = 1.5
    - tc(le | NULL) += P(le | NULL)/1.5 = 0.333 + 0.5/1.5 = 0.666
    - tc(le | the) += P(le | the)/1.5 = 0.333 + 0.5/1.5 = 0.666
    - tc(le | cat) += P(le | cat)/1.5 = 0 + 0.5/1.5 = 0.333
  - j=2: total = P(chat | NULL) + P(chat | the) + P(chat | cat) = 0.25 + 0.25 + 0.5 = 1
    - tc(chat | NULL) += P(chat | NULL)/1 = 0 + 0.25/1 = 0.25
    - tc(chat | the) += P(chat | the)/1 = 0 + 0.25/1 = 0.25
    - tc(chat | cat) += P(chat | cat)/1 = 0 + 0.5/1 = 0.5

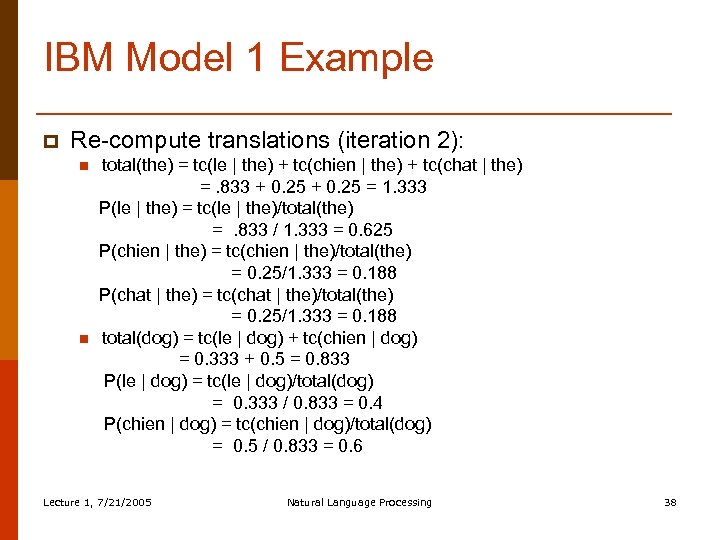

IBM Model 1 Example
- Re-compute translations (iteration 2):
  - total(the) = tc(le | the) + tc(chien | the) + tc(chat | the) = 0.666 + 0.25 + 0.25 = 1.167
    - P(le | the) = tc(le | the)/total(the) = 0.666/1.167 = 0.571
    - P(chien | the) = tc(chien | the)/total(the) = 0.25/1.167 = 0.214
    - P(chat | the) = tc(chat | the)/total(the) = 0.25/1.167 = 0.214
  - total(dog) = tc(le | dog) + tc(chien | dog) = 0.333 + 0.5 = 0.833
    - P(le | dog) = tc(le | dog)/total(dog) = 0.333/0.833 = 0.4
    - P(chien | dog) = tc(chien | dog)/total(dog) = 0.5/0.833 = 0.6

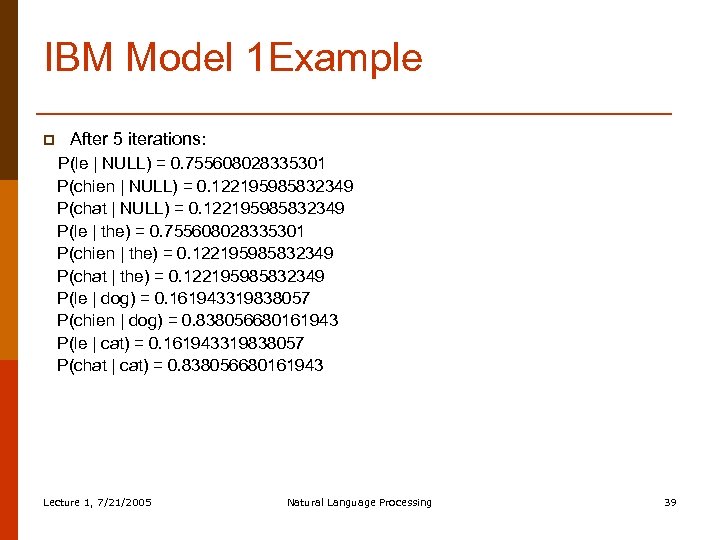

IBM Model 1 Example
- After 5 iterations:
  - P(le | NULL) = 0.755608028335301
  - P(chien | NULL) = 0.122195985832349
  - P(chat | NULL) = 0.122195985832349
  - P(le | the) = 0.755608028335301
  - P(chien | the) = 0.122195985832349
  - P(chat | the) = 0.122195985832349
  - P(le | dog) = 0.161943319838057
  - P(chien | dog) = 0.838056680161943
  - P(le | cat) = 0.161943319838057
  - P(chat | cat) = 0.838056680161943

IBM Model 1 Recap
- IBM Model 1 allows for an efficient computation of translation probabilities.
- No notion of fertility: it is possible that the same English word is the best translation for all foreign words.
- No positional information: depending on the language pair, words at the beginning of the English sentence may be more likely to align to words at the beginning of the foreign sentence, but Model 1 cannot capture such a tendency.

IBM Model 3 • IBM Model 3 offers two additional features compared to IBM Model 1: – Fertility: how likely is an English word e to align to k foreign words? – Distortion (positional information): how likely is a word in position i to align to a word in position j?


IBM Model 3: Fertility • The best Model 1 alignment could be one in which a single English word aligns to all foreign words. • This is clearly undesirable, so we want to constrain the number of words an English word can align to. • Fertility models the probability that word e aligns to k words: n(k, e). • Consequence: translation probabilities can no longer be computed independently of each other. • IBM Model 3 has to work with full alignments; note that for an English sentence of length l and a foreign sentence of length m there are up to $(l+1)^m$ different alignments.
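A small Python sketch can make fertility and the $(l+1)^m$ count concrete. It assumes, for illustration only, that an alignment is a list `a` in which `a[j]` is the English position (0 standing for NULL) that foreign word j is linked to; the fertility of English position i is then the number of j with `a[j] == i`. The helper names `fertilities` and `all_alignments` are hypothetical.

```python
from collections import Counter
from itertools import product


def fertilities(alignment, l):
    """Fertility of each English position 0..l (position 0 is NULL).

    alignment[j] = English position that foreign word j is aligned to.
    """
    counts = Counter(alignment)
    return [counts[i] for i in range(l + 1)]


def all_alignments(l, m):
    """Enumerate every alignment of m foreign words to l English words + NULL."""
    return product(range(l + 1), repeat=m)


if __name__ == "__main__":
    # A Model 1 alignment that sends every foreign word to English word 1:
    print(fertilities([1, 1, 1, 1], l=3))        # [0, 4, 0, 0]
    # The number of alignments is (l + 1) ** m:
    print(sum(1 for _ in all_alignments(3, 4)))  # 256
```

Even for l = m = 20 there are 21**20 (about 2.8 × 10^26) alignments, so exhaustive enumeration is not an option.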


IBM Model 1 + Model 3 • Iterating over all possible alignments is computationally infeasible. • Solution: compute the best alignment with Model 1, then change some of its links to generate a set of likely alignments (pegging). • Model 3 takes this restricted set of alignments as input.


Pegging • Given an alignment a, we can derive additional alignments from it by making small changes: – Changing a link (j, i) to (j, i') – Swapping a pair of links (j, i) and (j', i') to (j, i') and (j', i) • The resulting set of alignments is called the neighborhood of a.
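The two neighborhood operations can be sketched in Python, again assuming an alignment vector in which `a[j]` is the English position of foreign word j (0 = NULL). The function `neighborhood` is hypothetical and only builds the set of neighbors; it does not include the search over these neighborhoods that Model 3 training performs.

```python
def neighborhood(a, l):
    """Alignments reachable from a by changing one link or swapping two links.

    a: tuple with a[j] = English position (0 = NULL, 1..l) of foreign word j.
    """
    m = len(a)
    neighbors = set()
    # Change a link (j, i) to (j, i')
    for j in range(m):
        for i_new in range(l + 1):
            if i_new != a[j]:
                b = list(a)
                b[j] = i_new
                neighbors.add(tuple(b))
    # Swap links (j, i) and (j', i') to (j, i') and (j', i)
    for j in range(m):
        for j2 in range(j + 1, m):
            if a[j] != a[j2]:
                b = list(a)
                b[j], b[j2] = b[j2], b[j]
                neighbors.add(tuple(b))
    return neighbors


if __name__ == "__main__":
    # Two foreign words aligned to English positions 1 and 2 (l = 2)
    print(sorted(neighborhood((1, 2), l=2)))
```

Each alignment has at most m·l "change" neighbors plus one "swap" neighbor per pair of differently aligned foreign words, so the neighborhood stays small compared to the full $(l+1)^m$ space.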


IBM Model 3: Distortion • The distortion factor determines how likely it is that an English word in position i aligns to a foreign word in position j, given the lengths l and m of both sentences: d(j | i, l, m). • Note that positions are absolute positions.


Deficiency • Problem with IBM Model 3: it assigns probability mass to impossible strings. – Well-formed string: “This is possible” – Ill-formed but possible string: “This possible is” – Impossible string: one that places two different words at the same position • Impossible strings arise from distortion values that generate different words at the same position. • Impossible strings can still be filtered out in later stages of the translation process.


Limitations of IBM Models • Only 1-to-N word mapping • Handling fertility-zero words (difficult for decoding) • Almost no syntactic information – Word classes – Relative distortion • Long-distance word movement • Fluency of the output depends entirely on the English language model


Decoding • How do we translate new sentences? • A decoder uses the parameters learned on a parallel corpus: – Translation probabilities – Fertilities – Distortions • In combination with a language model, the decoder generates the most likely translation. • Standard algorithms can be used to explore the search space (A*, greedy search, …). • The search problem is similar to the traveling salesman problem.


IBM Model 1


IBM Model 1


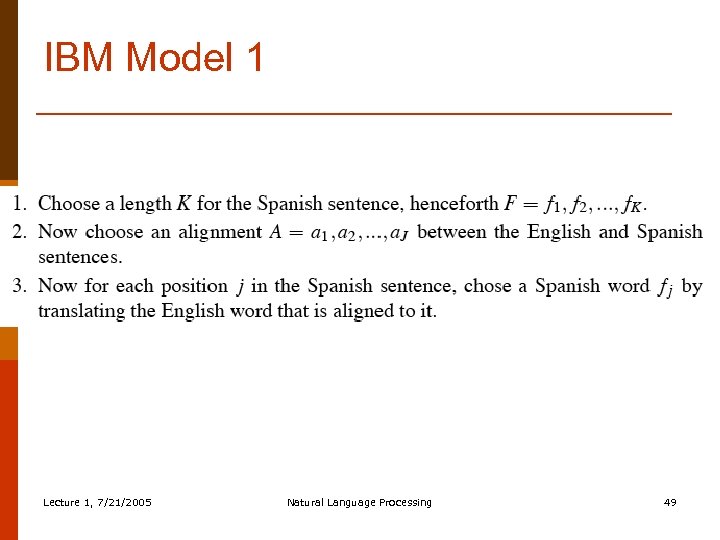

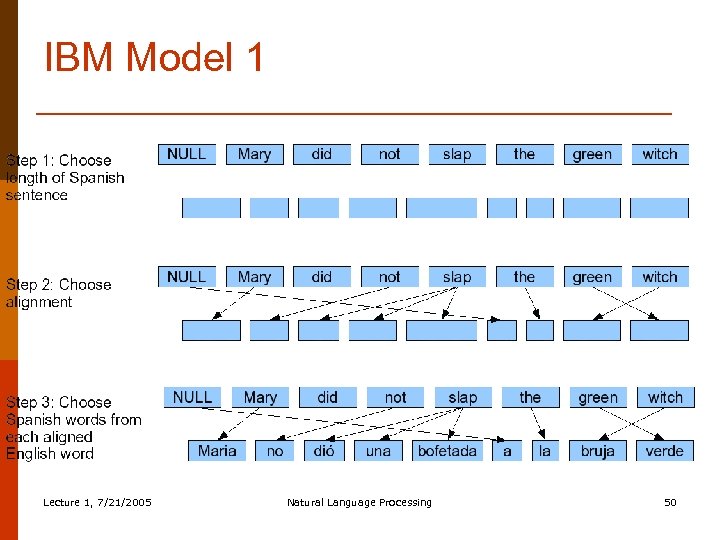

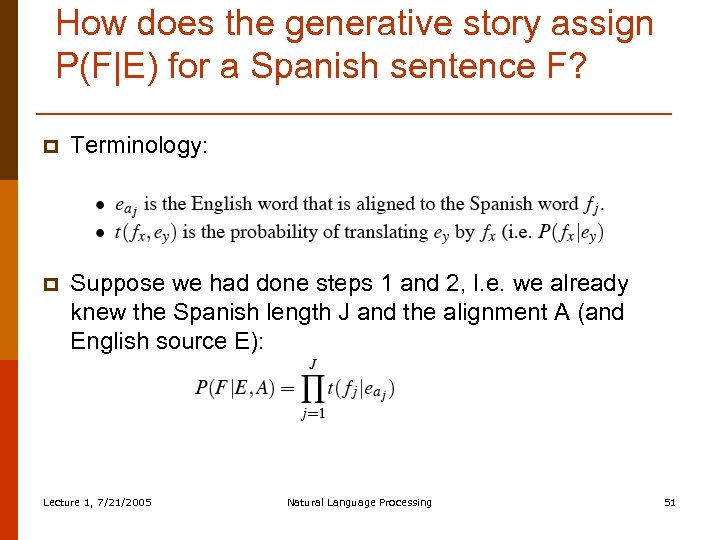

How does the generative story assign P(F|E) for a Spanish sentence F? • Terminology: • Suppose we had done steps 1 and 2, i.e., we already knew the Spanish length J and the alignment A (and the English source E): $P(F \mid E, A) = \prod_{j=1}^{J} t(f_j \mid e_{a_j})$


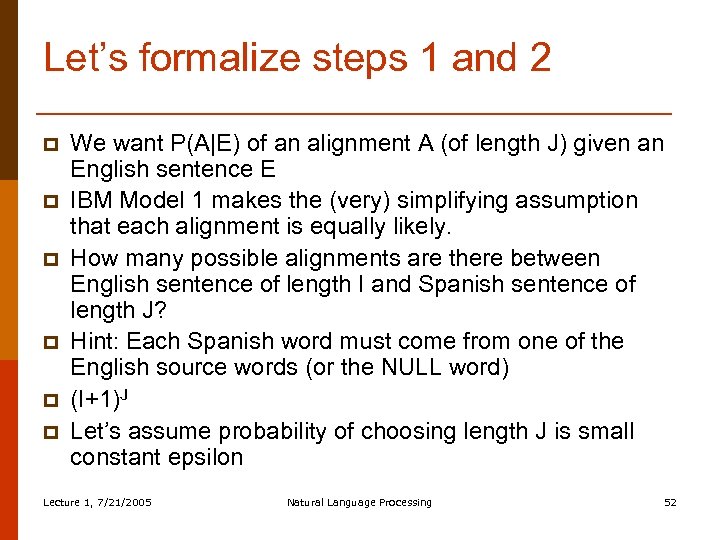

Let's formalize steps 1 and 2 • We want P(A|E) of an alignment A (of length J) given an English sentence E. • IBM Model 1 makes the (very) simplifying assumption that each alignment is equally likely. • How many possible alignments are there between an English sentence of length I and a Spanish sentence of length J? – Hint: each Spanish word must come from one of the English source words (or the NULL word). – Answer: $(I+1)^J$ • Let's assume the probability of choosing length J is a small constant ε.


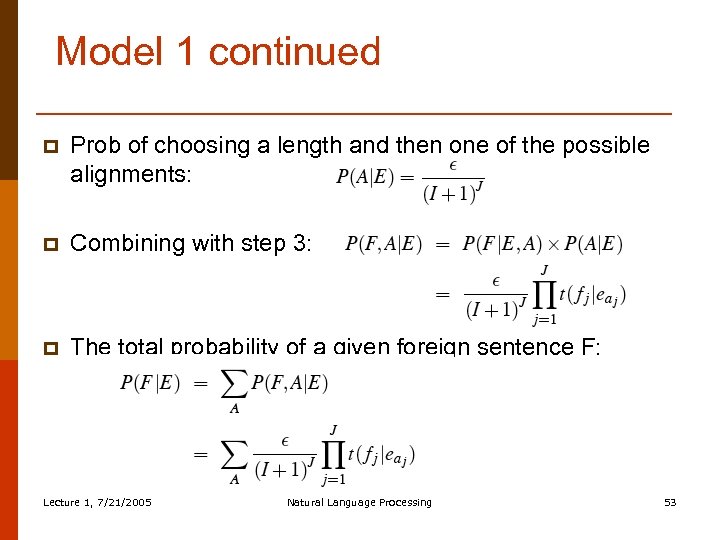

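Model 1 continued • Probability of choosing a length and then one of the possible alignments: $P(A \mid E) = \frac{\epsilon}{(I+1)^J}$ • Combining with step 3: $P(F, A \mid E) = \frac{\epsilon}{(I+1)^J} \prod_{j=1}^{J} t(f_j \mid e_{a_j})$ • The total probability of a given foreign sentence F: $P(F \mid E) = \frac{\epsilon}{(I+1)^J} \sum_{A} \prod_{j=1}^{J} t(f_j \mid e_{a_j})$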


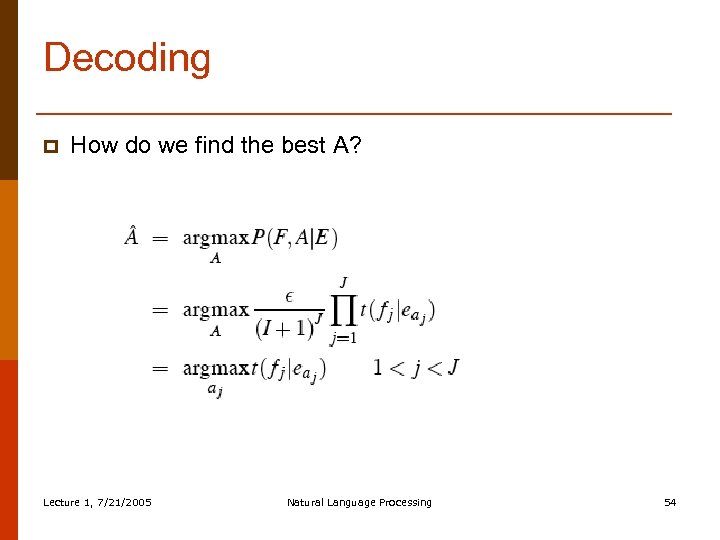
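The total probability of F on this slide can be checked numerically. The Python sketch below computes Model 1's P(F|E) twice: once by brute force over all $(I+1)^J$ alignments, and once by exchanging sum and product, $\sum_A \prod_{j=1}^{J} t(f_j \mid e_{a_j}) = \prod_{j=1}^{J} \sum_{i=0}^{I} t(f_j \mid e_i)$, which needs only O(I·J) work. The translation table, the function names, and the default `eps=1.0` for the length constant ε are illustrative assumptions.

```python
from itertools import product
from math import isclose, prod


def p_f_given_e_bruteforce(f, e, t, eps=1.0):
    """IBM Model 1 P(F | E): sum P(F, A | E) over all (I+1)^J alignments."""
    e = ["NULL"] + e
    I, J = len(e) - 1, len(f)
    total = 0.0
    for a in product(range(I + 1), repeat=J):  # a[j] = English position of f[j]
        total += prod(t.get((f[j], e[a[j]]), 0.0) for j in range(J))
    return eps / (I + 1) ** J * total


def p_f_given_e_factored(f, e, t, eps=1.0):
    """Same value via the sum-product rearrangement: prod_j sum_i t(f_j | e_i)."""
    e = ["NULL"] + e
    I, J = len(e) - 1, len(f)
    return eps / (I + 1) ** J * prod(sum(t.get((fj, ei), 0.0) for ei in e) for fj in f)


if __name__ == "__main__":
    # Hypothetical translation table t(f | e), for illustration only.
    t = {("la", "NULL"): 0.2, ("la", "the"): 0.7, ("la", "house"): 0.1,
         ("casa", "NULL"): 0.1, ("casa", "the"): 0.1, ("casa", "house"): 0.8}
    f, e = ["la", "casa"], ["the", "house"]
    slow = p_f_given_e_bruteforce(f, e, t)
    fast = p_f_given_e_factored(f, e, t)
    assert isclose(slow, fast)
    print(round(fast, 4))  # 0.1111
```

The factored form is what makes Model 1 training tractable: the E-step never has to list alignments explicitly.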

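Decoding • How do we find the best A? $\hat{A} = \arg\max_A P(F, A \mid E) = \arg\max_A \prod_{j=1}^{J} t(f_j \mid e_{a_j})$, since $\frac{\epsilon}{(I+1)^J}$ is the same for every alignment. Because the factors are independent, each link can be chosen separately: $\hat{a}_j = \arg\max_{0 \le i \le I} t(f_j \mid e_i)$.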


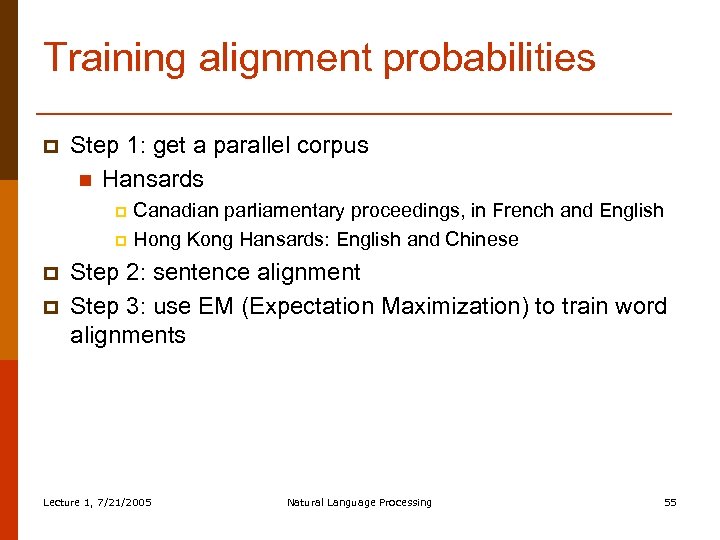
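Because every alignment has the same prior probability under Model 1 and P(F | E, A) is a product of independent per-position factors, the best alignment can be found one foreign word at a time. A minimal Python sketch under those assumptions, with a hypothetical translation table and a hypothetical function name `best_alignment`:

```python
def best_alignment(f, e, t):
    """Most probable IBM Model 1 alignment of foreign words f to English words e.

    With a uniform P(A | E), maximising P(F, A | E) reduces to maximising each
    factor t(f_j | e_{a_j}) separately. Returns a list where a[j] == 0 means
    f[j] is aligned to NULL and a[j] == i means it is aligned to e[i - 1].
    """
    e = ["NULL"] + e
    return [max(range(len(e)), key=lambda i: t.get((fj, e[i]), 0.0)) for fj in f]


if __name__ == "__main__":
    # Hypothetical translation table t(f | e), for illustration only.
    t = {("la", "NULL"): 0.2, ("la", "the"): 0.7, ("la", "house"): 0.1,
         ("casa", "NULL"): 0.1, ("casa", "the"): 0.1, ("casa", "house"): 0.8}
    print(best_alignment(["la", "casa"], ["the", "house"], t))  # [1, 2]
```

This independence disappears in Model 3, where fertility couples the links, which is why Model 3 falls back on pegging and neighborhoods.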

Training alignment probabilities • Step 1: get a parallel corpus – Hansards: Canadian parliamentary proceedings, in French and English – Hong Kong Hansards: English and Chinese • Step 2: sentence alignment • Step 3: use EM (Expectation Maximization) to train word alignments


Step 1: Parallel corpora. Example from DE-News (8/1/1996):

| English | German |
|---|---|
| Diverging opinions about planned tax reform | Unterschiedliche Meinungen zur geplanten Steuerreform |
| The discussion around the envisaged major tax reform continues. | Die Diskussion um die vorgesehene grosse Steuerreform dauert an. |
| The FDP economics expert , Graf Lambsdorff , today came out in favor of advancing the enactment of significant parts of the overhaul , currently planned for 1999. | Der FDP - Wirtschaftsexperte Graf Lambsdorff sprach sich heute dafuer aus , wesentliche Teile der fuer 1999 geplanten Reform vorzuziehen. |

(Slide from Christof Monz)


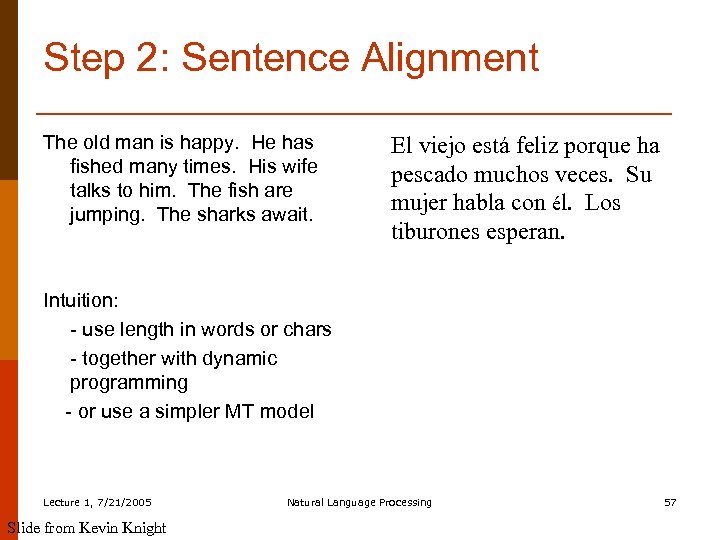

Step 2: Sentence Alignment • English: The old man is happy. He has fished many times. His wife talks to him. The fish are jumping. The sharks await. • Spanish: El viejo está feliz porque ha pescado muchas veces. Su mujer habla con él. Los tiburones esperan. • Intuition: – use length in words or characters – together with dynamic programming – or use a simpler MT model (Slide from Kevin Knight)
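A toy version of the "length plus dynamic programming" intuition can be sketched in Python. The bead types (1-1, 1-0, 0-1, 2-1, 1-2) and the cost function (absolute character-length difference, plus fixed penalties `skip_cost` and `merge_cost` whose values are arbitrary) are simplifying assumptions for illustration; this is not Gale and Church's probabilistic length model, and `align_sentences` is a hypothetical name.

```python
def align_sentences(src, tgt, skip_cost=20.0, merge_cost=6.0):
    """Toy length-based sentence aligner using dynamic programming.

    A bead that pairs text costs the absolute difference in character length,
    plus merge_cost if it is 2-1 or 1-2; leaving a sentence unaligned costs
    skip_cost. Returns a list of (source_indices, target_indices) beads.
    """
    src_len = [len(s) for s in src]
    tgt_len = [len(s) for s in tgt]
    n, m = len(src), len(tgt)
    INF = float("inf")
    best = [[INF] * (m + 1) for _ in range(n + 1)]
    back = [[None] * (m + 1) for _ in range(n + 1)]
    best[0][0] = 0.0
    moves = [(1, 1), (1, 0), (0, 1), (2, 1), (1, 2)]
    # Row-major order guarantees every predecessor cell is final before use
    for i in range(n + 1):
        for j in range(m + 1):
            if best[i][j] == INF:
                continue
            for di, dj in moves:
                ni, nj = i + di, j + dj
                if ni > n or nj > m:
                    continue
                if di == 0 or dj == 0:
                    cost = skip_cost
                else:
                    diff = abs(sum(src_len[i:ni]) - sum(tgt_len[j:nj]))
                    cost = diff + (0.0 if (di, dj) == (1, 1) else merge_cost)
                if best[i][j] + cost < best[ni][nj]:
                    best[ni][nj] = best[i][j] + cost
                    back[ni][nj] = (i, j)
    # Follow back-pointers from (n, m) to (0, 0)
    beads, i, j = [], n, m
    while (i, j) != (0, 0):
        pi, pj = back[i][j]
        beads.append((list(range(pi, i)), list(range(pj, j))))
        i, j = pi, pj
    return beads[::-1]


if __name__ == "__main__":
    english = ["The old man is happy.", "He has fished many times.",
               "His wife talks to him.", "The fish are jumping.",
               "The sharks await."]
    spanish = ["El viejo está feliz porque ha pescado muchas veces.",
               "Su mujer habla con él.", "Los tiburones esperan."]
    for src_ids, tgt_ids in align_sentences(english, spanish):
        print([english[i] for i in src_ids], "<->", [spanish[j] for j in tgt_ids])
```

On the slide's example these settings correctly merge the first two English sentences and pair "His wife talks to him." with "Su mujer habla con él.", but length alone then pairs "The fish are jumping." with "Los tiburones esperan." and drops "The sharks await.". Telling those two apart needs lexical evidence, which is where a simpler MT model helps.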


Sentence Alignment • English: 1. The old man is happy. 2. He has fished many times. 3. His wife talks to him. 4. The fish are jumping. 5. The sharks await. • Spanish: El viejo está feliz porque ha pescado muchas veces. Su mujer habla con él. Los tiburones esperan. (Slide from Kevin Knight)


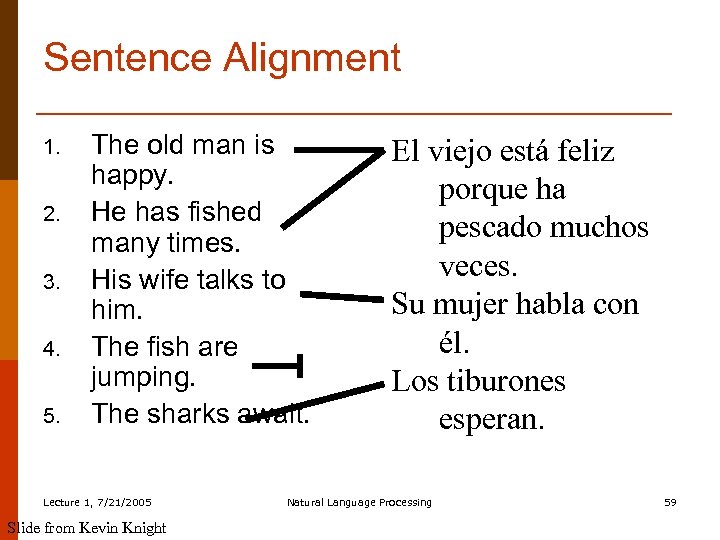


Sentence Alignment • Aligned pairs: 1. The old man is happy. He has fished many times. ↔ El viejo está feliz porque ha pescado muchas veces. 2. His wife talks to him. ↔ Su mujer habla con él. 3. The sharks await. ↔ Los tiburones esperan. • Note that unaligned sentences are thrown out, and sentences are merged in n-to-m alignments (n, m > 0). (Slide from Kevin Knight)


Step 3: Word alignments • It turns out we can bootstrap word alignments from a sentence-aligned bilingual corpus. • We use the Expectation-Maximization (EM) algorithm.


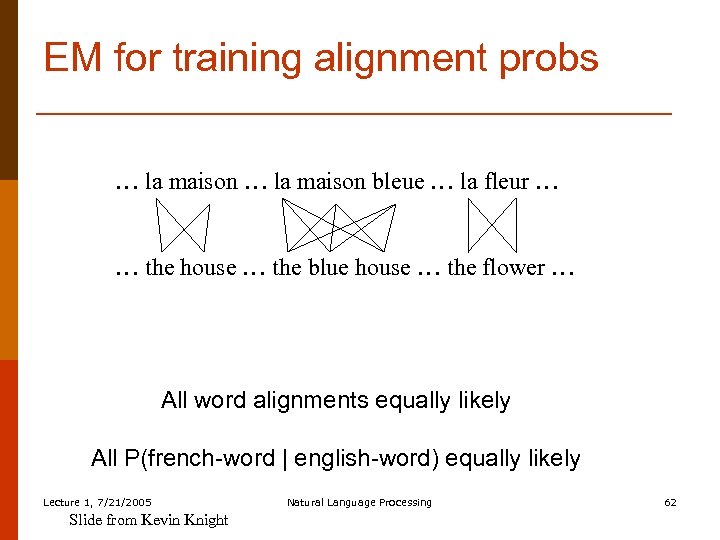

EM for training alignment probs • French: … la maison … la maison bleue … la fleur … • English: … the house … the blue house … the flower … • All word alignments are equally likely. • All P(french-word | english-word) are equally likely. (Slide from Kevin Knight)


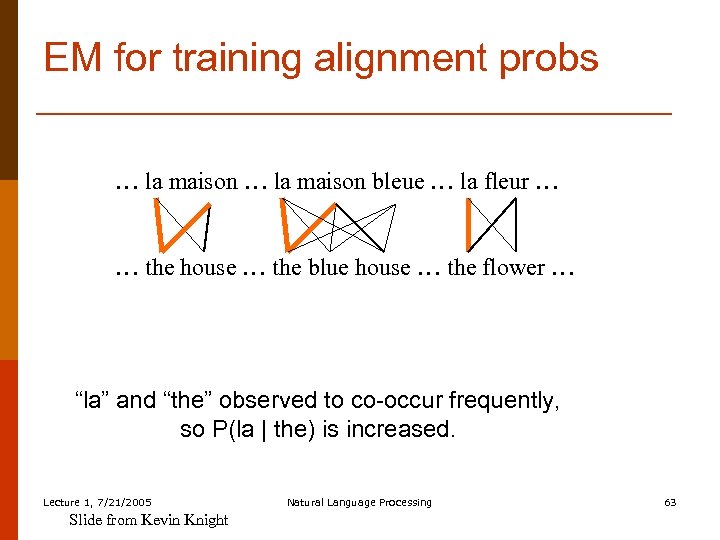

EM for training alignment probs • French: … la maison … la maison bleue … la fleur … • English: … the house … the blue house … the flower … • “la” and “the” are observed to co-occur frequently, so P(la | the) is increased. (Slide from Kevin Knight)


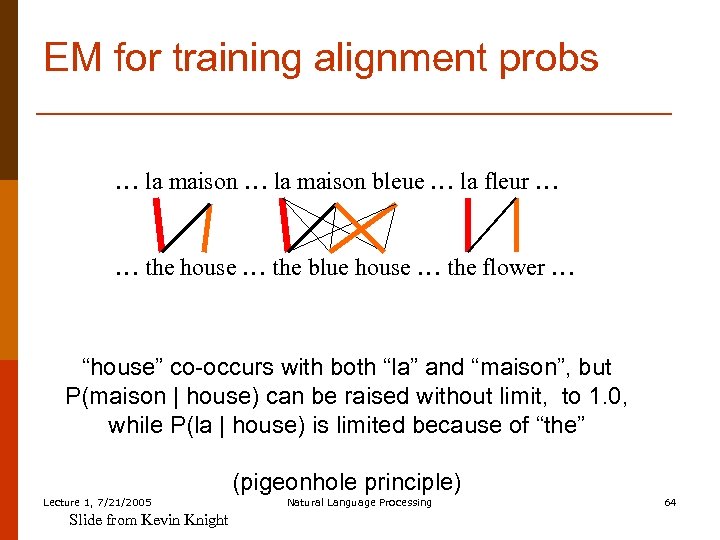

EM for training alignment probs • French: … la maison … la maison bleue … la fleur … • English: … the house … the blue house … the flower … • “house” co-occurs with both “la” and “maison”, but P(maison | house) can be raised without limit, up to 1.0, while P(la | house) is limited because “la” is already explained by “the” (pigeonhole principle). (Slide from Kevin Knight)


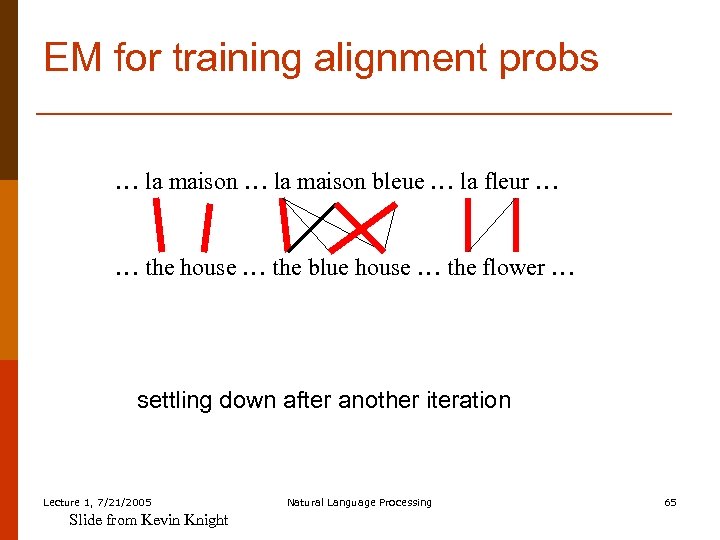

EM for training alignment probs • French: … la maison … la maison bleue … la fleur … • English: … the house … the blue house … the flower … • The probabilities settle down after another iteration. (Slide from Kevin Knight)


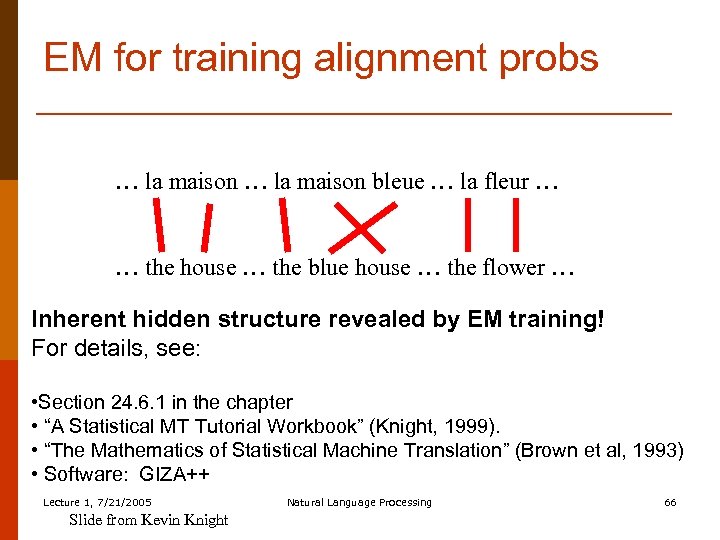

EM for training alignment probs • French: … la maison … la maison bleue … la fleur … • English: … the house … the blue house … the flower … • Inherent hidden structure revealed by EM training! For details, see: – Section 24.6.1 in the chapter – “A Statistical MT Tutorial Workbook” (Knight, 1999) – “The Mathematics of Statistical Machine Translation” (Brown et al., 1993) – Software: GIZA++ (Slide from Kevin Knight)


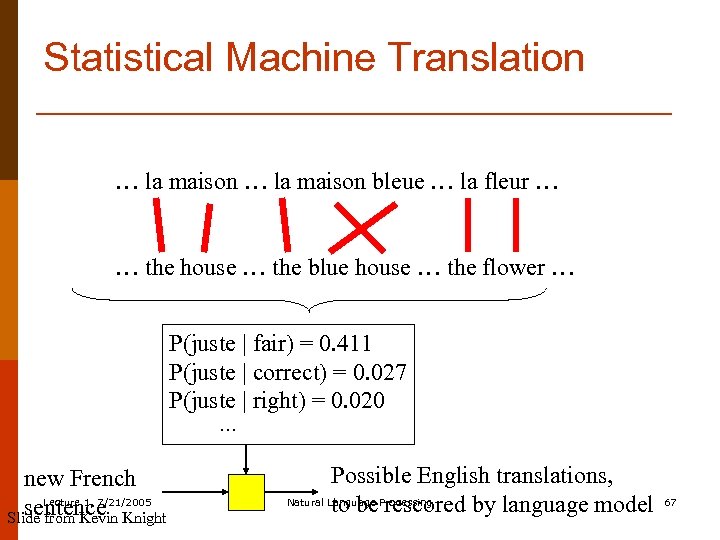

Statistical Machine Translation • French: … la maison … la maison bleue … la fleur … • English: … the house … the blue house … the flower … • Learned translation probabilities, e.g. P(juste | fair) = 0.411, P(juste | correct) = 0.027, P(juste | right) = 0.020, … • A new French sentence yields possible English translations, to be rescored by the language model. (Slide from Kevin Knight)


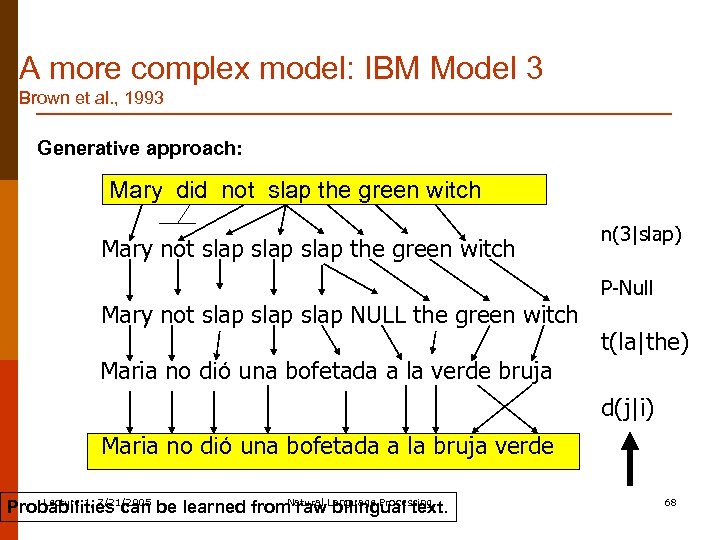

A more complex model: IBM Model 3 (Brown et al., 1993). Generative approach: Mary did not slap the green witch → (fertility, e.g. n(3|slap)) Mary not slap slap slap the green witch → (NULL insertion, P-Null) Mary not slap slap slap NULL the green witch → (translation, e.g. t(la|the)) Maria no dió una bofetada a la verde bruja → (distortion, d(j|i)) Maria no dió una bofetada a la bruja verde. Probabilities can be learned from raw bilingual text.


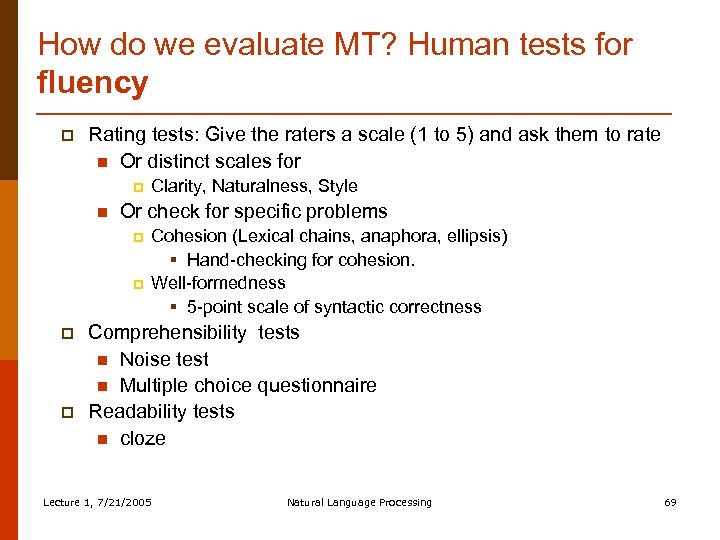
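The generative story on this slide can be replayed step by step. The Python sketch below is a deterministic trace, not a probabilistic model: the fertilities, the NULL insertion point, the word translations, and the distortion targets are hard-coded from the slide's example, and `model3_trace` is a hypothetical helper. A real Model 3 would score each of these choices with n, P-Null, t, and d.

```python
def model3_trace(english, fertility, null_after, translations, order):
    """Replay the IBM Model 3 generative story with hard-coded choices.

    fertility:    copies to keep of each English word (default 1; 0 drops it)
    null_after:   index after which one NULL token is inserted
    translations: one foreign word per token after NULL insertion
    order:        target position of each translated word (distortion)
    Returns the token sequence after each step.
    """
    steps = [list(english)]
    # Step 1, fertility: copy each English word n times (0 removes it)
    tokens = [w for w in english for _ in range(fertility.get(w, 1))]
    steps.append(tokens)
    # Step 2, NULL insertion
    tokens = tokens[: null_after + 1] + ["NULL"] + tokens[null_after + 1:]
    steps.append(tokens)
    # Step 3, translation: replace every token by one foreign word
    if len(translations) != len(tokens):
        raise ValueError("need exactly one translation per token")
    steps.append(list(translations))
    # Step 4, distortion: move each foreign word to its target position
    out = [None] * len(translations)
    for word, pos in zip(translations, order):
        out[pos] = word
    steps.append(out)
    return steps


if __name__ == "__main__":
    steps = model3_trace(
        english="Mary did not slap the green witch".split(),
        fertility={"did": 0, "slap": 3},    # n(0|did), n(3|slap)
        null_after=4,                       # NULL after the third "slap"
        translations="Maria no dió una bofetada a la verde bruja".split(),
        order=[0, 1, 2, 3, 4, 5, 6, 8, 7],  # distortion swaps verde / bruja
    )
    for tokens in steps:
        print(" ".join(tokens))
```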

How do we evaluate MT? Human tests for fluency • Rating tests: give the raters a scale (1 to 5) and ask them to rate – or use distinct scales for clarity, naturalness, and style – or check for specific problems: cohesion (lexical chains, anaphora, ellipsis), checked by hand; well-formedness, on a 5-point scale of syntactic correctness • Comprehensibility tests – Noise test – Multiple-choice questionnaire • Readability tests – Cloze


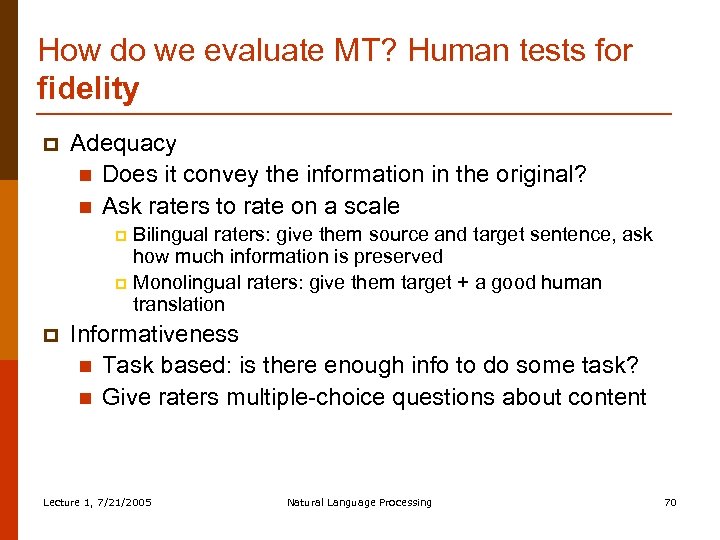

How do we evaluate MT? Human tests for fidelity • Adequacy – Does the translation convey the information in the original? – Ask raters to rate it on a scale: bilingual raters see the source and target sentences and judge how much information is preserved; monolingual raters see the target and a good human translation • Informativeness – Task-based: is there enough information to do some task? – Give raters multiple-choice questions about the content


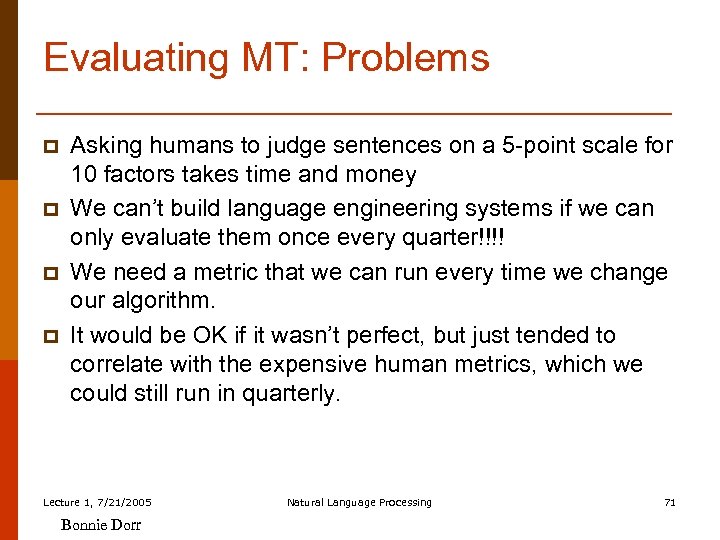

Evaluating MT: Problems • Asking humans to judge sentences on a 5-point scale for 10 factors takes time and money. • We can't build language engineering systems if we can only evaluate them once every quarter! • We need a metric that we can run every time we change our algorithm. • It would be OK if it wasn't perfect, as long as it tended to correlate with the expensive human metrics, which we could still run quarterly. (Bonnie Dorr)


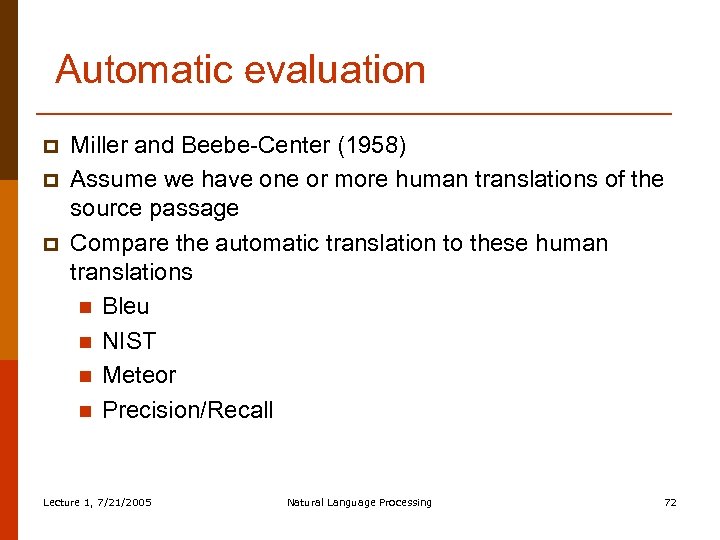

Automatic evaluation • Miller and Beebe-Center (1958) • Assume we have one or more human translations of the source passage. • Compare the automatic translation to these human translations: – BLEU – NIST – METEOR – Precision/Recall


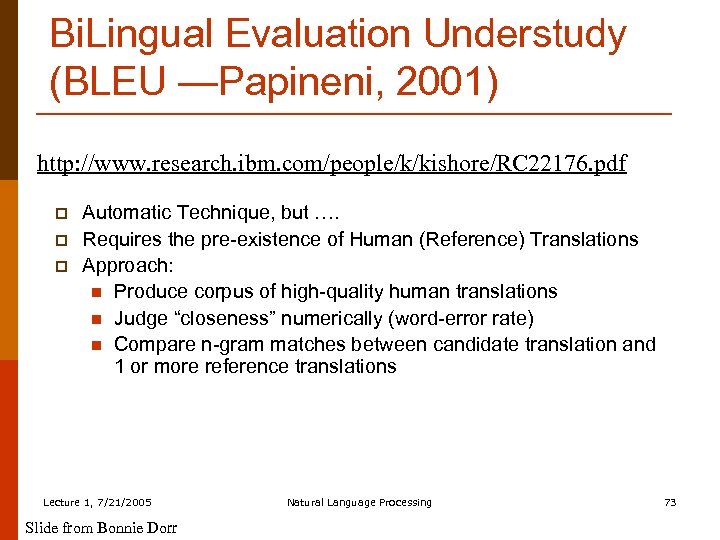

BiLingual Evaluation Understudy (BLEU, Papineni 2001) http://www.research.ibm.com/people/k/kishore/RC22176.pdf • Automatic technique, but… • Requires the pre-existence of human (reference) translations • Approach: – Produce a corpus of high-quality human translations – Judge “closeness” numerically (in the spirit of word-error rate) – Compare n-gram matches between the candidate translation and one or more reference translations (Slide from Bonnie Dorr)


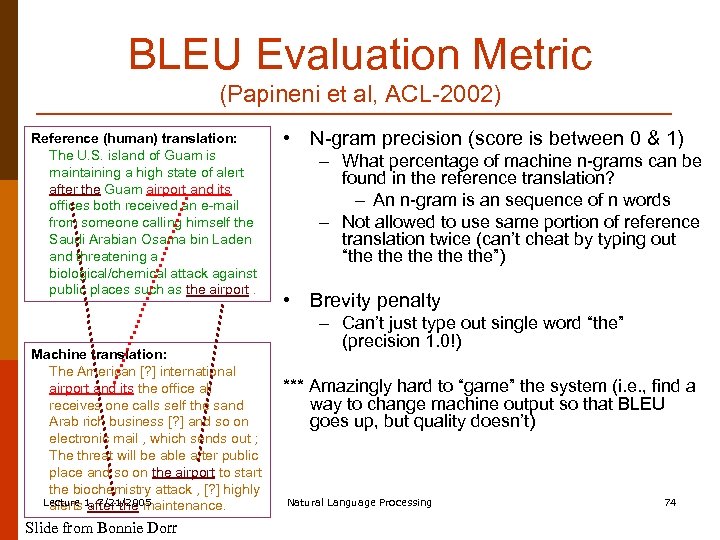

BLEU Evaluation Metric (Papineni et al., ACL-2002) • Reference (human) translation: The U.S. island of Guam is maintaining a high state of alert after the Guam airport and its offices both received an e-mail from someone calling himself the Saudi Arabian Osama bin Laden and threatening a biological/chemical attack against public places such as the airport. • Machine translation: The American [?] international airport and its the office all receives one calls self the sand Arab rich business [?] and so on electronic mail , which sends out ; The threat will be able after public place and so on the airport to start the biochemistry attack , [?] highly alerts after the maintenance. • N-gram precision (score is between 0 and 1) – What percentage of machine n-grams can be found in the reference translation? – An n-gram is a sequence of n words. – Not allowed to use the same portion of the reference translation twice (can't cheat by typing out “the the the”). • Brevity penalty – Can't just type out the single word “the” (precision 1.0!) • Amazingly hard to “game” the system (i.e., to find a way to change machine output so that BLEU goes up, but quality doesn't). (Slide from Bonnie Dorr)


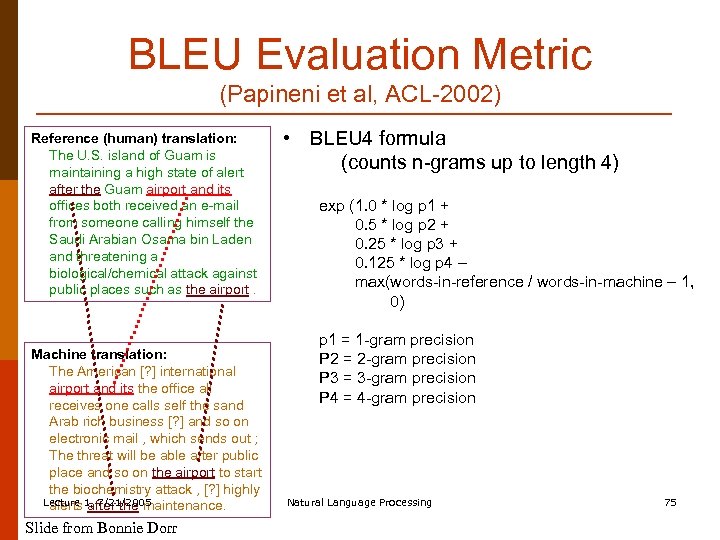

BLEU Evaluation Metric (Papineni et al., ACL-2002), for the same reference and machine translation as the previous slide. • BLEU-4 formula (counts n-grams up to length 4): $\exp\left(1.0 \log p_1 + 0.5 \log p_2 + 0.25 \log p_3 + 0.125 \log p_4 - \max\left(\frac{\text{words-in-reference}}{\text{words-in-machine}} - 1,\ 0\right)\right)$, where $p_1$, $p_2$, $p_3$, $p_4$ are the 1-, 2-, 3- and 4-gram precisions. (Papineni et al.'s baseline instead uses uniform weights $w_n = 1/4$.) (Slide from Bonnie Dorr)


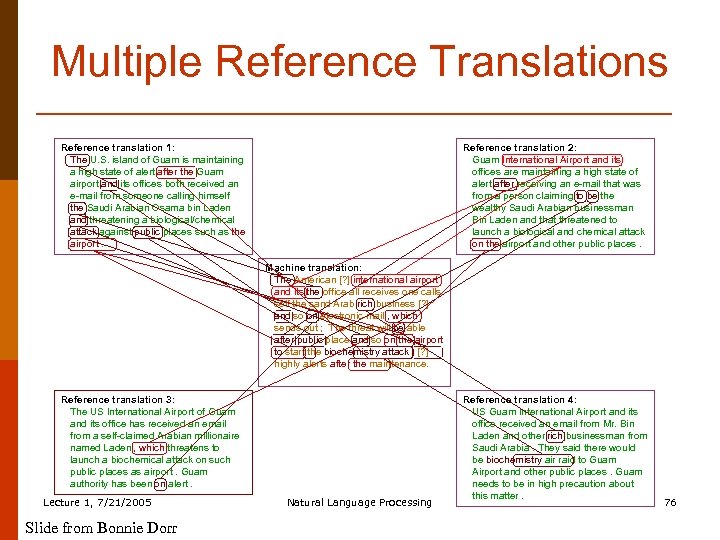
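The slide's formula can be evaluated directly once the four n-gram precisions and the two lengths are known. A minimal Python sketch (the function name `bleu_from_precisions` is hypothetical); it uses the slide's weights by default and accepts uniform weights as an alternative:

```python
import math


def bleu_from_precisions(precisions, ref_words, machine_words,
                         weights=(1.0, 0.5, 0.25, 0.125)):
    """Evaluate the BLEU-4 formula from the slide.

    precisions:    (p1, p2, p3, p4), the n-gram precisions
    ref_words:     words-in-reference
    machine_words: words-in-machine
    weights:       slide's weights by default; (0.25,) * 4 gives uniform weights
    """
    if any(p == 0 for p in precisions):
        return 0.0  # log(0) is undefined; any zero precision makes the score 0
    log_score = sum(w * math.log(p) for w, p in zip(weights, precisions))
    # Brevity penalty: only applies when the machine output is shorter
    log_score -= max(ref_words / machine_words - 1.0, 0.0)
    return math.exp(log_score)


if __name__ == "__main__":
    print(bleu_from_precisions([1, 1, 1, 1], 10, 10))  # 1.0
    print(bleu_from_precisions([1, 1, 1, 1], 20, 10))  # about 0.3679 (= e**-1)
    print(bleu_from_precisions([0.5] * 4, 10, 10, weights=(0.25,) * 4))
```

For example, a perfect-precision output that is half as long as the reference is penalized by a factor of e^-1 ≈ 0.37, which is what stops the single-word “the” output from scoring well.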

Multiple Reference Translations • Reference translation 1: The U.S. island of Guam is maintaining a high state of alert after the Guam airport and its offices both received an e-mail from someone calling himself the Saudi Arabian Osama bin Laden and threatening a biological/chemical attack against public places such as the airport. • Reference translation 2: Guam International Airport and its offices are maintaining a high state of alert after receiving an e-mail that was from a person claiming to be the wealthy Saudi Arabian businessman Bin Laden and that threatened to launch a biological and chemical attack on the airport and other public places. • Reference translation 3: The US International Airport of Guam and its office has received an email from a self-claimed Arabian millionaire named Laden , which threatens to launch a biochemical attack on such public places as airport. Guam authority has been on alert. • Reference translation 4: US Guam International Airport and its office received an email from Mr. Bin Laden and other rich businessman from Saudi Arabia. They said there would be biochemistry air raid to Guam Airport and other public places. Guam needs to be in high precaution about this matter. • Machine translation: The American [?] international airport and its the office all receives one calls self the sand Arab rich business [?] and so on electronic mail , which sends out ; The threat will be able after public place and so on the airport to start the biochemistry attack , [?] highly alerts after the maintenance. (Slide from Bonnie Dorr)


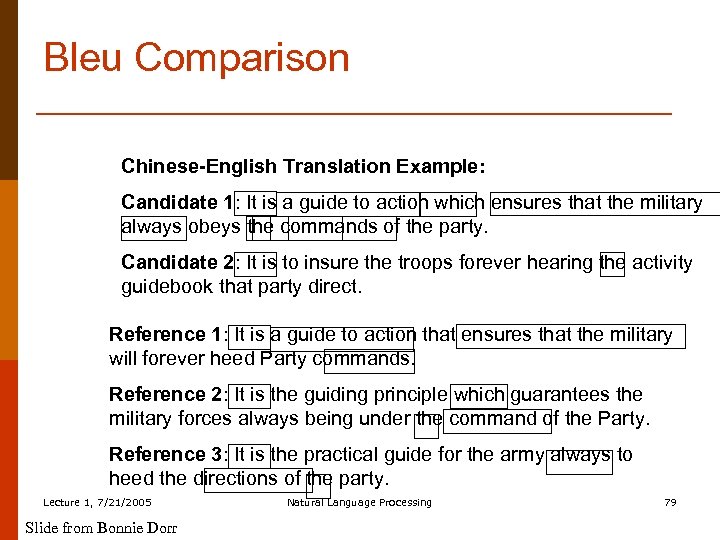

BLEU Comparison. Chinese-English translation example: • Candidate 1: It is a guide to action which ensures that the military always obeys the commands of the party. • Candidate 2: It is to insure the troops forever hearing the activity guidebook that party direct. • Reference 1: It is a guide to action that ensures that the military will forever heed Party commands. • Reference 2: It is the guiding principle which guarantees the military forces always being under the command of the Party. • Reference 3: It is the practical guide for the army always to heed the directions of the party. (Slide from Bonnie Dorr)

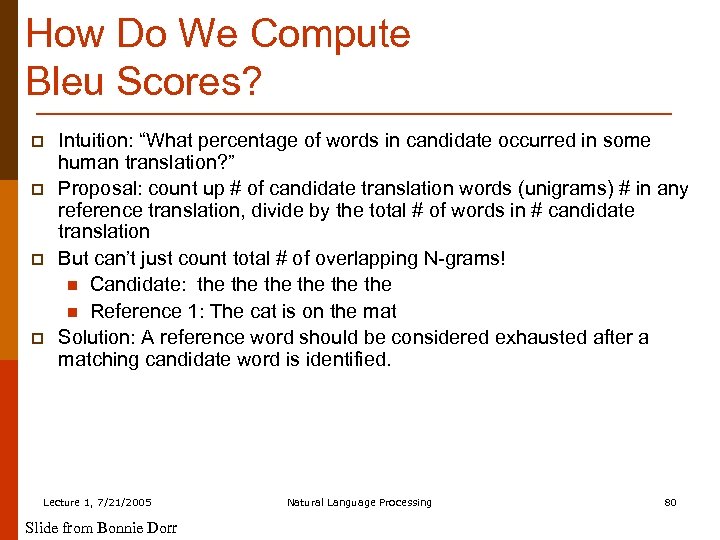

How Do We Compute Bleu Scores?
- Intuition: “What percentage of words in the candidate occurred in some human translation?”
- Proposal: count the number of candidate translation words (unigrams) that occur in any reference translation, and divide by the total number of words in the candidate translation.
- But we can’t just count the total number of overlapping N-grams!
  - Candidate: the the the
  - Reference 1: The cat is on the mat
- Solution: a reference word should be considered exhausted after a matching candidate word is identified.

(Slide from Bonnie Dorr)
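
A minimal sketch of the naive proposal, assuming lowercasing and whitespace tokenization (real BLEU tools tokenize more carefully). It shows why the unclipped count fails: every “the” in the candidate finds a match, so the degenerate candidate scores a perfect 1.0.

```python
def naive_unigram_precision(candidate: str, references: list[str]) -> float:
    """Unclipped precision: share of candidate words found in any reference."""
    # Assumption: lowercase and split on whitespace only.
    cand = candidate.lower().split()
    # Pool every word that occurs anywhere in the reference translations.
    ref_vocab = {w for ref in references for w in ref.lower().split()}
    matches = sum(1 for w in cand if w in ref_vocab)
    return matches / len(cand)


print(naive_unigram_precision("the the the", ["The cat is on the mat"]))  # 1.0
```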

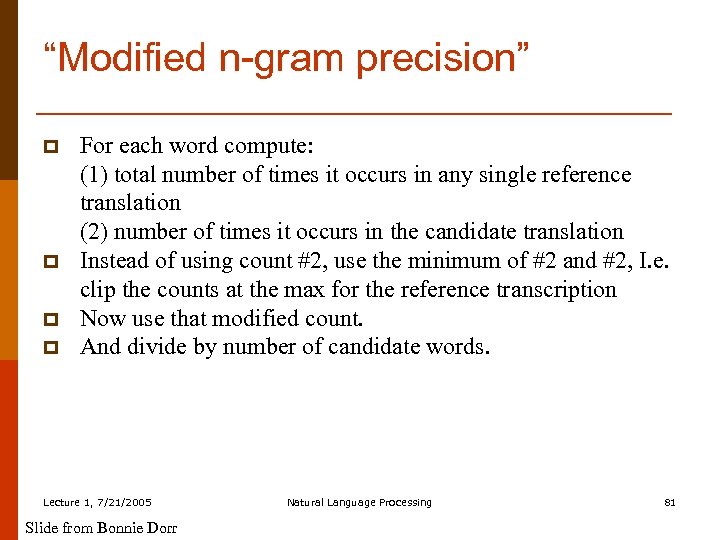

“Modified n-gram precision”
- For each word, compute:
  1. the maximum number of times it occurs in any single reference translation;
  2. the number of times it occurs in the candidate translation.
- Instead of using count #2, use the minimum of #1 and #2, i.e. clip the counts at the maximum for the reference translation.
- Now use that modified count, and divide by the number of candidate words (for n-grams, the number of candidate n-grams).

(Slide from Bonnie Dorr)
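
The clipping rule can be sketched in a few lines. This is an illustrative implementation, not a reference BLEU tool: it assumes lowercase word tokens with punctuation dropped, and returns the raw (clipped matches, candidate n-grams) pair so the fractions on the following slides can be read off directly.

```python
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    # Assumption: lowercase, keep only word characters (drops punctuation).
    return re.findall(r"\w+", text.lower())


def ngrams(tokens: list[str], n: int) -> list[tuple[str, ...]]:
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def modified_precision(candidate: str, references: list[str], n: int = 1) -> tuple[int, int]:
    """Return (clipped matches, number of candidate n-grams)."""
    cand_counts = Counter(ngrams(tokenize(candidate), n))  # count #2
    # Count #1: the most times each n-gram occurs in any single reference.
    max_ref = Counter()
    for ref in references:
        for gram, count in Counter(ngrams(tokenize(ref), n)).items():
            max_ref[gram] = max(max_ref[gram], count)
    # Clip count #2 at count #1.
    clipped = sum(min(count, max_ref[gram]) for gram, count in cand_counts.items())
    return clipped, sum(cand_counts.values())


print(modified_precision("the the the", ["The cat is on the mat"]))  # (2, 3)
```

Each reference “the” is used up after one match, so the cheating candidate drops from 3/3 to 2/3.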

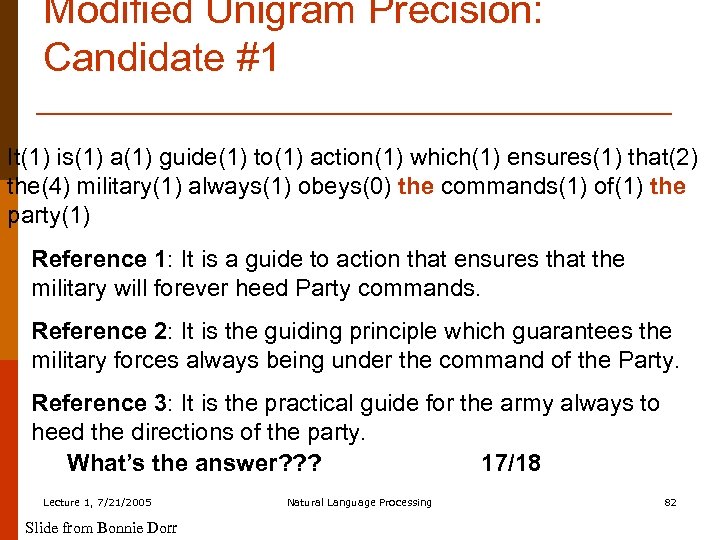

Modified Unigram Precision: Candidate #1

It(1) is(1) a(1) guide(1) to(1) action(1) which(1) ensures(1) that(2) the(4) military(1) always(1) obeys(0) the commands(1) of(1) the party(1)

- Reference 1: It is a guide to action that ensures that the military will forever heed Party commands.
- Reference 2: It is the guiding principle which guarantees the military forces always being under the command of the Party.
- Reference 3: It is the practical guide for the army always to heed the directions of the party.

What’s the answer? 17/18

(Slide from Bonnie Dorr)

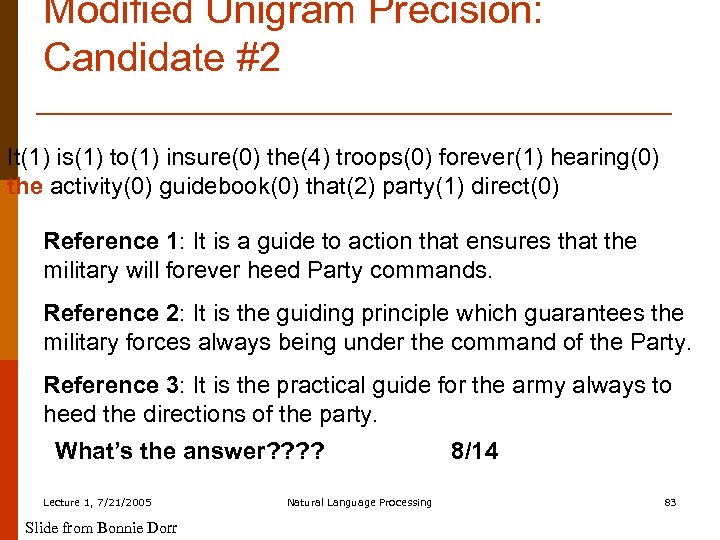

Modified Unigram Precision: Candidate #2

It(1) is(1) to(1) insure(0) the(4) troops(0) forever(1) hearing(0) the activity(0) guidebook(0) that(2) party(1) direct(0)

- Reference 1: It is a guide to action that ensures that the military will forever heed Party commands.
- Reference 2: It is the guiding principle which guarantees the military forces always being under the command of the Party.
- Reference 3: It is the practical guide for the army always to heed the directions of the party.

What’s the answer? 8/14

(Slide from Bonnie Dorr)
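
As a check on the two unigram answers, here is a self-contained sketch that recomputes them. It assumes lowercase word tokens with punctuation removed (so “Party.” matches “party”), and uses `Counter`’s `|=` union, which keeps the per-word maximum over the single references.

```python
import re
from collections import Counter


def ngram_counts(text, n):
    tokens = re.findall(r"\w+", text.lower())  # assumption: lowercase word tokens
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def modified_precision(candidate, references, n=1):
    cand = ngram_counts(candidate, n)
    max_ref = Counter()
    for ref in references:
        max_ref |= ngram_counts(ref, n)  # union keeps the max count per n-gram
    return sum(min(c, max_ref[g]) for g, c in cand.items()), sum(cand.values())


references = [
    "It is a guide to action that ensures that the military will forever heed Party commands.",
    "It is the guiding principle which guarantees the military forces always being under the command of the Party.",
    "It is the practical guide for the army always to heed the directions of the party.",
]
candidate_1 = "It is a guide to action which ensures that the military always obeys the commands of the party."
candidate_2 = "It is to insure the troops forever hearing the activity guidebook that party direct."
print(modified_precision(candidate_1, references))  # (17, 18)
print(modified_precision(candidate_2, references))  # (8, 14)
```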

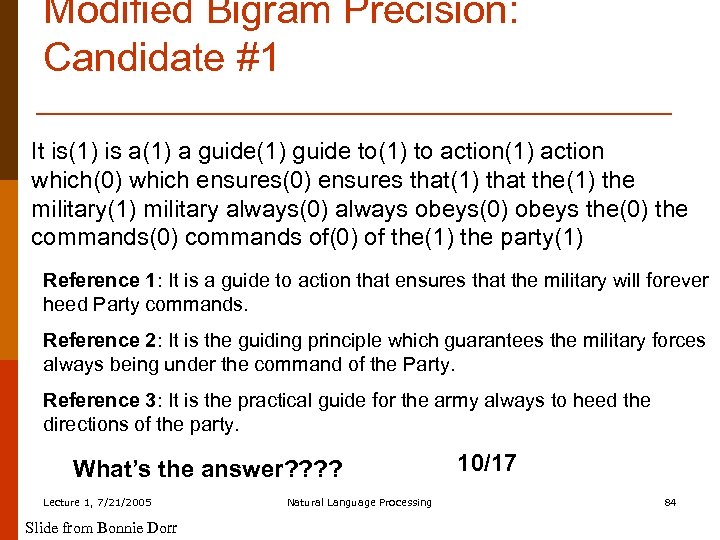

Modified Bigram Precision: Candidate #1

It is(1) is a(1) a guide(1) guide to(1) to action(1) action which(0) which ensures(0) ensures that(1) that the(1) the military(1) military always(0) always obeys(0) obeys the(0) the commands(0) commands of(0) of the(1) the party(1)

- Reference 1: It is a guide to action that ensures that the military will forever heed Party commands.
- Reference 2: It is the guiding principle which guarantees the military forces always being under the command of the Party.
- Reference 3: It is the practical guide for the army always to heed the directions of the party.

What’s the answer? 10/17

(Slide from Bonnie Dorr)

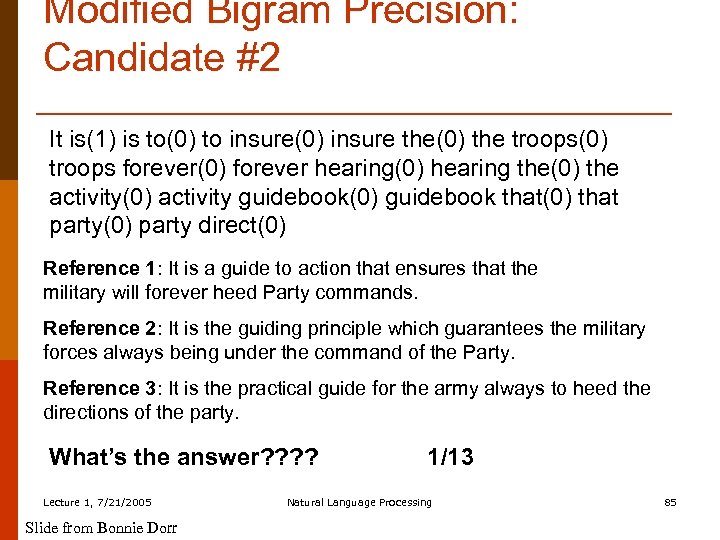

Modified Bigram Precision: Candidate #2

It is(1) is to(0) to insure(0) insure the(0) the troops(0) troops forever(0) forever hearing(0) hearing the(0) the activity(0) activity guidebook(0) guidebook that(0) that party(0) party direct(0)

- Reference 1: It is a guide to action that ensures that the military will forever heed Party commands.
- Reference 2: It is the guiding principle which guarantees the military forces always being under the command of the Party.
- Reference 3: It is the practical guide for the army always to heed the directions of the party.

What’s the answer? 1/13

(Slide from Bonnie Dorr)
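
The same clipped count with n = 2 reproduces both bigram answers. This is a sketch under the same tokenization assumption (lowercase, punctuation dropped); note that “the commands” does not match the reference bigram “the command”, since matching is on exact tokens.

```python
import re
from collections import Counter


def ngram_counts(text, n):
    tokens = re.findall(r"\w+", text.lower())  # assumption: lowercase word tokens
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def modified_precision(candidate, references, n):
    cand = ngram_counts(candidate, n)
    max_ref = Counter()
    for ref in references:
        max_ref |= ngram_counts(ref, n)  # per-bigram maximum over single references
    return sum(min(c, max_ref[g]) for g, c in cand.items()), sum(cand.values())


references = [
    "It is a guide to action that ensures that the military will forever heed Party commands.",
    "It is the guiding principle which guarantees the military forces always being under the command of the Party.",
    "It is the practical guide for the army always to heed the directions of the party.",
]
candidate_1 = "It is a guide to action which ensures that the military always obeys the commands of the party."
candidate_2 = "It is to insure the troops forever hearing the activity guidebook that party direct."
print(modified_precision(candidate_1, references, 2))  # (10, 17)
print(modified_precision(candidate_2, references, 2))  # (1, 13)
```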

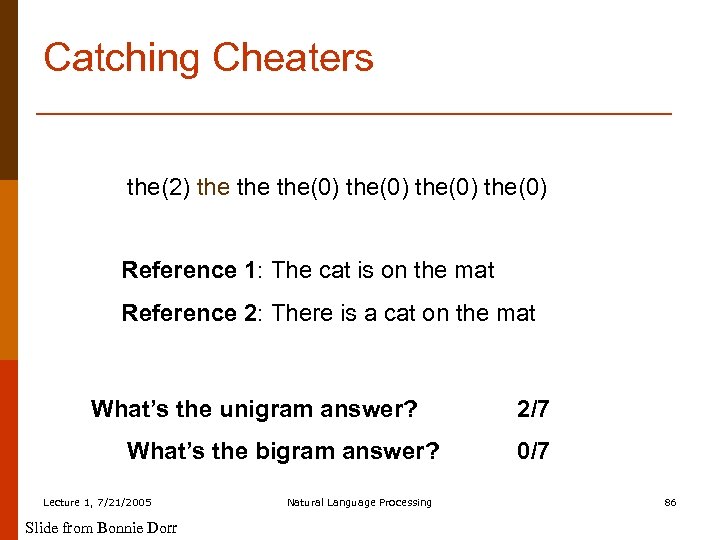

Catching Cheaters
- Candidate: the the the the the the the
- Reference 1: The cat is on the mat
- Reference 2: There is a cat on the mat
- Clipped counts: the(2), the the(0)
- What’s the unigram answer? 2/7
- What’s the bigram answer? 0/6

(Slide from Bonnie Dorr)
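
A short sketch confirming both answers, with the same lowercase tokenization assumption as before. Reference 1 contains “the” twice, so at most two of the seven candidate words are credited; a seven-word candidate has six bigrams, none of which appears in either reference.

```python
import re
from collections import Counter


def ngram_counts(text, n):
    tokens = re.findall(r"\w+", text.lower())  # assumption: lowercase word tokens
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def modified_precision(candidate, references, n):
    cand = ngram_counts(candidate, n)
    max_ref = Counter()
    for ref in references:
        max_ref |= ngram_counts(ref, n)  # per-n-gram maximum over single references
    return sum(min(c, max_ref[g]) for g, c in cand.items()), sum(cand.values())


candidate = "the the the the the the the"
references = ["The cat is on the mat", "There is a cat on the mat"]
print(modified_precision(candidate, references, 1))  # (2, 7)
print(modified_precision(candidate, references, 2))  # (0, 6)
```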

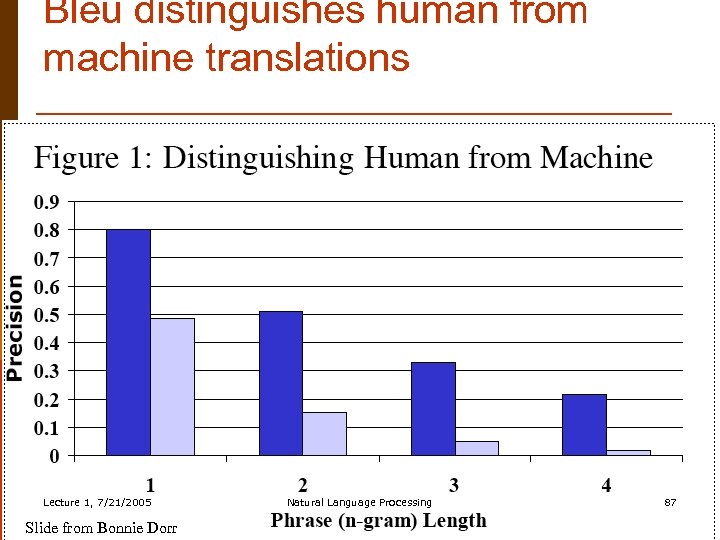

Bleu distinguishes human from machine translations

(Slide from Bonnie Dorr)

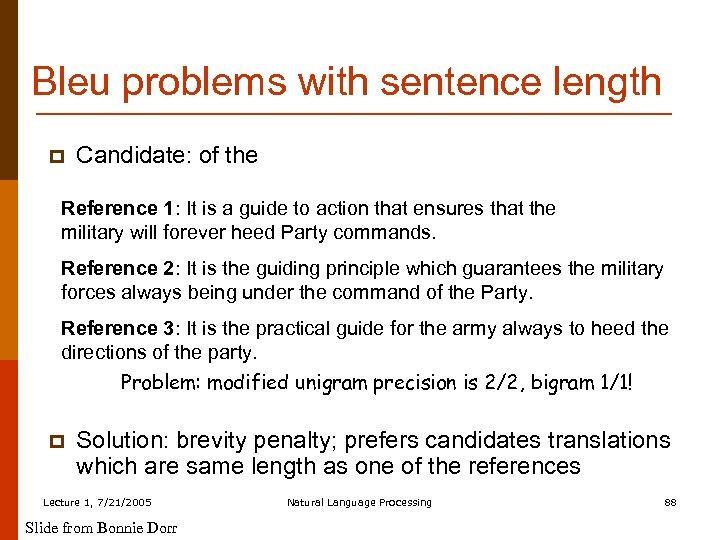

Bleu problems with sentence length
- Candidate: of the
- Reference 1: It is a guide to action that ensures that the military will forever heed Party commands.
- Reference 2: It is the guiding principle which guarantees the military forces always being under the command of the Party.
- Reference 3: It is the practical guide for the army always to heed the directions of the party.
- Problem: modified unigram precision is 2/2, and modified bigram precision is 1/1!
- Solution: a brevity penalty, which prefers candidate translations that are the same length as one of the references.

(Slide from Bonnie Dorr)
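
The slide names the brevity penalty but not its form. The sketch below uses the formulation from Papineni et al. (2002): BP = 1 if the candidate length c exceeds the reference length r, otherwise exp(1 − r/c), multiplied by the geometric mean of the modified precisions with uniform weights. Two choices here are assumptions: r is taken as the reference length closest to c, and tokens are lowercase words without punctuation. Because “of the” has no trigrams or 4-grams, the example is restricted to `max_n=2`.

```python
import math
import re
from collections import Counter


def tokenize(text):
    # Assumption: lowercase word tokens, punctuation dropped.
    return re.findall(r"\w+", text.lower())


def modified_precision(cand, refs, n):
    def grams(tokens):
        return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))

    cand_counts = grams(cand)
    if not cand_counts:
        return 0.0  # candidate too short to contain an n-gram of this order
    max_ref = Counter()
    for ref in refs:
        max_ref |= grams(ref)  # per-n-gram maximum over single references
    clipped = sum(min(c, max_ref[g]) for g, c in cand_counts.items())
    return clipped / sum(cand_counts.values())


def brevity_penalty(c, ref_lens):
    # Assumption: r is the reference length closest to c (ties go to the shorter one).
    r = min(ref_lens, key=lambda length: (abs(length - c), length))
    return 1.0 if c > r else math.exp(1 - r / c)


def bleu(candidate, references, max_n=4):
    cand = tokenize(candidate)
    refs = [tokenize(ref) for ref in references]
    precisions = [modified_precision(cand, refs, n) for n in range(1, max_n + 1)]
    if min(precisions) == 0:
        return 0.0  # the geometric mean is 0 if any precision is 0
    geo_mean = math.exp(sum(math.log(p) for p in precisions) / max_n)
    return brevity_penalty(len(cand), [len(ref) for ref in refs]) * geo_mean


references = [
    "It is a guide to action that ensures that the military will forever heed Party commands.",
    "It is the guiding principle which guarantees the military forces always being under the command of the Party.",
    "It is the practical guide for the army always to heed the directions of the party.",
]
candidate_1 = "It is a guide to action which ensures that the military always obeys the commands of the party."
print(bleu("of the", references, max_n=2))     # perfect precisions, crushed by BP
print(bleu(candidate_1, references, max_n=2))  # BP = 1 here
```

For “of the”, c = 2 and the closest reference has 16 words, so BP = exp(1 − 8) = exp(−7), well under 0.001, even though both precisions are 1. Candidate 1 has 18 words, matching Reference 2, so BP = 1 and the score is the square root of (17/18)(10/17), about 0.745.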

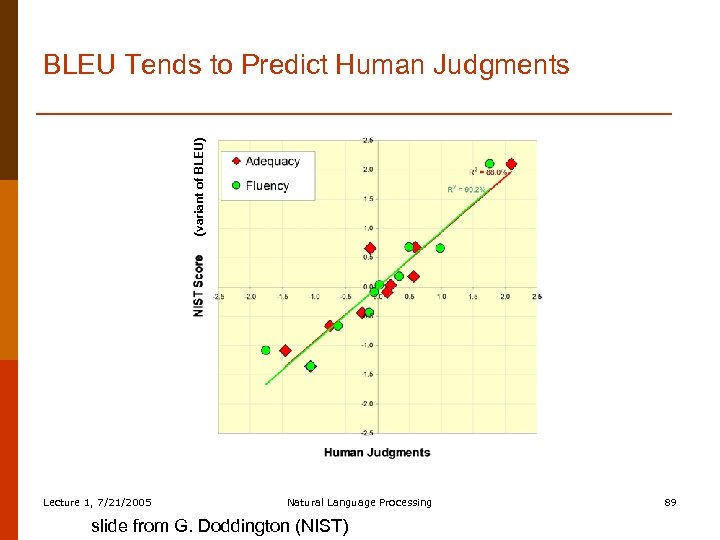

BLEU Tends to Predict Human Judgments (variant of BLEU)

(Slide from G. Doddington, NIST)

Summary
- Intro and a little history
- Language similarities and divergences
- Four main MT approaches
  - Transfer
  - Interlingua
  - Direct
  - Statistical
- Evaluation

Classes
- LINGUIST 139M/239M. Human and Machine Translation (Martin Kay)
- CS 224N. Natural Language Processing (Chris Manning)

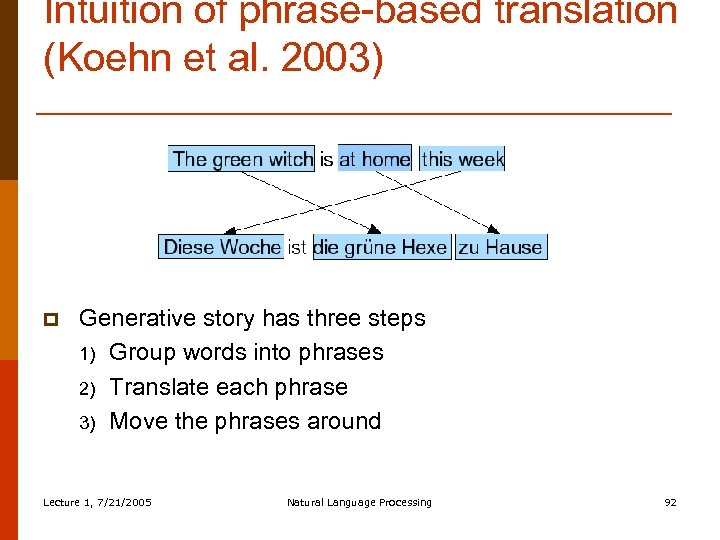

Intuition of phrase-based translation (Koehn et al. 2003)
- The generative story has three steps:
  1. Group words into phrases
  2. Translate each phrase
  3. Move the phrases around

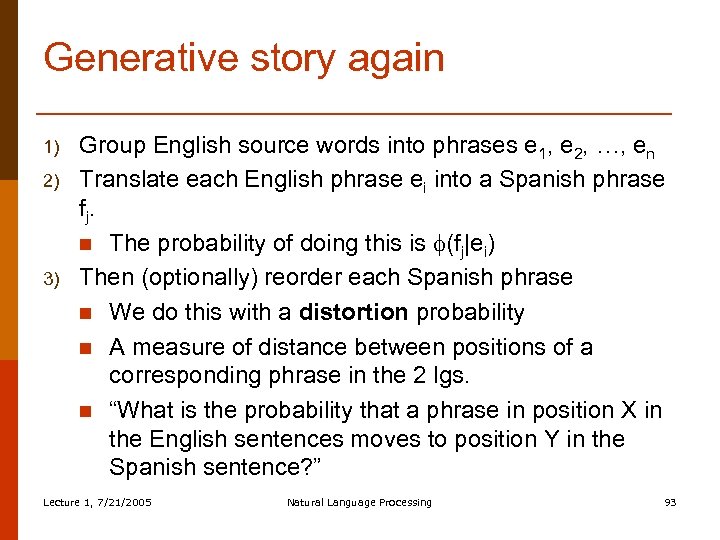

Generative story again
1. Group the English source words into phrases $e_1, e_2, \ldots, e_n$.
2. Translate each English phrase $e_i$ into a Spanish phrase $f_j$.
   - The probability of doing this is $\phi(f_j \mid e_i)$.
3. Then (optionally) reorder each Spanish phrase.
   - We do this with a distortion probability:
   - a measure of the distance between the positions of a corresponding phrase in the two languages.
   - “What is the probability that a phrase in position X in the English sentence moves to position Y in the Spanish sentence?”

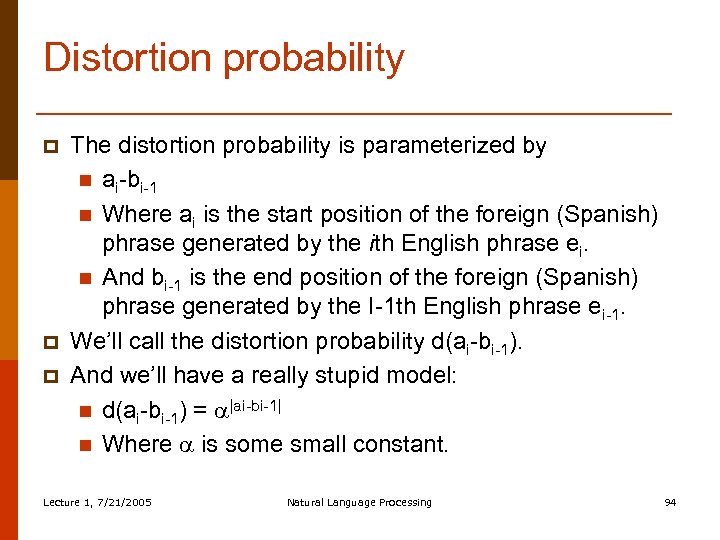

Distortion probability
- The distortion probability is parameterized by $a_i - b_{i-1}$, where
  - $a_i$ is the start position of the foreign (Spanish) phrase generated by the $i$th English phrase $e_i$, and
  - $b_{i-1}$ is the end position of the foreign (Spanish) phrase generated by the $(i-1)$th English phrase $e_{i-1}$.
- We’ll call the distortion probability $d(a_i - b_{i-1})$.
- And we’ll have a really stupid model:
  - $d(a_i - b_{i-1}) = \alpha^{|a_i - b_{i-1}|}$
  - where $\alpha$ is some small constant.
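
A minimal sketch of this distortion model. The span representation is an assumption for illustration: each English phrase, in English order, is paired with the 1-based (start, end) positions of its Spanish phrase, and b_0 is taken to be 0. The value α = 0.5 is arbitrary.

```python
def distortion(a_i: int, b_prev: int, alpha: float) -> float:
    """The slide's model: d(a_i - b_{i-1}) = alpha ** |a_i - b_{i-1}|."""
    return alpha ** abs(a_i - b_prev)


def total_distortion(foreign_spans, alpha):
    """foreign_spans: (start, end) of the Spanish phrase for each English
    phrase, in English order, 1-based. Assumes b_0 = 0."""
    prob, b_prev = 1.0, 0
    for start, end in foreign_spans:
        prob *= distortion(start, b_prev, alpha)
        b_prev = end  # b_i is the end of this phrase's Spanish span
    return prob


print(total_distortion([(1, 1), (2, 3), (4, 4)], alpha=0.5))  # 0.125 (monotone order)
print(total_distortion([(3, 4), (1, 2)], alpha=0.5))          # 0.015625 (two phrases swapped)
```

With this exact form, even a phrase placed right after its predecessor pays one factor of α, since a_i − b_{i−1} = 1; larger jumps pay exponentially more.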

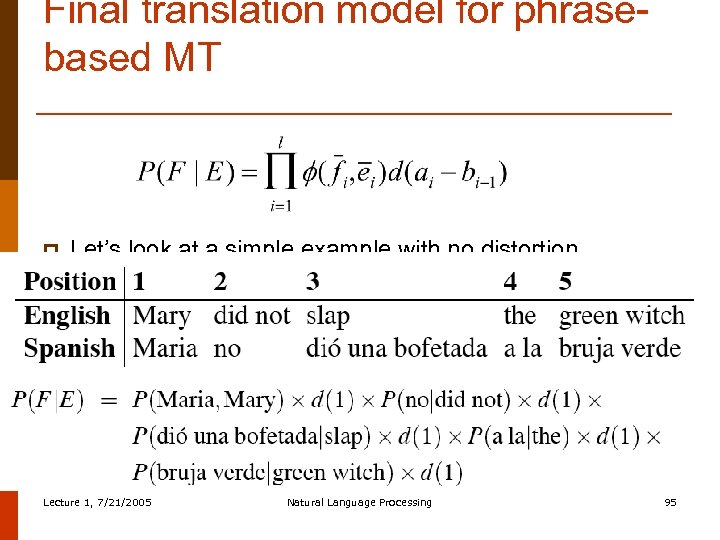

Final translation model for phrase-based MT
- Let’s look at a simple example with no distortion.
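
Combining the two pieces defined above gives the usual phrase-based form (as in Koehn et al. 2003), $P(F \mid E) = \prod_{i} \phi(f_i \mid e_i)\, d(a_i - b_{i-1})$. The sketch below scores one fixed segmentation. The sentence pair and every φ value are hypothetical, invented only for illustration; passing `alpha=None` drops the distortion term, which corresponds to the “no distortion” case.

```python
def phrase_translation_prob(segmentation, phi, alpha=None):
    """P(F|E) = prod_i phi(f_i | e_i) * d(a_i - b_{i-1}).
    segmentation: (english_phrase, spanish_phrase, (start, end)) in English
    order, with 1-based Spanish spans. alpha=None omits distortion."""
    prob, b_prev = 1.0, 0  # assumption: b_0 = 0
    for e, f, (start, end) in segmentation:
        prob *= phi[(f, e)]  # phi keyed as (spanish, english) = phi(f | e)
        if alpha is not None:
            prob *= alpha ** abs(start - b_prev)
        b_prev = end
    return prob


# Hypothetical phrase probabilities, invented for illustration only.
phi = {
    ("Maria", "Mary"): 0.9,
    ("no", "did not"): 0.8,
    ("dió una bofetada", "slap"): 0.5,
    ("a la", "the"): 0.3,
    ("bruja verde", "green witch"): 0.6,
}
segmentation = [
    ("Mary", "Maria", (1, 1)),
    ("did not", "no", (2, 2)),
    ("slap", "dió una bofetada", (3, 5)),
    ("the", "a la", (6, 7)),
    ("green witch", "bruja verde", (8, 9)),
]
print(phrase_translation_prob(segmentation, phi))             # product of the five phi values
print(phrase_translation_prob(segmentation, phi, alpha=0.5))  # times alpha**1 per phrase
```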

Phrase-based MT
- Language model P(E)
- Translation model P(F|E)
  - Model
  - How to train the model
- Decoder: finding the sentence E that is most probable
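
The three components fit together as a noisy-channel choice, $\hat{E} = \arg\max_E P(F \mid E)\, P(E)$. The sketch below only illustrates that scoring rule over a hand-written candidate list; the candidates and all probabilities are hypothetical. A real decoder does not enumerate whole sentences but searches over segmentations and orderings (for example with beam search).

```python
import math


def decode(candidates, tm_prob, lm_prob):
    """Noisy-channel choice: argmax_E P(F|E) * P(E), scored in log space."""
    return max(candidates, key=lambda e: math.log(tm_prob[e]) + math.log(lm_prob[e]))


# Hypothetical scores for two English candidates of one Spanish input.
candidates = ["Mary did not slap the green witch", "Mary did not slap the witch green"]
tm_prob = {candidates[0]: 0.0648, candidates[1]: 0.0648}  # P(F|E): a tie, by construction
lm_prob = {candidates[0]: 1e-6, candidates[1]: 1e-9}      # P(E): prefers fluent word order
print(decode(candidates, tm_prob, lm_prob))  # Mary did not slap the green witch
```

When the translation model cannot separate the candidates, the language model decides.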

Training P(F|E)
- What we mainly need to train is $\phi(f_j \mid e_i)$.
- Suppose we had a large bilingual training corpus:
  - a bitext,
  - in which each English sentence is paired with a Spanish sentence.
- And suppose we knew exactly which phrase in Spanish was the translation of which phrase in the English.
  - We call this a phrase alignment.
- If we had this, we could just count and divide.
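
Count-and-divide here means the relative-frequency estimate $\phi(f \mid e) = \mathrm{count}(f, e) / \sum_{f'} \mathrm{count}(f', e)$. A minimal sketch, assuming the phrase-aligned bitext has already been flattened into a list of (Spanish phrase, English phrase) pairs; the toy pairs are hypothetical.

```python
from collections import Counter


def estimate_phi(phrase_pairs):
    """Relative frequency: phi(f | e) = count(f, e) / sum over f' of count(f', e).
    phrase_pairs: (spanish_phrase, english_phrase) tuples from a phrase-aligned bitext."""
    pair_counts = Counter(phrase_pairs)
    english_counts = Counter(e for _, e in phrase_pairs)
    return {(f, e): c / english_counts[e] for (f, e), c in pair_counts.items()}


# Hypothetical phrase-aligned data, invented for illustration.
pairs = [
    ("bruja verde", "green witch"),
    ("bruja verde", "green witch"),
    ("la bruja verde", "green witch"),
    ("no", "did not"),
]
phi = estimate_phi(pairs)
print(phi[("bruja verde", "green witch")])  # 0.6666666666666666
```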

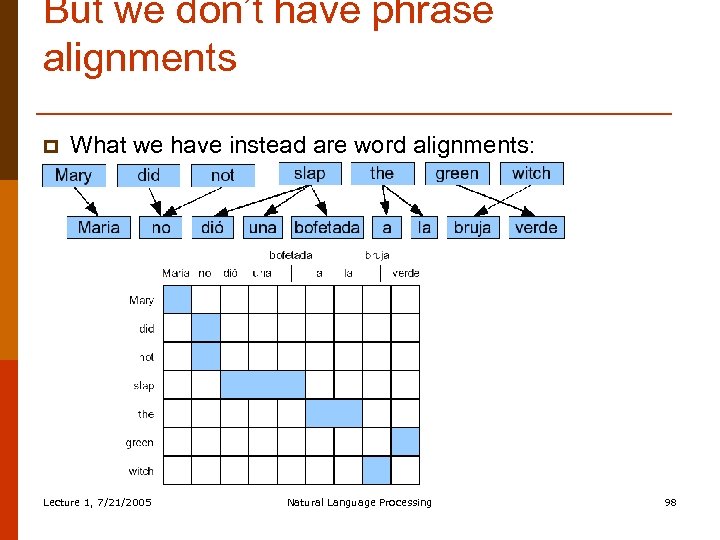

But we don’t have phrase alignments
- What we have instead are word alignments:

Getting phrase alignments
- To get phrase alignments:
  1. We first get word alignments.
  2. Then we “symmetrize” the word alignments into phrase alignments.
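
One common way to realize these two steps is sketched below, under simplifying assumptions. Word alignments are run in both directions and combined, here only by plain intersection and union; widely used systems instead start from the intersection and grow toward the union with heuristics such as grow-diag-final. Phrase pairs are then read off as spans consistent with the combined alignment: no word inside the foreign span may be linked to an English word outside the English span. Unaligned words are not used to extend spans, and the toy alignments are hypothetical.

```python
def symmetrize(e2f: set[tuple[int, int]], f2e: set[tuple[int, int]]):
    """Both inputs hold (english_pos, foreign_pos) links; return (intersection, union)."""
    return e2f & f2e, e2f | f2e


def extract_phrases(alignment, e_len, max_len=3):
    """Return (e_start, e_end, f_start, f_end) spans, 0-based and inclusive,
    that are consistent with the word alignment."""
    spans = []
    for e_start in range(e_len):
        for e_end in range(e_start, min(e_len, e_start + max_len)):
            f_points = [f for e, f in alignment if e_start <= e <= e_end]
            if not f_points:
                continue  # simplification: skip English spans with no links
            f_start, f_end = min(f_points), max(f_points)
            # Reject if a foreign word in the span links outside the English span.
            if any(f_start <= f <= f_end and not e_start <= e <= e_end for e, f in alignment):
                continue
            spans.append((e_start, e_end, f_start, f_end))
    return spans


english = ["the", "green", "witch"]
spanish = ["la", "bruja", "verde"]
e2f = {(0, 0), (1, 2), (2, 1)}  # hypothetical English-to-Spanish alignment
f2e = {(0, 0), (2, 1)}          # hypothetical Spanish-to-English alignment (missed green-verde)
inter, union = symmetrize(e2f, f2e)
for es, ee, fs, fe in extract_phrases(union, len(english)):
    print(" ".join(english[es:ee + 1]), "<->", " ".join(spanish[fs:fe + 1]))
```

“the green” is rejected because its Spanish span “la bruja verde” contains “bruja”, which is linked to “witch” outside the English span.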
