36587b260855c2a9c41c3e1dd350e72a.ppt

- Number of slides: 45

The Grid technology and infrastructure in Italy: present and future. Cristina Vistoli, INFN CNAF, Bologna, Italy – n° 1

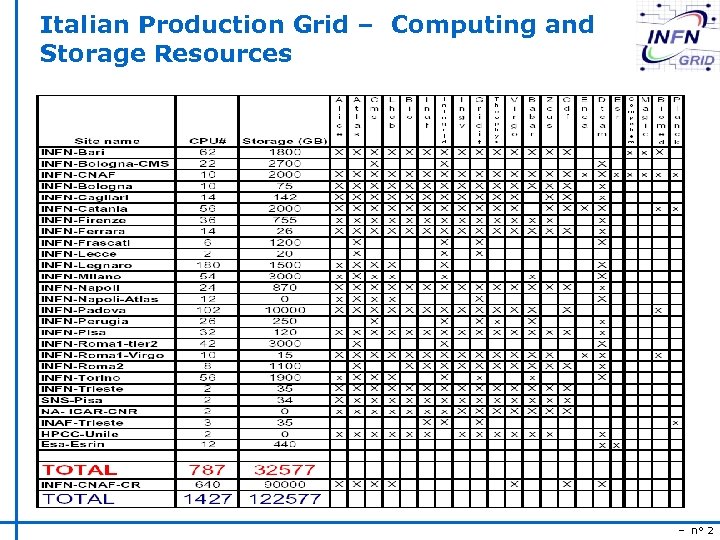

Italian Production Grid – Computing and Storage Resources – n° 2

Italian Grid Production Services – n° 3

- Resource Brokers:
  - EGEE/LCG infrastructure open to all EGEE VOs (LHC experiments, Biomed, MAGIC, Planck, Compchem, ESR): egee-rb-01.cnaf.infn.it; grid008g.cnaf.infn.it (DAG enabled)
  - ATLAS VO: egee-rb-02.cnaf.infn.it, egee-rb-05.cnaf.infn.it, egee-rb-06
  - CMS VO: egee-rb-04.cnaf.infn.it, egee-rb-06.cnaf.infn.it
- Replica Location Service for babar, virgo, cdf, planck and other Italian VOs:
  - datatag2.cnaf.infn.it
  - To be replaced by LFC: lfcserver.cnaf.infn.it
- VOMS server:
  - testbed008.cnaf.infn.it
  - VOs: infngrid, zeus, cdf, planck, compchem
- LDAP server for national VOs (bio, inaf, ingv, gridit, theophys, virgo):
  - grid-vo.cnaf.infn.it

Italian Grid Services – n° 4

- MyProxy servers: testbed013.cnaf.infn.it
- User Interfaces:
  - UIs are not core services; a list of Italian UIs is nevertheless available at http://grid-it.cnaf.infn.it/index.php?userinterface&type=1
- Monitoring: GridICE servers for the EGEE/LCG production infrastructure, the Italian production infrastructure, ATLAS and CMS:
  - http://edt002.cnaf.infn.it:50080/gridice/site.php
  - http://gridice2.cnaf.infn.it:50080/gridice/site.php
  - http://egee005.infn.it:50080/gridice/site.php
  - http://egee004.cnaf.infn.it:50080/gridice/site.php

INFN-GRID Middleware release – n° 5

- Based on LCG
- Several VOs added (about 15)
- Authorization via VOMS + LCAS/LCMAPS
- MPI configuration
- Cert queue for site functional tests
- GridICE includes WN monitoring
- AFS support on the WNs
- DAG job support
- DGAS accounting system

INFN Production Grid Support – n° 6

The access point for users and site managers at INFN is the INFN Production Grid website: http://grid-it.cnaf.infn.it

There you can find pointers to:

- Documentation (prerequisites, middleware installation, upgrade, testing, using the Grid, current applications and VOs, etc.)
- A software repository
- Monitoring of resources and services (GridICE)
- Tools for the CMT (Central Management Team), site managers and supporters (calendar/downtime manager)
- A trouble ticketing system for problems, advisories and suggestions
- A knowledge base to complement the documentation

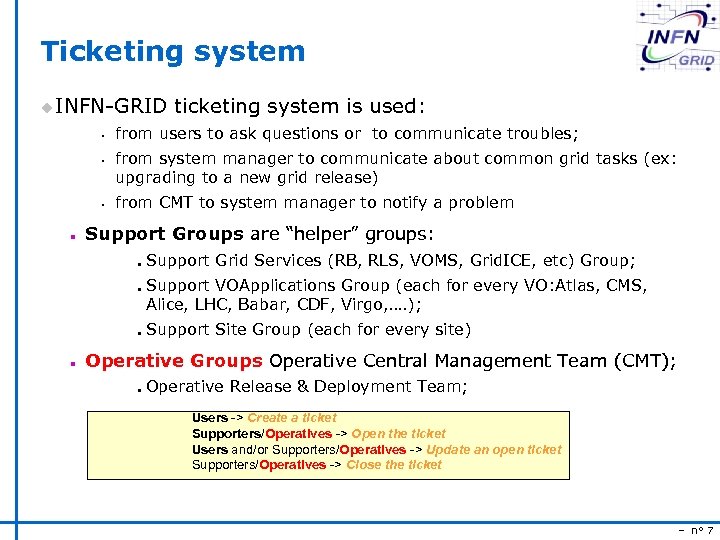

Ticketing system – n° 7

- The INFN-GRID ticketing system is used:
  - by users, to ask questions or report problems;
  - by system managers, to communicate about common grid tasks (e.g. upgrading to a new grid release);
  - by the CMT, to notify system managers of a problem.
- Support groups are "helper" groups:
  - Support Grid Services group (RB, RLS, VOMS, GridICE, etc.);
  - Support VO Applications groups (one for each VO: Atlas, CMS, Alice, LHC, Babar, CDF, Virgo, ...);
  - Support Site groups (one for each site).
- Operative groups:
  - Operative Central Management Team (CMT);
  - Operative Release & Deployment Team.
- Users -> create a ticket
- Supporters/Operatives -> open the ticket
- Users and/or Supporters/Operatives -> update an open ticket
- Supporters/Operatives -> close the ticket
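The create / open / update / close sequence on this slide can be read as a small state machine with role checks. The following is only a sketch under that reading: the class names, state names and the `apply` method are hypothetical and are not taken from the actual INFN-GRID ticketing software.

```python
from enum import Enum


class Role(Enum):
    USER = "user"
    SUPPORTER = "supporter/operative"


class State(Enum):
    CREATED = "created"
    OPEN = "open"
    CLOSED = "closed"


# (action, current state) -> (roles allowed, next state), following the slide.
TRANSITIONS = {
    ("open", State.CREATED): ({Role.SUPPORTER}, State.OPEN),
    ("update", State.OPEN): ({Role.USER, Role.SUPPORTER}, State.OPEN),
    ("close", State.OPEN): ({Role.SUPPORTER}, State.CLOSED),
}


class Ticket:
    """Hypothetical ticket object; not the real ticketing system's data model."""

    def __init__(self, creator_role, summary):
        # Per the slide, tickets are created by users.
        if creator_role is not Role.USER:
            raise PermissionError("only users create tickets")
        self.summary = summary
        self.state = State.CREATED
        self.history = ["create"]

    def apply(self, action, role):
        """Perform an action if it is valid in the current state for this role."""
        key = (action, self.state)
        if key not in TRANSITIONS:
            raise ValueError(f"cannot {action} a ticket in state {self.state.value}")
        allowed, next_state = TRANSITIONS[key]
        if role not in allowed:
            raise PermissionError(f"{role.value} may not {action} a ticket")
        self.state = next_state
        self.history.append(action)
        return self.state
```

Keeping the rules in a single table makes it easy to see that only supporters or operatives can open and close a ticket, while both sides can update it once it is open.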

Operations activities – n° 8

- Operation of the grid infrastructure:
  - Shifts (Monday to Friday, 08:00-20:00): about 20 people from different sites monitor and test the status of the grid resources (STF, others), certify the Italian sites/resources, and provide VO and user support
  - Management of the grid services (general purpose and VO specific)
  - Participation in CIC-on-Duty shifts
- Support (operations, user and VO):
  - The regional ticketing system, based on OneOrZero, is going to be replaced by a new system (xHelp + Xoops) interfaced to GGUS
  - Support groups are defined and operational
  - Participation in Support-on-Duty shifts
- Release and installation:
  - The INFN-GRID release is currently based on LCG. INFN-GRID-2.4.0 contains DAG, DGAS, VOMS, support for the AFS client and GridICE monitoring for the WNs; it is now in the deployment phase on the Italian grid infrastructure
  - Installation and configuration scripts (YAIM) are provided
- Grid Install working group
- Fabric and software management WG

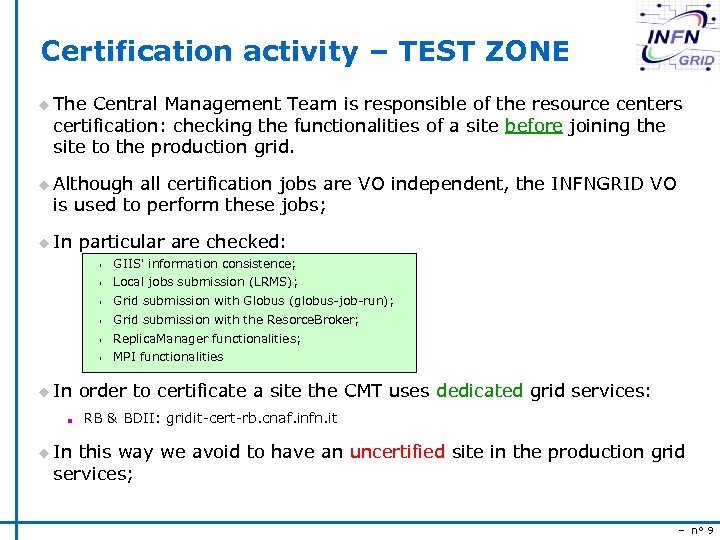

Certification activity – TEST ZONE – n° 9

- The Central Management Team is responsible for certifying the resource centers: it checks the functionality of a site before the site joins the production grid.
- Although all certification jobs are VO independent, the INFNGRID VO is used to run them.
- In particular, the following are checked:
  - consistency of the GIIS information;
  - local job submission (LRMS);
  - Grid submission with Globus (globus-job-run);
  - Grid submission with the ResourceBroker;
  - ReplicaManager functionality;
  - MPI functionality.
- To certify a site, the CMT uses dedicated grid services:
  - RB & BDII: gridit-cert-rb.cnaf.infn.it
- In this way an uncertified site never appears in the production grid services.

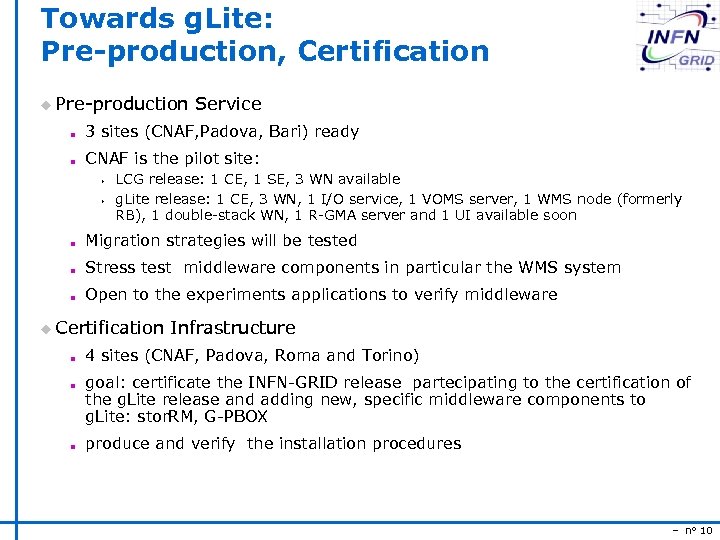

Towards gLite: Pre-production, Certification – n° 10

- Pre-production Service
  - 3 sites (CNAF, Padova, Bari) ready
  - CNAF is the pilot site:
    - LCG release: 1 CE, 1 SE, 3 WN available
    - gLite release: 1 CE, 3 WN, 1 I/O service, 1 VOMS server, 1 WMS node (formerly RB), 1 double-stack WN, 1 R-GMA server and 1 UI available soon
  - Migration strategies will be tested
  - Stress tests of the middleware components, in particular the WMS
  - Open to the experiments' applications to verify the middleware
- Certification Infrastructure
  - 4 sites (CNAF, Padova, Roma and Torino)
  - Goal: certify the INFN-GRID release
  - Participating in the certification of the gLite release and adding new, specific middleware components to gLite: StoRM, G-PBox
  - Produce and verify the installation procedures

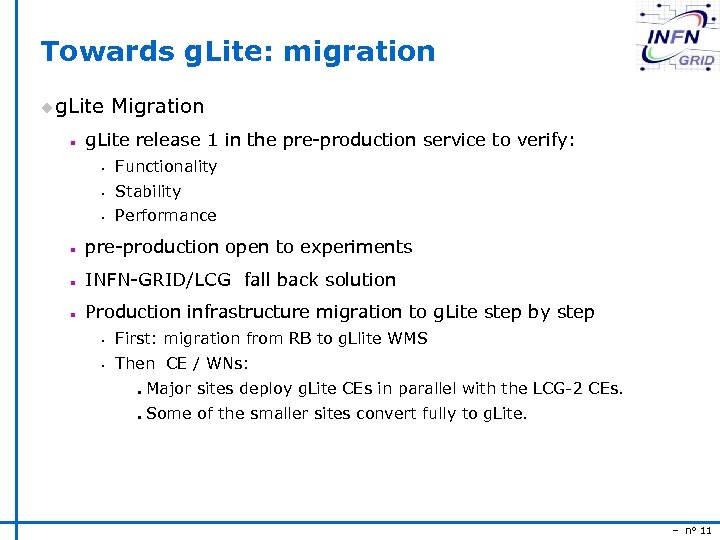

Towards gLite: migration – n° 11

- gLite migration
  - gLite release 1 in the pre-production service to verify:
    - Functionality
    - Stability
    - Performance
  - Pre-production open to experiments
  - INFN-GRID/LCG fall-back solution
  - Production infrastructure migration to gLite, step by step:
    - First: migration from RB to gLite WMS
    - Then CE / WNs:
      - Major sites deploy gLite CEs in parallel with the LCG-2 CEs.
      - Some of the smaller sites convert fully to gLite.

Current activities – n° 12

- Production service quality and stability improvements
  - Improve monitoring systems: GridICE with reactive alarms and notification, new release
  - Site isolation: a simple mechanism to remove sites is needed
  - Improve the grid management and monitoring structure
- INFN-GRID support infrastructure:
  - VO support (experiments)
  - Site administrators
  - Grid services support
- Accounting and policy system management

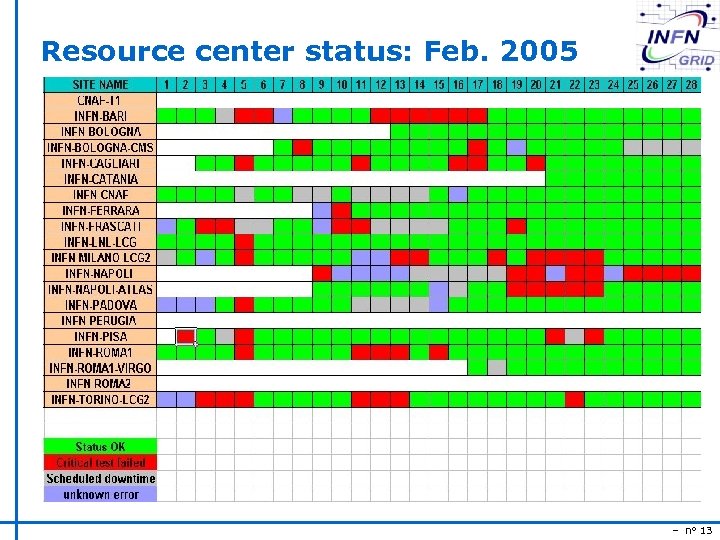

Resource center status: Feb. 2005 – n° 13

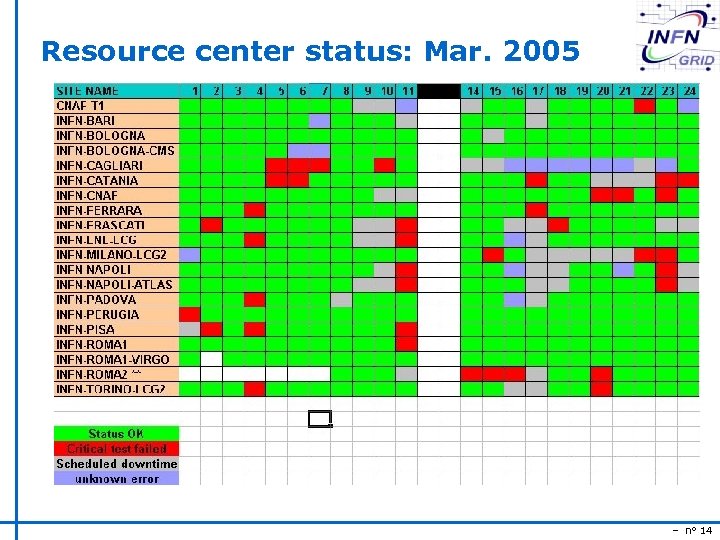

Resource center status: Mar. 2005 – n° 14

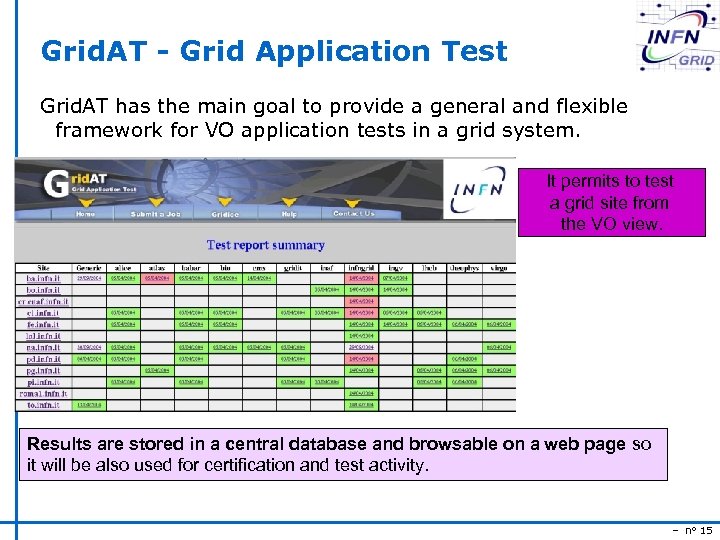

GridAT - Grid Application Test – n° 15

The main goal of GridAT is to provide a general and flexible framework for VO application tests in a grid system. It allows a grid site to be tested from the VO's point of view. Results are stored in a central database and can be browsed on a web page, so it will also be used for certification and test activity.

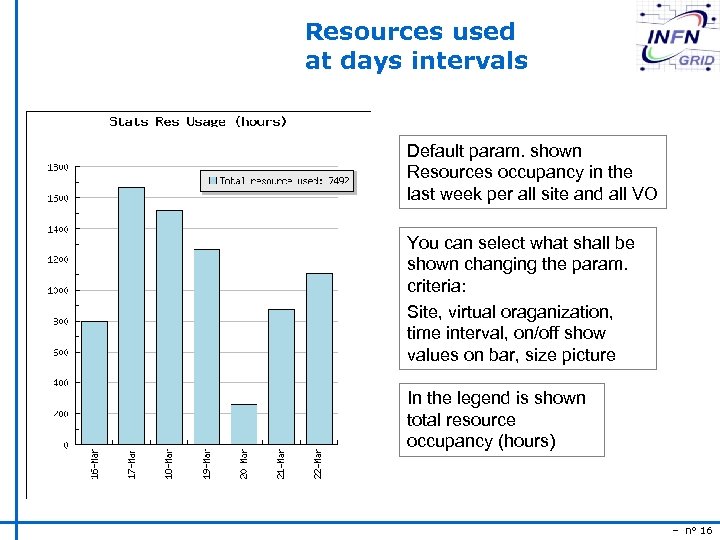

Resources used at day intervals – n° 16

By default, the plot shows resource occupancy in the last week for all sites and all VOs. You can select what is shown by changing the parameters: site, virtual organization, time interval, show values on bars (on/off), picture size. The legend shows the total resource occupancy (hours).

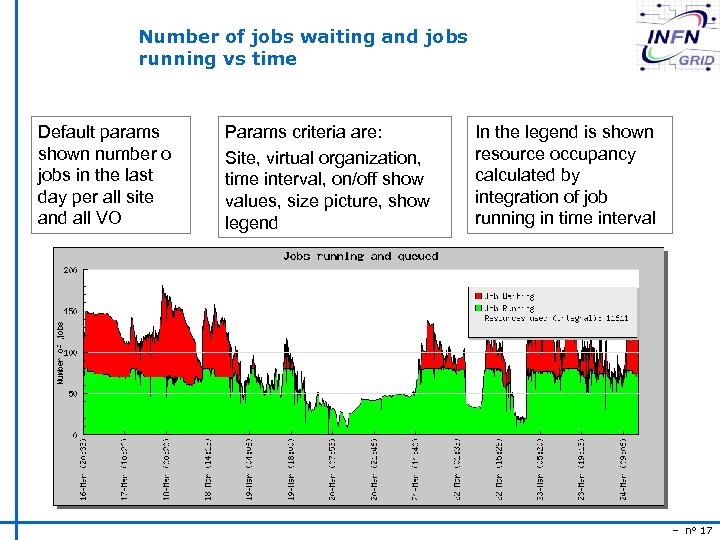

Number of jobs waiting and jobs running vs time – n° 17

By default, the plot shows the number of jobs in the last day for all sites and all VOs. The parameters are: site, virtual organization, time interval, show values (on/off), picture size, show legend. The legend shows the resource occupancy, calculated by integrating the number of running jobs over the time interval.

Number of jobs; CPU hours; used memory (average job size); wall time hours – n° 18

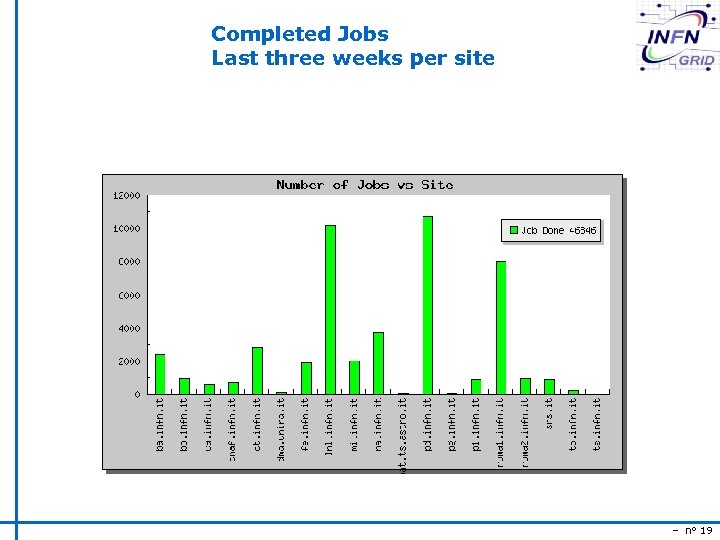

Completed Jobs Last three weeks per site – n° 19

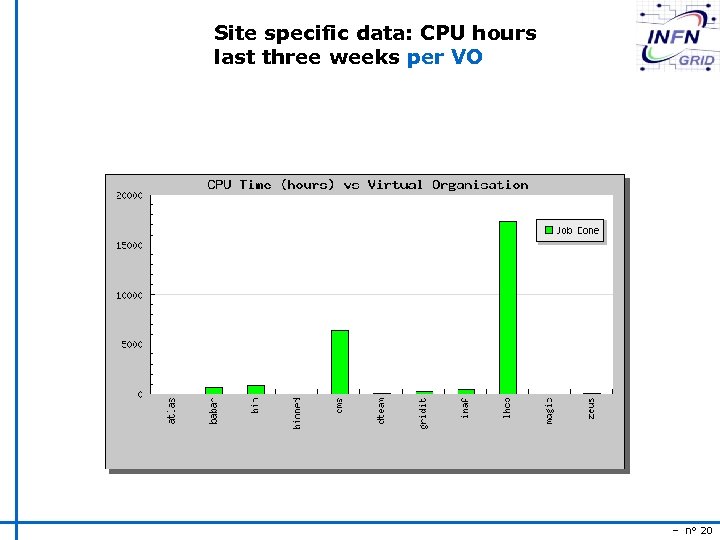

Site specific data: CPU hours last three weeks per VO – n° 20

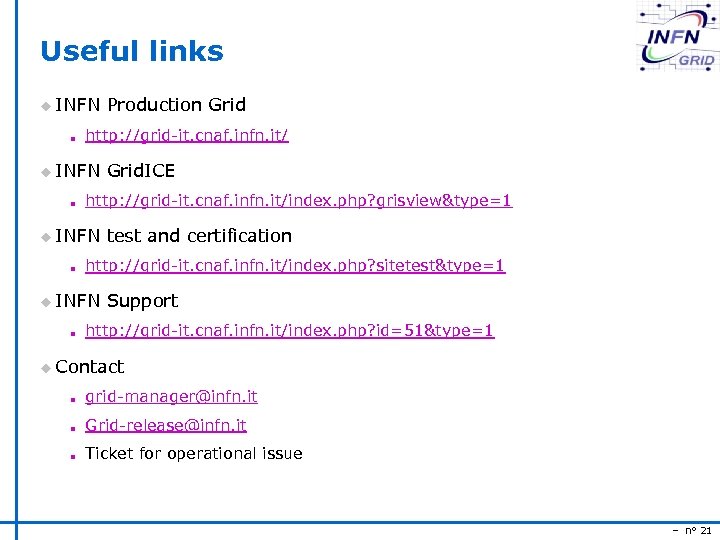

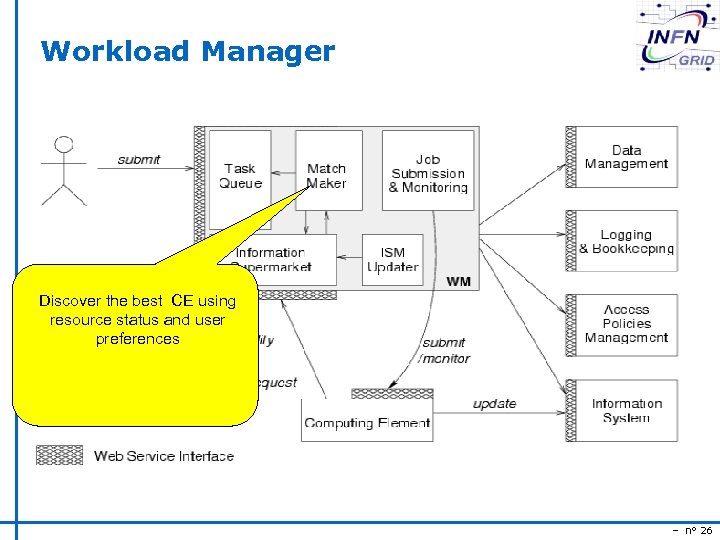

Useful links – n° 21

- INFN Production Grid
  - http://grid-it.cnaf.infn.it/
- INFN GridICE
  - http://grid-it.cnaf.infn.it/index.php?grisview&type=1
- INFN test and certification
  - http://grid-it.cnaf.infn.it/index.php?sitetest&type=1
- INFN Support
  - http://grid-it.cnaf.infn.it/index.php?id=51&type=1
- Contact
  - grid-manager@infn.it
  - Grid-release@infn.it
  - Ticket for operational issues

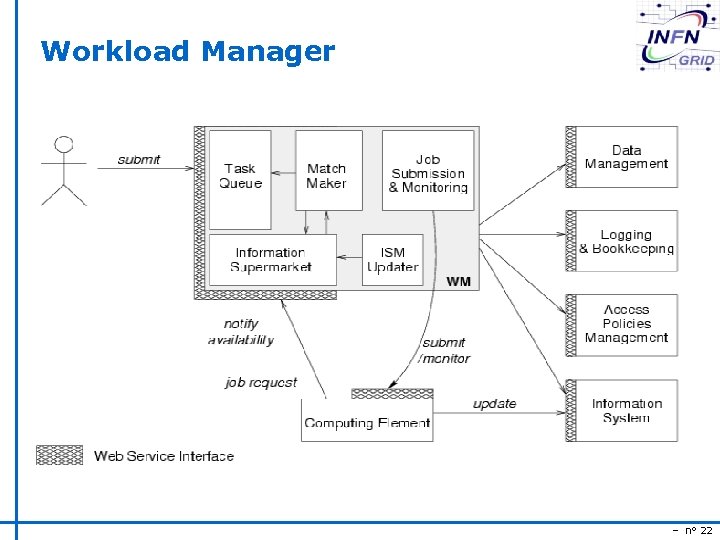

Workload Manager – n° 22

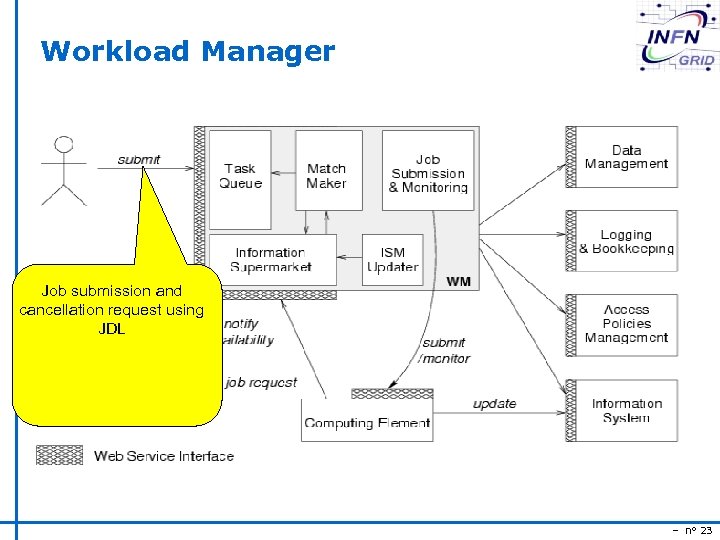

Workload Manager: job submission and cancellation requests using JDL – n° 23
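The slides do not show a JDL file, so the following is only an illustrative sketch of what a submission request might carry. It renders a small job description as JDL-style `Attribute = value;` lines. The attribute names (`Executable`, `StdOutput`, `StdError`, `OutputSandbox`) follow common EDG/LCG JDL usage; the helper functions `jdl_value` and `to_jdl` are hypothetical and not part of any middleware.

```python
def jdl_value(value):
    """Render a Python value as a JDL literal (strings and lists of strings only)."""
    if isinstance(value, str):
        return '"' + value.replace('"', '\\"') + '"'
    if isinstance(value, (list, tuple)):
        return "{" + ", ".join(jdl_value(v) for v in value) + "}"
    raise TypeError(f"unsupported JDL value: {value!r}")


def to_jdl(attributes):
    """Render a dict of attributes as one 'Name = value;' line per attribute."""
    return "\n".join(f"{name} = {jdl_value(value)};" for name, value in attributes.items())


# Hypothetical job: run /bin/hostname and bring back its output files.
job = {
    "Executable": "/bin/hostname",
    "StdOutput": "std.out",
    "StdError": "std.err",
    "OutputSandbox": ["std.out", "std.err"],
}
jdl_text = to_jdl(job)
print(jdl_text)
```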

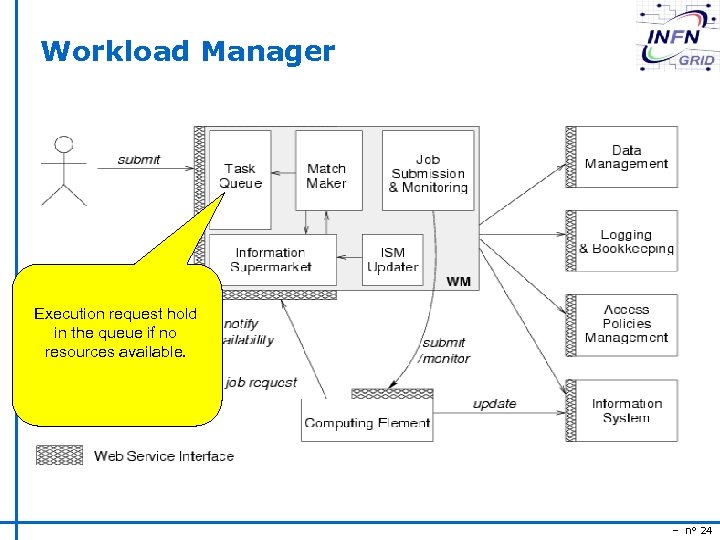

Workload Manager: execution requests are held in the queue if no resources are available. – n° 24

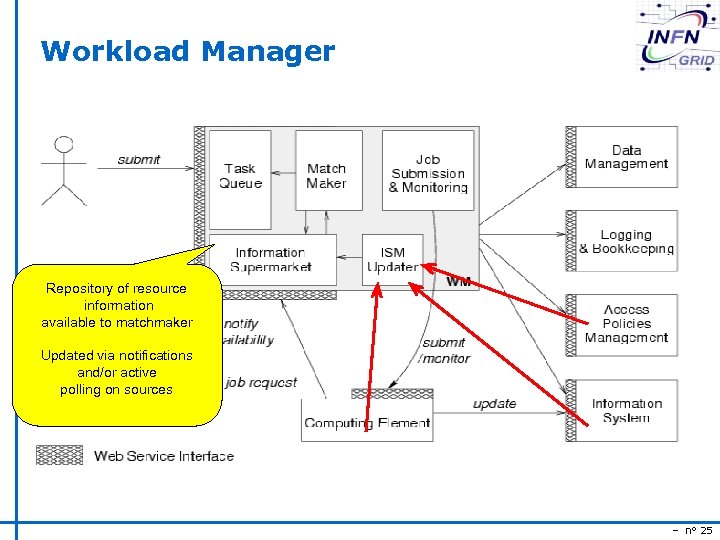

Workload Manager: repository of resource information available to the matchmaker, updated via notifications and/or active polling on sources – n° 25

Workload Manager: discover the best CE using resource status and user preferences – n° 26
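Choosing the best CE from resource status and user preferences can be pictured as a filter followed by a ranking. The sketch below illustrates only that idea and is not the Workload Manager's actual algorithm: the CE records, their field names and the `match` function are all hypothetical.

```python
def match(ces, requirements, rank):
    """Return the CE satisfying `requirements` with the highest `rank`, or None."""
    candidates = [ce for ce in ces if requirements(ce)]
    if not candidates:
        # No suitable resource: the caller may keep the request queued.
        return None
    return max(candidates, key=rank)


# Hypothetical snapshot of resource status, as a repository might cache it.
ces = [
    {"id": "ce-a", "free_cpus": 0, "waiting_jobs": 12, "vos": {"atlas", "cms"}},
    {"id": "ce-b", "free_cpus": 8, "waiting_jobs": 1, "vos": {"cms"}},
    {"id": "ce-c", "free_cpus": 20, "waiting_jobs": 0, "vos": {"atlas"}},
]

# User preferences: the CE must serve the cms VO and have a free CPU;
# among those, prefer the one with the most free CPUs and the shortest queue.
best = match(
    ces,
    requirements=lambda ce: "cms" in ce["vos"] and ce["free_cpus"] > 0,
    rank=lambda ce: ce["free_cpus"] - ce["waiting_jobs"],
)
```

Passing the requirement and the rank as functions mirrors the split between what the user needs and what the user prefers.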

State of the art a short while ago – n° 27

- Globus GRAM was the de-facto standard for resource access
- Various job submission systems built on top of it
  - E.g. Condor-G
- Used by most Grid projects worldwide
  - E.g. LCG
- Allowed interoperability among different Grid systems

Resource access in EGEE – n° 28

- Italy and INFN have to address the resource access problem in the context of the EGEE project
- Goals
  - Provide a simple Computing Element which must prove to efficiently allow remote job submission and job control
    - To be used by the "Broker" (Workload Manager) or by a generic client (e.g. an end-user)
    - Possibly addressing open problems
  - Stick to emerging standards
    - Service oriented architecture
  - Facilitate the integration of other important software components already implemented/being implemented

Integration of other software components – n° 29

- Having "control" of the Computing Element can facilitate the integration of other software components to be deployed in the CE
- Relevant examples:
  - Grid accounting
    - DGAS sensors for resource metering
  - Policy framework
    - Integration of G-PBox
      - For policy evaluation given a submission request
      - For setting site policies
  - Resource monitoring
    - GridICE
  - Resource reservation and co-allocation (?)

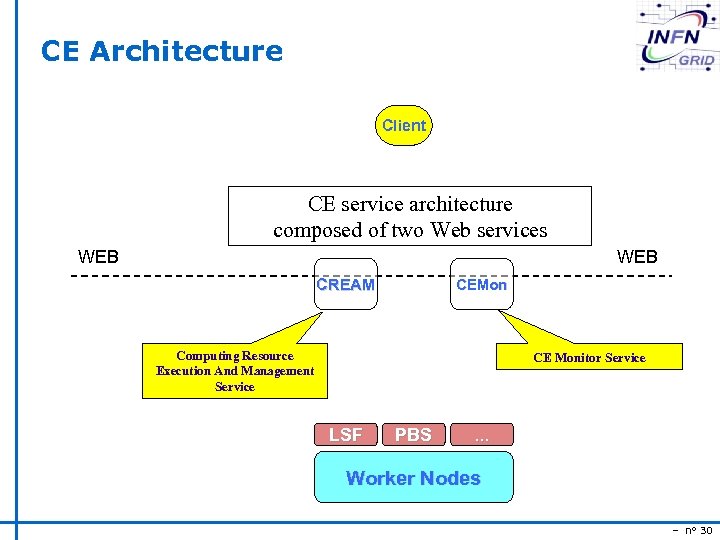

CE Architecture – n° 30

The CE service architecture is composed of two Web services: CREAM (Computing Resource Execution And Management Service) and CEMon (CE Monitor Service). Diagram labels: Client; CREAM; CEMon; LSF, PBS, ...; Worker Nodes.

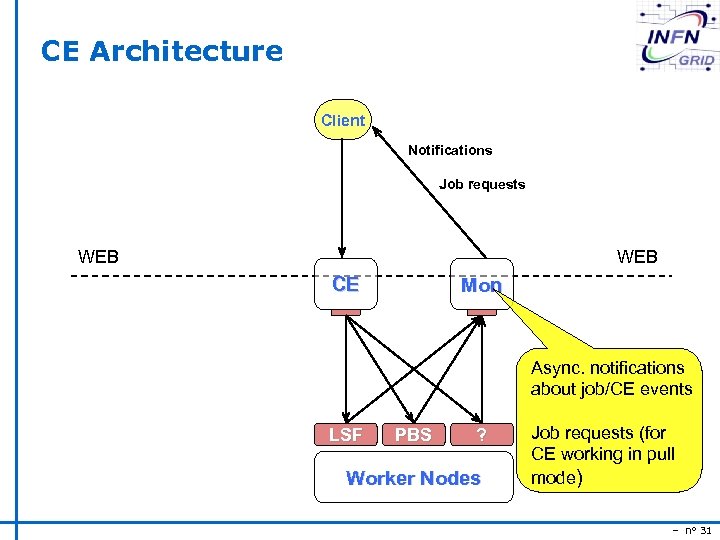

CE Architecture – n° 31

Diagram labels: Client; Notifications; Job requests; CEMon: asynchronous notifications about job/CE events, and job requests (for a CE working in pull mode); LSF, PBS, ?; Worker Nodes.

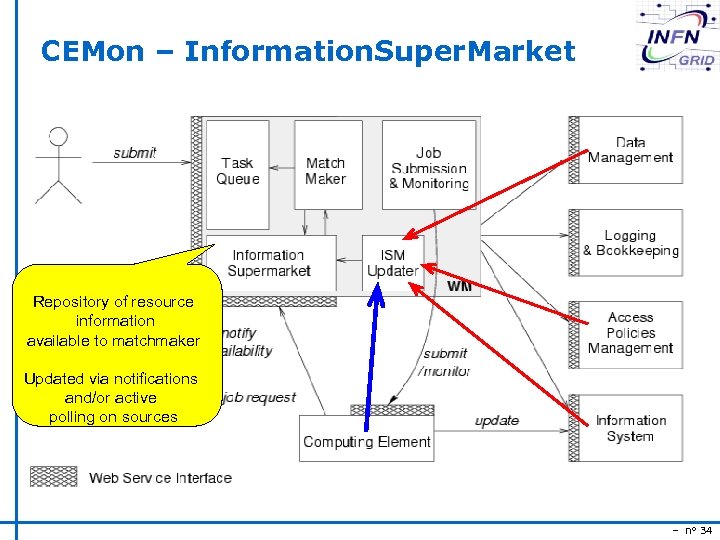

CEMon – InformationSuperMarket: repository of resource information available to the matchmaker, updated via notifications and/or active polling on sources – n° 34

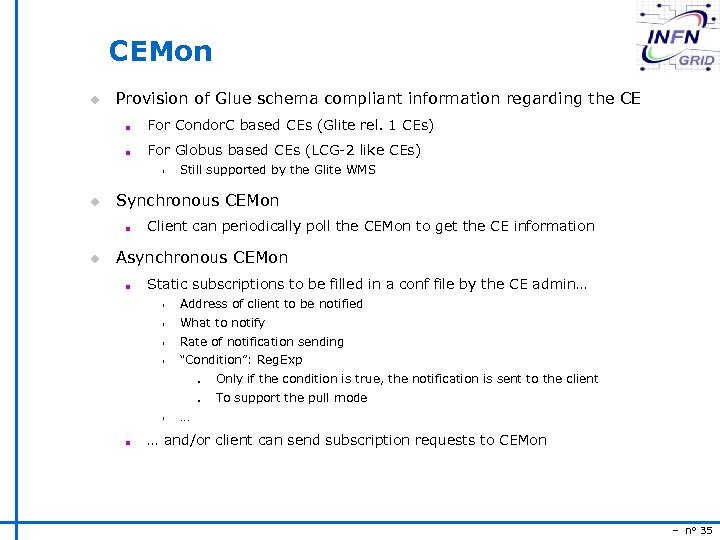

CEMon – n° 35

- Provision of Glue schema compliant information regarding the CE
  - For CondorC based CEs (gLite rel. 1 CEs)
  - For Globus based CEs (LCG-2 like CEs)
    - Still supported by the gLite WMS
- Synchronous CEMon
  - Client can periodically poll the CEMon to get the CE information
- Asynchronous CEMon
  - Static subscriptions to be filled in a conf file by the CE admin…
    - Address of client to be notified
    - What to notify
    - Rate of notification sending
    - "Condition": RegExp
      - Only if the condition is true, the notification is sent to the client
      - To support the pull mode
  - … and/or client can send subscription requests to CEMon
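The four subscription fields listed for asynchronous CEMon (client address, what to notify, rate, regular-expression condition) suggest a simple filtering step before each notification. The sketch below illustrates only that logic; it is not the CEMon implementation, and the `Subscription` class, its field names, the topic string and the message format are all hypothetical.

```python
import re
from dataclasses import dataclass


@dataclass
class Subscription:
    client_address: str   # address of the client to be notified
    topic: str            # what to notify
    rate_seconds: int     # minimum interval between notifications
    condition: str        # regular expression evaluated against the message


def due_notifications(subscriptions, topic, message, seconds_since_last):
    """Return the addresses of the clients that should be notified now."""
    due = []
    for sub in subscriptions:
        if sub.topic != topic:
            continue
        if seconds_since_last < sub.rate_seconds:
            continue
        # The notification is sent only if the condition holds.
        if re.search(sub.condition, message):
            due.append(sub.client_address)
    return due


# Hypothetical static subscription: tell a broker when the CE has free CPUs,
# which is the kind of condition a CE working in pull mode could use.
subs = [Subscription("wms.example.org", "ce-status", 60, r"FreeCPUs=[1-9]")]
notified = due_notifications(subs, "ce-status", "FreeCPUs=4", seconds_since_last=120)
```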

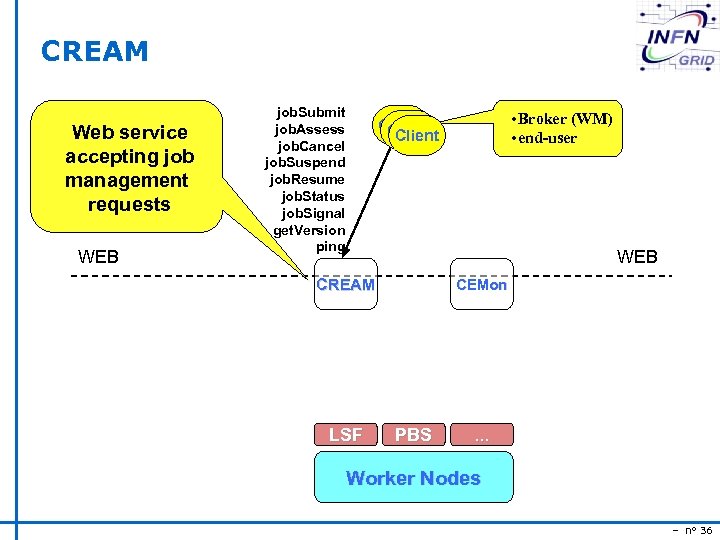

CREAM – n° 36

Web service accepting job management requests: jobSubmit, jobAssess, jobCancel, jobSuspend, jobResume, jobStatus, jobSignal, getVersion, ping. Clients: the Broker (WM) or an end-user. Diagram labels: Client; CREAM; CEMon; LSF, PBS, ...; Worker Nodes.

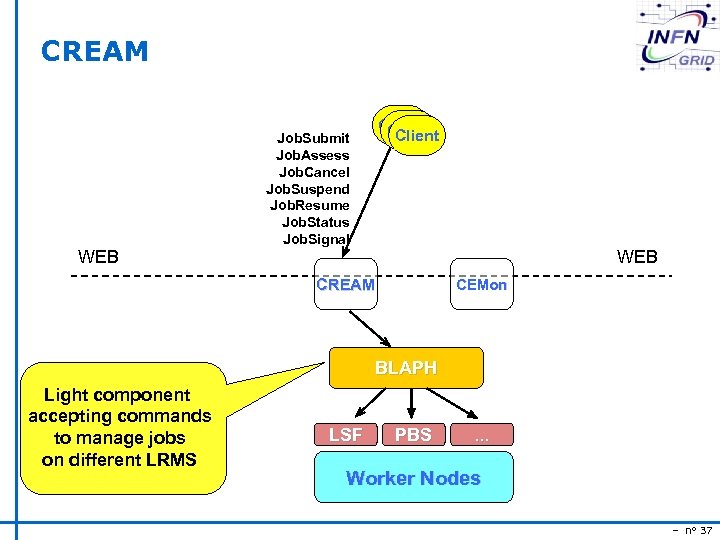

CREAM – n° 37

The client sends JobSubmit, JobAssess, JobCancel, JobSuspend, JobResume, JobStatus and JobSignal requests to CREAM. BLAHP is a light component accepting commands to manage jobs on different LRMSs. Diagram labels: Client; CREAM; CEMon; BLAHP; LSF, PBS, ...; Worker Nodes.

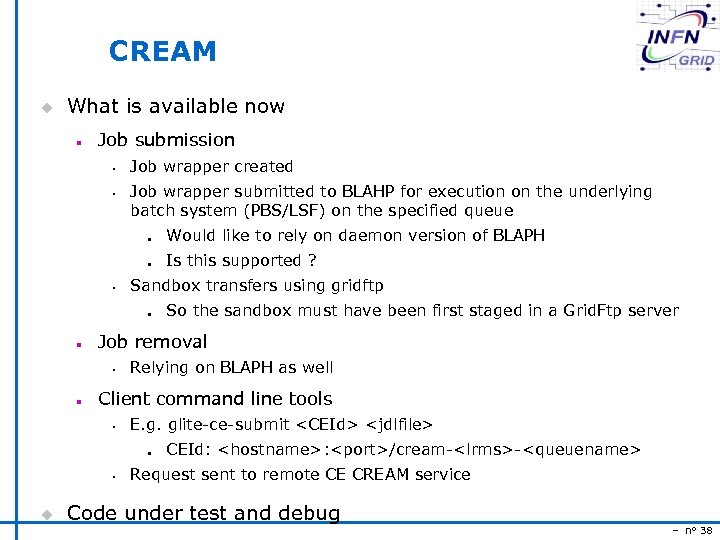

CREAM – n° 38

- What is available now
  - Job submission
    - Job wrapper created
    - Job wrapper submitted to BLAHP for execution on the underlying batch system (PBS/LSF) on the specified queue
      - Would like to rely on the daemon version of BLAHP
      - Is this supported?
  - Sandbox transfers using GridFTP
    - So the sandbox must have been first staged on a GridFTP server
  - Job removal
    - Relying on BLAHP as well
  - Client command line tools
    - E.g. glite-ce-submit <CEId> <jdlfile>
      - CEId: <hostname>:<port>/cream-<lrms>-<queuename>
      - Request sent to the remote CE CREAM service
- Code under test and debug
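The CEId format shown on the slide, `<hostname>:<port>/cream-<lrms>-<queuename>`, can be split into its parts with a regular expression. This is a minimal sketch, assuming that the LRMS name contains no hyphen while the queue name may; the `CEId` tuple, the `parse_ceid` function and the example host and port are hypothetical.

```python
import re
from typing import NamedTuple


class CEId(NamedTuple):
    hostname: str
    port: int
    lrms: str
    queue: str


# <hostname>:<port>/cream-<lrms>-<queuename>
_CEID_PATTERN = re.compile(
    r"^(?P<hostname>[^:/]+):(?P<port>\d+)/cream-(?P<lrms>[^-]+)-(?P<queue>.+)$"
)


def parse_ceid(text):
    """Split a CEId string into hostname, port, LRMS type and queue name."""
    match = _CEID_PATTERN.match(text)
    if match is None:
        raise ValueError(f"not a valid CEId: {text!r}")
    return CEId(match["hostname"], int(match["port"]), match["lrms"], match["queue"])


# Hypothetical CE: host and port are made up for illustration.
ceid = parse_ceid("ce01.example.org:8443/cream-pbs-short")
```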

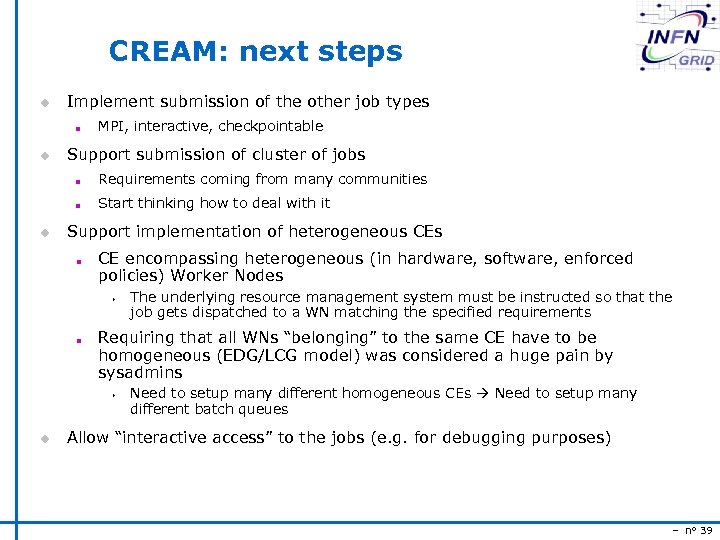

CREAM: next steps – n° 39

- Implement submission of the other job types
  - MPI, interactive, checkpointable
- Support submission of clusters of jobs
  - Requirements coming from many communities
  - Start thinking about how to deal with it
- Support implementation of heterogeneous CEs
  - CE encompassing heterogeneous (in hardware, software, enforced policies) Worker Nodes
    - The underlying resource management system must be instructed so that the job gets dispatched to a WN matching the specified requirements
  - Requiring that all WNs "belonging" to the same CE have to be homogeneous (EDG/LCG model) was considered a huge pain by sysadmins
    - Need to set up many different homogeneous CEs
    - Need to set up many different batch queues
- Allow "interactive access" to the jobs (e.g. for debugging purposes)

CE standardization – n° 40

- What's the issue and the goal
  - Globus GRAM was the de-facto standard for CE resource access
    - This favored interoperability with other Grids
      - E.g. EDG WMS able to submit to US resources
  - Now this is not the case anymore (Globus GRAM, NorduGrid CE, Unicore CE, CondorC based CE, …)
    - Competition usually helps to produce good artifacts, but this is a problem for interoperability
  - Standardization needed
    - Standard Job Description Language
    - Standard interface

DataGrid Accounting System (DGAS) – n° 41

The purpose of the DataGrid Accounting System (DGAS) is to implement resource usage metering, accounting and billing in a fully distributed Grid environment. It is conceived to be distributed, secure and extensible. The system is designed so that usage metering, accounting and billing are independent layers.

Usage Metering and Accounting – n° 42

The usage of Grid resources by Grid users is recorded in dedicated servers, known as HLRs (Home Location Registers), where both users and resources are registered. To achieve scalability, there can be many independent HLRs. At least one HLR per VO is foreseen, although a finer granularity is possible. Each HLR keeps the records of every grid job executed by each of its registered users or resources, and can therefore provide usage information at many levels of granularity: per user or resource, per group of users or resources, per VO.
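To illustrate how a single set of job records can answer queries at several levels of granularity, here is a minimal sketch of an HLR-like store. It is not DGAS code: the `HLR` class, its methods and the record fields are hypothetical, and CPU hours stand in for whatever usage metric is actually recorded.

```python
from collections import defaultdict


class HLR:
    """Hypothetical in-memory stand-in for a Home Location Register."""

    def __init__(self):
        self.records = []

    def register_job(self, user, group, vo, resource, cpu_hours):
        """Store the usage record of one executed grid job."""
        self.records.append(
            {"user": user, "group": group, "vo": vo,
             "resource": resource, "cpu_hours": cpu_hours}
        )

    def usage_by(self, key):
        """Total CPU hours grouped by 'user', 'group', 'vo' or 'resource'."""
        totals = defaultdict(float)
        for record in self.records:
            totals[record[key]] += record["cpu_hours"]
        return dict(totals)


hlr = HLR()
hlr.register_job("alice", "higgs", "cms", "ce-a", 2.0)
hlr.register_job("alice", "higgs", "cms", "ce-b", 3.0)
hlr.register_job("bob", "top", "atlas", "ce-a", 1.5)

per_user = hlr.usage_by("user")
per_vo = hlr.usage_by("vo")
per_resource = hlr.usage_by("resource")
```

The same records serve every query, which is why storing one record per job is enough to report per user, per group, per resource or per VO.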

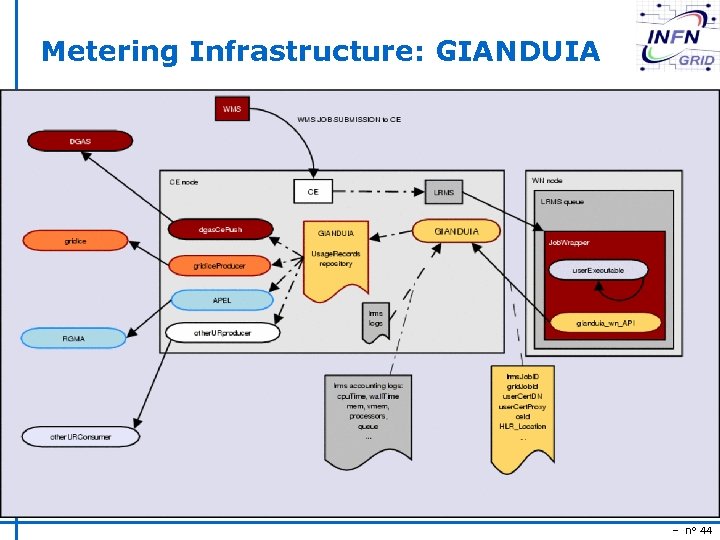

Gianduia in gLite: Description – n° 43

- GIANDUIA (Gianduia Is a Nice Distributed Utility Infrastructure for Accounting) collects information about a Grid job from the WMS JobWrapper and makes it available on the CE node (or on the LRMS head node, if it is different from the CE) to other applications that need to retrieve the job usage record.
- The Grid-related job information is integrated with the information about the same job available to the LRMS, so a complete view of the job is available in a single place, without the need to parse many different logs to monitor a job. This information is stored on the CE filesystem and can then be used by producers for different accounting or monitoring systems.
- Available information includes, for example: LRMSJobID, GridJobID, UserCertSubject, userProxyCertificate, GridCEId, CPU time, wall-clock time, memory (physical and virtual), number of processors assigned to a job, and all the other records available to the LRMS. The log format is similar to the native LRMS one, so it is easy to adapt existing software to use it. Gianduia currently works with PBS, LSF and MAUI/Torque.
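The integration step described here, combining the Grid-side information with what the LRMS knows about the same job, amounts to a join on the LRMS job ID. The sketch below illustrates that join only; it is not Gianduia code. The function name, the dictionary layout and the `CPUTime` / `WallClockTime` keys are hypothetical, while `LRMSJobID`, `GridJobID` and `UserCertSubject` are taken from the slide.

```python
def merge_usage_records(grid_info, lrms_info):
    """Join Grid-side and LRMS-side job information on the LRMS job ID.

    Both arguments map an LRMS job ID to a dict of fields.
    """
    merged = {}
    for lrms_job_id, lrms_fields in lrms_info.items():
        record = {"LRMSJobID": lrms_job_id}
        record.update(lrms_fields)
        # Jobs submitted locally have no Grid-side entry; keep the LRMS data alone.
        record.update(grid_info.get(lrms_job_id, {}))
        merged[lrms_job_id] = record
    return merged


# Hypothetical inputs: what the job wrapper reported and what the batch log holds.
grid_info = {
    "1234.pbs": {"GridJobID": "https://rb.example.org:9000/abc",
                 "UserCertSubject": "/C=IT/O=Example/CN=Some User"},
}
lrms_info = {
    "1234.pbs": {"CPUTime": 3600, "WallClockTime": 4000},
    "1235.pbs": {"CPUTime": 10, "WallClockTime": 12},
}
records = merge_usage_records(grid_info, lrms_info)
```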

Metering Infrastructure: GIANDUIA – n° 44

StoRM: Storage Resource Manager – n° 45

- Disk storage with an SRM 2.1 interface to parallel file systems with a POSIX-like interface
- "Storage reservation"
- VOMS roles
- G-PBox policy aware
- Implementation and deployment ongoing

Policy Management: G-PBox – n° 46

- Policy management for computing and storage resources in a Grid
- CE policy aware
- WMS policy aware
- Prototype available

VOMS – n° 47

- VO Membership Service:
  - Support for VO group and role management
  - Based on attribute certificates
- DataTAG & DataGrid activity – CERN admin interface
- In the INFN production Grid system since last year
- Included in gLite RC1
- Distributed in VDT and used by Grid3
