Crawling

Paolo Ferragina
Dipartimento di Informatica, Università di Pisa

Reading: 20.1, 20.2 and 20.3

Spidering

- 24 h, 7 days “walking” over a graph
- What about the graph?
  - Bow-tie structure
  - Directed graph G = (N, E)
  - N changes (insert, delete): >> 50 × 10^9 nodes
  - E changes (insert, delete): > 10 links per node
- 10 × 50 × 10^9 = 500 × 10^9 1-entries in the adjacency matrix

Crawling Issues

- How to crawl?
  - Quality: “Best” pages first
  - Efficiency: Avoid duplication (or near duplication)
  - Etiquette: robots.txt, server load concerns (minimize load)
- How much to crawl? How much to index?
  - Coverage: How big is the Web? How much do we cover?
  - Relative coverage: How much do competitors have?
- How often to crawl?
  - Freshness: How much has changed?
- How to parallelize the process

Page selection

- Given a page P, define how “good” P is.
- Several metrics:
  - BFS, DFS, Random
  - Popularity driven (PageRank, full vs partial)
  - Topic driven or focused crawling
  - Combined
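
The first family of strategies (BFS, DFS) differs only in which end of the frontier the next URL is taken from. A minimal sketch, assuming a toy in-memory link graph (`toy_web` and `crawl_order` are hypothetical names, not part of the slides):

```python
from collections import deque

def crawl_order(links, seed, strategy="bfs"):
    """Return the order in which pages are visited starting from `seed`.

    `links` is a toy web: page -> list of out-links (hypothetical data).
    """
    frontier = deque([seed])
    seen = {seed}
    order = []
    while frontier:
        # BFS takes the oldest discovered URL (FIFO),
        # the DFS-like variant takes the most recent one (LIFO).
        page = frontier.popleft() if strategy == "bfs" else frontier.pop()
        order.append(page)
        for target in links.get(page, []):
            if target not in seen:
                seen.add(target)
                frontier.append(target)
    return order

toy_web = {"a": ["b", "c"], "b": ["d"], "c": ["e"], "d": [], "e": []}
print(crawl_order(toy_web, "a", "bfs"))  # ['a', 'b', 'c', 'd', 'e']
print(crawl_order(toy_web, "a", "dfs"))  # ['a', 'c', 'e', 'b', 'd']
```

A popularity- or topic-driven crawler would replace the deque with a priority queue keyed by the chosen score.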

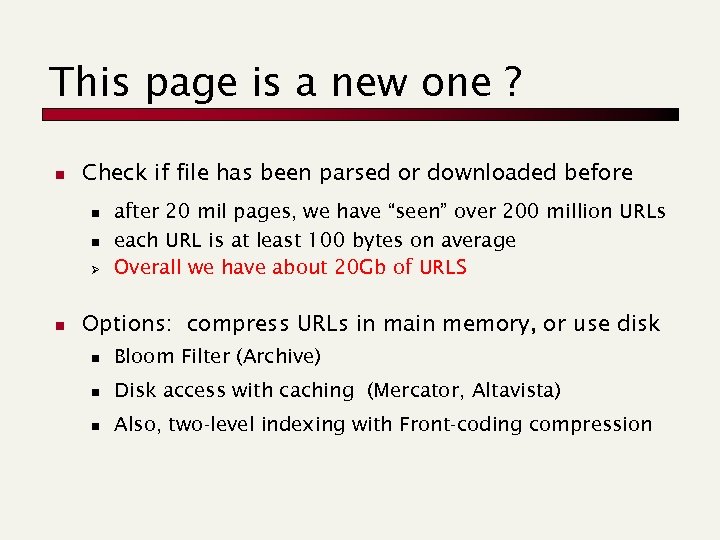

Is this page a new one?

- Check if the file has been parsed or downloaded before
  - after 20 million pages, we have “seen” over 200 million URLs
  - each URL is at least 100 bytes on average
  - overall we have about 20 GB of URLs
- Options: compress URLs in main memory, or use disk
  - Bloom Filter (Archive)
  - Disk access with caching (Mercator, Altavista)
  - Also, two-level indexing with Front-coding compression
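
To illustrate the Bloom filter option, here is a minimal sketch of an "already seen" URL set. It uses the standard sizing formulas and derives the k bit positions from one SHA-256 digest by double hashing; the class name, the 1% false-positive target and the hashing scheme are assumptions made for the example, not details taken from any of the crawlers named above.

```python
import hashlib
import math

class BloomFilter:
    def __init__(self, expected_items, false_positive_rate):
        # Standard sizing: m = -n ln p / (ln 2)^2 bits, k = (m / n) ln 2 hashes.
        self.num_bits = max(1, math.ceil(
            -expected_items * math.log(false_positive_rate) / math.log(2) ** 2))
        self.num_hashes = max(1, round(self.num_bits / expected_items * math.log(2)))
        self.bits = bytearray((self.num_bits + 7) // 8)

    def _positions(self, url):
        # Double hashing: position_i = h1 + i * h2 (mod m).
        digest = hashlib.sha256(url.encode("utf-8")).digest()
        h1 = int.from_bytes(digest[:8], "big")
        h2 = int.from_bytes(digest[8:16], "big") | 1
        return [(h1 + i * h2) % self.num_bits for i in range(self.num_hashes)]

    def add(self, url):
        for pos in self._positions(url):
            self.bits[pos // 8] |= 1 << (pos % 8)

    def __contains__(self, url):
        # May return a false positive, never a false negative.
        return all(self.bits[pos // 8] & (1 << (pos % 8))
                   for pos in self._positions(url))

seen = BloomFilter(expected_items=1000, false_positive_rate=0.01)
seen.add("http://www.di.unipi.it/")
print("http://www.di.unipi.it/" in seen)  # True
```

With these formulas a 1% false-positive rate costs about 9.6 bits per URL, so 200 million URLs need roughly 240 MB instead of 20 GB. The price is that a small fraction of new URLs is wrongly reported as already seen and therefore skipped.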

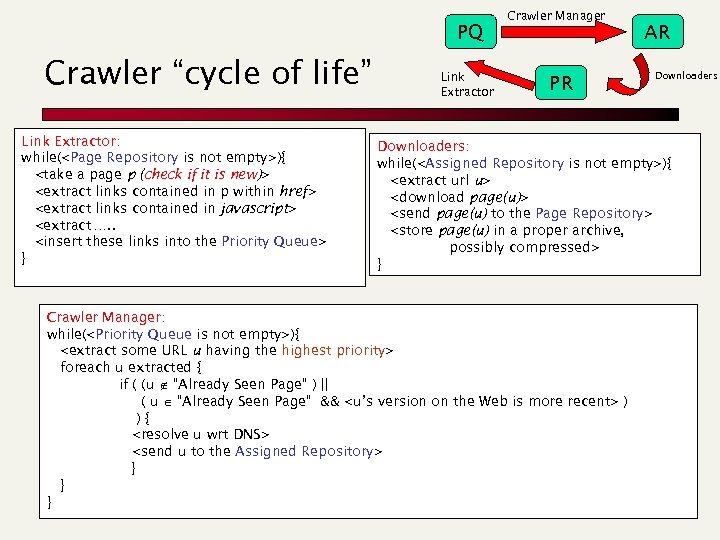

Crawler “cycle of life”

Diagram labels: Link Extractor, Crawler Manager, Downloaders, PQ, PR, AR.

Link Extractor:

    while ( … ) {
        …
    }

Downloaders:

    while ( … ) {
        …
    }

Crawler Manager:

    while ( … ) {
        foreach u extracted {
            if ( (u ∉ “Already Seen Page”) ||
                 (u ∈ “Already Seen Page” && … ) ) {
                …
            }
        }
    }
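
One possible reading of the three loops, sketched as a single-process simulation. The slide only gives the abbreviations, so treating PQ as a priority queue of URLs, AR as the queue of URLs assigned to downloaders, and PR as the repository of fetched pages is an assumption. `FAKE_WEB`, `HREF` and `crawl` are hypothetical names, the priority is simply the link depth, and only the "not already seen" branch of the manager's test is implemented.

```python
import heapq
import re
from collections import deque

# Hypothetical in-memory "web": URL -> HTML body.
FAKE_WEB = {
    "http://a.example/": '<a href="http://b.example/">b</a> '
                         '<a href="http://c.example/">c</a>',
    "http://b.example/": '<a href="http://a.example/">a</a>',
    "http://c.example/": "no links here",
}
HREF = re.compile(r'href="([^"]+)"')

def crawl(seed):
    pq = [(0, seed)]   # priority queue of (depth, URL); lower depth first
    ar = deque()       # (depth, URL) pairs assigned to the downloaders
    pr = deque()       # (depth, body) of fetched pages awaiting extraction
    seen = set()       # the "Already Seen Page" set
    fetched = []

    while pq or ar or pr:
        # Crawler Manager: hand unseen URLs over to the downloaders.
        while pq:
            depth, url = heapq.heappop(pq)
            if url not in seen:
                seen.add(url)
                ar.append((depth, url))
        # Downloaders: fetch each assigned URL and store the page.
        while ar:
            depth, url = ar.popleft()
            body = FAKE_WEB.get(url)
            if body is not None:
                fetched.append(url)
                pr.append((depth, body))
        # Link Extractor: parse stored pages, push their links into PQ.
        while pr:
            depth, body = pr.popleft()
            for link in HREF.findall(body):
                heapq.heappush(pq, (depth + 1, link))
    return fetched

print(crawl("http://a.example/"))
```

In a real crawler the three loops run concurrently as separate components; here they are interleaved in one outer loop only to keep the example self-contained.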

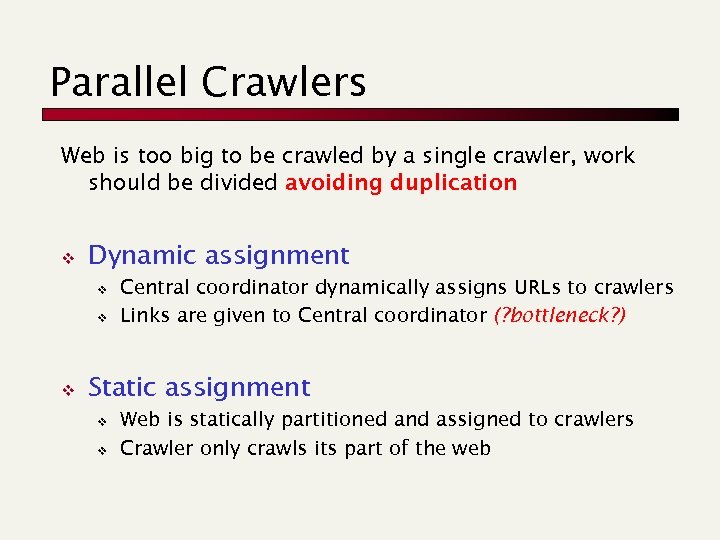

Parallel Crawlers

The Web is too big to be crawled by a single crawler; work should be divided while avoiding duplication.

- Dynamic assignment
  - A central coordinator dynamically assigns URLs to crawlers
  - Links are given to the central coordinator (bottleneck?)
- Static assignment
  - The Web is statically partitioned and assigned to crawlers
  - Each crawler only crawls its part of the Web

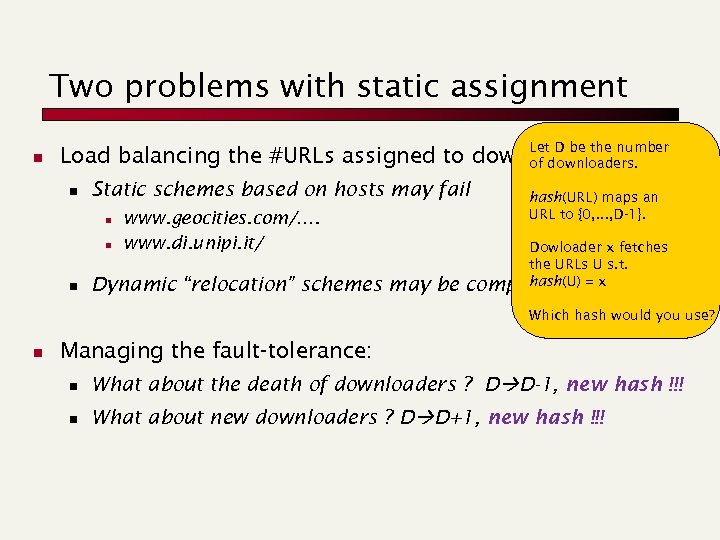

Two problems with static assignment

Let D be the number of downloaders. hash(URL) maps a URL to {0, ..., D-1}. Downloader x fetches the URLs U s.t. hash(U) = x. Which hash would you use?

- Load balancing the #URLs assigned to downloaders:
  - Static schemes based on hosts may fail
    - www.geocities.com/….
    - www.di.unipi.it/
  - Dynamic “relocation” schemes may be complicated
- Managing the fault-tolerance:
  - What about the death of downloaders? D → D-1, new hash !!!
  - What about new downloaders? D → D+1, new hash !!!
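
The cost of "new hash" can be measured directly. The sketch below assigns URLs with a hash taken modulo D and counts how many change downloader when D grows by one. The use of MD5 over the full URL, the function names and the synthetic URL list are assumptions made for the illustration; Python's built-in `hash()` is avoided because string hashes are randomized per process.

```python
import hashlib

def downloader_for(url, num_downloaders):
    """Map a URL to a downloader index in {0, ..., D-1}."""
    digest = hashlib.md5(url.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_downloaders

def moved_fraction(urls, old_d, new_d):
    """Fraction of URLs whose downloader changes when D goes old_d -> new_d."""
    moved = sum(downloader_for(u, old_d) != downloader_for(u, new_d)
                for u in urls)
    return moved / len(urls)

# Synthetic URLs, for illustration only.
urls = [f"http://host{i % 50}.example/page{i}" for i in range(10_000)]
print(moved_fraction(urls, 10, 11))
```

For a uniform hash, a URL keeps its downloader only when its hash has the same remainder modulo 10 and modulo 11, which happens with probability 1/11. So about 10/11 of the URLs are reassigned when a single downloader is added.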

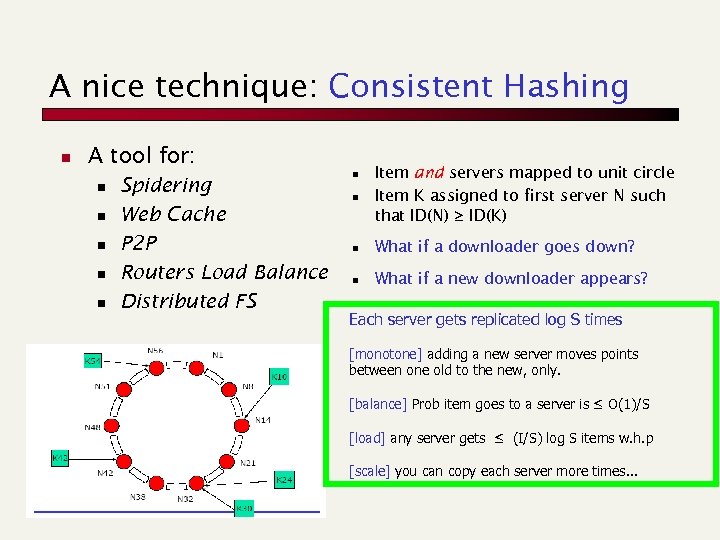

A nice technique: Consistent Hashing

- A tool for:
  - Spidering
  - Web Cache
  - P2P
  - Routers Load Balance
  - Distributed FS
- Items and servers are mapped to the unit circle
- Item K is assigned to the first server N such that ID(N) ≥ ID(K)
- What if a downloader goes down?
- What if a new downloader appears?
- Each server gets replicated log S times
  - [monotone] adding a new server only moves points from an old server to the new one.
  - [balance] the probability that an item goes to a given server is ≤ O(1)/S
  - [load] any server gets ≤ (I/S) log S items w.h.p.
  - [scale] you can copy each server more times...
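
A minimal sketch of the ring described above, assuming 64-bit ring positions taken from SHA-256 and a fixed number of replicas per server (the slide suggests log S; the constant 8, the class name and the server names are assumptions made for the example):

```python
import bisect
import hashlib

def ring_id(label):
    """Position of a label on the ring (64-bit integer instead of [0, 1))."""
    digest = hashlib.sha256(label.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big")

class ConsistentHashRing:
    def __init__(self, replicas=8):
        self.replicas = replicas
        self._points = []  # sorted list of (ring position, server)

    def add_server(self, server):
        for r in range(self.replicas):
            bisect.insort(self._points, (ring_id(f"{server}#{r}"), server))

    def remove_server(self, server):
        self._points = [p for p in self._points if p[1] != server]

    def server_for(self, item):
        if not self._points:
            raise LookupError("no servers on the ring")
        # First point N with ID(N) >= ID(K), wrapping around the circle.
        idx = bisect.bisect_left(self._points, (ring_id(item), ""))
        return self._points[idx % len(self._points)][1]

ring = ConsistentHashRing()
for name in ["d0", "d1", "d2"]:
    ring.add_server(name)

urls = [f"http://example.org/page{i}" for i in range(1000)]
before = {u: ring.server_for(u) for u in urls}
ring.add_server("d3")
after = {u: ring.server_for(u) for u in urls}

# Monotonicity: every URL that changed owner now belongs to the new server.
assert all(after[u] == "d3" for u in urls if after[u] != before[u])
```

Removing a downloader is symmetric: only the URLs it owned are handed to the next points on the ring, and every other assignment stays put.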

Examples: Open Source

- Nutch, also used by WikiSearch
  - http://nutch.apache.org/
