- Number of slides: 51

CPE 631 Memory

Electrical and Computer Engineering, University of Alabama in Huntsville

Aleksandar Milenkovic, milenka@ece.uah.edu, http://www.ece.uah.edu/~milenka

UAH-CPE 631

Virtual Memory: Topics

- Why virtual memory?
- Virtual to physical address translation
- Page Table
- Translation Lookaside Buffer (TLB)

AM, LaCASA

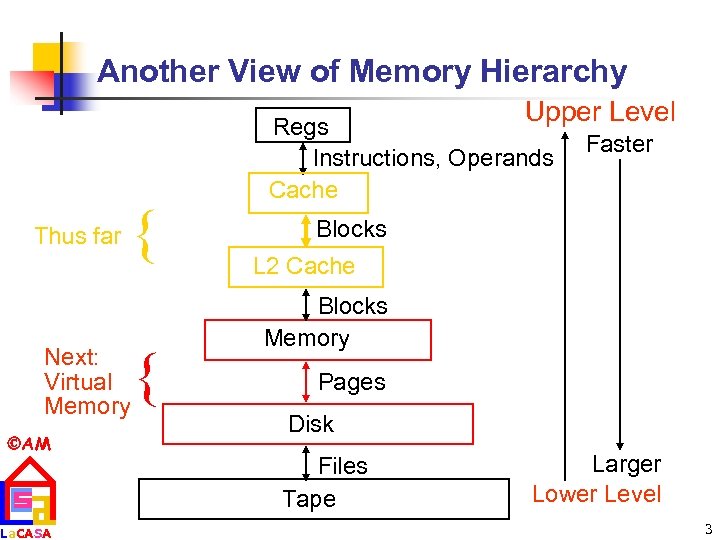

Another View of Memory Hierarchy

Figure: levels from the upper level (faster) to the lower level (larger), with the unit transferred between neighbors:

- Regs ↔ Cache: instructions, operands
- Cache ↔ L2 Cache: blocks
- L2 Cache ↔ Memory: blocks
- Memory ↔ Disk: pages
- Disk ↔ Tape: files

Thus far: the upper levels. Next: Virtual Memory.

Why Virtual Memory?

- Today computers run multiple processes, each with its own address space
- Too expensive to dedicate a full-address-space worth of memory for each process
- Principle of Locality
  - allows caches to offer speed of cache memory with size of DRAM memory
  - DRAM can act as a “cache” for secondary storage (disk): Virtual Memory
- Virtual memory: divides physical memory into blocks and allocates them to different processes

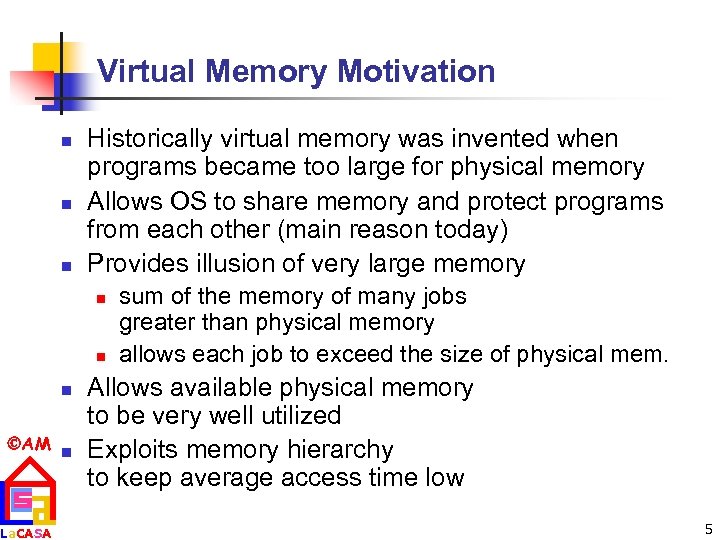

Virtual Memory Motivation

- Historically, virtual memory was invented when programs became too large for physical memory
- Allows OS to share memory and protect programs from each other (main reason today)
- Provides illusion of very large memory
  - sum of the memory of many jobs greater than physical memory
  - allows each job to exceed the size of physical mem.
- Allows available physical memory to be very well utilized
- Exploits memory hierarchy to keep average access time low

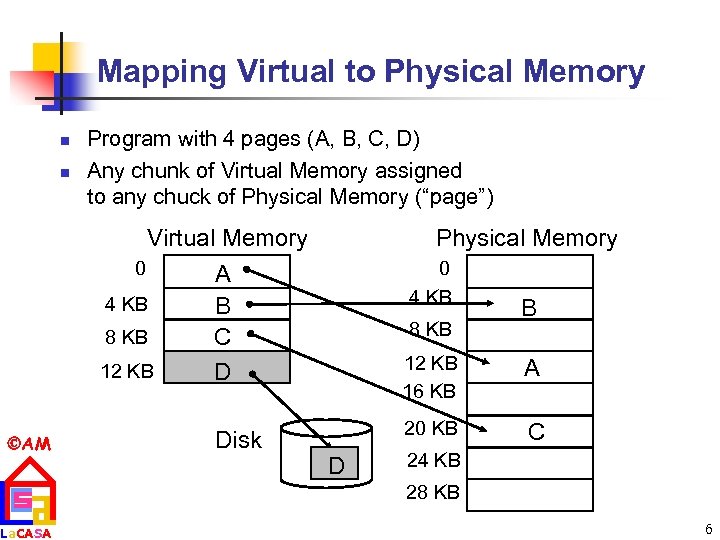

Mapping Virtual to Physical Memory

- Program with 4 pages (A, B, C, D)
- Any chunk of Virtual Memory assigned to any chunk of Physical Memory (“page”)

Figure: the four virtual pages (virtual addresses 0, 4 KB, 8 KB, 12 KB) map to arbitrary 4 KB frames of physical memory (addresses 0 to 28 KB) or to disk.

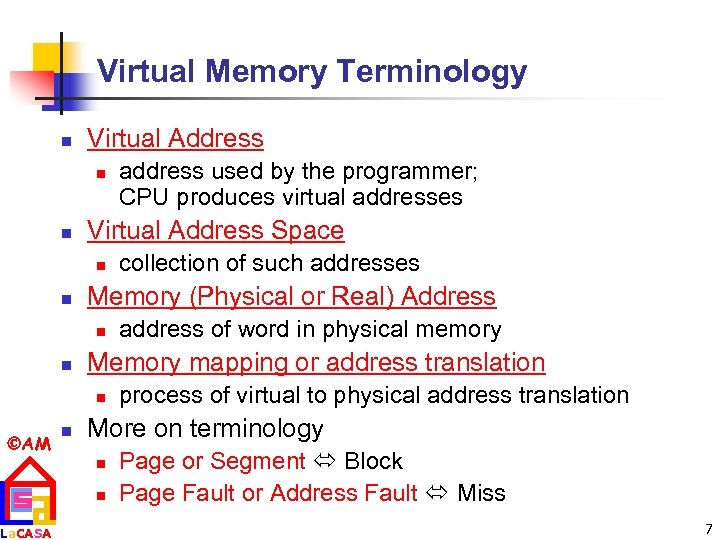

Virtual Memory Terminology

- Virtual Address
  - address used by the programmer; CPU produces virtual addresses
- Virtual Address Space
  - collection of such addresses
- Memory (Physical or Real) Address
  - address of word in physical memory
- Memory mapping or address translation
  - process of virtual to physical address translation
- More on terminology (virtual memory term ↔ cache term)
  - Page or Segment ↔ Block
  - Page Fault or Address Fault ↔ Miss

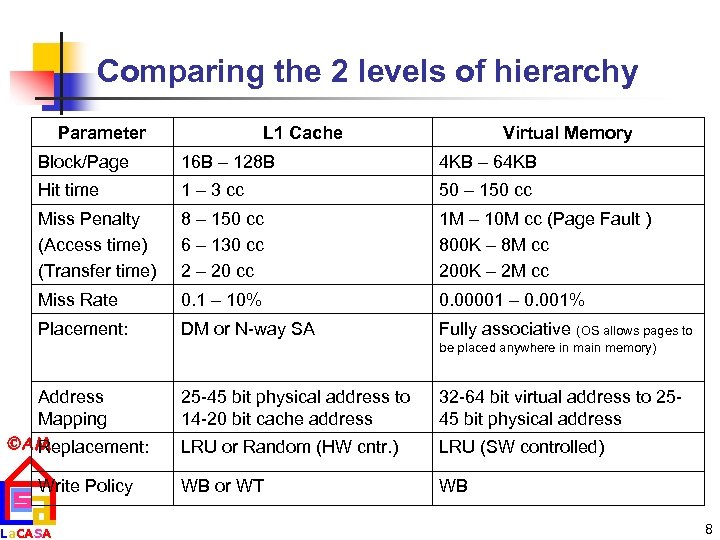

Comparing the 2 levels of hierarchy

| Parameter | L1 Cache | Virtual Memory |
| --- | --- | --- |
| Block/Page | 16 B – 128 B | 4 KB – 64 KB |
| Hit time | 1 – 3 cc | 50 – 150 cc |
| Miss Penalty | 8 – 150 cc | 1 M – 10 M cc (Page Fault) |
| (Access time) | 6 – 130 cc | 800 K – 8 M cc |
| (Transfer time) | 2 – 20 cc | 200 K – 2 M cc |
| Miss Rate | 0.1 – 10% | 0.00001 – 0.001% |
| Placement | DM or N-way SA | Fully associative (OS allows pages to be placed anywhere in main memory) |
| Address Mapping | 25-45 bit physical address to 14-20 bit cache address | 32-64 bit virtual address to 25-45 bit physical address |
| Replacement | LRU or Random (HW cntr.) | LRU (SW controlled) |
| Write Policy | WB or WT | WB |

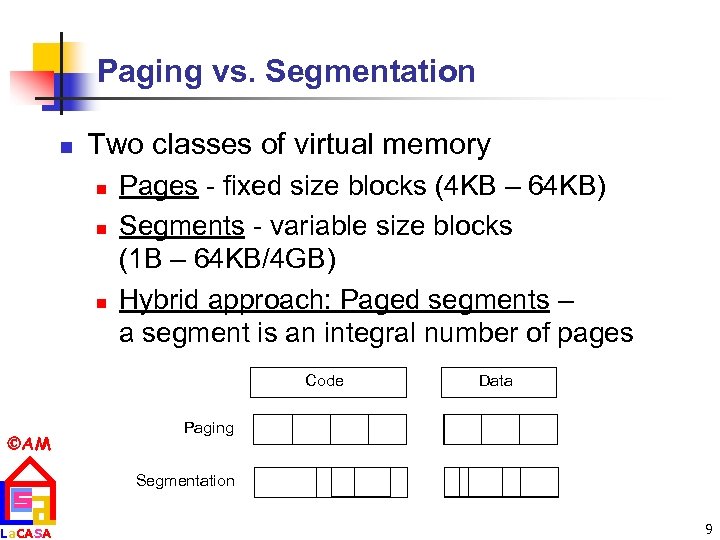

Paging vs. Segmentation

- Two classes of virtual memory
  - Pages: fixed size blocks (4 KB – 64 KB)
  - Segments: variable size blocks (1 B – 64 KB/4 GB)
  - Hybrid approach: Paged segments, where a segment is an integral number of pages

Figure: Code and Data placed in memory under Paging and under Segmentation.

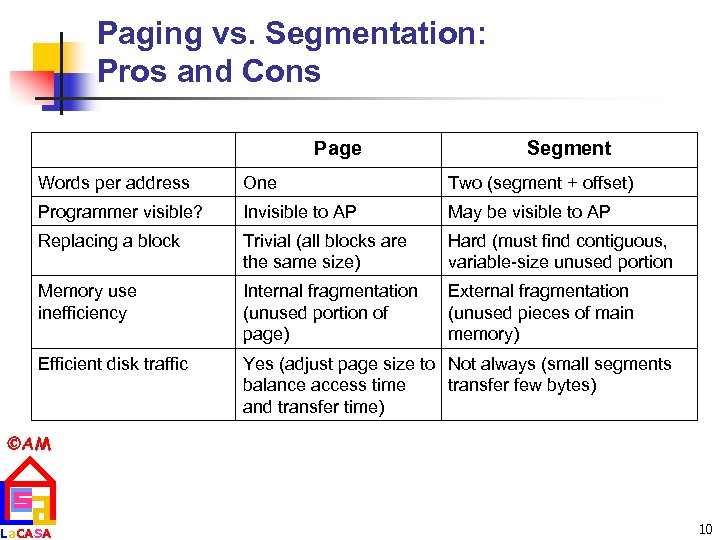

Paging vs. Segmentation: Pros and Cons

| | Page | Segment |
| --- | --- | --- |
| Words per address | One | Two (segment + offset) |
| Programmer visible? | Invisible to AP | May be visible to AP |
| Replacing a block | Trivial (all blocks are the same size) | Hard (must find contiguous, variable-size unused portion) |
| Memory use inefficiency | Internal fragmentation (unused portion of page) | External fragmentation (unused pieces of main memory) |
| Efficient disk traffic | Yes (adjust page size to balance access time and transfer time) | Not always (small segments transfer few bytes) |

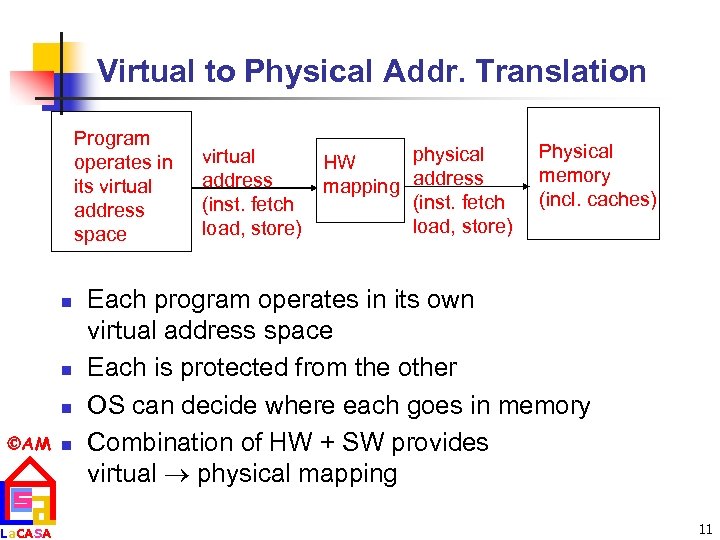

Virtual to Physical Addr. Translation

Figure: Program operates in its virtual address space → virtual address (inst. fetch, load, store) → HW mapping → physical address (inst. fetch, load, store) → Physical memory (incl. caches)

- Each program operates in its own virtual address space
- Each is protected from the other
- OS can decide where each goes in memory
- Combination of HW + SW provides virtual → physical mapping

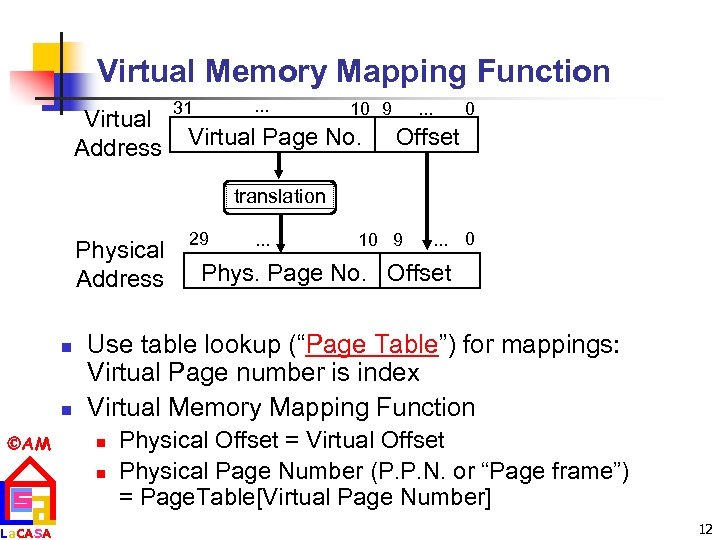

Virtual Memory Mapping Function

Figure: Virtual Address (bits 31..10: Virtual Page No., bits 9..0: Offset) → translation → Physical Address (bits 29..10: Phys. Page No., bits 9..0: Offset)

- Use table lookup (“Page Table”) for mappings: Virtual Page number is index
- Virtual Memory Mapping Function
  - Physical Offset = Virtual Offset
  - Physical Page Number (P.P.N. or “Page frame”) = PageTable[Virtual Page Number]
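
The mapping function on this slide can be sketched in a few lines of C. This is only an illustration under stated assumptions: the flat array `page_table`, the `num_pages` bound, and the function name `translate` are hypothetical, and the 10-bit offset is taken from the bit ranges in the figure.

```c
#include <assert.h>
#include <stdint.h>

#define OFFSET_BITS 10u                          /* offset = bits 9..0, per the figure */
#define OFFSET_MASK ((1u << OFFSET_BITS) - 1u)

/* Hypothetical flat page table: page_table[vpn] holds the physical page number. */
uint32_t translate(const uint32_t *page_table, uint32_t num_pages, uint32_t vaddr)
{
    uint32_t vpn    = vaddr >> OFFSET_BITS;      /* virtual page number, bits 31..10 */
    uint32_t offset = vaddr & OFFSET_MASK;       /* physical offset = virtual offset */

    assert(vpn < num_pages);                     /* sketch only: no fault handling */
    uint32_t ppn = page_table[vpn];              /* PageTable[Virtual Page Number] */
    return (ppn << OFFSET_BITS) | offset;
}
```

With a table that maps virtual page 1 to physical page 3, virtual address `(1 << 10) | 0x2A` translates to `(3 << 10) | 0x2A`: only the page number changes.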

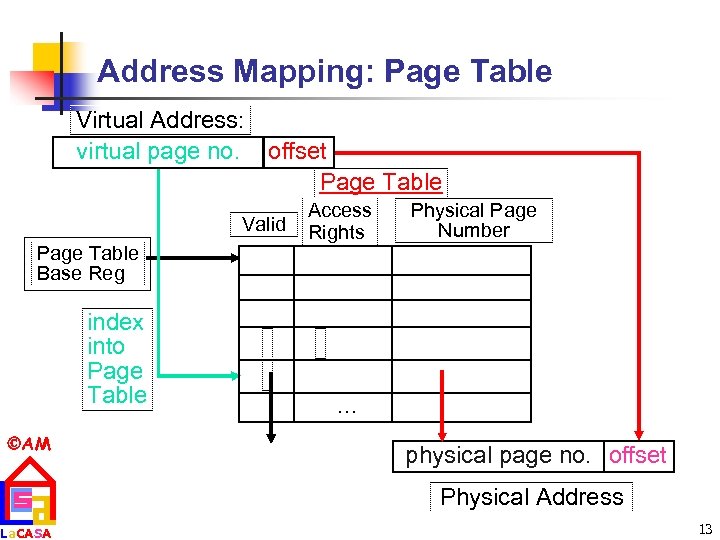

Address Mapping: Page Table

Figure:

- Virtual Address: virtual page no. | offset
- Page Table Base Reg and virtual page no. form the index into the Page Table
- Page Table entry: Valid | Access Rights | Physical Page Number
- Physical Address: physical page no. | offset

Page Table

- A page table is an operating system structure which contains the mapping of virtual addresses to physical locations
  - There are several different ways, all up to the operating system, to keep this data around
- Each process running in the operating system has its own page table
  - “State” of process is PC, all registers, plus page table
  - OS changes page tables by changing contents of Page Table Base Register

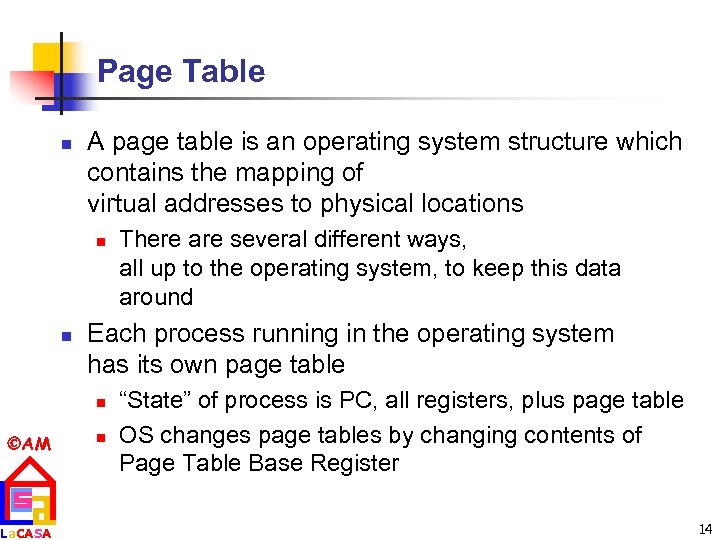

Page Table Entry (PTE) Format

- Valid bit indicates if page is in memory
  - OS maps to disk if Not Valid (V = 0)
- Contains mappings for every possible virtual page
- If valid, also check if have permission to use page: Access Rights (A.R.) may be Read Only, Read/Write, Executable

Figure: the Page Table is an array of entries (P.T.E.), each with the fields V. (Valid) | A.R. (Access Rights) | P.P.N. (Physical Page Number).
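
A minimal sketch of the valid and access-rights checks described above. The struct layout, the `enum` names, and `check_pte` are assumptions made for illustration; real PTE encodings are architecture-specific bit fields.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

enum access { ACC_READ = 1, ACC_WRITE = 2, ACC_EXEC = 4 };
enum result { XLATE_OK, XLATE_PAGE_FAULT, XLATE_PROTECTION };

/* Hypothetical PTE layout: V | A.R. | P.P.N. */
struct pte {
    bool     valid;    /* V: page is in memory */
    uint8_t  rights;   /* A.R.: bitmask of enum access */
    uint32_t ppn;      /* physical page number */
};

enum result check_pte(const struct pte *e, enum access wanted, uint32_t *ppn_out)
{
    assert(e != NULL && ppn_out != NULL);
    if (!e->valid)
        return XLATE_PAGE_FAULT;                 /* V = 0: OS must map from disk */
    if (((unsigned)e->rights & (unsigned)wanted) != (unsigned)wanted)
        return XLATE_PROTECTION;                 /* valid, but access not permitted */
    *ppn_out = e->ppn;
    return XLATE_OK;
}
```

The validity test comes first because the access rights of a non-resident page are not meaningful to the hardware in this sketch.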

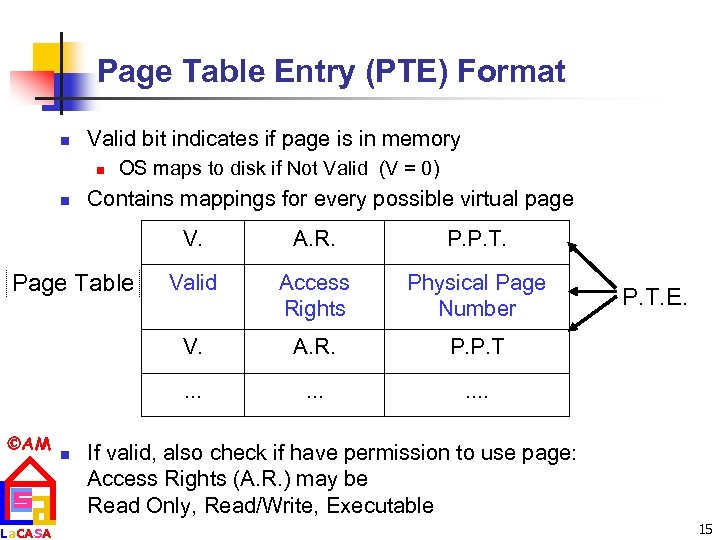

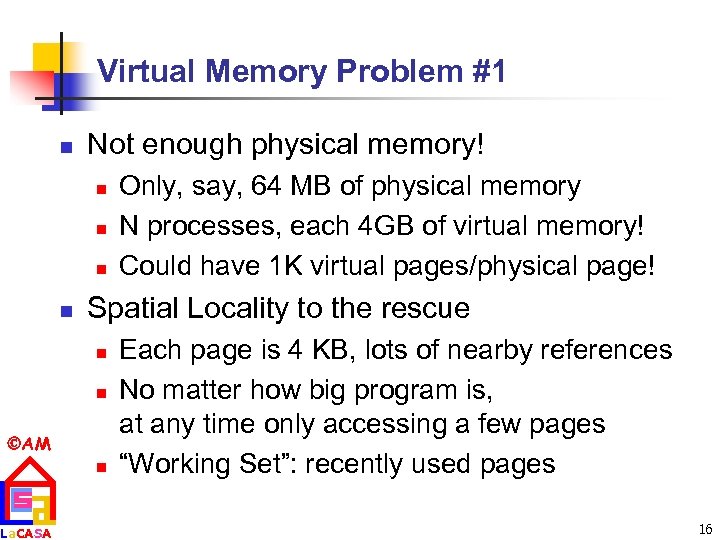

Virtual Memory Problem #1

- Not enough physical memory!
  - Only, say, 64 MB of physical memory
  - N processes, each 4 GB of virtual memory!
  - Could have 1 K virtual pages/physical page!
- Spatial Locality to the rescue
  - Each page is 4 KB, lots of nearby references
  - No matter how big program is, at any time only accessing a few pages
  - “Working Set”: recently used pages

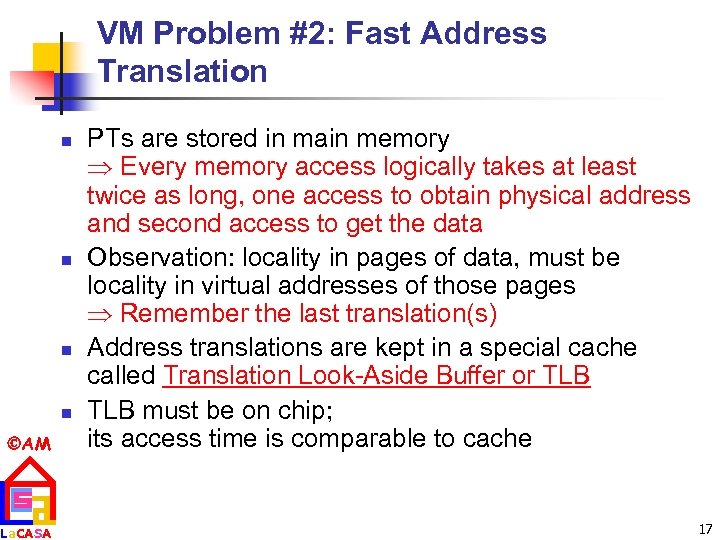

VM Problem #2: Fast Address Translation

- PTs are stored in main memory
  - Every memory access logically takes at least twice as long: one access to obtain the physical address and a second access to get the data
- Observation: since there is locality in pages of data, there must be locality in the virtual addresses of those pages
  - Remember the last translation(s)
- Address translations are kept in a special cache called Translation Look-Aside Buffer or TLB
- TLB must be on chip; its access time is comparable to cache

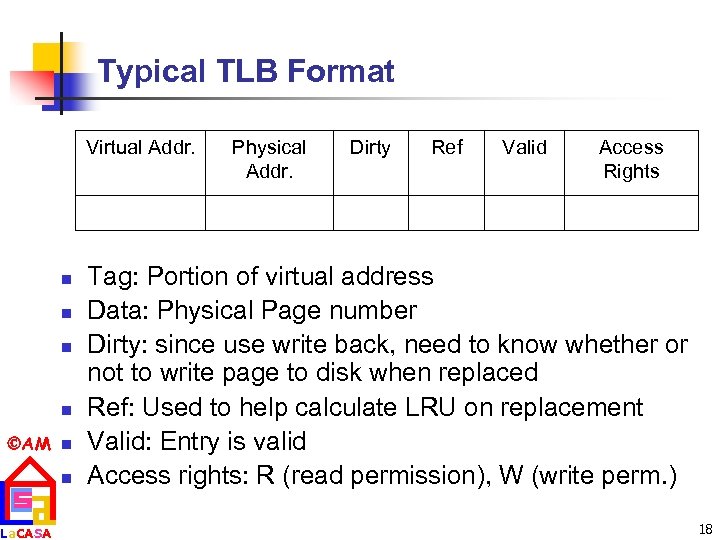

Typical TLB Format

Fields: Virtual Addr. | Physical Addr. | Dirty | Ref | Valid | Access Rights

- Tag: Portion of virtual address
- Data: Physical Page number
- Dirty: since use write back, need to know whether or not to write page to disk when replaced
- Ref: Used to help calculate LRU on replacement
- Valid: Entry is valid
- Access rights: R (read permission), W (write perm.)

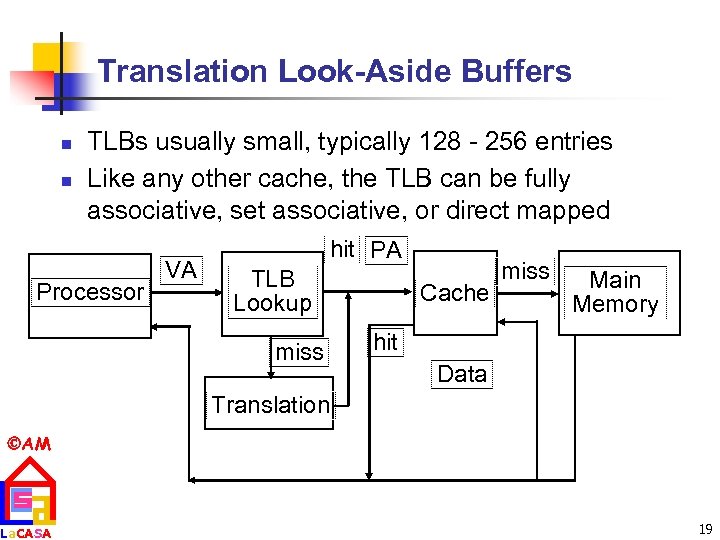

Translation Look-Aside Buffers

- TLBs usually small, typically 128 - 256 entries
- Like any other cache, the TLB can be fully associative, set associative, or direct mapped

Figure: Processor sends VA to TLB Lookup; on a hit the PA goes to the Cache, on a miss to Translation. On a cache hit the Data returns to the Processor; on a cache miss the access goes to Main Memory.

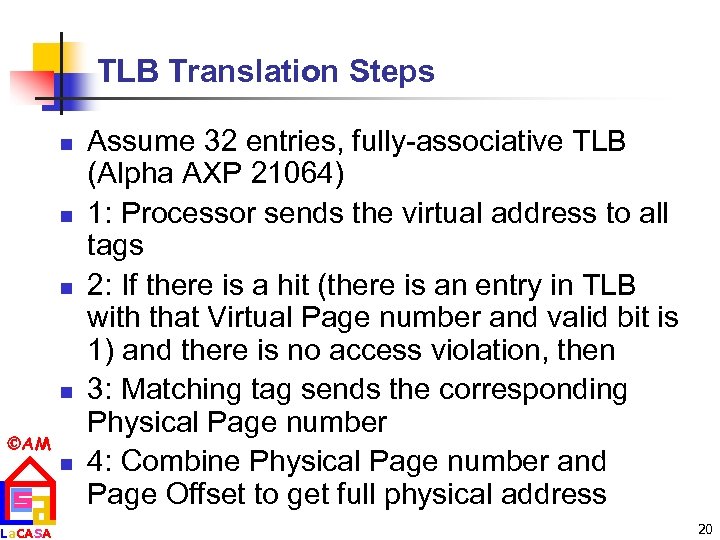

TLB Translation Steps

- Assume 32 entries, fully-associative TLB (Alpha AXP 21064)
- 1: Processor sends the virtual address to all tags
- 2: If there is a hit (there is an entry in TLB with that Virtual Page number and valid bit is 1) and there is no access violation, then
- 3: Matching tag sends the corresponding Physical Page number
- 4: Combine Physical Page number and Page Offset to get full physical address
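
The four steps above can be sketched as a software model of a 32-entry fully associative TLB. The struct fields, the single `writable` bit standing in for access rights, and the 8 KB page size (`PAGE_BITS` = 13) are assumptions for this example; hardware compares all tags in parallel, whereas the loop below checks them one by one.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

#define TLB_ENTRIES 32
#define PAGE_BITS   13u    /* assumption: 8 KB pages */

struct tlb_entry {
    bool     valid;
    bool     writable;     /* simplified access rights */
    uint64_t vpn;          /* tag: virtual page number */
    uint64_t ppn;          /* data: physical page number */
};

/* Returns true on a hit without access violation and stores the physical address. */
bool tlb_translate(const struct tlb_entry tlb[TLB_ENTRIES], uint64_t vaddr,
                   bool is_write, uint64_t *paddr)
{
    assert(paddr != NULL);
    uint64_t vpn    = vaddr >> PAGE_BITS;
    uint64_t offset = vaddr & ((UINT64_C(1) << PAGE_BITS) - 1);

    for (size_t i = 0; i < TLB_ENTRIES; i++) {           /* step 1: present VPN to all tags */
        if (tlb[i].valid && tlb[i].vpn == vpn) {         /* step 2: hit? */
            if (is_write && !tlb[i].writable)
                return false;                            /* access violation */
            *paddr = (tlb[i].ppn << PAGE_BITS) | offset; /* steps 3 and 4 */
            return true;
        }
    }
    return false;                                        /* TLB miss */
}
```

A `false` return does not distinguish a miss from an access violation; a fuller model would report them separately, since they are handled differently.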

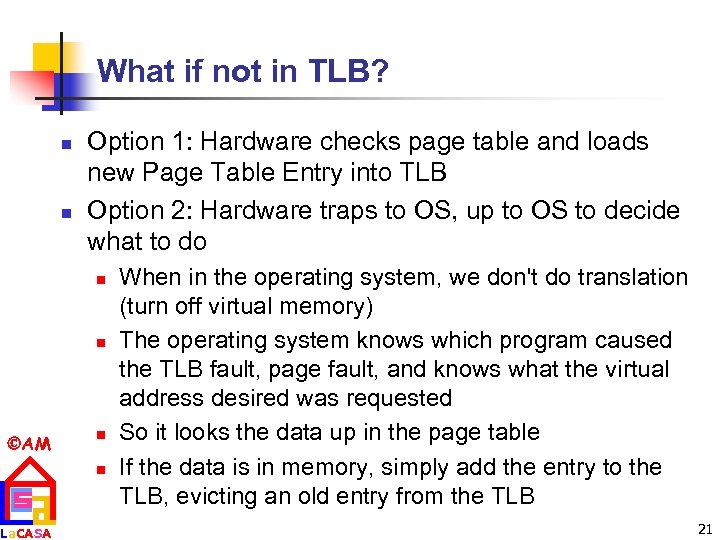

What if not in TLB?

- Option 1: Hardware checks page table and loads new Page Table Entry into TLB
- Option 2: Hardware traps to OS, up to OS to decide what to do
  - When in the operating system, we don't do translation (turn off virtual memory)
  - The operating system knows which program caused the TLB fault or page fault, and knows which virtual address was requested
  - So it looks the data up in the page table
  - If the data is in memory, simply add the entry to the TLB, evicting an old entry from the TLB

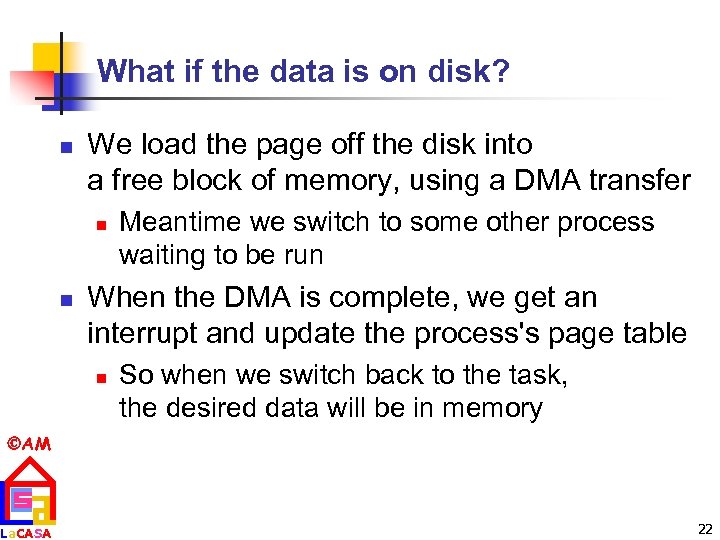

What if the data is on disk?

- We load the page off the disk into a free block of memory, using a DMA transfer
  - Meantime we switch to some other process waiting to be run
- When the DMA is complete, we get an interrupt and update the process's page table
  - So when we switch back to the task, the desired data will be in memory

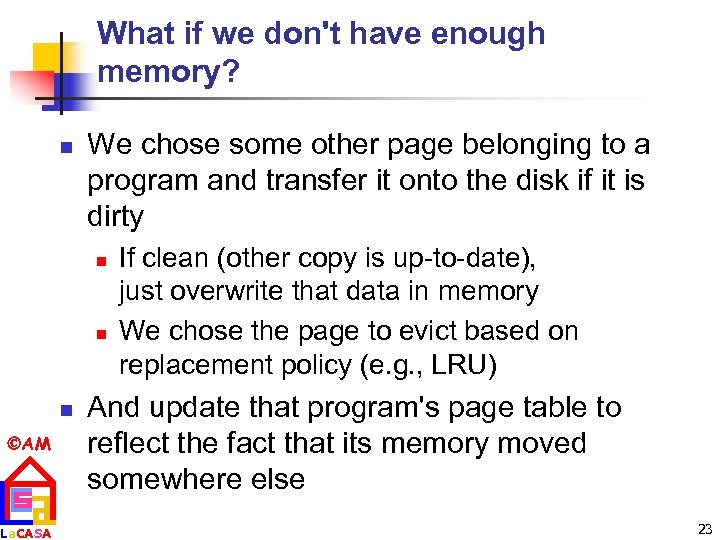

What if we don't have enough memory?

- We choose some other page belonging to a program and transfer it onto the disk if it is dirty
  - If clean (other copy is up-to-date), just overwrite that data in memory
  - We choose the page to evict based on replacement policy (e.g., LRU)
- And update that program's page table to reflect the fact that its memory moved somewhere else

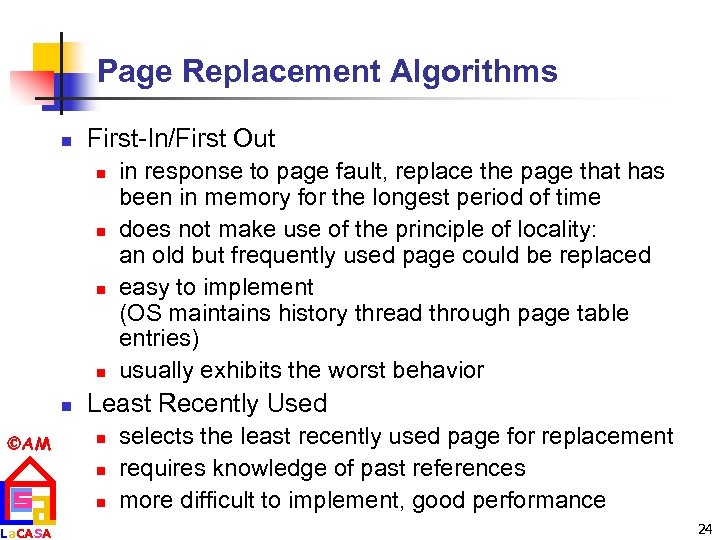

Page Replacement Algorithms

- First-In/First Out
  - in response to page fault, replace the page that has been in memory for the longest period of time
  - does not make use of the principle of locality: an old but frequently used page could be replaced
  - easy to implement (OS maintains history thread through page table entries)
  - usually exhibits the worst behavior
- Least Recently Used
  - selects the least recently used page for replacement
  - requires knowledge of past references
  - more difficult to implement, good performance
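
To make the difference between the two policies concrete, here is a small fault counter. It is a sketch, not how an OS implements either policy: the function name, the `MAX_FRAMES` limit, and the timestamp array are assumptions. Both policies evict the frame with the smallest timestamp; FIFO stamps a frame only when the page is loaded, while LRU also refreshes the stamp on every hit.

```c
#include <assert.h>
#include <stddef.h>

#define MAX_FRAMES 16

/* Count page faults for a reference string: FIFO if use_lru == 0, LRU otherwise. */
int count_faults(const int *refs, size_t n, int frames, int use_lru)
{
    int    page[MAX_FRAMES];
    size_t stamp[MAX_FRAMES];   /* load time (FIFO) or last-use time (LRU) */
    int    used = 0, faults = 0;

    assert(frames > 0 && frames <= MAX_FRAMES);
    for (size_t t = 0; t < n; t++) {
        int hit = -1;
        for (int f = 0; f < used; f++)
            if (page[f] == refs[t])
                hit = f;
        if (hit >= 0) {
            if (use_lru)
                stamp[hit] = t;             /* only LRU records the reuse */
            continue;
        }
        faults++;
        int victim;
        if (used < frames) {
            victim = used++;                /* a free frame is still available */
        } else {
            victim = 0;                     /* evict the smallest timestamp */
            for (int f = 1; f < frames; f++)
                if (stamp[f] < stamp[victim])
                    victim = f;
        }
        page[victim]  = refs[t];
        stamp[victim] = t;
    }
    return faults;
}
```

For the reference string 1, 2, 3, 1, 4, 1, 5, 1, 2, 1, 3, 1 with 3 frames, FIFO takes 9 faults and LRU takes 7, because FIFO repeatedly evicts the frequently used page 1.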

Page Replacement Algorithms (cont’d)

- Not Recently Used (an estimation of LRU)
  - A reference bit flag is associated to each page table entry such that
    - Ref flag = 1 - if page has been referenced in recent past
    - Ref flag = 0 - otherwise
  - If replacement is necessary, choose any page frame such that its reference bit is 0
  - OS periodically clears the reference bits
  - Reference bit is set whenever a page is accessed
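
The three NRU operations (set on access, periodic clear, pick a frame with reference bit 0) can be sketched as follows. The `struct frame` layout and function names are hypothetical; in a real system the reference bit is set by hardware and lives in the page table entry.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

struct frame {
    int  page;   /* page currently held by this frame */
    bool ref;    /* reference bit */
};

/* Reference bit is set whenever the page is accessed. */
void touch(struct frame *f)
{
    assert(f != NULL);
    f->ref = true;
}

/* Periodic OS sweep: clear all reference bits so old history is forgotten. */
void clear_ref_bits(struct frame *frames, size_t n)
{
    for (size_t i = 0; i < n; i++)
        frames[i].ref = false;
}

/* Return the index of any frame whose reference bit is 0, or -1 if none. */
int pick_victim(const struct frame *frames, size_t n)
{
    for (size_t i = 0; i < n; i++)
        if (!frames[i].ref)
            return (int)i;
    return -1;
}
```

When `pick_victim` returns -1, every page was referenced since the last sweep, and a real policy would need a fallback such as clearing the bits and retrying.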

Selecting a Page Size

- Balance forces in favor of larger pages versus those favoring smaller pages
- Larger page
  - Reduce size of PT (save space)
  - Larger caches with fast hits
  - More efficient transfer from the disk or possibly over the networks
  - Fewer TLB entries or fewer TLB misses
- Smaller page
  - better conserve space, less wasted storage (Internal Fragmentation)
  - shorten startup time, especially with plenty of small processes

VM Problem #3: Page Table too big!

- Example
  - 4 GB Virtual Memory ÷ 4 KB page => ~ 1 million Page Table Entries => 4 MB just for Page Table for 1 process, 25 processes => 100 MB for Page Tables!
- Problem gets worse on modern 64-bit machines
- Solution is Hierarchical Page Table
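
The arithmetic in the example can be checked with a one-line helper. The 4-byte PTE size is an assumption: the slide does not state it, but it is what turns about 1 million entries into 4 MB.

```c
#include <assert.h>
#include <stdint.h>

/* Bytes needed for a flat, single-level page table. */
uint64_t flat_page_table_bytes(uint64_t virtual_bytes, uint64_t page_bytes,
                               uint64_t pte_bytes)
{
    assert(page_bytes != 0 && virtual_bytes % page_bytes == 0);
    uint64_t entries = virtual_bytes / page_bytes;   /* one PTE per virtual page */
    return entries * pte_bytes;
}
```

For a 4 GB address space, 4 KB pages, and 4-byte entries this gives 2^20 entries and 4 MB per process, so 25 processes need 100 MB.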

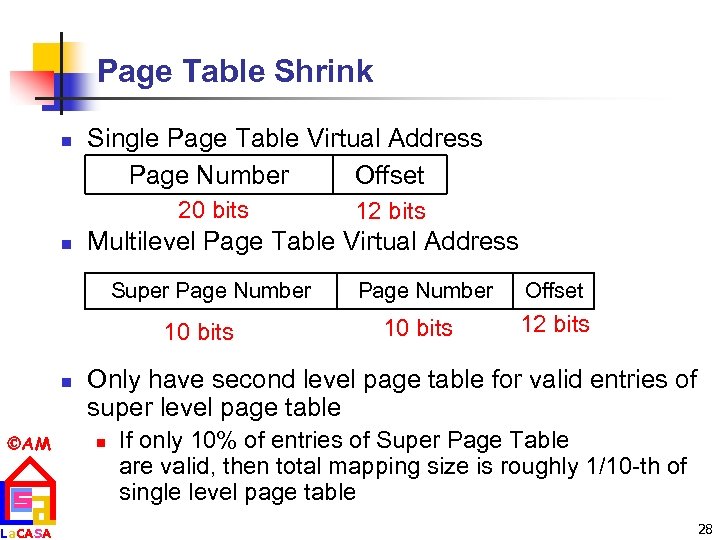

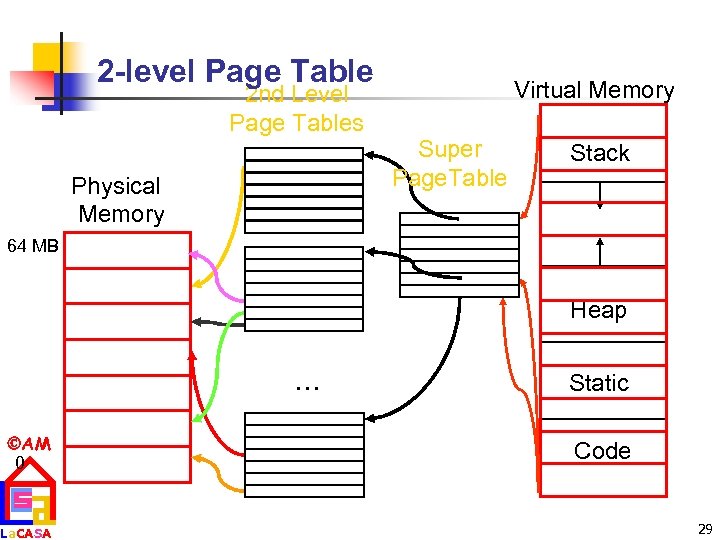

Page Table Shrink

- Single Page Table Virtual Address: Page Number (20 bits) | Offset (12 bits)
- Multilevel Page Table Virtual Address: Super Page Number (10 bits) | Page Number (10 bits) | Offset (12 bits)
- Only have second level page table for valid entries of super level page table
  - If only 10% of entries of Super Page Table are valid, then total mapping size is roughly 1/10th of single level page table
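
A sketch of the two-level lookup with the 10/10/12 split from this slide. The struct layouts and the function name `walk` are assumptions for illustration; a `NULL` pointer in the super page table stands for an invalid entry with no second-level table allocated, which is where the space saving comes from.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

#define L2_BITS  10u
#define OFF_BITS 12u
#define ENTRIES  (1u << 10)

struct l2_table {
    uint32_t ppn[ENTRIES];
    bool     valid[ENTRIES];
};

struct super_table {
    struct l2_table *l2[ENTRIES];   /* NULL = no second-level table for this range */
};

/* Returns true and stores the physical address if the page is mapped. */
bool walk(const struct super_table *st, uint32_t vaddr, uint32_t *paddr)
{
    assert(st != NULL && paddr != NULL);
    uint32_t super = vaddr >> (L2_BITS + OFF_BITS);           /* top 10 bits */
    uint32_t page  = (vaddr >> OFF_BITS) & (ENTRIES - 1u);    /* middle 10 bits */
    uint32_t off   = vaddr & ((1u << OFF_BITS) - 1u);         /* low 12 bits */

    const struct l2_table *t = st->l2[super];
    if (t == NULL || !t->valid[page])
        return false;                                         /* not mapped */
    *paddr = (t->ppn[page] << OFF_BITS) | off;
    return true;
}
```

Each second-level table covers 4 MB of virtual address space here, so a process that touches only a few regions needs only a few of the 1024 possible second-level tables.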

2-level Page Table

Figure: Virtual Memory regions (Code, Static, Heap, ..., Stack) map through the Super Page Table and the 2nd Level Page Tables into Physical Memory (0 to 64 MB).

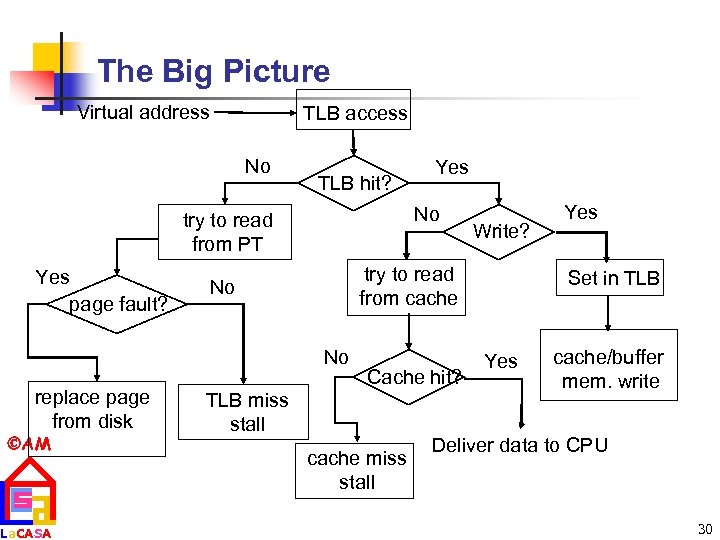

The Big Picture

Figure (flowchart):

- Virtual address → TLB access → TLB hit?
- TLB hit? No: TLB miss stall, try to read from PT → page fault?
  - page fault? Yes: replace page from disk
  - page fault? No: Set in TLB
- TLB hit? Yes: Write?
  - Write? Yes: cache/buffer mem. write
  - Write? No: try to read from cache → Cache hit?
    - Cache hit? No: cache miss stall
    - Cache hit? Yes: Deliver data to CPU

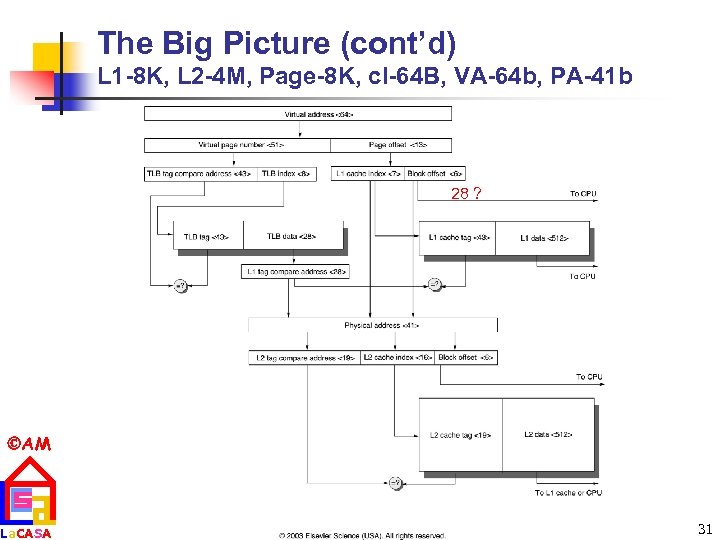

The Big Picture (cont’d)

Figure parameters: L1: 8 KB, L2: 4 MB, Page: 8 KB, cache line: 64 B, VA: 64 bits, PA: 41 bits. The figure labels one field “28 ?”.

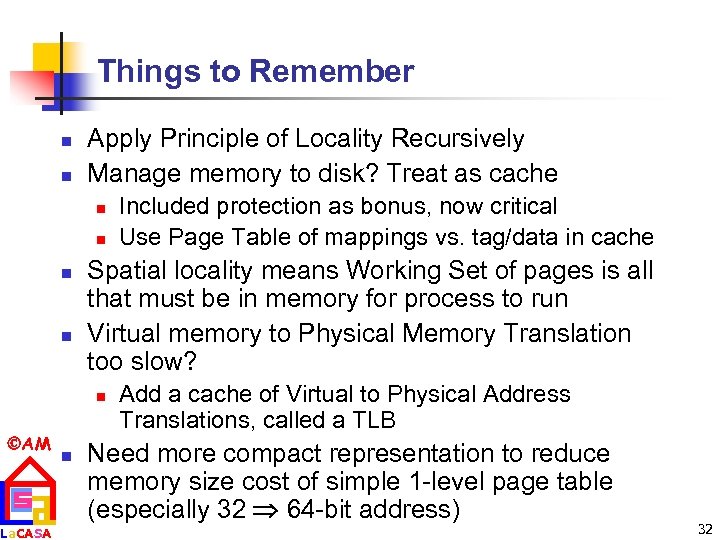

Things to Remember

- Apply Principle of Locality Recursively
- Manage memory to disk? Treat as cache
  - Included protection as bonus, now critical
  - Use Page Table of mappings vs. tag/data in cache
- Spatial locality means Working Set of pages is all that must be in memory for process to run
- Virtual memory to Physical Memory Translation too slow?
  - Add a cache of Virtual to Physical Address Translations, called a TLB
- Need more compact representation to reduce memory size cost of simple 1-level page table (especially 32 → 64-bit address)

Main Memory Background

- Next level down in the hierarchy
  - satisfies the demands of caches + serves as the I/O interface
- Performance of Main Memory:
  - Latency: Cache Miss Penalty
    - Access Time: time between when a read is requested and when the desired word arrives
    - Cycle Time: minimum time between requests to memory
  - Bandwidth (the number of bytes read or written per unit time): I/O & Large Block Miss Penalty (L2)
- Main Memory is DRAM: Dynamic Random Access Memory
  - Dynamic since needs to be refreshed periodically (8 ms, 1% time)
  - Addresses divided into 2 halves (Memory as a 2D matrix):
    - RAS or Row Access Strobe + CAS or Column Access Strobe
- Cache uses SRAM: Static Random Access Memory
  - No refresh (6 transistors/bit vs. 1 transistor)

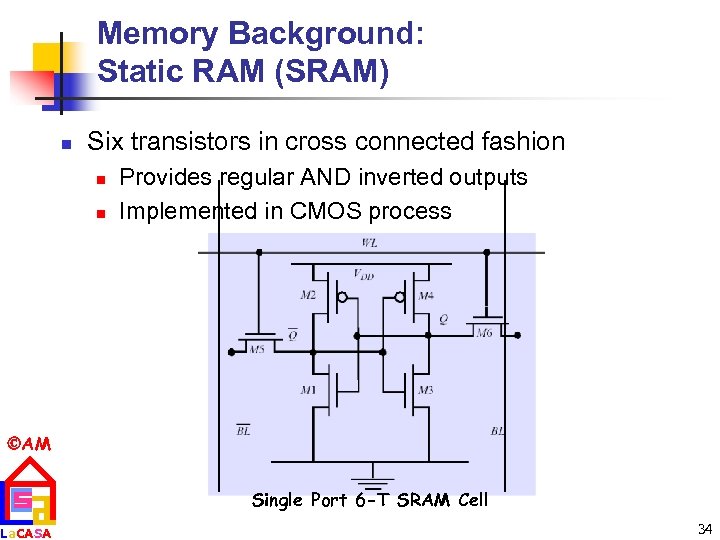

Memory Background: Static RAM (SRAM)

- Six transistors in cross connected fashion
  - Provides regular AND inverted outputs
  - Implemented in CMOS process

Figure: Single Port 6-T SRAM Cell

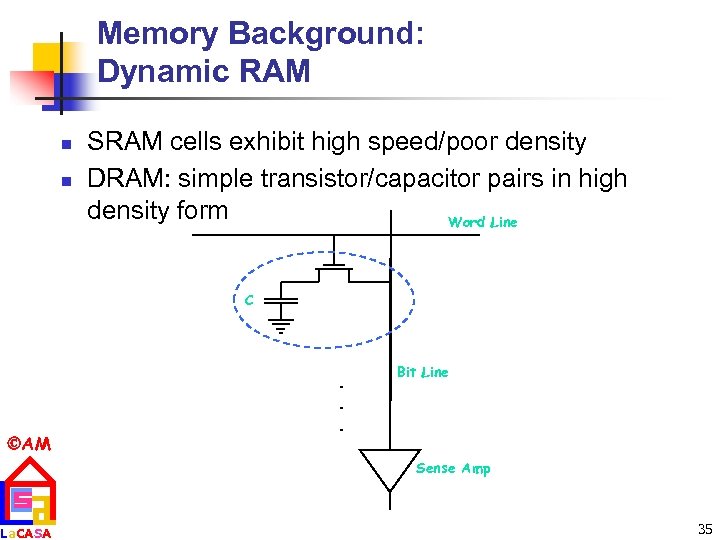

Memory Background: Dynamic RAM

- SRAM cells exhibit high speed/poor density
- DRAM: simple transistor/capacitor pairs in high density form

Figure: DRAM cells (capacitor C) connected to a Word Line and a Bit Line, with a Sense Amp on the bit line.

Techniques for Improving Performance

1. Wider Main Memory
2. Simple Interleaved Memory
3. Independent Memory Banks

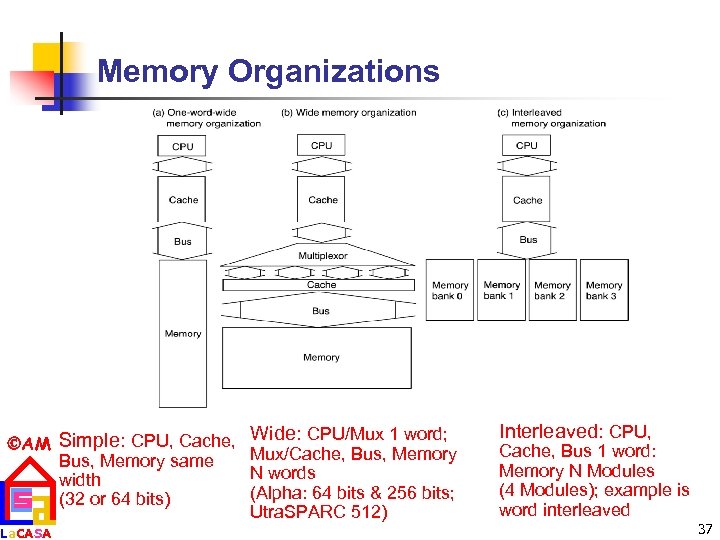

Memory Organizations

- Simple: CPU, Cache, Bus, Memory same width (32 or 64 bits)
- Wide: CPU/Mux 1 word; Mux/Cache, Bus, Memory N words (Alpha: 64 bits & 256 bits; UltraSPARC 512)
- Interleaved: CPU, Cache, Bus 1 word: Memory N Modules (4 Modules); example is word interleaved

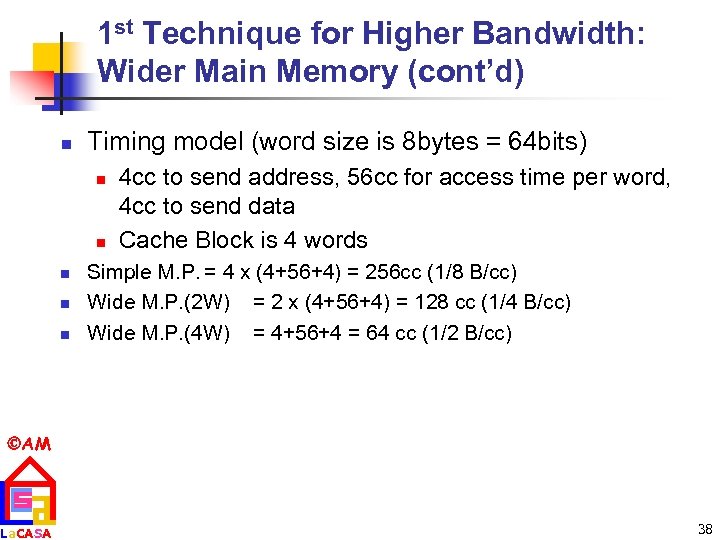

1st Technique for Higher Bandwidth: Wider Main Memory (cont’d)

- Timing model (word size is 8 bytes = 64 bits)
  - 4 cc to send address, 56 cc for access time per word, 4 cc to send data
  - Cache Block is 4 words
- Simple M.P. = 4 x (4+56+4) = 256 cc (1/8 B/cc)
- Wide M.P. (2W) = 2 x (4+56+4) = 128 cc (1/4 B/cc)
- Wide M.P. (4W) = 4+56+4 = 64 cc (1/2 B/cc)
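
The three miss-penalty figures follow one formula: the number of memory accesses needed to fill the block times the cost of one access. A small helper, with a hypothetical name and parameter order, reproduces them.

```c
#include <assert.h>

/* Miss penalty in clock cycles when memory and bus are width_words wide.
   Each access costs address + access + transfer cycles. */
int miss_penalty_cc(int block_words, int width_words,
                    int addr_cc, int access_cc, int xfer_cc)
{
    assert(width_words > 0 && block_words % width_words == 0);
    int accesses = block_words / width_words;
    return accesses * (addr_cc + access_cc + xfer_cc);
}
```

With a 4-word block and the slide's timing (4, 56, 4), widths of 1, 2, and 4 words give 256, 128, and 64 cc. Dividing the 32-byte block by each penalty gives the quoted bandwidths of 1/8, 1/4, and 1/2 B/cc.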

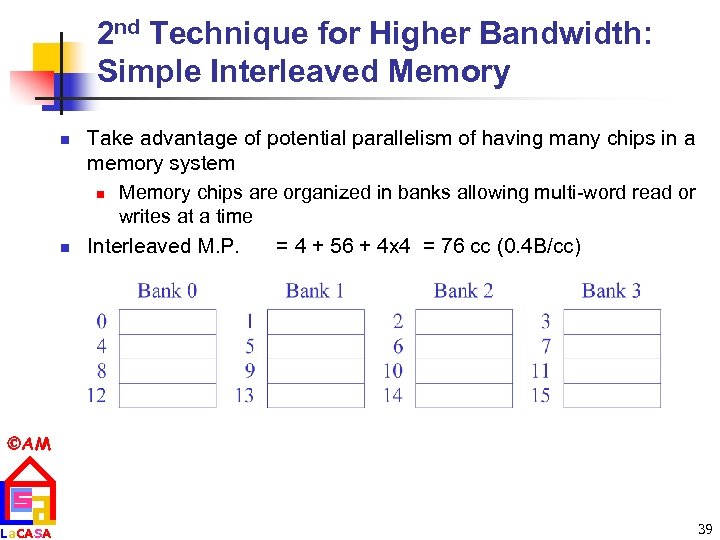

2nd Technique for Higher Bandwidth: Simple Interleaved Memory

- Take advantage of potential parallelism of having many chips in a memory system
  - Memory chips are organized in banks allowing multi-word read or writes at a time
- Interleaved M.P. = 4 + 56 + 4 x 4 = 76 cc (0.4 B/cc)
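
The interleaved figure differs from the simple organization because the banks overlap their access time: the address is sent once, all banks access in parallel, and only the word transfers are serialized on the one-word bus. A sketch under the assumption that there is at least one bank per word of the block (function name hypothetical):

```c
#include <assert.h>

/* Miss penalty in clock cycles for word-interleaved banks on a one-word bus. */
int interleaved_miss_penalty_cc(int block_words, int banks,
                                int addr_cc, int access_cc, int xfer_cc)
{
    assert(banks >= block_words);   /* sketch: every word of the block has its own bank */
    return addr_cc + access_cc + block_words * xfer_cc;
}
```

For the slide's numbers this is 4 + 56 + 4 x 4 = 76 cc, and 32 bytes in 76 cc is about 0.42 B/cc, which the slide rounds to 0.4.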

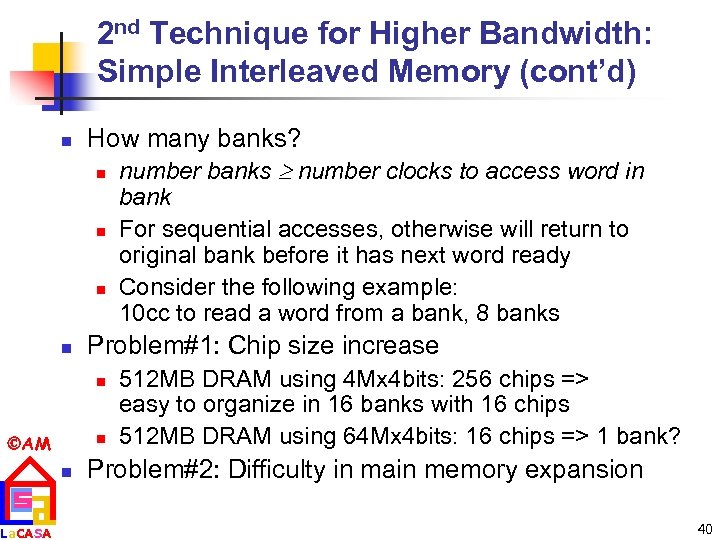

2nd Technique for Higher Bandwidth: Simple Interleaved Memory (cont’d)

- How many banks?
  - number of banks ≥ number of clocks to access word in bank
  - For sequential accesses, otherwise will return to original bank before it has next word ready
  - Consider the following example: 10 cc to read a word from a bank, 8 banks
- Problem #1: Chip size increase
  - 512 MB DRAM using 4M x 4 bits: 256 chips => easy to organize in 16 banks with 16 chips
  - 512 MB DRAM using 64M x 4 bits: 16 chips => 1 bank?
- Problem #2: Difficulty in main memory expansion

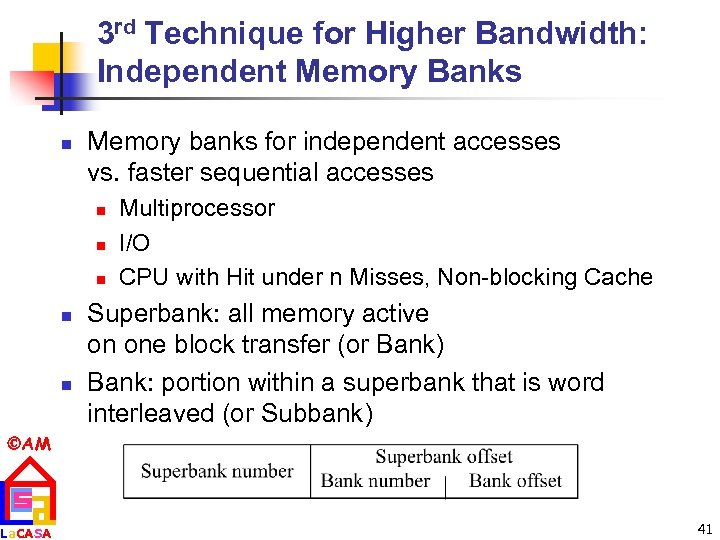

3rd Technique for Higher Bandwidth: Independent Memory Banks

- Memory banks for independent accesses vs. faster sequential accesses
  - Multiprocessor
  - I/O
  - CPU with Hit under n Misses, Non-blocking Cache
- Superbank: all memory active on one block transfer (or Bank)
- Bank: portion within a superbank that is word interleaved (or Subbank)

![Avoiding Bank Conflicts n n AM La. CASA int x[256][512]; Lots of banks for Avoiding Bank Conflicts n n AM La. CASA int x[256][512]; Lots of banks for](https://present5.com/presentation/01fb4b7adc5553e72aeb77758f2b62e6/image-42.jpg) Avoiding Bank Conflicts n n AM La. CASA int x[256][512]; Lots of banks for (j = 0; j < 512; j = j+1) for (i = 0; i < 256; i = i+1) Even with 128 banks, x[i][j] = 2 * x[i][j]; since 512 is multiple of 128, conflict on word accesses SW: loop interchange or declaring array not power of 2 (“array padding”) HW: Prime number of banks n bank number = address mod number of banks n address within bank = address / number of words in bank n modulo & divide per memory access with prime no. banks? n address within bank = address mod number words in bank number? easy if 2 N words per bank 42

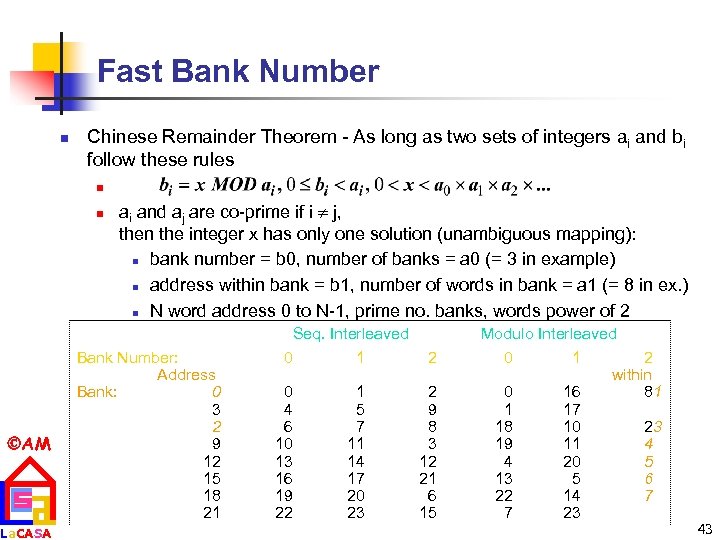

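Fast Bank Number

- Chinese Remainder Theorem: as long as two sets of integers $a_i$ and $b_i$ follow these rules
  - $b_i = x \bmod a_i$, $0 \le b_i < a_i$, $0 \le x < a_0 \times a_1 \times a_2 \times \dots$
  - $a_i$ and $a_j$ are co-prime if $i \ne j$

  then the integer $x$ has only one solution (unambiguous mapping):
  - bank number = $b_0$, number of banks = $a_0$ (= 3 in the example)
  - address within bank = $b_1$, number of words in bank = $a_1$ (= 8 in the example)
  - N-word address 0 to N-1, prime number of banks, words per bank a power of 2

| Address within bank | Seq. interleaved: bank 0 | bank 1 | bank 2 | Modulo interleaved: bank 0 | bank 1 | bank 2 |
|---|---|---|---|---|---|---|
| 0 | 0 | 1 | 2 | 0 | 16 | 8 |
| 1 | 3 | 4 | 5 | 9 | 1 | 17 |
| 2 | 6 | 7 | 8 | 18 | 10 | 2 |
| 3 | 9 | 10 | 11 | 3 | 19 | 11 |
| 4 | 12 | 13 | 14 | 12 | 4 | 20 |
| 5 | 15 | 16 | 17 | 21 | 13 | 5 |
| 6 | 18 | 19 | 20 | 6 | 22 | 14 |
| 7 | 21 | 22 | 23 | 15 | 7 | 23 |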

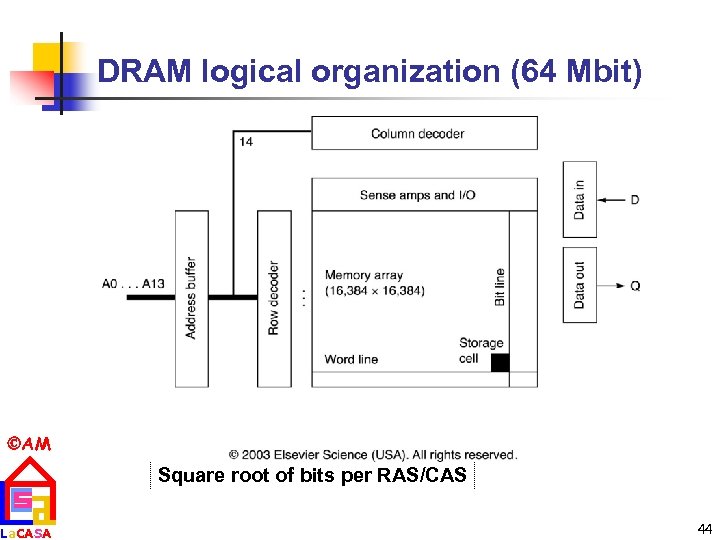

DRAM logical organization (64 Mbit)

- Square root of bits per RAS/CAS

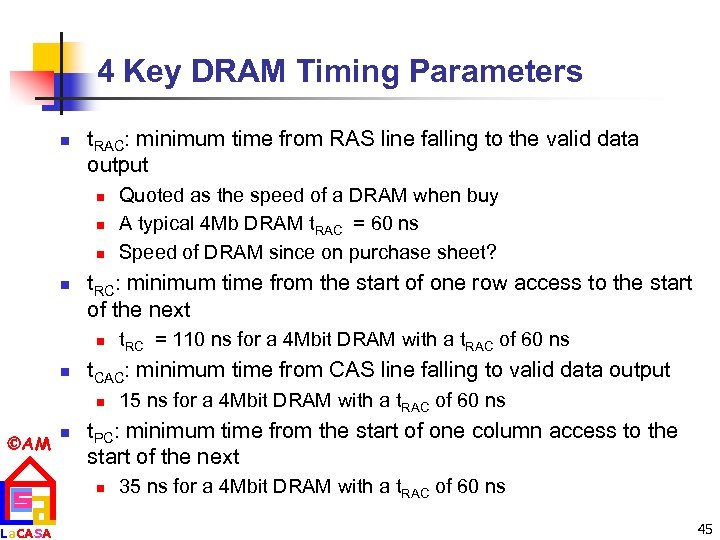

4 Key DRAM Timing Parameters

- tRAC: minimum time from RAS line falling to the valid data output
  - Quoted as the speed of a DRAM when you buy it (the figure on the purchase sheet)
  - A typical 4 Mbit DRAM has tRAC = 60 ns
- tRC: minimum time from the start of one row access to the start of the next
  - tRC = 110 ns for a 4 Mbit DRAM with a tRAC of 60 ns
- tCAC: minimum time from CAS line falling to valid data output
  - 15 ns for a 4 Mbit DRAM with a tRAC of 60 ns
- tPC: minimum time from the start of one column access to the start of the next
  - 35 ns for a 4 Mbit DRAM with a tRAC of 60 ns

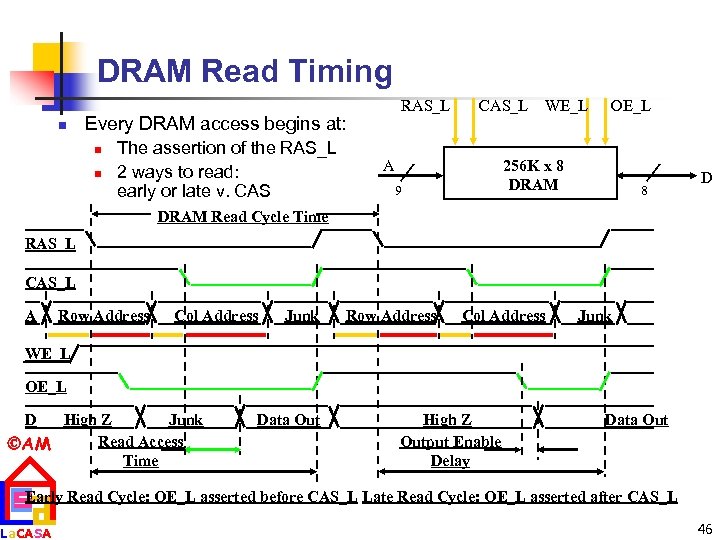

DRAM Read Timing

- Every DRAM access begins at the assertion of RAS_L
- 2 ways to read: early or late relative to CAS_L
  - Early read cycle: OE_L asserted before CAS_L
  - Late read cycle: OE_L asserted after CAS_L

[Figure: timing diagram for a 256K x 8 DRAM (9 address lines A, 8 data lines D) showing RAS_L, CAS_L, A (row address, then column address), WE_L, OE_L and D (high Z, then data out) over one DRAM read cycle, with the read access time and the output enable delay marked.]

DRAM Performance

- A 60 ns (tRAC) DRAM can
  - perform a row access only every 110 ns (tRC)
  - perform a column access (tCAC) in 15 ns, but the time between column accesses is at least 35 ns (tPC)
    - In practice, external address delays and turning around buses make it 40 to 50 ns
- These times do not include the time to drive the addresses off the microprocessor nor the memory controller overhead!

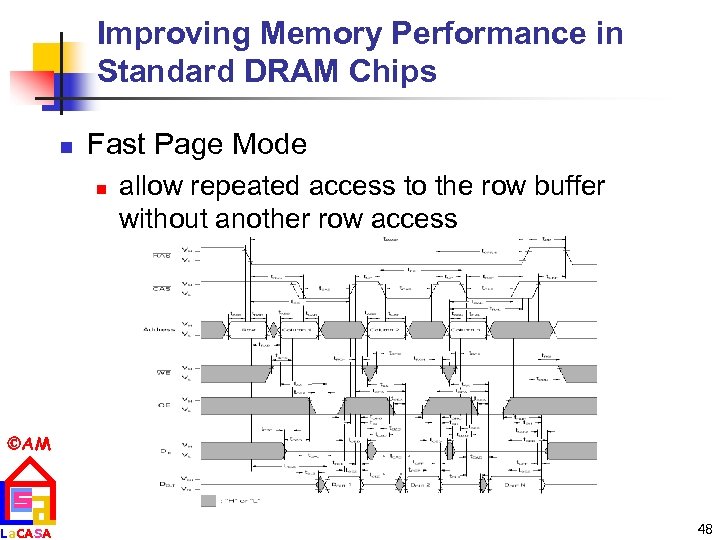

Improving Memory Performance in Standard DRAM Chips

- Fast Page Mode
  - allow repeated access to the row buffer without another row access

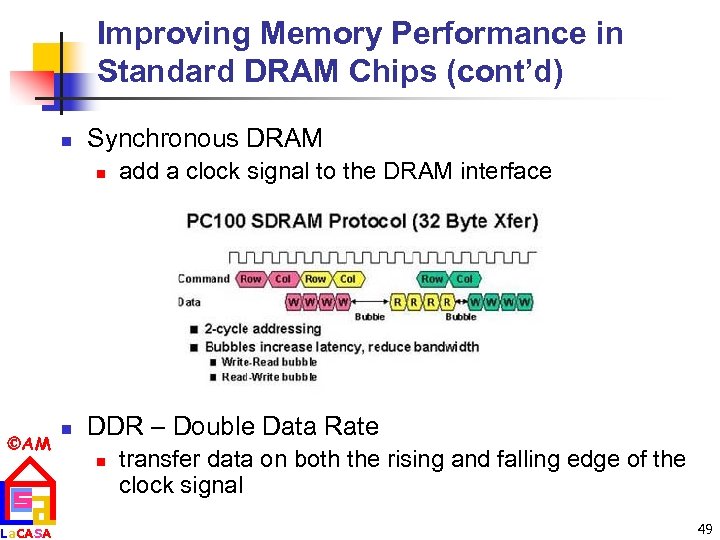

Improving Memory Performance in Standard DRAM Chips (cont'd)

- Synchronous DRAM
  - add a clock signal to the DRAM interface
- DDR (Double Data Rate)
  - transfer data on both the rising and falling edge of the clock signal

Improving Memory Performance via a New DRAM Interface: RAMBUS (cont'd)

- RAMBUS provides a new interface: the memory chip now acts more like a system
- First generation: RDRAM
  - Protocol-based RAM with a narrow (16-bit) bus
    - High clock rate (400 MHz), but long latency
    - Pipelined operation
  - Multiple arrays with data transferred on both edges of the clock
- Second generation: direct RDRAM (DRDRAM) offers up to 1.6 GB/s

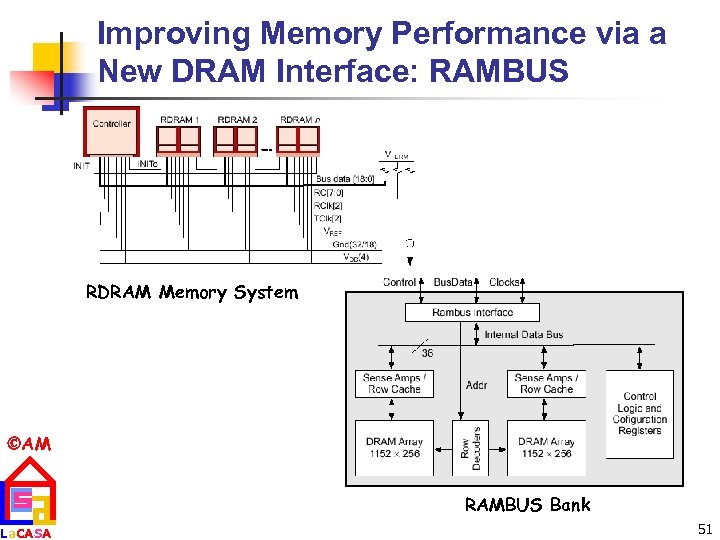

Improving Memory Performance via a New DRAM Interface: RAMBUS

[Figure: RDRAM memory system and RAMBUS bank]
