- Number of slides: 189

Condor Users Tutorial

National e-Science Centre, Edinburgh, Scotland
October 2003

Condor Project
Computer Sciences Department
University of Wisconsin-Madison
condor-admin@cs.wisc.edu
http://www.cs.wisc.edu/condor

The Condor Project (Established ’85)

Distributed High Throughput Computing research performed by a team of ~35 faculty, full-time staff and students who:

- face software engineering challenges in a distributed UNIX/Linux/NT environment,
- are involved in national and international grid collaborations,
- actively interact with academic and commercial users,
- maintain and support large distributed production environments,
- and educate and train students.

Funding: US Govt. (DoD, DoE, NASA, NSF, NIH), AT&T, IBM, INTEL, Microsoft, UW-Madison, …

A Multifaceted Project

- Harnessing the power of clusters, opportunistic and/or dedicated (Condor)
- Job management services for Grid applications (Condor-G, Stork)
- Fabric management services for Grid resources (Condor, GlideIns, NeST)
- Distributed I/O technology (Parrot, Kangaroo, NeST)
- Job-flow management (DAGMan, Condor, Hawk)
- Distributed monitoring and management (HawkEye)
- Technology for Distributed Systems (ClassAd, MW)
- Packaging and Integration (NMI, VDT)

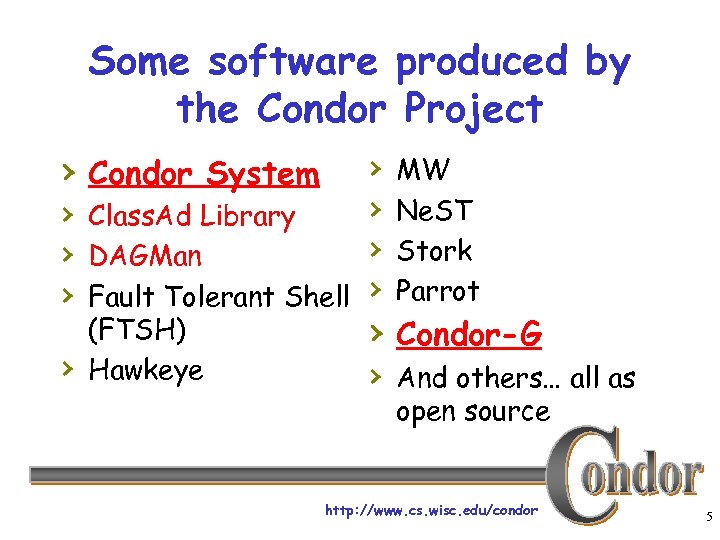

Some software produced by the Condor Project

- Condor System
- ClassAd Library
- DAGMan
- Fault Tolerant Shell (FTSH)
- HawkEye
- MW
- NeST
- Stork
- Parrot
- Condor-G
- And others… all as open source

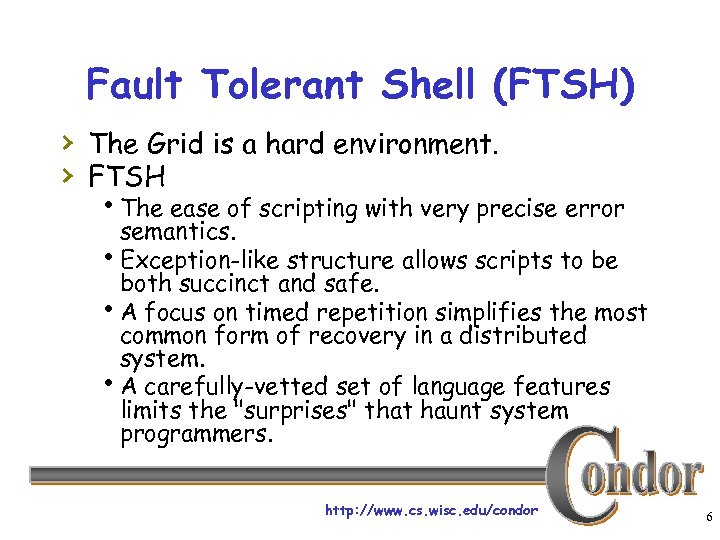

Fault Tolerant Shell (FTSH)

- The Grid is a hard environment.
- FTSH
  - The ease of scripting with very precise error semantics.
  - Exception-like structure allows scripts to be both succinct and safe.
  - A focus on timed repetition simplifies the most common form of recovery in a distributed system.
  - A carefully-vetted set of language features limits the "surprises" that haunt system programmers.

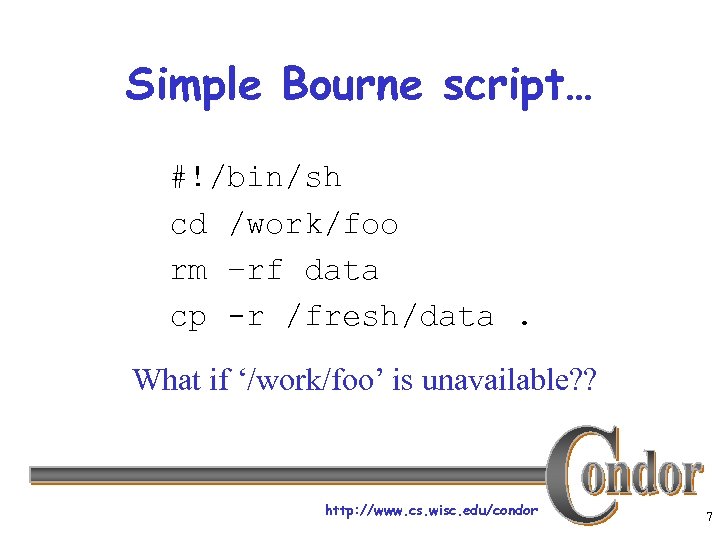

Simple Bourne script…

    #!/bin/sh
    cd /work/foo
    rm -rf data
    cp -r /fresh/data .

What if ‘/work/foo’ is unavailable?

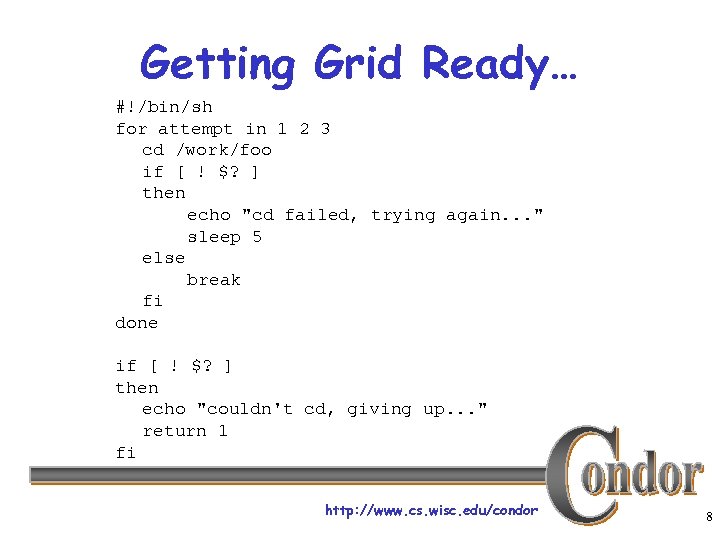

Getting Grid Ready…

    #!/bin/sh
    # Retry the cd up to three times, pausing between attempts.
    ok=0
    for attempt in 1 2 3
    do
        if cd /work/foo
        then
            ok=1
            break
        fi
        echo "cd failed, trying again..."
        sleep 5
    done
    # Give up if no attempt succeeded.
    if [ "$ok" -ne 1 ]
    then
        echo "couldn't cd, giving up..."
        exit 1
    fi

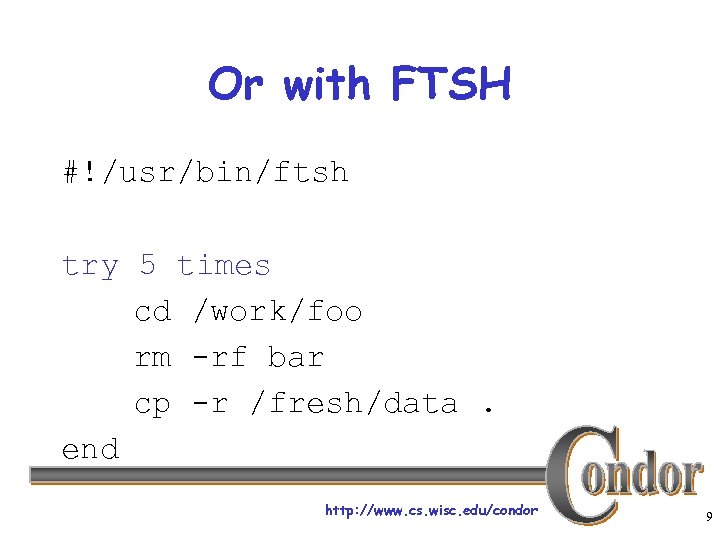

Or with FTSH

    #!/usr/bin/ftsh
    try 5 times
        cd /work/foo
        rm -rf bar
        cp -r /fresh/data .
    end

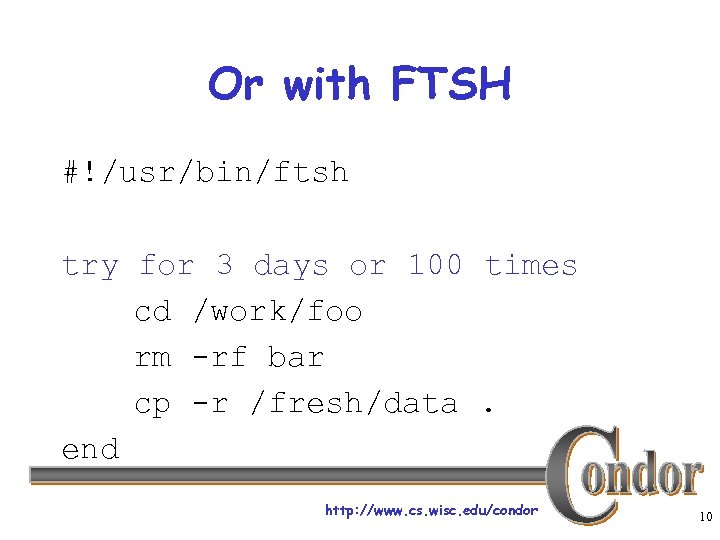

Or with FTSH

    #!/usr/bin/ftsh
    try for 3 days or 100 times
        cd /work/foo
        rm -rf bar
        cp -r /fresh/data .
    end

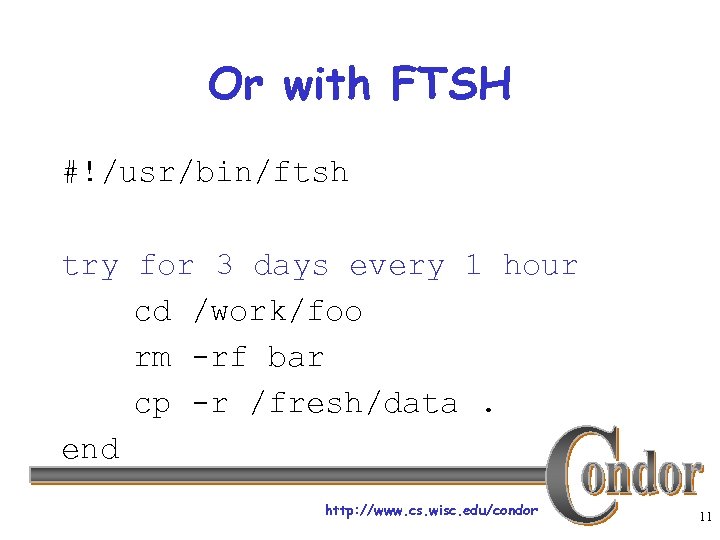

Or with FTSH

    #!/usr/bin/ftsh
    try for 3 days every 1 hour
        cd /work/foo
        rm -rf bar
        cp -r /fresh/data .
    end
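For readers who do not have ftsh at hand, the `try` forms shown on these slides ("5 times", "for 3 days or 100 times", "for 3 days every 1 hour") can be approximated in an ordinary language. The sketch below is only an analogy: `try_for` and its parameters are hypothetical names, it uses a fixed pause between attempts, and it says nothing about how ftsh itself schedules retries.

```python
import time


def try_for(action, max_attempts=None, max_seconds=None, every=0.0,
            clock=time.monotonic, sleep=time.sleep):
    """Call action() until it returns without raising or a limit is hit.

    Hypothetical helper (not part of ftsh or Condor). At least one of
    max_attempts / max_seconds should be given; otherwise an action that
    always fails is retried forever.
    """
    start = clock()
    attempts = 0
    last_error = None
    while True:
        attempts += 1
        try:
            return action()
        except Exception as exc:  # any failure leads to another attempt
            last_error = exc
        if max_attempts is not None and attempts >= max_attempts:
            break
        if max_seconds is not None and clock() - start >= max_seconds:
            break
        sleep(every)  # fixed pause between attempts (an assumption of this sketch)
    raise RuntimeError(f"gave up after {attempts} attempt(s)") from last_error


# Roughly "try for 3 days or 100 times ... every 1 hour" (copy_data is hypothetical):
# try_for(copy_data, max_attempts=100, max_seconds=3 * 24 * 3600, every=3600)
```

The `clock` and `sleep` parameters exist only so the helper can be exercised without real waiting.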

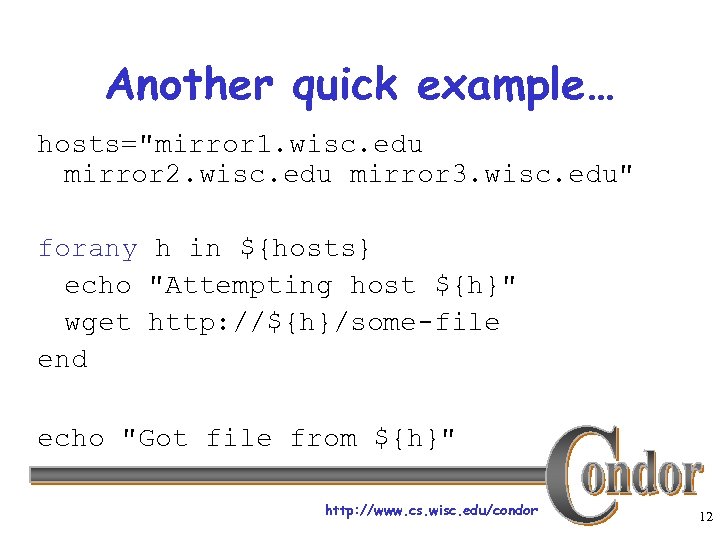

Another quick example…

    hosts="mirror1.wisc.edu mirror2.wisc.edu mirror3.wisc.edu"
    forany h in ${hosts}
        echo "Attempting host ${h}"
        wget http://${h}/some-file
    end
    echo "Got file from ${h}"
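The `forany` idea (try alternatives until one works, then remember which one it was) can be sketched in Python as follows. `for_any` is a hypothetical helper written for this illustration, the alternatives are simply tried in list order, and the fetch function in the usage note is a stand-in rather than a real download.

```python
def for_any(candidates, action):
    """Return (candidate, result) for the first candidate on which action succeeds.

    Hypothetical helper, not an ftsh API. Candidates are tried in the order
    given; if every one fails, a RuntimeError is raised.
    """
    errors = []
    for candidate in candidates:
        try:
            return candidate, action(candidate)
        except Exception as exc:  # remember the failure and move on
            errors.append((candidate, exc))
    raise RuntimeError(f"all {len(errors)} alternatives failed")


# Usage sketch (fetch is a stand-in for something like the wget call on the slide):
# host, data = for_any(["mirror1.wisc.edu", "mirror2.wisc.edu"], fetch)
```

As in the slide, the caller learns which alternative succeeded, which is what makes the final "Got file from ${h}" message possible.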

FTSH

- All the usual constructs
  - Redirection, loops, conditionals, functions, expressions, nesting, …
- And more
  - Logging
  - Timeouts
  - Process Cancellation
  - Complete parsing at startup
  - File cleanup
- Used on Linux, Solaris, Irix, Cygwin, …
- Simplify your life!

More Software…

- HawkEye
  - A monitoring tool
- MW
  - Framework to create a master-worker style application in an opportunistic environment
- NeST
  - Flexible Network Storage appliance
  - “Lots”: reserved space
- Stork
  - A scheduler for grid data placement activities
  - Treat data movement as a “first class citizen”

More Software, cont.

- Parrot
  - Useful in distributed batch systems where one has access to many CPUs, but no consistent distributed filesystem (BYOFS!).
  - Works with any program

    % gv /gsiftp/www.cs.wisc.edu/condor/doc/usenix_1.92.ps
    % grep Yahoo /http/www.yahoo.com

What is Condor?

- Condor converts collections of distributively owned workstations and dedicated clusters into a distributed high-throughput computing (HTC) facility.
- Condor manages both resources (machines) and resource requests (jobs)
- Condor has several unique mechanisms such as:
  - ClassAd Matchmaking
  - Process checkpoint / restart / migration
  - Remote System Calls
  - Grid Awareness

Condor can manage a large number of jobs

- Managing a large number of jobs
  - You specify the jobs in a file and submit them to Condor, which runs them all and keeps you notified on their progress
  - Mechanisms to help you manage huge numbers of jobs (1000’s), all the data, etc.
  - Condor can handle inter-job dependencies (DAGMan)
  - Condor users can set job priorities
  - Condor administrators can set user priorities

Condor can manage Dedicated Resources…

- Dedicated Resources
  - Compute Clusters
- Manage
  - Node monitoring, scheduling
  - Job launch, monitor & cleanup

…and Condor can manage non-dedicated resources

- Non-dedicated resources examples:
  - Desktop workstations in offices
  - Workstations in student labs
- Non-dedicated resources are often idle: ~70% of the time!
- Condor can effectively harness the otherwise wasted compute cycles from non-dedicated resources

Mechanisms in Condor used to harness non-dedicated workstations

- Transparent Process Checkpoint / Restart
- Transparent Process Migration
- Transparent Redirection of I/O (Condor’s Remote System Calls)

What else is Condor Good For?

- Robustness
  - Checkpointing allows guaranteed forward progress of your jobs, even jobs that run for weeks before completion
  - If an execute machine crashes, you only lose work done since the last checkpoint
  - Condor maintains a persistent job queue: if the submit machine crashes, Condor will recover

What else is Condor Good For? (cont’d)

- Giving you access to more computing resources
  - Dedicated compute cluster workstations
  - Non-dedicated workstations
  - Resources at other institutions
    - Remote Condor Pools via Condor Flocking
    - Remote resources via Globus Grid protocols

What is ClassAd Matchmaking?

- Condor uses ClassAd Matchmaking to make sure that work gets done within the constraints of both users and owners.
- Users (jobs) have constraints:
  - “I need an Alpha with 256 MB RAM”
- Owners (machines) have constraints:
  - “Only run jobs when I am away from my desk and never run jobs owned by Bob.”
- Semi-structured data: no fixed schema
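The two-sided nature of matchmaking can be illustrated with a small Python sketch. This is not the ClassAd language or Condor's matchmaker; the dictionaries, the attribute names (`arch`, `memory_mb`, `keyboard_idle_s`) and the 15-minute idle threshold are all assumptions chosen to mirror the two quoted constraints.

```python
def matches(job, machine):
    """A match needs BOTH sides' requirements to hold (illustrative only)."""
    return (job["requirements"](job, machine)
            and machine["requirements"](machine, job))


# "I need an Alpha with 256 MB RAM"
job = {
    "owner": "frieda",
    "requirements": lambda me, m: m["arch"] == "ALPHA" and m["memory_mb"] >= 256,
}

# "Only run jobs when I am away from my desk and never run jobs owned by Bob."
# Being "away" is modelled as 15 minutes of keyboard idle time (an assumption).
machine = {
    "arch": "ALPHA",
    "memory_mb": 512,
    "keyboard_idle_s": 1200,
    "requirements": lambda me, j: me["keyboard_idle_s"] > 900 and j["owner"] != "bob",
}

bobs_job = dict(job, owner="bob")
small_machine = dict(machine, memory_mb=128)

print(matches(job, machine))        # both sides satisfied
print(matches(bobs_job, machine))   # rejected by the machine owner's policy
print(matches(job, small_machine))  # rejected by the job's requirements
```

Because each ad is just a bag of attributes plus an expression over the other side's attributes, neither side needs a fixed schema, which is the point of the last bullet.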

Some HTC Challenges

- Condor does whatever it takes to run your jobs, even if some machines…
  - Crash (or are disconnected)
  - Run out of disk space
  - Don’t have your software installed
  - Are frequently needed by others
  - Are far away & managed by someone else

The Condor System

- Unix and NT
- Operational since 1986
- More than 400 pools installed, managing more than 17000 CPUs worldwide.
- More than 1800 CPUs in 10 pools on our campus
- Software available free on the web
  - Open license
- Adopted by the “real world” (Galileo, Maxtor, Micron, Oracle, Tigr, CORE…)

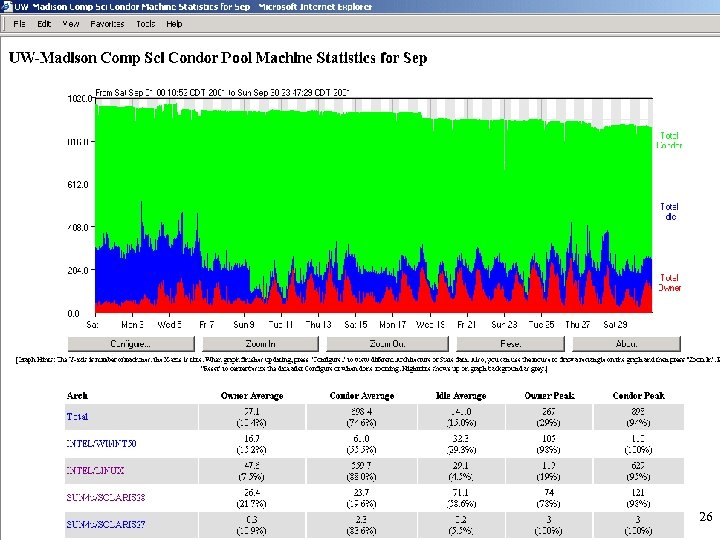


Globus Toolkit

- The Globus Toolkit is an open source implementation of Grid-related protocols & middleware services designed by the Globus Project and collaborators
  - Remote job execution, security infrastructure, directory services, data transfer, …

The Condor Project and the Grid …

- Close collaboration and coordination with the Globus Project: joint development, adoption of common protocols, technology exchange, …
- Partner in major national Grid R&D² (Research, Development and Deployment) efforts (GriPhyN, iVDGL, IPG, TeraGrid)
- Close collaboration with Grid projects in Europe (EDG, GridLab, e-Science)

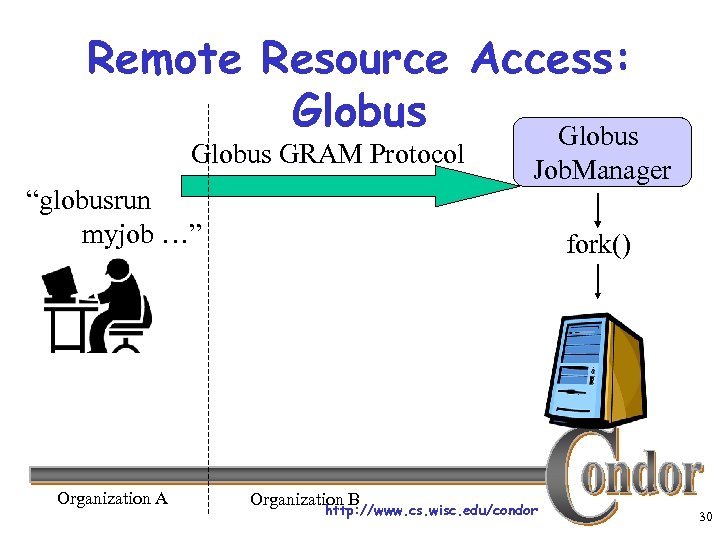

Remote Resource Access: Globus

[Diagram: “globusrun myjob …” at Organization A reaches the JobManager at Organization B over the Globus GRAM Protocol; the JobManager calls fork()]

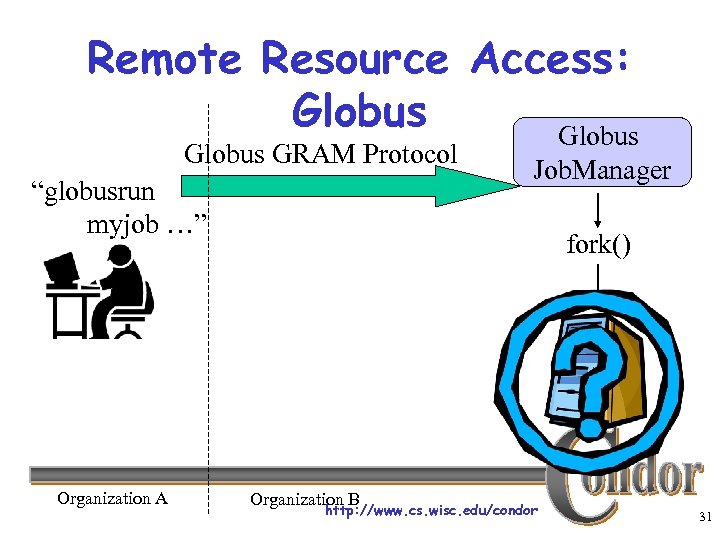


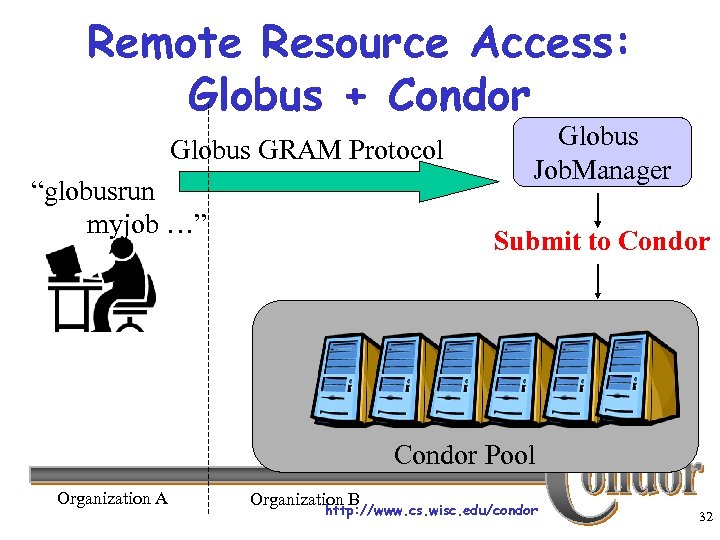

Remote Resource Access: Globus + Condor

[Diagram: “globusrun myjob …” at Organization A reaches the Globus JobManager at Organization B over the Globus GRAM Protocol; the JobManager submits to a Condor Pool]

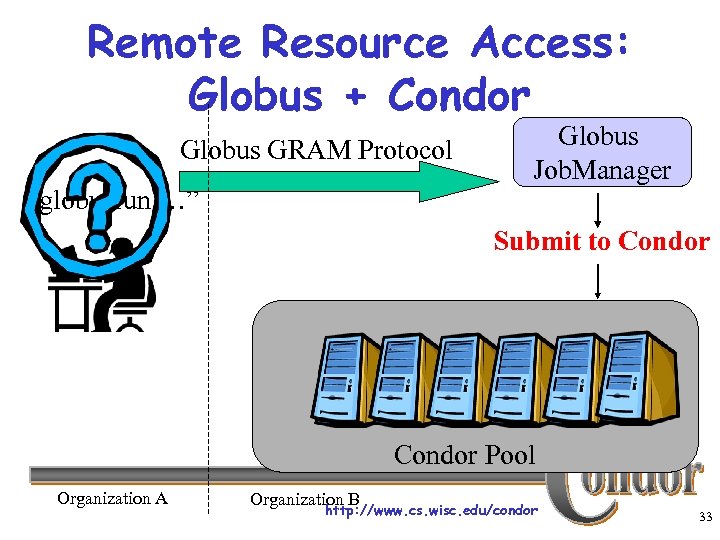

Remote Resource Access: Globus + Condor

[Diagram: “globusrun …” at Organization A reaches the Globus JobManager at Organization B over the Globus GRAM Protocol; the JobManager submits to a Condor Pool]

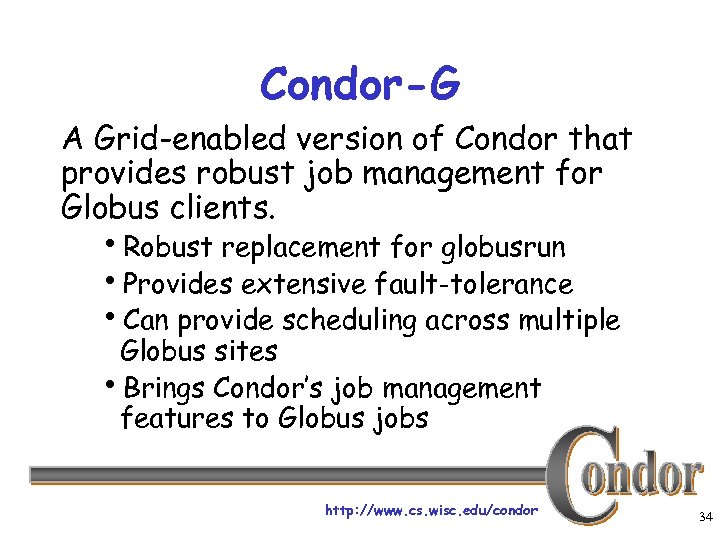

Condor-G

A Grid-enabled version of Condor that provides robust job management for Globus clients.

- Robust replacement for globusrun
- Provides extensive fault-tolerance
- Can provide scheduling across multiple Globus sites
- Brings Condor’s job management features to Globus jobs

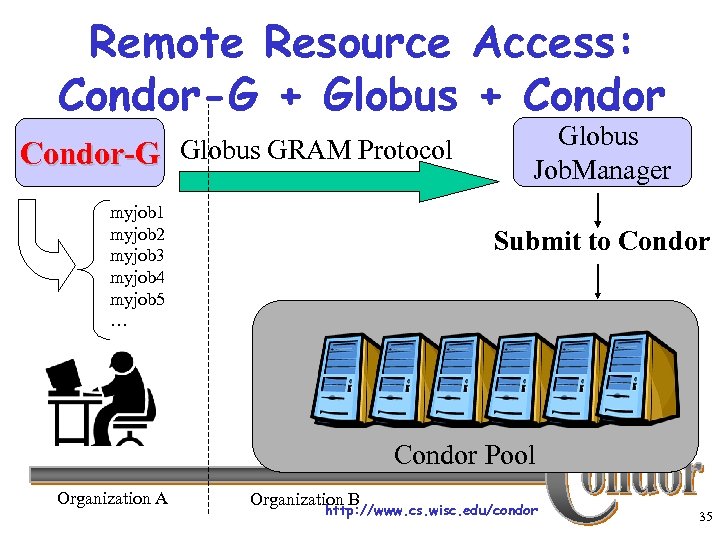

Remote Resource Access: Condor-G + Globus + Condor-G

[Diagram: a queue of jobs (myjob1, myjob2, myjob3, myjob4, myjob5, …) at Organization A reaches the Globus JobManager at Organization B over the Globus GRAM Protocol; the JobManager submits to a Condor Pool]

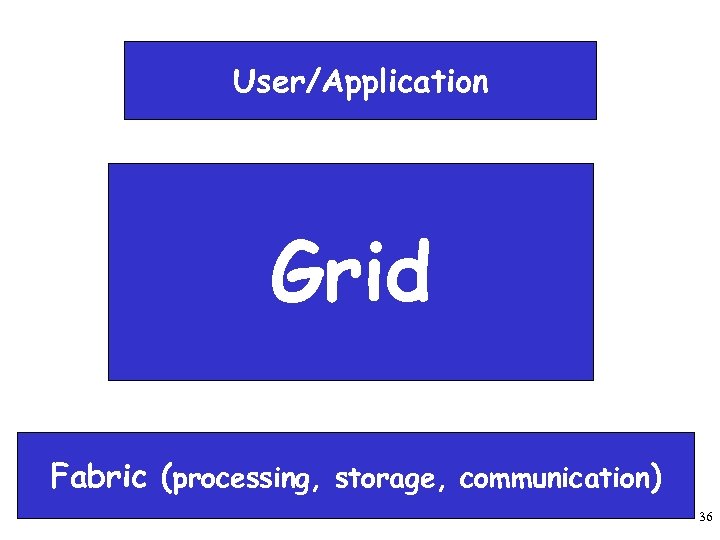

[Diagram labels, top to bottom: User/Application; Grid; Fabric (processing, storage, communication)]

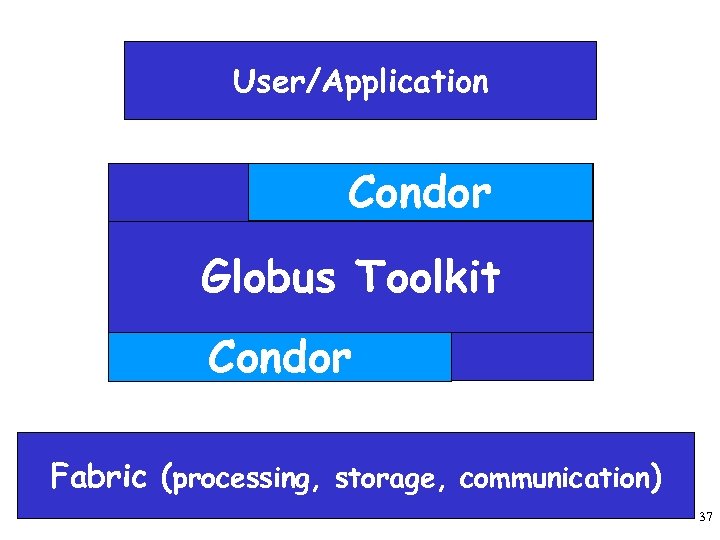

[Diagram labels, top to bottom: User/Application; Condor; Grid; Globus Toolkit; Condor; Fabric (processing, storage, communication)]

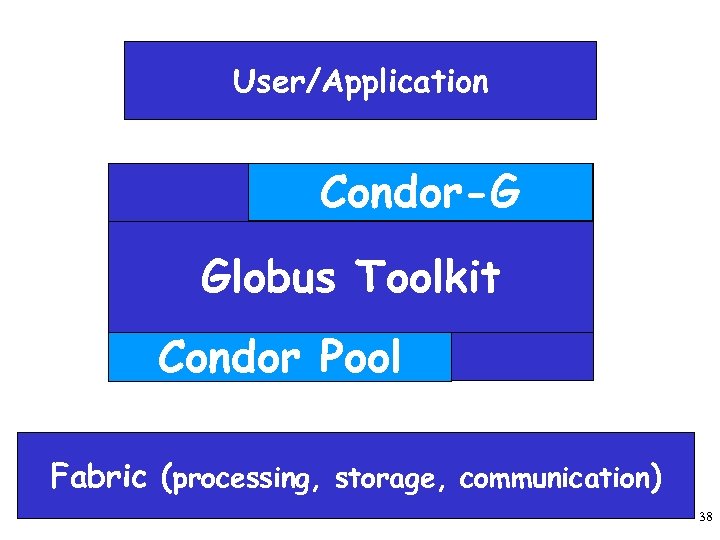

[Diagram labels, top to bottom: User/Application; Condor-G; Grid; Globus Toolkit; Condor Pool; Fabric (processing, storage, communication)]

The Idea

Computing power is everywhere; we try to make it usable by anyone.

Meet Frieda. She is a scientist. But she has a big problem.

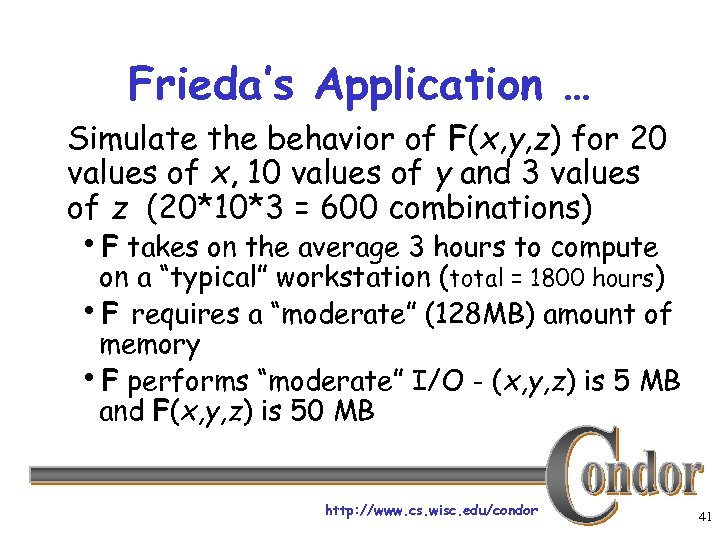

Frieda’s Application …

Simulate the behavior of F(x, y, z) for 20 values of x, 10 values of y and 3 values of z (20*10*3 = 600 combinations)

- F takes on average 3 hours to compute on a “typical” workstation (total = 1800 hours)
- F requires a “moderate” (128 MB) amount of memory
- F performs “moderate” I/O: (x, y, z) is 5 MB and F(x, y, z) is 50 MB
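The arithmetic behind these figures is easy to check, and it also shows the totals the slide leaves implicit. The short Python calculation below uses only the numbers quoted above; the variable names are just for illustration, and the "one workstation running around the clock" figure is a derived estimate, not a number from the tutorial.

```python
# Parameter sweep size: 20 values of x, 10 of y, 3 of z.
n_x, n_y, n_z = 20, 10, 3
runs = n_x * n_y * n_z                # number of (x, y, z) combinations

hours_per_run = 3                     # average compute time on a "typical" workstation
cpu_hours = runs * hours_per_run      # total compute time

input_mb_per_run = 5                  # size of (x, y, z)
output_mb_per_run = 50                # size of F(x, y, z)
total_input_mb = runs * input_mb_per_run
total_output_mb = runs * output_mb_per_run

# Derived estimate: one workstation running the jobs back to back, 24 hours a day.
days_on_one_machine = cpu_hours / 24

print(runs, cpu_hours, total_input_mb, total_output_mb, days_on_one_machine)
```

In other words, the sweep needs about 3 GB of input, produces about 30 GB of output, and would keep a single workstation busy for 75 days.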

I have 600 simulations to run. Where can I get help?

Install a Personal Condor!

Installing Condor

- Download Condor for your operating system
- Available as a free download from http://www.cs.wisc.edu/condor
- Stable vs. Developer Releases
  - Naming scheme similar to the Linux Kernel…
- Available for most Unix platforms and Windows NT

So Frieda Installs Personal Condor on her machine…

- What do we mean by a “Personal” Condor?
  - Condor on your own workstation, no root access required, no system administrator intervention needed
- So after installation, Frieda submits her jobs to her Personal Condor…

[Diagram: 600 Condor jobs held by the personal Condor on your workstation]

Personal Condor?!

What’s the benefit of a Condor “Pool” with just one user and one machine?

Your Personal Condor will...

- … keep an eye on your jobs and will keep you posted on their progress
- … implement your policy on the execution order of the jobs
- … keep a log of your job activities
- … add fault tolerance to your jobs
- … implement your policy on when the jobs can run on your workstation

Getting Started: Submitting Jobs to Condor

- Choosing a “Universe” for your job
  - Just use VANILLA for now
- Make your job “batch-ready”
- Creating a submit description file
- Run condor_submit on your submit description file

Making your job ready

- Must be able to run in the background: no interactive input, windows, GUI, etc.
- Can still use STDIN, STDOUT, and STDERR (the keyboard and the screen), but files are used for these instead of the actual devices
- Organize data files

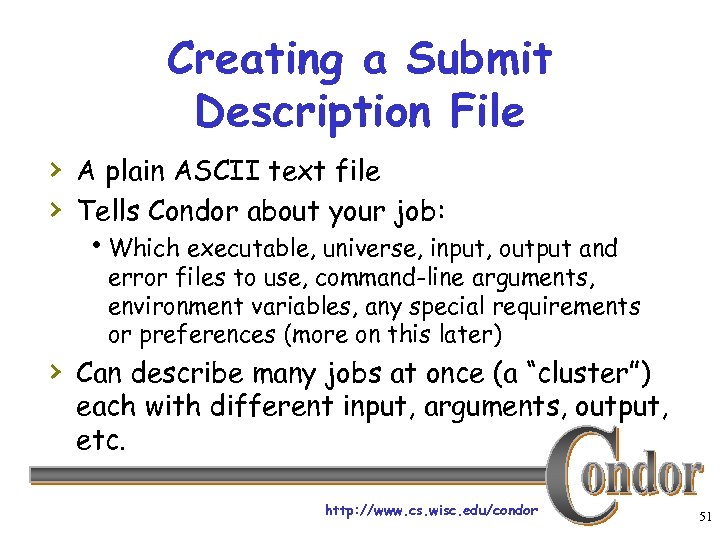

Creating a Submit Description File

- A plain ASCII text file
- Tells Condor about your job:
  - Which executable, universe, input, output and error files to use, command-line arguments, environment variables, any special requirements or preferences (more on this later)
- Can describe many jobs at once (a “cluster”), each with different input, arguments, output, etc.

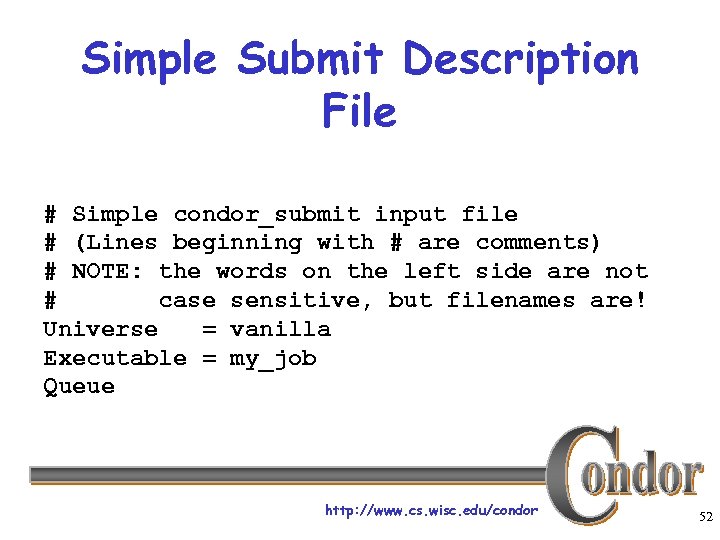

Simple Submit Description File

    # Simple condor_submit input file
    # (Lines beginning with # are comments)
    # NOTE: the words on the left side are not
    #       case sensitive, but filenames are!
    Universe   = vanilla
    Executable = my_job
    Queue

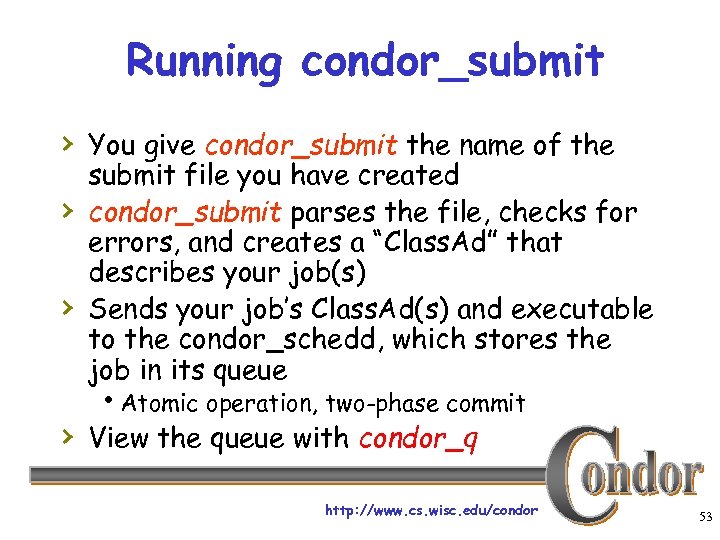

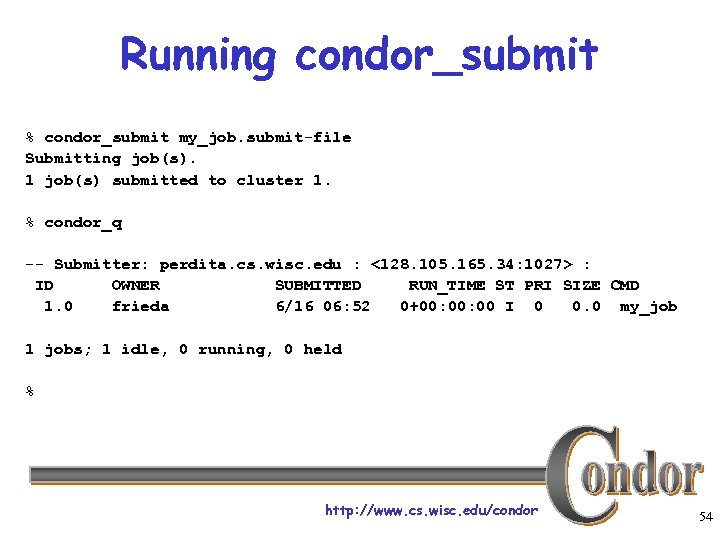

Running condor_submit

- You give condor_submit the name of the submit file you have created
- condor_submit parses the file, checks for errors, and creates a “ClassAd” that describes your job(s)
- Sends your job’s ClassAd(s) and executable to the condor_schedd, which stores the job in its queue
  - Atomic operation, two-phase commit
- View the queue with condor_q

Running condor_submit

    % condor_submit my_job.submit-file
    Submitting job(s).
    1 job(s) submitted to cluster 1.

    % condor_q

    -- Submitter: perdita.cs.wisc.edu : <128.105.165.34:1027> :
     ID      OWNER    SUBMITTED    RUN_TIME   ST PRI SIZE CMD
     1.0     frieda   6/16 06:52   0+00:00    I  0   0.0  my_job

    1 jobs; 1 idle, 0 running, 0 held

    %

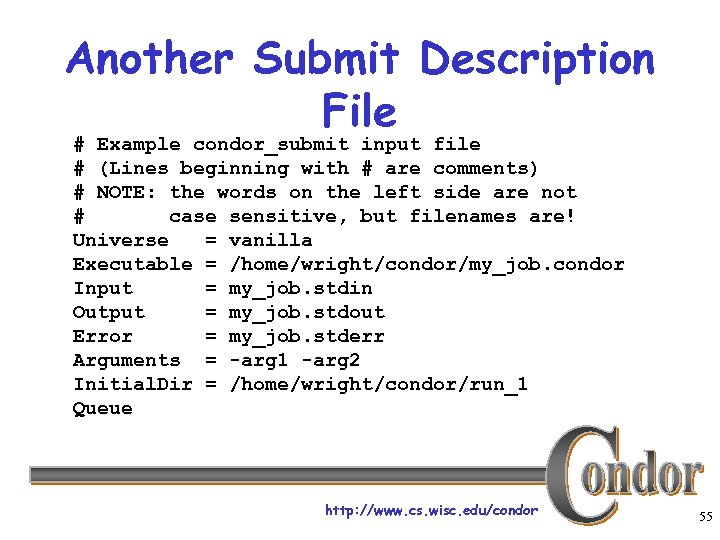

Another Submit Description File

    # Example condor_submit input file
    # (Lines beginning with # are comments)
    # NOTE: the words on the left side are not
    #       case sensitive, but filenames are!
    Universe   = vanilla
    Executable = /home/wright/condor/my_job.condor
    Input      = my_job.stdin
    Output     = my_job.stdout
    Error      = my_job.stderr
    Arguments  = -arg1 -arg2
    InitialDir = /home/wright/condor/run_1
    Queue

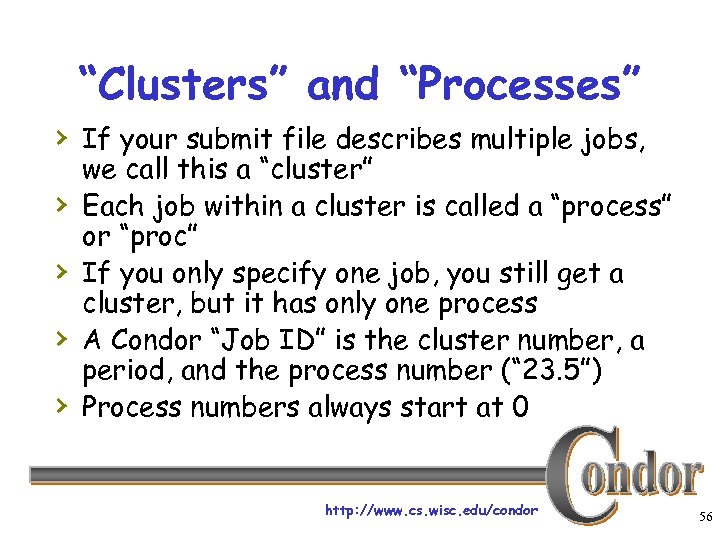

“Clusters” and “Processes”

- If your submit file describes multiple jobs, we call this a “cluster”
- Each job within a cluster is called a “process” or “proc”
- If you only specify one job, you still get a cluster, but it has only one process
- A Condor “Job ID” is the cluster number, a period, and the process number (“23.5”)
- Process numbers always start at 0

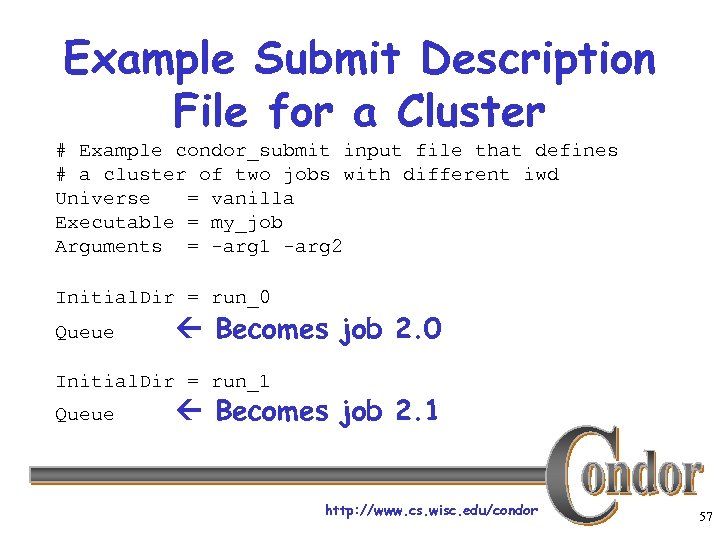

Example Submit Description File for a Cluster

    # Example condor_submit input file that defines
    # a cluster of two jobs with different iwd
    Universe   = vanilla
    Executable = my_job
    Arguments  = -arg1 -arg2
    InitialDir = run_0
    Queue
    # Becomes job 2.0
    InitialDir = run_1
    Queue
    # Becomes job 2.1

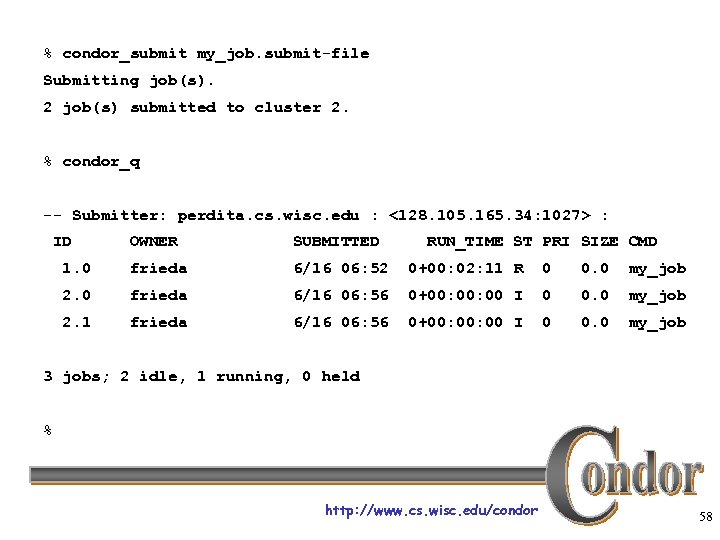

    % condor_submit my_job.submit-file
    Submitting job(s).
    2 job(s) submitted to cluster 2.

    % condor_q

    -- Submitter: perdita.cs.wisc.edu : <128.105.165.34:1027> :
     ID      OWNER    SUBMITTED    RUN_TIME   ST PRI SIZE CMD
     1.0     frieda   6/16 06:52   0+00:02:11 R  0   0.0  my_job
     2.0     frieda   6/16 06:56   0+00:00    I  0   0.0  my_job
     2.1     frieda   6/16 06:56   0+00:00    I  0   0.0  my_job

    3 jobs; 2 idle, 1 running, 0 held

    %

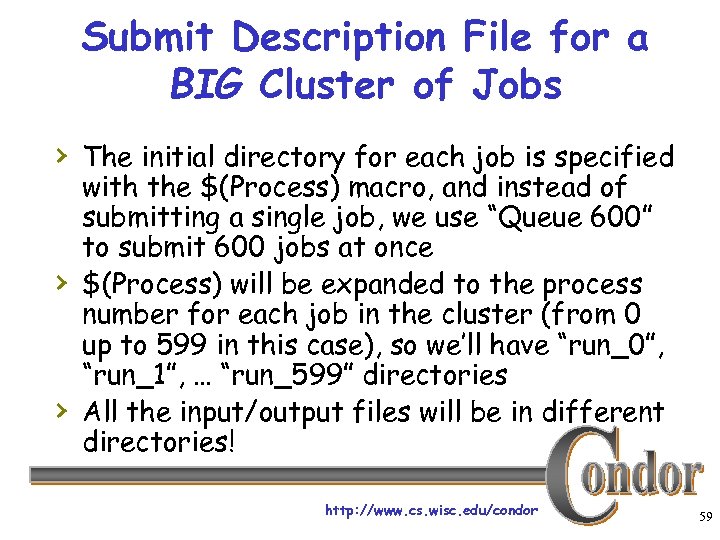

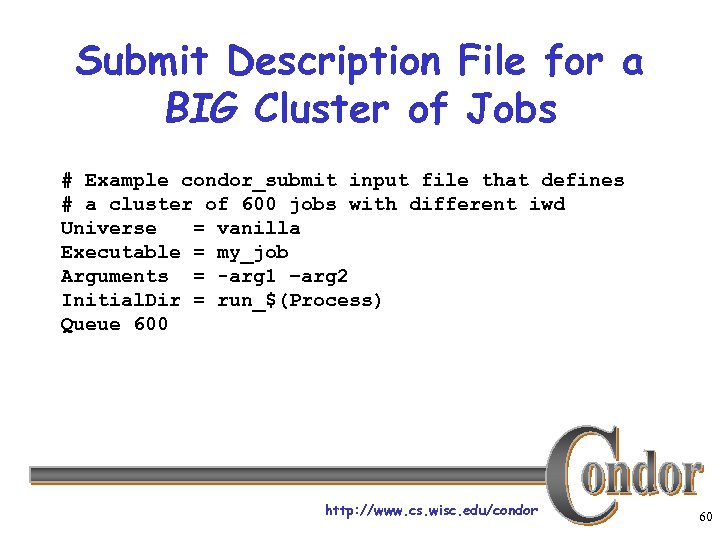

Submit Description File for a BIG Cluster of Jobs

- The initial directory for each job is specified with the $(Process) macro, and instead of submitting a single job, we use “Queue 600” to submit 600 jobs at once
- $(Process) will be expanded to the process number for each job in the cluster (from 0 up to 599 in this case), so we’ll have “run_0”, “run_1”, … “run_599” directories
- All the input/output files will be in different directories!

Submit Description File for a BIG Cluster of Jobs

    # Example condor_submit input file that defines
    # a cluster of 600 jobs with different iwd
    Universe   = vanilla
    Executable = my_job
    Arguments  = -arg1 -arg2
    InitialDir = run_$(Process)
    Queue 600
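A submit file like this one relies on the per-job directories already being there when the jobs run. One way to prepare them is sketched below in Python; `make_run_dirs` is a hypothetical helper, and the sketch assumes the directories live next to the submit file and that any per-job input files are copied in separately.

```python
import tempfile
from pathlib import Path


def make_run_dirs(base, count):
    """Create base/run_0 ... base/run_{count-1} and return their paths.

    Hypothetical helper: the names mirror InitialDir = run_$(Process),
    where $(Process) runs from 0 to count-1.
    """
    base = Path(base)
    dirs = []
    for proc in range(count):
        run_dir = base / f"run_{proc}"
        run_dir.mkdir(parents=True, exist_ok=True)  # safe to re-run
        dirs.append(run_dir)
    return dirs


if __name__ == "__main__":
    # Demonstrate in a throwaway directory rather than the real working directory.
    with tempfile.TemporaryDirectory() as tmp:
        created = make_run_dirs(tmp, 600)
        print(created[0].name, "...", created[-1].name)
```

Keeping one directory per process means 600 jobs can all use the same input and output file names without overwriting each other.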

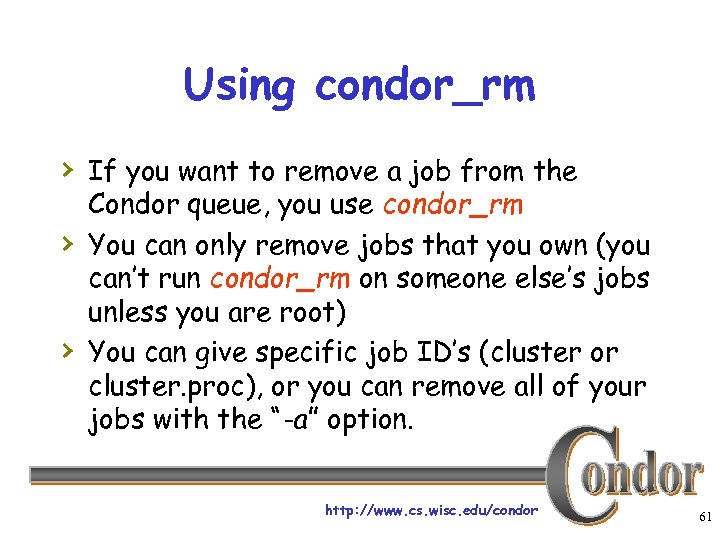

Using condor_rm

- If you want to remove a job from the Condor queue, you use condor_rm
- You can only remove jobs that you own (you can’t run condor_rm on someone else’s jobs unless you are root)
- You can give specific job IDs (cluster or cluster.proc), or you can remove all of your jobs with the “-a” option.

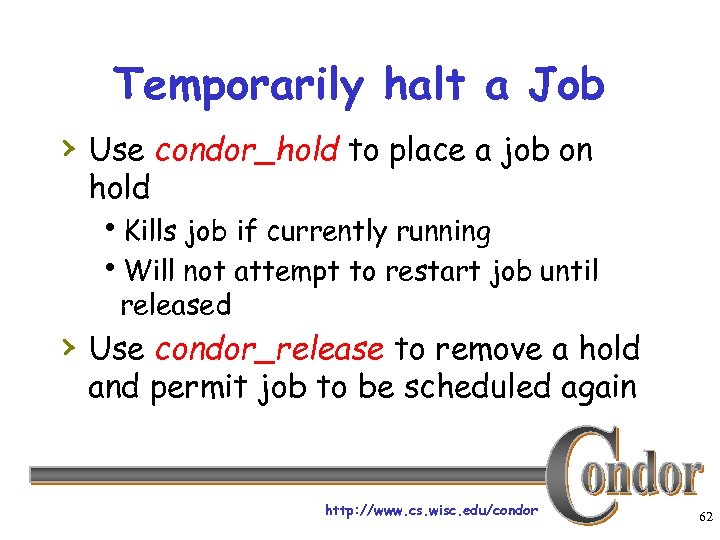

Temporarily halt a Job

- Use condor_hold to place a job on hold
  - Kills job if currently running
  - Will not attempt to restart job until released
- Use condor_release to remove a hold and permit job to be scheduled again

Using condor_history

- Once your job completes, it will no longer show up in condor_q
- You can use condor_history to view information about a completed job
- The status field (“ST”) will have either a “C” for “completed”, or an “X” if the job was removed with condor_rm

Getting Email from Condor

- By default, Condor will send you email when your job completes
  - With lots of information about the run
- If you don’t want this email, put this in your submit file:

    notification = never

- If you want email every time something happens to your job (preempt, exit, etc.), use this:

    notification = always

Getting Email from Condor (cont’d)

- If you only want email in case of errors, use this:

    notification = error

- By default, the email is sent to your account on the host you submitted from. If you want the email to go to a different address, use this:

    notify_user = email@address.here

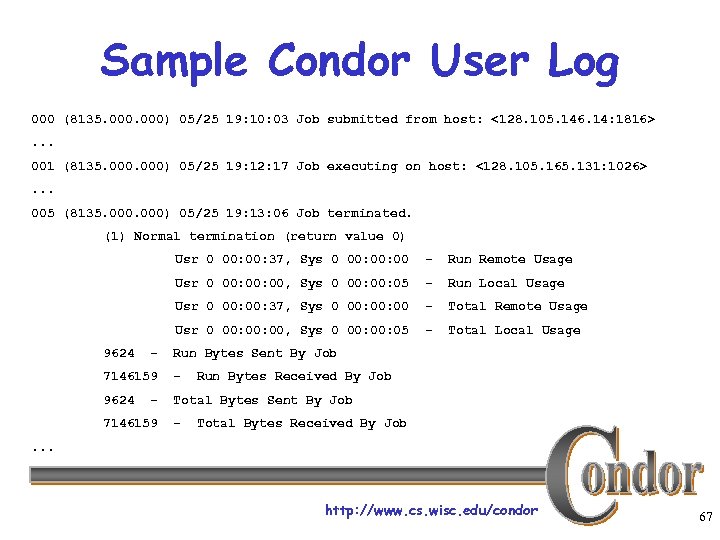

A Job’s life story: The “User Log” file

- A UserLog must be specified in your submit file:
  - Log = filename
- You get a log entry for everything that happens to your job:
  - When it was submitted, when it starts executing, is preempted, is restarted, completes, if there are any problems, etc.
- Very useful! Highly recommended!

Sample Condor User Log

    000 (8135.000) 05/25 19:10:03 Job submitted from host: <128.105.146.14:1816>
    ...
    001 (8135.000) 05/25 19:12:17 Job executing on host: <128.105.165.131:1026>
    ...
    005 (8135.000) 05/25 19:13:06 Job terminated.
            (1) Normal termination (return value 0)
                    Usr 0 00:37, Sys 0 00:00  -  Run Remote Usage
                    Usr 0 00:00, Sys 0 00:05  -  Run Local Usage
                    Usr 0 00:37, Sys 0 00:00  -  Total Remote Usage
                    Usr 0 00:00, Sys 0 00:05  -  Total Local Usage
            9624  -  Run Bytes Sent By Job
            7146159  -  Run Bytes Received By Job
            9624  -  Total Bytes Sent By Job
            7146159  -  Total Bytes Received By Job
    ...
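Because each event in the sample log starts with a fixed-shape header line (event code, job ID in parentheses, date, time, message), a few lines of Python are enough to pull the events out. This is a minimal sketch that assumes headers shaped like the ones in the sample above; it is not the C++ library or Perl module mentioned in the tutorial, and `parse_events` and the field names are hypothetical.

```python
import re

# Header shape assumed from the sample: "005 (8135.000) 05/25 19:13:06 Job terminated."
# An optional third job-ID component is tolerated in case a log carries one.
EVENT_HEADER = re.compile(
    r"^(\d{3}) \((\d+)\.(\d+)(?:\.(\d+))?\) (\d{2}/\d{2}) (\d{2}:\d{2}:\d{2}) (.*)$",
    re.MULTILINE,
)


def parse_events(text):
    """Return one dict per event header line found in a user-log string."""
    events = []
    for m in EVENT_HEADER.finditer(text):
        events.append({
            "code": m.group(1),
            "cluster": int(m.group(2)),
            "proc": int(m.group(3)),
            "date": m.group(5),
            "time": m.group(6),
            "message": m.group(7).strip(),
        })
    return events


SAMPLE = """\
000 (8135.000) 05/25 19:10:03 Job submitted from host: <128.105.146.14:1816>
...
001 (8135.000) 05/25 19:12:17 Job executing on host: <128.105.165.131:1026>
...
005 (8135.000) 05/25 19:13:06 Job terminated.
\t(1) Normal termination (return value 0)
...
"""

for event in parse_events(SAMPLE):
    print(event["code"], event["time"], event["message"])
```

Indented detail lines and the `...` separators do not match the header pattern, so they are skipped rather than mistaken for events.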

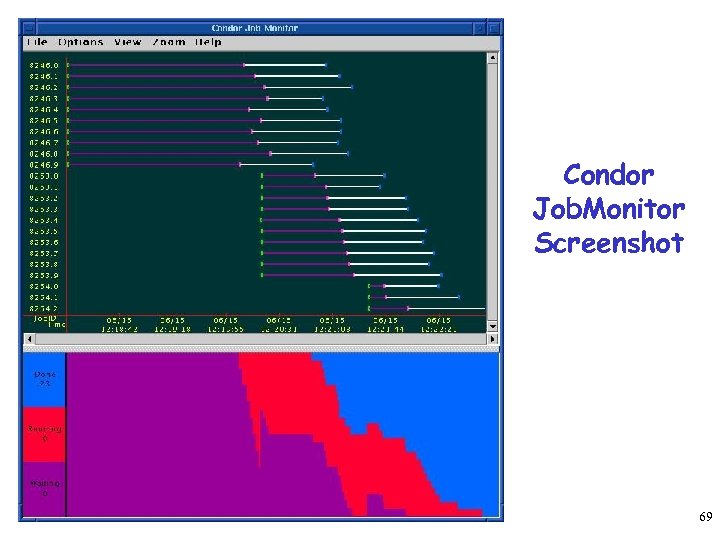

Uses for the User Log

- Easily read by human or machine
  - A C++ library and a Perl module for parsing UserLogs are available
  - log_xml = True gives XML-formatted output
- Event triggers for meta-schedulers
  - Like DAGMan…
- Visualizations of job progress
  - Condor JobMonitor Viewer

Condor JobMonitor Screenshot

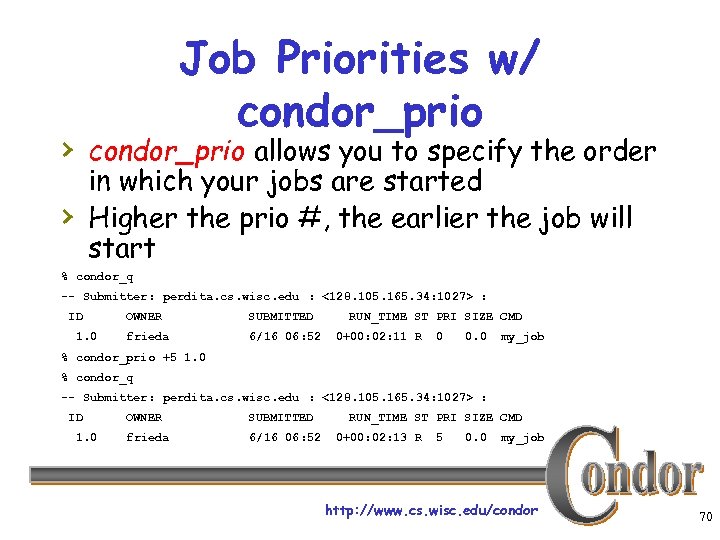

Job Priorities w/ condor_prio

- condor_prio allows you to specify the order in which your jobs are started
- The higher the prio #, the earlier the job will start

    % condor_q

    -- Submitter: perdita.cs.wisc.edu : <128.105.165.34:1027> :
     ID      OWNER    SUBMITTED    RUN_TIME   ST PRI SIZE CMD
     1.0     frieda   6/16 06:52   0+00:02:11 R  0   0.0  my_job

    % condor_prio +5 1.0

    % condor_q

    -- Submitter: perdita.cs.wisc.edu : <128.105.165.34:1027> :
     ID      OWNER    SUBMITTED    RUN_TIME   ST PRI SIZE CMD
     1.0     frieda   6/16 06:52   0+00:02:13 R  5   0.0  my_job

Want other Scheduling possibilities? Use the Scheduler Universe

- In addition to VANILLA, another job universe is the Scheduler Universe.
- Scheduler Universe jobs run on the submitting machine and serve as a meta-scheduler.
- DAGMan meta-scheduler included

DAGMan

- Directed Acyclic Graph Manager
- DAGMan allows you to specify the dependencies between your Condor jobs, so it can manage them automatically for you.
- (e.g., “Don’t run job ‘B’ until job ‘A’ has completed successfully.”)

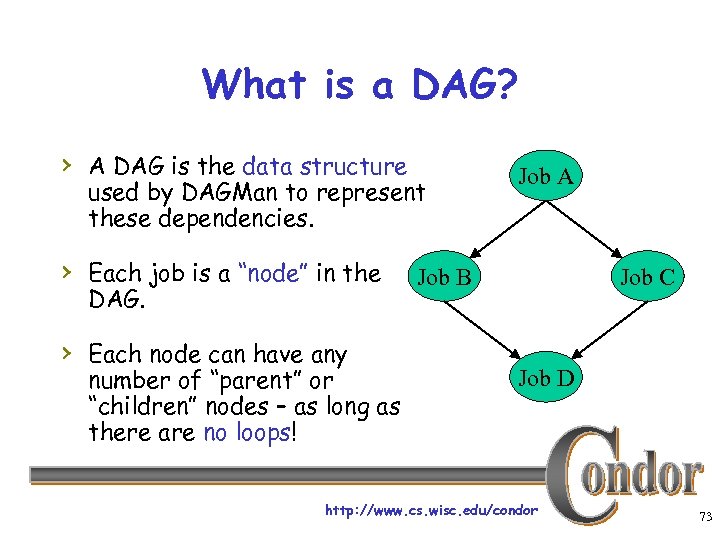

What is a DAG?

- A DAG is the data structure used by DAGMan to represent these dependencies.
- Each job is a “node” in the DAG.
- Each node can have any number of “parent” or “children” nodes, as long as there are no loops!

[Diagram: a diamond-shaped DAG with nodes Job A, Job B, Job C, Job D]

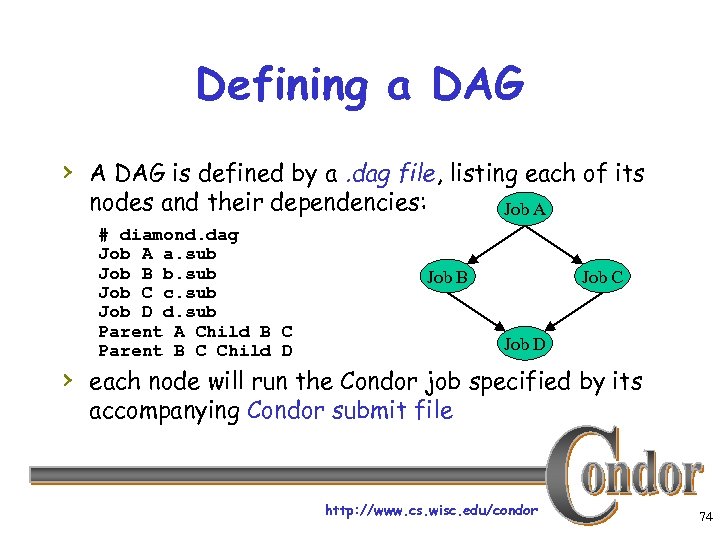

Defining a DAG

- A DAG is defined by a .dag file, listing each of its nodes and their dependencies:

    # diamond.dag
    Job A a.sub
    Job B b.sub
    Job C c.sub
    Job D d.sub
    Parent A Child B C
    Parent B C Child D

- Each node will run the Condor job specified by its accompanying Condor submit file

[Diagram: the diamond DAG with nodes Job A, Job B, Job C, Job D]
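To see what the `Parent … Child …` lines imply for execution order, the diamond file can be read into a dependency map and walked in topological order. The Python sketch below uses the standard-library `graphlib` module (Python 3.9+); `parse_dag` and `waves` are hypothetical helpers that understand only the `Job` and `Parent/Child` lines shown here, and this is not how DAGMan itself is implemented.

```python
from graphlib import TopologicalSorter

DIAMOND = """\
# diamond.dag
Job A a.sub
Job B b.sub
Job C c.sub
Job D d.sub
Parent A Child B C
Parent B C Child D
"""


def parse_dag(text):
    """Return (jobs, parents_of) from the subset of .dag syntax shown above."""
    jobs, parents_of = {}, {}
    for line in text.splitlines():
        words = line.split()
        if not words or words[0].startswith("#"):
            continue  # skip blank lines and comments
        keyword = words[0].lower()
        if keyword == "job":
            jobs[words[1]] = words[2]          # node name -> submit file
            parents_of.setdefault(words[1], set())
        elif keyword == "parent":
            split = [w.lower() for w in words].index("child")
            for child in words[split + 1:]:
                parents_of.setdefault(child, set()).update(words[1:split])
    return jobs, parents_of


def waves(parents_of):
    """Group nodes into waves; a wave can start once earlier waves are done."""
    sorter = TopologicalSorter(parents_of)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a loop
    result = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        result.append(ready)
        sorter.done(*ready)
    return result


jobs, parents_of = parse_dag(DIAMOND)
print(waves(parents_of))
```

For the diamond, A runs alone, B and C become eligible together once A is done, and D waits for both. The "no loops" rule is what makes such an ordering exist at all.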

Submitting a DAG

- To start your DAG, just run condor_submit_dag with your .dag file, and Condor will start a personal DAGMan daemon to begin running your jobs:

    % condor_submit_dag diamond.dag

- condor_submit_dag submits a Scheduler Universe Job with DAGMan as the executable.
- Thus the DAGMan daemon itself runs as a Condor job, so you don’t have to baby-sit it.

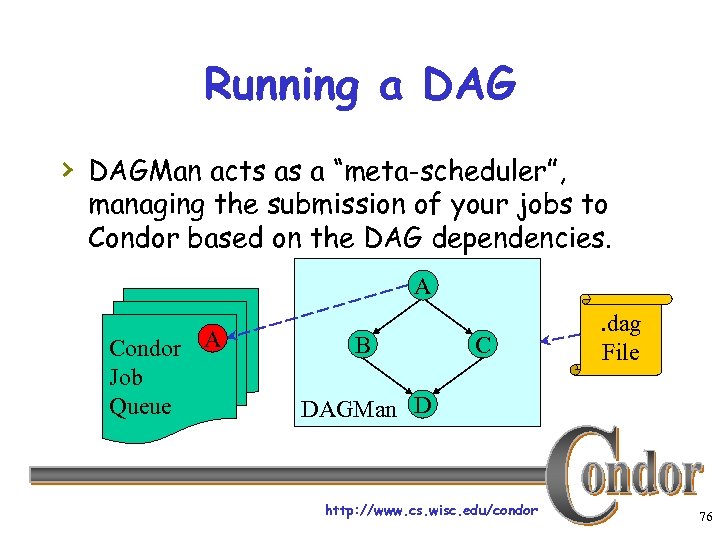

Running a DAG

- DAGMan acts as a “meta-scheduler”, managing the submission of your jobs to Condor based on the DAG dependencies.

[Diagram: DAGMan reads the .dag file (nodes A, B, C, D); job A is in the Condor Job Queue]

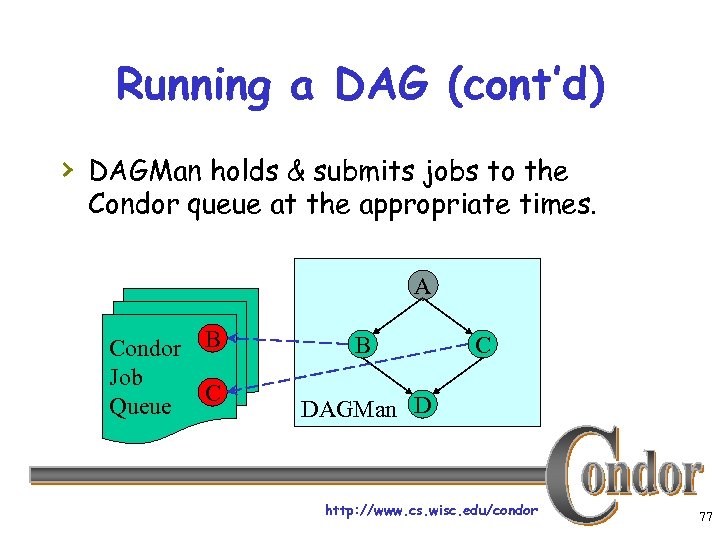

Running a DAG (cont’d)

- DAGMan holds & submits jobs to the Condor queue at the appropriate times.

[Diagram: DAGMan with nodes A, B, C, D; jobs B and C are in the Condor Job Queue]

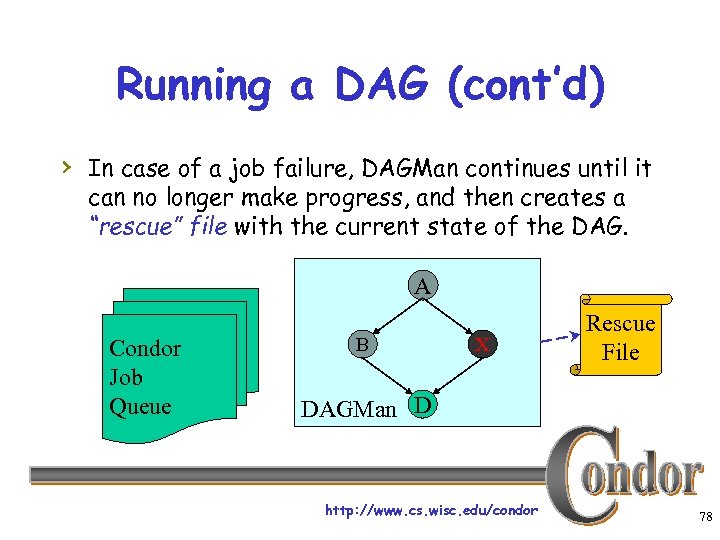

Running a DAG (cont’d)

- In case of a job failure, DAGMan continues until it can no longer make progress, and then creates a “rescue” file with the current state of the DAG.

[Diagram: DAGMan with nodes A, B, D and a failed node marked X; the Condor Job Queue is empty; DAGMan writes a Rescue File]

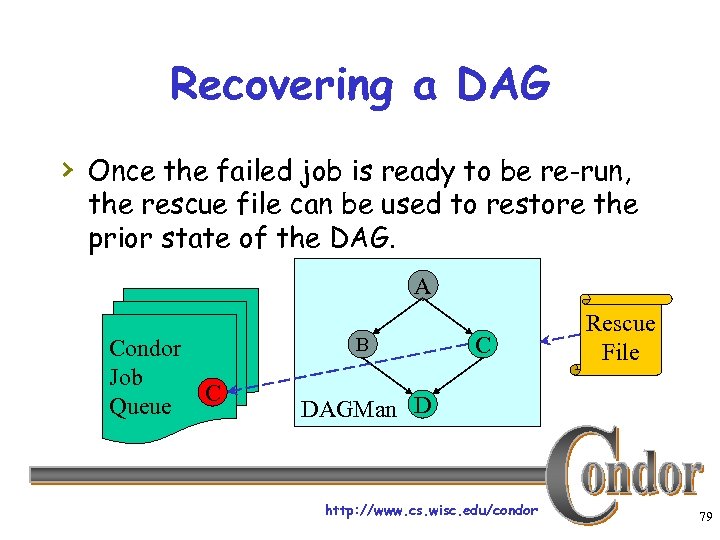

Recovering a DAG

- Once the failed job is ready to be re-run, the rescue file can be used to restore the prior state of the DAG.

[Diagram: DAGMan reads the Rescue File (nodes A, B, C, D); job C is in the Condor Job Queue]

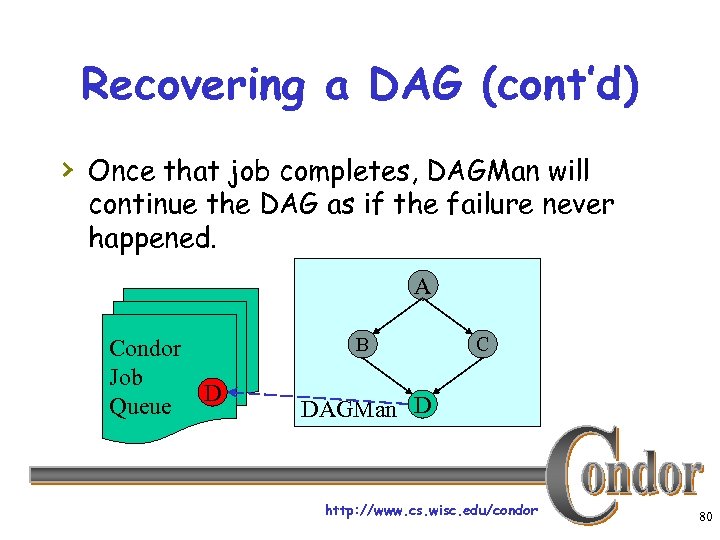

Recovering a DAG (cont’d)

- Once that job completes, DAGMan will continue the DAG as if the failure never happened.

[Diagram: DAGMan with nodes A, B, C, D; job D is in the Condor Job Queue]

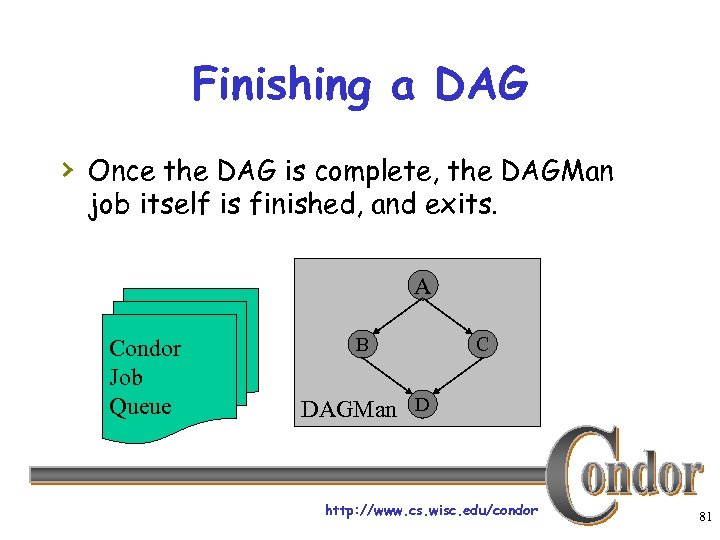

Finishing a DAG

- Once the DAG is complete, the DAGMan job itself is finished, and exits.

[Diagram: DAGMan with nodes A, B, C, D; the Condor Job Queue is empty]

Additional DAGMan Features

- Provides other handy features for job management…
  - nodes can have PRE & POST scripts
  - failed nodes can be automatically retried a configurable number of times
  - job submission can be “throttled”

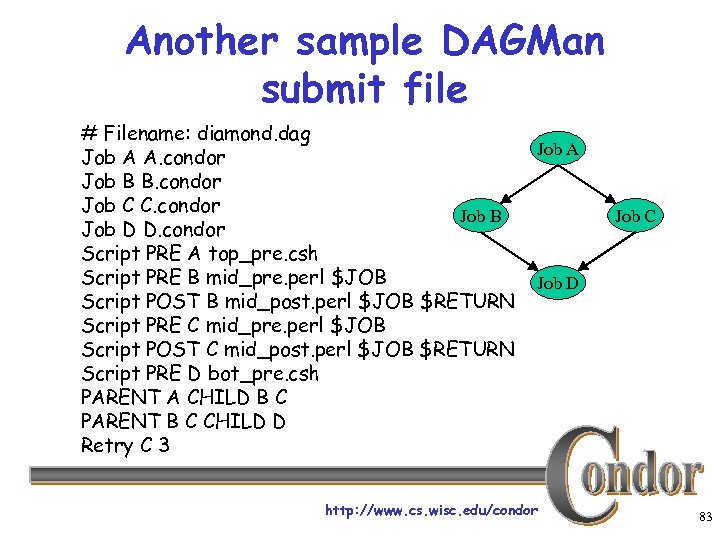

Another sample DAGMan submit file

    # Filename: diamond.dag
    Job A A.condor
    Job B B.condor
    Job C C.condor
    Job D D.condor
    Script PRE  A top_pre.csh
    Script PRE  B mid_pre.perl $JOB
    Script POST B mid_post.perl $JOB $RETURN
    Script PRE  C mid_pre.perl $JOB
    Script POST C mid_post.perl $JOB $RETURN
    Script PRE  D bot_pre.csh
    PARENT A CHILD B C
    PARENT B C CHILD D
    Retry C 3

[Diagram: the diamond DAG with nodes Job A, Job B, Job C, Job D]

DAGMan, cont.

- DAGMan can help w/ visualization of the DAG
  - Can create input files for AT&T’s graphviz package (dot input).
- Why not just use make?
- In the works: dynamic DAGs.

We’ve seen how Condor will

- … keep an eye on your jobs and will keep you posted on their progress
- … implement your policy on the execution order of the jobs
- … keep a log of your job activities
- … add fault tolerance to your jobs?

What if each job needed to run for 20 days?

What if I wanted to interrupt a job with a higher priority job?

Condor’s Standard Universe to the rescue!

- Condor can support various combinations of features/environments in different “Universes”
- Different Universes provide different functionality for your job:
  - Vanilla: Run any Serial Job
  - Scheduler: Plug in a meta-scheduler
  - Standard: Support for transparent process checkpoint and restart

Process Checkpointing

- Condor’s Process Checkpointing mechanism saves all the state of a process into a checkpoint file
  - Memory, CPU, I/O, etc.
- The process can then be restarted from right where it left off
- Typically no changes to your job’s source code are needed; however, your job must be relinked with Condor’s Standard Universe support library
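The effect of checkpointing (after a crash, only the work since the last checkpoint is lost) can be demonstrated with a toy program. The Python sketch below is an application-level analogy only: it saves its own small state dictionary with `pickle`, whereas Condor's Standard Universe saves the state of the whole process transparently and needs no code like this. The function names, the `crash_at` parameter and the file layout are all hypothetical.

```python
import os
import pickle
import tempfile


def save_checkpoint(state, path):
    """Write state to a temp file, then rename, so a crash never leaves half a file."""
    tmp_path = path + ".tmp"
    with open(tmp_path, "wb") as f:
        pickle.dump(state, f)
    os.replace(tmp_path, path)


def run(total_steps, ckpt_path, checkpoint_every=100, crash_at=None):
    """Sum 0..total_steps-1, resuming from ckpt_path if it exists."""
    if os.path.exists(ckpt_path):
        with open(ckpt_path, "rb") as f:
            state = pickle.load(f)          # restart from the last checkpoint
    else:
        state = {"step": 0, "acc": 0}       # fresh start
    while state["step"] < total_steps:
        if crash_at is not None and state["step"] == crash_at:
            raise RuntimeError("simulated crash")
        state["acc"] += state["step"]
        state["step"] += 1
        if state["step"] % checkpoint_every == 0:
            save_checkpoint(state, ckpt_path)
    return state["acc"]


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        ckpt = os.path.join(tmp, "job.ckpt")
        try:
            run(1000, ckpt, crash_at=250)   # dies after the checkpoint at step 200
        except RuntimeError:
            pass
        print(run(1000, ckpt))              # resumes at step 200, not at 0
```

The second call redoes only steps 200 to 249 before continuing, which is the "forward progress" guarantee in miniature.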

Relinking Your Job for submission to the Standard Universe

To do this, just place “condor_compile” in front of the command you normally use to link your job:

    condor_compile gcc -o myjob myjob.c

OR

    condor_compile f77 -o myjob filea.f fileb.f

OR

    condor_compile make -f MyMakefile

Limitations in the Standard Universe

- Condor’s checkpointing is not at the kernel level. Thus in the Standard Universe the job may not
  - fork()
  - Use kernel threads
  - Use some forms of IPC, such as pipes and shared memory
- Many typical scientific jobs are OK

When will Condor checkpoint your job?

- Periodically, if desired
  - For fault tolerance
- To free the machine to do a higher priority task (higher priority job, or a job from a user with higher priority)
  - Preemptive-resume scheduling
- When you explicitly run the condor_checkpoint, condor_vacate, condor_off or condor_restart command

“Standalone” Checkpointing

- Can use Condor Project’s checkpoint technology outside of Condor…
  - SIGTSTP = checkpoint and exit
  - SIGUSR2 = periodic checkpoint

    condor_compile cc myapp.c -o myapp
    myapp -_condor_ckpt foo-image.ckpt
    …
    myapp -_condor_restart foo-image.ckpt

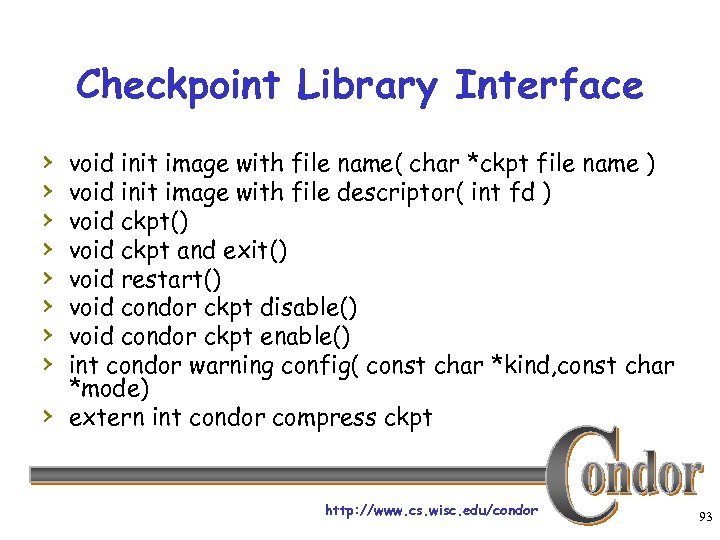

Checkpoint Library Interface

- void init_image_with_file_name( char *ckpt_file_name )
- void init_image_with_file_descriptor( int fd )
- void ckpt()
- void ckpt_and_exit()
- void restart()
- void _condor_ckpt_disable()
- void _condor_ckpt_enable()
- int _condor_warning_config( const char *kind, const char *mode )
- extern int condor_compress_ckpt

What Condor Daemons are running on my machine, and what do they do?

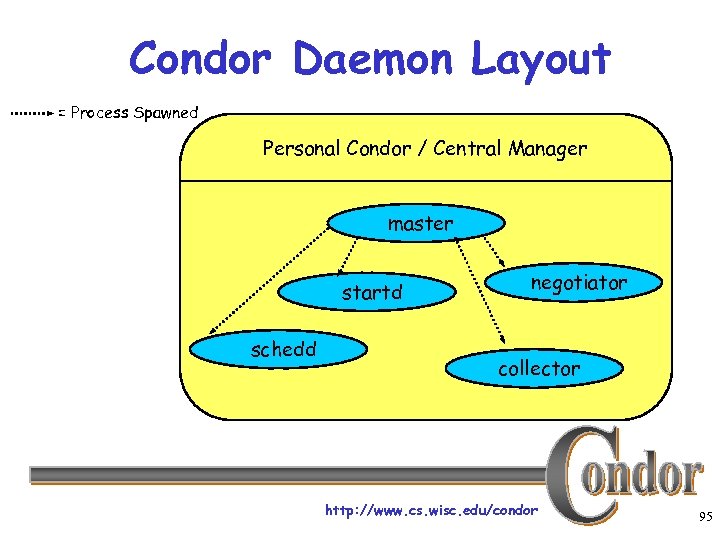

Condor Daemon Layout

[Diagram: on the Personal Condor / Central Manager machine, the master spawns the startd, schedd, negotiator and collector processes]

condor_master

- Starts up all other Condor daemons
- If there are any problems and a daemon exits, it restarts the daemon and sends email to the administrator
- Checks the time stamps on the binaries of the other Condor daemons, and if new binaries appear, the master will gracefully shut down the currently running version and start the new version

condor_master (cont’d)

- Acts as the server for many Condor remote administration commands:
  - condor_reconfig, condor_restart, condor_off, condor_on, condor_config_val, etc.

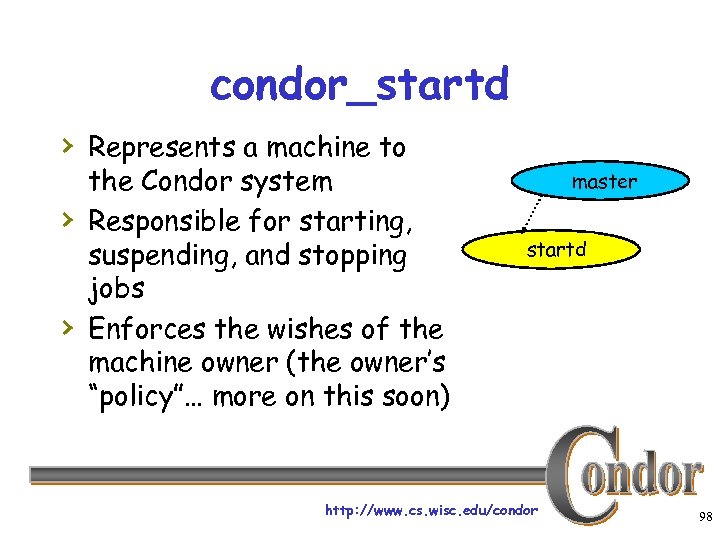

condor_startd

- Represents a machine to the Condor system
- Responsible for starting, suspending, and stopping jobs
- Enforces the wishes of the machine owner (the owner’s “policy”… more on this soon)

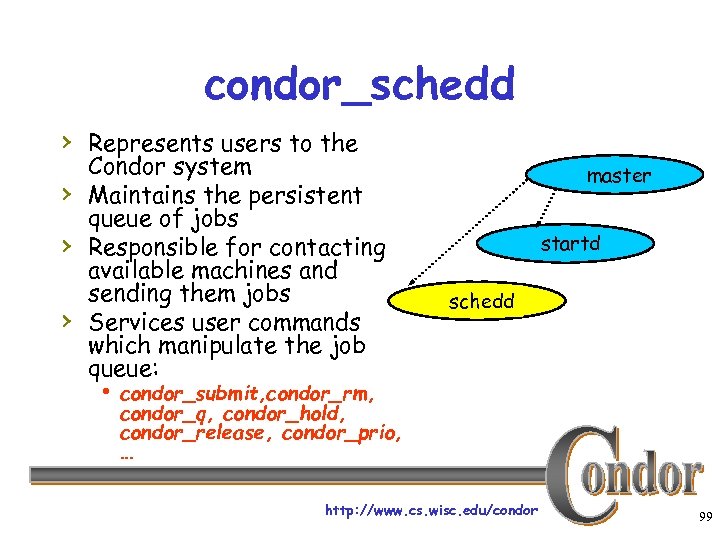

condor_schedd

- Represents users to the Condor system
- Maintains the persistent queue of jobs
- Responsible for contacting available machines and sending them jobs
- Services user commands which manipulate the job queue:
  - condor_submit, condor_rm, condor_q, condor_hold, condor_release, condor_prio, …

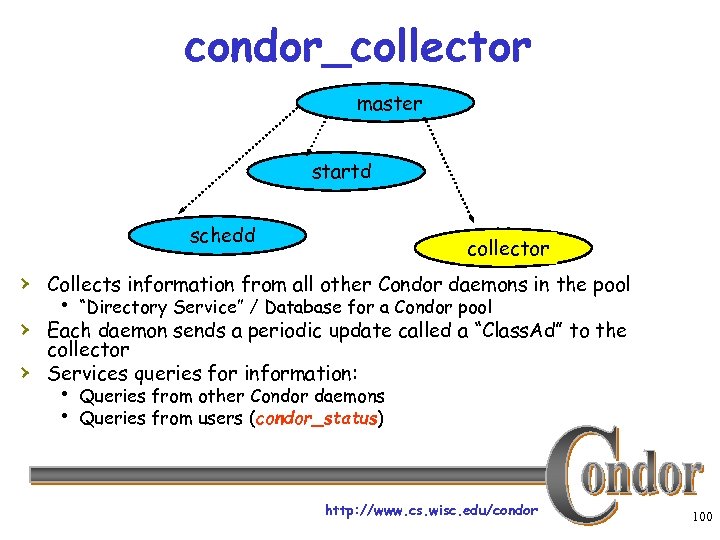

condor_collector

- Collects information from all other Condor daemons in the pool
  - “Directory Service” / Database for a Condor pool
- Each daemon sends a periodic update called a “ClassAd” to the collector
- Services queries for information:
  - Queries from other Condor daemons
  - Queries from users (condor_status)

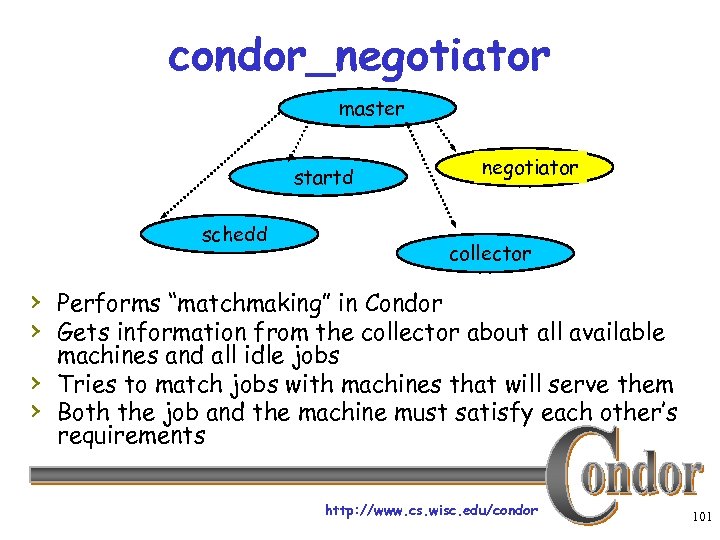

condor_negotiator

- Performs “matchmaking” in Condor
- Gets information from the collector about all available machines and all idle jobs
- Tries to match jobs with machines that will serve them
- Both the job and the machine must satisfy each other’s requirements

Happy Day! Frieda’s organization purchased a Beowulf Cluster!

- Frieda installs Condor on all the dedicated Cluster nodes, and configures them with her machine as the central manager…
- Now her Condor Pool can run multiple jobs at once

[Diagram: 600 Condor jobs held by the personal Condor on your workstation, which is now connected to the Condor Pool]

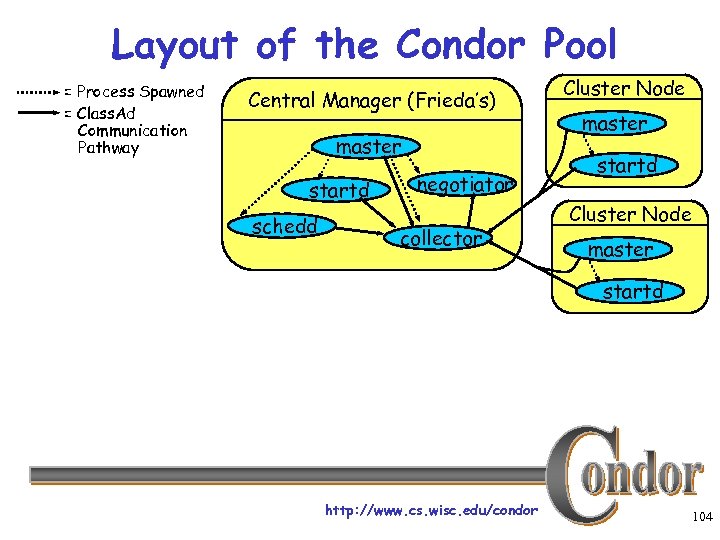

Layout of the Condor Pool

[Diagram: the Central Manager (Frieda’s machine) runs master, startd, schedd, negotiator and collector; each Cluster Node runs master and startd. The legend distinguishes “Process Spawned” from “ClassAd Communication Pathway”]

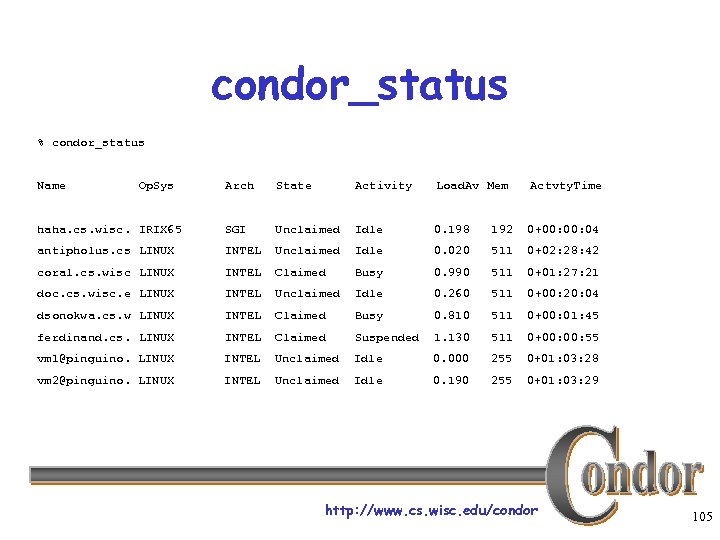

condor_status

    % condor_status

    Name          OpSys   Arch  State     Activity  LoadAv Mem ActvtyTime

    haha.cs.wisc. IRIX65  SGI   Unclaimed Idle      0.198  192 0+00:04
    antipholus.cs LINUX   INTEL Unclaimed Idle      0.020  511 0+02:28:42
    coral.cs.wisc LINUX   INTEL Claimed   Busy      0.990  511 0+01:27:21
    doc.cs.wisc.e LINUX   INTEL Unclaimed Idle      0.260  511 0+00:20:04
    dsonokwa.cs.w LINUX   INTEL Claimed   Busy      0.810  511 0+00:01:45
    ferdinand.cs. LINUX   INTEL Claimed   Suspended 1.130  511 0+00:55
    vm1@pinguino. LINUX   INTEL Unclaimed Idle      0.000  255 0+01:03:28
    vm2@pinguino. LINUX   INTEL Unclaimed Idle      0.190  255 0+01:03:29

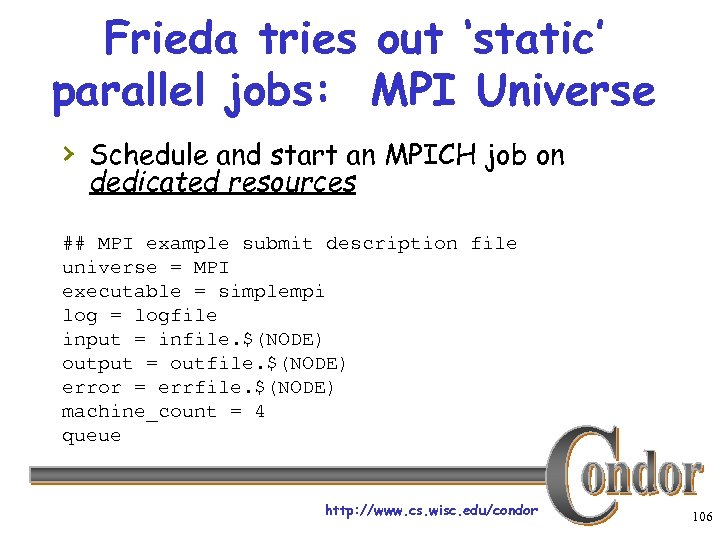

Frieda tries out ‘static’ parallel jobs: MPI Universe

- Schedule and start an MPICH job on dedicated resources

    ## MPI example submit description file
    universe      = MPI
    executable    = simplempi
    log           = logfile
    input         = infile.$(NODE)
    output        = outfile.$(NODE)
    error         = errfile.$(NODE)
    machine_count = 4
    queue

The Boss says Frieda can add her co-workers’ desktop machines into her Condor pool as well… but only if they can also submit jobs.

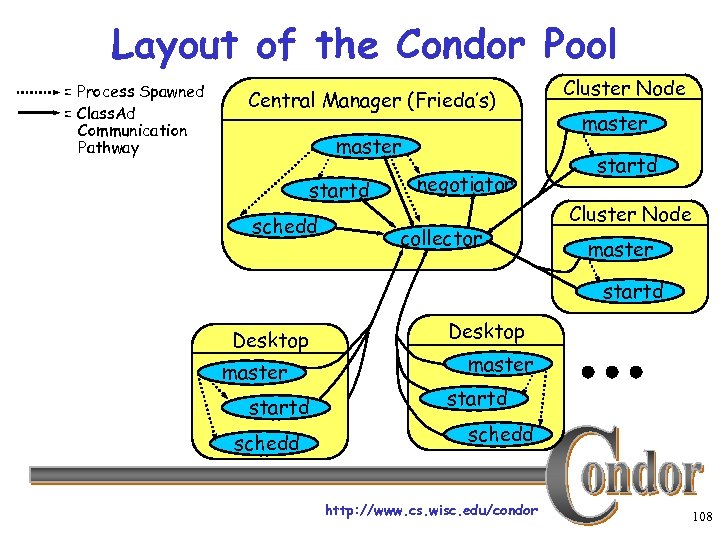

Layout of the Condor Pool

[Diagram: the Central Manager (Frieda’s machine) runs master, startd, schedd, negotiator and collector; each Cluster Node runs master and startd; each Desktop runs master, startd and schedd. The legend distinguishes “Process Spawned” from “ClassAd Communication Pathway”]

Some of the machines in the Pool do not have enough memory or scratch disk space to run my job!

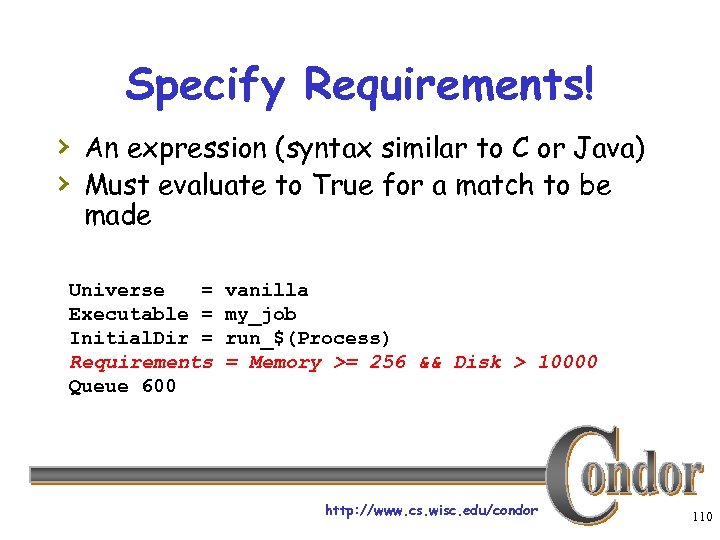

Specify Requirements!
› An expression (syntax similar to C or Java)
› Must evaluate to True for a match to be made
Universe = vanilla
Executable = my_job
InitialDir = run_$(Process)
Requirements = Memory >= 256 && Disk > 10000
Queue 600
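As a rough illustration of how a Requirements expression filters machines, the Python sketch below applies the same test (Memory >= 256 and Disk > 10000) to a few made-up machine ads. The ads and the helper name `meets_requirements` are assumptions for illustration only; in Condor the match is evaluated over ClassAds during matchmaking, not by user code.

```python
# Hypothetical machine ads: attribute names follow the slide, values are made up.
machines = [
    {"Name": "node1", "Memory": 512, "Disk": 50000},
    {"Name": "node2", "Memory": 128, "Disk": 50000},  # too little memory
    {"Name": "node3", "Memory": 256, "Disk": 10000},  # Disk must be strictly > 10000
]

def meets_requirements(ad):
    """Python equivalent of: Requirements = Memory >= 256 && Disk > 10000."""
    return ad["Memory"] >= 256 and ad["Disk"] > 10000

# Only machines whose ad makes the expression True are candidates for a match.
matches = [ad["Name"] for ad in machines if meets_requirements(ad)]
print(matches)
```

Note that node3 is rejected even though its memory is sufficient, because both halves of the expression must hold.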

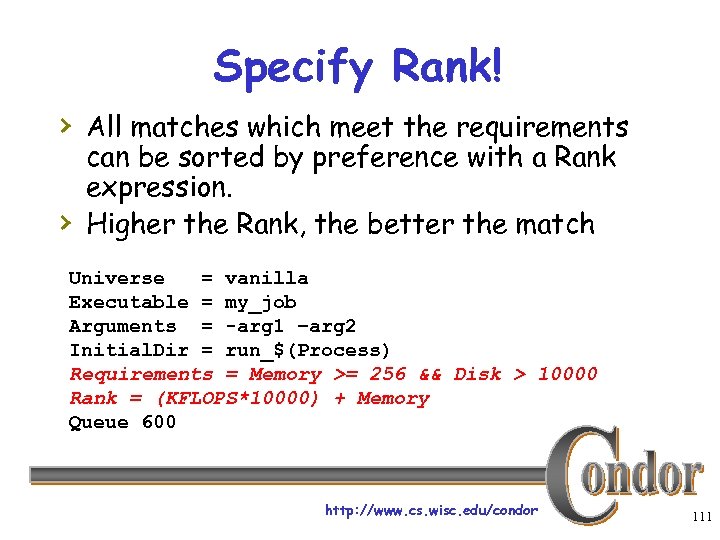

Specify Rank!
› All matches which meet the requirements can be sorted by preference with a Rank expression.
› The higher the Rank, the better the match
Universe = vanilla
Executable = my_job
Arguments = -arg1 -arg2
InitialDir = run_$(Process)
Requirements = Memory >= 256 && Disk > 10000
Rank = (KFLOPS*10000) + Memory
Queue 600
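To make the ordering concrete, here is a small Python sketch that sorts candidate machines by the slide's Rank expression, (KFLOPS*10000) + Memory. The machine names and values are invented for illustration, and `rank` is a hypothetical helper, not a Condor API.

```python
def rank(ad):
    """Python equivalent of: Rank = (KFLOPS*10000) + Memory."""
    return ad["KFLOPS"] * 10000 + ad["Memory"]

# Hypothetical machines that already satisfy the job's Requirements.
candidates = [
    {"Name": "slow-big", "KFLOPS": 10, "Memory": 1024},
    {"Name": "fast-small", "KFLOPS": 20, "Memory": 256},
    {"Name": "fast-big", "KFLOPS": 20, "Memory": 512},
]

# Higher Rank is better, so sort in descending order.
ordered = sorted(candidates, key=rank, reverse=True)
names = [ad["Name"] for ad in ordered]
print(names)
```

Because KFLOPS is scaled by 10000, CPU speed dominates the ordering and Memory only breaks ties between machines of equal speed.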

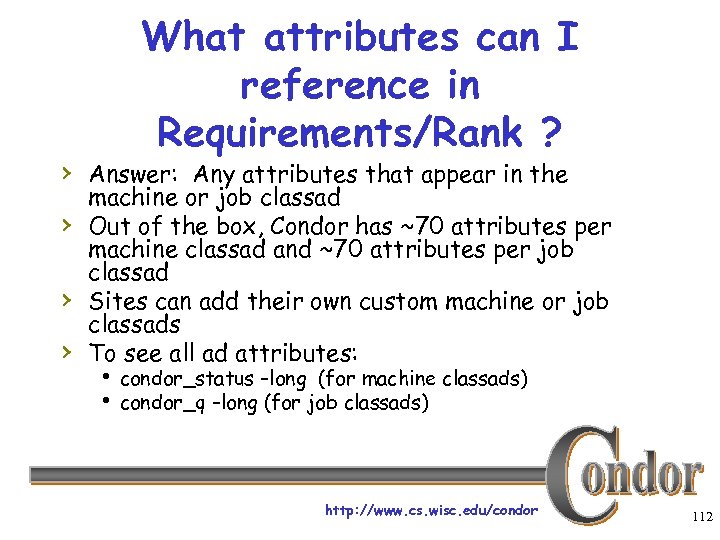

What attributes can I reference in Requirements/Rank?
› Answer: Any attributes that appear in the machine or job classad
› Out of the box, Condor has ~70 attributes per machine classad and ~70 attributes per job classad
› Sites can add their own custom machine or job classad attributes
› To see all ad attributes:
  - condor_status -long (for machine classads)
  - condor_q -long (for job classads)

How can my jobs access their data files?

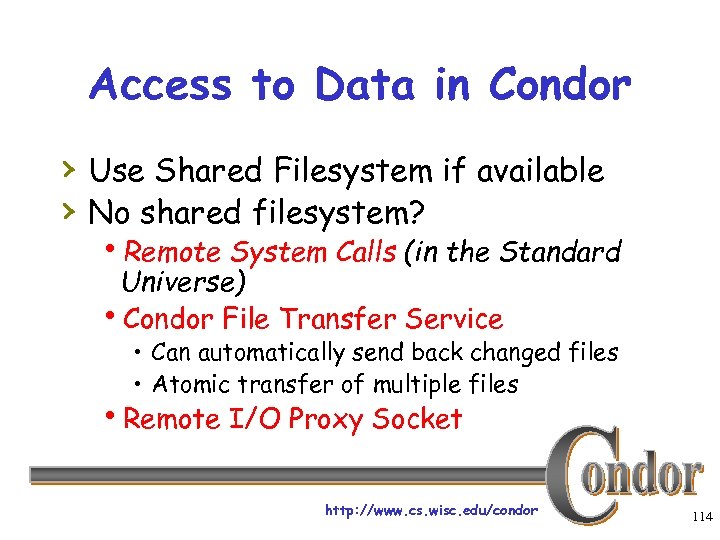

Access to Data in Condor
› Use Shared Filesystem if available
› No shared filesystem?
  - Remote System Calls (in the Standard Universe)
  - Condor File Transfer Service
    • Can automatically send back changed files
    • Atomic transfer of multiple files
  - Remote I/O Proxy Socket

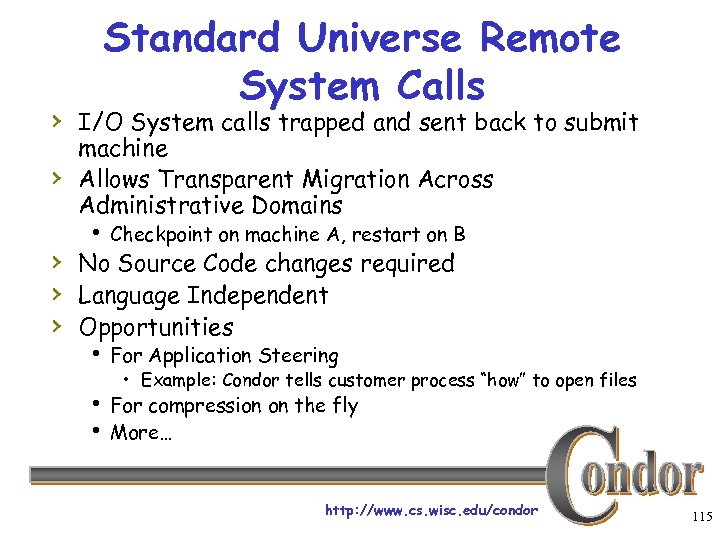

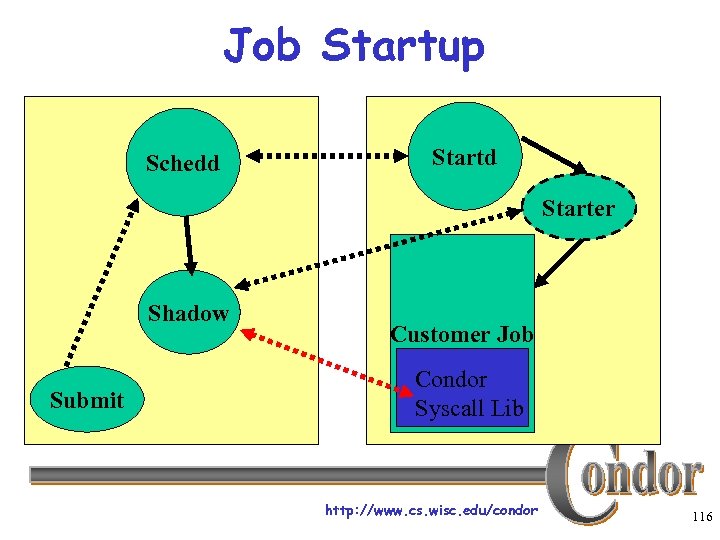

Standard Universe Remote System Calls
› I/O system calls trapped and sent back to submit machine
› Allows Transparent Migration Across Administrative Domains
  - Checkpoint on machine A, restart on B
› No Source Code changes required
› Language Independent
› Opportunities
  - For Application Steering
    • Example: Condor tells customer process “how” to open files
  - For compression on the fly
  - More…

Job Startup
[Diagram labels: Submit, Schedd, Shadow, Starter, Customer Job, Condor Syscall Lib]

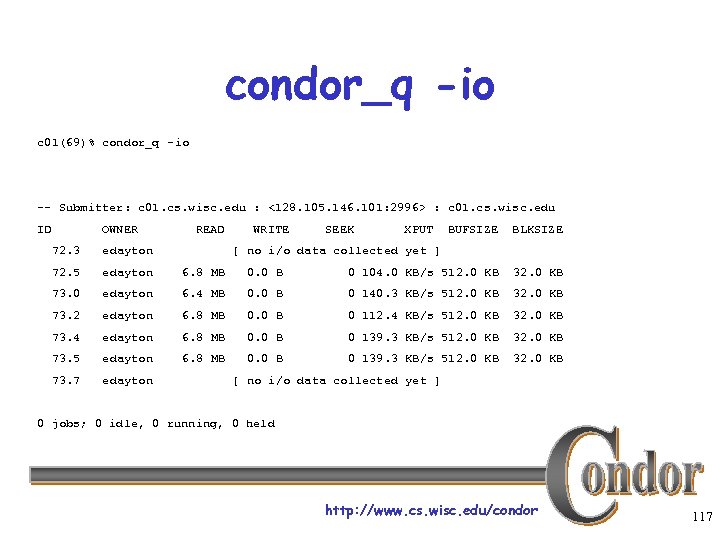

condor_q -io
c01(69)% condor_q -io
-- Submitter: c01.cs.wisc.edu : <128.105.146.101:2996> : c01.cs.wisc.edu
ID    OWNER    READ    WRITE  SEEK  XPUT        BUFSIZE   BLKSIZE
72.3  edayton  [ no i/o data collected yet ]
72.5  edayton  6.8 MB  0.0 B  0     104.0 KB/s  512.0 KB  32.0 KB
73.0  edayton  6.4 MB  0.0 B  0     140.3 KB/s  512.0 KB  32.0 KB
73.2  edayton  6.8 MB  0.0 B  0     112.4 KB/s  512.0 KB  32.0 KB
73.4  edayton  6.8 MB  0.0 B  0     139.3 KB/s  512.0 KB  32.0 KB
73.5  edayton  6.8 MB  0.0 B  0     139.3 KB/s  512.0 KB  32.0 KB
73.7  edayton  [ no i/o data collected yet ]
0 jobs; 0 idle, 0 running, 0 held

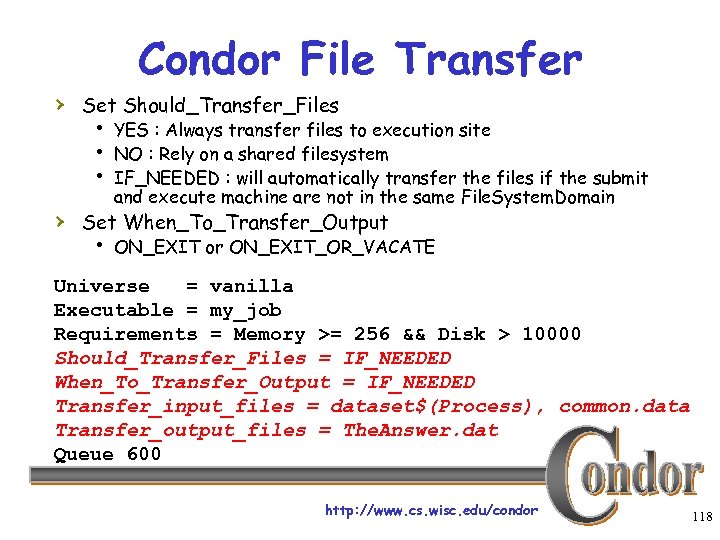

Condor File Transfer
› Set Should_Transfer_Files
  - YES: Always transfer files to execution site
  - NO: Rely on a shared filesystem
  - IF_NEEDED: Automatically transfer the files if the submit and execute machines are not in the same FileSystemDomain
› Set When_To_Transfer_Output
  - ON_EXIT or ON_EXIT_OR_VACATE
Universe = vanilla
Executable = my_job
Requirements = Memory >= 256 && Disk > 10000
Should_Transfer_Files = IF_NEEDED
When_To_Transfer_Output = ON_EXIT
Transfer_input_files = dataset$(Process), common.data
Transfer_output_files = TheAnswer.dat
Queue 600
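The three Should_Transfer_Files settings amount to a small decision rule. The Python sketch below restates that rule as described on the slide; the function name and the domain strings are hypothetical, and this is not how Condor itself is implemented.

```python
def should_transfer(setting, submit_domain, execute_domain):
    """Decide whether files are transferred, following the slide's description.

    setting        -- value of Should_Transfer_Files (YES, NO, or IF_NEEDED)
    submit_domain  -- FileSystemDomain of the submit machine
    execute_domain -- FileSystemDomain of the execute machine
    """
    setting = setting.upper()
    if setting == "YES":
        return True                      # always transfer
    if setting == "NO":
        return False                     # rely on a shared filesystem
    if setting == "IF_NEEDED":
        return submit_domain != execute_domain
    raise ValueError("unknown Should_Transfer_Files value: " + setting)

# Example domains are made up for illustration.
print(should_transfer("IF_NEEDED", "cs.example.edu", "cs.example.edu"))
print(should_transfer("IF_NEEDED", "cs.example.edu", "physics.example.edu"))
```

With IF_NEEDED, the same submit file works both inside a shared-filesystem cluster and on machines outside it.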

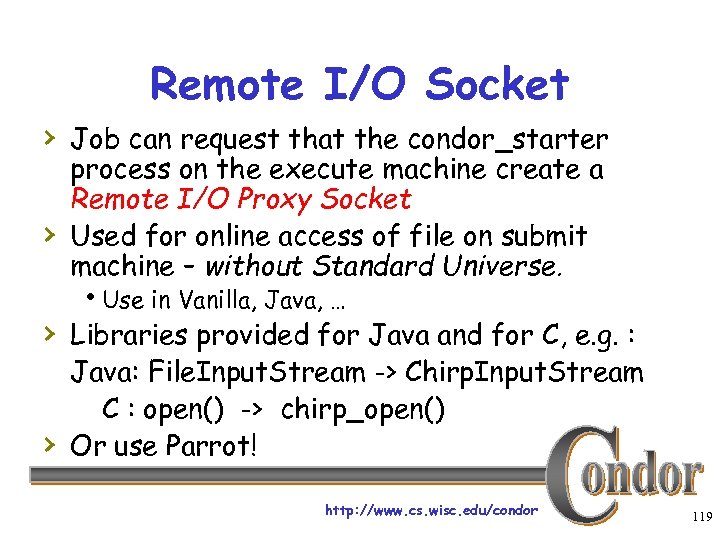

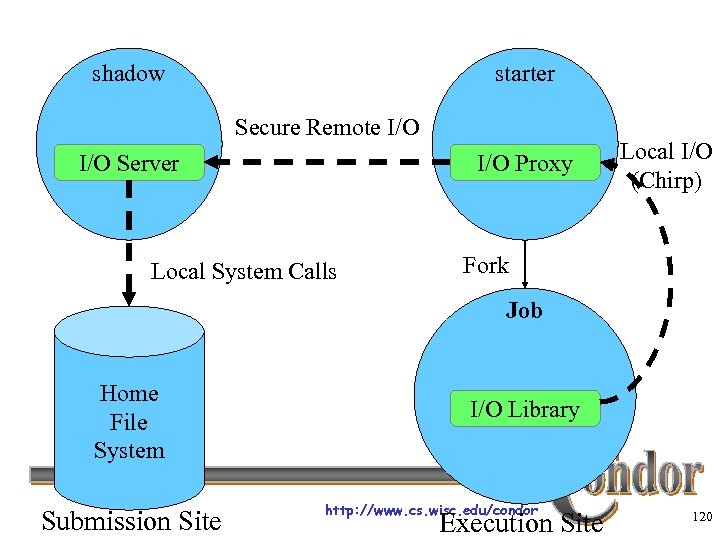

Remote I/O Socket
› Job can request that the condor_starter process on the execute machine create a Remote I/O Proxy Socket
› Used for online access of files on the submit machine, without Standard Universe.
  - Use in Vanilla, Java, …
› Libraries provided for Java and for C, e.g.:
  - Java: FileInputStream -> ChirpInputStream
  - C: open() -> chirp_open()
› Or use Parrot!

[Diagram labels. Submission Site: shadow, I/O Server, Home File System. Execution Site: starter, I/O Proxy, Fork, Job, I/O Library. Links: Secure Remote I/O, Local I/O (Chirp), Local System Calls]

Policy Configuration
(Boss Fat Cat) I am adding nodes to the Cluster… but the Engineering Department has priority on these nodes.

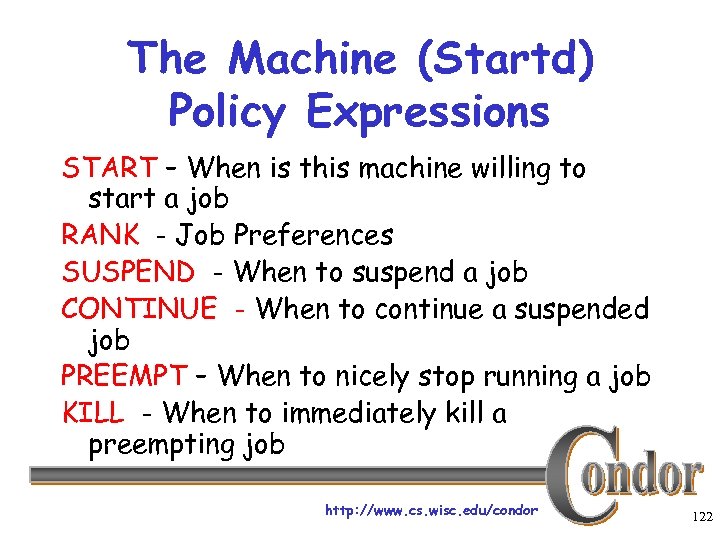

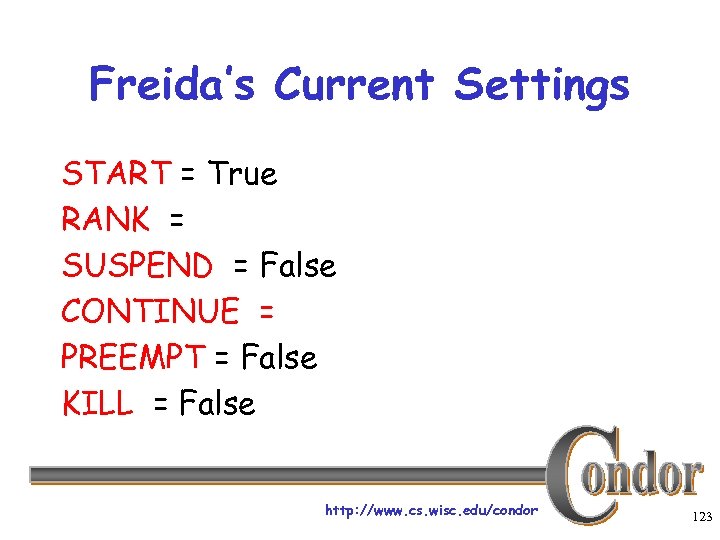

The Machine (Startd) Policy Expressions
START: When is this machine willing to start a job
RANK: Job Preferences
SUSPEND: When to suspend a job
CONTINUE: When to continue a suspended job
PREEMPT: When to nicely stop running a job
KILL: When to immediately kill a preempting job

Frieda’s Current Settings
START = True
RANK =
SUSPEND = False
CONTINUE =
PREEMPT = False
KILL = False

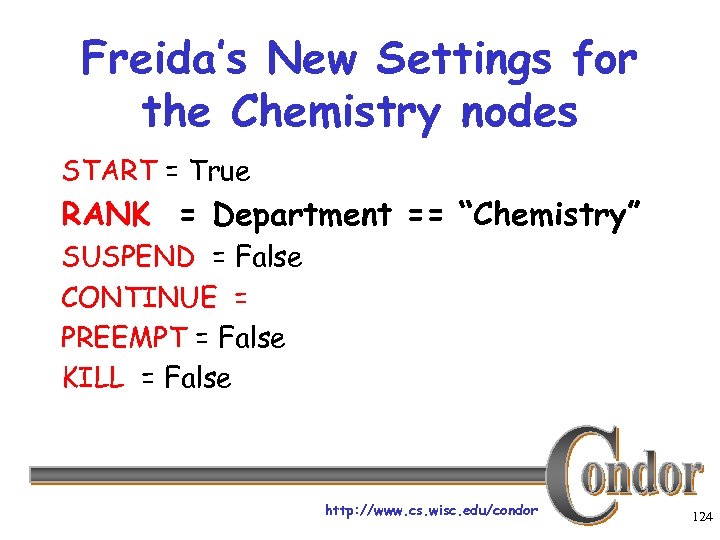

Frieda’s New Settings for the Chemistry nodes
START = True
RANK = Department == "Chemistry"
SUSPEND = False
CONTINUE =
PREEMPT = False
KILL = False

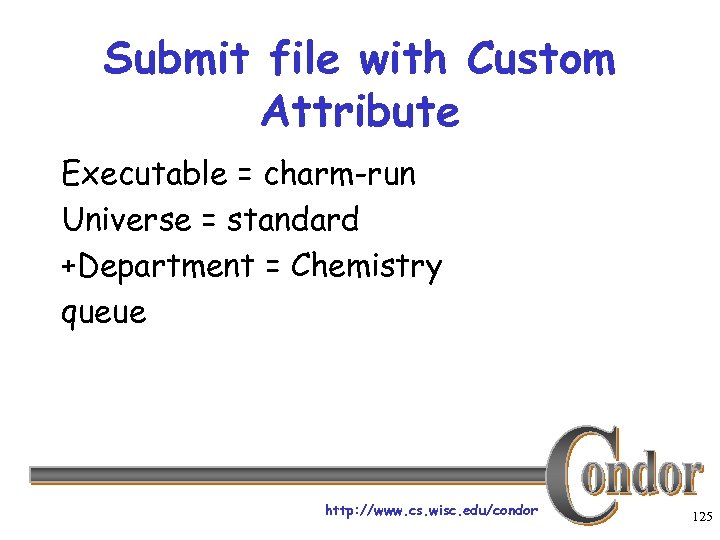

Submit file with Custom Attribute
Executable = charm-run
Universe = standard
+Department = "Chemistry"
queue

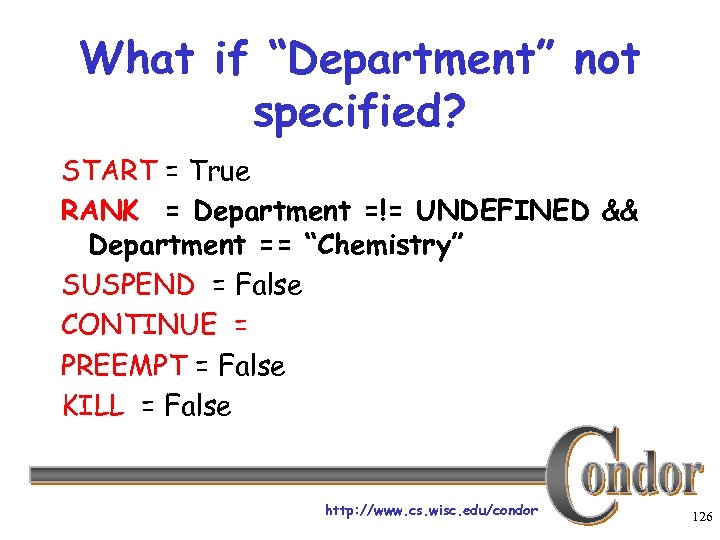

What if “Department” not specified?
START = True
RANK = Department =!= UNDEFINED && Department == "Chemistry"
SUSPEND = False
CONTINUE =
PREEMPT = False
KILL = False
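The guard `Department =!= UNDEFINED` matters because an ordinary `==` comparison against a missing attribute does not yield False; it yields UNDEFINED. The Python sketch below models that three-valued behaviour in a simplified way. The helper names (`eq`, `is_not`, `and_`) are made up, and details such as string case handling and type checks in real ClassAds are deliberately ignored.

```python
UNDEFINED = object()  # stand-in for the ClassAd UNDEFINED value

def eq(a, b):
    """Simplified ==: UNDEFINED if either operand is UNDEFINED."""
    if a is UNDEFINED or b is UNDEFINED:
        return UNDEFINED
    return a == b

def is_not(a, b):
    """Simplified =!=: always True or False, even when an operand is UNDEFINED."""
    if a is UNDEFINED or b is UNDEFINED:
        return not (a is UNDEFINED and b is UNDEFINED)
    return a != b

def and_(a, b):
    """Simplified &&: False wins, otherwise UNDEFINED propagates."""
    if a is False or b is False:
        return False
    if a is UNDEFINED or b is UNDEFINED:
        return UNDEFINED
    return True

def rank(job_ad):
    """RANK = Department =!= UNDEFINED && Department == "Chemistry"."""
    dept = job_ad.get("Department", UNDEFINED)
    return and_(is_not(dept, UNDEFINED), eq(dept, "Chemistry"))

print(rank({"Department": "Chemistry"}))
print(rank({}))
```

Without the guard, a job that omits Department would give the machine an UNDEFINED rank rather than a definite False.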

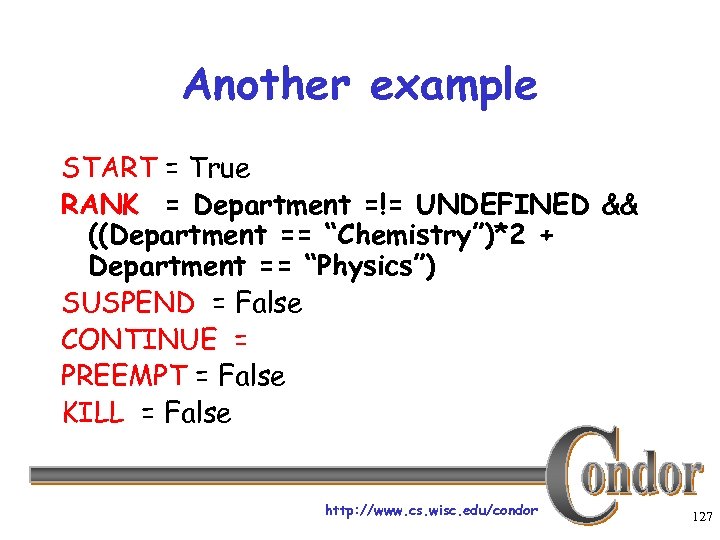

Another example
START = True
RANK = Department =!= UNDEFINED && ((Department == "Chemistry")*2 + (Department == "Physics"))
SUSPEND = False
CONTINUE =
PREEMPT = False
KILL = False

Policy Configuration, cont.
(Boss Fat Cat) The Cluster is fine. But not the desktop machines. Condor can only use the desktops when they would otherwise be idle.

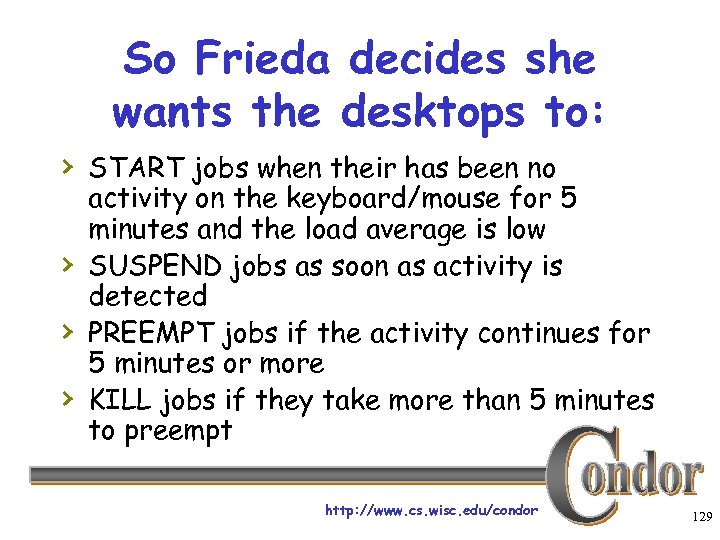

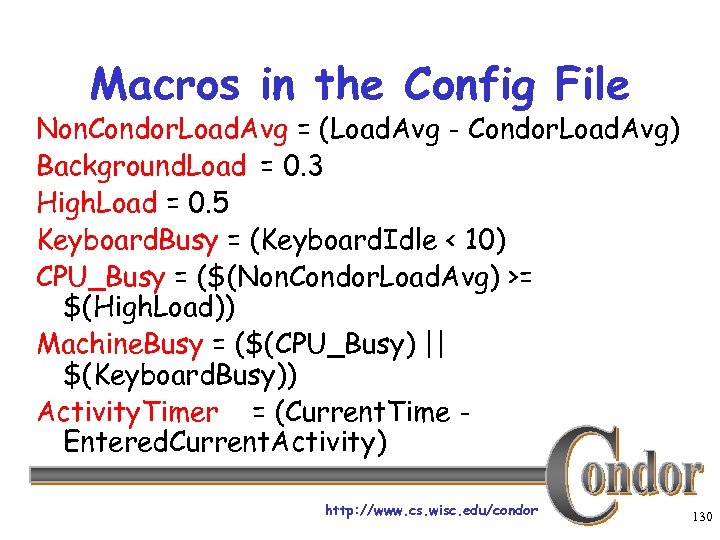

So Frieda decides she wants the desktops to:
› START jobs when there has been no activity on the keyboard/mouse for 5 minutes and the load average is low
› SUSPEND jobs as soon as activity is detected
› PREEMPT jobs if the activity continues for 5 minutes or more
› KILL jobs if they take more than 5 minutes to preempt

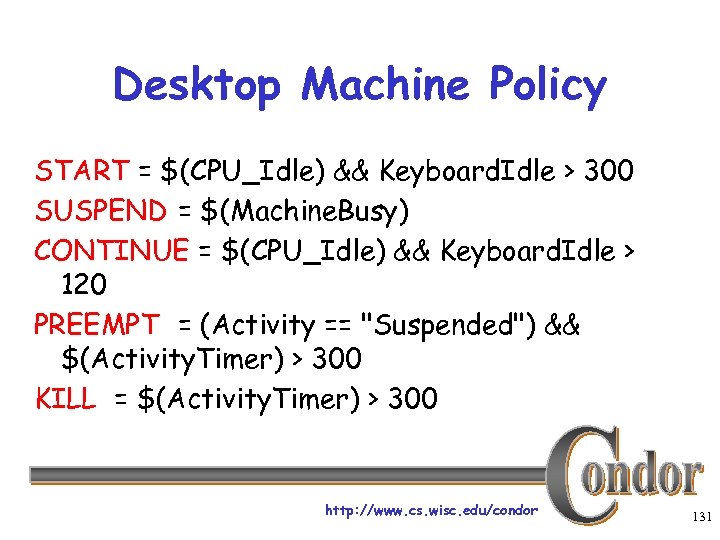

Macros in the Config File
NonCondorLoadAvg = (LoadAvg - CondorLoadAvg)
BackgroundLoad = 0.3
HighLoad = 0.5
KeyboardBusy = (KeyboardIdle < 10)
CPU_Busy = ($(NonCondorLoadAvg) >= $(HighLoad))
MachineBusy = ($(CPU_Busy) || $(KeyboardBusy))
ActivityTimer = (CurrentTime - EnteredCurrentActivity)

Desktop Machine Policy
START = $(CPU_Idle) && KeyboardIdle > 300
SUSPEND = $(MachineBusy)
CONTINUE = $(CPU_Idle) && KeyboardIdle > 120
PREEMPT = (Activity == "Suspended") && $(ActivityTimer) > 300
KILL = $(ActivityTimer) > 300
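To see how the macros and policy expressions combine, the Python sketch below evaluates them for a single machine snapshot. The input values are invented. One assumption is flagged loudly: the slides use $(CPU_Idle) without defining it, so the sketch assumes it means the non-Condor load is at or below BackgroundLoad.

```python
BACKGROUND_LOAD = 0.3
HIGH_LOAD = 0.5

def policy(ad):
    """Evaluate the desktop policy expressions for one machine snapshot (a dict)."""
    non_condor_load = ad["LoadAvg"] - ad["CondorLoadAvg"]
    keyboard_busy = ad["KeyboardIdle"] < 10
    cpu_busy = non_condor_load >= HIGH_LOAD
    # ASSUMPTION: CPU_Idle is not defined on the slides; this definition is a guess.
    cpu_idle = non_condor_load <= BACKGROUND_LOAD
    machine_busy = cpu_busy or keyboard_busy
    activity_timer = ad["CurrentTime"] - ad["EnteredCurrentActivity"]
    return {
        "START": cpu_idle and ad["KeyboardIdle"] > 300,
        "SUSPEND": machine_busy,
        "CONTINUE": cpu_idle and ad["KeyboardIdle"] > 120,
        "PREEMPT": ad["Activity"] == "Suspended" and activity_timer > 300,
        "KILL": activity_timer > 300,
    }

# A desktop nobody has touched for ten minutes.
idle = policy({"LoadAvg": 0.1, "CondorLoadAvg": 0.0, "KeyboardIdle": 600,
               "CurrentTime": 1000, "EnteredCurrentActivity": 900, "Activity": "Idle"})
# A desktop whose owner came back; the job has been suspended for 400 seconds.
busy = policy({"LoadAvg": 0.9, "CondorLoadAvg": 0.2, "KeyboardIdle": 2,
               "CurrentTime": 1400, "EnteredCurrentActivity": 1000, "Activity": "Suspended"})
print(idle)
print(busy)
```

In the second snapshot SUSPEND and PREEMPT are both true, matching the intent that a job is first suspended and then preempted if the owner's activity continues.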

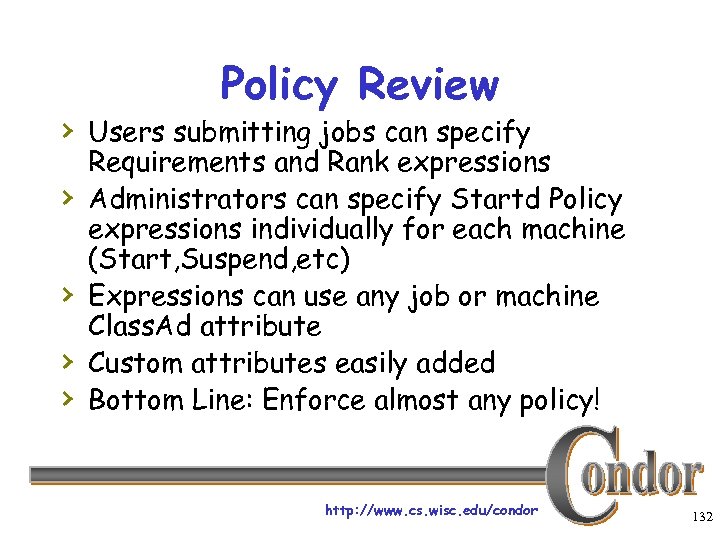

Policy Review
› Users submitting jobs can specify Requirements and Rank expressions
› Administrators can specify Startd Policy expressions individually for each machine (Start, Suspend, etc)
› Expressions can use any job or machine ClassAd attribute
› Custom attributes easily added
› Bottom Line: Enforce almost any policy!

I want to use Java. Is there any easy way to run Java programs via Condor?

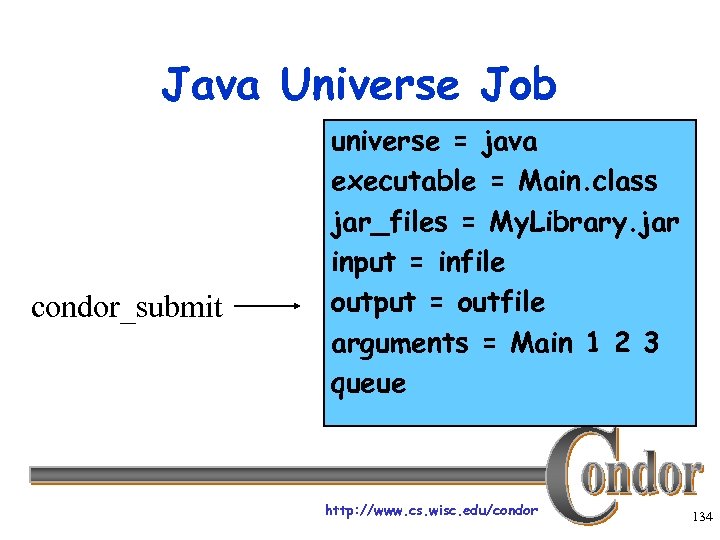

Java Universe Job
Submit description file for condor_submit:
universe = java
executable = Main.class
jar_files = MyLibrary.jar
input = infile
output = outfile
arguments = Main 1 2 3
queue

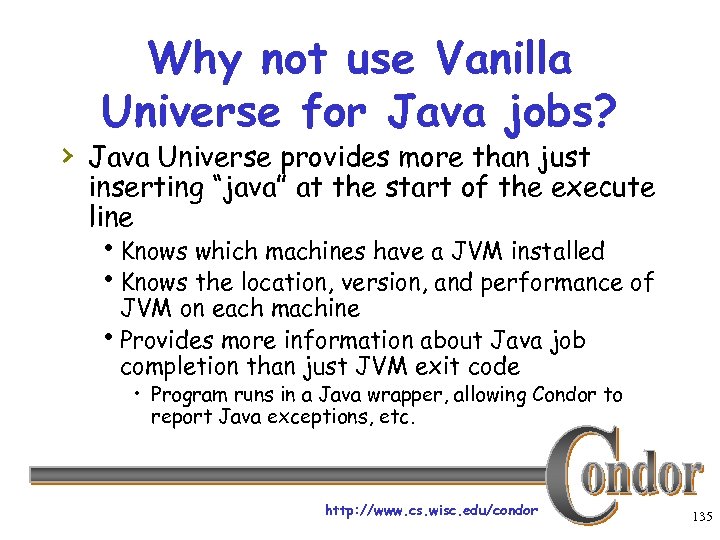

Why not use Vanilla Universe for Java jobs?
› Java Universe provides more than just inserting “java” at the start of the execute line
  - Knows which machines have a JVM installed
  - Knows the location, version, and performance of JVM on each machine
  - Provides more information about Java job completion than just JVM exit code
    • Program runs in a Java wrapper, allowing Condor to report Java exceptions, etc.

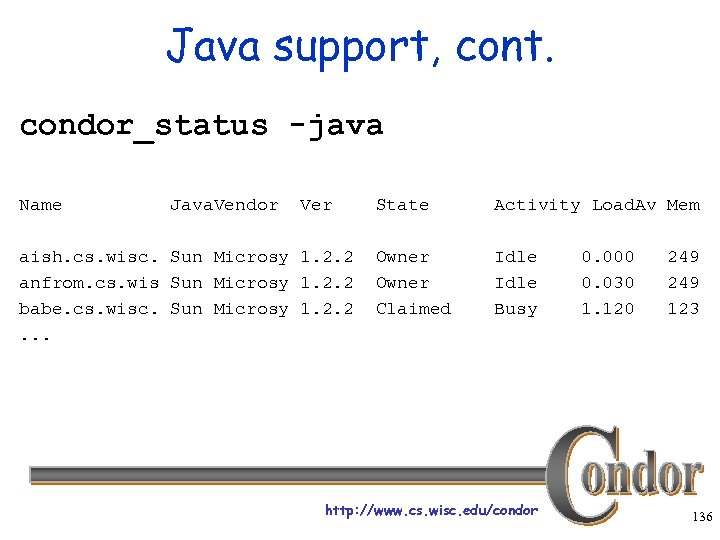

Java support, cont.
condor_status -java
Name          JavaVendor  Ver    LoadAv
aish.cs.wisc. Sun Microsy 1.2.2  0.000
anfrom.cs.wis Sun Microsy 1.2.2  0.030
babe.cs.wisc. Sun Microsy 1.2.2  1.120
...
[The State, Activity, and Mem columns survive only as unaligned values: State: Owner, Claimed; Activity: Idle, Busy; Mem: 249, 123]

My MPI programs are running on the dedicated nodes. Can I run parallel jobs on the non-dedicated nodes?

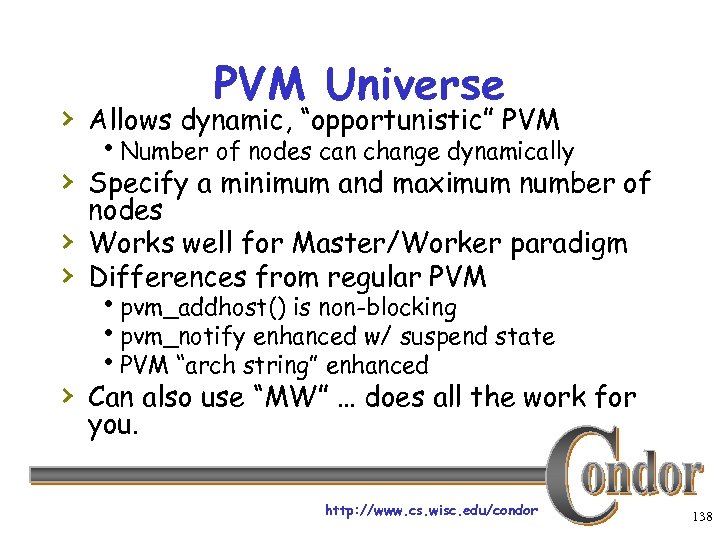

PVM Universe
› Allows dynamic, “opportunistic” PVM
  - Number of nodes can change dynamically
› Specify a minimum and maximum number of nodes
› Works well for Master/Worker paradigm
› Differences from regular PVM
  - pvm_addhost() is non-blocking
  - pvm_notify enhanced w/ suspend state
  - PVM “arch string” enhanced
› Can also use “MW” … does all the work for you.

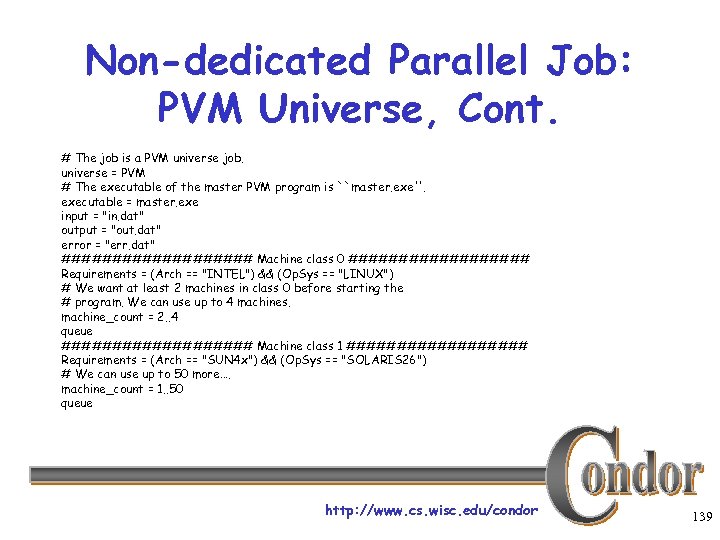

Non-dedicated Parallel Job: PVM Universe, Cont.
# The job is a PVM universe job.
universe = PVM
# The executable of the master PVM program is ``master.exe''.
executable = master.exe
input = "in.dat"
output = "out.dat"
error = "err.dat"
########## Machine class 0 #########
Requirements = (Arch == "INTEL") && (OpSys == "LINUX")
# We want at least 2 machines in class 0 before starting the
# program. We can use up to 4 machines.
machine_count = 2..4
queue
########## Machine class 1 #########
Requirements = (Arch == "SUN4x") && (OpSys == "SOLARIS26")
# We can use up to 50 more….
machine_count = 1..50
queue

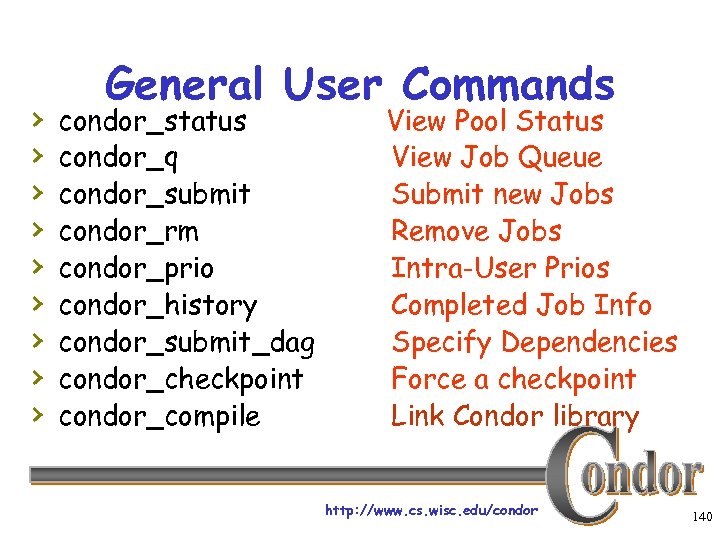

General User Commands
› condor_status: View Pool Status
› condor_q: View Job Queue
› condor_submit: Submit new Jobs
› condor_rm: Remove Jobs
› condor_prio: Intra-User Prios
› condor_history: Completed Job Info
› condor_submit_dag: Specify Dependencies
› condor_checkpoint: Force a checkpoint
› condor_compile: Link Condor library

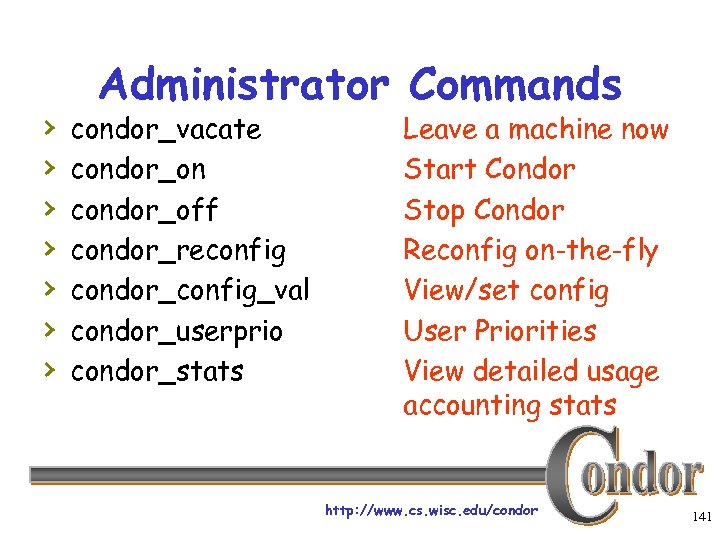

Administrator Commands
› condor_vacate: Leave a machine now
› condor_on: Start Condor
› condor_off: Stop Condor
› condor_reconfig: Reconfig on-the-fly
› condor_config_val: View/set config
› condor_userprio: User Priorities
› condor_stats: View detailed usage accounting stats

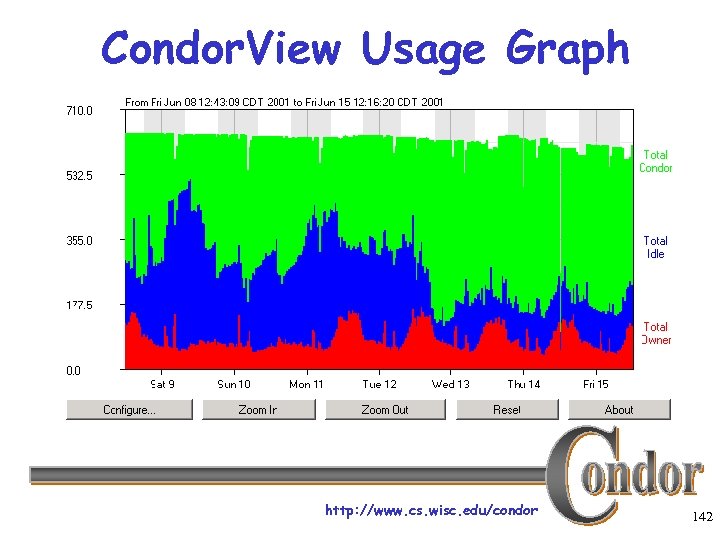

CondorView Usage Graph

Back to the Story…
Frieda Needs Remote Resources…

Frieda Builds a Grid!
› First Frieda takes advantage of her Condor friends!
› She knows people with their own Condor pools, and gets permission to access their resources
› She then configures her Condor pool to “flock” to these pools

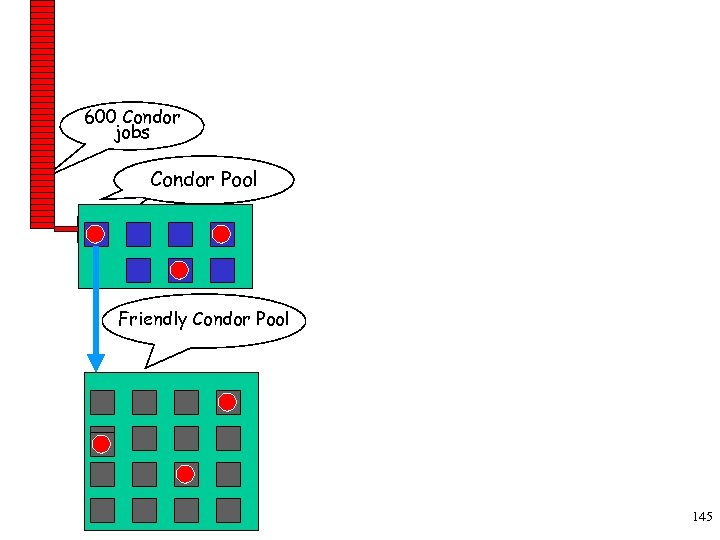

[Diagram: 600 Condor jobs; personal Condor on your workstation; Condor Pool; Friendly Condor Pool]

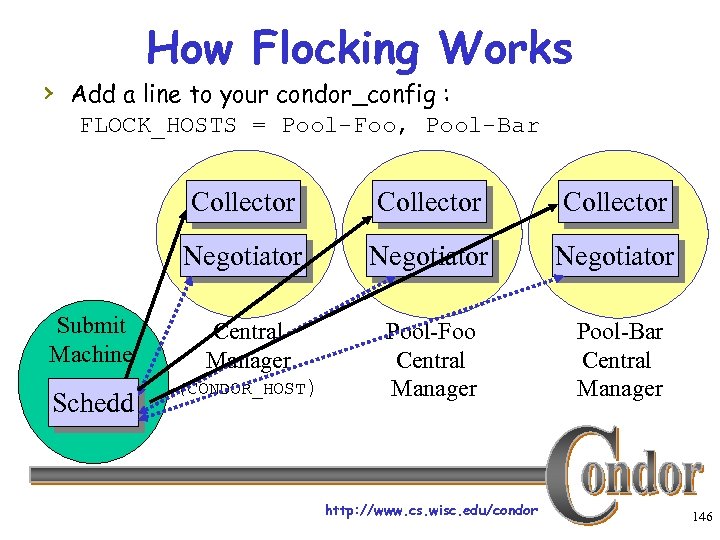

How Flocking Works
› Add a line to your condor_config:
FLOCK_HOSTS = Pool-Foo, Pool-Bar
[Diagram labels: Submit Machine (Schedd); Central Manager (CONDOR_HOST), Pool-Foo Central Manager, Pool-Bar Central Manager; Collector, Negotiator]

Condor Flocking
› Remote pools are contacted in the order specified until jobs are satisfied
› The list of remote pools is a property of the Schedd, not the Central Manager
  - So different users can Flock to different pools
  - And remote pools can allow specific users
› User-priority system is “flocking-aware”
  - A pool’s local users can have priority over remote users “flocking” in.
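The "contacted in the order specified until jobs are satisfied" rule can be pictured with a toy model. The Python sketch below hands out idle jobs to pools in list order; the pool names reuse Pool-Foo and Pool-Bar from the FLOCK_HOSTS example, while the free-slot counts and the `place_jobs` helper are assumptions. Real flocking involves negotiation with each pool's central manager and its user priorities, which this sketch does not model.

```python
def place_jobs(idle_jobs, pools):
    """Assign jobs to pools in order.

    pools -- ordered list of (pool_name, free_slots), local pool first
    Returns (placement dict, number of jobs still idle).
    """
    placement = {}
    remaining = idle_jobs
    for name, free_slots in pools:
        if remaining == 0:
            break                      # all jobs satisfied: later pools are not contacted
        used = min(remaining, free_slots)
        if used:
            placement[name] = used
        remaining -= used
    return placement, remaining

# Slot counts are made up for illustration.
placement, left_over = place_jobs(
    600, [("local", 100), ("Pool-Foo", 300), ("Pool-Bar", 500)])
print(placement, left_over)
```

Listing a pool earlier in FLOCK_HOSTS therefore makes it the first place overflow jobs are sent.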

Condor Flocking, cont.
› Flocking is “Condor” specific technology…
› Frieda also has access to Globus resources she wants to use
  - She has certificates and access to Globus gatekeepers at remote institutions
› But Frieda wants Condor’s queue management features for her Globus jobs!
› She installs Condor-G so she can submit “Globus Universe” jobs to Condor

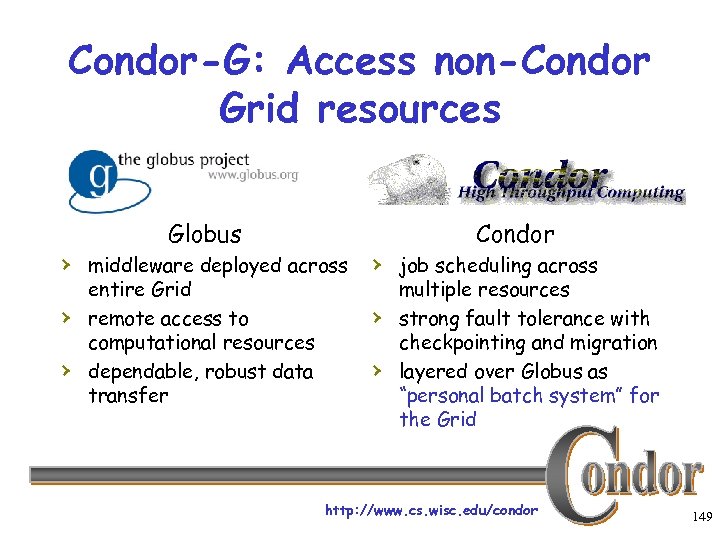

Condor-G: Access non-Condor Grid resources
Globus
› middleware deployed across entire Grid
› remote access to computational resources
› dependable, robust data transfer
Condor
› job scheduling across multiple resources
› strong fault tolerance with checkpointing and migration
› layered over Globus as “personal batch system” for the Grid


Condor-G
[Diagram labels: Job Description (Job ClassAd); Condor-G; GT2 [.1|2|4]; HTTPS; Condor; NorduGrid; Oracle; GT3 OGSI; Unicore?]

Frieda Submits a Globus Universe Job
› In her submit description file, she specifies:
  - Universe = Globus
  - Which Globus Gatekeeper to use
  - Optional: Location of file containing your Globus certificate
universe = globus
globusscheduler = beak.cs.wisc.edu/jobmanager
executable = progname
queue

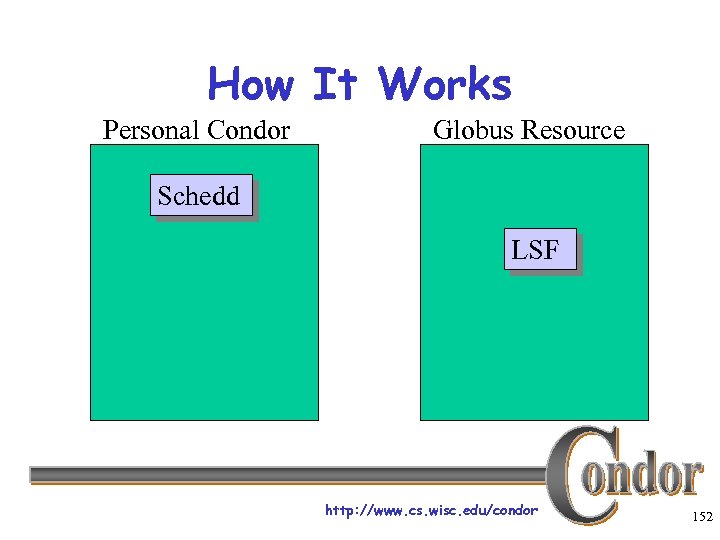

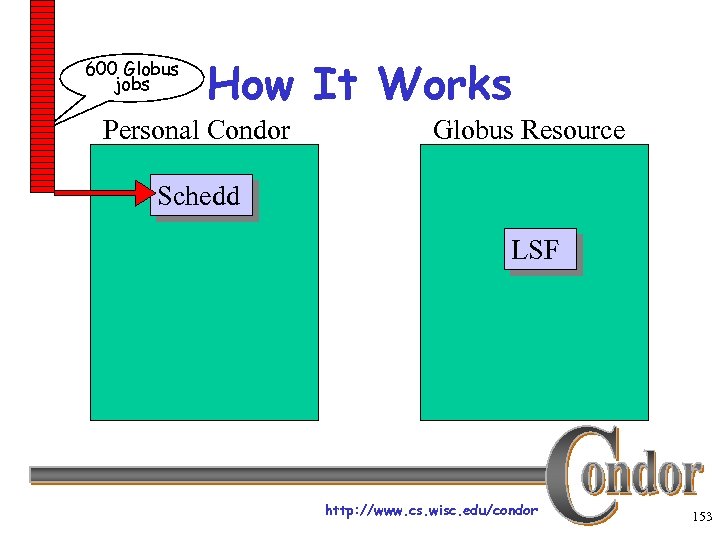

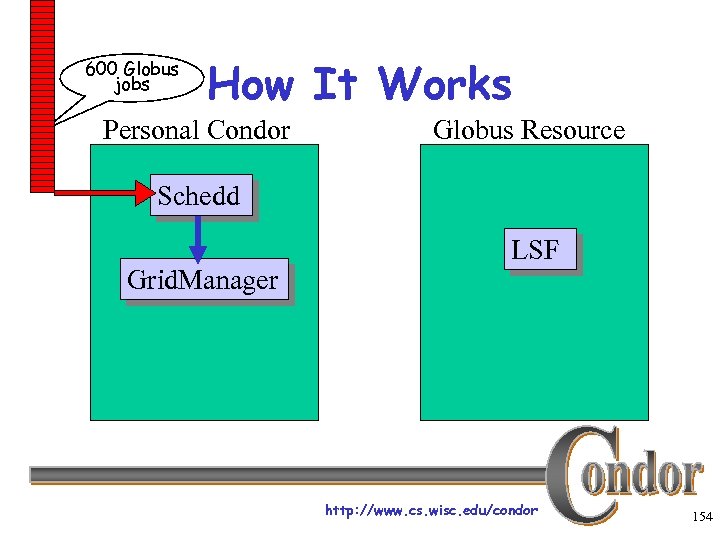

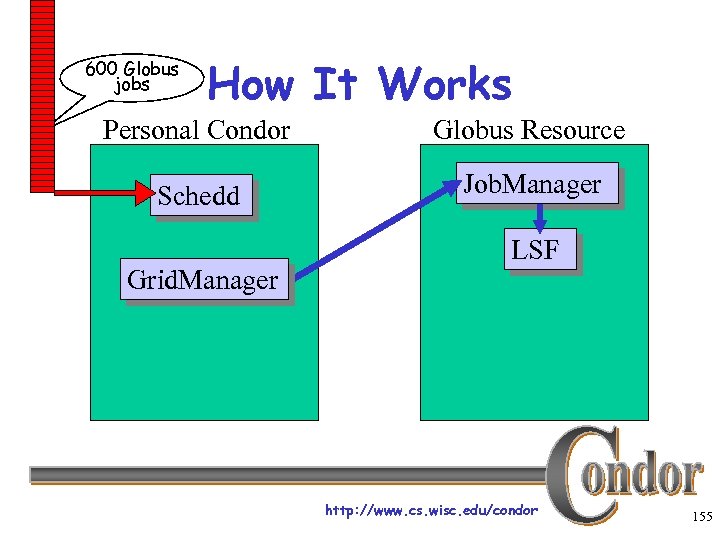

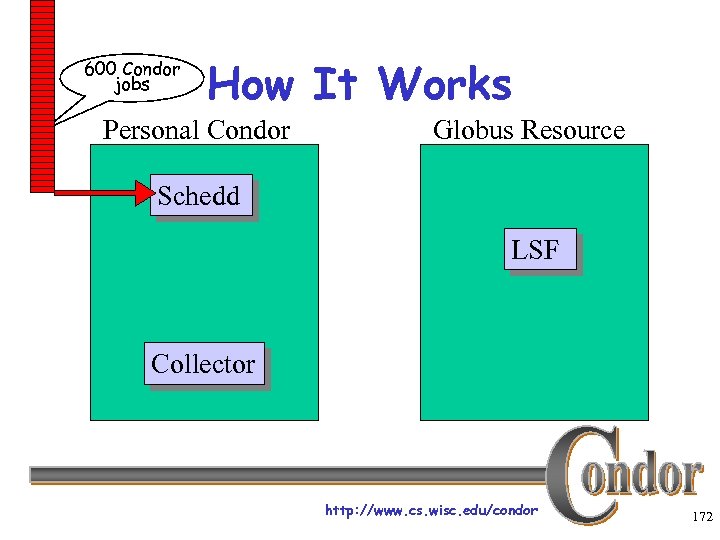

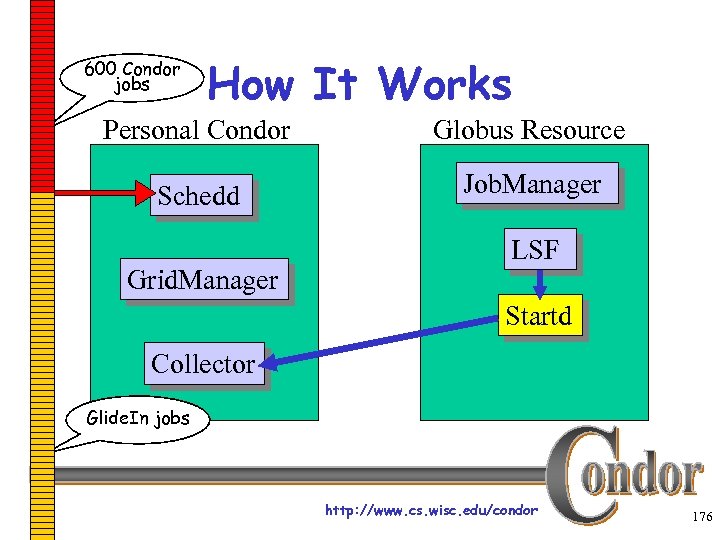

How It Works Personal Condor Globus Resource Schedd LSF http: //www. cs. wisc. edu/condor 152

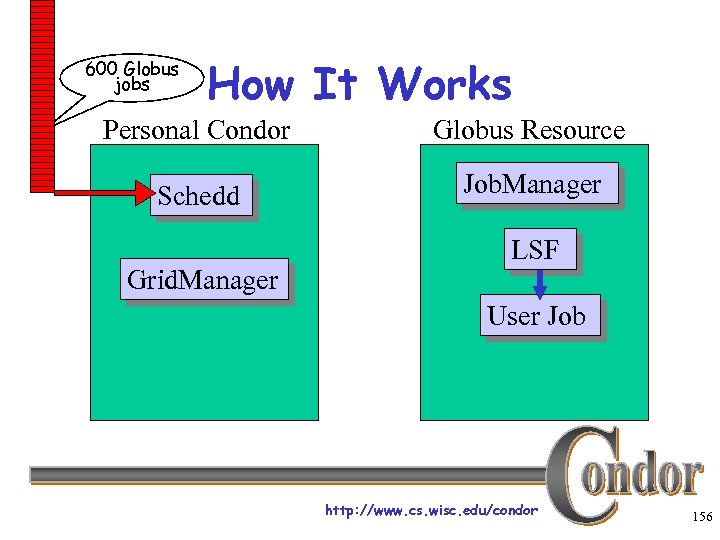

600 Globus jobs How It Works Personal Condor Globus Resource Schedd LSF http: //www. cs. wisc. edu/condor 153

600 Globus jobs How It Works Personal Condor Globus Resource Schedd Grid. Manager LSF http: //www. cs. wisc. edu/condor 154

600 Globus jobs How It Works Personal Condor Globus Resource Schedd Job. Manager Grid. Manager LSF http: //www. cs. wisc. edu/condor 155

600 Globus jobs How It Works Personal Condor Globus Resource Schedd Job. Manager Grid. Manager LSF User Job http: //www. cs. wisc. edu/condor 156

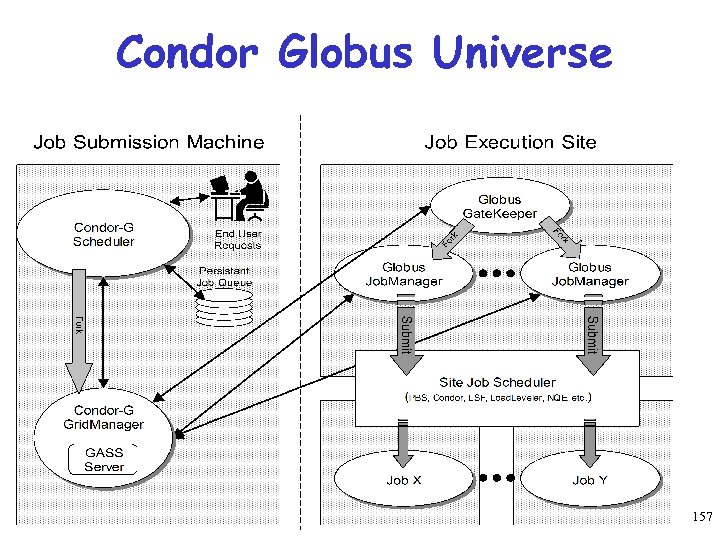

Condor Globus Universe

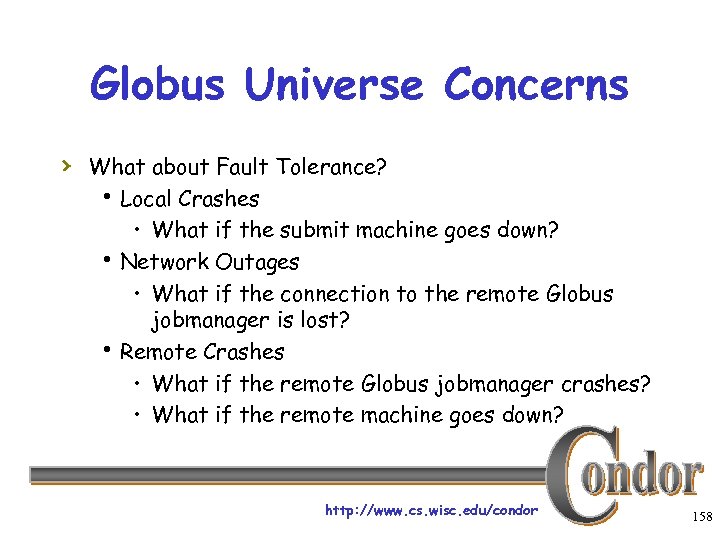

Globus Universe Concerns
› What about Fault Tolerance?
  - Local Crashes
    • What if the submit machine goes down?
  - Network Outages
    • What if the connection to the remote Globus jobmanager is lost?
  - Remote Crashes
    • What if the remote Globus jobmanager crashes?
    • What if the remote machine goes down?

Changes to the Globus JobManager for Fault Tolerance
› Ability to restart a JobManager
› Enhanced two-phase commit submit protocol

Globus Universe Fault-Tolerance: Submit-side Failures
› All relevant state for each submitted job is stored persistently in the Condor job queue.
› This persistent information allows the Condor GridManager upon restart to read the state information and reconnect to JobManagers that were running at the time of the crash.
› If a JobManager fails to respond…

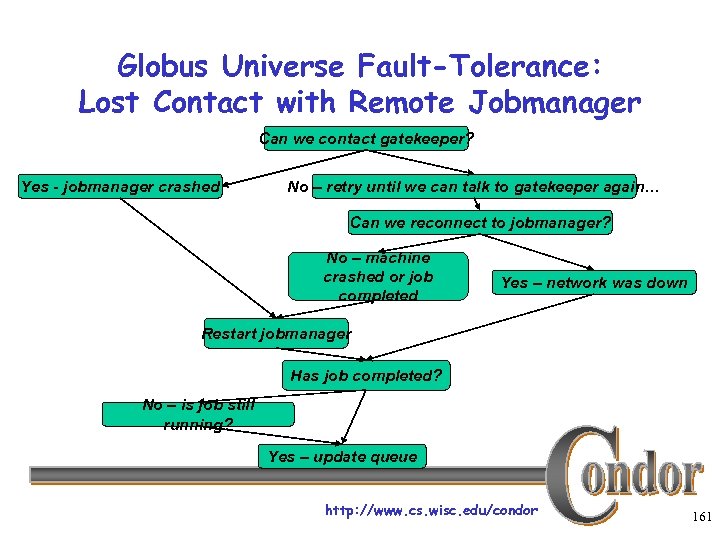

Globus Universe Fault-Tolerance: Lost Contact with Remote Jobmanager
› Can we contact gatekeeper?
  - Yes: jobmanager crashed → Restart jobmanager
  - No: retry until we can talk to gatekeeper again… then: Can we reconnect to jobmanager?
    • Yes: network was down
    • No: machine crashed or job completed → Restart jobmanager
› After restarting jobmanager: Has job completed?
  - Yes: update queue
  - No: is job still running?
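One reading of the flowchart on this slide is sketched below in Python. The slide's layout was lost in extraction, so the branch structure here is an interpretation rather than a statement of the actual GridManager logic; the function name and its two boolean inputs are hypothetical.

```python
def diagnose(gatekeeper_reachable, reconnect_succeeds):
    """Classify a lost jobmanager connection.

    gatekeeper_reachable -- can the gatekeeper be contacted right now?
    reconnect_succeeds   -- once the gatekeeper is reachable again, does
                            reconnecting to the old jobmanager work?
    Returns (diagnosis, action).
    """
    if gatekeeper_reachable:
        # The remote host answers but the jobmanager does not.
        return ("jobmanager crashed", "restart jobmanager")
    # Gatekeeper unreachable: keep retrying until it answers, then try to reconnect.
    if reconnect_succeeds:
        return ("network was down", "reconnected")
    return ("machine crashed or job completed", "restart jobmanager")

print(diagnose(True, False))
print(diagnose(False, True))
print(diagnose(False, False))
```

In the two "restart jobmanager" cases, the restarted jobmanager is then asked whether the job has completed (update the queue) or is still running.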

Globus Universe Fault-Tolerance: Credential Management
› Authentication in Globus is done with limited-lifetime X509 proxies
› Proxy may expire before jobs finish executing
› Condor can put jobs on hold and email user to refresh proxy
› Todo: Interface with MyProxy…

Can Condor-G decide where to run my jobs?

Condor-G Matchmaking
› Alternative to Glidein: Use Condor-G matchmaking with globus universe jobs
› Allows Condor-G to dynamically assign computing jobs to grid sites
› An example of lazy planning

Condor-G Matchmaking, cont.
› Normally a globus universe job must specify the site in the submit description file via the “globusscheduler” attribute like so:
Executable = foo
Universe = globus
Globusscheduler = beak.cs.wisc.edu/jobmanager-pbs
queue

Condor-G Matchmaking, cont.
› With matchmaking, globus universe jobs can use requirements and rank:
Executable = foo
Universe = globus
Globusscheduler = $$(GatekeeperUrl)
Requirements = arch == "LINUX"
Rank = NumberOfNodes
Queue
› The $$(x) syntax inserts information from the target ClassAd when a match is made.
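The effect of `$$(x)` can be imitated with a simple text substitution. The Python sketch below replaces each `$$(Name)` in a submit-file value with the matching attribute from a target ad. The `expand` helper and the sample ad are assumptions for illustration; Condor performs this substitution itself at match time.

```python
import re

def expand(value, target_ad):
    """Replace every $$(Attr) in value with target_ad[Attr]."""
    def lookup(match):
        return str(target_ad[match.group(1)])
    return re.sub(r"\$\$\((\w+)\)", lookup, value)

# Hypothetical gatekeeper ad, as it might look once a match has been made.
gatekeeper_ad = {"GatekeeperUrl": "beak.cs.wisc.edu/jobmanager-pbs",
                 "NumberOfNodes": 32}

print(expand("$$(GatekeeperUrl)", gatekeeper_ad))
```

Because the value is filled in only when a match is made, the same submit file can end up at whichever gatekeeper the matchmaker selects.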

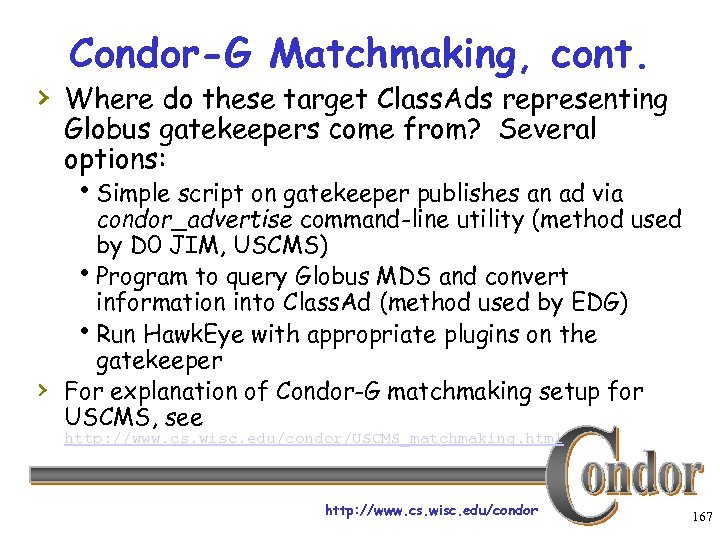

Condor-G Matchmaking, cont.
› Where do these target ClassAds representing Globus gatekeepers come from? Several options:
  - Simple script on gatekeeper publishes an ad via condor_advertise command-line utility (method used by D0 JIM, USCMS)
  - Program to query Globus MDS and convert information into ClassAd (method used by EDG)
  - Run HawkEye with appropriate plugins on the gatekeeper
› For explanation of Condor-G matchmaking setup for USCMS, see http://www.cs.wisc.edu/condor/USCMS_matchmaking.html

DAGMan Callouts
› Another mechanism to achieve lazy planning: DAGMan callouts
› Define DAGMAN_HELPER_COMMAND in condor_config (usually a script)
› The helper command is passed a copy of the job submit file when DAGMan is about to submit that node in the graph
› This allows changes to be made to the submit file (such as changing GlobusScheduler) at the last minute
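The kind of last-minute edit a helper might make can be sketched as a plain text rewrite. The Python function below swaps the Globusscheduler line in a submit description; how DAGMan actually invokes the helper and hands over the file is not described on the slide, so only the rewrite step is shown. The `retarget` name and the `example.site/jobmanager-lsf` target are hypothetical.

```python
def retarget(submit_text, new_scheduler):
    """Return submit_text with its Globusscheduler line pointing at new_scheduler."""
    rewritten = []
    for line in submit_text.splitlines():
        key, sep, _ = line.partition("=")
        # Submit-file keywords are matched case-insensitively here.
        if sep and key.strip().lower() == "globusscheduler":
            line = "Globusscheduler = " + new_scheduler
        rewritten.append(line)
    return "\n".join(rewritten)

original = "\n".join([
    "Executable = foo",
    "Universe = globus",
    "Globusscheduler = beak.cs.wisc.edu/jobmanager-pbs",
    "queue",
])
# The new target is a made-up placeholder, not a real gatekeeper.
updated = retarget(original, "example.site/jobmanager-lsf")
print(updated)
```

A real helper would choose the new site from current information (load, availability) just before submission, which is what makes the planning "lazy".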

But Frieda Wants More…
› She wants to run standard universe jobs on Globus-managed resources
  - For matchmaking and dynamic scheduling of jobs
  - For job checkpointing and migration
  - For remote system calls

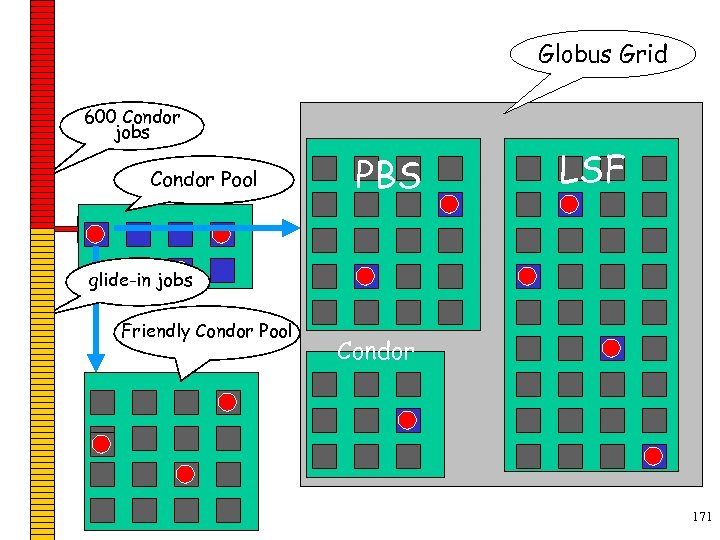

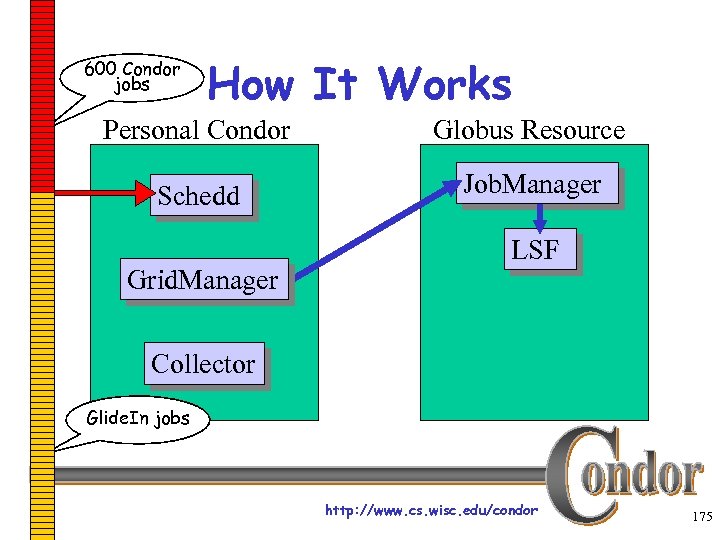

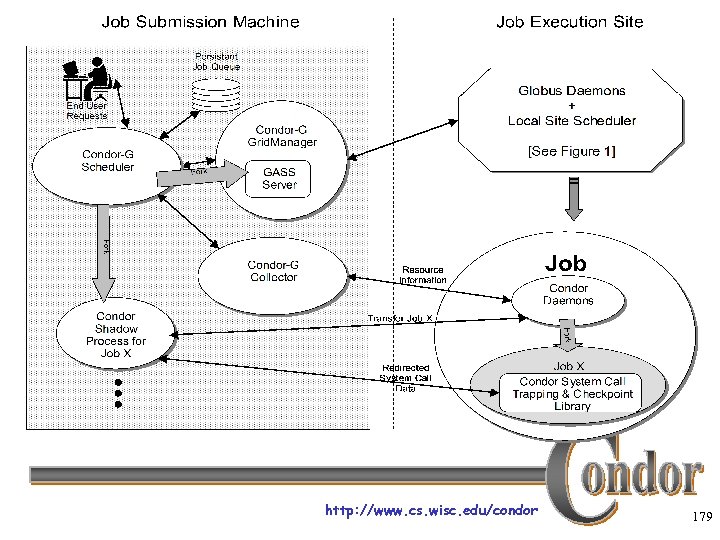

One Solution: Condor-G GlideIn
› Frieda can use the Globus Universe to run Condor daemons on Globus resources
› When the resources run these GlideIn jobs, they will temporarily join her Condor Pool
› She can then submit Standard, Vanilla, PVM, or MPI Universe jobs and they will be matched and run on the Globus resources

[Diagram: 600 Condor jobs; personal Condor on your workstation; Condor Pool; Friendly Condor Pool; Globus Grid (PBS, LSF, Condor); glide-in jobs]

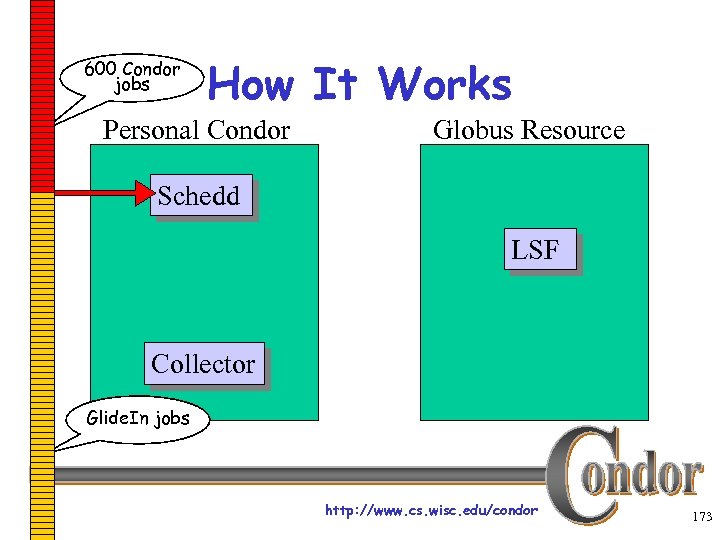

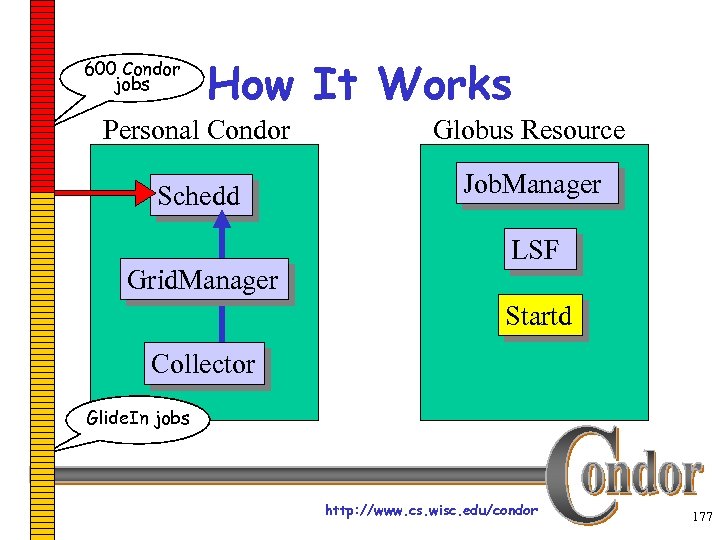

600 Condor jobs How It Works Personal Condor Globus Resource Schedd LSF Collector http: //www. cs. wisc. edu/condor 172

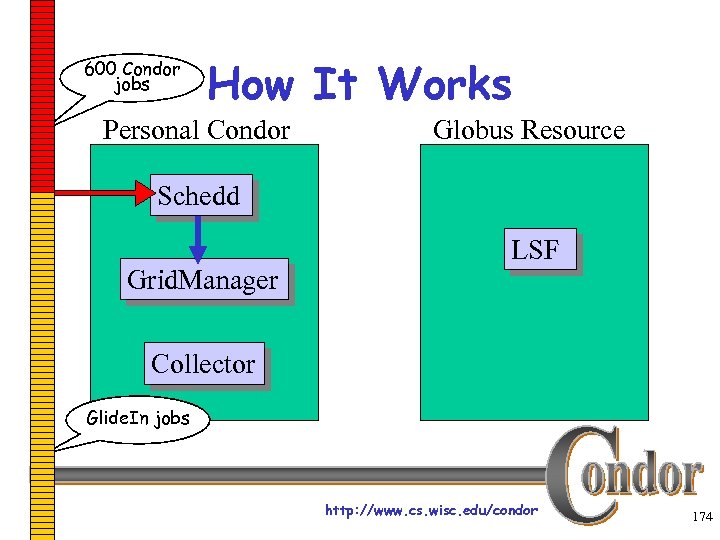

600 Condor jobs How It Works Personal Condor Globus Resource Schedd LSF Collector Glide. In jobs http: //www. cs. wisc. edu/condor 173

600 Condor jobs How It Works Personal Condor Globus Resource Schedd Grid. Manager LSF Collector Glide. In jobs http: //www. cs. wisc. edu/condor 174

600 Condor jobs How It Works Personal Condor Globus Resource Schedd Job. Manager Grid. Manager LSF Collector Glide. In jobs http: //www. cs. wisc. edu/condor 175

600 Condor jobs How It Works Personal Condor Globus Resource Schedd Job. Manager Grid. Manager LSF Startd Collector Glide. In jobs http: //www. cs. wisc. edu/condor 176

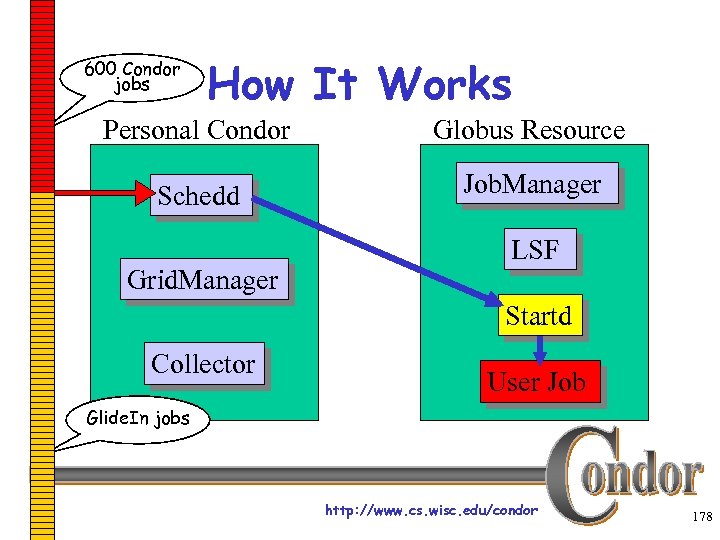

600 Condor jobs How It Works Personal Condor Globus Resource Schedd Job. Manager Grid. Manager LSF Startd Collector Glide. In jobs http: //www. cs. wisc. edu/condor 177

600 Condor jobs How It Works Personal Condor Globus Resource Schedd Job. Manager Grid. Manager LSF Startd Collector User Job Glide. In jobs http: //www. cs. wisc. edu/condor 178


GlideIn Concerns
› What if a Globus resource kills my GlideIn job?
  - That resource will disappear from your pool and your jobs will be rescheduled on other machines
  - Standard universe jobs will resume from their last checkpoint like usual
› What if all my jobs are completed before a GlideIn job runs?
  - If a GlideIn Condor daemon is not matched with a job in 10 minutes, it terminates, freeing the resource

Common Questions, cont.
My Personal Condor is flocking with a bunch of Solaris machines, and also doing a GlideIn to a Silicon Graphics O2K. I do not want to statically partition my jobs.
Solution: In your submit file, say:
Executable = myjob.$$(OpSys).$$(Arch)
The “$$(xxx)” notation is replaced with attributes from the machine ClassAd which was matched with your job.

In Review
With Condor Frieda can…
  - … manage her compute job workload
  - … access local machines
  - … build a grid to access remote Condor Pools via flocking
  - … access remote compute resources on grids via Globus Universe jobs
  - … carve out her own personal Condor Pool from a grid with GlideIn technology

I want to create a portal to Condor. Is there a developer API to Condor?

Developer API
› Do not underestimate the flexibility of the command line tools!
› If not possible, consider SOAP

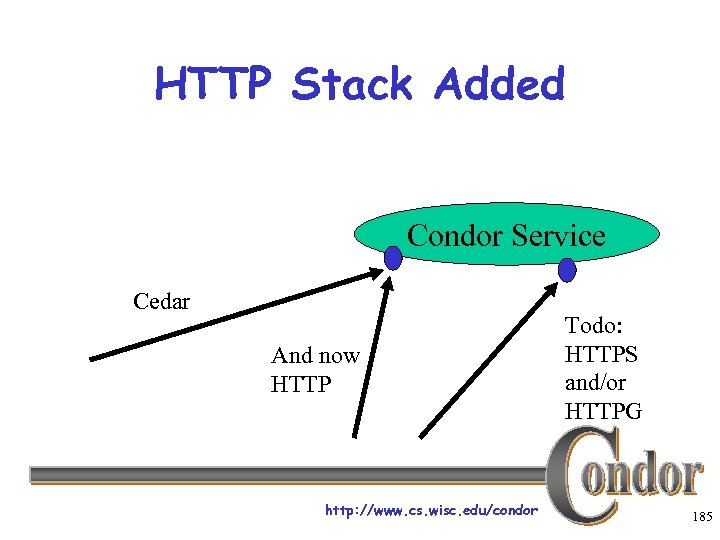

HTTP Stack Added
[Diagram labels: Condor Service; Cedar; And now HTTP]
Todo: HTTPS and/or HTTPG

Current SOAP status
› Clients can now use CEDAR or HTTP protocol to communicate to Condor daemons.
› If HTTP command is
  - GET: use a built-in “mini” web server
    • Useful for retrieving WSDL from the service itself
  - POST: assumed to be a SOAP RPC
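The GET/POST rule above is a two-way dispatch on the HTTP method. A minimal Python sketch of that rule follows; the handler labels and the `dispatch` function are invented for illustration and say nothing about how the Condor daemons implement it internally.

```python
def dispatch(http_method):
    """Pick a handler for an incoming HTTP request, following the slide's rule."""
    method = http_method.upper()
    if method == "GET":
        return "mini-web-server"   # e.g. serve the WSDL for the service
    if method == "POST":
        return "soap-rpc"          # body is assumed to be a SOAP RPC
    return "unsupported"           # the slide describes only GET and POST

print(dispatch("GET"))
print(dispatch("POST"))
```

Serving the WSDL over GET from the daemon itself means a client can discover the interface from the same address it will later POST requests to.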

Current SOAP status, cont.
› Created first pass XML Schema representation of a list of ClassAds, and first pass WSDL files.
› CEDAR is more of a message-passing model instead of a true RPC model
  - many back-and-forth messages.
  - we are working on the considerable task of re-arranging the implementation in the Condor daemons from a message-passing model to a true RPC model.

Current SOAP status, cont.
› Started with the Collector
  - modified the implementation of the collector so all of the query operations are ignorant of the underlying transport (CEDAR or SOAP, it no longer knows or cares)
  - created SOAP stubs for all collector query operations
  - Proof of concept: simple “condor_status” was written in Perl. It works!

Current Activity
› Currently adding SOAP stubs in the schedd for our queue management API.
  - This will give the equivalent of condor_q, condor_prio, condor_qedit, condor_rm, …
› Adding DIME support (binary attachments to SOAP messages) in preparation for job sandbox delivery for submit interface.

Thank you!
Check us out on the Web: http://www.cs.wisc.edu/condor
Email: condor-admin@cs.wisc.edu
