81be6743bd1c255bd728014552e6bfb6.ppt

- Number of slides: 16

Computing Issues & Status
L. Pinsky
Computing Coordinator, ALICE-USA
16 October 2005 LSP@ALICE-USA Collaboration Meeting 1

Overview of the ALICE Computing Plan
• Some Raw Numbers (All from TDR)
  – Recording Rate: 100 Hz of 13.75 MB/Event Raw
    • w/reconstruction 3.8 PB/Yr (Pb-Pb Only)
    • 2 Copies: 1 FULL Archival @ CERN
    • …& 1 Net Distributed (Working) Copy
  – Simulated Data: 3.9 PB/Yr (Pb-Pb Only)
  – Event Summary Data (ESD): 3.03 MB/Event
    • 2 Net Copies: 1 Archival @ CERN
    • …& 1 Net Distributed (Working) Copy
  – (Physics) Analysis Object Data (AOD): ~0.333 MB/Event
    • Multiple local working copies
    • Size varies depending upon application…
• Archival copies of everything @ CERN
16 October 2005 LSP@ALICE-USA Collaboration Meeting 2
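
The per-event sizes above translate into bandwidth and yearly volumes with simple arithmetic. The sketch below is illustrative only: it assumes 10^6 s of effective Pb-Pb running per year (an assumption, not a figure from this slide), uses decimal units, and the function name `annual_volumes_pb` is hypothetical. It does not attempt to reproduce the TDR's 3.8 PB/Yr total, which is quoted rather than derived here.

```python
# Hypothetical sketch: rough annual Pb-Pb data volumes from the TDR per-event sizes.
# ASSUMPTION: 1e6 s of effective Pb-Pb running per year (not stated on the slide).

RECORDING_RATE_HZ = 100       # events/s written to storage (from the slide)
RAW_MB_PER_EVENT = 13.75      # raw event size (from the slide)
ESD_MB_PER_EVENT = 3.03       # Event Summary Data size (from the slide)
AOD_MB_PER_EVENT = 0.333      # approximate AOD size (from the slide)
LIVE_SECONDS_PER_YEAR = 1e6   # assumed effective heavy-ion running time

MB_PER_PB = 1e9  # decimal units: 1 PB = 10^9 MB


def annual_volumes_pb(live_seconds=LIVE_SECONDS_PER_YEAR):
    """Return (events/year, raw PB, ESD PB, AOD PB) for ONE copy of each."""
    events = RECORDING_RATE_HZ * live_seconds
    raw = events * RAW_MB_PER_EVENT / MB_PER_PB
    esd = events * ESD_MB_PER_EVENT / MB_PER_PB
    aod = events * AOD_MB_PER_EVENT / MB_PER_PB
    return events, raw, esd, aod


if __name__ == "__main__":
    rate_gb_s = RECORDING_RATE_HZ * RAW_MB_PER_EVENT / 1e3  # MB/s -> GB/s
    events, raw, esd, aod = annual_volumes_pb()
    print(f"raw recording bandwidth: {rate_gb_s:.3f} GB/s")
    print(f"events/year: {events:.0e}")
    print(f"one copy: raw {raw:.3f} PB, ESD {esd:.3f} PB, AOD {aod:.4f} PB")
    # The slide keeps 2 copies of raw and ESD (one archival at CERN, one distributed).
    print(f"raw + ESD, 2 copies each: {2 * (raw + esd):.3f} PB")
```

Under that assumed live time, 100 Hz gives 10^8 events per year, i.e. about 1.4 PB of raw data per copy.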

Embedded Aside Comment on Trigger
• Note that an “ESD”-like file will be saved from ALL High Level Trigger processed events (but the full raw data will be saved only for the “selected” events).
• We should think about what information we want preserved in these “HLTESDs” from the Jet-Finding exercises…
16 October 2005 LSP@ALICE-USA Collaboration Meeting 3

ALICE Computing Resources Needed
• TOTALS: Pb-Pb & p-p (~10% of Pb-Pb)
  – CPU: 35 MSi2K
    • 8.3 MSi2K @ CERN & 26.7 MSi2K Distributed
  – Working Disk Storage: 14 PB
    • ~1.5 PB @ CERN & 12.5 PB Distributed
  – Mass Storage: 11 PB/Year
    • ~3.6 PB/Yr @ CERN & 7.4 PB/Yr Distributed
16 October 2005 LSP@ALICE-USA Collaboration Meeting 4
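
As a quick consistency check, the CERN and distributed parts on this slide should add up to each total, and their ratio shows how much of the load sits outside CERN. This is a minimal sketch: the `RESOURCES` table copies the slide's numbers, the helper `cern_fraction` is hypothetical, and a small tolerance is used because some parts are quoted only approximately (“~”).

```python
# Hypothetical sketch: split of ALICE computing needs between CERN and distributed sites.
# Figures copied from the slide. Units: CPU in MSi2K, disk in PB, mass storage in PB/yr.

RESOURCES = {
    # name: (total, at CERN, distributed)
    "CPU (MSi2K)": (35.0, 8.3, 26.7),
    "Working disk (PB)": (14.0, 1.5, 12.5),
    "Mass storage (PB/yr)": (11.0, 3.6, 7.4),
}


def cern_fraction(total, at_cern, distributed, tol=0.05):
    """Check that CERN + distributed matches the total, then return CERN's share."""
    if abs((at_cern + distributed) - total) > tol:
        raise ValueError(f"parts {at_cern} + {distributed} do not sum to {total}")
    return at_cern / total


if __name__ == "__main__":
    for name, (total, cern, dist) in RESOURCES.items():
        print(f"{name:22s} CERN share {cern_fraction(total, cern, dist):5.1%}")
```

All three rows pass the check; CERN hosts roughly a quarter of the CPU, about a tenth of the working disk and about a third of the yearly mass storage.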

ALICE “Cloud” Computing Model
• Tier-0 Facility @ CERN
  – HLT + First Reconstruction (?) + Archive
• 7 Tier-1 Facilities (1 @ CERN)
  – Mass Storage for Distributed (Working) Copies of Raw Data & ESD
  – Tasks: Reconstruction, ESD and AOD Creation
  – Tier-1s are a NET Capability and may themselves be distributed (Cloud Model)
• N Tier-2 Facilities
  – CPU and Working Disk w/no Mass Storage
  – Focus mostly on simulation and AOD analysis
16 October 2005 LSP@ALICE-USA Collaboration Meeting 5
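
The tier roles above can be captured in a small data structure, which makes the key distinction explicit: only Tier-0 and Tier-1 carry mass storage, so only they can hold the archival and distributed copies of raw data and ESD. This is a hypothetical sketch; the `Tier` class and `tiers_with_mass_storage` helper are illustrative, Tier-0 mass storage is inferred from its “Archive” role, and the “(?)” on first reconstruction is kept as on the slide.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    count: str           # number of facilities, as stated on the slide
    mass_storage: bool   # whether the tier provides mass (tape) storage
    tasks: tuple         # main roles listed on the slide


TIERS = (
    # Tier-0 mass storage is inferred from its "Archive" role.
    Tier("Tier-0", "1 (CERN)", True,
         ("HLT", "first reconstruction (?)", "archive")),
    Tier("Tier-1", "7 (1 at CERN)", True,
         ("reconstruction", "ESD creation", "AOD creation",
          "distributed copies of raw data and ESD")),
    Tier("Tier-2", "N", False,
         ("simulation", "AOD analysis")),
)


def tiers_with_mass_storage(tiers=TIERS):
    """Return the names of tiers able to hold raw-data/ESD copies on mass storage."""
    return [t.name for t in tiers if t.mass_storage]


if __name__ == "__main__":
    for t in TIERS:
        print(f"{t.name}: {t.count}, mass storage={t.mass_storage}, tasks={', '.join(t.tasks)}")
    print("raw/ESD copies can live on:", tiers_with_mass_storage())
```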

Computing Resources Status before MoU approval by RRB
http://pcaliweb02.cern.ch/Collaboration/Boards/Computing/Resources/
1 o October 2005 YS@Computing Board 6

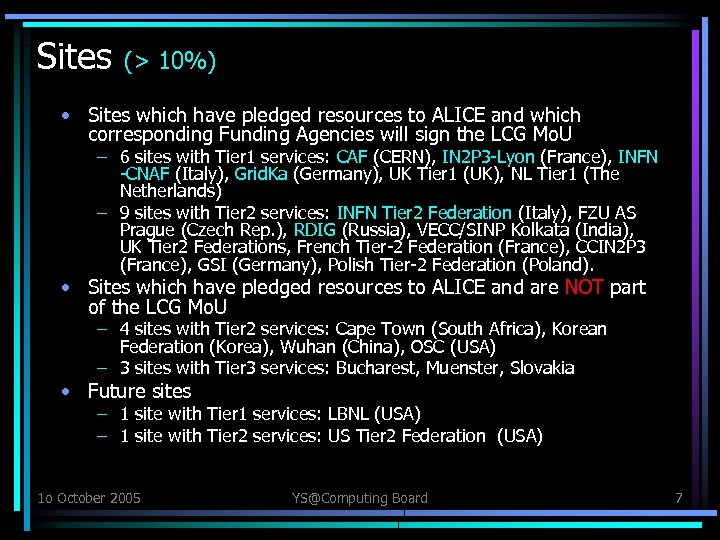

Sites (>10%)
• Sites which have pledged resources to ALICE and whose Funding Agencies will sign the LCG MoU
  – 6 sites with Tier 1 services: CAF (CERN), IN2P3-Lyon (France), INFN-CNAF (Italy), GridKa (Germany), UK Tier 1 (UK), NL Tier 1 (The Netherlands)
  – 9 sites with Tier 2 services: INFN Tier 2 Federation (Italy), FZU AS Prague (Czech Rep.), RDIG (Russia), VECC/SINP Kolkata (India), UK Tier 2 Federations, French Tier-2 Federation (France), CCIN2P3 (France), GSI (Germany), Polish Tier-2 Federation (Poland)
• Sites which have pledged resources to ALICE and are NOT part of the LCG MoU
  – 4 sites with Tier 2 services: Cape Town (South Africa), Korean Federation (Korea), Wuhan (China), OSC (USA)
  – 3 sites with Tier 3 services: Bucharest, Muenster, Slovakia
• Future sites
  – 1 site with Tier 1 services: LBNL (USA)
  – 1 site with Tier 2 services: US Tier 2 Federation (USA)
1 o October 2005 YS@Computing Board 7

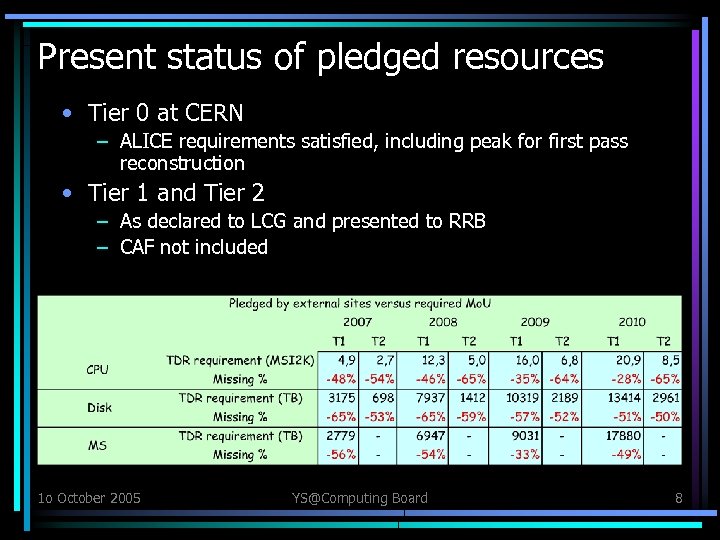

Present status of pledged resources
• Tier 0 at CERN
  – ALICE requirements satisfied, including peak for first-pass reconstruction
• Tier 1 and Tier 2
  – As declared to LCG and presented to RRB
  – CAF not included
1 o October 2005 YS@Computing Board 8

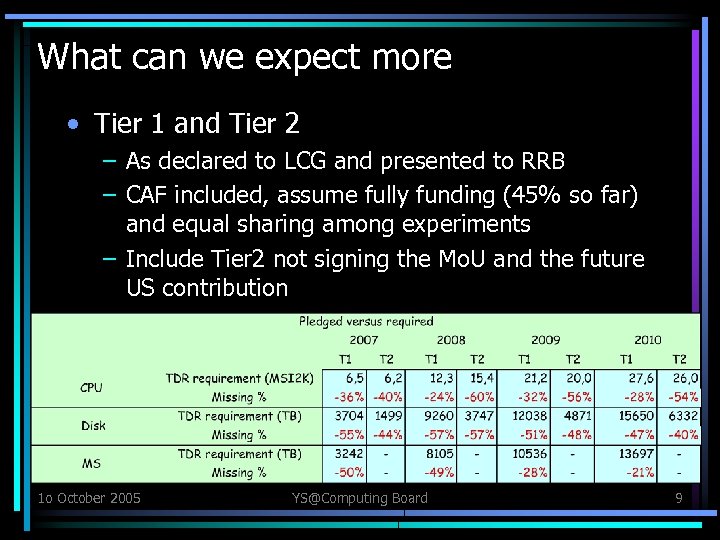

What more can we expect?
• Tier 1 and Tier 2
  – As declared to LCG and presented to RRB
  – CAF included, assuming full funding (45% so far) and equal sharing among experiments
  – Including Tier 2 sites not signing the MoU and the future US contribution
1 o October 2005 YS@Computing Board 9

Remarks
• Information:
  – Mismatch between what I get from you and what is reported by LCG: NDGF, RDIG, INFN T2, Poland (!)
1 o October 2005 YS@Computing Board 10

We have a problem!
• Solutions?
  – Ask the main Funding Agencies for additional resources … though they have already pledged most of the resources
  – Ask the main resource providers to reconsider the sharing algorithm among the LHC experiments
    • ALICE produces the same quantity of data as ATLAS and CMS, and much more than LHCb
    • ALICE computing per physicist is twice as expensive as for the other experiments
  – Ask all collaborating institutes (or at least those who provide nothing or only a small fraction) to provide a share of computing resources following the M&O sharing mechanism
  – Use very selective triggers and take less data, or analyze all data later, waiting for better times
1 o October 2005 YS@Computing Board 11

“Nominal” US Contribution (From TDR)
• 1/7 of the External Distributed Resources: includes combined Tier-1 & Tier-2 assets
  – CPU: 3.44 MSi2K (net total)
  – Disk Storage: 1.26 PB (net total)
  – Mass Storage: 0.94 PB (by 2010 & then annually)
• Acquisition Schedule…
  – 20% by early 2008
  – Additional 20% added by early 2009
  – Final 60% on-line by early 2010
16 October 2005 LSP@ALICE-USA Collaboration Meeting 12

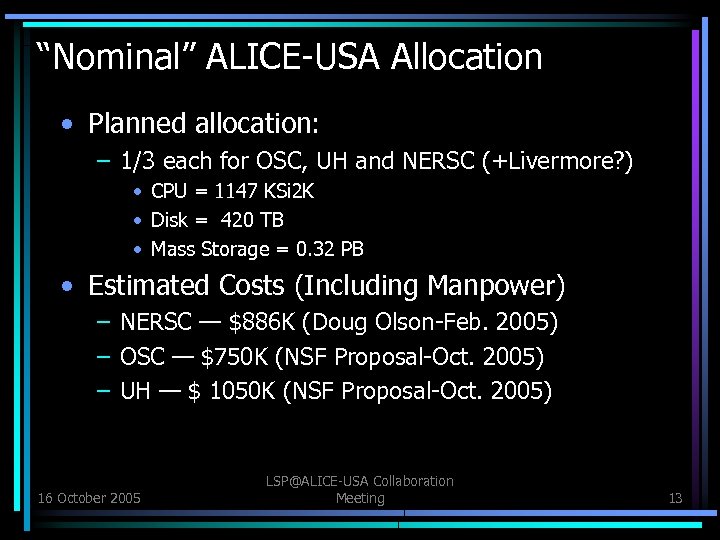
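
The acquisition schedule is incremental (20% + 20% + 60%), so the capacity on-line at each milestone is a running sum. A minimal sketch follows; the helper `cumulative_capacity` is hypothetical, and mass storage is left out because the slide quotes it as 0.94 PB by 2010 and then annually, which is a yearly rate rather than a one-time build-up.

```python
# Hypothetical sketch: cumulative on-line capacity of the nominal US contribution,
# using the TDR figures and acquisition schedule quoted on the slide.

NOMINAL_US = {"CPU (MSi2K)": 3.44, "Disk (PB)": 1.26}

# Fraction of the final capacity ADDED at each milestone (20% + 20% + 60% = 100%).
SCHEDULE = [("early 2008", 0.20), ("early 2009", 0.20), ("early 2010", 0.60)]


def cumulative_capacity(total, schedule=SCHEDULE):
    """Return [(milestone, capacity on-line at that milestone)] for one resource."""
    online_fraction = 0.0
    ramp = []
    for milestone, added in schedule:
        online_fraction += added
        ramp.append((milestone, total * online_fraction))
    return ramp


if __name__ == "__main__":
    for resource, total in NOMINAL_US.items():
        for milestone, capacity in cumulative_capacity(total):
            print(f"{resource:12s} {milestone:11s} {capacity:.3f}")
```

For CPU this gives about 0.69 MSi2K by early 2008, 1.38 MSi2K by early 2009 and the full 3.44 MSi2K by early 2010.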

“Nominal” ALICE-USA Allocation
• Planned allocation:
  – 1/3 each for OSC, UH and NERSC (+Livermore?)
    • CPU = 1147 KSi2K
    • Disk = 420 TB
    • Mass Storage = 0.32 PB
• Estimated Costs (Including Manpower)
  – NERSC: $886K (Doug Olson, Feb. 2005)
  – OSC: $750K (NSF Proposal, Oct. 2005)
  – UH: $1050K (NSF Proposal, Oct. 2005)
16 October 2005 LSP@ALICE-USA Collaboration Meeting 13

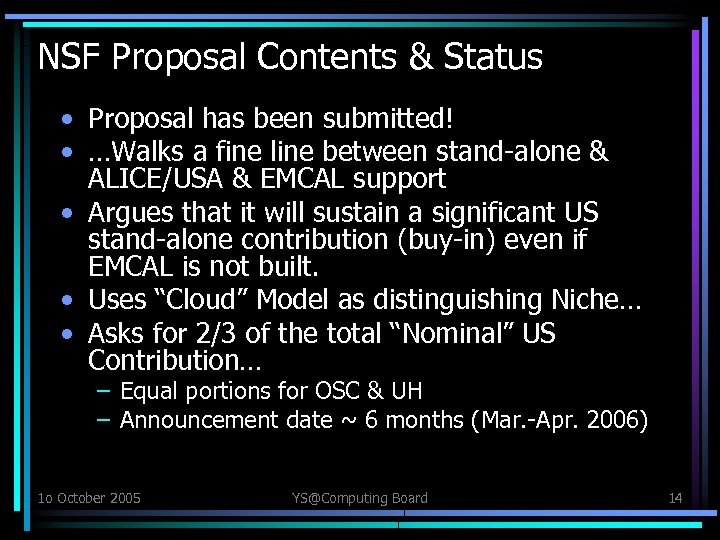
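
The per-site figures follow from dividing the nominal US contribution by three, and the cost estimates can be totalled the same way. In this hypothetical sketch (the helpers `per_site_share` and `total_cost_k_usd` are illustrative), CPU comes out at about 1147 KSi2K and disk at exactly 420 TB, matching the slide; an exact third of the mass storage is about 0.31 PB, slightly below the 0.32 PB quoted. The last line totals the two sites covered by the NSF proposal, which asks for 2/3 of the nominal contribution split equally between OSC and UH.

```python
# Hypothetical sketch: split the nominal US contribution three ways and total the
# estimated costs. All figures come from the slides; helper names are illustrative.

NOMINAL_US = {"CPU (KSi2K)": 3440.0, "Disk (TB)": 1260.0, "Mass storage (PB)": 0.94}
SITES = ("OSC", "UH", "NERSC")

# Estimated costs including manpower, in thousands of US dollars.
COST_K_USD = {"NERSC": 886, "OSC": 750, "UH": 1050}


def per_site_share(nominal, sites=SITES):
    """Equal share of each resource for every site in `sites`."""
    return {resource: amount / len(sites) for resource, amount in nominal.items()}


def total_cost_k_usd(costs, sites):
    """Sum the estimated costs (k$) of the given sites."""
    return sum(costs[site] for site in sites)


if __name__ == "__main__":
    for resource, share in per_site_share(NOMINAL_US).items():
        print(f"{resource:18s} per site: {share:.2f}")
    print("all three sites:", total_cost_k_usd(COST_K_USD, SITES), "k$")
    # The NSF proposal covers OSC and UH, i.e. 2/3 of the nominal contribution.
    print("OSC + UH (NSF):", total_cost_k_usd(COST_K_USD, ("OSC", "UH")), "k$")
```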

NSF Proposal Contents & Status
• Proposal has been submitted!
• …Walks a fine line between stand-alone & ALICE/USA & EMCAL support
• Argues that it will sustain a significant US stand-alone contribution (buy-in) even if EMCAL is not built.
• Uses “Cloud” Model as distinguishing niche…
• Asks for 2/3 of the total “Nominal” US Contribution…
  – Equal portions for OSC & UH
  – Announcement expected in ~6 months (Mar.-Apr. 2006)
1 o October 2005 YS@Computing Board 14

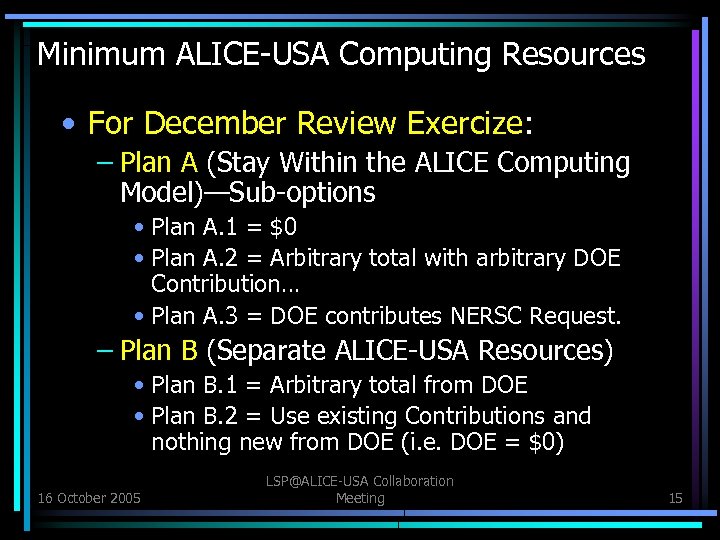

Minimum ALICE-USA Computing Resources
• For December Review Exercise:
  – Plan A (Stay Within the ALICE Computing Model): sub-options
    • Plan A.1 = $0
    • Plan A.2 = Arbitrary total with arbitrary DOE contribution…
    • Plan A.3 = DOE contributes the NERSC request
  – Plan B (Separate ALICE-USA Resources)
    • Plan B.1 = Arbitrary total from DOE
    • Plan B.2 = Use existing contributions and nothing new from DOE (i.e., DOE = $0)
16 October 2005 LSP@ALICE-USA Collaboration Meeting 15

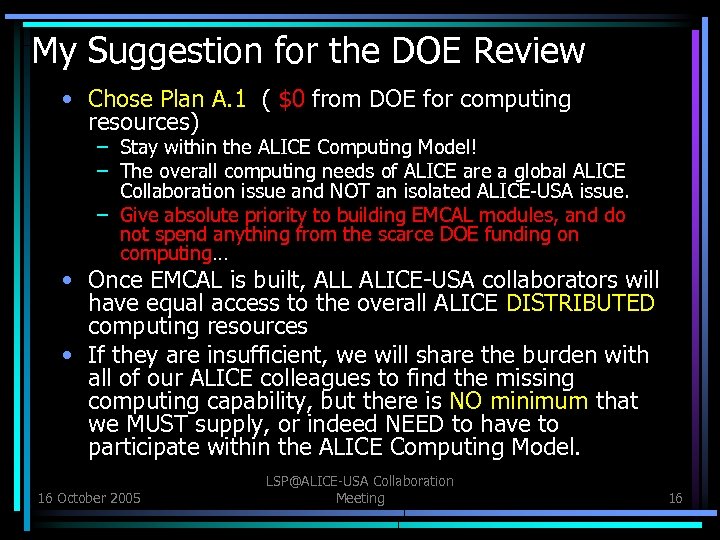

My Suggestion for the DOE Review
• Choose Plan A.1 ($0 from DOE for computing resources)
  – Stay within the ALICE Computing Model!
  – The overall computing needs of ALICE are a global ALICE Collaboration issue and NOT an isolated ALICE-USA issue.
  – Give absolute priority to building EMCAL modules, and do not spend anything from the scarce DOE funding on computing…
• Once EMCAL is built, ALL ALICE-USA collaborators will have equal access to the overall ALICE DISTRIBUTED computing resources
• If they are insufficient, we will share the burden with all of our ALICE colleagues to find the missing computing capability, but there is NO minimum that we MUST supply, or indeed NEED to have, to participate within the ALICE Computing Model.
16 October 2005 LSP@ALICE-USA Collaboration Meeting 16
