46446fa82a9c1f004981c34cb87efe68.ppt

- Количество слайдов: 50

Computational lexicology, morphology and syntax Diana Trandabăț 2017 -2018

Dependency parsing • The syntactic parsing of a sentence consists of finding the correct syntactic structure of that sentence in a given formalism/grammar. • Dependency Grammar (DG) and Phrase Structure Grammar (PSG) are two such formalisms.

Phrase Structure Grammar (PSG) • Breaks sentence into constituents (phrases) – Which are then broken into smaller constituents • Describes phrase structure, clause structure – E. g. . NP, PP, VP etc. . • Structures often recursive – The clever tall blue-eyed old … man

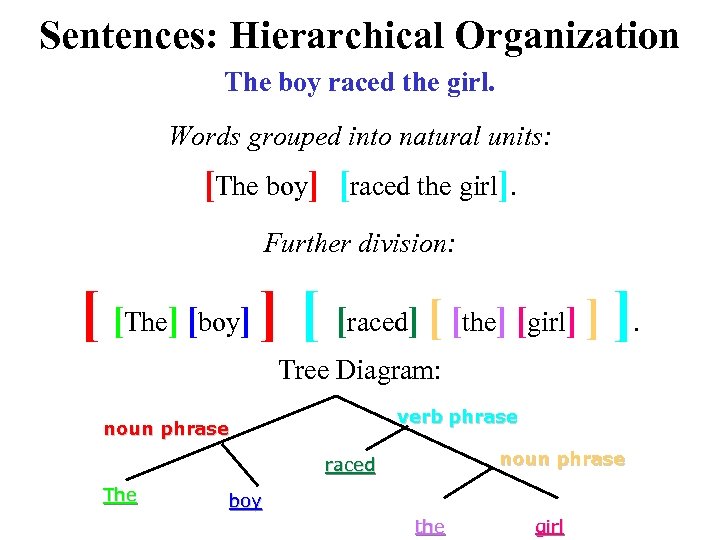

Constituents & Constituency Tests • Constituents = the natural groupings of a sentence • A constituent is a string of words such that there is one node that dominates those words and no other words.

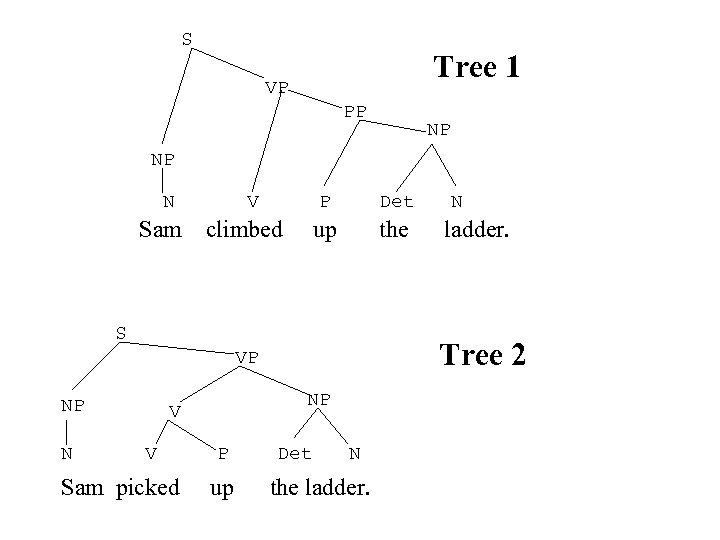

S Tree 1 VP PP NP NP N Sam V P climbed Det up the S N NP V V Sam picked P up ladder. Tree 2 VP NP N Det N the ladder.

Discussion • What are the constituents of Tree 1 and Tree 2? • Which strings of words are constituents in one tree but not the other?

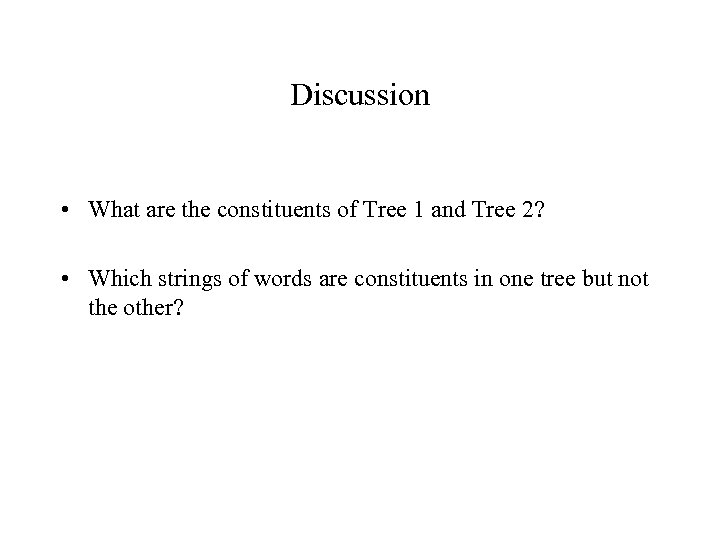

Well-formed constituents – A constituent is well formed if… – 1) a group of words can stand alone • Ex. “What did you find? ” “A puppy” (not “found a”) – 2) pronouns can substitute for natural groups • Ex. “Where did you find a puppy? ” “I found HIM in the park. ” – 3) a group of words can be move. [move unit] • Ex. It was [a puppy] that the child found. • [A puppy] was found by the child.

Sentences: Hierarchical Organization The boy raced the girl. Words grouped into natural units: [The boy] [raced the girl]. Further division: [ [The] [boy] ] [ [raced] [ [the] [girl] ] ]. Tree Diagram: verb phrase noun phrase raced The boy the girl

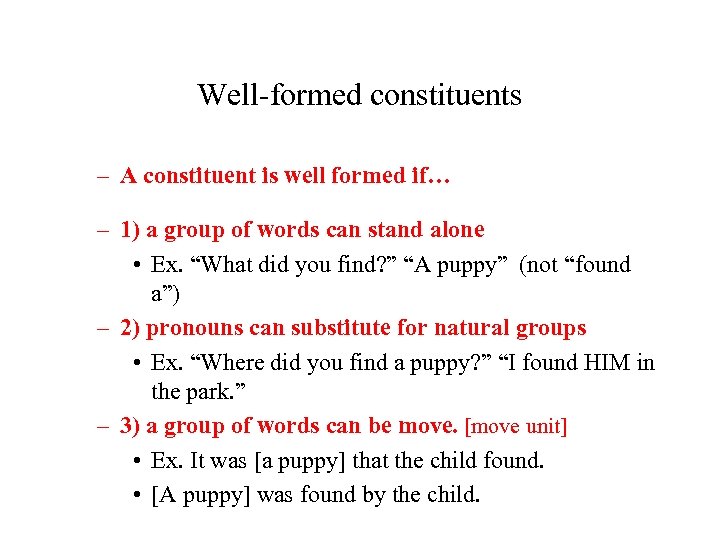

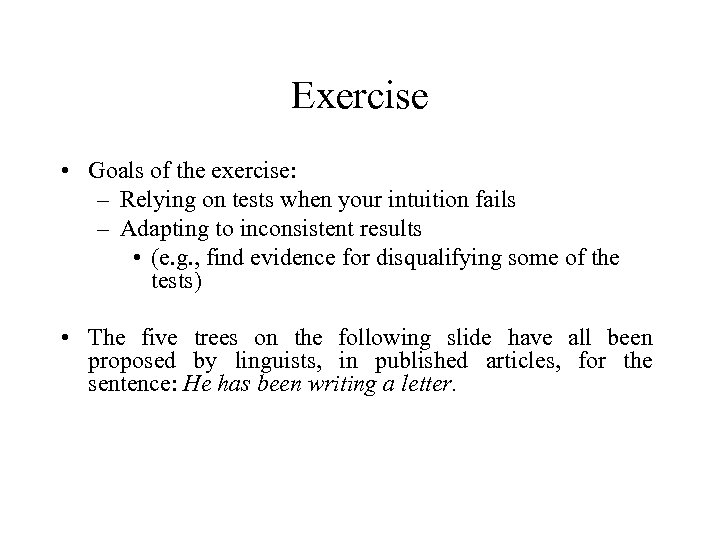

Exercise • Goals of the exercise: – Relying on tests when your intuition fails – Adapting to inconsistent results • (e. g. , find evidence for disqualifying some of the tests) • The five trees on the following slide have all been proposed by linguists, in published articles, for the sentence: He has been writing a letter.

S S TREE 1 VP AUX V He has NP PERF PROG V S V He has TREE 4 VP AUX V VP NP VP V He NP has VP AUX NP He has TREE 5 V NP been writing a letter. VP V been writing a letter. S NP been writing a letter. S TREE 3 V NP NP been writing a letter. VP VP NP PERF PROG V AUX He has TREE 2 NP been writing a letter.

![[reminder] Phrase Structure Tree [reminder] Phrase Structure Tree](https://present5.com/presentation/46446fa82a9c1f004981c34cb87efe68/image-11.jpg)

[reminder] Phrase Structure Tree

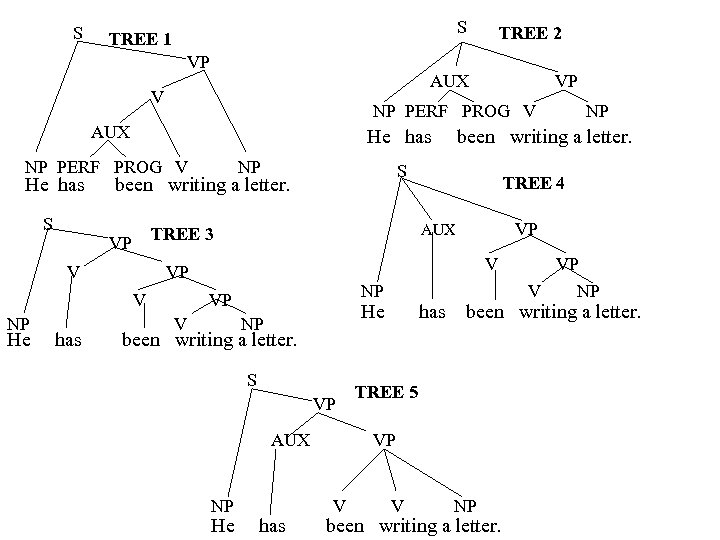

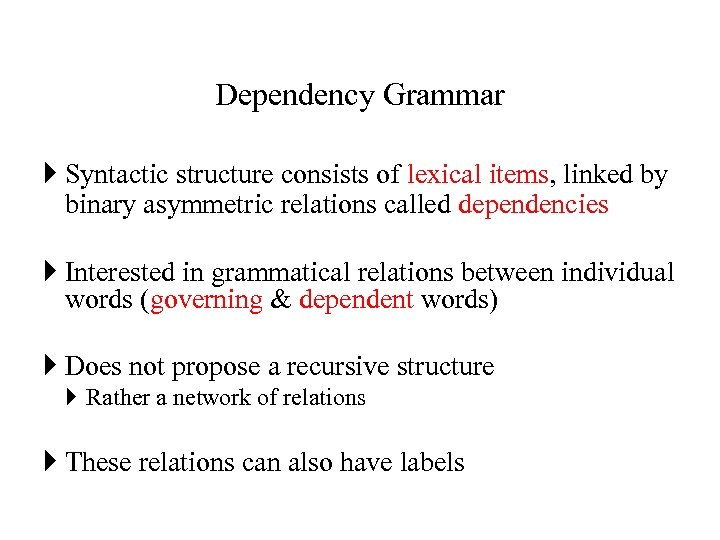

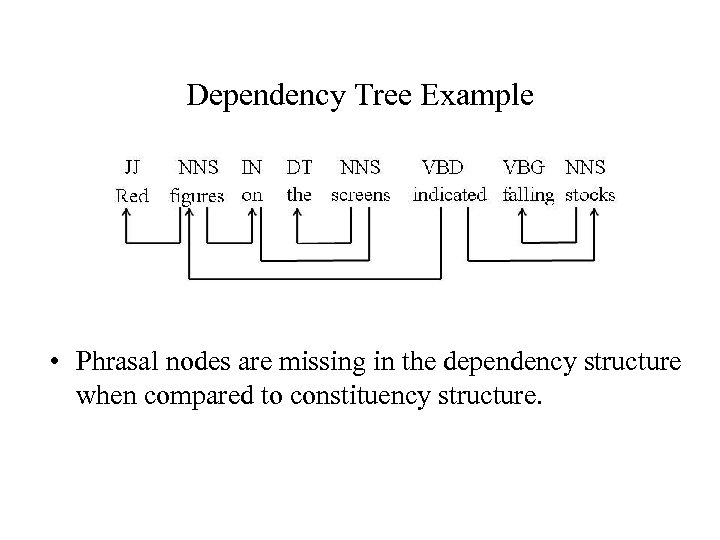

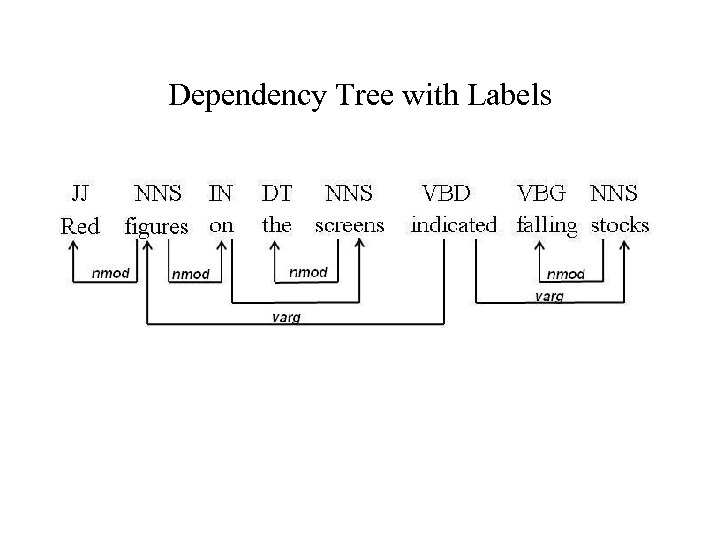

Dependency Grammar Syntactic structure consists of lexical items, linked by binary asymmetric relations called dependencies Interested in grammatical relations between individual words (governing & dependent words) Does not propose a recursive structure Rather a network of relations These relations can also have labels

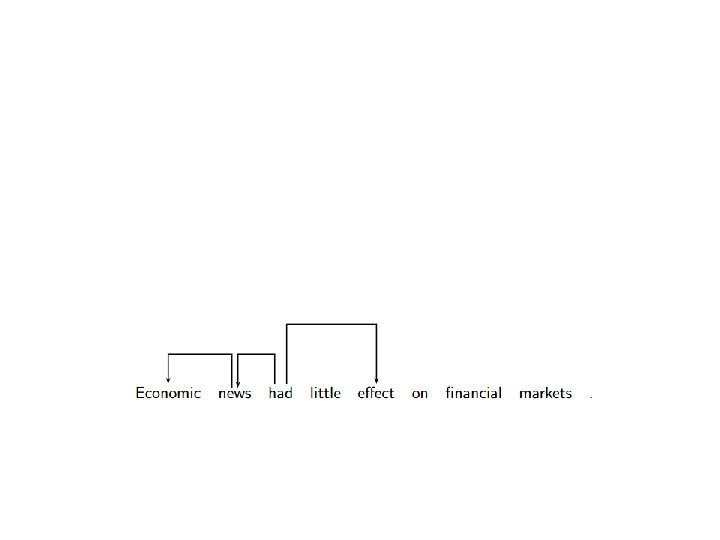

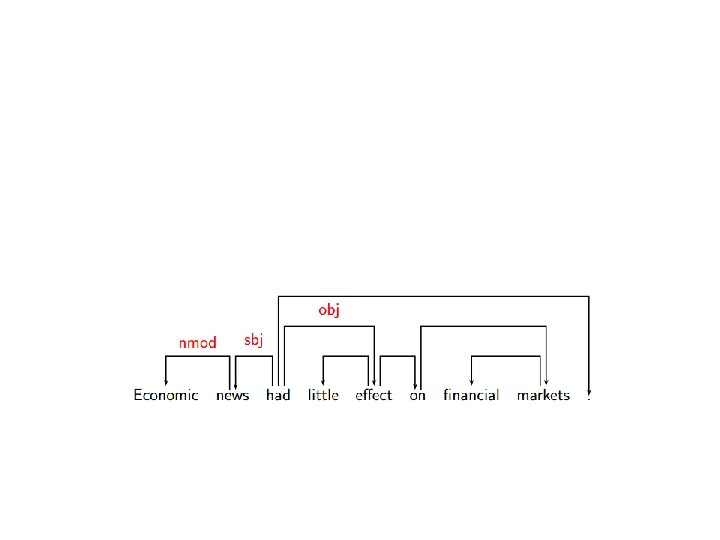

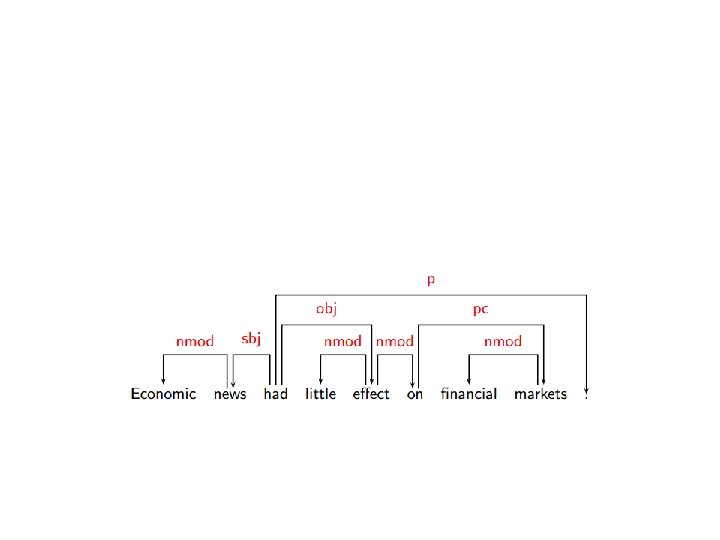

Dependency Tree Example • Phrasal nodes are missing in the dependency structure when compared to constituency structure.

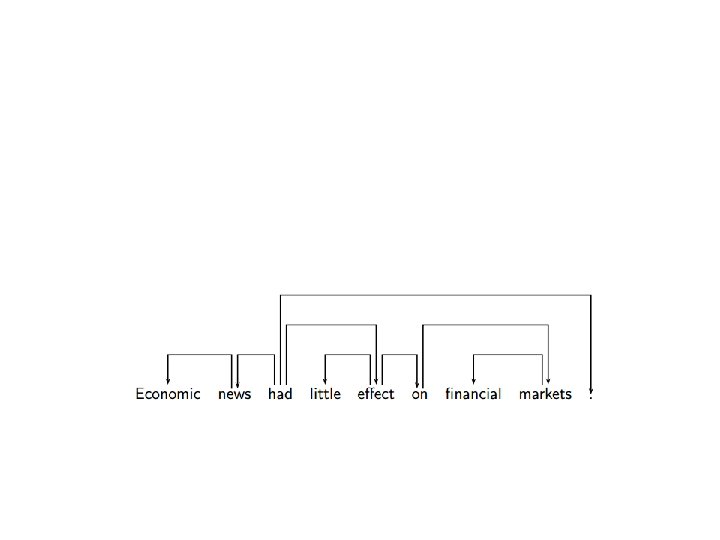

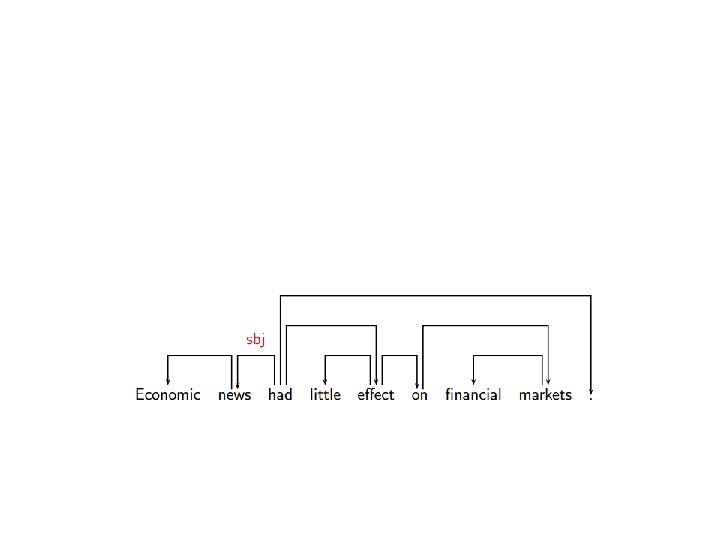

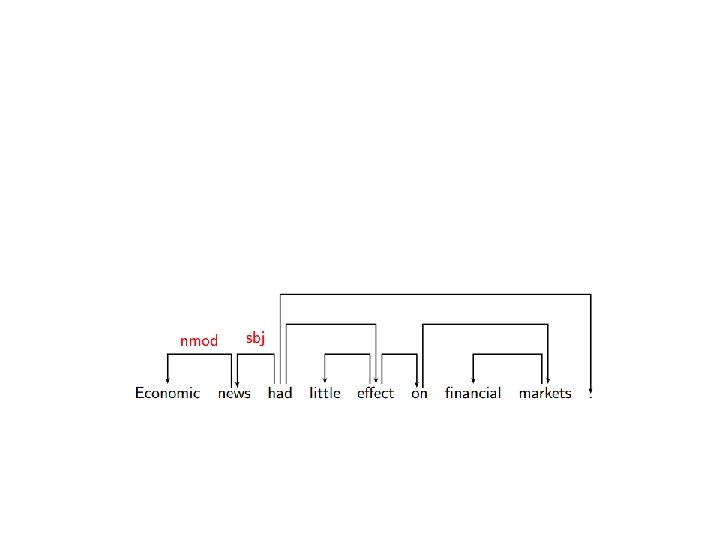

Dependency Tree with Labels

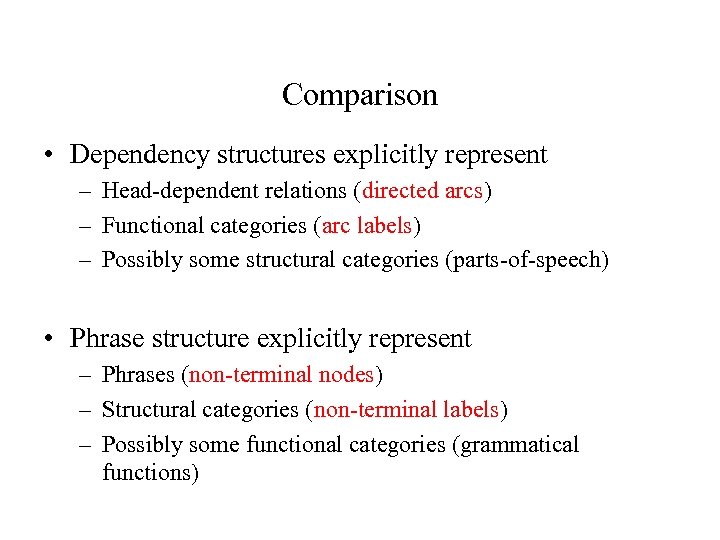

Comparison • Dependency structures explicitly represent – Head-dependent relations (directed arcs) – Functional categories (arc labels) – Possibly some structural categories (parts-of-speech) • Phrase structure explicitly represent – Phrases (non-terminal nodes) – Structural categories (non-terminal labels) – Possibly some functional categories (grammatical functions)

Comparison • Dependency Parsing is more straightforward – Parsing can be reduced to labeling each token pair wi and wj • Direct encoding of predicate-argument structure – Fragments are directly interpretable • Dependency structure independent of word order – Suitable for free word order languages (like Indian languages)

Predicate-argument structure • Predicates (predicational words) designate events, properties of, or relations between, entities. • Linguistic expressions can be dependent or independent. – hat - can be understood outside any circumstance, time, or person, it is an individual. – red - cannot be understood outside its association with an individual: red hat. • In linguistic terms, dependent phenomena are predicates, while individuals are arguments. • Predication - the way individuals instantiate properties, actions, attributes and states.

Predicate-argument structure • Who, what, where, when, why? – Predicates • Verbs: sell, buy, cost, etc. • Nouns: acquisition, etc. – Arguments • • • buyer seller goods money time etc. .

![Examples of types of Arguments (Semantic Roles) • • • • Agent: [Bill]Agent ate Examples of types of Arguments (Semantic Roles) • • • • Agent: [Bill]Agent ate](https://present5.com/presentation/46446fa82a9c1f004981c34cb87efe68/image-28.jpg)

Examples of types of Arguments (Semantic Roles) • • • • Agent: [Bill]Agent ate his soup quietly. Experiencer: The smell of lilies filled [Jennifer's]Experiencer nostrils. Theme: I like [Kim]Theme. Patient: The falling rocks crushed [the car]Patient. Instrument: Jamie cut the ribbon [with a pair of scissors]Instrument. Location: Johnny and Linda played carelessly [in the park]Location. Recipient: I sent [John]Recipient the letter. Source: The rocket was launched [from Central Command]Source. Time: The rocket was launched [yesterday]Time. Beneficiary: I baked [Reggie]Beneficiary a cake. Manner: [With great urgency]Manner, Agatha phoned 911. Purpose: Agatha phoned 911 right away [in order to get some help]P urpose. Cause: [Since Clyde was hungry]Cause, he ate the cake.

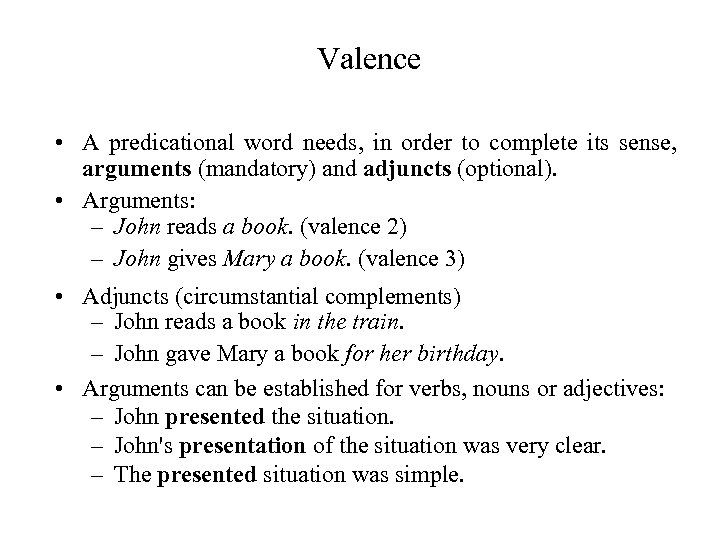

Valence • A predicational word needs, in order to complete its sense, arguments (mandatory) and adjuncts (optional). • Arguments: – John reads a book. (valence 2) – John gives Mary a book. (valence 3) • Adjuncts (circumstantial complements) – John reads a book in the train. – John gave Mary a book for her birthday. • Arguments can be established for verbs, nouns or adjectives: – John presented the situation. – John's presentation of the situation was very clear. – The presented situation was simple.

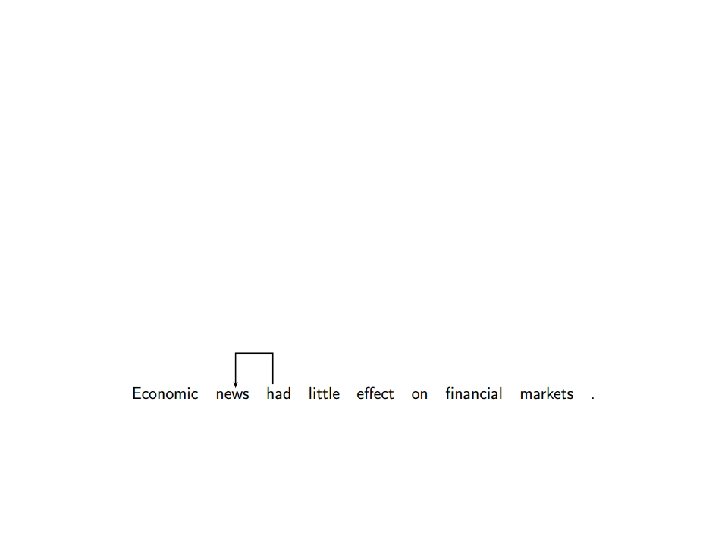

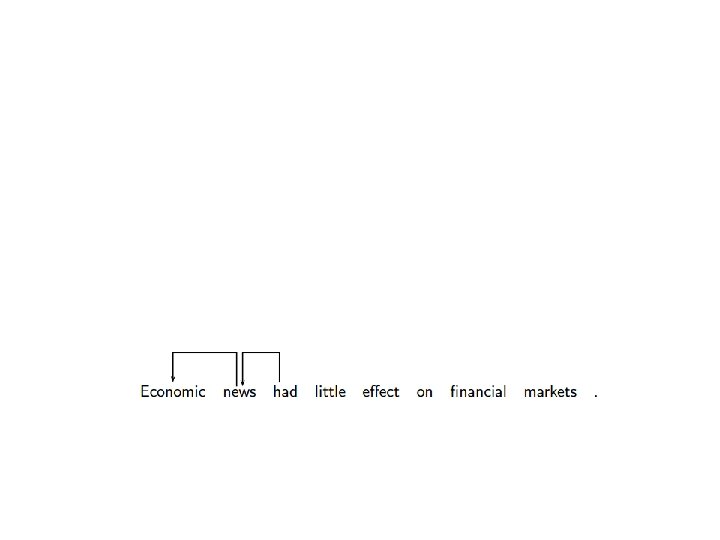

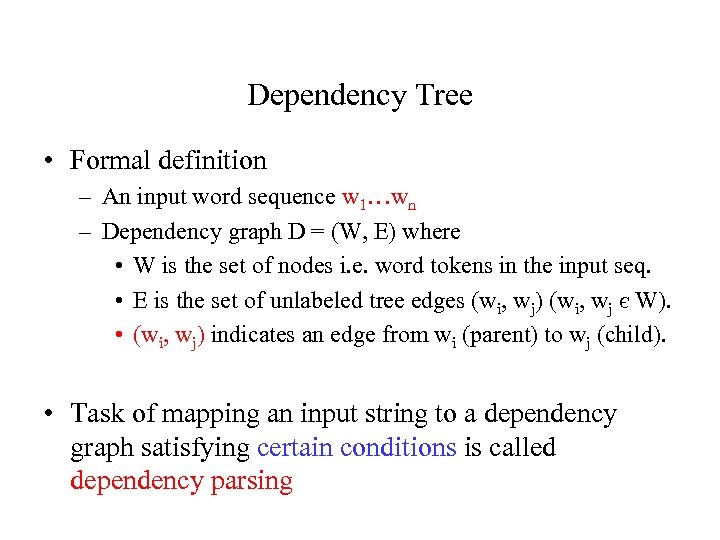

Dependency Tree • Formal definition – An input word sequence w 1…wn – Dependency graph D = (W, E) where • W is the set of nodes i. e. word tokens in the input seq. • E is the set of unlabeled tree edges (wi, wj) (wi, wj є W). • (wi, wj) indicates an edge from wi (parent) to wj (child). • Task of mapping an input string to a dependency graph satisfying certain conditions is called dependency parsing

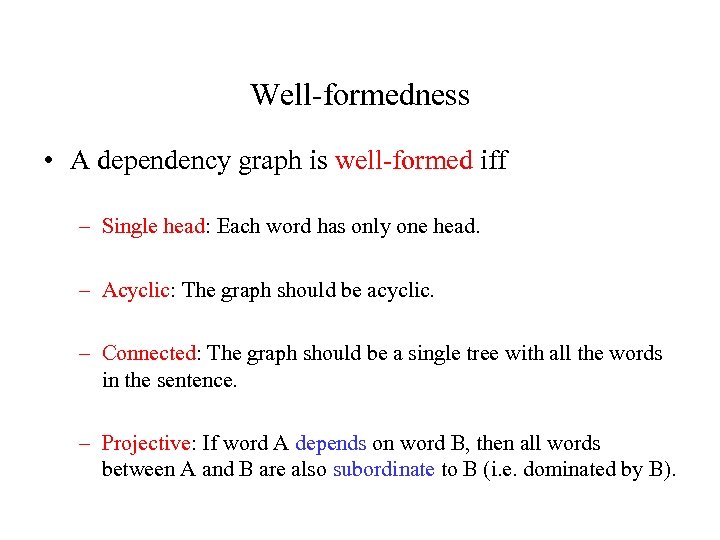

Well-formedness • A dependency graph is well-formed iff – Single head: Each word has only one head. – Acyclic: The graph should be acyclic. – Connected: The graph should be a single tree with all the words in the sentence. – Projective: If word A depends on word B, then all words between A and B are also subordinate to B (i. e. dominated by B).

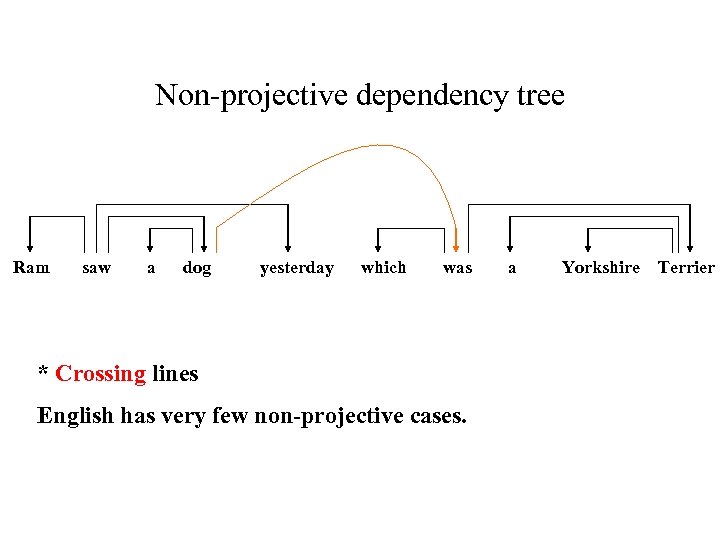

Non-projective dependency tree Ram saw a dog yesterday which was * Crossing lines English has very few non-projective cases. a Yorkshire Terrier

Dependency Parsing • Dependency based parsers can be broadly categorized into – Grammar driven approaches • Parsing done using grammars. – Data driven approaches • Parsing by training on annotated/un-annotated data. • These approaches are not mutually exclusive

Parsing Methods • Three main traditions – Dynamic programming • CKY, Eisner, Mc. Donald – Constraint satisfaction • Maruyama, Foth et al. , Duchier – Deterministic search • Covington, Yamada and Matsumuto, Nivre

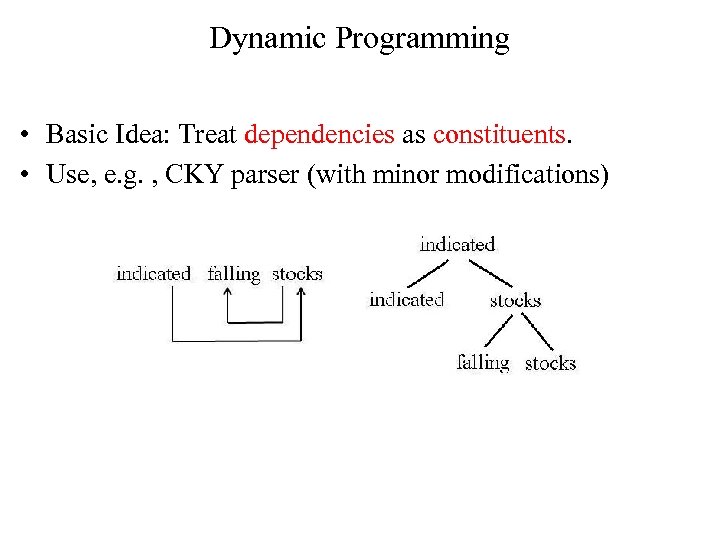

Dynamic Programming • Basic Idea: Treat dependencies as constituents. • Use, e. g. , CKY parser (with minor modifications)

Dependency Chart Parsing • Grammar is regarded as context-free, in which each node is lexicalized • Chart entries are subtrees, i. e. , words with all their left and right dependents • Problem: Different entries for different subtrees spanning a sequence of words with different heads • Time requirement: O(n 5)

![Dynamic Programming Approaches • • Original version [Hays 1964] (grammar driven) Link grammar [Sleator Dynamic Programming Approaches • • Original version [Hays 1964] (grammar driven) Link grammar [Sleator](https://present5.com/presentation/46446fa82a9c1f004981c34cb87efe68/image-37.jpg)

Dynamic Programming Approaches • • Original version [Hays 1964] (grammar driven) Link grammar [Sleator and Temperley 1991] (grammar driven) Bilexical grammar [Eisner 1996] (data driven) Maximum spanning tree [Mc. Donald 2006] (data driven)

Constraint Satisfaction • Uses Constraint Dependency Grammar • Grammar consists of a set of boolean constraints, i. e. logical formulas that describe well-formed trees • A constraint is a logical formula with variables that range over a set of predefined values • Parsing is defined as a constraint satisfaction problem • Constraint satisfaction removes values that contradict constraints

Constraint Satisfaction • Parsing is an eliminative process rather than a constructive one such as in CFG parsing • Constraint satisfaction in general is NP complete • Parser design must ensure practical efficiency • Different approaches – Constraint propagation techniques which ensure local consistency [Maruyama 1990] – Weighted CDG [Foth et al. 2000, Menzel and Schroder 1998]

Weighted Constraint Parsing • Robust parser, which uses soft constraints • Each constraint is assigned a weight between 0. 0 and 1. 0 • Weight 0. 0: hard constraint, can only be violated when no other parse is possible • Constraints assigned manually (or estimated from treebank) • Efficiency: uses a heuristic transformation-based constraint resolution method

Transformation-Based Constraint Resolution • Heuristic search • Very efficient • Idea: first construct arbitrary dependency structure, then try to correct errors • Error correction by transformations • Selection of transformations based on constraints that cause conflicts • Anytime property: parser maintains a complete analysis at anytime → can be stopped at any time and return a complete analysis

Deterministic Parsing • Basic idea: – Derive a single syntactic representation (dependency graph) through a deterministic sequence of elementary parsing actions – Sometimes combined with backtracking or repair • Motivation: – Psycholinguistic modeling – Efficiency – Simplicity

![Shift-Reduce Type Algorithms • Data structures: – Stack [. . . , wi ]S Shift-Reduce Type Algorithms • Data structures: – Stack [. . . , wi ]S](https://present5.com/presentation/46446fa82a9c1f004981c34cb87efe68/image-43.jpg)

Shift-Reduce Type Algorithms • Data structures: – Stack [. . . , wi ]S of partially processed tokens – Queue [wj , . . . ]Q of remaining input tokens • Parsing actions built from atomic actions: – Adding arcs (wi → wj , wi ← wj ) – Stack and queue operations • Left-to-right parsing • Restricted to projective dependency graphs

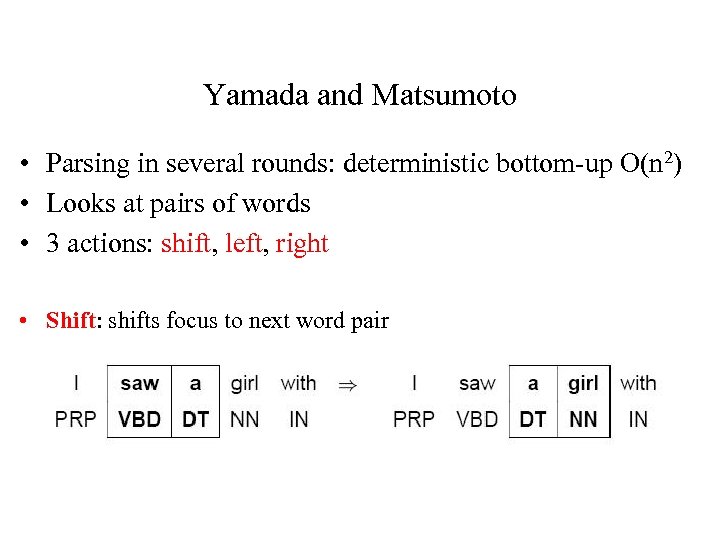

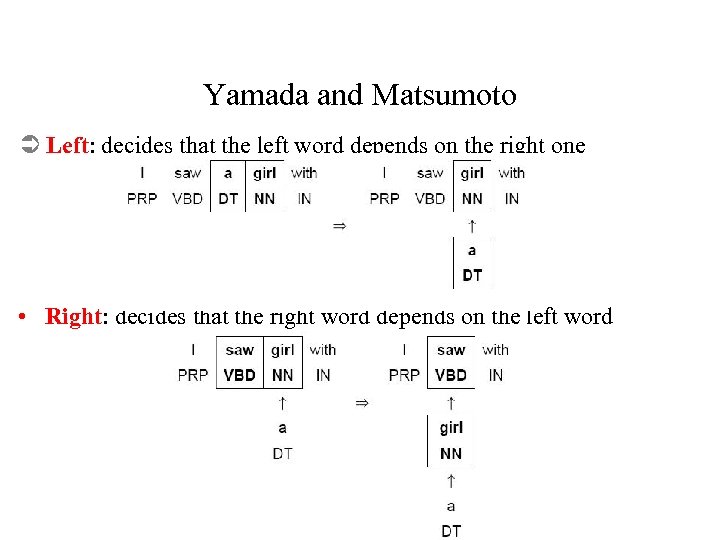

Yamada and Matsumoto • Parsing in several rounds: deterministic bottom-up O(n 2) • Looks at pairs of words • 3 actions: shift, left, right • Shift: shifts focus to next word pair

Yamada and Matsumoto Ü Left: decides that the left word depends on the right one • Right: decides that the right word depends on the left word

Parsing Algorithm • Go through each pair of words – Decide which action to take • If a relation was detected in a pass, do another pass • E. g. the little girl – First pass: relation between little and girl – Second pass: relation between the and girl • Decision on action depends on word pair and context

Classifier-Based Parsing • Data-driven deterministic parsing: – Deterministic parsing requires an oracle. – An oracle can be approximated by a classifier. – A classifier can be trained using treebank data. • Learning algorithms: – Support vector machines (SVM) [Kudo and Matsumoto 2002, Yamada and Matsumoto 2003, Isozaki et al. 2004, Cheng et al. 2004, Nivre et al. 2006] – Memory-based learning (MBL) [Nivre et al. 2004, Nivre and Scholz 2004] – Maximum entropy modeling (Max. Ent) [Cheng et al. 2005]

Feature Models • Learning problem: – Approximate a function from parser states, represented by feature vectors to parser actions, given a training set of gold standard derivations. • Typical features: – Tokens and POS tags of : • Target words • Linear context (neighbors in S and Q) • Structural context (parents, children, siblings in G) – Can not be used in dynamic programming algorithms.

Dependency Parsers for download • MST parser by Ryan Mc. Donald • Malt parser by Joakim Nivre • Stanford parser

Great! See you next time!

46446fa82a9c1f004981c34cb87efe68.ppt