- Number of slides: 86

Cluster analysis and segmentation of customers

What is cluster analysis?
• Cluster analysis, or clustering, is the task of grouping a set of objects so that objects in the same group (called a cluster) are more similar (in some sense) to each other than to objects in other groups (clusters).
• It is not a single algorithm but a collection of algorithms.
• It is a main task of exploratory data mining and Knowledge Discovery in Databases (KDD), and a common technique for statistical data analysis, used in many fields.
• The difference from classification and other techniques is that we do not know the identity of the clusters a priori.

Why clustering?
A very wide range of marketing research applications:
• Customer segmentation, profiling, etc.
• Market segmentation based on demographics, consumer behavior or other dimensions
• Very useful for the design of recommender systems and search engines

Examples
• A chain of radio stores uses cluster analysis to identify three different customer types with varying needs.
• An insurance company uses cluster analysis to classify customers into segments such as the “self-confident customer”, the “price-conscious customer”, etc.
• A producer of copying machines succeeds in classifying industrial customers into “satisfied” and “non-satisfied or quarrelling” customers.

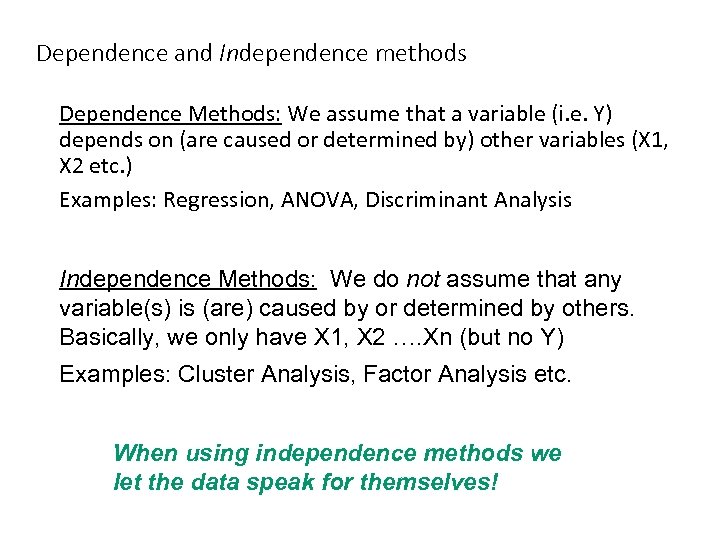

Dependence and independence methods
Dependence methods: we assume that a variable (e.g. Y) depends on (is caused or determined by) other variables (X1, X2, etc.).
Examples: regression, ANOVA, discriminant analysis.
Independence methods: we do not assume that any variable is caused or determined by others. Basically, we only have X1, X2, …, Xn (but no Y).
Examples: cluster analysis, factor analysis, etc.
When using independence methods we let the data speak for themselves!

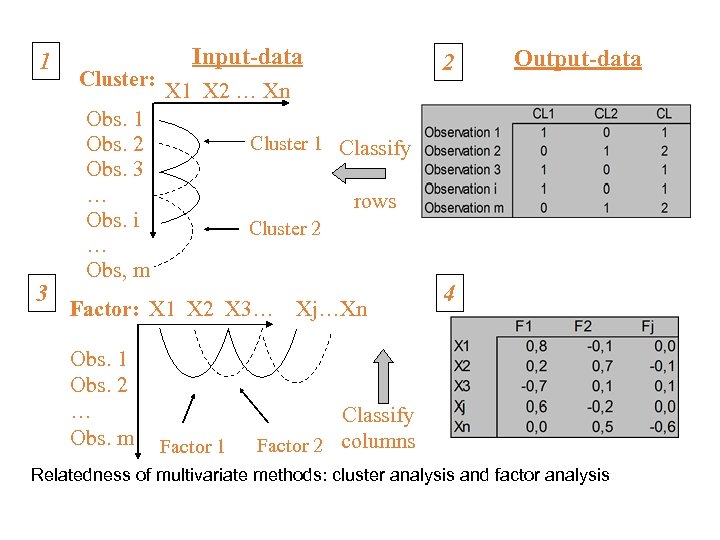

Relatedness of multivariate methods: cluster analysis and factor analysis
Both methods start from the same input data: observations (Obs. 1 … Obs. m) in the rows and variables (X1 … Xn) in the columns.
• Cluster analysis classifies the rows: the observations are grouped into Cluster 1, Cluster 2, …
• Factor analysis classifies the columns: the variables are grouped into Factor 1, Factor 2, …

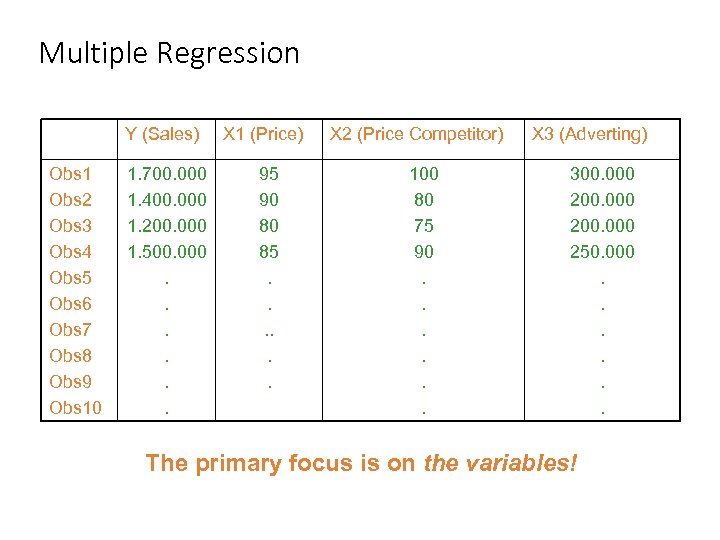

Multiple regression

| Obs. | Y (Sales) | X1 (Price) | X2 (Competitor's price) | X3 (Advertising) |
|---|---|---|---|---|
| 1 | 1,700,000 | 95 | 100 | 300,000 |
| 2 | 1,400,000 | 90 | 80 | 200,000 |
| 3 | 1,200,000 | 80 | 75 | 250,000 |
| 4 | 1,500,000 | 85 | 90 | … |
| … | … | … | … | … |
| 10 | … | … | … | … |

The primary focus is on the variables!

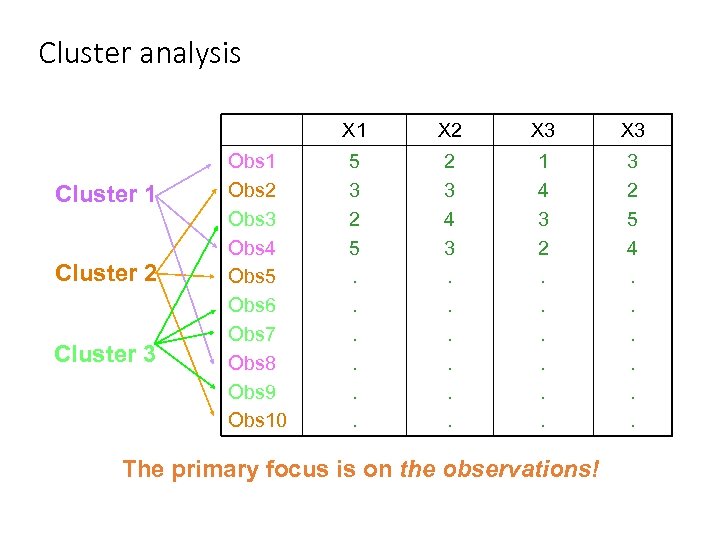

Cluster analysis
(Figure: the same kind of data matrix, with observations Obs 1 … Obs 10 in the rows and variables X1, X2, X3 in the columns; this time the observations are grouped into clusters.)
The primary focus is on the observations!

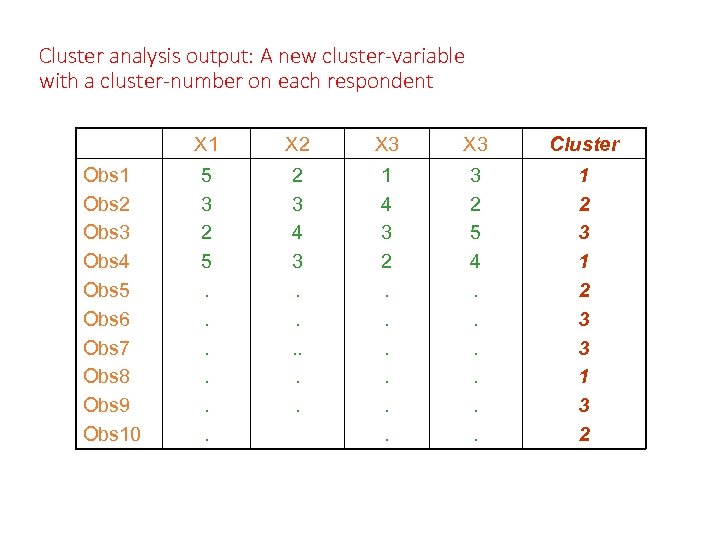

Cluster analysis output: a new cluster variable with a cluster number for each respondent
(Figure: the data matrix of observations by X1, X2, X3 with an added Cluster column holding each observation's cluster number, e.g. 1, 2, 3, 3, 1, 3, 2, …)

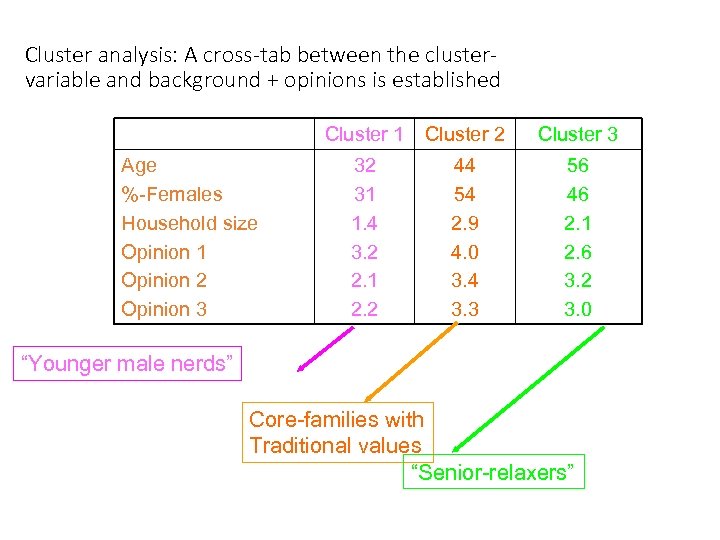

Cluster analysis: a cross-tab between the cluster variable and the background variables and opinions is established

| | Cluster 1 | Cluster 2 | Cluster 3 |
|---|---|---|---|
| Age | 32 | 44 | 56 |
| % females | 31 | 54 | 46 |
| Household size | 1.4 | 2.9 | 2.1 |
| Opinion 1 | 3.2 | 4.0 | 2.6 |
| Opinion 2 | 2.1 | 3.4 | 3.2 |
| Opinion 3 | 2.2 | 3.3 | 3.0 |
| Label | “Younger male nerds” | Core families with traditional values | “Senior relaxers” |
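Each cell of such a table is simply the mean of a background variable within one cluster. A minimal sketch in plain Python, assuming the cluster number is already stored on every respondent record; the field names and the five respondents are made up for illustration and are not the data behind the table:

```python
from collections import defaultdict
from statistics import mean

# Hypothetical respondents: background variables plus the new cluster variable.
respondents = [
    {"cluster": 1, "age": 30, "female": 0, "household": 1},
    {"cluster": 1, "age": 34, "female": 1, "household": 2},
    {"cluster": 2, "age": 45, "female": 1, "household": 3},
    {"cluster": 2, "age": 43, "female": 0, "household": 3},
    {"cluster": 3, "age": 56, "female": 1, "household": 2},
]

def profile(rows, variables):
    """Mean of each variable per cluster: {cluster: {variable: mean}}."""
    groups = defaultdict(list)
    for row in rows:
        groups[row["cluster"]].append(row)
    return {cluster: {v: mean(r[v] for r in members) for v in variables}
            for cluster, members in sorted(groups.items())}

table = profile(respondents, ["age", "female", "household"])
for cluster, means in table.items():
    # The mean of the 0/1 "female" dummy is the share of females in the cluster.
    print(cluster, means)
```

Multiplying the "female" mean by 100 gives the "% females" row; opinion items would be averaged the same way.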

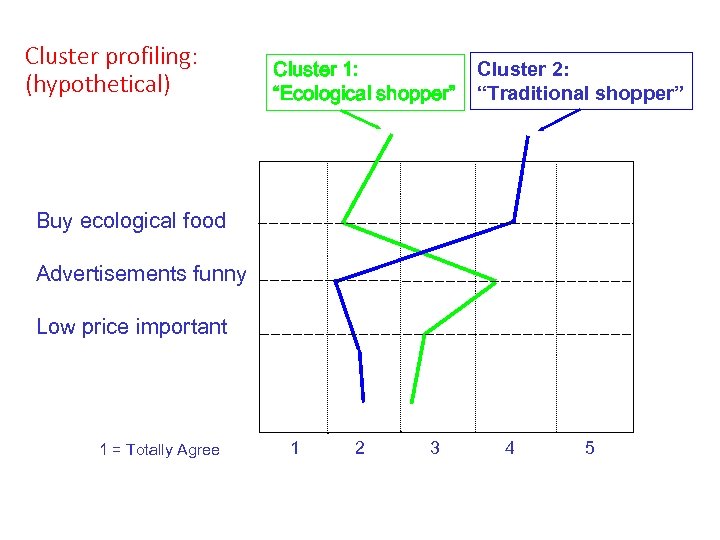

Cluster profiling (hypothetical)
Cluster 1: “Ecological shopper”; Cluster 2: “Traditional shopper”.
(Figure: the two clusters' mean scores on the statements “Buy ecological food”, “Advertisements funny” and “Low price important”, on a scale from 1 = totally agree to 5.)

Governing principle
Maximization of homogeneity within clusters and, simultaneously, maximization of heterogeneity across clusters.
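The two goals are linked: for a fixed data set, the total sum of squares splits exactly into a within-cluster part and a between-cluster part, so making the clusters more homogeneous (a smaller within part) automatically makes them more heterogeneous (a larger between part). A minimal sketch with two made-up clusters; the function names are our own:

```python
from statistics import mean

def centroid(points):
    """Mean of each coordinate."""
    return tuple(mean(coord) for coord in zip(*points))

def sum_of_squares(points, centre):
    """Sum of squared Euclidean distances from the points to a centre."""
    return sum(sum((p - c) ** 2 for p, c in zip(pt, centre)) for pt in points)

def decompose(clusters):
    """Return (total SS, within-cluster SS, between-cluster SS)."""
    all_points = [p for cluster in clusters for p in cluster]
    grand = centroid(all_points)
    total = sum_of_squares(all_points, grand)
    within = sum(sum_of_squares(cl, centroid(cl)) for cl in clusters)
    between = sum(len(cl) * sum_of_squares([centroid(cl)], grand) for cl in clusters)
    return total, within, between

# Two made-up clusters of two points each.
clusters = [[(1, 1), (1, 3)], [(5, 5), (5, 7)]]
total, within, between = decompose(clusters)
print(total, within, between)  # total 36 = within 4 + between 32
```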

Clustering steps
1. Data cleaning and processing
2. Select a similarity measure
3. Select and apply a clustering method
4. Interpret the results

Data cleaning and processing
The data can be numerical, binary, categorical, text, images, etc. A typical marketing data set may contain:
• How recently the customer has purchased from our company (POS data)
• The value of the items purchased (POS data)
• The customer's zip code (derived from loyalty cards, credit cards, manual input at check-outs)
• The median house value for that zip code (secondary data found online)
• Promo variables (e.g. whether the customer redeemed a coupon, rebate or email offer)
• Other demographic and geographic data (age, gender, income, country, etc.)
• Other supplemental survey data, e.g. the customer's favorite TV shows, Internet sites, etc.
Do we need all that? Can we calculate a new figure and add it to our data set?
GIGO: garbage in, garbage out.
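As a small example of calculating a new figure, a raw purchase date can be turned into a recency variable (days since the last purchase) that a distance measure can use directly. A sketch with hypothetical records; the field names and the reference date are illustrative assumptions:

```python
from datetime import date

# Hypothetical customer records; the field names are illustrative only.
customers = [
    {"id": "c1", "last_purchase": date(2024, 3, 1)},
    {"id": "c2", "last_purchase": date(2024, 1, 15)},
]

def add_recency(records, reference_day):
    """Derive a new figure, days since the last purchase, and add it to each record."""
    for record in records:
        record["recency_days"] = (reference_day - record["last_purchase"]).days
    return records

add_recency(customers, reference_day=date(2024, 3, 31))
print([c["recency_days"] for c in customers])  # [30, 76]
```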

Similarity
• The success of clustering depends on how similarity (or its opposite, dissimilarity) is measured.
• We need to establish a distance metric.
• The choice of metric depends on the type of data we have available.

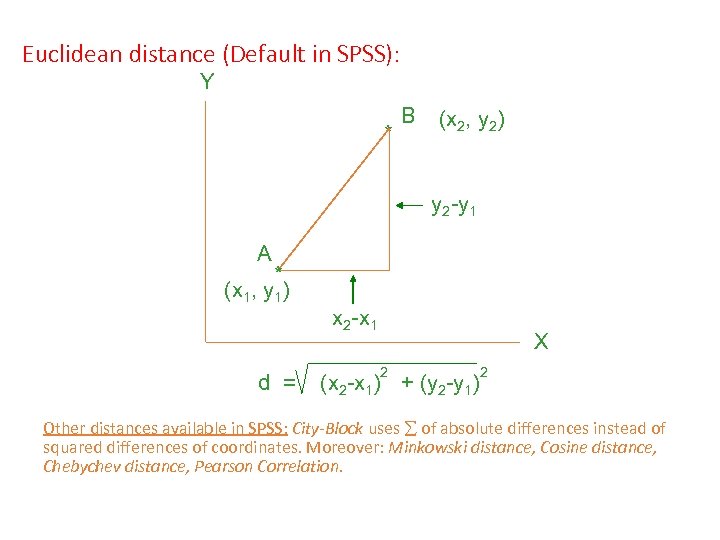

Euclidean distance
For two points $A = (x_1, y_1)$ and $B = (x_2, y_2)$:
$$d = \sqrt{(x_2 - x_1)^2 + (y_2 - y_1)^2}$$
In SPSS hierarchical cluster analysis the default measure is the squared Euclidean distance, $d^2$.
Other measures available in SPSS: city-block (uses absolute instead of squared differences of the coordinates), Minkowski distance, cosine, Chebychev distance and Pearson correlation.
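A minimal sketch of several of these measures in plain Python, applied to the points A = (1, 2) and B = (3, 5) of the worked example. The function names are our own; cosine is written as a similarity (1 = same direction), and Pearson correlation is left out:

```python
import math

def euclidean(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def squared_euclidean(a, b):
    # Euclidean distance without the square root.
    return sum((x - y) ** 2 for x, y in zip(a, b))

def city_block(a, b):
    # Absolute instead of squared coordinate differences.
    return sum(abs(x - y) for x, y in zip(a, b))

def chebychev(a, b):
    # Largest single coordinate difference.
    return max(abs(x - y) for x, y in zip(a, b))

def minkowski(a, b, p):
    # p = 1 gives city-block, p = 2 gives Euclidean.
    return sum(abs(x - y) ** p for x, y in zip(a, b)) ** (1 / p)

def cosine_similarity(a, b):
    # Cosine of the angle between the two vectors.
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))

A, B = (1, 2), (3, 5)
print(round(euclidean(A, B), 2), squared_euclidean(A, B), city_block(A, B), chebychev(A, B))
# 3.61 13 5 3
```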

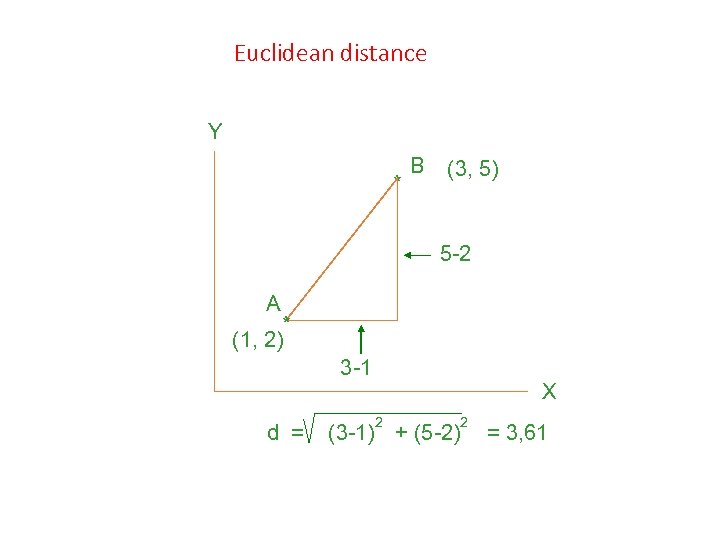

Euclidean distance: example
A = (1, 2), B = (3, 5):
$$d = \sqrt{(3 - 1)^2 + (5 - 2)^2} = \sqrt{13} \approx 3.61$$

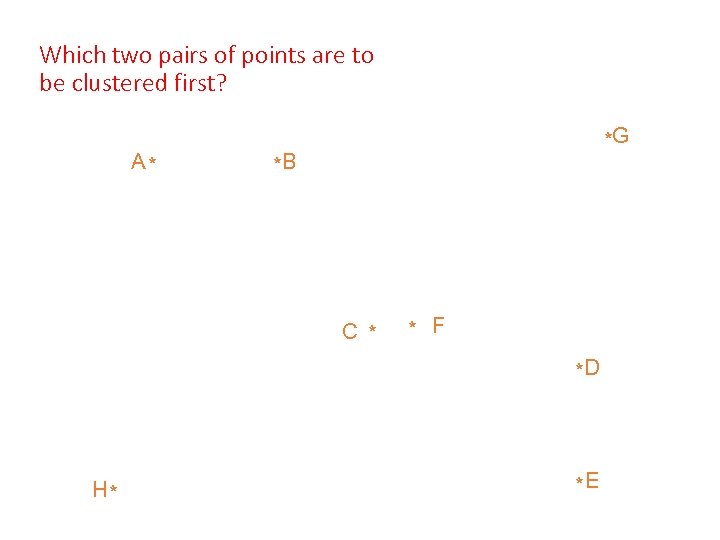

Which two pairs of points are to be clustered first? (Figure: points A, B, C, D, E, F, G and H in a plane.)

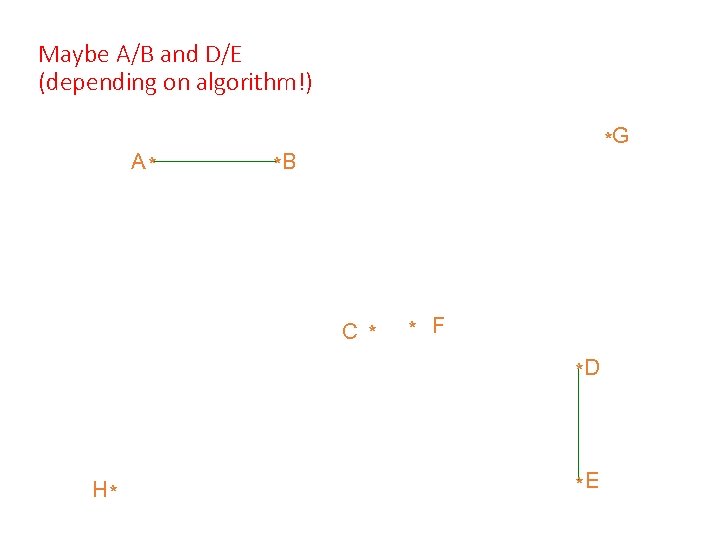

Maybe A/B and D/E (depending on the algorithm!)

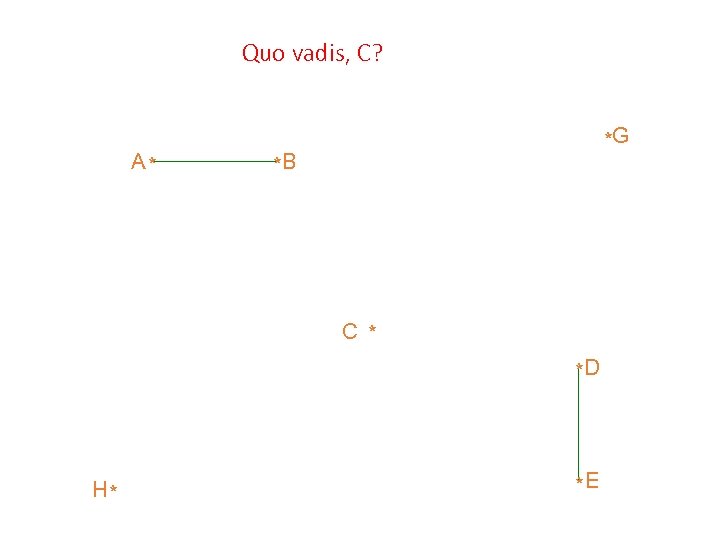

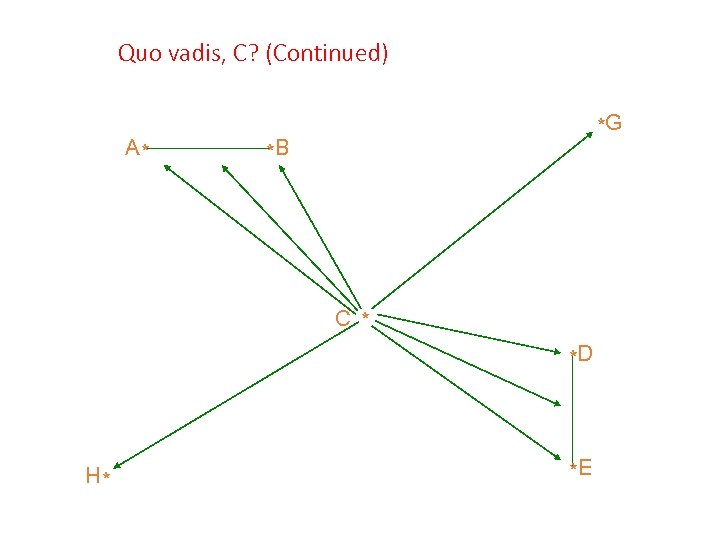

Quo vadis, C? (Figure: points A, G, B, C, D, H and E; which cluster should C join?)

Quo vadis, C? (continued)

How does one decide which cluster a “newcoming” point should join?
By measuring distances from the point to the clusters or to their points:
• “Farthest neighbour” (complete linkage)
• “Nearest neighbour” (single linkage)
• “Neighbourhood centre” (average linkage)
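The three rules differ only in how the distances from the new point to a cluster's members are combined. A minimal sketch with made-up distances (the cluster names K1 and K2 are hypothetical), showing that the rules can send the same point to different clusters:

```python
from statistics import mean

# How the distances from the new point to a cluster's members are combined.
LINKAGE = {
    "complete": max,   # farthest neighbour
    "single": min,     # nearest neighbour
    "average": mean,   # average over all members
}

def choose_cluster(distances, linkage):
    """distances: {cluster: [distance from the new point to each member]}.
    Returns the cluster the point joins and the linkage value per cluster."""
    score = {name: LINKAGE[linkage](ds) for name, ds in distances.items()}
    return min(score, key=score.get), score

# Hypothetical distances from a new point to the members of two clusters.
distances = {"K1": [2.0, 6.0], "K2": [3.0, 4.0]}
for linkage in ("single", "complete", "average"):
    print(linkage, choose_cluster(distances, linkage)[0])
# single K1, complete K2, average K2
```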

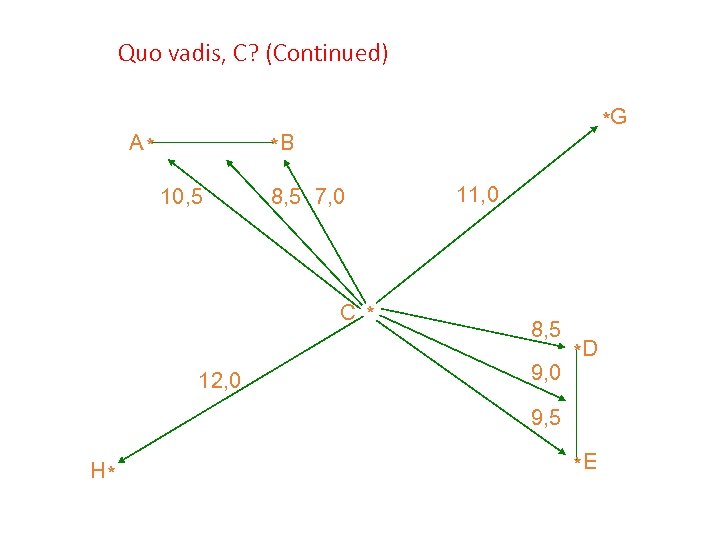

Quo vadis, C? (continued) (Figure: candidate distances from C to the two clusters, ranging from 7.0 to 12.0.)

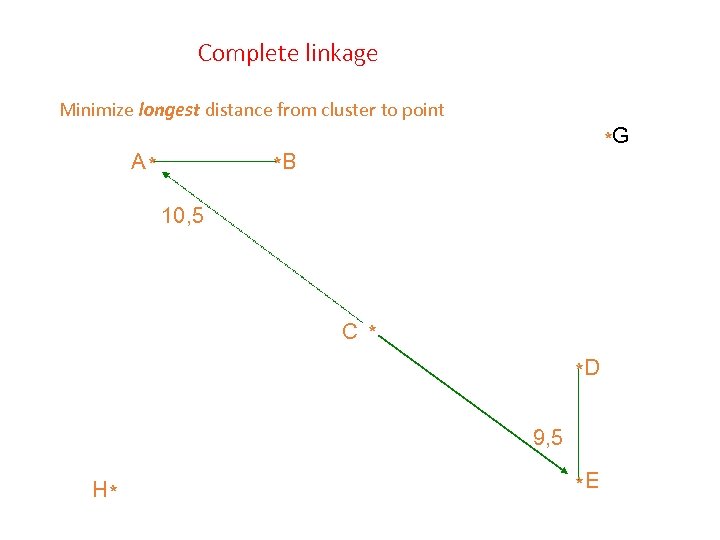

Complete linkage
Minimize the longest distance from cluster to point. (Figure: the longest distances from C to the two clusters are 10.5 and 9.5, so C joins the cluster at 9.5.)

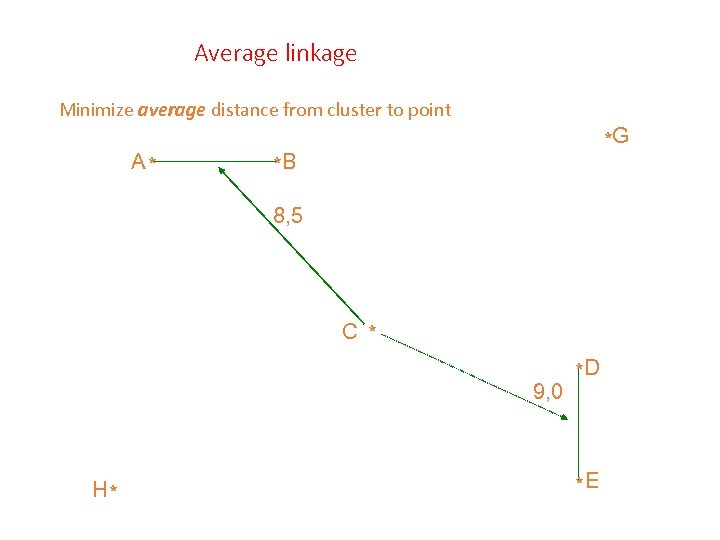

Average linkage
Minimize the average distance from cluster to point. (Figure: the average distances from C to the two clusters are 8.5 and 9.0, so C joins the cluster at 8.5.)

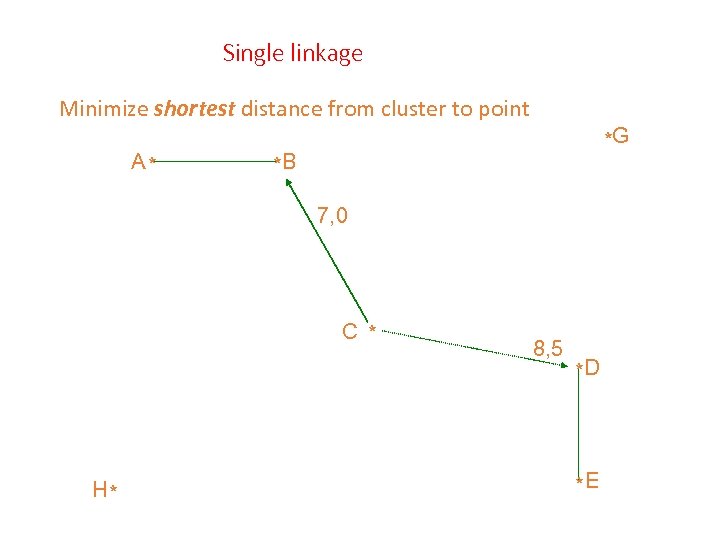

Single linkage
Minimize the shortest distance from cluster to point. (Figure: the shortest distances from C to the two clusters are 7.0 and 8.5, so C joins the cluster at 7.0.)

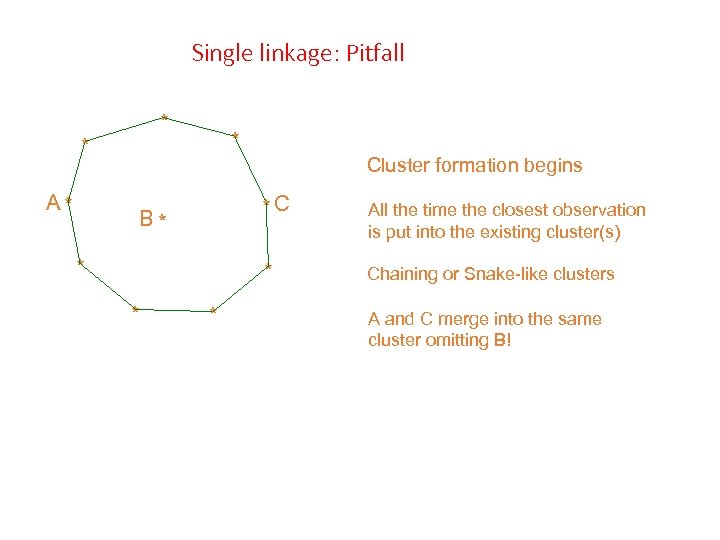

Single linkage: pitfall
Cluster formation begins, and each time the closest observation is added to the existing cluster(s). This can lead to chaining, or snake-like clusters: A and C merge into the same cluster, omitting B!

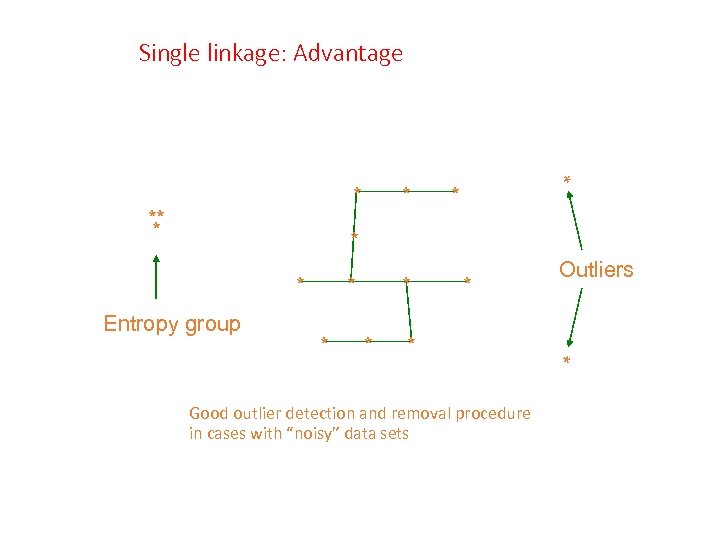

Single linkage: advantage
A good outlier detection and removal procedure in cases with “noisy” data sets. (Figure: an “entropy group” with outliers outside it.)

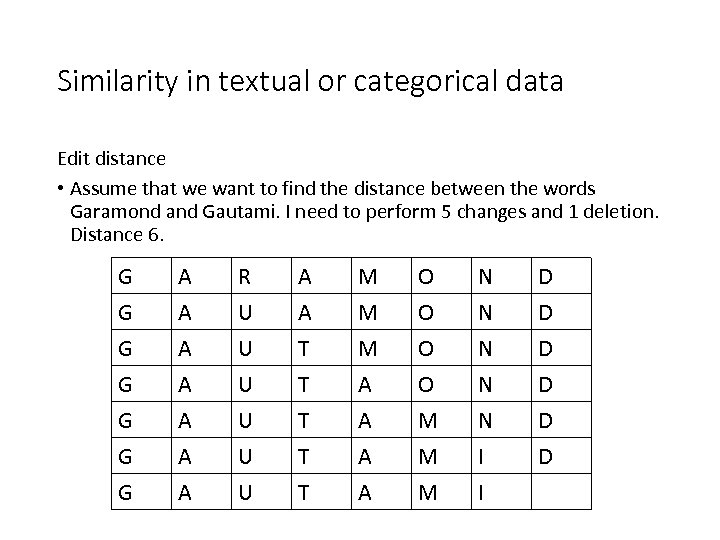

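Similarity in textual or categorical data: edit distance
• Assume that we want to find the distance between the words Garamond and Gautami. Aligning the two words letter by letter takes 5 substitutions and 1 deletion, i.e. 6 edits. The edit (Levenshtein) distance, however, is the minimum number of single-letter insertions, deletions and substitutions, and here 5 suffice (r → u, insert t, o → i, delete n, delete d), so the distance is 5.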
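The minimum can be found with the standard dynamic-programming recurrence, filled row by row. A minimal sketch (the function name is our own):

```python
def edit_distance(s, t):
    """Levenshtein distance: the minimum number of single-character
    insertions, deletions and substitutions that turn s into t."""
    prev = list(range(len(t) + 1))  # distances from "" to each prefix of t
    for i, cs in enumerate(s, start=1):
        curr = [i]  # turning the first i characters of s into "" takes i deletions
        for j, ct in enumerate(t, start=1):
            curr.append(min(
                prev[j] + 1,               # delete cs
                curr[j - 1] + 1,           # insert ct
                prev[j - 1] + (cs != ct),  # substitute (free if the letters match)
            ))
        prev = curr
    return prev[-1]

print(edit_distance("Garamond", "Gautami"))  # 5
```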

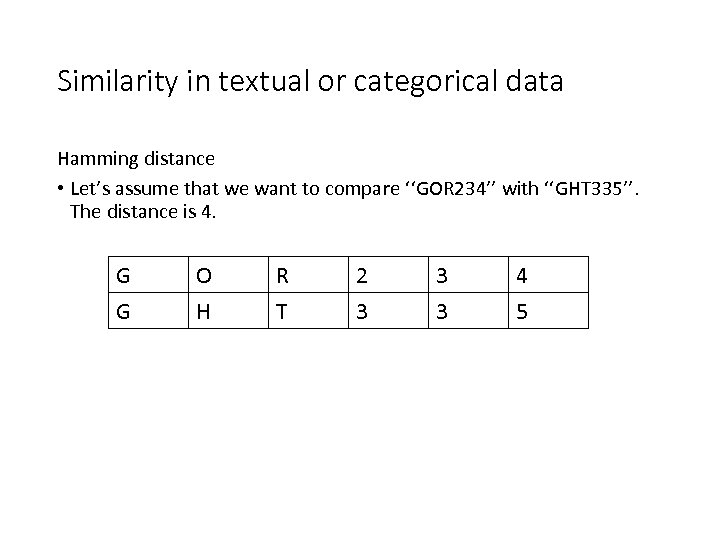

Similarity in textual or categorical data: Hamming distance
• The Hamming distance between two strings of equal length is the number of positions at which they differ. Let's compare “GOR 234” with “GHT 335”: they differ at O/H, R/T, 2/3 and 4/5, so the distance is 4.
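The position-by-position comparison is a one-liner; a minimal sketch (the function name is our own) that rejects strings of unequal length, for which the Hamming distance is not defined:

```python
def hamming(s, t):
    """Number of positions at which two equal-length strings differ."""
    if len(s) != len(t):
        raise ValueError("Hamming distance needs strings of equal length")
    return sum(a != b for a, b in zip(s, t))

print(hamming("GOR 234", "GHT 335"))  # 4
```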

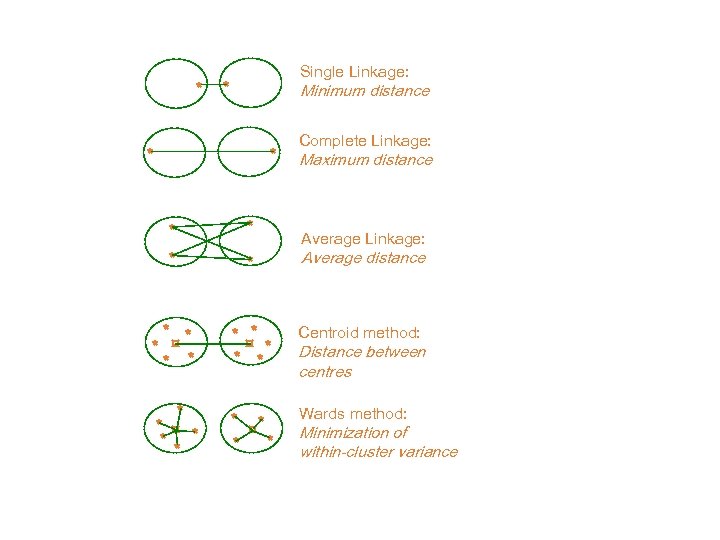

• Single linkage: minimum distance
• Complete linkage: maximum distance
• Average linkage: average distance
• Centroid method: distance between centres
• Ward's method: minimization of within-cluster variance
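The five criteria can be written out for two clusters of points. A minimal sketch with Euclidean distances and two made-up clusters; the function names are our own. For Ward's method it returns the increase in the within-cluster sum of squares that merging would cause, which is the quantity Ward's method minimizes at each step:

```python
import math
from itertools import product

def centroid(cluster):
    """Mean point of a cluster."""
    return tuple(sum(c) / len(cluster) for c in zip(*cluster))

def single(c1, c2):
    """Minimum distance between members of the two clusters."""
    return min(math.dist(a, b) for a, b in product(c1, c2))

def complete(c1, c2):
    """Maximum distance between members of the two clusters."""
    return max(math.dist(a, b) for a, b in product(c1, c2))

def average(c1, c2):
    """Average of all distances between members of the two clusters."""
    return sum(math.dist(a, b) for a, b in product(c1, c2)) / (len(c1) * len(c2))

def centroid_distance(c1, c2):
    """Distance between the two cluster centres."""
    return math.dist(centroid(c1), centroid(c2))

def ward_increase(c1, c2):
    """Increase in the within-cluster sum of squares if c1 and c2 are merged."""
    n1, n2 = len(c1), len(c2)
    return n1 * n2 / (n1 + n2) * math.dist(centroid(c1), centroid(c2)) ** 2

c1 = [(0, 0), (0, 2)]
c2 = [(3, 0), (5, 0)]
for f in (single, complete, average, centroid_distance, ward_increase):
    print(f.__name__, round(f(c1, c2), 3))
```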

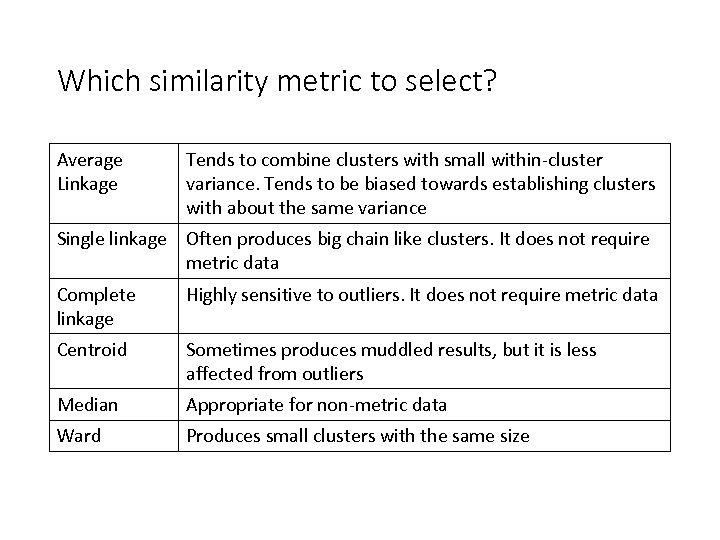

Which clustering method to select?
• Average linkage: tends to combine clusters with small within-cluster variance, and tends to be biased towards establishing clusters with about the same variance.
• Single linkage: often produces big, chain-like clusters. It does not require metric data.
• Complete linkage: highly sensitive to outliers. It does not require metric data.
• Centroid: sometimes produces muddled results, but is less affected by outliers.
• Median: appropriate for non-metric data.
• Ward: produces small clusters of about the same size.

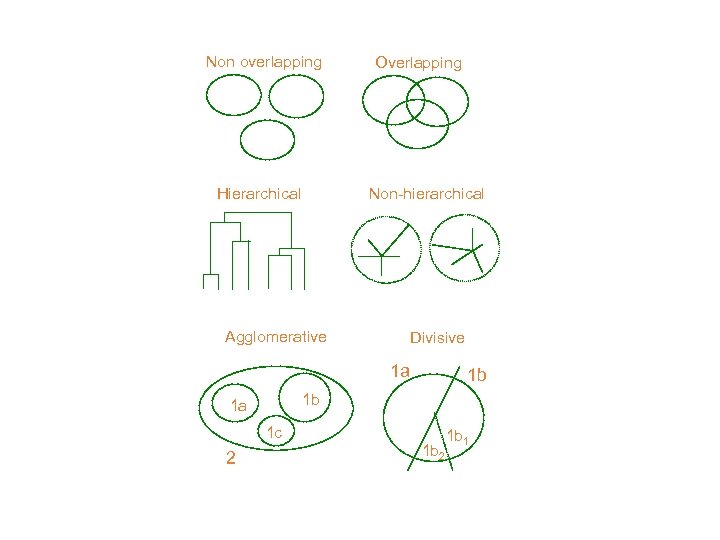

(Figure: classification of clustering methods into non-overlapping vs. overlapping, hierarchical vs. non-hierarchical, and, for hierarchical methods, agglomerative vs. divisive.)

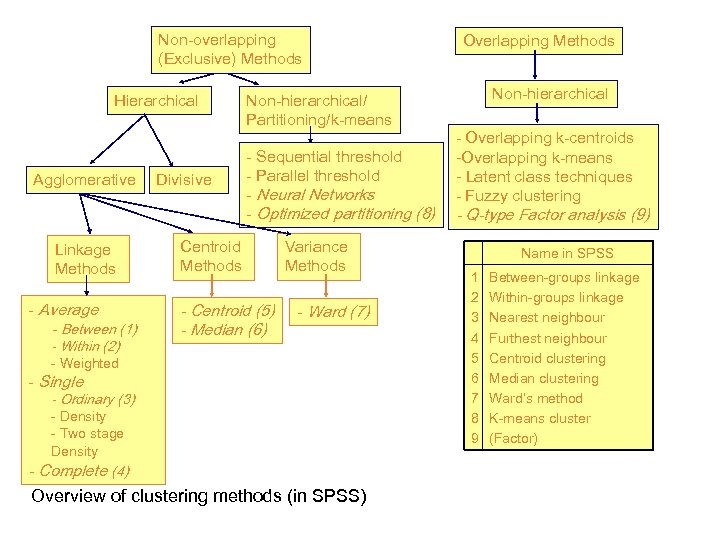

Overview of clustering methods (in SPSS)
• Non-overlapping (exclusive) methods
  - Hierarchical
    - Agglomerative
      - Linkage methods: average (between (1), within (2), weighted); single (ordinary (3), density, two-stage density); complete (4)
      - Centroid methods: centroid (5), median (6)
      - Variance methods: Ward (7)
    - Divisive
  - Non-hierarchical / partitioning / k-means: sequential threshold, parallel threshold, neural networks, optimized partitioning (8)
• Overlapping methods
  - Non-hierarchical: overlapping k-centroids, overlapping k-means, latent class techniques, fuzzy clustering, Q-type factor analysis (9)
Names in SPSS: (1) between-groups linkage, (2) within-groups linkage, (3) nearest neighbour, (4) furthest neighbour, (5) centroid clustering, (6) median clustering, (7) Ward's method, (8) k-means cluster, (9) factor.

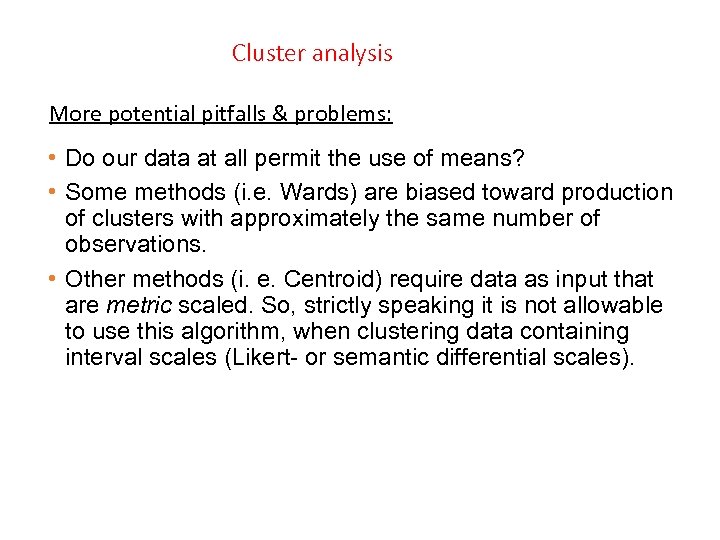

Cluster analysis: more potential pitfalls and problems
• Do our data permit the use of means at all?
• Some methods (e.g. Ward's) are biased toward producing clusters with approximately the same number of observations.
• Other methods (e.g. centroid) require metric-scaled input data. So, strictly speaking, it is not allowable to use this algorithm when clustering data from rating scales (Likert or semantic differential scales), which are ordinal rather than truly interval-scaled.

Hierarchical Clustering Example I

Steps
1. Let's assume that each point is a cluster.
2. We find the two closest clusters and merge them.
3. We recalculate the distances between the new cluster and the others (there are many alternatives here!).
4. We continue until all points belong to one cluster.
5. We draw the dendrogram and select where to cut.
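These steps translate almost line by line into code. A naive sketch in plain Python, adequate for a handful of points: for step 3 it simply recomputes cluster distances from the members each round, one of the "many alternatives". It supports single, complete and average linkage; the function names are our own. It is run here on the five managers of Example III (risk aversion, propensity to internationalize):

```python
import math
from itertools import combinations

# How the distances between the members of two clusters are combined.
AGGREGATE = {
    "single": min,                            # nearest neighbour
    "complete": max,                          # farthest neighbour
    "average": lambda ds: sum(ds) / len(ds),  # between-groups average
}

def linkage_distance(a, b, points, linkage):
    """Distance between clusters a and b (sets of point names)."""
    return AGGREGATE[linkage]([math.dist(points[p], points[q]) for p in a for q in b])

def agglomerate(points, linkage="single"):
    """Return the merge history as [(cluster_a, cluster_b, distance), ...]."""
    clusters = [frozenset([name]) for name in points]  # step 1: each point is a cluster
    history = []
    while len(clusters) > 1:                            # step 4: until one cluster is left
        # Step 2: the closest pair of clusters under the chosen linkage.
        a, b = min(combinations(clusters, 2),
                   key=lambda pair: linkage_distance(*pair, points, linkage))
        history.append((a, b, linkage_distance(a, b, points, linkage)))
        # Step 3: merge; distances to the new cluster are recomputed from its members.
        clusters = [c for c in clusters if c not in (a, b)] + [a | b]
    return history

def cut(history, points, k):
    """Step 5: replay the merges until k clusters remain."""
    clusters = [frozenset([name]) for name in points]
    for a, b, _ in history[: len(points) - k]:
        clusters = [c for c in clusters if c not in (a, b)] + [a | b]
    return sorted(sorted(c) for c in clusters)

managers = {"A": (2, 6), "B": (3, 9), "C": (7, 1), "D": (8, 3), "E": (2, 2)}
for method in ("single", "complete", "average"):
    print(method, cut(agglomerate(managers, method), managers, 2))
# single   [['A', 'B', 'E'], ['C', 'D']]
# complete [['A', 'B'], ['C', 'D', 'E']]
# average  [['A', 'B', 'E'], ['C', 'D']]
```

The third element of each history entry is the merge height that a dendrogram would plot.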

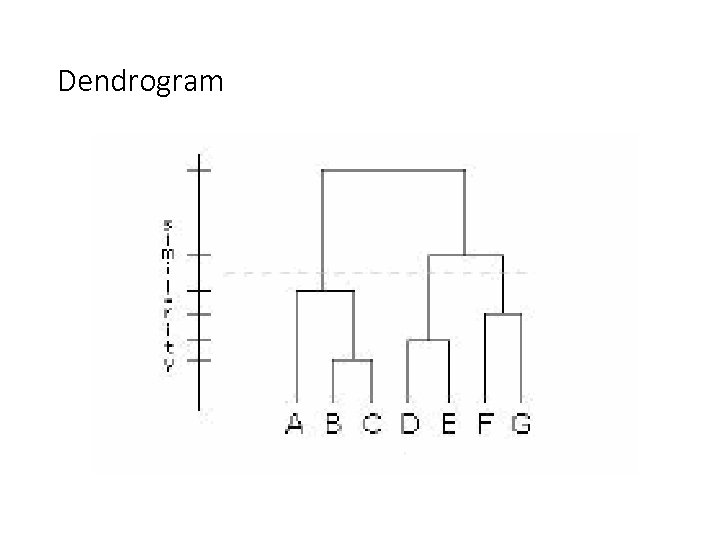

Dendrogram

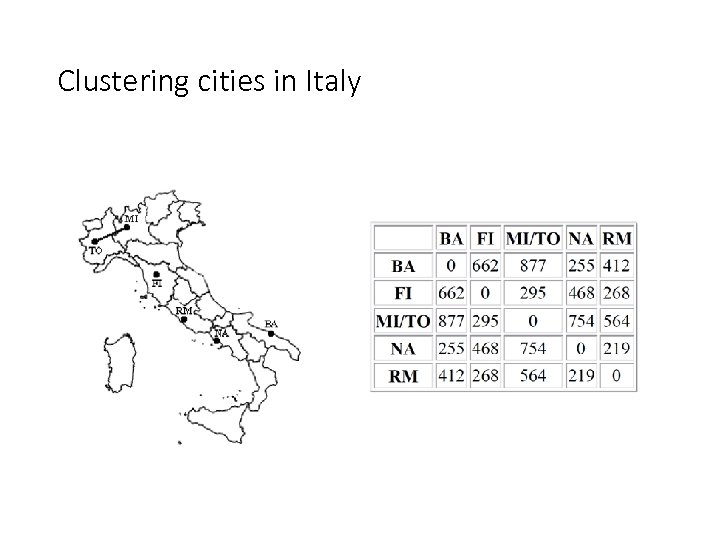

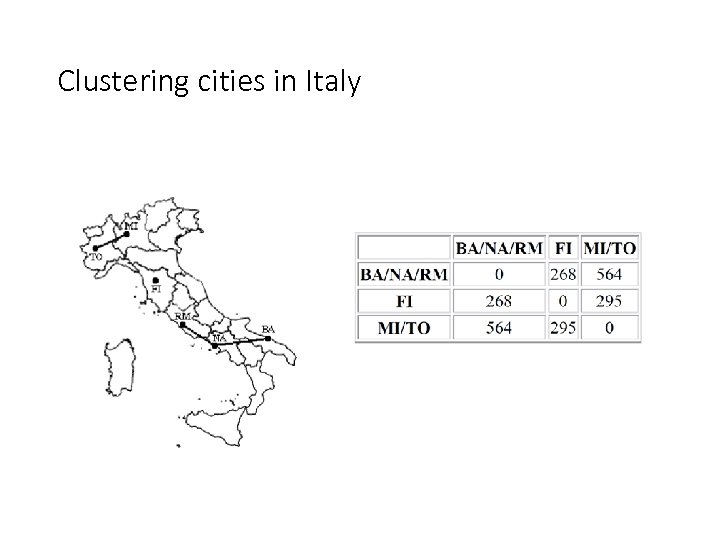

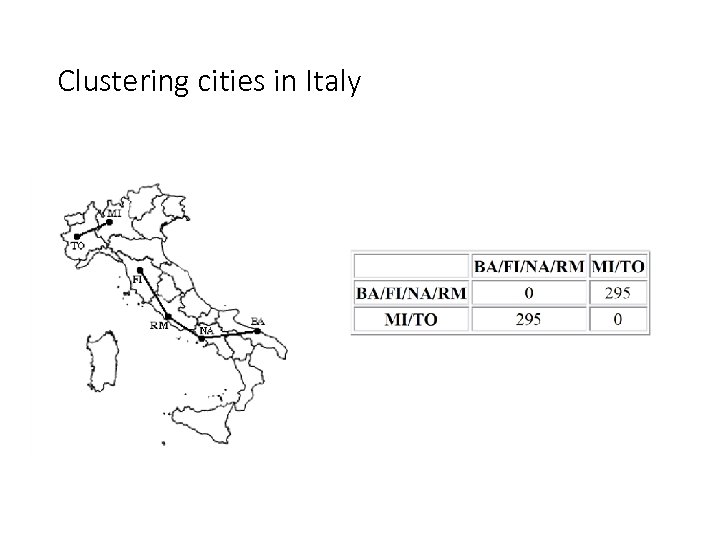

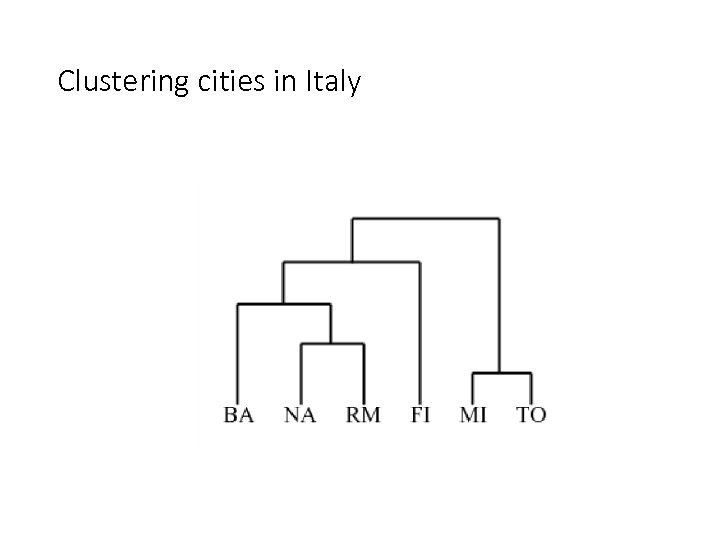

Clustering cities in Italy

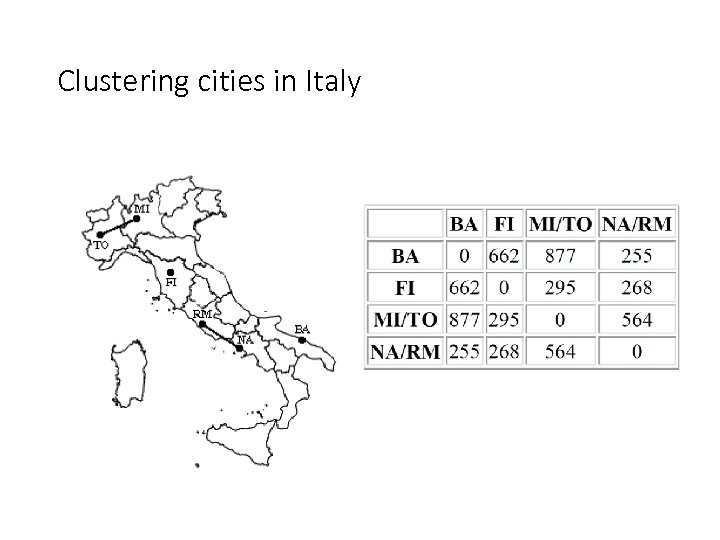

Hierarchical Clustering Example II

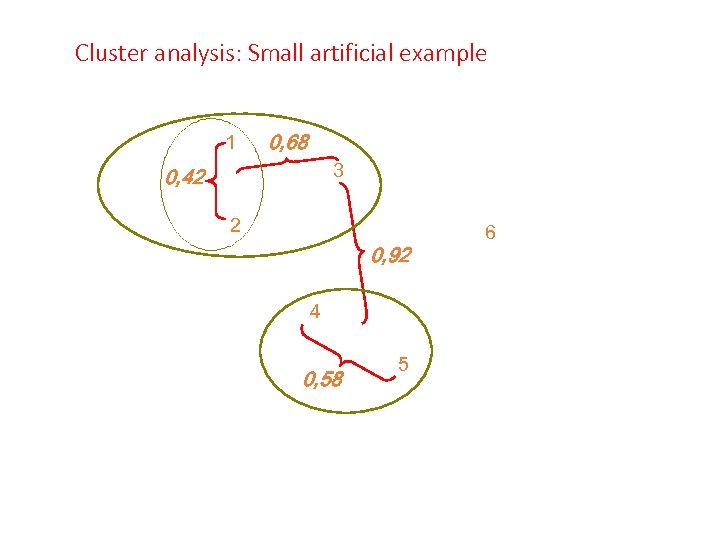

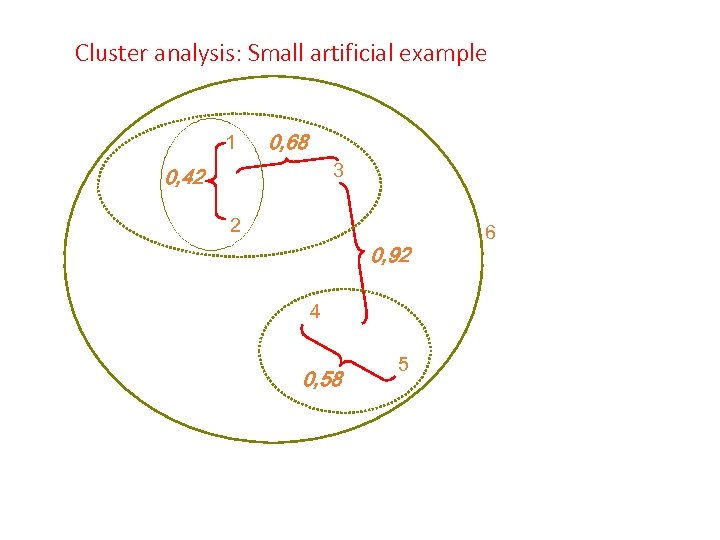

Cluster analysis: small artificial example
(Figure: six observations, numbered 1 to 6, with the distances 0.68, 0.42, 0.92 and 0.58 marked between them.)

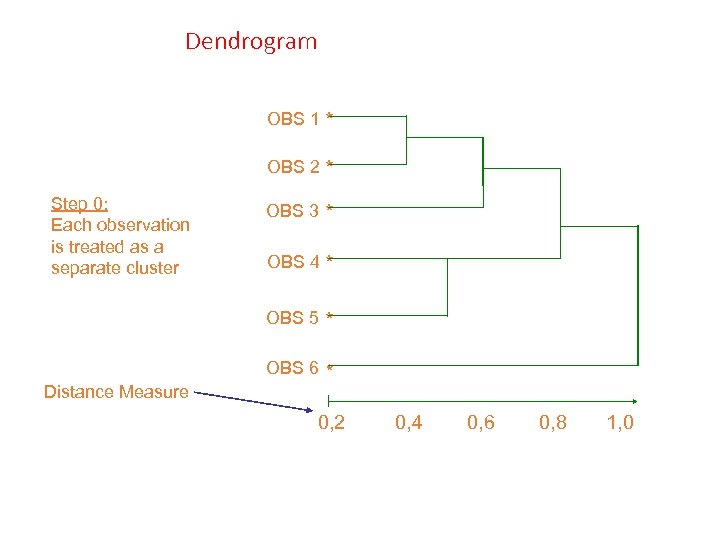

Dendrogram
Step 0: each observation (OBS 1 to OBS 6) is treated as a separate cluster.
(Figure axis: distance measure, from 0.2 to 1.0.)

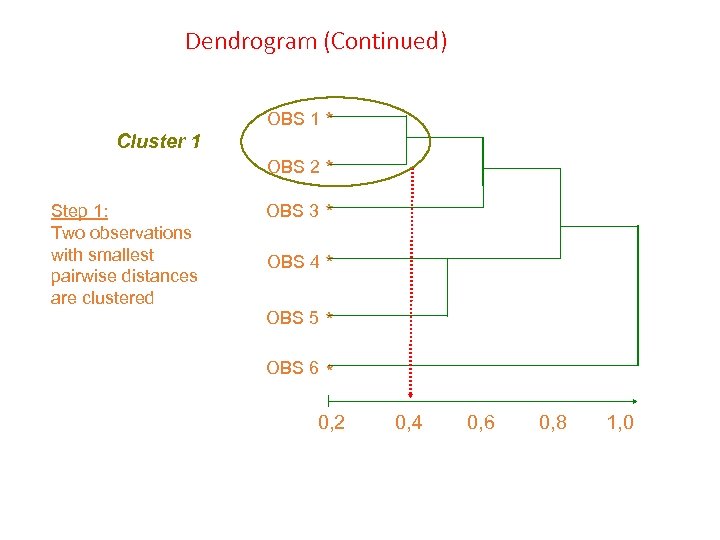

Dendrogram (continued)
Step 1: the two observations with the smallest pairwise distance are clustered (Cluster 1).

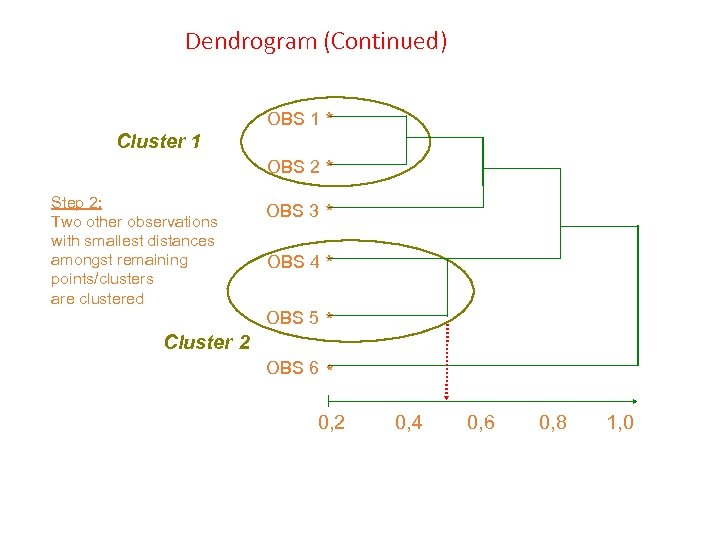

Dendrogram (continued)
Step 2: the two other observations with the smallest distance among the remaining points/clusters are clustered (Cluster 2).

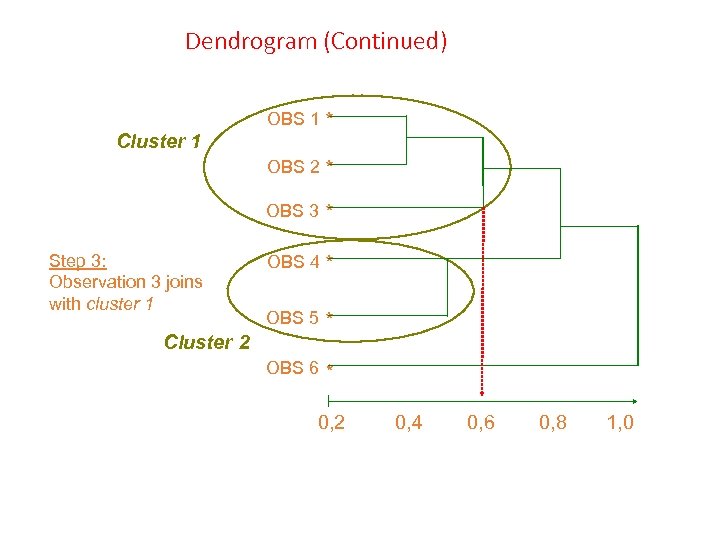

Dendrogram (continued)
Step 3: observation 3 joins Cluster 1.

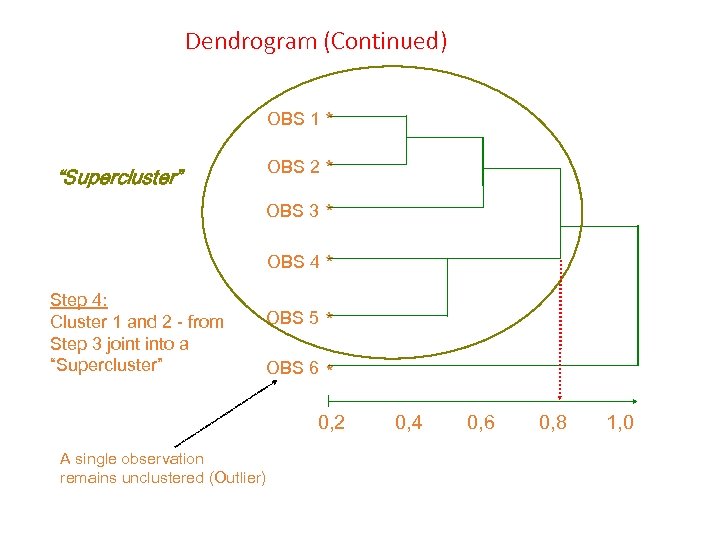

Dendrogram (continued)
Step 4: Clusters 1 and 2 from step 3 are joined into a “supercluster”.
A single observation remains unclustered (outlier).

Hierarchical Clustering Example III

Market research for exporting activities

| Manager | Risk aversion (X1) | Propensity to internationalize (X2) |
|---|---|---|
| A | 2 | 6 |
| B | 3 | 9 |
| C | 7 | 1 |
| D | 8 | 3 |
| E | 2 | 2 |
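The distances shown in the SPSS figures for this example can be checked directly. The sketch below, with helper names of our own, computes all pairwise Euclidean distances and, for manager E, the distance to the clusters {A, B} and {C, D} under single, complete, average (between-groups) and centroid linkage:

```python
import math
from itertools import combinations

# Managers from the table: (risk aversion X1, propensity to internationalize X2).
managers = {"A": (2, 6), "B": (3, 9), "C": (7, 1), "D": (8, 3), "E": (2, 2)}

# All pairwise Euclidean distances, keyed "AB", "AC", ...
pairwise = {p + q: math.dist(managers[p], managers[q])
            for p, q in combinations(sorted(managers), 2)}

def to_members(point, cluster):
    """Distances from one manager to each member of a cluster (a string of names)."""
    return [math.dist(managers[point], managers[m]) for m in cluster]

def centroid(cluster):
    xs, ys = zip(*(managers[m] for m in cluster))
    return (sum(xs) / len(xs), sum(ys) / len(ys))

for cluster in ("AB", "CD"):
    d = to_members("E", cluster)
    print(cluster,
          "single=%.3f" % min(d),
          "complete=%.3f" % max(d),
          "average=%.3f" % (sum(d) / len(d)),
          "centroid=%.3f" % math.dist(managers["E"], centroid(cluster)))
# AB single=4.000 complete=7.071 average=5.536 centroid=5.523
# CD single=5.099 complete=6.083 average=5.591 centroid=5.500
```

E is closer to {A, B} under single and average linkage but closer to {C, D} under complete and centroid linkage, which is why E ends up in different clusters depending on the method.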

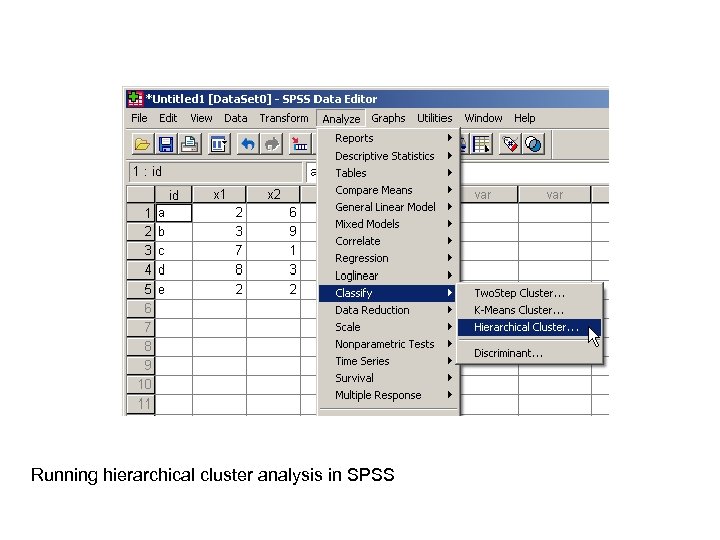

Running hierarchical cluster analysis in SPSS

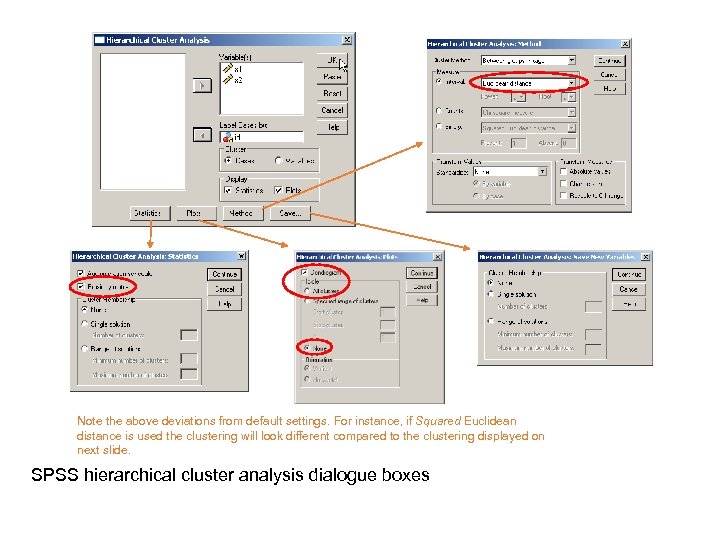

SPSS hierarchical cluster analysis dialogue boxes
Note the deviations from the default settings shown above. For instance, if the squared Euclidean distance is used, the clustering will look different from the clustering displayed on the next slide.

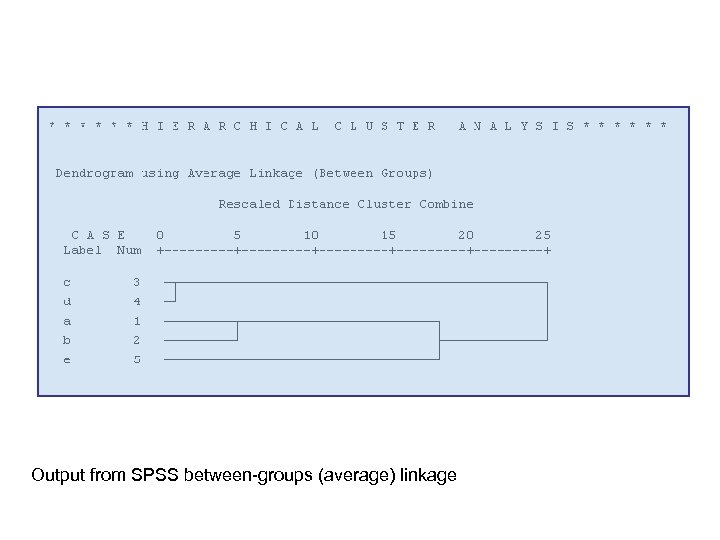

Output from SPSS between-groups (average) linkage

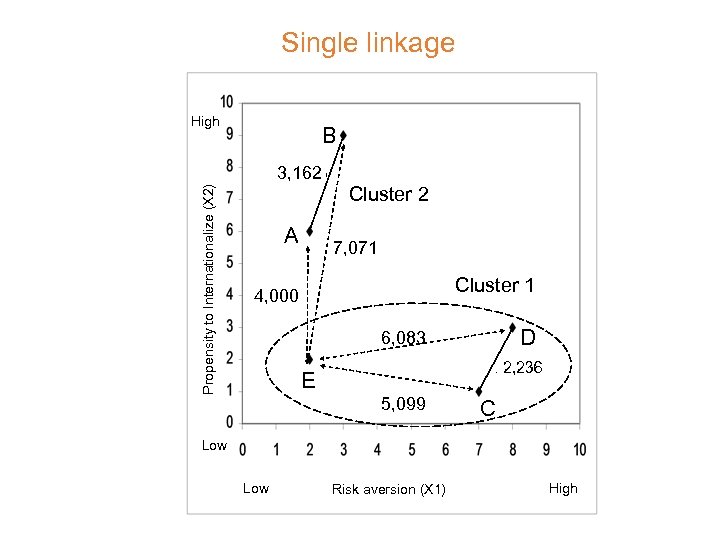

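Single linkage
(Figure: managers A to E plotted by risk aversion (X1, low to high) against propensity to internationalize (X2, low to high), with the distances 2.236 (C–D), 3.162 (A–B), 4.000 (A–E), 5.099 (C–E), 6.083 (D–E) and 7.071.)
Two-cluster solution: {A, B, E} and {C, D}; E joins A and B because its nearest neighbour, A, is only 4.000 away.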

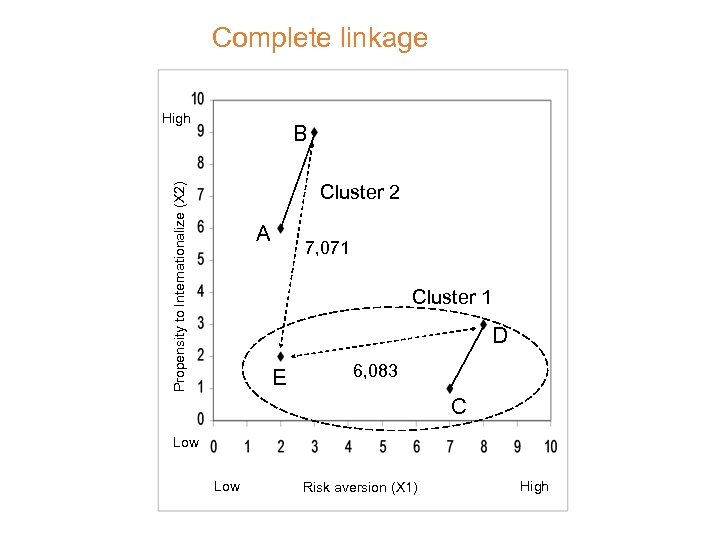

Complete linkage
(Figure: same axes, with the distances 7.071 and 6.083.)
Two-cluster solution: {A, B} and {C, D, E}; E's farthest distance to {C, D} (6.083, to D) is smaller than to {A, B} (7.071, to B).

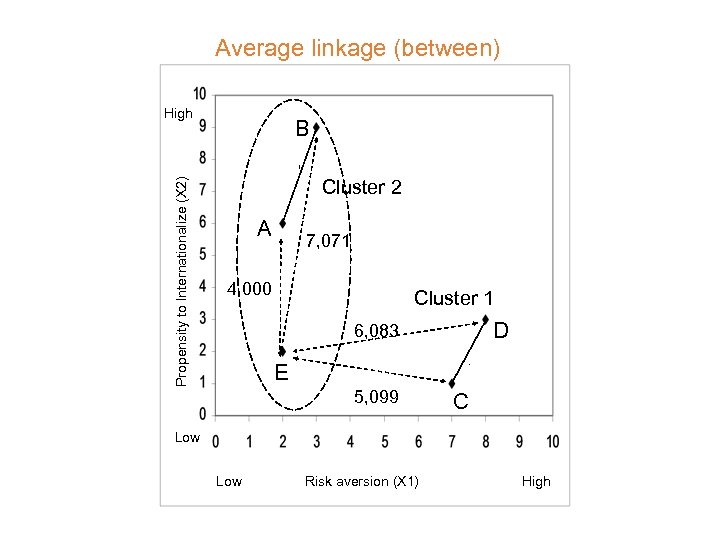

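Average linkage (between groups)
(Figure: same axes, with the distances 4.000, 5.099, 6.083 and 7.071.)
Two-cluster solution: {A, B, E} and {C, D}; E's average distance to {A, B}, (4.000 + 7.071)/2 ≈ 5.54, is slightly smaller than to {C, D}, (5.099 + 6.083)/2 ≈ 5.59.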

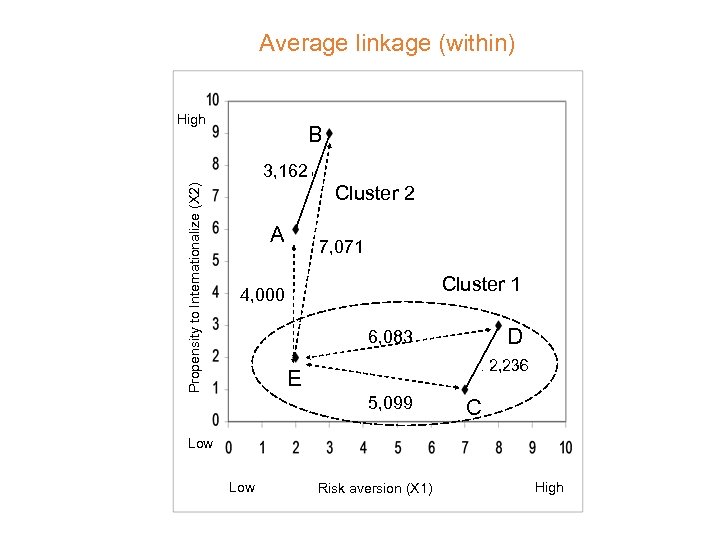

Average linkage (within groups)
(Figure: same axes and pairwise distances as for single linkage: 2.236, 3.162, 4.000, 5.099, 6.083 and 7.071.)

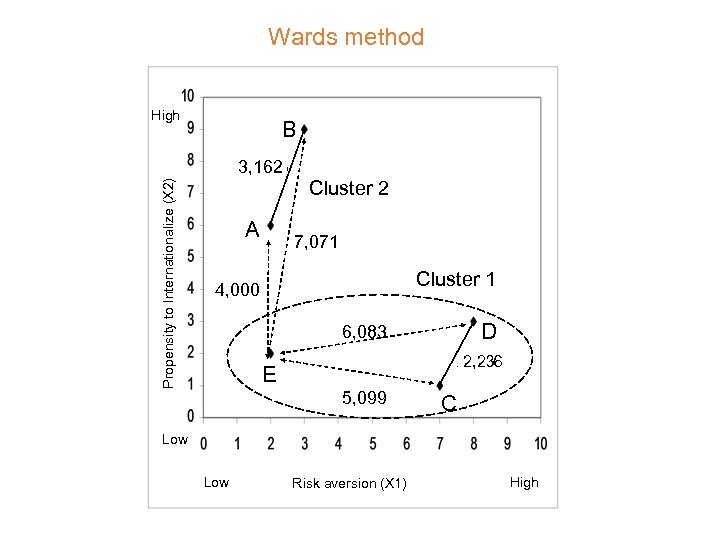

Ward's method
(Figure: same axes and pairwise distances: 2.236, 3.162, 4.000, 5.099, 6.083 and 7.071.)

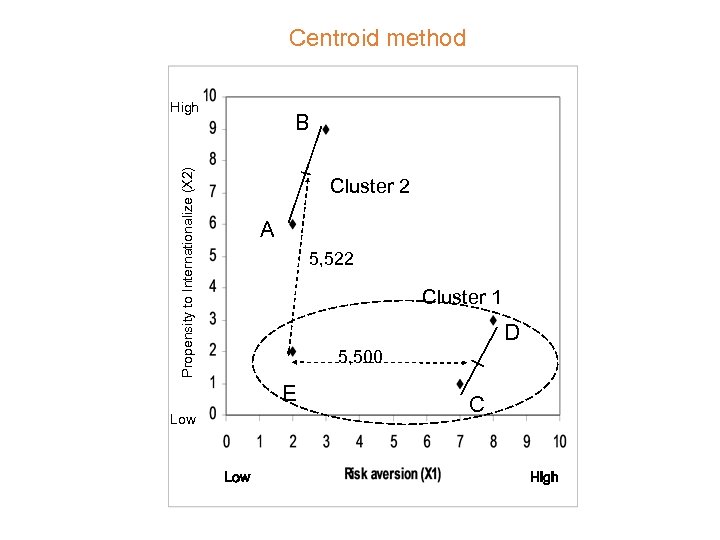

Centroid method
(Figure: same axes; E is about 5.52 from the centroid of {A, B} and 5.500 from the centroid of {C, D}.)
Two-cluster solution: {A, B} and {C, D, E}.

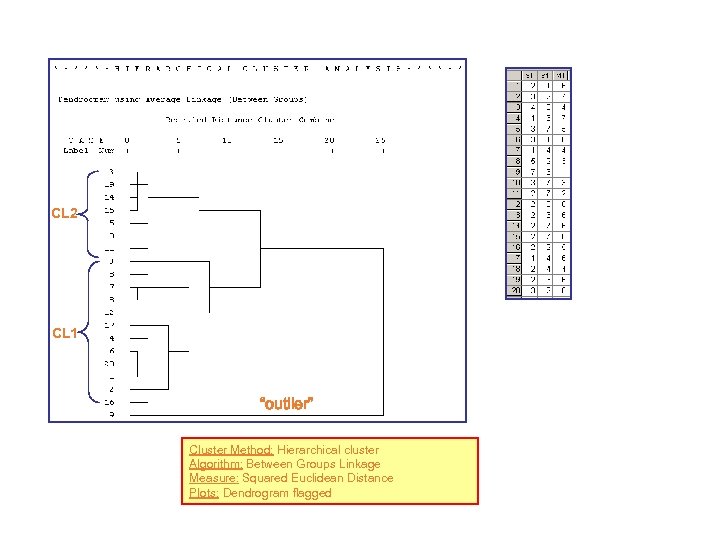

Dendrogram (figure: CL 2, CL 1 and an “outlier”)
Cluster method: hierarchical cluster; algorithm: between-groups linkage; measure: squared Euclidean distance; plots: dendrogram flagged.

Non-hierarchical clustering: k-means clustering algorithm

Concept
• First we select the number of groups and randomly assign each point to one of the groups.
• Next, we revisit the assignments.
• The process is repeated until a criterion is met.
• We need to determine the number of clusters a priori.
• A popular algorithm in this category (non-hierarchical and non-overlapping) is k-means. Synonyms: partitioning, nearest centroid method.

K-means algorithm
1. We select k cluster centres from the points of the data set.
2. We assign each point to the closest centre.
3. We recalculate the centres.
4. If the centres remain the same, we stop; otherwise, we continue with step 2.
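The four steps can be sketched in plain Python as follows; the function name and the handling of empty clusters are our own choices, not SPSS's implementation. Run on the 20 industries of Table 12.10 (the numeric example that follows) with observations 5 and 20 as seeds, it converges to centres of about (1.28, 3.49) and (3.96, 4.70), the final values in the example's iteration table. Its intermediate centres differ slightly from the slides, because an exact computation places observation 8 in CL 2 at the first allocation.

```python
import math

def kmeans(points, seeds, max_iter=100):
    """Plain k-means from given initial centres (the seeds).
    Returns (labels, centres, centre_history)."""
    centres = [tuple(s) for s in seeds]                      # step 1
    history = [centres]
    for _ in range(max_iter):
        # Step 2: assign each point to its closest centre (Euclidean distance).
        labels = [min(range(len(centres)), key=lambda k: math.dist(p, centres[k]))
                  for p in points]
        # Step 3: recalculate each centre as the mean of its members
        # (an empty cluster keeps its old centre; this is our own choice).
        new_centres = []
        for k, old in enumerate(centres):
            members = [p for p, lab in zip(points, labels) if lab == k]
            if members:
                new_centres.append(tuple(sum(c) / len(members) for c in zip(*members)))
            else:
                new_centres.append(old)
        # Step 4: stop when the centres stay the same.
        if new_centres == centres:
            break
        centres = new_centres
        history.append(centres)
    return labels, centres, history

# Table 12.10 (X1 = expected market development, X2 = willingness to export).
industries = [
    (1.19, 2.33), (1.25, 4.50), (1.17, 5.06), (4.72, 5.35), (1.47, 0.95),
    (0.82, 3.24), (1.50, 2.64), (1.60, 4.86), (0.51, 2.20), (3.89, 2.89),
    (2.07, 4.53), (3.06, 5.30), (5.07, 4.67), (1.54, 4.35), (1.71, 1.56),
    (0.83, 5.12), (2.78, 5.27), (2.73, 4.03), (1.04, 4.02), (5.45, 5.40),
]
labels, centres, history = kmeans(industries, seeds=[industries[4], industries[19]])
print([tuple(round(v, 2) for v in c) for c in centres])  # [(1.28, 3.49), (3.96, 4.7)]
```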

Example
• A study was made among export managers in twenty industries (pharmaceuticals, heavy machinery, banking, etc.).
• Within each industry, interviews were carried out with 10 to 20 managers. Each manager was asked to comment on 2 statements.

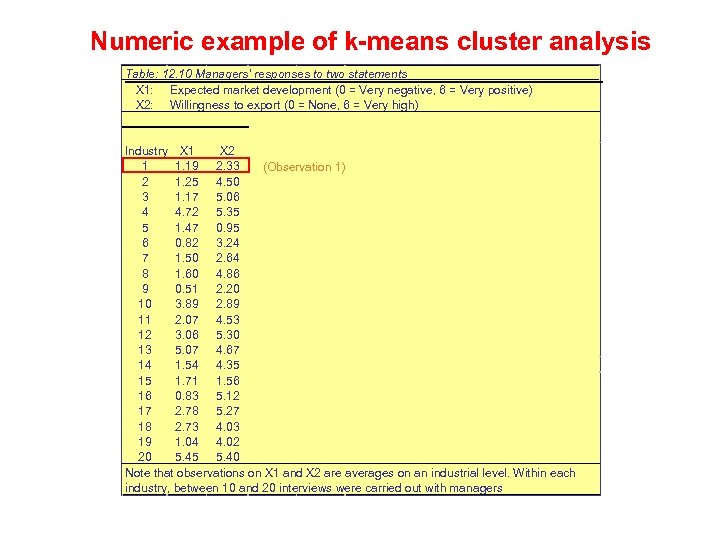

Numeric example of k-means cluster analysis
Table 12.10: Managers' responses to two statements
X1: Expected market development (0 = very negative, 6 = very positive)
X2: Willingness to export (0 = none, 6 = very high)

| Industry | X1 | X2 |
|---|---|---|
| 1 | 1.19 | 2.33 |
| 2 | 1.25 | 4.50 |
| 3 | 1.17 | 5.06 |
| 4 | 4.72 | 5.35 |
| 5 | 1.47 | 0.95 |
| 6 | 0.82 | 3.24 |
| 7 | 1.50 | 2.64 |
| 8 | 1.60 | 4.86 |
| 9 | 0.51 | 2.20 |
| 10 | 3.89 | 2.89 |
| 11 | 2.07 | 4.53 |
| 12 | 3.06 | 5.30 |
| 13 | 5.07 | 4.67 |
| 14 | 1.54 | 4.35 |
| 15 | 1.71 | 1.56 |
| 16 | 0.83 | 5.12 |
| 17 | 2.78 | 5.27 |
| 18 | 2.73 | 4.03 |
| 19 | 1.04 | 4.02 |
| 20 | 5.45 | 5.40 |

Note that the observations on X1 and X2 are averages at industry level. Within each industry, between 10 and 20 interviews were carried out with managers.

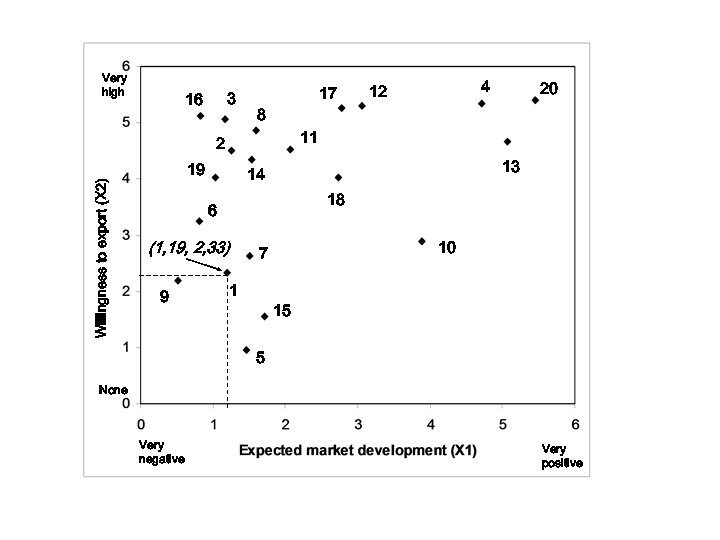

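(Figure: scatter plot of the 20 industries, with X1, expected market development, from very negative to very positive on the horizontal axis and X2, willingness to export, from none to very high on the vertical axis; observation 1 lies at (1.19, 2.33).)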

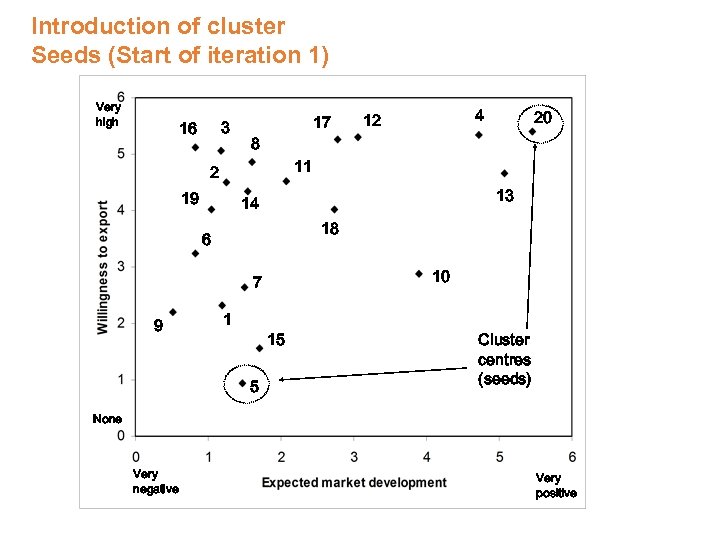

Introduction of cluster seeds (start of iteration 1)
(Figure: two cluster centres (seeds) placed among the 20 observations, at observations 5 and 20.)

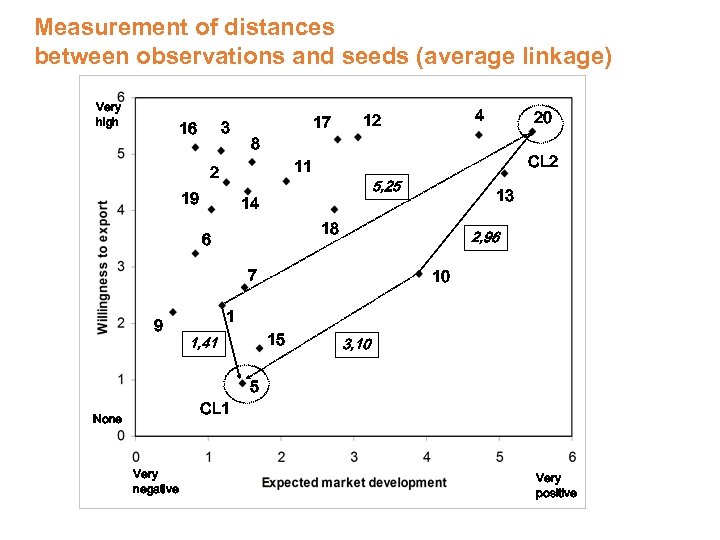

Measurement of distances between observations and seeds (Euclidean distance)
(Figure: for example, observation 1 is 1.41 from the CL 1 seed and 5.25 from the CL 2 seed; observation 10 is 3.10 from the CL 1 seed and 2.96 from the CL 2 seed.)

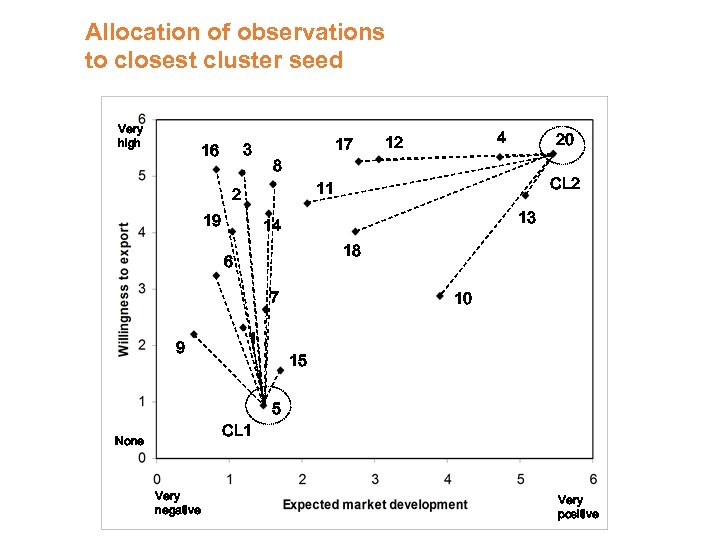

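Allocation of observations to the closest cluster seed
(Figure: each observation is allocated to CL 1 or CL 2, whichever seed is closer.)
Note: by exact computation, observation 8 lies marginally closer to the CL 2 seed (3.89) than to the CL 1 seed (3.91), whereas the slides allocate it to CL 1; the final two-cluster solution is the same either way.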

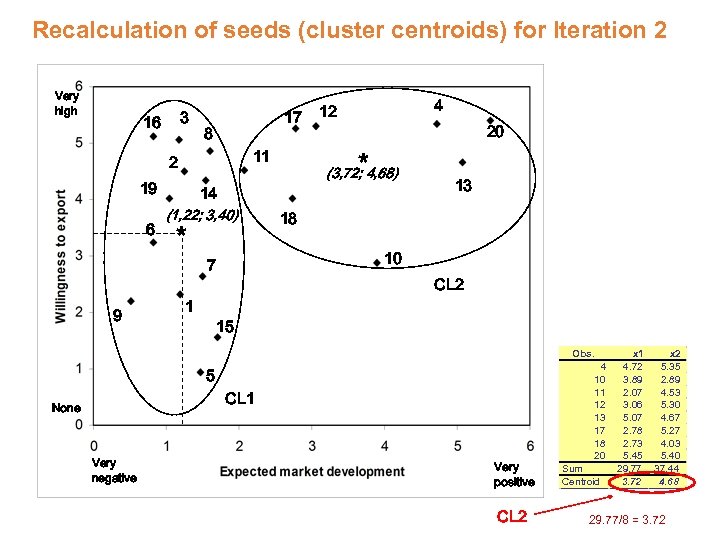

Recalculation of seeds (cluster centroids) for iteration 2
New centroids: CL 1 = (1.22; 3.40), CL 2 = (3.72; 4.68).
Computation for CL 2:

| CL 2 observation | 4 | 10 | 11 | 12 | 13 | 17 | 18 | 20 | Sum | Centroid |
|---|---|---|---|---|---|---|---|---|---|---|
| X1 | 4.72 | 3.89 | 2.07 | 3.06 | 5.07 | 2.78 | 2.73 | 5.45 | 29.77 | 3.72 |
| X2 | 5.35 | 2.89 | 4.53 | 5.30 | 4.67 | 5.27 | 4.03 | 5.40 | 37.44 | 4.68 |

For example, 29.77 / 8 = 3.72.

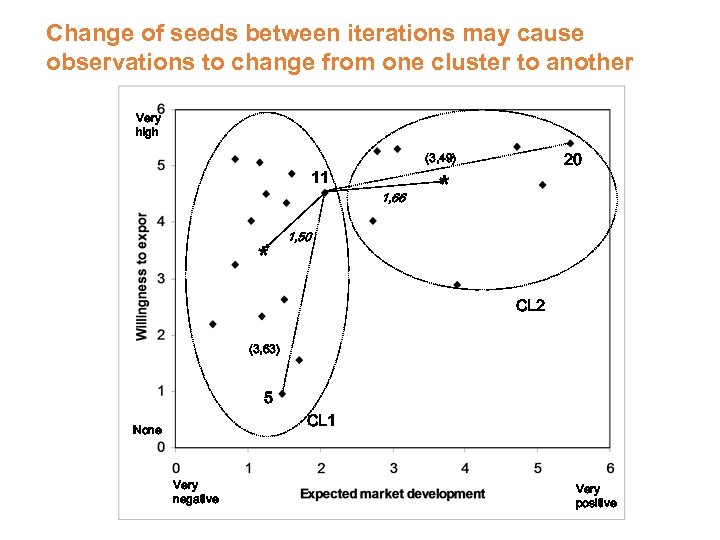

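Change of seeds between iterations may cause observations to change from one cluster to another
With the new centroids, observation 11 is 1.66 from CL 2 but only about 1.41 from CL 1, so it moves from CL 2 to CL 1. (The figure shows 1.50, which corresponds to a CL 1 centroid of (1.22; 3.30) rather than the correct (1.22; 3.40).)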
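A quick numerical check of this step, using the coordinates from Table 12.10 and the allocation shown on the slides (the helper name `centre` is our own): it recalculates both centroids and the distances of observation 11 to them.

```python
import math

def centre(points):
    """Mean of each coordinate."""
    return tuple(sum(c) / len(points) for c in zip(*points))

# Allocation after iteration 1, as on the slides.
cl1 = [(1.19, 2.33), (1.25, 4.50), (1.17, 5.06), (1.47, 0.95), (0.82, 3.24), (1.50, 2.64),
       (1.60, 4.86), (0.51, 2.20), (1.54, 4.35), (1.71, 1.56), (0.83, 5.12), (1.04, 4.02)]
cl2 = [(4.72, 5.35), (3.89, 2.89), (2.07, 4.53), (3.06, 5.30),
       (5.07, 4.67), (2.78, 5.27), (2.73, 4.03), (5.45, 5.40)]

c1, c2 = centre(cl1), centre(cl2)
obs11 = (2.07, 4.53)
print([round(v, 2) for v in c1], [round(v, 2) for v in c2])        # [1.22, 3.4] [3.72, 4.68]
print(round(math.dist(obs11, c1), 2), round(math.dist(obs11, c2), 2))  # 1.41 1.66
```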

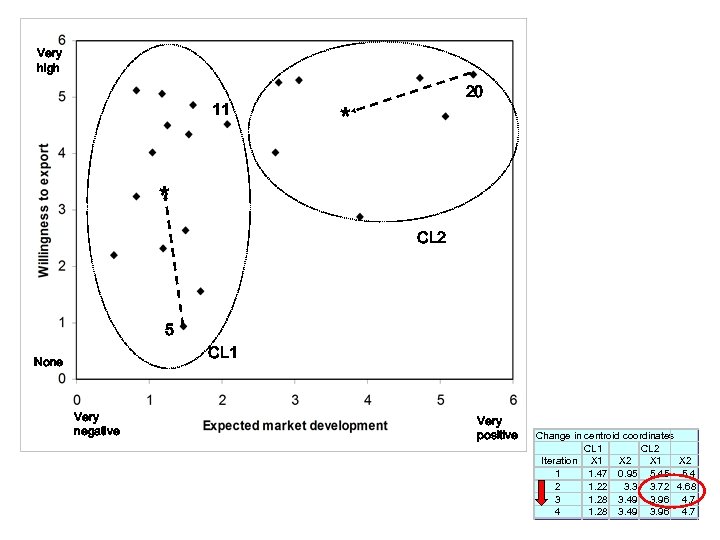

Change in centroid coordinates

| Iteration | CL 1 X1 | CL 1 X2 | CL 2 X1 | CL 2 X2 |
|---|---|---|---|---|
| 1 | 1.47 | 0.95 | 5.45 | 5.40 |
| 2 | 1.22 | 3.40 | 3.72 | 4.68 |
| 3 | 1.28 | 3.49 | 3.96 | 4.70 |
| 4 | 1.28 | 3.49 | 3.96 | 4.70 |

The centroids no longer change between iterations 3 and 4, so the algorithm stops.

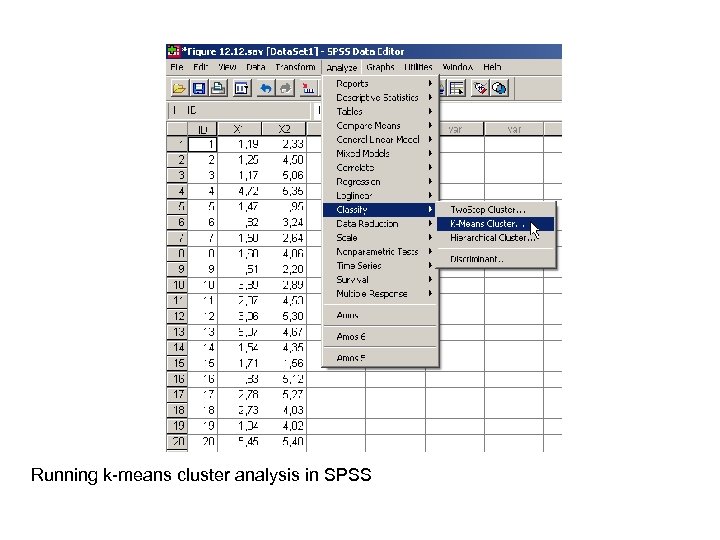

Running k-means cluster analysis in SPSS

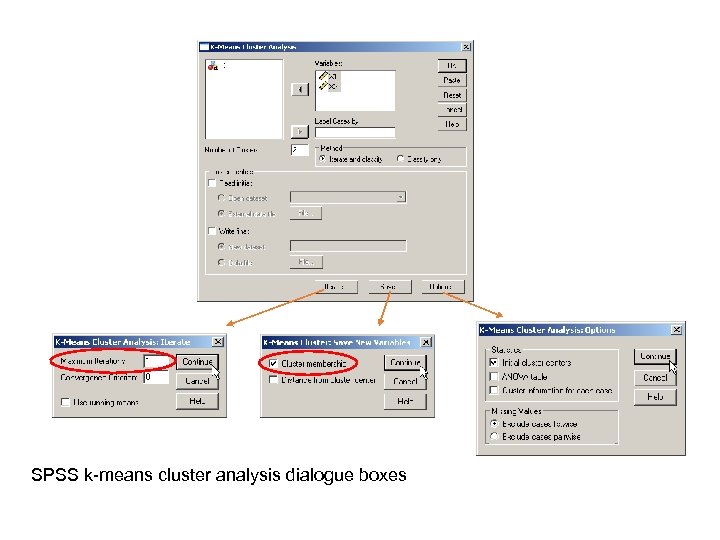

SPSS k-means cluster analysis dialogue boxes

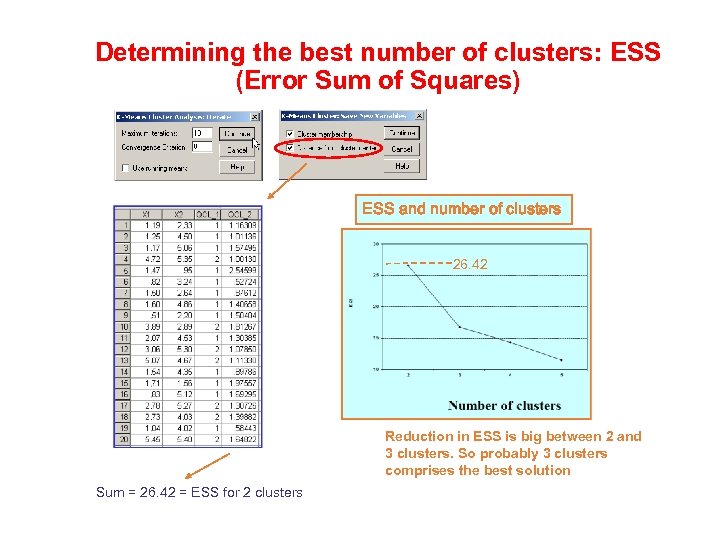

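Determining the best number of clusters: ESS (Error Sum of Squares)

[Figure: ESS plotted against the number of clusters]

The ESS is the sum, over all observations, of the squared distance between each observation and the centroid of its own cluster. For the two-cluster solution this sum is 26.42, i.e. ESS = 26.42. ESS generally decreases as clusters are added, so the question is where adding a cluster still produces a large reduction. Here the reduction is large between 2 and 3 clusters, so 3 clusters is probably the best solution.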
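
Below is a small sketch of the ESS calculation: the squared Euclidean distance from each observation to its own cluster's centroid, summed over all observations. It uses six hypothetical points, not the slides' data, and the function name is illustrative.

```python
def ess(points, labels):
    """Error sum of squares: for every observation, the squared Euclidean distance
    to the centroid (mean) of its own cluster, summed over all observations."""
    clusters = {}
    for p, lab in zip(points, labels):
        clusters.setdefault(lab, []).append(p)
    total = 0.0
    for members in clusters.values():
        centroid = [sum(col) / len(members) for col in zip(*members)]
        total += sum(sum((a - m) ** 2 for a, m in zip(p, centroid)) for p in members)
    return total


# Six hypothetical points (not the slides' data).
points = [(1.0, 1.0), (1.5, 2.0), (1.0, 2.5), (5.0, 5.0), (6.0, 5.5), (5.5, 6.0)]
one_cluster = ess(points, [0, 0, 0, 0, 0, 0])
two_clusters = ess(points, [0, 0, 0, 1, 1, 1])
print(round(one_cluster, 2), round(two_clusters, 2))  # 50.67 2.33
```

Splitting the six points into their two natural groups cuts the ESS from about 50.67 to 2.33. A drop of that size is what signals a worthwhile extra cluster.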

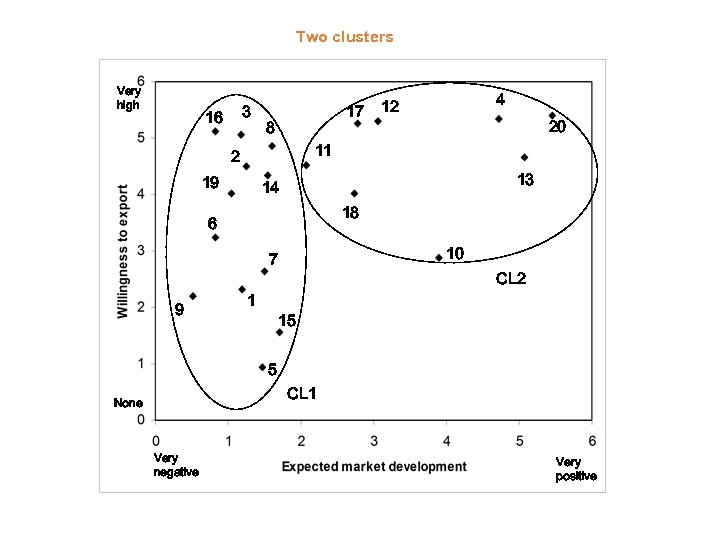

Two clusters

[Figure: the 20 observations partitioned into two clusters, CL 1 and CL 2; axis labels None to Very high and Very negative to Very positive]

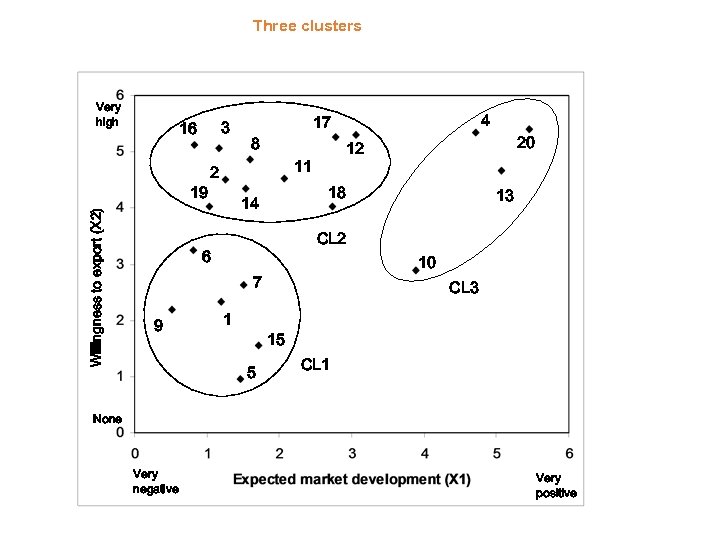

Three clusters

[Figure: the 20 observations partitioned into three clusters, CL 1 to CL 3; axis name: willingness to export (X2); axis labels None to Very high and Very negative to Very positive]

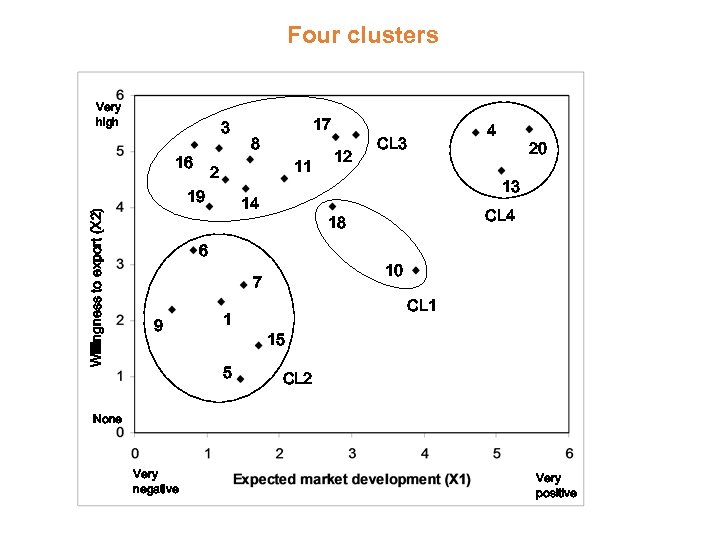

Four clusters

[Figure: the 20 observations partitioned into four clusters, CL 1 to CL 4; axis name: willingness to export (X2); axis labels None to Very high and Very negative to Very positive]

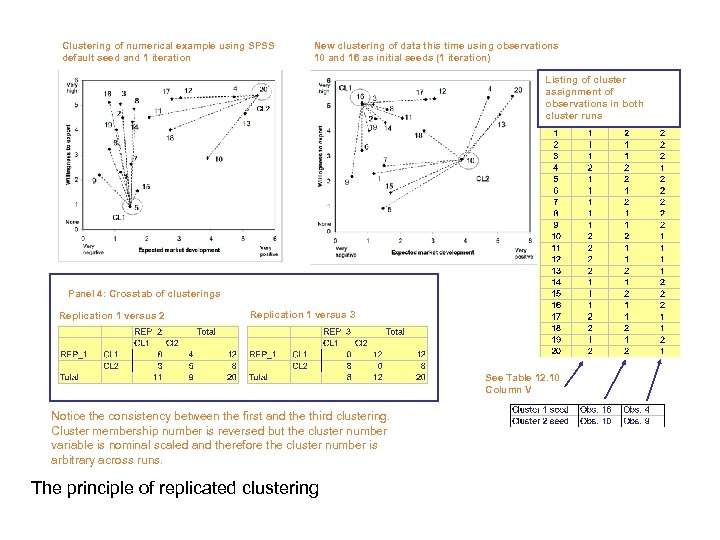

The principle of replicated clustering

• Clustering of the numerical example using the SPSS default seed and 1 iteration
• New clustering of the data, this time using observations 10 and 16 as initial seeds (1 iteration)
• Listing of the cluster assignment of observations in both cluster runs
• Panel 4: Crosstab of clusterings: Replication 1 versus 2, and Replication 1 versus 3 (see Table 12.10, Column V)

Notice the consistency between the first and the third clustering. The cluster membership numbers are reversed, but the cluster number variable is nominally scaled, so the numbering is arbitrary across runs.
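
Because cluster numbers are nominal, two runs should be compared up to relabelling. One simple way to do this is shown below: crosstab the two label vectors, then take the best one-to-one matching of cluster numbers. This is an illustration, not the procedure behind Table 12.10. The function names and the five-observation labels are hypothetical.

```python
from collections import Counter
from itertools import permutations


def crosstab(labels_a, labels_b):
    """Counts of (cluster in run A, cluster in run B) pairs, i.e. the cells of a crosstab."""
    return Counter(zip(labels_a, labels_b))


def agreement_up_to_relabelling(labels_a, labels_b):
    """Share of observations whose cluster numbers match once run B's numbers are
    mapped onto run A's in the best one-to-one way. Brute force over permutations,
    so only sensible for a handful of clusters; both runs must use the same number of clusters."""
    clusters_a, clusters_b = sorted(set(labels_a)), sorted(set(labels_b))
    if len(clusters_a) != len(clusters_b):
        raise ValueError("both runs must have the same number of clusters")
    table = crosstab(labels_a, labels_b)
    best = 0
    for perm in permutations(clusters_a):
        mapping = dict(zip(clusters_b, perm))  # run-B cluster -> run-A cluster
        best = max(best, sum(n for (a, b), n in table.items() if mapping[b] == a))
    return best / len(labels_a)


# Hypothetical cluster numbers for five observations in three runs.
run1 = [1, 1, 2, 2, 1]
run2 = [1, 2, 2, 2, 1]
run3 = [2, 2, 1, 1, 2]  # same partition as run1, numbers reversed
print(agreement_up_to_relabelling(run1, run3))  # 1.0
print(agreement_up_to_relabelling(run1, run2))  # 0.8
print(crosstab(run1, run3))
```

Runs 1 and 3 agree completely even though every cluster number is swapped. That is the same situation as the reversed numbering noted on the slide.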

Non-hierarchical method: Recap
• It is an iterative procedure, so, unlike the hierarchical methods, there is no guarantee that the optimal solution is found (however, it usually comes quite close).
• The analyst must subjectively select the “best” number of clusters (some clues may help, though).
• The procedure is fast.
• Running it is straightforward.
• It is rather easy to understand (interpret).
• However, in applied settings, differences between clusters may be small, which can disappoint the analyst.

Non-hierarchical cluster analysis
• How does one determine the optimal number of clusters?
• Unfortunately, no formal help (i.e. a built-in option) is presently available in SPSS for determining the best number of clusters.
• So: try 2, 3 and 4 clusters and choose the one that “feels” best. It is recommended to combine the ESS procedure covered above with sound reasoning.
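
Here is a sketch of the "try 2, 3 and 4 clusters" advice combined with the ESS criterion. It assumes Euclidean distance and a hypothetical farthest-first rule for picking seeds; SPSS uses its own seed selection. The data are nine hypothetical points that form three tight groups.

```python
def sq_dist(p, q):
    """Squared Euclidean distance between two coordinate tuples."""
    return sum((a - b) ** 2 for a, b in zip(p, q))


def farthest_first_seeds(points, k):
    """Hypothetical seeding rule: take the first point, then repeatedly add the point
    farthest from all seeds chosen so far (ties go to the earliest point)."""
    seeds = [points[0]]
    while len(seeds) < k:
        seeds.append(max(points, key=lambda p: min(sq_dist(p, s) for s in seeds)))
    return seeds


def kmeans(points, seeds, max_iter=100):
    """Plain k-means with nearest-centroid allocation and mean updates."""
    centroids = list(seeds)
    for _ in range(max_iter):
        labels = [min(range(len(centroids)), key=lambda c: sq_dist(p, centroids[c]))
                  for p in points]
        new = []
        for c in range(len(centroids)):
            members = [p for p, lab in zip(points, labels) if lab == c]
            # An empty cluster keeps its old centroid.
            new.append(tuple(sum(col) / len(members) for col in zip(*members))
                       if members else centroids[c])
        if new == centroids:  # converged
            break
        centroids = new
    return labels, centroids


def ess(points, labels, centroids):
    """Error sum of squares: squared distance of each point to its cluster centroid."""
    return sum(sq_dist(p, centroids[lab]) for p, lab in zip(points, labels))


# Nine hypothetical observations forming three tight groups.
points = [(1, 1), (1, 2), (2, 1),
          (8, 1), (9, 1), (8, 2),
          (5, 8), (5, 9), (6, 8)]

results = {}
for k in (2, 3, 4):
    labels, centroids = kmeans(points, farthest_first_seeds(points, k))
    results[k] = ess(points, labels, centroids)
    print(f"{k} clusters: ESS = {results[k]:.2f}")
for k in (3, 4):
    print(f"reduction from {k - 1} to {k} clusters: {results[k - 1] - results[k]:.2f}")
```

With these points, the ESS drops from 77.5 to 4.0 when going from 2 to 3 clusters. A fourth cluster only brings it down to about 3.17. As in the slides' example, the large 2-to-3 reduction points to 3 clusters.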
