- Number of slides: 28

Classification of Microarray Data

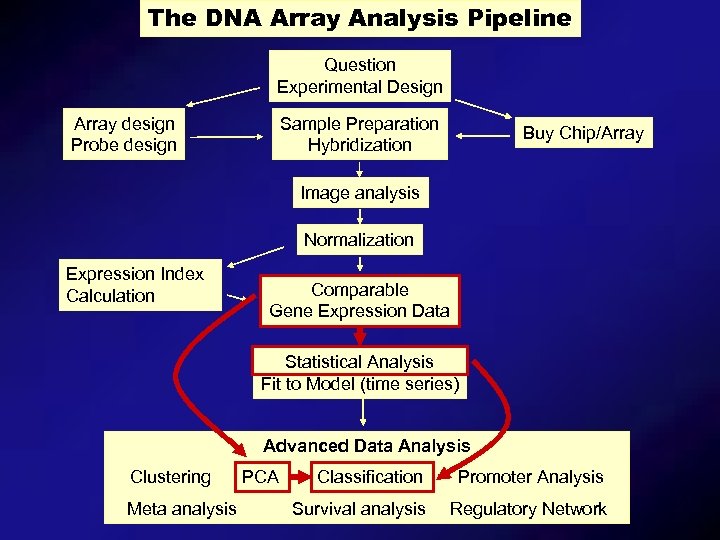

The DNA Array Analysis Pipeline

- Question
- Experimental Design
- Array design
- Probe design
- Sample Preparation
- Hybridization
- Buy Chip/Array
- Image analysis
- Normalization
- Expression Index Calculation
- Comparable Gene Expression Data
- Statistical Analysis
- Fit to Model (time series)
- Advanced Data Analysis
  - Clustering
  - Meta analysis
  - PCA
  - Classification
  - Promoter Analysis
  - Survival analysis
  - Regulatory Network

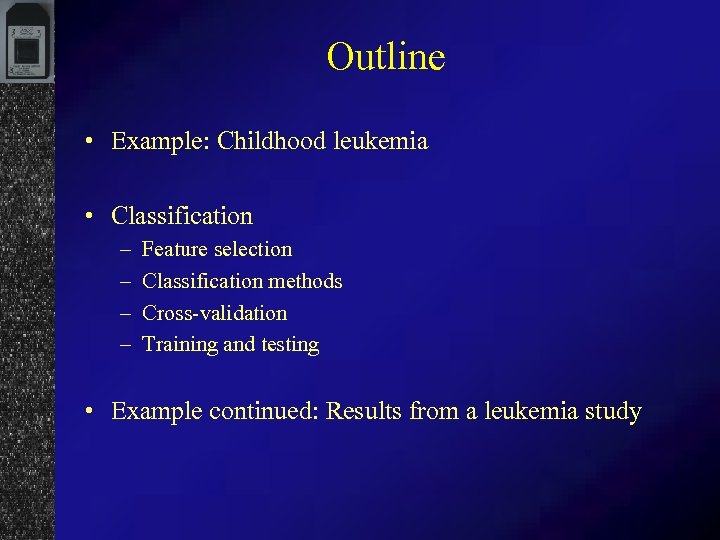

Outline

- Example: Childhood leukemia
- Classification
  - Feature selection
  - Classification methods
  - Cross-validation
  - Training and testing
- Example continued: Results from a leukemia study

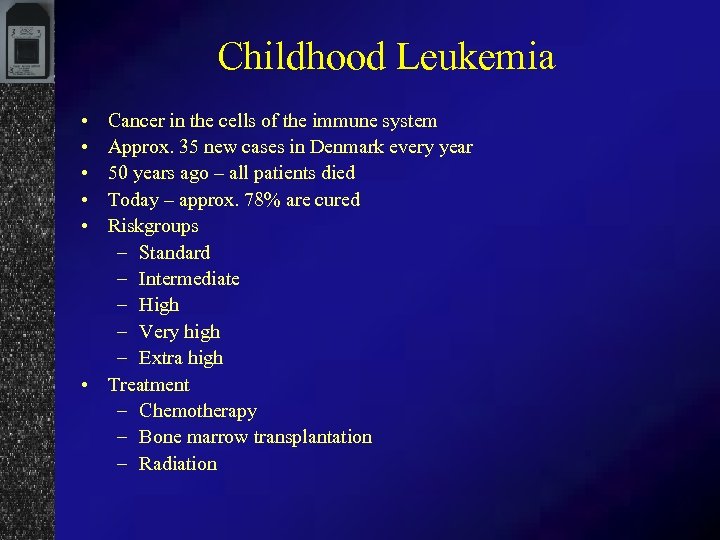

Childhood Leukemia

- Cancer in the cells of the immune system
- Approx. 35 new cases in Denmark every year
- 50 years ago: all patients died
- Today: approx. 78% are cured
- Risk groups
  - Standard
  - Intermediate
  - High
  - Very high
  - Extra high
- Treatment
  - Chemotherapy
  - Bone marrow transplantation
  - Radiation

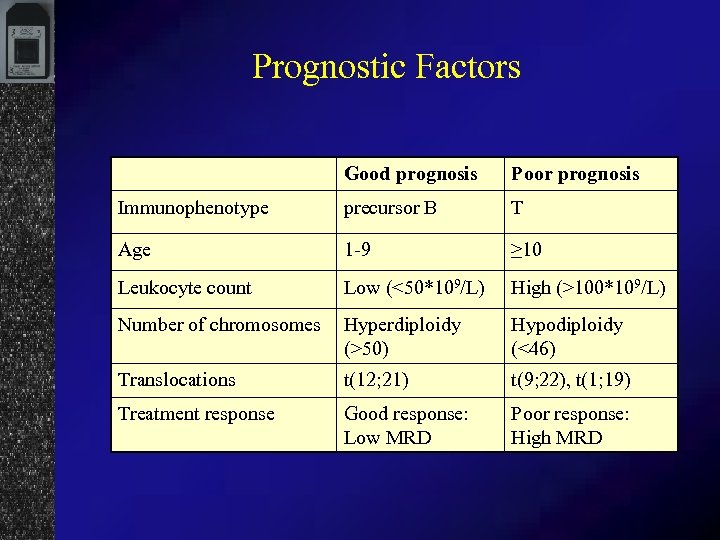

Prognostic Factors

| Prognostic factor | Good prognosis | Poor prognosis |
|---|---|---|
| Immunophenotype | precursor B | T |
| Age | 1-9 | ≥ 10 |
| Leukocyte count | Low (<50×10⁹/L) | High (>100×10⁹/L) |
| Number of chromosomes | Hyperdiploidy (>50) | Hypodiploidy (<46) |
| Translocations | t(12;21) | t(9;22), t(1;19) |
| Treatment response | Good response: Low MRD | Poor response: High MRD |

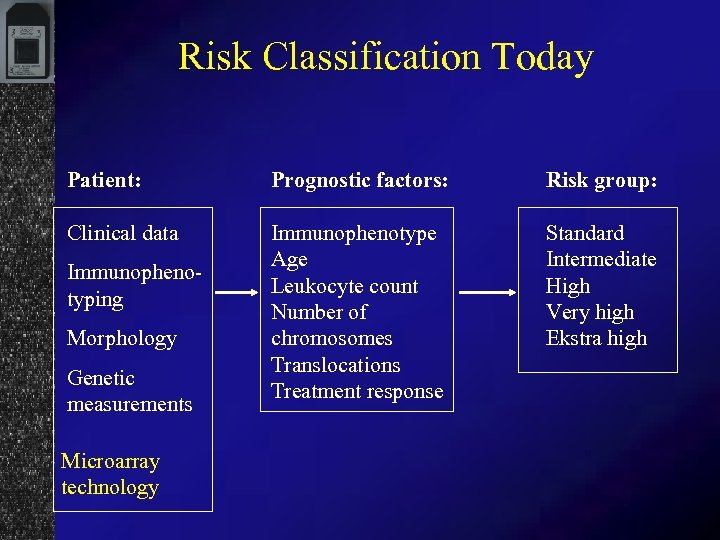

Risk Classification Today

- Patient: Clinical data, Immunophenotyping, Morphology, Genetic measurements, Microarray technology
- Prognostic factors: Immunophenotype, Age, Leukocyte count, Number of chromosomes, Translocations, Treatment response
- Risk group: Standard, Intermediate, High, Very high, Extra high

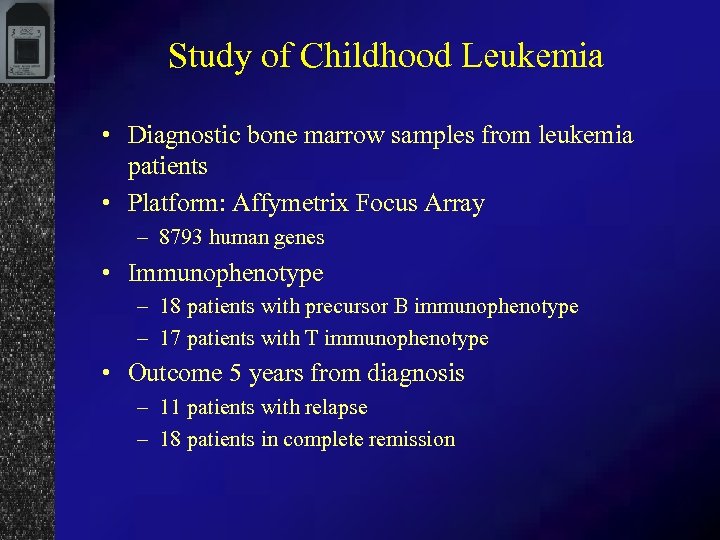

Study of Childhood Leukemia

- Diagnostic bone marrow samples from leukemia patients
- Platform: Affymetrix Focus Array
  - 8793 human genes
- Immunophenotype
  - 18 patients with precursor B immunophenotype
  - 17 patients with T immunophenotype
- Outcome 5 years from diagnosis
  - 11 patients with relapse
  - 18 patients in complete remission

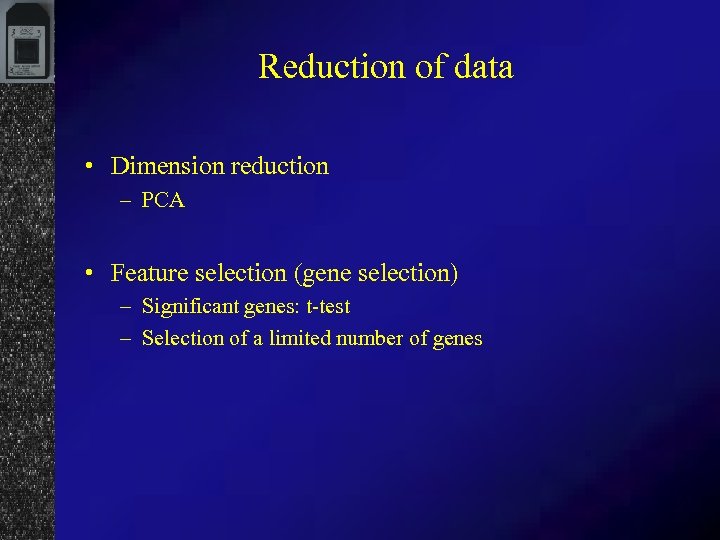

Reduction of data

- Dimension reduction
  - PCA
- Feature selection (gene selection)
  - Significant genes: t-test
  - Selection of a limited number of genes
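The t-test based gene selection can be sketched in a few lines of standard-library Python. This is only an illustration under stated assumptions: the slides do not say which t-test variant was used, so Welch's unequal-variance statistic is assumed here, and the names `welch_t` and `select_genes` are hypothetical.

```python
import math
from statistics import mean, variance


def welch_t(a, b):
    """Welch's t-statistic for two lists of expression values (assumed variant)."""
    se = math.sqrt(variance(a) / len(a) + variance(b) / len(b))
    return (mean(a) - mean(b)) / se if se > 0 else 0.0


def select_genes(expr, labels, n_genes):
    """Return the indices of the n_genes genes with the largest |t|.

    expr:   list of samples, each a list of expression values (one per gene)
    labels: class label of each sample; exactly two classes are expected,
            each with at least two samples
    """
    classes = sorted(set(labels))
    if len(classes) != 2:
        raise ValueError("expected exactly two classes")
    scores = []
    for g in range(len(expr[0])):
        a = [s[g] for s, y in zip(expr, labels) if y == classes[0]]
        b = [s[g] for s, y in zip(expr, labels) if y == classes[1]]
        scores.append((abs(welch_t(a, b)), g))
    scores.sort(reverse=True)  # largest |t| first
    return [g for _, g in scores[:n_genes]]
```

Ranking by |t| rather than by p-value gives the same order when all genes have similar sample sizes, and it avoids needing a t-distribution implementation.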

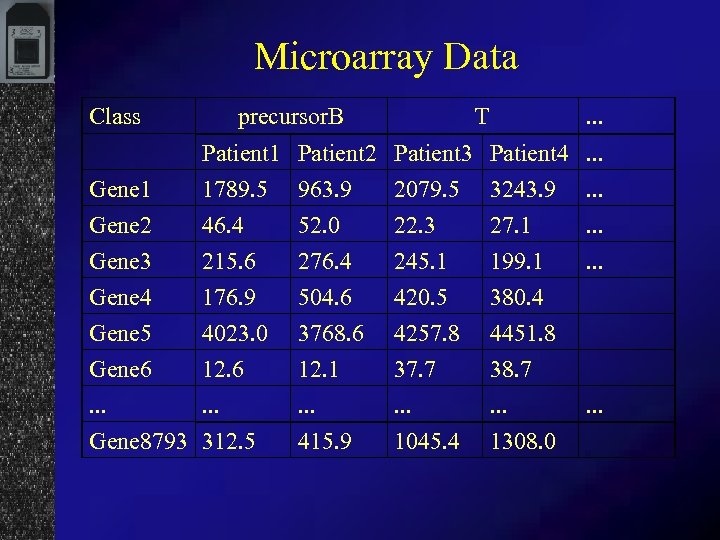

Microarray Data

Class labels: precursor B, T, ...

| | Patient 1 | Patient 2 | Patient 3 | Patient 4 | ... |
|---|---|---|---|---|---|
| Gene 1 | 1789.5 | 963.9 | 2079.5 | 3243.9 | ... |
| Gene 2 | 46.4 | 52.0 | 22.3 | 27.1 | ... |
| Gene 3 | 215.6 | 276.4 | 245.1 | 199.1 | ... |
| Gene 4 | 176.9 | 504.6 | 420.5 | 380.4 | ... |
| Gene 5 | 4023.0 | 3768.6 | 4257.8 | 4451.8 | ... |
| Gene 6 | 12.6 | 12.1 | 37.7 | 38.7 | ... |
| ... | ... | ... | ... | ... | ... |
| Gene 8793 | 312.5 | 415.9 | 1045.4 | 1308.0 | ... |

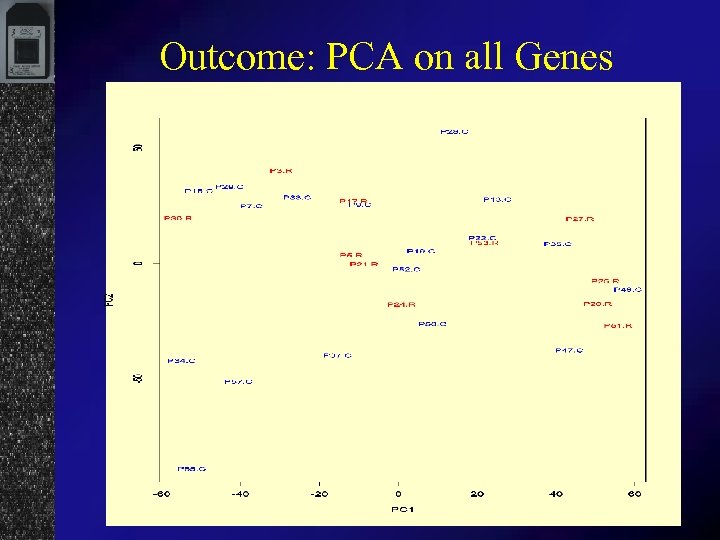

Outcome: PCA on all Genes

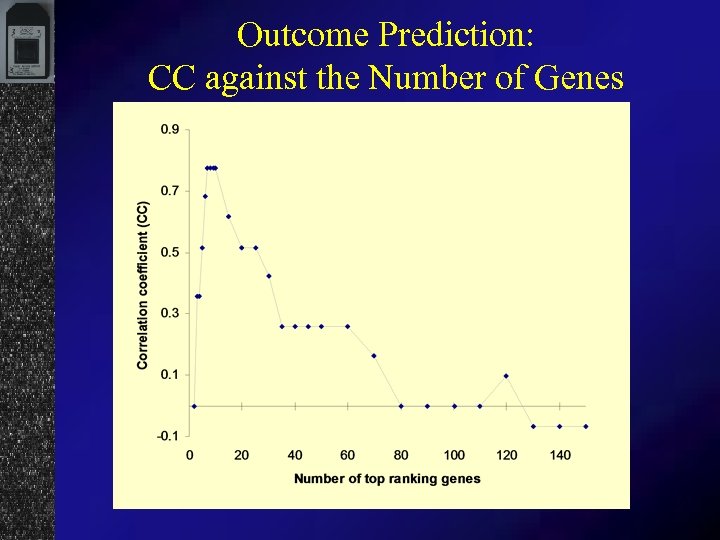

Outcome Prediction: CC against the Number of Genes

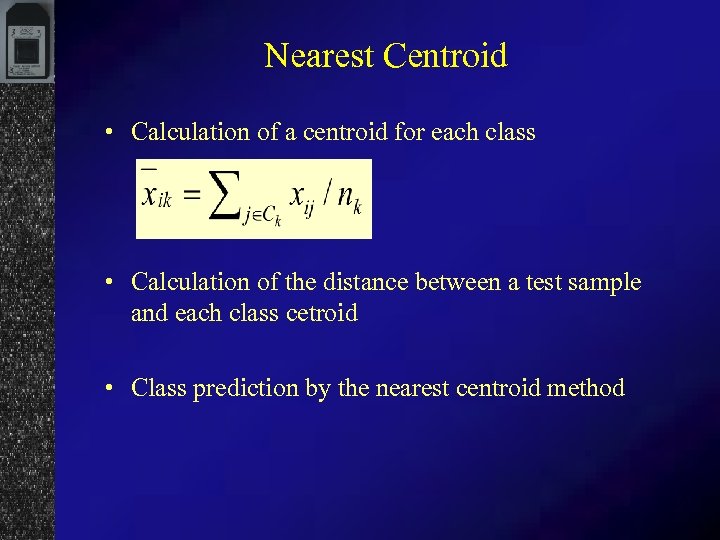

Nearest Centroid

- Calculation of a centroid for each class
- Calculation of the distance between a test sample and each class centroid
- Class prediction by the nearest centroid method
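The three steps of the nearest centroid method can be sketched as follows. Euclidean distance is an assumption (the slide does not name the distance measure), and the function names are hypothetical.

```python
import math


def class_centroids(samples, labels):
    """Step 1: mean expression vector (centroid) for each class."""
    sums, counts = {}, {}
    for x, y in zip(samples, labels):
        acc = sums.setdefault(y, [0.0] * len(x))
        for i, value in enumerate(x):
            acc[i] += value
        counts[y] = counts.get(y, 0) + 1
    return {y: [v / counts[y] for v in acc] for y, acc in sums.items()}


def predict_nearest_centroid(centroids, x):
    """Steps 2 and 3: measure the distance to every centroid, pick the closest."""
    return min(centroids, key=lambda y: math.dist(centroids[y], x))
```

With training samples `[[1, 1], [2, 2]]` labelled `B` and `[[8, 9], [9, 8]]` labelled `T`, the centroids are `[1.5, 1.5]` and `[8.5, 8.5]`, so a test sample `[2, 1]` is assigned to `B`.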

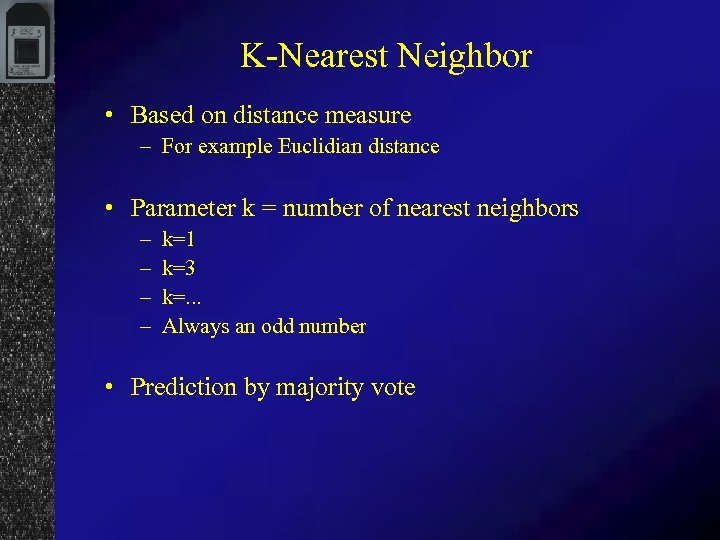

K-Nearest Neighbor

- Based on distance measure
  - For example Euclidean distance
- Parameter k = number of nearest neighbors
  - k=1
  - k=3
  - k=...
  - Always an odd number
- Prediction by majority vote
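A minimal sketch of k-nearest-neighbor prediction, assuming Euclidean distance and a simple majority vote; `predict_knn` is a hypothetical name. With two classes, an odd k guarantees that the vote cannot tie.

```python
import math
from collections import Counter


def predict_knn(train, labels, x, k=3):
    """Predict the class of x by majority vote among its k nearest training samples."""
    by_distance = sorted(zip(train, labels), key=lambda pair: math.dist(pair[0], x))
    votes = Counter(label for _, label in by_distance[:k])
    return votes.most_common(1)[0][0]
```

Unlike nearest centroid, no model is fitted in advance: every prediction compares the test sample with all training samples.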

Support Vector Machines

- Machine learning
- Relatively new and highly theoretical
- Works on non-linearly separable data
- Finding a hyperplane between the two classes by maximizing the distance (the margin) between the hyperplane and the closest points
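The margin idea can be illustrated without a full SVM solver. The sketch below only measures the margin of a given hyperplane; it does not train anything. The hyperplane is written as w·x + b = 0, the classes are assumed to be coded as +1 and -1, and `margin` is a hypothetical helper name.

```python
import math


def margin(w, b, points, labels):
    """Smallest signed distance from the hyperplane w.x + b = 0 to any point.

    labels must be +1 or -1. A negative result means that at least one
    point lies on the wrong side of the hyperplane.
    """
    norm = math.sqrt(sum(wi * wi for wi in w))
    return min(
        y * (sum(wi * xi for wi, xi in zip(w, x)) + b) / norm
        for x, y in zip(points, labels)
    )


# Two candidate hyperplanes that both separate the toy data below.
points = [[1.0, 1.0], [2.0, 2.0], [4.0, 4.0], [5.0, 5.0]]
labels = [-1, -1, 1, 1]
centered = margin([1.0, 1.0], -6.0, points, labels)  # halfway between the classes
shifted = margin([1.0, 1.0], -5.0, points, labels)   # closer to the -1 class
```

Here `centered` is larger than `shifted`, so among these two candidates a support vector machine would prefer the centered hyperplane.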

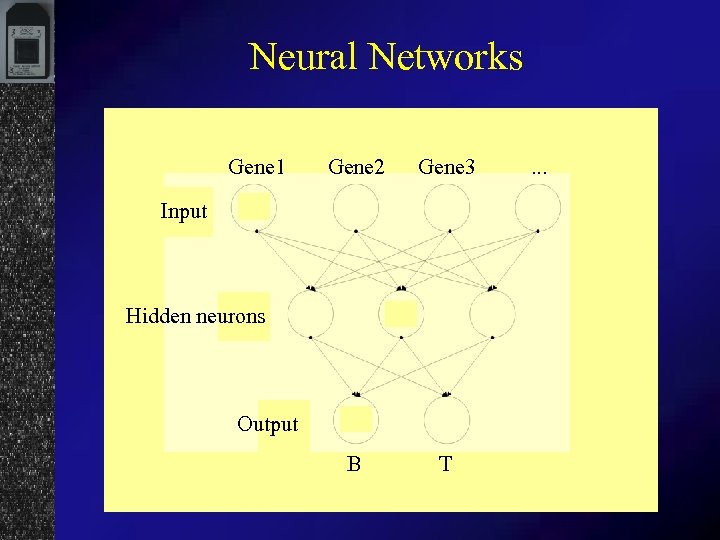

Neural Networks

- Input: Gene 1, Gene 2, Gene 3, ...
- Hidden neurons
- Output: B, T

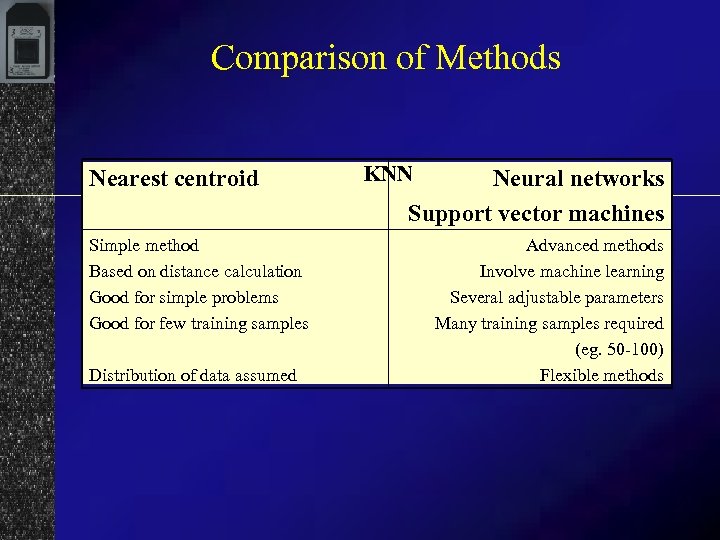

Comparison of Methods

- Nearest centroid, KNN
  - Simple methods
  - Based on distance calculation
  - Good for simple problems
  - Good for few training samples
  - Distribution of data assumed
- Neural networks, Support vector machines
  - Advanced methods
  - Involve machine learning
  - Several adjustable parameters
  - Many training samples required (e.g. 50-100)
  - Flexible methods

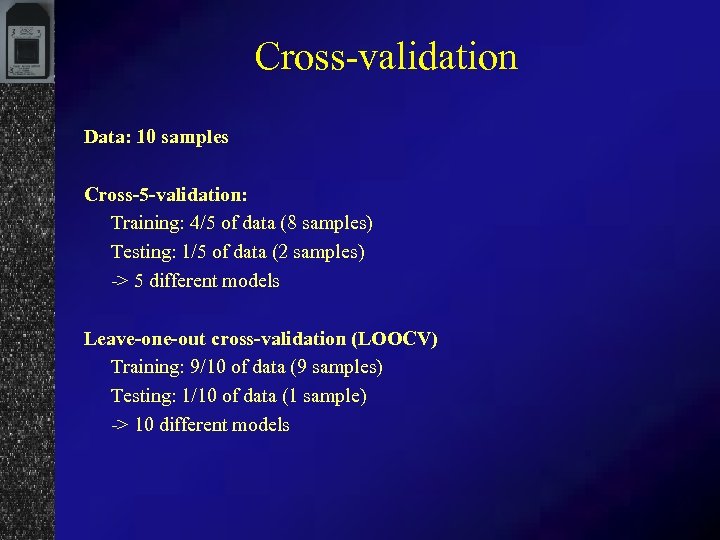

Cross-validation

Data: 10 samples

- 5-fold cross-validation
  - Training: 4/5 of data (8 samples)
  - Testing: 1/5 of data (2 samples)
  - Result: 5 different models
- Leave-one-out cross-validation (LOOCV)
  - Training: 9/10 of data (9 samples)
  - Testing: 1/10 of data (1 sample)
  - Result: 10 different models
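The splitting scheme can be sketched as an index generator. `k_fold_splits` is a hypothetical name, and the sketch keeps the samples in their original order; in practice the indices are usually shuffled first so that each fold contains a mix of classes.

```python
def k_fold_splits(n_samples, k):
    """Yield (train_indices, test_indices) pairs for k-fold cross-validation.

    Every sample is used for testing exactly once. Setting k == n_samples
    gives leave-one-out cross-validation (LOOCV).
    """
    indices = list(range(n_samples))
    # Spread any remainder over the first folds so fold sizes differ by at most 1.
    sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in sizes:
        test = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        yield train, test
        start += size
```

For the 10 samples on the slide, `k_fold_splits(10, 5)` yields 5 splits of 8 training and 2 test samples, and `k_fold_splits(10, 10)` yields the 10 leave-one-out splits.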

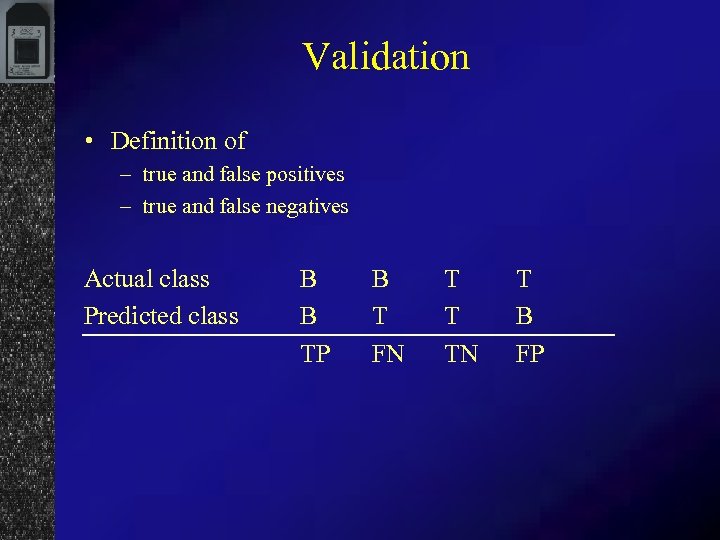

Validation

- Definition of
  - true and false positives
  - true and false negatives

| Actual class | Predicted class | Outcome |
|---|---|---|
| B | B | TP |
| B | T | FN |
| T | T | TN |
| T | B | FP |
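Counting the four outcomes from paired actual and predicted labels can be sketched as below. As in the table on the slide, B is treated as the positive class; `confusion_counts` is a hypothetical name.

```python
def confusion_counts(actual, predicted, positive="B"):
    """Return (TP, TN, FP, FN) for paired lists of actual and predicted labels."""
    tp = tn = fp = fn = 0
    for a, p in zip(actual, predicted):
        if a == positive:
            if p == positive:
                tp += 1  # positive sample predicted as positive
            else:
                fn += 1  # positive sample missed
        else:
            if p == positive:
                fp += 1  # negative sample predicted as positive
            else:
                tn += 1  # negative sample predicted as negative
    return tp, tn, fp, fn
```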

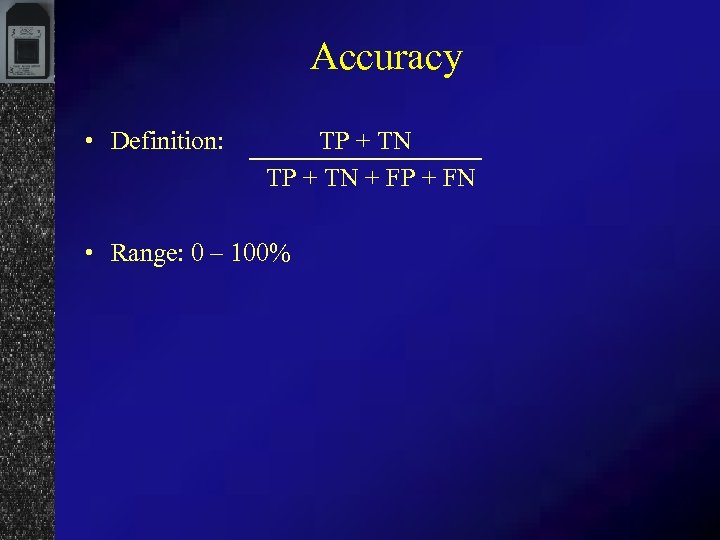

Accuracy

- Definition: $\frac{TP + TN}{TP + TN + FP + FN}$
- Range: 0 to 100%

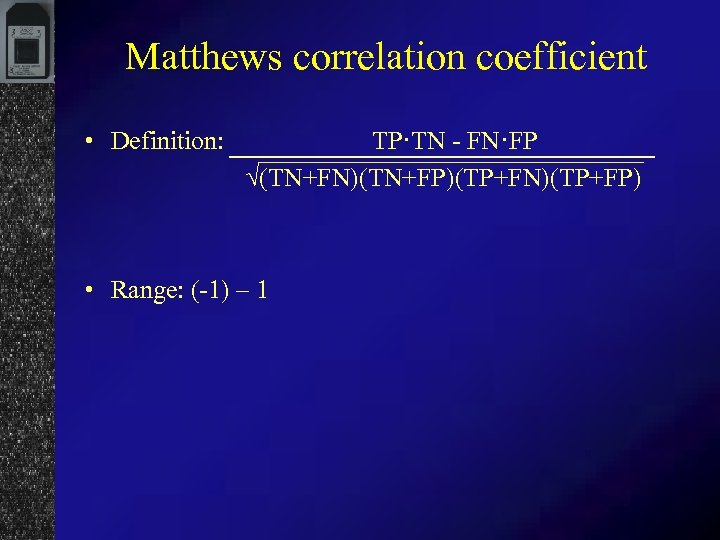

Matthews correlation coefficient

- Definition: $\frac{TP \cdot TN - FN \cdot FP}{\sqrt{(TN+FN)(TN+FP)(TP+FN)(TP+FP)}}$
- Range: -1 to 1
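A short sketch of accuracy, computed as (TP + TN) / (TP + TN + FP + FN), next to the Matthews correlation coefficient shows why the correlation coefficient is reported alongside accuracy. The function names are hypothetical, and returning 0 when the denominator is zero is a common convention assumed here, not something stated on the slides.

```python
import math


def accuracy(tp, tn, fp, fn):
    """Fraction of all predictions that are correct."""
    return (tp + tn) / (tp + tn + fp + fn)


def matthews_cc(tp, tn, fp, fn):
    """Matthews correlation coefficient; 0.0 if the denominator is zero (assumed convention)."""
    denom = math.sqrt((tn + fn) * (tn + fp) * (tp + fn) * (tp + fp))
    return (tp * tn - fn * fp) / denom if denom else 0.0


# A classifier that calls every one of the 29 outcome patients "remission",
# with relapse as the positive class: 11 relapses missed, 18 remissions correct.
always_remission = dict(tp=0, tn=18, fp=0, fn=11)
acc = accuracy(**always_remission)
cc = matthews_cc(**always_remission)
```

In this example the accuracy is 18/29 (about 62%) although the classifier has learned nothing, while the correlation coefficient is 0.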

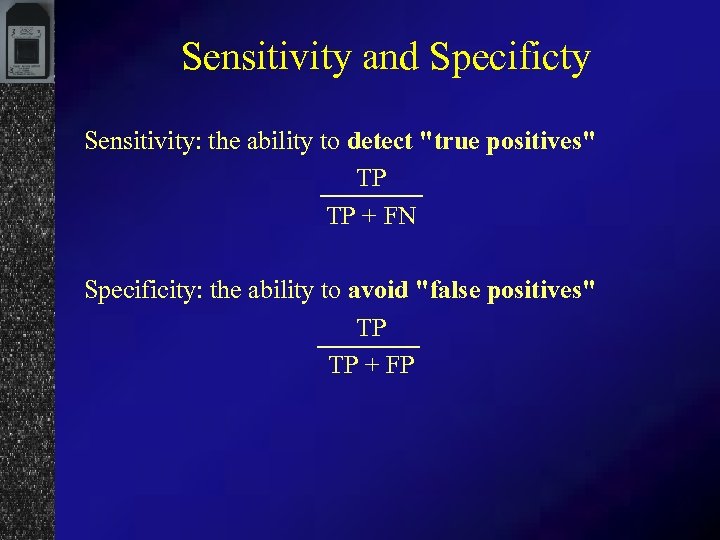

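Sensitivity and Specificity

- Sensitivity: the ability to detect "true positives": $\frac{TP}{TP + FN}$
- Specificity: the ability to avoid "false positives": $\frac{TN}{TN + FP}$ (the ratio $\frac{TP}{TP + FP}$ is the positive predictive value, also called precision)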
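These ratios are one-liners once the four counts are known. The sketch uses the standard definition of specificity, TN / (TN + FP), and also includes TP / (TP + FP), which is usually called precision or positive predictive value. The function names are hypothetical.

```python
def sensitivity(tp, fn):
    """Fraction of actual positives that are predicted positive."""
    return tp / (tp + fn)


def specificity(tn, fp):
    """Fraction of actual negatives that are predicted negative."""
    return tn / (tn + fp)


def precision(tp, fp):
    """Fraction of positive predictions that are correct (positive predictive value)."""
    return tp / (tp + fp)
```

The functions assume that the relevant denominator is non-zero, that is, that the data contain at least one actual positive, one actual negative, and one positive prediction.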

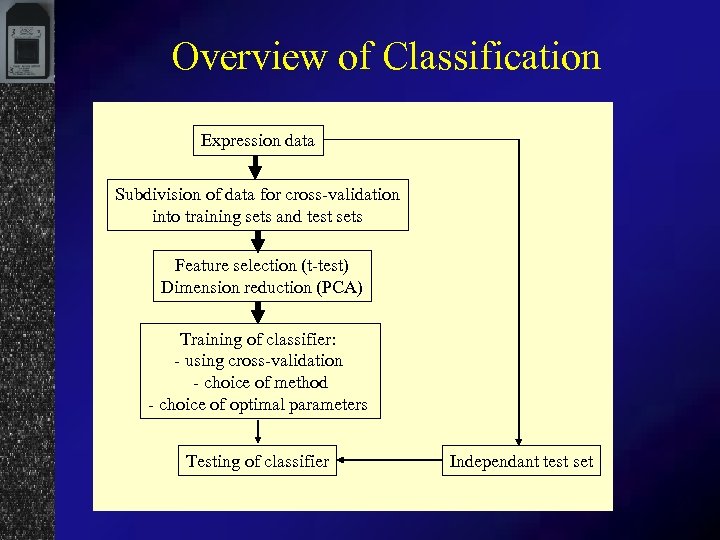

Overview of Classification

- Expression data
- Subdivision of data for cross-validation into training sets and test sets
- Feature selection (t-test), Dimension reduction (PCA)
- Training of classifier
  - using cross-validation
  - choice of method
  - choice of optimal parameters
- Testing of classifier
  - Independent test set

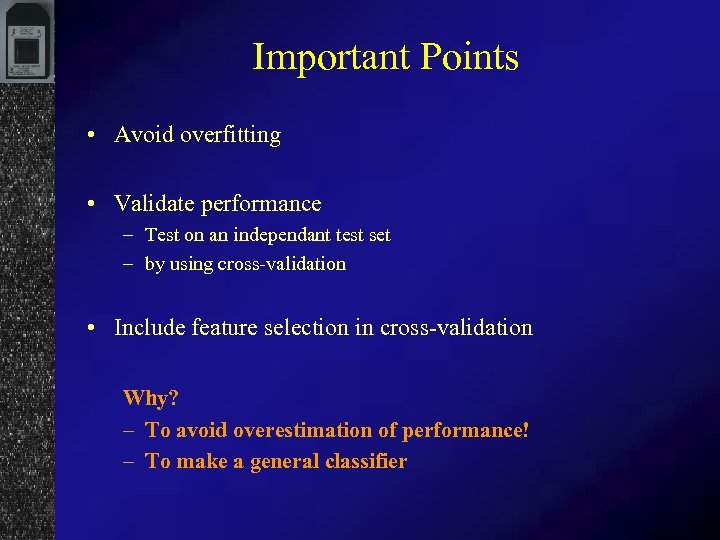

Important Points

- Avoid overfitting
- Validate performance
  - Test on an independent test set
  - by using cross-validation
- Include feature selection in cross-validation
  - Why?
    - To avoid overestimation of performance!
    - To make a general classifier
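Including feature selection in cross-validation means that genes are chosen again in every fold, using the training samples only, so the held-out sample never influences which genes are picked. The sketch below combines leave-one-out cross-validation with t-score ranking and a nearest centroid classifier. All names are hypothetical, Welch's t-statistic and Euclidean distance are assumptions, and each class needs at least three samples so that two remain after one is left out.

```python
import math
from statistics import mean, variance


def t_score(a, b):
    """Absolute Welch t-statistic between two lists of values (assumed variant)."""
    se = math.sqrt(variance(a) / len(a) + variance(b) / len(b))
    return abs(mean(a) - mean(b)) / se if se > 0 else 0.0


def top_genes(X, y, n):
    """Indices of the n genes with the largest t-score between the two classes."""
    first = sorted(set(y))[0]
    scores = []
    for g in range(len(X[0])):
        a = [x[g] for x, lab in zip(X, y) if lab == first]
        b = [x[g] for x, lab in zip(X, y) if lab != first]
        scores.append((t_score(a, b), g))
    return [g for _, g in sorted(scores, reverse=True)[:n]]


def nearest_centroid(X, y, x_new):
    """Predict the class whose centroid is closest to x_new."""
    centroids = {}
    for lab in set(y):
        rows = [x for x, l in zip(X, y) if l == lab]
        centroids[lab] = [mean(col) for col in zip(*rows)]
    return min(centroids, key=lambda lab: math.dist(centroids[lab], x_new))


def loocv_accuracy(X, y, n_genes):
    """Leave-one-out accuracy with gene selection repeated inside every fold."""
    correct = 0
    for i in range(len(X)):
        X_train, y_train = X[:i] + X[i + 1:], y[:i] + y[i + 1:]
        genes = top_genes(X_train, y_train, n_genes)  # training samples only
        reduced_train = [[x[g] for g in genes] for x in X_train]
        reduced_test = [X[i][g] for g in genes]
        if nearest_centroid(reduced_train, y_train, reduced_test) == y[i]:
            correct += 1
    return correct / len(X)
```

Selecting genes once on all samples and cross-validating afterwards would let the test samples leak into the selection step, which is the overestimation the slide warns about.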

Multiclassification

- Same prediction methods
  - Multiclass prediction often implemented
  - Often bad performance
- Solution
  - Division into small binary (2-class) problems
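The slide does not say how the multiclass problem is divided, so the sketch below shows two common decompositions as an assumption: one-vs-rest (each class against all others) and one-vs-one (each pair of classes). The function names are hypothetical.

```python
from itertools import combinations


def one_vs_rest(labels):
    """One binary labelling per class: 1 for that class, 0 for every other class."""
    return {c: [1 if y == c else 0 for y in labels] for c in sorted(set(labels))}


def one_vs_one(labels):
    """For every pair of classes, the indices of the samples in either class."""
    problems = {}
    for a, b in combinations(sorted(set(labels)), 2):
        problems[(a, b)] = [i for i, y in enumerate(labels) if y in (a, b)]
    return problems
```

Each binary problem can then be handled by any of the two-class methods, and the binary predictions are combined afterwards, for example by voting.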

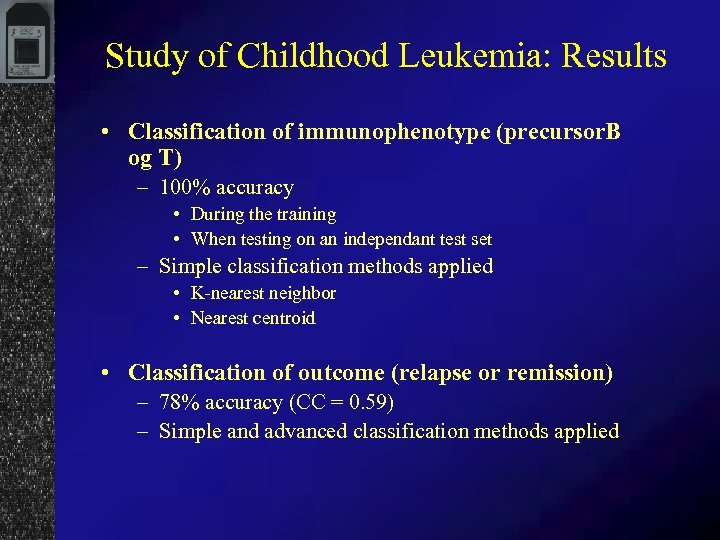

Study of Childhood Leukemia: Results

- Classification of immunophenotype (precursor B and T)
  - 100% accuracy
    - During the training
    - When testing on an independent test set
  - Simple classification methods applied
    - K-nearest neighbor
    - Nearest centroid
- Classification of outcome (relapse or remission)
  - 78% accuracy (CC = 0.59)
  - Simple and advanced classification methods applied

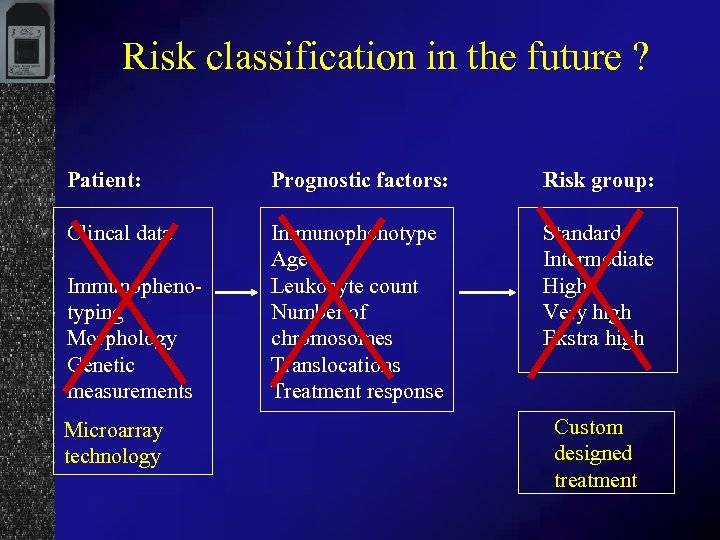

Risk classification in the future?

- Patient: Clinical data, Immunophenotyping, Morphology, Genetic measurements, Microarray technology
- Prognostic factors: Immunophenotype, Age, Leukocyte count, Number of chromosomes, Translocations, Treatment response
- Risk group: Standard, Intermediate, High, Very high, Extra high
- Custom designed treatment

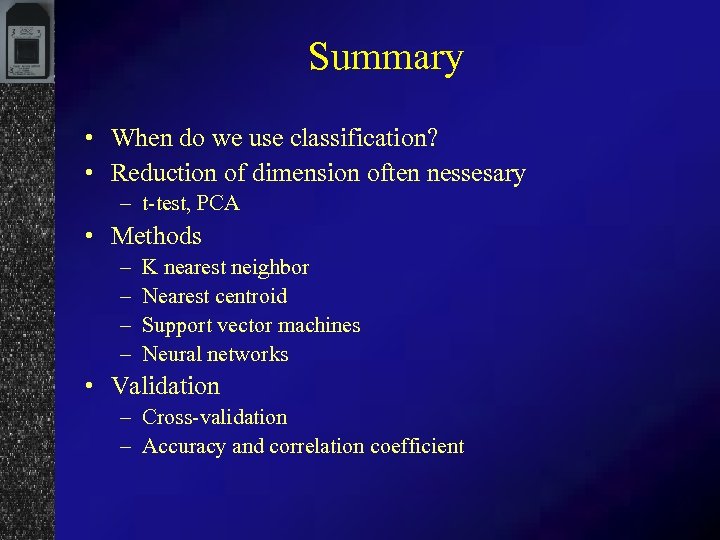

Summary

- When do we use classification?
- Reduction of dimension often necessary
  - t-test, PCA
- Methods
  - K nearest neighbor
  - Nearest centroid
  - Support vector machines
  - Neural networks
- Validation
  - Cross-validation
  - Accuracy and correlation coefficient

Coffee break

Next: Exercises in classification
