
- Number of slides: 45

CIS 842: Specification and Verification of Reactive Systems Introduction to Source-level Model Reduction Copyright 2001, Matt Dwyer, John Hatcliff, and Radu Iosif. The syllabus and all lectures for this course are copyrighted materials and may not be used in other course settings outside of Kansas State University in their current form or modified form without the express written permission of one of the copyright holders. During this course, students are prohibited from selling notes to or being paid for taking notes by any person or commercial firm without the express written permission of one of the copyright holders.


Motivation

- Model checking is expensive, so we need to find multiple ways to reduce the cost.
- Cost can be reduced by reducing the number of states explored (the size of the state space).
- The state space can be reduced by
  - "internal optimizations" such as partial-order or symmetry reductions;
  - "external optimizations" realized by transforming the model description (e.g., Promela, Java, etc.).
- In this lecture, we will study several external optimizations, including state-coarsening, slicing, data abstraction, and predicate abstraction.
- We will informally introduce the notion of a simulation, which will help us understand when our optimizations have been correctly applied.
- In later lectures, we will give a more formal presentation of many of the ideas presented here.


Outline

- Control-point Coarsening
- Slicing
- Data Abstraction
- Predicate Abstraction
- Model-restriction (bounded models)


Property-driven Reduction

[Diagram: Original System S, reduced to Reduced System S']

- The goal of applying model checking is to establish that some system S satisfies a given property P (S ⊨ P).
- It is often the case that one can generate a system S' with a smaller state space and then check P on S'.
- To make this work, we generally require that the reduction be sound, i.e.,
  - if S' ⊨ P then S ⊨ P

  ...this ensures that the transformation preserves verification results.


Property-driven Reduction

[Diagram: Original System S, reduced to Reduced System S']

- Ideally, we would like the reduction to be complete as well, i.e.,
  - if S' ⊭ P then S ⊭ P

  ...this ensures that the transformation preserves falsification results.
  - In complete reductions, if we have an error trace on S' we know there is a corresponding error trace on S (we have definitely found a bug).
  - If a reduction is not complete, then there may be an error trace on S' that does not represent a bug in S. Such error traces are often called "spurious" or "infeasible".


Property Observability

What sort of reductions can be performed?

[Diagram: P-equivalent states of Original System S are merged in Reduced System S']

- Remember that reductions of S are being performed with respect to a property P.
- Think of P as an observer of the state space of S.
- If P cannot tell the difference between two S states t1 and t2, then t1 and t2 can be lumped together in S'.
- In such a situation, we say that t1 and t2 are P-equivalent.


Property Observability

What sort of reductions can be performed?

[Diagram: P-equivalent states of Original System S are merged in Reduced System S']

- Of course, this discussion and the associated correctness issues mean that we have to consider the "power" of the observer (the power of the logic used for P).
- Unless we say otherwise, we will consider only LTL properties.
- We will try to note when there are differences between LTL and CTL.


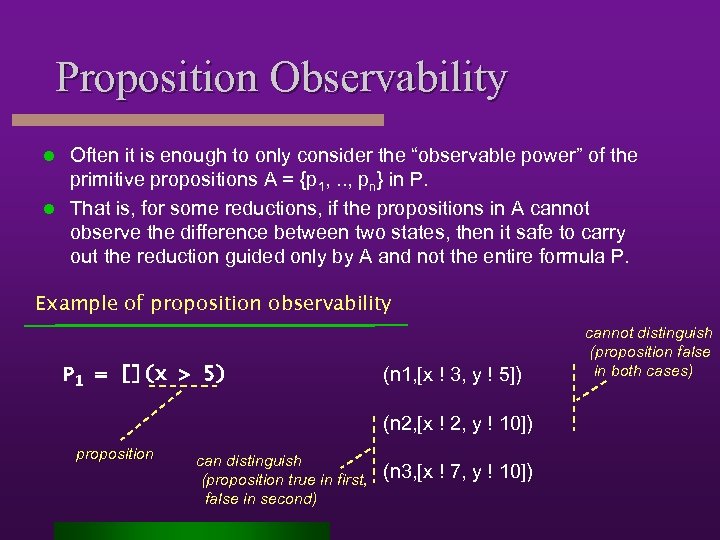

Proposition Observability

- Often it is enough to consider only the "observable power" of the primitive propositions A = {p1, ..., pn} in P.
- That is, for some reductions, if the propositions in A cannot observe the difference between two states, then it is safe to carry out the reduction guided only by A and not by the entire formula P.

Example of proposition observability: P1 = [](x > 5)

    (n1, [x ↦ 3, y ↦ 5])
    (n2, [x ↦ 2, y ↦ 10])
    (n3, [x ↦ 7, y ↦ 10])

- The proposition cannot distinguish the first two states (it is false in both cases).
- The proposition can distinguish the third state from either of the others (it is true for x = 7 and false for x = 3 and x = 2).


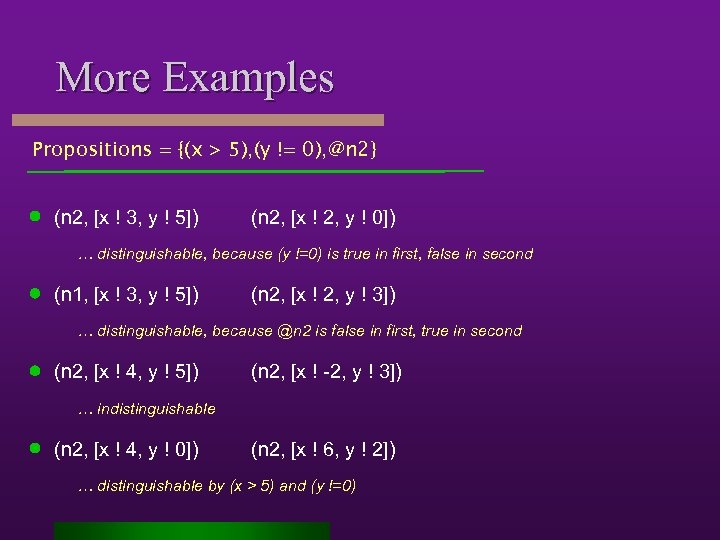

More Examples

Propositions = {(x > 5), (y != 0), @n2}

- (n2, [x ↦ 3, y ↦ 5]) and (n2, [x ↦ 2, y ↦ 0]): distinguishable, because (y != 0) is true in the first and false in the second.
- (n1, [x ↦ 3, y ↦ 5]) and (n2, [x ↦ 2, y ↦ 3]): distinguishable, because @n2 is false in the first and true in the second.
- (n2, [x ↦ 4, y ↦ 5]) and (n2, [x ↦ -2, y ↦ 3]): indistinguishable.
- (n2, [x ↦ 4, y ↦ 0]) and (n2, [x ↦ 6, y ↦ 2]): distinguishable by (x > 5) and (y != 0).

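The distinguishability checks in these examples can be mechanized. The following is a minimal sketch, assuming states are represented as `(control point, store)` pairs; the names `PROPS`, `observe`, and `distinguishing` are illustrative and do not come from the lecture.

```python
from typing import Callable, Dict, List, Tuple

State = Tuple[str, Dict[str, int]]  # (control point, store)

# The proposition set from the examples: (x > 5), (y != 0), @n2.
PROPS: Dict[str, Callable[[State], bool]] = {
    "x > 5": lambda s: s[1]["x"] > 5,
    "y != 0": lambda s: s[1]["y"] != 0,
    "@n2": lambda s: s[0] == "n2",
}

def observe(state: State) -> Tuple[bool, ...]:
    """Truth values of all propositions in a state, in PROPS order."""
    return tuple(p(state) for p in PROPS.values())

def distinguishing(s1: State, s2: State) -> List[str]:
    """Names of the propositions whose value differs between s1 and s2."""
    return [name for name, p in PROPS.items() if p(s1) != p(s2)]

if __name__ == "__main__":
    a = ("n2", {"x": 4, "y": 0})
    b = ("n2", {"x": 6, "y": 2})
    print(distinguishing(a, b))
```

Two states are indistinguishable with respect to the proposition set exactly when `distinguishing` returns an empty list.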

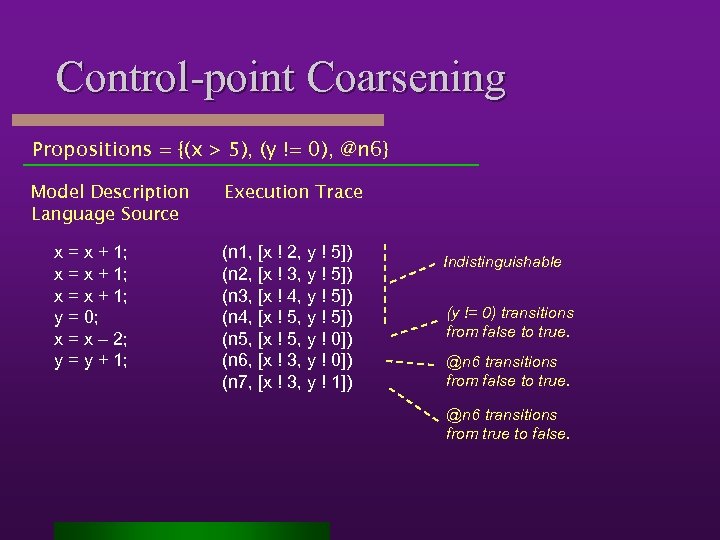

Control-point Coarsening

Propositions = {(x > 5), (y != 0), @n6}

Model description language source:

    x = x + 1;
    x = x + 1;
    x = x + 1;
    y = 0;
    x = x - 2;
    y = y + 1;

Execution trace:

    (n1, [x ↦ 2, y ↦ 5])
    (n2, [x ↦ 3, y ↦ 5])
    (n3, [x ↦ 4, y ↦ 5])
    (n4, [x ↦ 5, y ↦ 5])
    (n5, [x ↦ 5, y ↦ 0])
    (n6, [x ↦ 3, y ↦ 0])
    (n7, [x ↦ 3, y ↦ 1])

- The states at n1 through n4 are indistinguishable.
- From n6 to n7, (y != 0) transitions from false to true and @n6 transitions from true to false.


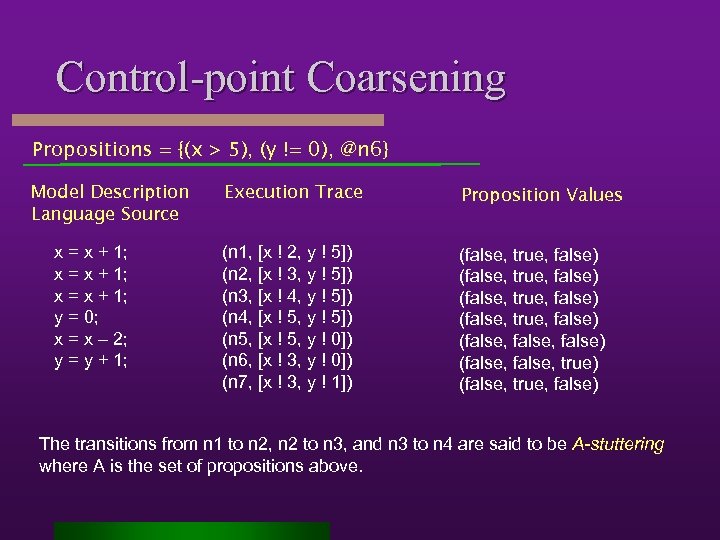

Control-point Coarsening

Propositions = {(x > 5), (y != 0), @n6}

Model description language source:

    x = x + 1;
    x = x + 1;
    x = x + 1;
    y = 0;
    x = x - 2;
    y = y + 1;

Execution trace and proposition values, in the order ((x > 5), (y != 0), @n6):

    (n1, [x ↦ 2, y ↦ 5])    (false, true, false)
    (n2, [x ↦ 3, y ↦ 5])    (false, true, false)
    (n3, [x ↦ 4, y ↦ 5])    (false, true, false)
    (n4, [x ↦ 5, y ↦ 5])    (false, true, false)
    (n5, [x ↦ 5, y ↦ 0])    (false, false, false)
    (n6, [x ↦ 3, y ↦ 0])    (false, false, true)
    (n7, [x ↦ 3, y ↦ 1])    (false, true, false)

The transitions from n1 to n2, n2 to n3, and n3 to n4 are said to be A-stuttering, where A is the set of propositions above.


Correctness Stuttering theorem


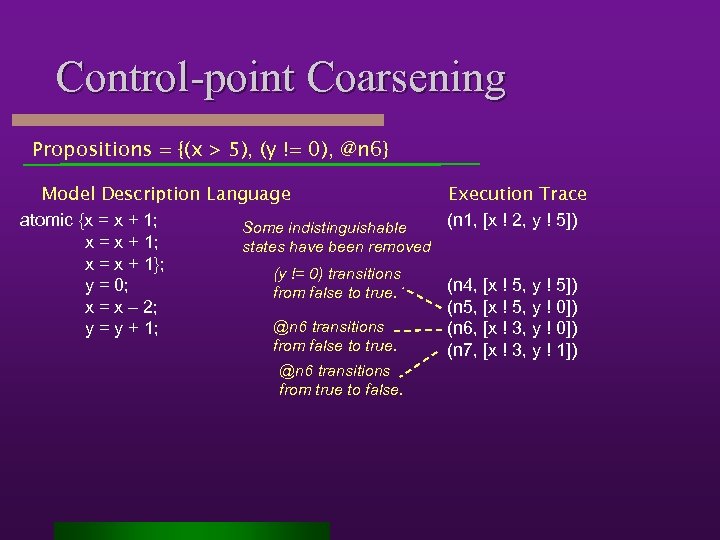

Control-point Coarsening

Propositions = {(x > 5), (y != 0), @n6}

Model description language source, with the three increments grouped into one atomic step:

    atomic {x = x + 1;
            x = x + 1;
            x = x + 1};
    y = 0;
    x = x - 2;
    y = y + 1;

Execution trace:

    (n1, [x ↦ 2, y ↦ 5])
    (n4, [x ↦ 5, y ↦ 5])
    (n5, [x ↦ 5, y ↦ 0])
    (n6, [x ↦ 3, y ↦ 0])
    (n7, [x ↦ 3, y ↦ 1])

- Some indistinguishable states (n2 and n3) have been removed.
- From n5 to n6, @n6 transitions from false to true.
- From n6 to n7, (y != 0) transitions from false to true and @n6 transitions from true to false.

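As a rough illustration of why the coarsened trace is acceptable, the sketch below finds the A-stuttering steps of the original trace and checks that the original and coarsened traces yield the same sequence of proposition values once repeated observations are collapsed. The helper names (`observe`, `stuttering_steps`, `destutter`) are assumptions made for this example, and comparing one pair of finite traces is only an illustration, not the stuttering theorem itself.

```python
from itertools import groupby

def observe(state):
    """Values of ((x > 5), (y != 0), @n6) in a (label, store) state."""
    label, store = state
    return (store["x"] > 5, store["y"] != 0, label == "n6")

ORIGINAL = [
    ("n1", {"x": 2, "y": 5}), ("n2", {"x": 3, "y": 5}),
    ("n3", {"x": 4, "y": 5}), ("n4", {"x": 5, "y": 5}),
    ("n5", {"x": 5, "y": 0}), ("n6", {"x": 3, "y": 0}),
    ("n7", {"x": 3, "y": 1}),
]
# The atomic block hides the intermediate control points n2 and n3.
COARSENED = [s for s in ORIGINAL if s[0] not in ("n2", "n3")]

def stuttering_steps(trace):
    """Consecutive label pairs whose observation does not change."""
    return [(a[0], b[0]) for a, b in zip(trace, trace[1:])
            if observe(a) == observe(b)]

def destutter(trace):
    """Observation sequence with consecutive repeats collapsed."""
    return [obs for obs, _ in groupby(observe(s) for s in trace)]

if __name__ == "__main__":
    print(stuttering_steps(ORIGINAL))
    print(destutter(ORIGINAL) == destutter(COARSENED))
```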

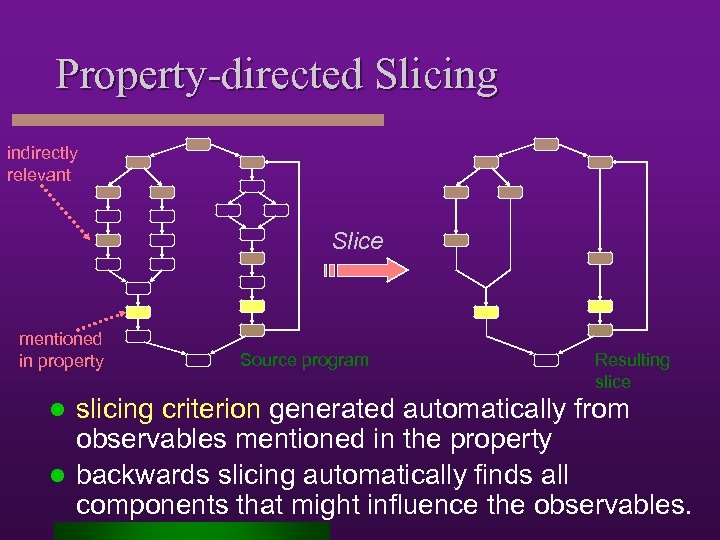

Property-directed Slicing

[Diagram: a source program and the resulting slice; the slice contains the statements mentioned in the property together with the indirectly relevant statements]

- The slicing criterion is generated automatically from the observables mentioned in the property.
- Backwards slicing automatically finds all components that might influence the observables.


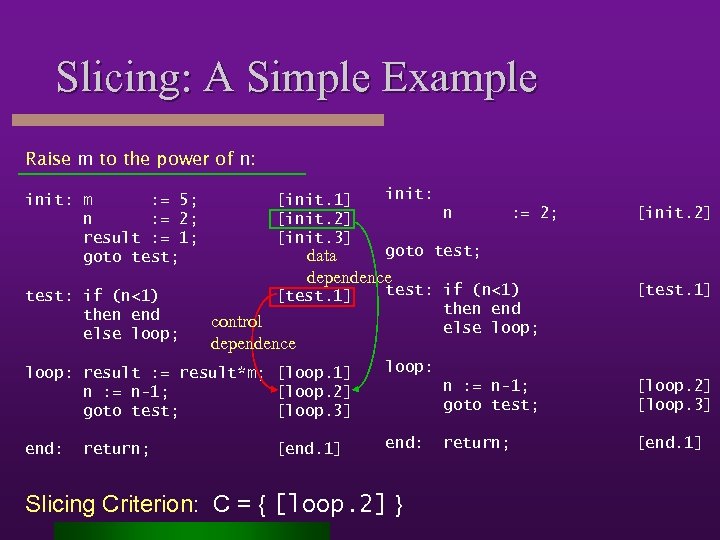

Slicing: A Simple Example

Raise m to the power of n. Source program:

    init: m := 5;                         [init.1]
          n := 2;                         [init.2]
          result := 1;                    [init.3]
          goto test;
    test: if (n<1) then end else loop;    [test.1]
    loop: result := result*m;             [loop.1]
          n := n-1;                       [loop.2]
          goto test;                      [loop.3]
    end:  return;                         [end.1]

Slicing criterion: C = { [loop.2] }

Resulting slice (the figure marks the data dependences and control dependences between these statements):

    init: n := 2;                         [init.2]
          goto test;
    test: if (n<1) then end else loop;    [test.1]
    loop: n := n-1;                       [loop.2]
          goto test;                      [loop.3]
    end:  return;                         [end.1]


Computing Slices: The Program Dependence Graph Approach

[Figure: the source program, its control-flow graph (CFG), and its program dependence graph (PDG); both graphs have the nodes [init.1], [init.2], [init.3], [test.1], [loop.1], [loop.2], [loop.3], [end.1]]

Source program:

    init: m := 5;                         [init.1]
          n := 2;                         [init.2]
          result := 1;                    [init.3]
          goto test;
    test: if (n<1) then end else loop;    [test.1]
    loop: result := result*m;             [loop.1]
          n := n-1;                       [loop.2]
          goto test;                      [loop.3]
    end:  return;                         [end.1]


Computing Slices: The Program Dependence Graph Approach

[Figure: the same source program, CFG, and PDG as on the previous slide]

Slice set with respect to criterion C: {m | m →* n where n ∈ C}, that is, every node m from which some criterion node n can be reached along the edges of the PDG.

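The slice set can be computed by backward reachability over the dependence graph. The sketch below assumes a hand-written PDG for the power program: the edge list is one reading of the program's data and control dependences, not a transcription of the figure, and `backward_slice` is a hypothetical helper name.

```python
from collections import deque

# Assumed dependence edges: each node maps to the nodes that depend on it.
PDG = {
    "init.1": ["loop.1"],                      # m is used by result*m
    "init.2": ["test.1", "loop.2"],            # n is used by the test and by n-1
    "init.3": ["loop.1"],                      # result is used by result*m
    "test.1": ["loop.1", "loop.2", "loop.3"],  # the test controls the loop body
    "loop.1": ["loop.1"],                      # loop-carried use of result
    "loop.2": ["test.1", "loop.2"],            # loop-carried uses of n
    "loop.3": [],
    "end.1": [],
}

def backward_slice(pdg, criterion):
    """All nodes m with m ->* n for some n in the criterion."""
    preds = {node: set() for node in pdg}
    for src, dsts in pdg.items():
        for dst in dsts:
            preds[dst].add(src)
    seen = set(criterion)
    work = deque(criterion)
    while work:
        node = work.popleft()
        for p in preds[node]:
            if p not in seen:
                seen.add(p)
                work.append(p)
    return seen

if __name__ == "__main__":
    print(sorted(backward_slice(PDG, {"loop.2"})))
```

Under these assumed edges the slice for C = { [loop.2] } contains only the statements that compute or test n. The residual program shown in the simple example additionally keeps the jumps and the return so that the slice stays executable.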

Traditional Slicing Correctness: Notion of Projection

Slicing criterion: C = { [loop.2] }

Source program trace:

    ([init.1], [m=0, n=0, result=0])
    ([init.2], [m=5, n=0, result=0])
    ([init.3], [m=5, n=2, result=0])
    ([init.4], [m=5, n=2, result=1])
    ([test.1], [m=5, n=2, result=1])
    ([loop.1], [m=5, n=2, result=1])
    ([loop.2], [m=5, n=2, result=5])
    ([loop.3], [m=5, n=1, result=5])
    ([test.1], [m=5, n=1, result=5])
    ([loop.1], [m=5, n=1, result=5])
    ([loop.2], [m=5, n=1, result=25])
    ([loop.3], [m=5, n=0, result=25])
    ([test.1], [m=5, n=0, result=25])
    ([end.1],  [m=5, n=0, result=25])
    (halt,     [m=5, n=0, result=25])

Residual program (slice) trace:

    ([init.2], [n=0])
    ([init.4], [n=2])
    ([test.1], [n=2])
    ([loop.2], [n=2])
    ([loop.3], [n=1])
    ([test.1], [n=1])
    ([loop.2], [n=1])
    ([loop.3], [n=0])
    ([test.1], [n=0])
    ([end.1],  [n=0])
    (halt,     [n=0])

Project out the states for nodes listed in the criterion and, at those states, the values of the variables referenced at those nodes. Here both traces project to ([loop.2], [n=2]), ([loop.2], [n=1]).

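The projection check can be sketched by executing the program twice, once with all nodes and once with only the slice's nodes, and comparing the projected traces. This is an illustrative sketch rather than the lecture's definition: the `run` and `project` helpers are hypothetical, the label `init.4` for the first `goto test` follows the trace on this slide, and the final `halt` state is omitted.

```python
ALL_NODES = {"init.1", "init.2", "init.3", "init.4", "test.1",
             "loop.1", "loop.2", "loop.3", "end.1"}
SLICE_NODES = {"init.2", "init.4", "test.1", "loop.2", "loop.3", "end.1"}

def run(keep):
    """Execute the power program restricted to the nodes in `keep`.

    Records (node, store) before each executed node. Assumes `keep`
    contains loop.2 whenever it contains init.2, otherwise the loop
    would not terminate.
    """
    store = {"m": 0, "n": 0, "result": 0}
    trace = []

    def visit(node, update=None):
        if node in keep:
            trace.append((node, dict(store)))
            if update is not None:
                store.update(update())

    visit("init.1", lambda: {"m": 5})
    visit("init.2", lambda: {"n": 2})
    visit("init.3", lambda: {"result": 1})
    visit("init.4")                      # goto test
    while True:
        visit("test.1")
        if store["n"] < 1:
            break
        visit("loop.1", lambda: {"result": store["result"] * store["m"]})
        visit("loop.2", lambda: {"n": store["n"] - 1})
        visit("loop.3")                  # goto test
    visit("end.1")
    return trace

def project(trace, criterion):
    """Keep criterion nodes only, restricted to the variables they reference."""
    return [(node, {v: store[v] for v in criterion[node]})
            for node, store in trace if node in criterion]

CRITERION = {"loop.2": ("n",)}           # [loop.2] references only n

if __name__ == "__main__":
    print(project(run(ALL_NODES), CRITERION) == project(run(SLICE_NODES), CRITERION))
```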

Connecting to Stuttering


Back to Property-directed Slicing

- We want to form a slicing criterion from a property P (with atomic propositions A) that guarantees that all A-non-stuttering transitions are preserved.


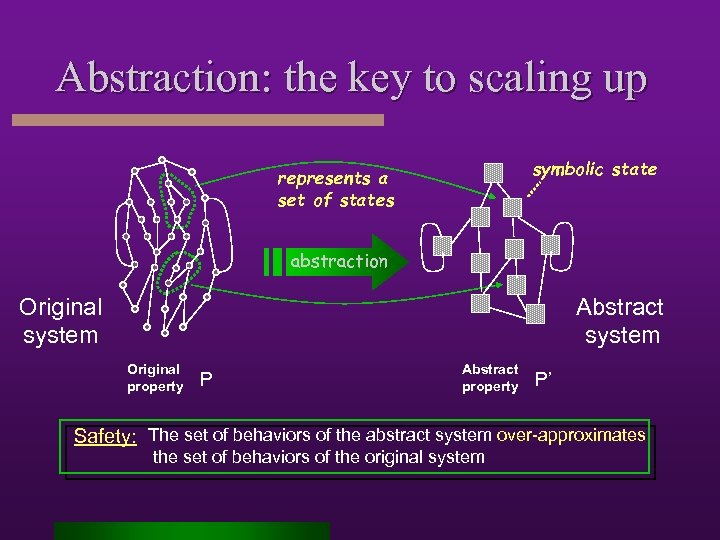

Abstraction: the key to scaling up

[Diagram: abstraction maps the original system to an abstract system and the original property P to an abstract property P'; a symbolic state represents a set of states]

Safety: The set of behaviors of the abstract system over-approximates the set of behaviors of the original system.


Data Abstraction

Example of proposition observability: P1 = [](x > 5)

    (n2, [x ↦ 3, y ↦ 5])
    (n2, [x ↦ 2, y ↦ 10])
    (n2, [x ↦ 7, y ↦ 10])

The proposition can distinguish the third state from the first two (it is true for x = 7 and false for x = 3 and x = 2).

Collapse states at the same control point with indistinguishable stores: replace integer values with symbolic values whose "meaning" is related to the proposition.

- For x values, use {LT5, EQ5, GT5}.
- For y values, use {T}: since the proposition doesn't observe y, we can use a single value that stands for "any value".

    (n2, [x ↦ LT5, y ↦ T])
    (n2, [x ↦ GT5, y ↦ T])

One abstract state represents more than one concrete state.


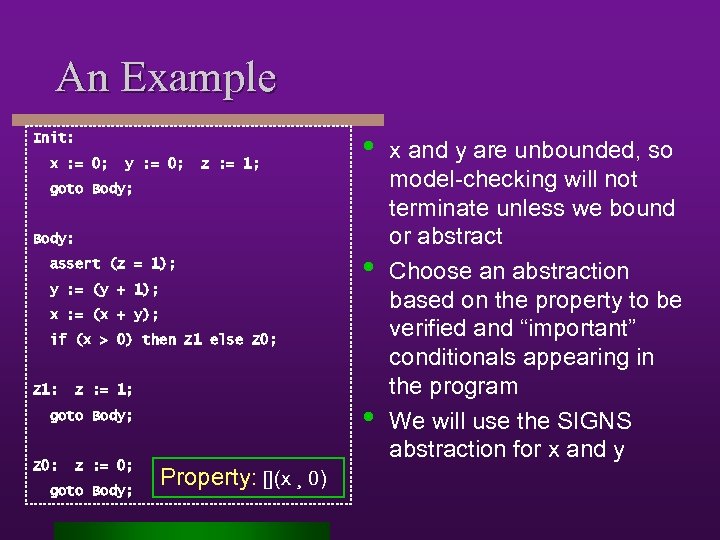

An Example

    Init: x := 0; y := 0; z := 1;
          goto Body;

    Body: assert (z = 1);
          y := (y + 1);
          x := (x + y);
          if (x > 0) then Z1 else Z0;

    Z1:   z := 1; goto Body;
    Z0:   z := 0; goto Body;

Property: [](x ≥ 0)

- x and y are unbounded, so model checking will not terminate unless we bound or abstract.
- Choose an abstraction based on the property to be verified and the "important" conditionals appearing in the program.
- We will use the SIGNS abstraction for x and y.


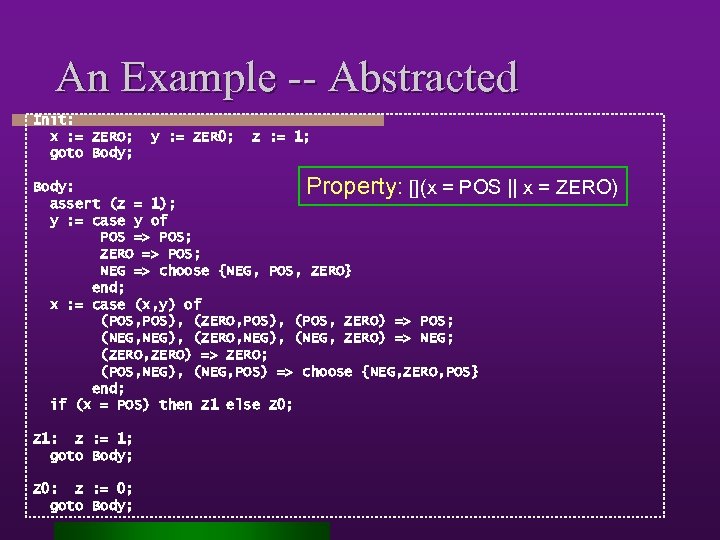

An Example -- Abstracted

    Init: x := ZERO; y := ZERO; z := 1;
          goto Body;

    Body: assert (z = 1);
          y := case y of
                 POS  => POS;
                 ZERO => POS;
                 NEG  => choose {NEG, POS, ZERO}
               end;
          x := case (x, y) of
                 (POS, POS), (ZERO, POS), (POS, ZERO) => POS;
                 (NEG, NEG), (ZERO, NEG), (NEG, ZERO) => NEG;
                 (ZERO, ZERO)                         => ZERO;
                 (POS, NEG), (NEG, POS)               => choose {NEG, ZERO, POS}
               end;
          if (x = POS) then Z1 else Z0;

    Z1:   z := 1; goto Body;
    Z0:   z := 0; goto Body;

Property: [](x = POS || x = ZERO)

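Because the abstracted program has finitely many states, its reachable states can be enumerated exhaustively. The sketch below is a simplified stand-in for a model checker, under the assumption that states are observed only at the entry of `Body` (one step is a full pass through `Body` plus `Z1` or `Z0`); the function names are illustrative.

```python
NEG, ZERO, POS = "NEG", "ZERO", "POS"

def abs_inc(y):
    """Abstract y + 1, following the case expression for y."""
    return {POS: {POS}, ZERO: {POS}, NEG: {NEG, POS, ZERO}}[y]

def abs_add(x, y):
    """Abstract x + y, following the case expression for x."""
    if (x, y) in {(POS, POS), (ZERO, POS), (POS, ZERO)}:
        return {POS}
    if (x, y) in {(NEG, NEG), (ZERO, NEG), (NEG, ZERO)}:
        return {NEG}
    if (x, y) == (ZERO, ZERO):
        return {ZERO}
    return {NEG, ZERO, POS}              # (POS, NEG) and (NEG, POS)

def step(state):
    """Successors of (x, y, z) after one pass through Body and Z1/Z0."""
    x, y, _z = state
    succs = set()
    for y2 in abs_inc(y):
        for x2 in abs_add(x, y2):
            succs.add((x2, y2, 1 if x2 == POS else 0))
    return succs

def reachable(init):
    """All abstract states reachable from init (simple worklist search)."""
    seen, work = {init}, [init]
    while work:
        for nxt in step(work.pop()):
            if nxt not in seen:
                seen.add(nxt)
                work.append(nxt)
    return seen

if __name__ == "__main__":
    states = reachable((ZERO, ZERO, 1))
    print(states)
    print(all(x in (POS, ZERO) for x, _, _ in states))   # abstract property
    print(all(z == 1 for _, _, z in states))             # assert (z = 1)
```

The search terminates after a handful of states even though the concrete x and y are unbounded, which is the point of the abstraction.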

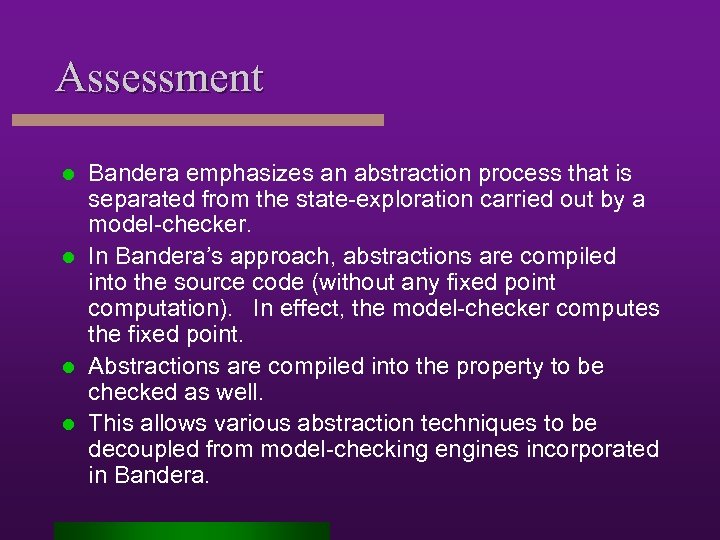

Assessment

- Bandera emphasizes an abstraction process that is separated from the state exploration carried out by a model checker.
- In Bandera's approach, abstractions are compiled into the source code (without any fixed-point computation). In effect, the model checker computes the fixed point.
- Abstractions are compiled into the property to be checked as well.
- This allows various abstraction techniques to be decoupled from the model-checking engines incorporated in Bandera.


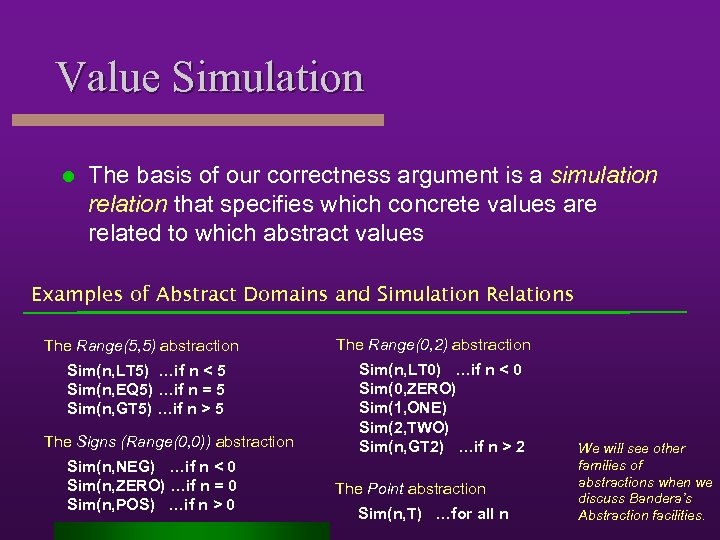

Value Simulation

- The basis of our correctness argument is a simulation relation that specifies which concrete values are related to which abstract values.

Examples of Abstract Domains and Simulation Relations

- The Range(5, 5) abstraction
  - Sim(n, LT5) ...if n < 5
  - Sim(n, EQ5) ...if n = 5
  - Sim(n, GT5) ...if n > 5
- The Signs (Range(0, 0)) abstraction
  - Sim(n, NEG) ...if n < 0
  - Sim(n, ZERO) ...if n = 0
  - Sim(n, POS) ...if n > 0
- The Range(0, 2) abstraction
  - Sim(n, LT0) ...if n < 0
  - Sim(0, ZERO)
  - Sim(1, ONE)
  - Sim(2, TWO)
  - Sim(n, GT2) ...if n > 2
- The Point abstraction
  - Sim(n, T) ...for all n

We will see other families of abstractions when we discuss Bandera's abstraction facilities.

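In each of these four domains every integer is related to exactly one abstract token, so the simulation relation can be written as an abstraction function. A minimal sketch, with function names chosen for this example only:

```python
def range55(n):
    """Range(5, 5): LT5, EQ5, GT5."""
    return "LT5" if n < 5 else "EQ5" if n == 5 else "GT5"

def signs(n):
    """Signs, i.e. Range(0, 0): NEG, ZERO, POS."""
    return "NEG" if n < 0 else "ZERO" if n == 0 else "POS"

def range02(n):
    """Range(0, 2): LT0, ZERO, ONE, TWO, GT2."""
    if n < 0:
        return "LT0"
    if n > 2:
        return "GT2"
    return ("ZERO", "ONE", "TWO")[n]

def point(n):
    """Point: every value maps to the single token T."""
    return "T"

def sim(abstraction, n, token):
    """Sim(n, token) for a relation given by an abstraction function."""
    return abstraction(n) == token

if __name__ == "__main__":
    print([range55(n) for n in (3, 5, 7)])
    print([range02(n) for n in (-1, 0, 1, 2, 3)])
```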

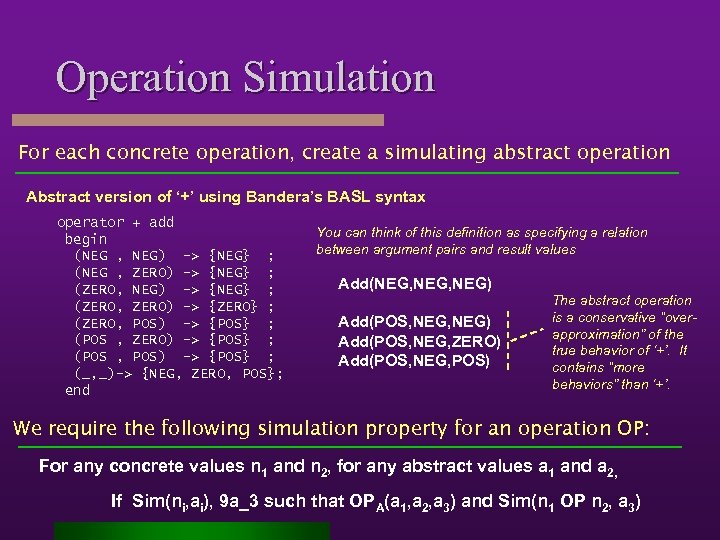

Operation Simulation

For each concrete operation, create a simulating abstract operation.

Abstract version of '+' using Bandera's BASL syntax:

    operator + add
    begin
      (NEG , NEG)  -> {NEG} ;
      (NEG , ZERO) -> {NEG} ;
      (ZERO, NEG)  -> {NEG} ;
      (ZERO, ZERO) -> {ZERO} ;
      (ZERO, POS)  -> {POS} ;
      (POS , ZERO) -> {POS} ;
      (POS , POS)  -> {POS} ;
      (_, _)       -> {NEG, ZERO, POS};
    end

You can think of this definition as specifying a relation between argument pairs and result values, for example Add(NEG, NEG, NEG), Add(POS, NEG, ZERO), and Add(POS, NEG, POS).

The abstract operation is a conservative "over-approximation" of the true behavior of '+'. It contains "more behaviors" than '+'.

We require the following simulation property for an operation OP with abstract version OPA: for any concrete values n1 and n2 and any abstract values a1 and a2, if Sim(n1, a1) and Sim(n2, a2), then ∃ a3 such that OPA(a1, a2, a3) and Sim(n1 OP n2, a3).


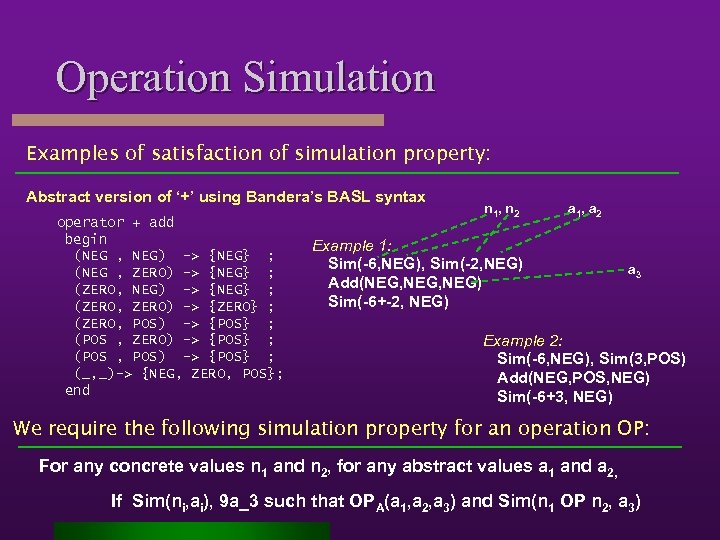

Operation Simulation

Examples of satisfaction of the simulation property, for the abstract version of '+' (operator + add) over the Signs domain:

- Example 1: n1 = -6, n2 = -2 and a1 = NEG, a2 = NEG. We have Sim(-6, NEG) and Sim(-2, NEG), and a3 = NEG works: Add(NEG, NEG, NEG) and Sim(-6 + -2, NEG).
- Example 2: n1 = -6, n2 = 3 and a1 = NEG, a2 = POS. We have Sim(-6, NEG) and Sim(3, POS), and a3 = NEG works: Add(NEG, POS, NEG) and Sim(-6 + 3, NEG).

Recall the required simulation property for an operation OP with abstract version OPA: for any concrete values n1 and n2 and any abstract values a1 and a2, if Sim(n1, a1) and Sim(n2, a2), then ∃ a3 such that OPA(a1, a2, a3) and Sim(n1 OP n2, a3).

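The simulation property can be spot-checked by brute force over a finite sample of integers. The sketch below encodes the abstract '+' table as a Python dictionary; `simulates` is a hypothetical helper, and passing the check on a sample is evidence rather than a proof for all integers.

```python
from itertools import product

NEG, ZERO, POS = "NEG", "ZERO", "POS"

def signs(n):
    """The Signs value simulation, written as a function."""
    return NEG if n < 0 else ZERO if n == 0 else POS

ADD_TABLE = {
    (NEG, NEG): {NEG}, (NEG, ZERO): {NEG}, (ZERO, NEG): {NEG},
    (ZERO, ZERO): {ZERO},
    (ZERO, POS): {POS}, (POS, ZERO): {POS}, (POS, POS): {POS},
}

def abs_add(a1, a2):
    """Abstract '+'; the default plays the role of the (_, _) case."""
    return ADD_TABLE.get((a1, a2), {NEG, ZERO, POS})

def simulates(concrete_op, abstract_op, sample):
    """For all sampled n1, n2: some a3 in abstract_op(a1, a2) has Sim(n1 OP n2, a3)."""
    return all(
        signs(concrete_op(n1, n2)) in abstract_op(signs(n1), signs(n2))
        for n1, n2 in product(sample, repeat=2)
    )

if __name__ == "__main__":
    print(simulates(lambda a, b: a + b, abs_add, range(-10, 11)))
```

Replacing the `(_, _)` default with a single token such as `{POS}` makes the check fail, which shows why the mixed-sign cases need the full set.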

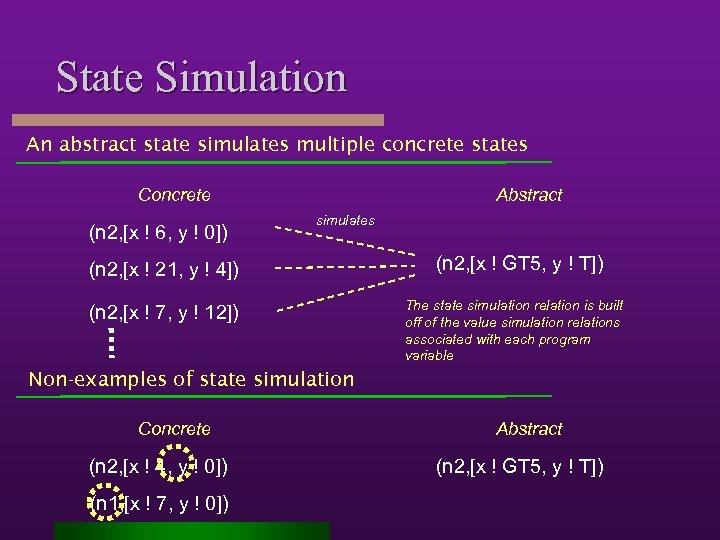

State Simulation

An abstract state simulates multiple concrete states. For example, the abstract state (n2, [x ↦ GT5, y ↦ T]) simulates each of the concrete states

    (n2, [x ↦ 6, y ↦ 0])
    (n2, [x ↦ 21, y ↦ 4])
    (n2, [x ↦ 7, y ↦ 12])

The state simulation relation is built from the value simulation relations associated with each program variable.

Non-examples of state simulation: the abstract state (n2, [x ↦ GT5, y ↦ T]) does not simulate either of the concrete states

    (n2, [x ↦ 4, y ↦ 0])
    (n1, [x ↦ 7, y ↦ 0])

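A small sketch of how the state relation is assembled from the per-variable value relations, assuming x is bound to Range(5, 5) and y to Point as in the example; `ABSTRACTIONS` and `state_sim` are names invented for this illustration.

```python
def range55(n):
    """Range(5, 5) value abstraction."""
    return "LT5" if n < 5 else "EQ5" if n == 5 else "GT5"

def point(n):
    """Point value abstraction."""
    return "T"

# Assumed binding of abstractions to variables for this example.
ABSTRACTIONS = {"x": range55, "y": point}

def state_sim(concrete, abstract):
    """Same control point, and every variable's value is simulated."""
    (c_node, c_store), (a_node, a_store) = concrete, abstract
    return c_node == a_node and all(
        ABSTRACTIONS[var](c_store[var]) == token
        for var, token in a_store.items()
    )

if __name__ == "__main__":
    abstract = ("n2", {"x": "GT5", "y": "T"})
    print(state_sim(("n2", {"x": 6, "y": 0}), abstract))
    print(state_sim(("n1", {"x": 7, "y": 0}), abstract))
```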

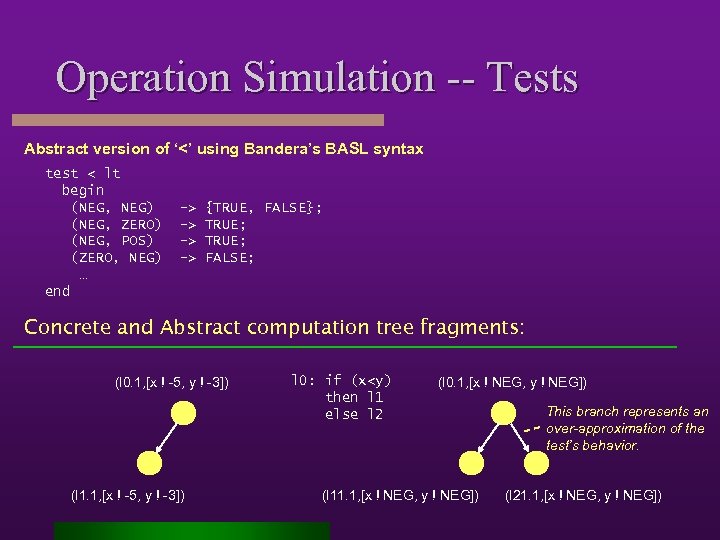

Operation Simulation -- Tests

Abstract version of '<' using Bandera's BASL syntax:

    test < lt
    begin
      (NEG, NEG)  -> {TRUE, FALSE};
      (NEG, ZERO) -> TRUE;
      (NEG, POS)  -> TRUE;
      (ZERO, NEG) -> FALSE;
      …
    end

Concrete and abstract computation tree fragments:

    (l0.1, [x ↦ -5, y ↦ -3])
    (l1.1, [x ↦ -5, y ↦ -3])
    l0: if (x

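One way to see where the entries of such a test table come from is to enumerate concrete pairs and record every outcome of '<' for each pair of sign tokens. This is an illustrative derivation over a finite sample, not Bandera's procedure; `abstract_lt_table` is a hypothetical name.

```python
from itertools import product

def signs(n):
    """The Signs value abstraction."""
    return "NEG" if n < 0 else "ZERO" if n == 0 else "POS"

def abstract_lt_table(sample):
    """Map each (a1, a2) token pair to the set of outcomes of n1 < n2."""
    table = {}
    for n1, n2 in product(sample, repeat=2):
        table.setdefault((signs(n1), signs(n2)), set()).add(n1 < n2)
    return table

if __name__ == "__main__":
    for pair, outcomes in sorted(abstract_lt_table(range(-5, 6)).items()):
        print(pair, sorted(outcomes))
```

Token pairs that map to both outcomes, such as (NEG, NEG), are the ones where the abstract test must branch both ways.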

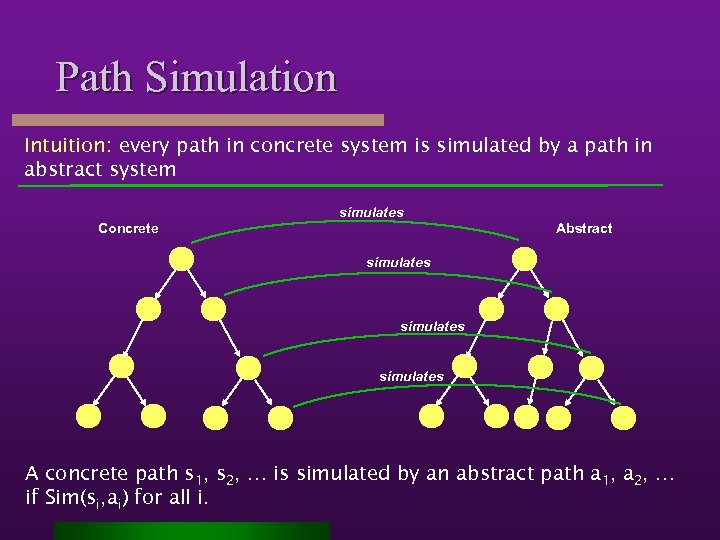

Path Simulation

Intuition: every path in the concrete system is simulated by a path in the abstract system.

[Figure: a concrete path and an abstract path, with each concrete state simulated by the corresponding abstract state]

A concrete path s1, s2, … is simulated by an abstract path a1, a2, … if Sim(si, ai) for all i.


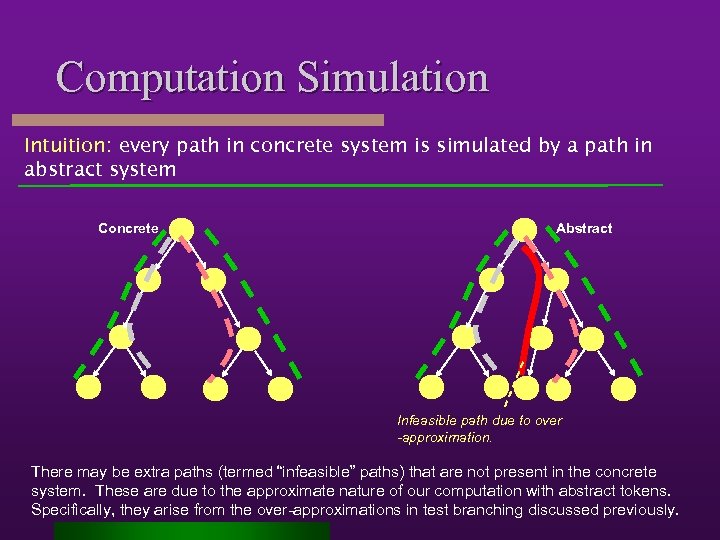

Computation Simulation

Intuition: every path in the concrete system is simulated by a path in the abstract system.

[Figure: concrete and abstract computation trees; the abstract tree contains an infeasible path due to over-approximation]

There may be extra paths (termed "infeasible" paths) in the abstract system that are not present in the concrete system. These are due to the approximate nature of our computation with abstract tokens. Specifically, they arise from the over-approximations in test branching discussed previously.


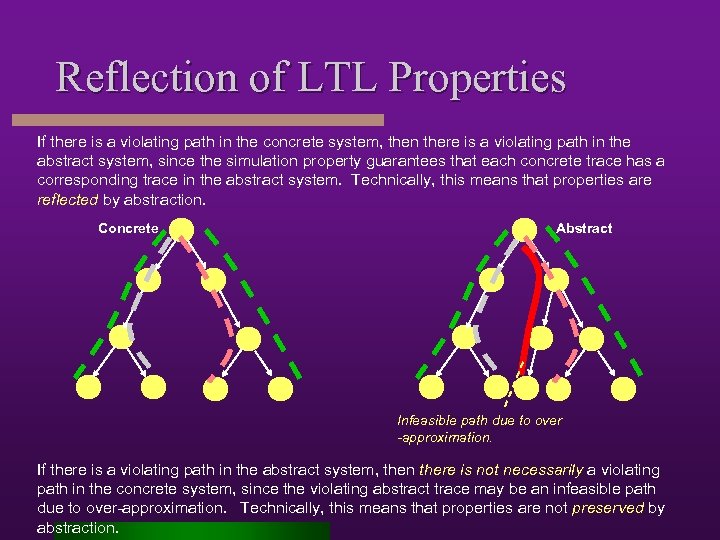

Reflection of LTL Properties

[Figure: concrete and abstract computation trees; the abstract tree contains an infeasible path due to over-approximation]

If there is a violating path in the concrete system, then there is a violating path in the abstract system, since the simulation property guarantees that each concrete trace has a corresponding trace in the abstract system. Technically, this means that properties are reflected by abstraction.

If there is a violating path in the abstract system, then there is not necessarily a violating path in the concrete system, since the violating abstract trace may be an infeasible path due to over-approximation. Technically, this means that properties are not preserved by abstraction.


Summary of Data Abstraction

The methodology that we will follow includes the following steps:

- Define an abstract domain (a set of symbolic values).
- Define a relation that relates concrete values to symbolic values.
- Define an abstract version of each concrete operation (automatic in Bandera).
- Bind an appropriate abstraction to a variable (automated support in Bandera).
- Transform the source code to replace concrete values and operations with abstract values and operations (automatic in Bandera).
- Transform the property to replace atomic conditions on concrete values with atomic conditions on abstract values (automatic in Bandera).

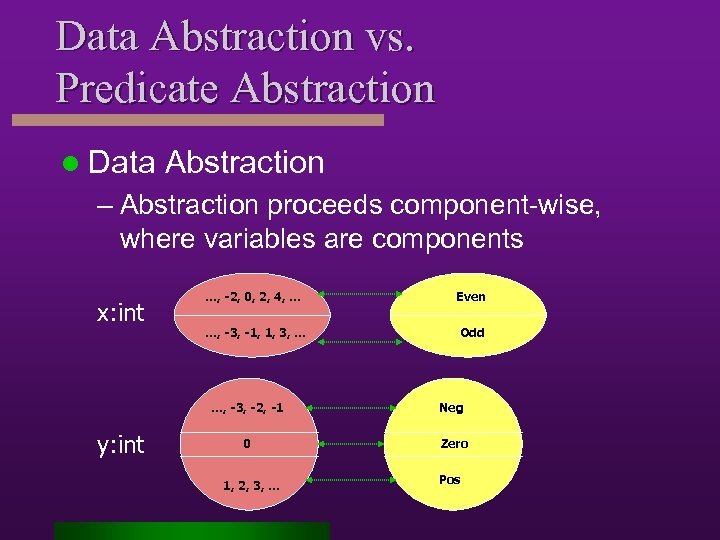

Data Abstraction vs. Predicate Abstraction

- Data Abstraction
  - Abstraction proceeds component-wise, where variables are components

x: int

    …, -2, 0, 2, 4, …    ->  Even
    …, -3, -1, 1, 3, …   ->  Odd

y: int

    …, -3, -2, -1        ->  Neg
    0                    ->  Zero
    1, 2, 3, …           ->  Pos

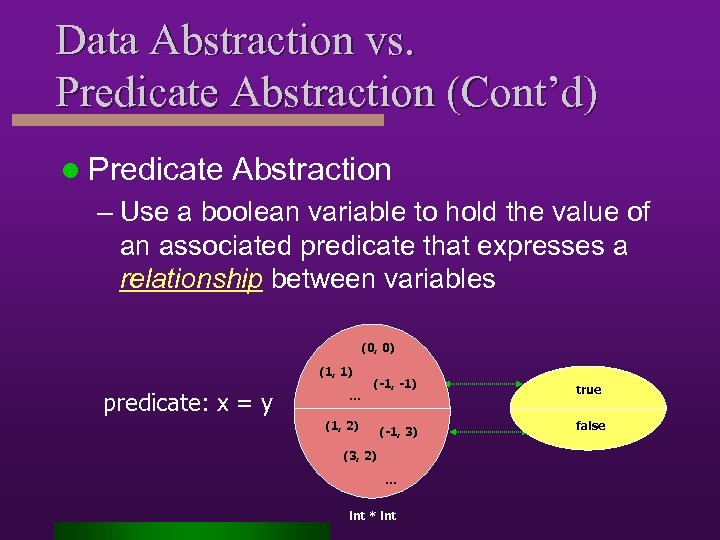

Data Abstraction vs. Predicate Abstraction (Cont’d)

- Predicate Abstraction
  - Use a boolean variable to hold the value of an associated predicate that expresses a relationship between variables

predicate: x = y, over int * int

    (0, 0), (1, 1), (-1, -1), …   ->  true
    (-1, 3), (1, 2), (3, 2), …    ->  false

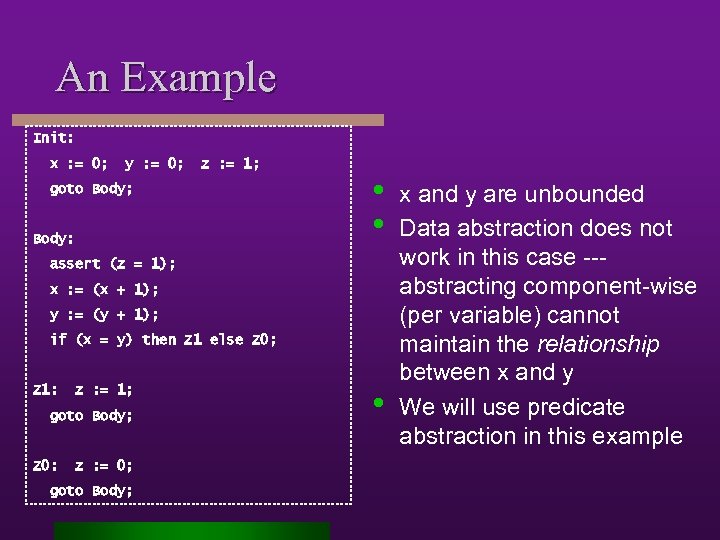

An Example

    Init: x := 0; y := 0; z := 1; goto Body;
    Body: assert (z = 1);
          x := (x + 1);
          y := (y + 1);
          if (x = y) then Z1 else Z0;
    Z1:   z := 1; goto Body;
    Z0:   z := 0; goto Body;

- x and y are unbounded
- Data abstraction does not work in this case: abstracting component-wise (per variable) cannot maintain the relationship between x and y
- We will use predicate abstraction in this example
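
To see why the component-wise approach fails here, the sketch below runs the program concretely for a bounded number of iterations and then runs it with x and y each abstracted to Even/Odd. The parity abstraction and all function names are assumptions made for illustration. In the abstract run the test `x = y` cannot be decided, so both branches are taken and z may become 0, which is a spurious assertion violation.

```python
def concrete_run(steps):
    """Run the example program for `steps` passes through Body; return the final z."""
    x, y, z = 0, 0, 1
    for _ in range(steps):
        assert z == 1            # the assertion at Body
        x, y = x + 1, y + 1
        z = 1 if x == y else 0   # Z1 / Z0
    return z

def flip(parity):
    """Abstract `v := v + 1` on the Even/Odd domain."""
    return "Odd" if parity == "Even" else "Even"

def parity_eq(a, b):
    """Possible outcomes of the test `x = y` when only the parities are known."""
    return {True, False} if a == b else {False}

def abstract_z_values(steps):
    """Values z may hold at Body when x and y are abstracted separately to parities."""
    px, py = "Even", "Even"      # x = 0 and y = 0
    zs = {1}
    for _ in range(steps):
        px, py = flip(px), flip(py)
        zs |= {1 if outcome else 0 for outcome in parity_eq(px, py)}
    return zs
```

The abstract run knows that x and y have the same parity, but equal parity does not imply equal value, so the relationship x = y is lost after the first iteration.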

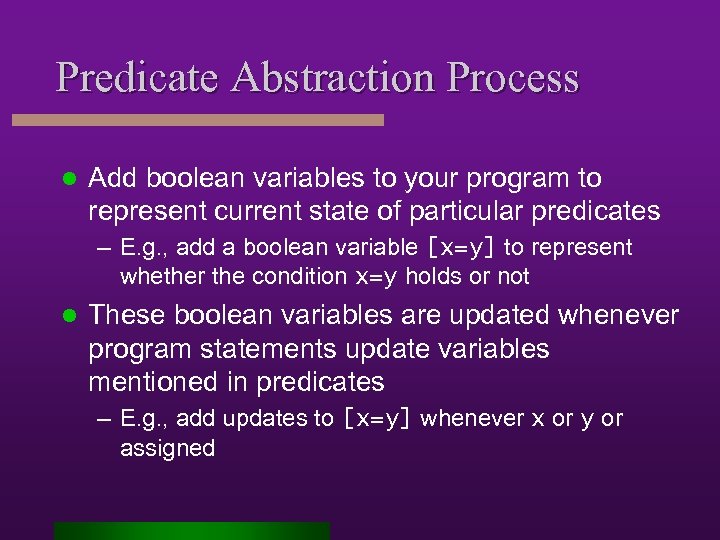

Predicate Abstraction Process

- Add boolean variables to your program to represent the current state of particular predicates
  - E.g., add a boolean variable [x=y] to represent whether the condition x=y holds or not
- These boolean variables are updated whenever program statements update variables mentioned in predicates
  - E.g., add updates to [x=y] whenever x or y is assigned

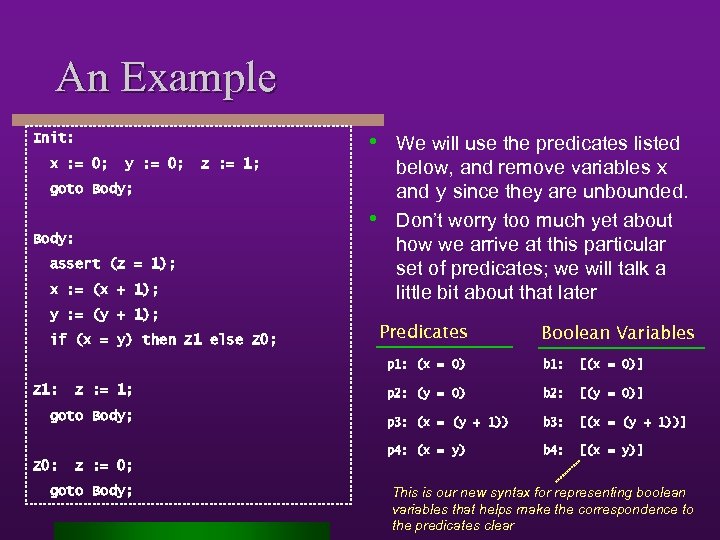

An Example

- We will use the predicates listed below, and remove variables x and y since they are unbounded.
- Don’t worry too much yet about how we arrive at this particular set of predicates; we will talk a little bit about that later.

    Init: x := 0; y := 0; z := 1; goto Body;
    Body: assert (z = 1);
          x := (x + 1);
          y := (y + 1);
          if (x = y) then Z1 else Z0;
    Z1:   z := 1; goto Body;
    Z0:   z := 0; goto Body;

    Predicates             Boolean Variables
    p1: (x = 0)            b1: [(x = 0)]
    p2: (y = 0)            b2: [(y = 0)]
    p3: (x = (y + 1))      b3: [(x = (y + 1))]
    p4: (x = y)            b4: [(x = y)]

The bracket notation [(…)] is our new syntax for representing boolean variables; it helps make the correspondence to the predicates clear.

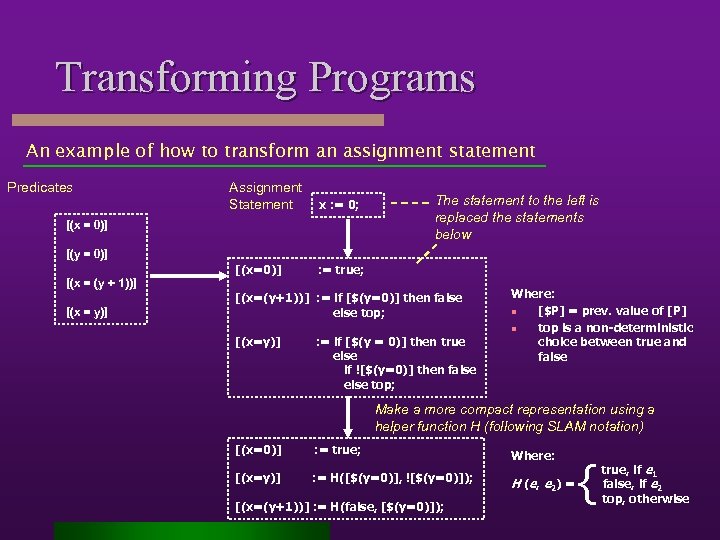

Transforming Programs

An example of how to transform an assignment statement.

Predicates: [(x = 0)], [(y = 0)], [(x = (y + 1))], [(x = y)]

Assignment statement:

    x := 0;

The statement above is replaced by the statements below. [(y = 0)] is not updated, because the assignment does not change y.

    [(x=0)] := true;
    [(x=(y+1))] := if [$(y=0)] then false else top;
    [(x=y)] := if [$(y=0)] then true else if ![$(y=0)] then false else top;

Where:

- [$P] = previous value of [P]
- top is a non-deterministic choice between true and false

Make a more compact representation using a helper function H (following SLAM notation):

    [(x=0)] := true;
    [(x=y)] := H([$(y=0)], ![$(y=0)]);
    [(x=(y+1))] := H(false, [$(y=0)]);

Where:

    H(e1, e2) = true,   if e1
                false,  if e2
                top,    otherwise
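
The helper H and the transformed assignment can be sketched as follows. Representing top as "both values are possible" (a tuple of candidate booleans) and storing the abstract state in a dictionary keyed by predicate text are assumptions of this sketch, not part of the SLAM notation.

```python
from itertools import product

def H(e1, e2):
    """Possible results: true if e1 holds, false if e2 holds, otherwise top."""
    if e1:
        return (True,)
    if e2:
        return (False,)
    return (True, False)         # top: non-deterministic choice

def assign_x_zero(state):
    """Abstract successors of `x := 0`.

    `state` maps predicate names to booleans; the value read from it plays
    the role of [$P], the previous value of [P].
    """
    y0 = state["y=0"]
    choices = product(H(y0, not y0),     # new [(x=y)]
                      H(False, y0))      # new [(x=(y+1))]
    return [{"x=0": True, "y=0": y0, "x=y": xy, "x=y+1": xy1}
            for xy, xy1 in choices]

# When [(y=0)] was true, every predicate is determined after x := 0.
from_y_zero = assign_x_zero({"x=0": False, "y=0": True, "x=y": False, "x=y+1": True})

# When [(y=0)] was false, [(x=(y+1))] is top, so there are two successors.
from_y_nonzero = assign_x_zero({"x=0": False, "y=0": False, "x=y": True, "x=y+1": False})
```

Enumerating successors instead of picking one at random keeps the sketch deterministic and matches how a model checker would treat the non-deterministic choice.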

Abstracted Program Generating the complete abstract program is pretty tedious – even for this small example. You can complete it as an exercise.
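
As a cross-check for the exercise, here is one possible hand-derived completion together with an exhaustive exploration of its states. The update rules for `x := (x + 1)` and `y := (y + 1)` are derived for this sketch and are an assumption, not taken from the lecture; other sound rules exist. One pass through Body is treated as a single step, and a state is the tuple (b1, b2, b3, b4) for [(x=0)], [(y=0)], [(x=(y+1))], [(x=y)], paired with z.

```python
from itertools import product

def H(e1, e2):
    """true if e1, false if e2, otherwise top (both values possible)."""
    if e1:
        return (True,)
    if e2:
        return (False,)
    return (True, False)

def inc_x(s):
    """Abstract `x := (x + 1)` (assumed rules).

    new [(x=0)]     = H(false, b1)        x was 0, so it is now 1
    new [(x=(y+1))] = b4                  x+1 = y+1 exactly when x = y
    new [(x=y)]     = H(false, b3 or b4)  x+1 = y is impossible if x >= y
    """
    b1, b2, b3, b4 = s
    return [(n1, b2, b4, n4)
            for n1, n4 in product(H(False, b1), H(False, b3 or b4))]

def inc_y(s):
    """Abstract `y := (y + 1)` (assumed rules, symmetric to inc_x)."""
    b1, b2, b3, b4 = s
    return [(b1, n2, n3, b3)              # new [(x=y)] = old [(x=(y+1))]
            for n2, n3 in product(H(False, b2), H(False, b3 or b4))]

def explore():
    """All (predicates, z) states reachable at Body."""
    init = ((True, True, False, True), 1)     # after Init: x = 0, y = 0, z = 1
    seen, todo = {init}, [init]
    while todo:
        preds, _z = todo.pop()
        for s1 in inc_x(preds):
            for s2 in inc_y(s1):
                nxt = (s2, 1 if s2[3] else 0)  # branch on [(x=y)]: Z1 or Z0
                if nxt not in seen:
                    seen.add(nxt)
                    todo.append(nxt)
    return seen

reachable = explore()
assertion_holds = all(z == 1 for _preds, z in reachable)
```

Under these rules [(x=y)] is true at every visit to Body, so `assert (z = 1)` holds in the abstract program. Some reachable abstract states have no concrete counterpart, which is harmless for this property.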

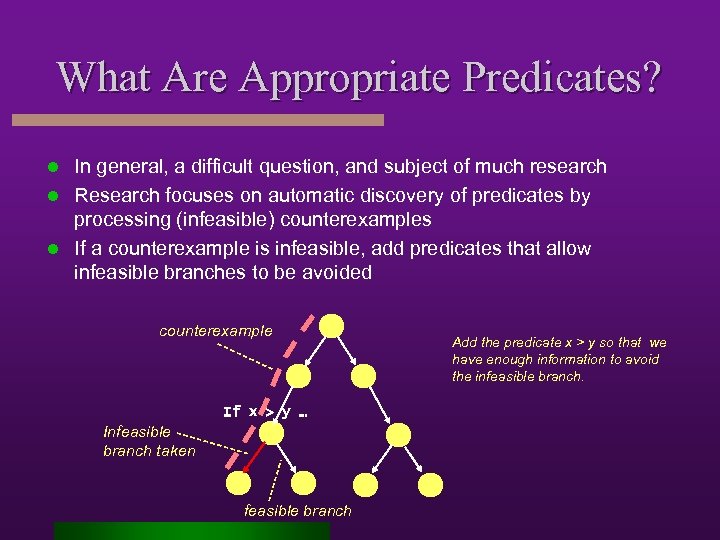

What Are Appropriate Predicates?

- In general, a difficult question, and the subject of much research
- Research focuses on automatic discovery of predicates by processing (infeasible) counterexamples
- If a counterexample is infeasible, add predicates that allow infeasible branches to be avoided

Example: a counterexample reaches the test "if x > y …" and takes the infeasible branch instead of the feasible one. Add the predicate x > y so that we have enough information to avoid the infeasible branch.

What Are Appropriate Predicates?

Some general heuristics that we will follow:

- Use the predicates A mentioned in property P, if variables mentioned in the predicates are being removed by abstraction
  - At least, we want to tell if our property predicates are true or not
- Use predicates that appear in conditionals along “important paths”
  - E.g., (x=y)
- Use predicates that allow the predicates above to be decided
  - E.g., (x=0), (y=0), (x = (y + 1))

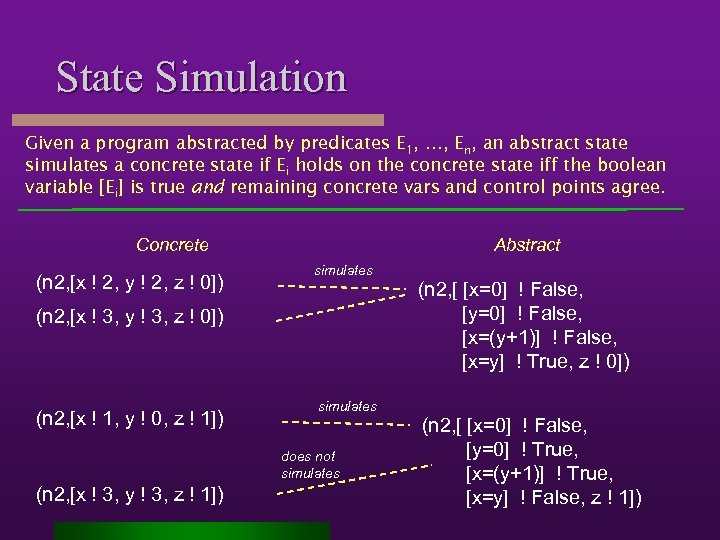

State Simulation

Given a program abstracted by predicates E1, …, En, an abstract state simulates a concrete state if, for each i, Ei holds on the concrete state iff the boolean variable [Ei] is true, and the remaining concrete variables and control points agree.

The abstract state

    (n2, [ [x=0] ↦ False, [y=0] ↦ False, [x=(y+1)] ↦ False, [x=y] ↦ True, z ↦ 0 ])

simulates the concrete states

    (n2, [x ↦ 2, y ↦ 2, z ↦ 0])
    (n2, [x ↦ 3, y ↦ 3, z ↦ 0])

The abstract state

    (n2, [ [x=0] ↦ False, [y=0] ↦ True, [x=(y+1)] ↦ True, [x=y] ↦ False, z ↦ 1 ])

simulates the concrete state

    (n2, [x ↦ 1, y ↦ 0, z ↦ 1])

The concrete state (n2, [x ↦ 3, y ↦ 3, z ↦ 1]) is simulated by neither abstract state: it disagrees with the first on z and with the second on [x=y].
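
The definition can be checked mechanically. In the sketch below the state encoding (a pair of control point and dictionary) and the names `PREDICATES` and `simulates` are assumptions made for illustration; the states themselves are the ones shown on the slide.

```python
# The four predicates E1..E4 of the running example, as functions of x and y.
PREDICATES = {
    "x=0":   lambda x, y: x == 0,
    "y=0":   lambda x, y: y == 0,
    "x=y+1": lambda x, y: x == y + 1,
    "x=y":   lambda x, y: x == y,
}

def simulates(abstract, concrete):
    """True if the abstract state simulates the concrete state."""
    a_pc, a_store = abstract
    c_pc, c_store = concrete
    # Control points and the remaining concrete variable z must agree.
    if a_pc != c_pc or a_store["z"] != c_store["z"]:
        return False
    # Each boolean variable [Ei] must equal the truth value of Ei.
    return all(a_store[name] == pred(c_store["x"], c_store["y"])
               for name, pred in PREDICATES.items())

abs1 = ("n2", {"x=0": False, "y=0": False, "x=y+1": False, "x=y": True, "z": 0})
abs2 = ("n2", {"x=0": False, "y=0": True, "x=y+1": True, "x=y": False, "z": 1})

c220 = ("n2", {"x": 2, "y": 2, "z": 0})
c330 = ("n2", {"x": 3, "y": 3, "z": 0})
c101 = ("n2", {"x": 1, "y": 0, "z": 1})
c331 = ("n2", {"x": 3, "y": 3, "z": 1})
```

For example, `simulates(abs1, c220)` holds, while `simulates(abs1, c331)` fails because the two states disagree on z.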

To Be Added

- Stuttering Theorem
- Model-restriction
- Control-point coarsening, slicing preserves/reflects CTL & LTL
- Abstraction reflects LTL
