6044ce869ff6670e7cbf53f1efdb9202.ppt

- Number of slides: 63

Charm++ Tutorial, Parallel Programming Laboratory, UIUC

Overview

- Introduction
  - Virtualization
  - Data Driven Execution in Charm++
  - Object-based Parallelization
- Charm++ features with simple examples
  - Chares and Chare Arrays
  - Parameter Marshalling
  - Structured Dagger Construct
- Load Balancing
- Tools
  - Projections
  - LiveViz

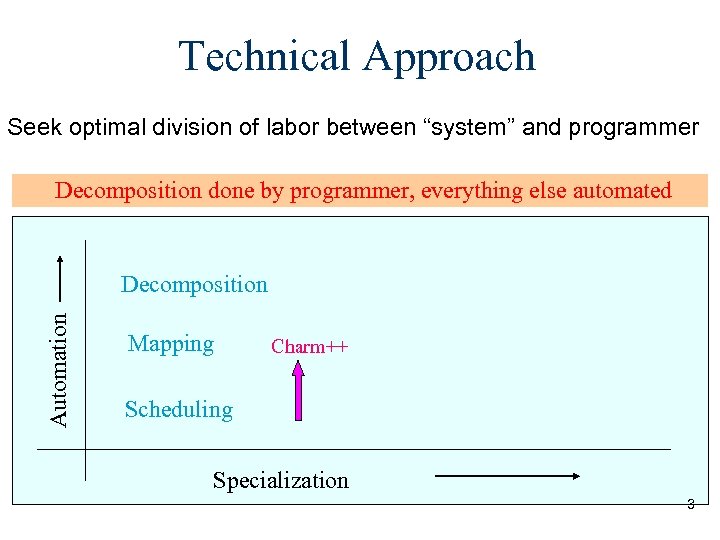

Technical Approach

- Seek an optimal division of labor between the “system” and the programmer.
- Decomposition is done by the programmer; everything else is automated.

Diagram labels: Automation, Decomposition, Mapping, Scheduling, Specialization, Charm++.

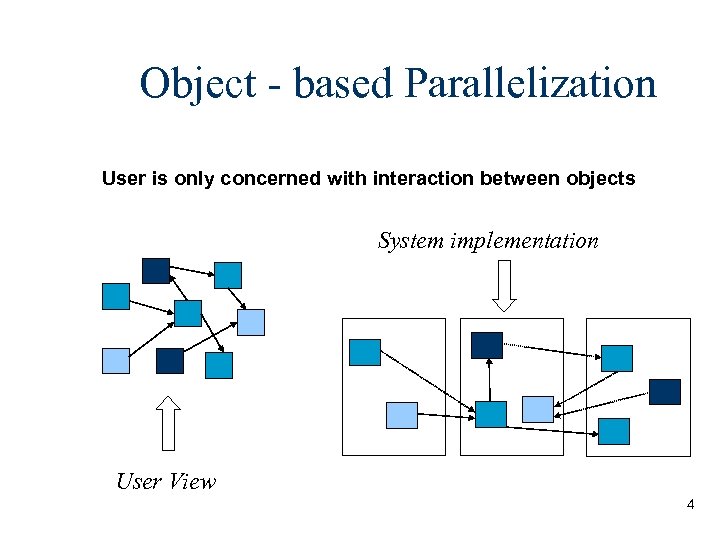

Object-based Parallelization

The user is only concerned with the interaction between objects. The slide contrasts the User View with the System implementation.

Virtualization: Object-based Decomposition

- Divide the computation into a large number of pieces
  - Independent of the number of processors
  - Typically larger than the number of processors
- Let the system map objects to processors

Chares: Concurrent Objects

- Can be dynamically created on any available processor
- Can be accessed from remote processors
- Send messages to each other asynchronously
- Contain “entry methods”

“Hello World!”

The .ci file:

    mainmodule hello {
      mainchare mymain {
        entry mymain(CkArgMsg *m);
      };
    };

From this file charmc generates hello.decl.h and hello.def.h.

The .C file:

    #include "hello.decl.h"

    class mymain : public CBase_mymain {
    public:
      mymain(CkArgMsg *m) {
        ckout << "Hello World" << endl;
        CkExit();
      }
    };

    #include "hello.def.h"

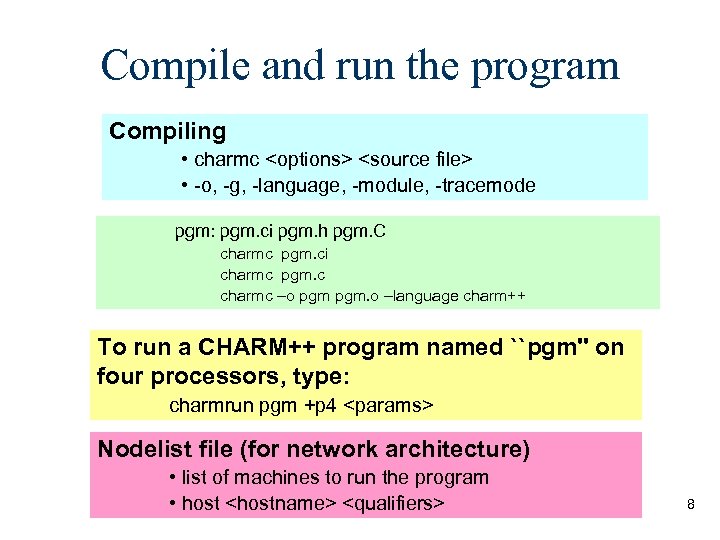

Compile and run the program

Compiling:

- charmc <options> <source file>
- Options: -o, -g, -language, -module, -tracemode

Makefile fragment:

    pgm: pgm.ci pgm.h pgm.C
        charmc pgm.ci
        charmc pgm.C
        charmc -o pgm pgm.o -language charm++

To run a Charm++ program named “pgm” on four processors, type:

    charmrun pgm +p4 <params>

Nodelist file (for network architectures):

- A list of machines to run the program on
- Each entry has the form: host <hostname> <qualifiers>

Data Driven Execution in Charm++

Diagram labels: objects x and y, the invocation y->f(), CkExit(), and a Scheduler with its Message Q.

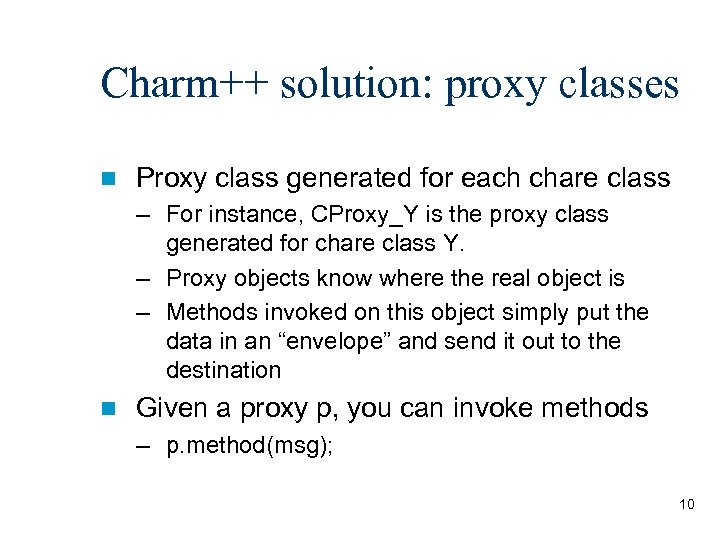

Charm++ solution: proxy classes

- A proxy class is generated for each chare class
  - For instance, CProxy_Y is the proxy class generated for chare class Y.
  - Proxy objects know where the real object is.
  - Methods invoked on a proxy object simply put the data in an “envelope” and send it out to the destination.
- Given a proxy p, you can invoke methods
  - p.method(msg);
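The proxy and message queue ideas can be imitated in a single process with plain C++. The sketch below is not Charm++ code: `ToyScheduler`, `ToyChare`, and `ToyProxy` are hypothetical names invented for illustration. It only shows that a call on the proxy enqueues a message, and the target method runs later when the scheduler delivers it.

```cpp
#include <cassert>
#include <deque>
#include <functional>
#include <string>
#include <utility>
#include <vector>

// Hypothetical stand-in for a per-processor scheduler with a message queue.
struct ToyScheduler {
    std::deque<std::function<void()>> queue;

    void enqueue(std::function<void()> msg) { queue.push_back(std::move(msg)); }

    // Deliver queued messages one at a time until none are left.
    void run() {
        while (!queue.empty()) {
            std::function<void()> msg = std::move(queue.front());
            queue.pop_front();
            msg();
        }
    }
};

// The "real" object; in Charm++ it could live on another processor.
struct ToyChare {
    std::vector<std::string> log;
    void greet(int n) { log.push_back("greet " + std::to_string(n)); }
};

// Hypothetical proxy: it knows where the target is and only enqueues.
struct ToyProxy {
    ToyScheduler* sched;
    ToyChare* target;

    void greet(int n) {
        ToyChare* t = target;
        sched->enqueue([t, n] { t->greet(n); });
    }
};
```

Calling `greet` on a `ToyProxy` returns immediately; nothing is logged on the `ToyChare` until `ToyScheduler::run()` is invoked, which mirrors the asynchronous behavior described on the slide.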

Ring program

- Array of objects of the same kind
- Each one communicates with the next one
- Individual chares would be cumbersome and impractical

Instead, use a collection of chares

- with a single global name for the collection
- with each member addressed by an index
- with the mapping of element objects to processors handled by the system

Chare Arrays

Diagram: the user's view shows the elements A[0], A[1], A[2], A[3], A[..]; the system view shows A[0] and A[1].

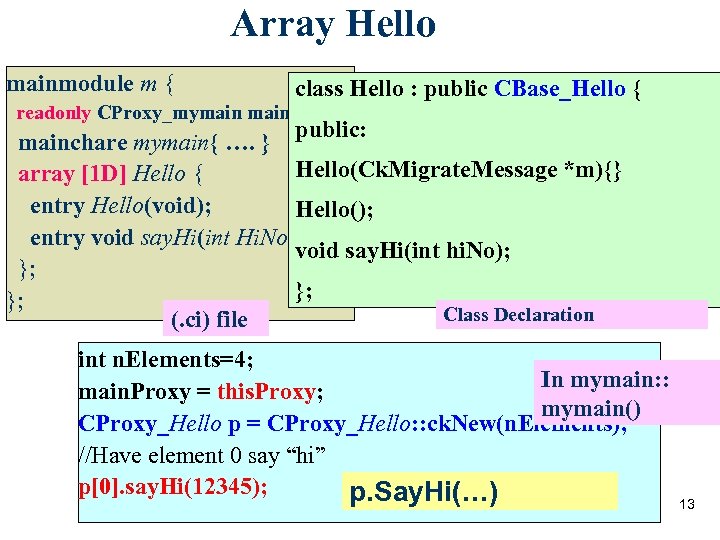

Array Hello

The (.ci) file:

    mainmodule m {
      readonly CProxy_mymain mainProxy;
      mainchare mymain { …. }
      array [1D] Hello {
        entry Hello(void);
        entry void sayHi(int HiNo);
      };
    };

Class declaration:

    class Hello : public CBase_Hello {
    public:
      Hello(CkMigrateMessage *m) {}
      Hello();
      void sayHi(int hiNo);
    };

In mymain::mymain():

    int nElements = 4;

    mainProxy = thisProxy;
    CProxy_Hello p = CProxy_Hello::ckNew(nElements);
    // Have element 0 say "hi"
    p[0].sayHi(12345);

Slide callout: p.sayHi(…), invoked on the proxy without an index, addresses every element of the array.

Array Hello

    void Hello::sayHi(int hiNo) {
      ckout << hiNo << "from element" << thisIndex << endl;
      if (thisIndex < nElements-1)
        // Pass the hello on:
        thisProxy[thisIndex+1].sayHi(hiNo+1);
      else
        // We've been around once-- we're done.
        mainProxy.done();
    }

    void mymain::done(void) {
      CkExit();
    }

Slide callouts: thisIndex is the element index, thisProxy is the array proxy, and mainProxy is a read-only variable.

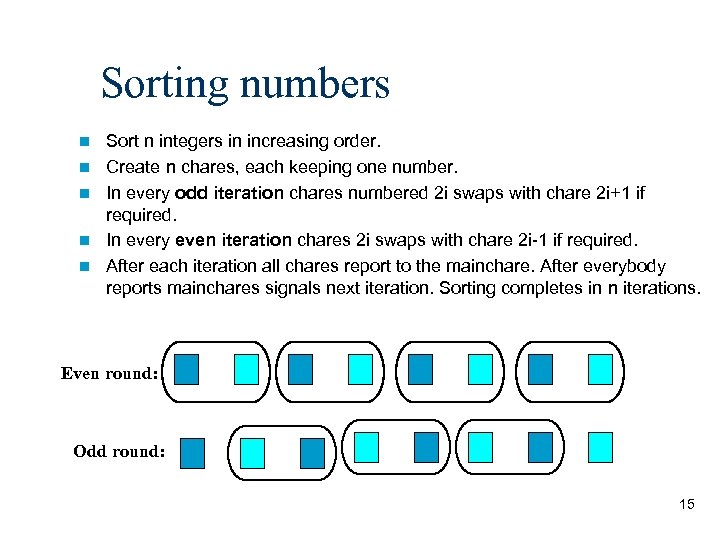

Sorting numbers

- Sort n integers in increasing order.
- Create n chares, each keeping one number.
- In every odd iteration, chare 2i swaps with chare 2i+1 if required.
- In every even iteration, chare 2i swaps with chare 2i-1 if required.
- After each iteration, all chares report to the mainchare.
- After everybody has reported, the mainchare signals the next iteration.
- Sorting completes in n iterations.

The slide illustrates an even round and an odd round.
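Before looking at the chare version, it may help to see the same alternating-pairs scheme as ordinary sequential C++. This is a minimal sketch, not taken from the slides: the function name `oddEvenSort` is hypothetical, and rounds are numbered from 0 so that round 0 pairs positions (0,1), (2,3), and so on.

```cpp
#include <cassert>
#include <cstddef>
#include <utility>
#include <vector>

// Sequential sketch of odd-even transposition sort.
// Round r compares the pairs (i, i+1) where i has the same parity as r,
// and n rounds are enough to sort n values.
std::vector<int> oddEvenSort(std::vector<int> v) {
    const std::size_t n = v.size();
    for (std::size_t round = 0; round < n; ++round) {
        for (std::size_t i = round % 2; i + 1 < n; i += 2) {
            if (v[i] > v[i + 1]) std::swap(v[i], v[i + 1]);
        }
    }
    return v;
}
```

In the chare version each position is a separate object, so the inner loop disappears: every pair exchanges values concurrently, and the mainchare plays the role of the outer loop.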

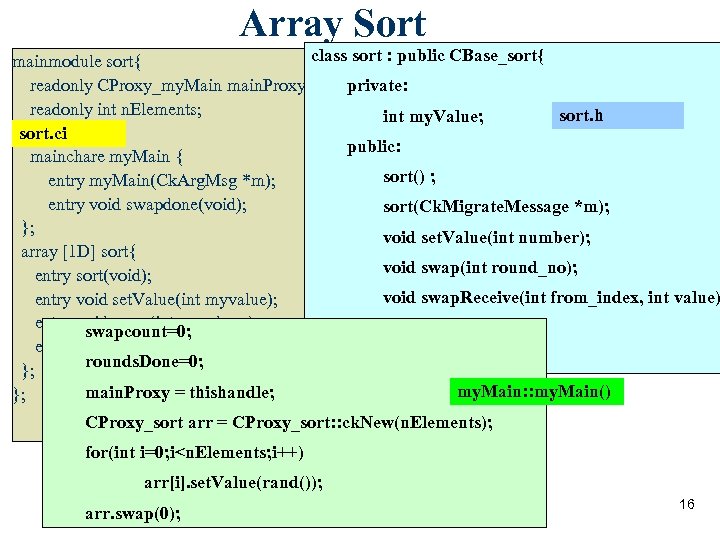

Array Sort

sort.ci:

    mainmodule sort {
      readonly CProxy_myMain mainProxy;
      readonly int nElements;
      mainchare myMain {
        entry myMain(CkArgMsg *m);
        entry void swapdone(void);
      };
      array [1D] sort {
        entry sort(void);
        entry void setValue(int myvalue);
        entry void swap(int round_no);
        entry void swapReceive(int from_index, int value);
      };
    };

sort.h:

    class sort : public CBase_sort {
    private:
      int myValue;
    public:
      sort();
      sort(CkMigrateMessage *m);
      void setValue(int number);
      void swap(int round_no);
      void swapReceive(int from_index, int value);
    };

myMain::myMain():

    myMain::myMain(CkArgMsg *m) {
      swapcount = 0;
      roundsDone = 0;
      mainProxy = thishandle;
      // arr is a CProxy_sort member of myMain; swapdone() uses it again
      arr = CProxy_sort::ckNew(nElements);
      for (int i = 0; i < nElements; i++)
        arr[i].setValue(rand());
      arr.swap(0);
    }

Array Sort (contd.)

    void sort::swap(int roundno) {
      bool sendright = false;
      if (roundno%2==0 && thisIndex%2==0 || roundno%2==1 && thisIndex%2==1)
        sendright = true;  // sendright is true if I have to send to right
      if ((sendright && thisIndex==nElements-1) || (!sendright && thisIndex==0))
        mainProxy.swapdone();
      else {
        if (sendright)
          thisProxy[thisIndex+1].swapReceive(thisIndex, myValue);
        else
          thisProxy[thisIndex-1].swapReceive(thisIndex, myValue);
      }
    }

    void sort::swapReceive(int from_index, int value) {
      if (from_index==thisIndex-1 && value>myValue)
        myValue = value;
      if (from_index==thisIndex+1 && value<myValue)
        myValue = value;
      mainProxy.swapdone();
    }

    void myMain::swapdone(void) {
      if (++swapcount==nElements) {
        swapcount = 0;
        roundsDone++;
        if (roundsDone==nElements)
          CkExit();
        else
          arr.swap(roundsDone);
      }
    }

Slide callout: “Error!!”

Remember:

- Message passing is asynchronous.
- Messages can be delivered out of order.

Diagram: two chares holding 3 and 2 exchange swap and swapReceive messages; because a swapReceive arrives before the swap, the value 2 is lost!

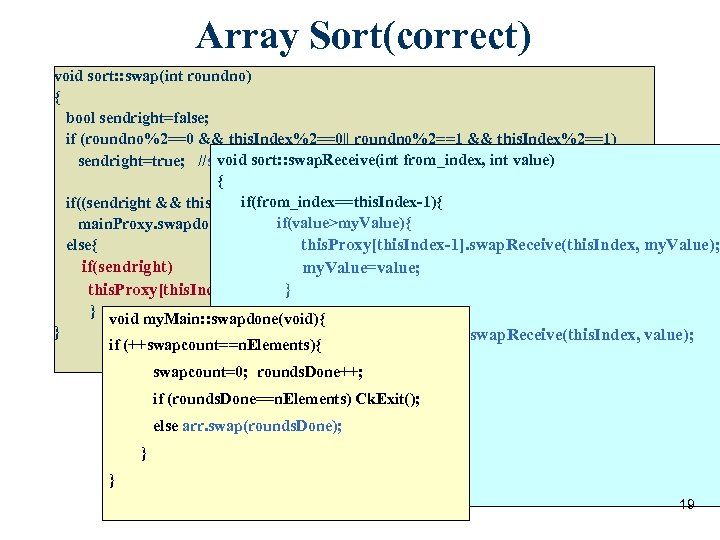

Array Sort (correct)

    void sort::swap(int roundno) {
      bool sendright = false;
      if (roundno%2==0 && thisIndex%2==0 || roundno%2==1 && thisIndex%2==1)
        sendright = true;  // sendright is true if I have to send to right
      if ((sendright && thisIndex==nElements-1) || (!sendright && thisIndex==0))
        mainProxy.swapdone();
      else {
        if (sendright)
          thisProxy[thisIndex+1].swapReceive(thisIndex, myValue);
      }
    }

    void sort::swapReceive(int from_index, int value) {
      if (from_index==thisIndex-1) {
        if (value>myValue) {
          thisProxy[thisIndex-1].swapReceive(thisIndex, myValue);
          myValue = value;
        }
        else
          thisProxy[thisIndex-1].swapReceive(thisIndex, value);
      }
      if (from_index==thisIndex+1)
        myValue = value;
      mainProxy.swapdone();
    }

    void myMain::swapdone(void) {
      if (++swapcount==nElements) {
        swapcount = 0;
        roundsDone++;
        if (roundsDone==nElements)
          CkExit();
        else
          arr.swap(roundsDone);
      }
    }

Basic Entities in Charm++ Programs

- Sequential objects: ordinary sequential C++ code and objects
- Chares: concurrent objects
- Chare arrays: an indexed collection of chares

Illustrative example: Jacobi 1D

A hot temperature on two sides will slowly spread across the entire grid.

Illustrative example: Jacobi 1D

- Input: 2D array of values with boundary conditions
- In each iteration, each array element is computed as the average of itself and its neighbors
- Iterations are repeated until some threshold error value is reached
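The update rule can be sketched sequentially before it is parallelized. The code below is a simplified illustration, not the tutorial's code: it works on a one-dimensional grid with two fixed boundary points, and the names `jacobiSweep` and `jacobiSolve` are hypothetical.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// One Jacobi sweep over a 1D grid: every interior point becomes the average
// of itself and its two neighbors; the two end points are fixed boundaries.
// Returns the largest absolute change seen in this sweep.
double jacobiSweep(std::vector<double>& grid) {
    const std::vector<double> old = grid;  // read old values, write new ones
    double maxChange = 0.0;
    for (std::size_t i = 1; i + 1 < old.size(); ++i) {
        grid[i] = (old[i - 1] + old[i] + old[i + 1]) / 3.0;
        maxChange = std::max(maxChange, std::fabs(grid[i] - old[i]));
    }
    return maxChange;
}

// Repeat sweeps until the largest change drops below a positive threshold.
// Returns the number of sweeps performed.
int jacobiSolve(std::vector<double>& grid, double threshold) {
    int sweeps = 1;
    while (jacobiSweep(grid) >= threshold) ++sweeps;
    return sweeps;
}
```

The value returned by `jacobiSweep` corresponds to the per-iteration maximum change; in a parallel version each piece of the grid would compute its own maximum, and the pieces would then need to be combined.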

Jacobi 1D: Parallel Solution!

Jacobi 1D: Parallel Solution!

- Slice up the 2D array into sets of columns
- Chare = computations in one set
- At the end of each iteration
  - Chares exchange boundaries
  - Determine the maximum change in the computation
- Output the result when the threshold is reached

Arrays as Parameters

- An array cannot be passed as a pointer
- Specify the length of the array in the interface file
  - entry void bar(int n, double arr[n])

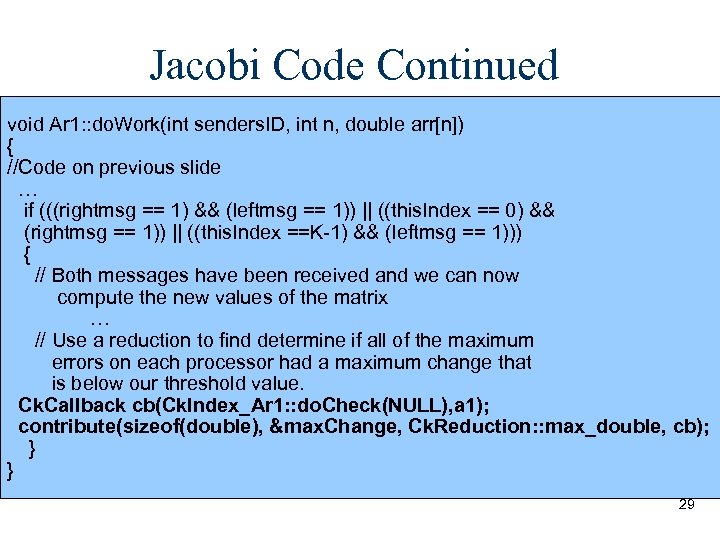

Jacobi Code

    void Ar1::doWork(int sendersID, int n, double arr[n]) {
      maxChange = 0.0;
      if (sendersID == thisIndex-1) {
        leftmsg = 1;   // set boolean to indicate we received the left message
      } else if (sendersID == thisIndex+1) {
        rightmsg = 1;  // set boolean to indicate we received the right message
      }
      // Rest of the code on the next slide
      …
    }

Reduction

- Apply a single operation (add, max, min, ...) to data items scattered across many processors
- Collect the result in one place
- Reduce x across all elements
  - contribute(sizeof(x), &x, CkReduction::sum_int, processResult);
  - The result is delivered to the function “processResult()”
- All contribute calls from one array must name the same function
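What a reduction computes can be shown without any parallel runtime. The sketch below is plain C++, not the Charm++ reduction API: `treeReduce` is a hypothetical helper that takes one contribution per element and combines them pairwise, level by level, the way a reduction tree might, until a single result remains.

```cpp
#include <cassert>
#include <cstddef>
#include <utility>
#include <vector>

// Hypothetical helper: combine one contribution per element pairwise until
// a single value remains. The operation should be associative (sum, max,
// min, ...) so that the grouping does not affect the result.
template <typename T, typename Op>
T treeReduce(std::vector<T> values, Op op) {
    assert(!values.empty());
    while (values.size() > 1) {
        std::vector<T> next;
        for (std::size_t i = 0; i + 1 < values.size(); i += 2)
            next.push_back(op(values[i], values[i + 1]));
        // An odd element out is carried up to the next level unchanged.
        if (values.size() % 2 == 1) next.push_back(values.back());
        values = std::move(next);
    }
    return values.front();
}
```

With contributions 3, 9, 4, 1, 7, a sum operation yields 24 and a max operation yields 9, regardless of how the pairs are grouped.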

Callbacks

- A generic way to transfer control back to a client after a library has finished.
- After finishing a reduction, the results have to be passed to some chare's entry method.
- To do this, create an object of type CkCallback with the chare's ID and entry method index.
- There are different types of callbacks.
- One commonly used type:
  - CkCallback cb(<chare’s entry method>, <chare’s proxy>);

Jacobi Code Continued

    void Ar1::doWork(int sendersID, int n, double arr[n]) {
      // Code on previous slide
      …
      if (((rightmsg == 1) && (leftmsg == 1)) ||
          ((thisIndex == 0) && (rightmsg == 1)) ||
          ((thisIndex == K-1) && (leftmsg == 1))) {
        // Both messages have been received and we can now compute the new values of the matrix
        …
        // Use a reduction to determine whether the maximum change on every
        // processor is below our threshold value.
        CkCallback cb(CkIndex_Ar1::doCheck(NULL), a1);
        contribute(sizeof(double), &maxChange, CkReduction::max_double, cb);
      }
    }

Types of Reductions

- Predefined reductions: a number of reductions are predefined, including ones that
  - Sum values or arrays
  - Calculate the product of values or arrays
  - Calculate the maximum contributed value
  - Calculate the minimum contributed value
  - Calculate the logical and of integer values
  - Calculate the logical or of contributed integer values
  - Form a set of all contributed values
  - Concatenate bytes of all contributed values
- Plus, you can create your own

Structured Dagger

- What is it?
  - A coordination language built on top of Charm++
- Motivation:
  - To reduce the complexity of program development without adding any overhead

Structured Dagger Constructs

- atomic {code}
  - Specifies that no structured dagger constructs appear inside the code, so it executes atomically.
- overlap {code}
  - Enables all of its component constructs concurrently and can execute these constructs in any order.
- when <entrylist> {code}
  - Specifies dependencies between computation and message arrival.

Structured Dagger Constructs Continued

- if / else / while / for
  - These are the same as their C++ counterparts, except that they can contain when blocks in their respective code segments. Hence execution can be suspended while they wait for messages.
- forall
  - Functions like a for statement, but enables its component constructs for its entire iteration space at once. As a result, it doesn’t need to execute its iteration space in strict sequence.

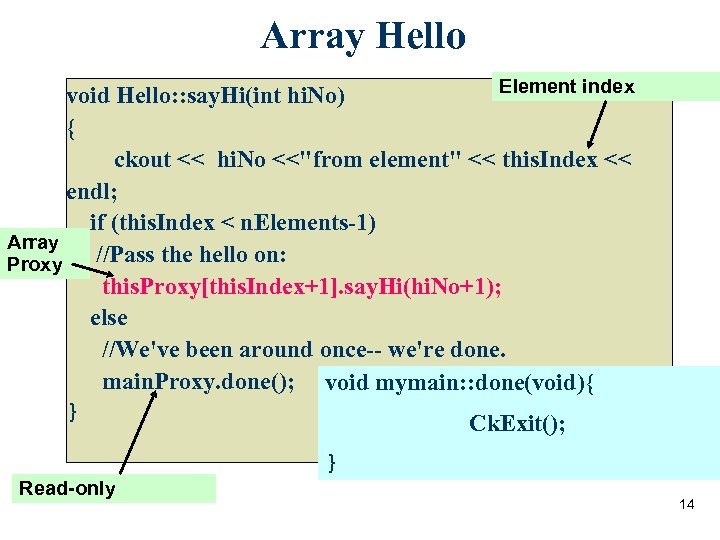

Jacobi Example Using Structured Dagger

jacobi.ci:

    array [1D] Ar1 {
      …
      entry void GetMessages(MyMsg *msg) {
        when rightmsgEntry(MyMsg *right), leftmsgEntry(MyMsg *left) {
          atomic {
            CkPrintf("Got both left and right messages \n");
            doWork(right, left);
          }
        }
      };
      entry void rightmsgEntry(MyMsg *m);
      entry void leftmsgEntry(MyMsg *m);
      …
    };

In a 1D Jacobi that doesn’t use structured dagger, the code in the .C file for the doWork function is much more complex: it has to check manually, with if/else statements, whether both messages have been received. With structured dagger, the doWork function will not be called until both messages have been received. The compiler translates the structured dagger code into code that does the appropriate checks, making the programmer's job simpler.
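For comparison, the manual bookkeeping that the `when` construct replaces might look like the plain C++ sketch below. It is an illustration only: `ToyElement` and its members are hypothetical, and the flag names merely echo `leftmsg` and `rightmsg` from the earlier Jacobi slides.

```cpp
#include <cassert>

// Hypothetical element that must see both neighbor messages before working.
struct ToyElement {
    bool leftmsg = false;
    bool rightmsg = false;
    int workDone = 0;  // counts how many times the work step ran

    void receiveLeft()  { leftmsg = true;  tryWork(); }
    void receiveRight() { rightmsg = true; tryWork(); }

private:
    // The manual check: run only once both flags are set, then reset them
    // so the next iteration starts from a clean state.
    void tryWork() {
        if (leftmsg && rightmsg) {
            ++workDone;
            leftmsg = rightmsg = false;
        }
    }
};
```

Every handler has to remember to call the check, and the flags have to be reset by hand; this is the kind of code the structured dagger translation is described as generating on the programmer's behalf.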

Another Example of Structured Dagger: LeanMD

- LeanMD is a molecular dynamics simulation application written in Charm++ and Structured Dagger.
- Here, at every timestep, each cell sends its atom positions to its neighbors. Then it receives forces and integrates the information to calculate new positions.

Another Example of Structured Dagger: LeanMD

    for (timeStep_=1; timeStep_<=NumSteps; timeStep_++) {
      atomic { sendAtomPos(); }  // send atom positions to all neighbors
      for (forceMsgCount_=0; forceMsgCount_<numForceMsg_; forceMsgCount_++) {
        when recvForces(ForcesMsg* msg) { atomic { procForces(msg); } }
      }
      atomic { doIntegration(); }
      if (SimParam.DoMigrate && timeStep_ % SimParam.MigrateFreq == 0) {
        atomic { migrate(); }
        for (migrateMsgCount_=0; migrateMsgCount_<numMigrateMsg_; migrateMsgCount_++) {
          when recvMigrateMsg(MigrateMsg* msg) { atomic { procMigrateMsg(msg); } }
        }
        atomic { collectAtoms(); }
      }
    }

This is a code sample from the LeanMD program. It underlines the importance of using Structured Dagger: the code is highly simplified by the Structured Dagger constructs.

Load Balancing

- Projections
- Object Migration
- Load Balancing Strategies
- Using Load Balancing

Projections: Quick Introduction

- Projections is a tool used to analyze the performance of your application
- The tracemode option is used when you build your application to enable tracing
- You get one log file per processor, plus a separate file with global information
- These files are read by Projections so you can use the Projections views to analyze performance

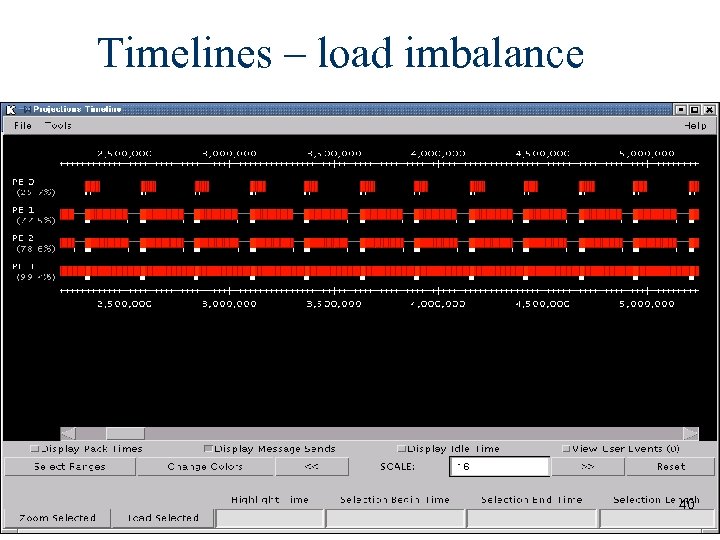

Screen shots: load imbalance

Jacobi 2048 x 2048, threshold 0.1, 32 chares, 4 processors

Timelines: load imbalance

Migration

- Array objects can migrate from one PE to another
- To migrate, an object must implement a pack/unpack or pup method
- This is needed because migration creates a new object on the destination processor while destroying the original
- pup combines 3 functions into one
  - Data structure traversal: compute the message size, in bytes
  - Pack: write the object into a message
  - Unpack: read the object out of a message
- Basic contract: here are my fields (types, sizes and a pointer)

Pup: How to write it?

    class ShowPup {
      double a;
      int x;
      char y;
      unsigned long z;
      float q[3];
      int *r;  // heap allocated memory
    public:
      … other methods …
      void pup(PUP::er &p) {
        p | a;    // notice that you can either use | operator
        p | x;
        p | y;
        p(z);     // or ()
        p(q, 3);  // but you need () for arrays
        if (p.isUnpacking())
          r = new int[ARRAY_SIZE];
        p(r, ARRAY_SIZE);
      }
    };
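The idea that one routine can serve for sizing, packing, and unpacking can be illustrated with a small stand-alone C++ sketch. `ToyPuper` below is a hypothetical class written for this illustration; it is not the Charm++ `PUP::er` and handles only trivially copyable fields. `ToyObject` and `roundTrip` are likewise assumptions.

```cpp
#include <cassert>
#include <cstddef>
#include <cstring>
#include <type_traits>
#include <vector>

// Hypothetical stand-in for a PUP::er; not the Charm++ class.
class ToyPuper {
public:
    enum Mode { Sizing, Packing, Unpacking };

    ToyPuper(Mode mode, std::vector<char>* buffer)
        : mode_(mode), buf_(buffer), pos_(0) {}

    bool isUnpacking() const { return mode_ == Unpacking; }
    std::size_t size() const { return pos_; }

    // Visit n contiguous items of a trivially copyable type.
    template <typename T>
    void operator()(T* data, std::size_t n) {
        static_assert(std::is_trivially_copyable<T>::value, "raw bytes only");
        const std::size_t bytes = n * sizeof(T);
        if (mode_ == Packing)   std::memcpy(buf_->data() + pos_, data, bytes);
        if (mode_ == Unpacking) std::memcpy(data, buf_->data() + pos_, bytes);
        pos_ += bytes;  // in Sizing mode this count is the only effect
    }

    // Visit a single field.
    template <typename T>
    ToyPuper& operator|(T& value) { (*this)(&value, 1); return *this; }

private:
    Mode mode_;
    std::vector<char>* buf_;  // unused (may be null) in Sizing mode
    std::size_t pos_;
};

struct ToyObject {
    double a = 0.0;
    int x = 0;
    float q[3] = {0, 0, 0};

    // One traversal of the fields serves all three modes.
    void pup(ToyPuper& p) {
        p | a;
        p | x;
        p(q, 3);
    }
};

// Move an object through a byte buffer using the single pup routine.
ToyObject roundTrip(ToyObject src) {
    ToyPuper sizer(ToyPuper::Sizing, nullptr);
    src.pup(sizer);
    std::vector<char> buffer(sizer.size());
    ToyPuper packer(ToyPuper::Packing, &buffer);
    src.pup(packer);
    ToyObject dst;
    ToyPuper unpacker(ToyPuper::Unpacking, &buffer);
    dst.pup(unpacker);
    return dst;
}
```

Because the field list is written once, the sizing, packing, and unpacking passes cannot drift out of step with each other, which is the practical benefit of combining the three functions.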

Load Balancing

- All you need is a working pup
- Link a LB module
  - -module <strategy>
  - RefineLB, NeighborLB, GreedyCommLB, others…
  - EveryLB will include all load balancing strategies
- Compile time option (specify the default balancer)
  - -balancer RefineLB
- Runtime option
  - +balancer RefineLB

Centralized Load Balancing

- Uses information about activity on all processors to make load balancing decisions
- Advantage: since it has the entire object communication graph, it can make the best global decision
- Disadvantage: higher communication costs and latency, since this requires information from all running chares

Neighborhood Load Balancing

- Load balances among a small set of processors (the neighborhood) to decrease communication costs
- Advantage: lower communication costs, since communication is between a smaller subset of processors
- Disadvantage: could leave a system which is globally poorly balanced

Main Centralized Load Balancing Strategies

- GreedyCommLB: a “greedy” load balancing strategy which uses the process load and communications graph to map the processes with the highest load onto the processors with the lowest load, while trying to keep communicating processes on the same processor
- RefineLB: move objects off overloaded processors to under-utilized processors to reach average load
- Others: the manual discusses several other load balancers which are not used as often, but may be useful in some cases; also, more are being developed
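The greedy idea of placing the heaviest work on the least loaded processor can be sketched in a few lines of plain C++. This is a simplified illustration and not the GreedyCommLB implementation: the hypothetical function `greedyAssign` looks only at object loads and ignores the communication graph entirely.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <numeric>
#include <vector>

// Greedy placement sketch: take objects from heaviest to lightest and put
// each one on the processor that currently has the least load.
// Returns assignment[i] = processor chosen for object i.
std::vector<int> greedyAssign(const std::vector<double>& objectLoad, int numProcs) {
    assert(numProcs > 0);

    // Object indices sorted by decreasing load.
    std::vector<std::size_t> order(objectLoad.size());
    std::iota(order.begin(), order.end(), std::size_t{0});
    std::stable_sort(order.begin(), order.end(),
                     [&](std::size_t a, std::size_t b) {
                         return objectLoad[a] > objectLoad[b];
                     });

    std::vector<double> procLoad(numProcs, 0.0);
    std::vector<int> assignment(objectLoad.size(), -1);
    for (std::size_t obj : order) {
        auto least = std::min_element(procLoad.begin(), procLoad.end());
        *least += objectLoad[obj];
        assignment[obj] = static_cast<int>(least - procLoad.begin());
    }
    return assignment;
}
```

A refinement-style strategy would instead start from the existing placement and move only a few objects off the overloaded processors, which generally causes fewer migrations than reassigning everything from scratch.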

Neighborhood Load Balancing Strategies

- NeighborLB: neighborhood load balancer, currently uses a neighborhood of 4 processors

When to Re-balance Load?

- Default: the load balancer will migrate when needed
- Programmer control: AtSync load balancing. The AtSync method enables load balancing at a specific point
  - Object ready to migrate
  - Re-balance if needed
  - AtSync() is called when your chare is ready to be load balanced; load balancing may not start right away
  - ResumeFromSync() is called when load balancing for this chare has finished

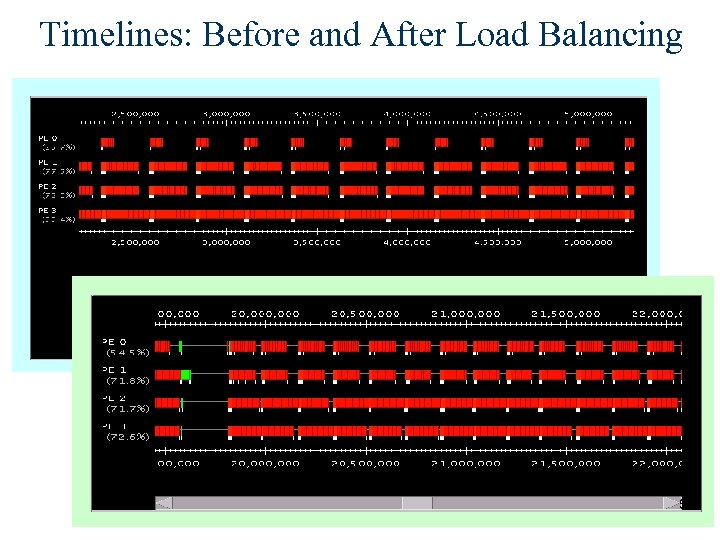

Processor Utilization: After Load Balance

Timelines: Before and After Load Balancing

Advanced Features

- Groups
- Node Groups
- Priorities
- Entry Method Attributes
- Communications Optimization
- Checkpoint/Restart

Advanced Features: Groups

- With arrays, the standard method of communication between elements is message passing
- With a large number of chares, this can lead to large numbers of messages in the system
- For global operations like reductions, this could make the receiving chare a bottleneck
- Can this be fixed?

Advanced Features: Groups

- Solution: Groups are more of a “system level” programming feature of Charm++, versus “user level” arrays
- Groups are similar to arrays, except that only one element is on each processor; the index to access the group is the processor ID
- Groups can be used to batch messages from chares running on a single processor, which cuts down on the message traffic
- Disadvantage: does not allow for effective load balancing, since groups are stationary (they are not virtualized)

Advanced Features: Node Groups

- Similar to groups, but only one per node, instead of one per processor; the index is the node number
- Can be used to solve similar problems as well: with one node group per SMP node, the node group could act as a collection point for messages on the node, lowering message traffic on interconnects between nodes

Advanced Features: Priorities

- In general, messages in Charm++ are unordered: but what if an order is needed?
- Solution: priorities
- Messages can be assigned different priorities
- The simplest priorities just specify that the message should either go on the end of the queue (standard behavior) or the beginning of the queue
- Specific priorities can also be assigned to messages, using either numbers or bit vectors
- Note that messages are non-preemptive: a lower priority message will continue processing, even if a higher priority message shows up
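A prioritized, non-preemptive queue can be imitated with the standard library. The sketch below is plain C++ rather than Charm++: `ToyMessage`, `LaterFirst`, and `ToyPriorityQueue` are hypothetical names, and the convention that a smaller number means a more urgent message is an assumption made for this example.

```cpp
#include <cassert>
#include <cstddef>
#include <queue>
#include <string>
#include <vector>

struct ToyMessage {
    int priority;     // assumption for this sketch: smaller is more urgent
    std::size_t seq;  // arrival order; breaks ties first come, first served
    std::string name;
};

// Ordering for std::priority_queue: returns true when a should be
// delivered after b, so the most urgent message ends up on top.
struct LaterFirst {
    bool operator()(const ToyMessage& a, const ToyMessage& b) const {
        if (a.priority != b.priority) return a.priority > b.priority;
        return a.seq > b.seq;
    }
};

class ToyPriorityQueue {
public:
    void send(int priority, const std::string& name) {
        pending_.push(ToyMessage{priority, nextSeq_++, name});
    }

    // Deliver everything. Each message is handled to completion before the
    // next one is picked, so handling is never preempted.
    std::vector<std::string> drain() {
        std::vector<std::string> delivered;
        while (!pending_.empty()) {
            delivered.push_back(pending_.top().name);
            pending_.pop();
        }
        return delivered;
    }

private:
    std::priority_queue<ToyMessage, std::vector<ToyMessage>, LaterFirst> pending_;
    std::size_t nextSeq_ = 0;
};
```

Priority only affects which waiting message is picked next; a message that has already started is not interrupted, matching the non-preemptive behavior noted on the slide.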

Advanced Features: Entry Method Attributes

    entry [attribute1, ..., attributeN] void EntryMethod(parameters);

Attributes:

- threaded: entry methods which are run in their own non-preemptible threads
- sync: methods return a message as a result

Advanced Features: Communications Optimization

- Used to optimize communication patterns in your application
- Can use either bracketed strategies or streaming strategies
- Bracketed strategies are those where a specific start and end point for the communication are flagged
- Streaming strategies use a preset time interval for bracketing messages

Advanced Features: Communications Optimization

- For strategies, you need to specify a communications topology, which specifies the message pattern you will be using
- When compiling with the communications optimization library, you must include -module commlib

Advanced Features: Checkpoint/Restart

- If you have a long running application, it would be nice to be able to save its state, just in case something happens
- Checkpointing gives you this ability
- When you checkpoint an application, it uses the migrate code already present for load balancing to store the state of array objects
- State information is saved in a directory of your choosing

Advanced Features: Checkpoint/Restart

- The application can be restarted, with a different number of processors, using the checkpoint information
- The charmrun option ++restart <dir> is used to restart
- You can also restart groups by marking them migratable and writing a PUP routine; they still will not load balance, though

Other Advanced Features

- Custom array indexes
- Array creation/mapping options
- Additional load balancers
- Local versus proxied calls

Benefits of Virtualization

- Better software engineering
  - Logical units decoupled from the “number of processors”
- Message driven execution
  - Adaptive overlap between computation and communication
  - Predictability of execution
- Flexible and dynamic mapping to processors
  - Flexible mapping on clusters
  - Change the set of processors for a given job
  - Automatic checkpointing
- Principle of persistence

More Information

- http://charm.cs.uiuc.edu
  - Manuals
  - Papers
  - Download files
  - FAQs
- ppl@cs.uiuc.edu
