- Number of slides: 60

Chapter 8: Testing the Programs
Shari L. Pfleeger, Joann M. Atlee
4th Edition

Contents
8.1 Software Faults and Failures
8.2 Testing Issues
8.3 Unit Testing
8.4 Integration Testing
8.5 Testing Object-Oriented Systems
8.6 Test Planning
8.7 Automated Testing Tools
8.8 When to Stop Testing
8.9 Information System Example
8.10 Real Time Example
8.11 What this Chapter Means for You

Pfleeger and Atlee, Software Engineering: Theory and Practice, Chapter 8

Chapter 8 Objectives
• Types of faults and how to classify them
• The purpose of testing
• Unit testing
• Integration testing strategies
• Test planning
• When to stop testing

8.1 Software Faults and Failures: Why Does Software Fail?
• A wrong or missing requirement
  – Not what the customer wants or needs
• A requirement that is impossible to implement
  – Given prescribed hardware and software
• A faulty system design
  – For example, a database design restriction
• A faulty program design
• The program code may be wrong

8.1 Software Faults and Failures: Objective of Testing
• Objective of testing: discover faults
• A test is successful only when a fault is discovered
  – Fault identification is the process of determining what fault(s) caused the failure
  – Fault correction is the process of making changes to the system so that the faults are removed

8.1 Software Faults and Failures: Types of Faults
• Algorithmic or syntax faults
  – A component’s logic doesn’t produce proper output
• Computation and precision faults
  – A formula’s implementation is wrong
• Documentation faults
  – The documentation doesn’t match what a program does
• Stress or overload faults
  – Data structures filled past their specified capacity
• Capacity or boundary faults
  – The system’s performance becomes unacceptable as activity reaches its specified limit
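A computation and precision fault can be illustrated with a short sketch. The function names and the price data are hypothetical: the first version accumulates binary floating-point error, while the second uses decimal arithmetic from the standard library.

```python
from decimal import Decimal

def total_naive(prices):
    """Sum prices as binary floats; rounding error can accumulate."""
    total = 0.0
    for price in prices:
        total += price
    return total

def total_exact(prices):
    """Sum prices given as decimal strings, using exact decimal arithmetic."""
    return sum((Decimal(price) for price in prices), Decimal("0"))

print(total_naive([0.1, 0.2]) == 0.3)                     # False
print(total_exact(["0.1", "0.2"]) == Decimal("0.3"))      # True
```

The formula (a sum) is the same in both versions; only its implementation differs, which is what makes this kind of fault easy to overlook in review.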

8.1 Software Faults and Failures: Types of Faults (continued)
• Timing or coordination faults
  – Code executes with improper timing
• Performance faults
  – System does not perform at the speed prescribed
• Recovery faults
  – System doesn’t behave as required when a failure occurs
• Hardware and system software faults
  – Supplied hardware and system software do not work according to the documented conditions and procedures
• Standard and procedure faults
  – Code doesn’t follow organizational or procedural standards

8.1 Software Faults and Failures: Typical Algorithmic Faults
• An algorithmic fault occurs when a component’s algorithm or logic does not produce proper output
  – Branching too soon
  – Branching too late
  – Testing for the wrong condition
  – Forgetting to initialize variables or set loop invariants
  – Forgetting to test for a particular condition
  – Comparing variables of inappropriate data types
• Syntax faults
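As a minimal sketch of an algorithmic fault, the hypothetical function below stops its loop one element too early, so the last value is never compared. Both function names are invented for illustration.

```python
def find_max_faulty(values):
    """Faulty: the loop bound is wrong, so the last element is skipped."""
    largest = values[0]
    for i in range(1, len(values) - 1):   # fault: should be len(values)
        if values[i] > largest:
            largest = values[i]
    return largest

def find_max_fixed(values):
    """Corrected: every element after the first is compared."""
    largest = values[0]
    for i in range(1, len(values)):
        if values[i] > largest:
            largest = values[i]
    return largest

print(find_max_faulty([7, 1, 3]))  # 7 (the fault stays hidden)
print(find_max_faulty([3, 1, 7]))  # 3 (the fault is revealed)
print(find_max_fixed([3, 1, 7]))   # 7
```

Only the second input exposes the fault, which is why a test case counts as successful when it makes a fault visible.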

8.1 Software Faults and Failures: Orthogonal Defect Classification
• Historical information leads to trends
• Trends can lead to changes in designs or requirements
  – Leading to reduced number of faults injected
• How:
  – Categorize faults using IBM Orthogonal Defect Classification
    • Track faults of omission and commission also
  – Must be product- and organization-independent
  – Scheme must be applicable to all development stages

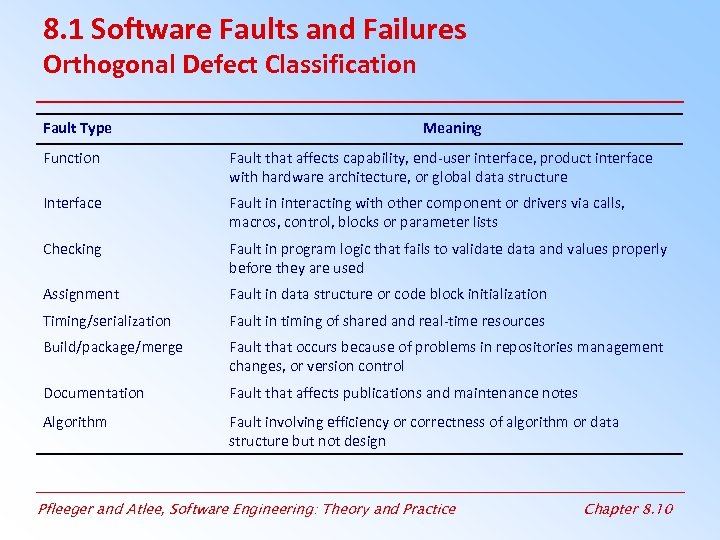

8.1 Software Faults and Failures: Orthogonal Defect Classification

| Fault Type | Meaning |
|---|---|
| Function | Fault that affects capability, end-user interface, product interface with hardware architecture, or global data structure |
| Interface | Fault in interacting with other components or drivers via calls, macros, control blocks, or parameter lists |
| Checking | Fault in program logic that fails to validate data and values properly before they are used |
| Assignment | Fault in data structure or code block initialization |
| Timing/serialization | Fault in timing of shared and real-time resources |
| Build/package/merge | Fault that occurs because of problems in repositories, management changes, or version control |
| Documentation | Fault that affects publications and maintenance notes |
| Algorithm | Fault involving efficiency or correctness of algorithm or data structure but not design |
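One way to turn classified faults into trend data is a simple tally per fault type. The sketch below assumes a hypothetical report format (a dict with a `type` key) and invented sample reports; only the eight type names come from the table.

```python
from collections import Counter

ODC_TYPES = {
    "function", "interface", "checking", "assignment",
    "timing/serialization", "build/package/merge",
    "documentation", "algorithm",
}

def tally_by_type(fault_reports):
    """Count fault reports per ODC fault type, rejecting unknown types."""
    counts = Counter()
    for report in fault_reports:
        fault_type = report["type"]
        if fault_type not in ODC_TYPES:
            raise ValueError(f"not an ODC fault type: {fault_type}")
        counts[fault_type] += 1
    return counts

# Hypothetical fault reports, for illustration only.
reports = [
    {"id": 1, "type": "checking"},
    {"id": 2, "type": "interface"},
    {"id": 3, "type": "checking"},
]
print(tally_by_type(reports).most_common(1))  # [('checking', 2)]
```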

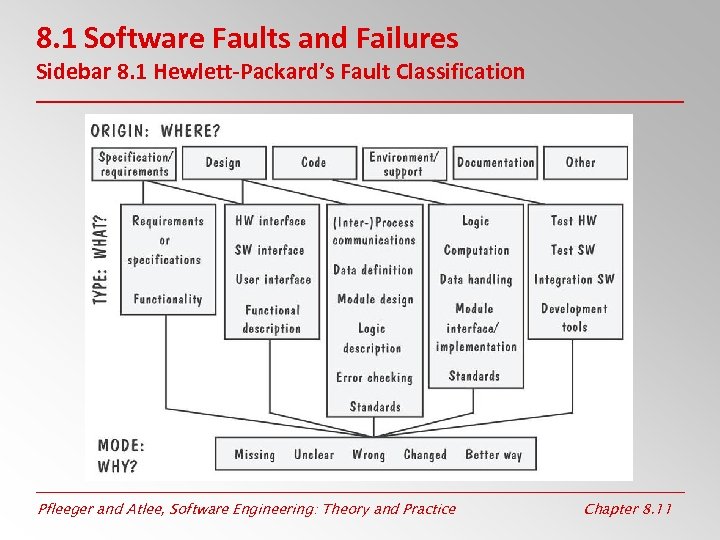

8.1 Software Faults and Failures: Sidebar 8.1 Hewlett-Packard’s Fault Classification

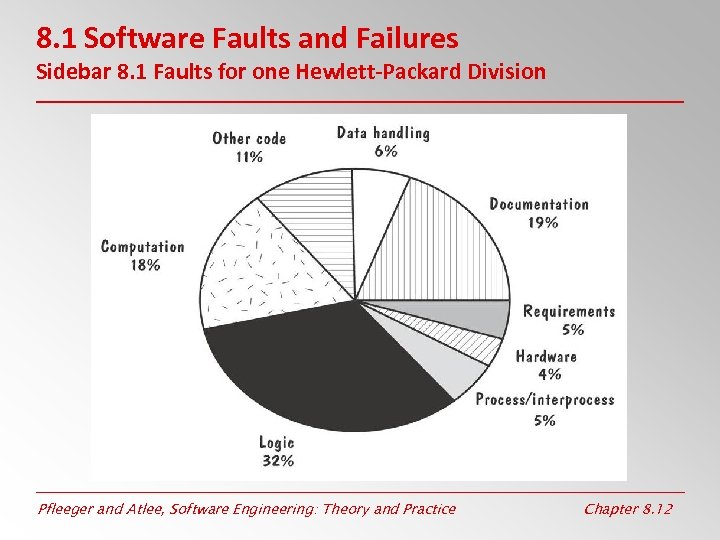

8.1 Software Faults and Failures: Sidebar 8.1 Faults for one Hewlett-Packard Division

8.2 Testing Issues: Test Organization
• Module testing, component testing, or unit testing
• Integration testing
• Function testing
• Performance testing
• Acceptance testing
• Installation testing
• System testing

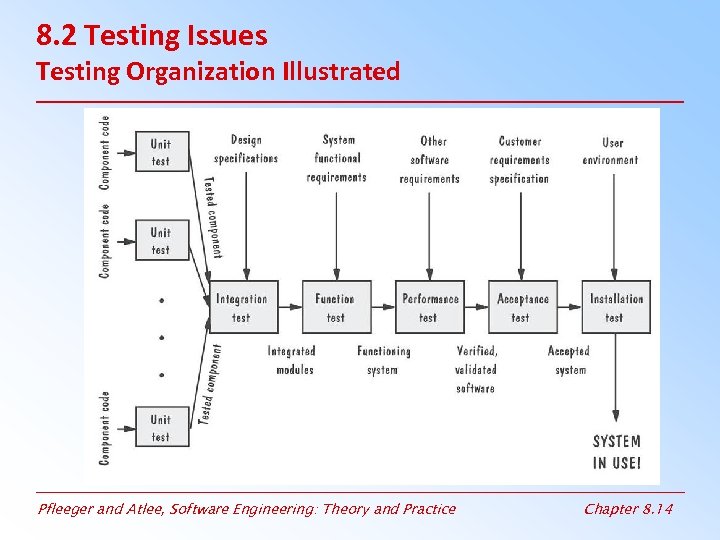

8.2 Testing Issues: Testing Organization Illustrated

8.2 Testing Issues: Attitude Toward Testing
• The problem:
  – In academia, students are given a grade for the correctness and operability of their programs
  – Test cases are generated to show correctness
  – Critiques of a program are considered critiques of ability
• The solution:
  – Egoless programming: programs are viewed as components of a larger system, not as the property of those who wrote them
  – Development team focused on correcting faults, not placing blame

8.2 Testing Issues: Who Performs the Test?
• Independent test team
  – Avoid conflict over personal responsibility for faults
  – Improve objectivity between design and implementation
  – Allow testing and coding concurrently

8.2 Testing Issues: Views of the Test Objects
• Closed box or black box
  – Functionality of the test objects
  – No view of code or data structure: input and output only
• Clear box or white box
  – Structure of the test objects
  – Internal view of code and data structures
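The two views lead to different ways of choosing test inputs. The sketch below uses a hypothetical `grade` function with an invented specification: the closed-box tests are derived from the specification alone, the clear-box tests from the branches in the code.

```python
def grade(score):
    """Hypothetical spec: scores run from 0 to 100; 50 or more passes."""
    if score < 0 or score > 100:
        raise ValueError("score out of range")
    if score >= 50:
        return "pass"
    return "fail"

def closed_box_tests():
    """Chosen from the specification: boundary inputs and expected outputs."""
    assert grade(0) == "fail"
    assert grade(49) == "fail"
    assert grade(50) == "pass"
    assert grade(100) == "pass"

def clear_box_tests():
    """Chosen from the code structure: one input per branch outcome."""
    for bad in (-1, 101):           # each half of the range check
        try:
            grade(bad)
        except ValueError:
            pass
        else:
            raise AssertionError("expected ValueError")
    assert grade(75) == "pass"      # score >= 50 is true
    assert grade(10) == "fail"      # score >= 50 is false

closed_box_tests()
clear_box_tests()
```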

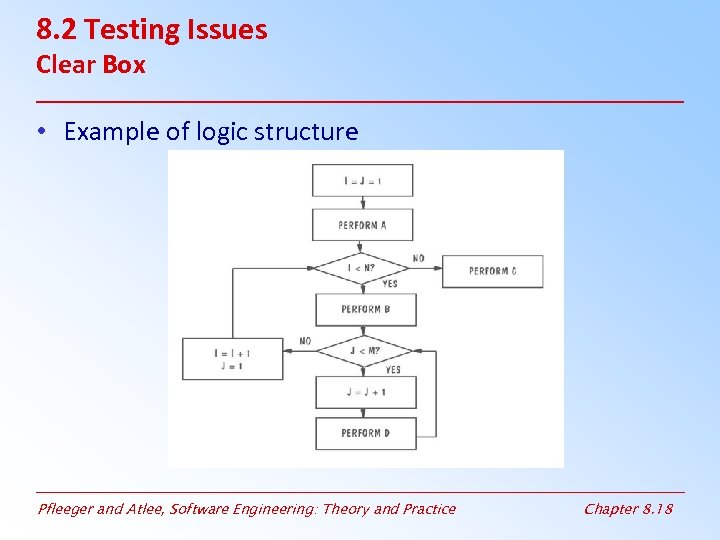

8.2 Testing Issues: Clear Box
• Example of logic structure

8.2 Testing Issues: Factors Affecting the Choice of Test Philosophy
• White box or black box testing
  – The number of possible logical paths
  – The nature of the input data
  – The amount of computation involved
  – The complexity of algorithms
• Don’t have to choose!
  – A combination of both could be the right approach

8.3 Unit Testing: Code Review
• Code walkthrough
  – Present code and documentation to review team
  – Team comments on correctness
  – Focus is on the code, not the coder
  – No influence on developer performance
• Code inspection
  – Check code and documentation against list of concerns
  – Review correctness and efficiency of algorithms
  – Check comments for completeness
  – Formalized process

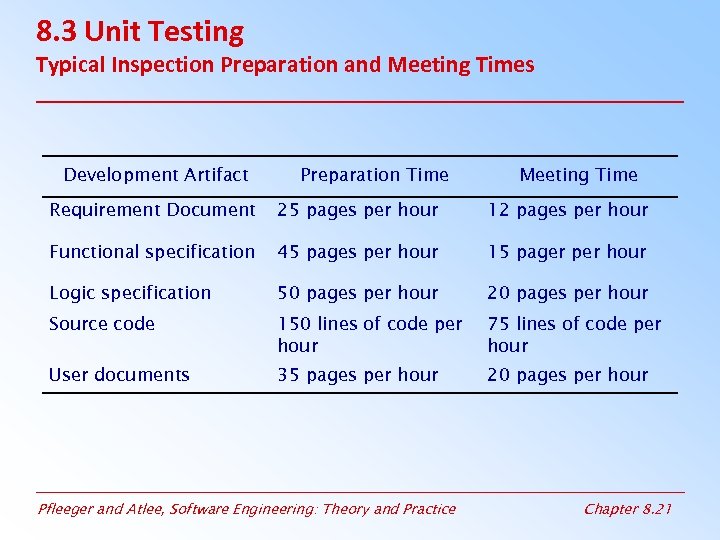

8.3 Unit Testing: Typical Inspection Preparation and Meeting Times

| Development Artifact | Preparation Time | Meeting Time |
|---|---|---|
| Requirements document | 25 pages per hour | 12 pages per hour |
| Functional specification | 45 pages per hour | 15 pages per hour |
| Logic specification | 50 pages per hour | 20 pages per hour |
| Source code | 150 lines of code per hour | 75 lines of code per hour |
| User documents | 35 pages per hour | 20 pages per hour |
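The rates above translate directly into effort estimates: hours equal artifact size divided by rate. A minimal sketch follows, where the function name and the 600-line example are assumptions for illustration.

```python
# Rates from the table above: (preparation, meeting), in units per hour.
RATES = {
    "requirements document": (25, 12),
    "functional specification": (45, 15),
    "logic specification": (50, 20),
    "source code": (150, 75),
    "user documents": (35, 20),
}

def inspection_hours(artifact, size):
    """Return (preparation hours, meeting hours) for an artifact of the given size.

    Size is in pages, or in lines of code for source code.
    """
    prep_rate, meeting_rate = RATES[artifact]
    return size / prep_rate, size / meeting_rate

prep, meeting = inspection_hours("source code", 600)
print(prep, meeting)  # 4.0 8.0
```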

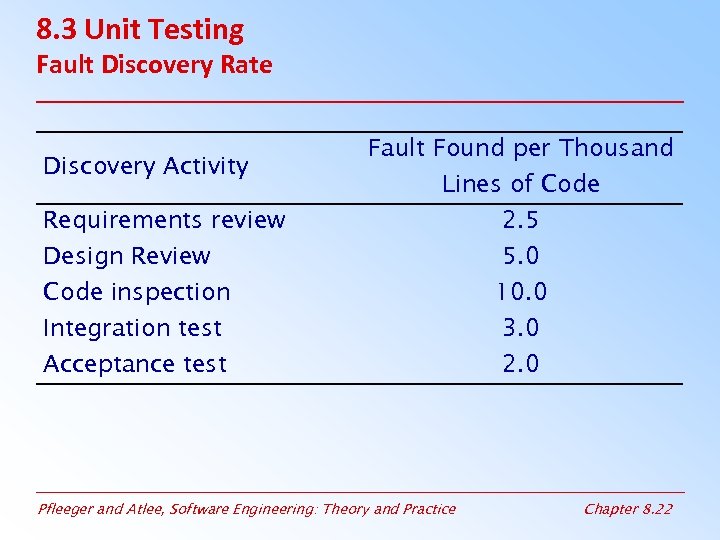

8.3 Unit Testing: Fault Discovery Rate

| Discovery Activity | Faults Found per Thousand Lines of Code |
|---|---|
| Requirements review | 2.5 |
| Design review | 5.0 |
| Code inspection | 10.0 |
| Integration test | 3.0 |
| Acceptance test | 2.0 |
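As a worked example of these rates, the sketch below scales them to a hypothetical 20,000-line system. The system size and the function name are assumptions; the rates are the ones listed above.

```python
FAULTS_PER_KLOC = {
    "requirements review": 2.5,
    "design review": 5.0,
    "code inspection": 10.0,
    "integration test": 3.0,
    "acceptance test": 2.0,
}

def expected_faults(kloc):
    """Scale each activity's discovery rate by system size in thousands of lines."""
    return {activity: rate * kloc for activity, rate in FAULTS_PER_KLOC.items()}

estimate = expected_faults(20)
print(estimate["code inspection"])  # 200.0
print(sum(estimate.values()))       # 450.0
```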

8.3 Unit Testing: Sidebar 8.3 The Best Team Size for Inspections
• The preparation rate, not the team size, determines inspection effectiveness
• The team’s effectiveness and efficiency depend on their familiarity with their product

8.3 Unit Testing: Proving Code Correct
• Formal proof techniques
  – Write assertions to describe input/output conditions
  – Draw a flow diagram depicting logical flow
  – Generate theorems to be proven
  – Locate loops and define if-then assertions for each
  – Identify all paths from A1 to An
  – Cover each path so that each input assertion implies an output assertion
  – Prove that the program terminates
• Only proves design is correct, not implementation
• Expensive
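The role of input assertions, loop assertions, and output assertions can be sketched with executable checks. This is only an illustration under stated assumptions: the `divide` function is hypothetical, and runtime `assert` statements check the conditions on one run rather than proving them for all inputs.

```python
def divide(dividend, divisor):
    """Integer division by repeated subtraction, annotated with assertions."""
    # Input assertion (precondition).
    assert isinstance(dividend, int) and isinstance(divisor, int)
    assert dividend >= 0 and divisor > 0

    quotient, remainder = 0, dividend
    while remainder >= divisor:
        # Loop assertion (invariant): holds at the top of every iteration.
        assert dividend == quotient * divisor + remainder
        # Termination: remainder strictly decreases and is bounded below by 0.
        remainder -= divisor
        quotient += 1

    # Output assertion (postcondition).
    assert dividend == quotient * divisor + remainder
    assert 0 <= remainder < divisor
    return quotient, remainder

print(divide(17, 5))  # (3, 2)
```

A formal proof would show that the input assertion implies the output assertion along every path, which these checks only sample.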

8.3 Unit Testing: Proving Code Correct (continued)
• Symbolic execution
  – Execution using symbols, not data variables
  – Execute each line, checking for state
  – Program is viewed as a series of state changes
• Automated theorem-proving
  – Prove software is correct by developing tools that read as input:
    • The input data and conditions
    • The output data and conditions
    • The lines of code for the component to be tested
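Symbolic execution can be sketched for straight-line assignments: each variable holds an expression over input symbols instead of a number, and each statement changes the state. The representation below (strings, with `x0` and `y0` as the input symbols) is an assumption chosen for brevity, not a real tool's design.

```python
import re

def symbolic_execute(statements, inputs):
    """Run assignment statements over symbolic values instead of data.

    statements: list of (target, expression) pairs, e.g. ("x", "x + y").
    inputs: names of input variables; each starts as the symbol '<name>0'.
    Returns the final state mapping each variable to a symbolic expression.
    """
    state = {name: f"{name}0" for name in inputs}

    def substitute(match):
        name = match.group(0)
        # Replace a program variable by its current symbolic value.
        return f"({state[name]})" if name in state else name

    for target, expression in statements:
        # Each statement is one state change.
        state[target] = re.sub(r"[A-Za-z_]\w*", substitute, expression)
    return state

# A three-statement swap without a temporary variable.
swap = [("x", "x + y"), ("y", "x - y"), ("x", "x - y")]
final = symbolic_execute(swap, ["x", "y"])
print(final["y"])  # ((x0) + (y0)) - (y0)
```

Simplifying the final expressions by hand shows that `y` ends as `x0` and `x` ends as `y0`, for every input rather than for one test value.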

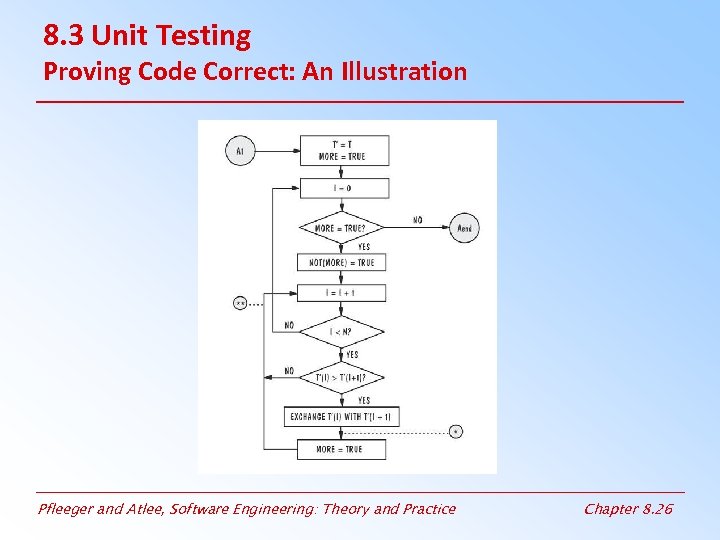

8.3 Unit Testing: Proving Code Correct: An Illustration

8.3 Unit Testing: Testing versus Proving
• Proving:
  – Hypothetical environment
  – Code is viewed as classes of data and conditions
  – Proof may not involve execution
• Testing:
  – Actual operating environment
  – Demonstrate actual use of program
  – Series of experiments

8.3 Unit Testing: Steps in Choosing Test Cases
• Determining test objectives
  – Coverage criteria
• Selecting test cases
  – Inputs that demonstrate the behavior of the code
• Defining a test
  – Detailing execution instructions

8.3 Unit Testing: Test Thoroughness
• Statement testing
• Branch testing
• Path testing
• Definition-use testing
• All-uses testing
• All-predicate-uses/some-computational-uses testing
• All-computational-uses/some-predicate-uses testing
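The difference between statement testing and branch testing shows up even in a two-line function. In this sketch the function, the set `branches_taken`, and the recording line are hypothetical instrumentation added for illustration.

```python
branches_taken = set()

def apply_discount(price_cents, is_member):
    """Members get 10% off; the price is kept in integer cents."""
    branches_taken.add(bool(is_member))   # hypothetical instrumentation
    if is_member:
        price_cents = price_cents * 90 // 100
    return price_cents

# Statement testing: this single case executes every statement.
apply_discount(1000, True)
after_statement_suite = set(branches_taken)

# Branch testing: the false outcome of the decision must be taken as well.
apply_discount(1000, False)
after_branch_suite = set(branches_taken)

print(after_statement_suite)  # {True}
print(after_branch_suite == {True, False})  # True
```

One test case satisfies statement testing here, yet the path that skips the discount is never exercised until the second case is added.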

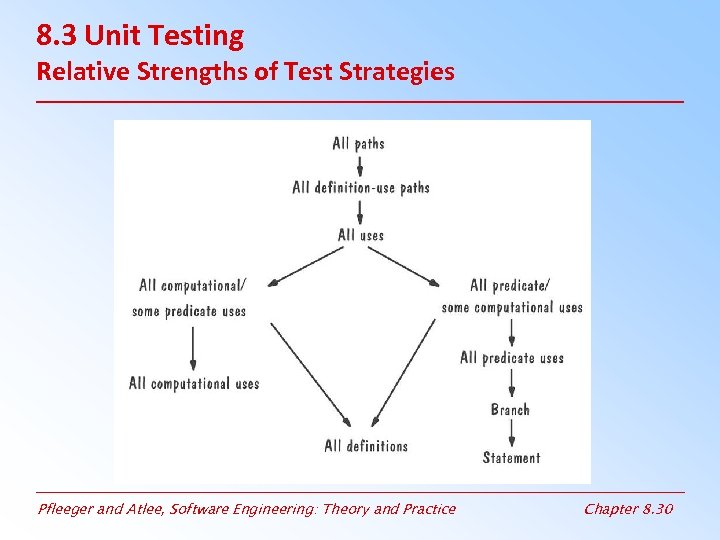

8.3 Unit Testing: Relative Strengths of Test Strategies

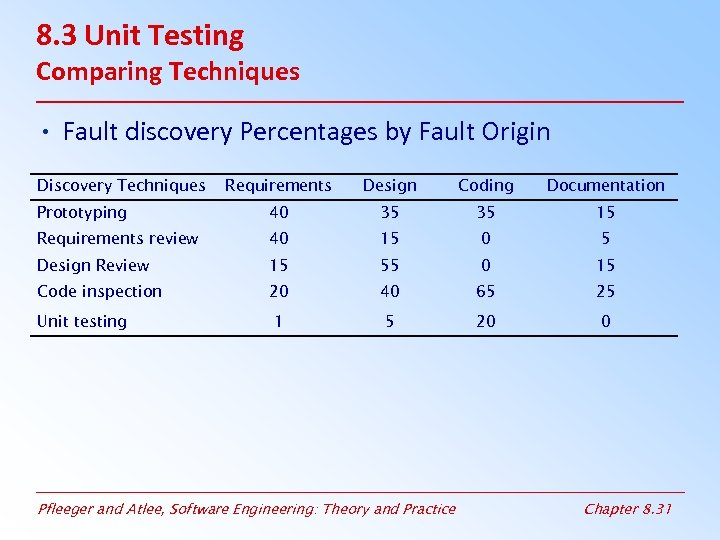

8.3 Unit Testing: Comparing Techniques
• Fault discovery percentages by fault origin

| Discovery Technique | Requirements | Design | Coding | Documentation |
|---|---|---|---|---|
| Prototyping | 40 | 35 | 35 | 15 |
| Requirements review | 40 | 15 | 0 | 5 |
| Design review | 15 | 55 | 0 | 15 |
| Code inspection | 20 | 40 | 65 | 25 |
| Unit testing | 1 | 5 | 20 | 0 |

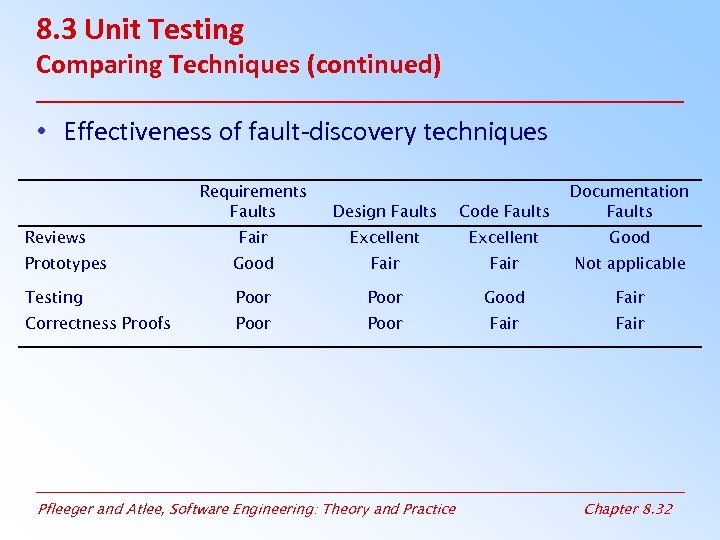

8.3 Unit Testing: Comparing Techniques (continued)
• Effectiveness of fault-discovery techniques

| Technique | Requirements Faults | Design Faults | Code Faults | Documentation Faults |
|---|---|---|---|---|
| Reviews | Fair | Excellent | Excellent | Good |
| Prototypes | Good | Fair | Fair | Not applicable |
| Testing | Poor | Poor | Good | Fair |
| Correctness proofs | Poor | Poor | Fair | Fair |

8.3 Unit Testing: Sidebar 8.4 Fault Discovery Efficiency at Contel IPC
• 17.3% during inspections of the system design
• 19.1% during component design inspection
• 15.1% during code inspection
• 29.4% during integration testing
• 16.6% during system and regression testing
• 0.1% after the system was placed in the field

8.4 Integration Testing
• Bottom-up
• Top-down
• Big-bang
• Sandwich testing
• Modified top-down
• Modified sandwich

8.4 Integration Testing: Terminology
• Component driver: a routine that calls a particular component and passes a test case to it
• Stub: a special-purpose program to simulate the activity of the missing component
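A driver and a stub can be sketched in a few lines. All names here are hypothetical: `compute_total` is the component under test, `tax_rate_stub` stands in for a tax-lookup component that does not exist yet, and `driver` feeds test cases to the component.

```python
def tax_rate_stub(region):
    """Stub: simulates the missing tax-lookup component with a canned answer."""
    return 0.25

def compute_total(net, region, tax_lookup):
    """Component under test; it depends on a tax-lookup component."""
    return net + net * tax_lookup(region)

def driver():
    """Component driver: calls the component with each test case and records the verdict."""
    cases = [
        ((100.0, "north"), 125.0),
        ((0.0, "south"), 0.0),
    ]
    results = []
    for (net, region), expected in cases:
        actual = compute_total(net, region, tax_lookup=tax_rate_stub)
        results.append(actual == expected)
    return results

print(driver())  # [True, True]
```

Passing the lookup as a parameter is one design choice that makes the stub easy to swap for the real component later.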

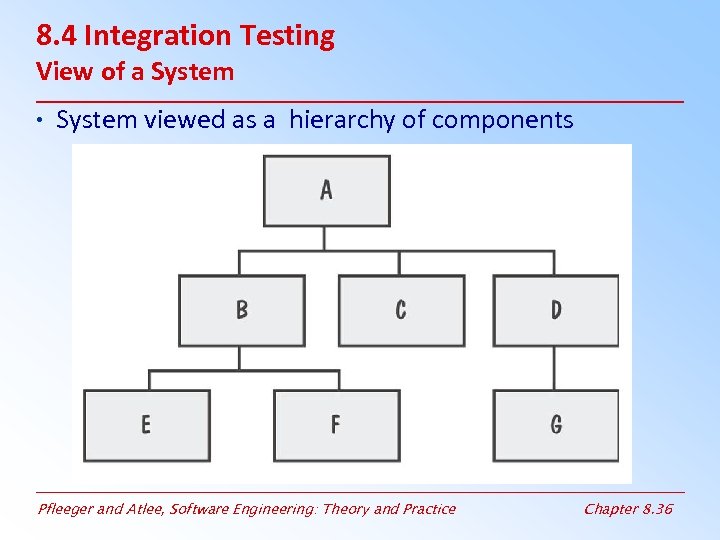

8.4 Integration Testing: View of a System
• System viewed as a hierarchy of components

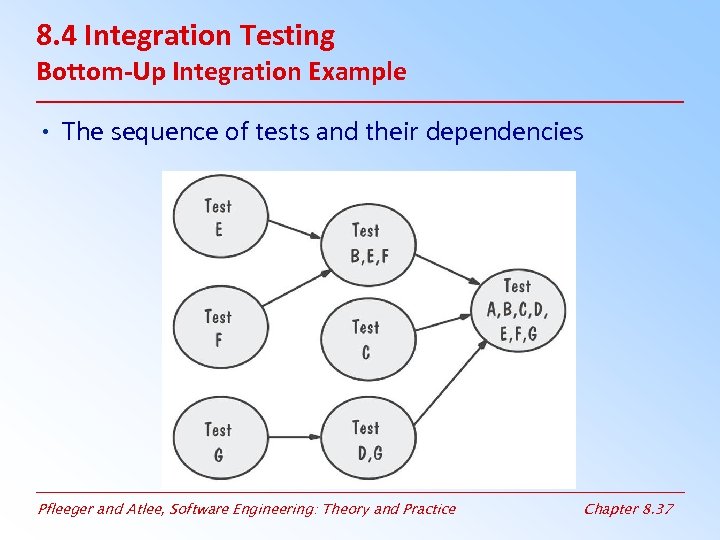

8.4 Integration Testing: Bottom-Up Integration Example
• The sequence of tests and their dependencies

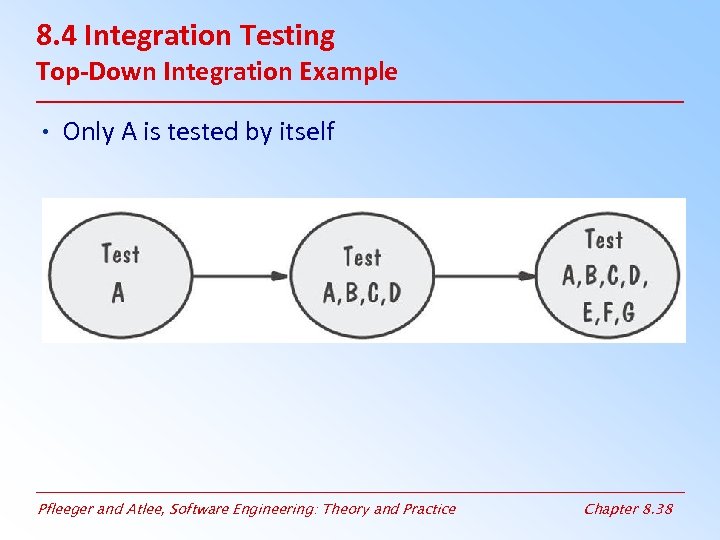

8.4 Integration Testing: Top-Down Integration Example
• Only A is tested by itself
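The bottom-up and top-down sequences can be derived from the component hierarchy. The sketch below assumes a hypothetical tree in which A calls B, C, and D, B calls E and F, and D calls G. Top-down groups components by depth from the top; bottom-up groups them by height, so a component is reached only after everything it calls.

```python
def depth_groups(hierarchy, root):
    """Top-down order: components grouped by distance from the root."""
    groups, current = [], [root]
    while current:
        groups.append(current)
        current = [child for parent in current
                   for child in hierarchy.get(parent, [])]
    return groups

def height_groups(hierarchy, root):
    """Bottom-up order: leaves first, then components whose callees are all done."""
    heights = {}

    def height(node):
        children = hierarchy.get(node, [])
        heights[node] = 1 + max(map(height, children)) if children else 0
        return heights[node]

    height(root)
    groups = [[] for _ in range(heights[root] + 1)]
    for node in sorted(heights):
        groups[heights[node]].append(node)
    return groups

# Hypothetical hierarchy: each key calls the components in its list.
hierarchy = {"A": ["B", "C", "D"], "B": ["E", "F"], "D": ["G"]}
print(depth_groups(hierarchy, "A"))   # [['A'], ['B', 'C', 'D'], ['E', 'F', 'G']]
print(height_groups(hierarchy, "A"))  # [['C', 'E', 'F', 'G'], ['B', 'D'], ['A']]
```

In the top-down order every group below the first needs stubs for the groups after it; in the bottom-up order every group except the last needs drivers.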

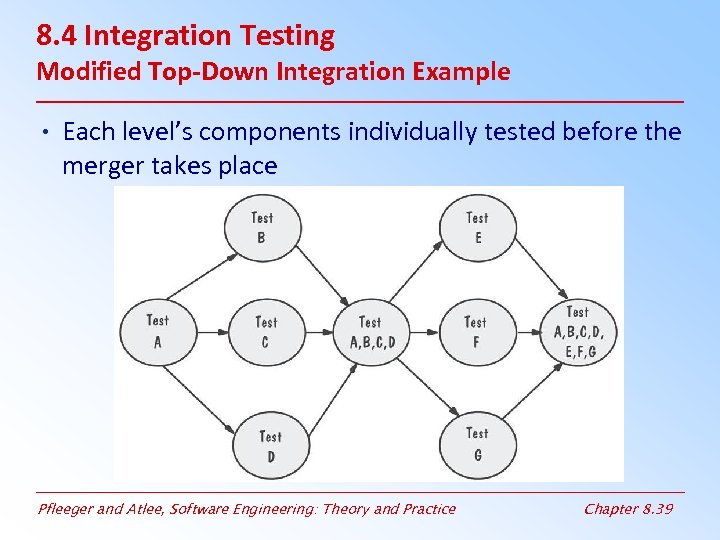

8.4 Integration Testing: Modified Top-Down Integration Example
• Each level’s components individually tested before the merger takes place

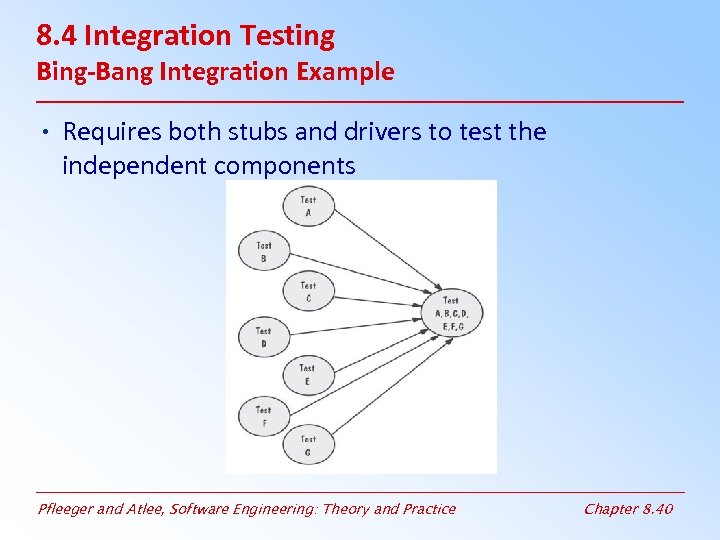

8.4 Integration Testing: Big-Bang Integration Example
• Requires both stubs and drivers to test the independent components

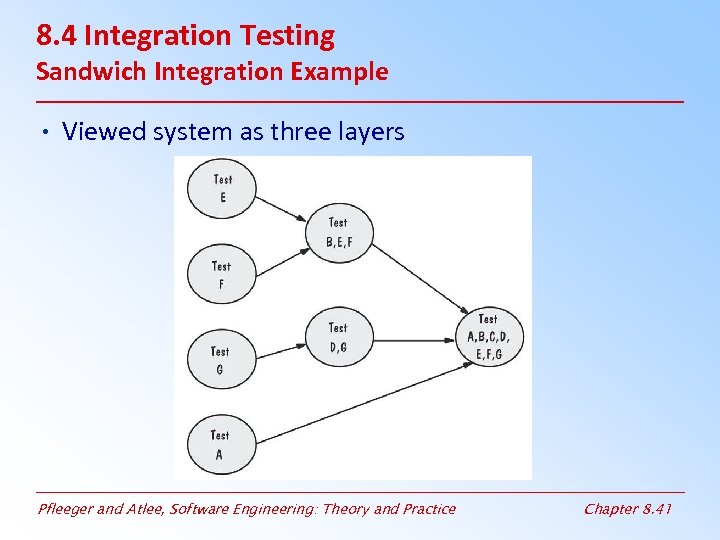

8.4 Integration Testing: Sandwich Integration Example
• System viewed as three layers

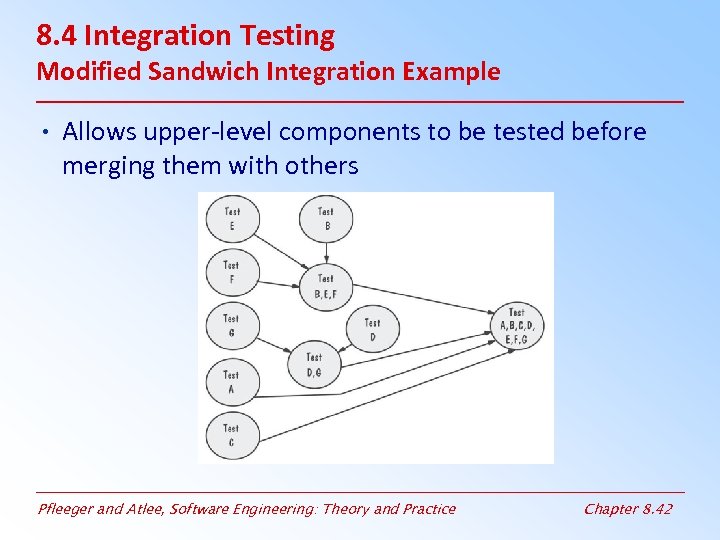

8.4 Integration Testing: Modified Sandwich Integration Example
• Allows upper-level components to be tested before merging them with others

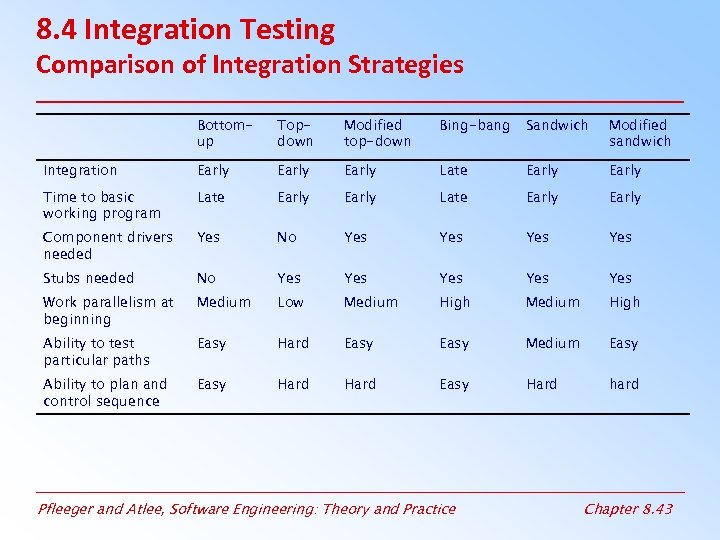

8.4 Integration Testing: Comparison of Integration Strategies
Strategies compared: bottom-up, top-down, modified top-down, big-bang, sandwich, modified sandwich
• Integration: Early, Late, Early
• Time to basic working program: Late, Early
• Component drivers needed: Yes, No, Yes, Yes
• Stubs needed: No, Yes, Yes, Yes
• Work parallelism at beginning: Medium, Low, Medium, High
• Ability to test particular paths: Easy, Hard, Easy, Medium, Easy
• Ability to plan and control sequence: Easy, Hard, Hard

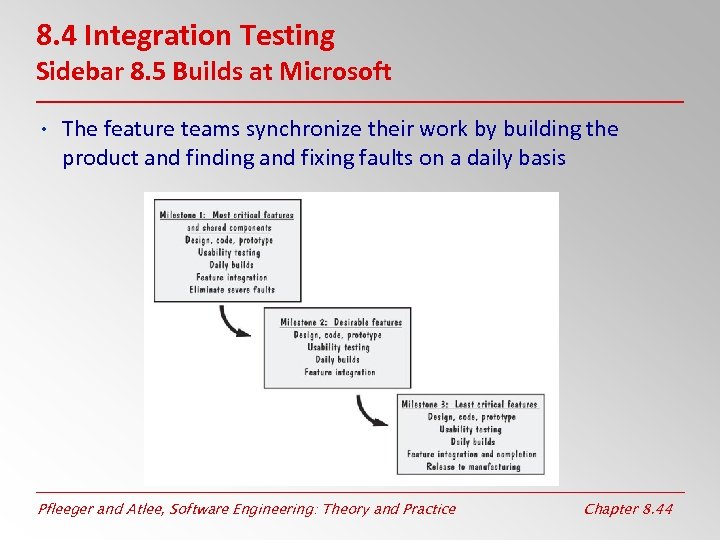

8.4 Integration Testing: Sidebar 8.5 Builds at Microsoft
• The feature teams synchronize their work by building the product and finding and fixing faults on a daily basis

8.5 Testing Object-Oriented Systems: Testing the Code
• Questions at the beginning of testing OO systems
  – Is there a path that generates a unique result?
  – Is there a way to select a unique result?
  – Are there useful cases that are not handled?
• Check objects for excesses and deficiencies
  – Missing objects
  – Unnecessary classes
  – Missing or unnecessary associations
  – Incorrect placement of associations or attributes

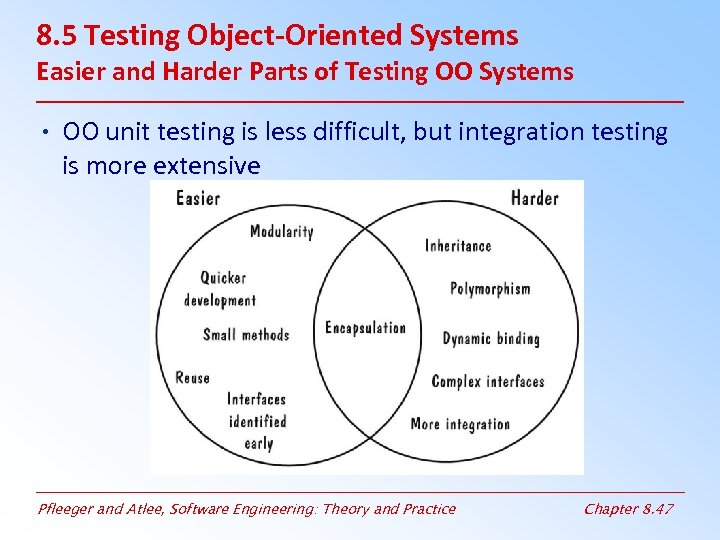

8.5 Testing Object-Oriented Systems: Easier and Harder Parts of Testing OO Systems
• OO unit testing is less difficult, but integration testing is more extensive

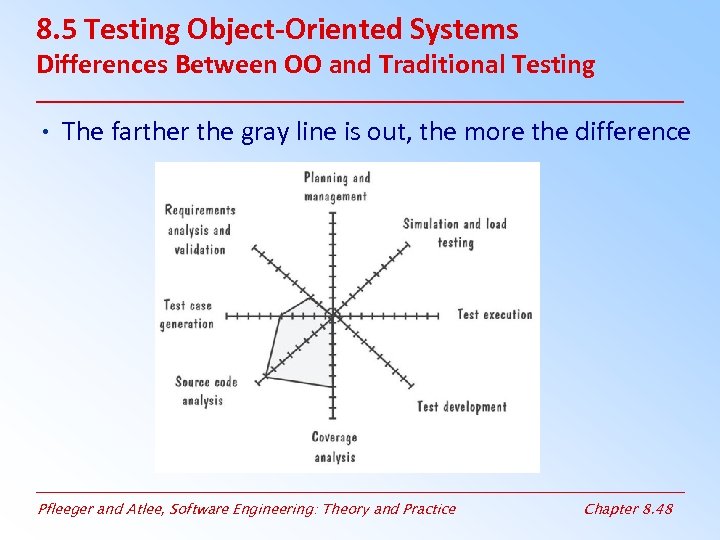

8.5 Testing Object-Oriented Systems: Differences Between OO and Traditional Testing
• The farther out the gray line is, the greater the difference

8.6 Test Planning
• Establish test objectives
• Design test cases
• Write test cases
• Test the test cases
• Execute tests
• Evaluate test results

8. 6 Test Planning Purpose of the Plan • Test plan explains – – who does the testing why the tests are performed how tests are conducted when the tests are scheduled • Test plan describes – That the software works correctly and is free of faults – That the software performs the functions as specified • The test plan is a guide to the entire testing activity Pfleeger and Atlee, Software Engineering: Theory and Practice Chapter 8. 50

8.6 Test Planning
Contents of the Plan
• What the test objectives are
• How the test will be run
• Criteria to determine that testing is complete
• Detailed list of test cases
• How test data is generated
• How output data will be captured
• Complete picture of how and why testing will be performed

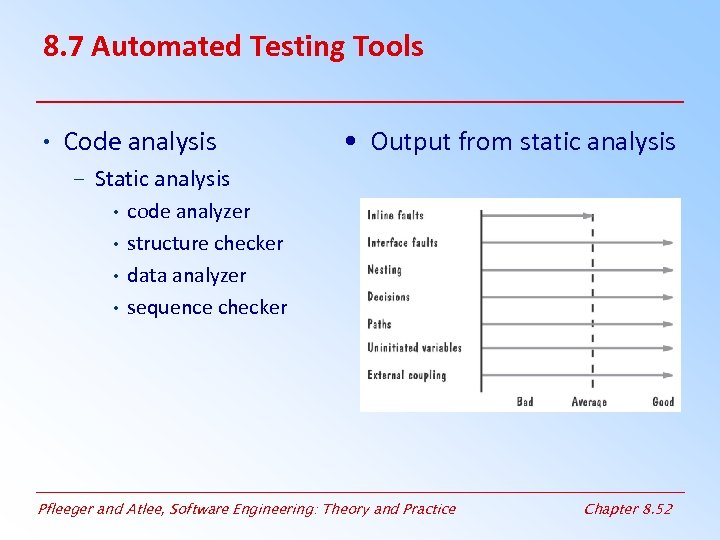

8.7 Automated Testing Tools
• Code analysis
  – Static analysis
    • code analyzer
    • structure checker
    • data analyzer
    • sequence checker
• Output from static analysis
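To make the "data analyzer" idea concrete, here is a minimal sketch of one static check: flagging names that are assigned but never read. It uses Python's standard `ast` module; the function name `unused_assignments` and the sample program are assumptions made for illustration, not a tool described in the chapter.

```python
import ast


def unused_assignments(source: str) -> list[str]:
    """Return names that are assigned in `source` but never read.

    A deliberately simple, scope-unaware check (illustrative only).
    """
    tree = ast.parse(source)
    stored, loaded = set(), set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Name):
            if isinstance(node.ctx, ast.Store):
                stored.add(node.id)   # name appears as an assignment target
            elif isinstance(node.ctx, ast.Load):
                loaded.add(node.id)   # name is read somewhere
    return sorted(stored - loaded)


# Hypothetical program under analysis: `count` is defined but never used.
sample = "total = 0\ncount = 5\nprint(total)\n"
print(unused_assignments(sample))  # ['count']
```

The code is analyzed without being executed, which is what distinguishes static analysis from the dynamic tools on the next slide.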

8.7 Automated Testing Tools (continued)
• Dynamic analysis
  – program monitors: watch and report program’s behavior
• Test execution
  – Capture and replay
  – Stubs and drivers
  – Automated testing environments
• Test case generators
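The stub and driver roles listed above can be sketched in a few lines. This is only an illustration: `invoice_total`, `tax_stub`, and `driver` are hypothetical names, and the component under test is invented for the example.

```python
def tax_stub(amount: float) -> float:
    """Stub: stands in for a called component that is not yet available."""
    return 0.0  # canned answer, no real logic


def invoice_total(amount: float, tax_fn) -> float:
    """Component under test: adds the tax computed by a called component."""
    return amount + tax_fn(amount)


def driver() -> bool:
    """Driver: calls the component under test with test data and checks the result."""
    return invoice_total(100.0, tax_stub) == 100.0


print(driver())  # True
```

The driver replaces the missing caller and the stub replaces the missing callee, so the component can be exercised in isolation.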

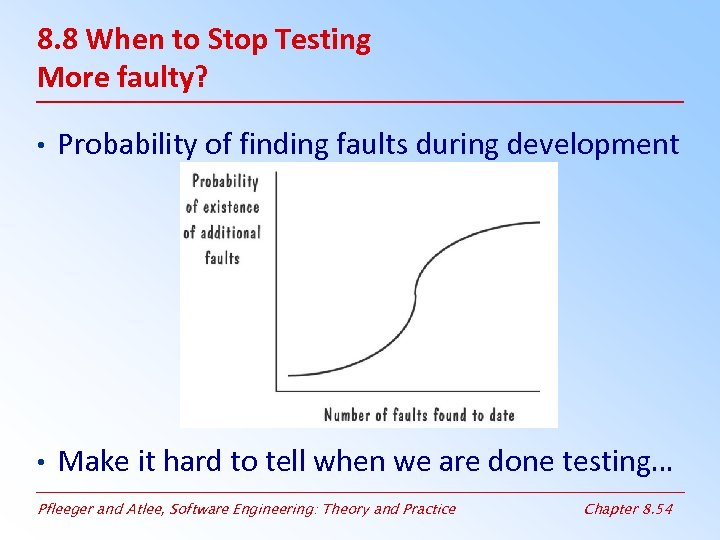

8.8 When to Stop Testing
More faulty?
• Probability of finding faults during development
• This makes it hard to tell when we are done testing…

8.8 When to Stop Testing
Stopping Approaches
• Fault seeding
  – Intentionally insert a known number of faults
  – Undiscovered seeded faults lead to an estimate of total faults:

$$\frac{\text{detected seeded faults}}{\text{total seeded faults}} = \frac{\text{detected nonseeded faults}}{\text{total nonseeded faults}}$$

  – Improved by basing number of seeds on historical data
  – Improved by using two independent test groups
    • Comparing results
• Coverage Criteria
  – Determine how many statement, path or branch tests are required
  – Use total to track completeness of tests
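The fault-seeding ratio can be solved for the unknown total number of nonseeded faults. The sketch below does that arithmetic; the function name and the numbers in the usage lines are hypothetical, chosen only to show the calculation.

```python
def estimate_nonseeded_total(seeded_total: int,
                             seeded_found: int,
                             nonseeded_found: int) -> float:
    """Solve the seeding ratio for the total number of nonseeded faults.

    detected seeded / total seeded = detected nonseeded / total nonseeded
    """
    if seeded_found == 0:
        raise ValueError("no seeded faults detected; the ratio is undefined")
    return nonseeded_found * seeded_total / seeded_found


# Hypothetical figures: 100 faults seeded, 70 of them found,
# and 14 nonseeded faults found during the same testing.
total = estimate_nonseeded_total(100, 70, 14)
print(total)        # 20.0 estimated nonseeded faults in total
print(total - 14)   # 6.0 estimated still undiscovered
```

The estimate is only as good as the assumption that seeded faults are as easy or hard to find as the real ones.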

8.8 When to Stop Testing
Stopping Approaches (continued)
• Confidence in the software
  – Based on fault estimates to find confidence
    • e.g. 95% confident the software is fault free

$$C = \begin{cases} 1 & \text{if } n > N \\ \dfrac{S}{S - N + 1} & \text{if } n \le N \end{cases}$$

• Where
  – S = number of seeds
  – N = number of actual faults
  – n = number of found faults
• Assumes all faults have equal probability of being detected
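A short sketch of the confidence formula follows. Two readings are assumptions here rather than statements from the slide: N is taken as the number of actual (nonseeded) faults claimed for the software, and the formula is applied after all S seeded faults have been found. The helper `seeds_needed` is hypothetical; it simply searches for the smallest S that reaches a target confidence.

```python
def confidence(S: int, N: int, n: int) -> float:
    """C = 1 if n > N, else S / (S - N + 1)."""
    if n > N:
        return 1.0
    return S / (S - N + 1)


def seeds_needed(target_percent: int, N: int = 0) -> int:
    """Smallest number of seeds S with S / (S - N + 1) >= target_percent / 100.

    Integer arithmetic avoids floating-point edge cases at the boundary.
    """
    if not 0 < target_percent < 100:
        raise ValueError("target_percent must be between 1 and 99")
    S = N
    while S * 100 < target_percent * (S - N + 1):
        S += 1
    return S


print(round(confidence(10, 0, 0), 3))  # 0.909 with 10 seeds and a fault-free claim
print(seeds_needed(95))                # 19 seeds for 95% confidence
```

With a fault-free claim (N = 0), 10 seeds give roughly 91% confidence, and 19 seeds are needed to reach the 95% mentioned on the slide.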

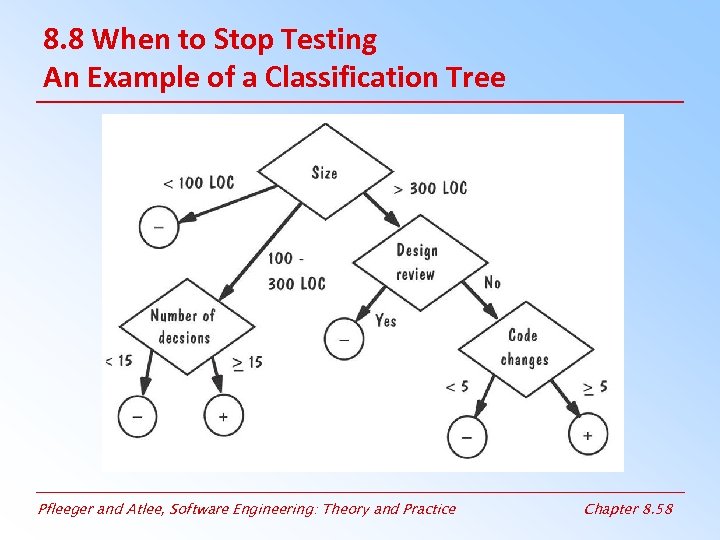

8.8 When to Stop Testing
Identifying Fault-Prone Code
• Track the number of faults found in each component during development
• Collect measurements (e.g., size, number of decisions) about each component
• Classification trees: a statistical technique that sorts through large arrays of measurement information and creates a decision tree to show the best predictors
  – A tree helps in deciding which components are likely to have a large number of errors
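As a rough sketch of how a classification tree picks its first question, the code below searches component measurements for the single threshold that best separates fault-prone components from the rest. Everything here is an assumption for illustration: the Gini impurity measure is one common splitting criterion (the slide does not name one), and the component data are invented, not taken from the book.

```python
def gini(labels: list[bool]) -> float:
    """Gini impurity of a group of fault-prone (True) / not (False) labels."""
    if not labels:
        return 0.0
    p = sum(labels) / len(labels)
    return 2 * p * (1 - p)


def best_split(rows, metrics):
    """Return (impurity, metric, threshold) for the best single split.

    rows: list of (measurements_dict, fault_prone_flag) pairs.
    """
    best = None
    for metric in metrics:
        for threshold in sorted({feat[metric] for feat, _ in rows}):
            left = [lab for feat, lab in rows if feat[metric] <= threshold]
            right = [lab for feat, lab in rows if feat[metric] > threshold]
            if not left or not right:
                continue  # a split must put components on both sides
            score = (len(left) * gini(left) + len(right) * gini(right)) / len(rows)
            if best is None or score < best[0]:
                best = (score, metric, threshold)
    return best


# Hypothetical measurements: (size in lines, number of decisions), fault-prone?
components = [
    ({"size": 120, "decisions": 4}, False),
    ({"size": 300, "decisions": 6}, False),
    ({"size": 150, "decisions": 15}, True),
    ({"size": 800, "decisions": 22}, True),
    ({"size": 90, "decisions": 3}, False),
    ({"size": 400, "decisions": 18}, True),
]
print(best_split(components, ["size", "decisions"]))  # (0.0, 'decisions', 6)
```

On this invented data the number of decisions is a better predictor than size; a full tree would repeat the search within each resulting group.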

8.8 When to Stop Testing
An Example of a Classification Tree

8.9 Information Systems Example
Piccadilly System
• Test strategy that exercises every path
• Test scripts describe input and expected outcome
• If actual outcome is equal to expected outcome
  – Is the component fault-free?
  – Are we done testing?
• Use data-flow testing strategy rather than structural
  – Definition-use testing
  – Ensure each path is exercised
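The two questions above can be illustrated with a small test script, written as (input, expected outcome) pairs. The component `discount_rate` is hypothetical and contains a deliberately planted fault; it is not part of the Piccadilly system as described in the book.

```python
def discount_rate(spots: int) -> float:
    """Hypothetical component: discount by number of advertising spots booked."""
    if spots >= 10:
        return 0.10
    if spots >= 50:   # planted fault: this branch can never be reached
        return 0.20
    return 0.0


def run_script(script):
    """Run (input, expected) pairs; return (input, expected, actual) for mismatches."""
    return [(inp, exp, discount_rate(inp))
            for inp, exp in script
            if discount_rate(inp) != exp]


# Every actual outcome equals the expected outcome, yet the fault is still there.
script = [(5, 0.0), (12, 0.10)]
print(run_script(script))                  # []

# A case that exercises the remaining path exposes the fault.
print(run_script(script + [(60, 0.20)]))   # [(60, 0.2, 0.1)]
```

A passing script shows only that the exercised paths behave as expected, which is why the slide asks for a strategy that ensures each path is exercised.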

8.10 Real-Time Example
The Ariane-5 System
• The Ariane-5’s flight control system was tested in four ways
  – equipment testing
  – on-board computer software testing
  – staged integration
  – system validation tests
• The Ariane-5 developers relied on insufficient reviews and test coverage

8.11 What this Chapter Means for You
• It is important to understand the difference between faults and failures
• The goal of testing is to find faults, not to prove correctness
• The chapter describes techniques for testing
• Absence of faults doesn’t guarantee correctness
