
- Number of slides: 49

Chapter 6: System Integration and Performance

Chapter Outline
• System Bus
• Logical and Physical Access
• Device Controllers
• Interrupt Processing
• Buffers and Caches
• Processing Parallelism
• High-Performance Clustering
• Compression
• Technology Focus: MPEG and MP3

Chapter Goals
• Describe the system bus and bus protocol
• Describe how the CPU and bus interact with peripheral devices
• Describe the purpose and function of device controllers
• Describe how interrupt processing coordinates the CPU with secondary storage and I/O devices
• Describe how buffers, caches, and data compression improve computer system performance

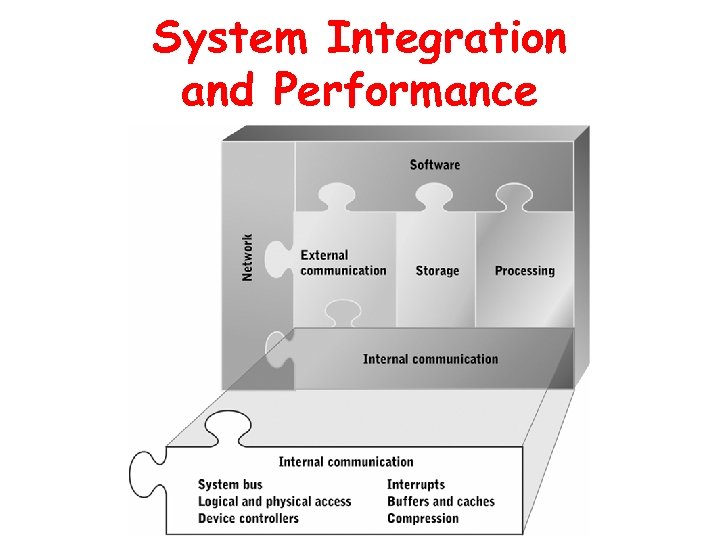

System Integration and Performance


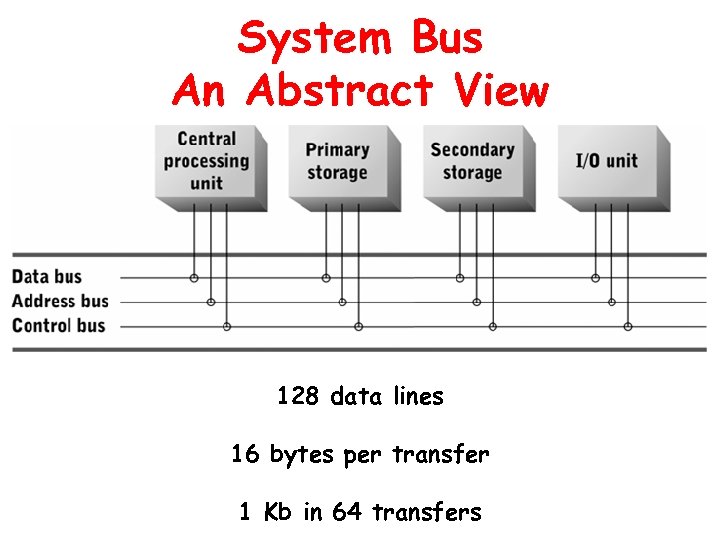

System Bus
• Connects CPU with main memory and peripheral devices
• Set of data lines, control lines, and status lines
• Bus protocol
  – Number and use of lines
  – Procedures for controlling access to the bus
• Subsets of bus lines: data bus, address bus, control bus

System Bus: An Abstract View
• 128 data lines
• 16 bytes per transfer
• 1 KB in 64 transfers
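
The arithmetic on this slide can be checked with a short sketch. The function name `transfers_needed` is illustrative and not from the source, and the sketch assumes each data line carries one bit per transfer.

```python
def transfers_needed(payload_bytes: int, data_lines: int) -> int:
    """Count the bus transfers needed to move payload_bytes over data_lines parallel lines."""
    # Assumption: every data line carries exactly one bit per transfer.
    bytes_per_transfer = data_lines // 8
    # Ceiling division, so a partial final transfer still counts as one transfer.
    return -(-payload_bytes // bytes_per_transfer)


# 1 KB (1024 bytes) over 128 data lines (16 bytes per transfer)
print(transfers_needed(1024, 128))
```

Doubling the number of data lines halves the number of transfers for the same payload, which is why bus width matters as much as clock rate.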

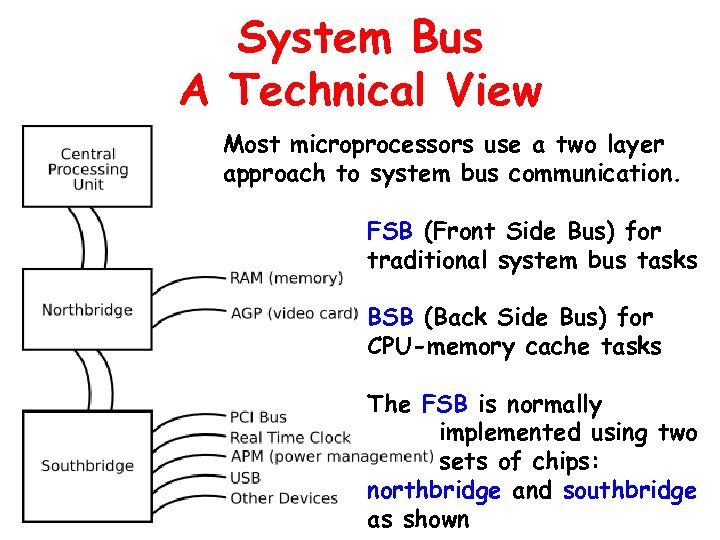

System Bus: A Technical View
Most microprocessors use a two-layer approach to system bus communication:
• FSB (front-side bus) for traditional system bus tasks
• BSB (back-side bus) for CPU-memory cache tasks
The FSB is normally implemented using two sets of chips, the northbridge and the southbridge, as shown.

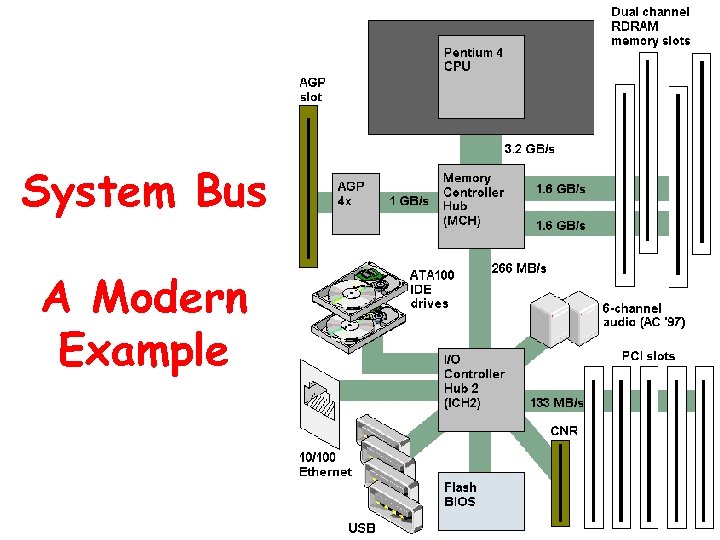

System Bus: A Modern Example

Bus Clock and Data Transfer Rate
• Bus clock pulse
  – Common timing reference for all attached devices
  – Frequency measured in MHz
• Bus cycle
  – Time interval from one clock pulse to the next
• Data transfer rate
  – Measure of communication capacity
  – Bus capacity = data transfer unit × clock rate
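
A minimal sketch of the capacity formula, assuming one data transfer per bus cycle. The function names and the 64-line, 100 MHz example are hypothetical values chosen for illustration, not figures from the source.

```python
def bus_cycle_ns(clock_mhz: float) -> float:
    """Bus cycle time: the interval between clock pulses, in nanoseconds."""
    return 1000.0 / clock_mhz


def bus_capacity_bytes_per_second(data_lines: int, clock_mhz: float) -> float:
    """Bus capacity = data transfer unit x clock rate."""
    transfer_unit_bytes = data_lines / 8   # one bit per data line per transfer
    clock_hz = clock_mhz * 1_000_000       # assumption: one transfer per clock cycle
    return transfer_unit_bytes * clock_hz


# Hypothetical bus: 64 data lines clocked at 100 MHz
print(bus_cycle_ns(100))
print(bus_capacity_bytes_per_second(64, 100))
```

The result is a theoretical peak; protocol overhead and contention for the bus reduce the rate a real system sustains.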

Bus Protocol
• Governs format, content, timing of data, memory addresses, and control messages sent across bus
• Approaches for access control
  – Master-slave approach
  – Peer-to-peer approach
• Approaches for transferring data without CPU
  – Direct memory access (DMA)
  – Peer-to-peer buses

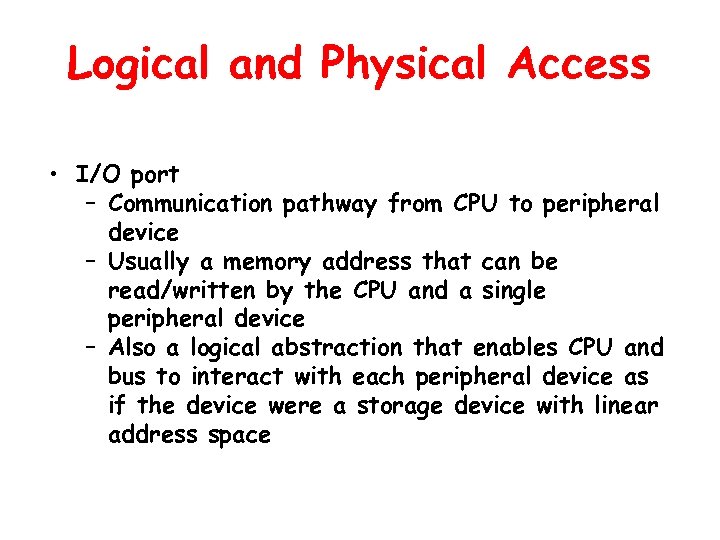

Logical and Physical Access
• I/O port
  – Communication pathway from CPU to peripheral device
  – Usually a memory address that can be read/written by the CPU and a single peripheral device
  – Also a logical abstraction that enables CPU and bus to interact with each peripheral device as if the device were a storage device with linear address space

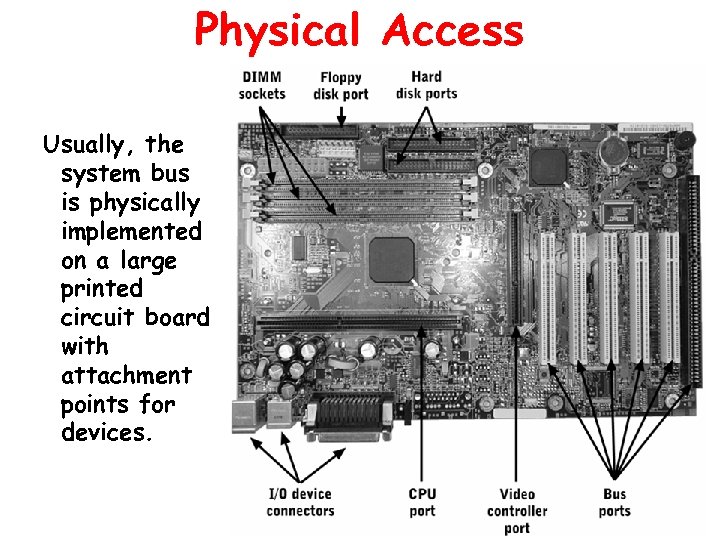

Physical Access
Usually, the system bus is physically implemented on a large printed circuit board with attachment points for devices.

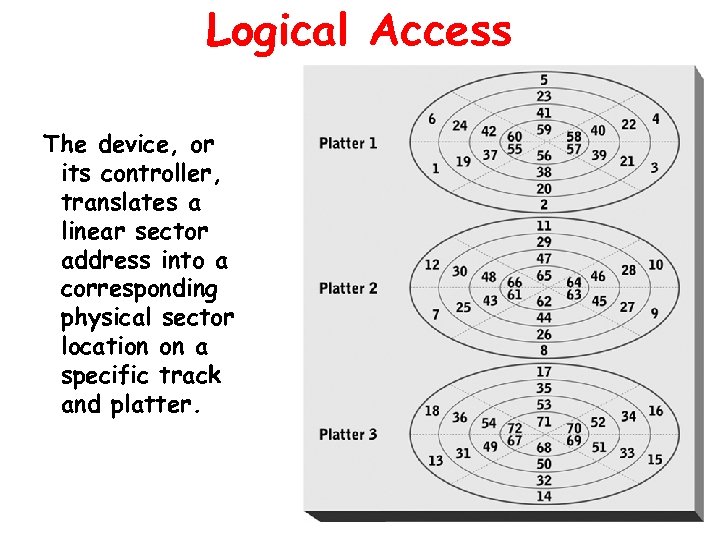

Logical Access
The device, or its controller, translates a linear sector address into a corresponding physical sector location on a specific track and platter.
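
The translation can be sketched with the classic cylinder/head/sector formula. The geometry below (4 recording surfaces, 63 sectors per track) and all names are assumptions for illustration; real drives use zoned recording and sector remapping, so their controllers do something more involved.

```python
from typing import NamedTuple


class PhysicalLocation(NamedTuple):
    track: int     # track (cylinder) number, starting at 0
    surface: int   # recording surface (head) number, starting at 0
    sector: int    # sector within the track, starting at 1 by convention


def linear_to_physical(linear_address: int,
                       surfaces: int = 4,
                       sectors_per_track: int = 63) -> PhysicalLocation:
    """Translate a linear sector address into an idealized physical location."""
    sectors_per_cylinder = surfaces * sectors_per_track
    track = linear_address // sectors_per_cylinder
    surface = (linear_address // sectors_per_track) % surfaces
    sector = linear_address % sectors_per_track + 1
    return PhysicalLocation(track, surface, sector)


print(linear_to_physical(0))
print(linear_to_physical(1000))
```

Because the controller hides this mapping, the CPU and operating system can treat the drive as a simple linear array of sectors.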

Device Controllers
• Implement the bus interface and access protocols
• Translate logical addresses into physical addresses
• Enable several devices to share access to a bus connection

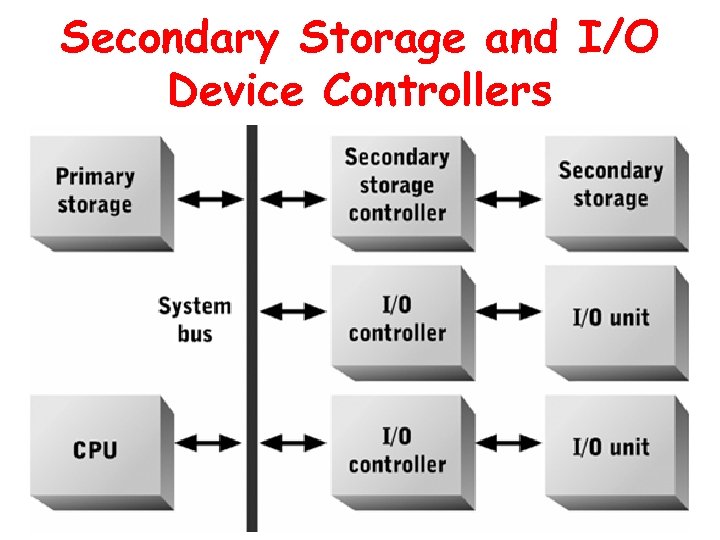

Secondary Storage and I/O Device Controllers


Mainframe Channels
• Advanced type of device controller used in mainframe computers
• Compared with device controllers:
  – Greater data transfer capacity
  – Larger maximum number of attached peripheral devices
  – Greater variability in types of devices that can be controlled

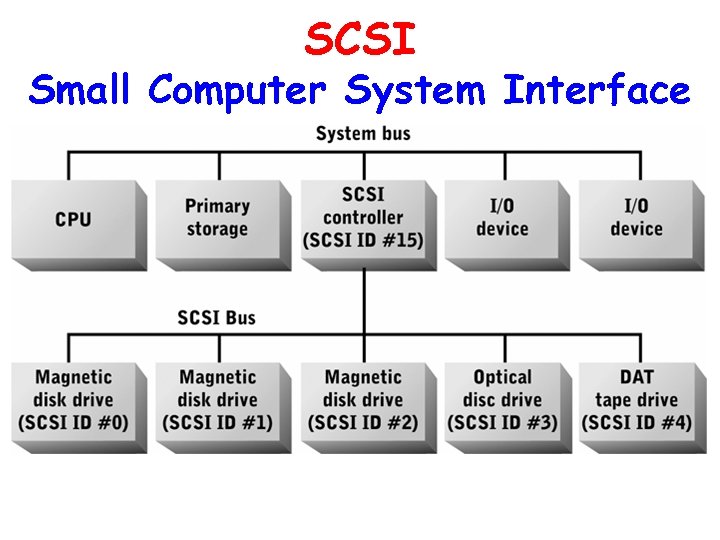

SCSI (Small Computer System Interface)
• Family of standard buses designed primarily for secondary storage devices
• Implements both a low-level physical I/O protocol and a high-level logical device control protocol

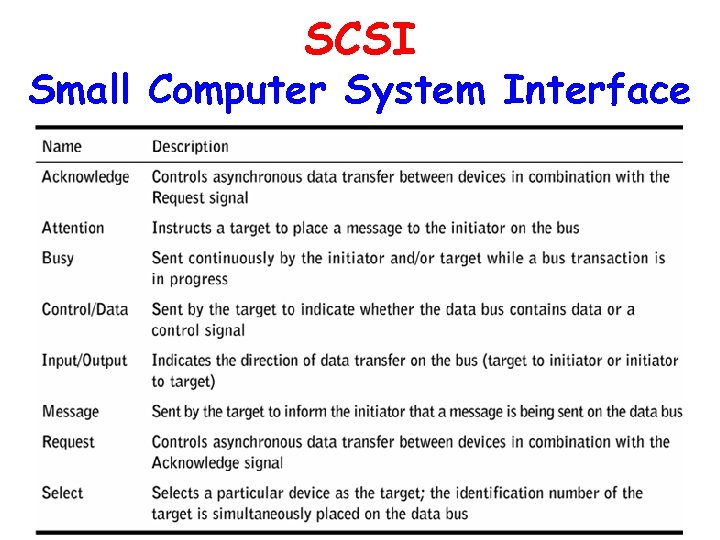

SCSI Small Computer System Interface


Desirable Characteristics of a SCSI Bus
• Non-proprietary standard
• High data transfer rate
• Peer-to-peer capability
• High-level (logical) data access commands
• Multiple command execution
• Interleaved command execution

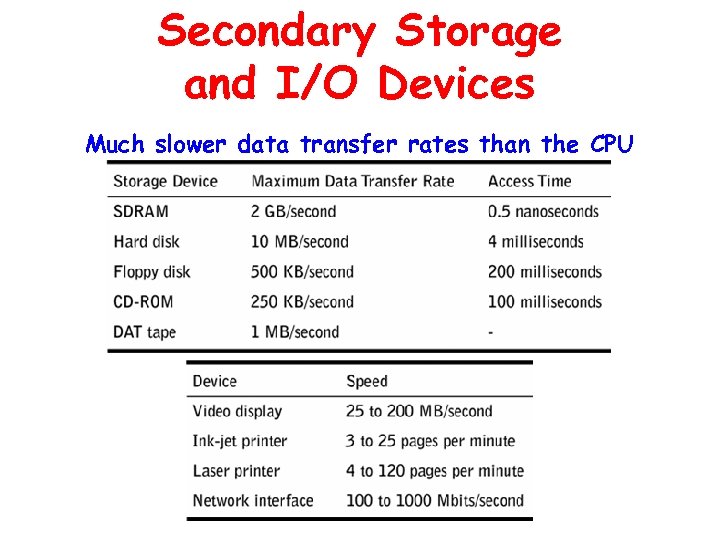

Secondary Storage and I/O Devices
Have much slower data transfer rates than the CPU

Interrupt Processing
• Used by application programs to coordinate data transfers to/from peripherals, notify the CPU of errors, and call operating system service programs
• When an interrupt is detected, the executing program is suspended; the CPU pushes current register values onto the stack and transfers control to an interrupt handler
• When the interrupt handler finishes executing, the stack is popped and the suspended process resumes from the point of interruption
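
The push, handle, pop sequence can be modeled with a toy simulation. `ToyCPU`, its two registers, and the handler address 9000 are hypothetical and exist only for this sketch; a real CPU performs these steps in hardware and microcode.

```python
class ToyCPU:
    """A toy CPU with two registers and a stack for saved register sets."""

    def __init__(self):
        self.registers = {"pc": 0, "acc": 0}
        self.stack = []
        self.log = []

    def handle_interrupt(self, handler):
        # Push: save the suspended program's register values.
        self.stack.append(dict(self.registers))
        # Transfer control: the handler may overwrite any register.
        handler(self)
        # Pop: restore the saved values so the program resumes where it stopped.
        self.registers = self.stack.pop()


def keyboard_handler(cpu):
    cpu.registers["pc"] = 9000   # hypothetical handler address
    cpu.registers["acc"] = 0     # the handler uses the accumulator for its own work
    cpu.log.append("keyboard serviced")


cpu = ToyCPU()
cpu.registers.update(pc=120, acc=42)   # state of the running program
cpu.handle_interrupt(keyboard_handler)
print(cpu.registers, cpu.log)
```

Using a stack rather than a single save area means a handler can itself be interrupted: each new interrupt pushes another register set, and they are restored in reverse order.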

Multiple Interrupts
• Categories of interrupts
  – I/O event
  – Error condition
  – Service request

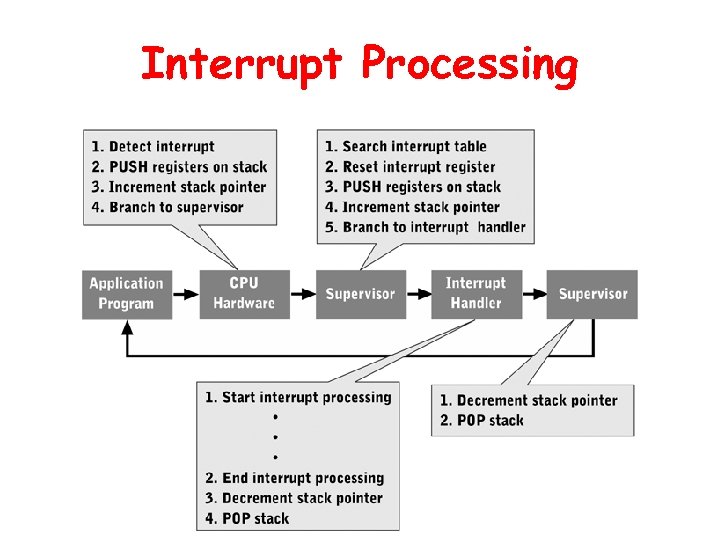

Interrupt Processing


Buffers and Caches
Both are used for the same kind of trade-off: hardware (memory) for CPU time.
Both improve overall computer system performance by employing RAM to overcome mismatches in
  – data transfer rate
  – data transfer unit size

Buffers
• Small storage areas (usually DRAM or SRAM) for data moving from one device to another
  – Data automatically forwarded
  – Each buffer is unidirectional
  – Size determined by device types
• Use interrupts to enable devices with different data transfer rates and unit sizes to efficiently coordinate data transfer
  – Buffer overflow possible
  – Used for almost all I/O device accesses
  – Data managed simply
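
A minimal sketch of a unidirectional buffer, assuming the sender delivers one byte at a time and an interrupt is raised each time the buffer fills. The `Buffer` class and `transfer` function are illustrative names, not part of the source.

```python
from collections import deque


class Buffer:
    """A fixed-capacity, first-in first-out, one-way buffer."""

    def __init__(self, capacity: int):
        self.capacity = capacity
        self.data = deque()
        self.overflows = 0

    def put(self, item) -> bool:
        if len(self.data) >= self.capacity:
            self.overflows += 1   # buffer overflow: the item is lost
            return False
        self.data.append(item)
        return True

    def is_full(self) -> bool:
        return len(self.data) == self.capacity

    def drain(self) -> list:
        """Forward everything to the receiver and empty the buffer."""
        items = list(self.data)
        self.data.clear()
        return items


def transfer(payload, capacity: int):
    """Move payload through a buffer, counting the interrupts raised."""
    buf, received, interrupts = Buffer(capacity), [], 0
    for byte in payload:
        buf.put(byte)
        if buf.is_full():          # full buffer: interrupt the receiver
            interrupts += 1
            received.extend(buf.drain())
    if buf.data:                   # final partial buffer
        interrupts += 1
        received.extend(buf.drain())
    return received, interrupts


print(transfer(list(range(10)), capacity=4))
```

With a 4-byte buffer, ten bytes arrive in three interrupts instead of ten, which is the sense in which a buffer trades memory for CPU time.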

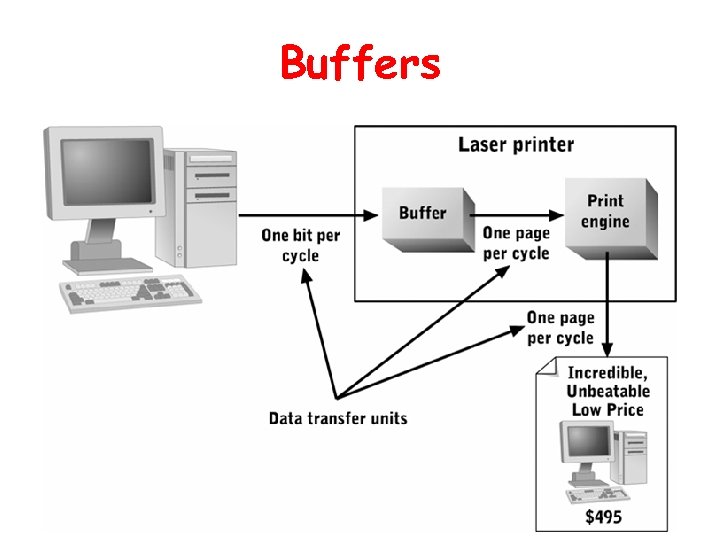

Buffers


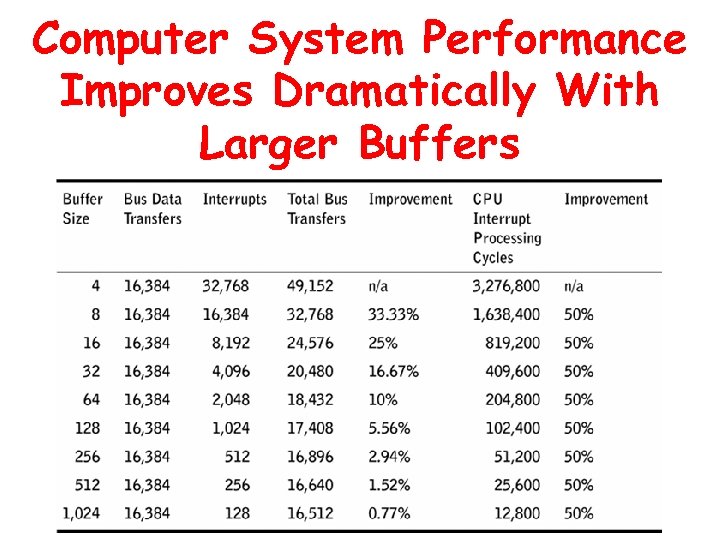

Computer System Performance Improves Dramatically With Larger Buffers


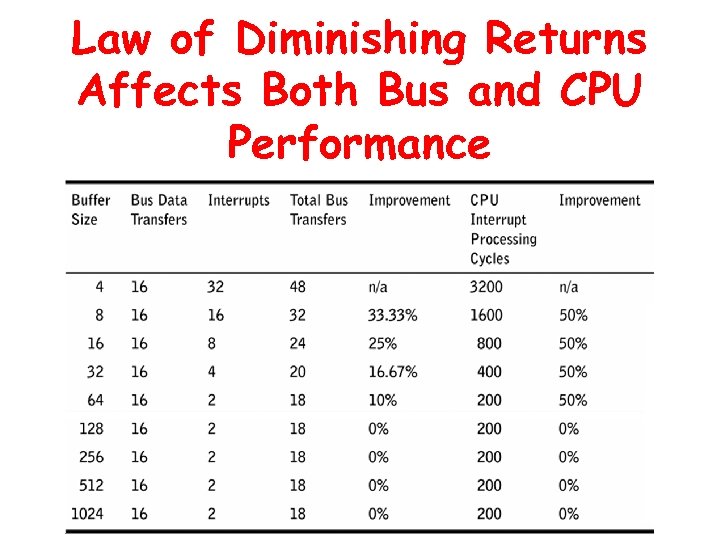

The Law of Diminishing Returns
“When multiple resources are required to produce something useful, adding more and more of a single resource produces fewer and fewer benefits.”
Applicable to buffer size
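
One way to see the law at work is to count the interrupts needed to move a fixed amount of data as the buffer grows. The sketch assumes one interrupt per filled buffer; the 1024-byte transfer and the list of sizes are hypothetical values.

```python
import math


def interrupts_needed(total_bytes: int, buffer_bytes: int) -> int:
    """Interrupts required if each filled buffer raises exactly one interrupt."""
    return math.ceil(total_bytes / buffer_bytes)


total = 1024
sizes = [1, 2, 4, 8, 16, 32, 64]
counts = [interrupts_needed(total, size) for size in sizes]
# Interrupts saved by each successive doubling of the buffer size
savings = [before - after for before, after in zip(counts, counts[1:])]

for size, count in zip(sizes, counts):
    print(f"buffer {size:>2} bytes: {count:>4} interrupts")
print("saved per doubling:", savings)
```

Each doubling costs twice as much memory as the previous one but saves only half as many interrupts, so the benefit per added byte keeps shrinking.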

Law of Diminishing Returns Affects Both Bus and CPU Performance


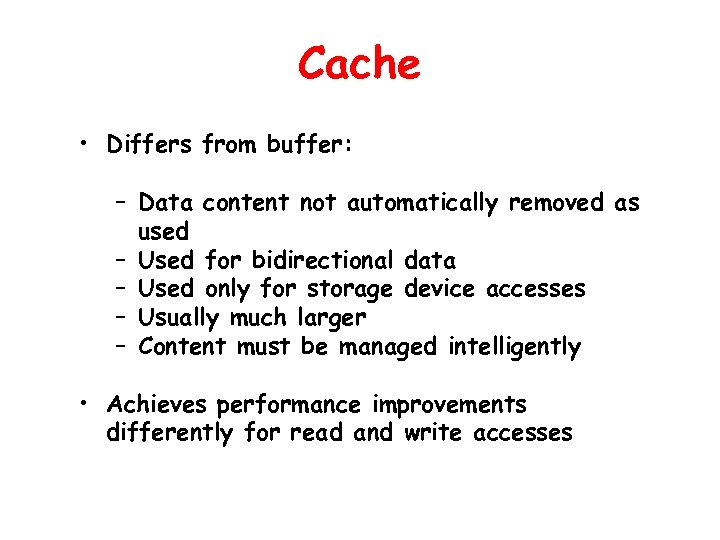

Cache
• Differs from buffer:
  – Data content not automatically removed as used
  – Used for bidirectional data
  – Used only for storage device accesses
  – Usually much larger
  – Content must be managed intelligently
• Achieves performance improvements differently for read and write accesses

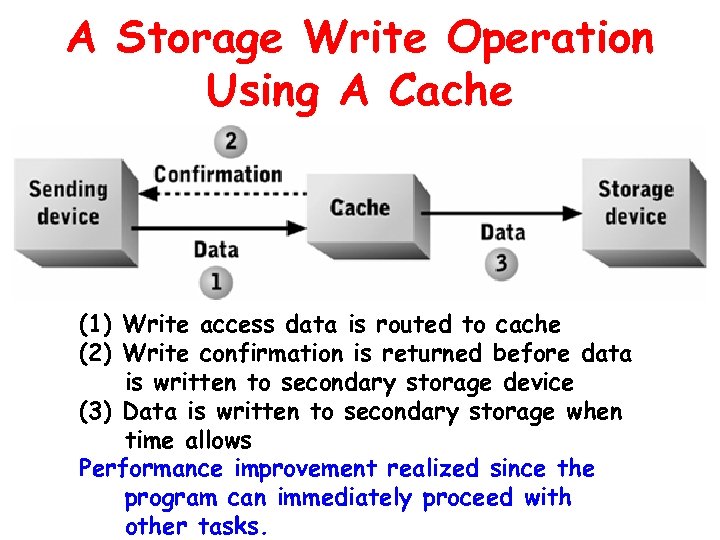

A Storage Write Operation Using a Cache
(1) Write access data is routed to cache
(2) Write confirmation is returned before data is written to secondary storage device
(3) Data is written to secondary storage when time allows
Performance improvement is realized because the program can immediately proceed with other tasks.
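
The three steps can be sketched as follows. `WriteBehindCache` is a hypothetical class, and a plain dictionary stands in for the slow secondary storage device.

```python
class WriteBehindCache:
    """Confirms writes immediately and copies them to storage later."""

    def __init__(self, storage: dict):
        self.storage = storage   # stand-in for the secondary storage device
        self.pending = {}        # cached writes not yet on the device

    def write(self, address, data) -> str:
        self.pending[address] = data   # (1) data is routed to the cache
        return "confirmed"             # (2) confirmation precedes the device write

    def flush(self) -> None:
        # (3) data is written to secondary storage when time allows
        self.storage.update(self.pending)
        self.pending.clear()


disk = {}
cache = WriteBehindCache(disk)
status = cache.write(7, b"payload")
print(status, disk)    # confirmed, but the device is still empty
cache.flush()
print(disk)
```

The gap between steps (2) and (3) is also the risk: if power fails before the flush, a write that was already confirmed is lost.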

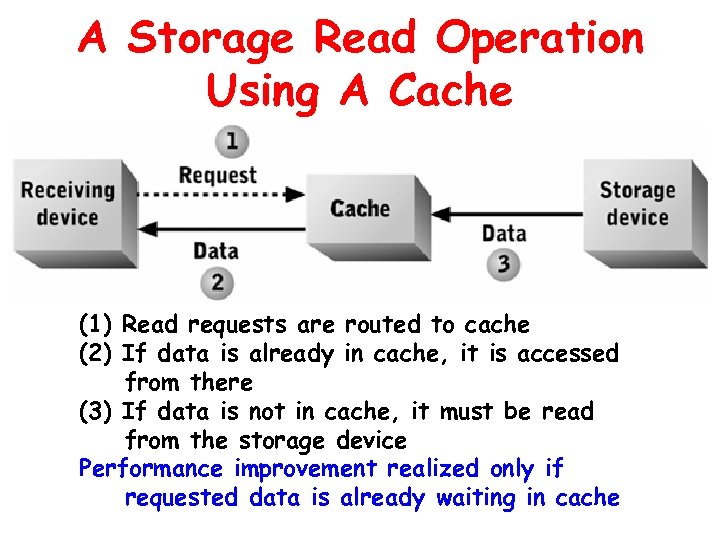

A Storage Read Operation Using a Cache
(1) Read requests are routed to cache
(2) If data is already in cache, it is accessed from there
(3) If data is not in cache, it must be read from the storage device
Performance improvement is realized only if requested data is already waiting in cache.
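
A minimal sketch of the read path that counts hits and misses. The `ReadCache` class is illustrative, the dictionary stands in for the storage device, and no eviction policy is modeled.

```python
class ReadCache:
    """Serves reads from the cache when possible, else from storage."""

    def __init__(self, storage: dict):
        self.storage = storage   # stand-in for the slow storage device
        self.lines = {}          # data currently held in the cache
        self.hits = 0
        self.misses = 0

    def read(self, address):
        if address in self.lines:        # (2) already cached: fast path
            self.hits += 1
            return self.lines[address]
        self.misses += 1                 # (3) not cached: go to the device
        data = self.storage[address]
        self.lines[address] = data       # keep a copy for later requests
        return data


disk = {1: "alpha", 2: "beta"}
cache = ReadCache(disk)
for address in (1, 1, 2, 1):
    cache.read(address)
print(cache.hits, cache.misses)
```

Only the repeated requests benefit; the first access to each address still pays the full cost of the storage device.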

Cache Controller
• Processor that manages cache content
• Guesses what data will be requested; loads it from storage device into cache before it is requested
• Can be implemented in
  – A storage device controller or communication channel
  – Operating system
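
One simple guessing strategy is read-ahead: when a block is missed, also load the blocks that follow it, on the assumption that access is sequential. The sketch below is one possible policy under that assumption, not the algorithm of any particular controller; `ReadAheadCache` and its `read_ahead` parameter are hypothetical.

```python
class ReadAheadCache:
    """On a miss, loads the requested block plus the next few blocks."""

    def __init__(self, storage: dict, read_ahead: int = 2):
        self.storage = storage       # stand-in for the storage device
        self.read_ahead = read_ahead
        self.cached = {}
        self.hits = 0
        self.misses = 0

    def read(self, block: int):
        if block in self.cached:
            self.hits += 1
        else:
            self.misses += 1
            # Guess: the following blocks will be requested soon, so load them now.
            for b in range(block, block + self.read_ahead + 1):
                if b in self.storage:
                    self.cached[b] = self.storage[b]
        return self.cached[block]


disk = {n: f"block-{n}" for n in range(6)}
cache = ReadAheadCache(disk, read_ahead=2)
for n in range(6):          # a sequential scan of blocks 0 through 5
    cache.read(n)
print(cache.hits, cache.misses)
```

For a sequential scan most requests become hits, while for random access the prefetched blocks are mostly wasted work.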

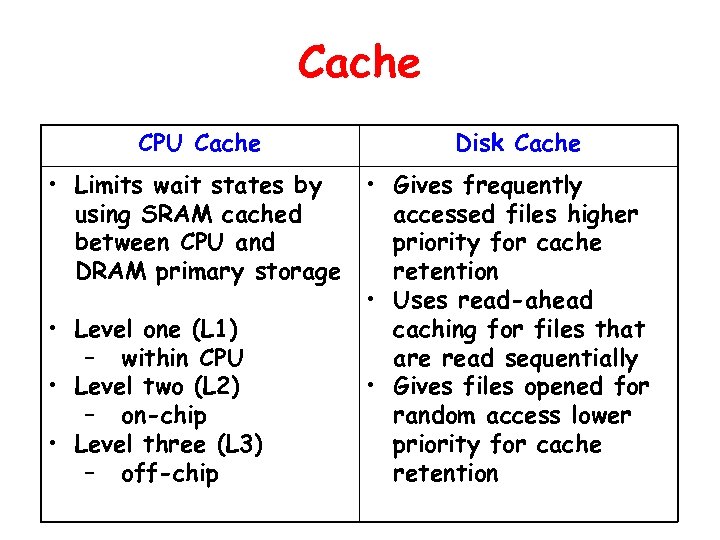

Cache
CPU Cache
• Limits wait states by using an SRAM cache between the CPU and DRAM primary storage
• Level one (L1) – within CPU
• Level two (L2) – on-chip
• Level three (L3) – off-chip
Disk Cache
• Gives frequently accessed files higher priority for cache retention
• Uses read-ahead caching for files that are read sequentially
• Gives files opened for random access lower priority for cache retention

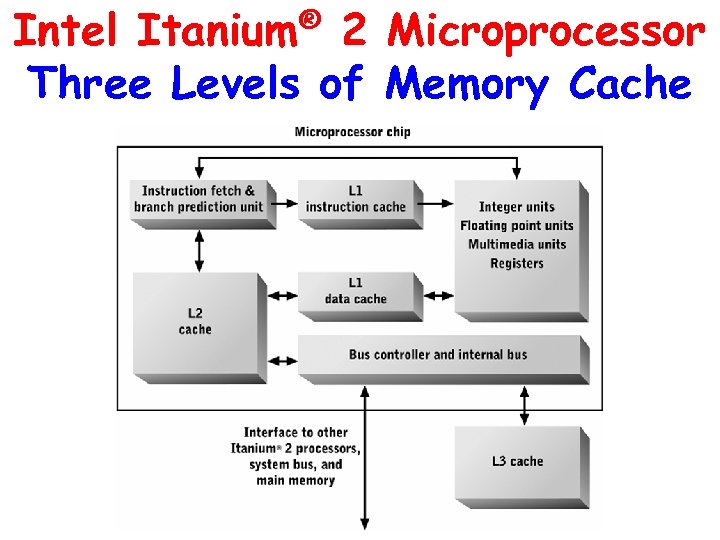

Intel Itanium® 2 Microprocessor: Three Levels of Memory Cache

Processing Parallelism
• Increases computer system computational capacity
  – Breaks problems into pieces
  – Solves each piece in parallel with separate CPUs
• Techniques
  – Multicore processors
  – Multi-CPU architecture
  – Clustering
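
The decomposition idea can be sketched with Python's standard `concurrent.futures` module. The sum-of-squares problem and the function names are illustrative assumptions. Worker threads are used here to keep the example simple; in CPython they show how the problem is split rather than delivering a true multi-CPU speedup, for which separate processes or machines would be used.

```python
from concurrent.futures import ThreadPoolExecutor


def partial_sum(chunk):
    """Solve one piece of the problem: sum the squares in a chunk."""
    return sum(x * x for x in chunk)


def parallel_sum_of_squares(values, workers: int = 4) -> int:
    # Break the problem into roughly equal pieces, one per worker.
    size = max(1, -(-len(values) // workers))
    chunks = [values[i:i + size] for i in range(0, len(values), size)]
    # Hand each piece to a separate worker, then combine the partial results.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(partial_sum, chunks))


values = list(range(1000))
print(parallel_sum_of_squares(values))
```

The approach works because each piece is independent; problems whose pieces must exchange data constantly gain much less from being split.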

Multicore Processors
• Include multiple CPUs and shared memory cache in a single microchip
• Typically share memory cache, memory interface, and off-chip I/O circuitry among the cores
• Reduce total transistor count and cost and provide synergistic benefits

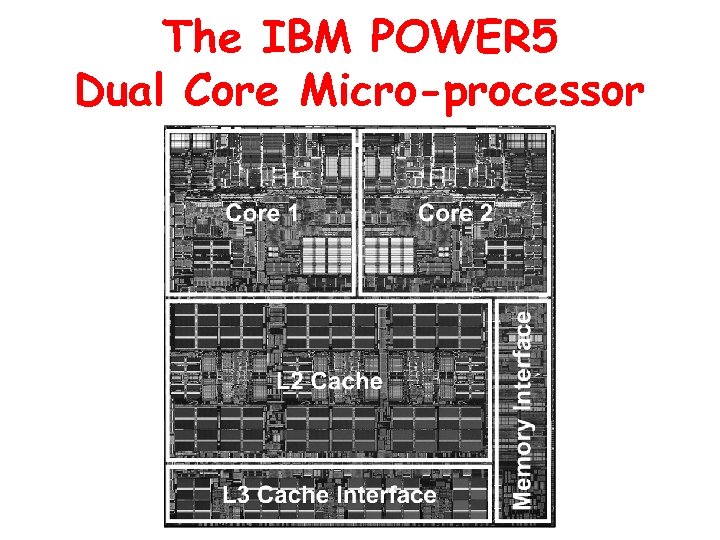

The IBM POWER5 Dual-Core Microprocessor

Multi-CPU Architecture
• Employs multiple single or multicore processors sharing main memory and the system bus within a single motherboard or computer system
• Common in midrange computers, mainframe computers, and supercomputers
• Cost-effective for
  – Single system that executes many different application programs and services
  – Workstations

Scaling Up
• Increasing processing capacity by using larger and more powerful computers
• Used to be the most cost-effective approach
• Still cost-effective when maximal computer power is required and flexibility is not as important

Scaling Out
• Partitioning processing among multiple systems
• Faster communication networks have diminished the relative performance penalty
• Economies of scale have lowered costs
• Distributed organizational structures emphasize flexibility
• Improved software for managing multiprocessor configurations

High-Performance Clustering
• Connects separate computer systems with high-speed interconnections
• Used for the largest computational problems (e.g., modeling three-dimensional physical phenomena)

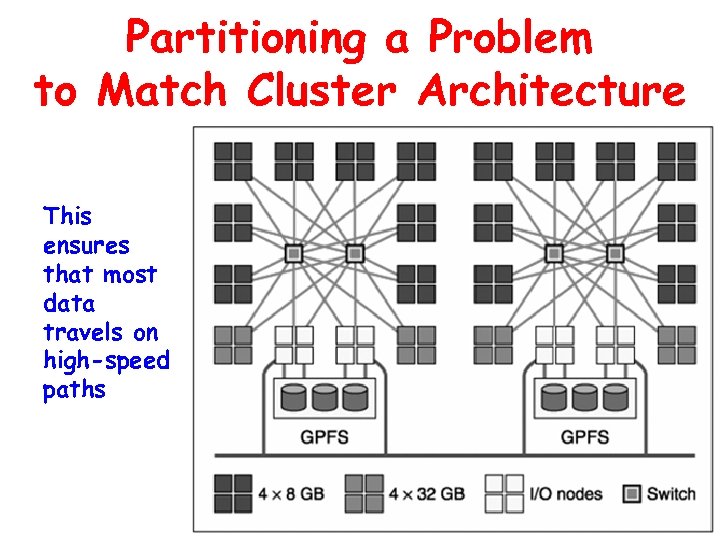

Partitioning a Problem to Match Cluster Architecture
This ensures that most data travels on high-speed paths.

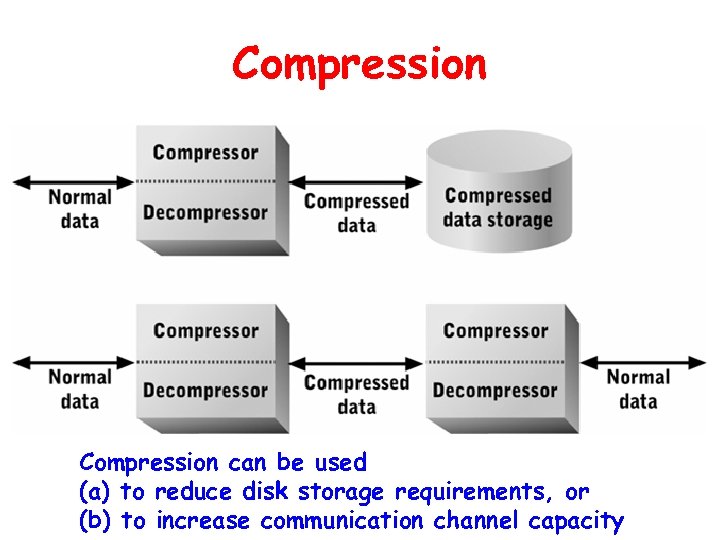

Compression
• Reduces number of bits required to encode a data set or stream
• Effectively increases capacity of a communication channel or storage device
• Requires increased processing resources to implement compression/decompression algorithms while reducing resources needed for data storage and/or communication

Compression Algorithms
• Vary in:
  – Type(s) of data for which they are best suited
  – Whether information is lost during compression
  – Amount by which data is compressed
  – Computational complexity
• Lossless versus lossy compression
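
The lossless/lossy distinction can be illustrated with a short sketch. The lossless half uses Python's standard `zlib` module; the lossy half is a deliberately crude quantization (dropping the low four bits of each sample) chosen for illustration, not how MPEG or MP3 work. The sample data is made up.

```python
import zlib

# Lossless: the original bytes are recovered exactly.
original = b"ABABABABAB" * 100            # highly repetitive, so it compresses well
compressed = zlib.compress(original)
restored = zlib.decompress(compressed)
print(len(original), len(compressed), restored == original)

# Lossy: keep only the high four bits of each 8-bit sample.
samples = [17, 200, 35]                   # hypothetical 8-bit sample values
quantized = [s >> 4 for s in samples]     # half as many bits per sample
approximated = [q << 4 for q in quantized]
print(samples, approximated)              # close to the originals, but not equal
```

The lossless round trip costs CPU time on both ends, while the lossy version saves space by permanently discarding detail the application is assumed not to need.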

Compression can be used (a) to reduce disk storage requirements, or (b) to increase communication channel capacity


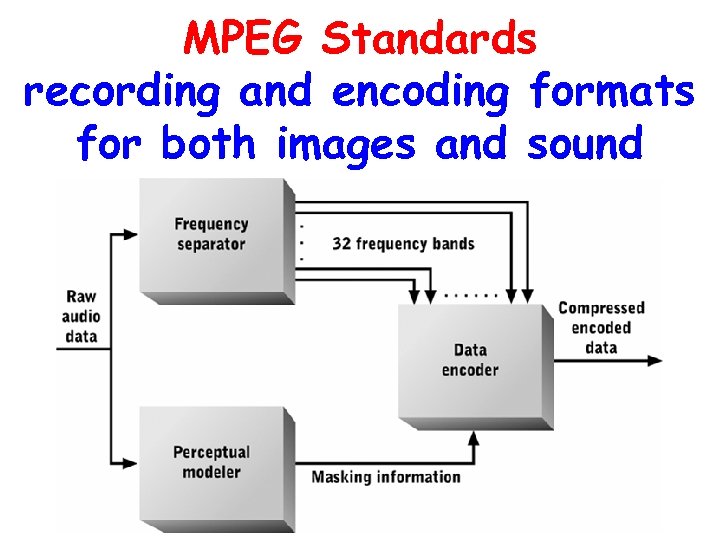

MPEG
Standard recording and encoding formats for both images and sound

Summary
• How the CPU uses the system bus and device controllers to communicate with secondary storage and input/output devices
• Hardware and software techniques for improving data efficiency, and thus overall computer system performance: bus protocols, interrupt processing, buffering, caching, and compression
