- Number of slides: 35

Central Michigan University: Educator Evaluation Discussion

Jennifer S. Hammond, Ph.D.

- Grand Blanc High School Principal
- Michigan Association of Secondary School Principals Past President
- Michigan Council for Educator Effectiveness Member

Objectives:

- Review National Reform on Educator Evaluations
- Review changes to Michigan law regarding evaluations
- Discuss the charge of the Michigan Council for Educator Effectiveness (MCEE)
- Summarize the MCEE Interim Report
- Review the 2012-13 Pilot

National Council on Teacher Quality

- Teacher quality is the most important school-level variable in student achievement.
- Recognition that increasing teacher quality is key to raising student achievement.
- Specific emphasis on teacher effectiveness.

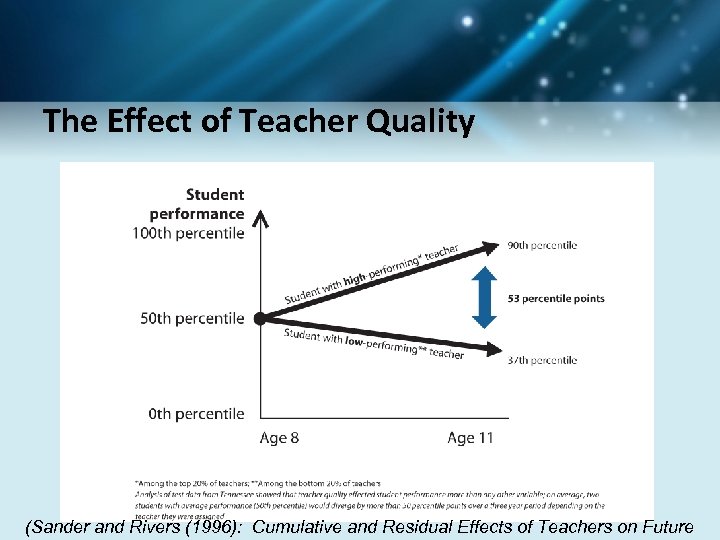

The Effect of Teacher Quality. Sanders and Rivers (1996): Cumulative and Residual Effects of Teachers on Future

Implementation Issues

- Timeline
- State Data System
- Training
  - Teachers
  - Principals/Other Evaluators
- Validation
- Funding
- Rewards/Consequences

The Education Trust

Whether schools are charters or traditional public schools, several features distinguish the high performers from all the rest. They don’t leave anything about teaching and learning to chance. An awful lot of our teachers, even brand new ones, are left to figure out on their own what to teach and what constitutes “good enough” work.

www.edtrust.org

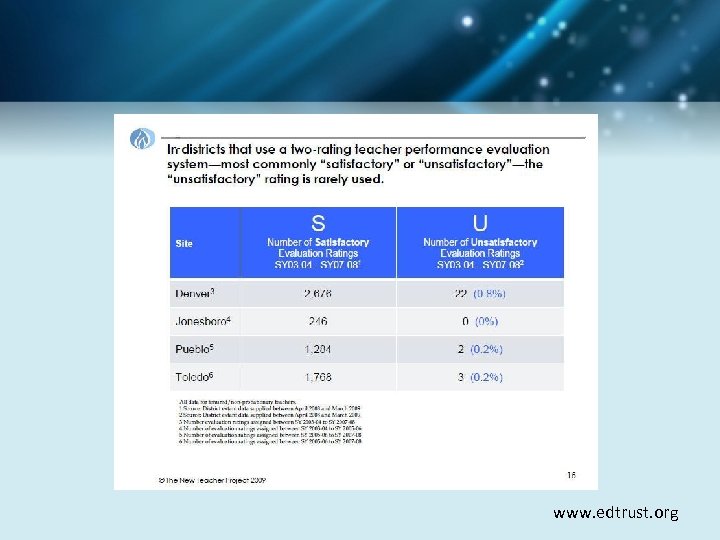

The Education Trust

The Widget Effect: “When it comes to measuring instructional performance, current policies and systems overlook significant differences between teachers. There is little or no differentiation of excellent teaching from good, good from fair, or fair from poor. This is the Widget Effect: a tendency to treat all teachers as roughly interchangeable, even when their teaching is quite variable. Consequently, teachers are not developed as professionals with individual strengths and capabilities, and poor performance is rarely identified or addressed.”

- The New Teacher Project, 2009

www.edtrust.org

www.edtrust.org

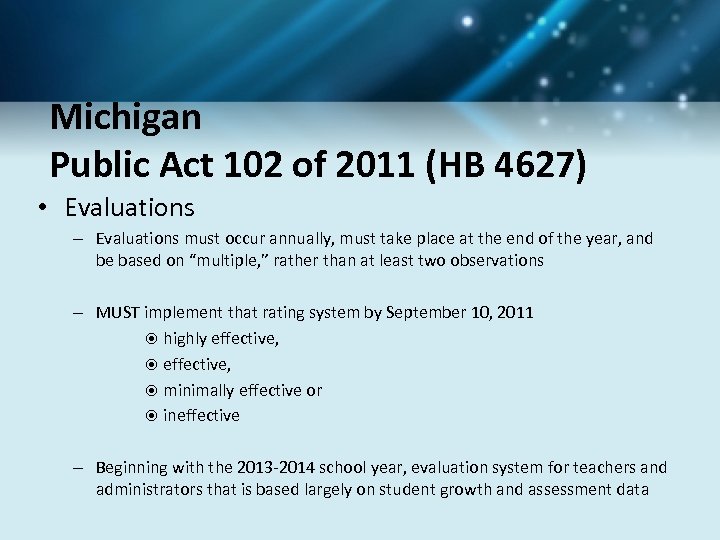

Michigan Public Act 102 of 2011 (HB 4627)

- Evaluations
  - Evaluations must occur annually, must take place at the end of the year, and must be based on “multiple” observations, rather than at least two
  - Districts MUST implement a rating system by September 10, 2011: highly effective, effective, minimally effective, or ineffective
  - Beginning with the 2013-2014 school year, the evaluation system for teachers and administrators is based largely on student growth and assessment data

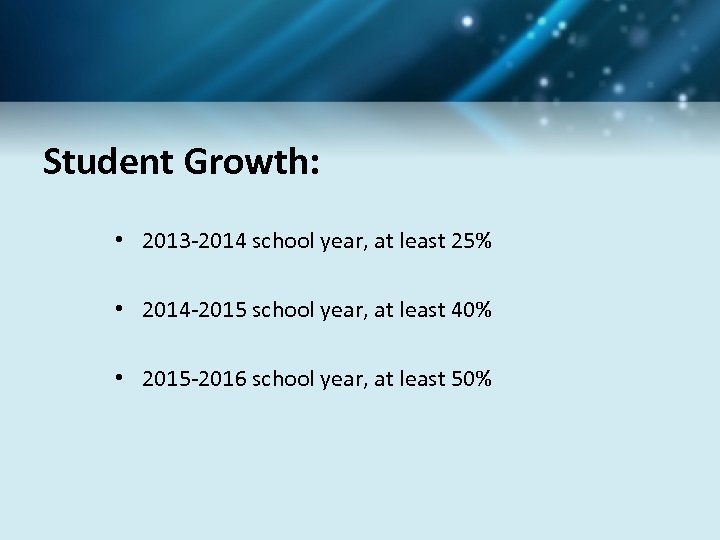

Student Growth:

- 2013-2014 school year, at least 25%
- 2014-2015 school year, at least 40%
- 2015-2016 school year, at least 50%
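
The phase-in schedule above can be read as a lookup from school year to a minimum share of the year-end evaluation that rests on student growth and assessment data. The following is a minimal sketch under that reading; the names `MIN_GROWTH_SHARE`, `min_growth_share`, and `meets_minimum`, and the year-string format, are illustrative assumptions and not part of the statute or any state system.

```python
# Minimum share of the year-end evaluation tied to student growth,
# copied from the schedule on the slide. Names and formats are hypothetical.
MIN_GROWTH_SHARE = {
    "2013-2014": 0.25,
    "2014-2015": 0.40,
    "2015-2016": 0.50,
}


def min_growth_share(school_year: str) -> float:
    """Return the minimum growth share listed for a school year."""
    if school_year not in MIN_GROWTH_SHARE:
        # The slide lists only three school years, so anything else is unknown here.
        raise ValueError(f"No minimum listed for {school_year}")
    return MIN_GROWTH_SHARE[school_year]


def meets_minimum(school_year: str, growth_share: float) -> bool:
    """Check whether a district's growth share reaches the listed minimum."""
    return growth_share >= min_growth_share(school_year)
```

For example, a district that weights growth data at 30% would pass the check for 2013-2014 but not for 2014-2015.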

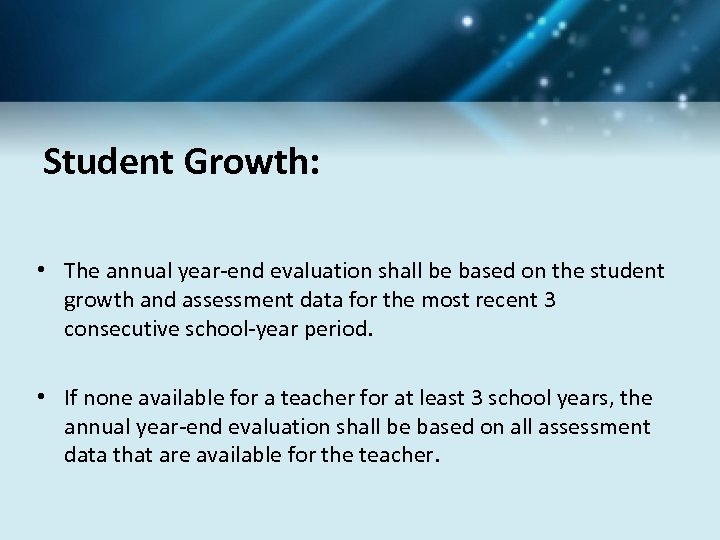

Student Growth:

- The annual year-end evaluation shall be based on the student growth and assessment data for the most recent period of 3 consecutive school years.
- If such data are not available for a teacher for at least 3 school years, the annual year-end evaluation shall be based on all assessment data that are available for the teacher.
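
The data-window rule above can be sketched as a small selection function. This is one possible reading, not the statutory procedure: it assumes each school year is keyed by its starting calendar year, that the "most recent" window ends with the current school year, and that the function name `select_growth_data` is purely illustrative.

```python
from typing import Dict


def select_growth_data(data_by_year: Dict[int, float], current_year: int) -> Dict[int, float]:
    """Pick the growth data a year-end evaluation would draw on.

    data_by_year maps the starting calendar year of a school year
    (e.g. 2013 for 2013-2014) to a growth measure for one teacher.
    """
    window = [current_year - 2, current_year - 1, current_year]
    if all(year in data_by_year for year in window):
        # Three consecutive school years are available: use only those.
        return {year: data_by_year[year] for year in window}
    # Otherwise fall back to all data available for the teacher.
    return dict(data_by_year)
```

A teacher with data for 2010 through 2013 would be evaluated on 2011 through 2013 only, while a teacher with gaps would be evaluated on whatever years exist.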

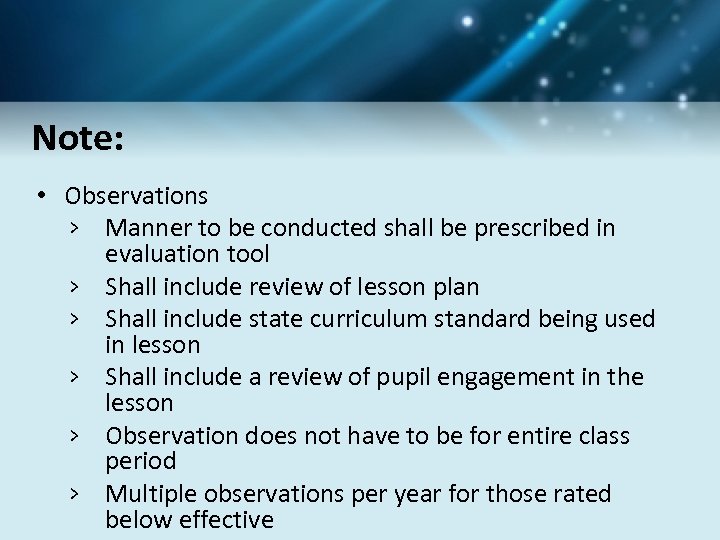

Note:

- Observations
  - Manner to be conducted shall be prescribed in evaluation tool
  - Shall include review of lesson plan
  - Shall include state curriculum standard being used in lesson
  - Shall include a review of pupil engagement in the lesson
  - Observation does not have to be for entire class period
  - Multiple observations per year for those rated below effective

Michigan Public Act 102 of 2011 (HB 4627)

Ineffective Ratings

- Beginning with the 2015-16 school year, a board must notify the parent of a student assigned to a teacher who has been rated ineffective on his or her two most recent annual year-end evaluations.
- Any teacher or administrator who is rated ineffective on three consecutive annual year-end evaluations must be dismissed from employment.
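
The two consequences above are simple rules over a history of year-end ratings. The sketch below illustrates them under stated assumptions: ratings are stored oldest to newest as lowercase strings, the school year is identified by its starting calendar year, and the dismissal check looks at the three most recent ratings. The function names are hypothetical and not drawn from the statute.

```python
from typing import List

INEFFECTIVE = "ineffective"


def parent_notice_required(ratings: List[str], school_year_start: int) -> bool:
    """True if the two most recent year-end ratings are both ineffective.

    The rule applies beginning with the 2015-16 school year, represented
    here by its starting calendar year (2015).
    """
    if school_year_start < 2015 or len(ratings) < 2:
        return False
    return all(rating == INEFFECTIVE for rating in ratings[-2:])


def dismissal_required(ratings: List[str]) -> bool:
    """True if the three most recent year-end ratings are all ineffective."""
    if len(ratings) < 3:
        return False
    return all(rating == INEFFECTIVE for rating in ratings[-3:])
```

Note that the two checks are independent: a teacher can trigger parent notification a year before the dismissal rule could apply.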

Note: Districts are not required to comply with the Governor’s teacher/administrator evaluation tools if they have an evaluation system in which:

- The most significant portion is based on student growth and assessment data
- Research-based measures are used to determine student growth
- Teacher effectiveness and ratings, as measured by student achievement and growth data, are factored into teacher retention, promotion, and termination decisions
- Teacher/administrator results are used to inform the teacher of professional development for the succeeding year
- Teachers/administrators are evaluated annually

Districts must notify the Governor’s Council of the exemption by November 1st.

Michigan Council for Educator Effectiveness (MCEE)

- Appointed by Governor Snyder:
  - Deborah Ball
  - Mark Reckase
  - Nick Sheltrown
- Appointed by Senate Majority Leader:
  - David Vensel
- Appointed by Speaker of the House:
  - Jennifer Hammond
- Appointed by Superintendent of Public Instruction:
  - Joseph Martineau

Michigan Council for Educator Effectiveness (MCEE)

Advisory committee appointed by the Governor

- Provide input on the Council’s recommendations
- Teachers, administrators, parents

Michigan Council for Educator Effectiveness (MCEE)

No later than April 30, 2012, the Council must submit:

- A student growth and assessment tool
  - A value-added model
  - Measures growth in core areas and other areas
  - Complies with laws for students with disabilities
  - Has at least a pre- and post-test
  - Can be used with students of varying ability levels

Michigan Council for Educator Effectiveness (MCEE)

No later than April 30, 2012, the Council must submit:

- A state evaluation tool for teachers (general and special education teachers)
  - Including instructional leadership abilities, attendance, professional contributions, training, progress reports, school improvement progress, peer input, and pupil and parent feedback
  - Council must seek input from local districts

Michigan Council for Educator Effectiveness (MCEE)

No later than April 30, 2012, the Council must submit:

- A state evaluation tool for administrators
  - Including attendance, graduation rates, professional contributions, training, progress reports, school improvement plan progress, peer input, and pupil/parent feedback
- Recommended changes for requirements for professional teaching certificate
- A process for evaluating and approving local evaluation tools

Interim Report

Vision Statement: The Michigan Council for Educator Effectiveness will develop a fair, transparent, and feasible evaluation system for teachers and school administrators. The system will be based on rigorous standards of professional practice and of measurement. The goals of this system are to contribute to enhanced instruction, improve student achievement, and support ongoing professional learning.

Interim Report

Teacher Evaluation: Observation Tool Selection Criteria

1. Alignment with State Standards
2. Instruments describe practice and support teacher development
3. Rigorous and ongoing training program for evaluators
4. Independent research to confirm validity and reliability
5. Feasibility

Interim Report

Teacher Evaluation: Observation Tool Systems

1. Marzano Observation Protocol*
2. Thoughtful Classroom*
3. Five Dimensions of Teaching and Learning*
4. Framework for Teaching*
5. Classroom Assessment Scoring System
6. TAP

Interim Report

Teacher Evaluation: Observation Tool

Lessons Learned from Other States:

1. Pilot is essential
2. Phasing in
3. Number of observations
4. Other important components

Interim Report

Teacher Evaluation: Observation Tool Challenges

1. Being fiscally responsible
2. Ensuring fairness and reliability
3. Assessing the fidelity of protocol implementation
4. Determining the equivalence of different instruments

Interim Report

Teacher Evaluation: Student Growth Model

- Recognize that student growth can give insight into teacher effectiveness
- Admit that “student growth” is not clearly defined
- Descriptions of growth vary and include:
  - Tests
  - Analytic techniques for scoring
  - Measures of value-added modeling
- Simple vs. complex statistics
- VAM

Interim Report

Teacher Evaluation: Student Growth Model Challenges

1. Measurement error in standardized and local measurements
2. Balancing fairness toward educators with fairness toward students
3. Non-tested grades and subjects
4. Tenuous roster connections between students and teachers
5. Number of years of data

Considerations for Student Growth Measures

- Should the State evaluation data (e.g., MEAP, MME) be the only source of student growth data? Why or why not?
- Should local student growth models be allowed? Why or why not?
- If you agree that multiple measures should be allowed, what percentage would you give each of the multiple measures?
  - For example, if educators are permitted to use MME data, a local tool such as an end-of-course assessment, and a personally developed measure, how should those three measures be weighted?
- How should we measure teachers in non-tested subjects such as band or auto mechanics?
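
To make the weighting question above concrete, here is a minimal sketch of a weighted composite of several growth measures. It assumes every measure has already been placed on a common scale and that the weights must sum to 1; the function name, the measure names, and the example weights are hypothetical and do not reflect any MCEE recommendation.

```python
from typing import Dict


def weighted_growth_score(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Combine several growth measures into one score using fixed weights."""
    if set(scores) != set(weights):
        raise ValueError("Each measure needs exactly one weight")
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("Weights must sum to 1")
    # Weighted sum over the measures named in both dictionaries.
    return sum(scores[name] * weights[name] for name in scores)


# Hypothetical example: three measures on a common 0-100 scale.
example_scores = {"mme": 60.0, "end_of_course": 80.0, "teacher_developed": 70.0}
example_weights = {"mme": 0.5, "end_of_course": 0.3, "teacher_developed": 0.2}
composite = weighted_growth_score(example_scores, example_weights)
```

Changing the weights changes which measure dominates the composite, which is exactly the policy choice the discussion question asks participants to weigh.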

Pilot for 2012-2013

- 14 school districts
- Pilot the teacher observation tool
- Pilot the administrator evaluation tool
- Train evaluators
- Provide information on validity
- Gather feedback from teachers and principals
- 4 observation tools
- Student growth model/VAM pilot

- 5 Dimensions of Teaching and Learning: Clare, Leslie, Marshall, Mt. Morris
- Framework for Teaching: Garden City, Montrose, Port Huron
- Marzano Evaluation Framework: Big Rapids, Farmington, North Branch
- The Thoughtful Classroom: Cassopolis, Gibraltar, Harper Creek, Lincoln

Pilot for 2012-2013

Questions to be answered about observational tool:

- Ratings of teachers
- Satisfaction with tool
- Adequate training
- Correlation between observation tool and student growth
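
The last question above, the correlation between observation ratings and student growth, could be examined with a standard Pearson correlation coefficient. The sketch below shows that calculation only; it is an assumption that the pilot would use this statistic, and the function name `pearson_r` and its inputs (one observation score and one growth score per teacher) are illustrative.

```python
import math
from typing import Sequence


def pearson_r(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation between paired observation and growth scores."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("Need two equally long sequences with at least 2 values")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    # Sums of cross-products and squared deviations from the means.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("Correlation is undefined when one variable is constant")
    return cov / math.sqrt(var_x * var_y)
```

A value near 1 would suggest that teachers rated higher on the observation tool also tend to show higher student growth; a value near 0 would suggest the two measures capture different things.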

Pilot for 2012-2013

Testing Protocol

- NWEA: grades K-2, 3-6
- Explore: grades 7, 8
- PLAN: grades 9, 10
- ACT: grades 11, 12
- Value Added Modeling
- Sample size
- State-wide data collection tool
- Vendors

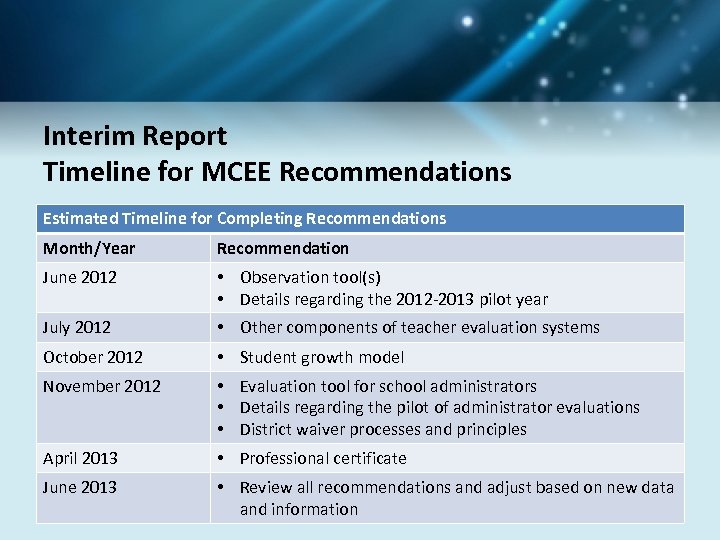

Interim Report

Timeline for MCEE Recommendations: Estimated Timeline for Completing Recommendations

| Month/Year | Recommendation |
|---|---|
| June 2012 | Observation tool(s); details regarding the 2012-2013 pilot year |
| July 2012 | Other components of teacher evaluation systems |
| October 2012 | Student growth model |
| November 2012 | Evaluation tool for school administrators; details regarding the pilot of administrator evaluations; district waiver processes and principles |
| April 2013 | Professional certificate |
| June 2013 | Review all recommendations and adjust based on new data and information |

Educator Evaluation Discussion

www.mcede.org

Jennifer S. Hammond, Ph.D.
jhammond@grandblancschools.org
(810) 591-6637
