6dd8968b6eb958357a8699e20fd1a8a9.ppt

- Number of slides: 15

CDF-UK MINI-GRID
Ian McArthur
Oxford University, Physics Department
Ian.McArthur@physics.ox.ac.uk
3rd Nov 2000, HEPiX/HEPNT 2000


Background

- CDF collaborators in the UK applied for a JIF grant for IT equipment in 1998. Awarded £1.67M in summer 2000.
- First half of grant will buy
  - Multiprocessor systems plus 1 TB of disk for 4 universities
  - 2 multiprocessors plus 2.5 TB of disk for RAL
  - A 32-CPU farm for RAL
  - 5 TB of disk and 8 high-end workstations for FNAL
- Emphasis on high IO throughput 'superworkstations'.
- A dedicated network link from London to FNAL


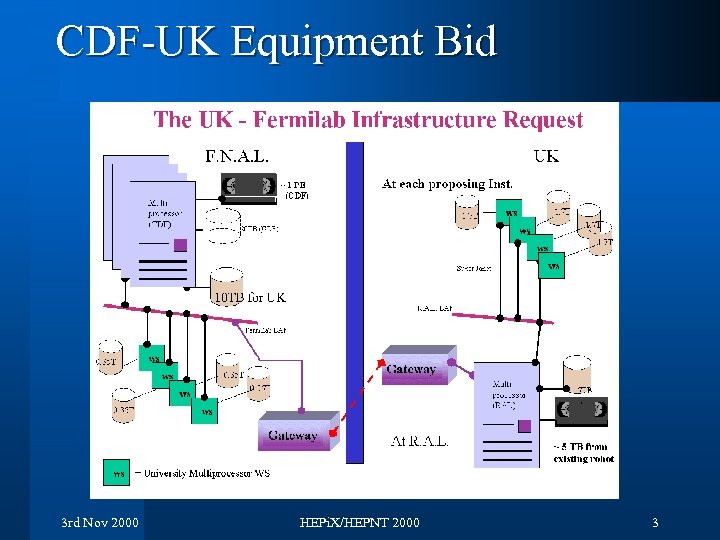

CDF-UK Equipment Bid


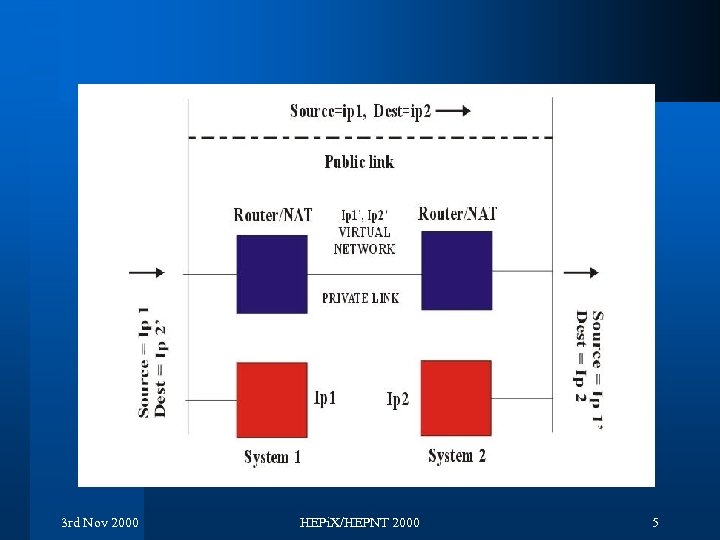

Hardware and Network

- Tender document is written and schedule is on target for equipment delivery in May 2001. Second phase starts June 2002.
- Developed a scheme for transparent access to CDF systems via the US link.
  - Each system that CDF-UK needs to reach over the link has an alternative IP name and address, so that its data is sent down the dedicated link.
  - A Network Address Translation scheme ensures that return traffic takes the same path (symmetric routing).
  - Demonstrated the scheme working with 2 Cisco routers on a local network.
  - Starting to talk to network providers to implement the physical link.
  - Must try to make Kerberos work across this link.
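The alias-plus-NAT idea above can be pictured with a toy model. This is only a sketch under assumptions: the slides do not give the actual router configuration, so the `LinkRouter` class, the static one-to-one mappings and all addresses (taken from the documentation ranges) are hypothetical. It shows a packet sent to a remote system's alias being rewritten on the way out, and the reply being rewritten back so that it returns over the same link.

```python
from dataclasses import dataclass
from ipaddress import IPv4Address as IP


@dataclass(frozen=True)
class Packet:
    src: IP
    dst: IP


class LinkRouter:
    """Toy static NAT at the UK end of the dedicated link (illustrative only)."""

    def __init__(self, remote_alias_to_real, local_real_to_link):
        # Alias address of a remote system -> its real address, and the reverse.
        self.alias_to_real = dict(remote_alias_to_real)
        self.real_to_alias = {real: alias for alias, real in self.alias_to_real.items()}
        # Real address of a UK host -> address it appears as on the link, and the reverse.
        self.local_to_link = dict(local_real_to_link)
        self.link_to_local = {link: local for local, link in self.local_to_link.items()}

    def outbound(self, pkt):
        # Rewrite both ends: the remote system sees a link-side source address,
        # so its reply is routed back over the dedicated link.
        return Packet(src=self.local_to_link[pkt.src], dst=self.alias_to_real[pkt.dst])

    def inbound(self, pkt):
        # Undo the translation so the UK host sees a reply from the alias it used.
        return Packet(src=self.real_to_alias[pkt.src], dst=self.link_to_local[pkt.dst])


# Hypothetical addresses from the RFC 5737 documentation ranges.
UK_HOST = IP("192.0.2.10")
UK_HOST_ON_LINK = IP("198.51.100.10")
FNAL_REAL = IP("203.0.113.5")
FNAL_ALIAS = IP("198.51.100.5")

router = LinkRouter({FNAL_ALIAS: FNAL_REAL}, {UK_HOST: UK_HOST_ON_LINK})
sent = router.outbound(Packet(src=UK_HOST, dst=FNAL_ALIAS))
reply = router.inbound(Packet(src=sent.dst, dst=sent.src))
```

A host that uses the ordinary name of the remote system is untouched by this table, which is what makes the access "transparent": choosing the alias is the only thing that selects the dedicated link.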



Software Project

- JIF proposal only covered hardware, but in the meantime GRID has arrived!
- Aim to provide a scheme to allow efficient use of the new equipment and other distributed resources.
- Concentrate on solving real-user issues.
- Develop an architecture for locating data, data transfer and job submission within a distributed environment.
- Based on the GRID architecture, initially on top of the Globus toolkit.
- Gives us experience in this rapidly developing field.


Some Requirements

- Want an efficient environment, so automate routine tasks as much as possible.
- With few resources available, must make best use of the existing packages and require few or no modifications to existing software.
- To make best use of the systems available:
  - data may need to be moved to where there is available CPU,
  - or a job may need to be submitted to a remote site to avoid moving the data.
- Produce a simple but useful system ASAP.


Design principles

- All sites are equal
- All sites hold meta-data describing only local data
- Use LDAP to publish meta-data kept in:
  - Oracle at FNAL
  - mSQL at most other places (may go to MySQL)
  - Can introduce caching but keep it simple at first
- Use local intelligence at each end of data transfer
  - allows us to take account of local idiosyncrasies, e.g. use of near-line storage, disk space management
- Use existing Disk Inventory Manager
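Because each site describes only its own data, finding a dataset means asking every site. The sketch below illustrates that consequence with in-memory stand-ins; the `SiteCatalogue` class, the `locate` function and the dataset and fileset names are hypothetical, and a real locator would query each site's LDAP server rather than a Python dictionary.

```python
from typing import Dict, Iterable, List, Set


class SiteCatalogue:
    """Stand-in for one site's published metadata (local data only)."""

    def __init__(self, site: str, holdings: Dict[str, Set[str]]):
        self.site = site
        self._holdings = holdings  # dataset name -> fileset ids held at this site

    def filesets_of(self, dataset: str) -> Set[str]:
        return set(self._holdings.get(dataset, ()))


def locate(dataset: str, catalogues: Iterable[SiteCatalogue]) -> Dict[str, List[str]]:
    """Return fileset id -> sorted list of sites holding a copy."""
    where: Dict[str, List[str]] = {}
    for catalogue in catalogues:  # no central index: every site is asked
        for fileset_id in catalogue.filesets_of(dataset):
            where.setdefault(fileset_id, []).append(catalogue.site)
    return {fs: sorted(sites) for fs, sites in sorted(where.items())}


# Hypothetical holdings for illustration.
catalogues = [
    SiteCatalogue("oxford", {"bphys": {"fs001"}}),
    SiteCatalogue("ral", {"bphys": {"fs001", "fs002"}, "top": {"fs010"}}),
]
copies = locate("bphys", catalogues)
```

Caching the answers, as the slide notes, would be a later refinement on top of this simple fan-out.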


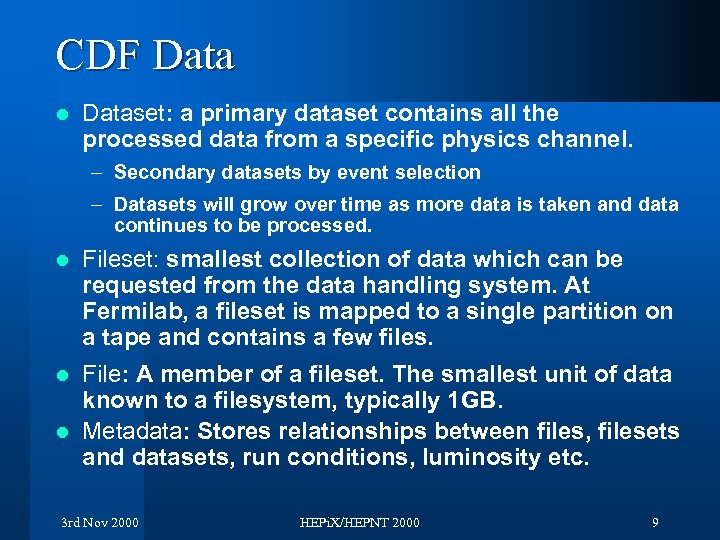

CDF Data

- Dataset: a primary dataset contains all the processed data from a specific physics channel.
  - Secondary datasets by event selection
  - Datasets will grow over time as more data is taken and data continues to be processed.
- Fileset: the smallest collection of data which can be requested from the data handling system. At Fermilab, a fileset is mapped to a single partition on a tape and contains a few files.
- File: a member of a fileset. The smallest unit of data known to a filesystem, typically 1 GB.
- Metadata: stores relationships between files, filesets and datasets, run conditions, luminosity etc.
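The dataset, fileset and file hierarchy can be written down as a small data model. This is a minimal sketch, not the CDF data handling schema: the class names, field names and the 1 GB default are assumptions chosen to mirror the definitions above.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class DataFile:
    name: str
    size_gb: float = 1.0  # "typically 1 GB"


@dataclass
class Fileset:
    """Smallest unit that can be requested from the data handling system."""
    fileset_id: str
    files: List[DataFile] = field(default_factory=list)

    def size_gb(self) -> float:
        return sum(f.size_gb for f in self.files)


@dataclass
class Dataset:
    name: str
    parent: Optional[str] = None  # name of the primary dataset, for a secondary one
    filesets: Dict[str, Fileset] = field(default_factory=dict)

    def add_fileset(self, fileset: Fileset) -> None:
        # Datasets grow over time, so filesets are added incrementally.
        self.filesets[fileset.fileset_id] = fileset

    def size_gb(self) -> float:
        return sum(fs.size_gb() for fs in self.filesets.values())

    def fileset_of(self, file_name: str) -> Optional[Fileset]:
        """Metadata lookup: which fileset must be requested to get this file?"""
        for fs in self.filesets.values():
            if any(f.name == file_name for f in fs.files):
                return fs
        return None


# Hypothetical names for illustration.
bphys = Dataset("bphys")
bphys.add_fileset(Fileset("fs001", [DataFile("file_a"), DataFile("file_b", 0.5)]))
```

The `fileset_of` lookup reflects the point that a user may want one file, but the request to the data handling system is always made for a whole fileset.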


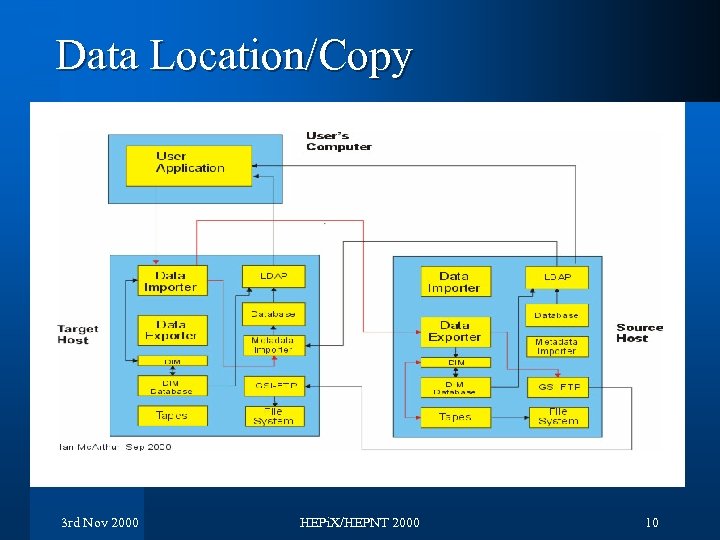

Data Location/Copy


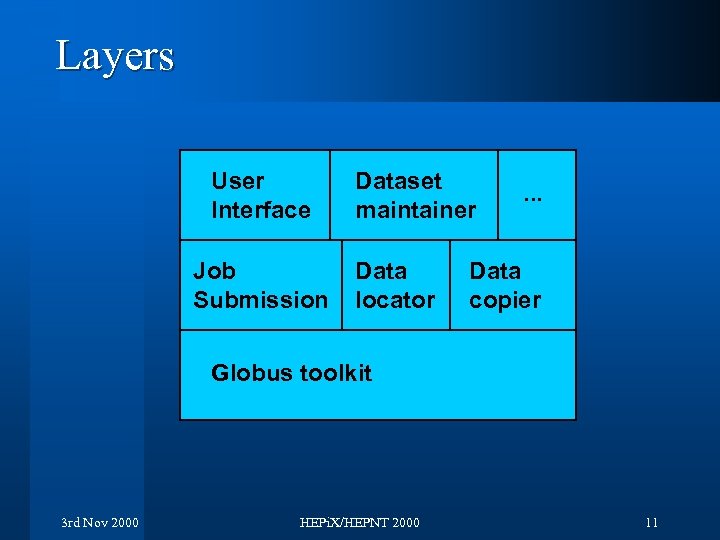

Layers

- User Interface
- Job Submission
- Dataset maintainer
- Data locator
- . . .
- Data copier
- Globus toolkit


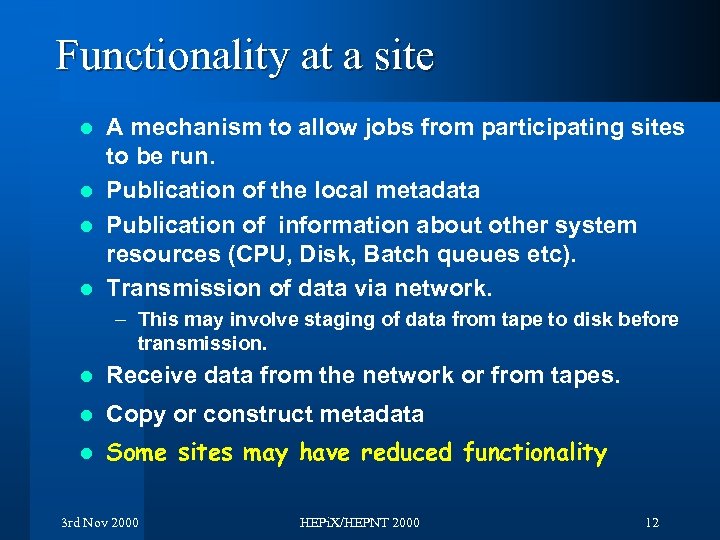

Functionality at a site

- A mechanism to allow jobs from participating sites to be run.
- Publication of the local metadata
- Publication of information about other system resources (CPU, disk, batch queues etc.).
- Transmission of data via network.
  - This may involve staging of data from tape to disk before transmission.
- Receive data from the network or from tapes.
- Copy or construct metadata
- Some sites may have reduced functionality


Scope

- Plan to install at
  - 4 UK universities (Glasgow, Liverpool, Oxford, UCL)
  - RAL
  - FNAL (although this would be reduced functionality, data and metadata exporter)
  - More non-UK sites could be included
- Intend to have basic utilities in place at time of equipment installation (May 2001)


Work so far

- Project plan under development; once finished, additional resources will be requested.
- Globus installed at a number of sites. Remote execution of shell commands checked.
- Some bits demonstrated:
  - LDAP to Oracle via Python script
    - Python is a convenient scripting language for the job
    - May use a daemon to hold the connection to Oracle
    - The LDAP side only implements search, and even this is quite tricky because the script should support filter, base and scope.
    - LDAP schema will not reflect the full SQL schema, just what is needed.
  - Java to LDAP (via JNDI)
    - JNDI (Java Naming and Directory Interface) gives a very elegant interface to LDAP
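To show why supporting filter, base and scope takes some care, here is a minimal sketch of a search handler backed by SQL. It is not the script described above: `sqlite3` stands in for Oracle, and the table layout, the `ATTR_TO_COLUMN` mapping, the `o=cdf` suffix and the DN layout are all assumptions. Only equality filters, optionally combined with `&`, are handled.

```python
import re
import sqlite3

# Hypothetical mapping: only the attributes that need publishing, not the full SQL schema.
ATTR_TO_COLUMN = {"fileset": "fileset_id", "dataset": "dataset_name", "site": "site"}
SUFFIX = "o=cdf"  # hypothetical directory suffix
_TERM = re.compile(r"\(([A-Za-z][\w-]*)=([^()*]+)\)")


def parse_filter(text):
    """Parse '(a=b)' or '(&(a=b)(c=d))' into [(attr, value), ...]."""
    text = text.strip()
    inner = text[2:-1] if text.startswith("(&") and text.endswith(")") else text
    terms = _TERM.findall(inner)
    if not terms or "".join(f"({a}={v})" for a, v in terms) != inner:
        raise ValueError(f"unsupported filter: {text!r}")
    return terms


def row_dn(row):
    # Each fileset row is published as an entry below its dataset.
    return f"fileset={row['fileset_id']},dataset={row['dataset_name']},{SUFFIX}".lower()


def in_scope(dn, base, scope):
    base = ",".join(part.strip().lower() for part in base.split(","))
    if scope == "base":  # the base entry itself
        return dn == base
    if scope == "one":   # direct children of the base
        return dn.partition(",")[2] == base
    if scope == "sub":   # the base and everything below it
        return dn == base or dn.endswith("," + base)
    raise ValueError(f"unknown scope: {scope!r}")


def search(conn, base, scope, filter_text):
    terms = parse_filter(filter_text)
    # Column names come from the fixed mapping; values are bound as parameters.
    # An attribute outside the mapping raises KeyError.
    where = " AND ".join(f"{ATTR_TO_COLUMN[a.lower()]} = ?" for a, _ in terms)
    sql = f"SELECT fileset_id, dataset_name, site FROM filesets WHERE {where}"
    rows = conn.execute(sql, [v for _, v in terms]).fetchall()
    return [dict(r) for r in rows if in_scope(row_dn(r), base, scope)]


def make_demo_db():
    conn = sqlite3.connect(":memory:")
    conn.row_factory = sqlite3.Row
    conn.execute("CREATE TABLE filesets (fileset_id TEXT, dataset_name TEXT, site TEXT)")
    conn.executemany(
        "INSERT INTO filesets VALUES (?, ?, ?)",
        [("fs001", "bphys", "oxford"), ("fs002", "bphys", "ral"), ("fs003", "top", "oxford")],
    )
    return conn
```

For example, `search(make_demo_db(), "o=cdf", "sub", "(site=oxford)")` returns the two Oxford filesets. Here the filter is pushed down to SQL while the scope is checked in Python; a fuller implementation would also need OR, NOT and wildcard filters.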


Longer Term Goals

- User Interface to be implemented as a Java application to give platform independence.
- UI to automate or suggest strategies for moving data/submitting jobs
  - Need to include cost/elapsed time estimates for task completion
  - Need to look up dataset sizes, network health, time to copy from tape or disk, CPU load etc.
- Look for more generic solutions
- Evaluate any new GRID tools which might standardize any parts we've implemented ourselves.
- Consolidation with other GRID projects
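The kind of elapsed-time estimate mentioned above might look like the following. This is a sketch under simple assumptions, not a design from the project: the function names, the inputs and the linear cost model (staging time plus size divided by bandwidth, with 1 GB taken as 8000 Mbit) are all hypothetical.

```python
def copy_time_s(size_gb, bandwidth_mbit_s, stage_s=0.0):
    """Estimated seconds to stage a dataset from tape (if needed) and copy it."""
    if bandwidth_mbit_s <= 0:
        raise ValueError("bandwidth must be positive")
    return stage_s + size_gb * 8000.0 / bandwidth_mbit_s


def choose_strategy(size_gb, bandwidth_mbit_s, stage_s, local_wait_s, remote_wait_s, run_s):
    """Compare moving the data to local CPU against running the job where the data is."""
    move = copy_time_s(size_gb, bandwidth_mbit_s, stage_s) + local_wait_s + run_s
    remote = remote_wait_s + run_s
    if move < remote:
        return "move data", move
    return "submit remotely", remote


# Hypothetical numbers: 10 GB over a 100 Mbit/s link, 10 minutes of tape staging,
# an idle local machine, and a one-hour queue at the remote site.
strategy, elapsed_s = choose_strategy(
    size_gb=10, bandwidth_mbit_s=100, stage_s=600,
    local_wait_s=0, remote_wait_s=3600, run_s=1800,
)
```

With these inputs the copy costs 600 + 800 seconds, so moving the data (3200 s in total) beats waiting in the remote queue (5400 s). In practice the inputs would come from the lookups listed above: dataset sizes, network health, tape or disk copy times and CPU load.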
