
- Number of slides: 23

Canadian Weather Analysis Using Connectionist Learning Paradigms

Imran Maqsood*, Muhammad Riaz Khan, Ajith Abraham

*Environmental Systems Engineering Program, Faculty of Engineering, University of Regina, Saskatchewan S4S 0A2, Canada, E-mail: maqsoodi@uregina.ca

Partner Technologies Incorporated, 1155 Park Street, Regina, Saskatchewan S4N 4Y8, Canada, E-mail: riaz917@hotmail.com

Faculty of Information Technology, School of Business Systems, Monash University, Clayton 3800, Australia, E-mail: ajith.abraham@ieee.org

WSC7: 7th Online World Conference on Soft Computing in Industrial Applications (on WWW), September 23 – October 4, 2002


CONTENTS
• Introduction
• MLP, ERNN and RBFN Background
• Experimental Setup of a Case Study
• Test Results
• Conclusions


1. INTRODUCTION
• Weather forecasts provide critical information about future weather.
• Weather forecasting remains a complex business because of the chaotic and unpredictable nature of weather.
• Combined with the threat of global warming and the greenhouse gas effect, the impact of extreme weather phenomena on society is growing increasingly costly, causing infrastructure damage, injury and loss of life.


• Accurate weather forecast models are important to countries whose agriculture depends entirely on the weather.
• Several artificial intelligence techniques have been used in the past to model the chaotic behavior of weather.
• However, several of them use simple feed-forward neural networks trained with the backpropagation algorithm.


Study Objectives
• To develop accurate and reliable predictive models for forecasting the weather of Vancouver, BC, Canada.
• To compare the performance of multi-layered perceptron (MLP) neural networks, Elman recurrent neural networks (ERNN) and radial basis function networks (RBFN) for weather analysis.


2. ANN BACKGROUND INFORMATION
ANN Advantages
• Ability to solve complex and non-linear problems
• Quick response
• Self-organization
• Real-time operation
• Fault tolerance via redundant information coding
• Adaptability and generalization


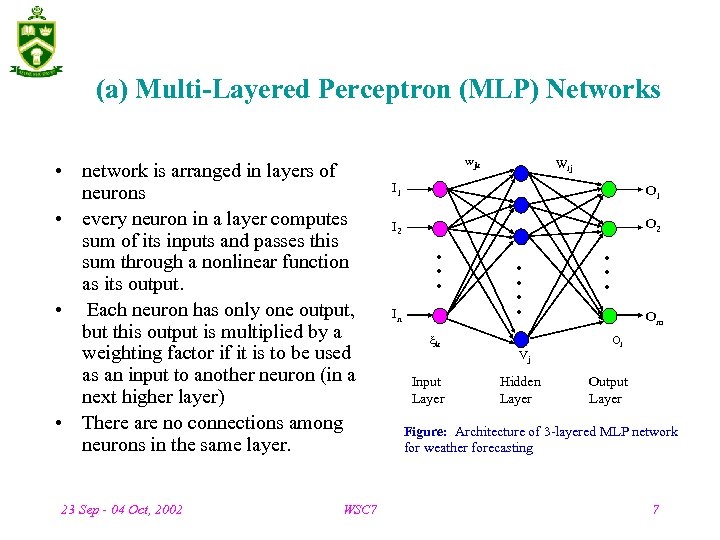

(a) Multi-Layered Perceptron (MLP) Networks
• The network is arranged in layers of neurons.
• Every neuron in a layer computes the sum of its (weighted) inputs and passes this sum through a nonlinear function to produce its output.
• Each neuron has only one output, but this output is multiplied by a weighting factor when it is used as an input to another neuron (in the next higher layer).
• There are no connections among neurons in the same layer.

Figure: Architecture of 3-layered MLP network for weather forecasting (input layer, hidden layer, output layer).
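To make the layer description concrete, here is a minimal forward-pass sketch in plain Python. It assumes the log-sigmoid hidden layer and pure linear output layer listed for the MLP on the slide comparing the training of the connectionist models; the function names, layer sizes and weight values are hypothetical and are not taken from the paper.

```python
import math


def logsig(z):
    """Log-sigmoid activation: 1 / (1 + exp(-z))."""
    return 1.0 / (1.0 + math.exp(-z))


def mlp_forward(x, w_hidden, b_hidden, w_out, b_out):
    """Forward pass through a 3-layer MLP (input, hidden, output).

    x        -- input values I_1..I_n
    w_hidden -- one weight row per hidden neuron (one weight per input)
    w_out    -- one weight row per output neuron (one weight per hidden neuron)
    """
    # Hidden layer: weighted sum of the inputs plus bias, squashed by log-sigmoid.
    hidden = [logsig(sum(w * xi for w, xi in zip(row, x)) + b)
              for row, b in zip(w_hidden, b_hidden)]
    # Output layer: pure linear, i.e. weighted sum of hidden outputs plus bias.
    # Neurons never read outputs from other neurons in their own layer.
    return [sum(w * h for w, h in zip(row, hidden)) + b
            for row, b in zip(w_out, b_out)]


# Hypothetical example: 2 inputs, 3 hidden neurons, 1 output.
y = mlp_forward([0.5, -1.0],
                [[0.1, 0.2], [-0.3, 0.4], [0.5, -0.6]], [0.0, 0.1, -0.1],
                [[1.0, -1.0, 0.5]], [0.2])
print(y)
```

Keeping weights as nested lists mirrors the W_ij / w_jk notation of the figure; a real implementation would use matrix operations instead.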


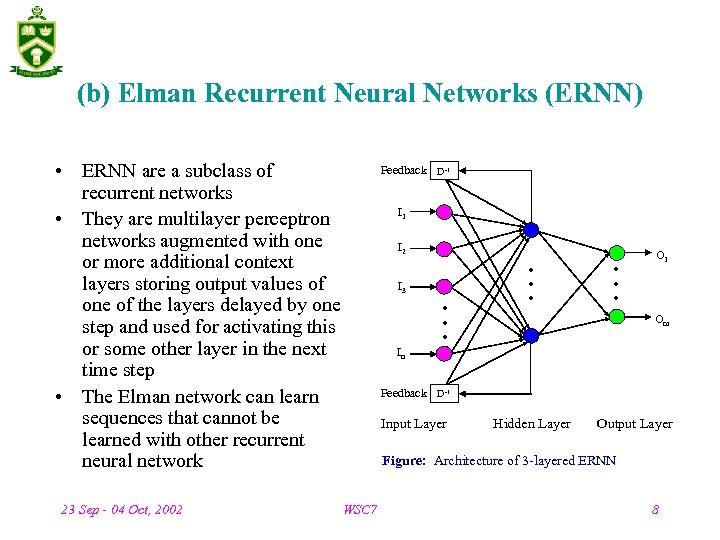

(b) Elman Recurrent Neural Networks (ERNN)
• ERNN are a subclass of recurrent networks.
• They are multilayer perceptron networks augmented with one or more additional context layers, which store the output values of one of the layers delayed by one step and use them to activate this or another layer at the next time step.
• The Elman network can learn sequences that cannot be learned with other recurrent neural networks.

Figure: Architecture of 3-layered ERNN (input, hidden and output layers, with one-step-delay feedback D⁻¹).
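A minimal sketch of how the context layer works, assuming a single context layer that stores the previous hidden-layer outputs (the classic Elman arrangement), tan-sigmoid hidden units and a pure linear output layer, as listed for ERNN on the training comparison slide. The function name and all weights are hypothetical.

```python
import math


def elman_run(sequence, w_in, w_ctx, b_h, w_out, b_out):
    """Run an Elman network over a sequence of input vectors.

    The context layer holds the hidden-layer outputs from the previous time
    step (the one-step delay D^-1 in the figure) and feeds them back into the
    hidden layer at the next step.
    """
    n_hidden = len(b_h)
    context = [0.0] * n_hidden  # context layer is empty before the first step
    outputs = []
    for x in sequence:
        hidden = []
        for j in range(n_hidden):
            z = b_h[j]
            z += sum(w * xi for w, xi in zip(w_in[j], x))        # current inputs
            z += sum(w * c for w, c in zip(w_ctx[j], context))   # delayed hidden outputs
            hidden.append(math.tanh(z))                          # tan-sigmoid activation
        # Pure linear output layer.
        outputs.append([sum(w * h for w, h in zip(row, hidden)) + b
                        for row, b in zip(w_out, b_out)])
        context = hidden  # store this step's hidden outputs for the next step
    return outputs


# Hypothetical 1-input, 1-hidden, 1-output network fed a pulse followed by a zero.
out = elman_run([[0.5], [0.0]], w_in=[[1.0]], w_ctx=[[1.0]], b_h=[0.0],
                w_out=[[1.0]], b_out=[0.0])
print(out)  # the second output is non-zero only because of the context layer
```

With zero input and zero bias at the second step, a plain MLP would output 0; the Elman network does not, because the first step's hidden state is still in the context layer.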


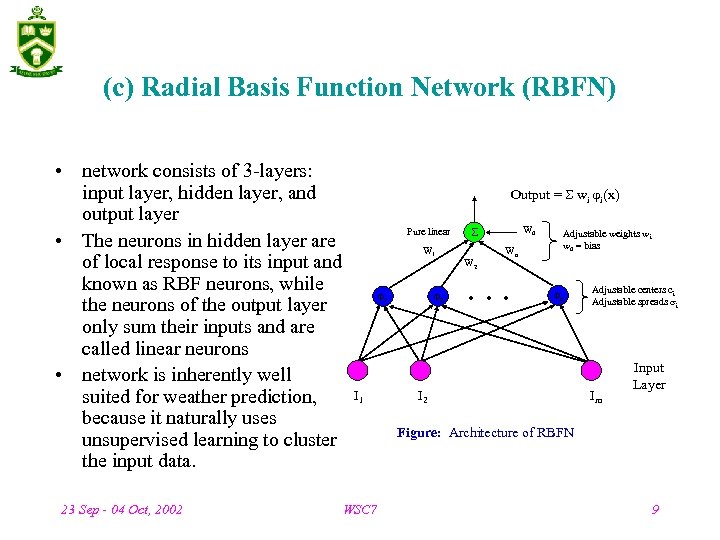

(c) Radial Basis Function Network (RBFN)
• The network consists of 3 layers: input layer, hidden layer and output layer.
• The neurons in the hidden layer respond locally to their inputs and are known as RBF neurons, while the neurons of the output layer only sum their inputs and are called linear neurons.
• The network is inherently well suited to weather prediction because it naturally uses unsupervised learning to cluster the input data.

Figure: Architecture of RBFN. The pure linear output neuron computes

$$\text{Output} = \sum_{i=1}^{n} w_i\,\phi_i(x) + w_0$$

with adjustable weights $w_i$, bias $w_0$, and RBF units $\phi_i$ whose centers $c_i$ and spreads $\sigma_i$ are adjustable; the inputs are $I_1, \dots, I_m$.
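A minimal sketch of the RBFN forward pass, assuming Gaussian units of the common form exp(-||x - c_i||² / (2σ_i²)); the slides name a Gaussian function but not its exact scaling. To illustrate the unsupervised clustering step, the centers are placed with plain k-means. This is an assumed choice, not necessarily the procedure the authors used, and fitting the output weights (for example by least squares) is omitted. All names and values are hypothetical.

```python
import math
import random


def gaussian(x, c, sigma):
    """Gaussian RBF unit: exp(-||x - c||^2 / (2 * sigma^2))."""
    d2 = sum((xi - ci) ** 2 for xi, ci in zip(x, c))
    return math.exp(-d2 / (2.0 * sigma ** 2))


def rbfn_output(x, centers, sigmas, weights, w0):
    """Pure linear output neuron: w0 + sum_i w_i * phi_i(x)."""
    return w0 + sum(w * gaussian(x, c, s)
                    for w, c, s in zip(weights, centers, sigmas))


def kmeans_centers(data, k, iters=20, seed=0):
    """Place k RBF centers with plain k-means clustering (no targets used)."""
    rng = random.Random(seed)
    centers = [list(p) for p in rng.sample(data, k)]  # start from k data points
    for _ in range(iters):
        clusters = [[] for _ in range(k)]
        for p in data:
            # Assign each point to its nearest current center.
            nearest = min(range(k),
                          key=lambda i: sum((a - b) ** 2 for a, b in zip(p, centers[i])))
            clusters[nearest].append(p)
        for i, members in enumerate(clusters):
            if members:  # an empty cluster keeps its previous center
                centers[i] = [sum(col) / len(members) for col in zip(*members)]
    return centers


# Hypothetical 1-D inputs forming two well-separated groups.
centers = kmeans_centers([[0.0], [0.1], [10.0], [10.1]], k=2)
print(sorted(centers))
print(rbfn_output([0.0], centers=[[0.0]], sigmas=[1.0], weights=[2.0], w0=0.5))  # 2.5
```

Because each unit responds only near its center, an input far from every center produces an output close to the bias w0; this is the "local response" property described above.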


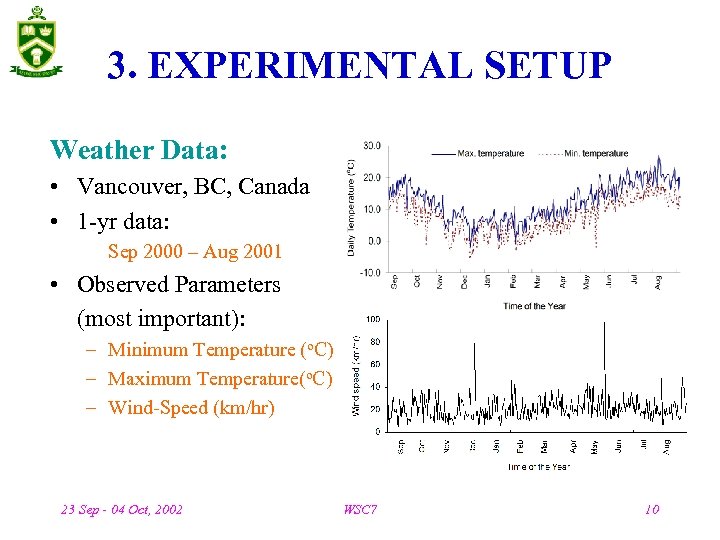

3. EXPERIMENTAL SETUP
Weather Data:
• Vancouver, BC, Canada
• 1 year of data: Sep 2000 – Aug 2001
• Observed parameters (most important):
  – Minimum temperature (°C)
  – Maximum temperature (°C)
  – Wind speed (km/h)


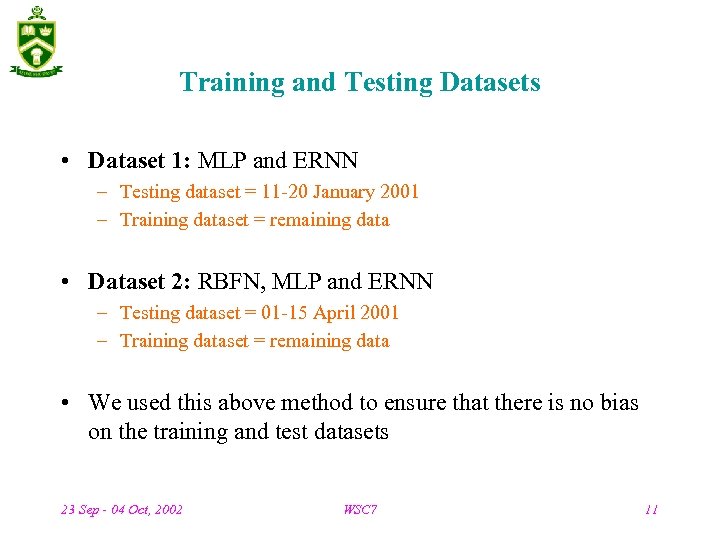

Training and Testing Datasets
• Dataset 1: MLP and ERNN
  – Testing dataset = 11–20 January 2001
  – Training dataset = remaining data
• Dataset 2: RBFN, MLP and ERNN
  – Testing dataset = 01–15 April 2001
  – Training dataset = remaining data
• This method was used to ensure that there is no bias in the training and test datasets.
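The split above holds out one contiguous window of days and trains on the rest of the year. A minimal sketch, assuming one record per day; the helper name `split_by_test_window` and the placeholder records are hypothetical.

```python
from datetime import date, timedelta


def split_by_test_window(records, test_start, test_end):
    """Hold out a contiguous window of days as the test set.

    records: list of (date, values) pairs, one per day.
    Days inside [test_start, test_end] go to the test set; every other day
    goes to the training set.
    """
    train, test = [], []
    for day, values in records:
        (test if test_start <= day <= test_end else train).append((day, values))
    return train, test


# Placeholder daily records covering Sep 2000 - Aug 2001 (values omitted).
start, end = date(2000, 9, 1), date(2001, 8, 31)
records = [(start + timedelta(days=i), None) for i in range((end - start).days + 1)]

train1, test1 = split_by_test_window(records, date(2001, 1, 11), date(2001, 1, 20))
train2, test2 = split_by_test_window(records, date(2001, 4, 1), date(2001, 4, 15))
print(len(train1), len(test1))  # 355 10
print(len(train2), len(test2))  # 350 15
```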


Simulation System Used
• Pentium-III, 1 GHz processor, 256 MB RAM
• All experiments were simulated using MATLAB.

Steps taken before starting the training process:
• The error level was set to 10⁻⁴.
• The number of hidden neurons was varied from 10 to 80, and the optimal number for each network was then selected.
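The two pre-training steps can be sketched as a training loop that stops at the 10⁻⁴ error level and a sweep over hidden-layer sizes. Both `train_until_goal` and `select_hidden_size` are hypothetical helpers, the step size of 5 neurons is an assumption (the slides only give the 10 to 80 range), and the error curve is a made-up stand-in for real training runs.

```python
ERROR_GOAL = 1e-4               # training error level stated on the slide
CANDIDATES = range(10, 81, 5)   # 10..80 hidden neurons; the step of 5 is an assumption


def train_until_goal(step, goal=ERROR_GOAL, max_epochs=5000):
    """Run step() once per epoch until the training error it returns <= goal.

    step is a hypothetical callable that performs one training epoch and
    returns the current training error. Returns (epochs_used, final_error).
    """
    err = float("inf")
    for epoch in range(1, max_epochs + 1):
        err = step()
        if err <= goal:
            return epoch, err
    return max_epochs, err


def select_hidden_size(candidates, train_and_score):
    """Train one network per candidate size and keep the size with lowest error.

    train_and_score(n) is a hypothetical callable that trains a network with
    n hidden neurons and returns its error on held-out data.
    """
    scores = {n: train_and_score(n) for n in candidates}
    best = min(scores, key=scores.get)
    return best, scores[best]


# Made-up U-shaped error curve standing in for real training runs.
def fake_train_and_score(n):
    return 0.02 + 1e-5 * (n - 45) ** 2


print(select_hidden_size(CANDIDATES, fake_train_and_score))  # (45, 0.02)
```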


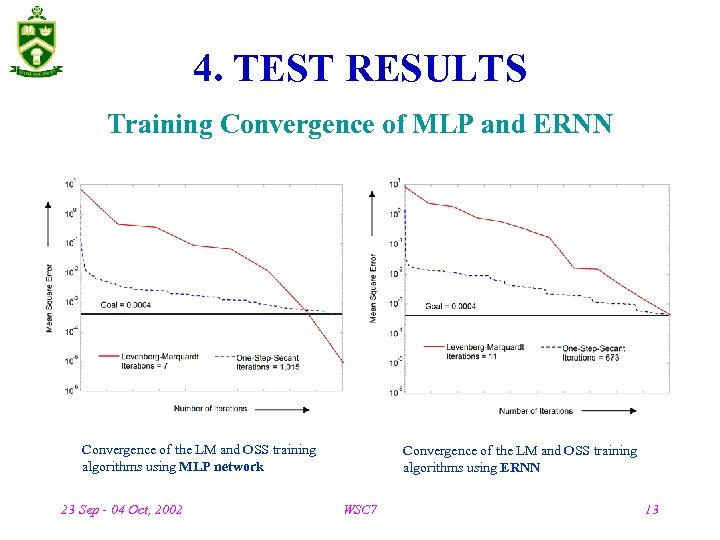

4. TEST RESULTS
Training Convergence of MLP and ERNN

Figures: Convergence of the Levenberg-Marquardt (LM) and one-step secant (OSS) training algorithms using the MLP network; convergence of the LM and OSS training algorithms using ERNN.


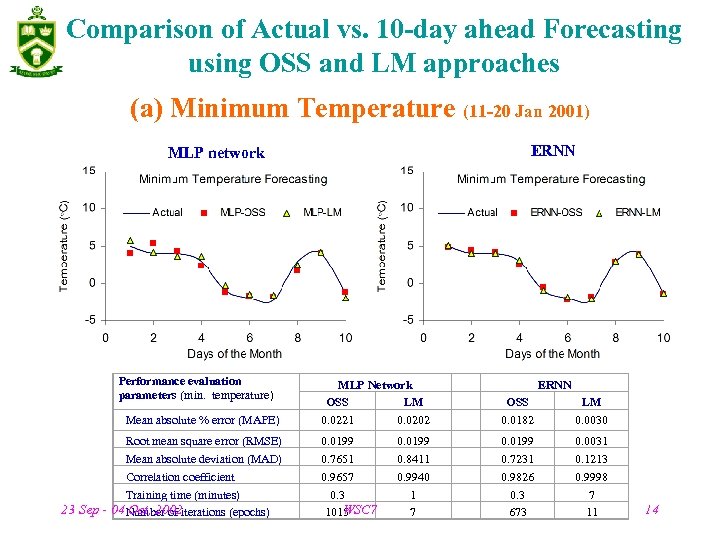

Comparison of Actual vs. 10-day ahead Forecasting using OSS and LM approaches

(a) Minimum Temperature (11–20 Jan 2001)

Figure panels: MLP network; ERNN.

| Performance evaluation parameters (min. temperature) | MLP OSS | MLP LM | ERNN OSS | ERNN LM |
|---|---|---|---|---|
| Mean absolute % error (MAPE) | 0.0221 | 0.0202 | 0.0182 | 0.0030 |
| Root mean square error (RMSE) | 0.0199 | 0.0199 | 0.0199 | 0.0031 |
| Mean absolute deviation (MAD) | 0.7651 | 0.7231 | 0.8411 | 0.1213 |
| Correlation coefficient | 0.9657 | 0.9826 | 0.9940 | 0.9998 |
| Training time (minutes) | 0.3 | 0.3 | 1 | 7 |
| Number of iterations (epochs) | 1015 | 673 | 7 | 11 |
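The slides report MAPE, RMSE, MAD and the correlation coefficient but do not give their formulas. A minimal sketch using the textbook definitions follows; the exact normalization behind the reported values is not stated, so these functions illustrate the metrics rather than reproduce the table. Note that MAPE is undefined whenever an actual value is zero, which can happen with minimum temperatures in °C.

```python
import math


def mape(actual, forecast):
    """Mean absolute percentage error as a fraction (x100 for percent).

    Undefined if any actual value is zero, e.g. a 0 degC temperature reading.
    """
    return sum(abs((a - f) / a) for a, f in zip(actual, forecast)) / len(actual)


def rmse(actual, forecast):
    """Root mean square error."""
    return math.sqrt(sum((a - f) ** 2 for a, f in zip(actual, forecast)) / len(actual))


def mad(actual, forecast):
    """Mean absolute deviation between actual and forecast values."""
    return sum(abs(a - f) for a, f in zip(actual, forecast)) / len(actual)


def correlation(actual, forecast):
    """Pearson correlation coefficient between actual and forecast series."""
    n = len(actual)
    mean_a, mean_f = sum(actual) / n, sum(forecast) / n
    cov = sum((a - mean_a) * (f - mean_f) for a, f in zip(actual, forecast))
    var_a = sum((a - mean_a) ** 2 for a in actual)
    var_f = sum((f - mean_f) ** 2 for f in forecast)
    return cov / math.sqrt(var_a * var_f)


# Hypothetical 4-day example (not data from the paper).
actual = [2.0, 4.0, 5.0, 1.0]
forecast = [2.5, 3.5, 5.0, 1.5]
print(mape(actual, forecast))  # 0.21875
print(mad(actual, forecast))   # 0.375
```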


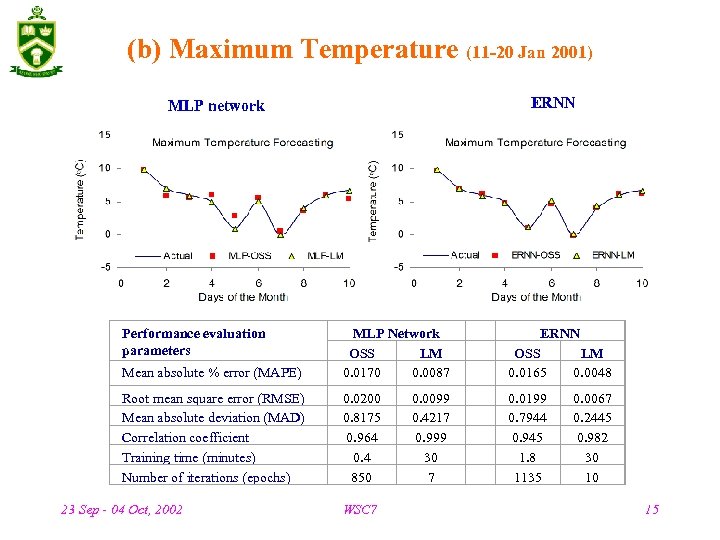

(b) Maximum Temperature (11–20 Jan 2001)

Figure panels: MLP network; ERNN.

| Performance evaluation parameters | MLP OSS | MLP LM | ERNN OSS | ERNN LM |
|---|---|---|---|---|
| Mean absolute % error (MAPE) | 0.0170 | 0.0087 | 0.0165 | 0.0048 |
| Root mean square error (RMSE) | 0.0200 | 0.0199 | 0.0099 | 0.0067 |
| Mean absolute deviation (MAD) | 0.8175 | 0.7944 | 0.4217 | 0.2445 |
| Correlation coefficient | 0.964 | 0.945 | 0.999 | 0.982 |
| Training time (minutes) | 0.4 | 1.8 | 30 | 30 |
| Number of iterations (epochs) | 850 | 1135 | 7 | 10 |


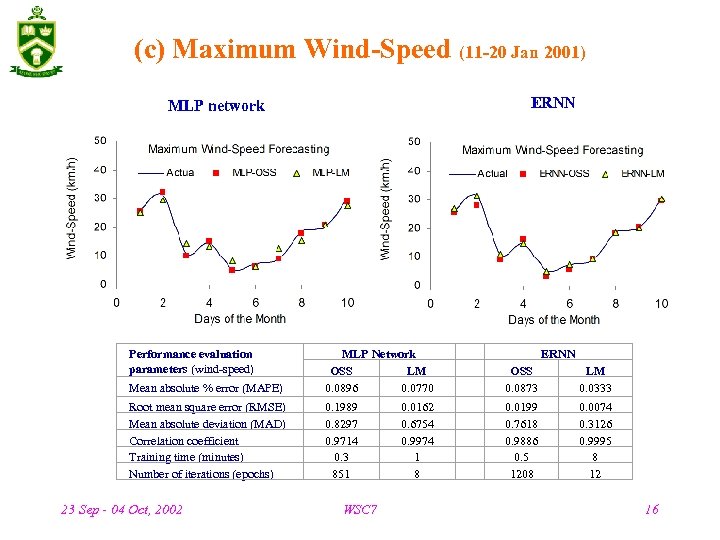

(c) Maximum Wind-Speed (11–20 Jan 2001)

Figure panels: MLP network; ERNN.

| Performance evaluation parameters (wind-speed) | MLP OSS | MLP LM | ERNN OSS | ERNN LM |
|---|---|---|---|---|
| Mean absolute % error (MAPE) | 0.0896 | 0.0770 | 0.0873 | 0.0333 |
| Root mean square error (RMSE) | 0.1989 | 0.0199 | 0.0162 | 0.0074 |
| Mean absolute deviation (MAD) | 0.8297 | 0.7618 | 0.6754 | 0.3126 |
| Correlation coefficient | 0.9714 | 0.9886 | 0.9974 | 0.9995 |
| Training time (minutes) | 0.3 | 0.5 | 1 | 8 |
| Number of iterations (epochs) | 851 | 1208 | 8 | 12 |


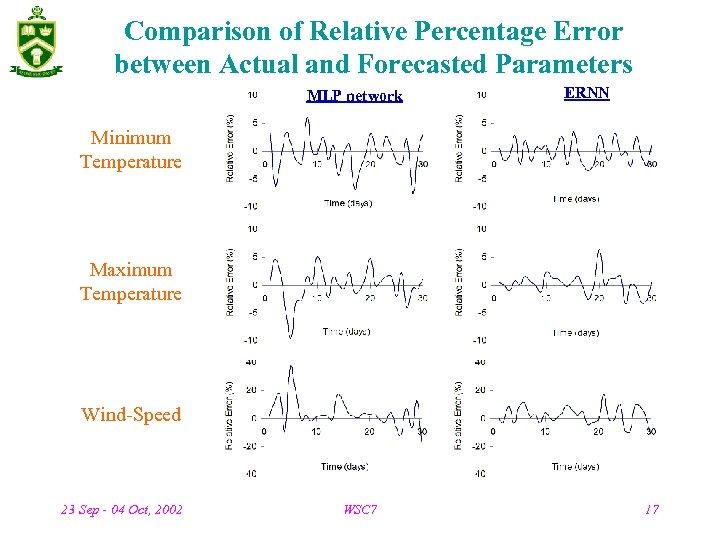

Comparison of Relative Percentage Error between Actual and Forecasted Parameters

Figure panels: MLP network and ERNN, for minimum temperature, maximum temperature and wind speed.
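The plotted quantity is a per-day relative error rather than an average. A minimal sketch, assuming the common definition 100 × (forecast − actual) / actual; the slides do not state the formula or its sign convention, and the function name is hypothetical.

```python
def relative_percentage_error(actual, forecast):
    """Signed relative error for each day, in percent: 100 * (forecast - actual) / actual.

    The sign convention (positive = over-forecast) is an assumption; the value is
    undefined on days where the actual value is zero.
    """
    return [100.0 * (f - a) / a for a, f in zip(actual, forecast)]


print(relative_percentage_error([10.0, 4.0], [11.0, 3.0]))  # [10.0, -25.0]
```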


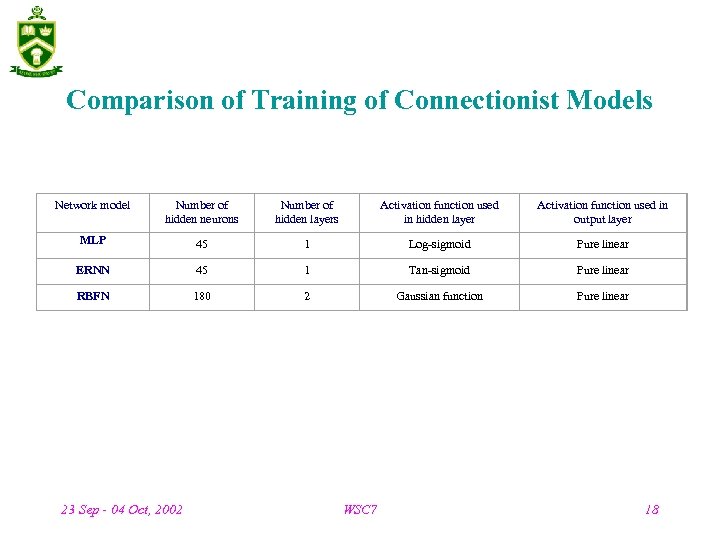

Comparison of Training of Connectionist Models

| Network model | Number of hidden neurons | Number of hidden layers | Activation function used in hidden layer | Activation function used in output layer |
|---|---|---|---|---|
| MLP | 45 | 1 | Log-sigmoid | Pure linear |
| ERNN | 45 | 1 | Tan-sigmoid | Pure linear |
| RBFN | 180 | 2 | Gaussian function | Pure linear |


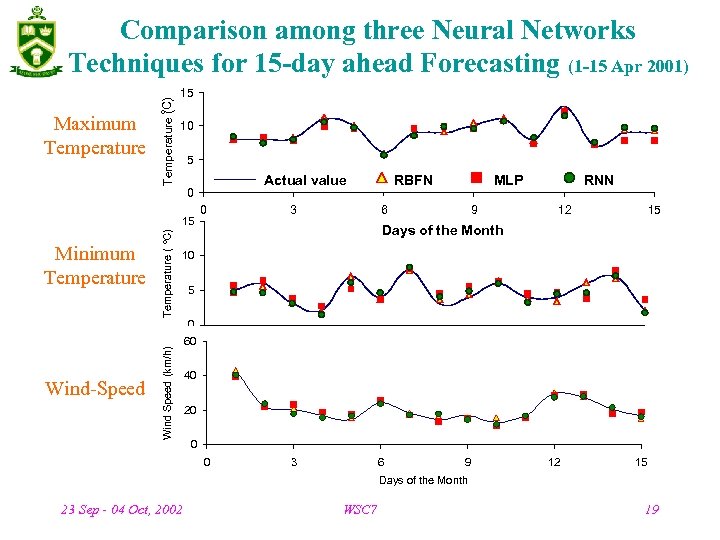

Figure: Comparison among three neural network techniques for 15-day ahead forecasting (1–15 Apr 2001). Panels: maximum temperature (°C), minimum temperature (°C) and wind speed (km/h), each plotted against the day of the month; series: actual value, RBFN, MLP and RNN.


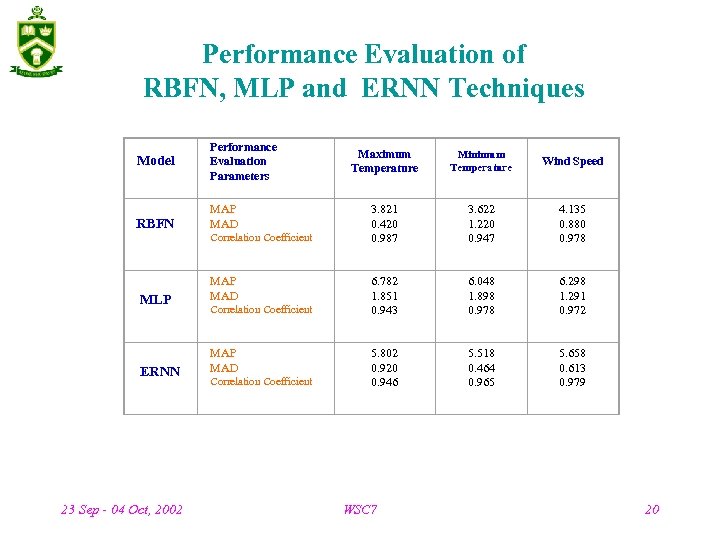

Performance Evaluation of RBFN, MLP and ERNN Techniques

Model performance evaluation parameters: MAP, MAD and correlation coefficient, for RBFN, MLP and ERNN, for maximum temperature, minimum temperature and wind speed. Values, grouped as (MAP, MAD, correlation coefficient):

(3.821, 0.420, 0.987), (3.622, 1.220, 0.947), (4.135, 0.880, 0.978), (6.782, 1.851, 0.943), (6.048, 1.898, 0.978), (6.298, 1.291, 0.972), (5.802, 0.920, 0.946), (5.518, 0.464, 0.965), (5.658, 0.613, 0.979)


5. CONCLUSIONS
• In this paper, we developed and compared the performance of multi-layered perceptron (MLP) neural networks, Elman recurrent neural networks (ERNN) and radial basis function networks (RBFN).
• It can be inferred that ERNN could yield more accurate results if good data selection strategies, training paradigms, and network input and output representations are determined properly.


• The Levenberg-Marquardt (LM) approach appears to be the best learning algorithm. However, it requires more memory and is computationally more complex than the one-step secant (OSS) algorithm.
• Empirical results clearly demonstrate that, compared to the MLP neural network and ERNN, RBFN is much faster and more reliable for the weather forecasting problem considered.
• A comparison of the neurocomputing techniques with other statistical techniques is a topic for future research.
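The memory cost of LM follows from its weight update. For reference, the standard textbook form is shown below (the slides do not give the formula), where $\mathbf{J}$ is the Jacobian of the error vector $\mathbf{e}$ with respect to the $P$ network weights $\mathbf{w}$ and $\mu$ is the damping factor:

$$\Delta\mathbf{w} = -\left(\mathbf{J}^{\top}\mathbf{J} + \mu\mathbf{I}\right)^{-1}\mathbf{J}^{\top}\mathbf{e}$$

Forming and solving with the $P \times P$ matrix $\mathbf{J}^{\top}\mathbf{J}$ makes memory grow with the square of the number of weights. The one-step secant method, as described in MATLAB's `trainoss` documentation, instead avoids storing a full Hessian approximation by assuming at each iteration that the previous approximation was the identity matrix, so it needs only vector storage.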


THANK YOU!
