- Number of slides: 17

CalREN Networks future capabilities
ken lindahl
Chair, CENIC High Performance Research network Technical Advisory Council
lindahl@berkeley.edu
Tier 3 Science Data/Network Requirements Workshop, 15 Mar 2007, UC Davis
The Corporation for Education Network Initiatives in California • (714) 220-3400 • info@cenic.org • www.cenic.org

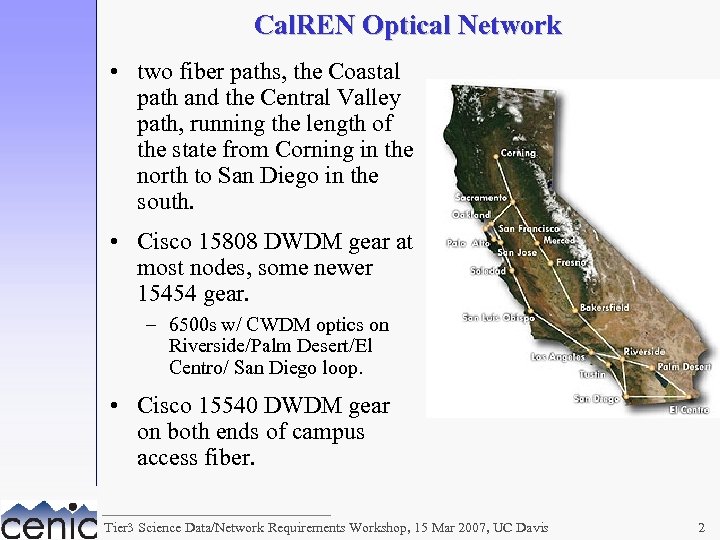

CalREN Optical Network
• two fiber paths, the Coastal path and the Central Valley path, running the length of the state from Corning in the north to San Diego in the south.
• Cisco 15808 DWDM gear at most nodes, some newer 15454 gear.
  – 6500s w/ CWDM optics on Riverside/Palm Desert/El Centro/San Diego loop.
• Cisco 15540 DWDM gear on both ends of campus access fiber.

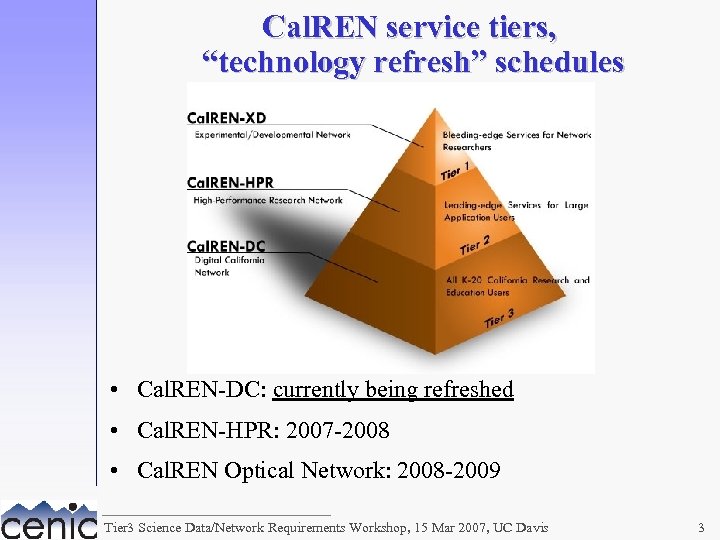

CalREN service tiers, “technology refresh” schedules
• CalREN-DC: currently being refreshed
• CalREN-HPR: 2007-2008
• CalREN Optical Network: 2008-2009

national/international connectivity
• Abilene:
  – 10GE connection in Los Angeles;
  – 10GE connection in San Jose, via PacificWave to PNWGP, Seattle, for backup.
• National LambdaRail:
  – 10GE PacketNet connection in Los Angeles;
  – 10GE FrameNet connection in San Jose.
  – Easy access to additional NLR services in Los Angeles and San Jose.
• PacificWave:
  – international peering exchange facility, running on CalREN and NLR waves, 10 Gbps switched fabric with 10GE connections to CalREN at Los Angeles and San Jose.

CalREN-HPR refresh
• planned enhancements to routed IP network:
  – capacity for more 10GE campus connections (largely a matter of router real estate);
  – capability for >10 Gbps on backbone connections (multiple 10GEs or 40GE/OC-768 or 100GE).
• planning some layer 1 and 2 services, as well:
  – motivated by researchers’ requirements that we’ve learned over the past 3 years;
  – “XD Services” white paper written by Board subcommittee (composed mostly of researchers rather than network guys);
  – deploying shared infrastructure on the optical backbone, which will (hopefully) reduce costs to researchers;
  – reasonably good fit with NewNet and NLR wave and frame services.

researchers’ requirements
• Researchers have requested dedicated, layer 2 private networks between campuses, e.g.:
  – DETER requested a 1 Gbps layer 2 network connecting labs at Berkeley and USC-ISI, that could be disconnected from any production network.
  – CITRIS requested a dedicated GE VLAN between labs at Berkeley and UC Davis, for testing/demonstrating video applications they are developing.
• UC Grid requirements
• LHC network requirements

“XD Services” white paper
• Requirements:
  – Standing lambdas available to researchers.
  – Rapid set up/tear down: 1-2 hours.
  – Convenient set up/tear down: email to NOC.
  – “Bypass networks.”
• Services:
  – 1 Gbps L2-switched VLANs.
  – 1 Gbps optically switched lambdas.
  – 10 Gbps optically switched lambdas.
• 32 standing lambdas requested:
  – requires complete replacement of current optronics.
  – hopefully we can sneak by with slightly fewer lambdas.

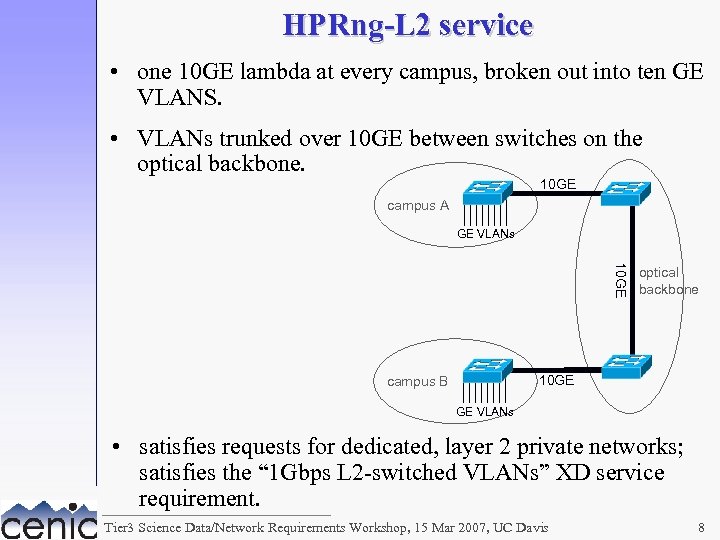

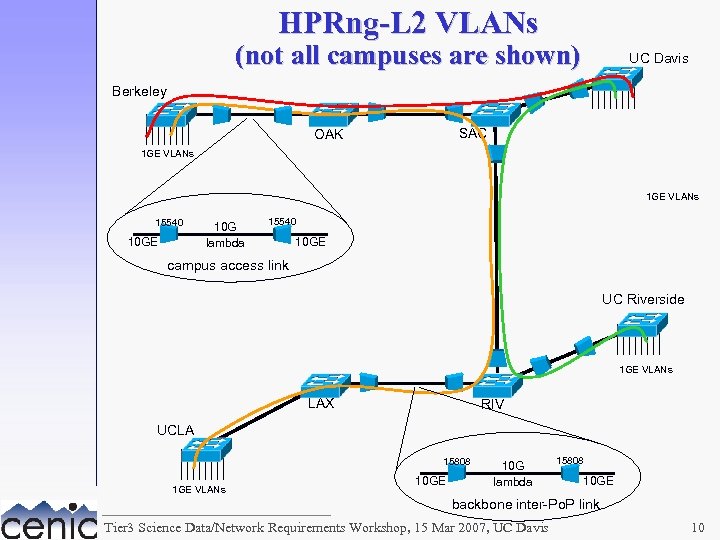

HPRng-L2 service
• one 10GE lambda at every campus, broken out into ten GE VLANs.
• VLANs trunked over 10GE between switches on the optical backbone.
[Diagram: GE VLANs at campus A and at campus B, each campus attached by 10GE to the optical backbone, with 10GE across the backbone.]
• satisfies requests for dedicated, layer 2 private networks; satisfies the “1 Gbps L2-switched VLANs” XD service requirement.

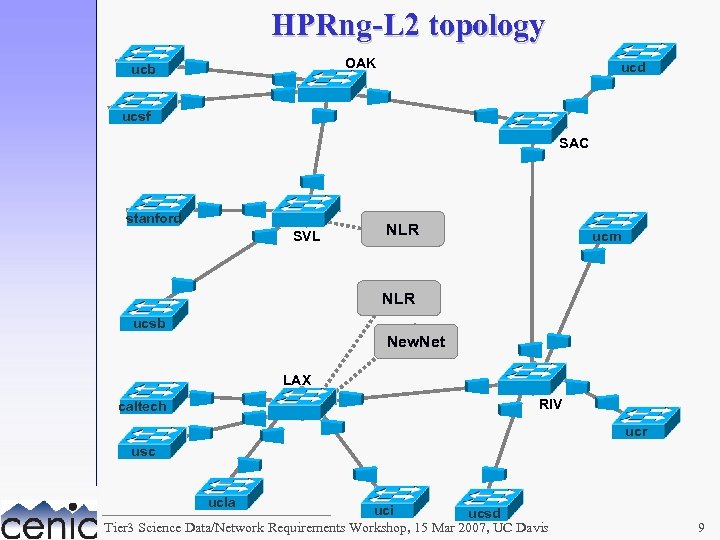

HPRng-L2 topology
[Diagram: backbone nodes OAK, SAC, SVL, LAX, RIV; campuses ucb, ucd, ucsf, stanford, ucm, ucsb, caltech, ucr, usc, ucla, uci, ucsd; external connections to NLR and NewNet.]

HPRng-L2 VLANs (not all campuses are shown)
[Diagram: 1GE VLANs at UC Davis, Berkeley, UC Riverside and UCLA; nodes SAC, OAK, LAX, RIV. Legend: 10GE campus access link = 10G lambda between 15540s; 10GE backbone inter-PoP link = 10G lambda between 15808s.]

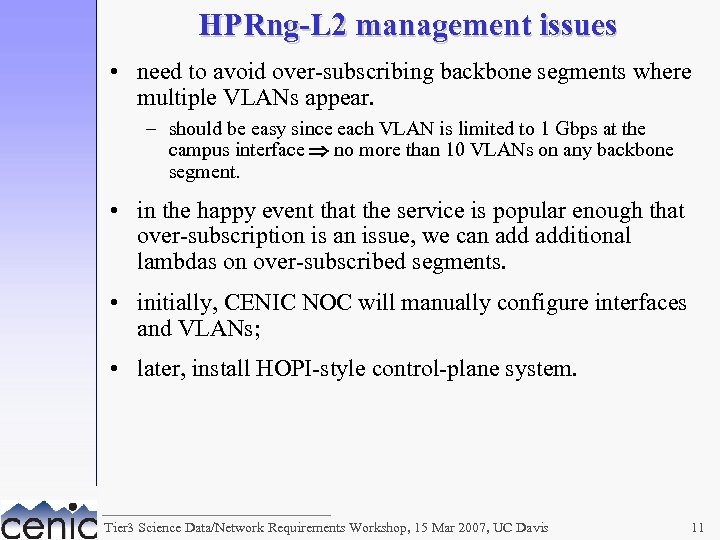

HPRng-L2 management issues
• need to avoid over-subscribing backbone segments where multiple VLANs appear.
  – should be easy, since each VLAN is limited to 1 Gbps at the campus interface: no more than 10 VLANs on any backbone segment.
• in the happy event that the service is popular enough that over-subscription is an issue, we can add additional lambdas on over-subscribed segments.
• initially, CENIC NOC will manually configure interfaces and VLANs;
• later, install HOPI-style control-plane system.
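
The over-subscription rule above is simple arithmetic, and a minimal Python sketch may make it concrete. It assumes each VLAN commits 1 Gbps on every backbone segment it crosses and that each segment is a single 10GE lambda, as the slides state. The function names and the PoP names "A", "B", "C" are hypothetical and used only for illustration; this is not CENIC tooling.

```python
from collections import defaultdict

VLAN_RATE_GBPS = 1          # per-VLAN limit at the campus interface (from the slide)
SEGMENT_CAPACITY_GBPS = 10  # one 10GE lambda per backbone segment (from the slide)


def segment_load(vlan_paths):
    """Return committed Gbps per backbone segment.

    vlan_paths maps a VLAN name to the list of backbone segments it crosses;
    each segment is an unordered pair of (hypothetical) PoP names.
    """
    load = defaultdict(int)
    for segments in vlan_paths.values():
        for a, b in segments:
            # frozenset makes ("A", "B") and ("B", "A") the same segment
            load[frozenset((a, b))] += VLAN_RATE_GBPS
    return dict(load)


def oversubscribed(vlan_paths, capacity=SEGMENT_CAPACITY_GBPS):
    """Return only the segments whose committed load exceeds capacity."""
    return {
        seg: gbps
        for seg, gbps in segment_load(vlan_paths).items()
        if gbps > capacity
    }
```

With ten VLANs on one segment the check returns nothing. An eleventh VLAN on the same segment flags it, which is the point at which the slide suggests adding a lambda.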

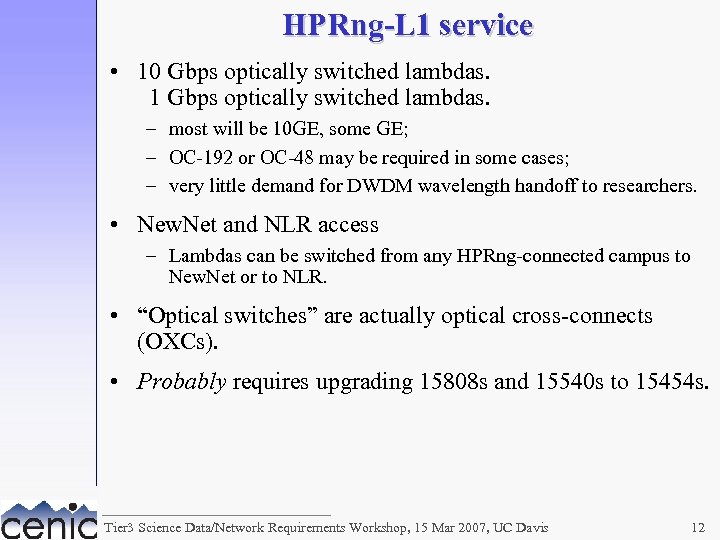

HPRng-L1 service
• 10 Gbps optically switched lambdas. 1 Gbps optically switched lambdas.
  – most will be 10GE, some GE;
  – OC-192 or OC-48 may be required in some cases;
  – very little demand for DWDM wavelength handoff to researchers.
• NewNet and NLR access
  – Lambdas can be switched from any HPRng-connected campus to NewNet or to NLR.
• “Optical switches” are actually optical cross-connects (OXCs).
• Probably requires upgrading 15808s and 15540s to 15454s.

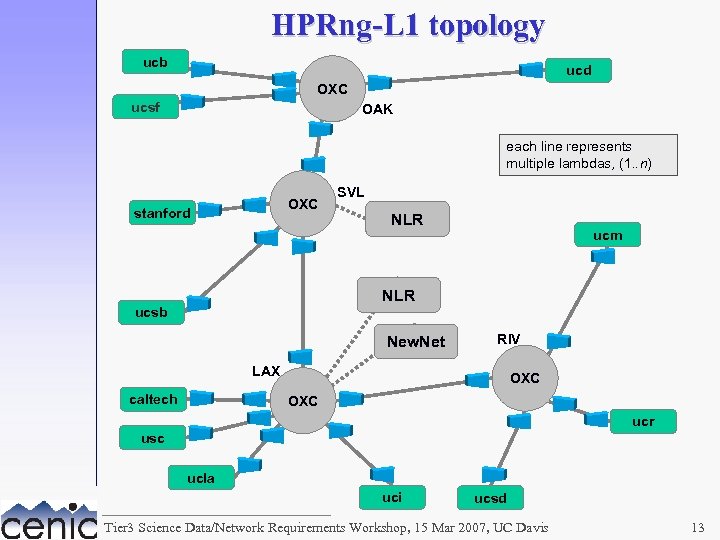

HPRng-L1 topology
[Diagram: optical cross-connects (OXCs) on the backbone; nodes OAK, SVL, LAX, RIV; campuses ucb, ucd, ucsf, stanford, ucm, ucsb, caltech, ucr, usc, ucla, uci, ucsd; external connections to NLR and NewNet. Each line represents multiple lambdas (1..n).]

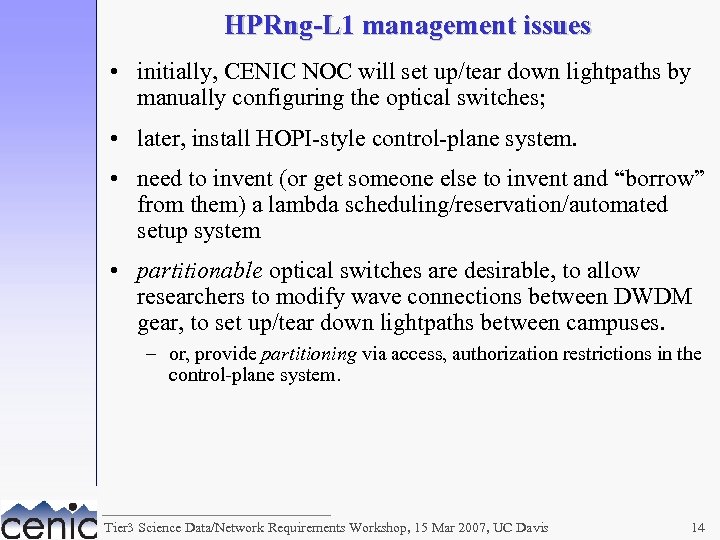

HPRng-L1 management issues
• initially, CENIC NOC will set up/tear down lightpaths by manually configuring the optical switches;
• later, install HOPI-style control-plane system.
• need to invent (or get someone else to invent and “borrow” from them) a lambda scheduling/reservation/automated setup system.
• partitionable optical switches are desirable, to allow researchers to modify wave connections between DWDM gear, to set up/tear down lightpaths between campuses.
  – or, provide partitioning via access, authorization restrictions in the control-plane system.
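
The slides leave the lambda scheduling/reservation system as something still to be invented, so the following is only a toy sketch of the core bookkeeping such a system might need: a fixed pool of lambdas on one path, and time-window reservations that must not overlap on the same lambda. The class name, method names and time units are all hypothetical. It does not model HOPI, OXC configuration or multi-segment paths.

```python
from dataclasses import dataclass, field


@dataclass
class LambdaScheduler:
    """Toy reservation book for the lambdas on one path (hypothetical)."""

    num_lambdas: int
    # bookings[i] holds the (start, end) windows reserved on lambda i
    bookings: dict = field(default_factory=dict)

    def reserve(self, start, end):
        """Reserve the first lambda free over [start, end).

        Returns the lambda index, or None if every lambda is busy.
        """
        if start >= end:
            raise ValueError("start must be before end")
        for lam in range(self.num_lambdas):
            slots = self.bookings.setdefault(lam, [])
            # free if the new window ends before, or starts after, each booking
            if all(end <= s or start >= e for s, e in slots):
                slots.append((start, end))
                return lam
        return None

    def release(self, lam, start, end):
        """Tear down a reservation previously returned by reserve()."""
        self.bookings[lam].remove((start, end))
```

A real system would also have to authorize the requester and drive the optical switches. This sketch covers only the conflict check.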

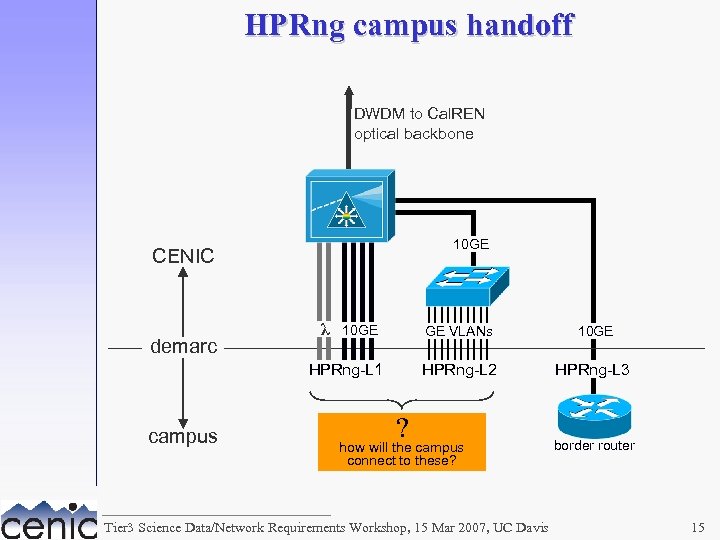

HPRng campus handoff
[Diagram: DWDM to CalREN optical backbone; at the CENIC demarc, three handoffs toward the campus: λ (HPRng-L1), 10GE carrying GE VLANs (HPRng-L2), and 10GE (HPRng-L3); campus border router.]
? how will the campus connect to these?

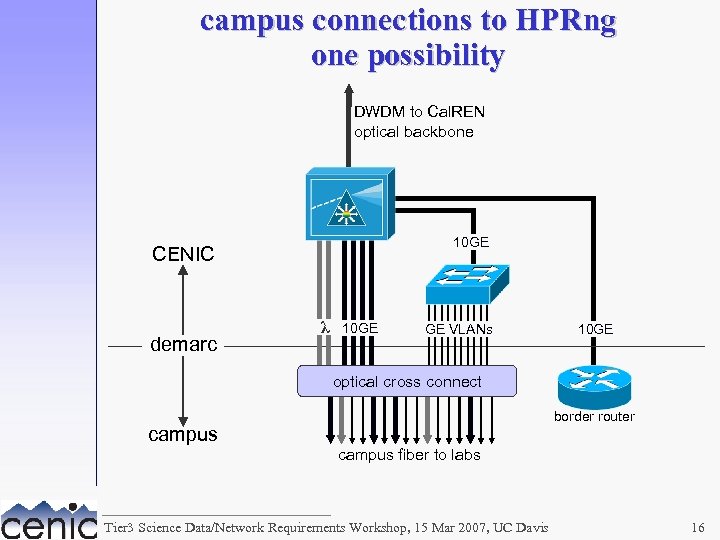

campus connections to HPRng: one possibility
[Diagram: DWDM to CalREN optical backbone; CENIC demarc handing off λ, 10GE GE VLANs and 10GE; on the campus side, an optical cross connect, the border router, and campus fiber to labs.]

HPRng design committee
• Mark Boolootian, UC Santa Cruz
• Brian Court, CENIC
• John Haskins, UC Santa Barbara
• Rodger Hess, UC Davis
• Tom Hutton, SDSC
• Michael Van Norman, UCLA
• Ken Lindahl, UC Berkeley