384e5b8e4094d027b3722da633a221fb.ppt

- Number of slides: 27

Bust a Move Young MC

Modeling and Predicting Machine Availability in Volatile Computing Environments
Rich Wolski, John Brevik, Dan Nurmi
University of California, Santa Barbara

Explorations In Grid Computing
• Performance: How can programs extract high performance levels given that the resource pool is heterogeneous and dynamically changing?
  - The Network Weather Service
  - On-line performance monitoring and prediction
• Programming: What programming abstractions are needed to enable the Grid paradigm?
  - EveryWare
  - Toolkit for building global programs
• Analysis: How do we reason about the Grid globally?
  - G-Commerce
  - Systemwide efficiency, stability, etc.

Fortune Telling
• Grid resource performance varies dynamically
  - Machines, networks and storage systems are shared by competing applications
  - Federation
• Either the system or the application itself must “tolerate” performance variation
  - Dynamic scheduling
• Scheduling requires a prediction of future performance levels
  - What performance level will be deliverable?

Skepticism
• Is it really possible to predict future performance levels?
  - Self-similarity
  - Non-stationarity
  - With what accuracy?
  - For how long into the future?
• NWS: on-line, semi non-parametric time series techniques
  - Use running tabulation of forecast error to choose between competing forecasters
  - Bandwidth, latency, CPU load, available memory, battery power

What About Machine Availability?

The “Normal” Approach
• Each measurement is modeled as a “sample” from a random variable
  - Time invariant
  - IID (independent, identically distributed)
  - Stationary (IID forever)
• Well studied in the literature
  - Exponential distributions
    - Compose well
    - Memoryless
    - Popular in database and fault-tolerance communities
  - Pareto distributions
    - Potentially related to self-similarity
    - “Heavy-tailed”, implying non-predictability
    - Popular in networking, Internet, and distributed systems communities
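
For reference, the two families named on this slide have the following standard textbook forms. The symbols $\lambda$, $\alpha$, and $x_m$ are notation introduced here for illustration; they do not appear in the slides.

```latex
\begin{align*}
\text{Exponential CDF:}\quad & F(x) = 1 - e^{-\lambda x}, \qquad x \ge 0 \\
\text{Memorylessness:}\quad & P(T > s + t \mid T > s) = P(T > t) = e^{-\lambda t} \\
\text{Pareto CDF:}\quad & F(x) = 1 - \left(\frac{x_m}{x}\right)^{\alpha}, \qquad x \ge x_m > 0
\end{align*}
```

The Pareto mean is finite only when $\alpha > 1$, which is one sense in which the heavy tail complicates prediction.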

Our “Abnormal” Approach
• Measure availability as “lifetime” in a variety of settings
  - Student lab at UCSB, Condor pool
    - New NWS availability sensors
  - Data used in fault-tolerance community for checkpointing research
    - Predicting optimal checkpoint
• Develop robust software for MLE parameter estimation
• Fit Exponential, Pareto, and Weibull distributions
• Compare the fits
  - Visually
  - Goodness of fit tests
• Goal is to provide an automated mechanism for the NWS
  - Let the best distribution win
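
As a rough illustration of the fitting step, the sketch below computes maximum-likelihood estimates for the exponential and Weibull cases in plain Python and compares their log-likelihoods. It is not the NWS software: the function names, the bisection solver, and the synthetic data are all assumptions made for this example, and the Pareto fit and the goodness-of-fit tests are left out.

```python
import math
import random


def fit_exponential(durations):
    """MLE for the exponential rate (1 / sample mean). Returns (rate, log-likelihood)."""
    n = len(durations)
    total = sum(durations)
    rate = n / total
    return rate, n * math.log(rate) - rate * total


def fit_weibull(durations, iterations=200):
    """MLE for Weibull (shape, scale); returns (shape, scale, log-likelihood).

    Solves the likelihood equation for the shape by bisection.
    Durations must be positive and not all equal.
    """
    n = len(durations)
    top = max(durations)
    if min(durations) <= 0 or min(durations) == top:
        raise ValueError("need positive, non-identical durations")
    # Rescale to (0, 1] so y**k cannot overflow; the shape equation is scale-invariant.
    y = [x / top for x in durations]
    log_y = [math.log(v) for v in y]
    mean_log = sum(log_y) / n

    def g(k):
        # g(k) = 0 is the likelihood equation for the shape; g is increasing in k.
        w = [v ** k for v in y]
        return sum(wi * li for wi, li in zip(w, log_y)) / sum(w) - 1.0 / k - mean_log

    lo, hi = 1e-3, 1.0
    while g(hi) < 0:  # widen the bracket until it contains the root
        hi *= 2.0
    for _ in range(iterations):
        mid = 0.5 * (lo + hi)
        if g(mid) < 0:
            lo = mid
        else:
            hi = mid
    shape = 0.5 * (lo + hi)
    scale = top * (sum(v ** shape for v in y) / n) ** (1.0 / shape)
    loglik = sum(
        math.log(shape / scale) + (shape - 1.0) * math.log(x / scale) - (x / scale) ** shape
        for x in durations
    )
    return shape, scale, loglik


# Usage on synthetic data (not the UCSB or Condor traces).
random.seed(1)
sample = [random.weibullvariate(1000.0, 0.5) for _ in range(5000)]
shape, scale, ll_weibull = fit_weibull(sample)
rate, ll_exp = fit_exponential(sample)
print(f"Weibull: shape={shape:.3f} scale={scale:.1f} loglik={ll_weibull:.1f}")
print(f"Exponential: rate={rate:.6f} loglik={ll_exp:.1f}")
```

Because the exponential is the Weibull with shape 1, the fitted Weibull log-likelihood can never be lower than the exponential one. A raw log-likelihood comparison therefore favors the richer model, which is one reason to compare fits visually and with goodness-of-fit tests as well.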

UCSB Student Computing Labs
• Approximately 85 machines running Red Hat Linux located in three separate buildings
• Open to all Computer Science graduate and undergraduate students
  - Only graduate students have building keys
• Power switch is not protected
  - Anyone with physical access to the machine can reboot it by power cycling it
• Students routinely “clean off” competing users or intrusive processes to gain better performance response
• NWS deployed and monitoring duration between restarts
• Can we model the time-to-reboot?

UCSB Empirical CDF

MLE Weibull Fit to UCSB Data

Comparing Fits at UCSB

The Visual Acid Test

More Systems
• Condor: Cycle harvesting system (M. Livny, U. Wisconsin)
  - Workstations in a “pool” run the (trusted) Condor daemons
  - When a machine running a Condor job becomes “busy”, the job is terminated (vanilla universe)
  - Unknown and constantly changing number of workstations in the UWisc Condor pool (~1000 Linux workstations)
• Long, Muir, Golding Internet Survey (1995)
  - Pinged rpc.statd as a heartbeat
  - Used extensively in the fault-tolerance community to model host failure
  - 1170 hosts covering 3 months of Spring

The Condor Picture April 2003 through Oct 2004, 600 hosts

More Condor April 2003 through July 2005, 900 hosts

Condor Clusters April 2003 through July 2005, 730 hosts

Condor Non-cluster April 2003 through July 2005, 170 hosts

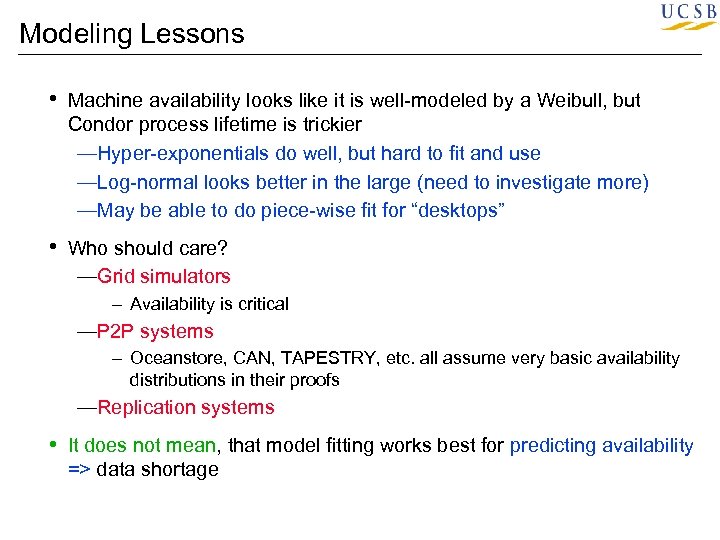

Modeling Lessons
• Machine availability looks like it is well-modeled by a Weibull, but Condor process lifetime is trickier
  - Hyper-exponentials do well, but are hard to fit and use
  - Log-normal looks better in the large (need to investigate more)
  - May be able to do piece-wise fit for “desktops”
• Who should care?
  - Grid simulators
    - Availability is critical
  - P2P systems
    - Oceanstore, CAN, TAPESTRY, etc. all assume very basic availability distributions in their proofs
  - Replication systems
• This does not mean that model fitting works best for predicting availability => data shortage

Predicting Individual Machine Behavior
• Estimating Mean Time to Failure (MTTF) is relatively easy
  - Unless the data is Pareto, the mean is the “expected value”
  - Probably not what is needed to support scheduling
    - The cost of being below the mean is not the same as the cost of being above it
• “At least how much time will elapse before this machine reboots with 95% certainty?”
  - The answer is the 0.05 quantile (not an expectation) from the cumulative distribution function (CDF)
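
To make the gap between the mean and the 0.05 quantile concrete, here is a minimal sketch for a Weibull model using the standard closed forms for its mean and inverse CDF. The shape and scale values are hypothetical, chosen only for illustration, and are not fitted to any of the data sets in these slides.

```python
import math


def weibull_quantile(p, shape, scale):
    """Inverse CDF of the Weibull: the value x with F(x) = p."""
    return scale * (-math.log(1.0 - p)) ** (1.0 / shape)


def weibull_mean(shape, scale):
    """Expected value of the Weibull: scale * Gamma(1 + 1/shape)."""
    return scale * math.gamma(1.0 + 1.0 / shape)


# Hypothetical parameters (scale in seconds), for illustration only.
example_shape, example_scale = 0.5, 3600.0
mttf = weibull_mean(example_shape, example_scale)
q05 = weibull_quantile(0.05, example_shape, example_scale)
print(f"MTTF = {mttf:.0f} s, 0.05 quantile = {q05:.1f} s")
```

With a shape below 1 the distribution is strongly skewed, so the duration a machine exceeds 95% of the time sits far below the MTTF. A scheduler that planned around the mean would be caught out most of the time.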

Certainty in an Uncertain World
• Predictions of the form
  - “For at least how long will this machine be available with X% certainty?”
• Requires two estimates if certainty is to be quantified
  - Estimate the (1-X) quantile for the distribution of availability => Qx
  - Estimate the lower X% confidence bound on the statistic Qx => Q(x, lb)
• If the estimates are unbiased, and the distribution is stationary, future availability duration will be larger than Q(x, lb) X% of the time, guaranteed

Neo-classical Methods
• The classical (parametric) method has some drawbacks
  - Which distribution?
  - MLE is computationally challenging or impossible for some distributions and/or data sets
  - Requires quite a bit of data to get a good fit
  - Quantiles near the tails are “squeezed” so fit error is significant
  - Estimating confidence bounds for high-order models is computationally (and theoretically) difficult
• Non-parametric techniques
  - Can usually only recover a statistic and not the distribution
  - Those that appeal to the CLT may have an asymptote problem
• New non-parametric invention based on Binomial assumptions
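
The slides do not spell out the Binomial method, so the sketch below shows only the textbook order-statistic construction that rests on the same kind of assumption. For IID continuous data, the number of observations at or below the true q-quantile is Binomial(n, q), so the k-th smallest observation lies at or below that quantile with probability P(B >= k). The function names are hypothetical, and this should be read as an assumption about the general idea rather than as the authors' implementation.

```python
import math


def binomial_tail_at_least(n, q, k):
    """P(B >= k) for B ~ Binomial(n, q)."""
    below = sum(math.comb(n, i) * q ** i * (1.0 - q) ** (n - i) for i in range(k))
    return 1.0 - below


def quantile_lower_bound(durations, q=0.05, confidence=0.95):
    """Lower confidence bound on the q-quantile from order statistics.

    Returns the k-th smallest observation for the largest k such that
    P(Binomial(n, q) >= k) >= confidence, or None when no such k exists
    (the sample is too small for this construction at that confidence).
    """
    ordered = sorted(durations)
    n = len(ordered)
    best = None
    for k in range(1, n + 1):
        if binomial_tail_at_least(n, q, k) >= confidence:
            best = ordered[k - 1]
        else:
            break  # the tail probability only shrinks as k grows
    return best


# Usage on made-up durations 1..100: the bound is one of the smallest observations.
print(quantile_lower_bound(range(1, 101)))
```

No distribution has to be chosen or fitted, which avoids the drawbacks listed for the parametric method. The price is that very small samples yield no bound at all: this particular construction, at q = 0.05 and 95% confidence, needs at least 59 observations.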

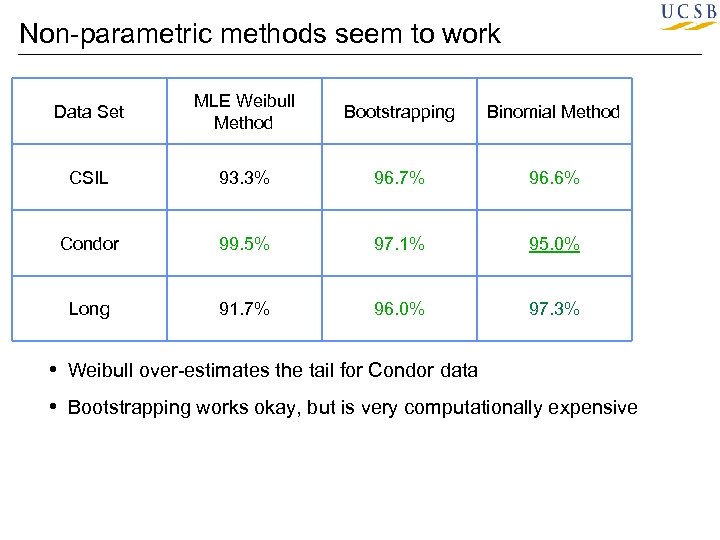

Experiments in Fortune Telling
• CSIL, Condor (2 years), and Long data sets
• Split into training and experimental periods
  - Use only machines with 20 training samples or more
  - Using synthetic data we noticed that the best method needed at least 20 samples
• Use methods to estimate 95% confidence on 0.05 quantile from training period
• Record success if 95%-100% of the remaining experimental availability durations >= estimate
• Report % success (want to see 95%)
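
The scoring procedure on this slide can be sketched as follows. The slides do not say how the training period is chosen, so treating the first 20 durations of each machine as training data is an assumption, as are the function name, the data layout, and the toy estimator used in the usage lines.

```python
def success_rate(machines, estimator, min_training=20, required_fraction=0.95):
    """Fraction of machines whose estimate held over the experimental period.

    machines: dict mapping a machine name to its availability durations, in time order.
    estimator: function that takes the training durations and returns a lower-bound
               estimate, or None if it cannot produce one.
    Returns None if no machine could be evaluated.
    """
    successes = 0
    evaluated = 0
    for durations in machines.values():
        training = durations[:min_training]
        experimental = durations[min_training:]
        if len(training) < min_training or not experimental:
            continue  # too little data to both train and test
        estimate = estimator(training)
        if estimate is None:
            continue
        evaluated += 1
        held = sum(1 for d in experimental if d >= estimate)
        if held / len(experimental) >= required_fraction:
            successes += 1
    return successes / evaluated if evaluated else None


# Usage with made-up traces and a toy estimator (the training minimum).
traces = {
    "a": list(range(10, 50)),       # later durations stay above the training minimum
    "b": [100] * 20 + [1] * 20,     # behavior changes after training
    "c": [5] * 10,                  # skipped: fewer than 20 samples
}
rate_held = success_rate(traces, min)
print(rate_held)
```

A method that delivers 95% confidence should score close to 95% under this measure. A much higher score suggests the estimates are overly conservative, and a lower one that the stated confidence is not being met.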

Non-parametric methods seem to work

| Data Set | MLE Weibull Method | Bootstrapping | Binomial Method |
|----------|--------------------|---------------|-----------------|
| CSIL     | 93.3%              | 96.7%         | 96.6%           |
| Condor   | 99.5%              | 97.1%         | 95.0%           |
| Long     | 91.7%              | 96.0%         | 97.3%           |

• Weibull over-estimates the tail for Condor data
• Bootstrapping works okay, but is very computationally expensive

On-going Work with Condor
• Checkpoint scheduling
  - Parametric method reduces network load dramatically
• Applications
  - LDPC investigation => lowest observed error rates
  - Ramsey search
  - GridSAT
  - UCSBGrid
• Automatic Program Overlay
  - On-demand Condor as a grid programming middleware
• NWS Condor Integration
  - Publishing NWS forecasts via Hawkeye
  - Incorporating machine availability predictor

Thanks and More
• Miron Livny and the Condor group at the University of Wisconsin
• Darrell Long (UCSC) and James Plank (UTK)
• UCSB Facilities Staff
• NSF SCI and DOE
Middleware and Applications Yielding Heterogeneous Environments for Metacomputing at UCSB
• Students: Matthew Allen, Wahid Chrabakh, Ryan Garver, Andrew Mutz, Dan Nurmi, Erik Peterson, Fred Tu, Lamia Youseff, Ye Wen
• Research Staff: John Brevik, Graziano Obertelli
• www.cs.ucsb.edu/~rich
• rich@cs.ucsb.edu
