dd083c026e1f19784cbe7310010b44ea.ppt

- Number of slides: 32

Better Improvement Research

Resources download from: http://homepage.mac.com/johnovr/File.Sharing2.html

John Øvretveit, Director of Research, Professor, Karolinska Medical Management Centre, Sweden, and Professor of Health Management, Faculty of Medicine, Bergen University

3/15/2018

Recognition of AHRQ & researchers

You are making a difference… Just some achievements:

- Shojania (ed.) 2001: 700-page review of safety interventions
- Quality and safety indicators
- Culture survey
- TeamSTEPPS & other tools
- Innovations Exchange

Achievements

Notable research funded by AHRQ:

- Closing the Quality Gap series: http://www.ahrq.gov/clinic/epc/qgapfact.htm
- Henriksen K, Battles JB, Marks ES, Lewin DI, editors. Advances in patient safety: from research to implementation. Vol. 1, Vol. 2, Vol. 3 (implementation), Vol. 4. AHRQ Publication No. 05-0021-1. Rockville, MD: Agency for Healthcare Research and Quality; Feb. 2005. http://www.ncbi.nlm.nih.gov/books/bv.fcgi?rid=aps.part.1
- Partnerships in Implementing Patient Safety (PIPS) grants
- RE-AIM studies (e.g. Magid et al 2008)

Acknowledge also: QUERI series, Mittman et al, e.g. Yano 2008

Achievements: Questions are the answer

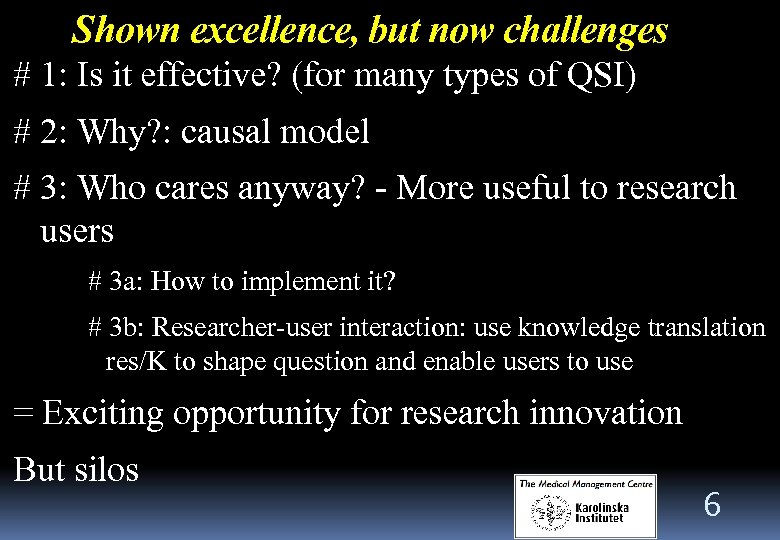

Shown excellence, but now challenges

- #1: Is it effective? (for many types of QSI)
- #2: Why? Causal model
- #3: Who cares anyway? More useful to research users
  - #3a: How to implement it?
  - #3b: Researcher-user interaction: use knowledge translation res/K to shape the question and enable users to use it
- = Exciting opportunity for research innovation
- But silos

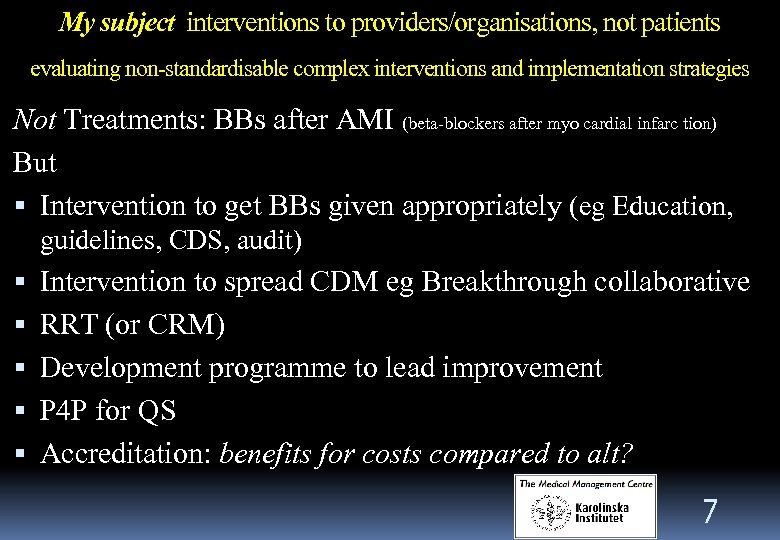

My subject

- Interventions to providers/organisations, not patients
- Evaluating non-standardisable complex interventions and implementation strategies
- Not treatments: BBs after AMI (beta-blockers after myocardial infarction)
- But:
  - Intervention to get BBs given appropriately (e.g. education, guidelines, CDS, audit)
  - Intervention to spread CDM, e.g. Breakthrough collaborative
  - RRT (or CRM)
  - Development programme to lead improvement
  - P4P for QS
  - Accreditation: benefits for costs compared to alternatives?

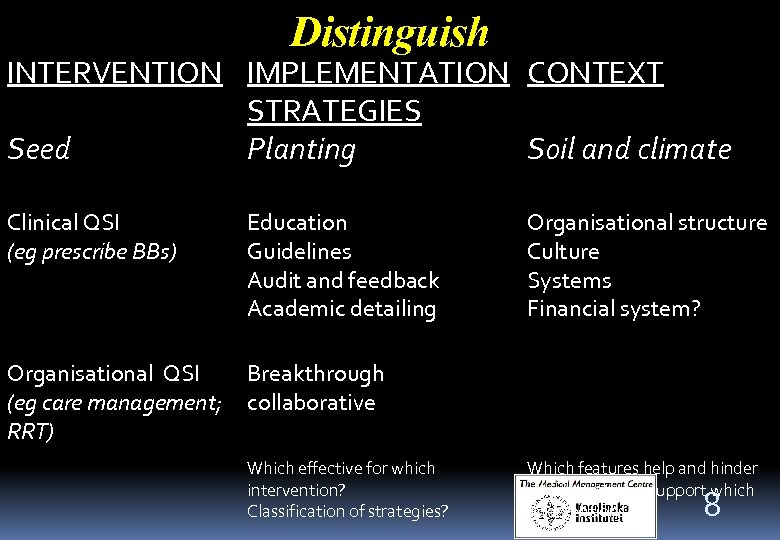

Distinguish

- INTERVENTION (seed)
  - Clinical QSI (e.g. prescribe BBs)
  - Organisational QSI (e.g. care management; RRT)
- IMPLEMENTATION STRATEGIES (planting)
  - Education
  - Guidelines
  - Audit and feedback
  - Academic detailing
  - Breakthrough collaborative
  - Which are effective for which intervention? Classification of strategies?
- CONTEXT (soil and climate)
  - Organisational structure
  - Culture
  - Systems
  - Financial system?
  - Which features help and hinder which strategies/support which interventions?

Themes

- Horses for courses: match method to question and type of QSI
- More flexibility and innovation
- It's not the camera, but what's behind and in front that makes a quality picture
- It's not the intervention, but the context and the beneficiaries that make the impact

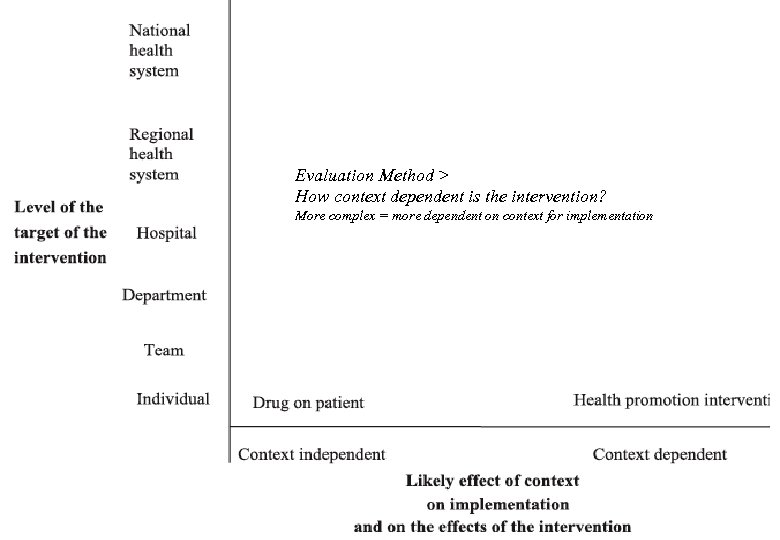

How: evaluation method > How context-dependent is the intervention?

More complex = more dependent on context for implementation

Next: 4 challenges and resolutions

- Useful research
- Efficacy
- Effectiveness/generalisation
- Translation

Examples: RRT; CRM; transition interventions; accreditation.

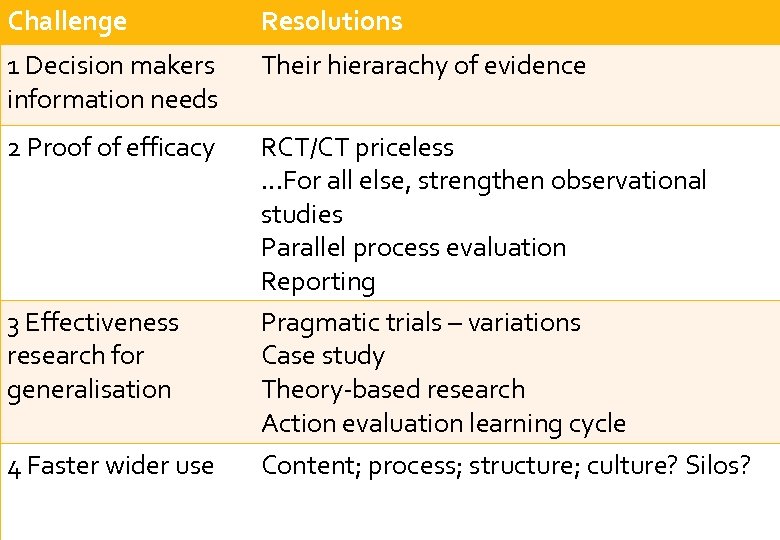

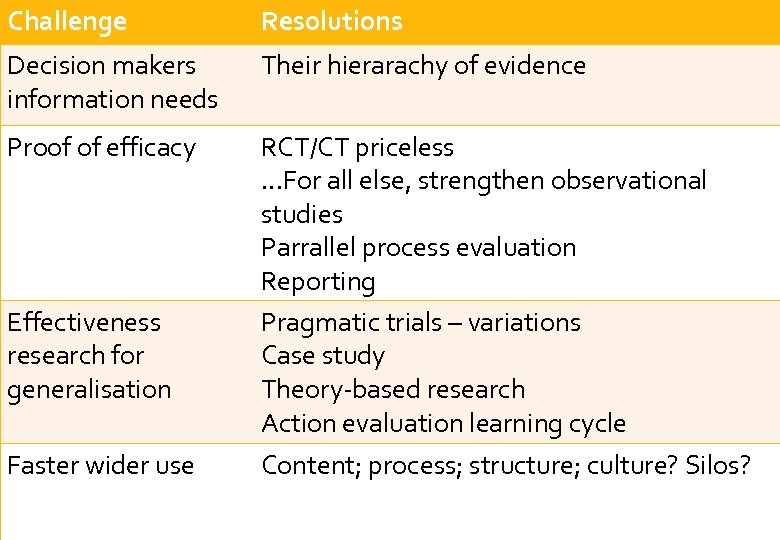

Summary

| Challenge | Resolutions |
| --- | --- |
| 1. Decision makers' information needs | Their hierarchy of evidence |
| 2. Proof of efficacy | RCT/CT priceless... For all else, strengthen observational studies; parallel process evaluation; reporting |
| 3. Effectiveness research for generalisation | Pragmatic trials (variations); case study; theory-based research; action evaluation learning cycle |
| 4. Faster, wider use | Content; process; structure; culture? Silos? |

#1 Challenge: decision makers' information needs

- Go/no-go decision: pilot, full-scale?
- Implementer's guidance: adapt and progress it? Install update?
- Needs: useful, credible information, now!, about:
  - Costs, savings, benefits, risks, for our organisation
  - Implementation to maximise success
  - Don't even think about it unless…
- Utility not purity: "good enough validity" & some attention to bias
- Researcher response? No compromise: publication and promotion

#1 Challenge: decision makers' information needs

"Many QIs have small to moderate effect". Research design limitations? Does the quantitative RCT/CT design:

- a) fail to measure enough intermediate or ultimate outcomes?
- b) obscure extremes, where context is important?
- c) require prescribed implementation, when iterative adaptation is necessary?

#1 Challenge: decision makers' information needs

Resolution by decision makers: hierarchy of evidence

1) Face validity/makes sense? Try it on a small scale
2) Steve or Jane's experience in Kansas
3) IHI practitioner reports: O1 > I > O2 data (Before > Intervention > After)
4) Published practitioner-scientist study
5) High-church medical journal publication

Proportionality of proof: cost/ease, risk, benefit

#2 Challenge: efficacy proof

- Does it work, anywhere?
- Maximise certainty of attribution of outcomes to intervention
- Causal assumptions: why/how does it work?

Resolutions:

- Paradigm: O1 > I > O2, quantitative experimental black box. Is there a difference?
- Better: O1 > I > O2 versus O1 > ? > O2. Bigger difference?
- Control, randomise, compare; hygiene to avoid contamination by confounders
- Other explanations for difference?
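A worked illustration of the comparison above, as a minimal sketch: the change from O1 to O2 in the intervention group is set against the change in a comparison group that did not receive the intervention. This difference-in-differences arithmetic is one common way to express the idea and is not taken from the slides; the function names and the adverse-event rates are hypothetical.

```python
def change(before: float, after: float) -> float:
    """Change in the outcome measure from O1 (before) to O2 (after)."""
    return after - before


def difference_in_differences(
    o1_intervention: float,
    o2_intervention: float,
    o1_comparison: float,
    o2_comparison: float,
) -> float:
    """Change in the intervention group minus change in the comparison group.

    A value near zero suggests the before/after change would have
    happened anyway; it does not rule out other confounders.
    """
    return change(o1_intervention, o2_intervention) - change(
        o1_comparison, o2_comparison
    )


# Hypothetical adverse events per 1000 patient-days (illustrative only).
effect = difference_in_differences(
    o1_intervention=12.0,
    o2_intervention=8.0,
    o1_comparison=12.5,
    o2_comparison=11.0,
)
print(f"Change attributable to the intervention (unadjusted): {effect}")
```

With these made-up figures the intervention group improves by 4.0 and the comparison group by 1.5, so only 2.5 of the improvement remains after the comparison is taken into account.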

Disconnect between

- A: Linear, sequential intervention-outcome assumptions underlying research designs and explanation, and
- B: Sophisticated systems understanding of causes
  - Outcomes are the result of a number of causes
  - Causes interact with each other and with influences outside the boundary of the system
  - E.g. Senge archetypes (latent predisposing factors/active "cause"); ref. Anderson et al 2005

#2 Resolutions to increase proof of efficacy

Strengths:

- √ Specifiable, controllable interventions, like a drug
- √ Unchanging; control known confounders and randomise out others; 2/3 measures are all you need

Limitations:

- Absence of the above
- Works for whom? Multiple perspectives
- Unintended consequences: study more outcomes
- Decision makers' translation: info they need in addition

#2 Resolutions to increase proof of efficacy

Strengthening:

- Parallel process evaluation
- Reporting ("SQUIRE" etc.) (labels for what was implemented, not the brand)
- Attribution steroids for observational studies: sensitivity analyses to assess results; propensity score (Johnson et al 2006) and instrumental variable (Harless and Mark 2006) methods

#3 Challenge: effectiveness research for generalisation

Effectiveness in different situations? Issues:

- Many interventions are sensitive to context
- Implementable only if changed to suit context
- Evolve in interaction with changing context: journey/story
- I.e. efficacy guarantee violated by user adaptation of some interventions
- For others: guaranteed failure if you do not adapt
- Or buy installation and a 3-year guarantee

#3 Resolutions: generalisable effectiveness research

R1: Maintain paradigm: "pragmatic trials"

- Minimise loss of attribution with time series, stepped wedge, SPC (but these increase cost and time)
- Some √ for routine practice feedback
- Generalisable to similar situations and interventions
- Add more situations and variations of the intervention
- Compare many pragmatic trials and assess what works best where
- Invite trials in X situations?
- Improve reporting (standardise and detail)
- -ve: no answer to why? Explanation helps adapt, and contributes to science
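SPC, named above as one way to limit loss of attribution, can be sketched with an individuals (XmR) control chart: limits are set from a baseline period, and later points outside them are treated as signals of change. This is a minimal sketch assuming that chart type and the conventional 2.66 multiplier of the mean moving range; the slides do not specify a chart, and the function names and monthly counts are hypothetical.

```python
from statistics import mean


def xmr_limits(baseline: list[float]) -> tuple[float, float, float]:
    """Return (lower limit, centre line, upper limit) for an XmR chart."""
    if len(baseline) < 2:
        raise ValueError("need at least two baseline points")
    centre = mean(baseline)
    # Moving range: absolute difference between consecutive points.
    moving_ranges = [abs(b - a) for a, b in zip(baseline, baseline[1:])]
    spread = 2.66 * mean(moving_ranges)
    return centre - spread, centre, centre + spread


def out_of_control(points: list[float], baseline: list[float]) -> list[int]:
    """Indices of points that fall outside the baseline control limits."""
    lower, _, upper = xmr_limits(baseline)
    return [i for i, value in enumerate(points) if value < lower or value > upper]


# Hypothetical monthly adverse-event counts (illustrative only).
before_intervention = [10, 12, 11, 13, 12, 11, 12, 10]
after_intervention = [9, 8, 7, 6, 7]

lower, centre, upper = xmr_limits(before_intervention)
print(f"limits: {lower:.2f} to {upper:.2f}, centre {centre:.2f}")
print("signal at post-intervention months:",
      out_of_control(after_intervention, before_intervention))
```

A single point outside the limits is only one of several commonly used signal rules; runs of points on one side of the centre line are another, and are not implemented here.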

#3 Resolutions: context-sensitive generalisable effectiveness research

R2: Case study research

- √ Describes intervention as it evolves & context helpers and hinderers
- √ Assesses intermediate changes
- √ Links these to ultimate patient/cost outcomes, if possible
- Multiple case study in selected situations (e.g. Dopson 2002)

NEXT: What we have learned in doing this research

What we have learned in doing this research

The research: 12 action evaluation case studies of innovation implementation in Swedish health care, and a variety of "research into practice" implementation and change studies

L2: Distinguish

- The seed:
  - Safer clinical practices: changed provider behaviour = reduced adverse events?
  - Safer organisation and processes: support changes in provider behaviour and address latent causes
  - (Is a MET/RRT a safe clinical practice or a "safer organisation or process" change, or both?)
- Planting: implementation actions to achieve the above, at team, organisation, system and national levels
- Soil & climate: external context helpers and hinderers

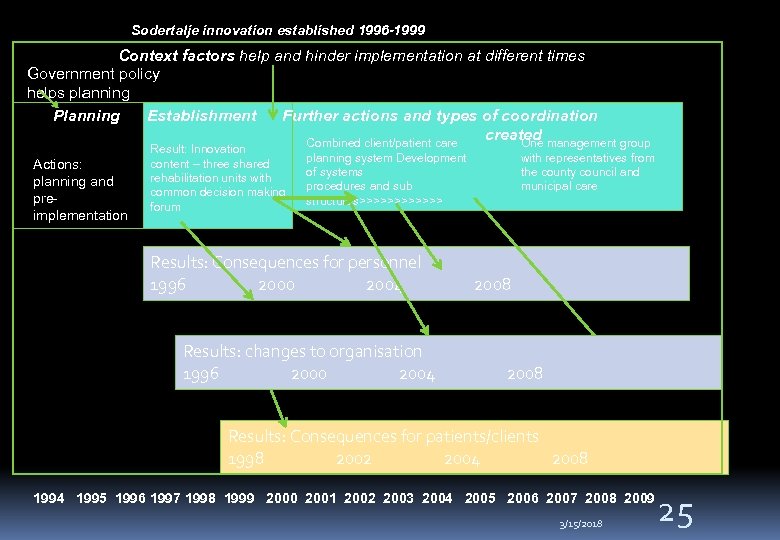

Figure: Sodertalje innovation, established 1996-1999. Context factors help and hinder implementation at different times (e.g. government policy helps planning). Timeline 1994-2009 showing phases (planning; establishment; further actions and types of coordination), actions (planning and pre-implementation; development of systems, procedures and sub-structures) and results: innovation content (three shared rehabilitation units with a common decision-making forum and planning system; a management group with representatives from the county council and municipal care; combined client/patient care), changes to organisation, consequences for personnel, and consequences for patients/clients.

L3: Theory essential: of intervention pathway to outcomes

- To decide which data to gather
- To provide explanations to test
- To give implementers, to help them adapt

(Program theory: Weiss 1972, 1997; Rog & Fournier 1997. Logic model: Wholey 1979. Theory-driven evaluation: Chen 1990; Sidani & Braden 1998. Realist evaluation: Henry et al 1998; Pawson & Tilley 1997. Theories: Grol et al 2007)

L4: Action evaluation learning cycle

- Feedback findings during implementation: + and – for science
- Assess effect of researcher on implementation and results
- Helps develop intervention during the implementation journey
- Increases cooperation and access to data
- Partnership, but distinct roles
- Study how implementers use knowledge and help them use more

#4 Challenge: use, faster and wider

- Demand? Real men don't need research
- Supply? Real researchers don't write exec summaries; make sure it is unusable, with "throw over the fence" delivery
- Closing the research/practice gap

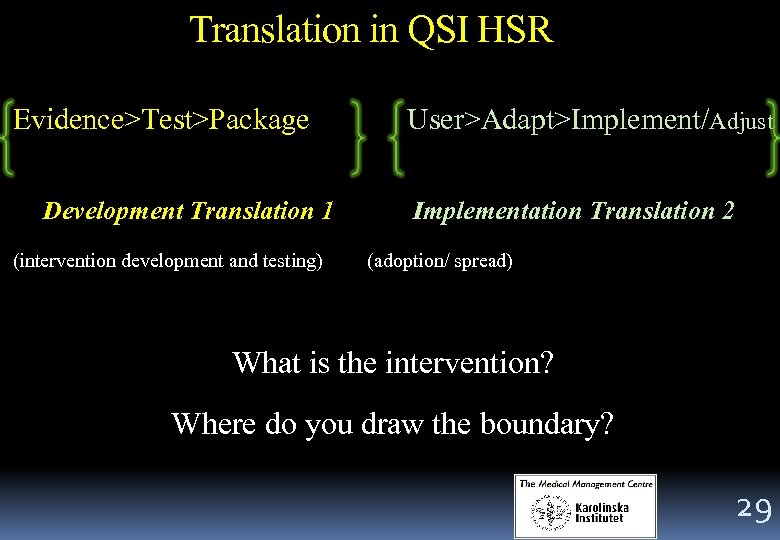

Translation in QSI HSR

- Development: Evidence > Test > Package. Translation 1 (intervention development and testing)
- Implementation: User > Adapt > Implement/Adjust. Translation 2 (adoption/spread)

What is the intervention? Where do you draw the boundary?

#4 Resolutions: our experience

- Use KT/KM literature: what works?
- Content: accessibility and relevance
  - Service implications; many examples; 3:20:Appx reports; ghost writers and mediator authors
  - Engage emotionally: patient describes experience, or video
- Process: interact with users at each stage
- Structure: forums, networks, joint appointments, brokers

Summary

| Challenge | Resolutions |
| --- | --- |
| Decision makers' information needs | Their hierarchy of evidence |
| Proof of efficacy | RCT/CT priceless... For all else, strengthen observational studies; parallel process evaluation; reporting |
| Effectiveness research for generalisation | Pragmatic trials (variations); case study; theory-based research; action evaluation learning cycle |
| Faster, wider use | Content; process; structure; culture? Silos? |

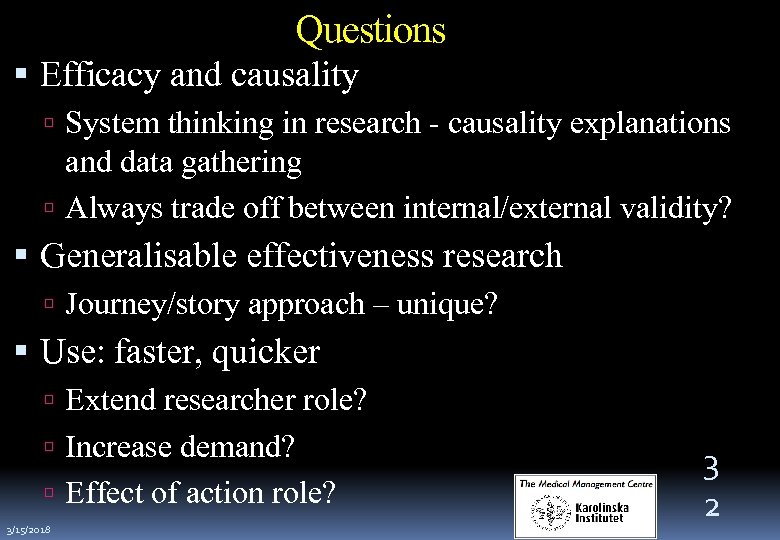

Questions

- Efficacy and causality
  - System thinking in research: causality explanations and data gathering
  - Always a trade-off between internal/external validity?
- Generalisable effectiveness research
  - Journey/story approach: unique?
- Use: faster, wider
  - Extend researcher role? Increase demand? Effect of action role?
