
- Number of slides: 42

Automating the Committee Meeting: Intelligent Integration of Information From Diverse Sources
Pedrito Maynard-Zhang
Department of Computer Science & Systems Analysis


Information Integration
Information integration is ubiquitous:
• Committee meetings
• Research papers
• Information retrieval on the web
• Assessing intelligence on the battlefield
• …


Information Integration


Outline
• Introduction
• Automating Information Integration
  – Database Integration
  – Model Integration
  – Conflict Resolution and Meta-Information
• Integrating Learned Probabilistic Information
• Conclusion and Current Work


Multi-Disciplinary Research
• Databases (e.g., Halevy’s group at U. of Washington)
• Artificial Intelligence (e.g., Stanford’s Knowledge Systems Laboratory)
• Business (e.g., MIT-Sloan’s Aggregators Group)
• Decision Analysis (e.g., Clemen & Winkler’s work at Duke)


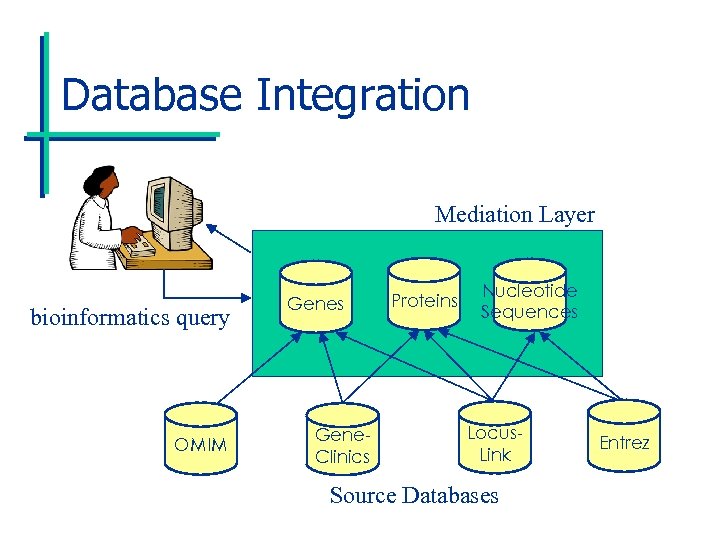

Database Integration
[Diagram labels: bioinformatics query; Mediation Layer; Source Databases; OMIM, Genes, GeneClinics, Proteins, Nucleotide Sequences, LocusLink, Entrez]


Database Integration
• Application: Querying distributed databases
• Examples
  – Bioinformatics
  – Corporate data management
  – Question-answer systems on the web
  – Detecting bioterrorism


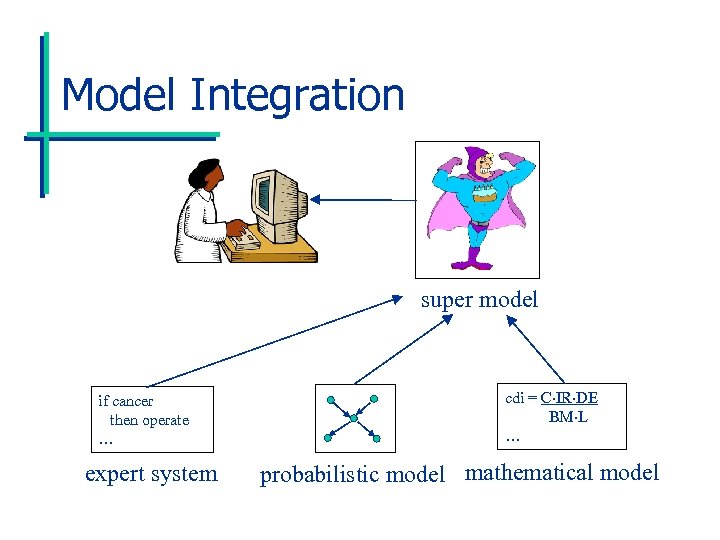

Model Integration
[Diagram labels: super model; expert system (“if cancer then operate …”); probabilistic model; mathematical model; a formula garbled in extraction (“cdi = C IR DE BM L …”)]


Model Integration
• Applications: Diagnosis and prediction
• Examples:
  – Medical diagnosis
  – NASA spacecraft design and diagnosis
  – Expert system integration
  – Combining commonsense knowledge bases


Challenges
• Efficient query processing and optimization
  – Parsing XML
• Defining expressive yet tractable mediator languages
• Handling heterogeneous source languages
  – Wrapper technology development


Challenges
• Resolving ontological differences
  – e.g., realizing that the field “Name” for one source stores the same information as “First Name” and “Last Name” for another.
• Detecting conflicts
• Resolving conflicts
  – Resolution done manually in practice
  – We can automate more!


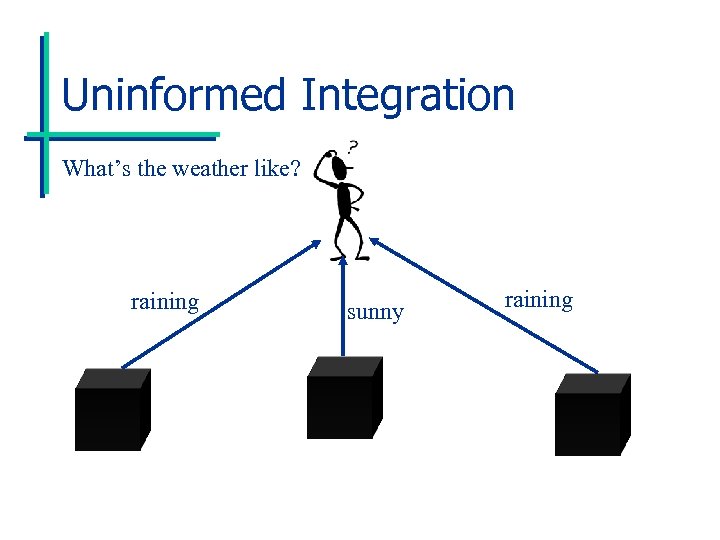

Uninformed Integration
What’s the weather like?
• raining
• sunny
• raining


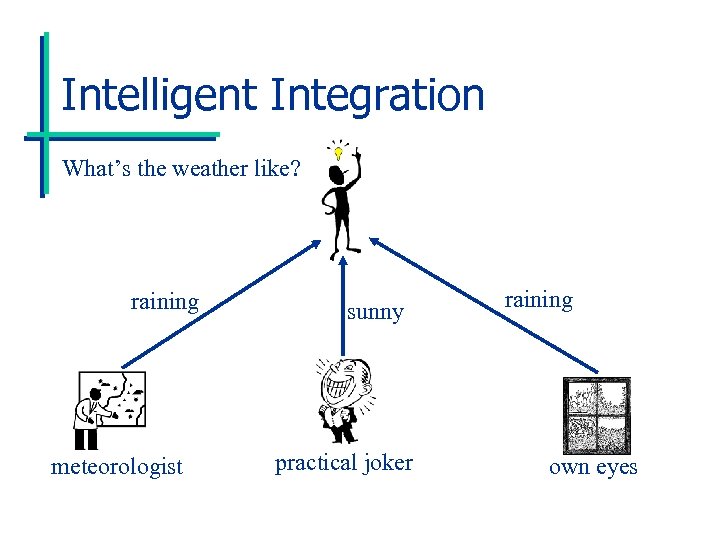

Intelligent Integration
What’s the weather like?
• Meteorologist: raining
• Practical joker: sunny
• Own eyes: raining


Types of Meta-Information
• Credibility, experience, political clout
• Areas of expertise
• How source acquired information:
  – Source’s sources
  – Processes source used to accumulate information
• Structure of the data representation


Outline
• Introduction
• Automating Information Integration
• Integrating Learned Probabilistic Information
  – Medical Scenario
  – Semantic Framework
  – LinOP-Based Aggregation
  – Aggregating Bayesian Networks
  – Experimental Validation
• Conclusion and Current Work


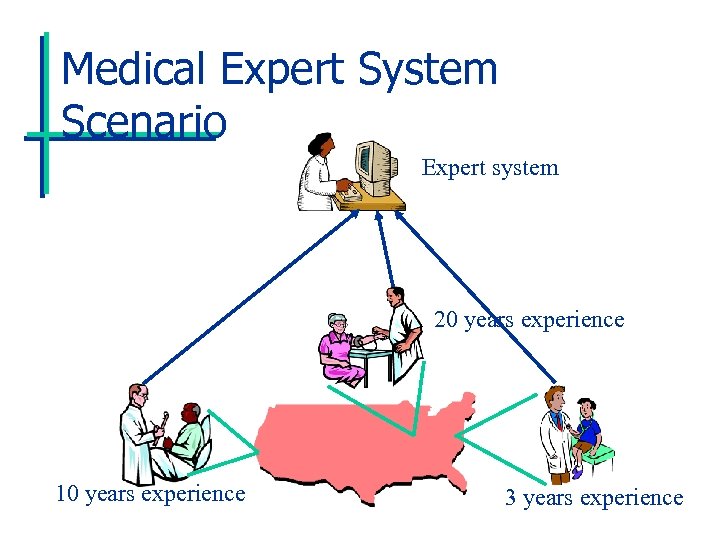

Medical Expert System Scenario
[Diagram: an expert system fed by three sources with 20, 10, and 3 years of experience]


Source Meta-Information
• Doctors learned probabilistic models from patient data using some known standard learning algorithm.
• We know the relative amount of experience doctors have had (i.e., years of practice).


Popular Aggregation Approaches
• Intuition approach: Take simple weighted averages, etc. → unexpected behavior
• Axiomatic approach: Find aggregation algorithm satisfying certain “obvious” properties → impossibility results
• Problem: Not semantically grounded


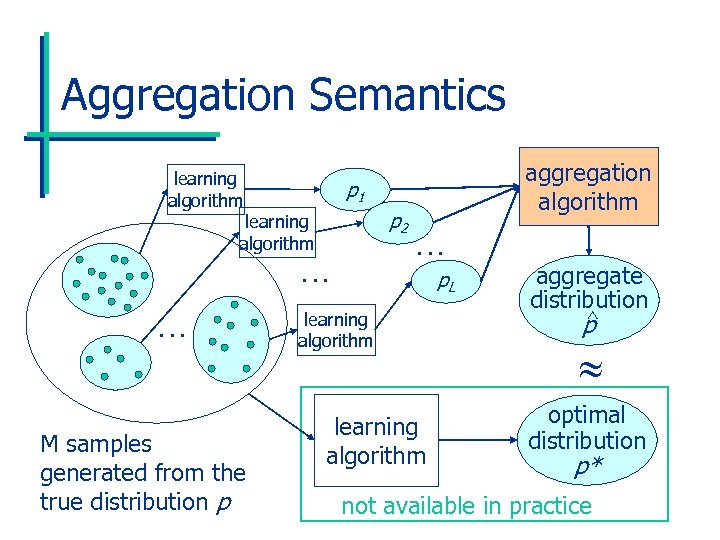

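Aggregation Semantics
[Diagram: M samples are generated from the true distribution $p$. Each of the L sources applies a learning algorithm to its samples to obtain $p_1, p_2, \ldots, p_L$, and an aggregation algorithm combines these into the aggregate distribution $\hat{p}$. The optimal distribution $p^*$, which a learning algorithm would produce from all M samples, is not available in practice.]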


Linear Opinion Pool (LinOP)
• LinOP: Weighted sum of joint distributions.
• Precisely, for joint distributions $p_i$ and joint variable instantiation $w$, $\mathrm{LinOP}(p_1, p_2, \ldots, p_L)(w) = \sum_i \alpha_i\, p_i(w)$.
• $\alpha_i$ weights: relative experience.
• Satisfies unanimity, non-dictatorship, and marginalization.
• Doesn’t preserve shared independences.
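
A minimal sketch of the pool, assuming each joint distribution is stored as a plain dictionary from instantiations to probabilities. The function name `linop` and the two example sources are hypothetical, not taken from the talk. The example also illustrates the last bullet: both sources treat X and Y as independent, yet the pooled distribution does not.

```python
def linop(distributions, weights):
    """Linear opinion pool: weighted sum of joint distributions.

    distributions: list of dicts mapping a joint instantiation w to p_i(w)
    weights: non-negative weights (normalized here so they sum to 1)
    """
    total = float(sum(weights))
    alphas = [a / total for a in weights]
    support = set().union(*distributions)
    return {
        w: sum(a * p.get(w, 0.0) for a, p in zip(alphas, distributions))
        for w in support
    }


# Two hypothetical sources over binary variables (X, Y).
# In each source X and Y are independent: P(x, y) = P(x) * P(y).
p1 = {(0, 0): 0.25, (0, 1): 0.25, (1, 0): 0.25, (1, 1): 0.25}  # P(X=1) = P(Y=1) = 0.5
p2 = {(0, 0): 0.81, (0, 1): 0.09, (1, 0): 0.09, (1, 1): 0.01}  # P(X=1) = P(Y=1) = 0.1

pooled = linop([p1, p2], weights=[1, 1])

# Marginals of the pooled distribution.
px1 = pooled[(1, 0)] + pooled[(1, 1)]
py1 = pooled[(0, 1)] + pooled[(1, 1)]

# Independence would require pooled[(1, 1)] == px1 * py1, which fails here.
independence_gap = pooled[(1, 1)] - px1 * py1
```

Pooling a distribution with itself returns the same distribution, which is the unanimity property in its simplest form.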


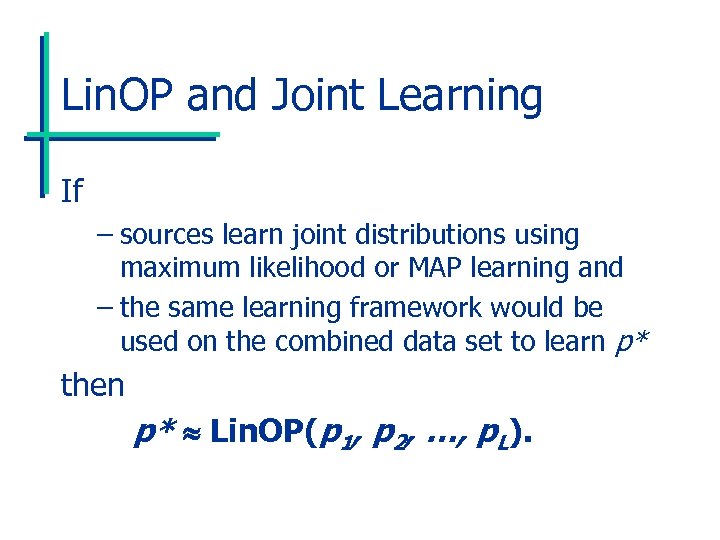

LinOP and Joint Learning
If
– sources learn joint distributions using maximum likelihood or MAP learning and
– the same learning framework would be used on the combined data set to learn $p^*$
then $p^* \approx \mathrm{LinOP}(p_1, p_2, \ldots, p_L)$.
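
The maximum-likelihood case can be checked numerically. The sketch below assumes the simplest setting: each source estimates a full joint table by relative frequencies, and the pooling weights are each source's share of the combined data. Under those assumptions the pool reproduces the estimate from the combined data exactly. The data set, the split sizes, and the helper `ml_joint` are hypothetical; the MAP case is not covered here.

```python
import random
from collections import Counter


def ml_joint(samples):
    """Maximum-likelihood joint distribution: relative frequencies."""
    counts = Counter(samples)
    n = len(samples)
    return {w: c / n for w, c in counts.items()}


random.seed(0)
outcomes = [(x, y) for x in (0, 1) for y in (0, 1)]

# Hypothetical combined data set, split unevenly among three sources.
data = [random.choice(outcomes) for _ in range(60)]
splits = [data[:30], data[30:50], data[50:]]

M = len(data)
sources = [ml_joint(d) for d in splits]
weights = [len(d) / M for d in splits]  # relative amount of data seen

pooled = {
    w: sum(a * p.get(w, 0.0) for a, p in zip(weights, sources))
    for w in outcomes
}
p_star = ml_joint(data)  # what joint learning on the combined data would give

max_gap = max(abs(pooled[w] - p_star.get(w, 0.0)) for w in outcomes)
```

Here `max_gap` is zero up to floating-point rounding, because the weighted sum of per-source frequencies is just the overall frequency.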


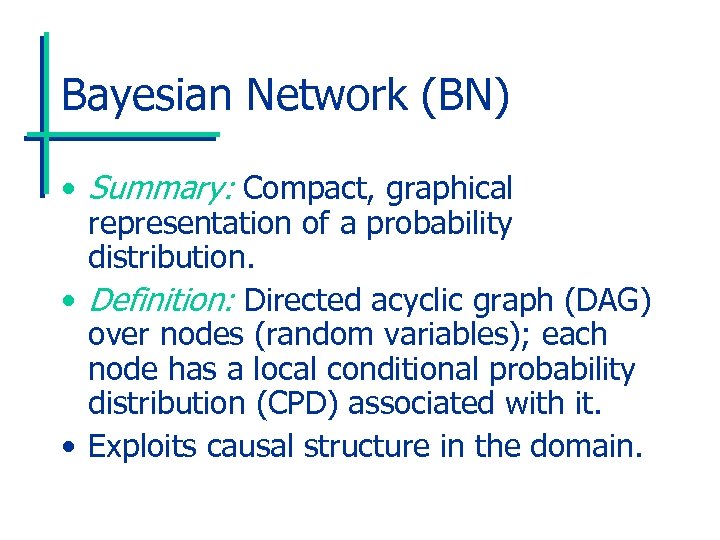

Bayesian Network (BN)
• Summary: Compact, graphical representation of a probability distribution.
• Definition: Directed acyclic graph (DAG) over nodes (random variables); each node has a local conditional probability distribution (CPD) associated with it.
• Exploits causal structure in the domain.


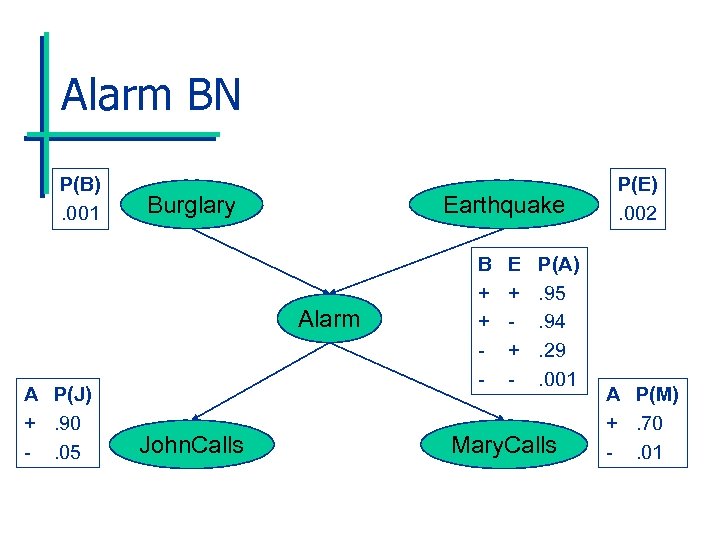

Alarm BN
Nodes: Burglary (B), Earthquake (E), Alarm (A), JohnCalls (J), MaryCalls (M)

P(B) = .001
P(E) = .002

B  E  P(A)
+  +  .95
+  -  .94
-  +  .29
-  -  .001

A  P(J)
+  .90
-  .05

A  P(M)
+  .70
-  .01
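
As a sketch of how these tables define a joint distribution, the code below multiplies the local CPDs along the usual alarm-network structure (Burglary and Earthquake as parents of Alarm, Alarm as parent of both calls). The structure and the row order of the P(A) table are assumptions read off the slide's tables; the helper names are hypothetical.

```python
from itertools import product

# CPD entries as listed on the slide (probability that the variable is true).
P_B = 0.001
P_E = 0.002
P_A = {  # keyed by (burglary, earthquake)
    (True, True): 0.95,
    (True, False): 0.94,
    (False, True): 0.29,
    (False, False): 0.001,
}
P_J = {True: 0.90, False: 0.05}  # keyed by alarm
P_M = {True: 0.70, False: 0.01}  # keyed by alarm


def bern(p_true, value):
    """Probability of a boolean value given the probability of True."""
    return p_true if value else 1.0 - p_true


def joint(b, e, a, j, m):
    """P(B=b, E=e, A=a, J=j, M=m) as the product of the local CPDs."""
    return (
        bern(P_B, b)
        * bern(P_E, e)
        * bern(P_A[(b, e)], a)
        * bern(P_J[a], j)
        * bern(P_M[a], m)
    )


# Both neighbors call and the alarm rings, with no burglary or earthquake.
p_example = joint(b=False, e=False, a=True, j=True, m=True)  # roughly 0.000628

# The 32 joint entries sum to 1, although only 10 numbers were specified.
total = sum(joint(*values) for values in product([True, False], repeat=5))
```

Ten parameters stand in for the 31 free entries of the full joint table, which is the compactness the next slide refers to.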


BN Advantages
• Compact representation and graph encodes conditional independences.
• Elicitation easy in practice.
• Inference efficient in practice.
• Can be learned from data.
• Deployed successfully – medical diagnosis, Microsoft Office, NASA Mission Control, and more.


BN Learning
• Idea: Select BN most likely to have generated data.
• Standard algorithm:
  – Search over structures by adding, deleting, and reversing edges.
  – Parameterize and score structures using statistics from the data.
  – Penalize complex structures.
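
The scoring and penalty steps can be sketched as follows. The slide does not name a scoring function, so this example assumes a BIC-style score (log-likelihood under maximum-likelihood parameters minus a complexity penalty) over binary variables; the search over edge additions, deletions, and reversals is omitted. The function `bic_score` and the two toy data sets are hypothetical.

```python
import math
from collections import Counter


def bic_score(data, parents):
    """BIC-style score of a BN structure over binary variables.

    data: list of dicts {variable: 0 or 1}
    parents: dict {variable: tuple of parent variables} describing the DAG
    """
    m = len(data)
    score = 0.0
    for var, pa in parents.items():
        # Statistics from the data: family counts N(x, pa) and parent counts N(pa).
        family = Counter((tuple(row[p] for p in pa), row[var]) for row in data)
        pa_counts = Counter(tuple(row[p] for p in pa) for row in data)
        # Log-likelihood with ML parameters theta(x | pa) = N(x, pa) / N(pa).
        for (pa_val, _x), n in family.items():
            score += n * math.log(n / pa_counts[pa_val])
        # Penalty: one free parameter per parent configuration of a binary node.
        score -= 0.5 * math.log(m) * (2 ** len(pa))
    return score


with_edge = {"X": (), "Y": ("X",)}
no_edge = {"X": (), "Y": ()}

# Y always equals X: the edge pays for itself.
correlated = [{"X": v, "Y": v} for v in (0, 1) for _ in range(20)]
# X and Y are unrelated: the extra parameters are penalized.
independent = [
    {"X": x, "Y": y} for x in (0, 1) for y in (0, 1) for _ in range(10)
]

edge_wins = bic_score(correlated, with_edge) > bic_score(correlated, no_edge)
no_edge_wins = bic_score(independent, no_edge) > bic_score(independent, with_edge)
```

Both flags come out true: the denser structure is preferred only when the data supports the dependence.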


Aggregating BNs
• Each source $i$ learns BN $p_i$.
• $p^*$ is the BN we would learn from the combined data set.
• We want to approximate $p^*$ as closely as possible by aggregating $p_1, \ldots, p_L$.
• Source information: estimates for the relative experience of the sources and the total amount of data seen (M).


AGGR: BN Aggregation Algorithm
• Idea: Use BN learning algorithm.
• Problem: We don’t have data.
• Key observation: We can use LinOP to approximate the statistics needed for the parameterization and scoring steps!
• Also, we can use LinOP properties to make algorithm reasonably efficient.
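
One way to read the key observation is sketched below; it is an illustration under stated assumptions, not the AGGR algorithm itself. For a node and its candidate parents, each source's BN supplies a marginal over that family (assumed here to be precomputed by inference and given as a dictionary). Pooling those marginals and scaling by the estimate of M yields approximate counts, which can then parameterize or score a structure as if data were available. All names and numbers are hypothetical.

```python
def pooled_family_counts(family_marginals, weights, m_estimate):
    """Approximate counts N(pa, x) as M times the pooled family marginal."""
    total = float(sum(weights))
    keys = set().union(*family_marginals)
    return {
        key: m_estimate
        * sum((a / total) * p.get(key, 0.0) for a, p in zip(weights, family_marginals))
        for key in keys
    }


def cpd_from_counts(counts):
    """theta(x | pa) = N(pa, x) / N(pa); keys are (parent value, child value)."""
    parent_totals = {}
    for (pa, _x), n in counts.items():
        parent_totals[pa] = parent_totals.get(pa, 0.0) + n
    return {(pa, x): n / parent_totals[pa] for (pa, x), n in counts.items()}


# Hypothetical marginals over (Smoking, LungCancer) from two doctors' models.
doctor_a = {(1, 1): 0.05, (1, 0): 0.25, (0, 1): 0.007, (0, 0): 0.693}
doctor_b = {(1, 1): 0.08, (1, 0): 0.32, (0, 1): 0.006, (0, 0): 0.594}

# Weights: years of experience; m_estimate: assumed total number of patients.
counts = pooled_family_counts([doctor_a, doctor_b], weights=[20, 10], m_estimate=3000)
cpd = cpd_from_counts(counts)
```

Because LinOP satisfies marginalization, pooling the family marginals gives the same result as pooling the full joints and then marginalizing, so the full joint never has to be built.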


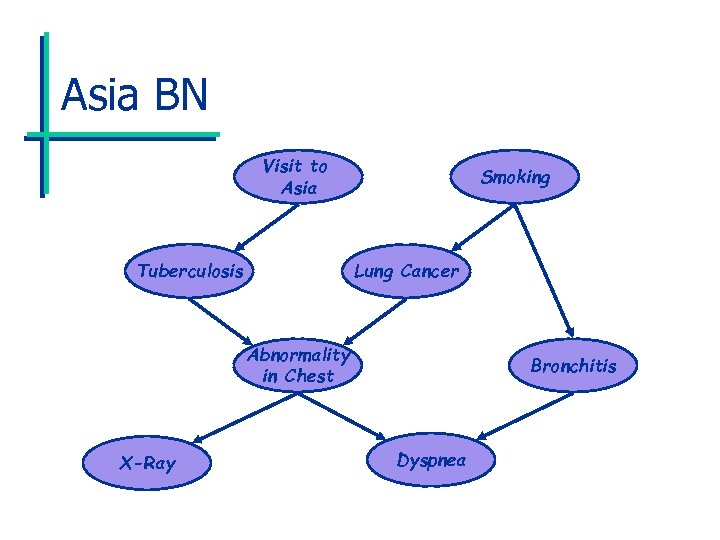

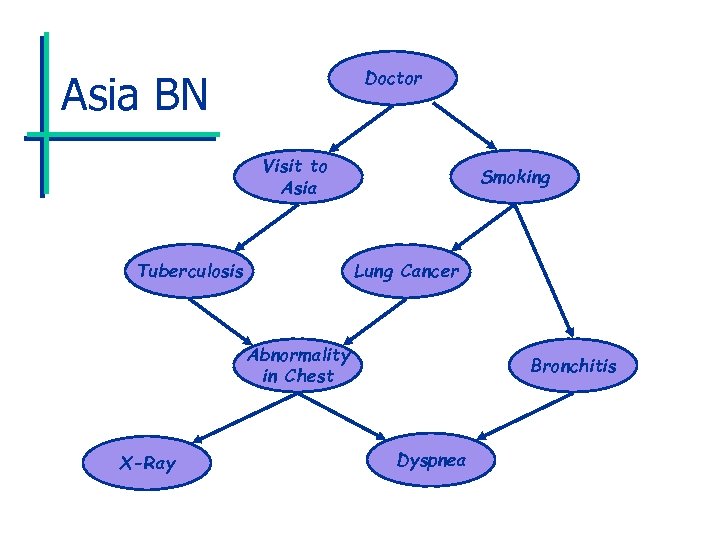

Asia BN
[Diagram nodes: Visit to Asia, Tuberculosis, Smoking, Lung Cancer, Abnormality in Chest, X-Ray, Bronchitis, Dyspnea]


Experimental Setup
• Generate data for sources from the well-known ASIA BN, which relates smoking, visiting Asia, and lung cancer.
• Compare our algorithm AGGR against the optimal algorithm OPT that has access to the combined data set.
• Accuracy measure: KL divergence from generating distribution.
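
For reference, a small sketch of the accuracy measure. The slide does not say which direction of the divergence was used; this example assumes KL(p || q) with p the generating distribution and q the learned or aggregated one, measured in nats. The two distributions are hypothetical.

```python
import math


def kl_divergence(p, q):
    """KL(p || q) = sum_w p(w) * log(p(w) / q(w)), in nats.

    Assumes q(w) > 0 wherever p(w) > 0.
    """
    return sum(pw * math.log(pw / q[w]) for w, pw in p.items() if pw > 0.0)


generating = {"a": 0.5, "b": 0.5}
learned = {"a": 0.9, "b": 0.1}

kl_self = kl_divergence(generating, generating)  # 0.0
kl_learned = kl_divergence(generating, learned)  # about 0.51
```

A smaller value means the learned distribution is closer to the generating one, and the value is zero only when the two agree.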


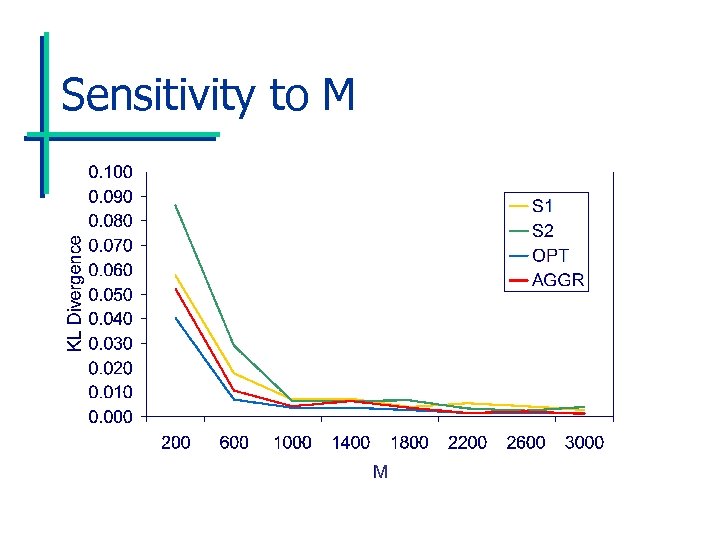

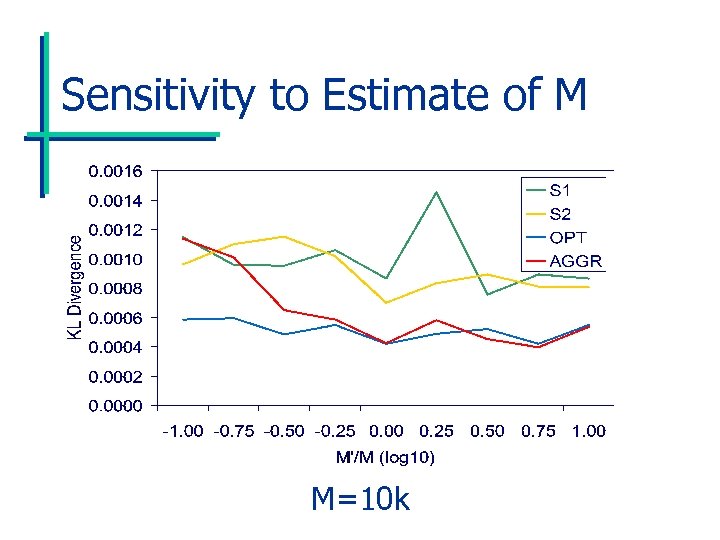

Sensitivity to M Experiments
• Sensitivity to M
  – Size of the combined data set M varies.
  – AGGR’s estimate of M is accurate.
• Sensitivity to Estimate of M
  – Size of the combined data set M is fixed.
  – AGGR’s estimate of M varies.


Sensitivity to M


Sensitivity to Estimate of M
M = 10k


Subpopulations
• Each source’s data may come from a different subpopulation $P(D \mid S_i)$, where $D$ is the data.
• We want to learn $P(D)$.
• $P(D) = \mathrm{LinOP}(P(D \mid S_1), P(D \mid S_2), \ldots, P(D \mid S_L))$ with sources’ weights based on $P(S_i)$.
• We can apply the same algorithm.
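
The LinOP identity on this slide is the law of total probability, written out below under the assumption that the subpopulations $S_1, \ldots, S_L$ are mutually exclusive and together cover the whole population. The weight symbol $\alpha_i$ is notation introduced here for the pooling weights.

```latex
\begin{align*}
P(D) &= \sum_{i=1}^{L} P(D, S_i) \\
     &= \sum_{i=1}^{L} P(S_i)\, P(D \mid S_i) \\
     &= \sum_{i=1}^{L} \alpha_i\, P(D \mid S_i),
        \qquad \alpha_i = P(S_i), \quad \sum_{i=1}^{L} \alpha_i = 1 .
\end{align*}
```

The last line is a weighted sum of the per-source distributions, so the pooling weights are the subpopulation priors rather than years of experience.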


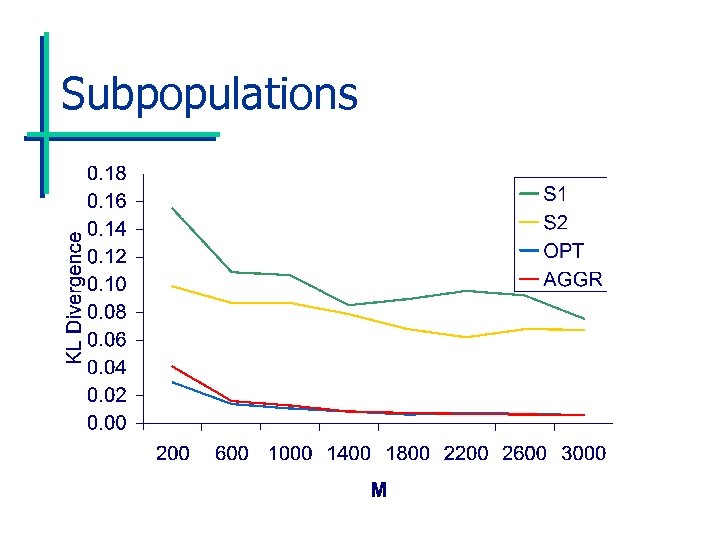

Subpopulations Experiments
• In the Asia network domain, one doctor practices in San Francisco, another in Cincinnati.
• Subpopulations have different priors for smoking and having visited Asia, so doctors’ beliefs are biased.
• The aggregate distribution comes much closer to the original distribution.


Asia BN with Doctor node
[Diagram nodes: Doctor, Visit to Asia, Tuberculosis, Smoking, Lung Cancer, Abnormality in Chest, X-Ray, Bronchitis, Dyspnea]


Subpopulations


Contributions
• A semantic framework for aggregating learned probabilistic models.
• A LinOP-based algorithm for aggregating learned BNs.
• Experiments showing algorithm behaves well.


Outline
• Introduction
• Automating Information Integration
• Integrating Learned Probabilistic Information
• Conclusion and Current Work


Conclusion
• Conflict resolution is key in automated information integration.
• This is a difficult task in general.
• However, information about sources is often readily available.
• Principled use of this information can greatly enhance the ability to resolve conflicts intelligently.


Current Work
• Allow dependence between sources’ data sets in probabilistic aggregation work.
• Apply semantic framework to aggregation in other learning paradigms.
• Explore application of algorithms to database integration, RoboCup, stock market prediction, etc.
• Making committee meetings obsolete!


Multi-Agent Research Zone
• Research interests:
  – Information integration
  – Multi-agent machine learning
  – RoboCup soccer simulation league testbed
• Masters students
  – Jian Xu: Information integration in medical informatics
  – Linxin Gan: Ensemble learning in stock market prediction


CSA Graduate Program
• Masters in Computer Science
• Research areas include:
  – machine learning, KRR, and MAS
  – information retrieval, databases, and NLP
  – networking and virtual environments
  – simulation and evolutionary computation
  – software engineering and formal methods
http://unixgen.muohio.edu/~maynarp/
maynarp@muohio.edu
