- Number of slides: 22

ATLAS DC2

ISGC-2005, Taipei, 27th April 2005

Gilbert Poulard (CERN PH-ATC), on behalf of the ATLAS Data Challenges, Grid and Operations teams

ISGC-2005 G. Poulard - CERN PH

Overview

- Introduction
  - ATLAS experiment
  - ATLAS Data Challenges program
- ATLAS production system
- Data Challenge 2
  - The 3 Grid flavors (LCG, Grid3 and NorduGrid)
  - ATLAS DC2 production
- Conclusions

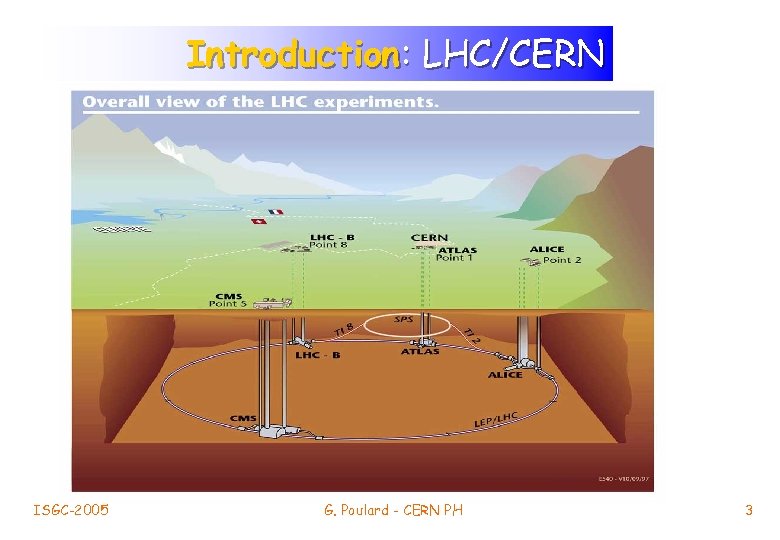

Introduction: LHC/CERN

Figure labels: LHC, CERN, Geneva, Mont Blanc (4810 m)

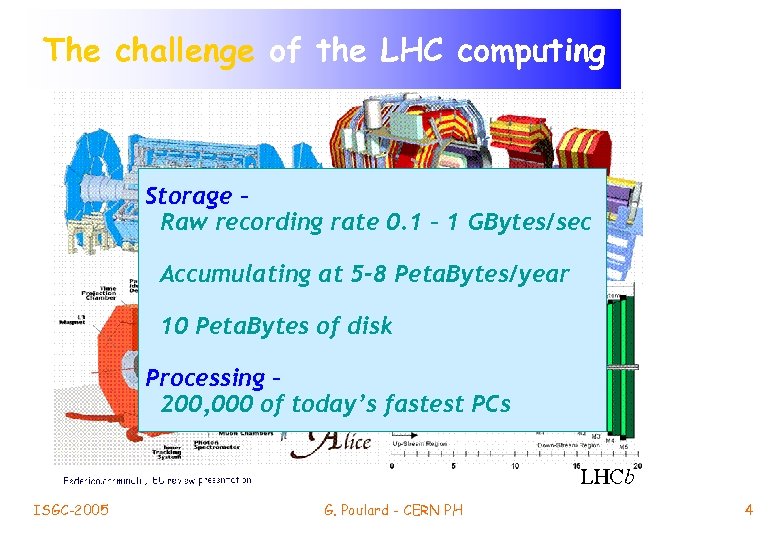

The challenge of the LHC computing

- Storage: raw recording rate 0.1 - 1 GBytes/sec, accumulating at 5-8 PetaBytes/year; 10 PetaBytes of disk
- Processing: 200,000 of today's fastest PCs

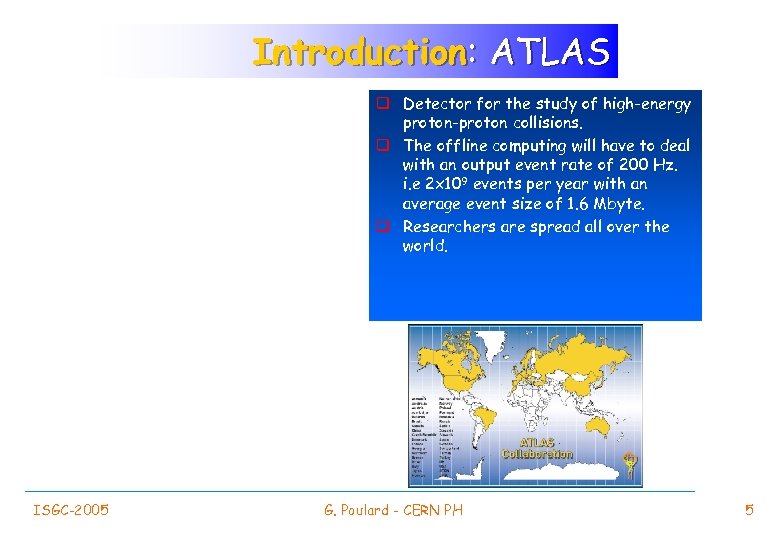

Introduction: ATLAS

- Detector for the study of high-energy proton-proton collisions.
- The offline computing will have to deal with an output event rate of 200 Hz, i.e. 2 x 10^9 events per year, with an average event size of 1.6 MByte.
- Researchers are spread all over the world.
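
The event-rate figures on this slide can be cross-checked with a few lines of arithmetic. The sketch below is illustrative only: the effective data-taking time per year is not stated on the slide and is inferred here from the two quoted numbers, and decimal units (1 MB = 10^6 bytes, 1 PB = 10^15 bytes) are an assumption.

```python
# Cross-check of the ATLAS event-rate figures quoted on the slide.
EVENT_RATE_HZ = 200        # output event rate (from the slide)
EVENTS_PER_YEAR = 2e9      # events per year (from the slide)
EVENT_SIZE_MB = 1.6        # average event size in MB (from the slide)

# Inferred, not stated on the slide: data-taking seconds per year
# implied by the quoted rate and the quoted yearly event count.
live_seconds_per_year = EVENTS_PER_YEAR / EVENT_RATE_HZ

# Assumption: decimal units, 1 MB = 1e6 bytes and 1 PB = 1e15 bytes.
raw_volume_pb = EVENTS_PER_YEAR * EVENT_SIZE_MB * 1e6 / 1e15

# Sustained output throughput while data are being taken.
throughput_mb_s = EVENT_RATE_HZ * EVENT_SIZE_MB

print(f"implied data-taking time: {live_seconds_per_year:.1e} s/year")
print(f"yearly event data volume: {raw_volume_pb:.1f} PB")
print(f"output throughput:        {throughput_mb_s:.0f} MB/s")
```

Under these assumptions the quoted numbers imply about 10^7 seconds of data taking per year, roughly 3.2 PB of event data per year and about 320 MB/s, which lies within the 0.1 - 1 GBytes/sec raw recording rate quoted for LHC computing.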

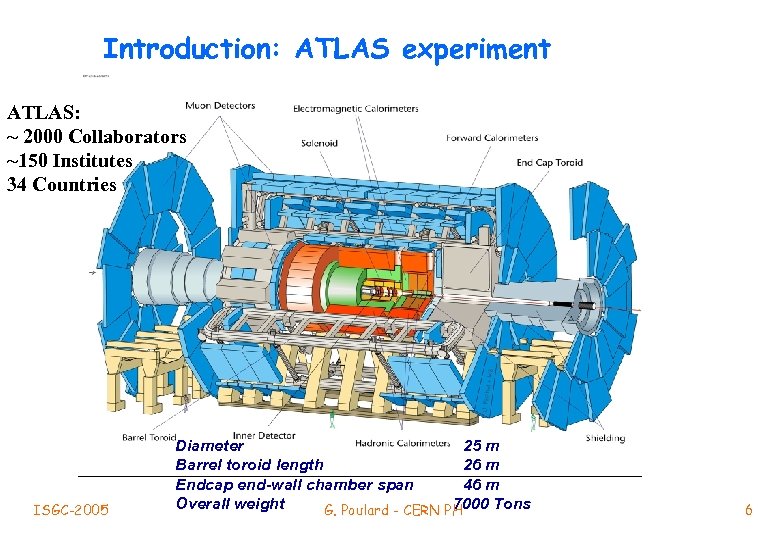

Introduction: ATLAS experiment

- ATLAS: ~2000 collaborators, ~150 institutes, 34 countries
- Diameter: 25 m
- Barrel toroid length: 26 m
- Endcap end-wall chamber span: 46 m
- Overall weight: 7000 tons

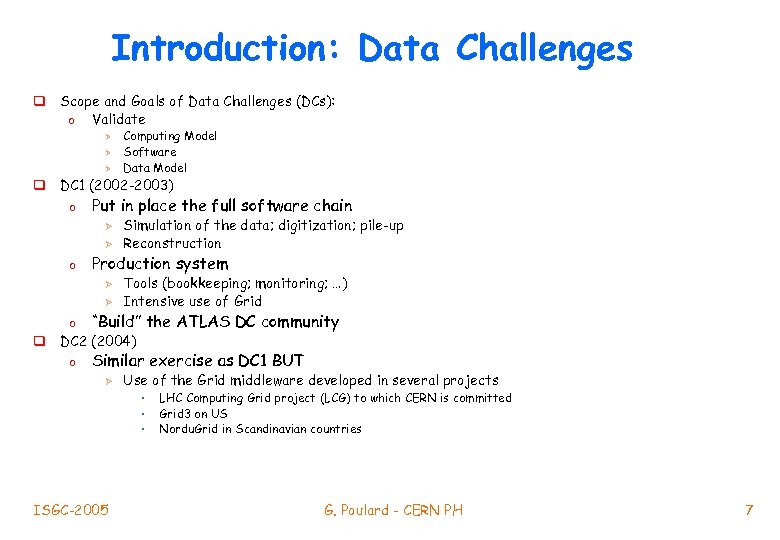

Introduction: Data Challenges

- Scope and goals of Data Challenges (DCs):
  - Validate
    - Computing Model
    - Software
    - Data Model
- DC1 (2002-2003)
  - Put in place the full software chain
    - Simulation of the data; digitization; pile-up
    - Reconstruction
  - Production system
    - Tools (bookkeeping; monitoring; …)
    - Intensive use of Grid
  - "Build" the ATLAS DC community
- DC2 (2004)
  - Similar exercise to DC1, BUT
    - Use of the Grid middleware developed in several projects
      - LHC Computing Grid project (LCG), to which CERN is committed
      - Grid3 in the US
      - NorduGrid in Scandinavian countries

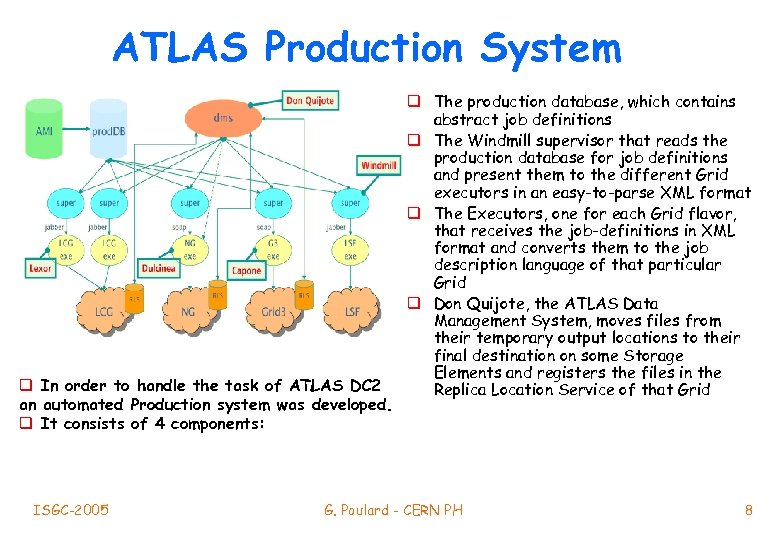

ATLAS Production System

- In order to handle the task of ATLAS DC2, an automated production system was developed.
- It consists of 4 components:
  - The production database, which contains abstract job definitions
  - The Windmill supervisor, which reads the production database for job definitions and presents them to the different Grid executors in an easy-to-parse XML format
  - The Executors, one for each Grid flavor, which receive the job definitions in XML format and convert them to the job description language of that particular Grid
  - Don Quijote, the ATLAS Data Management System, which moves files from their temporary output locations to their final destination on some Storage Elements and registers the files in the Replica Location Service of that Grid
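
The supervisor/executor split described on this slide can be sketched in a few lines. This is a toy illustration, not the actual Windmill or executor code: the job fields, the XML layout, the function names and the two output formats (`flavor_a`, `flavor_b`) are all hypothetical stand-ins, and the output formats are deliberately not the real job description languages of LCG, Grid3 or NorduGrid. Don Quijote's file movement and registration step is not modelled.

```python
import xml.etree.ElementTree as ET

# Hypothetical abstract job definition, standing in for a production-database row.
JOB = {
    "id": "42",
    "transformation": "simulate",
    "input": "evgen.in",
    "output": "simul.out",
}


def supervisor_to_xml(job):
    """Supervisor side: serialize an abstract job definition to XML (toy schema)."""
    root = ET.Element("job", id=job["id"])
    for key in ("transformation", "input", "output"):
        ET.SubElement(root, key).text = job[key]
    return ET.tostring(root, encoding="unicode")


def to_key_value(fields):
    """Toy format A: one 'key = "value";' line per field."""
    return "\n".join(f'{k} = "{v}";' for k, v in fields.items())


def to_paren_list(fields):
    """Toy format B: a run of '(key=value)' groups."""
    return "".join(f"({k}={v})" for k, v in fields.items())


# One translator per Grid flavor; names and formats are illustrative only.
EXECUTORS = {"flavor_a": to_key_value, "flavor_b": to_paren_list}


def executor_translate(xml_text, flavor):
    """Executor side: parse the XML and emit a flavor-specific job description."""
    root = ET.fromstring(xml_text)
    fields = {child.tag: child.text for child in root}
    return EXECUTORS[flavor](fields)


if __name__ == "__main__":
    xml_text = supervisor_to_xml(JOB)
    print(xml_text)
    for flavor in EXECUTORS:
        print(f"--- {flavor} ---")
        print(executor_translate(xml_text, flavor))
```

The point of the design is that the supervisor only needs to know the common XML format, so supporting another Grid flavor means adding one translator rather than changing the supervisor or the job definitions.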

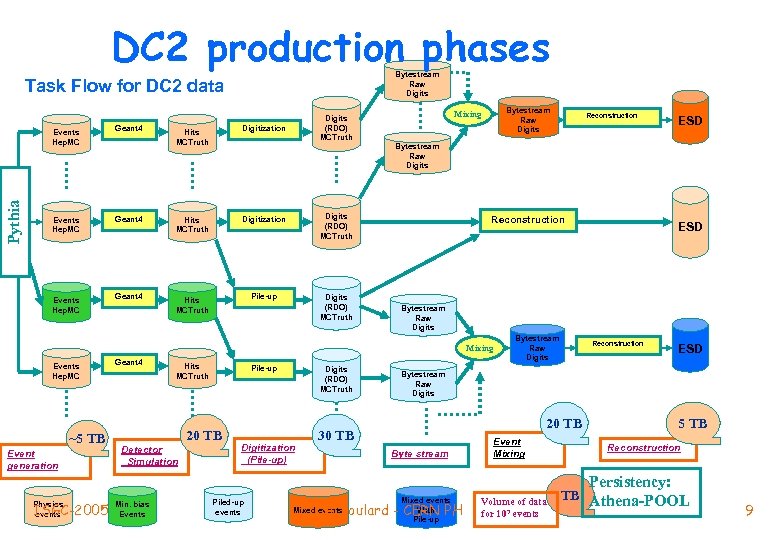

DC2 production phases: task flow for DC2 data

- Event generation (Pythia): Events (HepMC)
- Detector simulation (Geant4): Hits, MCTruth
- Digitization (Pile-up): Digits (RDO), MCTruth
- Event mixing: Bytestream Raw Digits
- Reconstruction: ESD
- Sample labels in the figure: physics events, min. bias events, piled-up events, mixed events, mixed events with pile-up
- Volume of data for 10^7 events (figure labels, in order of appearance): ~5 TB, 20 TB, 20 TB, 30 TB, 5 TB
- Persistency: Athena-POOL

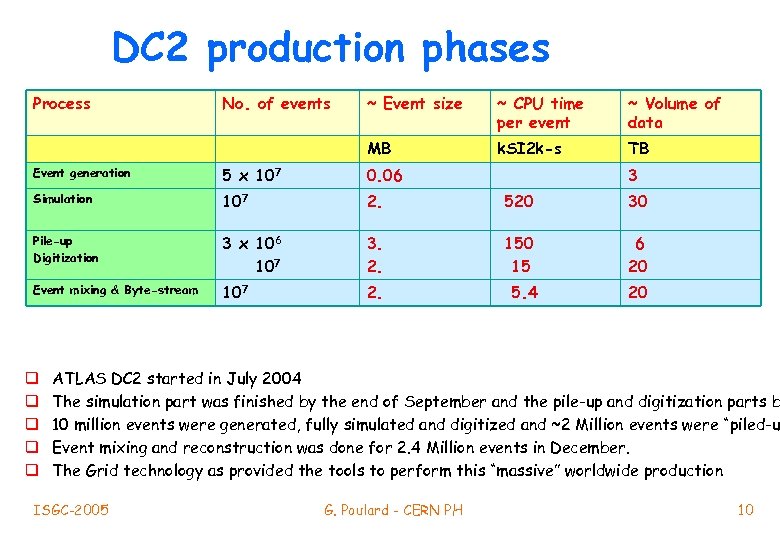

DC2 production phases

| Process | No. of events | ~ Event size (MB) | ~ CPU time per event (kSI2k-s) | ~ Volume of data (TB) |
|---|---|---|---|---|
| Event generation | 5 x 10^7 | 0.06 | | 3 |
| Simulation | 10^7 | 2. | 520 | 30 |
| Pile-up | 3 x 10^6 | 3. | 150 | 6 |
| Digitization | 10^7 | 2. | 15 | 20 |
| Event mixing & Byte-stream | 10^7 | 2. | 5.4 | 20 |

- ATLAS DC2 started in July 2004
- The simulation part was finished by the end of September and the pile-up and digitization parts b…
- 10 million events were generated, fully simulated and digitized, and ~2 million events were "piled-up" …
- Event mixing and reconstruction were done for 2.4 million events in December.
- The Grid technology has provided the tools to perform this "massive" worldwide production

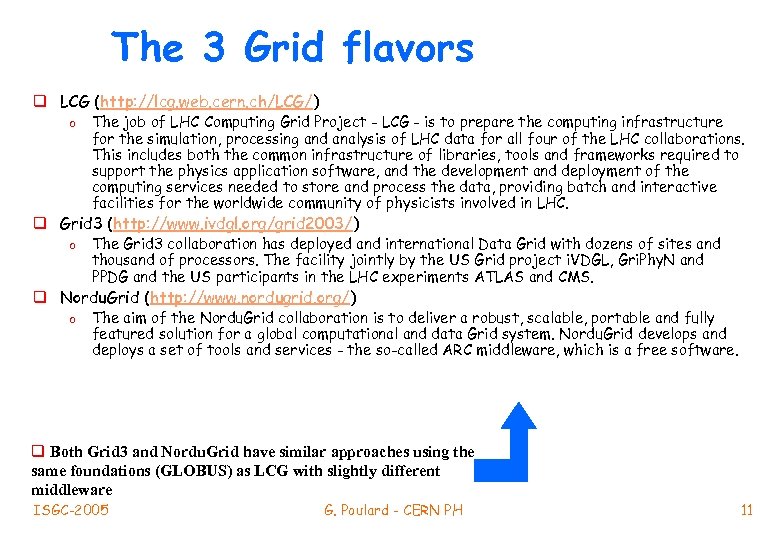

The 3 Grid flavors

- LCG (http://lcg.web.cern.ch/LCG/)
  - The job of the LHC Computing Grid Project (LCG) is to prepare the computing infrastructure for the simulation, processing and analysis of LHC data for all four of the LHC collaborations. This includes both the common infrastructure of libraries, tools and frameworks required to support the physics application software, and the development and deployment of the computing services needed to store and process the data, providing batch and interactive facilities for the worldwide community of physicists involved in LHC.
- Grid3 (http://www.ivdgl.org/grid2003/)
  - The Grid3 collaboration has deployed an international Data Grid with dozens of sites and thousands of processors. The facility is operated jointly by the US Grid projects iVDGL, GriPhyN and PPDG and the US participants in the LHC experiments ATLAS and CMS.
- NorduGrid (http://www.nordugrid.org/)
  - The aim of the NorduGrid collaboration is to deliver a robust, scalable, portable and fully featured solution for a global computational and data Grid system. NorduGrid develops and deploys a set of tools and services, the so-called ARC middleware, which is free software.
- Both Grid3 and NorduGrid have similar approaches, using the same foundations (GLOBUS) as LCG with slightly different middleware

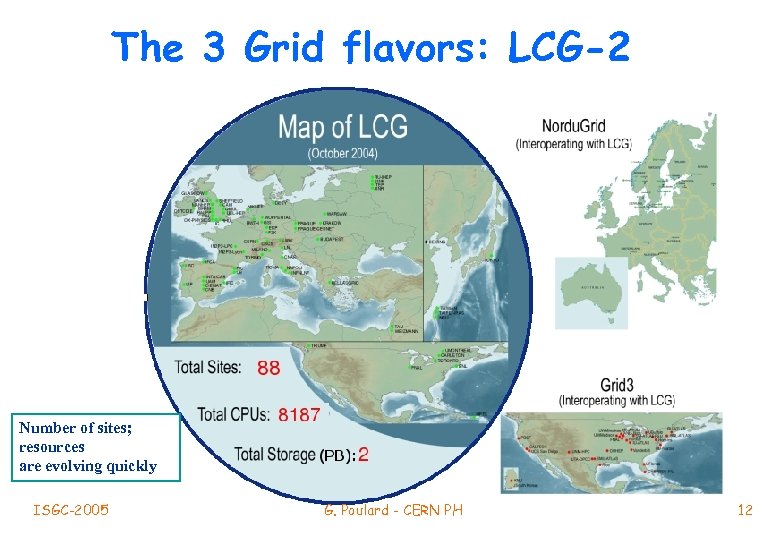

The 3 Grid flavors: LCG-2

- Number of sites; resources are evolving quickly

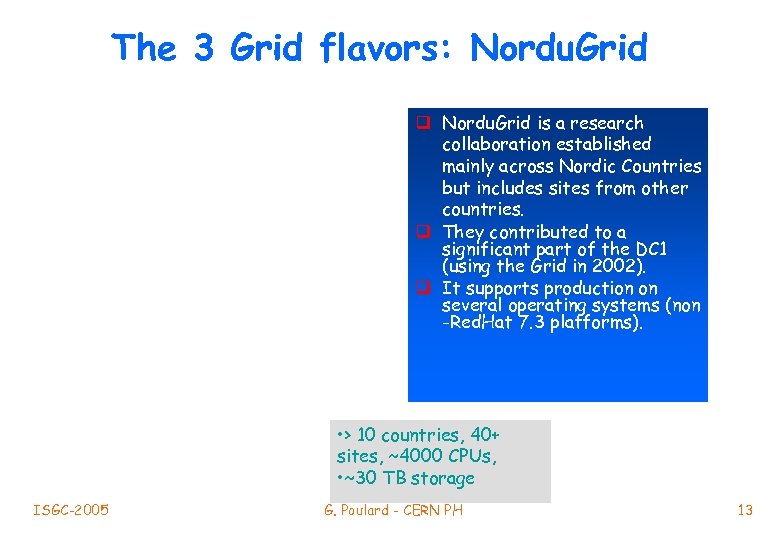

The 3 Grid flavors: NorduGrid

- NorduGrid is a research collaboration established mainly across Nordic countries, but it includes sites from other countries.
- It contributed a significant part of DC1 (using the Grid in 2002).
- It supports production on several operating systems (non-RedHat 7.3 platforms).
- > 10 countries, 40+ sites, ~4000 CPUs, ~30 TB storage

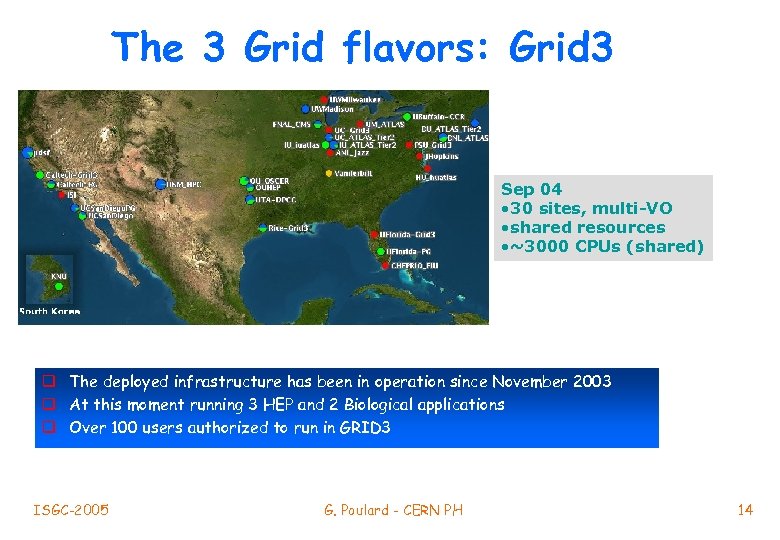

The 3 Grid flavors: Grid3

- Sep 04: 30 sites, multi-VO; shared resources; ~3000 CPUs (shared)
- The deployed infrastructure has been in operation since November 2003
- At this moment running 3 HEP and 2 biological applications
- Over 100 users authorized to run in Grid3

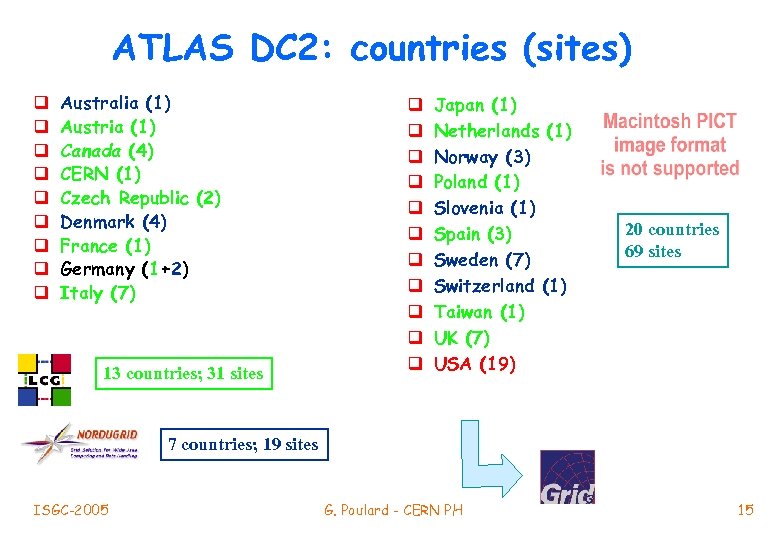

ATLAS DC2: countries (sites)

- Australia (1)
- Austria (1)
- Canada (4)
- CERN (1)
- Czech Republic (2)
- Denmark (4)
- France (1)
- Germany (1+2)
- Italy (7)
- Japan (1)
- Netherlands (1)
- Norway (3)
- Poland (1)
- Slovenia (1)
- Spain (3)
- Sweden (7)
- Switzerland (1)
- Taiwan (1)
- UK (7)
- USA (19)

Total: 20 countries, 69 sites. Subtotals shown on the slide: 13 countries, 31 sites; 7 countries, 19 sites.

ATLAS DC2 production: total

ATLAS DC2 production

ATLAS Production (July 2004 - April 2005)

Figure labels: Rome (mix jobs), DC2 (short jobs period), DC2 (long jobs period)

Jobs: total (as of 30 November 2004)

- 20 countries, 69 sites
- ~260000 jobs
- ~2 MSI2k.months

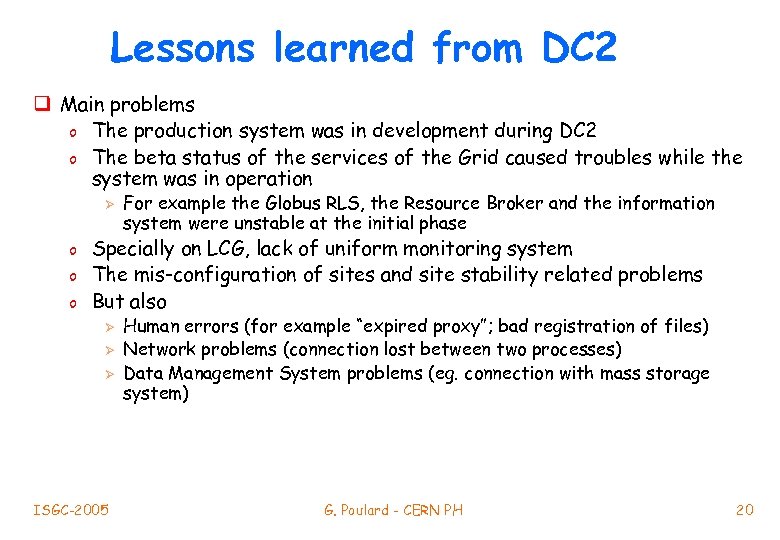

Lessons learned from DC2

- Main problems
  - The production system was in development during DC2
  - The beta status of the Grid services caused trouble while the system was in operation
    - For example, the Globus RLS, the Resource Broker and the information system were unstable in the initial phase
  - Especially on LCG, the lack of a uniform monitoring system
  - The misconfiguration of sites and problems related to site stability
- But also
  - Human errors (for example "expired proxy"; bad registration of files)
  - Network problems (connection lost between two processes)
  - Data Management System problems (e.g. connection with mass storage system)

Lessons learned from DC2

- Main achievements
  - To have run a large-scale production on Grid ONLY, using 3 Grid flavors
  - To have an automatic production system making use of Grid infrastructure
  - A few "10 TB" of data have been moved among the different Grid flavors using DonQuijote (ATLAS Data Management) servers
  - ~260000 jobs were submitted by the production system
  - ~260000 logical files were produced and ~2500 jobs were run per day

Conclusions

- The generation, simulation and digitization of events for ATLAS DC2 have been completed using 3 flavors of Grid technology (LCG; Grid3; NorduGrid)
  - They have been proven to be usable in a coherent way for a real production, and this is a major achievement
- This exercise has taught us that all the involved elements (Grid middleware, production system, deployment and monitoring tools, …) need improvements
- From July to the end of November 2004, the automatic production system submitted ~260000 jobs; they consumed ~2000 kSI2k months of CPU and produced more than 60 TB of data
- If one includes on-going production, one reaches ~700000 jobs, more than 100 TB and ~500 kSI2k.years
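
The CPU figures in the conclusions are quoted in two different units, kSI2k months and kSI2k years. A minimal sketch of the conversion, assuming 12 equal months per year and decimal units (1 TB = 10^12 bytes, 1 MB = 10^6 bytes), puts them on the same scale and gives a rough average output size per job. All inputs are the approximate values quoted in the conclusions, so the results are order-of-magnitude indications only.

```python
MONTHS_PER_YEAR = 12  # assumption: equal-length months

# July to end of November 2004 (approximate values from the conclusions)
dc2_jobs = 260_000
dc2_cpu_ksi2k_months = 2000
dc2_data_tb = 60              # quoted as "more than 60 TB", so a lower bound

# Including on-going production (approximate value from the conclusions)
total_cpu_ksi2k_years = 500

# Convert the DC2 CPU figure to the unit used for the overall total.
dc2_cpu_ksi2k_years = dc2_cpu_ksi2k_months / MONTHS_PER_YEAR
dc2_share = dc2_cpu_ksi2k_years / total_cpu_ksi2k_years

# Assumption: decimal units, 1 TB = 1e12 bytes and 1 MB = 1e6 bytes.
mb_per_job = dc2_data_tb * 1e12 / dc2_jobs / 1e6

print(f"DC2 CPU:            {dc2_cpu_ksi2k_years:.0f} kSI2k years")
print(f"share of the total: {dc2_share:.0%}")
print(f"average output:     at least {mb_per_job:.0f} MB per job")
```

With these inputs, the July to November period accounts for roughly a third of the ~500 kSI2k years quoted for the production as a whole.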
