a47439db324a205c63977d0d70824de8.ppt

- Number of slides: 48

Applying Corpus Based Approaches using Syntactic Patterns and Predicate Argument Relations to Hypernym Recognition for Question Answering

Kieran White and Richard Sutcliffe

Contents
- Motivation
- Objectives
- Experimental Framework
- P-System Classifications
- Larger Evaluation
- Comparison of Three Models
- Conclusions

Motivation

Motivation
- Question answering and the DLT system
- TREC, CLEF and NTCIR
- Four stages to answering a factoid query in a standard question answering system
  - Identification of answer type required
  - Document retrieval
  - Named Entity recognition
  - Answer selection

Motivation
- Example from TREC 2003
  - How long is a quarter in an NBA game?
- Identify type of answer required
  - length_of_time
- Document retrieval
  - Boolean query: quarter AND NBA AND game
  - Relax query if no documents returned
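The document retrieval step above (a Boolean AND query that is relaxed when nothing is returned) might be sketched as follows. The slides do not say how the query is relaxed, so dropping terms one at a time is an assumption here, and the inverted index and document ids are toy data, not the DLT system's own.

```python
from itertools import combinations

def boolean_and(index, terms):
    """Return the ids of documents that contain every term."""
    postings = [index.get(term, set()) for term in terms]
    return set.intersection(*postings) if postings else set()

def retrieve_with_relaxation(index, terms):
    """Try the full conjunction first, then ever smaller subsets of terms.

    Assumed relaxation strategy: drop one term at a time until some
    conjunction returns at least one document.
    """
    for size in range(len(terms), 0, -1):
        hits = set()
        for subset in combinations(terms, size):
            hits |= boolean_and(index, subset)
        if hits:
            return size, hits
    return 0, set()

# Toy inverted index: term -> ids of documents containing it (hypothetical).
index = {
    "quarter": {1, 2},
    "nba": {2, 3},
    "game": {3, 4},
}
size, docs = retrieve_with_relaxation(index, ["quarter", "nba", "game"])
```

In this toy index no document contains all three terms, so the query is relaxed to two-term conjunctions before any documents are returned.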

Motivation
- Example from TREC 2003
- Named Entity recognition
  - Locate instances of length_of_time Named Entities in documents
- Answer selection
  - Select one length_of_time Named Entity (e.g. 12 minutes) using a scoring function

Motivation
- Comparing query terms with those in supporting sentences
- White and Sutcliffe (2004) compared terms in 50 TREC question answering factoid queries from 2003 with those in their supporting sentences
- Morphological relationships
  - Identical terms (e.g. Washington Monument and Washington Monument)
  - Different inflections of terms (e.g. New York and New York's)
  - Terms with different parts of speech (e.g. France and French)

Motivation
- Comparing query terms with those in supporting sentences
- Semantic relationships
  - Synonyms (e.g. orca and killer whale)
  - Terms linked by a causal relationship (e.g. die and typhus)
  - Word chains (e.g. Oscar and best-actress)
  - Hypernyms (e.g. city and Berlin)
  - Hyponyms (e.g. Titanic and ship)
  - Meronyms (e.g. Death Valley and California Desert)
  - Holonyms (e.g. 20th century and 1945)
  - Attributes and units to quantify them (e.g. hot and degrees)
  - Co-occurrence (e.g. old and 18)

Motivation
- Comparing query terms with those in supporting sentences
- Hypernyms / Hyponyms most common semantic relationship type
- TREC Examples
  - What stadium was the first televised MLB game played in?
  - In 1939, the first televised major league baseball games were shown on experimental station W2XBS when the Cincinnati Reds and the Brooklyn Dodgers split a doubleheader at Ebbets Field.

Motivation
- TREC Examples
  - What actress has received the most Oscar nominations?
  - Oscar perennial Meryl Streep is up for best actress for the film, tying Katharine Hepburn for most acting nominations with 12.
  - What ancient tribe of Mexico left behind huge stone heads standing 6-11 feet tall?
  - The company's founder, geophysicist Sheldon Breiner, is a Stanford University graduate who used the first cesium magnetometer to discover two colossal and ancient Olmec heads in Mexico in the 1960s.

Motivation
- TREC Examples
  - When was the Titanic built?
  - The techniques used today to analyze the defects in the metal did not exist back in 1910 when the ship was being built, he said.

Motivation
- How to classify?
  - Common nouns in ontologies (e.g. WordNet)
  - Proper nouns???
- Feature co-occurrence
  - Labelling clusters of semantically related terms (Pantel and Ravichandran, 2004)
  - Responding with the hypernym of similar previously classified hyponyms (Alfonseca and Manandhar, 2002; Pekar and Staab, 2003; Takenobu et al., 1997)

Motivation
- Search patterns (Fleischman et al., 2003; Girju, 2001; Hearst, 1992; 1998; Mann, 2002; Moldovan et al., 2000)
- Feature co-occurrence and search patterns (Hahn and Schnattinger, 1998)

Objectives

Objectives
- Create one or more hyponym classifiers for use as a component in a question answering system
- Evaluate accuracy of classifiers when identifying the occupations of people

Experimental Framework

Experimental Framework
- P-System
  - Takes a name as input and attempts to respond with the person's occupation
  - Predicate-argument co-occurrence frequencies
- A-System
  - Takes a name as input and attempts to respond with the person's occupation
  - Search pattern

Experimental Framework
- H-System
  - Takes a name as input and attempts to respond with the correct occupation sense
  - P-System and A-System hybrid

Experimental Framework
- AQUAINT corpus (Graff, 2002)
- List of 364 occupations
- 257 names from 2002-2004 TREC Question Answering track queries
  - 250 were classifiable by a person

P-System Classifications

P-System Classifications
- Minipar (Lin, 1998)
  - Subject-verb and verb-object pairs
- Frequencies passed to Okapi's BM25 matching function
  - Candidate hypernyms (occupations) indexed as documents
  - Hyponyms (names) presented as queries
- Ordered list of hypernyms returned in response to a hyponym
- Top-ranking hypernym selected as answer
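As a rough illustration of the ranking step above, the sketch below treats each occupation as a "document" made of predicate-argument pairs and a name's pairs as the "query", then scores with a textbook BM25 formula. It is not Okapi's own implementation: the IDF variant, the constants `k1` and `b`, the function name `bm25_rank` and the toy pairs are all assumptions made for the example.

```python
import math
from collections import Counter

def bm25_rank(occupation_pairs, name_pairs, k1=1.2, b=0.75):
    """Rank occupations (documents) against one name's pairs (query)."""
    docs = {occ: Counter(pairs) for occ, pairs in occupation_pairs.items()}
    n_docs = len(docs)
    avg_len = sum(sum(c.values()) for c in docs.values()) / n_docs
    # Number of occupations in which each predicate-argument pair occurs.
    doc_freq = Counter(pair for c in docs.values() for pair in c)

    scores = {}
    for occ, counts in docs.items():
        length = sum(counts.values())
        score = 0.0
        for pair in set(name_pairs):
            tf = counts.get(pair, 0)
            if tf == 0:
                continue
            # Non-negative IDF variant (an assumption, not Okapi's exact form).
            idf = math.log(1 + (n_docs - doc_freq[pair] + 0.5) / (doc_freq[pair] + 0.5))
            norm = tf + k1 * (1 - b + b * length / avg_len)
            score += idf * tf * (k1 + 1) / norm
        scores[occ] = score
    return sorted(scores, key=scores.get, reverse=True)

# Toy (verb, role) pairs; hypothetical, not taken from the AQUAINT corpus.
occupation_pairs = {
    "actor": [("star", "subj")] * 3 + [("cast", "obj")] * 2,
    "senator": [("vote", "subj")] * 3 + [("elect", "obj")] * 2,
    "coach": [("train", "subj")] * 2 + [("fire", "obj")] * 2 + [("star", "subj")],
}
name_pairs = [("star", "subj"), ("cast", "obj")]
ranking = bm25_rank(occupation_pairs, name_pairs)
answer = ranking[0]  # top-ranking hypernym is selected as the answer
```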

P-System Classifications
- 50 names from TREC queries
- No co-reference resolution
  - 0.30 accuracy
- Full names substituted for partial names
  - 0.44 accuracy

P-System Classifications
- Accuracy increases with name co-occurrence frequency
- Occupation co-occurrence frequency was not a limiting factor in our experiments
- Grouping similar occupations allowed us to perform a 194-way classification experiment
  - Accuracy increased from 0.44 to 0.56

P-System Classifications
- Tuning constants provide some control over the specificity of occupations returned
  - Best assignment of constants penalises occupations occurring in fewer than 1,000 predicate-argument pairs
- Some occupations could be classified better than others
  - Which could P-System classify accurately?
  - How accurately?
  - Test set too small

Larger Evaluation

Larger Evaluation
- Apposition pattern
  - Provides reference judgements
- Ontology of occupations and an ontological similarity measure
  - Quantifies similarity between returned occupation and nearest occupation in reference judgements
- Threshold for the ontological similarity measure
  - A value greater than or equal to this indicates that the response of P-System is correct

Larger Evaluation
- Apposition pattern
- Search pattern
  - occupation ,? Capitalised Word Sequence (an occupation, an optional comma, then a capitalised word sequence)
- Examples
  - For the past year, actor Aaron Eckhart has been receiving hate mail.
  - The landlord, Jon Mendelson, said he would consider any offer from Simmons.
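The search pattern above can be approximated with a regular expression. This is only a sketch: the three-entry `OCCUPATIONS` list stands in for the list of 364 occupations, and the definition of a "capitalised word sequence" used here (one or more whitespace-separated words that each start with an upper-case letter) is an assumption.

```python
import re

# Hypothetical sample; the slides refer to a list of 364 occupations.
OCCUPATIONS = ["actor", "landlord", "senator"]

APPOSITION = re.compile(
    r"\b(?P<occupation>" + "|".join(map(re.escape, OCCUPATIONS)) + r")"
    r"\s*,?\s+"                                     # optional comma
    r"(?P<name>[A-Z][\w'-]*(?:\s+[A-Z][\w'-]*)*)"   # capitalised word sequence
)

def find_appositions(sentence):
    """Return (occupation, capitalised word sequence) pairs found in a sentence."""
    return [(m.group("occupation"), m.group("name")) for m in APPOSITION.finditer(sentence)]

examples = [
    "For the past year, actor Aaron Eckhart has been receiving hate mail.",
    "The landlord, Jon Mendelson, said he would consider any offer from Simmons.",
]
found = [find_appositions(s) for s in examples]
```

A pattern this simple matches any capitalised sequence that follows an occupation, whether or not it is a person's complete name.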

Larger Evaluation
- Apposition pattern
- 107,958 distinct capitalised word sequences found in apposition with an occupation
- In a random sample of 1,000 instances
  - 801 were correct
  - 93 attributed some role to a person rather than their occupation (e.g. referred to the leader of an organisation as a chief)
  - 56 indicated an incorrect occupation
  - In 50 the capitalised word sequence did not refer to the complete name of a person

Larger Evaluation
- Ontology of occupations
- Manually constructed ontology of hypernyms
- Internal nodes comprise filler nodes that provide structure and occupations from the list of 364
- Leaf nodes are all taken from the list of occupations

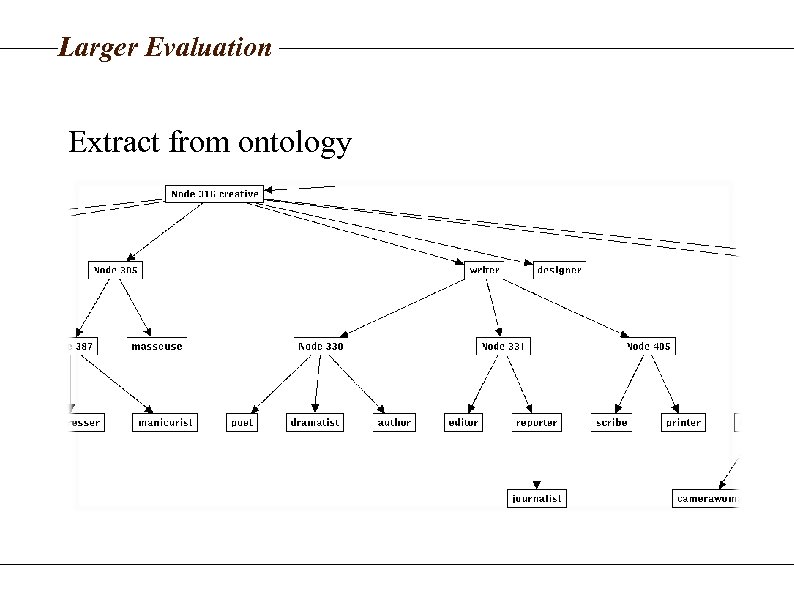

Larger Evaluation Extract from ontology

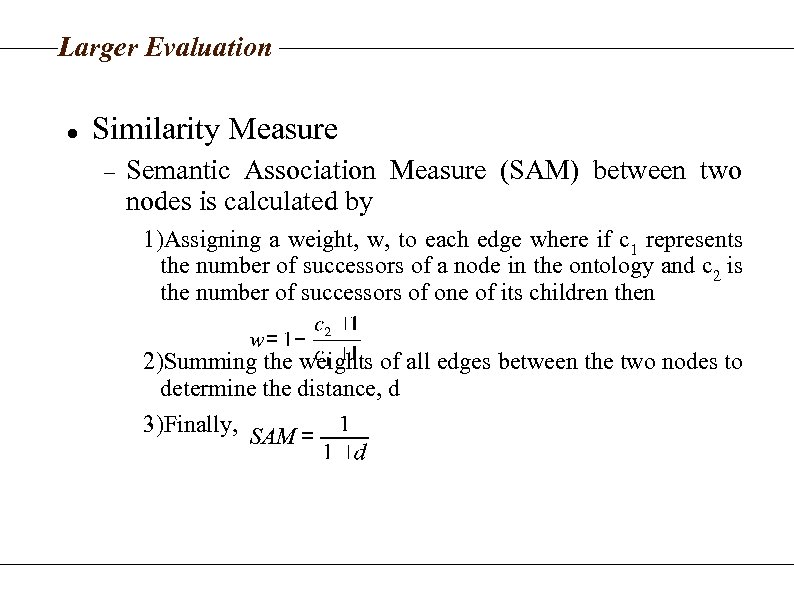

Larger Evaluation
- Similarity Measure
- Semantic Association Measure (SAM) between two nodes is calculated by
  1) Assigning a weight, w, to each edge where if c1 represents the number of successors of a node in the ontology and c2 is the number of successors of one of its children then
  2) Summing the weights of all edges between the two nodes to determine the distance, d
  3) Finally,

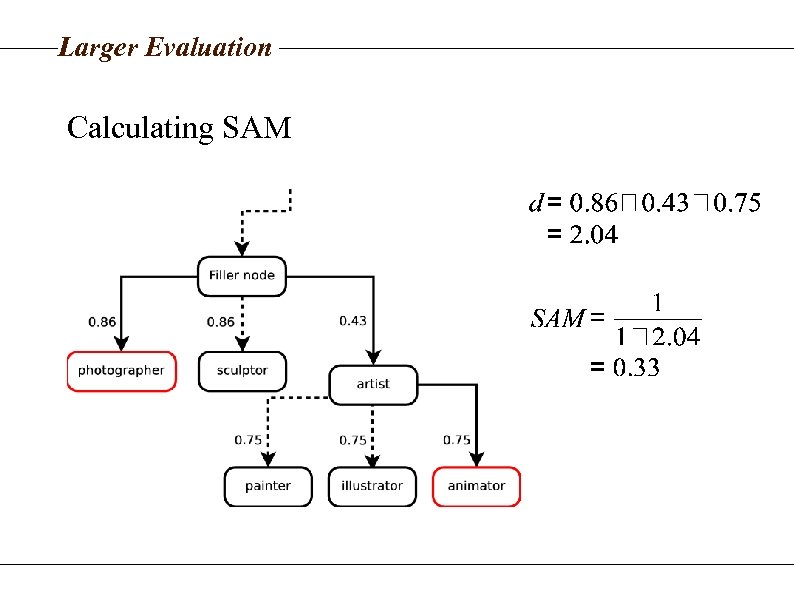

Larger Evaluation Calculating SAM

Larger Evaluation
- Similarity Threshold
- Identified the best from a range of candidate thresholds between 0.20 and 0.40
- Compared a manual evaluation of P-System over 200 names in apposition with an occupation...
- ...to an automated evaluation method using a candidate threshold to produce binary judgements

Larger Evaluation
- Similarity Threshold
- If the SAM between the occupation returned by P-System and the nearest occupation in apposition was...
  - SAM >= candidate threshold: Right by automated evaluation
  - SAM < candidate threshold: Wrong by automated evaluation

Larger Evaluation
- Similarity Threshold
- Calculated proportion of times the automatic and manual evaluations agreed in their judgements of a response
- Selected candidate threshold with largest agreement level
  - Threshold 0.28
  - Agreement level of 0.872
  - Or 0.848 where unusual but otherwise correct classifications were also considered right
- High agreement levels validate evaluation method
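The threshold selection described above might look like the sketch below: each candidate threshold turns SAM scores into right/wrong judgements, and the candidate that agrees most often with the manual judgements is kept. The SAM scores and manual judgements here are invented toy values, not the study's data, and the 0.01 step between candidates is an assumption.

```python
def agreement(sam_scores, manual_judgements, threshold):
    """Proportion of responses where automatic and manual judgements agree."""
    automatic = [score >= threshold for score in sam_scores]
    matches = sum(a == m for a, m in zip(automatic, manual_judgements))
    return matches / len(manual_judgements)

def best_threshold(sam_scores, manual_judgements, candidates):
    """Pick the candidate threshold with the largest agreement level."""
    return max(candidates, key=lambda t: agreement(sam_scores, manual_judgements, t))

# Toy data (invented for illustration): one SAM score and one manual
# right/wrong judgement per classified name.
sam_scores = [0.10, 0.22, 0.27, 0.29, 0.35, 0.60]
manual = [False, False, False, True, True, True]

# Candidate thresholds between 0.20 and 0.40 in steps of 0.01 (assumed step).
candidates = [round(0.20 + 0.01 * i, 2) for i in range(21)]
chosen = best_threshold(sam_scores, manual, candidates)
level = agreement(sam_scores, manual, chosen)
```

When several candidates tie, `max` keeps the first one, so the lowest of the tied thresholds is selected.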

Larger Evaluation
- P-System was tested on the 3,177 names
  - That exist in apposition with at least one occupation
  - Which are present in at least 100 predicate-argument pairs
- Responses were automatically evaluated

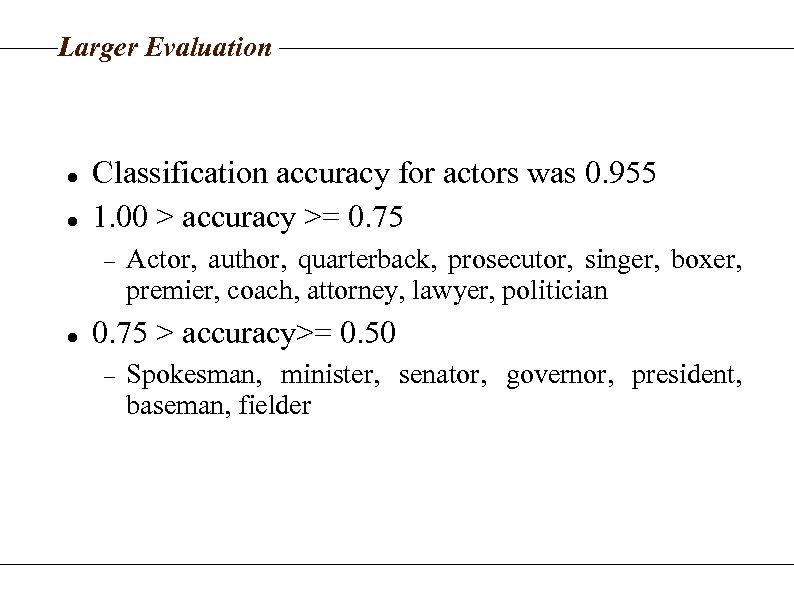

Larger Evaluation
- Classification accuracy for actors was 0.955
- 1.00 > accuracy >= 0.75
  - Actor, author, quarterback, prosecutor, singer, boxer, premier, coach, attorney, lawyer, politician
- 0.75 > accuracy >= 0.50
  - Spokesman, minister, senator, governor, president, baseman, fielder

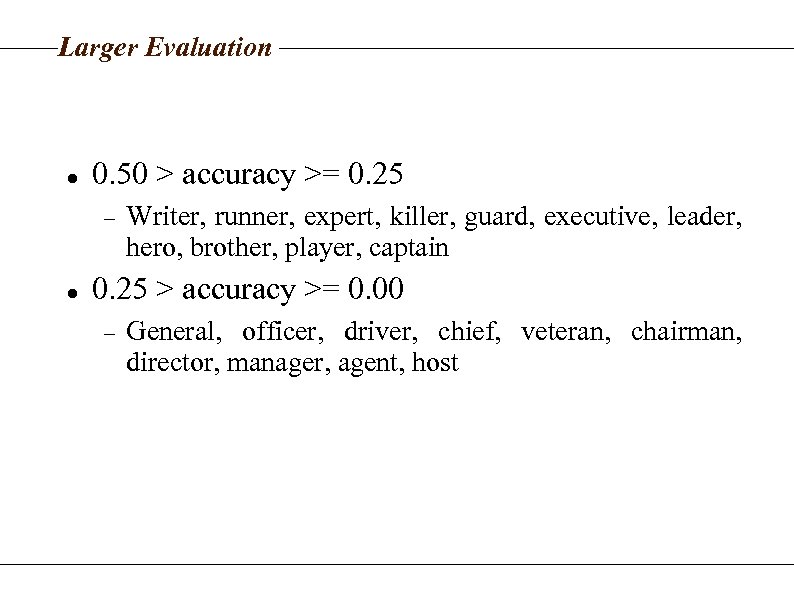

Larger Evaluation
- 0.50 > accuracy >= 0.25
  - Writer, runner, expert, killer, guard, executive, leader, hero, brother, player, captain
- 0.25 > accuracy >= 0.00
  - General, officer, driver, chief, veteran, chairman, director, manager, agent, host

Comparison of Three Models

Comparison of Three Models
- A-System
  - Uses apposition instances from previous experiment
  - Returns occupation that occurs most frequently in apposition with input name
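A-System as described above reduces to a frequency count over apposition instances. A minimal sketch, assuming the instances are available as (occupation, name) tuples; the function name `a_system` and the sample instances are hypothetical.

```python
from collections import Counter

def a_system(name, appositions):
    """Return the occupation most often found in apposition with `name`.

    Returns None when the name was never found in apposition with an
    occupation, i.e. A-System makes no attempt to classify it.
    """
    counts = Counter(occ for occ, person in appositions if person == name)
    if not counts:
        return None
    return counts.most_common(1)[0][0]

# Hypothetical apposition instances as (occupation, name) pairs.
appositions = [
    ("actor", "Aaron Eckhart"),
    ("actor", "Aaron Eckhart"),
    ("producer", "Aaron Eckhart"),
    ("landlord", "Jon Mendelson"),
]
```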

Comparison of Three Models
- H-System
  - P-System and A-System hybrid
- If P and A both return an occupation
  - Returns the occupation sense that occurs in apposition that is closest to the response of P
- If only P returns an occupation
  - Returns a sense of the response of P
- If only A returns an occupation
  - Returns a sense of the response of A
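The three cases above can be sketched as a small decision function. This simplification ignores word senses and works on occupation labels only; the `similarity` argument stands in for an ontological similarity measure such as SAM, and the lookup table used in the example is invented.

```python
def h_system(p_response, a_response, apposition_occupations, similarity):
    """Combine the responses of P-System and A-System (senses ignored).

    p_response / a_response: occupation strings, or None if no response.
    apposition_occupations: occupations found in apposition with the name.
    similarity: function (occupation, occupation) -> float (assumed).
    """
    if p_response is not None and a_response is not None:
        # Both responded: pick the apposition occupation closest to P's answer.
        return max(apposition_occupations, key=lambda occ: similarity(p_response, occ))
    if p_response is not None:
        return p_response
    if a_response is not None:
        return a_response
    return None

# Invented similarity scores for illustration only.
TOY_SIMILARITY = {("performer", "actor"): 0.8, ("performer", "director"): 0.3}

def toy_similarity(x, y):
    return TOY_SIMILARITY.get((x, y), 0.0)

both = h_system("performer", "director", ["director", "actor"], toy_similarity)
only_p = h_system("performer", None, [], toy_similarity)
only_a = h_system(None, "actor", ["actor"], toy_similarity)
```

In the `both` case the function returns the apposition occupation nearest to the response of P, even though a different occupation was the most frequent one in apposition.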

Comparison of Three Models
- Three-way comparison between P, A and H
  - 250 classifiable TREC names
  - Manual evaluation
- Compared the three models
  - In both a strict and lenient evaluation
  - Where all names were classified and also where just those names occurring in at least 100 predicate-argument pairs were classified
- Controlled for the ability of A to classify a name

Comparison of Three Models
- H is most accurate across all names
  - Significantly better in lenient evaluation
  - Accuracies: H 0.584, A 0.492, P 0.424
  - In strict evaluation H is also the most accurate
- A only attempted to classify 0.632 of names
- H and P attempted 0.904 and 0.892 of names

Comparison of Three Models
- On names that were found in apposition with an occupation
- In the lenient evaluation H was most accurate
  - Accuracies: H 0.797, A 0.778, P 0.544
- In the strict evaluation A was the best
  - Accuracies: H 0.722, A 0.728, P 0.462

Comparison of Three Models
- H-System returns more general occupations than A-System
  - An advantage for it in the lenient evaluation
  - A disadvantage in the strict evaluation
- The principle of combining two very different approaches to classification has been validated

Conclusions

Conclusions
- Combining two classification models such as in H-System allowed us to
  - Respond with high accuracy
  - Increase recall beyond that of component classifiers
- Experiments demonstrate that we can
  - Identify hypernyms of proper nouns such as people's names
  - In the context of question answering

The End
