aced42075418b5f338b2186226cb5c0d.ppt

- Number of slides: 26

Annotation and Detection of Blended Emotions in Real Human-Human Dialogs Recorded in a Call Center

L. Vidrascu and L. Devillers, TLP-LIMSI/CNRS, France

- IST AMITIES FP5 Project: Automated Multi-lingual Interaction with Information and Services
- HUMAINE FP6 NoE: Human-Machine Interaction on Emotion
- CHIL FP6 Project: Computer in the Human Interaction Loop

Vidrascu & Devillers - IEEE ICME 2005

Introduction

- Study of real-life emotions to improve the capabilities of current speech technologies
  - Detecting emotions can help orient the evolution of human-computer interaction via dynamic modification of dialog strategies
  - Most previous work on emotion has been conducted on acted or induced data with archetypal emotions
- Results on artificial data transfer poorly to real data
  - The expression of emotion is complex: blended, shaded, masked
  - It depends on contextual and social factors
  - It is expressed at many different levels: prosodic, lexical, etc.
- Challenges for detecting emotions in real-life data
  - Representation of complex emotions
  - A robust annotation validation protocol

Outline

- Real-life corpus recorded in a call center
  - Call centers are very interesting environments because the recording can be made imperceptibly
- Emotion annotation
- Emotion detection
- Blended emotions
- Perspectives

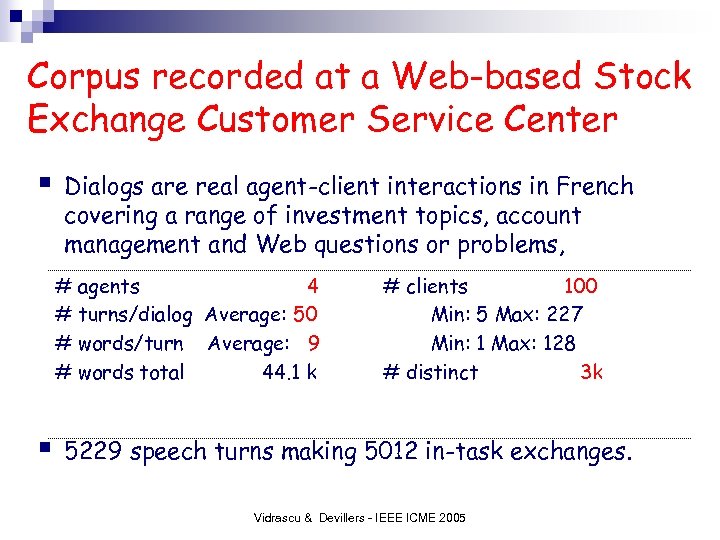

Corpus recorded at a Web-based Stock Exchange Customer Service Center

- Dialogs are real agent-client interactions in French covering a range of investment topics, account management, and Web questions or problems

| Statistic | Value |
|---|---|
| # agents | 4 |
| # clients | 100 |
| # turns/dialog | Average: 50 (Min: 5, Max: 227) |
| # words/turn | Average: 9 (Min: 1, Max: 128) |
| # words total | 44.1k |
| # distinct words | 3k |

- 5229 speech turns making up 5012 in-task exchanges

Outline

- Real-life corpus description
- Emotion annotation: this phase is complex
  - Definition of the emotion representation and the emotional unit
  - Annotation
  - Validation
- Emotion detection
- Blended emotions
- Perspectives

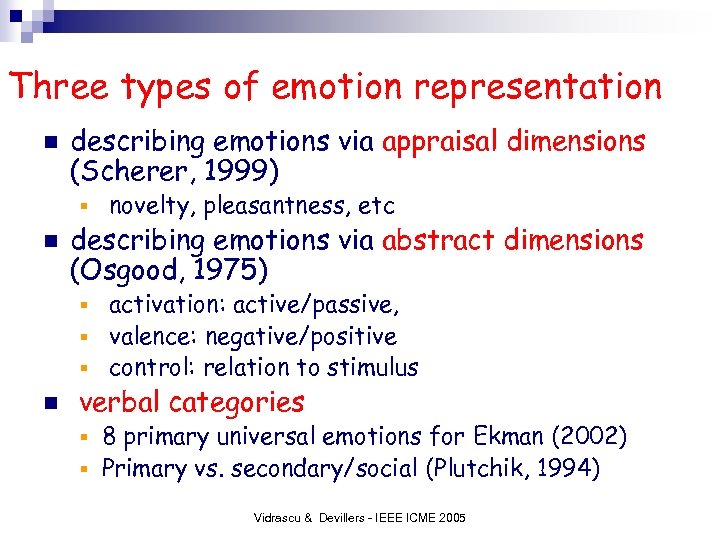

Three types of emotion representation

- Describing emotions via appraisal dimensions (Scherer, 1999)
  - Novelty, pleasantness, etc.
- Describing emotions via abstract dimensions (Osgood, 1975)
  - Activation: active/passive
  - Valence: negative/positive
  - Control: relation to the stimulus
- Verbal categories
  - 8 primary universal emotions for Ekman (2002)
  - Primary vs. secondary/social emotions (Plutchik, 1994)

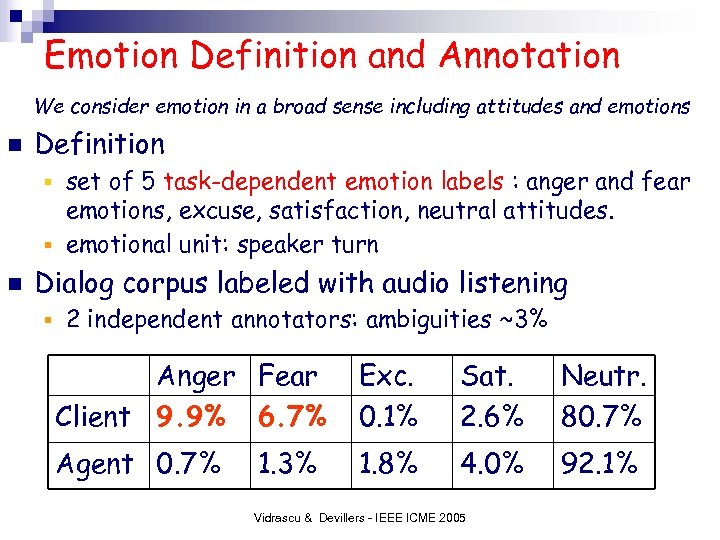

Emotion Definition and Annotation

We consider emotion in a broad sense, including attitudes and emotions.

- Definition
  - A set of 5 task-dependent emotion labels: the emotions anger and fear, and the attitudes excuse, satisfaction and neutral
  - Emotional unit: the speaker turn
- Dialog corpus labeled by listening to the audio
  - 2 independent annotators: ambiguities ~3%

| | Anger | Fear | Exc. | Sat. | Neutr. |
|---|---|---|---|---|---|
| Client | 9.9% | 6.7% | 0.1% | 2.6% | 80.7% |

Agent: 0.7% 1.8% 4.0% 92.1% 1.3%

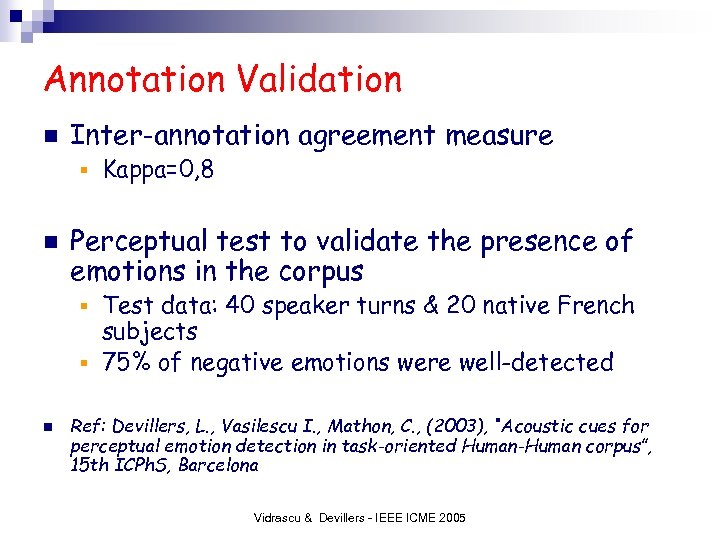

Annotation Validation

- Inter-annotator agreement measure
  - Kappa = 0.8
- Perceptual test to validate the presence of emotions in the corpus
  - Test data: 40 speaker turns and 20 native French subjects
  - 75% of negative emotions were correctly detected
- Ref: Devillers, L., Vasilescu, I., Mathon, C. (2003), “Acoustic cues for perceptual emotion detection in task-oriented Human-Human corpus”, 15th ICPhS, Barcelona
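Kappa is a chance-corrected agreement score. The slide does not say which variant was computed; the sketch below assumes Cohen's kappa for two annotators labeling the same speaker turns, and the label lists are hypothetical.

```python
from collections import Counter

def cohen_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labeling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)
    # Observed agreement: fraction of items with identical labels.
    p_o = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Expected agreement if each annotator labeled independently,
    # following their own label frequencies.
    count_a, count_b = Counter(labels_a), Counter(labels_b)
    p_e = sum(count_a[c] * count_b[c] for c in count_a) / (n * n)
    if p_e == 1.0:
        return 1.0  # both annotators used one and the same label throughout
    return (p_o - p_e) / (1 - p_e)

# Hypothetical labels for four speaker turns (N = Neutral, A = Anger).
annotator_1 = ["N", "N", "A", "A"]
annotator_2 = ["N", "N", "A", "N"]
print(cohen_kappa(annotator_1, annotator_2))  # 0.5
```

Here observed agreement is 0.75 and chance agreement 0.5, so kappa is 0.5; the 0.8 reported above indicates agreement well beyond chance.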

Outline

- Real-life corpus description
- Emotion annotation
- Emotion detection
  - Prosodic, acoustic and some disfluency cues
  - Neutral/Negative and Fear/Anger classification
- Blended emotions
- Perspectives

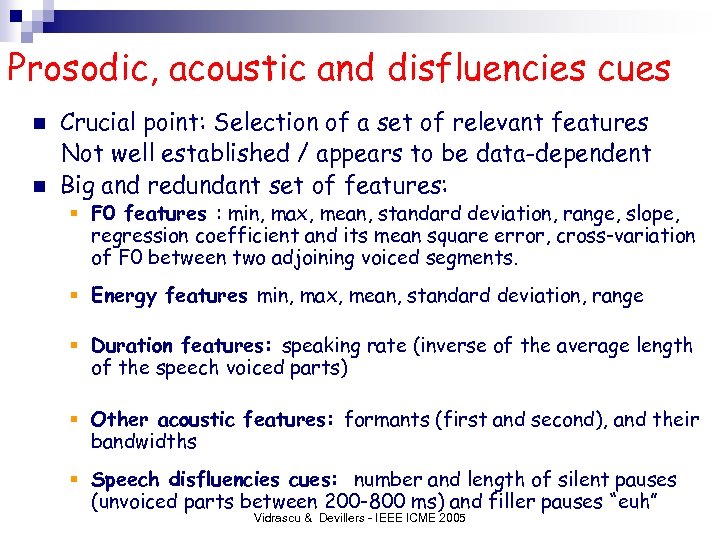

Prosodic, acoustic and disfluency cues

- Crucial point: selection of a set of relevant features
  - Not well established; appears to be data-dependent
- Large and redundant set of features:
  - F0 features: min, max, mean, standard deviation, range, slope, regression coefficient and its mean square error, cross-variation of F0 between two adjoining voiced segments
  - Energy features: min, max, mean, standard deviation, range
  - Duration features: speaking rate (inverse of the average length of the voiced parts of speech)
  - Other acoustic features: first and second formants and their bandwidths
  - Speech disfluency cues: number and length of silent pauses (unvoiced parts lasting between 200 and 800 ms) and filler pauses (“euh”)
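Several of the F0 statistics and the silent-pause cue listed above can be computed as in the sketch below. It is not the authors' Praat-based pipeline: it assumes an F0 contour given as parallel lists of times (s) and F0 values (Hz) for voiced frames, reads "slope" as the least-squares regression coefficient of F0 over time, and represents unvoiced intervals as (start, end) pairs in seconds. The function names are hypothetical.

```python
import statistics

def f0_features(times, f0):
    """Summary statistics of an F0 contour (voiced frames only)."""
    n = len(f0)
    if n < 2 or len(times) != n:
        raise ValueError("need at least two (time, F0) pairs")
    mean_t = statistics.fmean(times)
    mean_f = statistics.fmean(f0)
    # Least-squares regression of F0 on time: slope in Hz per second.
    sxx = sum((t - mean_t) ** 2 for t in times)
    sxy = sum((t - mean_t) * (f - mean_f) for t, f in zip(times, f0))
    slope = sxy / sxx
    intercept = mean_f - slope * mean_t
    # Mean square error of the regression line.
    mse = sum((f - (intercept + slope * t)) ** 2 for t, f in zip(times, f0)) / n
    return {
        "min": min(f0),
        "max": max(f0),
        "mean": mean_f,
        "std": statistics.pstdev(f0),
        "range": max(f0) - min(f0),
        "slope": slope,
        "mse": mse,
    }

def silent_pauses(unvoiced, lo=0.2, hi=0.8):
    """Count and total length of unvoiced intervals lasting lo..hi seconds."""
    lengths = [end - start for start, end in unvoiced if lo <= end - start <= hi]
    return len(lengths), sum(lengths)

# A perfectly linear rising contour: slope close to 1000 Hz/s, MSE close to 0.
features = f0_features([0.0, 0.01, 0.02, 0.03], [100.0, 110.0, 120.0, 130.0])
print(round(features["slope"]), features["range"])
```

Whether the 200 to 800 ms bounds are inclusive is not stated on the slide; the sketch includes both ends.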

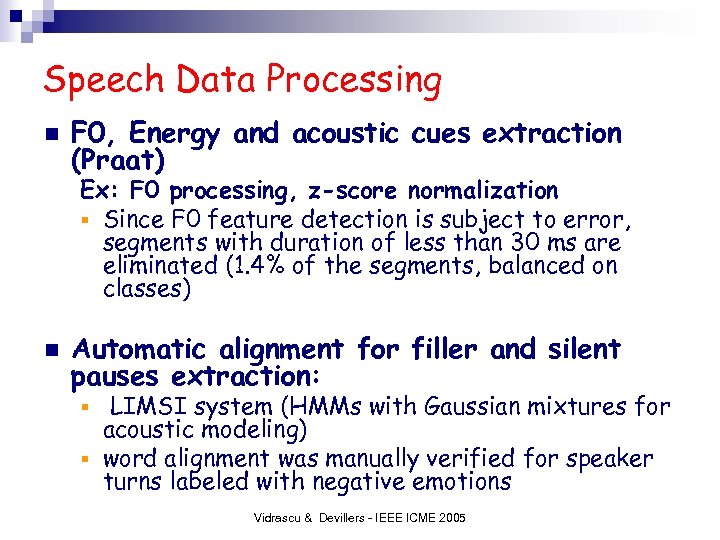

Speech Data Processing

- F0, energy and acoustic cue extraction (Praat)
  - Example: F0 processing, z-score normalization
  - Since F0 detection is error-prone, segments shorter than 30 ms are eliminated (1.4% of the segments, balanced across classes)
- Automatic alignment for filler and silent pause extraction: LIMSI system (HMMs with Gaussian mixtures for acoustic modeling)
  - Word alignment was manually verified for speaker turns labeled with negative emotions
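The two F0 preprocessing steps above, discarding segments shorter than 30 ms and z-score normalizing F0, might be sketched as follows. Segments are assumed to be (start, end, F0 values) tuples, and the statistics are assumed to be pooled over all retained segments passed in (e.g. one speaker's turn); the slide does not say at which level the normalization is computed.

```python
import statistics

MIN_SEGMENT_S = 0.030  # segments shorter than 30 ms are discarded

def drop_short_segments(segments, min_dur=MIN_SEGMENT_S):
    """Keep only voiced segments (start, end, f0_values) lasting at least min_dur seconds."""
    return [seg for seg in segments if seg[1] - seg[0] >= min_dur]

def zscore(values):
    """Z-score normalization: subtract the mean, divide by the standard deviation."""
    mu = statistics.fmean(values)
    sigma = statistics.pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]

def normalized_f0(segments):
    """Drop short segments, then z-score the pooled F0 values of the rest."""
    kept = drop_short_segments(segments)
    pooled = [f for _, _, f0 in kept for f in f0]
    return zscore(pooled)

# The 20 ms segment is dropped; the remaining values become [-1.0, 1.0].
print(normalized_f0([(0.0, 0.02, [300.0]), (0.1, 0.2, [100.0, 200.0])]))
```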

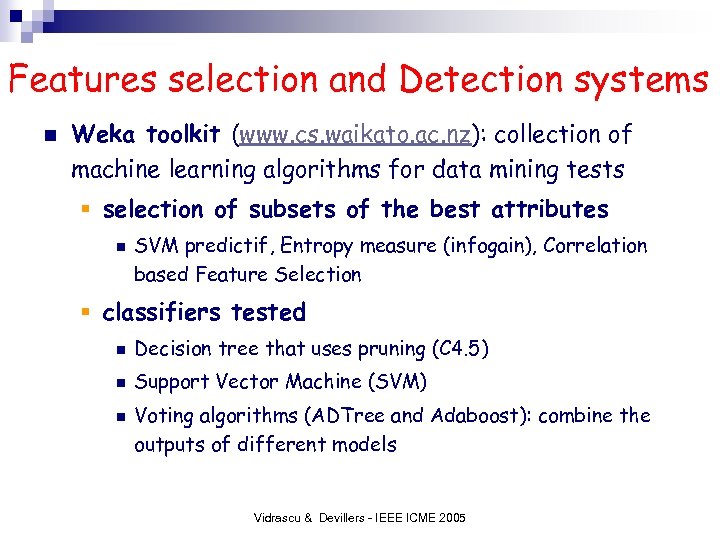

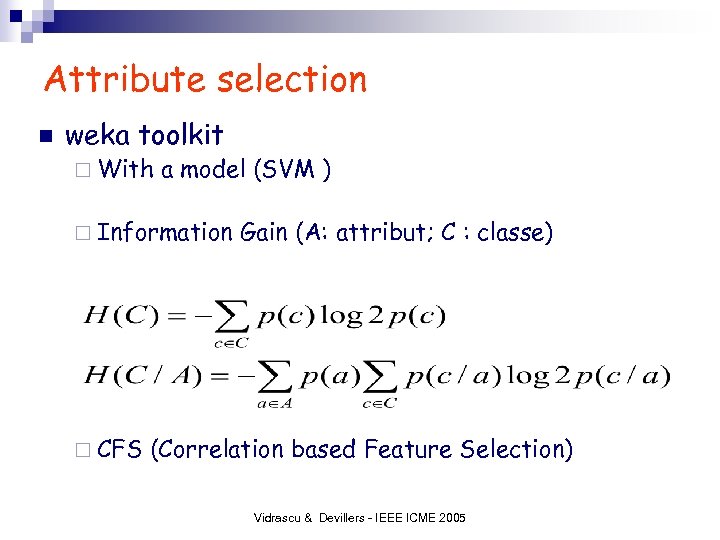

Feature selection and detection systems

- Weka toolkit (www.cs.waikato.ac.nz): a collection of machine learning algorithms for data mining tasks
  - Selection of subsets of the best attributes: SVM-based (predictive) selection, entropy measure (information gain), Correlation-based Feature Selection (CFS)
  - Classifiers tested:
    - Decision tree with pruning (C4.5)
    - Support Vector Machine (SVM)
    - Voting algorithms (ADTree and AdaBoost): combine the outputs of different models

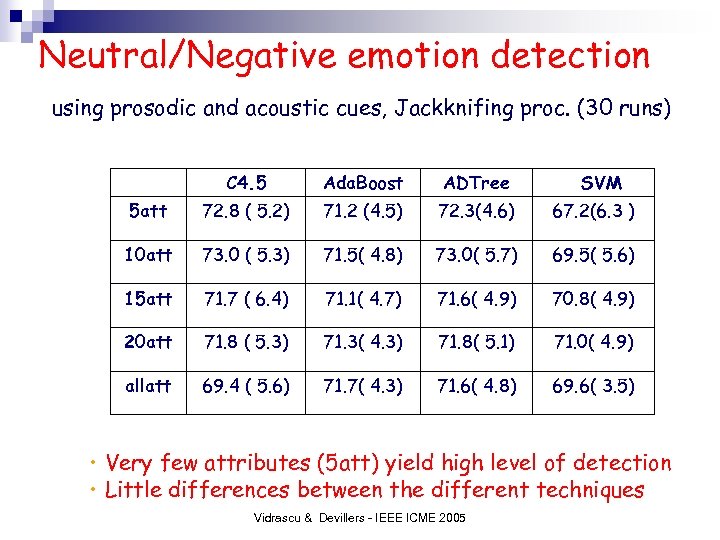

Neutral/Negative emotion detection using prosodic and acoustic cues, jackknifing procedure (30 runs)

Correct detection rate (%), standard deviation in parentheses:

| Attributes | C4.5 | AdaBoost | ADTree | SVM |
|---|---|---|---|---|
| 5 | 72.8 (5.2) | 71.2 (4.5) | 72.3 (4.6) | 67.2 (6.3) |
| 10 | 73.0 (5.3) | 71.5 (4.8) | 73.0 (5.7) | 69.5 (5.6) |
| 15 | 71.7 (6.4) | 71.1 (4.7) | 71.6 (4.9) | 70.8 (4.9) |
| 20 | 71.8 (5.3) | 71.3 (4.3) | 71.8 (5.1) | 71.0 (4.9) |
| All | 69.4 (5.6) | 71.7 (4.3) | 71.6 (4.8) | 69.6 (3.5) |

- Very few attributes (5) yield a high detection level
- There is little difference between the techniques
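Each cell above summarizes 30 runs as a mean rate with a spread. The slide does not detail the jackknifing procedure, so the sketch below substitutes repeated random train/test splits and reports mean and standard deviation of accuracy in percent. The 10% test fraction and the majority-class baseline are hypothetical placeholders for the Weka classifiers.

```python
import random
import statistics
from collections import Counter

def repeated_holdout(data, train, predict, runs=30, test_frac=0.1, seed=0):
    """Mean and standard deviation of accuracy (%) over repeated random splits.

    data: list of (features, label) pairs.
    train: callable taking the training pairs and returning a model.
    predict: callable taking (model, features) and returning a label.
    """
    rng = random.Random(seed)
    n_test = max(1, int(len(data) * test_frac))
    scores = []
    for _ in range(runs):
        shuffled = data[:]
        rng.shuffle(shuffled)
        test, training = shuffled[:n_test], shuffled[n_test:]
        model = train(training)
        correct = sum(predict(model, x) == y for x, y in test)
        scores.append(100.0 * correct / len(test))
    return statistics.fmean(scores), statistics.stdev(scores)

# Toy baseline: always predict the majority class of the training set.
def train_majority(pairs):
    return Counter(label for _, label in pairs).most_common(1)[0][0]

def predict_majority(model, features):
    return model

data = [((i,), "Neutral") for i in range(50)]
print(repeated_holdout(data, train_majority, predict_majority))
```

Any classifier exposing the same train/predict pair can be dropped in; the fixed seed makes the splits reproducible across classifiers.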

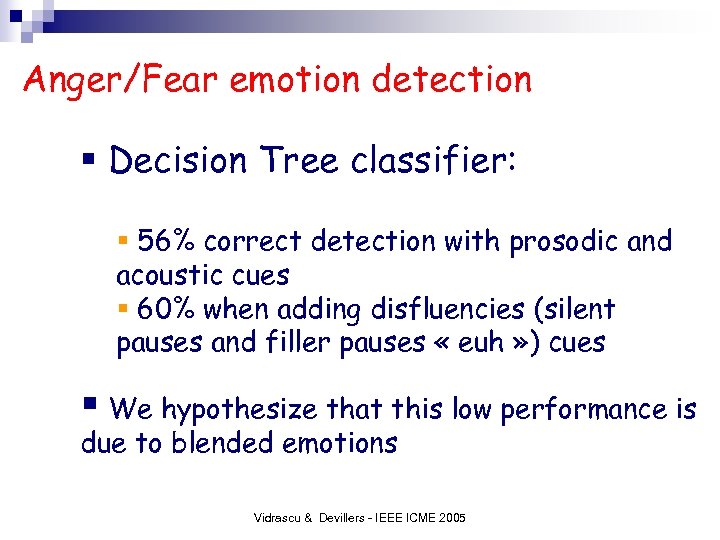

Anger/Fear emotion detection

- Decision tree classifier:
  - 56% correct detection with prosodic and acoustic cues
  - 60% when disfluency cues (silent pauses and filler pauses « euh ») are added
- We hypothesize that this low performance is due to blended emotions

Outline

- Real-life corpus description
- Emotion annotation
- Emotion detection
- Blended emotions
  - In certain states of mind, it is possible to exhibit more than one emotion: when trying to mask a feeling, with conflicting emotions, suffering, etc.
- Perspectives

Blended emotions

- In this financial task, Anger and Fear can be combined: « Clients can be angry because they are afraid of losing money »
  - Confusion matrix (40% confusion): there are as many « Anger classified as Fear » as « Fear classified as Anger »
- Re-annotation of negative emotions with a new scheme defined for other tasks (medical call center, EmoTV), by 2 different annotators

New emotion annotation scheme

- Allows choosing 2 labels per segment:
  - Major emotion: the one perceived as dominant
  - Minor emotion: another emotion perceived in the background (the most intense minor emotion)
- 7 coarse classes (defined for another task)
  - Fear, Sadness, Anger, Hurt, Positive, Surprise, Neutral attitude

Perception of emotion is very subjective

- How to mix different annotations?
  - Labeler 1: Major Anger, Minor Sadness
  - Labeler 2: Major Fear, Minor Anger
- Exploit the differences by combining the labels from multiple annotators in a soft emotion vector: ((w_M + w_m)/W Anger, w_M/W Fear, w_m/W Sadness), where W is the total weight of all labels given, here W = 2(w_M + w_m)
- With w_M = 2, w_m = 1 and W = 6 in this example -> (3/6 Anger, 2/6 Fear, 1/6 Sadness)
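The soft-vector construction above can be written out directly. This sketch assumes each labeler gives one Major label (weight w_M = 2) and at most one Minor label (weight w_m = 1), and normalizes by the total weight W; exact fractions reproduce the worked example.

```python
from collections import defaultdict
from fractions import Fraction

W_MAJOR, W_MINOR = 2, 1  # w_M and w_m from the example

def soft_emotion_vector(annotations, w_major=W_MAJOR, w_minor=W_MINOR):
    """Combine (major, minor) labels from several labelers into one soft vector.

    annotations: list of (major, minor) pairs; minor may be None.
    Returns {emotion: weight / W}, where W is the total weight assigned.
    """
    scores = defaultdict(int)
    for major, minor in annotations:
        scores[major] += w_major
        if minor is not None:
            scores[minor] += w_minor
    total = sum(scores.values())  # W
    return {emotion: Fraction(s, total) for emotion, s in scores.items()}

labels = [("Anger", "Sadness"),  # labeler 1
          ("Fear", "Anger")]     # labeler 2
vector = soft_emotion_vector(labels)
print({e: str(v) for e, v in vector.items()})
# {'Anger': '1/2', 'Sadness': '1/6', 'Fear': '1/3'}
```

`Fraction` reduces automatically, so 3/6 and 2/6 print as 1/2 and 1/3.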

Re-annotation result

- Because we focus on Anger and Fear, 4 classes were deduced from the emotion vectors:
  - Fear (Fear > 0; Anger = 0)
  - Anger (Fear = 0; Anger > 0)
  - Blended emotion (Fear > 0; Anger > 0)
  - Other (Fear = 0; Anger = 0)
- Consistency between the first and the second annotation for 78% of utterances
  - If Anger >= Fear and the previous annotation was Anger -> consistent
  - Same Major label in 64% of utterances
  - No common labels between the two annotators: 13%
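The four-class mapping and the consistency rule above reduce to a few comparisons on the Anger and Fear components of a soft vector. In this sketch the vector is a plain dict, a missing emotion counts as 0, and the Fear branch of the consistency check is an assumed mirror of the Anger rule stated on the slide.

```python
def coarse_class(vector):
    """Map a soft emotion vector to Fear, Anger, Blended or Other."""
    anger = vector.get("Anger", 0)
    fear = vector.get("Fear", 0)
    if fear > 0 and anger > 0:
        return "Blended"
    if fear > 0:
        return "Fear"
    if anger > 0:
        return "Anger"
    return "Other"

def consistent(vector, previous_label):
    """Compare the re-annotation with the first annotation's label.

    The Anger rule follows the slide; the Fear rule is an assumed mirror image.
    """
    anger = vector.get("Anger", 0)
    fear = vector.get("Fear", 0)
    if previous_label == "Anger":
        return anger >= fear
    if previous_label == "Fear":
        return fear >= anger
    return False

example = {"Anger": 1 / 2, "Fear": 1 / 3, "Sadness": 1 / 6}
print(coarse_class(example), consistent(example, "Anger"))  # Blended True
```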

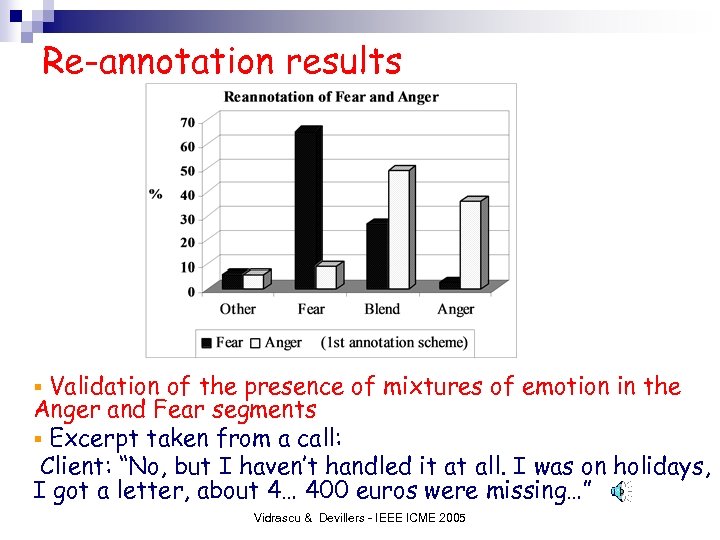

Re-annotation results

- Validation of the presence of mixtures of emotion in the Anger and Fear segments
  - Excerpt taken from a call: Client: “No, but I haven’t handled it at all. I was on holidays, I got a letter, about 4… 400 euros were missing…”

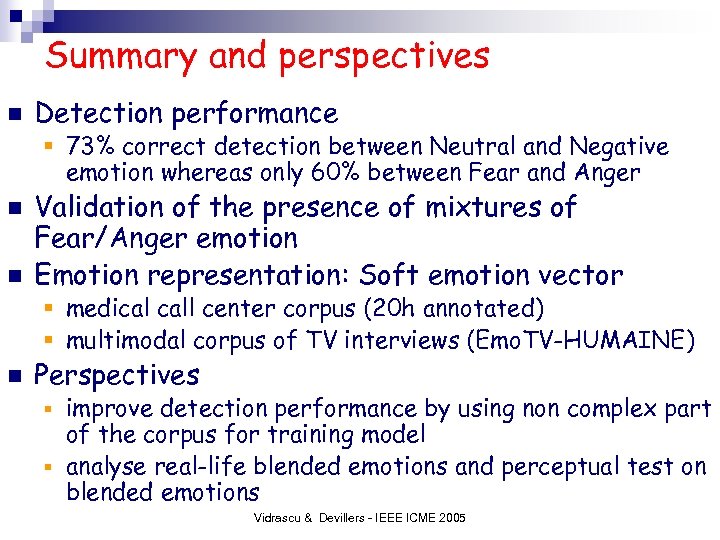

Summary and perspectives

- Detection performance
  - 73% correct detection between Neutral and Negative emotions, but only 60% between Fear and Anger
- Validation of the presence of Fear/Anger emotion mixtures
- Emotion representation: soft emotion vector
  - Medical call center corpus (20 h annotated)
  - Multimodal corpus of TV interviews (EmoTV-HUMAINE)
- Perspectives
  - Improve detection performance by using the non-complex part of the corpus for training models
  - Analyze real-life blended emotions and run perceptual tests on blended emotions

Thank you for your attention

Reference: L. Devillers, L. Vidrascu, L. Lamel, “Challenges in real-life emotion annotation and machine learning based detection”, Special issue, Journal of Neural Networks, to appear in July 2005.

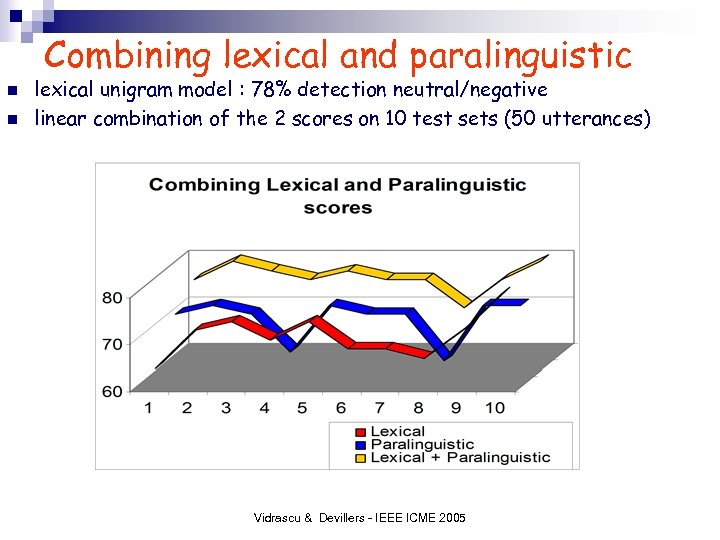

Combining lexical and paralinguistic cues

- Lexical unigram model: 78% Neutral/Negative detection
- Linear combination of the 2 scores on 10 test sets (50 utterances)
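The slide states only that the lexical and paralinguistic scores were combined linearly. The sketch below assumes each detector outputs a score where a positive value means Negative emotion, and that the weight `lam` is picked by accuracy on held-out samples from a coarse grid; the function names and the grid are hypothetical.

```python
def combine(lexical, paralinguistic, lam):
    """Linear combination of two detector scores (lam weights the lexical score)."""
    return lam * lexical + (1.0 - lam) * paralinguistic

def accuracy(lam, samples):
    """samples: (lexical_score, paralinguistic_score, is_negative) triples."""
    hits = sum((combine(lx, pa, lam) > 0) == is_neg for lx, pa, is_neg in samples)
    return hits / len(samples)

def best_weight(samples, grid=None):
    """Pick the weight in [0, 1] that maximizes accuracy on held-out samples."""
    grid = grid or [i / 10 for i in range(11)]
    return max(grid, key=lambda lam: accuracy(lam, samples))

# Toy held-out data where only the lexical score is informative.
held_out = [(1.0, -1.0, True), (-1.0, 1.0, False)]
lam = best_weight(held_out)
print(lam, accuracy(lam, held_out))  # 0.6 1.0
```

`max` returns the first weight reaching the best accuracy, so ties resolve toward the smaller lexical weight.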

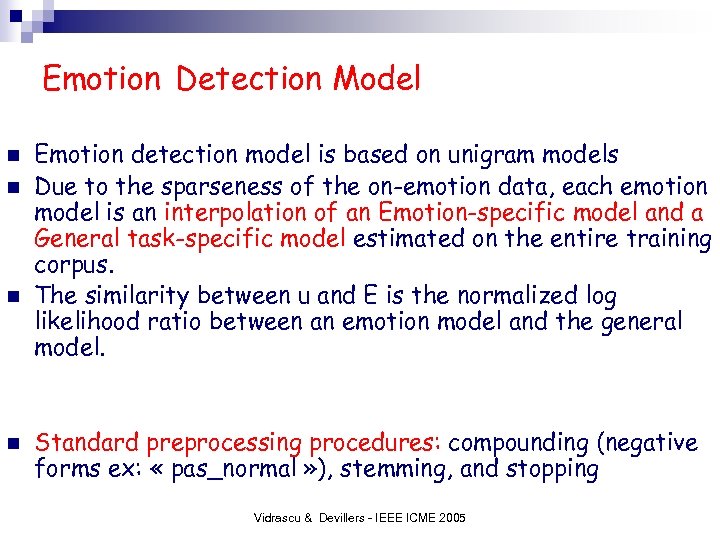

Emotion Detection Model

- The emotion detection model is based on unigram models
- Due to the sparseness of the emotion data, each emotion model is an interpolation of an emotion-specific model and a general task-specific model estimated on the entire training corpus
- The similarity between an utterance u and an emotion E is the normalized log-likelihood ratio between the emotion model and the general model
- Standard preprocessing procedures: compounding (negative forms, e.g. « pas_normal »), stemming and stopping
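The model above can be sketched as P(w|E) = lam * P_E(w) + (1 - lam) * P_G(w), with score(u, E) = (1/|u|) * sum over w in u of log(P(w|E) / P_G(w)). Add-one smoothing of the general model, the fixed weight `lam`, normalization by utterance length, and the toy French tokens are all assumptions; compounding, stemming and stopping are not implemented here.

```python
import math
from collections import Counter

class UnigramEmotionScorer:
    """Interpolated unigram emotion models scored against a general model (sketch)."""

    def __init__(self, corpus, lam=0.5):
        # corpus: list of (tokens, emotion_label) pairs from the training data.
        if not 0.0 <= lam < 1.0:
            raise ValueError("lam must be in [0, 1) so unseen words keep non-zero probability")
        self.lam = lam
        self.general = Counter(w for tokens, _ in corpus for w in tokens)
        self.general_total = sum(self.general.values())
        # One extra vocabulary slot reserves probability mass for unseen words.
        self.vocab_size = len(self.general) + 1
        self.emotion = {}
        for tokens, label in corpus:
            self.emotion.setdefault(label, Counter()).update(tokens)
        self.emotion_total = {lab: sum(c.values()) for lab, c in self.emotion.items()}

    def p_general(self, w):
        """Add-one smoothed general (task-specific) unigram probability."""
        return (self.general[w] + 1) / (self.general_total + self.vocab_size)

    def p_emotion(self, w, label):
        """Emotion-specific estimate interpolated with the general model."""
        total = self.emotion_total[label]
        p_specific = self.emotion[label][w] / total if total else 0.0
        return self.lam * p_specific + (1 - self.lam) * self.p_general(w)

    def score(self, tokens, label):
        """Length-normalized log-likelihood ratio of the emotion vs. general model."""
        if not tokens:
            return 0.0
        llr = sum(math.log(self.p_emotion(w, label) / self.p_general(w)) for w in tokens)
        return llr / len(tokens)

    def classify(self, tokens):
        return max(self.emotion, key=lambda label: self.score(tokens, label))

corpus = [("pas_normal probleme argent".split(), "Anger"),
          ("peur perdre argent".split(), "Fear"),
          ("merci bonjour".split(), "Neutral")]
scorer = UnigramEmotionScorer(corpus)
print(scorer.classify(["peur", "perdre"]))  # Fear
```

Interpolating with the general model keeps every emotion probability strictly positive, which is what makes the log ratio defined for words unseen in a sparse emotion class.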

Experiments on Anger/Fear detection

- Prosodic and acoustic cues
  - 56% detection
  - Around 60% when disfluencies are added
- Lexical cues (ICME 2003)
  - Often the same words: problem, abnormal, etc.
  - The difference is much more syntactic than lexical

Attribute selection

- Weka toolkit
  - With a model (SVM)
  - Information Gain (A: attribute; C: class)
  - CFS (Correlation-based Feature Selection)
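Information Gain ranks an attribute A by how much it reduces the entropy of the class C: IG(C; A) = H(C) - H(C | A). The sketch below computes it for a discrete attribute. Continuous prosodic features would first have to be discretized; Weka does this internally, and the binning step is assumed rather than shown here.

```python
import math
from collections import Counter, defaultdict

def entropy(labels):
    """Shannon entropy (bits) of a list of class labels."""
    n = len(labels)
    return -sum((c / n) * math.log2(c / n) for c in Counter(labels).values())

def information_gain(attribute_values, classes):
    """IG(C; A) = H(C) - H(C | A) for a discrete attribute A."""
    n = len(classes)
    by_value = defaultdict(list)
    for a, c in zip(attribute_values, classes):
        by_value[a].append(c)
    # Conditional entropy: class entropy within each attribute value, weighted by frequency.
    h_c_given_a = sum(len(cs) / n * entropy(cs) for cs in by_value.values())
    return entropy(classes) - h_c_given_a

classes = ["Neutral", "Neutral", "Negative", "Negative"]
print(information_gain(["lo", "lo", "hi", "hi"], classes))  # 1.0
```

A binned F0 feature that perfectly separates the two classes gains 1 bit; one independent of the class gains 0.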
