- Number of slides: 47

An Introduction to Data Mining
Prof. S. Sudarshan, CSE Dept, IIT Bombay
Most slides courtesy: Prof. Sunita Sarawagi, School of IT, IIT Bombay

Why Data Mining
- Credit ratings/targeted marketing:
  - Given a database of 100,000 names, which persons are the least likely to default on their credit cards?
  - Identify likely responders to sales promotions
- Fraud detection:
  - Which types of transactions are likely to be fraudulent, given the demographics and transactional history of a particular customer?
- Customer relationship management:
  - Which of my customers are likely to be the most loyal, and which are most likely to leave for a competitor?

Data mining helps extract such information.

Data mining
- Process of semi-automatically analyzing large databases to find patterns that are:
  - valid: hold on new data with some certainty
  - novel: non-obvious to the system
  - useful: should be possible to act on the item
  - understandable: humans should be able to interpret the pattern
- Also known as Knowledge Discovery in Databases (KDD)

Applications
- Banking: loan/credit card approval
  - predict good customers based on old customers
- Customer relationship management:
  - identify those who are likely to leave for a competitor
- Targeted marketing:
  - identify likely responders to promotions
- Fraud detection: telecommunications, financial transactions
  - from an online stream of events, identify fraudulent events
- Manufacturing and production:
  - automatically adjust knobs when process parameters change

Applications (continued)
- Medicine: disease outcome, effectiveness of treatments
  - analyze patient disease history: find relationships between diseases
- Molecular/Pharmaceutical: identify new drugs
- Scientific data analysis:
  - identify new galaxies by searching for sub-clusters
- Web site/store design and promotion:
  - find affinity of visitors to pages and modify layout

The KDD process
- Problem formulation
- Data collection
  - subset data: sampling might hurt if the data is highly skewed
  - feature selection: principal component analysis, heuristic search
- Pre-processing: cleaning
  - name/address cleaning, different meanings (annual, yearly), duplicate removal, supplying missing values
- Transformation:
  - map complex objects, e.g. time series data, to features, e.g. frequency
- Choosing mining task and mining method
- Result evaluation and visualization

Knowledge discovery is an iterative process.

Relationship with other fields
- Overlaps with machine learning, statistics, artificial intelligence, databases, and visualization, but with more stress on:
  - scalability in the number of features and instances
  - algorithms and architectures, whereas the foundations of methods and formulations are provided by statistics and machine learning
  - automation for handling large, heterogeneous data

Some basic operations
- Predictive:
  - Regression
  - Classification
  - Collaborative filtering
- Descriptive:
  - Clustering / similarity matching
  - Association rules and variants
  - Deviation detection

Classification (Supervised learning)

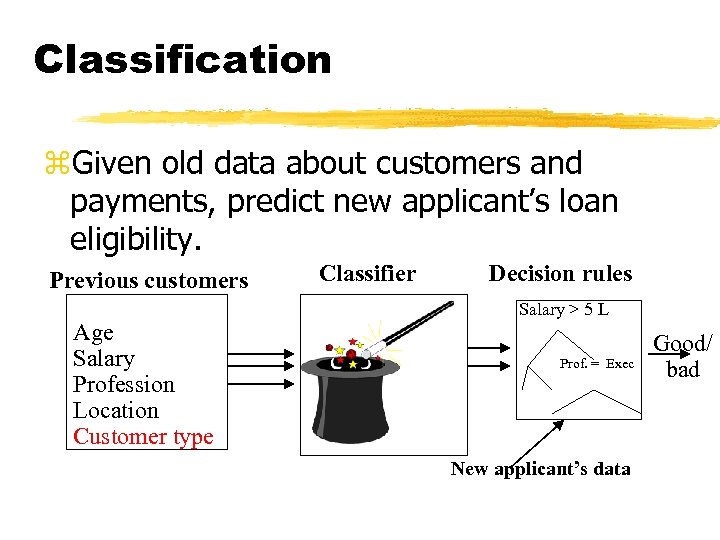

Classification
- Given old data about customers and payments, predict a new applicant’s loan eligibility.

[Diagram: data on previous customers (age, salary, profession, location, customer type) is fed to a classifier, which yields decision rules such as "Salary > 5 L" and "Prof. = Exec"; the rules are applied to a new applicant’s data to output good/bad.]

Classification methods
- Goal: predict class Ci = f(x1, x2, ..., xn)
- Regression (linear or any other polynomial):
  - a*x1 + b*x2 + c = Ci
- Nearest neighbour
- Decision tree classifier: divides the decision space into piecewise constant regions
- Probabilistic/generative models
- Neural networks: partition by non-linear boundaries

Nearest neighbor
- Define proximity between instances, find neighbors of the new instance, and assign the majority class
- Case-based reasoning: when attributes are more complicated than real-valued
- Pros
  - Fast training
- Cons
  - Slow during application
  - No feature selection
  - Notion of proximity vague
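
The nearest-neighbor idea can be sketched in a few lines. This is only an illustration under assumptions not in the slides: proximity is taken to be Euclidean distance, `k` is fixed at 3, and the (age, salary) training points are made up.

```python
import math
from collections import Counter

def knn_predict(train, query, k=3):
    """Return the majority class among the k training instances closest to query.

    train is a list of (feature_tuple, label) pairs. Euclidean distance is
    an assumed notion of proximity; the slides leave the choice open.
    """
    neighbors = sorted(train, key=lambda item: math.dist(item[0], query))[:k]
    votes = Counter(label for _, label in neighbors)
    return votes.most_common(1)[0][0]

# Hypothetical (age, salary) instances with a good/bad customer label.
train = [
    ((25, 2.0), "bad"),
    ((30, 2.5), "bad"),
    ((45, 9.0), "good"),
    ((50, 8.0), "good"),
    ((40, 7.5), "good"),
]
prediction = knn_predict(train, (42, 8.0), k=3)
```

Note that there is no training step at all, while every prediction sorts the whole training set: this is the "fast training, slow during application" trade-off listed above.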

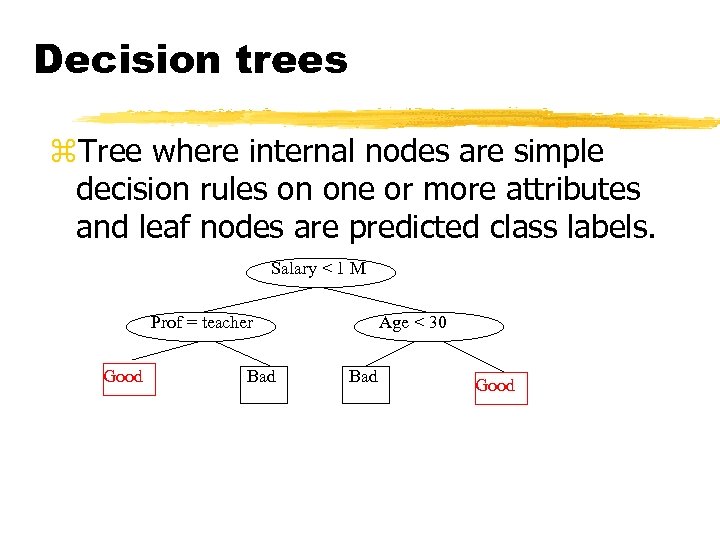

Decision trees
- Tree where internal nodes are simple decision rules on one or more attributes and leaf nodes are predicted class labels.

[Diagram: example tree with internal nodes "Salary < 1 M", "Prof = teacher", and "Age < 30", and leaves labelled Good or Bad.]

Decision tree classifiers
- Widely used learning method
- Easy to interpret: can be re-represented as if-then-else rules
- Approximates function by piecewise constant regions
- Does not require any prior knowledge of data distribution; works well on noisy data
- Has been applied to:
  - classify medical patients based on the disease
  - classify equipment malfunction by cause
  - classify loan applicants by likelihood of payment
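
To illustrate the if-then-else reading of a tree, here is a small sketch. The tests (salary, profession, age) come from the example tree in the slides, but the way they are nested and the label on each leaf are assumptions, since the layout of the original figure is not recoverable. The function name and dictionary keys are hypothetical.

```python
def classify_applicant(applicant):
    """Classify a loan applicant with a hand-written decision tree.

    The branch arrangement below is hypothetical; only the individual
    tests are taken from the slide.
    """
    if applicant["salary"] < 1_000_000:
        if applicant["profession"] == "teacher":
            return "good"
        return "bad"
    if applicant["age"] < 30:
        return "bad"
    return "good"

result = classify_applicant({"salary": 500_000, "profession": "teacher", "age": 40})
```

Each root-to-leaf path corresponds to one rule, which is why a learned tree is easy to inspect.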

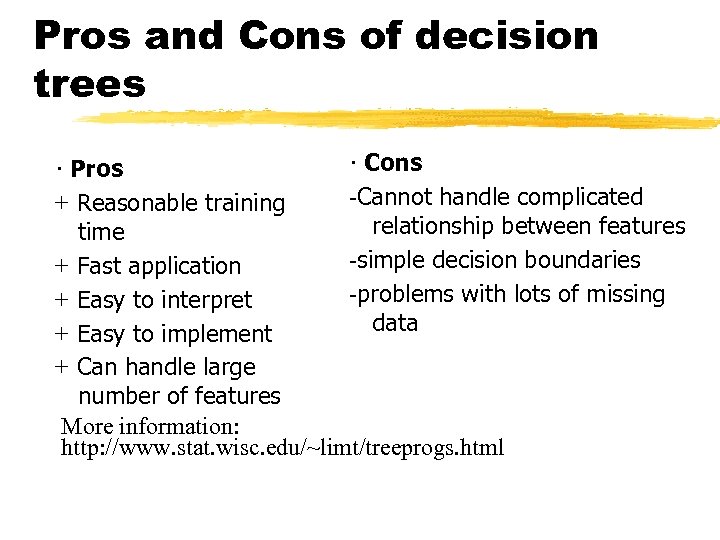

Pros and Cons of decision trees
- Pros
  - Reasonable training time
  - Fast application
  - Easy to interpret
  - Easy to implement
  - Can handle large number of features
- Cons
  - Cannot handle complicated relationships between features
  - Simple decision boundaries
  - Problems with lots of missing data

More information: http://www.stat.wisc.edu/~limt/treeprogs.html

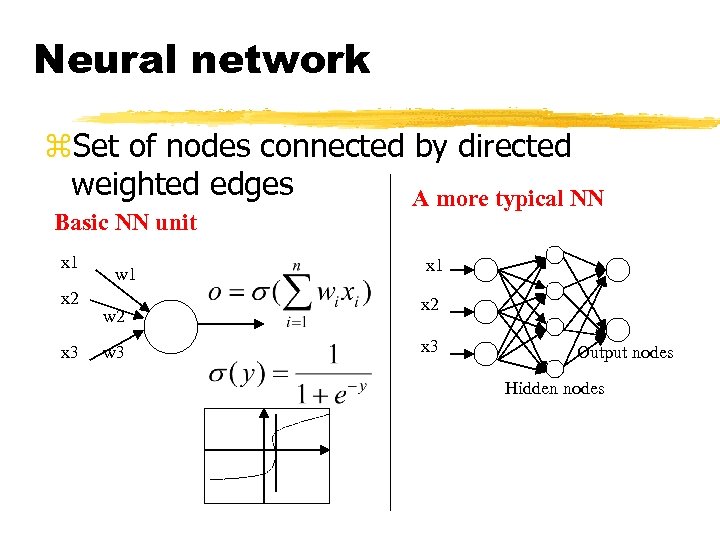

Neural network
- Set of nodes connected by directed weighted edges

[Diagram: a basic NN unit with inputs x1, x2, x3 and weights w1, w2, w3, and a more typical NN with inputs x1, x2, x3, hidden nodes, and output nodes.]
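
A basic unit of this kind can be sketched as a weighted sum of its inputs passed through an activation function. The sigmoid activation and the `bias` term are assumptions for illustration; the slide text names only the inputs and weights.

```python
import math

def unit_output(inputs, weights, bias=0.0):
    """Output of one unit: sigmoid of the weighted sum of the inputs.

    The sigmoid and the bias are assumed here, not taken from the slides.
    """
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1.0 / (1.0 + math.exp(-total))

# Three inputs x1..x3 and weights w1..w3, with made-up values.
y = unit_output([1.0, 0.0, 1.0], [0.5, -0.2, 0.1])
```

A network with hidden nodes chains such units, feeding the outputs of one layer in as the inputs of the next.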

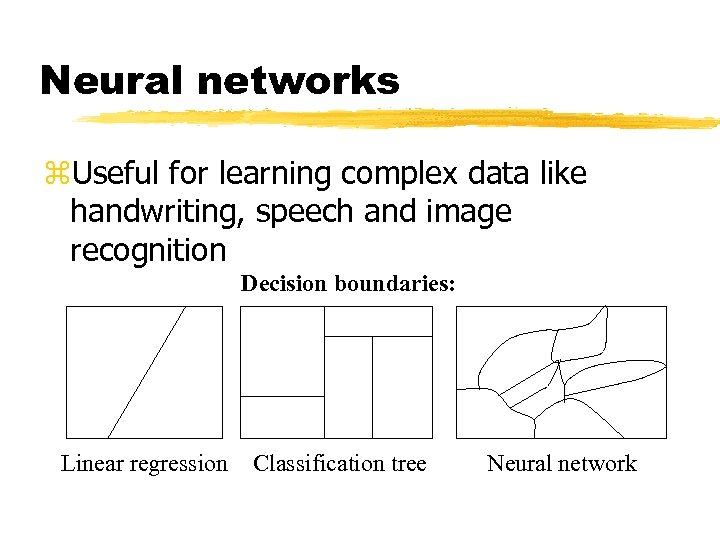

Neural networks
- Useful for learning complex data like handwriting, speech and image recognition

[Figure: decision boundaries compared for linear regression, classification tree, and neural network.]

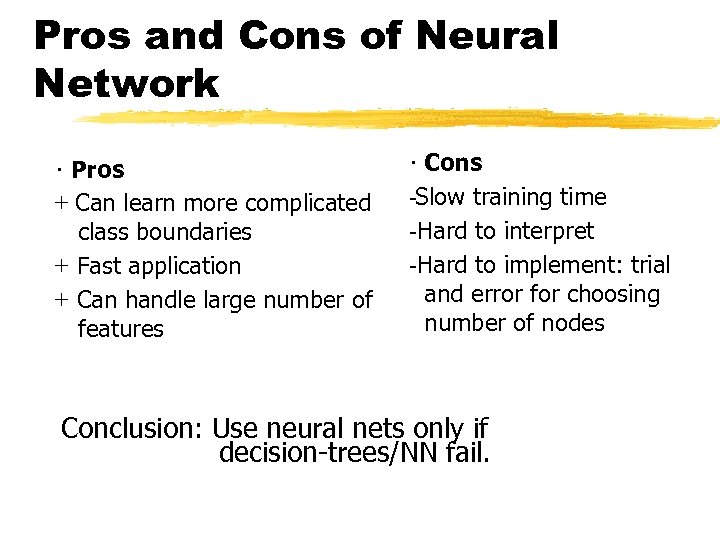

Pros and Cons of Neural Network
- Pros
  - Can learn more complicated class boundaries
  - Fast application
  - Can handle large number of features
- Cons
  - Slow training time
  - Hard to interpret
  - Hard to implement: trial and error for choosing number of nodes

Conclusion: Use neural nets only if decision trees/nearest neighbor (NN) fail.

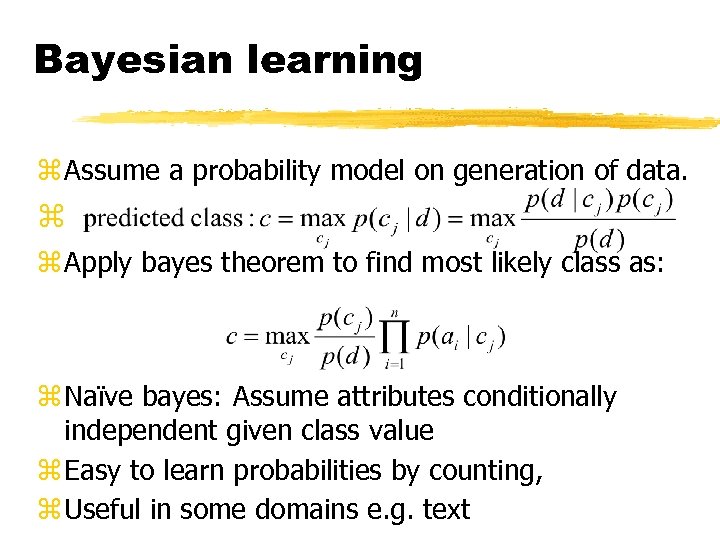

Bayesian learning
- Assume a probability model on generation of data.
- Apply Bayes' theorem to find the most likely class.
- Naïve Bayes: assume attributes are conditionally independent given the class value
- Easy to learn probabilities by counting
- Useful in some domains, e.g. text
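
A minimal sketch of naïve Bayes learned by counting is given below. It picks the class c that maximizes P(c) times the product of P(attribute value | c), which is the usual naïve Bayes decision rule. The function names and the tiny (profession, location) data set are hypothetical, and no smoothing is applied, so an attribute value never seen with a class gives that class a score of zero.

```python
from collections import Counter, defaultdict

def train_naive_bayes(rows):
    """Count class frequencies and per-class attribute value frequencies.

    rows is a list of (attribute_tuple, label) pairs.
    """
    class_counts = Counter(label for _, label in rows)
    value_counts = defaultdict(Counter)  # (attribute index, label) -> value counts
    for attrs, label in rows:
        for i, value in enumerate(attrs):
            value_counts[(i, label)][value] += 1
    return class_counts, value_counts

def predict(model, attrs):
    """Return the class maximizing P(class) * product of P(attr_i | class)."""
    class_counts, value_counts = model
    total = sum(class_counts.values())
    best_label, best_score = None, -1.0
    for label, n in class_counts.items():
        score = n / total  # estimate of P(class)
        for i, value in enumerate(attrs):
            score *= value_counts[(i, label)][value] / n  # estimate of P(attr_i | class)
        if score > best_score:
            best_label, best_score = label, score
    return best_label

# Hypothetical (profession, location) records with a good/bad label.
rows = [
    (("exec", "urban"), "good"),
    (("exec", "rural"), "good"),
    (("clerk", "urban"), "good"),
    (("clerk", "rural"), "bad"),
    (("clerk", "urban"), "bad"),
]
model = train_naive_bayes(rows)
label = predict(model, ("exec", "urban"))
```

In practice some form of smoothing is usually added to the counts so that unseen values do not zero out a class.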

Clustering or Unsupervised Learning

Clustering
- Unsupervised learning: used when old data with class labels is not available, e.g. when introducing a new product.
- Group/cluster existing customers based on time series of payment history such that similar customers are in the same cluster.
- Key requirement: need a good measure of similarity between instances.
- Identify micro-markets and develop policies for each.

Applications
- Customer segmentation, e.g. for targeted marketing
  - Group/cluster existing customers based on time series of payment history such that similar customers are in the same cluster.
  - Identify micro-markets and develop policies for each
- Collaborative filtering:
  - group based on common items purchased
- Text clustering
- Compression

Distance functions
- Numeric data: Euclidean, Manhattan distances
- Categorical data: 0/1 to indicate presence/absence, followed by
  - Hamming distance (# of dissimilarities)
  - Jaccard coefficient: # of similarities in 1s / (# of 1s)
  - data-dependent measures: similarity of A and B depends on co-occurrence with C
- Combined numeric and categorical data:
  - weighted normalized distance
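
The four named measures can be sketched as follows. The function names are chosen for illustration. The Jaccard coefficient is read here as shared 1s divided by positions where either vector has a 1, and its value for two all-zero vectors is an assumed convention.

```python
import math

def euclidean(a, b):
    """Straight-line distance between two numeric vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def manhattan(a, b):
    """Sum of absolute coordinate differences."""
    return sum(abs(x - y) for x, y in zip(a, b))

def hamming(a, b):
    """Number of positions where two 0/1 vectors disagree."""
    return sum(x != y for x, y in zip(a, b))

def jaccard(a, b):
    """Shared 1s divided by positions where at least one vector has a 1."""
    both = sum(1 for x, y in zip(a, b) if x == 1 and y == 1)
    either = sum(1 for x, y in zip(a, b) if x == 1 or y == 1)
    return both / either if either else 1.0  # assumed convention when there are no 1s

d_euclid = euclidean((0, 0), (3, 4))
d_manhattan = manhattan((0, 0), (3, 4))
d_hamming = hamming([1, 0, 1, 1], [1, 1, 0, 1])
j = jaccard([1, 0, 1, 1], [1, 1, 0, 1])
```

Note that Hamming is a distance (larger means less alike) while Jaccard is a similarity (larger means more alike).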

Clustering methods
- Hierarchical clustering
  - agglomerative vs. divisive
  - single link vs. complete link
- Partitional clustering
  - distance-based: K-means
  - model-based: EM
  - density-based

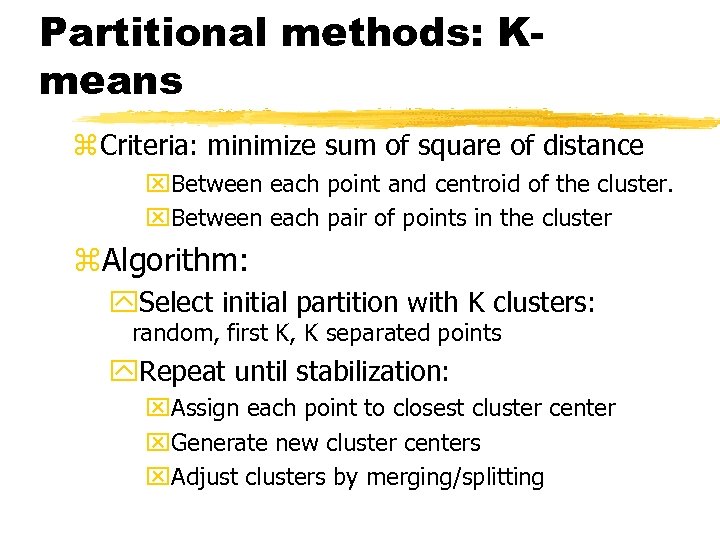

Partitional methods: K-means
- Criteria: minimize the sum of squared distances
  - between each point and the centroid of its cluster
  - between each pair of points in the cluster
- Algorithm:
  - Select an initial partition with K clusters: random, first K, K separated points
  - Repeat until stabilization:
    - Assign each point to the closest cluster center
    - Generate new cluster centers
    - Adjust clusters by merging/splitting
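
The assign/recompute loop above can be sketched as below. This is a simplified illustration: it uses the "first K" initialization, Euclidean distance, and an assumed `max_iters` cap, and it leaves out the optional merge/split adjustment step. The sample points are made up.

```python
import math

def kmeans(points, k, max_iters=100):
    """Basic K-means: returns (centers, assignment) for a list of point tuples."""
    centers = [tuple(p) for p in points[:k]]  # "first K" initialization
    assignment = None
    for _ in range(max_iters):
        # Assign each point to the closest cluster center.
        new_assignment = [
            min(range(k), key=lambda c: math.dist(p, centers[c])) for p in points
        ]
        if new_assignment == assignment:  # stabilized
            break
        assignment = new_assignment
        # Generate new cluster centers as the mean of each cluster's members.
        for c in range(k):
            members = [p for p, a in zip(points, assignment) if a == c]
            if members:
                centers[c] = tuple(sum(col) / len(members) for col in zip(*members))
    return centers, assignment

points = [(1.0, 1.0), (1.5, 2.0), (8.0, 8.0), (9.0, 9.5), (1.0, 0.5), (8.5, 9.0)]
centers, assignment = kmeans(points, k=2)
```

The result depends on the initial partition, which is why the slide lists several ways of choosing it.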

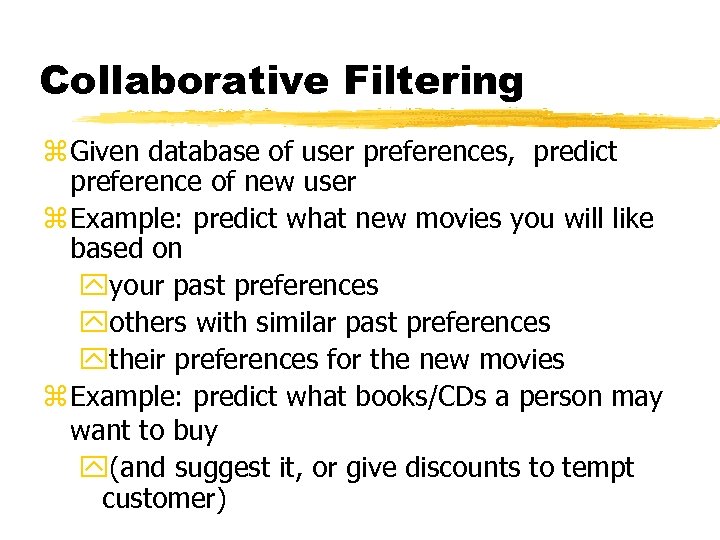

Collaborative Filtering
- Given a database of user preferences, predict the preference of a new user
- Example: predict what new movies you will like based on
  - your past preferences
  - others with similar past preferences
  - their preferences for the new movies
- Example: predict what books/CDs a person may want to buy
  - (and suggest it, or give discounts to tempt the customer)

Collaborative Filtering z Given database of user preferences, predict preference of new user z Example: predict what new movies you will like based on yyour past preferences yothers with similar past preferences ytheir preferences for the new movies z Example: predict what books/CDs a person may want to buy y(and suggest it, or give discounts to tempt customer)

Collaborative recommendation
- Possible approaches:
  - Average vote along columns [same prediction for all]
  - Weight vote based on similarity of likings [GroupLens]
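
The two approaches can be contrasted in a short sketch. The similarity function here (based on mean absolute rating difference over co-rated items) is a stand-in chosen for brevity and is not claimed to be what GroupLens uses. The names and ratings are hypothetical.

```python
def average_vote(ratings, item):
    """Same prediction for everyone: the mean rating the item has received."""
    votes = [prefs[item] for prefs in ratings.values() if item in prefs]
    return sum(votes) / len(votes) if votes else None

def similarity(a, b):
    """Assumed similarity: 1 / (1 + mean absolute difference on co-rated items)."""
    common = [i for i in a if i in b]
    if not common:
        return 0.0
    mad = sum(abs(a[i] - b[i]) for i in common) / len(common)
    return 1.0 / (1.0 + mad)

def weighted_vote(ratings, user, item):
    """Average of other users' ratings, weighted by similarity to user."""
    num = den = 0.0
    for other, prefs in ratings.items():
        if other == user or item not in prefs:
            continue
        w = similarity(ratings[user], prefs)
        num += w * prefs[item]
        den += w
    return num / den if den else average_vote(ratings, item)

# Hypothetical ratings on a 1-5 scale; ann has not rated movie C.
ratings = {
    "ann": {"A": 5, "B": 1},
    "bob": {"A": 5, "B": 1, "C": 5},
    "cat": {"A": 1, "B": 5, "C": 1},
}
plain = average_vote(ratings, "C")
personal = weighted_vote(ratings, "ann", "C")
```

Because ann's ratings match bob's and oppose cat's, the weighted prediction is pulled toward bob's rating, while the plain average ignores who is asking.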

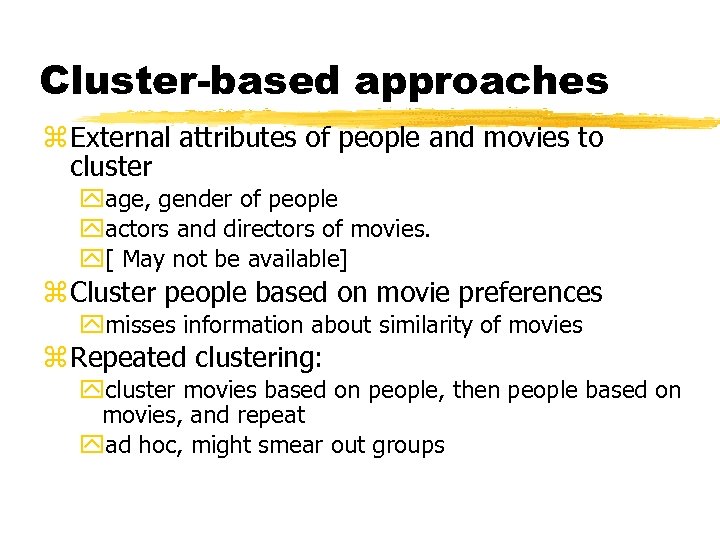

Cluster-based approaches
- External attributes of people and movies to cluster
  - age, gender of people
  - actors and directors of movies
  - [may not be available]
- Cluster people based on movie preferences
  - misses information about similarity of movies
- Repeated clustering:
  - cluster movies based on people, then people based on movies, and repeat
  - ad hoc, might smear out groups

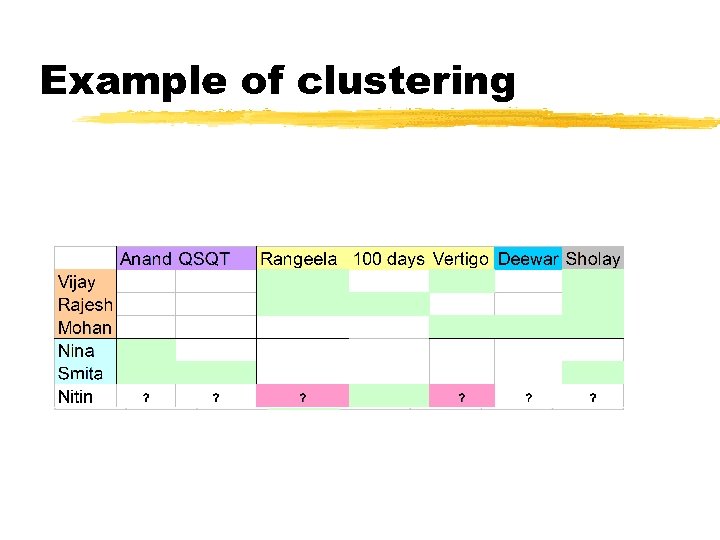

Example of clustering

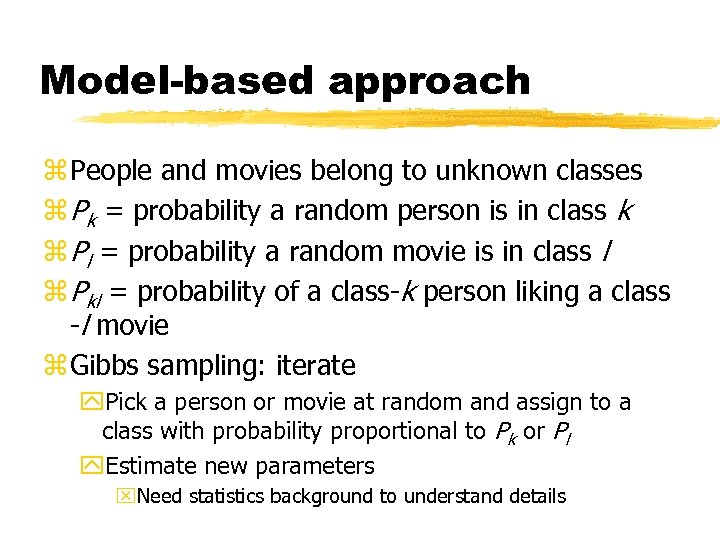

Model-based approach
- People and movies belong to unknown classes
- Pk = probability a random person is in class k
- Pl = probability a random movie is in class l
- Pkl = probability of a class-k person liking a class-l movie
- Gibbs sampling: iterate
  - Pick a person or movie at random and assign it to a class with probability proportional to Pk or Pl
  - Estimate new parameters
    - Need statistics background to understand details

Association Rules

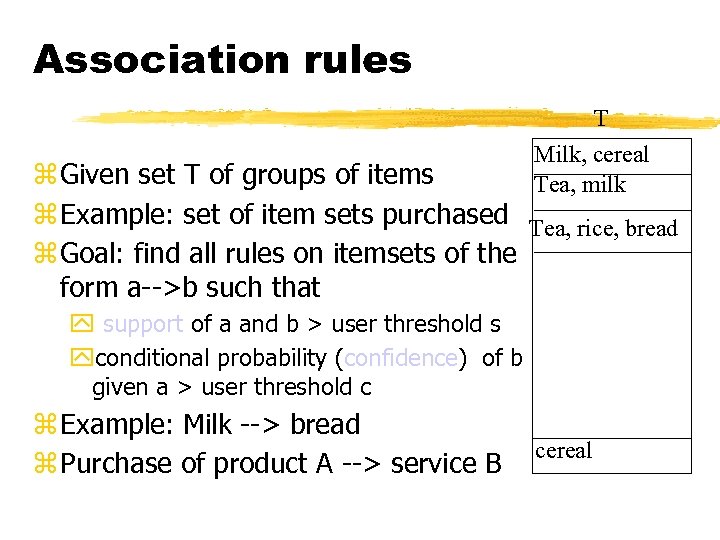

Association rules
- Given set T of groups of items
- Example: set of item sets purchased
- Goal: find all rules on itemsets of the form a --> b such that
  - support of a and b > user threshold s
  - conditional probability (confidence) of b given a > user threshold c
- Example: Milk --> bread
- Purchase of product A --> service B

[Figure: example set T with the itemsets "Milk, cereal", "Tea, milk", "Tea, rice, bread", and "cereal".]
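
Support and confidence can be computed directly from a list of transactions, as in the sketch below. The function names and the four transactions are made up for illustration and are not the exact set T from the slide.

```python
def support(transactions, items):
    """Fraction of transactions that contain every item in items."""
    items = set(items)
    return sum(items <= t for t in transactions) / len(transactions)

def confidence(transactions, a, b):
    """Estimated conditional probability of b given a: support(a and b) / support(a)."""
    base = support(transactions, a)
    return support(transactions, set(a) | set(b)) / base if base else 0.0

# Hypothetical market-basket data.
transactions = [
    {"milk", "cereal"},
    {"tea", "milk"},
    {"tea", "rice", "bread"},
    {"milk", "bread", "cereal"},
]
s = support(transactions, {"milk", "cereal"})
c = confidence(transactions, {"milk"}, {"cereal"})
```

A rule a --> b is reported only when both values exceed the user thresholds s and c; efficient miners avoid scanning every candidate itemset this naively.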

Variants
- High confidence may not imply high correlation
- Use correlations: find the expected support and treat large departures from it as interesting
  - see statistical literature on contingency tables
- Still too many rules, need to prune...

Prevalent ≠ Interesting
- Analysts already know about prevalent rules
- Interesting rules are those that deviate from prior expectation
- Mining’s payoff is in finding surprising phenomena

[Cartoon: the rule "Milk and cereal sell together!" is announced in 1995 and again in 1998, with a "Zzzz..." reaction.]

What makes a rule surprising?
- Does not match prior expectation
  - Correlation between milk and cereal remains roughly constant over time
- Cannot be trivially derived from simpler rules
  - Milk 10%, cereal 10%
  - Milk and cereal 10% … surprising
  - Eggs 10%
  - Milk, cereal and eggs 0.1% … surprising!
  - Expected 1%
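
The "expected 1%" figures follow from assuming independence: if the parts occur independently, the expected support of the combination is the product of their supports. A small sketch of that arithmetic, using the slide's percentages, is below. The helper names are introduced here; the observed-to-expected ratio is commonly called lift.

```python
def expected_support(*supports):
    """Support expected for a combination if its parts were independent."""
    result = 1.0
    for s in supports:
        result *= s
    return result

def lift(observed, *supports):
    """Ratio of observed support to the support expected under independence."""
    return observed / expected_support(*supports)

# Milk 10% and cereal 10% give an expected 1%, but 10% is observed.
milk_cereal = lift(0.10, 0.10, 0.10)
# (Milk and cereal) 10% and eggs 10% give an expected 1%, but 0.1% is observed.
milk_cereal_eggs = lift(0.001, 0.10, 0.10)
```

A ratio far above 1 (about 10 here) or far below 1 (about 0.1) marks a combination that cannot be derived from the simpler rules alone.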

Applications of fast itemset counting
Find correlated events:
- Applications in medicine: find redundant tests
- Cross-selling in retail, banking
- Improve predictive capability of classifiers that assume attribute independence
- New similarity measures of categorical attributes [Mannila et al., KDD 98]

Data Mining in Practice

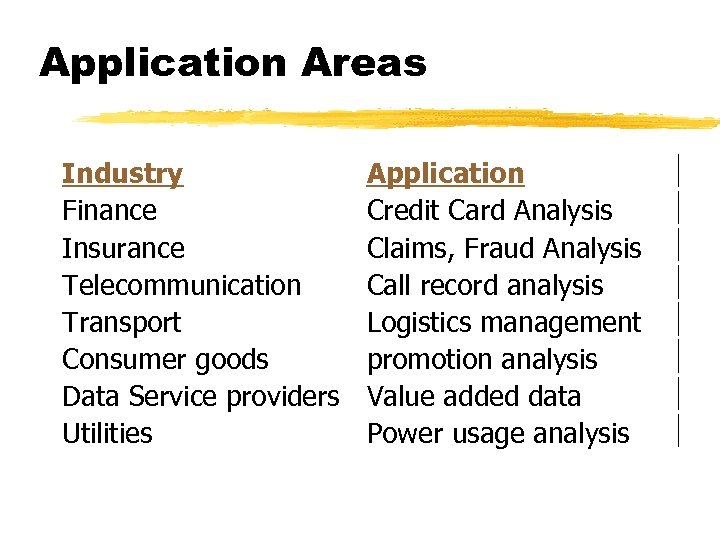

Application Areas

| Industry | Application |
| --- | --- |
| Finance | Credit card analysis |
| Insurance | Claims, fraud analysis |
| Telecommunication | Call record analysis |
| Transport | Logistics management |
| Consumer goods | Promotion analysis |
| Data service providers | Value added data |
| Utilities | Power usage analysis |

Why Now?
- Data is being produced
- Data is being warehoused
- The computing power is available
- The computing power is affordable
- The competitive pressures are strong
- Commercial products are available

Data Mining works with Warehouse Data
- Data warehousing provides the enterprise with a memory
- Data mining provides the enterprise with intelligence

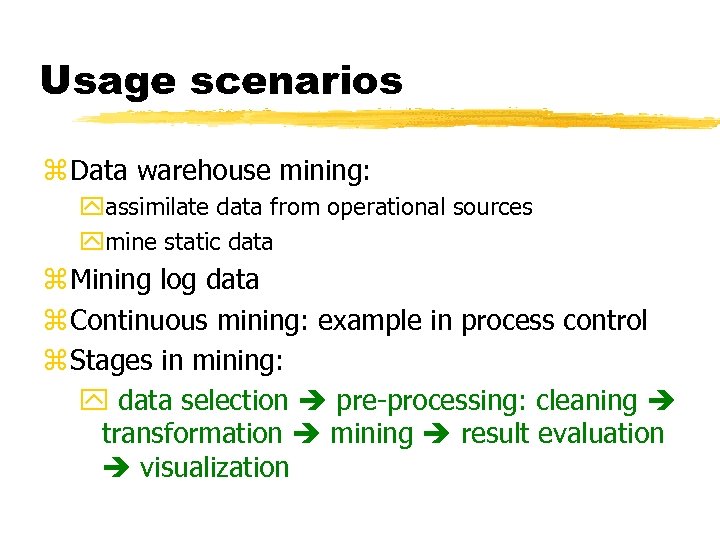

Usage scenarios
- Data warehouse mining:
  - assimilate data from operational sources
  - mine static data
- Mining log data
- Continuous mining: example in process control
- Stages in mining:
  - data selection, pre-processing (cleaning), transformation, mining, result evaluation, visualization

Mining market
- Around 20 to 30 mining tool vendors
- Major tool players:
  - Clementine
  - IBM’s Intelligent Miner
  - SGI’s MineSet
  - SAS’s Enterprise Miner
- All pretty much the same set of tools
- Many embedded products:
  - fraud detection
  - electronic commerce applications
  - health care
  - customer relationship management: Epiphany

Vertical integration: Mining on the web
- Web log analysis for site design:
  - what are popular pages
  - what links are hard to find
- Electronic stores sales enhancements:
  - recommendations, advertisement
  - Collaborative filtering: Net Perceptions, Wisewire
  - Inventory control: what was a shopper looking for and could not find

OLAP Mining integration
- OLAP (On-Line Analytical Processing)
  - Fast interactive exploration of multidimensional aggregates
  - Heavy reliance on manual operations for analysis
  - Tedious and error-prone on large multidimensional data
- Ideal platform for vertical integration of mining, but needs to be interactive instead of batch

State of art in mining OLAP integration
- Decision trees [Information Discovery, Cognos]
  - find factors influencing high profits
- Clustering [Pilot Software]
  - segment customers to define hierarchy on that dimension
- Time series analysis [Seagate’s Holos]
  - Query for various shapes along time, e.g. spikes, outliers
- Multi-level associations [Han et al.]
  - find association between members of dimensions
- Sarawagi [VLDB 2000]

Data Mining in Use
- The US Government uses data mining to track fraud
- A supermarket becomes an information broker
- Basketball teams use it to track game strategy
- Cross-selling
- Target marketing
- Holding on to good customers
- Weeding out bad customers

Some success stories
- Network intrusion detection using a combination of sequential rule discovery and classification tree on 4 GB DARPA data
  - Won over (manual) knowledge engineering approach
  - http://www.cs.columbia.edu/~sal/JAM/PROJECT/ provides a good detailed description of the entire process
- Major US bank: customer attrition prediction
  - First segment customers based on financial behavior: found 3 segments
  - Build attrition models for each of the 3 segments
  - 40-50% of attritions were predicted == factor of 18 increase
- Targeted credit marketing: major US banks
  - find customer segments based on 13 months of credit balances
  - build another response model based on surveys
  - increased response 4 times -- 2%
