- Number of slides: 92

Advanced Question Answering: Plenty of Challenges to Go Around
Dr. John D. Prange, AQUAINT Program Director
JPrange@nsa.gov, 301-688-7092
http://www.ic-arda.org
25 March 2002

Outline
• Introducing ARDA
• Advanced Question Answering
  – There is Room for Multiple Approaches
  – The AQUAINT Program
  – Challenges from an AQUAINT Perspective
• Some Final Thoughts...
• Questions and Comments
AAAI Symposium – 25 March 2002

Introducing ARDA
A joint Department of Defense / Intelligence Community organization launched in Dec 1998
• MISSION:
  – Incubate revolutionary R&D for the shared benefit of the Intelligence Community
• MEANS:
  – A nimble, cross-community organization
  – A modest, yet significant budget
  – Small, outward-looking staff working as “honest brokers” and “agents provocateurs”

What ARDA Does
We originate and manage R&D programs
• With fundamental impact on future operational needs and strategies
• That demand substantial, long-term venture investment to spur risk-taking
• That progress measurably toward mid-term and final goals
• That take many forms and employ many delivery vehicles

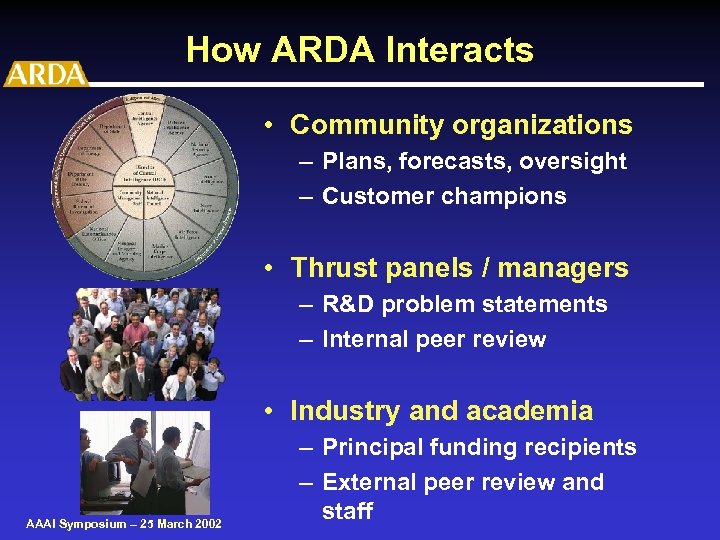

How ARDA Interacts
• Community organizations
  – Plans, forecasts, oversight
  – Customer champions
• Thrust panels / managers
  – R&D problem statements
  – Internal peer review
• Industry and academia
  – Principal funding recipients
  – External peer review and staff

Where Is ARDA?
National Security Agency, Fort George G. Meade, MD
Room 12A69, NBP#1 Building
301-688-7092, 800-276-3747, 301-688-7410 (FAX)
http://www.ic-arda.org
ARDA@nsa.gov

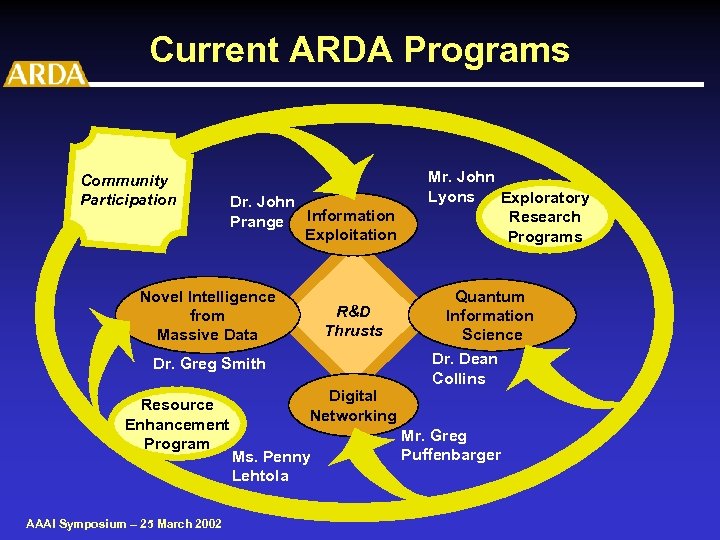

Current ARDA Programs
Community Participation; Dr. John Prange; Information Exploitation; Novel Intelligence from Massive Data; R&D Thrusts; Dr. Greg Smith; Resource Enhancement Program; Digital Networking; Ms. Penny Lehtola; Mr. John Lyons; Exploratory Research Programs; Quantum Information Science; Dr. Dean Collins; Mr. Greg Puffenbarger

Outline
• Introducing ARDA
• Advanced Question Answering
  – There is Room for Multiple Approaches
  – The AQUAINT Program
  – Challenges from an AQUAINT Perspective
• Some Final Thoughts...
• Questions and Comments

Question Answering à la Gary Larson

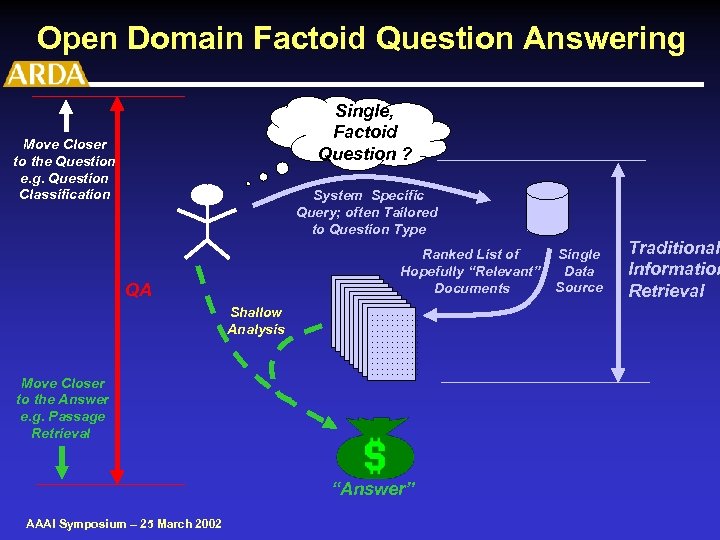

Open Domain Factoid Question Answering
• Input: Single, Factoid Question
• Move Closer to the Question (e.g. Question Classification)
• System Specific Query; often Tailored to Question Type
• Traditional Information Retrieval over a Single Data Source
• Ranked List of Hopefully “Relevant” Documents
• QA Shallow Analysis: Move Closer to the Answer (e.g. Passage Retrieval)
• Output: “Answer”
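
The stages named on this slide can be sketched as a toy pipeline. This is only an illustration under stated assumptions, not how any TREC system worked: the question-type rules, the stopword list, the term-count ranking, and every function name below are hypothetical.

```python
import re
from collections import Counter

# Hypothetical question-type rules; real classifiers are far richer.
QUESTION_TYPES = [
    (re.compile(r"^\s*who\b", re.I), "PERSON"),
    (re.compile(r"^\s*when\b", re.I), "DATE"),
    (re.compile(r"^\s*where\b", re.I), "LOCATION"),
    (re.compile(r"^\s*how (many|much)\b", re.I), "NUMBER"),
]
# Hypothetical stopword list used when turning a question into a query.
STOPWORDS = {"the", "a", "an", "of", "in", "is", "was", "are", "there", "did",
             "does", "who", "when", "where", "what", "how", "many", "much"}


def tokenize(text):
    """Lowercase and split text into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def classify_question(question):
    """Move closer to the question: guess the expected answer type."""
    for pattern, label in QUESTION_TYPES:
        if pattern.search(question):
            return label
    return "OTHER"


def build_query(question):
    """System-specific query: the question's content words."""
    return [t for t in tokenize(question) if t not in STOPWORDS]


def rank_documents(query, documents):
    """Traditional IR stand-in: rank documents by query-term occurrences."""
    scored = []
    for doc_id, text in documents.items():
        counts = Counter(tokenize(text))
        score = sum(counts[t] for t in query)
        if score > 0:
            scored.append((score, doc_id))
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [doc_id for _, doc_id in scored]


def best_passage(query, text):
    """Move closer to the answer: pick the sentence sharing the most query terms."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    terms = set(query)
    return max(sentences, key=lambda s: len(set(tokenize(s)) & terms), default="")


def answer(question, documents):
    """Run all stages; returns (question type, ranked doc ids, best passage)."""
    qtype = classify_question(question)
    query = build_query(question)
    ranked = rank_documents(query, documents)
    passage = best_passage(query, documents[ranked[0]]) if ranked else ""
    return qtype, ranked, passage
```

Calling `answer(question, {"doc-id": "text", ...})` returns the guessed question type, the ranked document ids, and the best sentence from the top document. The type label is computed but not yet used to filter candidate answers, which is where a real system would do most of its work.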

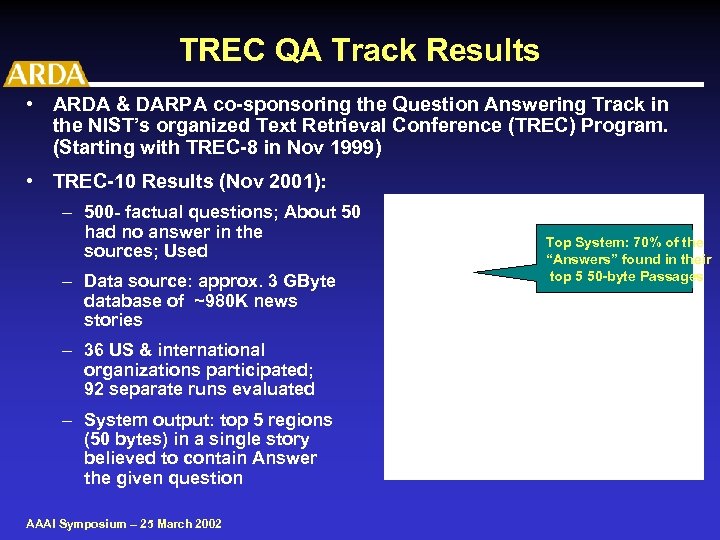

TREC QA Track Results
• ARDA & DARPA are co-sponsoring the Question Answering Track in NIST’s Text Retrieval Conference (TREC) Program (starting with TREC-8 in Nov 1999)
• TREC-10 Results (Nov 2001):
  – 500 factual questions; about 50 had no answer in the sources; used “real” questions
  – Data source: approx. 3 GByte database of ~980K news stories
  – 36 US & international organizations participated; 92 separate runs evaluated
  – System output: top 5 regions (50 bytes) in a single story believed to contain the answer to the given question
• TREC-10 Data, Top System: “Answers” to 70% of the questions found in its top 5 50-byte passages
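
The “found in the top 5 50-byte passages” figure can be read as a hit rate over answerable questions. The sketch below is a hedged illustration only. It assumes that questions with no answer in the sources are excluded and that a hit is a simple substring match. TREC judgments were made by human assessors, so the string matching and all names here are hypothetical stand-ins.

```python
def truncate_bytes(passage, limit=50):
    """Keep at most `limit` bytes of the UTF-8 encoding of a passage."""
    return passage.encode("utf-8")[:limit].decode("utf-8", errors="ignore")


def hit_rate_at_k(system_output, answer_key, k=5, limit=50):
    """Fraction of answerable questions with a key string in the top-k passages.

    system_output: question id -> ranked list of passage strings
    answer_key:    question id -> list of acceptable answer strings
                   (an empty list marks a question with no answer in the sources)
    """
    answerable = [q for q, answers in answer_key.items() if answers]
    if not answerable:
        return 0.0
    hits = 0
    for q in answerable:
        passages = [truncate_bytes(p, limit).lower()
                    for p in system_output.get(q, [])[:k]]
        # Assumption: case-insensitive substring match counts as a correct answer.
        if any(a.lower() in p for a in answer_key[q] for p in passages):
            hits += 1
    return hits / len(answerable)
```

Truncating to 50 bytes before matching keeps the sketch faithful to the slide’s output format: an answer that only appears beyond the 50-byte limit does not count.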

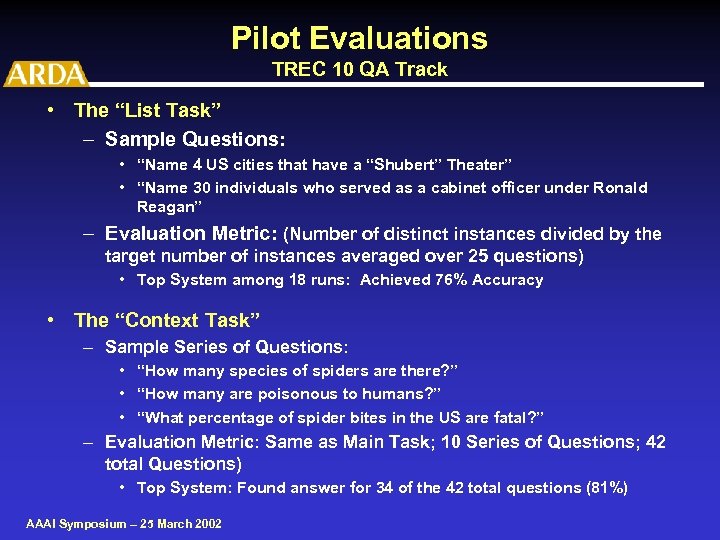

Pilot Evaluations: TREC-10 QA Track
• The “List Task”
  – Sample Questions:
    • “Name 4 US cities that have a “Shubert” Theater”
    • “Name 30 individuals who served as a cabinet officer under Ronald Reagan”
  – Evaluation Metric: Number of distinct instances divided by the target number of instances, averaged over 25 questions
  – Top System among 18 runs: Achieved 76% Accuracy
• The “Context Task”
  – Sample Series of Questions:
    • “How many species of spiders are there?”
    • “How many are poisonous to humans?”
    • “What percentage of spider bites in the US are fatal?”
  – Evaluation Metric: Same as Main Task (10 Series of Questions; 42 total Questions)
  – Top System: Found answer for 34 of the 42 total questions (81%)
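
The List Task metric described above (distinct instances divided by the target number, averaged over the questions) can be sketched as follows. This is a minimal illustration under assumptions: correctness is decided against a hypothetical answer key, duplicates are detected by case- and whitespace-insensitive comparison, and the per-question score is capped at 1.0. None of these details come from the slide.

```python
def normalize(text):
    """Case- and whitespace-insensitive form used to detect duplicates."""
    return " ".join(text.lower().split())


def list_question_score(returned, correct, target):
    """Distinct correct instances returned, divided by the target count."""
    correct_norm = {normalize(c) for c in correct}
    distinct_hits = {normalize(r) for r in returned} & correct_norm
    # Assumption: instances beyond the target number earn no extra credit.
    return min(len(distinct_hits), target) / target


def list_task_accuracy(questions):
    """Average the per-question scores over all list questions."""
    scores = [list_question_score(q["returned"], q["correct"], q["target"])
              for q in questions]
    return sum(scores) / len(scores) if scores else 0.0
```

For a question such as “Name 4 US cities ...”, a response with three distinct correct cities and one duplicate scores 3/4. The Context Task figure on the slide is a plain ratio: 34 of 42 questions is about 81%.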

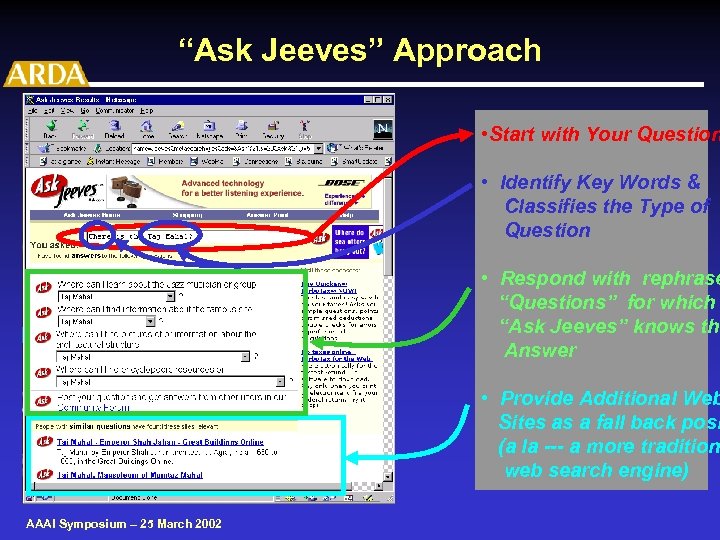

“Ask Jeeves” Approach
• Start with Your Question
• Identify Key Words & Classify the Type of Question
• Respond with rephrased “Questions” for which “Ask Jeeves” knows the Answer
• Provide Additional Web Sites as a fallback position (à la a more traditional web search engine)

Tailored Question Answering Approaches
Multiple Commercial/Research Groups are currently pursuing the Application of Question Answering Methods to:
• FAQ (Frequently Asked Questions)
• Help Desks / Customer Service Phone Centers
• Accessing Complex Sets of Technical Maintenance Manuals
• Integrating QA in Knowledge Management and Portals
• Wide variety of Other E-Business Applications

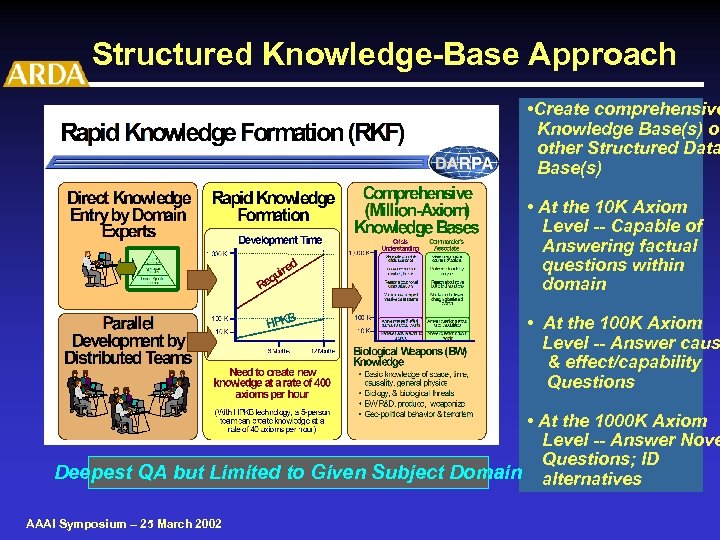

Structured Knowledge-Base Approach
• Create comprehensive Knowledge Base(s) or other Structured Data Base(s)
• At the 10K Axiom Level: Capable of Answering factual questions within domain
• At the 100K Axiom Level: Answer cause & effect / capability Questions
• At the 1000K Axiom Level: Answer Novel Questions; ID alternatives
Deepest QA but Limited to Given Subject Domain

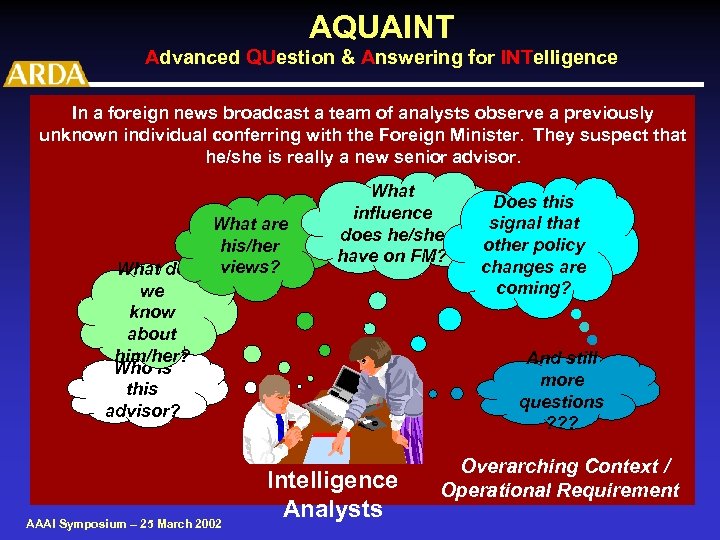

AQUAINT: Advanced QUestion & Answering for INTelligence
In a foreign news broadcast a team of analysts observe a previously unknown individual conferring with the Foreign Minister. They suspect that he/she is really a new senior advisor.
Intelligence Analysts ask (Overarching Context / Operational Requirement):
• What do we know about him/her?
• Who is this advisor?
• What are his/her views?
• What influence does he/she have on FM?
• Does this signal that other policy changes are coming?
• And still more questions???

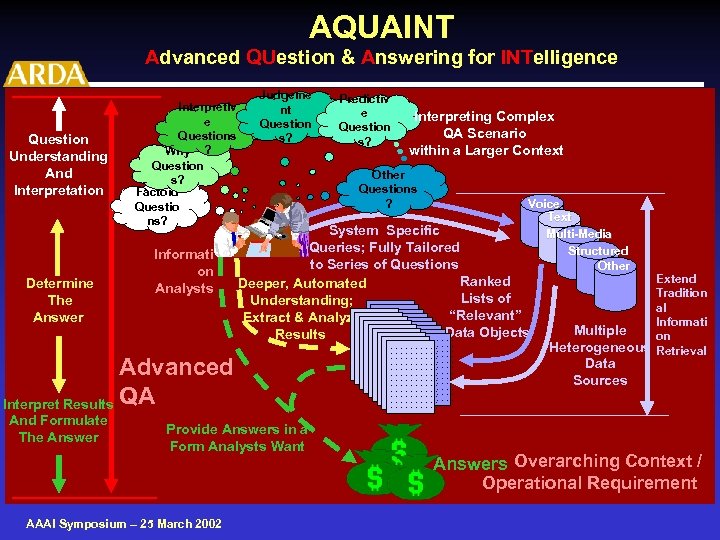

AQUAINT: Advanced QUestion & Answering for INTelligence
Complex QA Scenario within a Larger Context (Overarching Context / Operational Requirement)
• Information Analysts pose: Factoid Questions? Why Questions? Interpretive Questions? Judgement Questions? Predictive Questions? Other Questions?
• Advanced QA:
  – Question Understanding and Interpretation: System Specific Queries; Fully Tailored to Series of Questions
  – Determine the Answer: Extend Traditional Information Retrieval to Multiple Heterogeneous Data Sources (Voice, Text, Multi-Media, Structured, Other); Ranked Lists of “Relevant” Data Objects; Deeper, Automated Understanding; Extract & Analyze Results
  – Interpret Results and Formulate the Answer: Provide Answers in a Form Analysts Want
• Output: Answers

Outline
• Introducing ARDA
• Advanced Question Answering
  – There is Room for Multiple Approaches
  – The AQUAINT Program
  – Challenges from an AQUAINT Perspective
• Some Final Thoughts...
• Questions and Comments

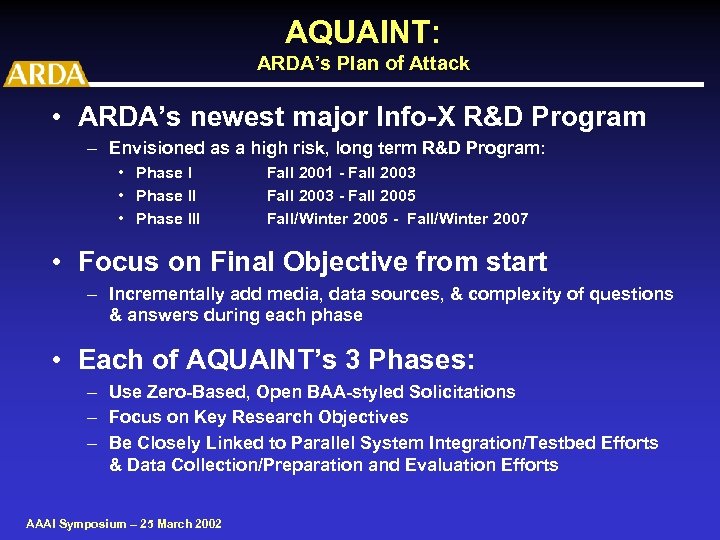

AQUAINT: ARDA’s Plan of Attack
• ARDA’s newest major Info-X R&D Program
  – Envisioned as a high risk, long term R&D Program:
    • Phase I: Fall 2001 - Fall 2003
    • Phase II: Fall 2003 - Fall 2005
    • Phase III: Fall/Winter 2005 - Fall/Winter 2007
• Focus on Final Objective from start
  – Incrementally add media, data sources, & complexity of questions & answers during each phase
• Each of AQUAINT’s 3 Phases will:
  – Use Zero-Based, Open BAA-styled Solicitations
  – Focus on Key Research Objectives
  – Be Closely Linked to Parallel System Integration/Testbed Efforts & Data Collection/Preparation and Evaluation Efforts

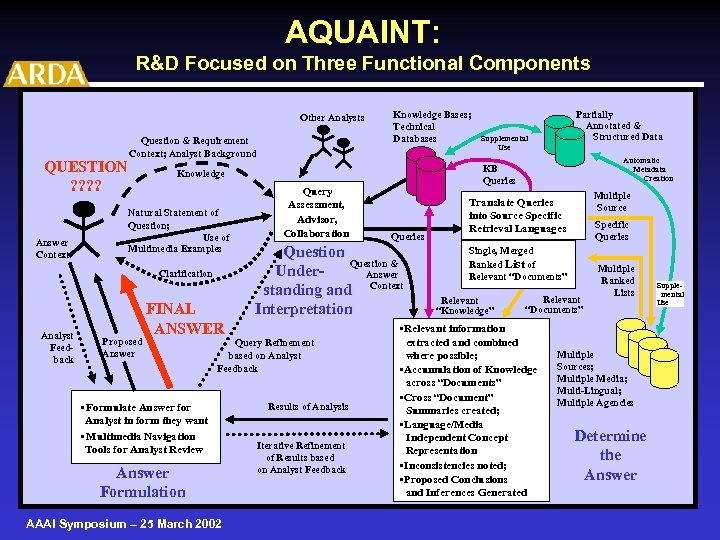

AQUAINT: R&D Focused on Three Functional Components
• Question Understanding and Interpretation
  – Inputs: QUESTION; Question & Requirement Context; Analyst Background
  – Natural Statement of Question; Use of Multimedia Examples
  – Query Assessment, Advisor, Collaboration (with Other Analysts)
  – Clarification; Query Refinement based on Analyst Feedback
  – Outputs: Queries; Question & Answer Context
• Determine the Answer
  – Translate Queries into Source Specific Retrieval Languages; Multiple Source Specific Queries; KB Queries
  – Sources: Multiple Sources; Multiple Media; Multi-Lingual; Multiple Agencies; Knowledge Bases; Technical Databases
  – Supplemental Use: Partially Annotated & Structured Data; Automatic Metadata Creation
  – Multiple Ranked Lists; Single, Merged Ranked List of Relevant “Documents”; Relevant “Knowledge”
  – Relevant information extracted and combined where possible
  – Accumulation of Knowledge across “Documents”
  – Cross “Document” Summaries created
  – Language/Media Independent Concept Representation
  – Inconsistencies noted
  – Proposed Conclusions and Inferences Generated
  – Results of Analysis; Iterative Refinement of Results based on Analyst Feedback
• Answer Formulation
  – Formulate Answer for Analyst in form they want
  – Multimedia Navigation Tools for Analyst Review
  – Outputs: Proposed Answer; FINAL ANSWER; Answer Context

AQUAINT: Cross Cutting/Enabling Technologies R&D Areas
Specifically Solicited Research Areas include:
1) Advanced Reasoning for Question Answering
2) Sharable Knowledge Sources
3) Content Representation
4) Interactive Question Answering Sessions
5) Role of Context
6) Role of Knowledge
7) Deep, Human Language Processing and Understanding

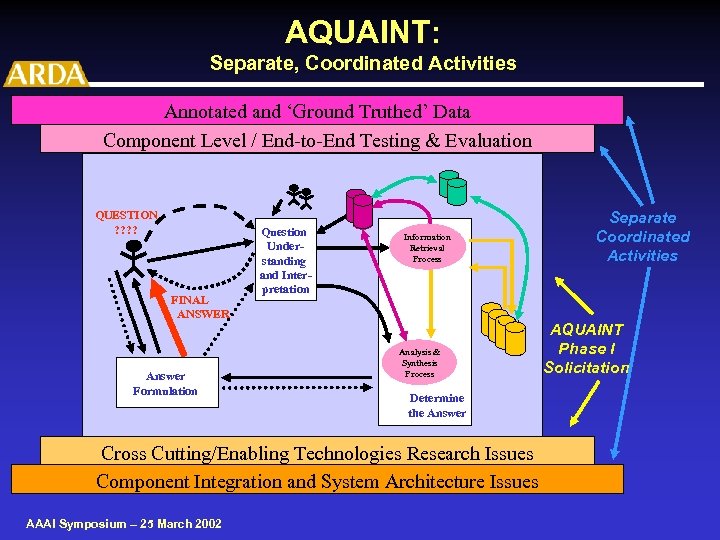

AQUAINT: Separate, Coordinated Activities
• AQUAINT Phase I Solicitation:
  – QUESTION; Question Understanding and Interpretation; Determine the Answer (Information Retrieval Process; Analysis & Synthesis Process); Answer Formulation; FINAL ANSWER
  – Cross Cutting/Enabling Technologies Research Issues
• Separate Coordinated Activities:
  – Annotated and ‘Ground Truthed’ Data
  – Component Level / End-to-End Testing & Evaluation
  – Component Integration and System Architecture Issues

AQUAINT: User Testbed / System Integration
• Pull together best available system components emerging from AQUAINT Program research efforts
  – Couple AQUAINT components with existing GOTS and COTS software
• Develop end-to-end AQUAINT prototype(s) aimed at specific Operational QA environments
• Government-led effort:
  – Directly Linked into Sponsoring Agency’s Technology Insertion Organizations
  – Close, working relationship with working Analysts
  – Provide external system development support
  – MITRE/Bedford will lead External System Integration / Testbed efforts
  – Plan to also utilize additional external researchers as Consultants / Advisors

AQUAINT: Data & Evaluation Issues
• Data
  – Start by Using Existing Data Collections
    • NIST’s TREC Text Corpora
    • Linguistic Data Consortium (LDC) Human Language Corpora (e.g. TDT, Switchboard, CallHome, CallFriend Corpora)
    • Existing Knowledge Bases and Other Structured Databases
  – Future Data Collection & Annotation and Question/Answer Key Development will be a major effort
  – Will likely use combined efforts of NIST and LDC
• Evaluation
  – Build upon highly successful TREC Q&A Track Evaluations: NIST has the lead and is currently developing a Phased Evaluation Plan tied to AQUAINT Program Plans
  – Cooperate to maximum extent possible with DARPA’s RKF (Rapid Knowledge Formation) Program Evaluation Efforts

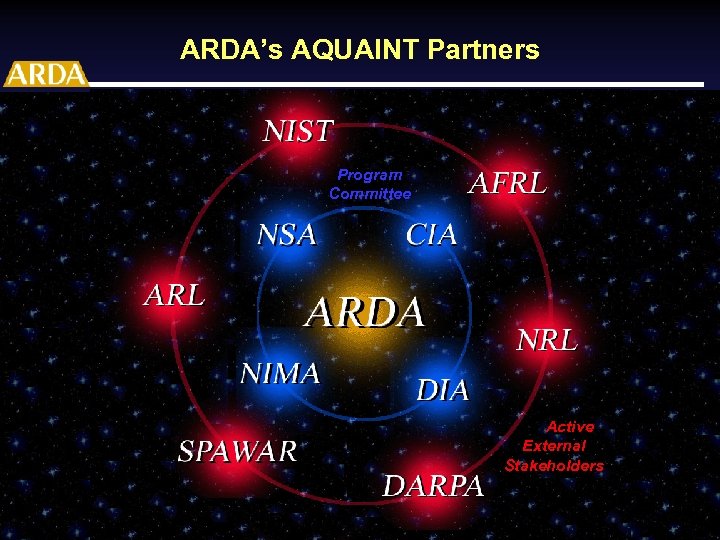

ARDA’s AQUAINT Partners
Program Committee; Active Stakeholders; External Stakeholders

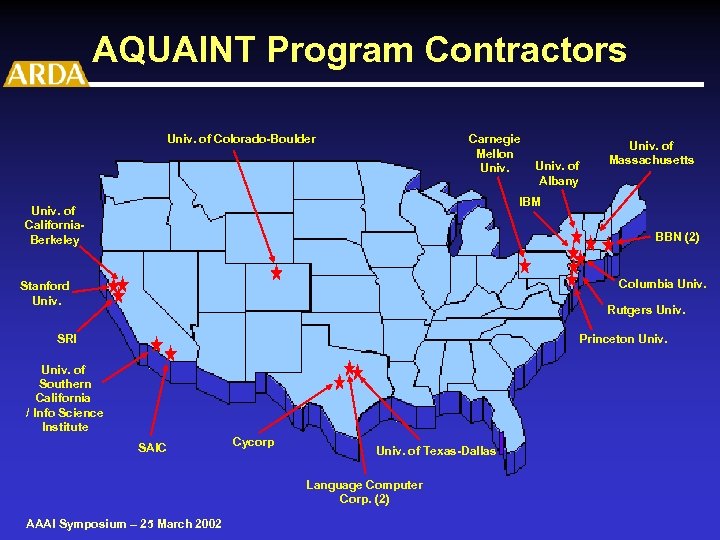

AQUAINT Program Contractors
Univ. of Colorado-Boulder; Carnegie Mellon; Univ. of Albany; Univ. of Massachusetts; IBM; Univ. of California, Berkeley; BBN (2); Columbia Univ.; Stanford Univ.; Rutgers Univ.; Princeton Univ.; SRI; Univ. of Southern California / Info Science Institute; SAIC; Cycorp; Univ. of Texas-Dallas; Language Computer Corp. (2)

AQUAINT Phase I Projects (Fall 01 - Fall 03)
Total End-to-End Systems (6)

AQUAINT Phase I Projects (Fall 01 - Fall 03)
Emphasis on One or More Advanced QA System Components (6)

AQUAINT Phase I Projects (Fall 01 - Fall 03)
Focused Effort: Cross Cutting / Enabling Technologies (4)

Northeast Regional Research Center
Hosted by MITRE, Bedford, MA; Administered by CIA
• Conduct 6-8 week workshops on multiple AQUAINT-related challenge problems during FY 2002
• Sep 2001: Planning Workshop held at MITRE
  – Attended by Government Technical Leaders, MITRE, and an invited set of industrial, FFRDC and Academic researchers in the field
  – Four Potential Challenge Problems identified; Formal Proposals developed for each Challenge Problem
• Two Full Workshops Funded (Temporal Issues & Multiple Perspectives)
• One Mini Workshop planned to further explore a challenge problem (Re-Use of Accumulated Knowledge)

FY 2002 NRRC Workshop Challenge Problems
1. Temporal Issues
  – Generate Sequence of events and activities along evolving timeline, resolving multiple levels of time references across series of documents/sources.
  – Leader: James Pustejovsky, Brandeis University
2. Multiple Perspectives
  – Develop approaches for handling situations where relevant information is obtained from multiple sources on the same topic but generated from different perspectives (e.g. cultural or political differences).
  – Leader: Jan Wiebe, University of Pittsburgh
NRRC Web Site: http://nrrc.mitre.org

NRRC Planning Workshops
3. Re-Use of Accumulated Knowledge
  – Investigate strategies for structuring and maintaining previously generated knowledge for possible future use. E.g. previous knowledge might include questions and answers (original and amplified) as well as relevant and background information retrieved and processed.
  – Leaders: Marc Light, MITRE and Abraham Ittycheriah, IBM

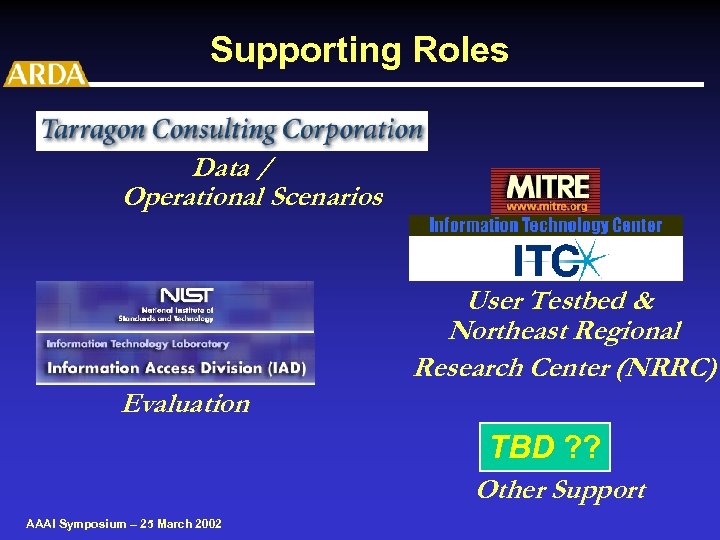

Supporting Roles
Data / Operational Scenarios; User Testbed & Northeast Regional Research Center (NRRC); Evaluation; TBD??; Other Support

Outline
• Introducing ARDA
• Advanced Question Answering
  – There is Room for Multiple Approaches
  – The AQUAINT Program
  – Challenges from an AQUAINT Perspective
• Some Final Thoughts...
• Questions and Comments

Top 10 Challenges
1) Satisfy QA requirements of the “Professional” Information Analyst

Professional Information Analysts: Target Audience for AQUAINT. Who are They?
• For ARDA and AQUAINT they are:
  – Intelligence Community and Military Analysts
• But there are other Potential Target Audiences of “Professional Information Analysts”:
  – Investigative / “CNN-type” Reporters
  – Financial Industry Analysts / Investors
  – Historians / Biographers
  – Lawyers / Law Clerks
  – Law Enforcement Detectives
  – And Others

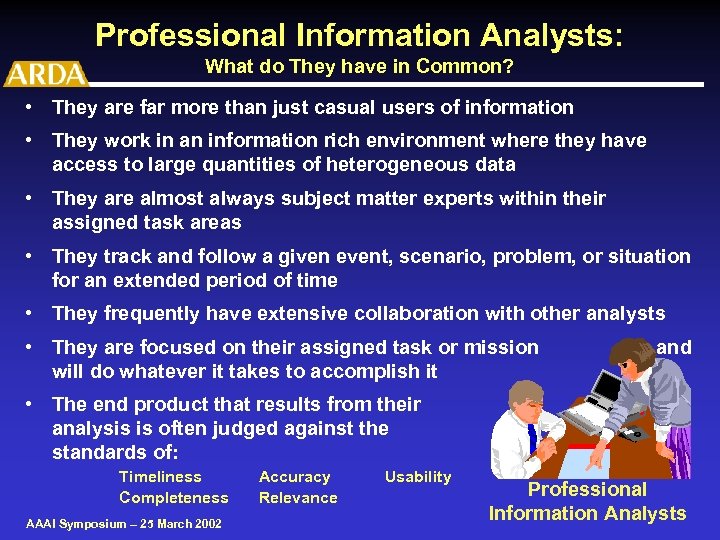

Professional Information Analysts: What do They have in Common?
• They are far more than just casual users of information
• They work in an information rich environment where they have access to large quantities of heterogeneous data
• They are almost always subject matter experts within their assigned task areas
• They track and follow a given event, scenario, problem, or situation for an extended period of time
• They frequently have extensive collaboration with other analysts
• They are focused on their assigned task or mission and will do whatever it takes to accomplish it
• The end product that results from their analysis is often judged against the standards of: Timeliness, Completeness, Accuracy, Relevance, Usability

Top 10 Challenges
1) Satisfy QA requirements of the “Professional” Information Analyst
2) Pursue QA Scenarios and not just isolated, factually based QA

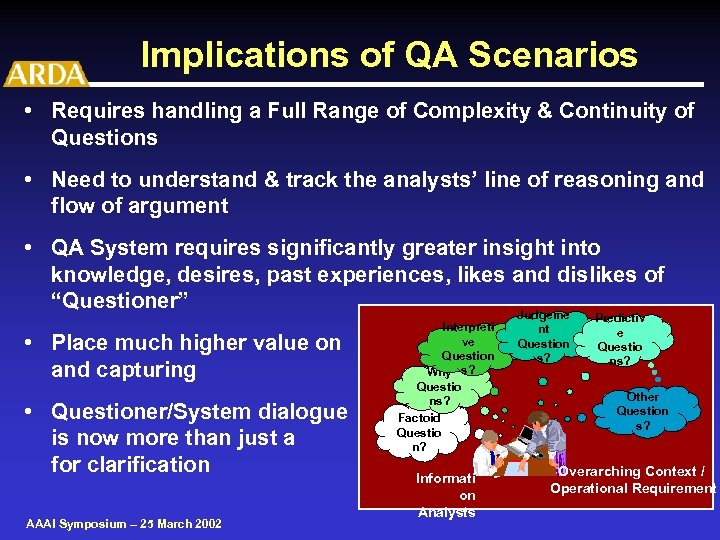

Implications of QA Scenarios
• Requires handling a Full Range of Complexity & Continuity of Questions
• Need to understand & track the analysts’ line of reasoning and flow of argument
• QA System requires significantly greater insight into knowledge, desires, past experiences, likes and dislikes of “Questioner”
• Place much higher value on recognizing and capturing “background” information
• Questioner/System dialogue is now more than just a means for clarification
[Figure labels: Information Analysts; Factoid Question?; Why Questions?; Interpretive Questions?; Judgement Questions?; Predictive Questions?; Other Questions?; Overarching Context / Operational Requirement]

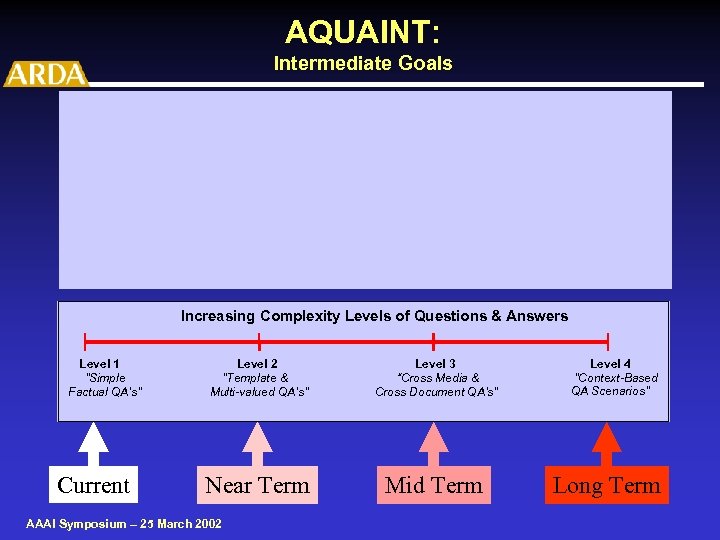

AQUAINT: Intermediate Goals
Increasing Complexity Levels of Questions & Answers
– Level 1 (Current): “Simple Factual QA’s”
– Level 2 (Near Term): “Template & Multi-valued QA’s”
– Level 3 (Mid Term): “Cross Media & Cross Document QA’s”
– Level 4 (Long Term): “Context-Based QA Scenarios”

Top 10 Challenges
1) Satisfy QA requirements of the “Professional” Information Analyst
2) Pursue QA Scenarios and not just isolated, factually based QA
3) Support a collaborative, multiple analyst environment

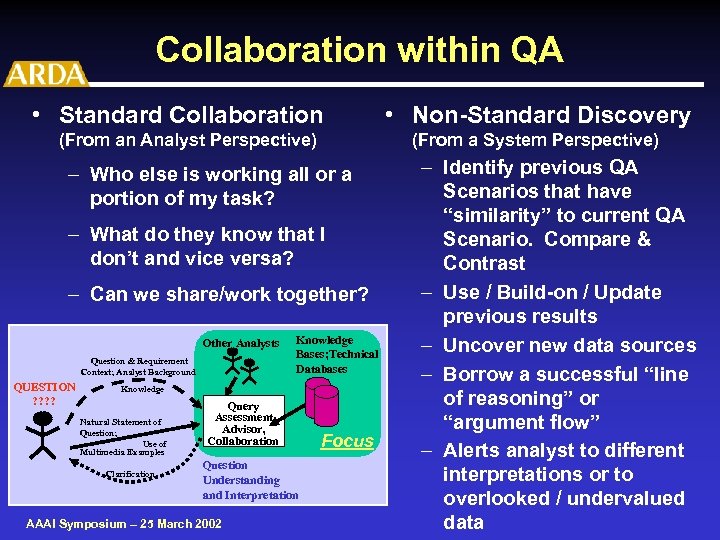

Collaboration within QA
• Standard Collaboration (From an Analyst Perspective)
  – Who else is working all or a portion of my task?
  – What do they know that I don’t and vice versa?
  – Can we share/work together?
• Non-Standard Discovery Focus (From a System Perspective)
  – Identify previous QA Scenarios that have “similarity” to current QA Scenario. Compare & Contrast
  – Use / Build-on / Update previous results
  – Uncover new data sources
  – Borrow a successful “line of reasoning” or “argument flow”
  – Alert analyst to different interpretations or to overlooked / undervalued data
[Figure labels: Other Analysts; Question & Requirement Context; Analyst Background Knowledge; Knowledge Bases; Technical Databases; Natural Statement of Question; Use of Multimedia Examples; Clarification; Query Assessment, Advisor, Collaboration; Question Understanding and Interpretation]

Top 10 Challenges
1) Satisfy QA requirements of the “Professional” Information Analyst
2) Pursue QA Scenarios and not just isolated, factually based QA
3) Support a collaborative, multiple analyst environment
4) Sometimes SMALL things really matter and other times BIG things don’t

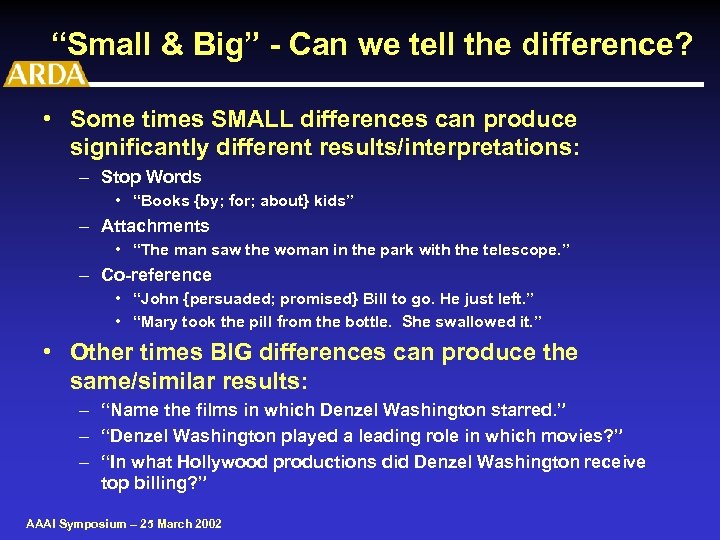

“Small & Big” - Can we tell the difference?
• Sometimes SMALL differences can produce significantly different results/interpretations:
  – Stop Words
    • “Books {by; for; about} kids”
  – Attachments
    • “The man saw the woman in the park with the telescope.”
  – Co-reference
    • “John {persuaded; promised} Bill to go. He just left.”
    • “Mary took the pill from the bottle. She swallowed it.”
• Other times BIG differences can produce the same/similar results:
  – “Name the films in which Denzel Washington starred.”
  – “Denzel Washington played a leading role in which movies?”
  – “In what Hollywood productions did Denzel Washington receive top billing?”
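
The stop-word case above can be illustrated with a minimal sketch. It assumes a naive keyword search that discards a small, hypothetical stop-word list (`STOP_WORDS` and `keywords` are illustrative names, not part of any AQUAINT system) and shows how three questions with different meanings collapse into one query.

```python
# Hypothetical stop-word list for illustration only; real lists are much longer.
STOP_WORDS = {"by", "for", "about", "the", "a", "an", "in", "of"}


def keywords(query):
    """Lowercase the query and drop stop words, as a naive keyword search might."""
    return tuple(w for w in query.lower().split() if w not in STOP_WORDS)


queries = ["Books by kids", "Books for kids", "Books about kids"]

# Map each original query to the keywords that survive stop-word removal.
reduced = {q: keywords(q) for q in queries}

# All three queries reduce to the same keyword tuple, so their
# different meanings (author, audience, topic) are lost.
distinct = set(reduced.values())
print(reduced)
print(len(distinct))
```

A system that keeps the preposition, or maps it to a relation such as author, audience, or topic, would preserve the distinction.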

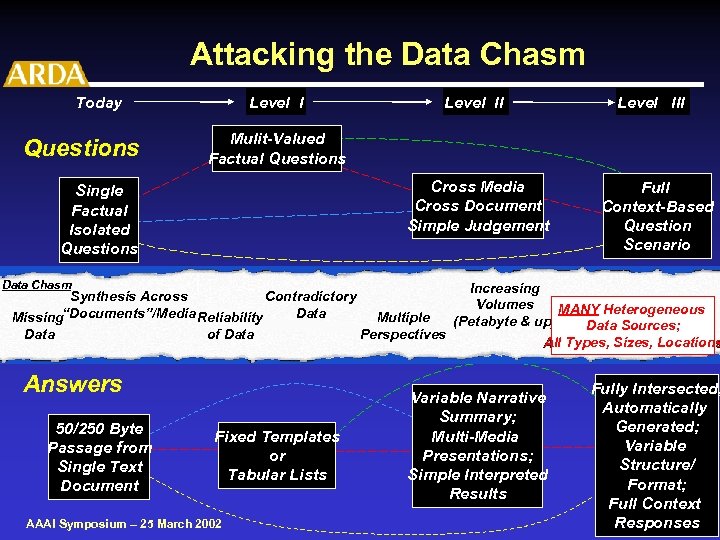

Top 10 Challenges
1) Satisfy QA requirements of the “Professional” Information Analyst
2) Pursue QA Scenarios and not just isolated, factually based QA
3) Support a collaborative, multiple analyst environment
4) Sometimes SMALL things really matter and other times BIG things don’t
5) Advanced QA must attack the “Data Chasm”

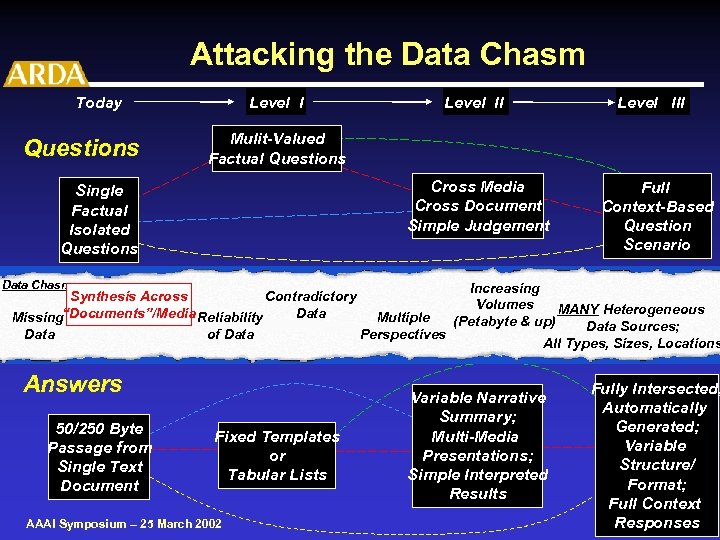

Attacking the Data Chasm
[Figure: Questions and Answers separated by the Data Chasm, progressing from Today to Future across Level I, Level II, and Level III]
• Questions: Single Factual Isolated Questions; Multi-Valued Factual Questions; Simple Judgement; Cross Media; Cross Document; Multiple Perspectives; Full Context-Based Question Scenario
• Data Chasm: Synthesis Across “Documents”/Media; Contradictory Data; Missing Data; Reliability of Data; Increasing Volumes (Petabyte & up); MANY Heterogeneous Data Sources; All Types, Sizes, Locations
• Answers: 50/250 Byte Passage from Single Text Document; Fixed Templates or Tabular Lists; Variable Narrative Summary; Multi-Media Presentations; Simple Interpreted Results; Fully Intersected; Automatically Generated; Variable Structure/Format; Full Context Responses

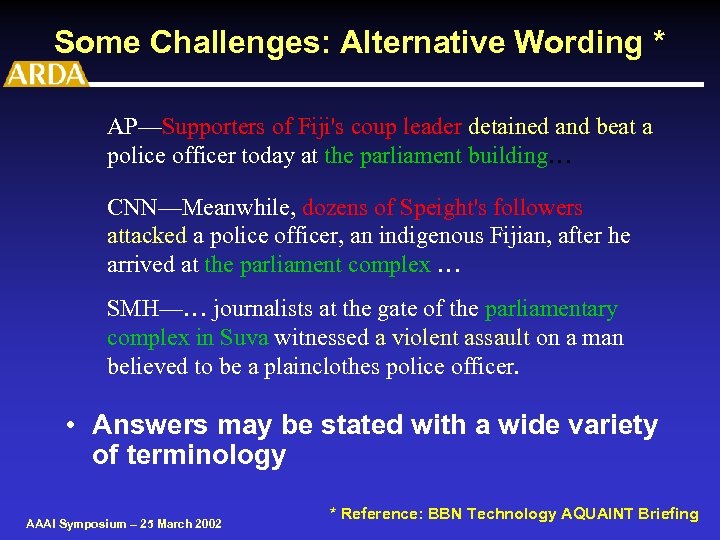

Some Challenges: Alternative Wording *
AP: Supporters of Fiji's coup leader detained and beat a police officer today at the parliament building…
CNN: Meanwhile, dozens of Speight's followers attacked a police officer, an indigenous Fijian, after he arrived at the parliament complex…
SMH: …journalists at the gate of the parliamentary complex in Suva witnessed a violent assault on a man believed to be a plainclothes police officer.
• Answers may be stated with a wide variety of terminology
* Reference: BBN Technology AQUAINT Briefing

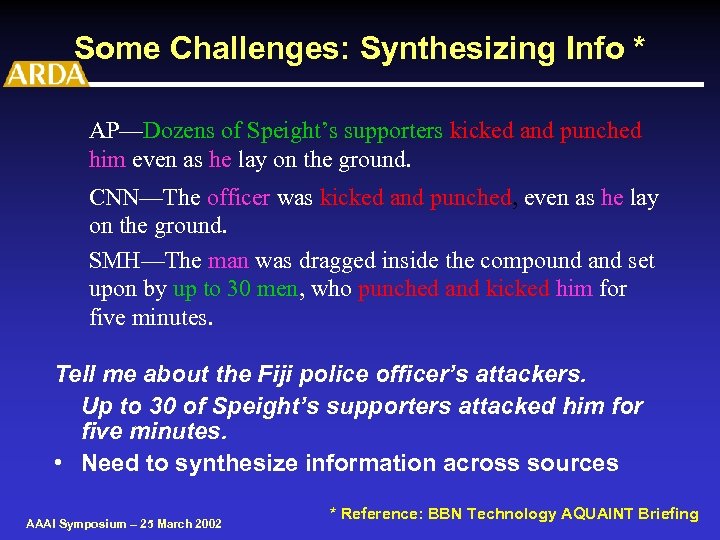

Some Challenges: Synthesizing Info *
AP: Dozens of Speight’s supporters kicked and punched him even as he lay on the ground.
CNN: The officer was kicked and punched, even as he lay on the ground.
SMH: The man was dragged inside the compound and set upon by up to 30 men, who punched and kicked him for five minutes.
Q: Tell me about the Fiji police officer’s attackers.
A: Up to 30 of Speight’s supporters attacked him for five minutes.
• Need to synthesize information across sources
* Reference: BBN Technology AQUAINT Briefing

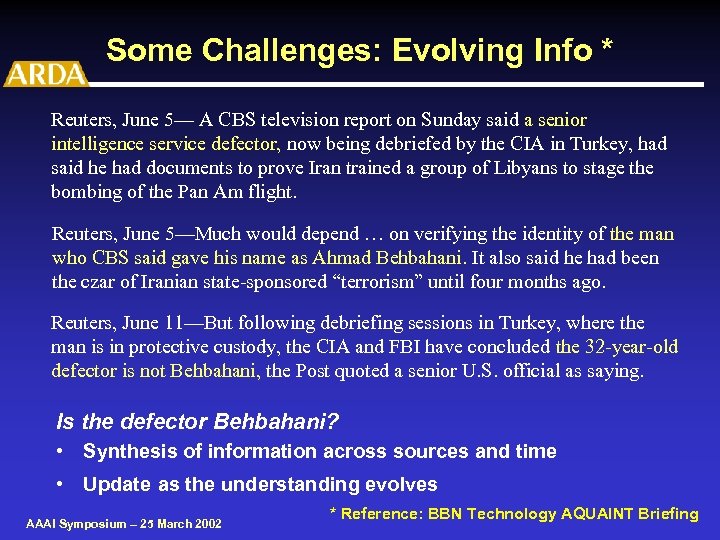

Some Challenges: Evolving Info *
Reuters, June 5: A CBS television report on Sunday said a senior intelligence service defector, now being debriefed by the CIA in Turkey, had said he had documents to prove Iran trained a group of Libyans to stage the bombing of the Pan Am flight.
Reuters, June 5: Much would depend … on verifying the identity of the man who CBS said gave his name as Ahmad Behbahani. It also said he had been the czar of Iranian state-sponsored “terrorism” until four months ago.
Reuters, June 11: But following debriefing sessions in Turkey, where the man is in protective custody, the CIA and FBI have concluded the 32-year-old defector is not Behbahani, the Post quoted a senior U.S. official as saying.
Q: Is the defector Behbahani?
• Synthesis of information across sources and time
• Update as the understanding evolves
* Reference: BBN Technology AQUAINT Briefing
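
One very simple way to "update as the understanding evolves" is to let the most recent report decide the answer. The sketch below assumes that policy purely for illustration; the briefing does not say how any AQUAINT system actually handles this, and `reports` and `current_answer` are hypothetical names. Dates are kept as (month, day) pairs because the slide gives no year.

```python
# Each report: ((month, day), source, claim that the defector is Behbahani).
# The claims paraphrase the Reuters excerpts quoted on the slide.
reports = [
    ((6, 5), "Reuters", True),    # man reportedly gave his name as Ahmad Behbahani
    ((6, 11), "Reuters", False),  # CIA and FBI conclude he is not Behbahani
]


def current_answer(reports):
    """Return the claim carried by the most recent report (naive latest-wins policy)."""
    latest = max(reports, key=lambda report: report[0])
    return latest[2]


# Answer as of June 5 versus answer once the June 11 report arrives.
answer_june_5 = current_answer(reports[:1])
answer_june_11 = current_answer(reports)
print(answer_june_5, answer_june_11)
```

A latest-wins rule ignores source reliability, so a fuller treatment would weigh each report rather than simply overwrite earlier ones.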

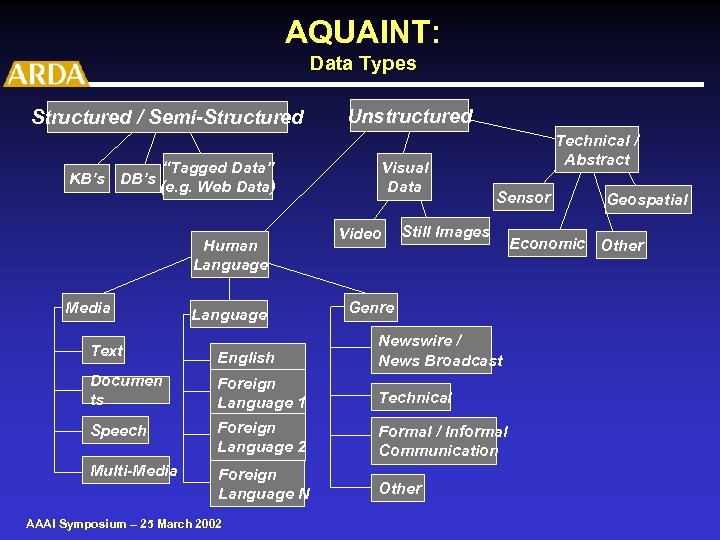

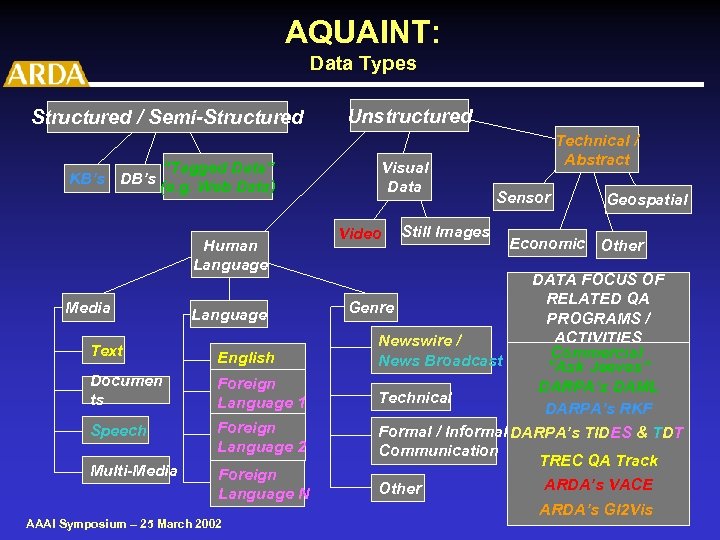

AQUAINT: Data Types
• Structured / Semi-Structured
  – “Tagged Data” (e.g. Web Data)
  – KB’s
  – DB’s
• Unstructured
  – Human Language
    • Media: Text; Documents; Speech; Multi-Media
    • Language: English; Foreign Language 1; Foreign Language 2; Foreign Language N
    • Genre: Newswire / News Broadcast; Technical; Formal / Informal Communication; Other
  – Visual Data: Video; Still Images
  – Technical / Abstract: Sensor; Geospatial; Economic; Other

AQUAINT: Data Types
[Figure: the data-type taxonomy of the previous slide (Structured / Semi-Structured; Human Language by Media, Language, and Genre; Visual Data; Technical / Abstract), overlaid with the data focus of related programs]
DATA FOCUS OF RELATED QA PROGRAMS / ACTIVITIES
– Commercial “Ask Jeeves”
– DARPA’s DAML
– DARPA’s RKF
– DARPA’s TIDES & TDT
– TREC QA Track
– ARDA’s VACE
– ARDA’s GI2Vis

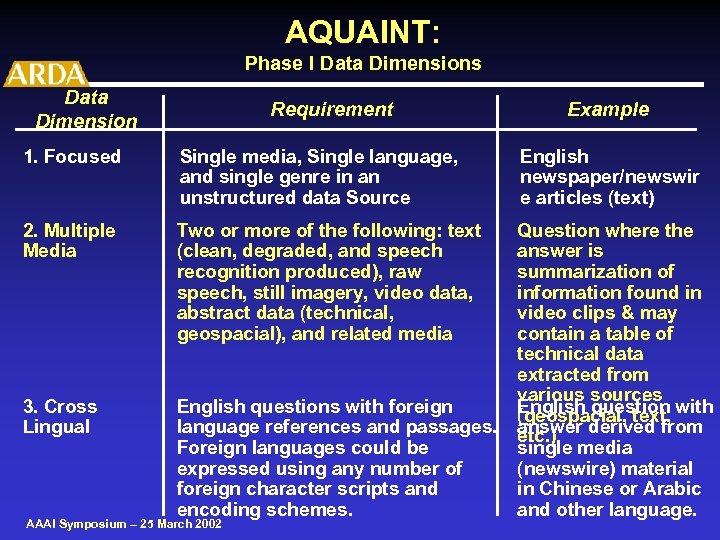

AQUAINT: Phase I Data Dimensions

| Data Dimension | Requirement | Example |
| --- | --- | --- |
| 1. Focused | Single media, single language, and single genre in an unstructured data source | English newspaper/newswire articles (text) |
| 2. Multiple Media | Two or more of the following: text (clean, degraded, and speech recognition produced), raw speech, still imagery, video data, abstract data (technical, geospatial), and related media | Question where the answer is summarization of information found in video clips & may contain a table of technical data extracted from various sources (geospatial, text, etc.) |
| 3. Cross Lingual | English questions with foreign language references and passages. Foreign languages could be expressed using any number of foreign character scripts and encoding schemes. | English question with answer derived from single media (newswire) material in Chinese or Arabic and other language. |

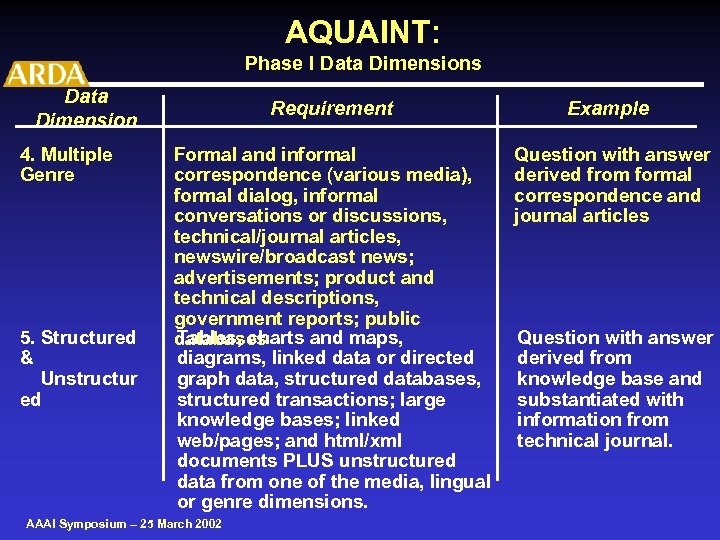

AQUAINT: Phase I Data Dimensions

| Data Dimension | Requirement | Example |
| --- | --- | --- |
| 4. Multiple Genre | Formal and informal correspondence (various media), formal dialog, informal conversations or discussions, technical/journal articles, newswire/broadcast news; advertisements; product and technical descriptions, government reports; public databases | Question with answer derived from formal correspondence and journal articles |
| 5. Structured & Unstructured | Tables, charts and maps, diagrams, linked data or directed graph data, structured databases, structured transactions; large knowledge bases; linked web pages; and html/xml documents PLUS unstructured data from one of the media, lingual or genre dimensions. | Question with answer derived from knowledge base and substantiated with information from technical journal. |

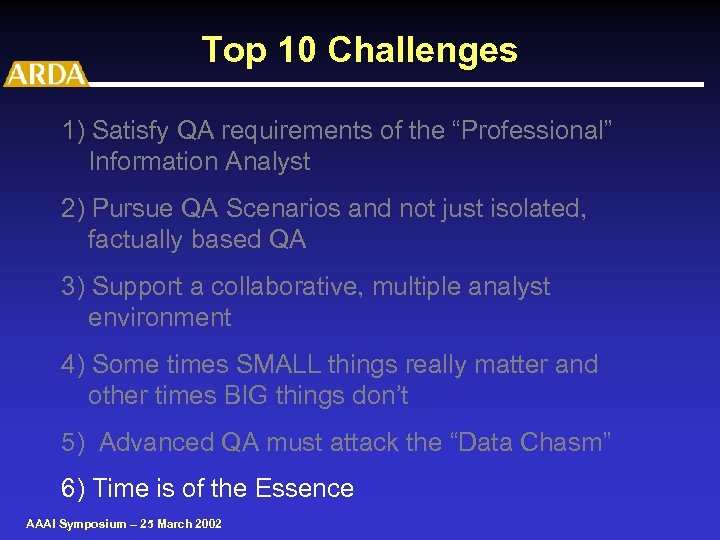

Top 10 Challenges
1) Satisfy QA requirements of the “Professional” Information Analyst
2) Pursue QA Scenarios and not just isolated, factually based QA
3) Support a collaborative, multiple analyst environment
4) Sometimes SMALL things really matter and other times BIG things don’t
5) Advanced QA must attack the “Data Chasm”
6) Time is of the Essence

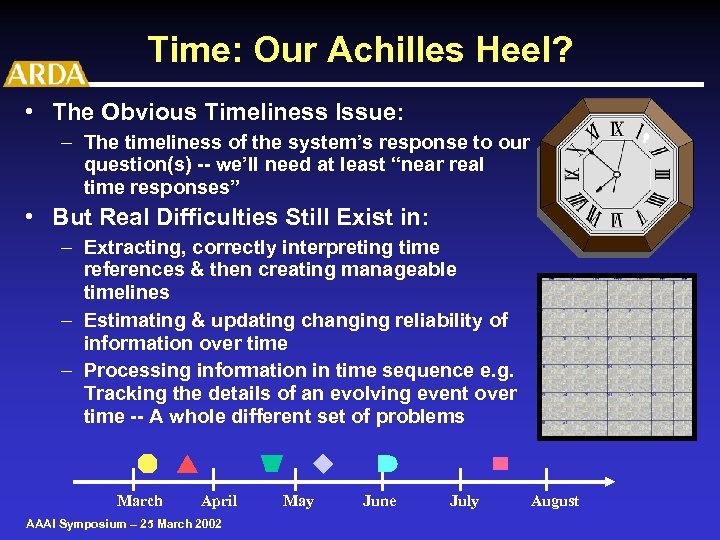

Time: Our Achilles Heel?
• The Obvious Timeliness Issue:
  – The timeliness of the system’s response to our question(s) -- we’ll need at least “near real time responses”
• But Real Difficulties Still Exist in:
  – Extracting, correctly interpreting time references & then creating manageable timelines
  – Estimating & updating changing reliability of information over time
  – Processing information in time sequence, e.g. tracking the details of an evolving event over time -- A whole different set of problems
[Figure: timeline running March, April, May, June, July, August]

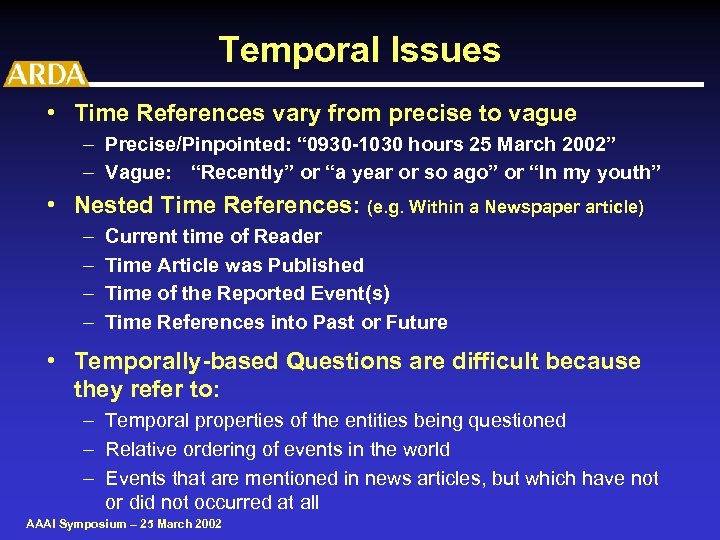

Temporal Issues
• Time References vary from precise to vague
  – Precise/Pinpointed: “0930-1030 hours 25 March 2002”
  – Vague: “Recently” or “a year or so ago” or “In my youth”
• Nested Time References: (e.g. Within a Newspaper article)
  – Current time of Reader
  – Time Article was Published
  – Time of the Reported Event(s)
  – Time References into Past or Future
• Temporally-based Questions are difficult because they refer to:
  – Temporal properties of the entities being questioned
  – Relative ordering of events in the world
  – Events that are mentioned in news articles, but which have not occurred or did not occur at all
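
The nested time references above matter because a relative expression has to be anchored to the right clock. The following is a minimal sketch, assuming a tiny hypothetical table of relative expressions (`RELATIVE_OFFSETS`) and a hypothetical reader date; it resolves "yesterday" against the article's publication time rather than the reader's current time.

```python
from datetime import date, timedelta

# Hypothetical offsets for a few relative time expressions (illustration only).
RELATIVE_OFFSETS = {
    "today": timedelta(days=0),
    "yesterday": timedelta(days=-1),
    "tomorrow": timedelta(days=1),
}


def resolve(expression, published):
    """Anchor a relative time expression to the article's publication date."""
    return published + RELATIVE_OFFSETS[expression.lower()]


published = date(2002, 3, 25)  # time the article was published
reader_now = date(2002, 6, 1)  # hypothetical time at which the reader sees it

# Correct: "yesterday" is relative to the publication date.
event_day = resolve("yesterday", published)

# Incorrect: anchoring to the reader's clock shifts the event by months.
wrong_day = reader_now + RELATIVE_OFFSETS["yesterday"]

print(event_day, wrong_day)
```

Vague expressions such as "recently" have no fixed offset and would need an interval or a distribution instead of a single date, which this sketch does not attempt.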

Top 10 Challenges
7) Must extract, represent and preserve information uncovered when searching for answers

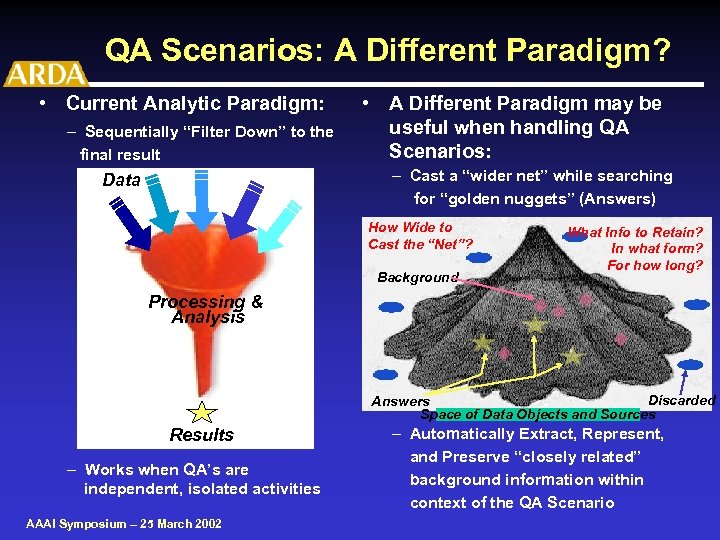

QA Scenarios: A Different Paradigm?
• Current Analytic Paradigm:
  – Sequentially “Filter Down” to the final result
  – Works when QA’s are independent, isolated activities
• A Different Paradigm may be useful when handling QA Scenarios:
  – Cast a “wider net” while searching for “golden nuggets” (Answers)
  – Automatically Extract, Represent, and Preserve “closely related” background information within context of the QA Scenario
[Figure labels: Data; Processing & Analysis; Discarded; Results; Space of Data Objects and Sources; Answers; Background; How Wide to Cast the “Net”?; What Info to Retain? In what form? For how long?]

Different Paradigm: “Casting a Net”
Areas of Biggest Impact

Top 10 Challenges
7) Must extract, represent and preserve information uncovered when searching for answers
8) Rapidly increasing importance of Knowledge of all types -- regardless of the approach

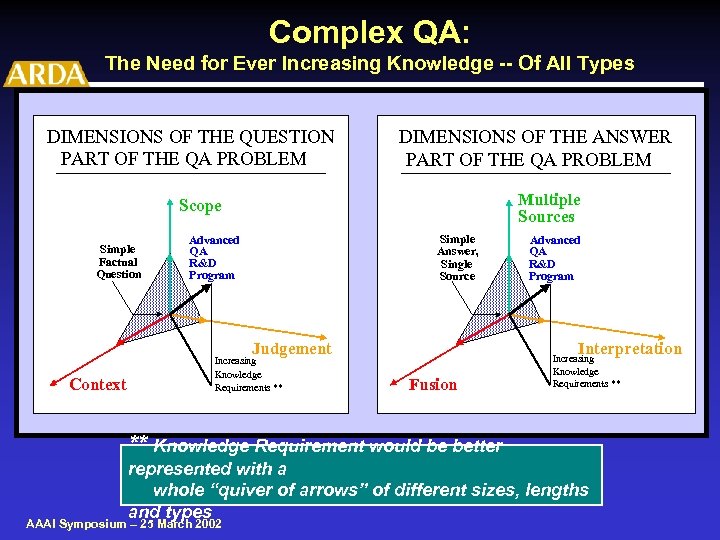

Complex QA: The Need for Ever Increasing Knowledge -- Of All Types
• DIMENSIONS OF THE QUESTION PART OF THE QA PROBLEM: Scope; Judgement; Context
  – From Simple Factual Question toward Advanced QA R&D Program, with Increasing Knowledge Requirements **
• DIMENSIONS OF THE ANSWER PART OF THE QA PROBLEM: Multiple Sources; Interpretation; Fusion
  – From Simple Answer, Single Source toward Advanced QA R&D Program, with Increasing Knowledge Requirements **
** Knowledge Requirement would be better represented with a whole “quiver of arrows” of different sizes, lengths and types

Increasing Knowledge Requirements
• Types of Knowledge Needed
  – Factual Knowledge & Linguistic Knowledge
  – Common Sense Knowledge & World Knowledge
  – Procedural Knowledge & Explanatory Knowledge
  – Domain Knowledge & Modal Knowledge
  – Tacit Knowledge
  – Etc.
• Sources
  – Hand Crafted by experts; supplemented by end-users
  – Results from application of:
    • Learning algorithms
    • Bootstrapping / Hill-climbing Methods
  – Extracted from large data corpora
  – Obtained via “Re-Use”

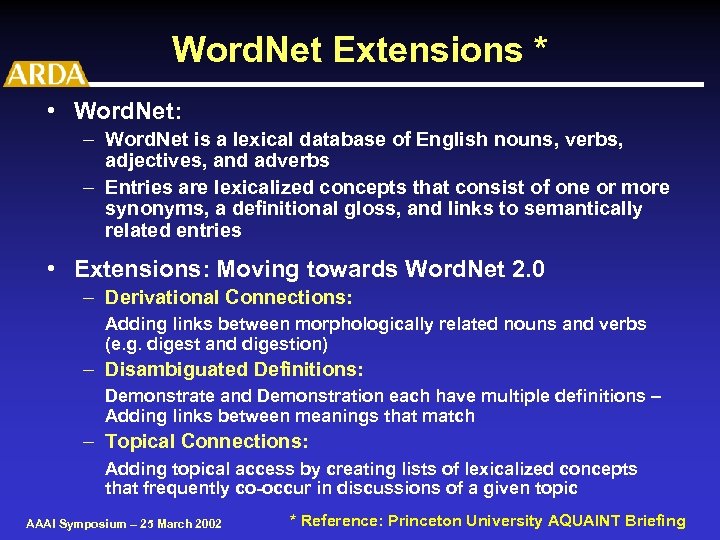

WordNet Extensions *
• WordNet:
  – WordNet is a lexical database of English nouns, verbs, adjectives, and adverbs
  – Entries are lexicalized concepts that consist of one or more synonyms, a definitional gloss, and links to semantically related entries
• Extensions: Moving towards WordNet 2.0
  – Derivational Connections: Adding links between morphologically related nouns and verbs (e.g. digest and digestion)
  – Disambiguated Definitions: Demonstrate and Demonstration each have multiple definitions; adding links between meanings that match
  – Topical Connections: Adding topical access by creating lists of lexicalized concepts that frequently co-occur in discussions of a given topic
* Reference: Princeton University AQUAINT Briefing

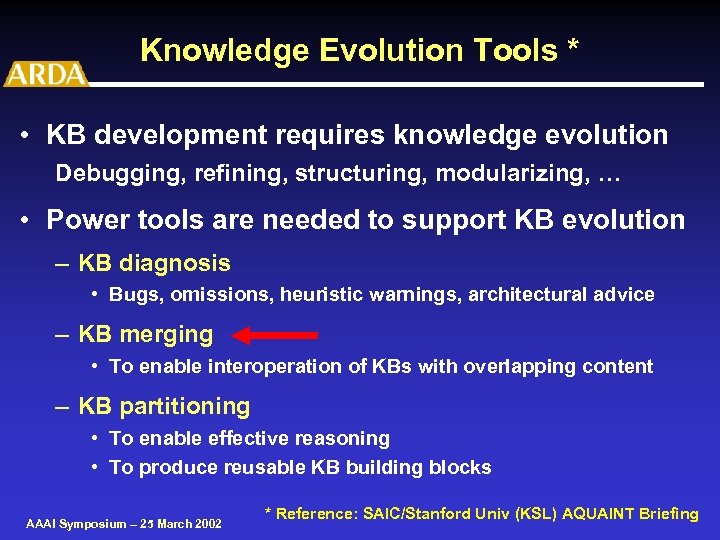

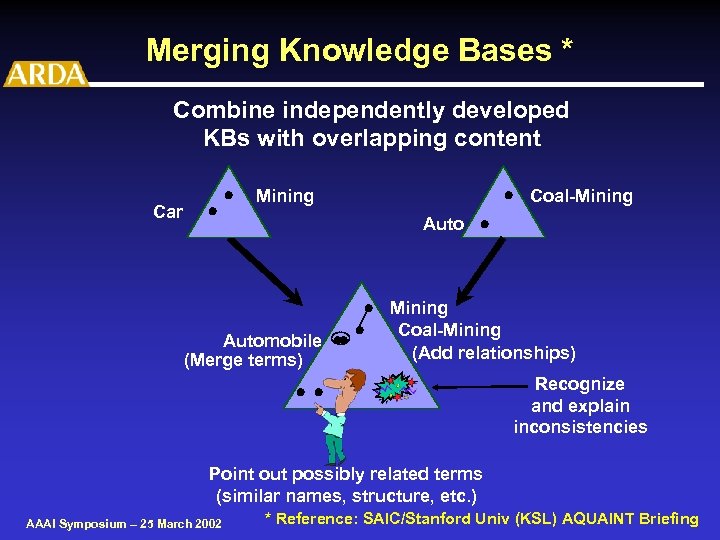

Knowledge Evolution Tools *
• KB development requires knowledge evolution
  – Debugging, refining, structuring, modularizing, …
• Power tools are needed to support KB evolution
  – KB diagnosis
    • Bugs, omissions, heuristic warnings, architectural advice
  – KB merging
    • To enable interoperation of KBs with overlapping content
  – KB partitioning
    • To enable effective reasoning
    • To produce reusable KB building blocks
* Reference: SAIC/Stanford Univ (KSL) AQUAINT Briefing

Merging Knowledge Bases *
• Combine independently developed KBs with overlapping content
  – Merge terms (e.g. Car, Auto, Automobile)
  – Add relationships (e.g. Mining, Coal-Mining)
• Recognize and explain inconsistencies
• Point out possibly related terms (similar names, structure, etc.)
* Reference: SAIC/Stanford Univ (KSL) AQUAINT Briefing
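
The two merge operations on this slide can be sketched in a few lines. This is an illustration only, not the SAIC/Stanford tooling: the assignment of terms to `kb_a` and `kb_b`, the `merge_terms` helper, and the `subclass-of` relation name are all assumptions.

```python
def merge_terms(kb_a, kb_b, synonyms):
    """Union two term sets, mapping every synonym onto one canonical term."""
    canonical = {}
    for group in synonyms:
        head = sorted(group)[0]  # pick a deterministic representative
        for term in group:
            canonical[term] = head
    return {canonical.get(term, term) for term in kb_a | kb_b}


# Hypothetical split of the slide's terms across two independently built KBs.
kb_a = {"Car", "Coal-Mining"}
kb_b = {"Auto", "Automobile", "Mining"}

# "Merge terms": Car, Auto, and Automobile collapse to a single concept.
merged = merge_terms(kb_a, kb_b, synonyms=[{"Car", "Auto", "Automobile"}])

# "Add relationships": link terms that are related but not identical.
relations = {("Coal-Mining", "subclass-of", "Mining")}

print(sorted(merged))
print(relations)
```

Deciding which terms are synonyms and which are merely related is the hard part; here both decisions are supplied by hand.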

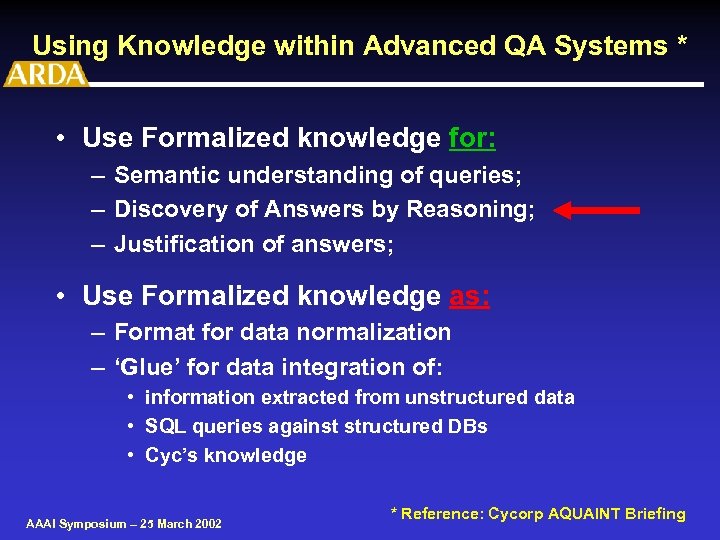

Using Knowledge within Advanced QA Systems *
• Use Formalized knowledge for:
  – Semantic understanding of queries
  – Discovery of Answers by Reasoning
  – Justification of answers
• Use Formalized knowledge as:
  – Format for data normalization
  – ‘Glue’ for data integration of:
    • information extracted from unstructured data
    • SQL queries against structured DBs
    • Cyc’s knowledge
* Reference: Cycorp AQUAINT Briefing

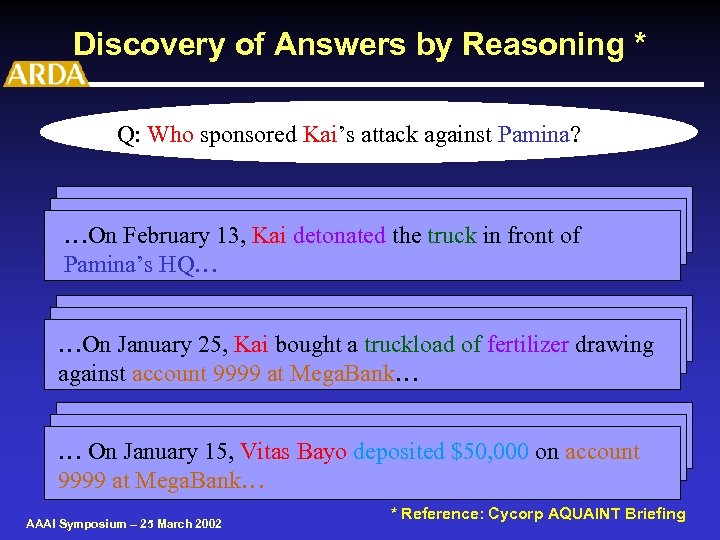

Discovery of Answers by Reasoning * Q: Who sponsored Kai’s attack against Pamina? … On February 13, Kai detonated the truck in front of Pamina’s HQ … … On January 25, Kai bought a truckload of fertilizer, drawing against account 9999 at MegaBank … … On January 15, Vitas Bayo deposited $50,000 into account 9999 at MegaBank … * Reference: Cycorp AQUAINT Briefing
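The answer here is not stated in any single passage; it has to be assembled from three extracted facts. The following Python sketch shows one way such a chain could be followed. It is an illustration only, not Cycorp's inference engine: the record layout, the function name and the single hand-written rule (someone else funded the account that paid for materials bought before the attack) are assumptions.

```python
# Facts as they might look after extraction; dates are (month, day) tuples
# because the slide gives no year.
deposits = [{"who": "Vitas Bayo", "account": "9999", "day": (1, 15)}]
purchases = [{"who": "Kai", "item": "fertilizer", "account": "9999", "day": (1, 25)}]
attacks = [{"who": "Kai", "target": "Pamina", "day": (2, 13)}]


def candidate_sponsors(attacker, target, deposits, purchases, attacks):
    """Return (sponsor, supporting_facts) pairs found by chaining the facts.

    Hypothetical rule: a person is a candidate sponsor if they deposited money
    into an account that the attacker later drew on for a purchase made
    before the attack.
    """
    chains = []
    for atk in attacks:
        if atk["who"] != attacker or atk["target"] != target:
            continue
        for buy in purchases:
            if buy["who"] != attacker or buy["day"] > atk["day"]:
                continue
            for dep in deposits:
                same_account = dep["account"] == buy["account"]
                earlier = dep["day"] <= buy["day"]
                if same_account and earlier and dep["who"] != attacker:
                    # Keep the facts so the answer can be justified later.
                    chains.append((dep["who"], [dep, buy, atk]))
    return chains


for sponsor, evidence in candidate_sponsors("Kai", "Pamina", deposits, purchases, attacks):
    print("Candidate sponsor:", sponsor)
    for fact in evidence:
        print("  because:", fact)
```

Returning the supporting facts alongside the candidate keeps the chain of reasoning available for justification, which the deck lists as a separate use of formalized knowledge.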

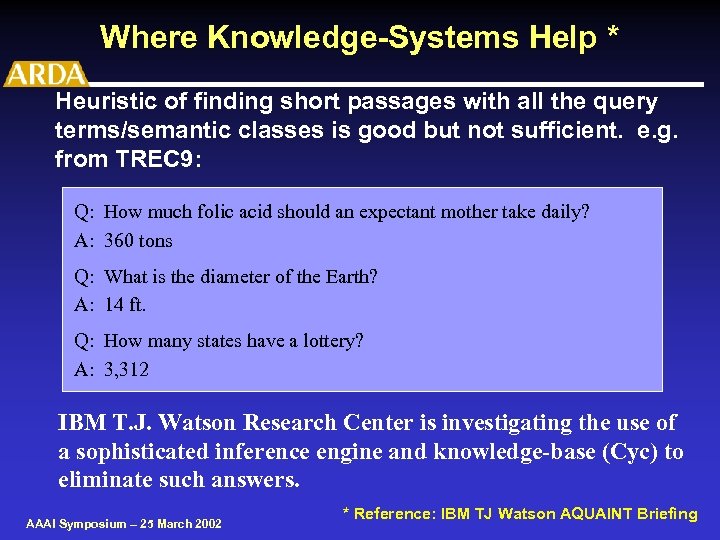

Where Knowledge-Systems Help * The heuristic of finding short passages that contain all the query terms/semantic classes is good but not sufficient, e.g. from TREC-9: Q: How much folic acid should an expectant mother take daily? A: 360 tons. Q: What is the diameter of the Earth? A: 14 ft. Q: How many states have a lottery? A: 3,312. IBM T. J. Watson Research Center is investigating the use of a sophisticated inference engine and knowledge base (Cyc) to eliminate such answers. * Reference: IBM TJ Watson AQUAINT Briefing
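A very small amount of background knowledge is enough to reject the three answers above. The Python sketch below illustrates the idea with a hand-written table of plausible ranges. It is not IBM's system and does not use Cyc; the answer-type names, the range bounds and the unit table are all assumptions chosen for illustration ("tons" is treated as roughly 1,000 kg).

```python
# Conversion factors to a base unit: kilograms for mass, meters for length.
TO_BASE = {
    "mg": 1e-6, "g": 1e-3, "kg": 1.0, "tons": 1000.0,
    "m": 1.0, "km": 1000.0, "ft": 0.3048,
}

# Hypothetical plausibility ranges, expressed in base units.
PLAUSIBLE = {
    "daily_supplement_dose": (0.0, 1e-3),   # at most about one gram per day
    "planet_diameter": (1e6, 1e9),          # thousands of km, not feet
    "us_state_count": (0, 50),              # cannot exceed the number of states
}


def is_plausible(answer_type, value, unit=None):
    """Return True if the candidate answer falls inside the expected range."""
    low, high = PLAUSIBLE[answer_type]
    base_value = value * TO_BASE[unit] if unit else value
    return low <= base_value <= high


candidates = [
    ("daily_supplement_dose", 360, "tons"),
    ("planet_diameter", 14, "ft"),
    ("us_state_count", 3312, None),
]
for answer_type, value, unit in candidates:
    verdict = "keep" if is_plausible(answer_type, value, unit) else "reject"
    print(answer_type, value, unit or "", "->", verdict)
```

A general knowledge base would derive such bounds by inference rather than from a fixed table, but the filtering step applied to candidate answers has the same shape.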

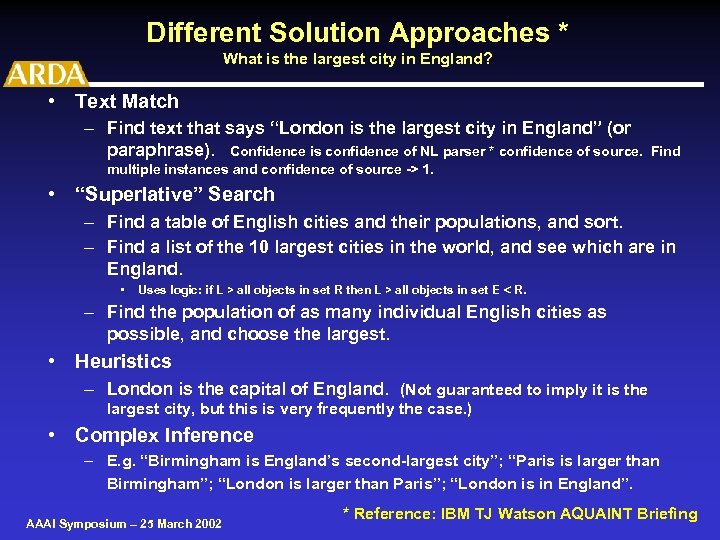

Different Solution Approaches * What is the largest city in England? • Text Match – Find text that says “London is the largest city in England” (or a paraphrase). Confidence is confidence of NL parser × confidence of source. Find multiple instances and the combined confidence -> 1. • “Superlative” Search – Find a table of English cities and their populations, and sort. – Find a list of the 10 largest cities in the world, and see which are in England. • Uses logic: if L > all objects in set R, then L > all objects in any set E ⊆ R. – Find the population of as many individual English cities as possible, and choose the largest. • Heuristics – London is the capital of England. (Not guaranteed to imply it is the largest city, but this is very frequently the case.) • Complex Inference – E.g. “Birmingham is England’s second-largest city”; “Paris is larger than Birmingham”; “London is larger than Paris”; “London is in England”. * Reference: IBM TJ Watson AQUAINT Briefing
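Two of the approaches above lend themselves to a short Python sketch. The slide gives the per-instance confidence as parser confidence times source confidence and says the confidence tends to 1 as instances accumulate; it does not say how instances are combined, so the noisy-OR rule below is an assumption. The second function illustrates the subset logic of the superlative search on a ranked list. All names and the placeholder data are hypothetical.

```python
from math import prod


def instance_confidence(parser_conf, source_conf):
    """Confidence of one text match: NL parser confidence x source confidence."""
    return parser_conf * source_conf


def combined_confidence(instances):
    """Combine independent matches with a noisy-OR (an assumed rule).

    The result grows toward 1 as more supporting instances are found.
    """
    return 1.0 - prod(1.0 - instance_confidence(p, s) for p, s in instances)


def largest_in_subset(ranked_superset, subset):
    """Return the first item of a largest-first ranking that lies in `subset`.

    If L outranks everything in R, it also outranks everything in any
    E that is a subset of R, so the first ranked member of E is E's largest.
    Returns None when the ranking contains no member of E, in which case
    this approach cannot answer the question.
    """
    for item in ranked_superset:
        if item in subset:
            return item
    return None


one = combined_confidence([(0.9, 0.8)])
three = combined_confidence([(0.9, 0.8), (0.7, 0.9), (0.8, 0.6)])
print(f"one instance: {one:.2f}, three instances: {three:.2f}")

# Placeholder names only; no real population data is implied.
world_ranking = ["CityA", "CityB", "CityC", "CityD"]
cities_in_country = {"CityC", "CityD"}
print(largest_in_subset(world_ranking, cities_in_country))
```

The `None` case matters in practice: a top-10 world list may contain no city from the country in question, and the system then has to fall back on one of the other approaches.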

Top 10 Challenges 7) Must extract, represent and preserve information uncovered when searching for answers 8) Rapidly increasing importance of Knowledge of all types -- regardless of the approach 9) Expanding requirements for more advanced learning and reasoning methods/approaches

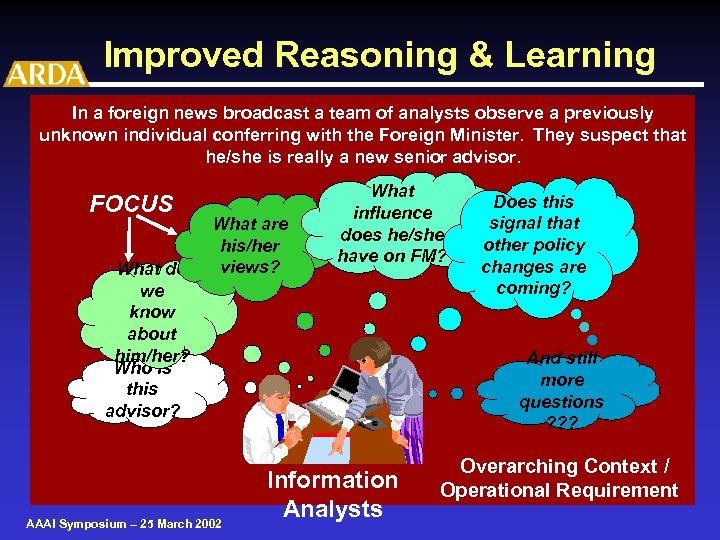

Improved Reasoning & Learning Overarching Context / Operational Requirement: In a foreign news broadcast, a team of information analysts observes a previously unknown individual conferring with the Foreign Minister. They suspect that he/she is really a new senior advisor. FOCUS: Who is this advisor? • What do we know about him/her? • What are his/her views? • What influence does he/she have on the FM? • Does this signal that other policy changes are coming? • And still more questions ???

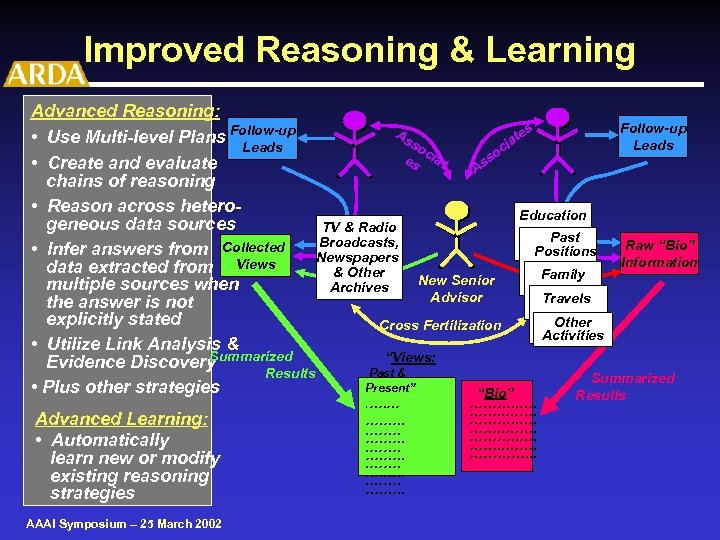

Improved Reasoning & Learning Advanced Reasoning: • Use Multi-level Plans • Create and evaluate chains of reasoning • Reason across heterogeneous data sources • Infer answers from data extracted from multiple sources when the answer is not explicitly stated • Utilize Link Analysis & Evidence Discovery • Plus other strategies Advanced Learning: • Automatically learn new or modify existing reasoning strategies [Diagram labels: New Senior Advisor; TV & Radio Broadcasts; Newspapers & Other Archives; Raw Collected Information; Raw “Bio”; Education; Past Positions; Views; Family; Travels; Other Activities; Associates; Follow-up Leads; Cross Fertilization; Summarized “Bio” Results; Summarized “Views: Past & Present” Results]

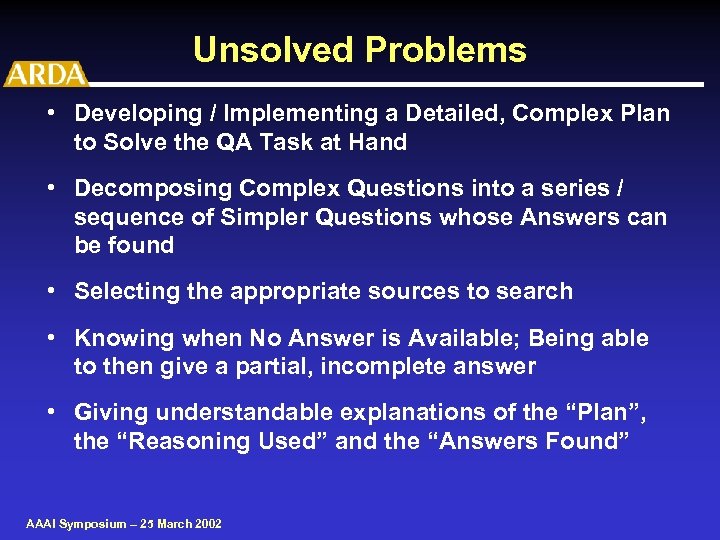

Unsolved Problems • Developing / Implementing a Detailed, Complex Plan to Solve the QA Task at Hand • Decomposing Complex Questions into a series / sequence of Simpler Questions whose Answers can be found • Selecting the appropriate sources to search • Knowing when No Answer is Available; Being able to then give a partial, incomplete answer • Giving understandable explanations of the “Plan”, the “Reasoning Used” and the “Answers Found”

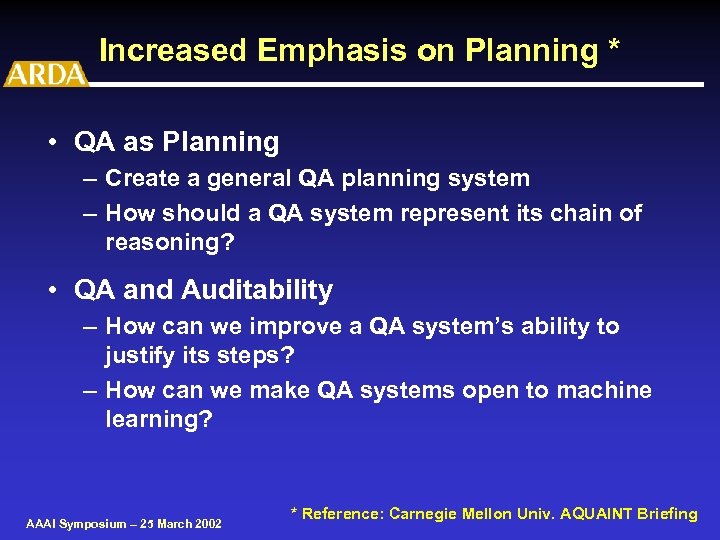

Increased Emphasis on Planning * • QA as Planning – Create a general QA planning system – How should a QA system represent its chain of reasoning? • QA and Auditability – How can we improve a QA system’s ability to justify its steps? – How can we make QA systems open to machine learning? * Reference: Carnegie Mellon Univ. AQUAINT Briefing

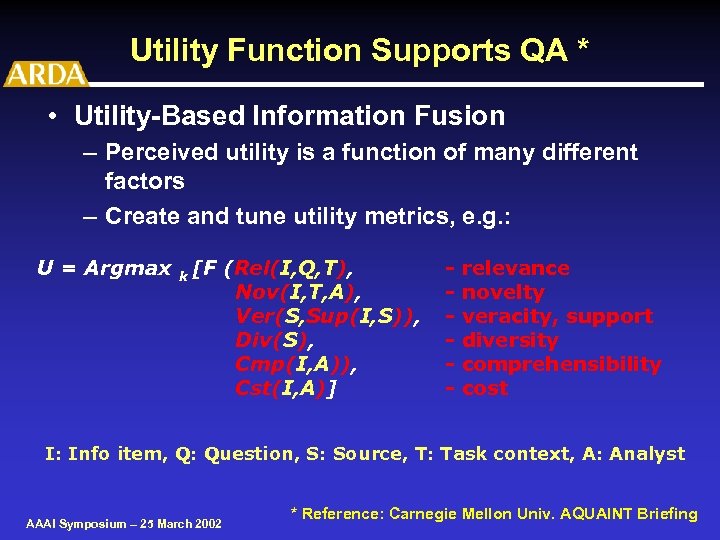

Utility Function Supports QA * • Utility-Based Information Fusion – Perceived utility is a function of many different factors – Create and tune utility metrics, e.g.:

$$U = \operatorname{Argmax}_k \left[ F\big(\mathrm{Rel}(I, Q, T),\ \mathrm{Nov}(I, T, A),\ \mathrm{Ver}(S, \mathrm{Sup}(I, S)),\ \mathrm{Div}(S),\ \mathrm{Cmp}(I, A)\big),\ \mathrm{Cst}(I, A) \right]$$

where Rel = relevance, Nov = novelty, Ver = veracity, Sup = support, Div = diversity, Cmp = comprehensibility, Cst = cost; I: Info item, Q: Question, S: Source, T: Task context, A: Analyst. * Reference: Carnegie Mellon Univ. AQUAINT Briefing
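The slide leaves the combining function F unspecified. As a minimal sketch, the Python below assumes F is a weighted sum of the benefit factors with a weighted cost subtracted, and selects the candidate with the highest utility. The weights, the class and function names and the example scores are all hypothetical and would need tuning, as the slide notes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scores:
    """Per-item factor scores in [0, 1], named after the slide's abbreviations."""
    rel: float  # relevance
    nov: float  # novelty
    ver: float  # veracity / support
    div: float  # diversity
    cmp: float  # comprehensibility
    cst: float  # cost


# Hypothetical weights; a real system would tune these per task and analyst.
BENEFIT_WEIGHTS = {"rel": 0.4, "nov": 0.2, "ver": 0.2, "div": 0.1, "cmp": 0.1}
COST_WEIGHT = 0.5


def utility(s: Scores) -> float:
    """Assumed form of F: weighted benefits minus weighted cost."""
    benefit = sum(w * getattr(s, name) for name, w in BENEFIT_WEIGHTS.items())
    return benefit - COST_WEIGHT * s.cst


def best_item(candidates: dict) -> str:
    """Return the key of the candidate information item with maximal utility."""
    return max(candidates, key=lambda k: utility(candidates[k]))


candidates = {
    "report_a": Scores(rel=0.9, nov=0.5, ver=0.8, div=0.3, cmp=0.7, cst=0.2),
    "report_b": Scores(rel=0.4, nov=0.9, ver=0.5, div=0.6, cmp=0.6, cst=0.1),
}
print(best_item(candidates))
```

A linear form is easy to tune and to explain to an analyst, but it cannot express interactions such as "novelty only matters once relevance is high"; a different F would be needed for that.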

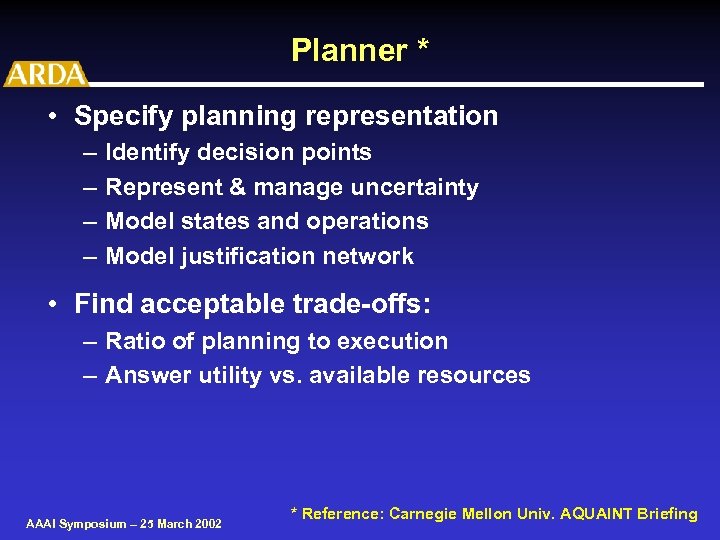

Planner * • Specify planning representation – Identify decision points – Represent & manage uncertainty – Model states and operations – Model justification network • Find acceptable trade-offs: – Ratio of planning to execution – Answer utility vs. available resources * Reference: Carnegie Mellon Univ. AQUAINT Briefing

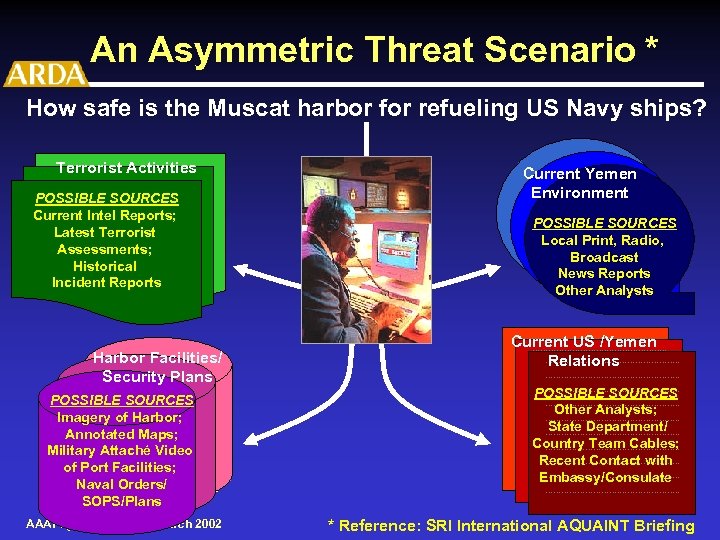

An Asymmetric Threat Scenario * How safe is the Muscat harbor for refueling US Navy ships? • Terrorist Activities – What recent terrorist activity/incidents in Yemen? Against US military world-wide? – What are the potential threats? – POSSIBLE SOURCES: Current Intel Reports; Latest Terrorist Assessments; Historical Incident Reports • Harbor Facilities / Security Plans – How secure is the Aden Harbor? – Are our security plans adequate? – POSSIBLE SOURCES: Imagery of Harbor; Annotated Maps; Military Attaché Video of Port Facilities; Naval Orders/SOPs/Plans • Current Yemen Environment – What is the current political/social/cultural climate in Yemen? In the region? – POSSIBLE SOURCES: Local Print, Radio, Broadcast News Reports; Other Analysts • Current US/Yemen Relations – What is the current state of US relations with Yemen? With other countries in the region? – POSSIBLE SOURCES: Other Analysts; State Department/Country Team Cables; Recent Contact with Embassy/Consulate * Reference: SRI International AQUAINT Briefing
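The scenario is in effect a question decomposition tree: one complex question, several simpler sub-questions, and candidate sources attached to each. The Python sketch below shows one possible data structure for it. It is not SRI's representation; the class, the function and the choice of one sub-question per topic area are assumptions, while the question and source wording is taken from the slide.

```python
from dataclasses import dataclass, field


@dataclass
class Question:
    """A node in a question decomposition tree."""
    text: str
    sources: list = field(default_factory=list)
    subquestions: list = field(default_factory=list)


def leaf_tasks(question):
    """Flatten the tree into (question_text, sources) pairs for the leaves.

    Leaves are the simpler questions that can be sent to a QA component;
    inner nodes are answered by combining their children's answers.
    """
    if not question.subquestions:
        return [(question.text, question.sources)]
    tasks = []
    for sub in question.subquestions:
        tasks.extend(leaf_tasks(sub))
    return tasks


root = Question(
    "How safe is the Muscat harbor for refueling US Navy ships?",
    subquestions=[
        Question("What recent terrorist activity/incidents in Yemen?",
                 ["Current Intel Reports", "Latest Terrorist Assessments",
                  "Historical Incident Reports"]),
        Question("How secure is the Aden Harbor?",
                 ["Imagery of Harbor", "Annotated Maps",
                  "Military Attaché Video of Port Facilities"]),
        Question("What is the current political/social/cultural climate in Yemen?",
                 ["Local Print, Radio, Broadcast News Reports", "Other Analysts"]),
        Question("What is the current state of US relations with Yemen?",
                 ["State Department/Country Team Cables",
                  "Recent Contact with Embassy/Consulate"]),
    ],
)

for text, sources in leaf_tasks(root):
    print(f"{text}\n  search: {'; '.join(sources)}")
```

Keeping the sources on each node makes the source-selection decision explicit and inspectable, which ties in with the deck's later emphasis on explaining the plan as well as the answer.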

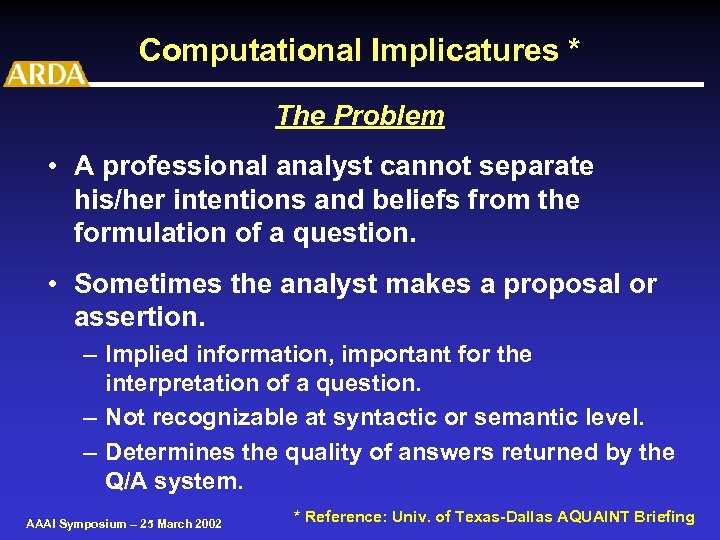

Computational Implicatures * The Problem • A professional analyst cannot separate his/her intentions and beliefs from the formulation of a question. • Sometimes the analyst makes a proposal or assertion. – Implied information, important for the interpretation of a question. – Not recognizable at syntactic or semantic level. – Determines the quality of answers returned by the Q/A system. * Reference: Univ. of Texas-Dallas AQUAINT Briefing

Example of Computational Implicature * “Will Prime Minister Mori survive the crisis?” • Implied belief: the position of the Prime Minister is in jeopardy. • Problem: none of the question words directly indicates danger. • Question expected answer type: survival • Implicature: DANGER * Reference: Univ. of Texas-Dallas AQUAINT Briefing

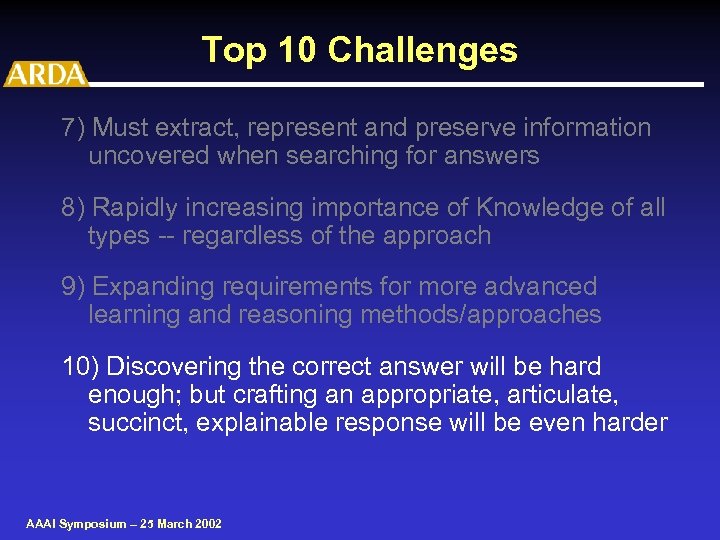

Top 10 Challenges 7) Must extract, represent and preserve information uncovered when searching for answers 8) Rapidly increasing importance of Knowledge of all types -- regardless of the approach 9) Expanding requirements for more advanced learning and reasoning methods/approaches 10) Discovering the correct answer will be hard enough; but crafting an appropriate, articulate, succinct, explainable response will be even harder
