- Number of slides: 18

Accurate Parsing

('they worry that air the shows , drink too much , whistle johnny b. goode and watch the other ropes , whistle johnny b. goode and watch closely and suffer through the sale', 2.1730387621600077e-11)

David Caley, Thomas Folz-Donahue, Rob Hall, Matt Marzilli
Computer Science Department

Accurate Parsing: Our Goal

Given a grammar:
  • For a sentence S, return the parse tree with the maximum probability conditioned on S: $\hat{t} = \arg\max_{t \in T} P(t \mid S)$, where T is the set of possible parse trees of S.
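
A short derivation may help connect this goal to the rule probabilities used later. It is a sketch that assumes a standard PCFG, in which the probability of a tree is the product of the probabilities of its productions; the slides do not state the model in this form. Because every tree in T yields exactly S, the joint probability P(t, S) equals P(t), and P(S) is constant across candidates:

```latex
\hat{t} = \arg\max_{t \in T} P(t \mid S)
        = \arg\max_{t \in T} \frac{P(t, S)}{P(S)}
        = \arg\max_{t \in T} P(t),
\qquad
P(t) = \prod_{(A \to \beta) \in t} P(A \to \beta \mid A)
```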

Talking Points

§ Using the Penn Treebank
  • Reading in n-ary trees
  • Finding head-tags within n-ary productions
  • Converting to binary trees
  • Inducing a CFG
§ Probabilistic CYK
  • Handling unary rules
  • Dealing with unknowns
  • Dealing with run times
    • Beam search, limiting depth of unary rules, further optimizations
§ Example Parses and Trees
§ Lexicalization Attempts

Using the Penn Treebank: Our Training Data

§ Contains tagged data and n-ary trees from a Wall Street Journal corpus.
§ Contains some information that the parser does not need.
§ Questionable tagging
  • (JJ the) ??
§ Example…

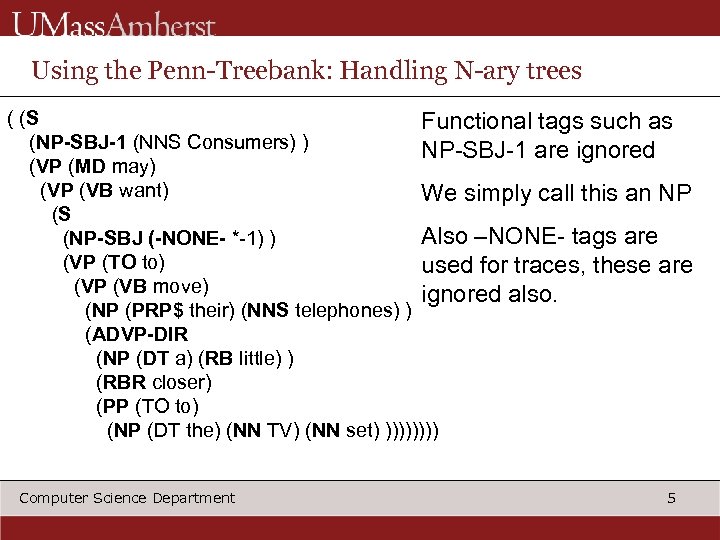

Using the Penn Treebank: Handling N-ary Trees

    ( (S
        (NP-SBJ-1 (NNS Consumers) )
        (VP (MD may)
          (VP (VB want)
            (S
              (NP-SBJ (-NONE- *-1) )
              (VP (TO to)
                (VP (VB move)
                  (NP (PRP$ their) (NNS telephones) )
                  (ADVP-DIR
                    (NP (DT a) (RB little) )
                    (RBR closer)
                    (PP (TO to)
                      (NP (DT the) (NN TV) (NN set) ))))

§ Functional tags such as NP-SBJ-1 are ignored. We simply call this an NP.
§ -NONE- tags are used for traces; these are ignored as well.
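
The clean-up described on this slide could be sketched as follows. This is an illustration under assumptions, not the authors' code: trees are represented as `(label, [children])` tuples with words as plain strings, the names `clean_label` and `strip_tree` are hypothetical, and dropping a constituent that contains nothing but traces is an assumption the slide does not confirm.

```python
import re


def clean_label(label):
    """Strip functional tags and indices, e.g. 'NP-SBJ-1' -> 'NP'."""
    if label.startswith("-"):
        # Labels such as -NONE- begin with a hyphen and are left untouched.
        return label
    return re.split(r"[-=]", label, maxsplit=1)[0]


def strip_tree(tree):
    """Return a cleaned copy of tree, or None if it covers only traces.

    tree is a (label, children) pair; each child is a word (str) or a subtree.
    """
    label, children = tree
    if label == "-NONE-":
        return None  # trace node: ignored
    kept = []
    for child in children:
        if isinstance(child, str):
            kept.append(child)
        else:
            cleaned = strip_tree(child)
            if cleaned is not None:
                kept.append(cleaned)
    if not kept:
        return None  # assumption: drop constituents left empty by trace removal
    return (clean_label(label), kept)
```

Applied to the embedded clause in the example, the empty `NP-SBJ` subject disappears and `ADVP-DIR` becomes `ADVP`.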

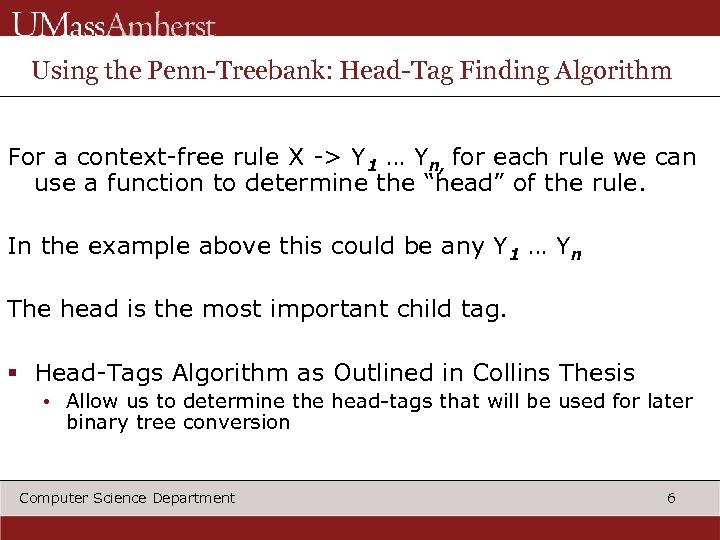

Using the Penn Treebank: Head-Tag Finding Algorithm

For each context-free rule $X \to Y_1 \dots Y_n$ we can use a function to determine the “head” of the rule. In the example above this could be any of $Y_1 \dots Y_n$. The head is the most important child tag.

§ Head-tag algorithm as outlined in Collins' thesis
  • Allows us to determine the head-tags that will be used for the later binary tree conversion

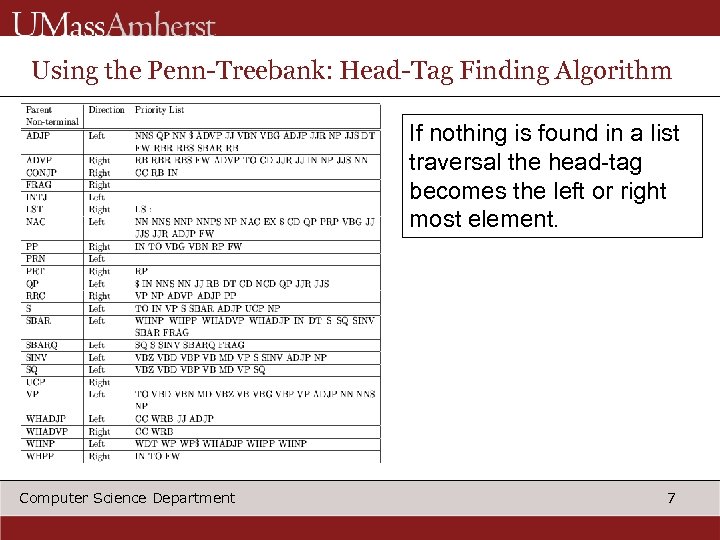

Using the Penn Treebank: Head-Tag Finding Algorithm

If nothing is found in a list traversal, the head-tag becomes the leftmost or rightmost element, depending on the traversal direction for that parent.
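
A table-driven traversal with this fallback might look like the sketch below. The two `HEAD_TABLE` entries are an illustrative subset written from memory and should be checked against the table in Collins' thesis; `find_head_index` and the default direction for unknown parents are assumptions made for the example.

```python
# parent label -> (search direction, priority list); illustrative subset only
HEAD_TABLE = {
    "VP": ("left", ["TO", "VBD", "VBN", "MD", "VBZ", "VB", "VBG", "VBP", "VP"]),
    "PP": ("right", ["IN", "TO", "VBG", "VBN", "RP", "FW"]),
}


def find_head_index(parent, child_tags):
    """Return the index of the head child among child_tags."""
    # Assumption: parents missing from the table default to a left-to-right scan.
    direction, priorities = HEAD_TABLE.get(parent, ("left", []))
    if direction == "left":
        order = list(range(len(child_tags)))
    else:
        order = list(range(len(child_tags) - 1, -1, -1))
    # Try each priority tag in turn, scanning the children in the given direction.
    for tag in priorities:
        for i in order:
            if child_tags[i] == tag:
                return i
    # Nothing matched: fall back to the leftmost or rightmost child.
    return order[0]
```

For example, `find_head_index("VP", ["MD", "VP"])` picks the modal because `MD` precedes `VP` in the priority list.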

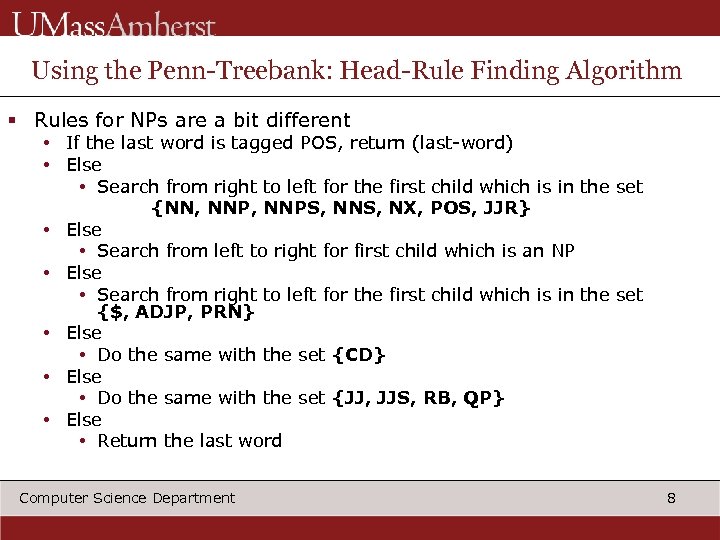

Using the Penn Treebank: Head-Rule Finding Algorithm

§ Rules for NPs are a bit different
  • If the last word is tagged POS, return the last word
  • Else search from right to left for the first child in the set {NN, NNPS, NNS, NX, POS, JJR}
  • Else search from left to right for the first child that is an NP
  • Else search from right to left for the first child in the set {$, ADJP, PRN}
  • Else do the same with the set {CD}
  • Else do the same with the set {JJ, JJS, RB, QP}
  • Else return the last word
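
The NP cascade above translates almost line for line into code. The sketch below copies the tag sets exactly as they appear on the slide; the function name `np_head_index` and the choice to work on a list of child tags are assumptions for illustration.

```python
def np_head_index(child_tags):
    """Return the index of the head child of an NP, following the slide's rules."""
    if not child_tags:
        raise ValueError("an NP needs at least one child")
    last = len(child_tags) - 1
    # Rule 1: a final possessive marker is the head.
    if child_tags[last] == "POS":
        return last
    right_to_left = range(last, -1, -1)
    left_to_right = range(last + 1)
    # Remaining rules: (scan order, accepted tags), tried in sequence.
    steps = [
        (right_to_left, {"NN", "NNPS", "NNS", "NX", "POS", "JJR"}),
        (left_to_right, {"NP"}),
        (right_to_left, {"$", "ADJP", "PRN"}),
        (right_to_left, {"CD"}),
        (right_to_left, {"JJ", "JJS", "RB", "QP"}),
    ]
    for order, wanted in steps:
        for i in order:
            if child_tags[i] in wanted:
                return i
    # Final fallback: the last word.
    return last
```

For a coordinated NP such as `["NP", "CC", "NP"]` the left-to-right NP rule selects the first conjunct.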

Using the Penn Treebank: Binary Tree Conversion

§ Now we put the head-tags to use
  • Necessary for using the CFG with probabilistic CYK
§ A general n-ary rule: $R \to L_i L_{i-1} \dots L_1 L_0 \; H \; R_0 R_1 \dots R_{i-1} R_i$
  • On the right side of the head-tag H we recursively split off the last element to make a new binary rule (left recursive): $[L_i L_{i-1} \dots L_1 L_0 \, H \, R_0 R_1 \dots R_{i-1}] \; R_i$
  • On the left side we do the same by removing the first element (right recursive): $L_i \; [L_{i-1} \dots L_1 L_0 \, H]$
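
The head-outward split can be sketched as a short recursive function. This is a minimal illustration, not the authors' implementation: the `(label, [children])` representation, the name `binarize`, and the `*` suffix for intermediate labels are all assumptions (the example trees later in the deck use a different labelling scheme).

```python
def binarize(label, children, head, inter=None):
    """Binarize one n-ary node around its head child.

    children: list of subtrees or tags; head: index of the head child.
    Intermediate nodes get the hypothetical label label + '*'.
    """
    inter = inter or label + "*"
    if len(children) <= 2:
        return (label, list(children))
    if head < len(children) - 1:
        # Siblings remain to the right of the head: split off the last one.
        rest = binarize(inter, children[:-1], head, inter)
        return (label, [rest, children[-1]])
    # Head is now the last child: split off the first left sibling.
    rest = binarize(inter, children[1:], head - 1, inter)
    return (label, [children[0], rest])
```

With `binarize("VP", ["VB", "NP", "PP"], 0)` the PP is split off first, leaving an intermediate `VP*` node over `VB NP`.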

Using the Penn Treebank: Grammar Induction Procedure

§ Once we have binary trees we can easily identify rules and record their frequencies
  • Identify every production and save it in a Python dictionary
§ Frequencies are cached in a local file and read in on subsequent executions
§ No immediate smoothing is done on the probabilities; the grammar is later trimmed to help with performance
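
A minimal sketch of this procedure follows, assuming trees are `(label, [children])` tuples with words as strings and that probabilities are plain relative frequencies per left-hand side. The function names and the use of `pickle` for the cache file are illustrative choices; the slides only say that a dictionary and a local file were used.

```python
import pickle
from collections import Counter


def count_productions(tree, counts):
    """Add every production in tree to counts, keyed by (lhs, rhs_tuple)."""
    label, children = tree
    rhs = tuple(c if isinstance(c, str) else c[0] for c in children)
    counts[(label, rhs)] += 1
    for child in children:
        if not isinstance(child, str):
            count_productions(child, counts)


def rule_probabilities(counts):
    """Unsmoothed relative frequency: count(lhs -> rhs) / count(lhs)."""
    lhs_totals = Counter()
    for (lhs, _), n in counts.items():
        lhs_totals[lhs] += n
    return {rule: n / lhs_totals[rule[0]] for rule, n in counts.items()}


def save_counts(counts, path):
    """Cache the frequency table so later runs can skip the treebank pass."""
    with open(path, "wb") as f:
        pickle.dump(dict(counts), f)


def load_counts(path):
    """Read a previously cached frequency table."""
    with open(path, "rb") as f:
        return Counter(pickle.load(f))
```

Storing raw counts rather than probabilities keeps the cache reusable when the grammar is trimmed afterwards, since probabilities can be recomputed from whatever rules remain.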

Probabilistic CYK: The Parsing Step

§ We use a probabilistic CYK implementation to parse sentences with our CFG and to assign probabilities to the final parse trees.
  • Useful for providing multiple parses and disambiguating sentences
§ New concerns
  • Unary rules and their lengths
  • Runtime (a result of the incredibly large grammar)
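
For reference, the core of probabilistic CYK over binary rules can be sketched in a few lines. This is a textbook-style illustration, not the authors' parser: it omits unary rules, unknown-word handling, and beam pruning, and the data layout (`lexicon`, `binary_rules`) and the name `pcyk` are assumptions.

```python
def pcyk(words, lexicon, binary_rules, start="S"):
    """Return (probability, tree) for the best parse of words, or None.

    lexicon:      {word: [(tag, prob), ...]}
    binary_rules: {(B, C): [(A, prob), ...]} for rules A -> B C
    """
    n = len(words)
    # chart[i][j] maps a label to its best (prob, tree) over words[i:j].
    chart = [[{} for _ in range(n + 1)] for _ in range(n + 1)]
    for i, word in enumerate(words):
        for tag, p in lexicon.get(word, []):
            chart[i][i + 1][tag] = (p, (tag, word))
    for width in range(2, n + 1):
        for i in range(n - width + 1):
            j = i + width
            cell = chart[i][j]
            for k in range(i + 1, j):  # split point
                for b, (pb, tb) in chart[i][k].items():
                    for c, (pc, tc) in chart[k][j].items():
                        for a, pr in binary_rules.get((b, c), []):
                            p = pr * pb * pc
                            if p > cell.get(a, (0.0, None))[0]:
                                cell[a] = (p, (a, tb, tc))
    return chart[0][n].get(start)
```

Indexing the binary rules by their right-hand side means each pair of child labels is looked up once, which matters when the grammar is very large.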

Probabilistic CYK: Handling Unary Rules within the Grammar

§ Unary rules of the form X -> Y or X -> a are ubiquitous in our grammar
  • The closure of a constituent is needed to determine all the unary productions that can lead to that constituent.
  • Def: $\mathrm{Closure}(X) = \bigcup \{\, \{Y\} \cup \mathrm{Closure}(Y) \mid Y \to X \,\}$, i.e. all nonterminals that are reachable from X by unary rules.
  • We implement this iteratively, maintain a closed list, and limit the depth to prevent possible infinite recursion.
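
An iterative closure with a closed list and a depth limit might be written as below. It is a sketch under assumptions: `unary_parents` maps each symbol to the left-hand sides of the unary rules that produce it, the default depth of 3 is arbitrary, and the function returns only the set of reachable labels without tracking probabilities.

```python
def unary_closure(x, unary_parents, max_depth=3):
    """Return the labels that reach x through at most max_depth unary rules.

    unary_parents: {child: [parent, ...]} for unary rules parent -> child.
    x itself is excluded from the result.
    """
    closed = {x}      # closed list: labels already expanded or queued
    frontier = [x]
    for _ in range(max_depth):
        next_frontier = []
        for child in frontier:
            for parent in unary_parents.get(child, ()):
                if parent not in closed:
                    closed.add(parent)
                    next_frontier.append(parent)
        if not next_frontier:
            break
        frontier = next_frontier
    closed.discard(x)
    return closed
```

The closed list guarantees termination on cycles such as NP -> S -> NP even without the depth limit; the limit additionally bounds the work per chart cell.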

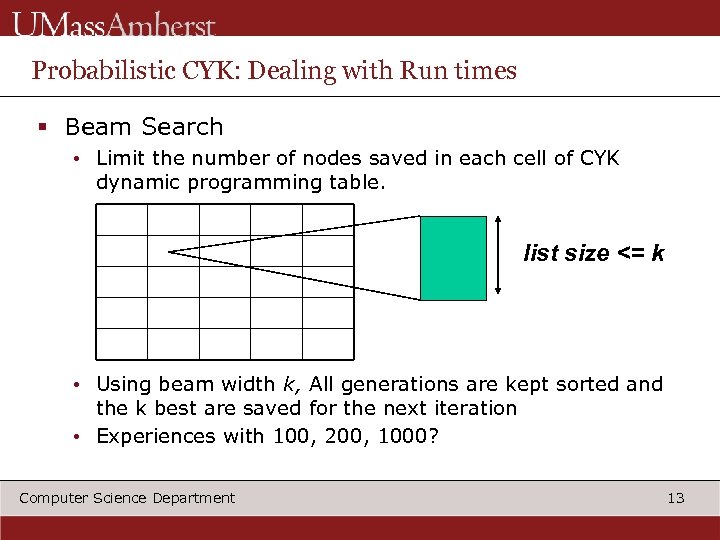

Probabilistic CYK: Dealing with Run Times

§ Beam search
  • Limit the number of nodes saved in each cell of the CYK dynamic programming table: list size <= k
  • Using beam width k, all generations are kept sorted and the k best are saved for the next iteration
  • Experiences with 100, 200, 1000?
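
Pruning a chart cell to the k most probable entries can be sketched with `heapq.nlargest` from the standard library. The cell layout `{label: (prob, tree)}` and the name `prune_cell` are assumptions for the example; the slides describe keeping a sorted list instead.

```python
import heapq


def prune_cell(cell, k):
    """Keep only the k highest-probability entries of a chart cell.

    cell: {label: (prob, tree)}
    """
    if len(cell) <= k:
        return dict(cell)
    best = heapq.nlargest(k, cell.items(), key=lambda item: item[1][0])
    return dict(best)
```

Selecting the k best this way costs O(n log k) per cell rather than a full sort, which may help when cells are much larger than the beam.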

Probabilistic CYK: Dealing with Run Times

§ Another optimization was to remove all production rules with frequency < fc
  • Used fc = 1, 2…
§ Also limited the depth when calculating the unary rules (closure) of a constituent present in our CYK table
  • Extensive unary rules were found to greatly slow down our parser
  • Also, long chains of unary productions have extremely low probabilities; they are commonly pruned by beam search anyway
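
The frequency cutoff amounts to a filter over the rule counts. The sketch below assumes counts are stored as `{(lhs, rhs): frequency}`; `trim_rules` is a hypothetical name, and whether the authors renormalised probabilities after trimming is not stated.

```python
def trim_rules(counts, fc):
    """Drop every production whose frequency is below the cutoff fc."""
    return {rule: n for rule, n in counts.items() if n >= fc}
```

Since every observed rule has a count of at least 1, `fc = 1` keeps the whole grammar and `fc = 2` removes the rules seen only once.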

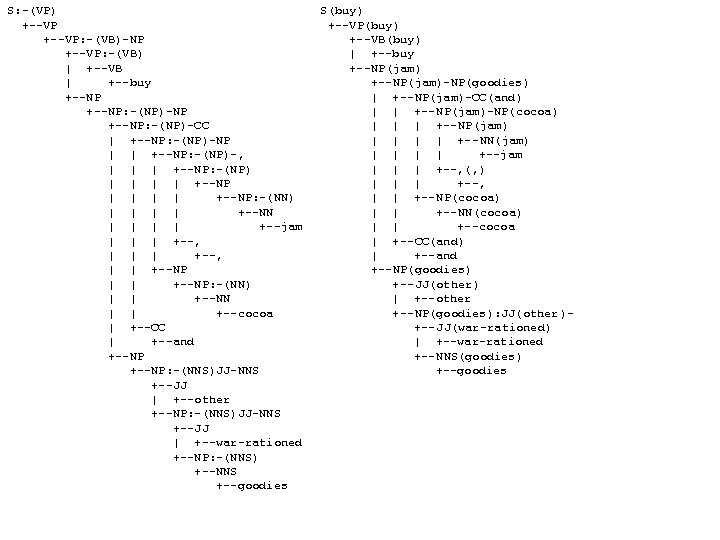

Probabilistic CYK: Random Sentences and Example Trees

§ Some random sentences from our grammar with associated probabilities.
  • ('buy jam , cocoa and other war-rationed goodies', 0.0046296296294)
  • ('cartoonist garry trudeau refused to impose sanctions , including petroleum equipment , which go into semiannual payments , including watches , including three , which the federal government , the same company formed by mrs. yeargin school district would be confidential', 2.9911073159300768e-33)
  • ('33 men selling individual copies selling securities at the central plaza hotel die', 7.4942533128815141e-08)
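
Sentences like these can be produced by sampling top-down from the grammar. The sketch below is an assumption about how that might be done, not the authors' generator: `generate` and the grammar layout are hypothetical, and the returned value is the probability of the sampled derivation, which may differ from how the slide's figures were computed.

```python
import random


def generate(symbol, grammar, rng):
    """Sample a word sequence from symbol; return (words, derivation_prob).

    grammar: {lhs: [(rhs_tuple, prob), ...]}; any symbol that is not a key
    of grammar is treated as a word. Recursive grammars may recurse deeply.
    """
    if symbol not in grammar:
        return [symbol], 1.0
    expansions = grammar[symbol]
    weights = [p for _, p in expansions]
    rhs, prob = rng.choices(expansions, weights=weights)[0]
    words = []
    for child in rhs:
        child_words, child_prob = generate(child, grammar, rng)
        words.extend(child_words)
        prob *= child_prob
    return words, prob
```

Passing an explicit `random.Random(seed)` instance as `rng` makes a sampled sentence reproducible.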

Probabilistic CYK: Random Sentences and Example Trees

  • ('young people believe criticism is led by south korea', 1.3798001044090654e-11)
  • ('the purchasing managers believe the art is the often amusing , often supercilious , even vicious chronicle of bank of the issue yen-support intervention', 7.1905882731776209e-1)

    S:-(VP)
    +--VP:-(VB)-NP
       +--VP:-(VB)
       |  +--VB
       |     +--buy
       +--NP:-(NP)-NP
          +--NP:-(NP)-CC
          |  +--NP:-(NP)-NP
          |  |  +--NP:-(NP)-,
          |  |  |  +--NP:-(NP)
          |  |  |  |  +--NP:-(NN)
          |  |  |  |     +--NN
          |  |  |  |        +--jam
          |  |  |  +--,
          |  |  +--NP:-(NN)
          |  |     +--NN
          |  |        +--cocoa
          |  +--CC
          |     +--and
          +--NP:-(NNS)JJ-NNS
             +--JJ
             |  +--other
             +--NP:-(NNS)JJ-NNS
                +--JJ
                |  +--war-rationed
                +--NP:-(NNS)
                   +--NNS
                      +--goodies

    S(buy)
    +--VP(buy)
       +--VB(buy)
       |  +--buy
       +--NP(jam)-NP(goodies)
          +--NP(jam)-CC(and)
          |  +--NP(jam)-NP(cocoa)
          |  |  +--NP(jam)
          |  |  |  +--NN(jam)
          |  |  |     +--jam
          |  |  +--,(,)
          |  |  |  +--,
          |  |  +--NP(cocoa)
          |  |     +--NN(cocoa)
          |  |        +--cocoa
          |  +--CC(and)
          |     +--and
          +--NP(goodies)
             +--JJ(other)
             |  +--other
             +--NP(goodies): JJ(other)
                +--JJ(war-rationed)
                |  +--war-rationed
                +--NNS(goodies)
                   +--goodies

Accurate Parsing Conclusion

§ Massive lexicalized grammar
§ Working probabilistic parser
§ Future work
  • Handle sparsity
  • Smooth probabilities
