- Number of slides: 41

56th Northeast Quality Council Conference, Mansfield, Massachusetts, October 17-18, 2006
The Structured Testing Methodology for Software Quality Analyses of Networking Systems
Vladimir Riabov, Ph.D., Associate Professor, Department of Mathematics & Computer Science, Rivier College, Nashua, NH
E-mail: vriabov@rivier.edu
56th NEQC Conference, 2006. Complexity Metrics for Networking Software Studies

Developing Complex Computer Systems
“If you don’t know where you’re going, any road will do.” - Chinese Proverb
“If you don’t know where you are, a map won’t help.” - Watts S. Humphrey
“You can’t improve what you can’t measure.” - Tim Lister
Agenda:
• Structured Software Testing Methodology & Graph Theory: Approach and Tools;
• McCabe’s Software Complexity Analysis Techniques;
• Results of Code Complexity Analysis for two industrial projects in Networking;
• Study of Networking Protocols Implementation;
• Predicting Code Errors;
• Test and Code Coverage;
• Conclusion: What have the Graph Theory and Structured Testing Methodology done for us?

McCabe’s Structured Testing Methodology: Approach and Tools
• McCabe’s Structured Testing Methodology:
  - is a unique methodology for software testing developed in 1976 [IEEE Transactions on Software Engineering, Vol. SE-2, No. 4, 1976, pp. 308-320];
  - is based on the Theory of Graphs;
  - was approved as the NIST Standard (1996) in structured testing;
  - has been a leading tool in the computer, IT, and aerospace industries (HP, GTE, AT&T, Alcatel, GIG, Boeing, NASA, etc.) since 1977;
  - provides Code Coverage Capacity.
• Author’s experience with McCabe IQ Tools since 1998:
  - led three projects in the networking industry that required Code Analysis, Code Coverage, and Test Coverage;
  - completed BCN Code Analysis with McCabe Tools;
  - completed BSN Code Analysis with McCabe Tools;
  - studied BSN-OSPF Code Coverage & Test Coverage.

McCabe’s Publication on the Structured Testing Methodology (1976)
NIST Standard on the Structured Testing Methodology (1996)

McCabe’s Structured Testing Methodology
• The key requirement of structured testing is that all decision outcomes must be exercised independently during testing.
• The number of tests required for a software module is equal to the cyclomatic complexity of that module.
• Software complexity is measured by metrics:
  - cyclomatic complexity, v
  - essential complexity, ev
  - module design complexity, iv
  - system design complexity, S0 = Σ iv (sum over all modules)
  - system integration complexity, S1 = S0 - N + 1 for N modules
  - Halstead metrics, and 52 more metrics.
• The testing methodology makes it possible to identify unreliable and unmaintainable code, predict the number of code errors and the maintenance effort, and develop strategies for unit/module testing, integration testing, and test/code coverage.
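
The two system-level metrics follow directly from the per-module iv values. The sketch below is only an illustration of that arithmetic, assuming S0 is the sum of iv over all modules; the function names are hypothetical and are not part of any McCabe tool.

```c
#include <assert.h>
#include <stddef.h>

/* System design complexity S0: sum of the module design complexities iv. */
long system_design_complexity(const int *iv, size_t n_modules)
{
    long s0 = 0;
    for (size_t i = 0; i < n_modules; ++i) {
        assert(iv[i] >= 1);          /* iv of any module is at least 1 */
        s0 += iv[i];
    }
    return s0;
}

/* System integration complexity S1 = S0 - N + 1 for N modules. */
long system_integration_complexity(const int *iv, size_t n_modules)
{
    assert(n_modules > 0);
    return system_design_complexity(iv, n_modules) - (long)n_modules + 1;
}
```

For three modules with iv = 3, 1, and 2, this gives S0 = 6 and S1 = 4.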

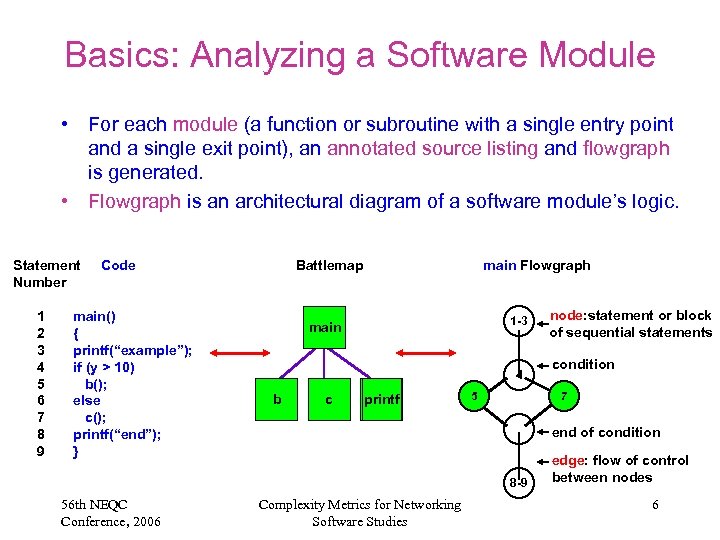

Basics: Analyzing a Software Module
• For each module (a function or subroutine with a single entry point and a single exit point), an annotated source listing and a flowgraph are generated.
• A flowgraph is an architectural diagram of a software module’s logic.
Statement number and code:
    1  main()
    2  {
    3      printf("example");
    4      if (y > 10)
    5          b();
    6      else
    7          c();
    8      printf("end");
    9  }
Battlemap: main calls b, c, and printf.
Flowgraph of main: node 1-3; node 4 (condition); nodes 5 and 7; node 8-9 (end of condition).
• Node: a statement or block of sequential statements.
• Edge: flow of control between nodes.
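
One way to make the node and edge definitions concrete is to write the example flowgraph as an edge list. This is a minimal sketch, not the tool's internal representation: the node numbering 0..4 and all identifiers are assumptions, and the complexity formula v = e - n + 2 is the one quoted elsewhere in this deck.

```c
#include <assert.h>
#include <stddef.h>

/* One directed edge: control passes from node `from` to node `to`. */
struct edge { int from; int to; };

/* Nodes of the example main(), named after their statement numbers. */
enum { N_1_3, N_4, N_5, N_7, N_8_9, NODE_COUNT };

static const struct edge main_flowgraph[] = {
    { N_1_3, N_4   },   /* entry block falls into the condition   */
    { N_4,   N_5   },   /* y > 10 is true: call b()               */
    { N_4,   N_7   },   /* y > 10 is false: call c()              */
    { N_5,   N_8_9 },   /* both branches rejoin at printf("end")  */
    { N_7,   N_8_9 },
};

/* Edges-and-nodes method: v = e - n + 2. */
int cyclomatic_complexity(size_t edge_count, size_t node_count)
{
    /* a connected flowgraph has at least n - 1 edges */
    assert(node_count > 0 && edge_count + 1 >= node_count);
    return (int)edge_count - (int)node_count + 2;
}

/* v of the example flowgraph above: 5 edges, 5 nodes. */
int main_flowgraph_complexity(void)
{
    size_t e = sizeof main_flowgraph / sizeof main_flowgraph[0];
    return cyclomatic_complexity(e, NODE_COUNT);
}
```

With five edges and five nodes the example has v = 2, matching its single if/else decision.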

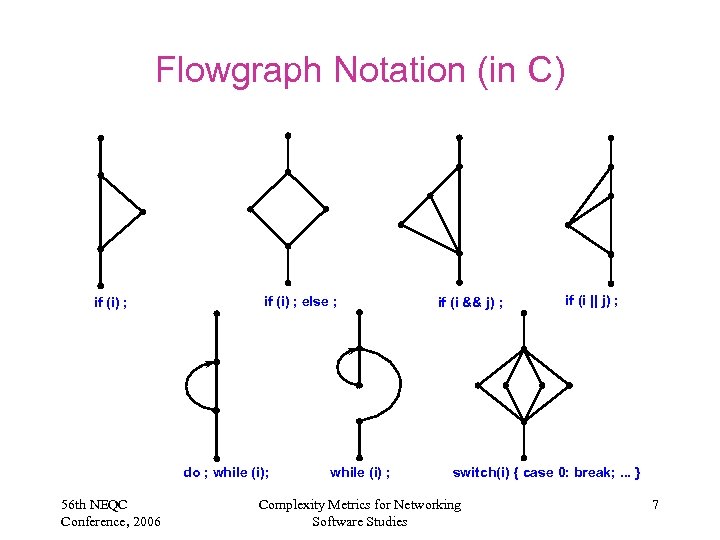

Flowgraph Notation (in C)
• if (i) ; else ;
• do ; while (i);
• while (i) ;
• if (i && j) ;
• if (i || j) ;
• switch(i) { case 0: break; ... }

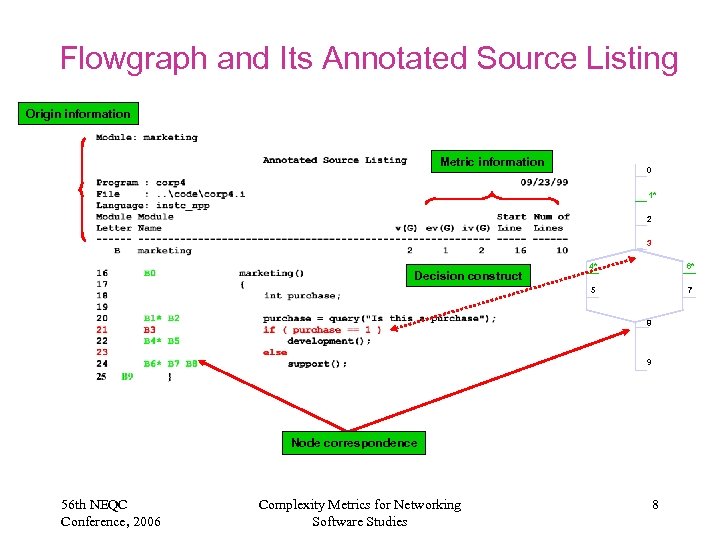

Flowgraph and Its Annotated Source Listing
Figure callouts: origin information, metric information, decision construct, node correspondence (flowgraph nodes 0-9).

Would you buy a used car from this software?
• Problem: There are size and complexity boundaries beyond which software becomes hopeless:
  - too error-prone to use;
  - too complex to fix;
  - too large to redevelop.
• Solution: Control complexity during development and maintenance.
  - Stay away from the boundaries.

Important Complexity Measures
• Cyclomatic complexity: v = e - n + 2 (e = edges; n = nodes)
  - Amount of decision logic
• Essential complexity: ev
  - Amount of poorly structured logic
• Module design complexity: iv
  - Amount of logic involved with subroutine calls
• System design complexity: S0 = Σ iv (sum over all modules)
  - Number of independent unit (module) tests for a system
• System integration complexity: S1 = S0 - N + 1
  - Number of integration tests for a system of N modules

Cyclomatic Complexity
• Cyclomatic complexity, v, is a measure of the decision logic of a software module. It:
  - applies to decision logic embedded within written code;
  - is derived from predicates in decision logic;
  - is calculated for each module in the Battlemap;
  - grows from 1 to a high but finite number, based on the amount of decision logic;
  - is correlated with software quality and testing quantity: units with higher v (v > 10) are less reliable and require high levels of testing.

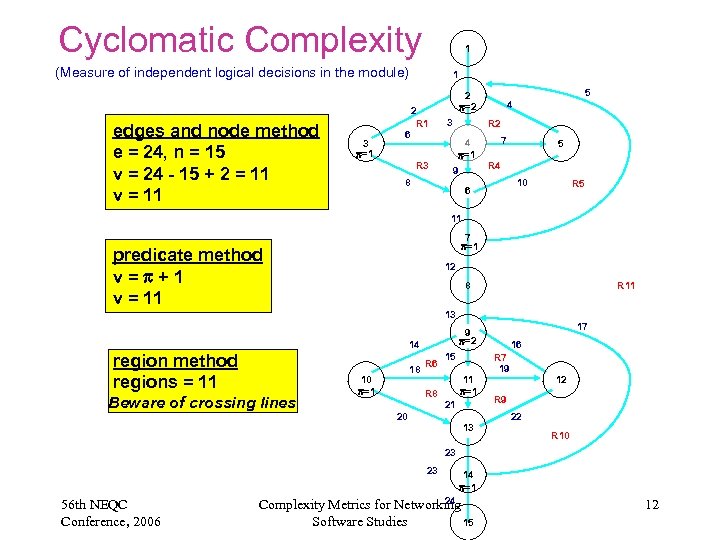

Cyclomatic Complexity (measure of independent logical decisions in the module)
Figure: one flowgraph evaluated by three methods, each giving v = 11.
• Edges-and-nodes method: e = 24, n = 15, v = 24 - 15 + 2 = 11.
• Predicate method: v = (sum of predicates) + 1 = 11.
• Region method: regions = 11 (beware of crossing lines).
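
The agreement between the edges-and-nodes method and the predicate method can be checked on any single-entry, single-exit flowgraph. The sketch below assumes that a node with k outgoing edges counts as k - 1 predicates; the identifiers and the 64-node limit are hypothetical choices, and the slide's own 15-node graph is not reproduced here.

```c
#include <assert.h>
#include <stddef.h>

struct edge { int from; int to; };

enum { MAX_NODES = 64 };   /* arbitrary limit for this sketch */

/* Edges-and-nodes method: v = e - n + 2. */
int v_by_edges_and_nodes(size_t edge_count, size_t node_count)
{
    return (int)edge_count - (int)node_count + 2;
}

/* Predicate method: v = (sum of predicates) + 1, where a node with
 * k > 1 outgoing edges contributes k - 1 predicates. */
int v_by_predicates(const struct edge *edges, size_t edge_count,
                    size_t node_count)
{
    int out_degree[MAX_NODES] = {0};
    int predicates = 0;

    assert(node_count <= MAX_NODES);
    for (size_t i = 0; i < edge_count; ++i) {
        assert(edges[i].from >= 0 && (size_t)edges[i].from < node_count);
        out_degree[edges[i].from]++;
    }
    for (size_t node = 0; node < node_count; ++node)
        if (out_degree[node] > 1)
            predicates += out_degree[node] - 1;
    return predicates + 1;
}
```

For a while loop that wraps an if/else (7 nodes, 8 edges, 2 decision nodes), both functions return 3.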

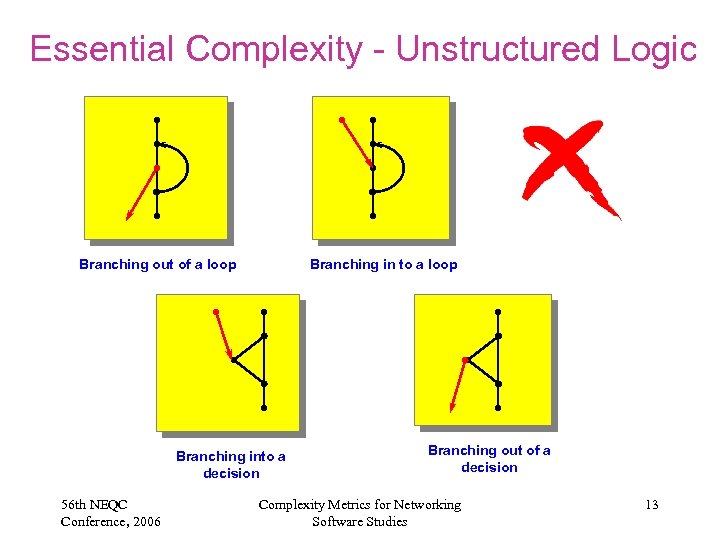

Essential Complexity - Unstructured Logic
Figure panels:
• Branching out of a loop
• Branching into a loop
• Branching into a decision
• Branching out of a decision
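
As an illustration of the first construct, the two functions below perform the same linear search. The first leaves the loop through a goto, which is a branch out of a loop in the slide's terms; the second keeps a single loop entry and exit. Both functions are hypothetical examples written for this note, and no ev values are claimed for them.

```c
#include <assert.h>
#include <stddef.h>

/* Unstructured: the goto jumps out of the middle of the loop. */
int find_unstructured(const int *a, size_t n, int key)
{
    int index = -1;
    assert(a != NULL || n == 0);
    for (size_t i = 0; i < n; ++i) {
        if (a[i] == key) {
            index = (int)i;
            goto done;              /* branch out of the loop */
        }
    }
done:
    return index;
}

/* Structured: the loop condition alone decides when the loop ends. */
int find_structured(const int *a, size_t n, int key)
{
    int index = -1;
    size_t i = 0;
    assert(a != NULL || n == 0);
    while (i < n && index < 0) {
        if (a[i] == key)
            index = (int)i;
        ++i;
    }
    return index;
}
```

Both versions return the index of the first match, or -1 when the key is absent.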

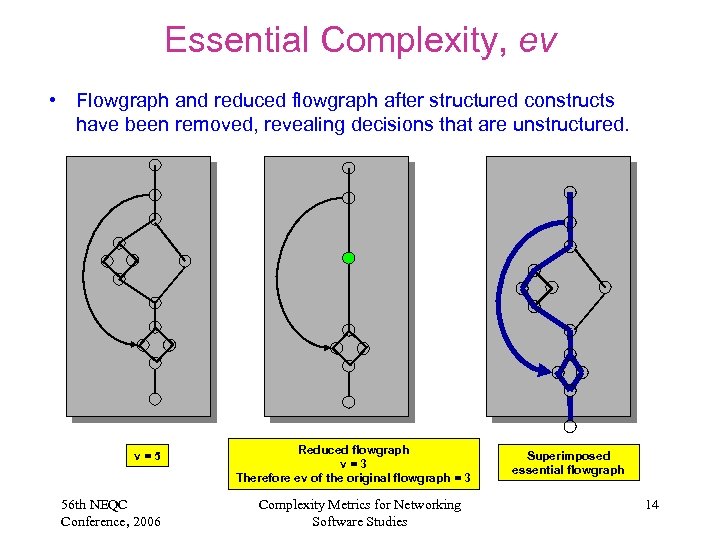

Essential Complexity, ev
• Flowgraph and reduced flowgraph after structured constructs have been removed, revealing decisions that are unstructured.
Figure panels: original flowgraph (v = 5); reduced flowgraph (v = 3); superimposed essential flowgraph.
Therefore, ev of the original flowgraph = 3.

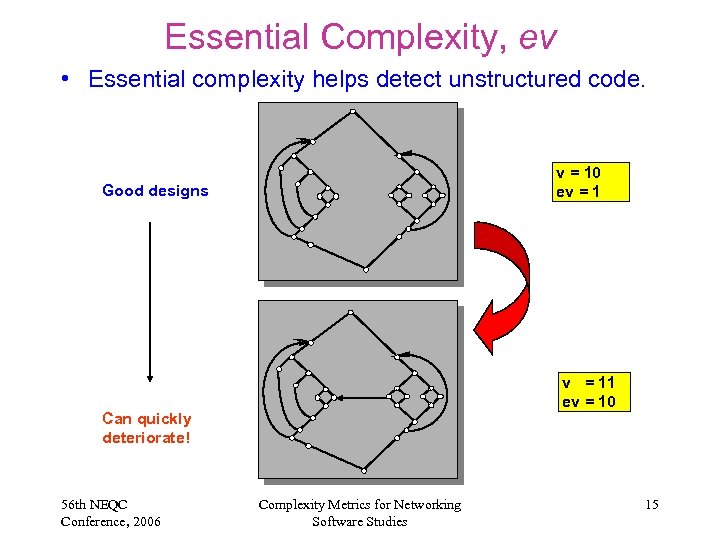

Essential Complexity, ev
• Essential complexity helps detect unstructured code.
• Good designs (v = 10, ev = 1) can quickly deteriorate (v = 11, ev = 10)!

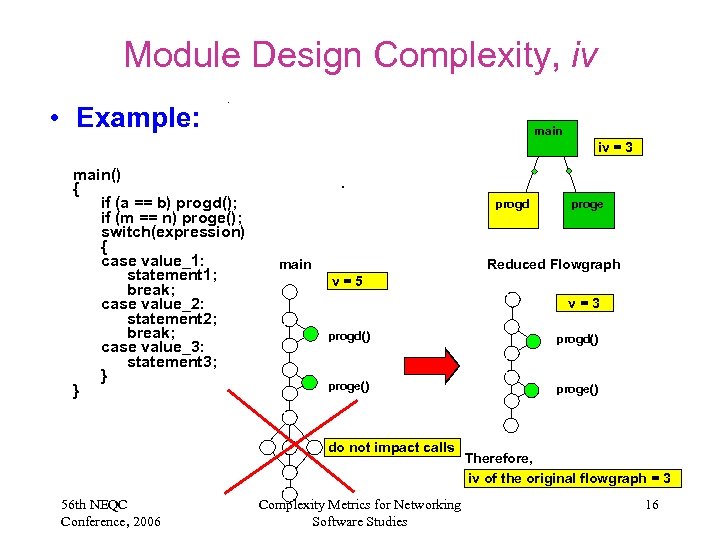

Module Design Complexity, iv
• Example: main, iv = 3
    main()
    {
        if (a == b) progd();
        if (m == n) proge();
        switch (expression)
        {
            case value_1: statement 1; break;
            case value_2: statement 2; break;
            case value_3: statement 3;
        }
    }
Battlemap: main calls progd() and proge().
Flowgraph of main: v = 5. Reduced flowgraph, with the decisions that do not impact calls removed: v = 3.
Therefore, iv of the original flowgraph = 3.
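
The two values on this slide can be reproduced by counting decisions. This is a reading inferred from the slide's own numbers, not a statement of how the McCabe tool counts: each `if` is taken as one binary decision and the three-outcome `switch` as two.

$$
\begin{aligned}
v  &= \underbrace{1}_{\texttt{if (a == b)}} + \underbrace{1}_{\texttt{if (m == n)}} + \underbrace{2}_{\texttt{switch}} + 1 = 5,\\
iv &= \underbrace{1}_{\texttt{if (a == b)}} + \underbrace{1}_{\texttt{if (m == n)}} + 1 = 3.
\end{aligned}
$$

Under this reading the `switch` drops out of iv because none of its branches calls another module.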

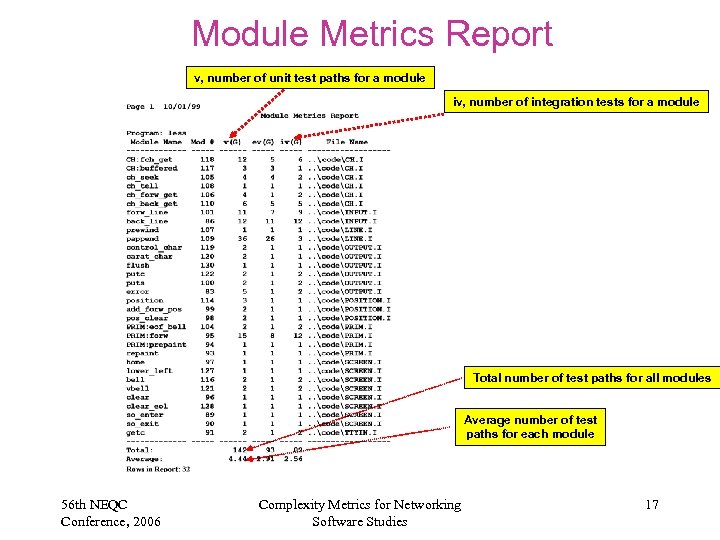

Module Metrics Report
Report callouts:
• v, number of unit test paths for a module
• iv, number of integration tests for a module
• Total number of test paths for all modules
• Average number of test paths for each module

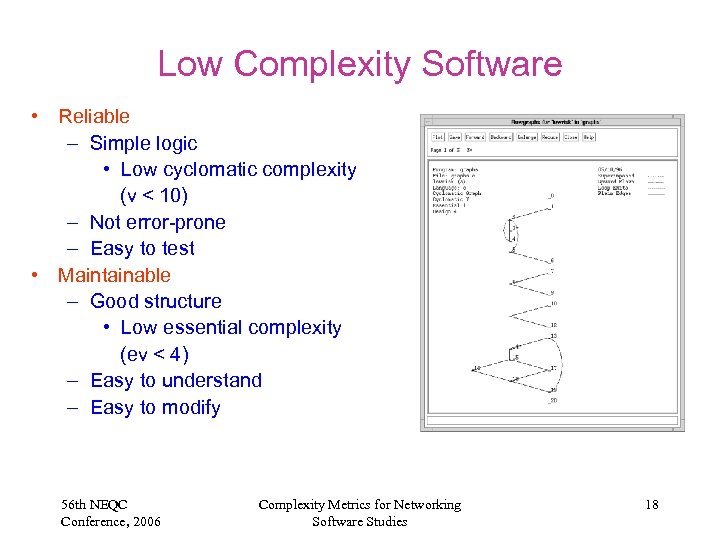

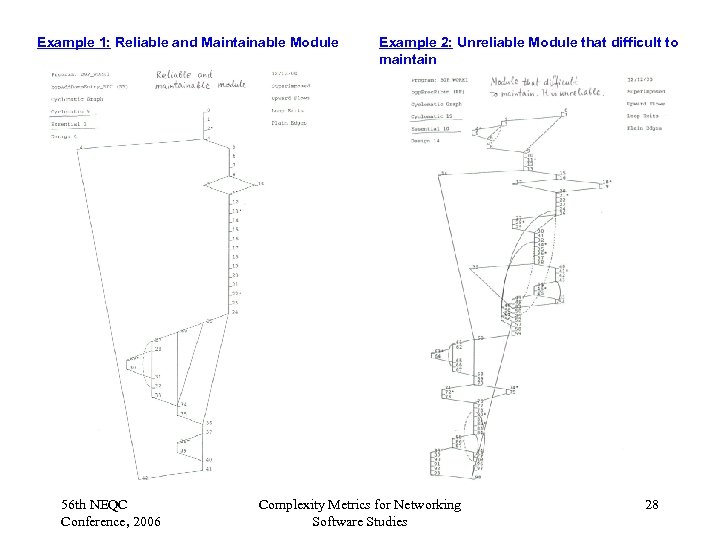

Low Complexity Software
• Reliable
  - Simple logic: low cyclomatic complexity (v < 10)
  - Not error-prone
  - Easy to test
• Maintainable
  - Good structure: low essential complexity (ev < 4)
  - Easy to understand
  - Easy to modify

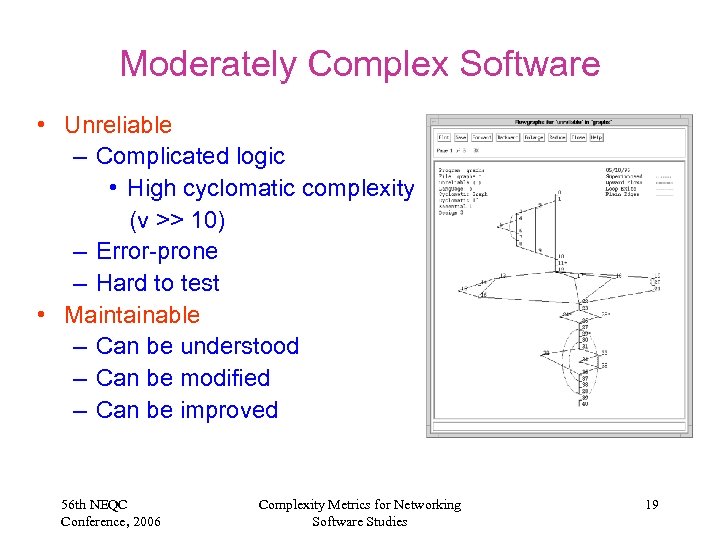

Moderately Complex Software
• Unreliable
  - Complicated logic: high cyclomatic complexity (v >> 10)
  - Error-prone
  - Hard to test
• Maintainable
  - Can be understood
  - Can be modified
  - Can be improved

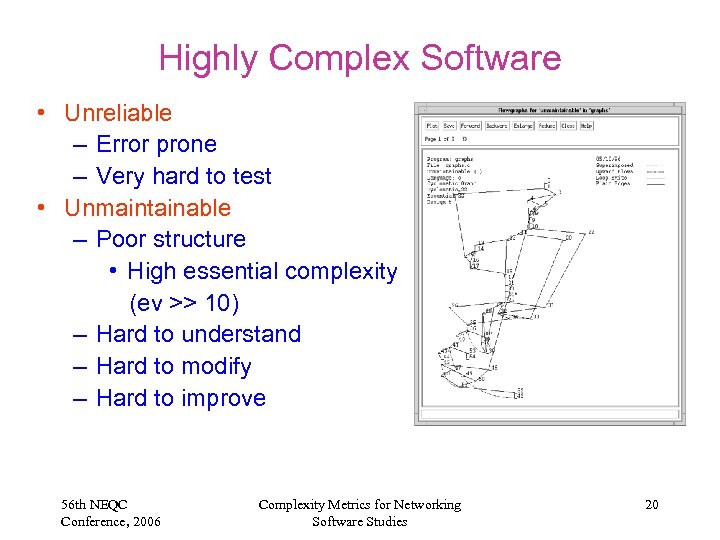

Highly Complex Software
• Unreliable
  - Error-prone
  - Very hard to test
• Unmaintainable
  - Poor structure: high essential complexity (ev >> 10)
  - Hard to understand
  - Hard to modify
  - Hard to improve
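
The reliability and maintainability categories can be expressed as a small classifier. The sketch below assumes the cut-offs used in the project analyses of this deck (unreliable when v > 10, unmaintainable when ev > 4); how the boundary values 10 and 4 themselves should be treated is not stated on the slides, so that choice, like all identifiers here, is an assumption.

```c
#include <assert.h>

enum module_risk {
    RELIABLE_MAINTAINABLE,
    UNRELIABLE_MAINTAINABLE,
    RELIABLE_UNMAINTAINABLE,
    UNRELIABLE_UNMAINTAINABLE
};

enum { V_LIMIT = 10, EV_LIMIT = 4 };   /* assumed cut-offs */

/* Classify one module from its cyclomatic (v) and essential (ev) complexity. */
enum module_risk classify_module(int v, int ev)
{
    assert(v >= 1 && ev >= 1 && ev <= v);   /* 1 <= ev <= v by definition */

    int unreliable = v > V_LIMIT;
    int unmaintainable = ev > EV_LIMIT;

    if (unreliable && unmaintainable)
        return UNRELIABLE_UNMAINTAINABLE;
    if (unreliable)
        return UNRELIABLE_MAINTAINABLE;
    if (unmaintainable)
        return RELIABLE_UNMAINTAINABLE;
    return RELIABLE_MAINTAINABLE;
}
```

With these cut-offs, the deck's "good design" example (v = 10, ev = 1) is classified as reliable and maintainable, and its deteriorated counterpart (v = 11, ev = 10) as unreliable and unmaintainable.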

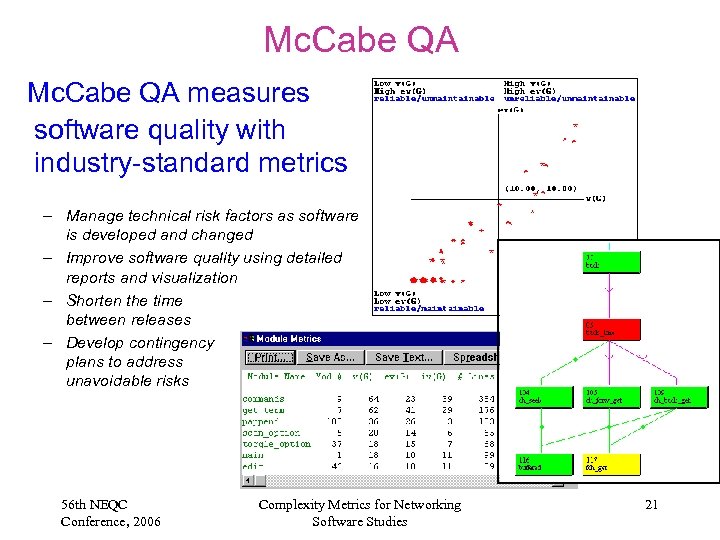

McCabe QA measures software quality with industry-standard metrics
- Manage technical risk factors as software is developed and changed
- Improve software quality using detailed reports and visualization
- Shorten the time between releases
- Develop contingency plans to address unavoidable risks

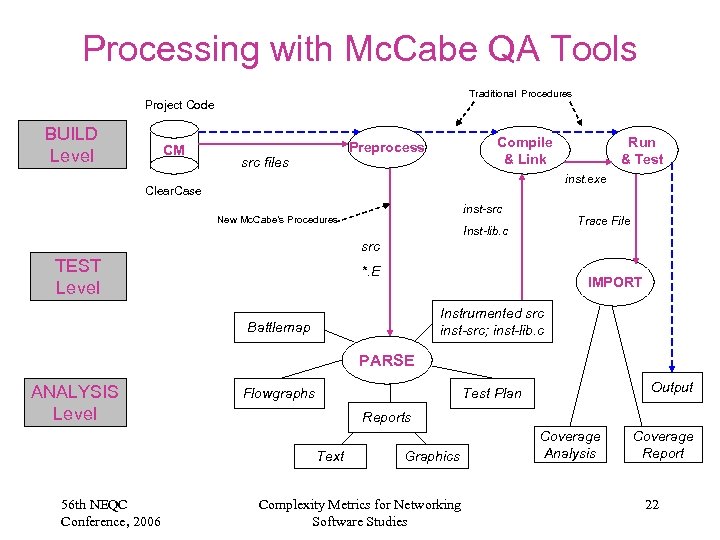

Processing with McCabe QA Tools
Diagram labels:
• Traditional Procedures (BUILD level): Project Code; ClearCase CM; Preprocess src files; Compile & Link; Run & Test (inst.exe).
• New McCabe Procedures (TEST level): *.E files; IMPORT; instrumented src (inst-src; inst-lib.c); Trace File.
• New McCabe Procedures (ANALYSIS level): PARSE; Battlemap; Flowgraphs; Reports (text and graphics output); Test Plan; Coverage Analysis; Coverage Report.
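
The idea behind the instrumented source and trace file can be sketched in a few lines. This is a hypothetical illustration only, not the instrumentation that McCabe tools generate: the `probe` function, the in-memory `probe_hit` array standing in for a trace file, and the sample module are all assumptions.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

enum { PROBE_COUNT = 5 };                     /* one probe per flowgraph node */
static unsigned char probe_hit[PROBE_COUNT];  /* stand-in for a trace file    */

/* Record that the node with this id was executed. */
static void probe(int id)
{
    assert(id >= 0 && id < PROBE_COUNT);
    probe_hit[id] = 1;
}

static void b(void) { }
static void c(void) { }

/* Instrumented copy of a small module: every node reports when it runs. */
void instrumented_module(int y)
{
    probe(0);                        /* entry block */
    probe(1);                        /* condition   */
    if (y > 10) { probe(2); b(); }
    else        { probe(3); c(); }
    probe(4);                        /* exit block  */
}

/* Clear the recorded trace before a new test session. */
void reset_trace(void)
{
    memset(probe_hit, 0, sizeof probe_hit);
}

/* Number of distinct nodes executed since the last reset. */
size_t nodes_covered(void)
{
    size_t hit = 0;
    for (size_t i = 0; i < (size_t)PROBE_COUNT; ++i)
        hit += probe_hit[i];
    return hit;
}
```

A single call with y = 11 covers four of the five nodes; adding a call with y = 5 covers the remaining branch.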

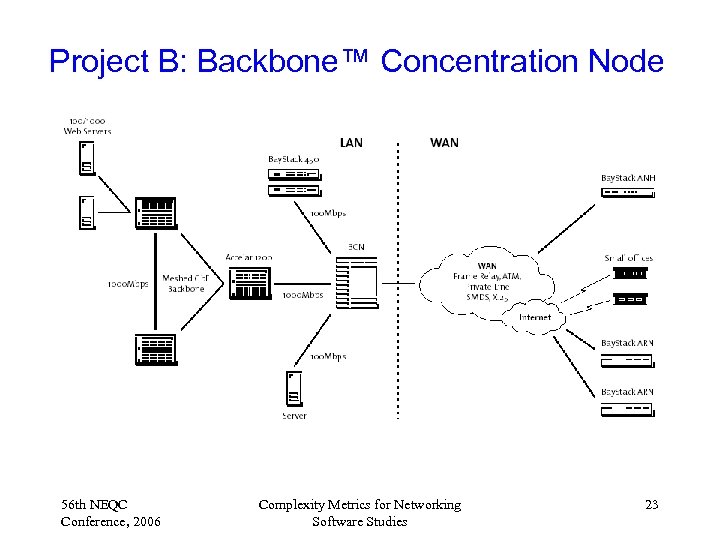

Project B: Backbone™ Concentration Node

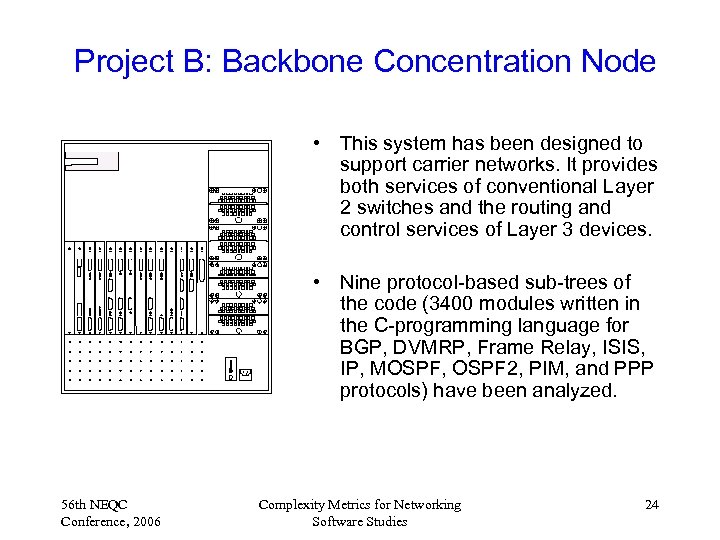

Project B: Backbone Concentration Node
• This system has been designed to support carrier networks. It provides both the services of conventional Layer 2 switches and the routing and control services of Layer 3 devices.
• Nine protocol-based sub-trees of the code (3400 modules written in the C programming language for the BGP, DVMRP, Frame Relay, ISIS, IP, MOSPF, OSPF2, PIM, and PPP protocols) have been analyzed.

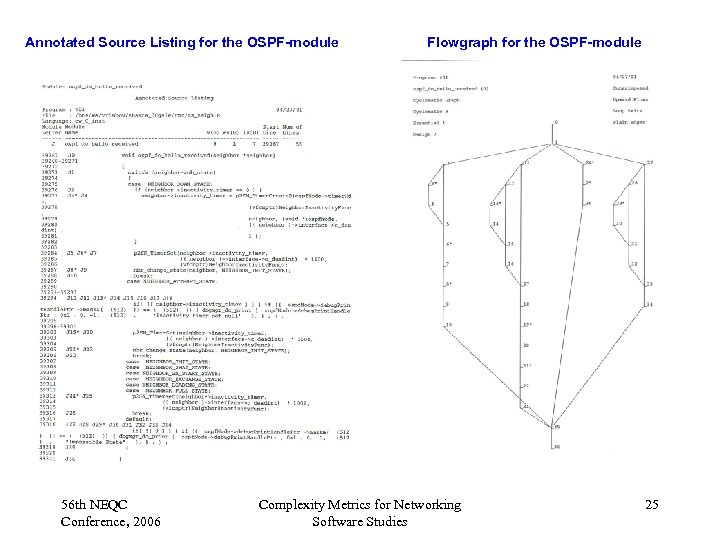

Annotated Source Listing for the OSPF-module
Flowgraph for the OSPF-module

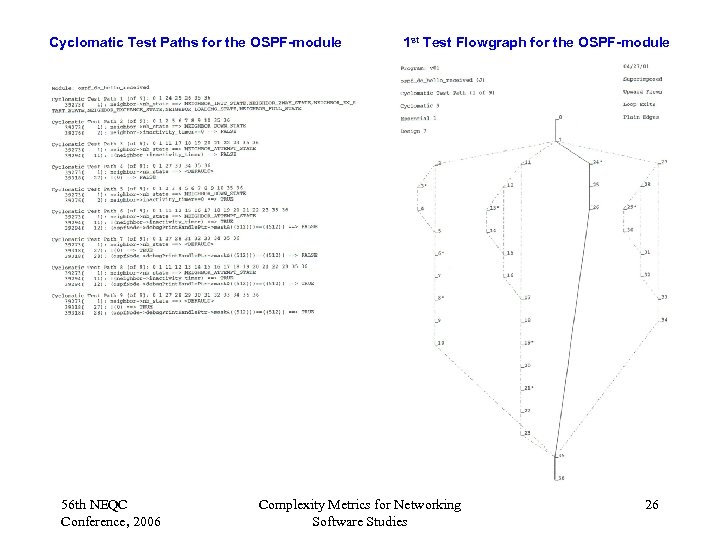

Cyclomatic Test Paths for the OSPF-module
1st Test Flowgraph for the OSPF-module

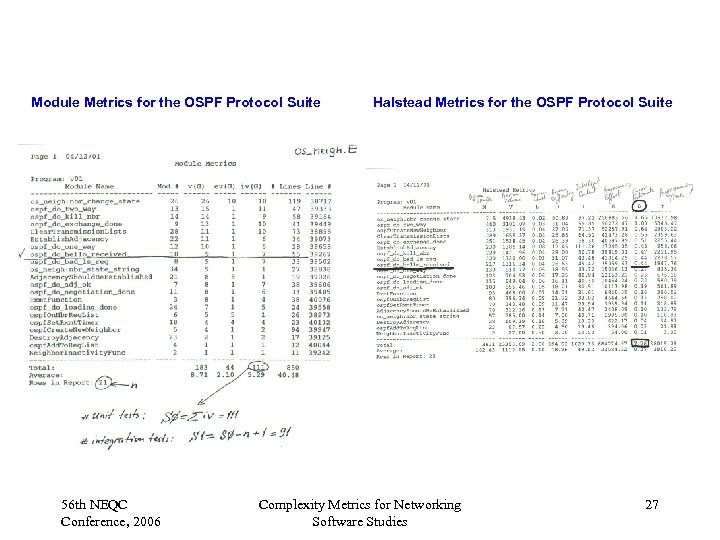

Module Metrics for the OSPF Protocol Suite
Halstead Metrics for the OSPF Protocol Suite

Example 1: Reliable and Maintainable Module
Example 2: Unreliable Module that is difficult to maintain

Example 3: Absolutely Unreliable and Unmaintainable Module
Summary of Modules’ Reliability and Maintainability

Project-B Protocol-Based Code Analysis
• Unreliable modules: 38% of the code modules have cyclomatic complexity above 10 (including 592 functions with v > 20);
• Only two code parts (FR, ISIS) are reliable;
• BGP and PIM have the worst characteristics (49% of the code modules have v > 10);
• 1147 modules (34%) are unreliable and unmaintainable, with v > 10 and ev > 4;
• BGP, DVMRP, and MOSPF are the most unreliable and unmaintainable (42% of modules);
• Project B was cancelled.

Project-B Protocol-Based Code Analysis (continued)
• 1066 functions (31%) have module design complexity above 5. The system integration complexity is 16026, which is an upper estimate of the number of integration tests;
• Only the FR, ISIS, IP, and PPP modules require 4 integration tests per module. BGP, MOSPF, and PIM have the worst characteristics (42% of the code modules require more than 7 integration tests per module);
• The B-2.0.0.0 int 18 release potentially contains 2920 errors, as estimated by the Halstead approach. FR, ISIS, and IP have relatively low B-error metrics (significantly below the average level of 0.86 errors per module). For BGP, DVMRP, MOSPF, and PIM, the error level is the highest (more than one error per module).
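
A quick consistency check of these figures, assuming the 3400 modules quoted earlier for Project B are the denominator in both cases:

$$
\frac{2920}{3400} \approx 0.86 \ \text{errors per module}, \qquad \frac{1066}{3400} \approx 31\%.
$$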

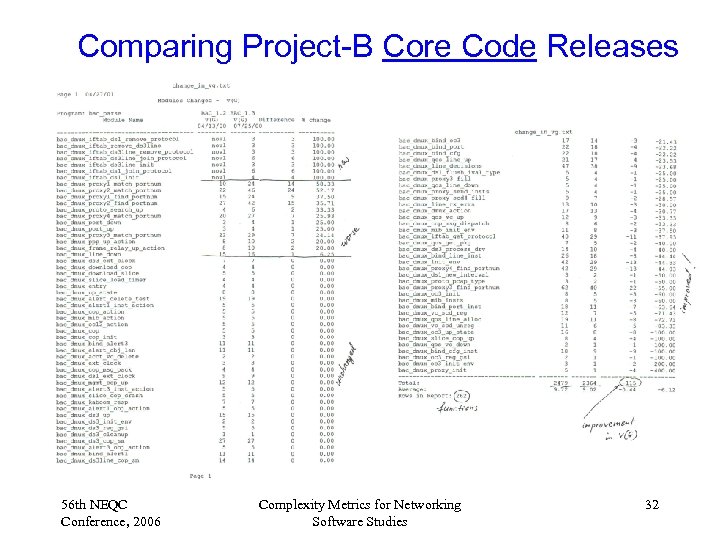

Comparing Project-B Core Code Releases

Comparing Project-B Core Code Releases
• NEW B-1.3 release (262 modules) vs. OLD B-1.2 release (271 modules);
• 16 modules were deleted (7 with v > 10);
• 7 new modules were added (all are reliable, with v < 10 and ev = 1);
• Sixty percent of the changes were made in code modules with cyclomatic complexity above 20;
• 63 modules are still unreliable and unmaintainable;
• 39 out of 70 (56%) modules with v > 10 were targeted for changes and remained unreliable;
• 7 out of 12 (58%) modules have increased their complexity (v > 10);
• A significant reduction was achieved in the System Design (S0) and System Integration (S1) metrics: S1 from 1126 to 1033; S0 from 1396 to 1294;
• The new release potentially contains fewer errors: 187 errors (vs. 206 errors), as estimated by the Halstead approach;
• Project B was cancelled.
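
The reported S0 and S1 values agree with the relation S1 = S0 - N + 1 used in this deck, taking N as the module count of each release:

$$
\text{B-1.2: } 1396 - 271 + 1 = 1126, \qquad \text{B-1.3: } 1294 - 262 + 1 = 1033.
$$

In relative terms the reductions are modest:

$$
\frac{1396 - 1294}{1396} \approx 7.3\% \ (S_0), \qquad \frac{1126 - 1033}{1126} \approx 8.3\% \ (S_1).
$$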

Project C: Broadband Service Node
• The Broadband Service Node (BSN) allows service providers to aggregate tens of thousands of subscribers onto one platform and apply customized IP services to these subscribers;
• Different networking services [IP VPNs, Firewalls, Network Address Translation (NAT), IP Quality-of-Service (QoS), Web steering, and others] are provided.

Project-C Code Subtrees-Based Analysis
• THREE branches of the Project-C code (Release 2.5 int 21) have been analyzed, namely the RMC, CT3, and PSP subtrees (23,136 modules);
• 26% of the code modules have cyclomatic complexity above 10 (including 2,634 functions with v > 20): unreliable modules!
• All three code parts are at approximately the same level of complexity (average per module: v = 9.9; ev = 3.89; iv = 5.53);
• 1.167 million lines of code have been studied (50 lines per module on average);
• 3,852 modules (17%) are unreliable and unmaintainable, with v > 10 and ev > 4;
• The estimated number of possible ERRORS is 11,460;
• 128,013 unit tests and 104,880 module integration tests should be developed to cover all modules of the Project-C code.

Project-C Protocol-Based Code Analysis
• NINE protocol-based areas of the code (2,141 modules) have been analyzed, namely BGP, FR, IGMP, ISIS, OSPF, PPP, RIP, and SNMP;
• 130,000 lines of code have been studied;
• 28% of the code modules have cyclomatic complexity above 10 (including 272 functions with v > 20): unreliable modules!
• The FR & SNMP parts are well designed & programmed, with few possible errors;
• 39% of the BGP and PPP code areas are unreliable (v > 10);
• 416 modules (19.4%) are unreliable & unmaintainable (v > 10 & ev > 4);
• 27.4% of the BGP and IP code areas are unreliable & unmaintainable;
• The estimated number of possible ERRORS is 1,272;
• 12,693 unit tests and 10,561 module integration tests should be developed to cover the NINE protocol-based areas of the Project-C code.

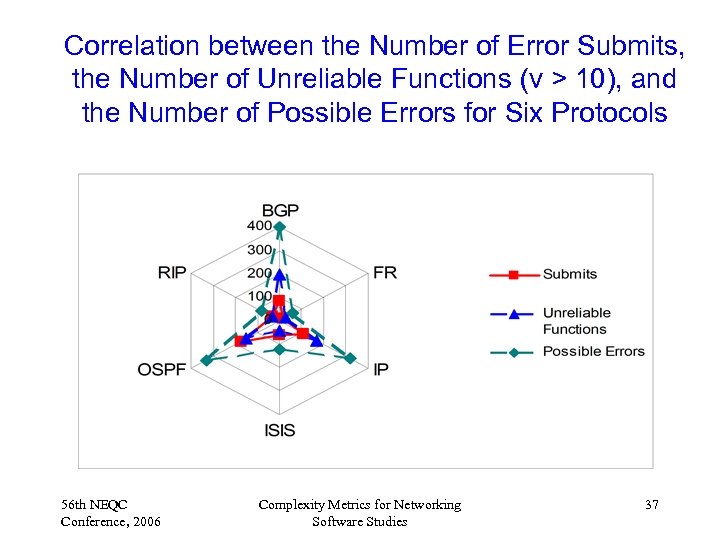

Correlation between the Number of Error Submits, the Number of Unreliable Functions (v > 10), and the Number of Possible Errors for Six Protocols

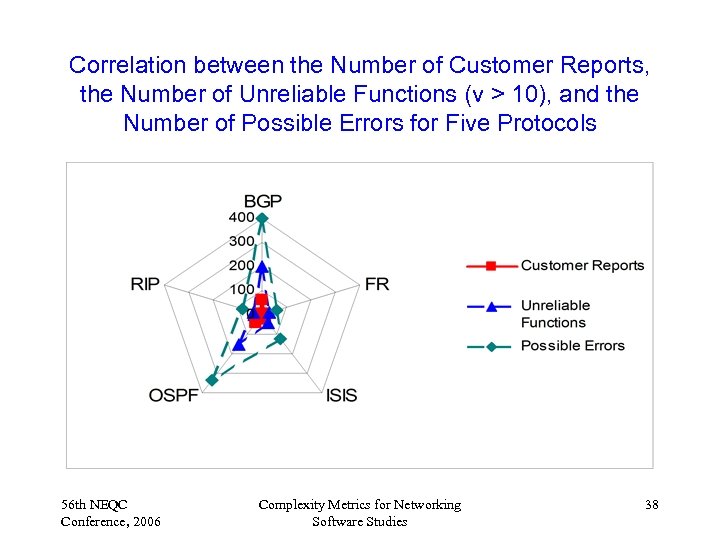

Correlation between the Number of Customer Reports, the Number of Unreliable Functions (v > 10), and the Number of Possible Errors for Five Protocols

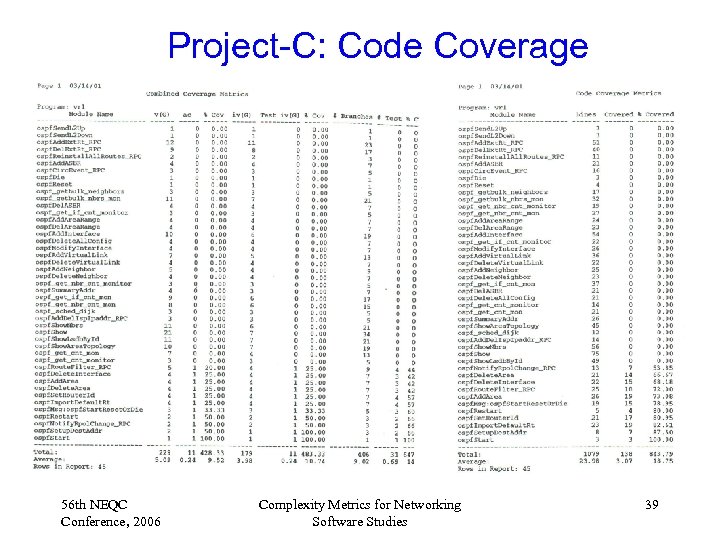

Project-C: Code Coverage

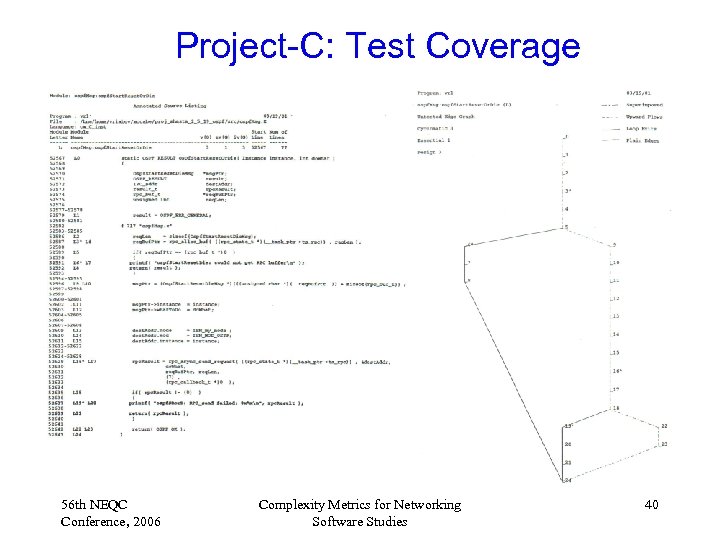

Project-C: Test Coverage

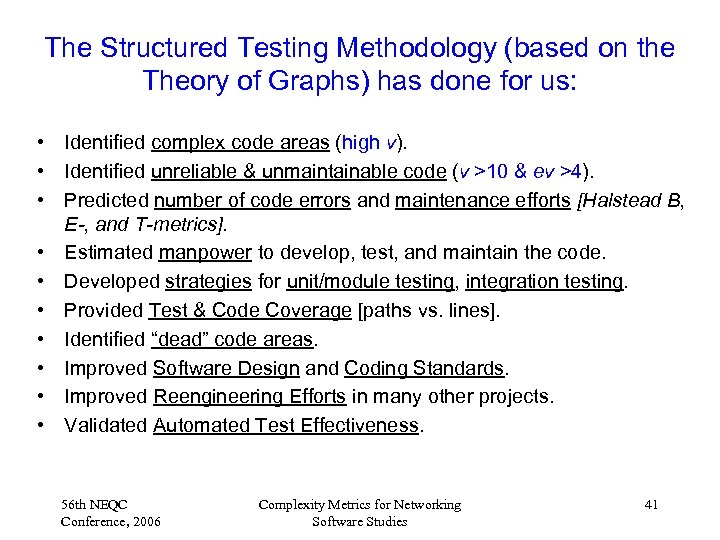

What the Structured Testing Methodology (based on the Theory of Graphs) has done for us:
• Identified complex code areas (high v).
• Identified unreliable & unmaintainable code (v > 10 & ev > 4).
• Predicted the number of code errors and the maintenance effort [Halstead B-, E-, and T-metrics].
• Estimated the manpower needed to develop, test, and maintain the code.
• Developed strategies for unit/module testing and integration testing.
• Provided Test & Code Coverage [paths vs. lines].
• Identified “dead” code areas.
• Improved Software Design and Coding Standards.
• Improved Reengineering Efforts in many other projects.
• Validated Automated Test Effectiveness.
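
The "paths vs. lines" distinction can be seen on a function with two sequential decisions. The sketch below is a hypothetical example, not code from either project: one test with both flags set executes every line, while structured testing asks for as many tests as the cyclomatic complexity, here v = 3.

```c
#include <assert.h>

/* Two independent decisions in sequence: cyclomatic complexity v = 3. */
int adjust(int x, int add_one, int twice)
{
    if (add_one)
        x += 1;
    if (twice)
        x *= 2;
    return x;
}

/* Three tests, one per basis path: a baseline path plus one path
 * for each decision flipped on its own. */
void test_adjust_basis_paths(void)
{
    assert(adjust(3, 0, 0) == 3);   /* baseline: both decisions false */
    assert(adjust(3, 1, 0) == 4);   /* only the first decision true   */
    assert(adjust(3, 0, 1) == 6);   /* only the second decision true  */
}
```

A single call such as `adjust(3, 1, 1)` already reaches every line, yet it exercises only one outcome of each decision; the three basis-path tests exercise each decision outcome independently.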
