76f484d0a040eaf473395f55ae3252dc.ppt

- Количество слайдов: 61

4/22: Unexpected Hanging and other sadistic pleasures of teaching Today: Probabilistic Plan Recognition Tomorrow: Web Service Composition (BY 510; 11 AM) Thursday: Continual Planning for Printers (in-class) Tuesday 4/29: (Interactive) Review

4/22: Unexpected Hanging and other sadistic pleasures of teaching Today: Probabilistic Plan Recognition Tomorrow: Web Service Composition (BY 510; 11 AM) Thursday: Continual Planning for Printers (in-class) Tuesday 4/29: (Interactive) Review

Approaches to plan recognition h Consistency-based 5 Hypothesize & revise 5 Closed-world reasoning 5 Version spaces Can be complementary. . First pick the consistent plans, and check which of them is most likely (tricky if the agent can make errors) h Probabilistic 5 Stochastic grammars 5 Pending sets 5 Dynamic Bayes nets 5 Layered hidden Markov models 5 Policy recognition 5 Hierarchical hidden semi-Markov models 5 Dynamic probabilistic relational models 5 Example application: Assisted Cognition Oregon State University

Approaches to plan recognition h Consistency-based 5 Hypothesize & revise 5 Closed-world reasoning 5 Version spaces Can be complementary. . First pick the consistent plans, and check which of them is most likely (tricky if the agent can make errors) h Probabilistic 5 Stochastic grammars 5 Pending sets 5 Dynamic Bayes nets 5 Layered hidden Markov models 5 Policy recognition 5 Hierarchical hidden semi-Markov models 5 Dynamic probabilistic relational models 5 Example application: Assisted Cognition Oregon State University

Agenda (as actually realized in class) h Plan recognition as probabilistic (max weight) parsing h On the connection between dynamic bayes nets and plan recognition; with a detour on the special inference tasks for DBN h Examples of plan recognition techniques based on setting up DBNs and doing MPE inference on them h Discussion of Decision Theoretic Assistance paper Oregon State University

Agenda (as actually realized in class) h Plan recognition as probabilistic (max weight) parsing h On the connection between dynamic bayes nets and plan recognition; with a detour on the special inference tasks for DBN h Examples of plan recognition techniques based on setting up DBNs and doing MPE inference on them h Discussion of Decision Theoretic Assistance paper Oregon State University

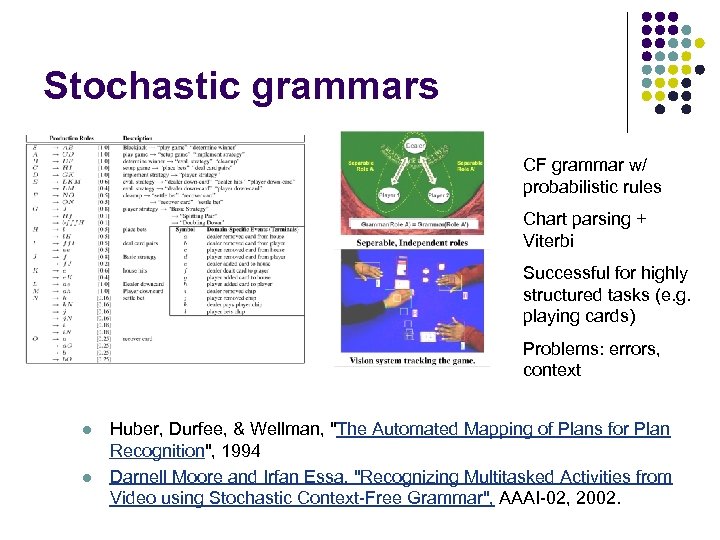

Stochastic grammars CF grammar w/ probabilistic rules Chart parsing + Viterbi Successful for highly structured tasks (e. g. playing cards) Problems: errors, context l l Huber, Durfee, & Wellman, "The Automated Mapping of Plans for Plan Recognition", 1994 Darnell Moore and Irfan Essa, "Recognizing Multitasked Activities from Video using Stochastic Context-Free Grammar", AAAI-02, 2002.

Stochastic grammars CF grammar w/ probabilistic rules Chart parsing + Viterbi Successful for highly structured tasks (e. g. playing cards) Problems: errors, context l l Huber, Durfee, & Wellman, "The Automated Mapping of Plans for Plan Recognition", 1994 Darnell Moore and Irfan Essa, "Recognizing Multitasked Activities from Video using Stochastic Context-Free Grammar", AAAI-02, 2002.

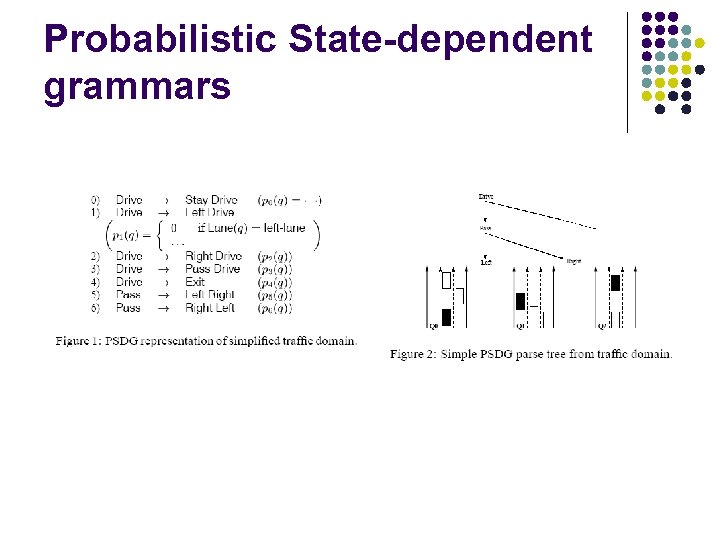

Probabilistic State-dependent grammars

Probabilistic State-dependent grammars

Connection with DBNs

Connection with DBNs

Time and Change in Probabilistic Reasoning

Time and Change in Probabilistic Reasoning

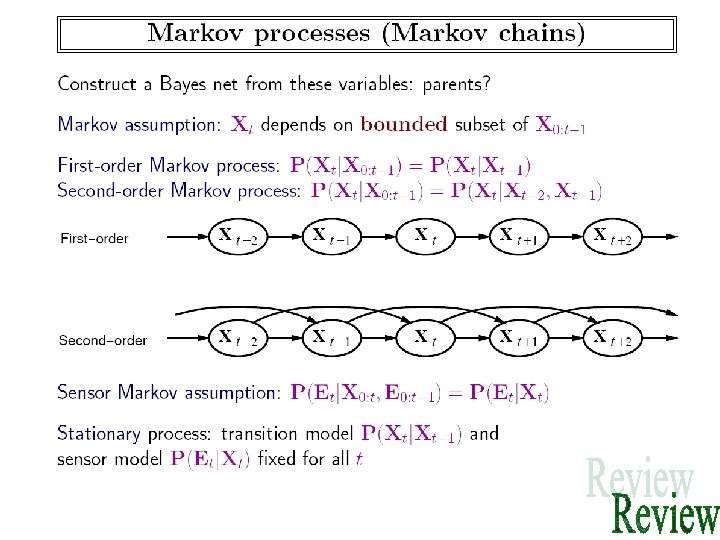

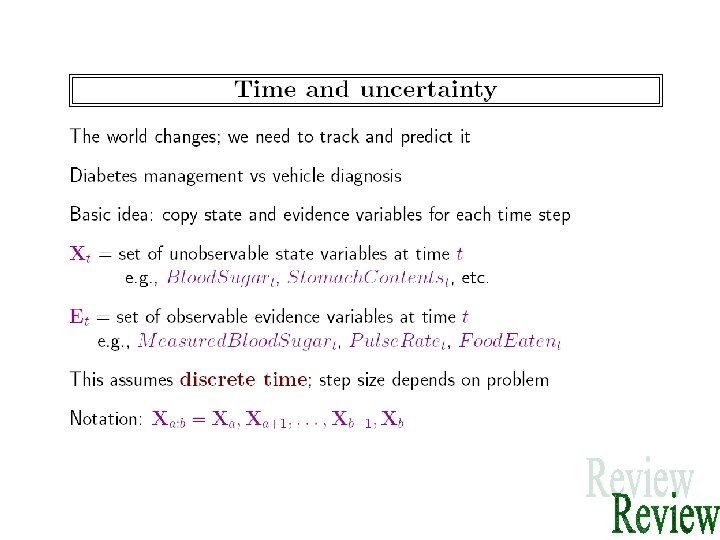

Temporal (Sequential) Process • A temporal process is the evolution of system state over time • Often the system state is hidden, and we need to reconstruct the state from the observations • Relation to Planning: – When you are observing a temporal process, you are observing the execution trace of someone else’s plan…

Temporal (Sequential) Process • A temporal process is the evolution of system state over time • Often the system state is hidden, and we need to reconstruct the state from the observations • Relation to Planning: – When you are observing a temporal process, you are observing the execution trace of someone else’s plan…

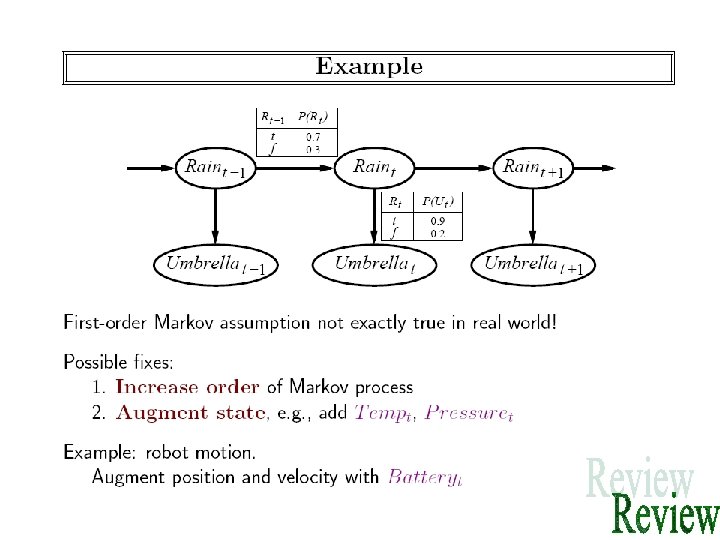

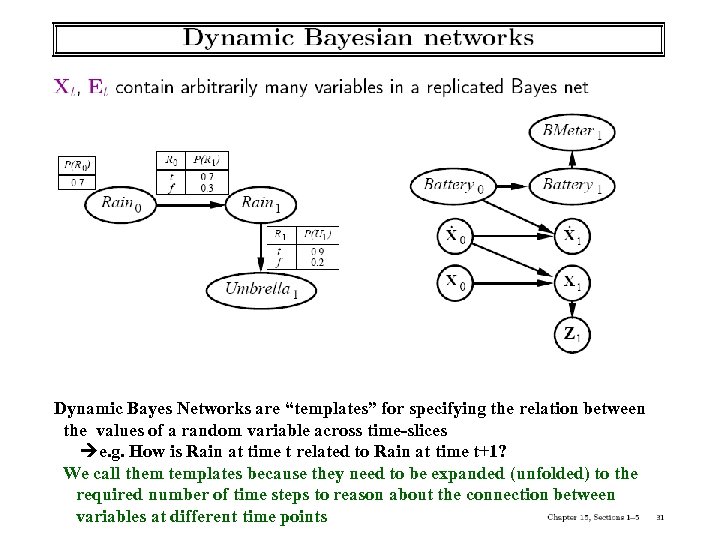

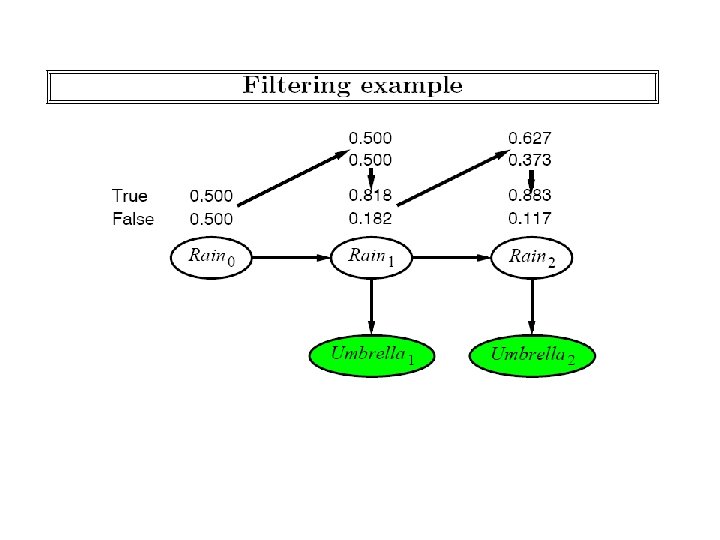

Dynamic Bayes Networks are “templates” for specifying the relation between the values of a random variable across time-slices e. g. How is Rain at time t related to Rain at time t+1? We call them templates because they need to be expanded (unfolded) to the required number of time steps to reason about the connection between variables at different time points

Dynamic Bayes Networks are “templates” for specifying the relation between the values of a random variable across time-slices e. g. How is Rain at time t related to Rain at time t+1? We call them templates because they need to be expanded (unfolded) to the required number of time steps to reason about the connection between variables at different time points

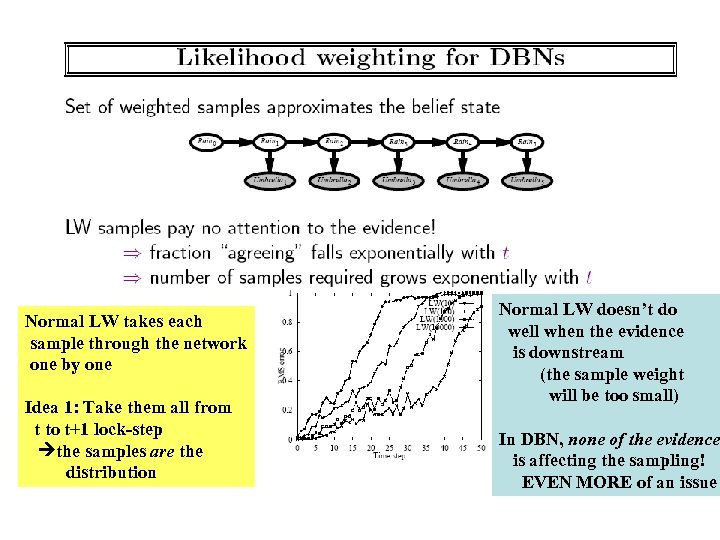

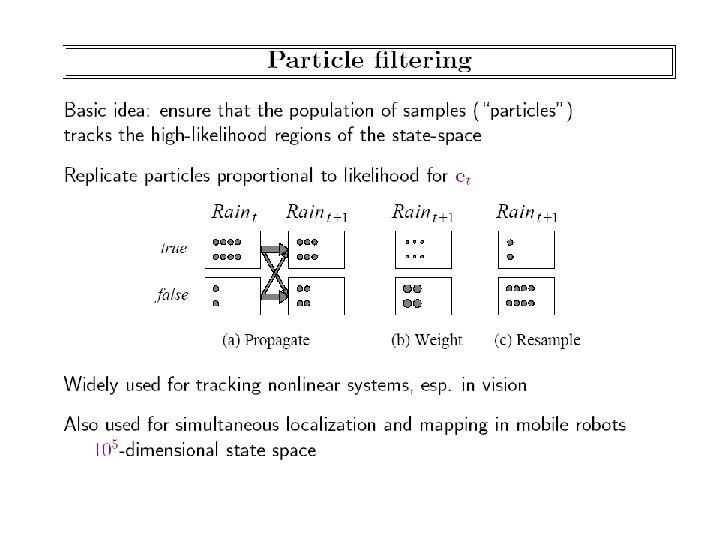

Normal LW takes each sample through the network one by one Idea 1: Take them all from t to t+1 lock-step the samples are the distribution Normal LW doesn’t do well when the evidence is downstream (the sample weight will be too small) In DBN, none of the evidence is affecting the sampling! EVEN MORE of an issue

Normal LW takes each sample through the network one by one Idea 1: Take them all from t to t+1 lock-step the samples are the distribution Normal LW doesn’t do well when the evidence is downstream (the sample weight will be too small) In DBN, none of the evidence is affecting the sampling! EVEN MORE of an issue

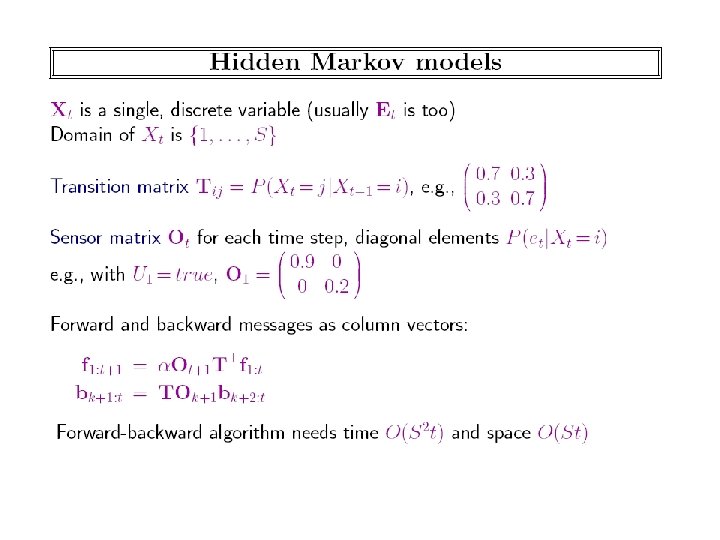

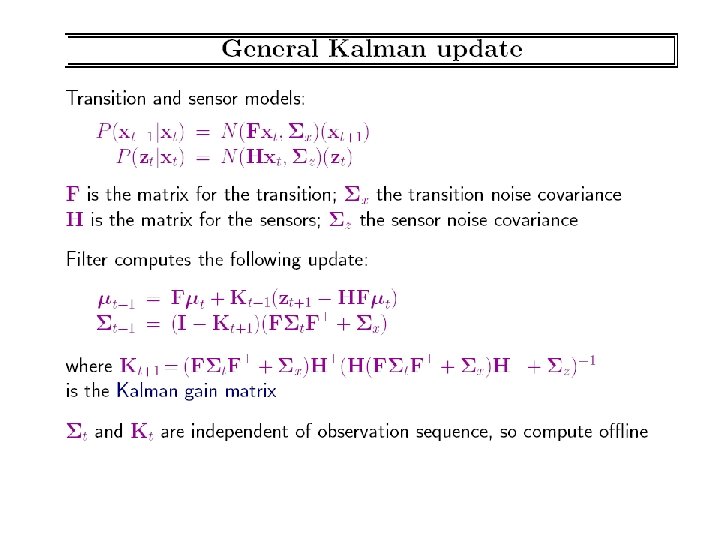

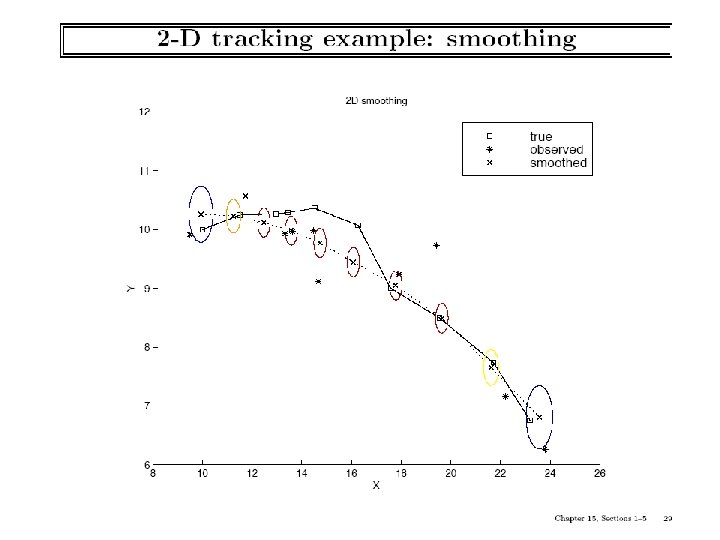

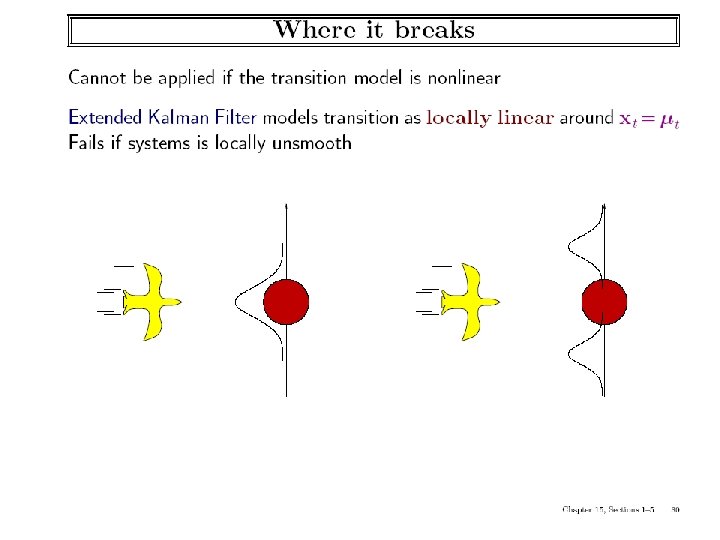

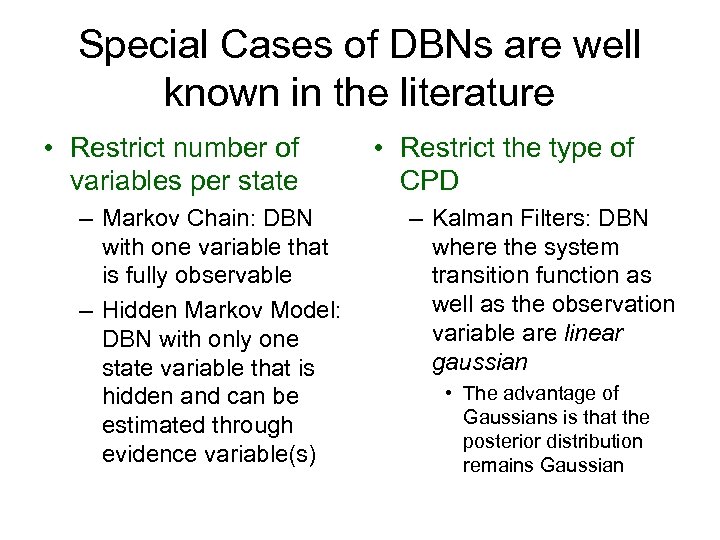

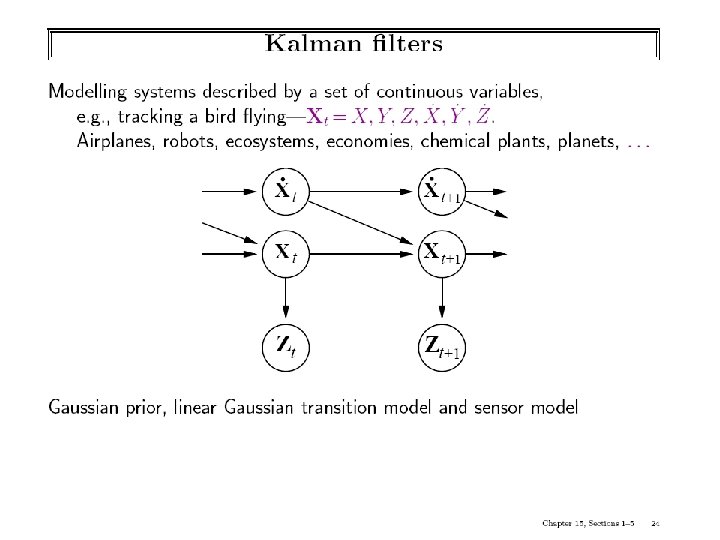

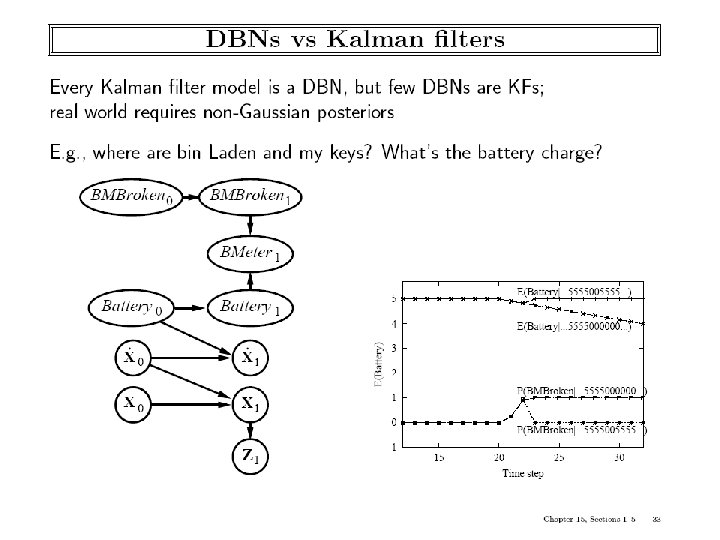

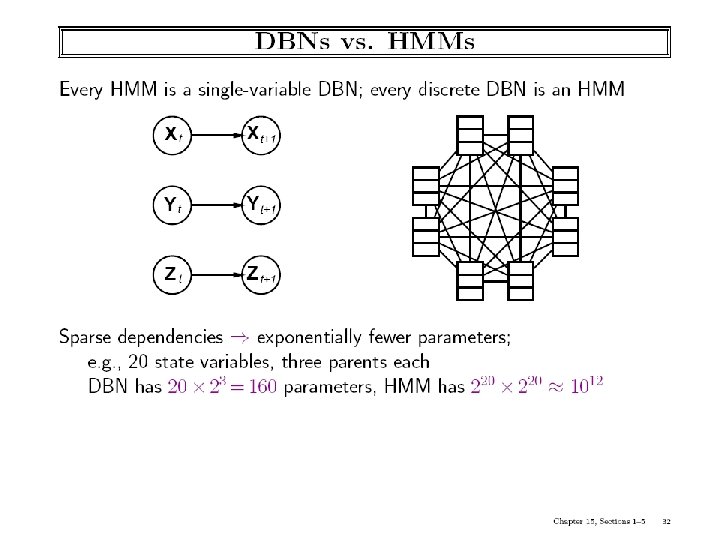

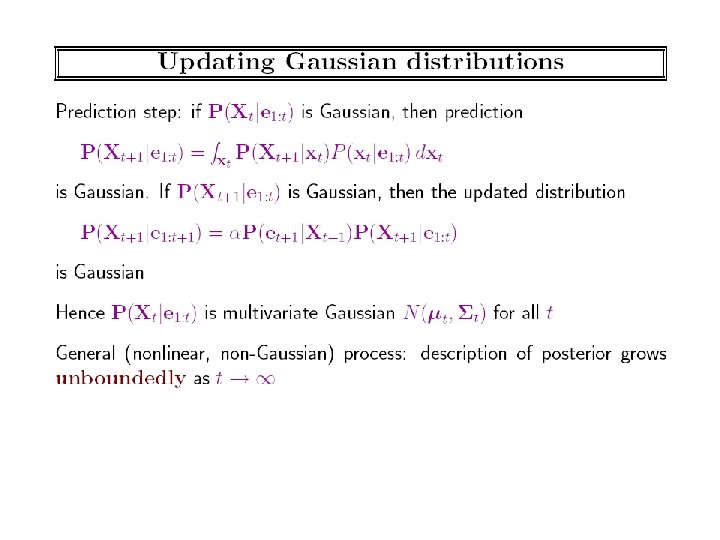

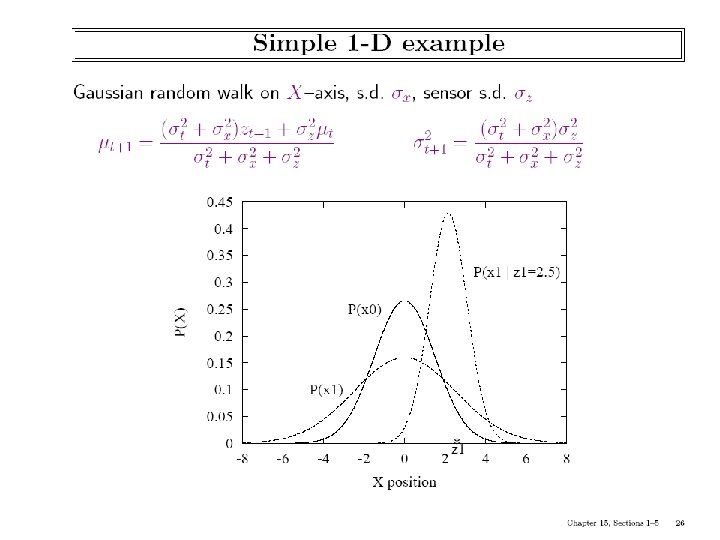

Special Cases of DBNs are well known in the literature • Restrict number of variables per state – Markov Chain: DBN with one variable that is fully observable – Hidden Markov Model: DBN with only one state variable that is hidden and can be estimated through evidence variable(s) • Restrict the type of CPD – Kalman Filters: DBN where the system transition function as well as the observation variable are linear gaussian • The advantage of Gaussians is that the posterior distribution remains Gaussian

Special Cases of DBNs are well known in the literature • Restrict number of variables per state – Markov Chain: DBN with one variable that is fully observable – Hidden Markov Model: DBN with only one state variable that is hidden and can be estimated through evidence variable(s) • Restrict the type of CPD – Kalman Filters: DBN where the system transition function as well as the observation variable are linear gaussian • The advantage of Gaussians is that the posterior distribution remains Gaussian

Plan Recognition Approaches based on setting up DBNs

Plan Recognition Approaches based on setting up DBNs

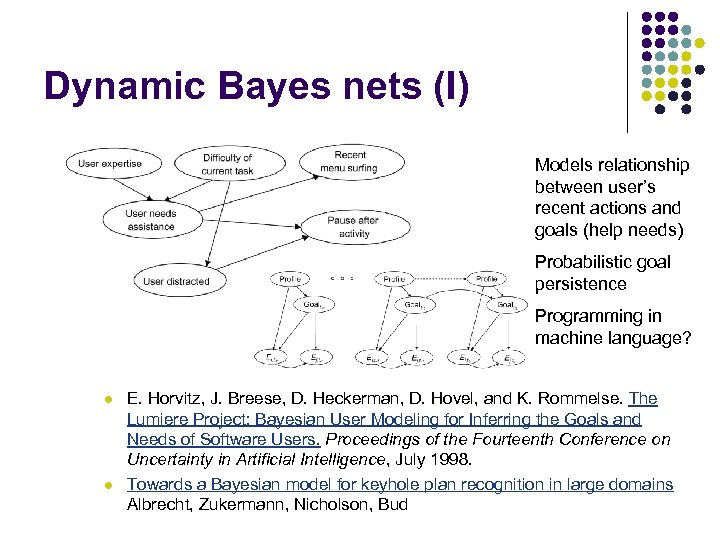

Dynamic Bayes nets (I) Models relationship between user’s recent actions and goals (help needs) Probabilistic goal persistence Programming in machine language? l l E. Horvitz, J. Breese, D. Heckerman, D. Hovel, and K. Rommelse. The Lumiere Project: Bayesian User Modeling for Inferring the Goals and Needs of Software Users. Proceedings of the Fourteenth Conference on Uncertainty in Artificial Intelligence, July 1998. Towards a Bayesian model for keyhole plan recognition in large domains Albrecht, Zukermann, Nicholson, Bud

Dynamic Bayes nets (I) Models relationship between user’s recent actions and goals (help needs) Probabilistic goal persistence Programming in machine language? l l E. Horvitz, J. Breese, D. Heckerman, D. Hovel, and K. Rommelse. The Lumiere Project: Bayesian User Modeling for Inferring the Goals and Needs of Software Users. Proceedings of the Fourteenth Conference on Uncertainty in Artificial Intelligence, July 1998. Towards a Bayesian model for keyhole plan recognition in large domains Albrecht, Zukermann, Nicholson, Bud

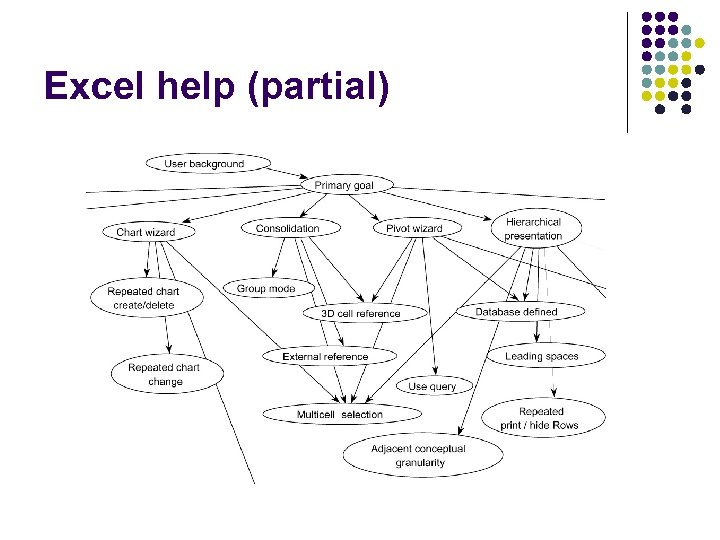

Excel help (partial)

Excel help (partial)

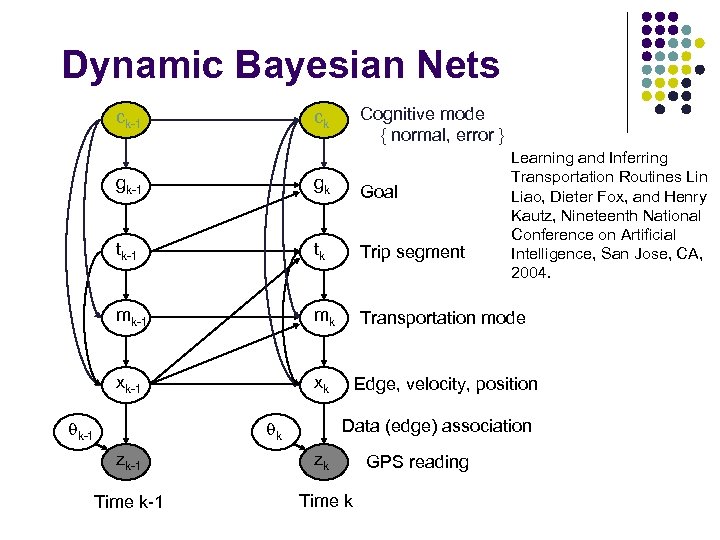

Dynamic Bayesian Nets ck-1 Cognitive mode { normal, error } ck Learning and Inferring Transportation Routines Lin Liao, Dieter Fox, and Henry Kautz, Nineteenth National Conference on Artificial Intelligence, San Jose, CA, 2004. gk-1 gk Goal tk-1 tk Trip segment mk-1 mk Transportation mode xk-1 xk Edge, velocity, position qk-1 Data (edge) association qk zk-1 zk Time k-1 Time k GPS reading

Dynamic Bayesian Nets ck-1 Cognitive mode { normal, error } ck Learning and Inferring Transportation Routines Lin Liao, Dieter Fox, and Henry Kautz, Nineteenth National Conference on Artificial Intelligence, San Jose, CA, 2004. gk-1 gk Goal tk-1 tk Trip segment mk-1 mk Transportation mode xk-1 xk Edge, velocity, position qk-1 Data (edge) association qk zk-1 zk Time k-1 Time k GPS reading

Decision-Theoretic Assistance Don’t just recognize! Jump in and help. . Allows us to also talk about POMDPs

Decision-Theoretic Assistance Don’t just recognize! Jump in and help. . Allows us to also talk about POMDPs

Intelligent Assistants h Many examples of AI techniques being applied to assistive technologies h Intelligent Desktop Assistants 5 Calendar Apprentice (CAP) (Mitchell et al. 1994) 5 Travel Assistant (Ambite et al. 2002) 5 CALO Project 5 Tasktracer 5 Electric Elves (Hans Chalupsky et al. 2001) h Assistive Technologies for the Disabled 5 COACH System (Boger et al. 2005) Oregon State University

Intelligent Assistants h Many examples of AI techniques being applied to assistive technologies h Intelligent Desktop Assistants 5 Calendar Apprentice (CAP) (Mitchell et al. 1994) 5 Travel Assistant (Ambite et al. 2002) 5 CALO Project 5 Tasktracer 5 Electric Elves (Hans Chalupsky et al. 2001) h Assistive Technologies for the Disabled 5 COACH System (Boger et al. 2005) Oregon State University

Not So Intelligent h Most previous work uses problem-specific, hand-crafted solutions 5 Lack ability to offer assistance in ways not planned for by designer h Our goal: provide a general, formal framework for intelligent-assistant design h Desirable properties: 5 Explicitly reason about models of the world and user to provide flexible assistance 5 Handle uncertainty about the world and user 5 Handle variable costs of user and assistive actions h We describe a model-based decision-theoretic framework that captures these properties Oregon State University

Not So Intelligent h Most previous work uses problem-specific, hand-crafted solutions 5 Lack ability to offer assistance in ways not planned for by designer h Our goal: provide a general, formal framework for intelligent-assistant design h Desirable properties: 5 Explicitly reason about models of the world and user to provide flexible assistance 5 Handle uncertainty about the world and user 5 Handle variable costs of user and assistive actions h We describe a model-based decision-theoretic framework that captures these properties Oregon State University

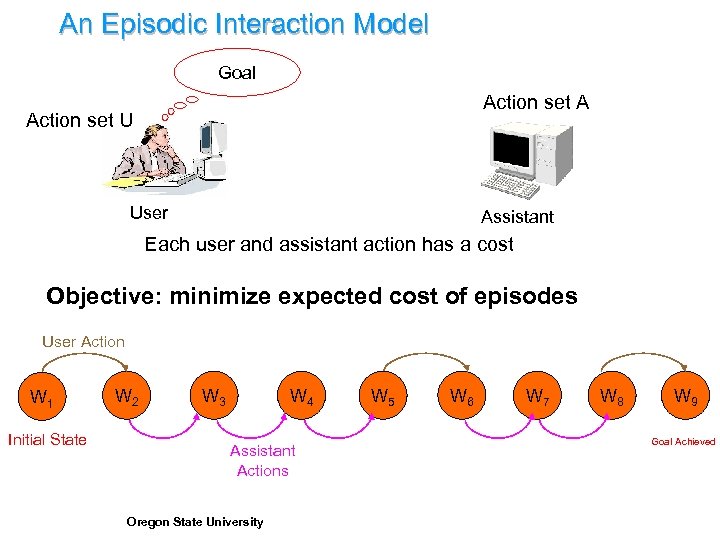

An Episodic Interaction Model Goal Action set A Action set U User Assistant Each user and assistant action has a cost Objective: minimize expected cost of episodes User Action W 1 Initial State W 2 W 3 W 4 Assistant Actions Oregon State University W 5 W 6 W 7 W 8 W 9 Goal Achieved

An Episodic Interaction Model Goal Action set A Action set U User Assistant Each user and assistant action has a cost Objective: minimize expected cost of episodes User Action W 1 Initial State W 2 W 3 W 4 Assistant Actions Oregon State University W 5 W 6 W 7 W 8 W 9 Goal Achieved

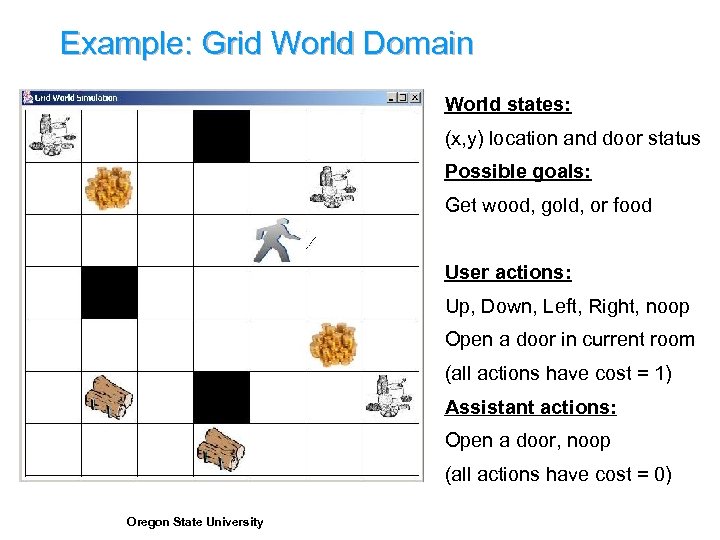

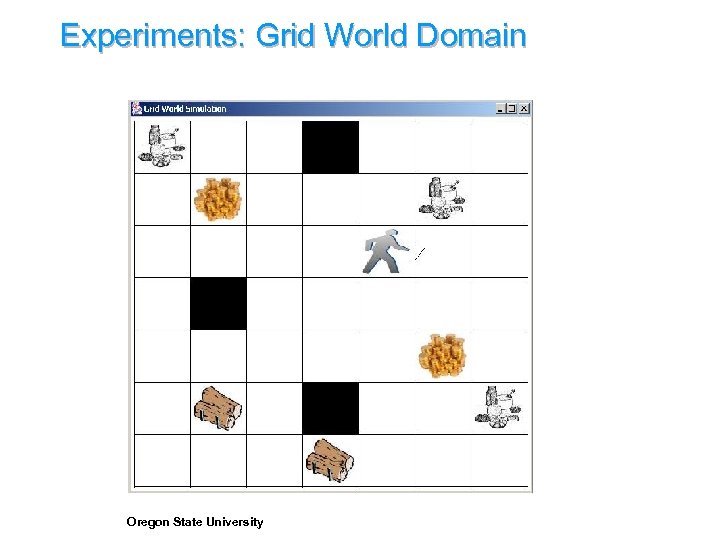

Example: Grid World Domain World states: (x, y) location and door status Possible goals: Get wood, gold, or food User actions: Up, Down, Left, Right, noop Open a door in current room (all actions have cost = 1) Assistant actions: Open a door, noop (all actions have cost = 0) Oregon State University

Example: Grid World Domain World states: (x, y) location and door status Possible goals: Get wood, gold, or food User actions: Up, Down, Left, Right, noop Open a door in current room (all actions have cost = 1) Assistant actions: Open a door, noop (all actions have cost = 0) Oregon State University

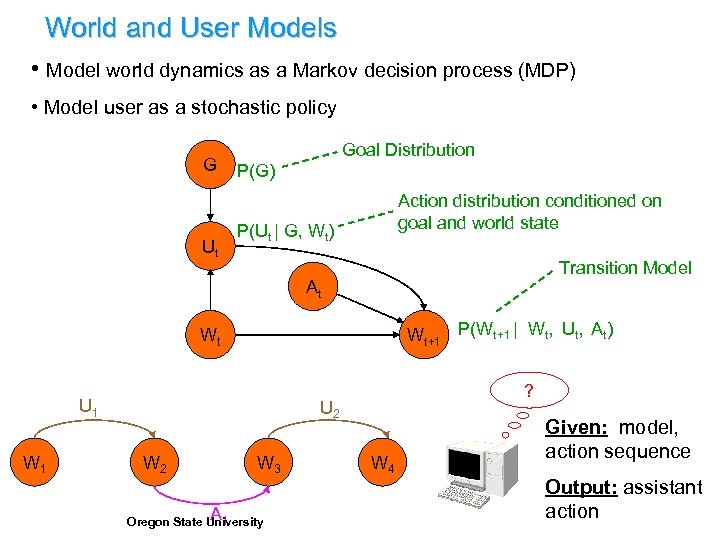

World and User Models • Model world dynamics as a Markov decision process (MDP) • Model user as a stochastic policy G Ut Goal Distribution P(G) Action distribution conditioned on goal and world state P(Ut | G, Wt) Transition Model At Wt+1 P(Wt+1 | Wt, Ut, At) Wt U 1 W 1 ? U 2 W 3 A 1 Oregon State University W 4 Given: model, action sequence Output: assistant action

World and User Models • Model world dynamics as a Markov decision process (MDP) • Model user as a stochastic policy G Ut Goal Distribution P(G) Action distribution conditioned on goal and world state P(Ut | G, Wt) Transition Model At Wt+1 P(Wt+1 | Wt, Ut, At) Wt U 1 W 1 ? U 2 W 3 A 1 Oregon State University W 4 Given: model, action sequence Output: assistant action

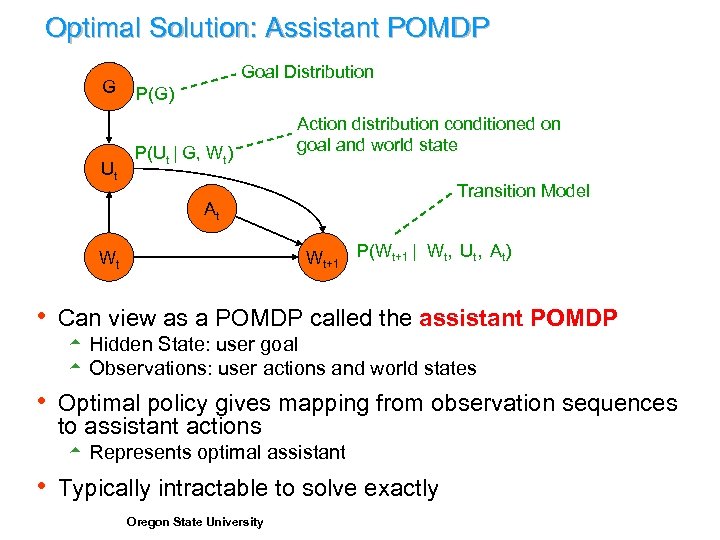

Optimal Solution: Assistant POMDP G Ut Goal Distribution P(G) P(Ut | G, Wt) Action distribution conditioned on goal and world state Transition Model At Wt+1 P(Wt+1 | Wt, Ut, At) Wt h Can view as a POMDP called the assistant POMDP 5 Hidden State: user goal 5 Observations: user actions and world states h Optimal policy gives mapping from observation sequences to assistant actions 5 Represents optimal assistant h Typically intractable to solve exactly Oregon State University

Optimal Solution: Assistant POMDP G Ut Goal Distribution P(G) P(Ut | G, Wt) Action distribution conditioned on goal and world state Transition Model At Wt+1 P(Wt+1 | Wt, Ut, At) Wt h Can view as a POMDP called the assistant POMDP 5 Hidden State: user goal 5 Observations: user actions and world states h Optimal policy gives mapping from observation sequences to assistant actions 5 Represents optimal assistant h Typically intractable to solve exactly Oregon State University

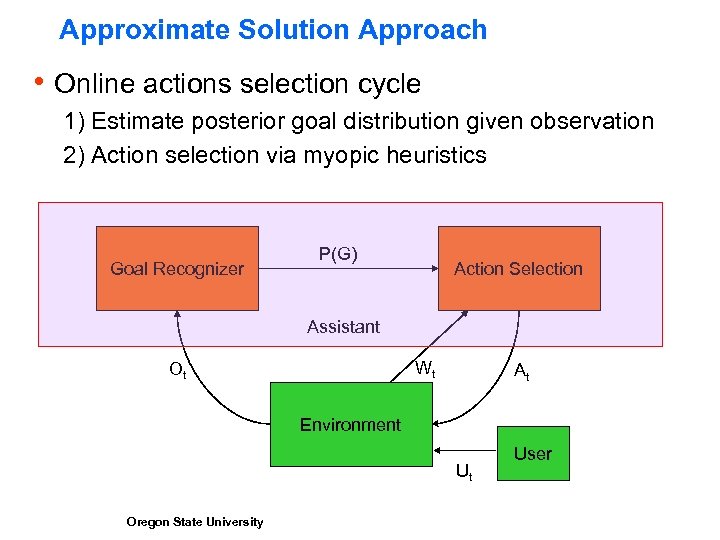

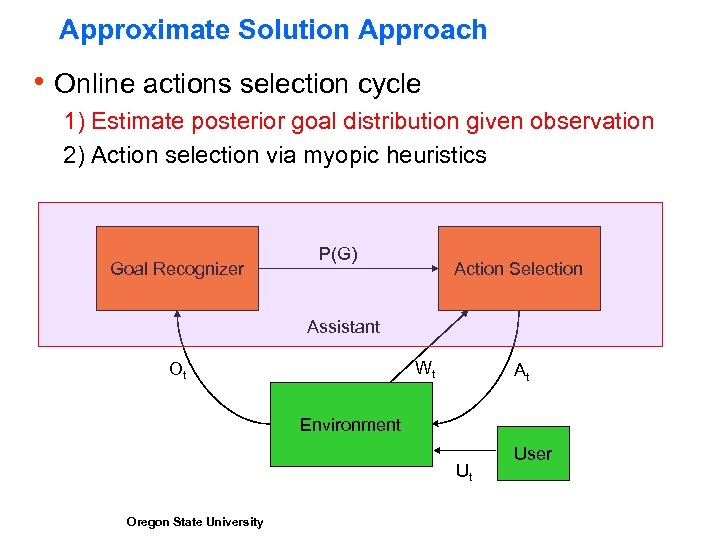

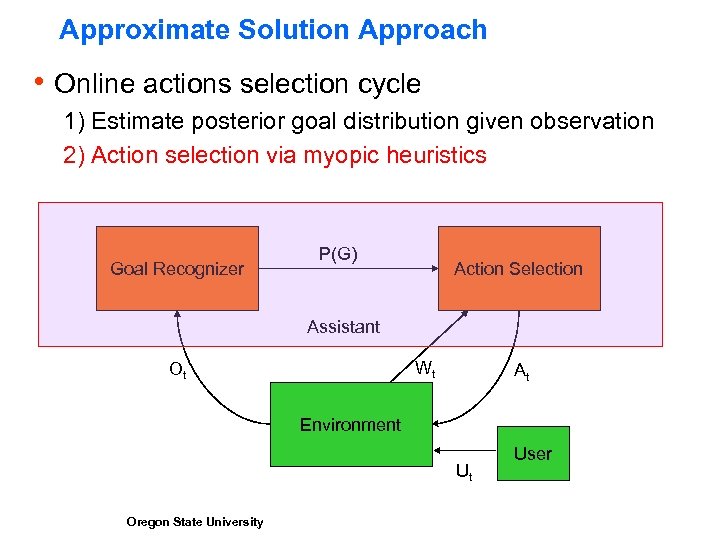

Approximate Solution Approach h Online actions selection cycle 1) Estimate posterior goal distribution given observation 2) Action selection via myopic heuristics Goal Recognizer P(G) Action Selection Assistant Wt Ot At Environment Ut Oregon State University User

Approximate Solution Approach h Online actions selection cycle 1) Estimate posterior goal distribution given observation 2) Action selection via myopic heuristics Goal Recognizer P(G) Action Selection Assistant Wt Ot At Environment Ut Oregon State University User

Approximate Solution Approach h Online actions selection cycle 1) Estimate posterior goal distribution given observation 2) Action selection via myopic heuristics Goal Recognizer P(G) Action Selection Assistant Wt Ot At Environment Ut Oregon State University User

Approximate Solution Approach h Online actions selection cycle 1) Estimate posterior goal distribution given observation 2) Action selection via myopic heuristics Goal Recognizer P(G) Action Selection Assistant Wt Ot At Environment Ut Oregon State University User

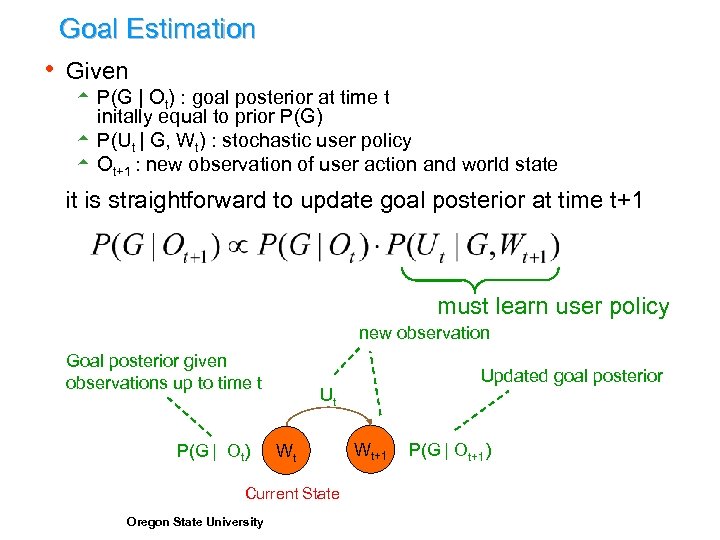

Goal Estimation h Given 5 P(G | Ot) : goal posterior at time t initally equal to prior P(G) 5 P(Ut | G, Wt) : stochastic user policy 5 Ot+1 : new observation of user action and world state it is straightforward to update goal posterior at time t+1 must learn user policy new observation Goal posterior given observations up to time t P(G | Ot) Ut Wt Current State Oregon State University Updated goal posterior Wt+1 P(G | Ot+1)

Goal Estimation h Given 5 P(G | Ot) : goal posterior at time t initally equal to prior P(G) 5 P(Ut | G, Wt) : stochastic user policy 5 Ot+1 : new observation of user action and world state it is straightforward to update goal posterior at time t+1 must learn user policy new observation Goal posterior given observations up to time t P(G | Ot) Ut Wt Current State Oregon State University Updated goal posterior Wt+1 P(G | Ot+1)

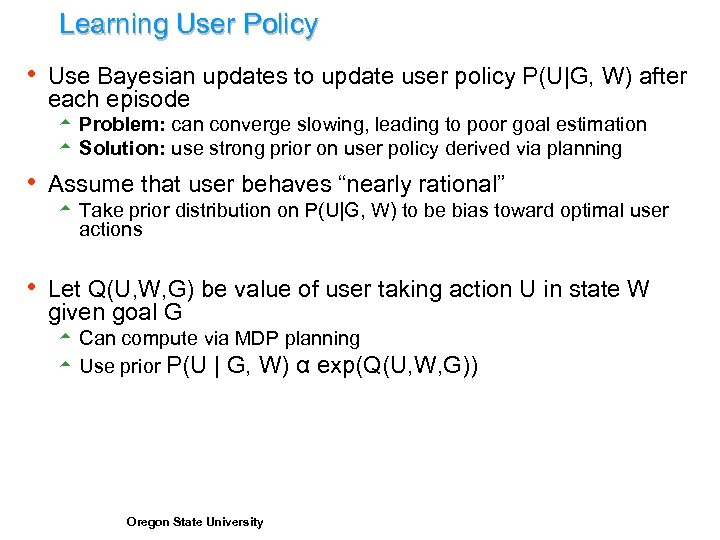

Learning User Policy h Use Bayesian updates to update user policy P(U|G, W) after each episode 5 Problem: can converge slowing, leading to poor goal estimation 5 Solution: use strong prior on user policy derived via planning h Assume that user behaves “nearly rational” 5 Take prior distribution on P(U|G, W) to be bias toward optimal user actions h Let Q(U, W, G) be value of user taking action U in state W given goal G 5 Can compute via MDP planning 5 Use prior P(U | G, W) α exp(Q(U, W, G)) Oregon State University

Learning User Policy h Use Bayesian updates to update user policy P(U|G, W) after each episode 5 Problem: can converge slowing, leading to poor goal estimation 5 Solution: use strong prior on user policy derived via planning h Assume that user behaves “nearly rational” 5 Take prior distribution on P(U|G, W) to be bias toward optimal user actions h Let Q(U, W, G) be value of user taking action U in state W given goal G 5 Can compute via MDP planning 5 Use prior P(U | G, W) α exp(Q(U, W, G)) Oregon State University

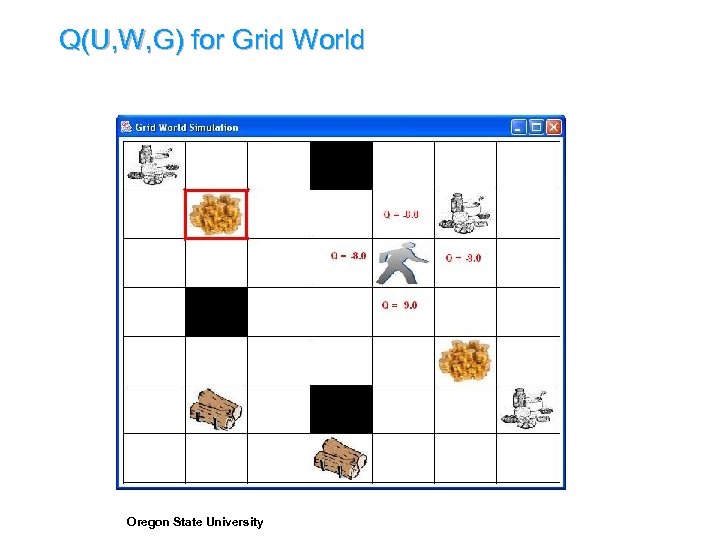

Q(U, W, G) for Grid World Oregon State University

Q(U, W, G) for Grid World Oregon State University

Approximate Solution Approach h Online actions selection cycle 1) Estimate posterior goal distribution given observation 2) Action selection via myopic heuristics Goal Recognizer P(G) Action Selection Assistant Wt Ot At Environment Ut Oregon State University User

Approximate Solution Approach h Online actions selection cycle 1) Estimate posterior goal distribution given observation 2) Action selection via myopic heuristics Goal Recognizer P(G) Action Selection Assistant Wt Ot At Environment Ut Oregon State University User

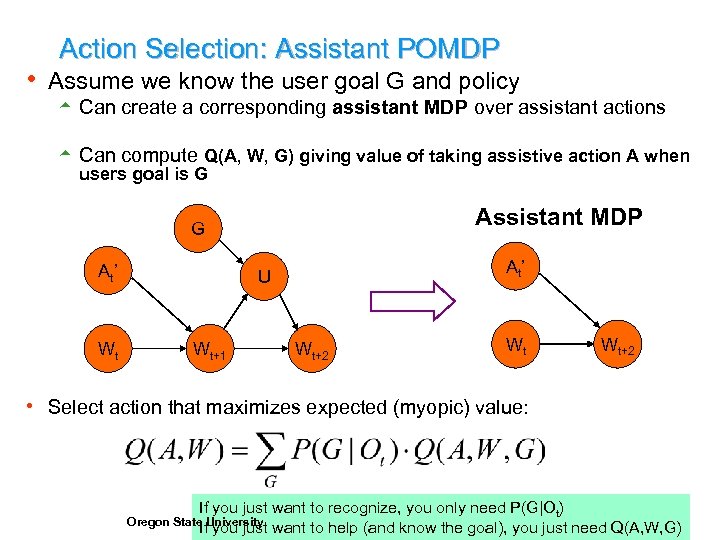

Action Selection: Assistant POMDP h Assume we know the user goal G and policy 5 Can create a corresponding assistant MDP over assistant actions 5 Can compute Q(A, W, G) giving value of taking assistive action A when users goal is G Assistant MDP G At’ Wt At’ U Wt+1 Wt+2 Wt Wt+2 h Select action that maximizes expected (myopic) value: If you just want to recognize, you only need P(G|Ot) If you just want to help (and know the goal), you just need Q(A, W, G) Oregon State University

Action Selection: Assistant POMDP h Assume we know the user goal G and policy 5 Can create a corresponding assistant MDP over assistant actions 5 Can compute Q(A, W, G) giving value of taking assistive action A when users goal is G Assistant MDP G At’ Wt At’ U Wt+1 Wt+2 Wt Wt+2 h Select action that maximizes expected (myopic) value: If you just want to recognize, you only need P(G|Ot) If you just want to help (and know the goal), you just need Q(A, W, G) Oregon State University

Experiments: Grid World Domain Oregon State University

Experiments: Grid World Domain Oregon State University

Experiments: Kitchen Domain Oregon State University

Experiments: Kitchen Domain Oregon State University

Experimental Results § Experiment: 12 human subjects, two domains § Subjects were asked to achieve a sequence of goals § Compared average cost of performing tasks with assistant to optimal cost without assistant § Assistant reduced cost by over 50% Oregon State University

Experimental Results § Experiment: 12 human subjects, two domains § Subjects were asked to achieve a sequence of goals § Compared average cost of performing tasks with assistant to optimal cost without assistant § Assistant reduced cost by over 50% Oregon State University

Summary of Assumptions h Model Assumptions: 5 World can be approximately modeled as MDP 5 User and assistant interleave actions (no parallel activity) 5 User can be modeled as a stationary, stochastic policy 5 Finite set of known goals h Assumptions Made by Solution Approach 5 Access to practical algorithm for solving the world MDP 5 User does no reason about the existence of the assistance 5 Goal set is relatively small and known to assistant 5 User is close to “rational” Oregon State University

Summary of Assumptions h Model Assumptions: 5 World can be approximately modeled as MDP 5 User and assistant interleave actions (no parallel activity) 5 User can be modeled as a stationary, stochastic policy 5 Finite set of known goals h Assumptions Made by Solution Approach 5 Access to practical algorithm for solving the world MDP 5 User does no reason about the existence of the assistance 5 Goal set is relatively small and known to assistant 5 User is close to “rational” Oregon State University

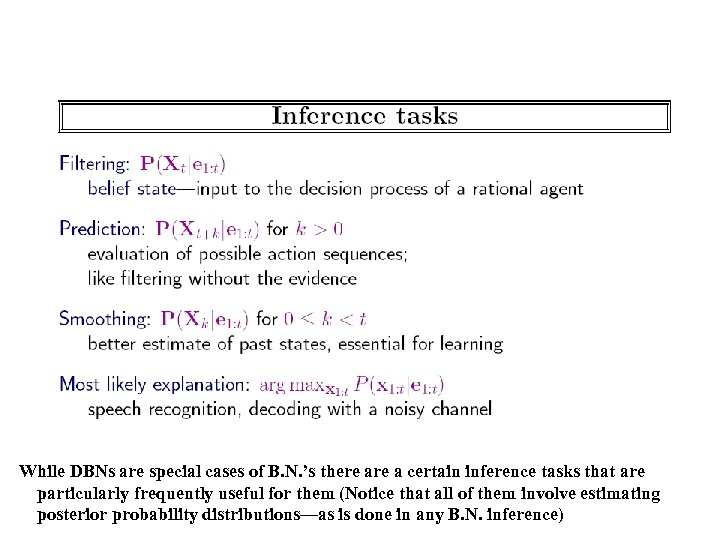

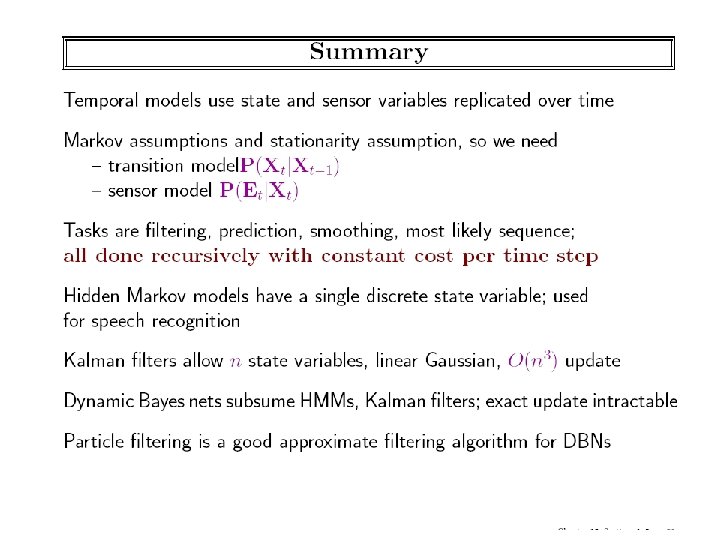

While DBNs are special cases of B. N. ’s there a certain inference tasks that are particularly frequently useful for them (Notice that all of them involve estimating posterior probability distributions—as is done in any B. N. inference)

While DBNs are special cases of B. N. ’s there a certain inference tasks that are particularly frequently useful for them (Notice that all of them involve estimating posterior probability distributions—as is done in any B. N. inference)

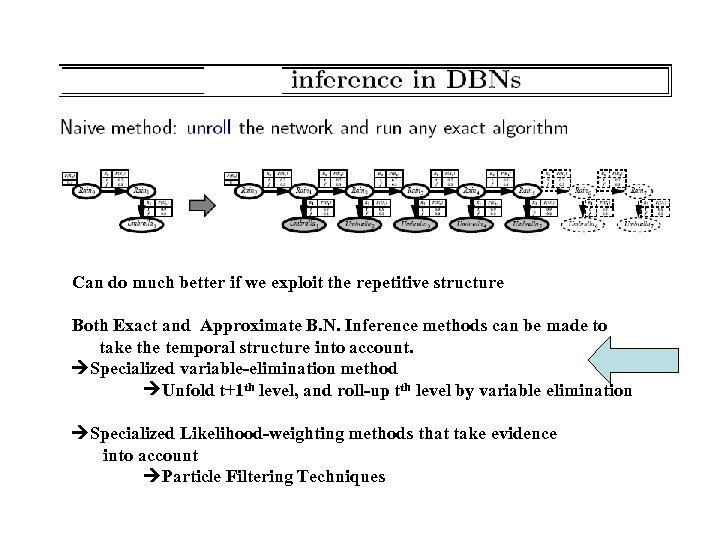

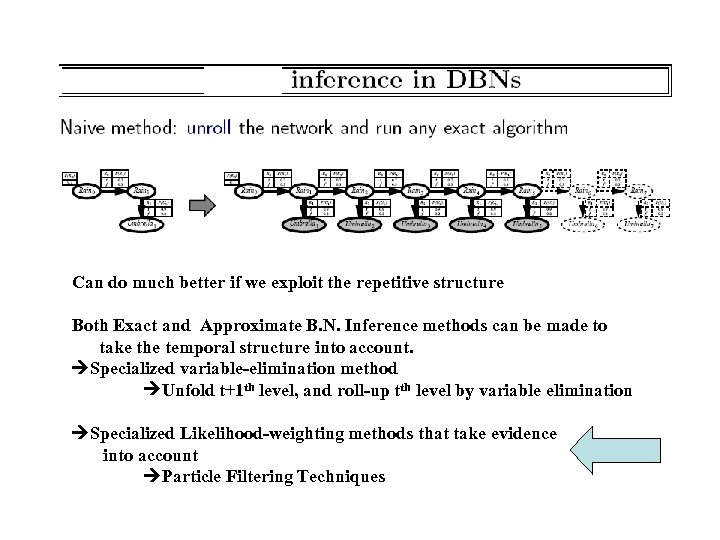

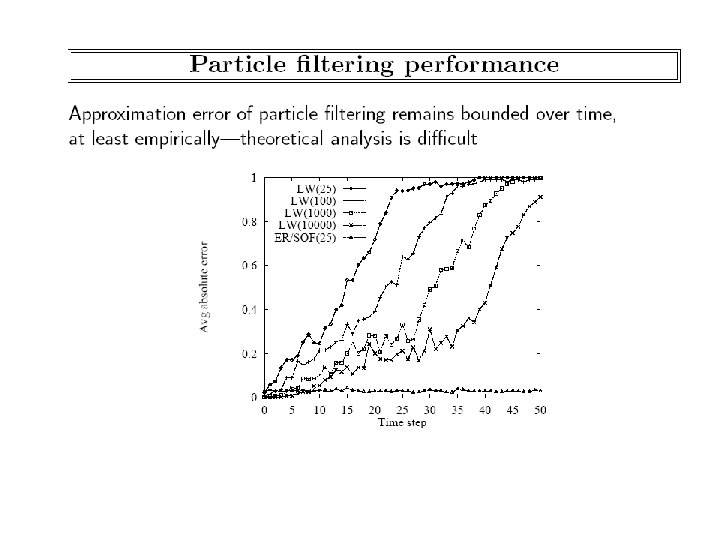

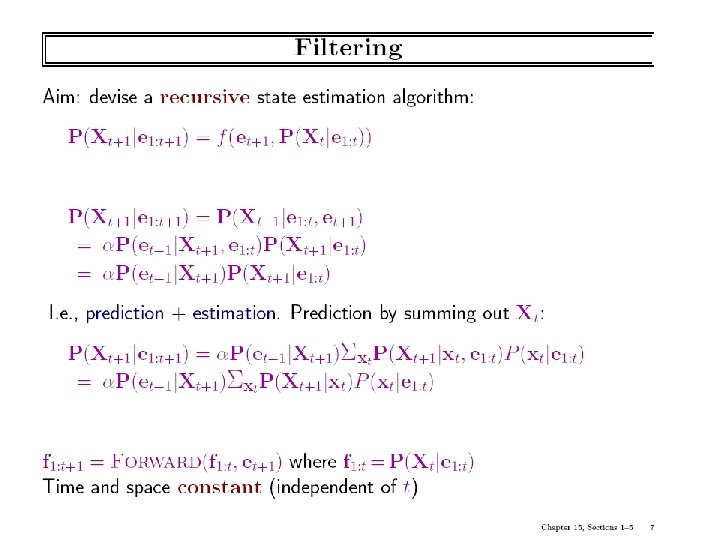

Can do much better if we exploit the repetitive structure Both Exact and Approximate B. N. Inference methods can be made to take the temporal structure into account. Specialized variable-elimination method Unfold t+1 th level, and roll-up tth level by variable elimination Specialized Likelihood-weighting methods that take evidence into account Particle Filtering Techniques

Can do much better if we exploit the repetitive structure Both Exact and Approximate B. N. Inference methods can be made to take the temporal structure into account. Specialized variable-elimination method Unfold t+1 th level, and roll-up tth level by variable elimination Specialized Likelihood-weighting methods that take evidence into account Particle Filtering Techniques

Can do much better if we exploit the repetitive structure Both Exact and Approximate B. N. Inference methods can be made to take the temporal structure into account. Specialized variable-elimination method Unfold t+1 th level, and roll-up tth level by variable elimination Specialized Likelihood-weighting methods that take evidence into account Particle Filtering Techniques

Can do much better if we exploit the repetitive structure Both Exact and Approximate B. N. Inference methods can be made to take the temporal structure into account. Specialized variable-elimination method Unfold t+1 th level, and roll-up tth level by variable elimination Specialized Likelihood-weighting methods that take evidence into account Particle Filtering Techniques

Class Ended here. . Slides beyond this not discussed

Class Ended here. . Slides beyond this not discussed

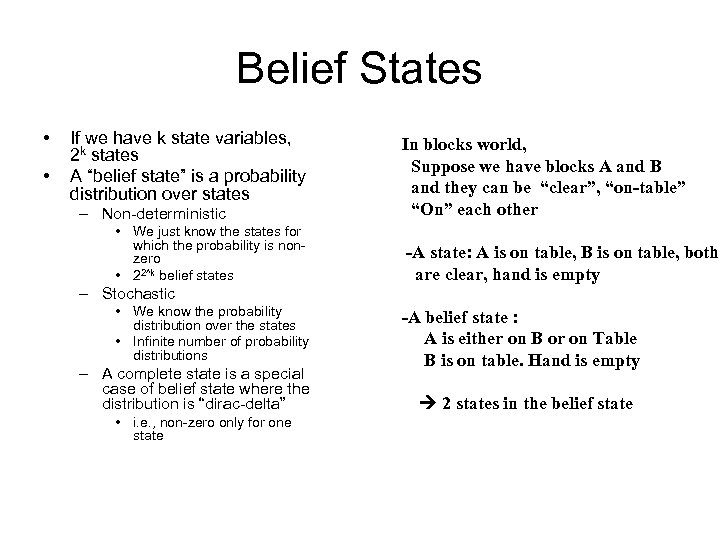

Belief States • • If we have k state variables, 2 k states A “belief state” is a probability distribution over states – Non-deterministic • We just know the states for which the probability is nonzero • 22^k belief states In blocks world, Suppose we have blocks A and B and they can be “clear”, “on-table” “On” each other -A state: A is on table, B is on table, both are clear, hand is empty – Stochastic • We know the probability distribution over the states • Infinite number of probability distributions – A complete state is a special case of belief state where the distribution is “dirac-delta” • i. e. , non-zero only for one state -A belief state : A is either on B or on Table B is on table. Hand is empty 2 states in the belief state

Belief States • • If we have k state variables, 2 k states A “belief state” is a probability distribution over states – Non-deterministic • We just know the states for which the probability is nonzero • 22^k belief states In blocks world, Suppose we have blocks A and B and they can be “clear”, “on-table” “On” each other -A state: A is on table, B is on table, both are clear, hand is empty – Stochastic • We know the probability distribution over the states • Infinite number of probability distributions – A complete state is a special case of belief state where the distribution is “dirac-delta” • i. e. , non-zero only for one state -A belief state : A is either on B or on Table B is on table. Hand is empty 2 states in the belief state

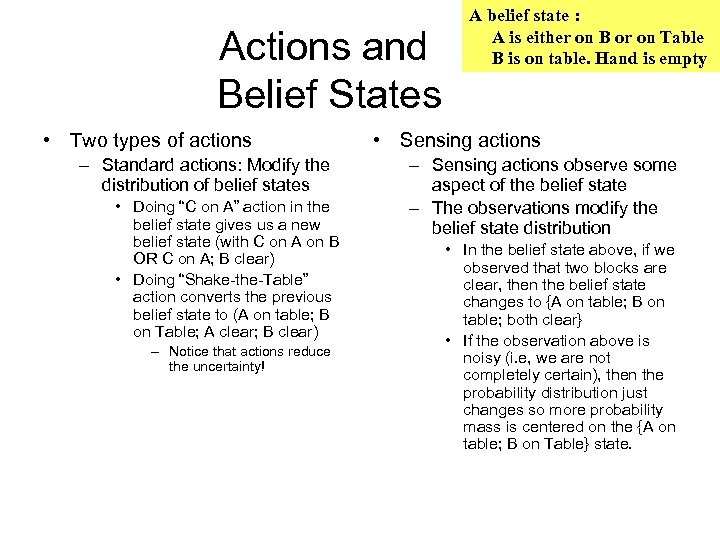

Actions and Belief States • Two types of actions – Standard actions: Modify the distribution of belief states • Doing “C on A” action in the belief state gives us a new belief state (with C on A on B OR C on A; B clear) • Doing “Shake-the-Table” action converts the previous belief state to (A on table; B on Table; A clear; B clear) – Notice that actions reduce the uncertainty! A belief state : A is either on B or on Table B is on table. Hand is empty • Sensing actions – Sensing actions observe some aspect of the belief state – The observations modify the belief state distribution • In the belief state above, if we observed that two blocks are clear, then the belief state changes to {A on table; B on table; both clear} • If the observation above is noisy (i. e, we are not completely certain), then the probability distribution just changes so more probability mass is centered on the {A on table; B on Table} state.

Actions and Belief States • Two types of actions – Standard actions: Modify the distribution of belief states • Doing “C on A” action in the belief state gives us a new belief state (with C on A on B OR C on A; B clear) • Doing “Shake-the-Table” action converts the previous belief state to (A on table; B on Table; A clear; B clear) – Notice that actions reduce the uncertainty! A belief state : A is either on B or on Table B is on table. Hand is empty • Sensing actions – Sensing actions observe some aspect of the belief state – The observations modify the belief state distribution • In the belief state above, if we observed that two blocks are clear, then the belief state changes to {A on table; B on table; both clear} • If the observation above is noisy (i. e, we are not completely certain), then the probability distribution just changes so more probability mass is centered on the {A on table; B on Table} state.

Actions and Belief States • Two types of actions – Standard actions: Modify the distribution of belief states • Doing “C on A” action in the belief state gives us a new belief state (with C on A on B OR C on A; B clear) • Doing “Shake-the-Table” action converts the previous belief state to (A on table; B on Table; A clear; B clear) – Notice that actions reduce the uncertainty! A belief state : A is either on B or on Table B is on table. Hand is empty • Sensing actions – Sensing actions observe some aspect of the belief state – The observations modify the belief state distribution • In the belief state above, if we observed that two blocks are clear, then the belief state changes to {A on table; B on table; both clear} • If the observation above is noisy (i. e, we are not completely certain), then the probability distribution just changes so more probability mass is centered on the {A on table; B on Table} state.

Actions and Belief States • Two types of actions – Standard actions: Modify the distribution of belief states • Doing “C on A” action in the belief state gives us a new belief state (with C on A on B OR C on A; B clear) • Doing “Shake-the-Table” action converts the previous belief state to (A on table; B on Table; A clear; B clear) – Notice that actions reduce the uncertainty! A belief state : A is either on B or on Table B is on table. Hand is empty • Sensing actions – Sensing actions observe some aspect of the belief state – The observations modify the belief state distribution • In the belief state above, if we observed that two blocks are clear, then the belief state changes to {A on table; B on table; both clear} • If the observation above is noisy (i. e, we are not completely certain), then the probability distribution just changes so more probability mass is centered on the {A on table; B on Table} state.