c01953f0b579affb99b656afd42c477f.ppt

- Number of slides: 60

11-1 COMPLETE BUSINESS STATISTICS by AMIR D. ACZEL & JAYAVEL SOUNDERPANDIAN, 6th edition.

11-2 Chapter 11 Multiple Regression

11-3 11 Multiple Regression (1)

- Using Statistics
- The k-Variable Multiple Regression Model
- The F Test of a Multiple Regression Model
- How Good is the Regression
- Tests of the Significance of Individual Regression Parameters
- Testing the Validity of the Regression Model
- Using the Multiple Regression Model for Prediction

11-4 11 Multiple Regression (2)

- Qualitative Independent Variables
- Polynomial Regression
- Nonlinear Models and Transformations
- Multicollinearity
- Residual Autocorrelation and the Durbin-Watson Test
- Partial F Tests and Variable Selection Methods
- Multiple Regression Using the Solver
- The Matrix Approach to Multiple Regression Analysis

11-5 11 LEARNING OBJECTIVES (1)

After studying this chapter you should be able to:

- Determine whether multiple regression would be applicable to a given instance
- Formulate a multiple regression model
- Carry out a multiple regression using a spreadsheet template
- Test the validity of a multiple regression by analyzing residuals
- Carry out hypothesis tests about the regression coefficients
- Compute a prediction interval for the dependent variable

11-6 11 LEARNING OBJECTIVES (2)

After studying this chapter you should be able to:

- Use indicator variables in a multiple regression
- Carry out a polynomial regression
- Conduct a Durbin-Watson test for autocorrelation in residuals
- Conduct a partial F-test
- Determine which independent variables are to be included in a multiple regression model
- Solve multiple regression problems using the Solver macro

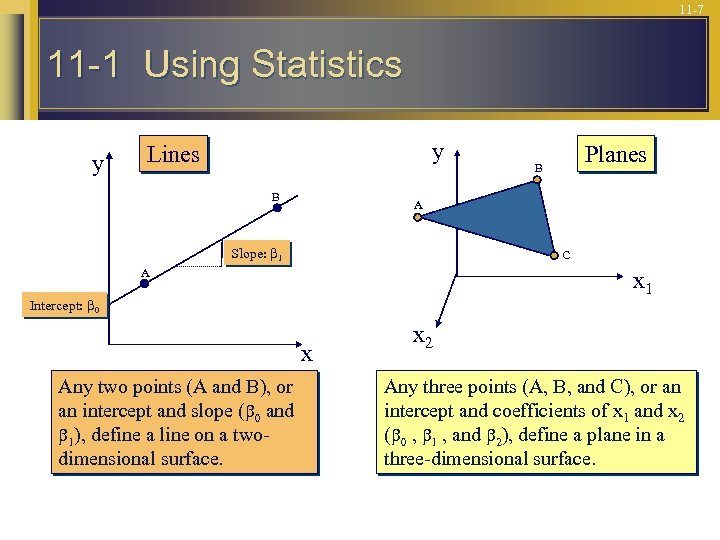

11-7 11-1 Using Statistics

Lines: Any two points (A and B), or an intercept and slope ($\beta_0$ and $\beta_1$), define a line on a two-dimensional surface.

Planes: Any three points (A, B, and C), or an intercept and coefficients of $x_1$ and $x_2$ ($\beta_0$, $\beta_1$, and $\beta_2$), define a plane in a three-dimensional space.

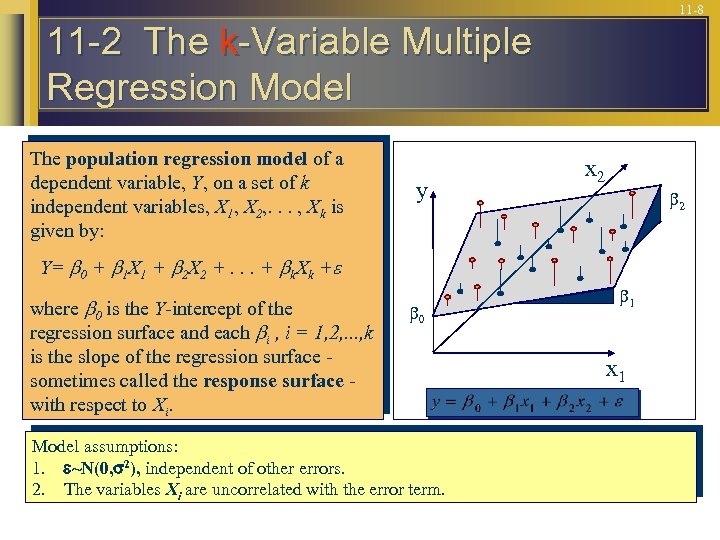

11-8 11-2 The k-Variable Multiple Regression Model

The population regression model of a dependent variable, Y, on a set of k independent variables, $X_1, X_2, \ldots, X_k$, is given by:

$$Y = \beta_0 + \beta_1 X_1 + \beta_2 X_2 + \cdots + \beta_k X_k + \varepsilon$$

where $\beta_0$ is the Y-intercept of the regression surface and each $\beta_i$, $i = 1, 2, \ldots, k$, is the slope of the regression surface (sometimes called the response surface) with respect to $X_i$.

Model assumptions:

1. $\varepsilon \sim N(0, \sigma^2)$, independent of other errors.
2. The variables $X_i$ are uncorrelated with the error term.

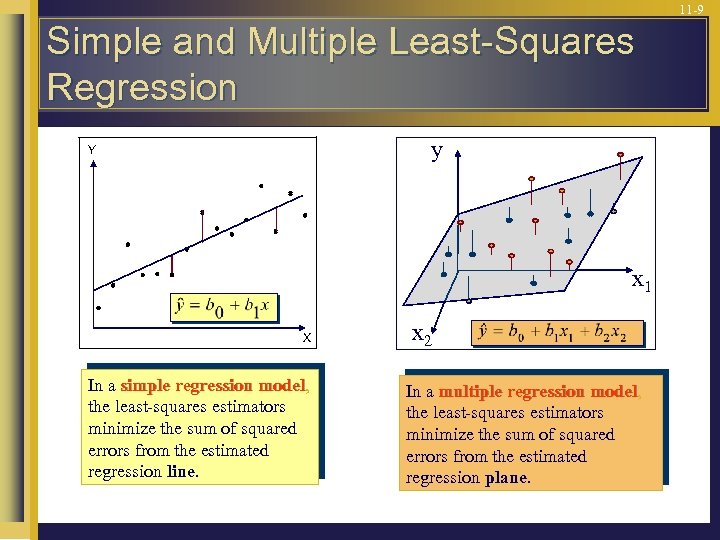

11-9 Simple and Multiple Least-Squares Regression

In a simple regression model, the least-squares estimators minimize the sum of squared errors from the estimated regression line.

In a multiple regression model, the least-squares estimators minimize the sum of squared errors from the estimated regression plane.

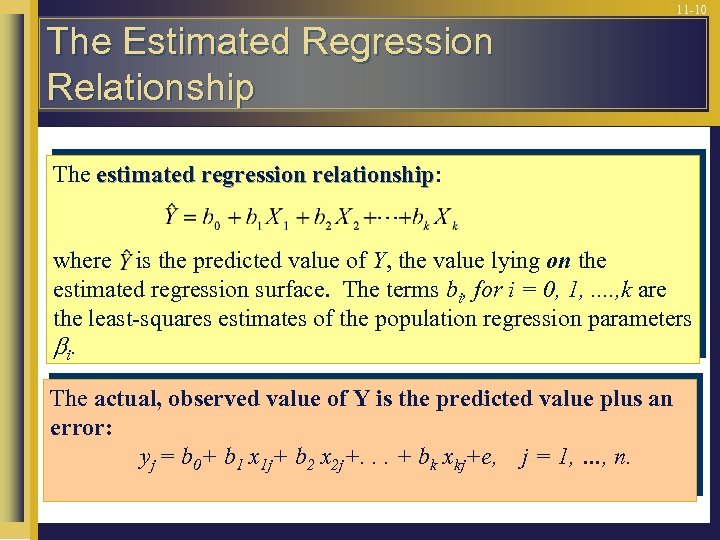

11-10 The Estimated Regression Relationship

The estimated regression relationship:

$$\hat{Y} = b_0 + b_1 X_1 + b_2 X_2 + \cdots + b_k X_k$$

where $\hat{Y}$ is the predicted value of Y, the value lying on the estimated regression surface. The terms $b_i$, for $i = 0, 1, \ldots, k$, are the least-squares estimates of the population regression parameters $\beta_i$.

The actual, observed value of Y is the predicted value plus an error:

$$y_j = b_0 + b_1 x_{1j} + b_2 x_{2j} + \cdots + b_k x_{kj} + e_j, \quad j = 1, \ldots, n.$$

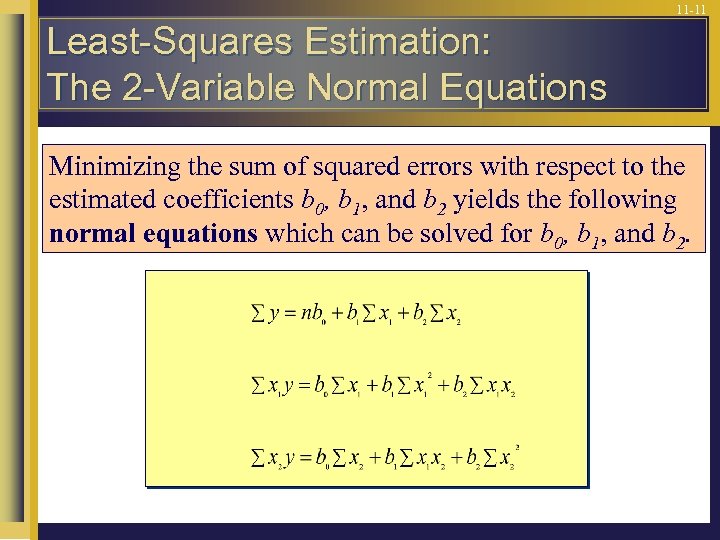

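11-11 Least-Squares Estimation: The 2-Variable Normal Equations

Minimizing the sum of squared errors with respect to the estimated coefficients $b_0$, $b_1$, and $b_2$ yields the following normal equations, which can be solved for $b_0$, $b_1$, and $b_2$:

$$\sum y = n b_0 + b_1 \sum x_1 + b_2 \sum x_2$$

$$\sum x_1 y = b_0 \sum x_1 + b_1 \sum x_1^2 + b_2 \sum x_1 x_2$$

$$\sum x_2 y = b_0 \sum x_2 + b_1 \sum x_1 x_2 + b_2 \sum x_2^2$$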

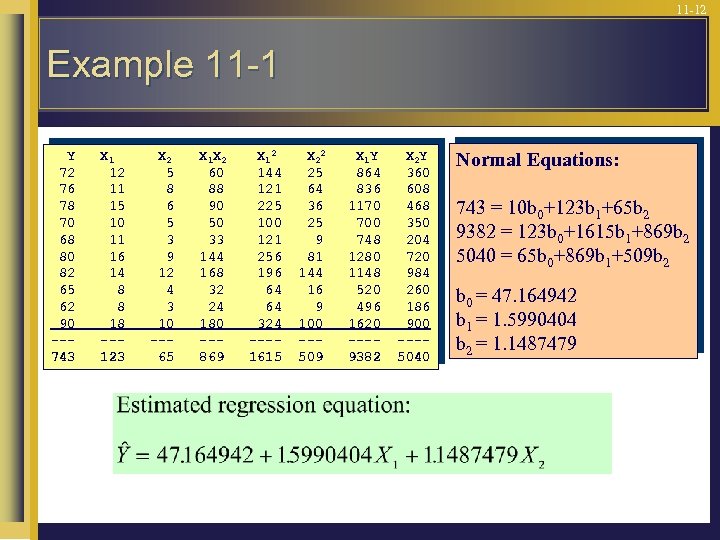

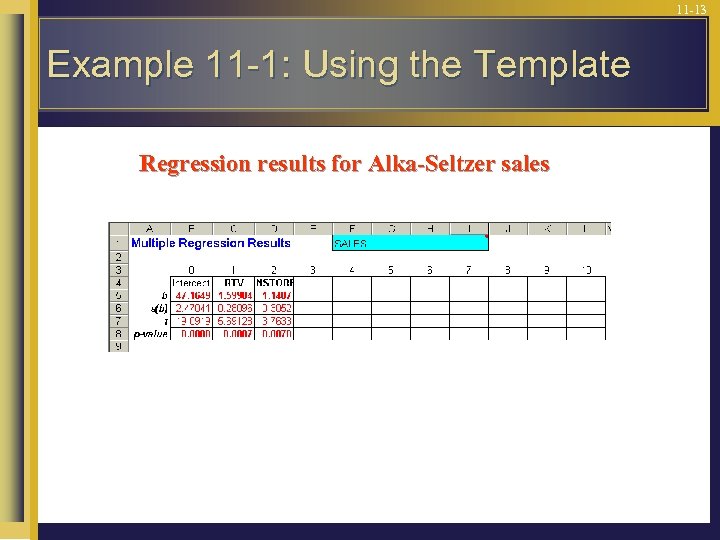

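11-12 Example 11-1

| | Y | X1 | X2 | X1X2 | X1² | X2² | X1Y | X2Y |
|---|---|---|---|---|---|---|---|---|
| | 72 | 12 | 5 | 60 | 144 | 25 | 864 | 360 |
| | 76 | 11 | 8 | 88 | 121 | 64 | 836 | 608 |
| | 78 | 15 | 6 | 90 | 225 | 36 | 1170 | 468 |
| | 70 | 10 | 5 | 50 | 100 | 25 | 700 | 350 |
| | 68 | 11 | 3 | 33 | 121 | 9 | 748 | 204 |
| | 80 | 16 | 9 | 144 | 256 | 81 | 1280 | 720 |
| | 82 | 14 | 12 | 168 | 196 | 144 | 1148 | 984 |
| | 65 | 8 | 4 | 32 | 64 | 16 | 520 | 260 |
| | 62 | 8 | 3 | 24 | 64 | 9 | 496 | 186 |
| | 90 | 18 | 10 | 180 | 324 | 100 | 1620 | 900 |
| Total | 743 | 123 | 65 | 869 | 1615 | 509 | 9382 | 5040 |

Normal equations:

$$743 = 10 b_0 + 123 b_1 + 65 b_2$$

$$9382 = 123 b_0 + 1615 b_1 + 869 b_2$$

$$5040 = 65 b_0 + 869 b_1 + 509 b_2$$

Solution: $b_0 = 47.164942$, $b_1 = 1.5990404$, $b_2 = 1.1487479$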
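The arithmetic of Example 11-1 can be retraced in a few lines. The sketch below is only an illustration, not the textbook's spreadsheet template: it rebuilds the sums of the normal equations from the raw Y, X1, X2 columns and solves the 3x3 system with a small hand-written Gaussian elimination. The helper names `dot` and `solve` are hypothetical.

```python
# Raw data from Example 11-1
y = [72, 76, 78, 70, 68, 80, 82, 65, 62, 90]
x1 = [12, 11, 15, 10, 11, 16, 14, 8, 8, 18]
x2 = [5, 8, 6, 5, 3, 9, 12, 4, 3, 10]


def dot(u, v):
    """Sum of element-wise products, e.g. dot(x1, y) is the X1Y column total."""
    return sum(a * b for a, b in zip(u, v))


ones = [1] * len(y)
cols = [ones, x1, x2]

# Coefficient matrix and right-hand side of the normal equations
A = [[dot(ci, cj) for cj in cols] for ci in cols]
rhs = [dot(ci, y) for ci in cols]


def solve(matrix, b):
    """Gaussian elimination with partial pivoting for a small dense system."""
    n = len(b)
    m = [[float(v) for v in row] + [float(bi)] for row, bi in zip(matrix, b)]
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(m[r][c]))
        m[c], m[p] = m[p], m[c]
        for r in range(c + 1, n):
            f = m[r][c] / m[c][c]
            for j in range(c, n + 1):
                m[r][j] -= f * m[c][j]
    x = [0.0] * n
    for r in reversed(range(n)):
        s = sum(m[r][j] * x[j] for j in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


b0, b1, b2 = solve(A, rhs)
print(A, rhs)
print(b0, b1, b2)
```

The printed matrix and right-hand side should reproduce the column totals of the table, and the solution should agree with the slide's coefficients up to rounding.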

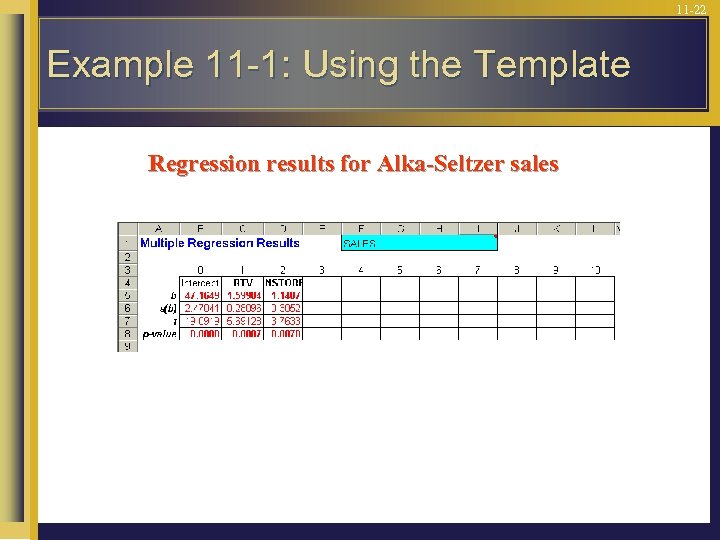

11-13 Example 11-1: Using the Template

Regression results for Alka-Seltzer sales

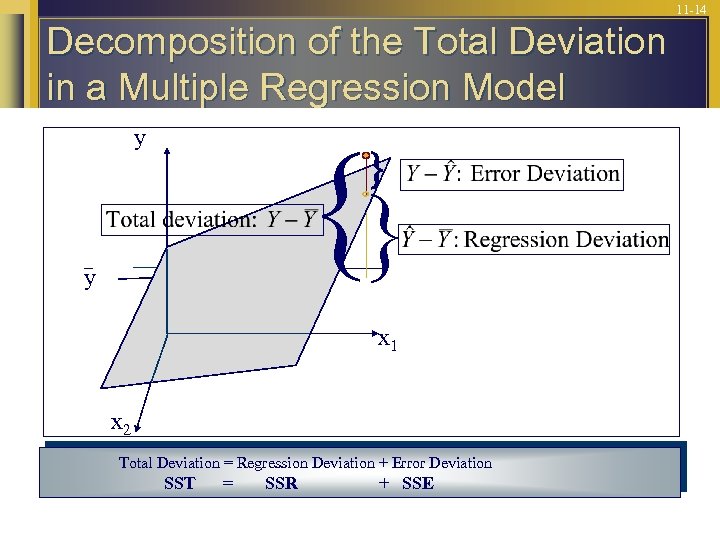

11-14 Decomposition of the Total Deviation in a Multiple Regression Model

Total Deviation = Regression Deviation + Error Deviation

SST = SSR + SSE

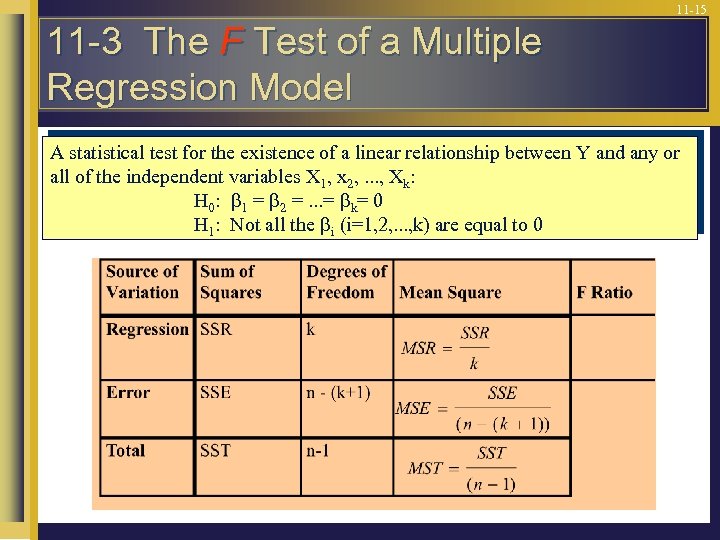

11-15 11-3 The F Test of a Multiple Regression Model

A statistical test for the existence of a linear relationship between Y and any or all of the independent variables $X_1, X_2, \ldots, X_k$:

$H_0$: $\beta_1 = \beta_2 = \cdots = \beta_k = 0$

$H_1$: Not all the $\beta_i$ ($i = 1, 2, \ldots, k$) are equal to 0

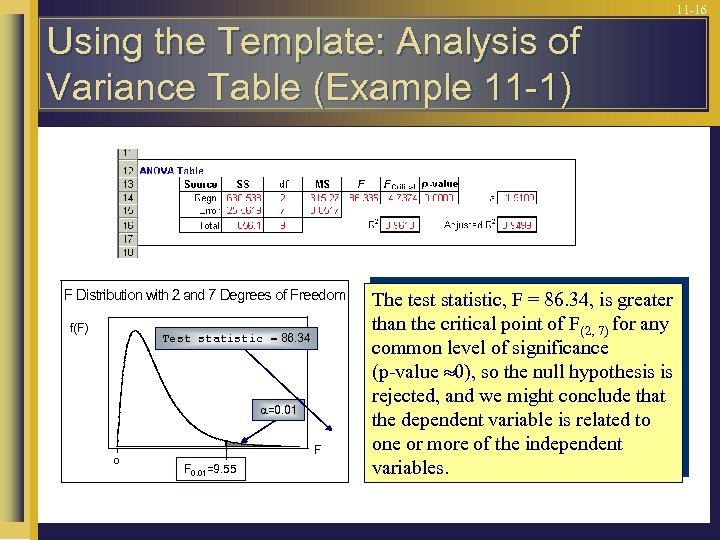

11-16 Using the Template: Analysis of Variance Table (Example 11-1)

[Figure: F distribution with 2 and 7 degrees of freedom, showing the critical point $F_{0.01} = 9.55$ ($\alpha = 0.01$) and the test statistic 86.34.]

The test statistic, F = 86.34, is greater than the critical point of F(2, 7) for any common level of significance (p-value ≈ 0), so the null hypothesis is rejected, and we may conclude that the dependent variable is related to one or more of the independent variables.
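To see how small the p-value for F = 86.34 actually is, one can use a shortcut that applies only when the numerator has 2 degrees of freedom, as it does here: the upper-tail probability then has the closed form $P(F > f) = (1 + 2f/\nu_2)^{-\nu_2/2}$. This is a sketch for this special case, not a general F-distribution routine, and the function name is hypothetical.

```python
def f_upper_tail_df1_2(f_value, df2):
    """Upper-tail probability of an F(2, df2) distribution.

    Valid only for 2 numerator degrees of freedom, where the
    incomplete beta function reduces to a simple power.
    """
    return (1.0 + 2.0 * f_value / df2) ** (-df2 / 2.0)


# Critical point from the slide: the tail area beyond 9.55 should be about 0.01
p_at_critical_point = f_upper_tail_df1_2(9.55, 7)

# Test statistic from Example 11-1
p_value = f_upper_tail_df1_2(86.34, 7)

print(p_at_critical_point, p_value)
```

The first number lands near 0.01, confirming the critical point, and the second is several orders of magnitude smaller, which is why the slide reports the p-value as approximately 0.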

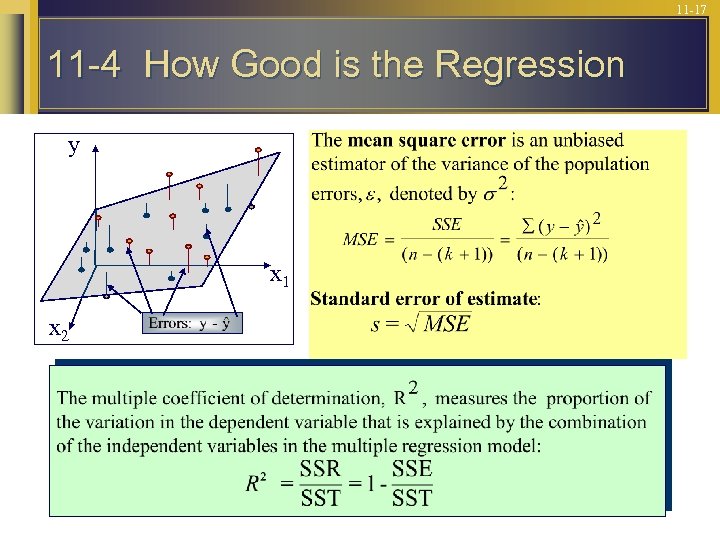

11-17 11-4 How Good is the Regression

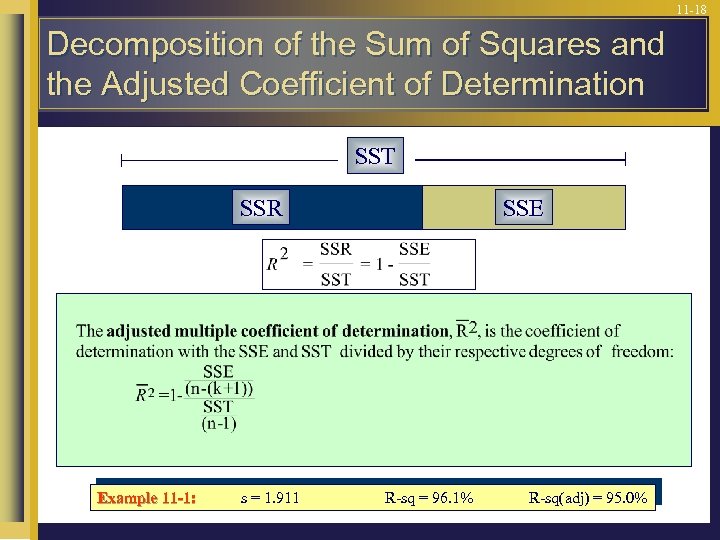

11-18 Decomposition of the Sum of Squares and the Adjusted Coefficient of Determination

[Figure: SST partitioned into SSR and SSE.]

Example 11-1: s = 1.911, R-sq = 96.1%, R-sq(adj) = 95.0%
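The summary figures for Example 11-1 can be recomputed from the raw data. The sketch below assumes the coefficients reported on the Example 11-1 slide and uses the usual definitions: $MSE = SSE/(n-(k+1))$, $s = \sqrt{MSE}$, $R^2 = 1 - SSE/SST$, $\bar{R}^2 = 1 - \frac{SSE/(n-(k+1))}{SST/(n-1)}$, and $F = \frac{SSR/k}{MSE}$.

```python
# Data and least-squares coefficients as reported for Example 11-1
y = [72, 76, 78, 70, 68, 80, 82, 65, 62, 90]
x1 = [12, 11, 15, 10, 11, 16, 14, 8, 8, 18]
x2 = [5, 8, 6, 5, 3, 9, 12, 4, 3, 10]
b0, b1, b2 = 47.164942, 1.5990404, 1.1487479

n, k = len(y), 2
y_bar = sum(y) / n
y_hat = [b0 + b1 * u + b2 * v for u, v in zip(x1, x2)]

# Sums of squares: total, regression, error
sst = sum((yi - y_bar) ** 2 for yi in y)
ssr = sum((fi - y_bar) ** 2 for fi in y_hat)
sse = sum((yi - fi) ** 2 for yi, fi in zip(y, y_hat))

mse = sse / (n - (k + 1))            # mean square error
s = mse ** 0.5                       # standard error of estimate
r2 = 1 - sse / sst                   # coefficient of determination
r2_adj = 1 - mse / (sst / (n - 1))   # adjusted for degrees of freedom
f_stat = (ssr / k) / mse             # F statistic of the regression

print(sst, ssr, sse)
print(s, r2, r2_adj, f_stat)
```

Up to rounding, the results should match the slide values s = 1.911, R-sq = 96.1%, R-sq(adj) = 95.0% and the F statistic of 86.34 used in the F test.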

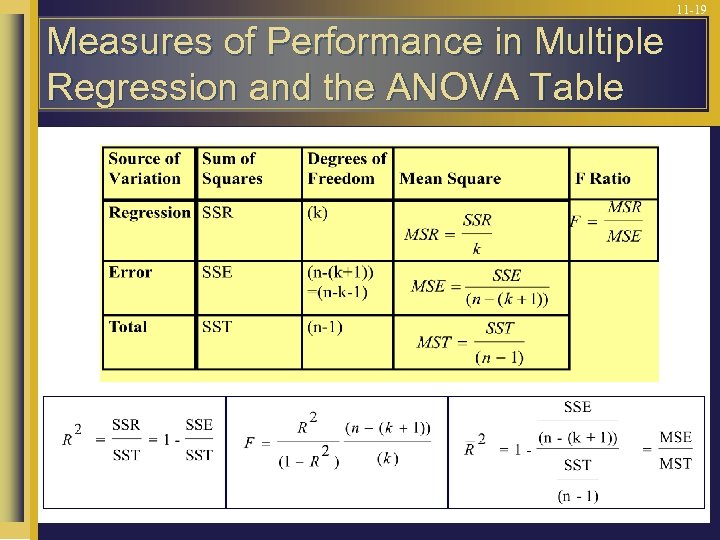

11-19 Measures of Performance in Multiple Regression and the ANOVA Table

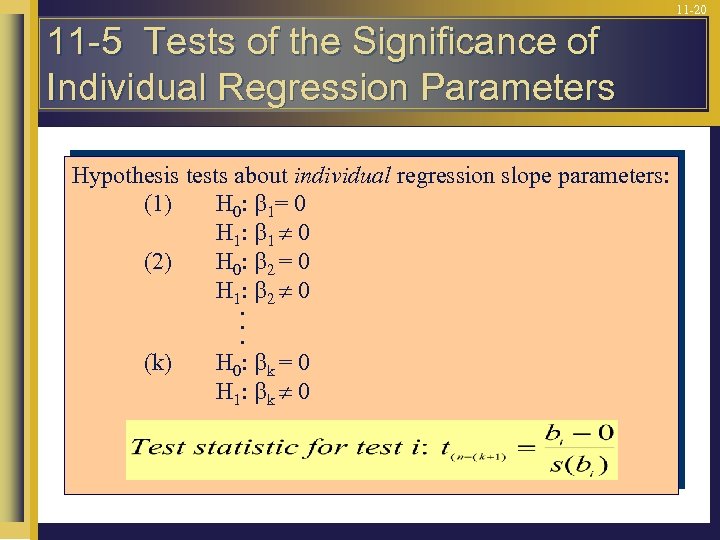

11-20 11-5 Tests of the Significance of Individual Regression Parameters

Hypothesis tests about individual regression slope parameters:

- (1) $H_0$: $\beta_1 = 0$; $H_1$: $\beta_1 \neq 0$
- (2) $H_0$: $\beta_2 = 0$; $H_1$: $\beta_2 \neq 0$
- ...
- (k) $H_0$: $\beta_k = 0$; $H_1$: $\beta_k \neq 0$

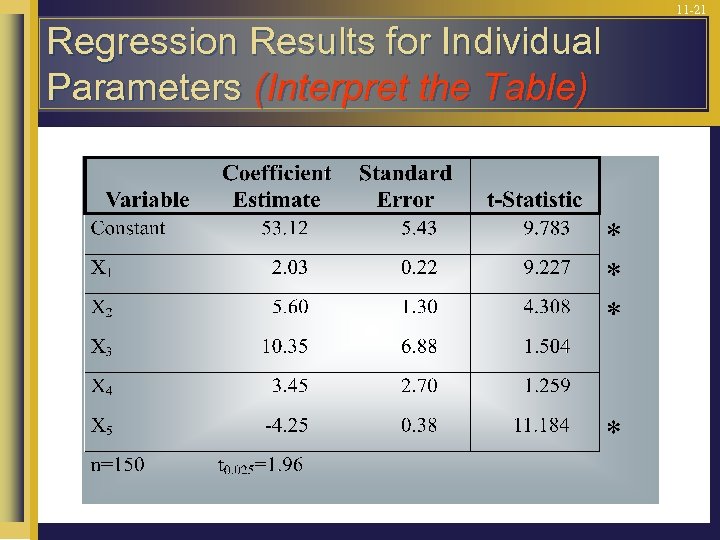

11 -21 Regression Results for Individual Parameters (Interpret the Table)

11 -22 Example 11 -1: Using the Template Regression results for Alka-Seltzer sales

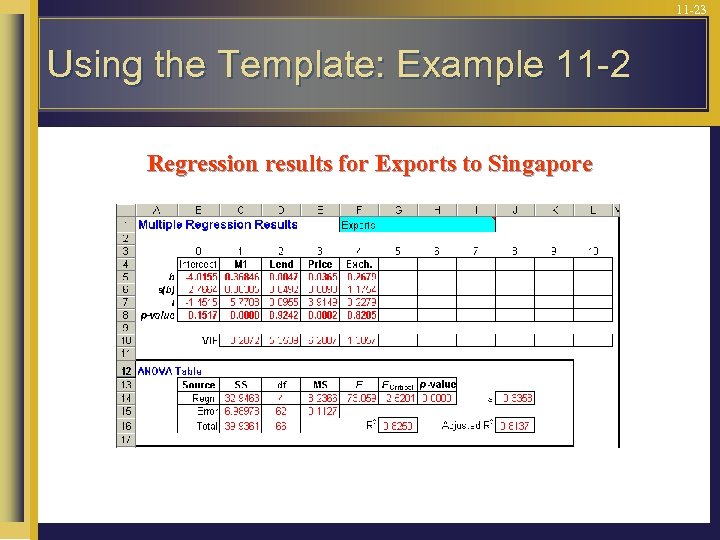

11 -23 Using the Template: Example 11 -2 Regression results for Exports to Singapore

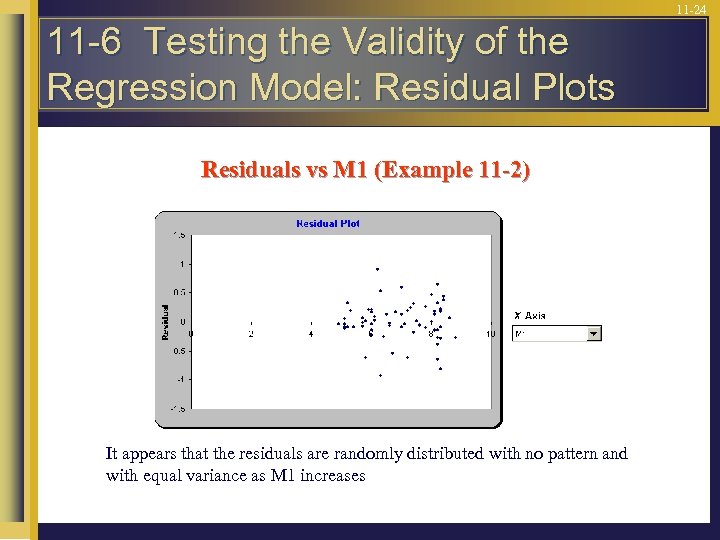

11-24 11-6 Testing the Validity of the Regression Model: Residual Plots

Residuals vs M1 (Example 11-2): It appears that the residuals are randomly distributed with no pattern and with equal variance as M1 increases.

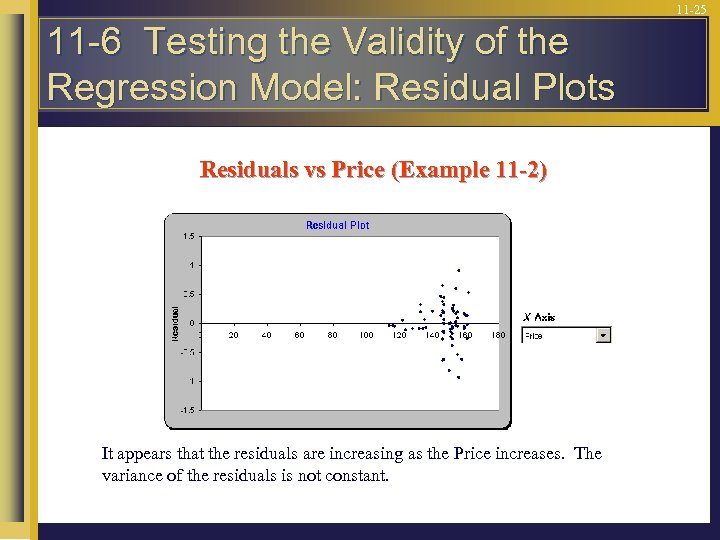

11-25 11-6 Testing the Validity of the Regression Model: Residual Plots

Residuals vs Price (Example 11-2): It appears that the spread of the residuals increases as Price increases, so the variance of the residuals is not constant.

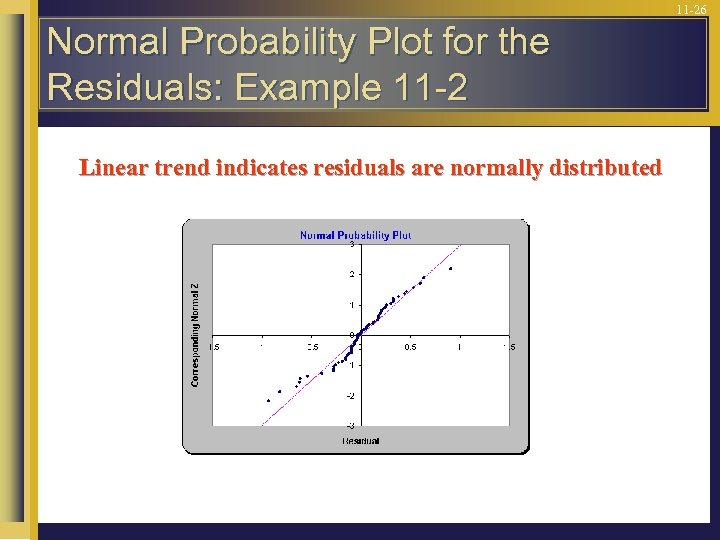

11-26 Normal Probability Plot for the Residuals: Example 11-2

A linear trend indicates that the residuals are normally distributed.

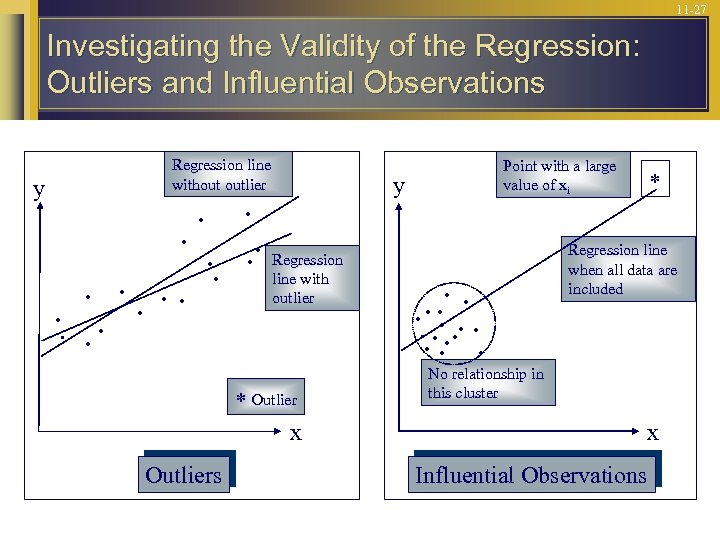

11-27 Investigating the Validity of the Regression: Outliers and Influential Observations

[Figure, panel "Outliers": the regression line with the outlier compared with the regression line without the outlier.]

[Figure, panel "Influential Observations": a cluster of points with no relationship plus one point with a large value of $x_i$, and the regression line when all data are included.]

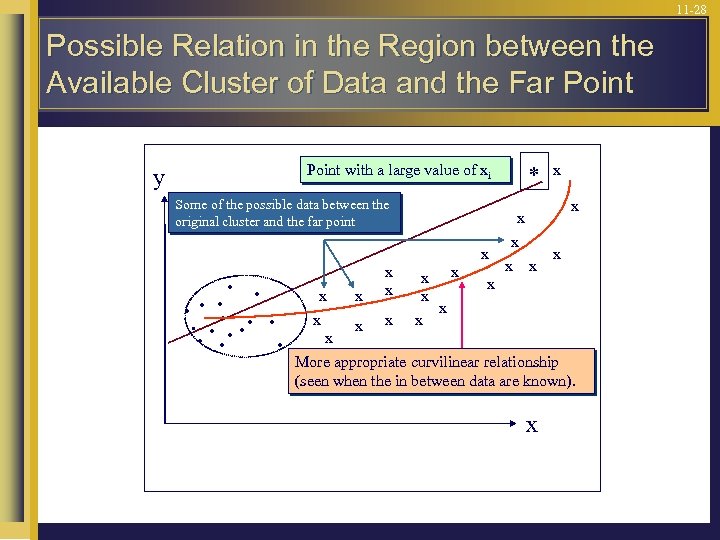

11-28 Possible Relation in the Region between the Available Cluster of Data and the Far Point

[Figure: the original cluster, the far point with a large value of $x_i$, and some of the possible data between them.]

A more appropriate curvilinear relationship may be seen when the in-between data are known.

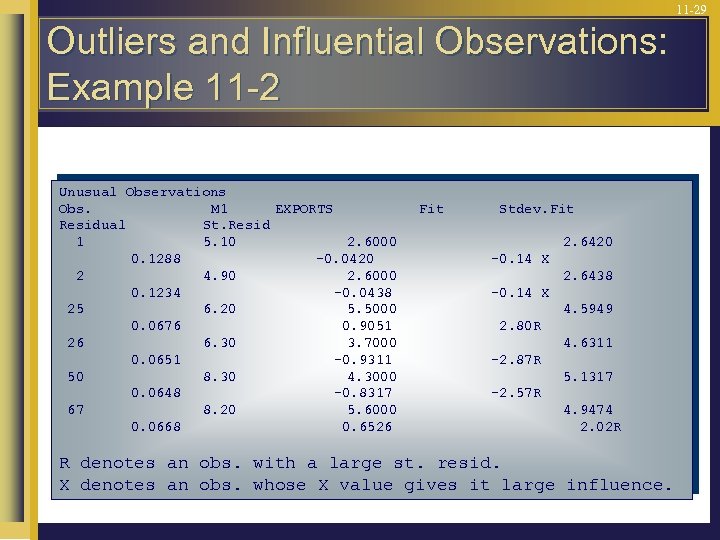

11-29 Outliers and Influential Observations: Example 11-2

Unusual Observations

| Obs. | M1 | EXPORTS | Fit | Stdev.Fit | Residual | St.Resid |
|---|---|---|---|---|---|---|
| 1 | 5.10 | 2.6000 | 2.6420 | 0.1288 | -0.0420 | -0.14 X |
| 2 | 4.90 | 2.6000 | 2.6438 | 0.1234 | -0.0438 | -0.14 X |
| 25 | 6.20 | 5.5000 | 4.5949 | 0.0676 | 0.9051 | 2.80 R |
| 26 | 6.30 | 3.7000 | 4.6311 | 0.0651 | -0.9311 | -2.87 R |
| 50 | 8.30 | 4.3000 | 5.1317 | 0.0648 | -0.8317 | -2.57 R |
| 67 | 8.20 | 5.6000 | 4.9474 | 0.0668 | 0.6526 | 2.02 R |

R denotes an obs. with a large st. resid.

X denotes an obs. whose X value gives it large influence.

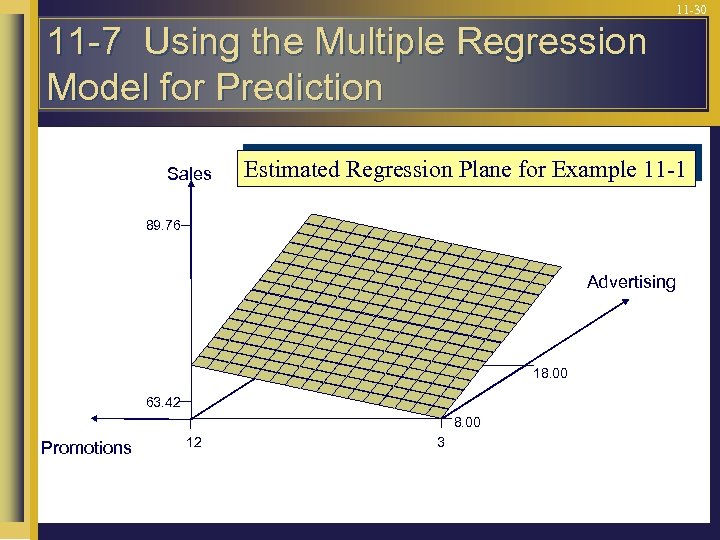

11-30 11-7 Using the Multiple Regression Model for Prediction

[Figure: estimated regression plane for Example 11-1. Axes: Sales, Advertising (8.00 to 18.00), Promotions (3 to 12); marked Sales values 63.42 and 89.76.]

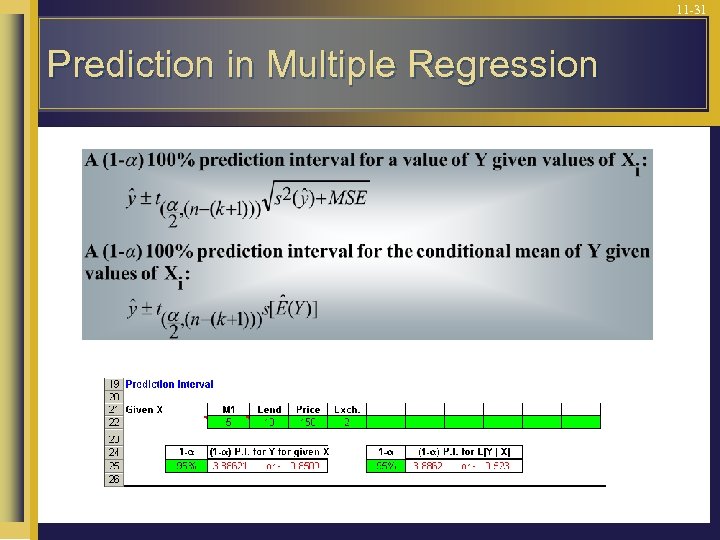

11-31 Prediction in Multiple Regression

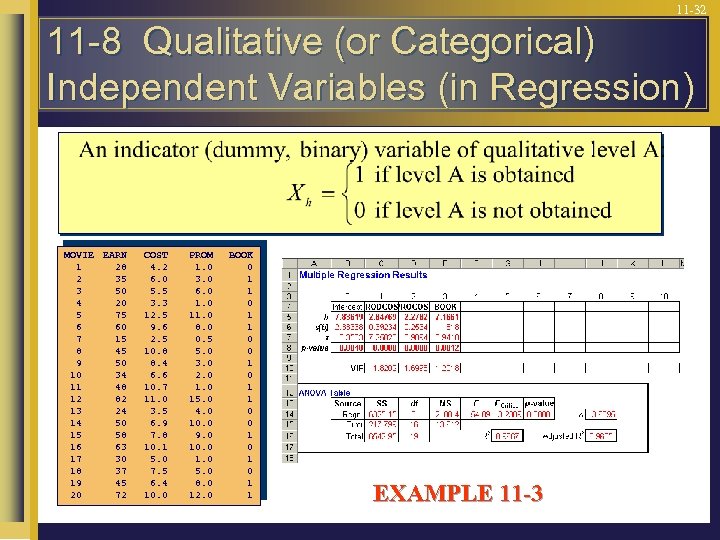

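11-32 11-8 Qualitative (or Categorical) Independent Variables (in Regression)

EXAMPLE 11-3

| MOVIE | EARN | COST |
|---|---|---|
| 1 | 28 | 4.2 |
| 2 | 35 | 6.0 |
| 3 | 50 | 5.5 |
| 4 | 20 | 3.3 |
| 5 | 75 | 12.5 |
| 6 | 60 | 9.6 |
| 7 | 15 | 2.5 |
| 8 | 45 | 10.8 |
| 9 | 50 | 8.4 |
| 10 | 34 | 6.6 |
| 11 | 48 | 10.7 |
| 12 | 82 | 11.0 |
| 13 | 24 | 3.5 |
| 14 | 50 | 6.9 |
| 15 | 58 | 7.8 |
| 16 | 63 | 10.1 |
| 17 | 30 | 5.0 |
| 18 | 37 | 7.5 |
| 19 | 45 | 6.4 |
| 20 | 72 | 10.0 |

PROM (only 18 of the 20 values survive in the source, so rows cannot be aligned): 1.0, 3.0, 6.0, 11.0, 8.0, 0.5, 5.0, 3.0, 2.0, 15.0, 4.0, 10.0, 9.0, 10.0, 1.0, 5.0, 8.0, 12.0

BOOK (only 9 of the 20 values survive in the source): 0, 1, 1, 0, 0, 1, 0, 1, 1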

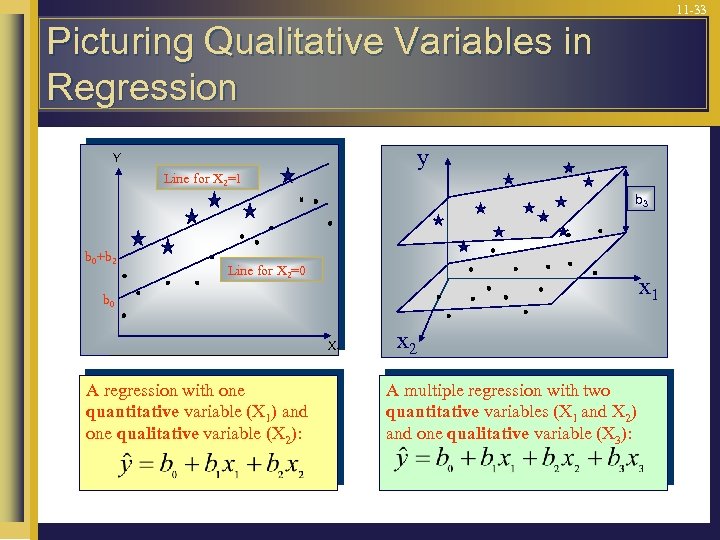

11-33 Picturing Qualitative Variables in Regression

[Figure, left: a regression with one quantitative variable ($X_1$) and one qualitative variable ($X_2$). The line for $X_2 = 0$ has intercept $b_0$; the line for $X_2 = 1$ has intercept $b_0 + b_2$.]

[Figure, right: a multiple regression with two quantitative variables ($X_1$ and $X_2$) and one qualitative variable ($X_3$). The vertical shift between the two surfaces is $b_3$.]

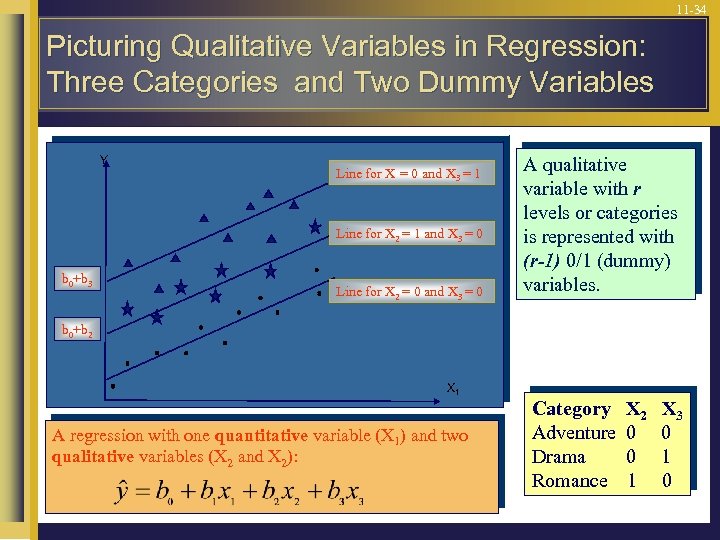

11-34 Picturing Qualitative Variables in Regression: Three Categories and Two Dummy Variables

A qualitative variable with r levels or categories is represented with (r - 1) 0/1 (dummy) variables.

[Figure: a regression with one quantitative variable ($X_1$) and two dummy variables ($X_2$ and $X_3$). The line for $X_2 = 0$ and $X_3 = 0$ has intercept $b_0$; the line for $X_2 = 1$ and $X_3 = 0$ has intercept $b_0 + b_2$; the line for $X_2 = 0$ and $X_3 = 1$ has intercept $b_0 + b_3$.]

| Category | X2 | X3 |
|---|---|---|
| Adventure | 0 | 0 |
| Drama | 0 | 1 |
| Romance | 1 | 0 |
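The coding scheme on this slide can be written down directly. The sketch below is illustrative only: it maps each of the three categories to the pair of 0/1 values from the table and shows how the intercept of the fitted line shifts by category. The coefficient values passed in the usage lines are made up for the illustration.

```python
# Dummy coding from the slide: three categories, two 0/1 variables (X2, X3)
CODING = {
    "Adventure": (0, 0),  # baseline category
    "Drama": (0, 1),
    "Romance": (1, 0),
}


def encode(category):
    """Return the (X2, X3) dummy values for a category."""
    return CODING[category]


def intercept_for(category, b0, b2, b3):
    """Intercept of the line in X1 for a given category."""
    x2, x3 = encode(category)
    return b0 + b2 * x2 + b3 * x3


# Hypothetical coefficients, chosen only to show the shifts
for name in CODING:
    print(name, encode(name), intercept_for(name, b0=10.0, b2=2.0, b3=3.0))
```

With r = 3 categories only r - 1 = 2 dummy variables are needed, because the baseline category is identified by both dummies being 0.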

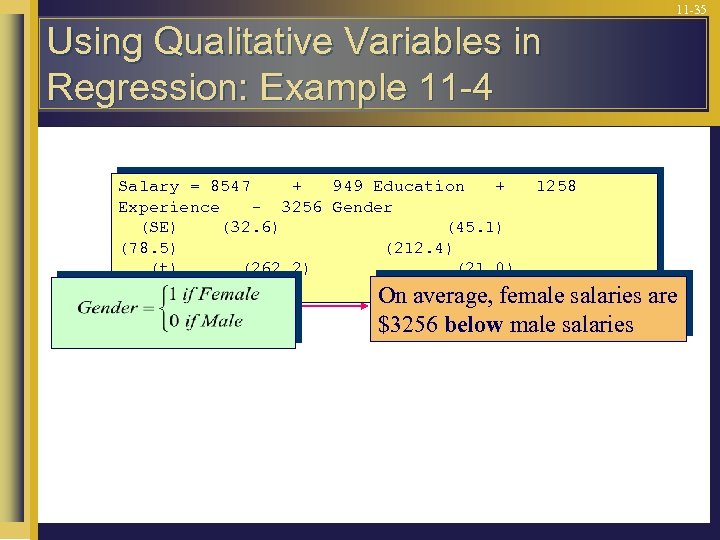

11-35 Using Qualitative Variables in Regression: Example 11-4

Salary = 8547 + 949 Education + 1258 Experience - 3256 Gender

| | Constant | Education | Experience | Gender |
|---|---|---|---|---|
| SE | 32.6 | 45.1 | 78.5 | 212.4 |
| t | 262.2 | 21.0 | 16.0 | -15.3 |

On average, female salaries are $3256 below male salaries.
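The interpretation of the Gender coefficient can be checked by evaluating the fitted equation for two otherwise identical people. This sketch assumes Gender is coded 1 for female and 0 for male, which is consistent with the slide's conclusion but not stated on it; the education and experience values are arbitrary.

```python
def predicted_salary(education, experience, gender):
    """Fitted equation from Example 11-4 (gender: 1 = female, 0 = male, assumed)."""
    return 8547 + 949 * education + 1258 * experience - 3256 * gender


# Same education and experience, different gender code
female = predicted_salary(education=16, experience=5, gender=1)
male = predicted_salary(education=16, experience=5, gender=0)
gap = female - male

print(female, male, gap)
```

The gap equals the Gender coefficient regardless of the education and experience values chosen, because the model has no interaction terms.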

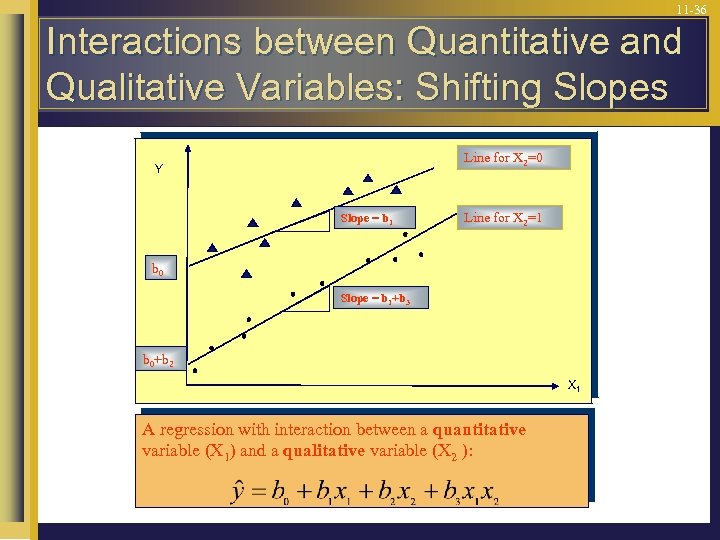

11-36 Interactions between Quantitative and Qualitative Variables: Shifting Slopes

[Figure: a regression with interaction between a quantitative variable ($X_1$) and a qualitative variable ($X_2$). The line for $X_2 = 0$ has intercept $b_0$ and slope $b_1$; the line for $X_2 = 1$ has intercept $b_0 + b_2$ and slope $b_1 + b_3$.]

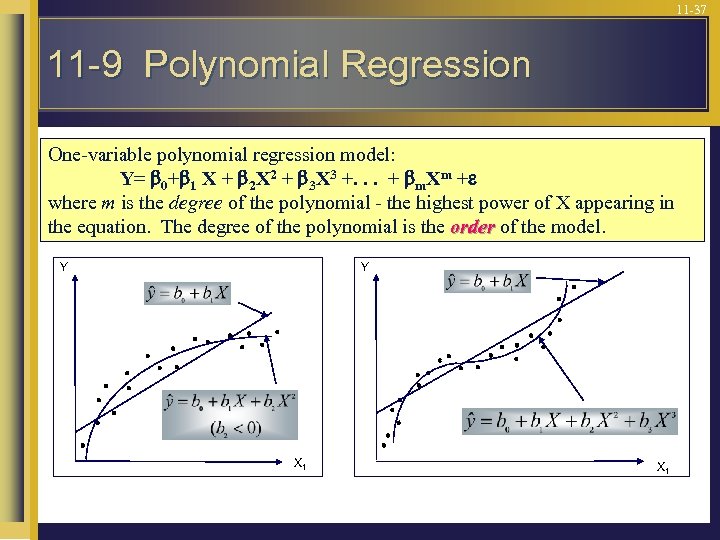

11-37 11-9 Polynomial Regression

One-variable polynomial regression model:

$$Y = \beta_0 + \beta_1 X + \beta_2 X^2 + \beta_3 X^3 + \cdots + \beta_m X^m + \varepsilon$$

where m is the degree of the polynomial, the highest power of X appearing in the equation. The degree of the polynomial is the order of the model.

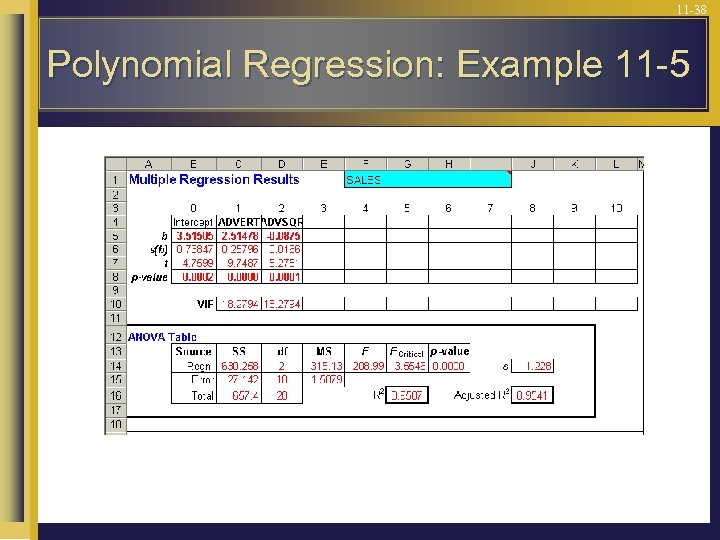

11 -38 Polynomial Regression: Example 11 -5

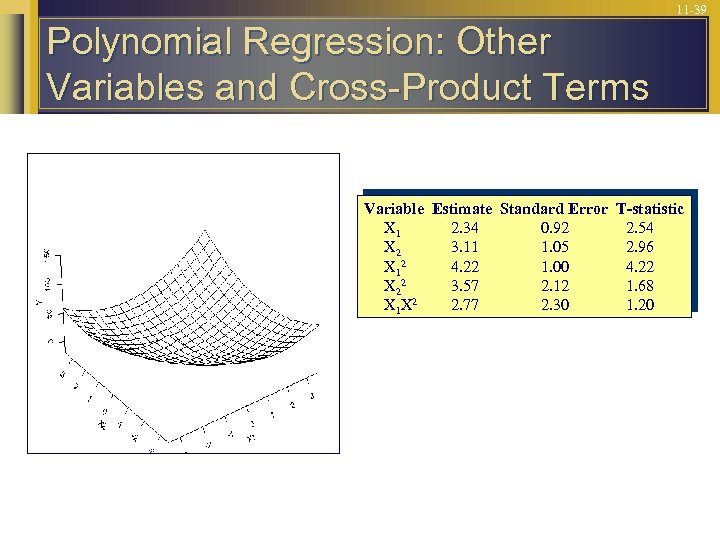

11 -39 Polynomial Regression: Other Variables and Cross-Product Terms Variable Estimate Standard Error T-statistic X 1 2. 34 0. 92 2. 54 X 2 3. 11 1. 05 2. 96 2 X 1 4. 22 1. 00 4. 22 X 22 3. 57 2. 12 1. 68 X 1 X 2 2. 77 2. 30 1. 20
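Each T-statistic in this table is the estimate divided by its standard error, which is the usual test statistic for $H_0$: $\beta_i = 0$. The short sketch below recomputes the column from the first two; the dictionary keys are just labels.

```python
# (estimate, standard error) pairs from the table
rows = {
    "X1": (2.34, 0.92),
    "X2": (3.11, 1.05),
    "X1^2": (4.22, 1.00),
    "X2^2": (3.57, 2.12),
    "X1*X2": (2.77, 2.30),
}

# t ratio for H0: coefficient = 0
t_stats = {name: est / se for name, (est, se) in rows.items()}

for name, t in t_stats.items():
    print(name, round(t, 2))
```

Whether a given ratio is significant depends on the error degrees of freedom, which this slide does not state.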

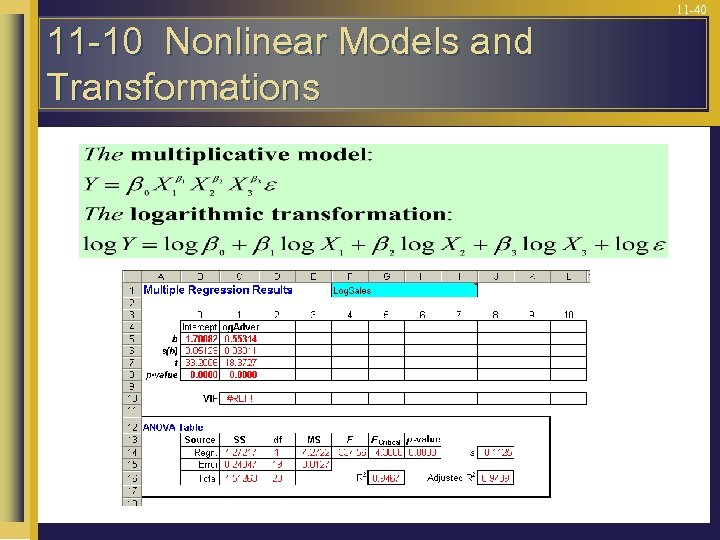

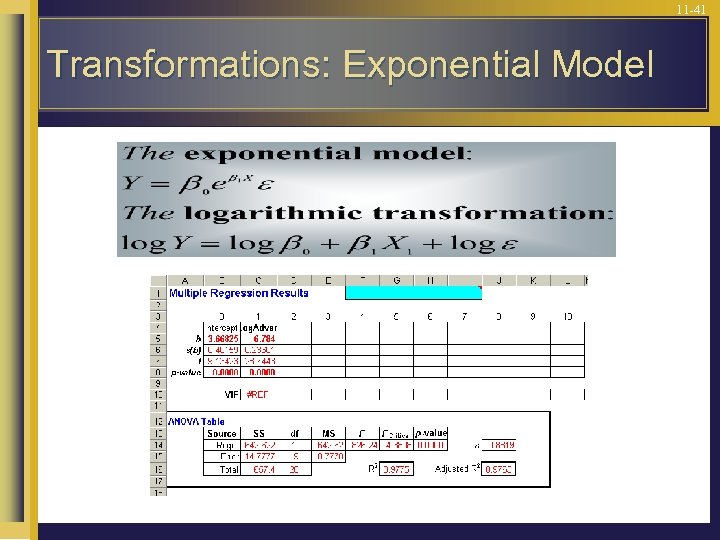

11-40 11-10 Nonlinear Models and Transformations

11-41 Transformations: Exponential Model

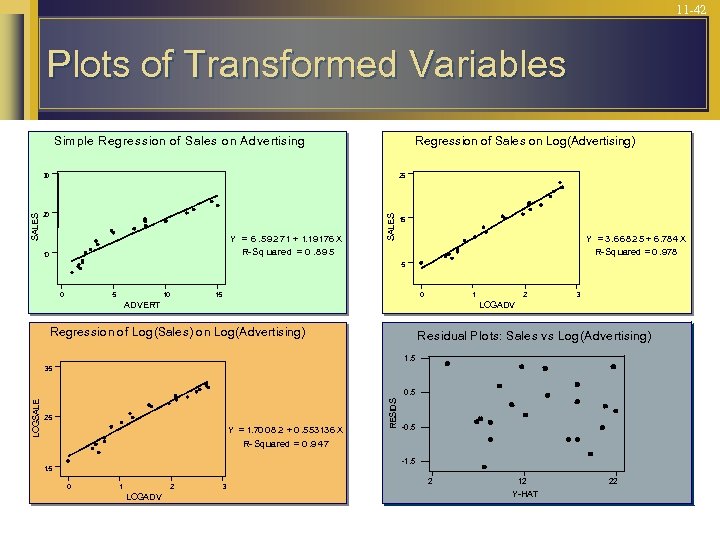

11-42 Plots of Transformed Variables

- Simple Regression of Sales on Advertising: Y = 6.59271 + 1.19176 X, R-Squared = 0.895
- Regression of Sales on Log(Advertising): Y = 3.66825 + 6.784 X, R-Squared = 0.978
- Regression of Log(Sales) on Log(Advertising): Y = 1.70082 + 0.553136 X, R-Squared = 0.947
- Residual Plots: Sales vs Log(Advertising) (residuals plotted against Y-HAT)

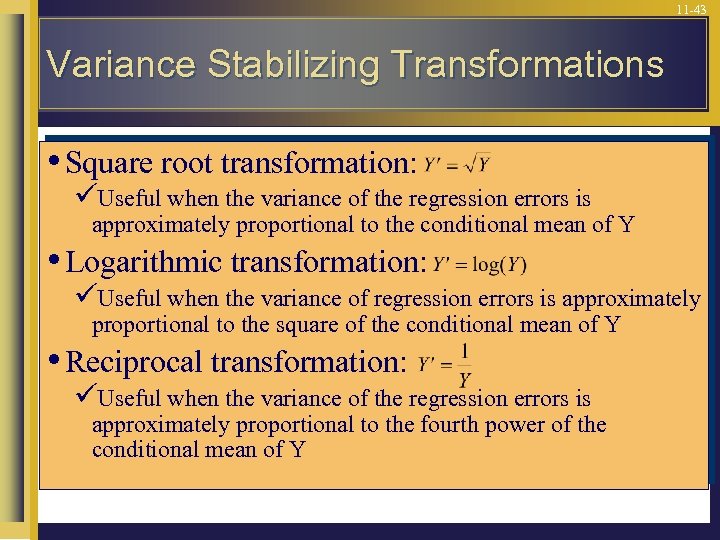

11-43 Variance Stabilizing Transformations

- Square root transformation: useful when the variance of the regression errors is approximately proportional to the conditional mean of Y
- Logarithmic transformation: useful when the variance of the regression errors is approximately proportional to the square of the conditional mean of Y
- Reciprocal transformation: useful when the variance of the regression errors is approximately proportional to the fourth power of the conditional mean of Y

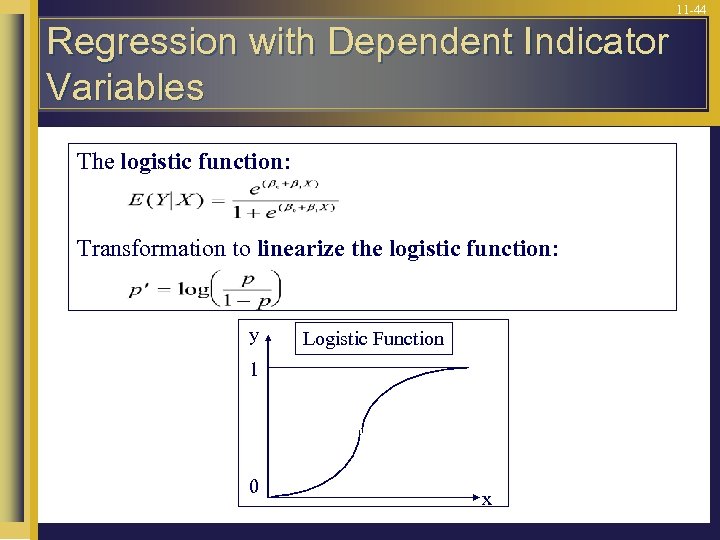

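11-44 Regression with Dependent Indicator Variables

The logistic function:

$$E(Y \mid X) = \frac{e^{\beta_0 + \beta_1 X}}{1 + e^{\beta_0 + \beta_1 X}}$$

Transformation to linearize the logistic function:

$$p' = \ln\left(\frac{p}{1 - p}\right)$$

[Figure: the logistic function, with y ranging from 0 to 1 as a function of x.]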
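A quick numerical check shows why the log-odds transformation linearizes the logistic function. The sketch assumes the standard one-predictor form $p = e^{\beta_0 + \beta_1 x} / (1 + e^{\beta_0 + \beta_1 x})$; the coefficient values are arbitrary.

```python
import math


def logistic(x, b0, b1):
    """Logistic function of the linear predictor b0 + b1 * x."""
    z = b0 + b1 * x
    return 1.0 / (1.0 + math.exp(-z))  # same as e^z / (1 + e^z)


def logit(p):
    """Log-odds transformation; undoes the logistic function."""
    return math.log(p / (1.0 - p))


b0, b1 = -1.0, 0.5  # arbitrary illustrative coefficients
for x in [0.0, 1.0, 2.0, 3.0]:
    p = logistic(x, b0, b1)
    print(x, round(p, 4), round(logit(p), 4))
```

The last column is the straight line $\beta_0 + \beta_1 x$, so after the transformation an ordinary linear regression can be fitted.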

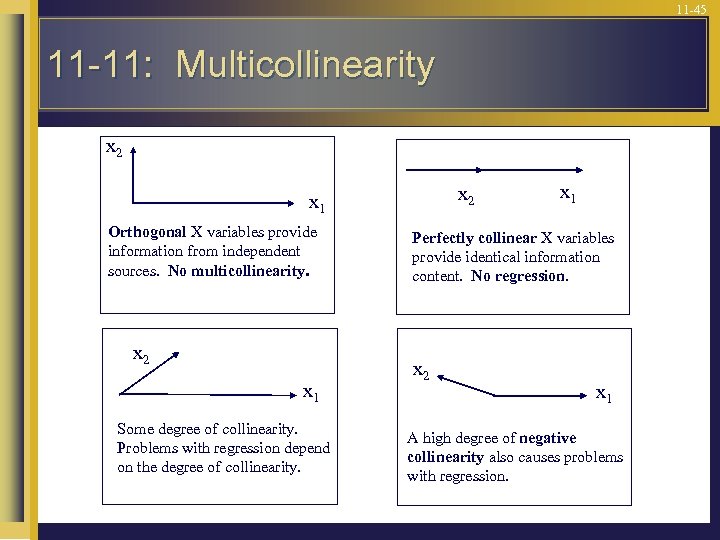

11-45 11-11 Multicollinearity

[Figure: four panels showing $x_1$ and $x_2$.]

- Orthogonal X variables provide information from independent sources. No multicollinearity.
- Some degree of collinearity. Problems with regression depend on the degree of collinearity.
- Perfectly collinear X variables provide identical information content. No regression.
- A high degree of negative collinearity also causes problems with regression.

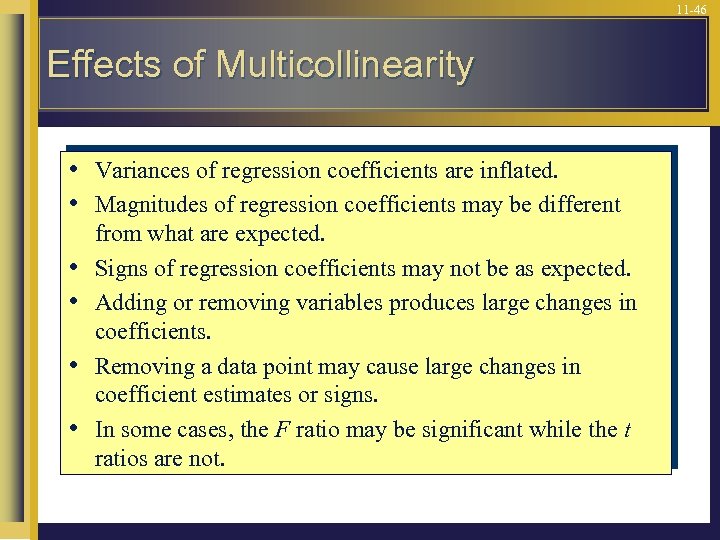

11-46 Effects of Multicollinearity

- Variances of regression coefficients are inflated.
- Magnitudes of regression coefficients may be different from what is expected.
- Signs of regression coefficients may not be as expected.
- Adding or removing variables produces large changes in coefficients.
- Removing a data point may cause large changes in coefficient estimates or signs.
- In some cases, the F ratio may be significant while the t ratios are not.

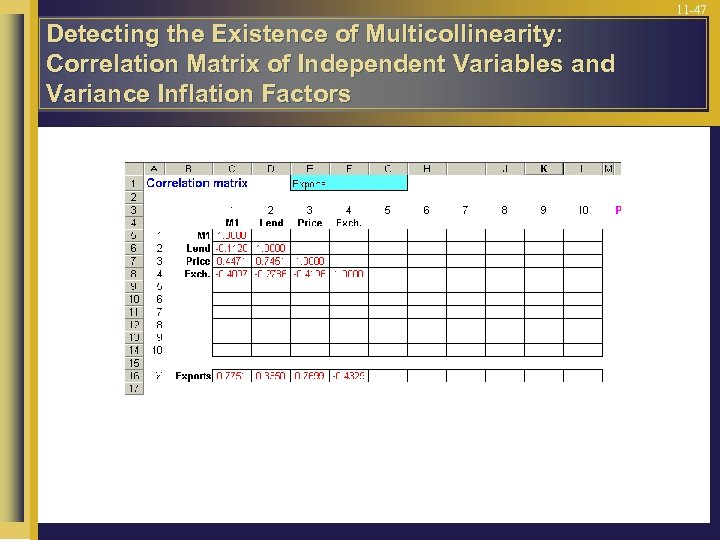

11-47 Detecting the Existence of Multicollinearity: Correlation Matrix of Independent Variables and Variance Inflation Factors

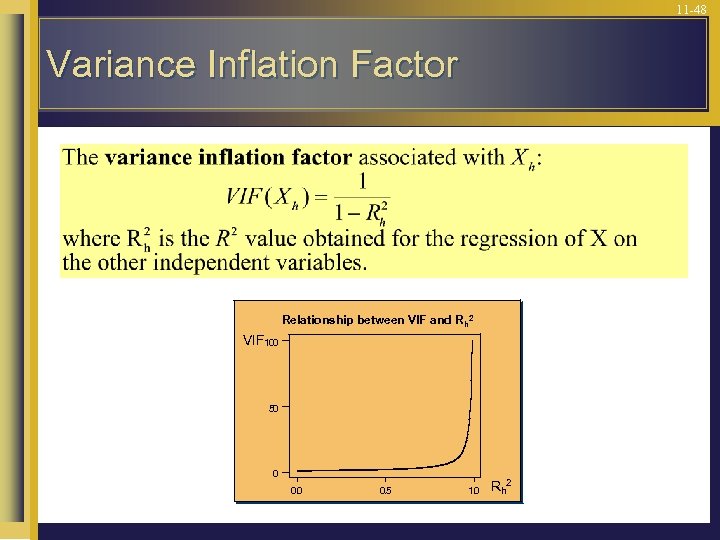

11-48 Variance Inflation Factor

[Figure: relationship between VIF and $R_h^2$. Horizontal axis: $R_h^2$ from 0 to 1.0; vertical axis: VIF from 0 to 100.]

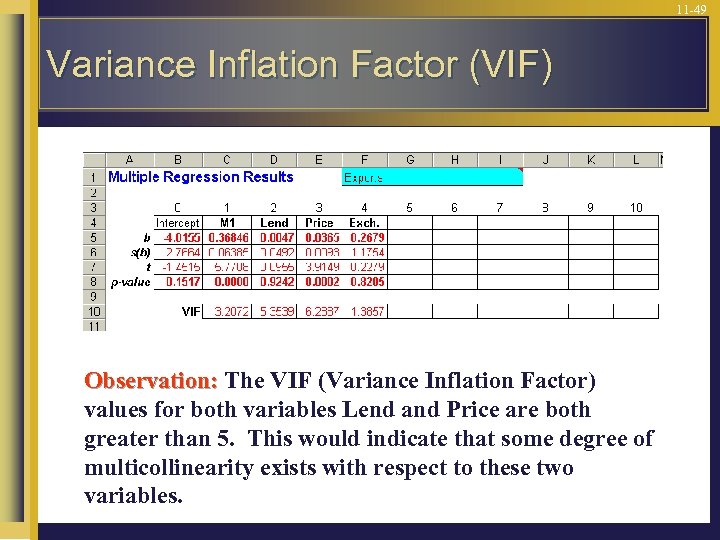

11-49 Variance Inflation Factor (VIF)

Observation: The VIF values for the variables Lend and Price are both greater than 5. This indicates that some degree of multicollinearity exists with respect to these two variables.
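The threshold of 5 is easier to interpret through the standard definition $VIF(X_h) = 1 / (1 - R_h^2)$, where $R_h^2$ is the coefficient of determination from regressing $X_h$ on the other independent variables. The sketch below converts in both directions; the function names are hypothetical.

```python
def vif(r2_h):
    """Variance inflation factor for a given R_h^2 (must be below 1)."""
    return 1.0 / (1.0 - r2_h)


def r2_from_vif(v):
    """R_h^2 implied by a given variance inflation factor."""
    return 1.0 - 1.0 / v


# VIF grows slowly at first and then explodes as R_h^2 approaches 1
for r2 in [0.0, 0.5, 0.8, 0.9, 0.99]:
    print(r2, vif(r2))

# A VIF of 5 corresponds to R_h^2 = 0.8
print(r2_from_vif(5.0))
```

So a VIF above 5 means that more than 80% of the variation in that variable is explained by the other independent variables.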

11-50 Solutions to the Multicollinearity Problem

- Drop a collinear variable from the regression
- Change the sampling plan to include elements outside the multicollinearity range
- Transformations of variables
- Ridge regression

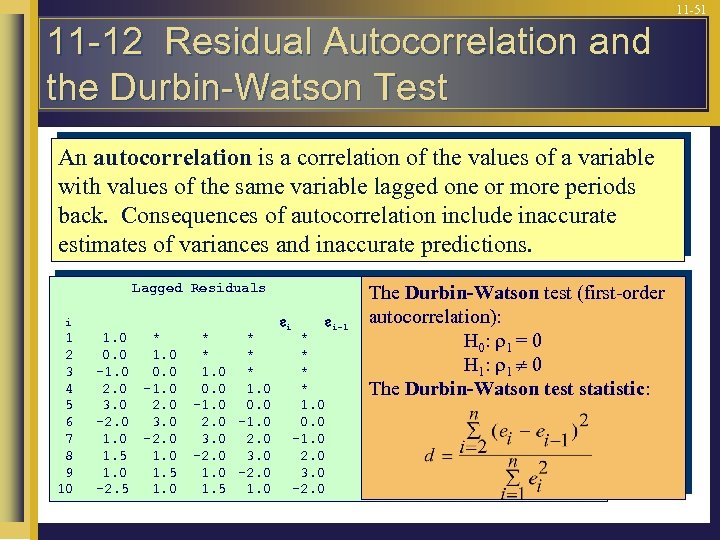

11-51 11-12 Residual Autocorrelation and the Durbin-Watson Test

An autocorrelation is a correlation of the values of a variable with values of the same variable lagged one or more periods back. Consequences of autocorrelation include inaccurate estimates of variances and inaccurate predictions.

Lagged Residuals

| i | $e_i$ | $e_{i-1}$ | $e_{i-2}$ | $e_{i-3}$ | $e_{i-4}$ |
|---|---|---|---|---|---|
| 1 | 1.0 | * | * | * | * |
| 2 | 0.0 | 1.0 | * | * | * |
| 3 | -1.0 | 0.0 | 1.0 | * | * |
| 4 | 2.0 | -1.0 | 0.0 | 1.0 | * |
| 5 | 3.0 | 2.0 | -1.0 | 0.0 | 1.0 |
| 6 | -2.0 | 3.0 | 2.0 | -1.0 | 0.0 |
| 7 | 1.0 | -2.0 | 3.0 | 2.0 | -1.0 |
| 8 | 1.5 | 1.0 | -2.0 | 3.0 | 2.0 |
| 9 | 1.0 | 1.5 | 1.0 | -2.0 | 3.0 |
| 10 | -2.5 | 1.0 | 1.5 | 1.0 | -2.0 |

The Durbin-Watson test (first-order autocorrelation):

$H_0$: $\rho_1 = 0$

$H_1$: $\rho_1 \neq 0$

The Durbin-Watson test statistic:
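The Durbin-Watson statistic is conventionally defined as $d = \sum_{i=2}^{n} (e_i - e_{i-1})^2 / \sum_{i=1}^{n} e_i^2$. As an illustration, the sketch below applies that definition to the ten residuals of the lagged-residuals table on this slide (values as read from the extracted slide text, so treat them as illustrative).

```python
# Residuals e_1 .. e_10 as read from the lagged-residuals table
residuals = [1.0, 0.0, -1.0, 2.0, 3.0, -2.0, 1.0, 1.5, 1.0, -2.5]


def durbin_watson(e):
    """Sum of squared successive differences over the sum of squared residuals."""
    numerator = sum((e[i] - e[i - 1]) ** 2 for i in range(1, len(e)))
    denominator = sum(v ** 2 for v in e)
    return numerator / denominator


d = durbin_watson(residuals)
print(d)
```

The statistic always lies between 0 and 4. Values near 2 suggest no first-order autocorrelation, values near 0 suggest positive autocorrelation, and values near 4 suggest negative autocorrelation.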

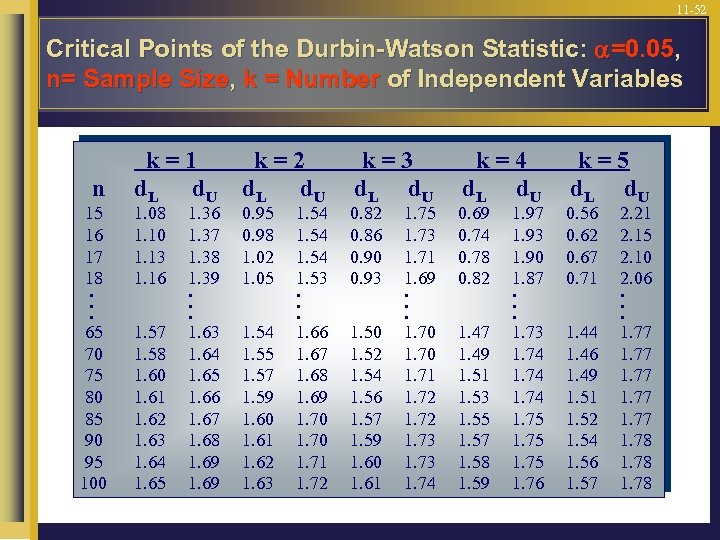

11-52 Critical Points of the Durbin-Watson Statistic: $\alpha = 0.05$, n = Sample Size, k = Number of Independent Variables

| n | k=1 dL | k=1 dU | k=2 dL | k=2 dU | k=3 dL | k=3 dU | k=4 dL | k=4 dU | k=5 dL | k=5 dU |
|---|---|---|---|---|---|---|---|---|---|---|
| 15 | 1.08 | 1.36 | 0.95 | 1.54 | 0.82 | 1.75 | 0.69 | 1.97 | 0.56 | 2.21 |
| 16 | 1.10 | 1.37 | 0.98 | 1.54 | 0.86 | 1.73 | 0.74 | 1.93 | 0.62 | 2.15 |
| 17 | 1.13 | 1.38 | 1.02 | 1.54 | 0.90 | 1.71 | 0.78 | 1.90 | 0.67 | 2.10 |
| 18 | 1.16 | 1.39 | 1.05 | 1.53 | 0.93 | 1.69 | 0.82 | 1.87 | 0.71 | 2.06 |
| ... | ... | ... | ... | ... | ... | ... | ... | ... | ... | ... |
| 65 | 1.57 | 1.63 | 1.54 | 1.66 | 1.50 | 1.70 | 1.47 | 1.73 | 1.44 | 1.77 |
| 70 | 1.58 | 1.64 | 1.55 | 1.67 | 1.52 | 1.70 | 1.49 | 1.74 | 1.46 | 1.77 |
| 75 | 1.60 | 1.65 | 1.57 | 1.68 | 1.54 | 1.71 | 1.51 | 1.74 | 1.49 | 1.77 |
| 80 | 1.61 | 1.66 | 1.59 | 1.69 | 1.56 | 1.72 | 1.53 | 1.74 | 1.51 | 1.77 |
| 85 | 1.62 | 1.67 | 1.60 | 1.70 | 1.57 | 1.72 | 1.55 | 1.75 | 1.52 | 1.77 |
| 90 | 1.63 | 1.68 | 1.61 | 1.70 | 1.59 | 1.73 | 1.57 | 1.75 | 1.54 | 1.78 |
| 95 | 1.64 | 1.69 | 1.62 | 1.71 | 1.60 | 1.73 | 1.58 | 1.75 | 1.56 | 1.78 |
| 100 | 1.65 | 1.69 | 1.63 | 1.72 | 1.61 | 1.74 | 1.59 | 1.76 | 1.57 | 1.78 |

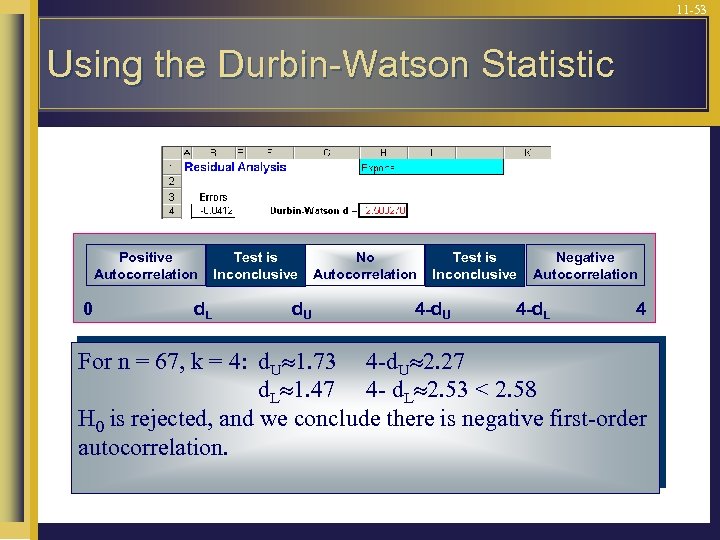

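11-53 Using the Durbin-Watson Statistic

| Range of the statistic | Conclusion |
|---|---|
| 0 to $d_L$ | Positive autocorrelation |
| $d_L$ to $d_U$ | Test is inconclusive |
| $d_U$ to $4 - d_U$ | No autocorrelation |
| $4 - d_U$ to $4 - d_L$ | Test is inconclusive |
| $4 - d_L$ to 4 | Negative autocorrelation |

For n = 67, k = 4: $d_U \approx 1.73$, $4 - d_U \approx 2.27$, $d_L \approx 1.47$, and $4 - d_L \approx 2.53 < 2.58$. $H_0$ is rejected, and we conclude there is negative first-order autocorrelation.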
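The five regions above translate into a simple decision function. This is a sketch: how to treat a statistic that falls exactly on a boundary is a choice made here (boundaries are assigned to the inconclusive regions), and 2.58 is taken to be the computed statistic from the slide's example.

```python
def dw_decision(d, d_l, d_u):
    """Classify a Durbin-Watson statistic d given critical points d_L and d_U."""
    if d < d_l:
        return "positive autocorrelation"
    if d <= d_u:
        return "inconclusive"
    if d < 4 - d_u:
        return "no autocorrelation"
    if d <= 4 - d_l:
        return "inconclusive"
    return "negative autocorrelation"


# Slide example: n = 67, k = 4, so d_L is about 1.47 and d_U about 1.73
result = dw_decision(2.58, d_l=1.47, d_u=1.73)
print(result)
```

Because 2.58 exceeds $4 - d_L \approx 2.53$, the function returns the same conclusion as the slide.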

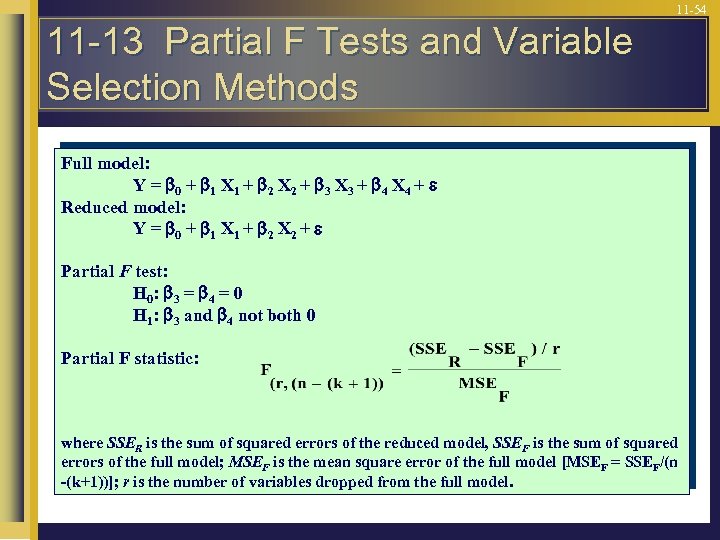

11-54 11-13 Partial F Tests and Variable Selection Methods

Full model: $Y = \beta_0 + \beta_1 X_1 + \beta_2 X_2 + \beta_3 X_3 + \beta_4 X_4 + \varepsilon$

Reduced model: $Y = \beta_0 + \beta_1 X_1 + \beta_2 X_2 + \varepsilon$

Partial F test:

$H_0$: $\beta_3 = \beta_4 = 0$

$H_1$: $\beta_3$ and $\beta_4$ not both 0

Partial F statistic:

$$F_{(r,\; n-(k+1))} = \frac{(SSE_R - SSE_F)/r}{MSE_F}$$

where $SSE_R$ is the sum of squared errors of the reduced model; $SSE_F$ is the sum of squared errors of the full model; $MSE_F$ is the mean square error of the full model [$MSE_F = SSE_F/(n-(k+1))$]; and r is the number of variables dropped from the full model.
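The quantities named on this slide combine as follows: the drop in the error sum of squares per dropped variable, divided by the mean square error of the full model. In the sketch below the sums of squares and the sample size are made-up numbers chosen only to make the arithmetic easy to follow; they do not come from the textbook.

```python
def partial_f(sse_reduced, sse_full, n, k, r):
    """Partial F statistic with r and n - (k + 1) degrees of freedom.

    n: sample size, k: number of variables in the full model,
    r: number of variables dropped to form the reduced model.
    """
    mse_full = sse_full / (n - (k + 1))
    return ((sse_reduced - sse_full) / r) / mse_full


# Hypothetical values: full model with k = 4 variables, 2 of them dropped
f_partial = partial_f(sse_reduced=120.0, sse_full=100.0, n=25, k=4, r=2)
print(f_partial)
```

The result would be compared with the critical point of the F distribution with r and n - (k + 1) degrees of freedom (here 2 and 20).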

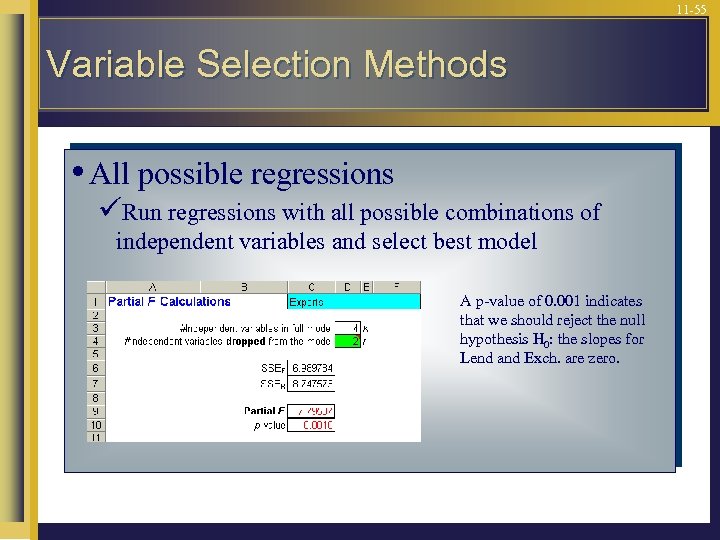

11-55 Variable Selection Methods

- All possible regressions
  - Run regressions with all possible combinations of independent variables and select the best model

A p-value of 0.001 indicates that we should reject the null hypothesis $H_0$: the slopes for Lend and Exch. are zero.

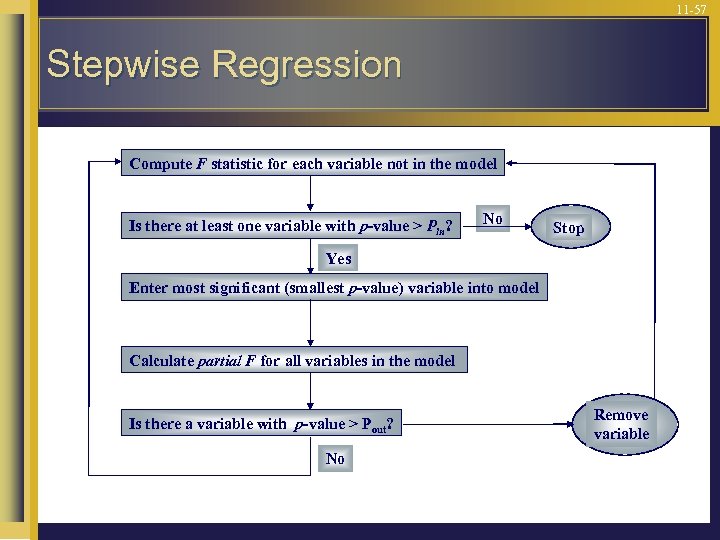

11-56 Variable Selection Methods

- Stepwise procedures
  - Forward selection: add one variable at a time to the model, on the basis of its F statistic
  - Backward elimination: remove one variable at a time, on the basis of its F statistic
  - Stepwise regression: adds variables to the model and subtracts variables from the model, on the basis of the F statistic

11-57 Stepwise Regression

1. Compute the F statistic for each variable not in the model.
2. Is there at least one variable with p-value < $P_{in}$? If no, stop. If yes, enter the most significant (smallest p-value) variable into the model.
3. Calculate the partial F for all variables in the model.
4. Is there a variable with p-value > $P_{out}$? If yes, remove that variable. In either case, return to step 1.

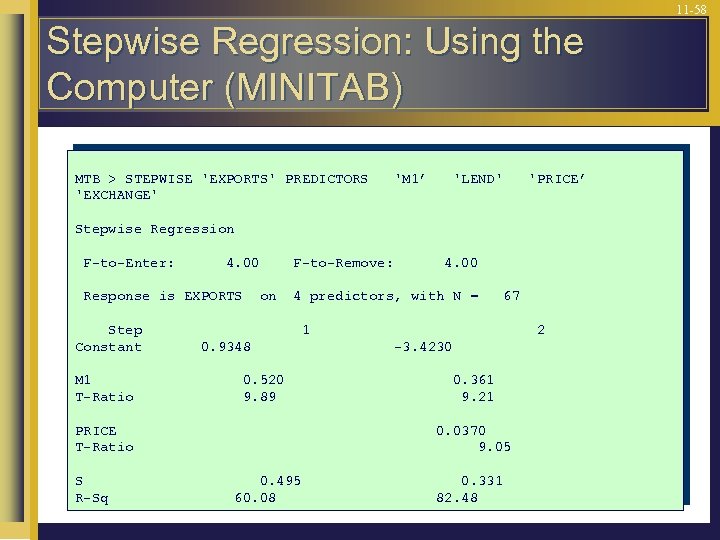

11-58 Stepwise Regression: Using the Computer (MINITAB)

MTB > STEPWISE 'EXPORTS' PREDICTORS 'EXCHANGE' 'M1' 'LEND' 'PRICE'

Stepwise Regression

F-to-Enter: 4.00, F-to-Remove: 4.00

Response is EXPORTS on 4 predictors, with N = 67

| Step | 1 | 2 |
|---|---|---|
| Constant | 0.9348 | -3.4230 |
| M1 | 0.520 | 0.361 |
| T-Ratio | 9.89 | 9.21 |
| PRICE | | 0.0370 |
| T-Ratio | | 9.05 |
| S | 0.495 | 0.331 |
| R-Sq | 60.08 | 82.48 |

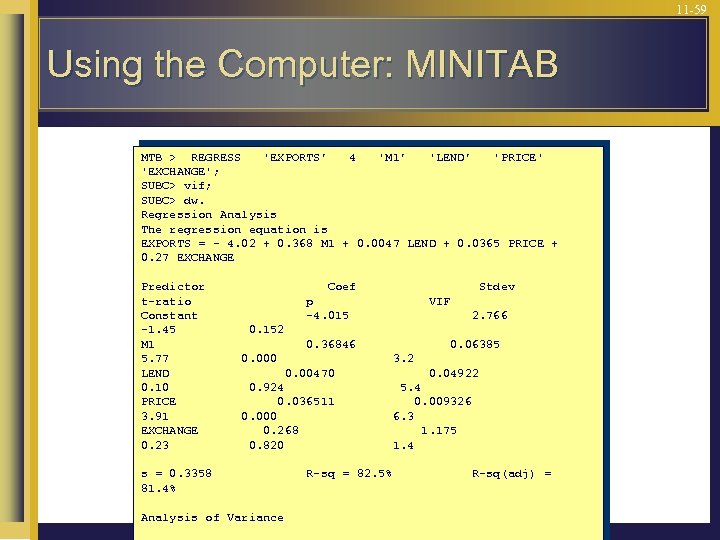

11-59 Using the Computer: MINITAB

MTB > REGRESS 'EXPORTS' 4 'M1' 'LEND' 'PRICE' 'EXCHANGE';

SUBC> vif;

SUBC> dw.

Regression Analysis

The regression equation is

EXPORTS = - 4.02 + 0.368 M1 + 0.0047 LEND + 0.0365 PRICE + 0.27 EXCHANGE

| Predictor | Coef | Stdev | t-ratio | p | VIF |
|---|---|---|---|---|---|
| Constant | -4.015 | 2.766 | -1.45 | 0.152 | |
| M1 | 0.36846 | 0.06385 | 5.77 | 0.000 | 3.2 |
| LEND | 0.00470 | 0.04922 | 0.10 | 0.924 | 5.4 |
| PRICE | 0.036511 | 0.009326 | 3.91 | 0.000 | 6.3 |
| EXCHANGE | 0.268 | 1.175 | 0.23 | 0.820 | 1.4 |

s = 0.3358, R-sq = 82.5%, R-sq(adj) = 81.4%

Analysis of Variance

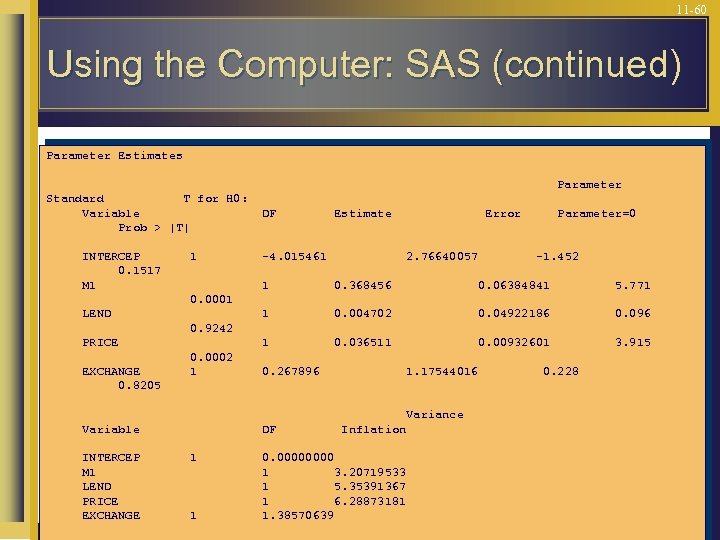

11-60 Using the Computer: SAS (continued)

Parameter Estimates

| Variable | DF | Parameter Estimate | Standard Error | T for H0: Parameter=0 | Prob > \|T\| | Variance Inflation |
|---|---|---|---|---|---|---|
| INTERCEP | 1 | -4.015461 | 2.76640057 | -1.452 | 0.1517 | 0.0000 |
| M1 | 1 | 0.368456 | 0.06384841 | 5.771 | 0.0001 | 3.20719533 |
| LEND | 1 | 0.004702 | 0.04922186 | 0.096 | 0.9242 | 5.35391367 |
| PRICE | 1 | 0.036511 | 0.00932601 | 3.915 | 0.0002 | 6.28873181 |
| EXCHANGE | 1 | 0.267896 | 1.17544016 | 0.228 | 0.8205 | 1.38570639 |
