- Number of slides: 50

VoIP Testing: A How-to Session for Performance and Functional Test Methodologies

Chris Bajorek, Director, CT Labs

Before We Start

- Every year we consult with many companies, helping them perform many different types of VoIP-oriented tests
- This provides a unique industry perspective on the market readiness of a wide range of VoIP products
- I’m pleased to have this opportunity to share our test experiences with you today

VoIP Products by Market Area

- Residential (Voice over Broadband)
  - Analog terminal adapters, VoIP softphones, residential routers
- Enterprise
  - IP PBXs, IP contact centers, VoIP phones and softphones, firewalls/ALGs, intrusion prevention devices, media servers (conferencing, voice mail, IVR)
- Next-Gen Network Carriers and Service Providers
  - Session border controllers, softswitches, media servers, proxies, media gateways, VQ enhancement processors

Building VoIP Networks: IMS is here and it needs testing

- Key elements of IMS:
  - Enables innovative new applications
  - High levels of network complexity
  - Modules from multiple vendors must peacefully coexist
  - High rate of carrier adoption
  - Global deployments
  - Standards based
  - Exploits strengths of IP+SIP

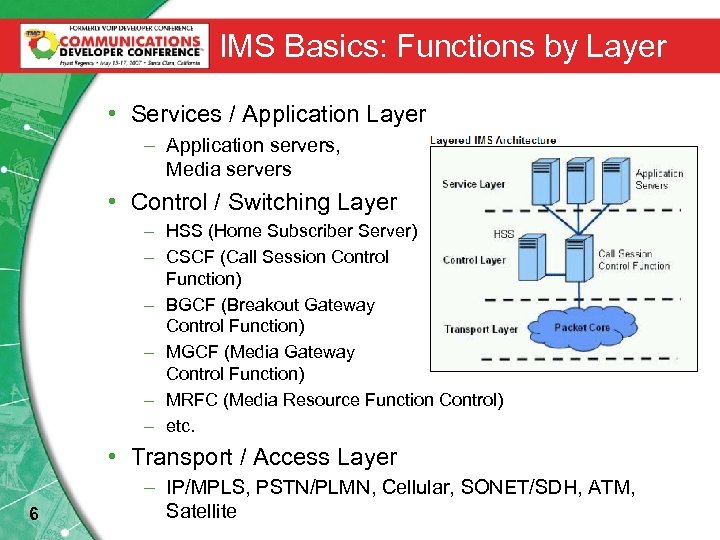

IMS Basics: Functions by Layer

- Services / Application Layer
  - Application servers, media servers
- Control / Switching Layer
  - HSS (Home Subscriber Server)
  - CSCF (Call Session Control Function)
  - BGCF (Breakout Gateway Control Function)
  - MGCF (Media Gateway Control Function)
  - MRFC (Media Resource Function Controller)
  - etc.
- Transport / Access Layer
  - IP/MPLS, PSTN/PLMN, Cellular, SONET/SDH, ATM, Satellite

Risks of Inadequate Testing

- From the CT Labs VoIP project files:
  - VoIP terminal adapters that behave unreliably and “emulate” an occasional bad Internet connection
  - IP PBXs that drop calls when subjected to only certain types of call loads
  - VoIP soft clients that distort the caller audio
  - High-end enterprise firewalls that grind to a standstill under certain denial-of-service attacks
  - Session border controllers that degrade voice quality at traffic levels below rated maximums

Test Automation: Reaping the Benefits of Shorter Test Cycles

Why you should consider using it sooner, not later

Test Automation: The Benefits

- Tightly controlled test environment
  - All aspects of the test setup can be controlled and coordinated by testing scripts
- Repeatable results
  - Key to resolving issues that arise during testing
  - Includes the ability to exactly reproduce product settings and test conditions
- Faster test execution
  - Weeks of manual testing can literally be executed in hours
- Increased accuracy of results reporting
- All of the above resulting in:
  - Lower testing costs over the product’s lifetime
  - Greater product and delivered-service reliability
  - Fewer field failures, fewer customer-reported issues

Challenges Using Live Callers in Tests

- The exact timing and sequence of caller actions is not synchronized or repeatable
- Ability to distinguish and describe nuances of results varies widely from person to person
  - i.e. reliability of reported results can be low
- Ability to correlate assessment of voice quality and anomalies across multiple listeners is typically poor
  - Unless you just happen to know how to run ITU-T P.800 MOS tests
- Call arrival profiles are difficult to control when using large numbers of callers for “load tests”
- In other words, don’t expect more than coarse results

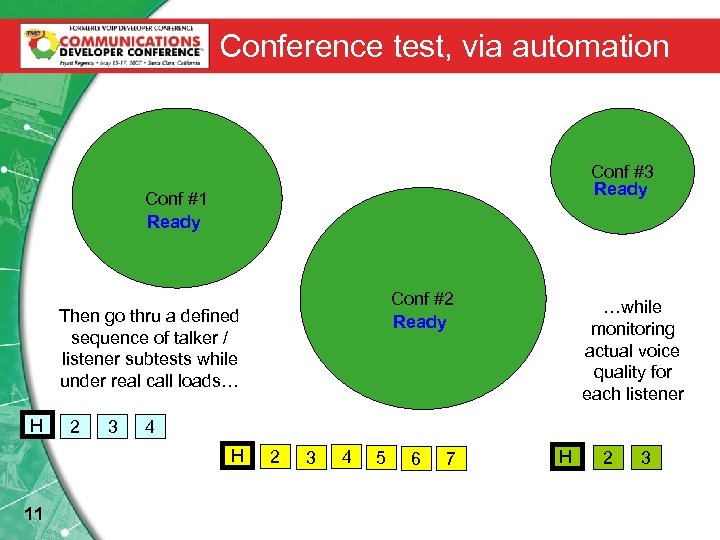

Conference test, via automation

[Diagram: three conferences (Conf #1, Conf #2, Conf #3) each reach the Ready state; each has a host (H) and numbered participants.]

- Then go through a defined sequence of talker/listener subtests while under real call loads…
- …while monitoring actual voice quality for each listener

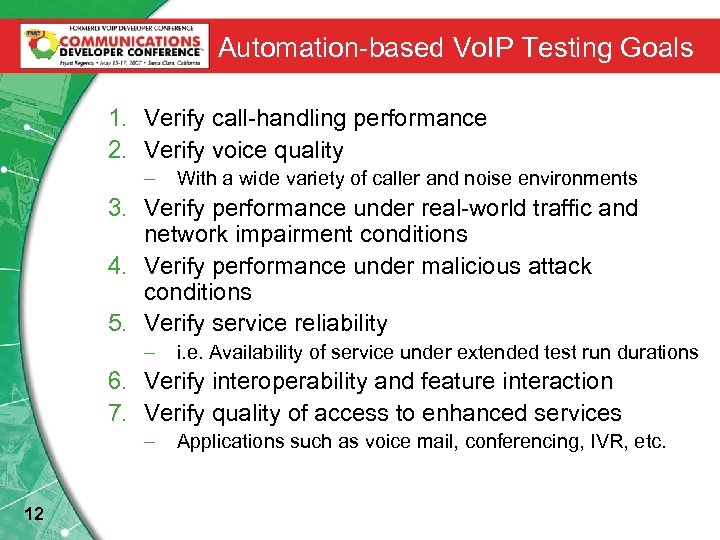

Automation-based VoIP Testing Goals

1. Verify call-handling performance
2. Verify voice quality
   - With a wide variety of caller and noise environments
3. Verify performance under real-world traffic and network impairment conditions
4. Verify performance under malicious attack conditions
5. Verify service reliability
   - i.e. availability of service under extended test run durations
6. Verify interoperability and feature interaction
7. Verify quality of access to enhanced services
   - Applications such as voice mail, conferencing, IVR, etc.

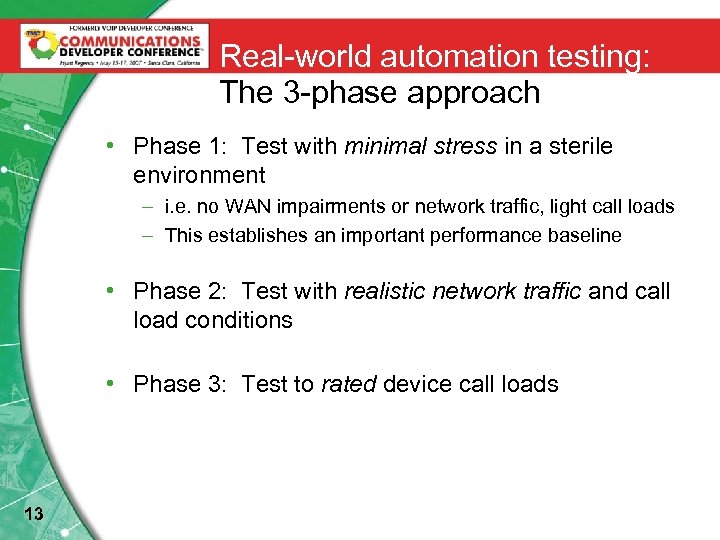

Real-world automation testing: The 3-phase approach

- Phase 1: Test with minimal stress in a sterile environment
  - i.e. no WAN impairments or network traffic, light call loads
  - This establishes an important performance baseline
- Phase 2: Test with realistic network traffic and call load conditions
- Phase 3: Test to rated device call loads

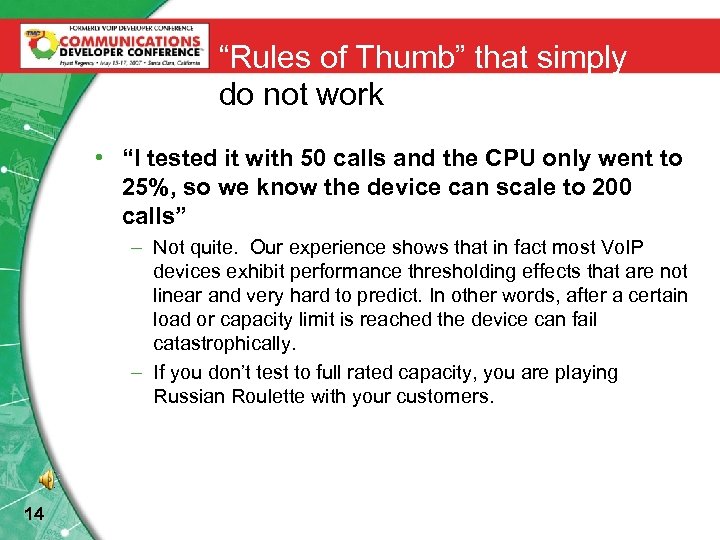

“Rules of Thumb” that simply do not work

- “I tested it with 50 calls and the CPU only went to 25%, so we know the device can scale to 200 calls”
  - Not quite. Our experience shows that most VoIP devices exhibit performance thresholding effects that are not linear and are very hard to predict. In other words, after a certain load or capacity limit is reached, the device can fail catastrophically.
  - If you don’t test to full rated capacity, you are playing Russian roulette with your customers.

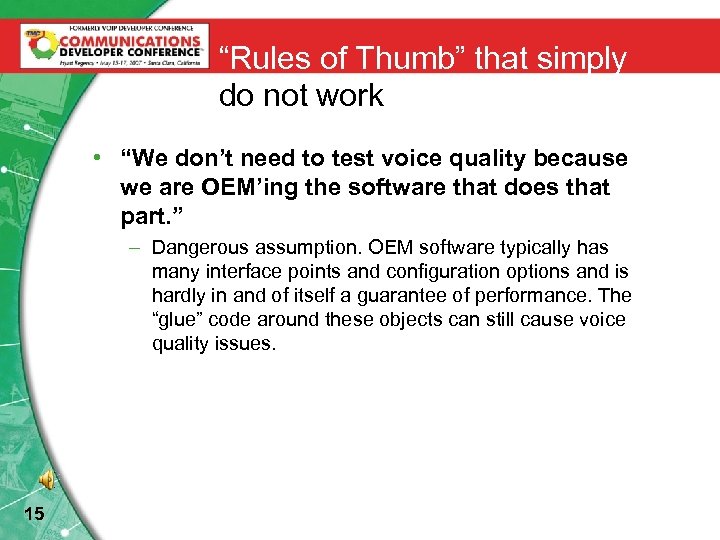

“Rules of Thumb” that simply do not work

- “We don’t need to test voice quality because we are OEM’ing the software that does that part.”
  - Dangerous assumption. OEM software typically has many interface points and configuration options and is hardly, in and of itself, a guarantee of performance. The “glue” code around these objects can still cause voice quality issues.

Emulation of Network Impairments

- Perfectly clean networks are not the real world
- Real networks corrupt the flow of packets in the following time-varying ways:
  - Packet loss (especially burst loss), packet duplication, and out-of-order packets
  - Latency and jitter
  - Restricted bandwidth
- If you test while inducing these conditions, your product or service will be the cause of far fewer post-deployment issues
- You can perform both static and dynamic emulation of impairment conditions
  - Both have value depending on the nature of the VoIP device
  - e.g. an IP phone that renegotiates codec type or codec mode when the network degrades in mid-call
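To make the impairment types above concrete, here is a minimal Python sketch that applies burst loss, duplication, latency, and jitter to a simulated 20 ms packet flow. It is an illustration only, not a CT Labs tool: the `Impairment` fields, the two-state burst-loss model, and all default values are assumptions chosen for the example.

```python
import random
from dataclasses import dataclass


@dataclass
class Impairment:
    """Hypothetical impairment profile; field names and defaults are illustrative."""
    p_good_to_bad: float = 0.02   # chance per packet of entering a loss burst
    p_bad_to_good: float = 0.30   # chance per packet of leaving a loss burst
    loss_in_bad: float = 0.80     # loss probability while inside a burst
    base_delay_ms: float = 40.0   # fixed one-way latency
    jitter_ms: float = 15.0       # maximum extra delay added per packet
    duplicate_prob: float = 0.01  # chance a delivered packet is duplicated


def impair(num_packets, profile, interval_ms=20.0, seed=0):
    """Return (arrival_ms, seq) pairs, sorted by arrival time."""
    rng = random.Random(seed)
    in_burst = False
    arrivals = []
    for seq in range(num_packets):
        # Two-state (Gilbert-Elliott style) model, so losses cluster in bursts.
        if in_burst:
            if rng.random() < profile.p_bad_to_good:
                in_burst = False
        elif rng.random() < profile.p_good_to_bad:
            in_burst = True
        if in_burst and rng.random() < profile.loss_in_bad:
            continue  # packet dropped
        sent_ms = seq * interval_ms
        copies = 2 if rng.random() < profile.duplicate_prob else 1
        for _ in range(copies):
            delay = profile.base_delay_ms + rng.uniform(0.0, profile.jitter_ms)
            arrivals.append((sent_ms + delay, seq))
    # Sorting by arrival time exposes reordering when jitter exceeds the interval.
    arrivals.sort()
    return arrivals


def summarize(arrivals, num_packets):
    """Count lost, duplicated, and out-of-order packets in an arrival list."""
    seqs = [seq for _, seq in arrivals]
    received = set(seqs)
    reordered = sum(1 for a, b in zip(seqs, seqs[1:]) if b < a)
    return {
        "lost": num_packets - len(received),
        "duplicates": len(seqs) - len(received),
        "reordered": reordered,
    }


if __name__ == "__main__":
    print(summarize(impair(3000, Impairment()), 3000))
```

A static test would hold one `Impairment` profile for the whole run; a dynamic test would swap profiles mid-call.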

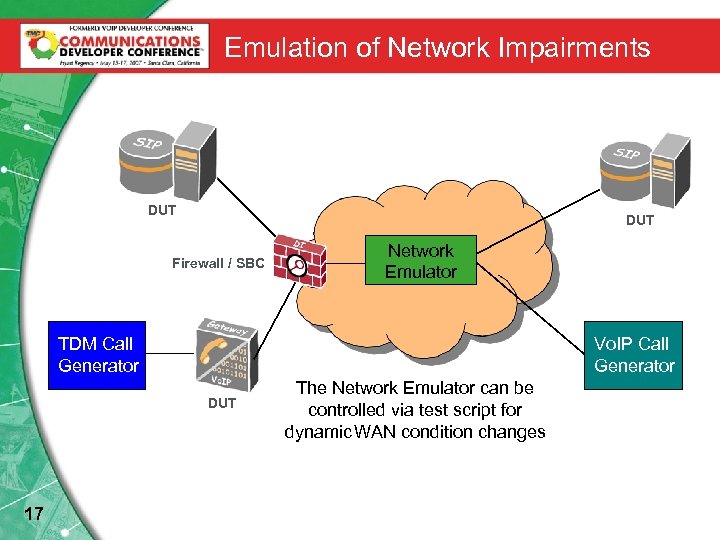

Emulation of Network Impairments

[Diagram labels: TDM Call Generator, VoIP Call Generator, DUT, Firewall / SBC, Network Emulator, DUT]

The Network Emulator can be controlled via test script for dynamic WAN condition changes
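A script-driven schedule of WAN changes might look like the sketch below. The slides do not name a particular emulator; this example assumes a Linux box using `tc` with the `netem` queueing discipline, and it only builds the command strings rather than running them. The interface name `eth1`, the `WanStep` type, and the schedule values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WanStep:
    """One point in a hypothetical WAN-condition schedule."""
    at_s: int          # seconds after test start
    delay_ms: int      # one-way latency
    jitter_ms: int     # delay variation
    loss_pct: float    # random packet loss percentage


def netem_command(step, dev="eth1"):
    """Build (but do not run) a tc/netem command for one schedule step."""
    return (
        f"tc qdisc replace dev {dev} root netem "
        f"delay {step.delay_ms}ms {step.jitter_ms}ms loss {step.loss_pct}%"
    )


def build_schedule(steps, dev="eth1"):
    """Return (time offset, command) pairs ordered by time."""
    ordered = sorted(steps, key=lambda s: s.at_s)
    return [(s.at_s, netem_command(s, dev)) for s in ordered]


# Example: a clean link that degrades mid-call and then recovers.
SCHEDULE = [
    WanStep(at_s=0, delay_ms=20, jitter_ms=2, loss_pct=0.0),
    WanStep(at_s=30, delay_ms=80, jitter_ms=20, loss_pct=1.5),
    WanStep(at_s=60, delay_ms=20, jitter_ms=2, loss_pct=0.0),
]

for at_s, cmd in build_schedule(SCHEDULE):
    print(f"t+{at_s:>3}s  {cmd}")
```

A test script would issue each command at its offset while calls are in progress, which is one way to exercise mid-call codec renegotiation.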

Adding “Internet Mix” Network Traffic

- The goal: see the DUT’s impact on VoIP calls when subjected to network traffic at rated capacity
- Product examples:
  - Firewalls, intrusion prevention devices, IP phones with integrated switch ports, session border controllers, etc.
- What we do: generate real session-based “Internet Mix” (IMIX) traffic and measure throughput performance of VoIP calls and IMIX traffic
  - e.g. HTTP, FTP, P2P, SMTP, POP3, etc.
  - Open source tool: “D-ITG” http://www.grid.unina.it/software/ITG/
  - Notable vendor: Shenick (www.shenick.com)

Voice and Video Quality Assessment: Automated Testing Techniques

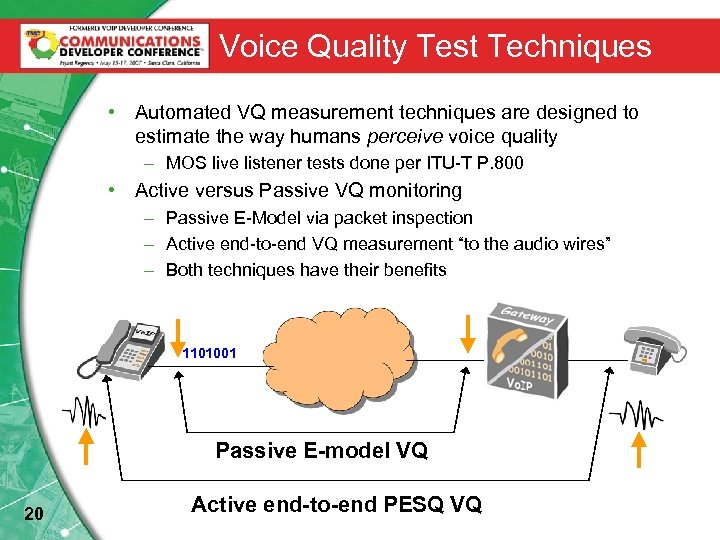

Voice Quality Test Techniques

- Automated VQ measurement techniques are designed to estimate the way humans perceive voice quality
  - MOS live listener tests done per ITU-T P.800
- Active versus passive VQ monitoring
  - Passive E-Model via packet inspection
  - Active end-to-end VQ measurement “to the audio wires”
  - Both techniques have their benefits

[Diagram: passive E-Model VQ (packet inspection) versus active end-to-end PESQ VQ]

Active vs Passive VQ Testing

- Active voice quality testing
  - Involves evaluation of “received” audio signals as compared to known references
    - i.e. you drive real 2-way calls through the VoIP network
  - PESQ, ITU-T P.862 (2001)
    - High correlation with standard MOS-LQ subjective tests
  - Benefits: more accurate, uses mature standards (PESQ) for automated quality assessment
  - Negatives: consumes VoIP network resources

Active vs Passive VQ Testing

- Passive voice quality testing
  - Involves passive evaluation of call-based packet flows
    - ITU-T G.107 E-Model
    - Can return estimated MOS-LQ and MOS-CQ scores (listening versus conversational)
  - Benefits: can be embedded into products and test equipment with a relatively low resource footprint
  - Negatives: ignores (or models) VoIP endpoint-specific behaviors to network conditions. Vendor implementations can vary.
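The E-Model produces a transmission rating factor R, which is then mapped to an estimated MOS. The sketch below shows only that final mapping step, using the R-to-MOS conversion published with ITU-T G.107. Computing R itself from measured loss, delay, and codec parameters is not shown, and the function name is an assumption.

```python
def r_to_mos(r):
    """Map an E-Model rating factor R to an estimated MOS (G.107 conversion)."""
    if r <= 0:
        return 1.0
    if r >= 100:
        return 4.5
    return 1.0 + 0.035 * r + r * (r - 60.0) * (100.0 - r) * 7e-6


if __name__ == "__main__":
    # 93.2 is the G.107 default-parameter rating; lower values model impaired calls.
    for r in (93.2, 80.0, 70.0, 50.0):
        print(f"R = {r:5.1f}  ->  estimated MOS = {r_to_mos(r):.2f}")
```

Because the score is derived from packet-level measurements and a model of the endpoint, it can miss distortion introduced inside a specific phone or client, which is the limitation noted above.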

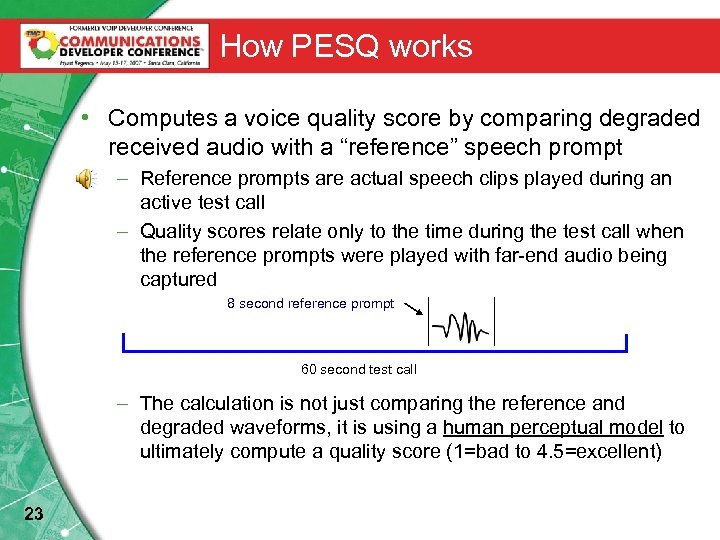

How PESQ works

- Computes a voice quality score by comparing degraded received audio with a “reference” speech prompt
  - Reference prompts are actual speech clips played during an active test call
  - Quality scores relate only to the time during the test call when the reference prompts were played with far-end audio being captured
  - The calculation does not just compare the reference and degraded waveforms; it uses a human perceptual model to ultimately compute a quality score (1 = bad to 4.5 = excellent)

[Diagram: an 8-second reference prompt within a 60-second test call]

What PESQ VQ Testing is designed for

- PESQ is a way to quickly and cost-effectively estimate the effects of one-way speech distortion and noise on speech quality
- PESQ is “endpoint-agnostic”: it can be used for VoIP-to-VoIP calls, VoIP-to-PSTN calls, etc.
- Strengths
  - Provides excellent estimate of voice quality
  - Tests can be performed quickly
  - Tests are very repeatable

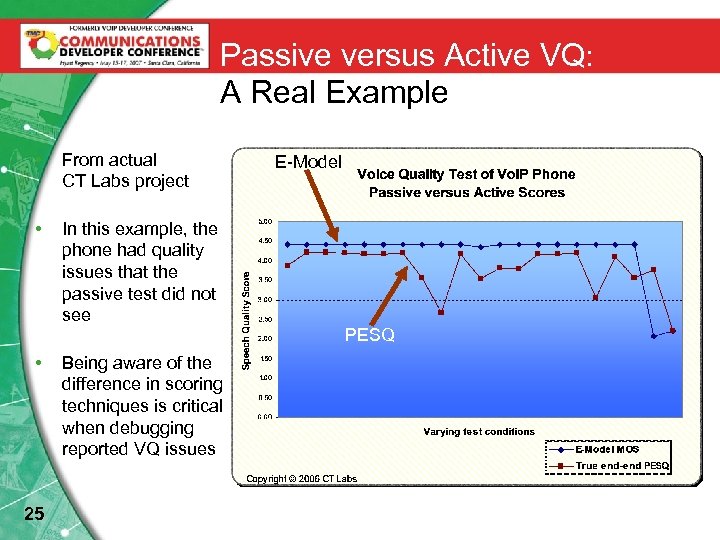

Passive versus Active VQ: A Real Example

- From an actual CT Labs project
- In this example, the phone had quality issues that the passive test did not see
- Being aware of the difference in scoring techniques is critical when debugging reported VQ issues

[Chart: E-Model versus PESQ scores]

Video Quality Test Techniques

- Automated video quality measurement techniques estimate the way humans perceive picture quality
  - Live viewer tests done per ITU-R BT.500
- Three classes of objective video quality algorithms
  - Full reference, partial reference, and zero reference
- Full reference techniques
  - PSNR (most used), VIM, SSIM. See ITU-T J.144.
  - Compute intensive, not useful for real-time measurements
  - Software suite available at: http://www.compression.ru
- Zero reference techniques
  - Best suited for in-service monitoring
  - Standards activity continues
  - Encompasses quality tests for picture, audio, multimedia, and the network’s ability to carry streams.
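As a small illustration of a full reference metric, the sketch below computes PSNR between a reference frame and a degraded frame, each given as a flat list of 8-bit luma samples. The flat-list representation and the function name are assumptions for the example; a real tool would read decoded video frames.

```python
import math


def psnr(reference, degraded, max_value=255):
    """Peak signal-to-noise ratio in dB between two equal-length sample lists."""
    if not reference or len(reference) != len(degraded):
        raise ValueError("frames must be non-empty and the same size")
    # Mean squared error over all samples.
    mse = sum((r - d) ** 2 for r, d in zip(reference, degraded)) / len(reference)
    if mse == 0:
        return math.inf  # identical frames
    return 10.0 * math.log10(max_value ** 2 / mse)


if __name__ == "__main__":
    ref = [100, 120, 140, 160]
    deg = [101, 119, 141, 159]  # each sample off by one
    print(f"PSNR = {psnr(ref, deg):.2f} dB")
```

The metric needs the original frame on hand for every comparison, which is why full reference methods fit lab testing better than in-service monitoring.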

Load and Stress Testing

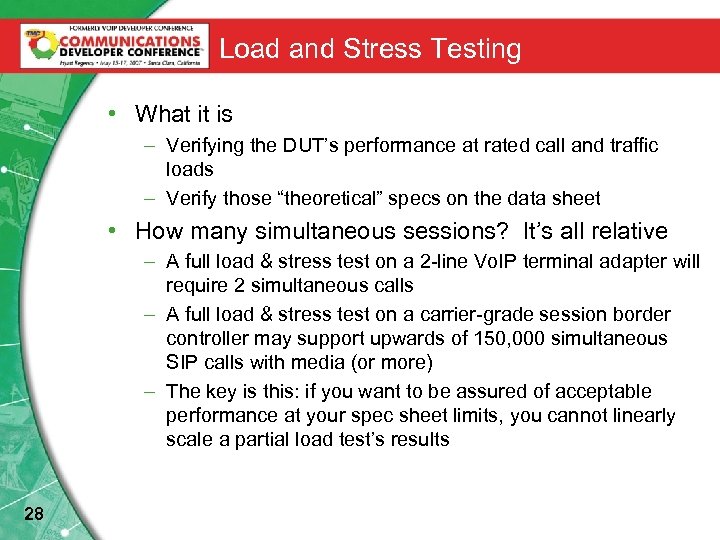

Load and Stress Testing

- What it is
  - Verifying the DUT’s performance at rated call and traffic loads
  - Verifying those “theoretical” specs on the data sheet
- How many simultaneous sessions? It’s all relative
  - A full load and stress test on a 2-line VoIP terminal adapter will require 2 simultaneous calls
  - A full load and stress test on a carrier-grade session border controller may require upwards of 150,000 simultaneous SIP calls with media (or more)
  - The key is this: if you want to be assured of acceptable performance at your spec sheet limits, you cannot linearly scale a partial load test’s results

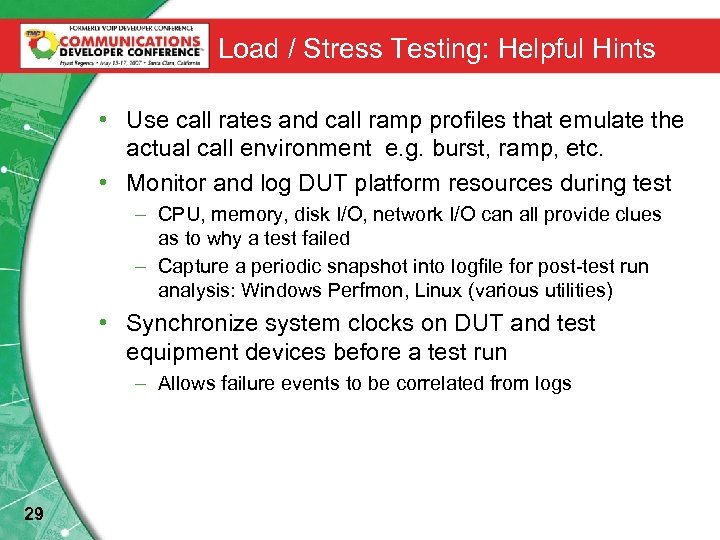

Load / Stress Testing: Helpful Hints

- Use call rates and call ramp profiles that emulate the actual call environment, e.g. burst, ramp, etc.
- Monitor and log DUT platform resources during the test
  - CPU, memory, disk I/O, and network I/O can all provide clues as to why a test failed
  - Capture a periodic snapshot into a logfile for post-test run analysis: Windows Perfmon, Linux (various utilities)
- Synchronize system clocks on the DUT and test equipment devices before a test run
  - Allows failure events to be correlated from logs
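The ramp and burst call profiles mentioned above can be described as a calls-per-second value for each second of the run. The following Python sketch generates both shapes and converts a profile into call start times. The function names and parameters are illustrative assumptions, not the interface of any particular call generator.

```python
def ramp_profile(start_cps, end_cps, duration_s):
    """Calls per second for each second, rising linearly from start to end."""
    if duration_s < 2:
        return [end_cps] * duration_s
    step = (end_cps - start_cps) / (duration_s - 1)
    return [start_cps + step * t for t in range(duration_s)]


def burst_profile(base_cps, burst_cps, duration_s, burst_every_s, burst_len_s):
    """Steady base rate with a burst at the start of every burst_every_s window."""
    return [
        burst_cps if (t % burst_every_s) < burst_len_s else base_cps
        for t in range(duration_s)
    ]


def call_start_times(profile):
    """Spread each second's calls evenly within that second."""
    times = []
    for second, cps in enumerate(profile):
        count = round(cps)
        times.extend(second + i / count for i in range(count))
    return times


if __name__ == "__main__":
    ramp = ramp_profile(start_cps=1, end_cps=5, duration_s=5)
    burst = burst_profile(base_cps=1, burst_cps=10, duration_s=6,
                          burst_every_s=3, burst_len_s=1)
    print("ramp :", ramp)
    print("burst:", burst)
    print("first ramp call starts:", call_start_times(ramp)[:5])
```

Logging the planned start times alongside DUT resource snapshots, on synchronized clocks, makes it easier to line up a failure with the load that was being offered at that moment.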

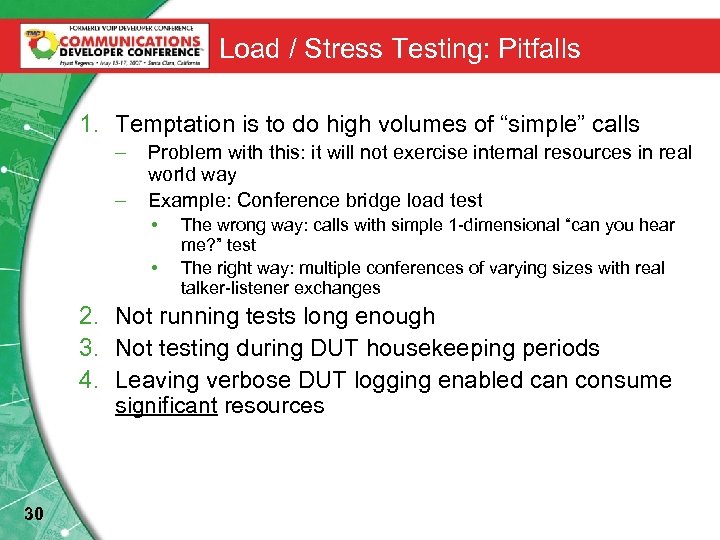

Load / Stress Testing: Pitfalls

1. The temptation is to do high volumes of “simple” calls
   - Problem with this: it will not exercise internal resources in a real-world way
   - Example: conference bridge load test
     - The wrong way: calls with a simple one-dimensional “can you hear me?” test
     - The right way: multiple conferences of varying sizes with real talker-listener exchanges
2. Not running tests long enough
3. Not testing during DUT housekeeping periods
4. Leaving verbose DUT logging enabled, which can consume significant resources

Functional Testing

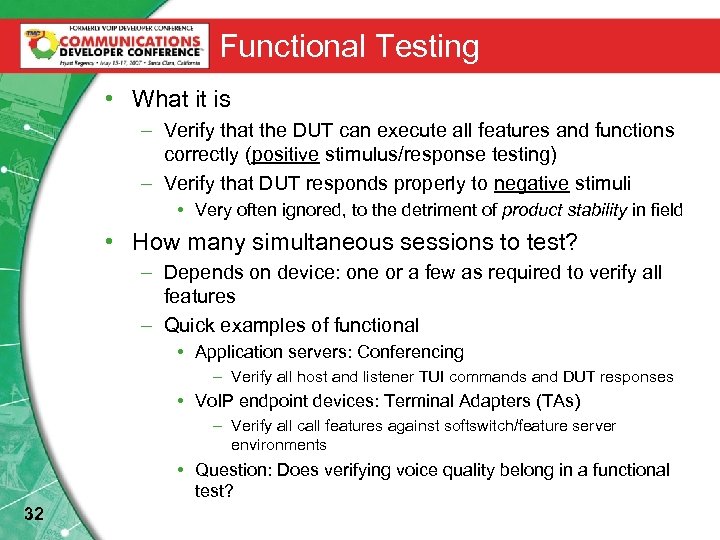

Functional Testing

- What it is
  - Verify that the DUT can execute all features and functions correctly (positive stimulus/response testing)
  - Verify that the DUT responds properly to negative stimuli
    - Very often ignored, to the detriment of product stability in the field
- How many simultaneous sessions to test?
  - Depends on the device: one or a few, as required to verify all features
- Quick examples of functional tests
  - Application servers: conferencing
    - Verify all host and listener TUI commands and DUT responses
  - VoIP endpoint devices: terminal adapters (TAs)
    - Verify all call features against softswitch/feature server environments
- Question: does verifying voice quality belong in a functional test?

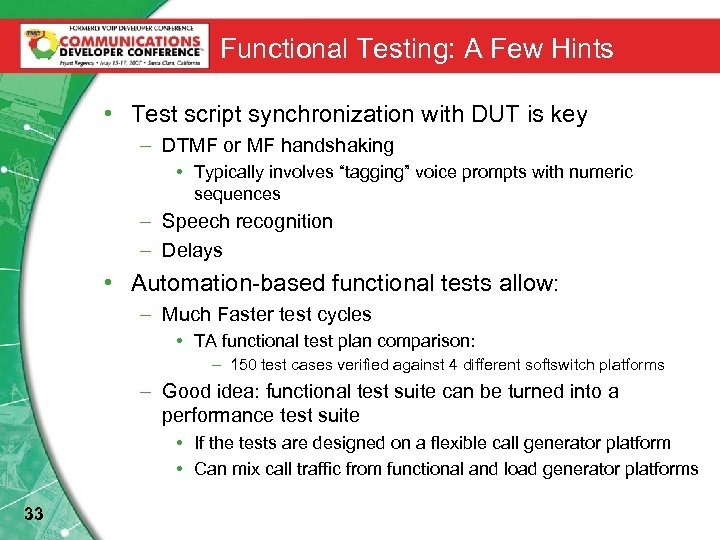

Functional Testing: A Few Hints

- Test script synchronization with the DUT is key
  - DTMF or MF handshaking
    - Typically involves “tagging” voice prompts with numeric sequences
  - Speech recognition
  - Delays
- Automation-based functional tests allow:
  - Much faster test cycles
    - TA functional test plan comparison: 150 test cases verified against 4 different softswitch platforms
  - Good idea: a functional test suite can be turned into a performance test suite
    - If the tests are designed on a flexible call generator platform
    - Can mix call traffic from functional and load generator platforms
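The DTMF handshaking idea above can be sketched as follows: each voice prompt is tagged with a short digit sequence, and the test script decodes that tag from the received audio to confirm which prompt the DUT played. The sketch generates DTMF tones and detects them with the Goertzel algorithm. The standard DTMF frequency pairs are used; everything else (function names, 50 ms tone length, 8 kHz sample rate, clean noise-free audio) is an assumption for illustration.

```python
import math

ROWS = (697, 770, 852, 941)        # DTMF low-group frequencies in Hz
COLS = (1209, 1336, 1477, 1633)    # DTMF high-group frequencies in Hz
KEYPAD = ("123A", "456B", "789C", "*0#D")


def dtmf_samples(digit, duration_s=0.05, rate=8000):
    """Synthesize one DTMF digit as a list of float samples."""
    if len(digit) != 1:
        raise ValueError("expected a single DTMF character")
    for r, row in enumerate(KEYPAD):
        c = row.find(digit)
        if c >= 0:
            f1, f2 = ROWS[r], COLS[c]
            break
    else:
        raise ValueError(f"not a DTMF digit: {digit!r}")
    n = int(duration_s * rate)
    return [
        0.5 * math.sin(2 * math.pi * f1 * i / rate)
        + 0.5 * math.sin(2 * math.pi * f2 * i / rate)
        for i in range(n)
    ]


def goertzel_power(samples, freq, rate=8000):
    """Signal power at one frequency, computed with the Goertzel recurrence."""
    coeff = 2.0 * math.cos(2.0 * math.pi * freq / rate)
    s_prev = s_prev2 = 0.0
    for x in samples:
        s = x + coeff * s_prev - s_prev2
        s_prev2, s_prev = s_prev, s
    return s_prev2 ** 2 + s_prev ** 2 - coeff * s_prev * s_prev2


def detect_digit(samples, rate=8000):
    """Pick the strongest row tone and the strongest column tone."""
    row = max(range(4), key=lambda i: goertzel_power(samples, ROWS[i], rate))
    col = max(range(4), key=lambda i: goertzel_power(samples, COLS[i], rate))
    return KEYPAD[row][col]


def decode_tag(tone_bursts):
    """Decode a prompt tag from a list of per-digit audio bursts."""
    return "".join(detect_digit(burst) for burst in tone_bursts)


if __name__ == "__main__":
    # Hypothetical prompt tag "407" appended to a voice prompt by the DUT.
    bursts = [dtmf_samples(d) for d in "407"]
    print("decoded prompt tag:", decode_tag(bursts))
```

A production detector would also need energy thresholds, twist checks, and silence detection to reject speech and noise; those are left out to keep the sketch short.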

Test Automation Setups

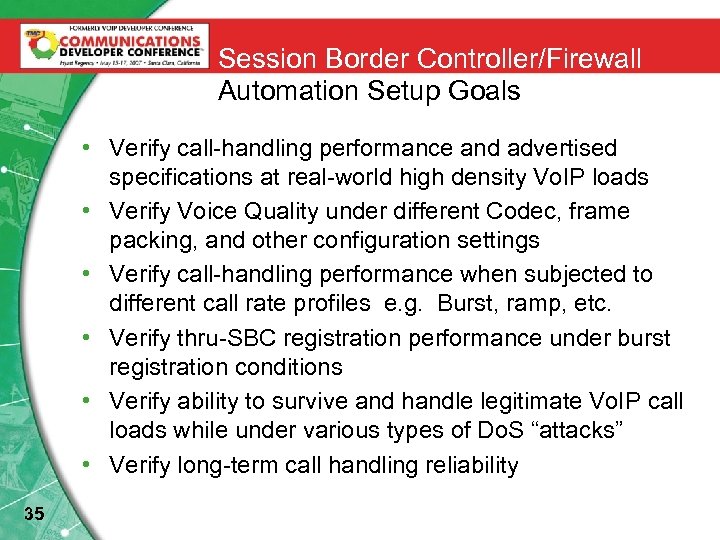

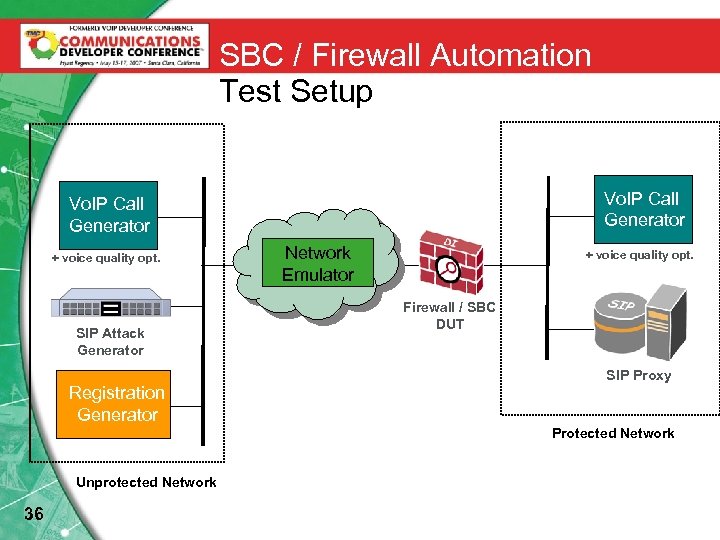

Session Border Controller/Firewall Automation Setup Goals

- Verify call-handling performance and advertised specifications at real-world high-density VoIP loads
- Verify voice quality under different codec, frame packing, and other configuration settings
- Verify call-handling performance when subjected to different call rate profiles, e.g. burst, ramp, etc.
- Verify thru-SBC registration performance under burst registration conditions
- Verify ability to survive and handle legitimate VoIP call loads while under various types of DoS “attacks”
- Verify long-term call handling reliability

SBC / Firewall Automation Test Setup

[Diagram labels: VoIP Call Generator (+ voice quality option), SIP Attack Generator, Registration Generator, Network Emulator, Firewall / SBC (DUT), SIP Proxy, Protected Network, Unprotected Network]

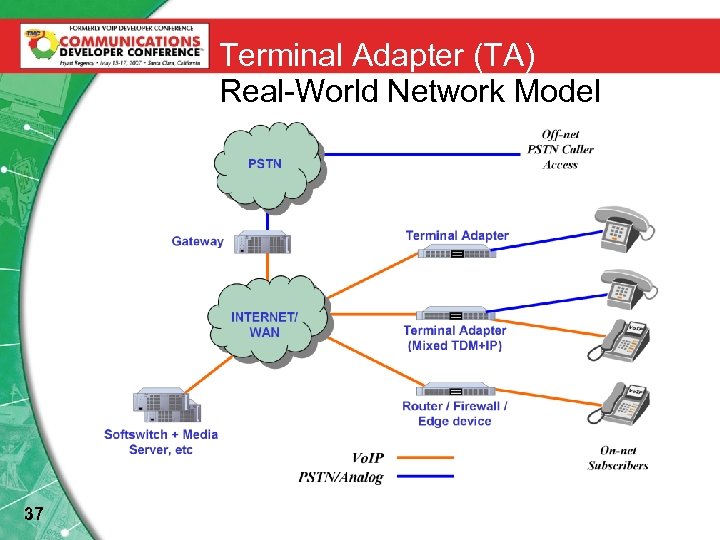

Terminal Adapter (TA) Real-World Network Model

[Diagram legend: VoIP, PSTN/Analog]

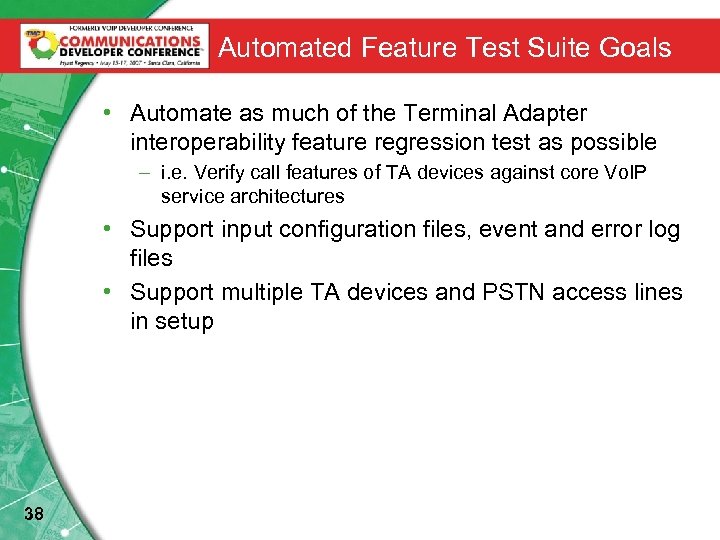

Automated Feature Test Suite Goals

- Automate as much of the Terminal Adapter interoperability feature regression test as possible
  - i.e. verify call features of TA devices against core VoIP service architectures
- Support input configuration files, event and error log files
- Support multiple TA devices and PSTN access lines in the setup

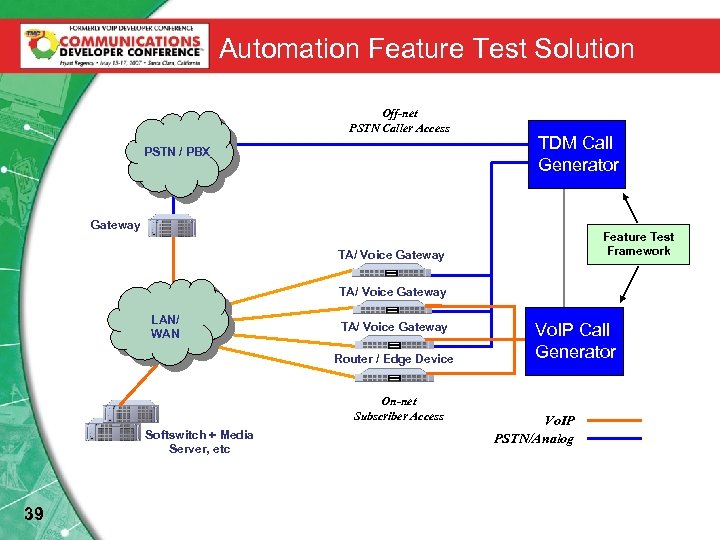

Automation Feature Test Solution

[Diagram labels: Off-net PSTN Caller Access, PSTN / PBX, TDM Call Generator, Gateway, Feature Test Framework, TA / Voice Gateway (two), LAN/WAN, Router / Edge Device, On-net Subscriber Access, Softswitch + Media Server etc., VoIP Call Generator. Legend: VoIP, PSTN/Analog]

Automation Feature Test Framework Details

- Supports 140+ feature tests
  - Including 2-way calls, 3-way calls, features including hold/park/transfer, 911/411, voice mail, plus voice quality checking
- Test run results captured in easily analyzed logs
- Custom reports are generated
- Individual test case scripts easily changed
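The general shape of such a framework (independent test case scripts, a shared configuration, logged results, and a generated report) can be sketched in a few lines of Python. This is a hypothetical outline only; it does not reflect the actual CT Labs framework, and `FeatureTest`, `run_suite`, `report`, and the example config keys are invented for illustration.

```python
import logging
from dataclasses import dataclass
from typing import Callable


@dataclass
class FeatureTest:
    """One feature test case: a name plus a callable that returns pass/fail."""
    name: str
    run: Callable[[dict], bool]   # receives the suite configuration


def run_suite(tests, config, log=None):
    """Run every test, log each outcome, and return {name: passed}."""
    log = log or logging.getLogger("feature-suite")
    results = {}
    for test in tests:
        try:
            passed = bool(test.run(config))
        except Exception as exc:  # a crashing test case counts as a failure
            log.error("%s raised %r", test.name, exc)
            passed = False
        log.info("%s: %s", test.name, "PASS" if passed else "FAIL")
        results[test.name] = passed
    return results


def report(results):
    """Produce a one-line summary of a suite run."""
    failed = sorted(name for name, ok in results.items() if not ok)
    passed = len(results) - len(failed)
    return f"{passed}/{len(results)} passed; failed: {', '.join(failed) or 'none'}"


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO)
    # Placeholder test bodies; real cases would drive calls through the DUT.
    suite = [
        FeatureTest("two_way_call", lambda cfg: True),
        FeatureTest("call_hold", lambda cfg: cfg.get("hold_supported", False)),
    ]
    print(report(run_suite(suite, {"hold_supported": True})))
```

Keeping each test case as a separate callable is one way to make individual cases easy to change without touching the runner.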

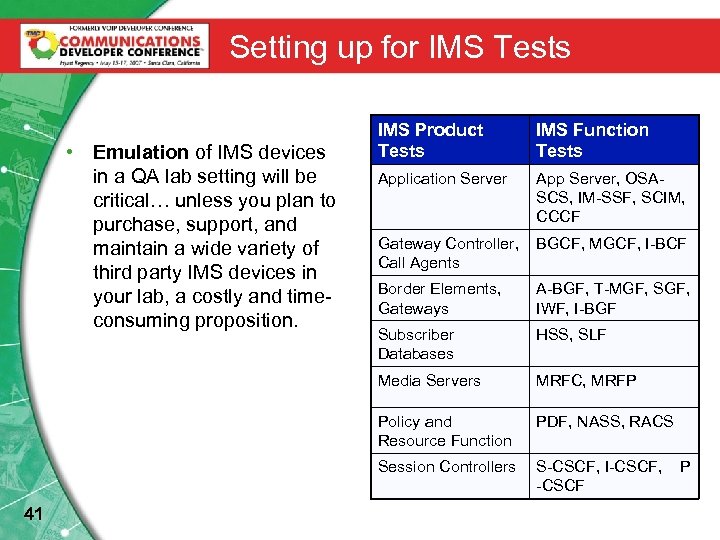

Setting up for IMS Tests

| IMS Product Tests | IMS Function Tests |
| --- | --- |
| Application Server | App Server, OSA-SCS, IM-SSF, SCIM, CCCF |
| Gateway Controller, Call Agents | BGCF, MGCF, I-BCF |
| Border Elements, Gateways | A-BGF, T-MGF, SGF, IWF, I-BGF |
| Subscriber Databases | HSS, SLF |
| Media Servers | MRFC, MRFP |
| Policy and Resource Function | PDF, NASS, RACS |
| Session Controllers | S-CSCF, I-CSCF, P-CSCF |

- Emulation of IMS devices in a QA lab setting will be critical… unless you plan to purchase, support, and maintain a wide variety of third-party IMS devices in your lab, a costly and time-consuming proposition.

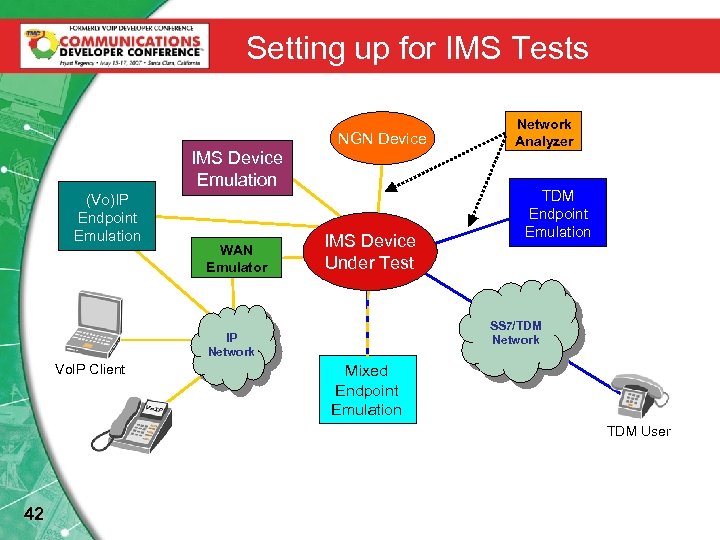

Setting up for IMS Tests
[Diagram labels: NGN Device; IMS Device Emulation; (Vo)IP Endpoint Emulation; WAN Emulator; IMS Device Under Test; TDM Endpoint Emulation; SS7/TDM Network; IP Network; VoIP Client; Network Analyzer; Mixed Endpoint Emulation; TDM User]

VoIP Security Testing: Issues to Consider

VoIP Vulnerabilities/Threats
• The bad news: VoIP systems are vulnerable
  – Platforms are vulnerable
  – VoIP-specific attacks are becoming more common
• The good news: The threat is still developing
  – VoIP handsets are still in the minority “out there”
  – The vast majority of VoIP is company-internal
• VoIP networks share the same vulnerabilities that plague data networks, PLUS some specific additional threats
Courtesy: Mark Collier, CTO, SecureLogix

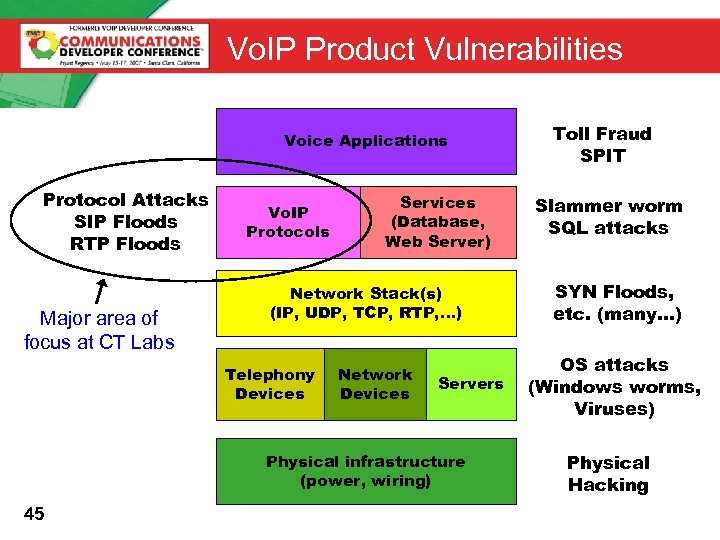

VoIP Product Vulnerabilities
Layers of a VoIP product and example threats at each layer:
• Voice Applications: Toll Fraud, SPIT
• VoIP Protocols: Protocol Attacks, SIP Floods, RTP Floods (major area of focus at CT Labs)
• Services (Database, Web Server): Slammer worm, SQL attacks
• Network Stack(s) (IP, UDP, TCP, RTP, …): SYN Floods, etc. (many…)
• Telephony Devices, Network Devices, Servers: OS attacks (Windows worms, viruses)
• Physical infrastructure (power, wiring): Physical Hacking

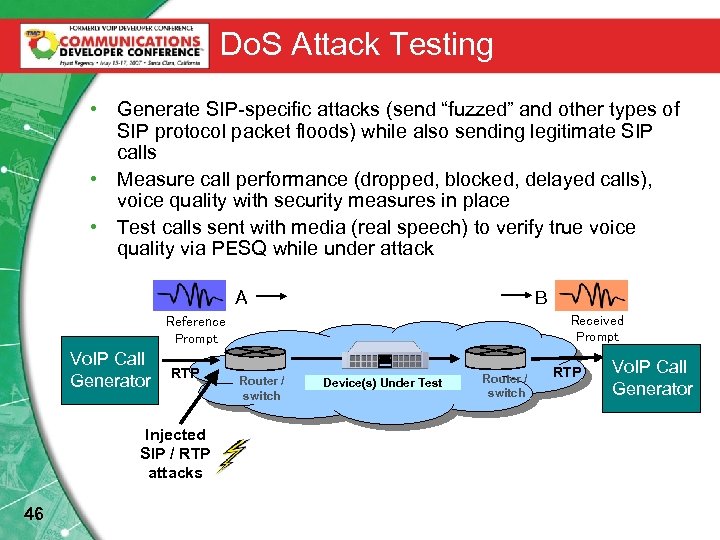

DoS Attack Testing
• Generate SIP-specific attacks (send “fuzzed” and other types of SIP protocol packet floods) while also sending legitimate SIP calls
• Measure call performance (dropped, blocked, delayed calls) and voice quality with security measures in place
• Send test calls with media (real speech) to verify true voice quality via PESQ while under attack
[Diagram labels: A; B; Received Prompt; Reference Prompt; VoIP Call Generator; RTP; Injected SIP / RTP attacks; Router / switch; Device(s) Under Test; Router / switch; RTP; VoIP Call Generator]
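The call-performance measurements listed above can be reduced to a few counters per test run. The following is a minimal sketch, not the output format of any particular call generator: the `CallRecord` fields, the 3-second "delayed" cutoff, and the sample values are all assumptions made for illustration, and the PESQ scores are assumed to come from whatever tool compares the received prompt against the reference prompt.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CallRecord:
    setup_ok: bool          # False: the call was blocked (never set up)
    completed: bool         # False after a good setup: the call was dropped
    setup_delay_s: float    # call setup time in seconds
    pesq: Optional[float]   # PESQ score of the received prompt, if measured


def summarize_calls(calls, delay_threshold_s=3.0):
    """Count blocked, dropped, and delayed calls; average PESQ over completed calls."""
    total = len(calls)
    blocked = sum(1 for c in calls if not c.setup_ok)
    dropped = sum(1 for c in calls if c.setup_ok and not c.completed)
    delayed = sum(1 for c in calls if c.setup_ok and c.setup_delay_s > delay_threshold_s)
    completed = sum(1 for c in calls if c.setup_ok and c.completed)
    scores = [c.pesq for c in calls if c.setup_ok and c.completed and c.pesq is not None]
    return {
        "total": total,
        "blocked": blocked,
        "dropped": dropped,
        "delayed": delayed,
        "completion_rate": completed / total if total else 0.0,
        "mean_pesq": sum(scores) / len(scores) if scores else None,
    }


# Illustrative records only; not measured data.
sample_calls = [
    CallRecord(True, True, 1.0, 4.0),
    CallRecord(True, False, 1.2, None),
    CallRecord(False, False, 0.0, None),
    CallRecord(True, True, 5.0, 3.0),
]

if __name__ == "__main__":
    print(summarize_calls(sample_calls))
```

Running the same summary once without attack traffic and once under attack gives a direct before/after comparison for each security measure.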

SIP-Specific Attacks to Launch
(i.e., in addition to lower-layer, well-known DoS attacks)
• Blast packets from these scenarios at up to line rates:
  – Malformed and Torture Test floods
    • Using SIP packets from the open source PROTOS test suite
  – INVITE, REGISTER, and Response floods
  – Spoofed variations of the above
    • i.e., spoofing the IP address and port of legitimate devices, or spoofing the Via or AoR of legitimate users
  – RTP attacks
    • Rogue / Random RTP Fraud and Floods
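The scenario list above multiplies out quickly once spoofed variations are included, so it can help to enumerate the test matrix before scheduling runs. This is a planning sketch only: it generates no traffic, and the scenario and spoof-mode names are labels chosen here to mirror the bullets, not identifiers from any test tool.

```python
from itertools import product

# Labels mirroring the slide's scenario bullets (names are illustrative).
SIP_SCENARIOS = [
    "malformed/torture test flood",
    "INVITE flood",
    "REGISTER flood",
    "response flood",
]
# "none" is the unspoofed baseline; the others follow the spoofing bullet.
SPOOF_MODES = ["none", "source IP/port", "Via", "AoR"]
RTP_SCENARIOS = ["rogue/random RTP fraud and floods"]


def build_attack_matrix():
    """Return one dict per attack variation to schedule."""
    matrix = [
        {"scenario": scenario, "spoof": spoof}
        for scenario, spoof in product(SIP_SCENARIOS, SPOOF_MODES)
    ]
    matrix += [{"scenario": scenario, "spoof": "none"} for scenario in RTP_SCENARIOS]
    return matrix


if __name__ == "__main__":
    for number, variation in enumerate(build_attack_matrix(), start=1):
        print(number, variation["scenario"], "| spoof:", variation["spoof"])
```

Under these assumptions the matrix has 17 variations (4 SIP scenarios × 4 spoof modes, plus 1 RTP scenario), each of which becomes a separate timed run.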

SIP-Specific Attacks: What to Expect
• Run each variation for 10-15 minutes
  – In the presence of varying levels of legitimate VoIP traffic
  – Monitoring DUT resources (CPU, memory), call completion rates, and voice quality of completed calls
• It’s typical to see threshold failure effects
  – i.e., above certain levels of legitimate SIP calls + attack packets, service takes a major hit. Below that threshold, normal calls may be handled fine.
• DUT often shows weakness within seconds of test start
• DUT may exhibit hard or soft crashes
• Voice quality may show early warning of catastrophic failure
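One way to locate the threshold effect described above is to repeat a variation at several load levels and find the lowest level at which call completion falls below an acceptable floor. The sketch below assumes each run is summarized as a `(combined_load, completion_rate)` pair; the 95% floor, the load units, and the sample numbers are illustrative assumptions, not measured results.

```python
def find_failure_threshold(results, min_completion_rate=0.95):
    """Return the lowest load whose completion rate is below the floor, or None.

    results: iterable of (combined_load, completion_rate) pairs, where
    combined_load is legitimate calls plus attack packets in any consistent unit.
    """
    for load, rate in sorted(results):
        if rate < min_completion_rate:
            return load
    return None


# Illustrative numbers only; not measured data.
sample_runs = [(100, 0.99), (300, 0.60), (200, 0.98), (400, 0.10)]

if __name__ == "__main__":
    threshold = find_failure_threshold(sample_runs)
    print("First failing load level:", threshold)
```

The same search can be applied to mean PESQ per run instead of completion rate, which may expose degradation at a lower load than call failures do.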

Good Resources on VoIP Security
• NIST – National Institute of Standards and Technology
  – Publication 800-58: “Security Considerations for VoIP Systems” (99 pgs, free)
  – http://csrc.nist.gov/publications/nistpubs
• VoIPSA – Voice over IP Security Alliance
  – Promoting education & awareness, research, testing methodologies & tools
  – Extensive membership: vendors, VoIP providers, researchers, security vendors, test tool vendors
  – www.voipsa.org
• PROTOS group – University of Oulu in Finland
  – Using protocol fuzzing to discover a wide variety of DoS and buffer overflow vulnerabilities
  – Have exposed HTTP, LDAP, SNMP, WAP, and VoIP vulnerabilities
  – www.ee.oulu.fi/research/ouspg/protos/index.html
• Mu Security
  – Manufacturers of a powerful protocol mutation tester (Mu-4000)
  – www.musecurity.com

Feel free to call if you have any questions
Chris Bajorek
chris@ct-labs.com
916-577-2110 (direct line)
