52fbe0f3cf2069ac54629f7b5cadd7cd.ppt

- Number of slides: 42

The New “Bill of Rights” of Information Society
Raj Reddy and Jaime Carbonell
Carnegie Mellon University
March 23, 2006
Talk at Google

New Bill of Rights
- Get the right information
  - e.g. search engines
- To the right people
  - e.g. categorizing, routing
- At the right time
  - e.g. Just-in-Time (task modeling, planning)
- In the right language
  - e.g. machine translation
- With the right level of detail
  - e.g. summarization
- In the right medium
  - e.g. access to information in non-textual media

Relevant Technologies
- “…right information”: search engines
- “…right people”: classification, routing
- “…right time”: anticipatory analysis
- “…right language”: machine translation
- “…right level of detail”: summarization
- “…right medium”: speech input and output

“…right information” Search Engines

The Right Information
- Right information from future search engines
  - How to go beyond just “relevance to query” (all) and “popularity”
  - Eliminate massive redundancy, e.g. “web-based email”
    - Should not result in: multiple links to different Yahoo sites promoting their email, or even non-Yahoo sites discussing just Yahoo email.
    - Should result in: a link to Yahoo email, one to MSN email, one to Gmail, one that compares them, etc.
  - First show trusted info sources and user-community-vetted sources
    - At least for important info (medical, financial, educational, …), I want to trust what I read, e.g.,
      - For new medical treatments: first info from hospitals, medical schools, the AMA, medical publications, etc., and
      - NOT from Joe Shmo’s quack practice page or from the National Enquirer.
- Maximum Marginal Relevance
- Novelty Detection
- Named Entity Extraction

Beyond Pure Relevance in IR
- Current information retrieval technology only maximizes relevance to the query
- What about information novelty, timeliness, appropriateness, validity, comprehensibility, density, medium, ...?
  - Novelty is approximated by non-redundancy!
  - We really want to maximize relevance to the query, given the user profile and interaction history:
    - $P(U(f_i, \ldots, f_n) \mid Q \,\&\, \{C\} \,\&\, U \,\&\, H)$, where $Q$ = query, $\{C\}$ = collection set, $U$ = user profile, $H$ = interaction history
  - ... but we don’t yet know how. Darn.

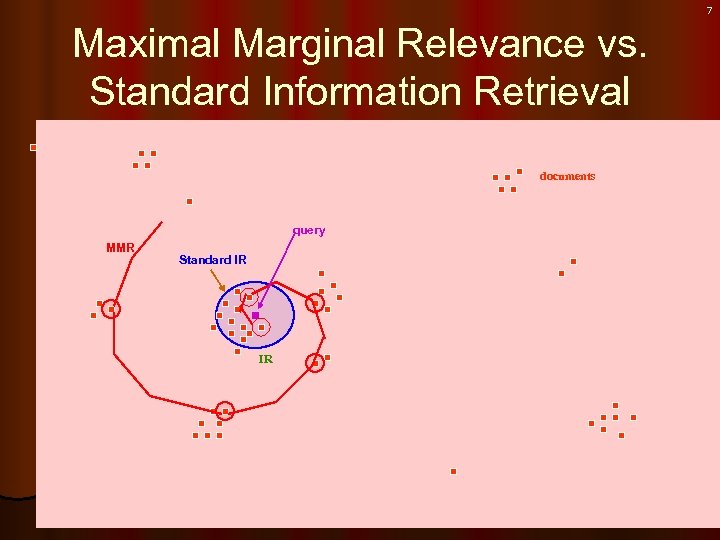

Maximal Marginal Relevance vs. Standard Information Retrieval
(Figure labels: documents, query, MMR, Standard IR)
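The contrast between MMR and standard IR can be sketched as a greedy re-ranking: each step picks the document that is most relevant to the query while being least similar to what has already been selected. This is a minimal sketch, assuming bag-of-words cosine similarity for both terms and a trade-off parameter `lam`; the function names and the toy documents are illustrative and do not come from the talk.

```python
import math
from collections import Counter


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-frequency vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def mmr(query: str, docs: list[str], k: int, lam: float = 0.5) -> list[int]:
    """Greedily select k document indices.

    lam = 1.0 ranks by relevance only (standard IR);
    smaller values penalize redundancy with already selected documents.
    """
    q = Counter(query.lower().split())
    vecs = [Counter(d.lower().split()) for d in docs]
    selected: list[int] = []
    remaining = list(range(len(docs)))
    while remaining and len(selected) < k:
        def score(i: int) -> float:
            relevance = cosine(vecs[i], q)
            redundancy = max((cosine(vecs[i], vecs[j]) for j in selected), default=0.0)
            return lam * relevance - (1 - lam) * redundancy

        best = max(remaining, key=score)
        selected.append(best)
        remaining.remove(best)
    return selected


# Toy collection (assumed): two near-duplicate pages and one different page.
docs = [
    "yahoo web based email",
    "web based email yahoo",
    "gmail web based email comparison",
]
print(mmr("web based email", docs, k=2, lam=1.0))  # relevance only
print(mmr("web based email", docs, k=2, lam=0.5))  # relevance and novelty
```

With `lam=1.0` the two near-duplicates are returned together; lowering `lam` swaps the duplicate for the page that adds something new, which is the behavior the redundancy example on the earlier slide asks for.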

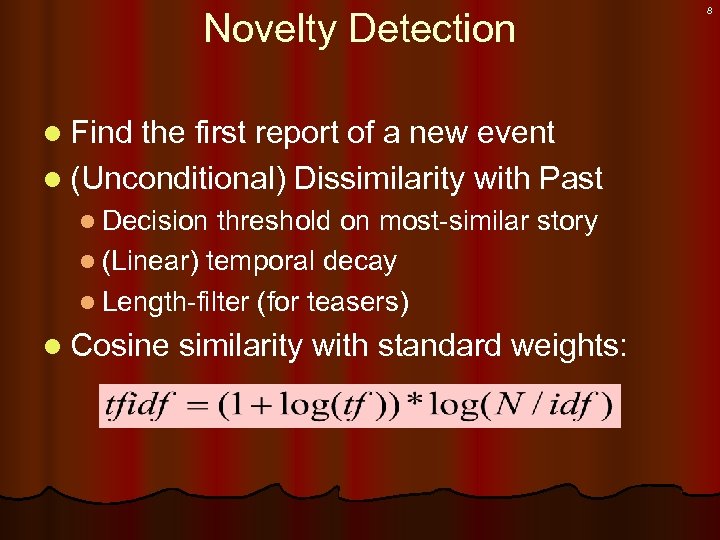

Novelty Detection
- Find the first report of a new event
- (Unconditional) dissimilarity with the past
  - Decision threshold on most-similar story
  - (Linear) temporal decay
  - Length filter (for teasers)
  - Cosine similarity with standard weights
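The ingredients listed on this slide can be combined into a small first-story detector. The following is a sketch under stated assumptions: raw term frequencies stand in for the "standard weights" (the slide's actual weighting is not recoverable here), and the `threshold`, `window`, and `min_words` values are hypothetical defaults chosen for illustration.

```python
import math
from collections import Counter


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-frequency vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def is_first_story(story: str, past: list[str], threshold: float = 0.5,
                   window: int = 10, min_words: int = 4) -> bool:
    """Return True if `story` looks like the first report of a new event.

    past: earlier stories, oldest first.
    """
    words = story.lower().split()
    # Length filter: very short items (teasers) are never flagged as new.
    if len(words) < min_words:
        return False
    vec = Counter(words)
    best = 0.0
    # Walk backwards through the most recent `window` stories.
    for age, old in enumerate(reversed(past[-window:]), start=1):
        # Linear temporal decay: 1.0 for the most recent story, shrinking with age.
        decay = 1.0 - (age - 1) / window
        best = max(best, decay * cosine(vec, Counter(old.lower().split())))
    # Decision threshold on the most similar past story.
    return best < threshold


past = ["plane crash investigation faa", "stock market rises today"]
print(is_first_story("plane crash investigation faa fbi", past))
print(is_first_story("earthquake strikes coastal city overnight", past))
```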

New First Story Detection Directions
- Topic-conditional models
  - e.g. “airplane,” “investigation,” “FAA,” “FBI,” “casualties” → topic, not event
  - “TWA 800,” “March 12, 1997” → event
  - First categorize into topic, then use maximally discriminative terms within topic
- Rely on situated named entities
  - e.g. “Arcan as victim,” “Sharon as peacemaker”

Link Detection in Texts
- Find texts (e.g. news stories) that mention the same underlying events.
- Could be combined with novelty (e.g. something new about an interesting event).
- Techniques: text similarity, NEs, situated NEs, relations, topic-conditioned models, …

Named-Entity Identification
Purpose: to answer questions such as:
- Who is mentioned in these 100 Society articles?
- What locations are listed in these 2000 web pages?
- What companies are mentioned in these patent applications?
- What products were evaluated by Consumer Reports this year?

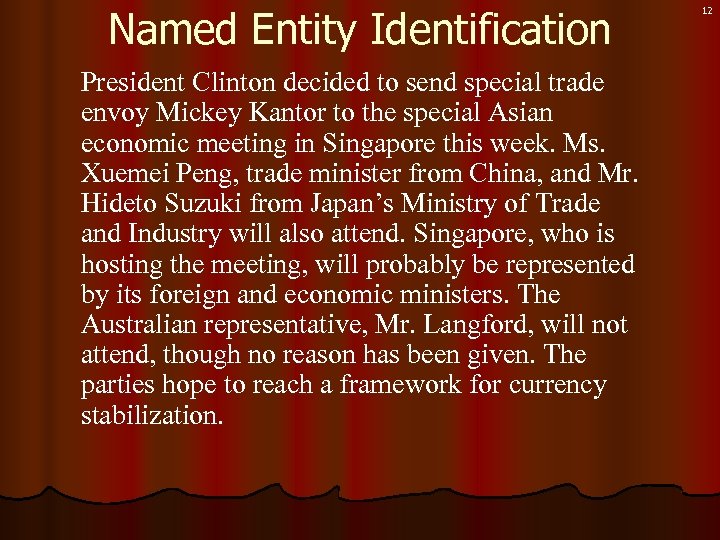

Named Entity Identification
President Clinton decided to send special trade envoy Mickey Kantor to the special Asian economic meeting in Singapore this week. Ms. Xuemei Peng, trade minister from China, and Mr. Hideto Suzuki from Japan’s Ministry of Trade and Industry will also attend. Singapore, who is hosting the meeting, will probably be represented by its foreign and economic ministers. The Australian representative, Mr. Langford, will not attend, though no reason has been given. The parties hope to reach a framework for currency stabilization.

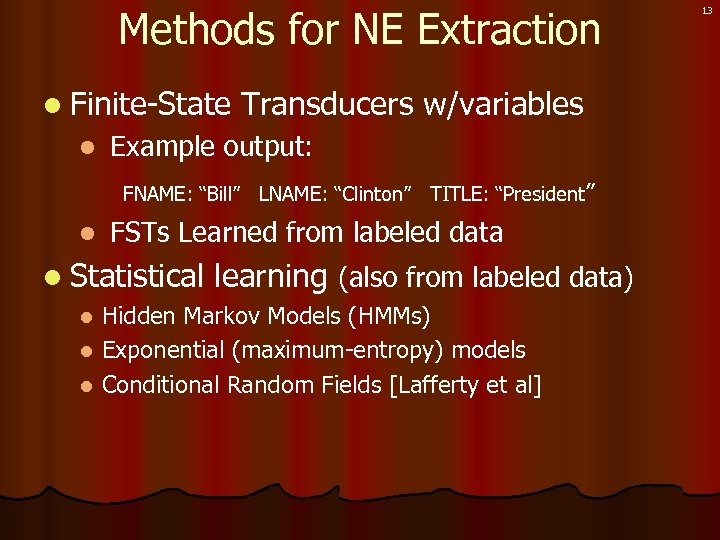

Methods for NE Extraction
- Finite-state transducers w/variables
  - Example output: FNAME: “Bill” LNAME: “Clinton” TITLE: “President”
  - FSTs learned from labeled data
- Statistical learning (also from labeled data)
  - Hidden Markov Models (HMMs)
  - Exponential (maximum-entropy) models
  - Conditional Random Fields [Lafferty et al]
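The finite-state option with variables can be approximated with a regular expression whose named groups play the role of the variables. This is only a hand-written sketch, not a learned transducer: the title list and the capitalized-word heuristic are assumptions made for illustration, and the pattern will misfire on text that deviates from them.

```python
import re

# Hypothetical, hand-picked list of titles; a real system would learn or extend this.
TITLES = r"(?:President|Ms\.|Mr\.|Dr\.)"

# TITLE, an optional FNAME, then LNAME; names are assumed to be single capitalized words.
PERSON = re.compile(
    rf"(?P<TITLE>{TITLES})\s+(?:(?P<FNAME>[A-Z][a-z]+)\s+)?(?P<LNAME>[A-Z][a-z]+)"
)


def extract_people(text: str) -> list[dict[str, str]]:
    """Return one dict per match, keeping only the variables that were bound."""
    return [
        {name: value for name, value in m.groupdict().items() if value}
        for m in PERSON.finditer(text)
    ]


for person in extract_people("President Bill Clinton met Ms. Xuemei Peng and Mr. Langford."):
    print(person)
```

The statistical methods listed on the slide replace such hand-written patterns with models trained on labeled data.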

Named Entity Identification
Extracted Named Entities (NEs)
- People: President Clinton, Mickey Kantor, Ms. Xuemei Peng, Mr. Hideto Suzuki, Mr. Langford
- Places: Singapore, Japan, China, Australia

Role Situated NEs
Motivation: It is useful to know the roles of NEs:
- Who participated in the economic meeting?
- Who hosted the economic meeting?
- Who was discussed in the economic meeting?
- Who was absent from the economic meeting?

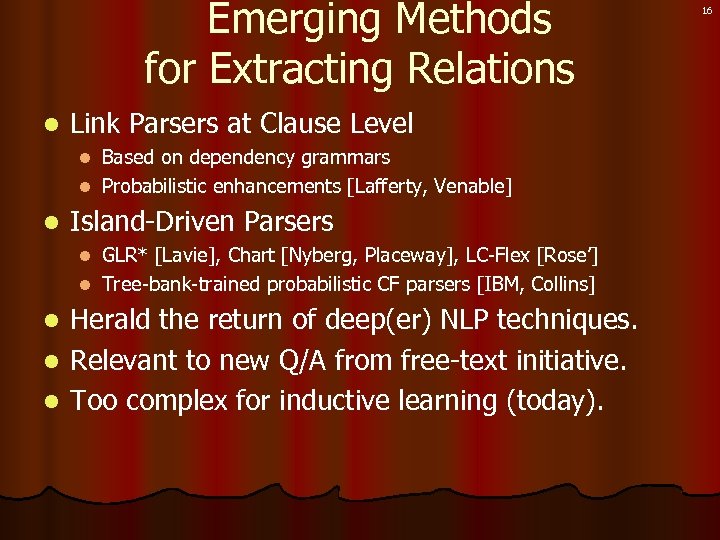

Emerging Methods for Extracting Relations
- Link parsers at clause level
  - Based on dependency grammars
  - Probabilistic enhancements [Lafferty, Venable]
- Island-driven parsers
  - GLR* [Lavie], Chart [Nyberg, Placeway], LC-Flex [Rosé]
- Tree-bank-trained probabilistic CF parsers [IBM, Collins]
- Herald the return of deep(er) NLP techniques.
- Relevant to new Q/A from free-text initiative.
- Too complex for inductive learning (today).

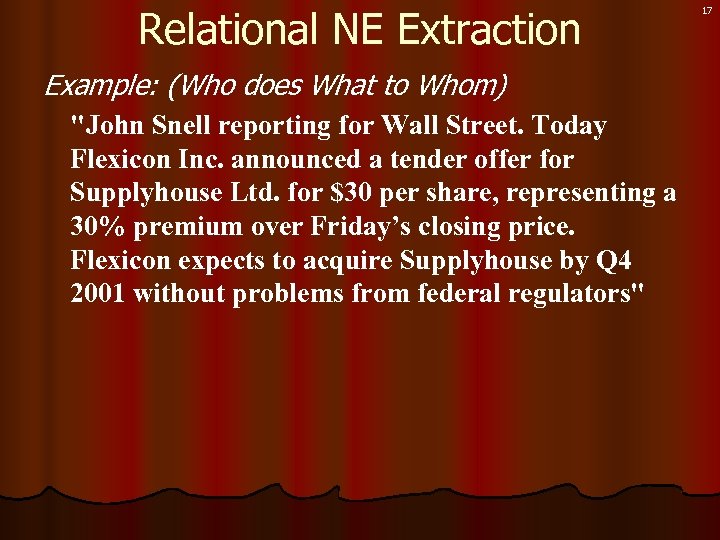

Relational NE Extraction
Example: (Who does What to Whom)
"John Snell reporting for Wall Street. Today Flexicon Inc. announced a tender offer for Supplyhouse Ltd. for $30 per share, representing a 30% premium over Friday’s closing price. Flexicon expects to acquire Supplyhouse by Q4 2001 without problems from federal regulators"

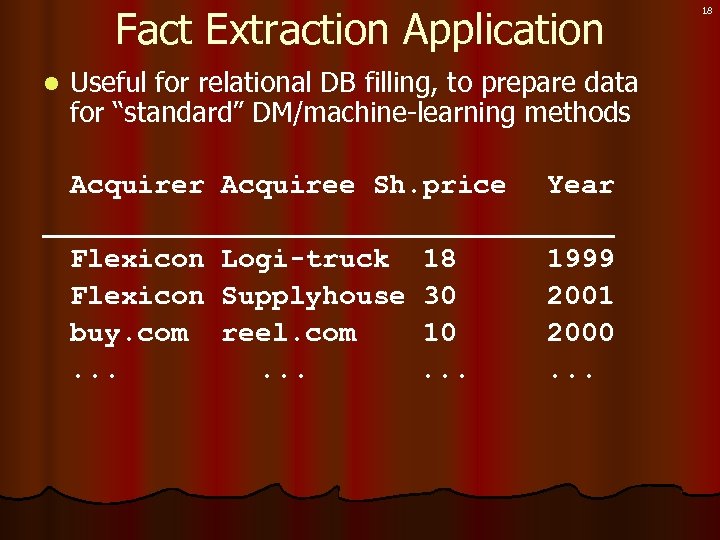

Fact Extraction Application
- Useful for relational DB filling, to prepare data for “standard” DM/machine-learning methods

| Acquirer | Acquiree | Share price | Year |
|----------|----------|-------------|------|
| Flexicon | Logi-truck | 18 | 1999 |
| Flexicon | Supplyhouse | 30 | 2001 |
| buy.com | reel.com | 10 | 2000 |
| ... | | | |
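Filling a relational table from free text can be sketched with a single hand-written pattern applied to the tender-offer example from the previous slide. This is a minimal sketch, assuming one fixed sentence template; the pattern, the table name `acquisitions`, and the column names are hypothetical, and a real extractor would rely on the parsing and learning methods discussed earlier rather than one regular expression.

```python
import re
import sqlite3

TEXT = (
    "John Snell reporting for Wall Street. Today Flexicon Inc. announced a tender "
    "offer for Supplyhouse Ltd. for $30 per share, representing a 30% premium over "
    "Friday's closing price. Flexicon expects to acquire Supplyhouse by Q4 2001 "
    "without problems from federal regulators"
)

# One assumed template: "<Acquirer> announced a tender offer for <Acquiree> for $<price> per share ... by Q<n> <year>".
PATTERN = re.compile(
    r"(?P<acquirer>[A-Z]\w+)(?: Inc\.| Ltd\.)? announced a tender offer for "
    r"(?P<acquiree>[A-Z]\w+)(?: Inc\.| Ltd\.)? for \$(?P<price>\d+) per share"
    r".*?by Q\d (?P<year>\d{4})",
    re.DOTALL,
)


def extract_fact(text: str):
    """Return (acquirer, acquiree, share_price, year), or None if the template does not match."""
    m = PATTERN.search(text)
    if m is None:
        return None
    return (m["acquirer"], m["acquiree"], int(m["price"]), int(m["year"]))


def load_facts(texts: list[str]) -> sqlite3.Connection:
    """Insert every extracted fact into an in-memory relational table."""
    db = sqlite3.connect(":memory:")
    db.execute(
        "CREATE TABLE acquisitions "
        "(acquirer TEXT, acquiree TEXT, share_price INTEGER, year INTEGER)"
    )
    for text in texts:
        fact = extract_fact(text)
        if fact is not None:
            db.execute("INSERT INTO acquisitions VALUES (?, ?, ?, ?)", fact)
    return db


db = load_facts([TEXT])
print(db.execute("SELECT * FROM acquisitions").fetchall())
```

Once the facts sit in a table, ordinary SQL queries and standard data-mining tools can be applied to them.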

“…right people” Text Categorization

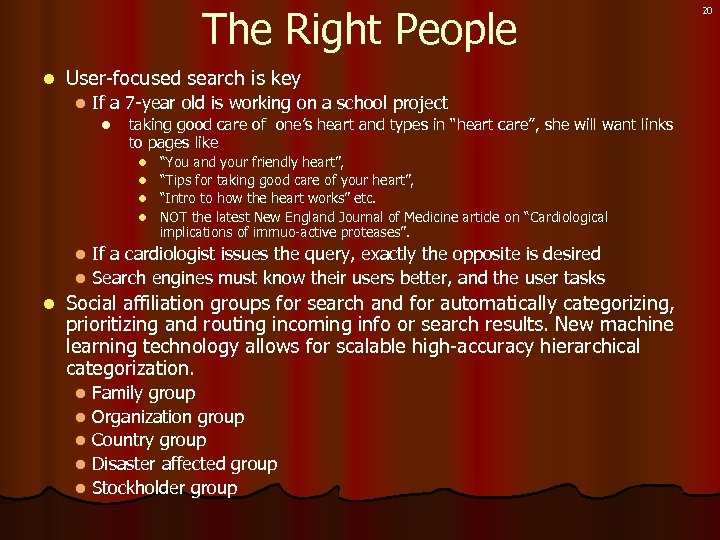

The Right People
- User-focused search is key
  - If a 7-year-old is working on a school project on taking good care of one’s heart and types in “heart care”, she will want links to pages like
    - “You and your friendly heart”,
    - “Tips for taking good care of your heart”,
    - “Intro to how the heart works” etc.
    - NOT the latest New England Journal of Medicine article on “Cardiological implications of immuno-active proteases”.
  - If a cardiologist issues the query, exactly the opposite is desired
  - Search engines must know their users better, and the user tasks
- Social affiliation groups for search and for automatically categorizing, prioritizing and routing incoming info or search results. New machine learning technology allows for scalable high-accuracy hierarchical categorization.
  - Family group
  - Organization group
  - Country group
  - Disaster affected group
  - Stockholder group

Text Categorization
- Assign labels to each document or web page
- Labels may be topics such as Yahoo categories
  - finance, sports, News > World > Asia > Business
- Labels may be genres
  - editorials, movie reviews, news
- Labels may be routing codes
  - send to marketing, send to customer service

Text Categorization Methods
- Manual assignment
  - as in Yahoo
- Hand-coded rules
  - as in Reuters
- Machine learning (dominant paradigm)
  - Words in text become predictors
  - Category labels become “to be predicted”
  - Predictor-feature reduction (SVD, χ², …)
  - Apply any inductive method: kNN, NB, DT, …
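The machine-learning recipe above (words as predictors, category labels as the target, any inductive method) can be sketched with Naive Bayes, one of the methods the slide names. This is a minimal sketch, assuming whitespace tokenization, add-one smoothing, and no feature reduction; the class name, the training sentences, and the two routing codes are made up for illustration.

```python
import math
from collections import Counter, defaultdict


class NaiveBayesRouter:
    """Multinomial Naive Bayes over words, with add-one (Laplace) smoothing."""

    def __init__(self) -> None:
        self.word_counts: dict[str, Counter] = defaultdict(Counter)
        self.doc_counts: Counter = Counter()
        self.vocab: set[str] = set()

    def fit(self, docs: list[str], labels: list[str]) -> "NaiveBayesRouter":
        for doc, label in zip(docs, labels):
            words = doc.lower().split()
            self.word_counts[label].update(words)  # words are the predictors
            self.doc_counts[label] += 1            # labels are what we predict
            self.vocab.update(words)
        return self

    def predict(self, doc: str) -> str:
        total_docs = sum(self.doc_counts.values())

        def log_posterior(label: str) -> float:
            counts = self.word_counts[label]
            denom = sum(counts.values()) + len(self.vocab)
            score = math.log(self.doc_counts[label] / total_docs)
            for word in doc.lower().split():
                if word in self.vocab:  # ignore words never seen in training
                    score += math.log((counts[word] + 1) / denom)
            return score

        return max(self.doc_counts, key=log_posterior)


# Toy training set (assumed) using routing codes as labels.
router = NaiveBayesRouter().fit(
    [
        "new product launch campaign",
        "advertising budget for campaign",
        "my order arrived broken",
        "refund for broken product",
    ],
    ["marketing", "marketing", "customer service", "customer service"],
)
print(router.predict("broken order refund"))
print(router.predict("campaign budget"))
```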

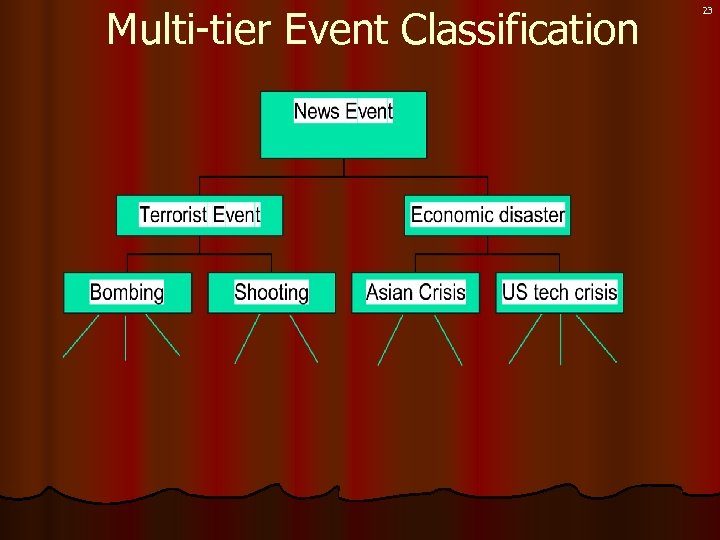

Multi-tier Event Classification

“…right timeframe” Just-in-Time - no sooner or later

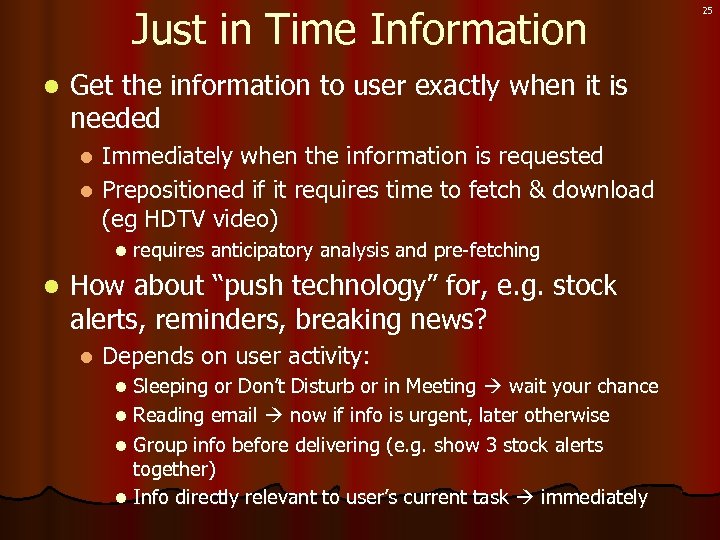

Just in Time Information
- Get the information to the user exactly when it is needed
  - Immediately when the information is requested
  - Prepositioned if it requires time to fetch & download (e.g. HDTV video)
    - requires anticipatory analysis and pre-fetching
- How about “push technology” for, e.g., stock alerts, reminders, breaking news?
  - Depends on user activity:
    - Sleeping or Don’t Disturb or in Meeting → wait your chance
    - Reading email → now if info is urgent, later otherwise
    - Group info before delivering (e.g. show 3 stock alerts together)
    - Info directly relevant to user’s current task → immediately
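The activity-dependent push rules can be written down as a small decision function. This is a sketch only: the `Activity` values, the function name, the order in which the rules are checked, and the fallback of deferring everything else are assumptions, since the slide lists the cases without ranking them.

```python
from enum import Enum


class Activity(Enum):
    SLEEPING = "sleeping"
    DO_NOT_DISTURB = "do not disturb"
    IN_MEETING = "in meeting"
    READING_EMAIL = "reading email"
    WORKING = "working on a task"


# Activities during which nothing is pushed (assumed to take priority over all other rules).
BLOCKING = {Activity.SLEEPING, Activity.DO_NOT_DISTURB, Activity.IN_MEETING}


def delivery_decision(activity: Activity, urgent: bool, relevant_to_task: bool) -> str:
    """Return "wait", "now", or "later" for one piece of pushed information."""
    if activity in BLOCKING:
        return "wait"  # wait your chance
    if relevant_to_task:
        return "now"  # directly relevant to the current task: deliver immediately
    if activity is Activity.READING_EMAIL:
        return "now" if urgent else "later"
    return "later"  # assumed default for cases the slide does not cover


print(delivery_decision(Activity.IN_MEETING, urgent=True, relevant_to_task=False))
print(delivery_decision(Activity.READING_EMAIL, urgent=False, relevant_to_task=False))
```

Grouping several alerts into one delivery would sit on top of this function, batching everything that received a "later" decision.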

“…right language” Translation

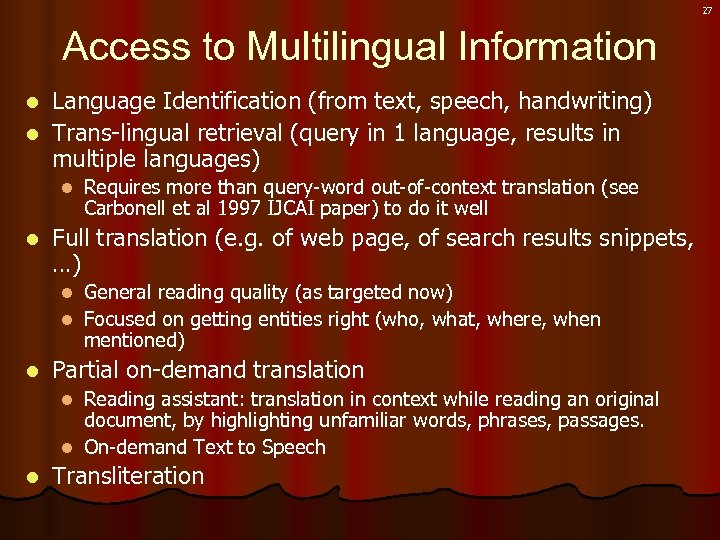

Access to Multilingual Information
- Language identification (from text, speech, handwriting)
- Trans-lingual retrieval (query in 1 language, results in multiple languages)
  - Requires more than query-word out-of-context translation (see Carbonell et al 1997 IJCAI paper) to do it well
- Full translation (e.g. of web page, of search results snippets, …)
  - General reading quality (as targeted now)
  - Focused on getting entities right (who, what, where, when mentioned)
- Partial on-demand translation
  - Reading assistant: translation in context while reading an original document, by highlighting unfamiliar words, phrases, passages.
  - On-demand text to speech
- Transliteration

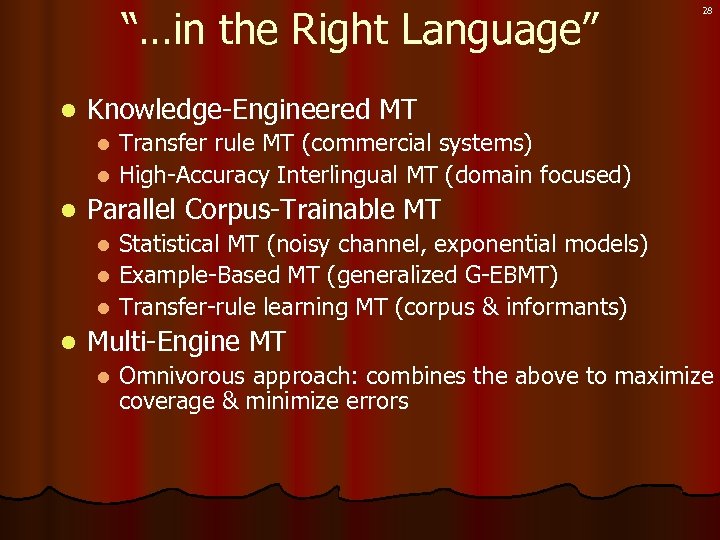

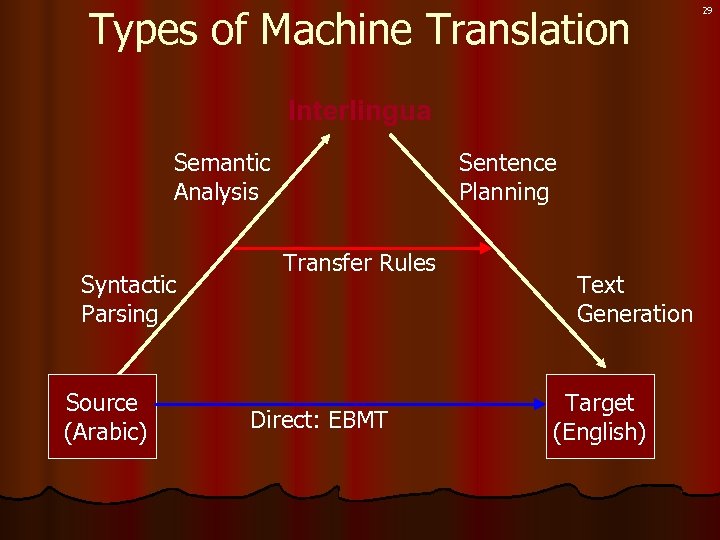

“…in the Right Language”
- Knowledge-engineered MT
  - Transfer rule MT (commercial systems)
  - High-accuracy interlingual MT (domain focused)
- Parallel corpus-trainable MT
  - Statistical MT (noisy channel, exponential models)
  - Example-based MT (generalized G-EBMT)
  - Transfer-rule learning MT (corpus & informants)
- Multi-engine MT
  - Omnivorous approach: combines the above to maximize coverage & minimize errors

Types of Machine Translation
(Diagram labels: Source (Arabic), Syntactic Parsing, Semantic Analysis, Interlingua, Sentence Planning, Text Generation, Target (English); Transfer Rules; Direct: EBMT)

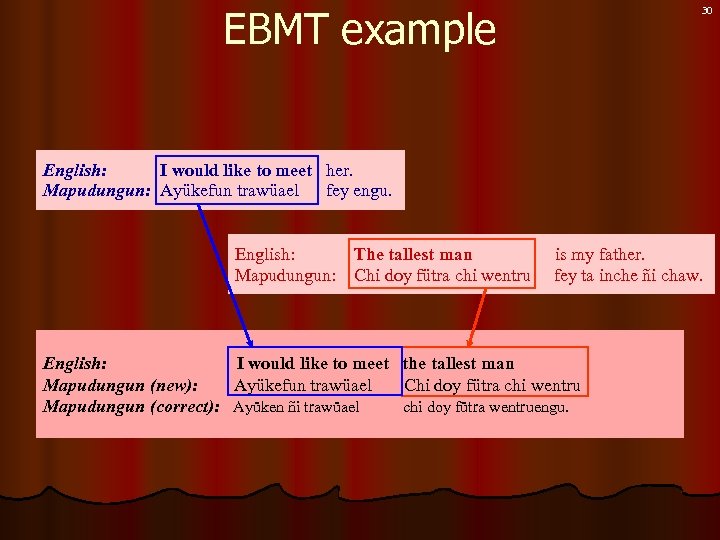

EBMT example
- English: I would like to meet her.
- Mapudungun: Ayükefun trawüael fey engu.
- English: The tallest man is my father.
- Mapudungun: Chi doy fütra chi wentru fey ta inche ñi chaw.
- English: I would like to meet the tallest man
- Mapudungun (new): Ayükefun trawüael Chi doy fütra chi wentru
- Mapudungun (correct): Ayüken ñi trawüael chi doy fütra wentruengu.

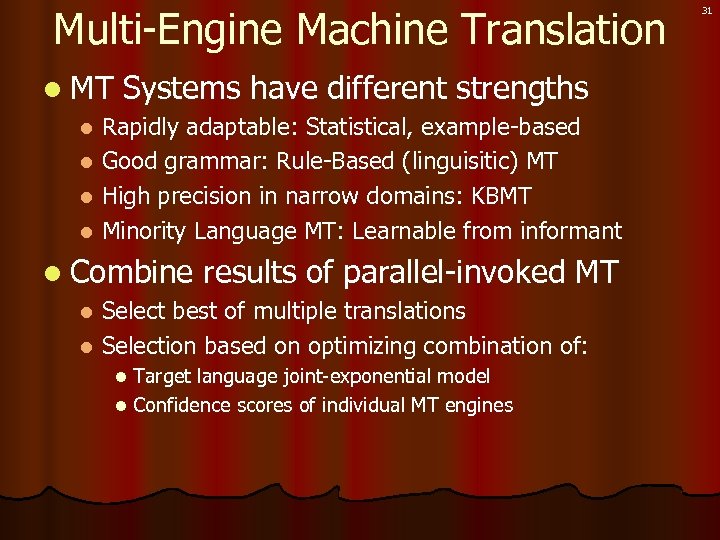

Multi-Engine Machine Translation
- MT systems have different strengths
  - Rapidly adaptable: statistical, example-based
  - Good grammar: rule-based (linguistic) MT
  - High precision in narrow domains: KBMT
  - Minority language MT: learnable from informant
- Combine results of parallel-invoked MT
  - Select best of multiple translations
  - Selection based on optimizing combination of:
    - Target language joint-exponential model
    - Confidence scores of individual MT engines
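The selection step can be sketched as scoring each candidate with a weighted combination of a target-language model score and the engine's own confidence. This is a sketch under stated assumptions: the talk's joint-exponential model is replaced by an arbitrary callable `lm_logprob`, the per-word normalization and the `lm_weight` interpolation are choices made here for illustration, and the toy language model and confidence values are invented.

```python
import math
from typing import Callable

Candidate = tuple[str, str, float]  # (engine name, translation, engine confidence in (0, 1])


def select_translation(candidates: list[Candidate],
                       lm_logprob: Callable[[str], float],
                       lm_weight: float = 0.5) -> Candidate:
    """Pick the candidate with the best combined score."""
    def score(candidate: Candidate) -> float:
        _, text, confidence = candidate
        n_words = max(len(text.split()), 1)
        # Per-word LM log-probability, so longer outputs are not penalized for length alone.
        lm_term = lm_logprob(text) / n_words
        return lm_weight * lm_term + (1 - lm_weight) * math.log(confidence)

    return max(candidates, key=score)


def toy_lm(text: str) -> float:
    """Hypothetical stand-in for a target language model: favors a few known words."""
    known = {"the", "discharge", "point", "will", "take", "place", "at", "in", "bridge"}
    return sum(math.log(0.1 if w.lower() in known else 0.01) for w in text.split())


# Engine names and confidence values are made up for this example.
candidates = [
    ("engine A", "The drop-off point will comply with The cold Bridgewater", 0.6),
    ("engine B", "The discharge point will self comply in the Agua Fria bridge", 0.7),
    ("engine C", "Unload of the point will take place at the cold water of bridge", 0.5),
]
print(select_translation(candidates, toy_lm))
```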

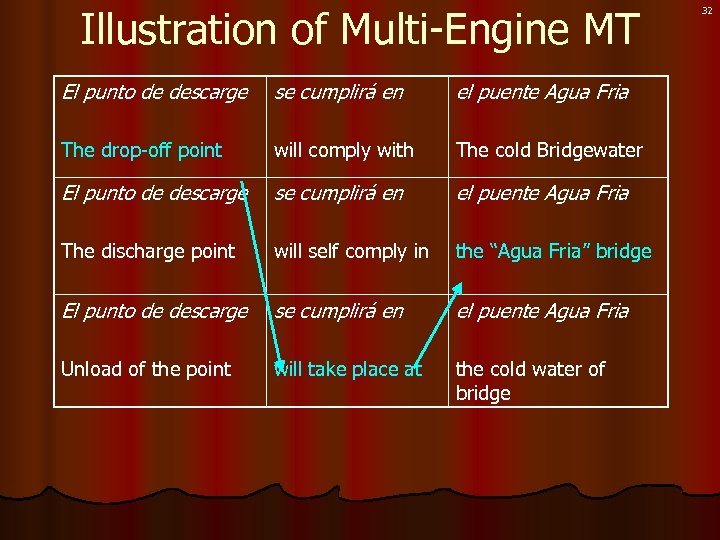

Illustration of Multi-Engine MT
- El punto de descarge se cumplirá en el puente Agua Fria
  - The drop-off point will comply with The cold Bridgewater
- El punto de descarge se cumplirá en el puente Agua Fria
  - The discharge point will self comply in the “Agua Fria” bridge
- El punto de descarge se cumplirá en el puente Agua Fria
  - Unload of the point will take place at the cold water of bridge

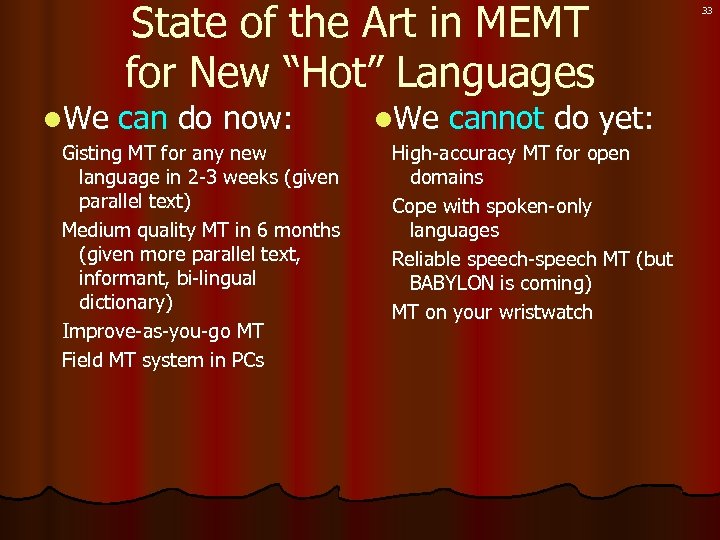

State of the Art in MEMT for New “Hot” Languages
- We can do now:
  - Gisting MT for any new language in 2-3 weeks (given parallel text)
  - Medium quality MT in 6 months (given more parallel text, informant, bilingual dictionary)
  - Improve-as-you-go MT
  - Field MT system in PCs
- We cannot do yet:
  - High-accuracy MT for open domains
  - Cope with spoken-only languages
  - Reliable speech-speech MT (but BABYLON is coming)
  - MT on your wristwatch

“…right level of detail” Summarization

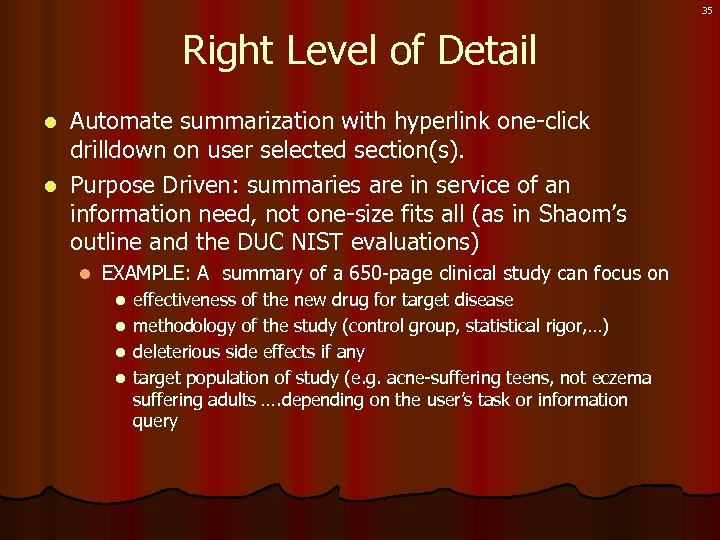

Right Level of Detail
- Automate summarization with hyperlink one-click drilldown on user-selected section(s).
- Purpose driven: summaries are in service of an information need, not one-size-fits-all (as in Shaom’s outline and the DUC NIST evaluations)
- EXAMPLE: A summary of a 650-page clinical study can focus on
  - effectiveness of the new drug for target disease
  - methodology of the study (control group, statistical rigor, …)
  - deleterious side effects if any
  - target population of study (e.g. acne-suffering teens, not eczema-suffering adults, …)
- depending on the user’s task or information query

Information Structuring and Summarization
- Hierarchical multi-level pre-computed summary structure, or on-the-fly drilldown expansion of info.
  - Headline: <20 words
  - Abstract: 1% or 1 page
  - Summary: 5-10% or 10 pages
  - Document: 100%
- Scope of summary
  - Single big document (e.g. big clinical study)
  - Tight cluster of search results (e.g. vivisimo)
  - Related set of clusters (e.g. conflicting opinions on how to cope with Iran’s nuclear capabilities)
  - Focused area of knowledge (e.g. What’s known about Pluto? Lycos has good project in this via Hotbot)
  - Specific kinds of commonly asked information (e.g. synthesize a bio on person X from any web-accessible info)

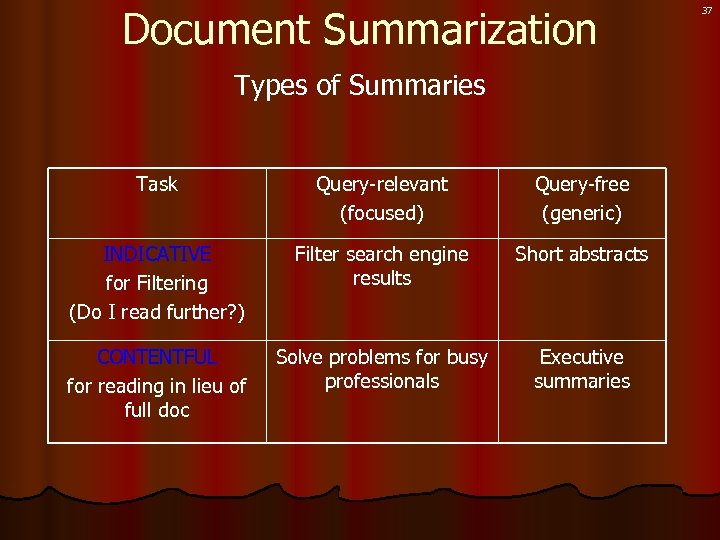

Document Summarization
Types of Summaries

| Task | Query-relevant (focused) | Query-free (generic) |
|------|--------------------------|----------------------|
| INDICATIVE, for filtering (Do I read further?) | Filter search engine results | Short abstracts |
| CONTENTFUL, for reading in lieu of full doc | Solve problems for busy professionals | Executive summaries |

“…right medium” Finding information in Non-textual Media

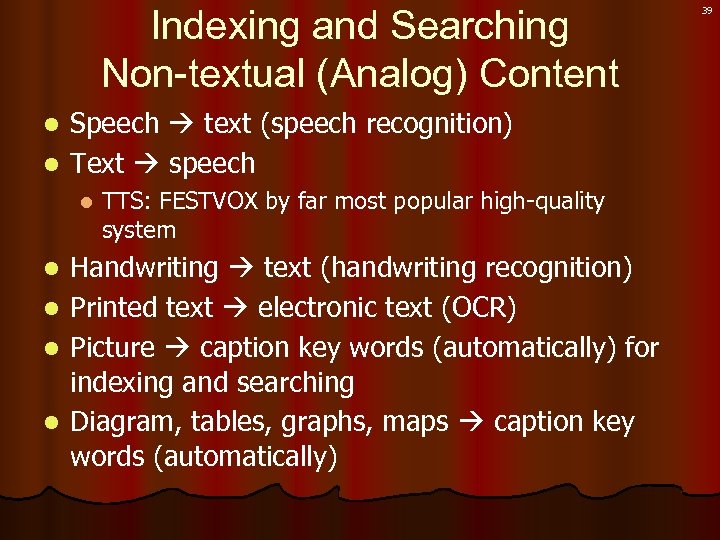

Indexing and Searching Non-textual (Analog) Content
- Speech → text (speech recognition)
- Text → speech
  - TTS: FESTVOX by far most popular high-quality system
- Handwriting → text (handwriting recognition)
- Printed text → electronic text (OCR)
- Picture → caption key words (automatically) for indexing and searching
- Diagram, tables, graphs, maps → caption key words (automatically)

Conclusion

What is Text Mining
- Search documents, web, news
- Categorize by topic, taxonomy
  - Enables filtering, routing, multi-text summaries, …
- Extract names, relations, …
- Summarize text, rules, trends, …
- Detect redundancy, novelty, anomalies, …
- Predict outcomes, behaviors, trends, …

Who did what to whom and where?

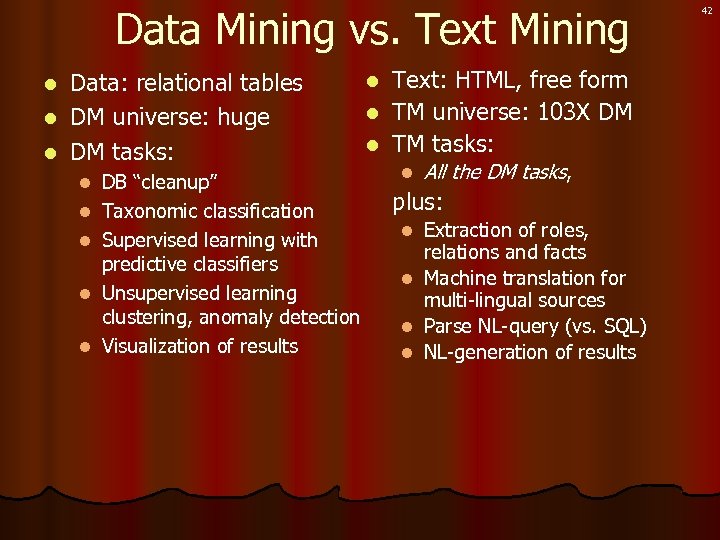

Data Mining vs. Text Mining
- Data: relational tables
- DM universe: huge
- DM tasks:
  - DB “cleanup”
  - Taxonomic classification
  - Supervised learning with predictive classifiers
  - Unsupervised learning: clustering, anomaly detection
  - Visualization of results
- Text: HTML, free form
- TM universe: $10^3 \times$ DM
- TM tasks:
  - All the DM tasks, plus:
  - Extraction of roles, relations and facts
  - Machine translation for multi-lingual sources
  - Parse NL-query (vs. SQL)
  - NL-generation of results
