
- Number of slides: 65

Dragon Star Program Course: Information Retrieval
Course Overview & Background
ChengXiang Zhai (翟成祥)
Department of Computer Science, Graduate School of Library & Information Science, Institute for Genomic Biology, Statistics
University of Illinois, Urbana-Champaign
http://www-faculty.cs.uiuc.edu/~czhai, czhai@cs.uiuc.edu
2008 © ChengXiang Zhai, Dragon Star Lecture at Beijing University, June 21-30, 2008

Outline
• Course overview
• Essential background
  – Probability & statistics
  – Basic concepts in information theory
  – Natural language processing

Course Overview

Course Objectives
• Introduce the field of information retrieval (IR)
  – Foundation: Basic concepts, principles, methods, etc.
  – Trends: Frontier topics
• Prepare students to do research in IR and/or related fields
  – Research methodology (general and IR-specific)
  – Research proposal writing
  – Research project (to be finished after the lecture period)

Prerequisites
• Proficiency in programming (C++ is needed for assignments)
• Knowledge of basic probability & statistics (would be necessary for understanding algorithms deeply)
• Big plus: knowledge of related areas
  – Machine learning
  – Natural language processing
  – Data mining
  – …

Course Management
• Teaching staff
  – Instructor: ChengXiang Zhai (UIUC)
  – Teaching assistants:
    • Hongfei Yan (Peking Univ)
    • Bo Peng (Peking Univ)
• Course website: http://net.pku.edu.cn/~course/cs410/
• Course group discussion: http://groups.google.com/group/cs410pku
• Questions: First post the questions on the group discussion forum; if questions are unanswered, bring them to the office hours (first office hour: June 23, 2:30-4:30 pm)

Format & Requirements
• Lecture-based:
  – Morning lectures: Foundation & Trends
  – Afternoon lectures: IR research methodology
  – Readings are usually available online
• 2 Assignments (based on morning lectures)
  – Coding (C++), experimenting with data, analyzing results, open explorations (~5 hours each)
• Final exam (based on morning lectures): 1:30-4:30 pm, June 30.
  – Practice questions will be available

Format & Requirements (cont.)
• Course project (Mini-TREC)
  – Work in teams
  – Phase I: create test collections (~3 hours, done within lecture period)
  – Phase II: develop algorithms and submit results (done in the summer)
• Research project proposal (based on afternoon lectures)
  – Work in teams
  – 2-page outline done within lecture period
  – Full proposal (5 pages) due later

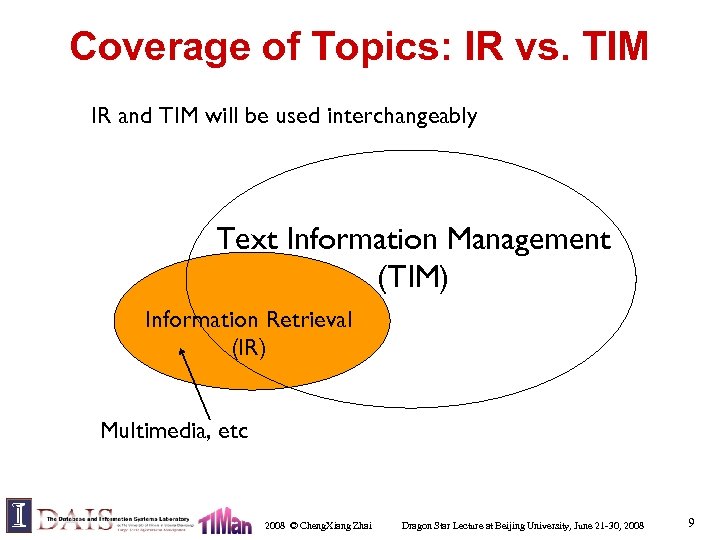

Coverage of Topics: IR vs. TIM
[Diagram labels: Text Information Management (TIM); Information Retrieval (IR); Multimedia, etc.]
IR and TIM will be used interchangeably

What is Text Info. Management?
• TIM is concerned with technologies for managing and exploiting text information effectively and efficiently
• Importance of managing text information
  – The most natural way of encoding knowledge
    • Think about scientific literature
  – The most common type of information
    • How much textual information do you produce and consume every day?
  – The most basic form of information
    • It can be used to describe other media of information
  – The most useful form of information!

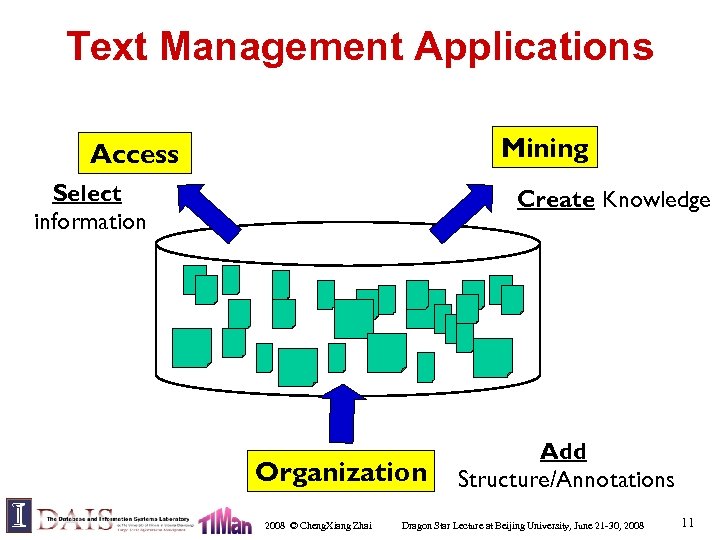

Text Management Applications
[Diagram labels: Access (select information); Mining (create knowledge); Organization (add structure/annotations)]

Examples of Text Management Applications
• Search
  – Web search engines (Google, Yahoo, …)
  – Library systems
  – …
• Recommendation
  – News filter
  – Literature/movie recommender
• Categorization
  – Automatically sorting emails
  – …
• Mining/Extraction
  – Discovering major complaints from email in customer service
  – Business intelligence
  – Bioinformatics
  – …
• Many others…

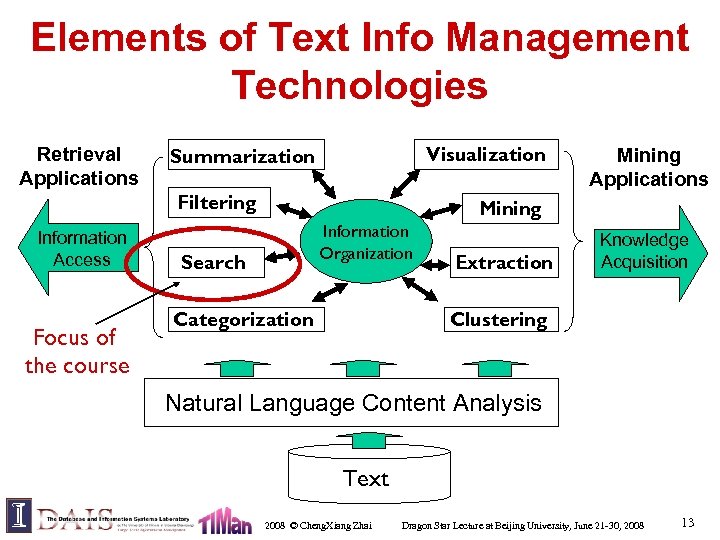

Elements of Text Info Management Technologies
[Diagram labels: Text; Natural Language Content Analysis; Information Access (Search, Filtering); Information Organization (Categorization, Clustering); Knowledge Acquisition (Mining, Extraction); Retrieval Applications; Mining Applications; Visualization; Summarization; callout: Focus of the course]

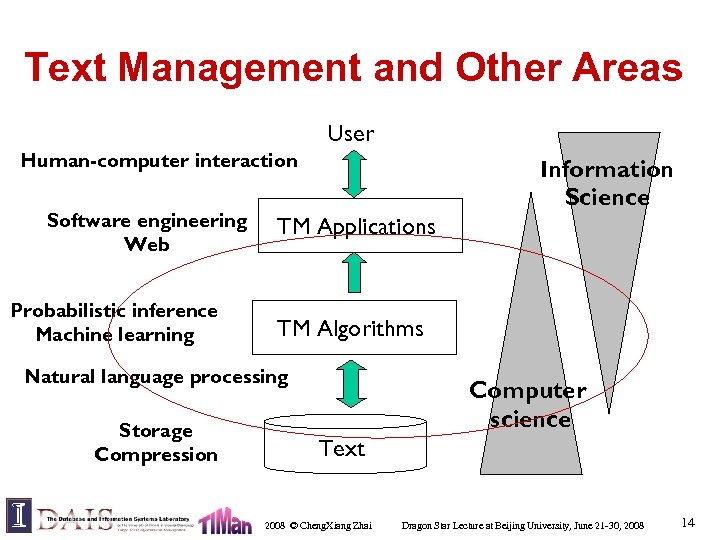

Text Management and Other Areas
[Diagram labels: User; Human-computer interaction; Software engineering; Web; Probabilistic inference; Machine learning; Information Science; TM Applications; TM Algorithms; Natural language processing; Storage; Compression; Computer science; Text]

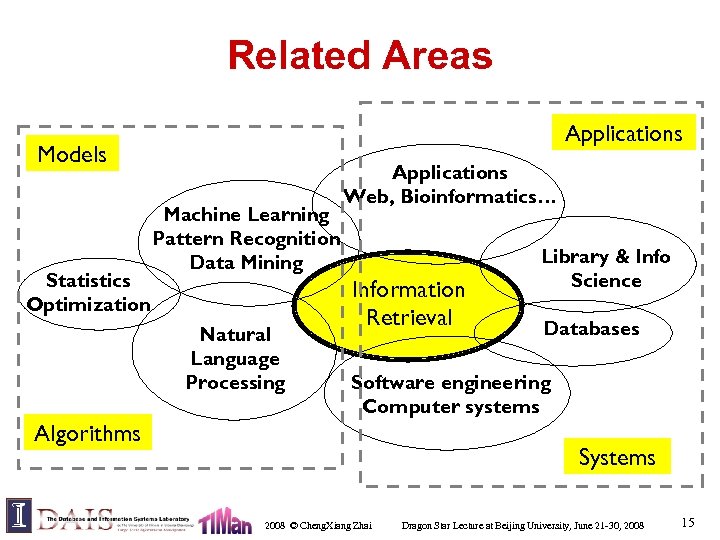

Related Areas
[Diagram labels: Information Retrieval; Applications; Models; Statistics; Optimization; Machine Learning; Pattern Recognition; Data Mining; Natural Language Processing; Web, Bioinformatics…; Library & Info Science; Databases; Software engineering; Computer systems; Algorithms; Systems]

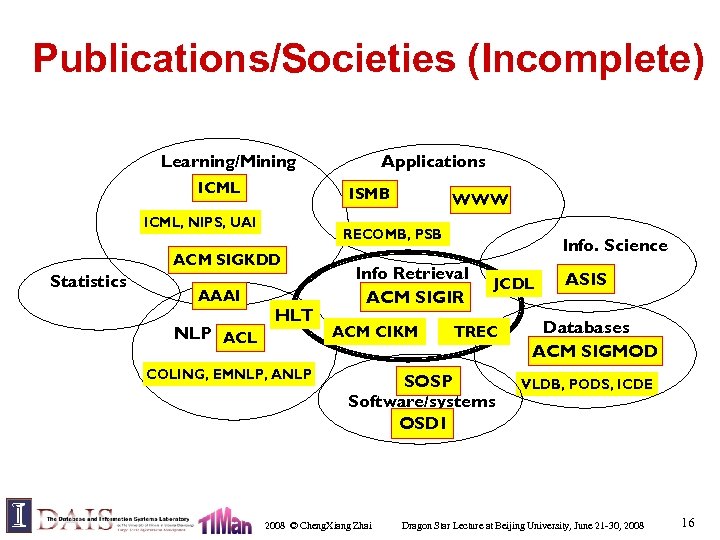

Publications/Societies (Incomplete)
• Learning/Mining: ICML, NIPS, UAI, AAAI, ACM SIGKDD
• NLP: ACL, HLT, COLING, EMNLP, ANLP
• Applications: ISMB, RECOMB, PSB, WWW
• Statistics
• Info. Science: ASIS, JCDL
• Info Retrieval: ACM SIGIR, ACM CIKM, TREC
• Databases: ACM SIGMOD, VLDB, PODS, ICDE
• Software/systems: SOSP, OSDI

Schedule: available at http://net.pku.edu.cn/~course/cs410/

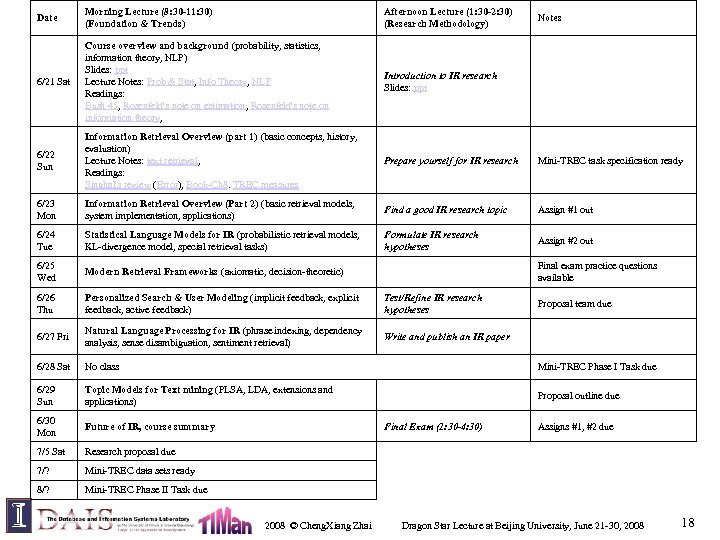

| Date | Morning Lecture (8:30-11:30) (Foundation & Trends) | Afternoon Lecture (1:30-2:30) (Research Methodology) | Notes |
| --- | --- | --- | --- |
| 6/21 Sat | Course overview and background (probability, statistics, information theory, NLP). Slides: ppt. Lecture Notes: Prob & Stat, Info Theory, NLP. Readings: Bush 45, Rosenfeld's note on estimation, Rosenfeld's note on information theory | Introduction to IR research. Slides: ppt | |
| 6/22 Sun | Information Retrieval Overview (Part 1) (basic concepts, history, evaluation). Lecture Notes: text retrieval. Readings: Singhal's review (Error), Book-Ch 8, TREC measures | Prepare yourself for IR research | Mini-TREC task specification ready |
| 6/23 Mon | Information Retrieval Overview (Part 2) (basic retrieval models, system implementation, applications) | Find a good IR research topic | Assign #1 out |
| 6/24 Tue | Statistical Language Models for IR (probabilistic retrieval models, KL-divergence model, special retrieval tasks) | Formulate IR research hypotheses | Assign #2 out |
| 6/25 Wed | Modern Retrieval Frameworks (axiomatic, decision-theoretic) | | |
| 6/26 Thu | Personalized Search & User Modeling (implicit feedback, explicit feedback, active feedback) | Test/Refine IR research hypotheses | |
| 6/27 Fri | Natural Language Processing for IR (phrase indexing, dependency analysis, sense disambiguation, sentiment retrieval) | Write and publish an IR paper | |
| 6/28 Sat | No class | | Mini-TREC Phase I Task due |
| 6/29 Sun | Topic Models for Text Mining (PLSA, LDA, extensions and applications) | | Proposal outline due |
| 6/30 Mon | Future of IR, course summary | Final Exam (1:30-4:30) | |
| 7/5 Sat | | | Research proposal due |
| 7/? | | | Mini-TREC data sets ready |
| 8/? | | | Mini-TREC Phase II Task due |

Other notes (dates not recoverable from the slide text): Proposal team due; Assigns #1, #2 due; Final exam practice questions available

Essential Background 1: Probability & Statistics

Prob/Statistics & Text Management
• Probability & statistics provide a principled way to quantify the uncertainties associated with natural language
• Allow us to answer questions like:
  – Given that we observe “baseball” three times and “game” once in a news article, how likely is it about “sports”? (text categorization, information retrieval)
  – Given that a user is interested in sports news, how likely would the user use “baseball” in a query? (information retrieval)

Basic Concepts in Probability
• Random experiment: an experiment with uncertain outcome (e.g., tossing a coin, picking a word from text)
• Sample space: all possible outcomes, e.g.,
  – Tossing 2 fair coins, S = {HH, HT, TH, TT}
• Event: E ⊆ S, E happens iff outcome is in E, e.g.,
  – E = {HH} (all heads)
  – E = {HH, TT} (same face)
  – Impossible event ({}), certain event (S)
• Probability of event: 1 ≥ P(E) ≥ 0, s.t.
  – P(S) = 1 (outcome always in S)
  – P(A ∪ B) = P(A) + P(B) if (A ∩ B) = ∅ (e.g., A = same face, B = different face)

Basic Concepts of Prob. (cont.)
• Conditional probability: P(B|A) = P(A ∩ B)/P(A)
  – P(A ∩ B) = P(A)P(B|A) = P(B)P(A|B)
  – So, P(A|B) = P(B|A)P(A)/P(B) (Bayes’ Rule)
  – For independent events, P(A ∩ B) = P(A)P(B), so P(A|B) = P(A)
• Total probability: If A1, …, An form a partition of S, then
  – P(B) = P(B ∩ S) = P(B ∩ A1) + … + P(B ∩ An) (why?)
  – So, P(Ai|B) = P(B|Ai)P(Ai)/P(B) = P(B|Ai)P(Ai)/[P(B|A1)P(A1) + … + P(B|An)P(An)]
  – This allows us to compute P(Ai|B) based on P(B|Ai)
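The total-probability and Bayes' rule steps can be sketched in a few lines of Python. This is a minimal illustration, not part of the lecture: the function name `posterior`, the three hypotheses, and all the numbers are made up.

```python
def posterior(priors, likelihoods):
    """Return P(Ai|B) for every hypothesis Ai.

    priors[a] is P(Ai) and likelihoods[a] is P(B|Ai); the Ai are assumed
    to form a partition, so the priors sum to 1.
    """
    # Total probability: P(B) = sum_i P(B|Ai) P(Ai)
    p_b = sum(likelihoods[a] * priors[a] for a in priors)
    # Bayes' rule: P(Ai|B) = P(B|Ai) P(Ai) / P(B)
    return {a: likelihoods[a] * priors[a] / p_b for a in priors}


# Hypothetical numbers: topic priors and P("baseball" appears | topic).
priors = {"sport": 0.2, "computer": 0.3, "other": 0.5}
likelihoods = {"sport": 0.5, "computer": 0.01, "other": 0.05}
post = posterior(priors, likelihoods)
best = max(post, key=post.get)  # most likely hypothesis given the evidence
```

Because P(B) is the same for every hypothesis, `best` would not change if the division by `p_b` were skipped.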

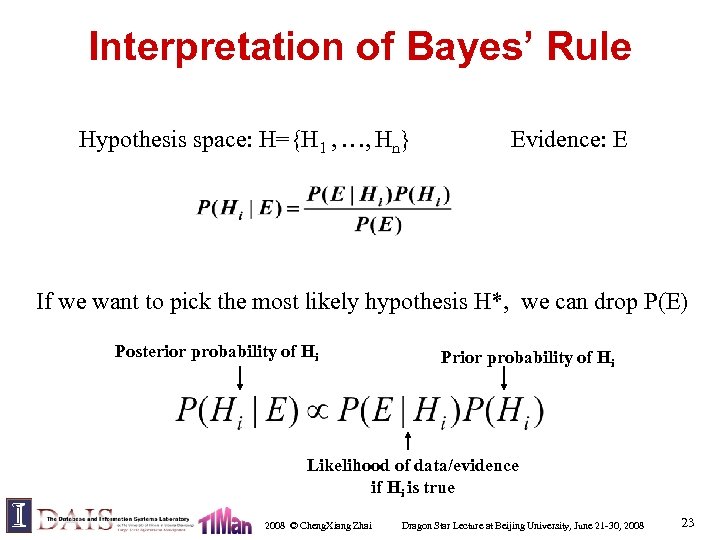

Interpretation of Bayes’ Rule
Hypothesis space: H = {H1, …, Hn}; Evidence: E
P(Hi|E) = P(E|Hi)P(Hi)/P(E)
– P(Hi|E): posterior probability of Hi
– P(Hi): prior probability of Hi
– P(E|Hi): likelihood of data/evidence if Hi is true
If we want to pick the most likely hypothesis H*, we can drop P(E): P(Hi|E) ∝ P(E|Hi)P(Hi)

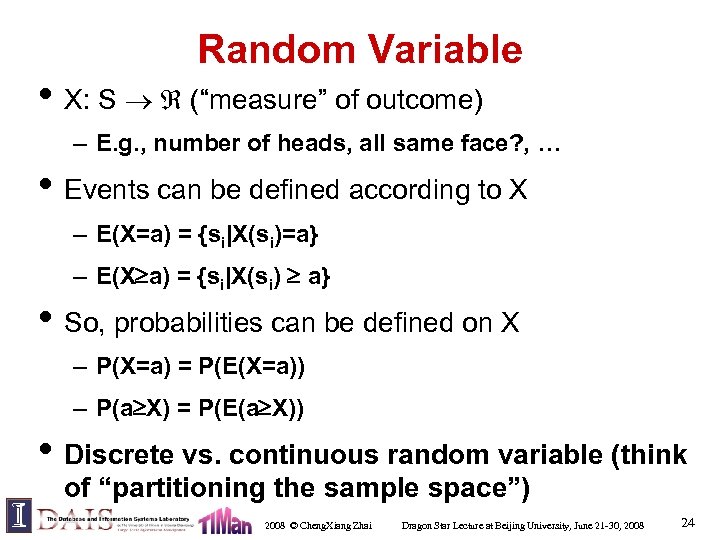

Random Variable
• X: S → ℝ (“measure” of outcome)
  – E.g., number of heads, all same face?, …
• Events can be defined according to X
  – E(X=a) = {si | X(si) = a}
  – E(X≥a) = {si | X(si) ≥ a}
• So, probabilities can be defined on X
  – P(X=a) = P(E(X=a))
  – P(a≤X) = P(E(a≤X))
• Discrete vs. continuous random variable (think of “partitioning the sample space”)

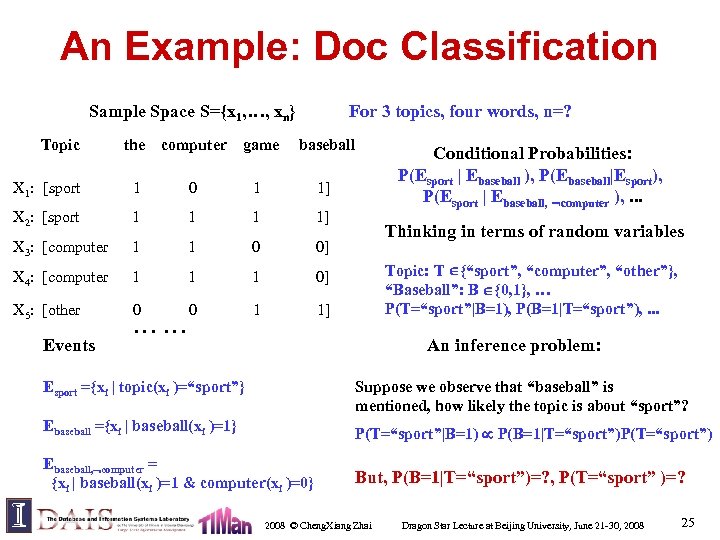

An Example: Doc Classification
Sample space S = {x1, …, xn}. For 3 topics, four words, n = ?
Columns: Topic, the, computer, game, baseball
X1: [sport 1 0 1 1]
X2: [sport 1 1]
X3: [computer 1 1 0 0]
X4: [computer 1 1 1 0]
X5: [other 0 0 1 1]
……
Events:
– Esport = {xi | topic(xi) = “sport”}
– Ebaseball = {xi | baseball(xi) = 1}
– Ebaseball,¬computer = {xi | baseball(xi) = 1 & computer(xi) = 0}
Conditional probabilities: P(Esport | Ebaseball), P(Ebaseball | Esport), P(Esport | Ebaseball,¬computer), ...
Thinking in terms of random variables:
– Topic: T ∈ {“sport”, “computer”, “other”}, “Baseball”: B ∈ {0, 1}, …
– P(T=“sport”|B=1), P(B=1|T=“sport”), ...
An inference problem: suppose we observe that “baseball” is mentioned; how likely is it that the topic is “sport”?
– P(T=“sport”|B=1) ∝ P(B=1|T=“sport”)P(T=“sport”)
– But, P(B=1|T=“sport”) = ?, P(T=“sport”) = ?

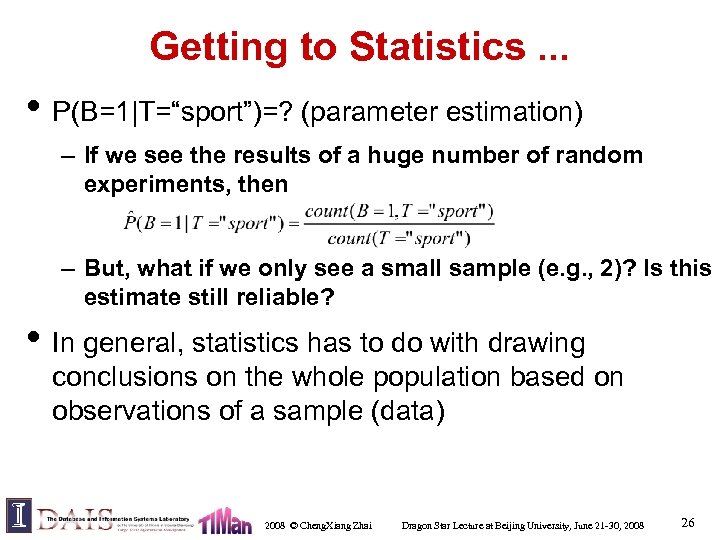

Getting to Statistics...
• P(B=1|T=“sport”) = ? (parameter estimation)
  – If we see the results of a huge number of random experiments, then we can estimate it by relative frequency: P(B=1|T=“sport”) ≈ count(B=1, T=“sport”)/count(T=“sport”)
  – But, what if we only see a small sample (e.g., 2)? Is this estimate still reliable?
• In general, statistics has to do with drawing conclusions on the whole population based on observations of a sample (data)
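A small Python sketch of estimating P(B=1|T=“sport”) by counting. The labelled documents below are toy data invented for illustration, and `estimate_conditional` is a hypothetical helper name.

```python
def estimate_conditional(docs, word, topic):
    """Relative-frequency estimate of P(word present | topic).

    docs is a list of (topic, set_of_words) pairs.
    """
    in_topic = [words for t, words in docs if t == topic]
    if not in_topic:
        return None  # no observations for this topic: estimate undefined
    return sum(word in words for words in in_topic) / len(in_topic)


# Toy labelled sample (hypothetical).
docs = [
    ("sport", {"the", "game", "baseball"}),
    ("sport", {"the", "game"}),
    ("computer", {"the", "computer"}),
    ("computer", {"the", "computer", "game"}),
    ("other", {"game", "baseball"}),
]
p_baseball_given_sport = estimate_conditional(docs, "baseball", "sport")
p_computer_given_sport = estimate_conditional(docs, "computer", "sport")
```

With only two “sport” documents the first estimate can only be 0, 0.5, or 1, and the second comes out as exactly 0. That is the small-sample problem the slide raises.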

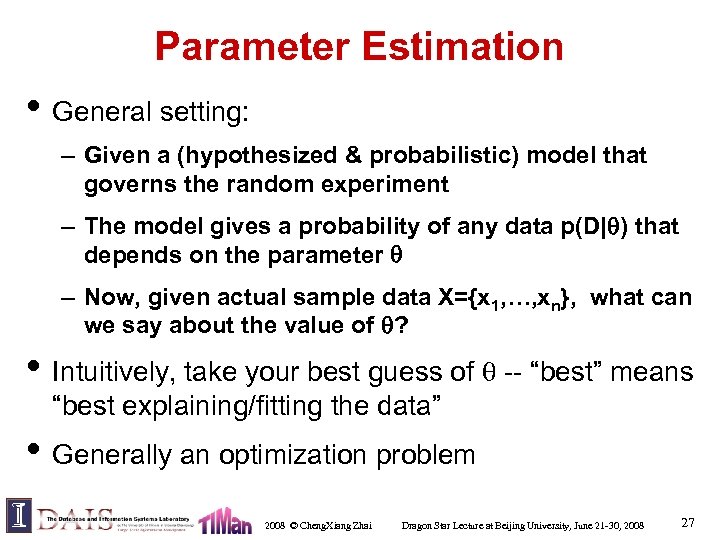

Parameter Estimation
• General setting:
  – Given a (hypothesized & probabilistic) model that governs the random experiment
  – The model gives a probability of any data p(D|θ) that depends on the parameter θ
  – Now, given actual sample data X = {x1, …, xn}, what can we say about the value of θ?
• Intuitively, take your best guess of θ; “best” means “best explaining/fitting the data”
• Generally an optimization problem

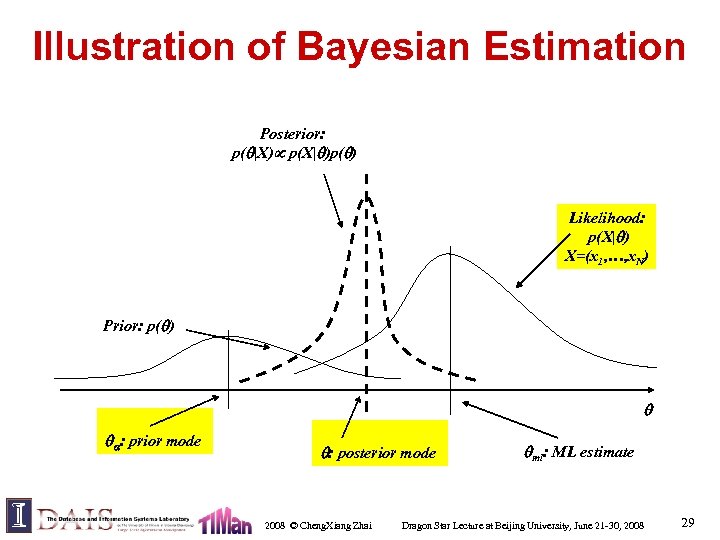

Maximum Likelihood vs. Bayesian
• Maximum likelihood estimation
  – “Best” means “data likelihood reaches maximum”
  – Problem: small sample
• Bayesian estimation
  – “Best” means being consistent with our “prior” knowledge and explaining data well
  – Problem: how to define prior?

Illustration of Bayesian Estimation
Posterior: p(θ|X) ∝ p(X|θ)p(θ)
Likelihood: p(X|θ), X = (x1, …, xN)
Prior: p(θ)
[Plot: prior, likelihood, and posterior as curves over θ, marking the prior mode, the posterior mode, and the ML estimate θml]
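The relation between the prior mode, the posterior mode, and the ML estimate can be sketched for one concrete case. The choice of model is an assumption made here, not taken from the slide: a coin with unknown P(Head) = θ and a Beta(a, b) prior, for which the posterior after observing `heads` out of `n` tosses is Beta(a + heads, b + n − heads).

```python
def beta_mode(a, b):
    """Mode of a Beta(a, b) density; valid for a > 1 and b > 1."""
    return (a - 1) / (a + b - 2)


def estimates(heads, n, a, b):
    """Return (prior mode, posterior mode, ML estimate) for a coin.

    Assumes a Beta(a, b) prior on theta = P(Head); hypothetical setup.
    """
    ml = heads / n                                      # maximizes p(X|theta)
    prior_mode = beta_mode(a, b)                        # maximizes p(theta)
    posterior_mode = beta_mode(a + heads, b + n - heads)  # maximizes p(theta|X)
    return prior_mode, posterior_mode, ml


# Two tosses, both heads, with a prior centred on a fair coin.
prior_mode, posterior_mode, ml = estimates(heads=2, n=2, a=5, b=5)
```

In this example the posterior mode falls between the prior mode and the ML estimate: the small sample pulls the estimate away from 0.5, but not all the way to 1.0.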

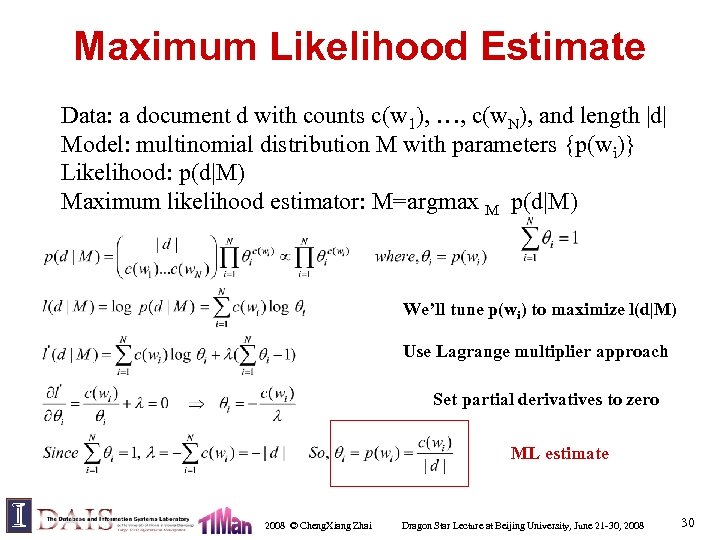

Maximum Likelihood Estimate
Data: a document d with counts c(w1), …, c(wN), and length |d|
Model: multinomial distribution M with parameters {p(wi)}
Likelihood: p(d|M)
Maximum likelihood estimator: M = argmaxM p(d|M)
We’ll tune p(wi) to maximize l(d|M)
Use Lagrange multiplier approach
Set partial derivatives to zero
ML estimate: p(wi) = c(wi)/|d|
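The Lagrange-multiplier steps named on this slide can be written out as follows. This is a reconstruction under the slide's definitions (counts c(w_i), length |d|, multinomial parameters p(w_i)); the multiplier symbol λ and the name l′ for the Lagrangian are introduced here, and "const" stands for the log of the multinomial coefficient, which does not depend on the parameters.

```latex
\begin{align*}
l(d \mid M) &= \log p(d \mid M) = \sum_{i=1}^{N} c(w_i)\,\log p(w_i) + \text{const} \\
l'(d \mid M) &= \sum_{i=1}^{N} c(w_i)\,\log p(w_i)
               + \lambda\Big(\sum_{i=1}^{N} p(w_i) - 1\Big) \\
\frac{\partial l'}{\partial p(w_i)} &= \frac{c(w_i)}{p(w_i)} + \lambda = 0
  \;\Rightarrow\; p(w_i) = -\frac{c(w_i)}{\lambda} \\
\sum_{i=1}^{N} p(w_i) = 1 &\;\Rightarrow\; \lambda = -\sum_{i=1}^{N} c(w_i) = -|d| \\
\hat{p}(w_i) &= \frac{c(w_i)}{|d|}
\end{align*}
```

The estimate is simply the relative frequency of each word in the document, so any word that does not occur in d gets probability zero.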

What You Should Know
• Probability concepts:
  – sample space, event, random variable, conditional prob., multinomial distribution, etc.
• Bayes formula and its interpretation
• Statistics: Know how to compute maximum likelihood estimate

Essential Background 2: Basic Concepts in Information Theory

Information Theory
• Developed by Shannon in the 1940s
• Maximizing the amount of information that can be transmitted over an imperfect communication channel
• Data compression (entropy)
• Transmission rate (channel capacity)

Basic Concepts in Information Theory
• Entropy: measuring uncertainty of a random variable
• Kullback-Leibler divergence: comparing two distributions
• Mutual Information: measuring the correlation of two random variables

Entropy: Motivation
• Feature selection:
  – If we use only a few words to classify docs, what kind of words should we use?
  – P(Topic | “computer”=1) vs. P(Topic | “the”=1): which is more random?
• Text compression:
  – Some documents (less random) can be compressed more than others (more random)
  – Can we quantify the “compressibility”?
• In general, given a random variable X following distribution p(X),
  – How do we measure the “randomness” of X?
  – How do we design optimal coding for X?

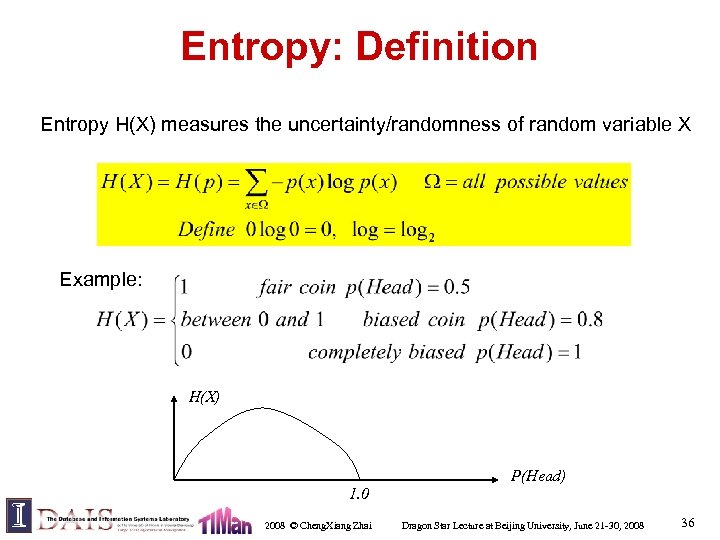

Entropy: Definition
Entropy H(X) measures the uncertainty/randomness of random variable X
Example: [Plot: H(X) as a function of P(Head) for a coin toss, with P(Head) up to 1.0]

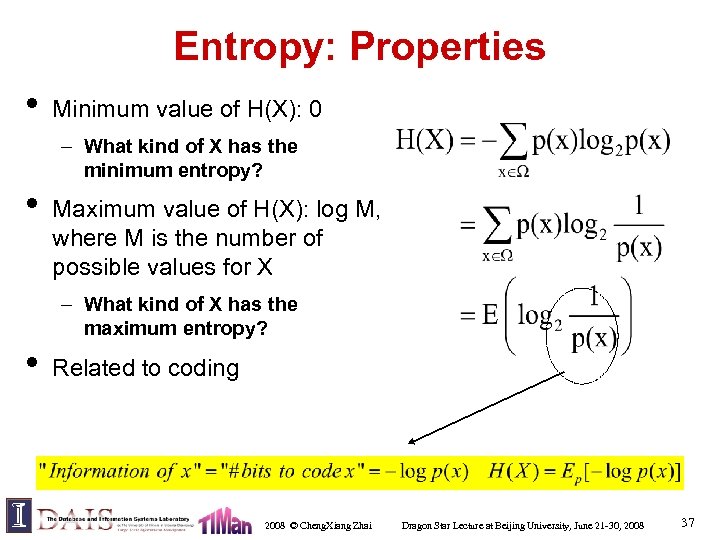

Entropy: Properties
• Minimum value of H(X): 0
  – What kind of X has the minimum entropy?
• Maximum value of H(X): log M, where M is the number of possible values for X
  – What kind of X has the maximum entropy?
• Related to coding

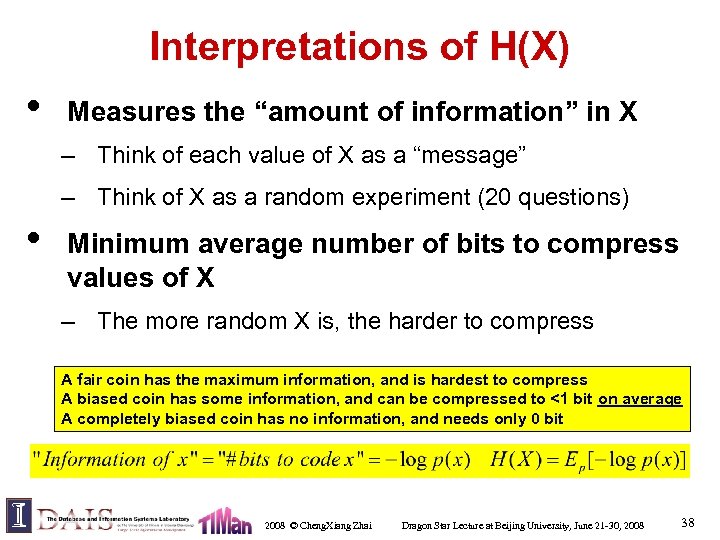

Interpretations of H(X)
• Measures the “amount of information” in X
  – Think of each value of X as a “message”
  – Think of X as a random experiment (20 questions)
• Minimum average number of bits to compress values of X
  – The more random X is, the harder to compress
A fair coin has the maximum information, and is hardest to compress
A biased coin has some information, and can be compressed to <1 bit on average
A completely biased coin has no information, and needs only 0 bit
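The three coin cases can be checked numerically. The sketch below assumes the standard Shannon definition H(X) = −Σx p(x) log2 p(x) with the convention 0 log 0 = 0; the specific bias 0.9 is an arbitrary choice for illustration.

```python
import math


def entropy(probs):
    """Shannon entropy in bits: H(X) = -sum_x p(x) log2 p(x), with 0 log 0 = 0."""
    return sum(-p * math.log2(p) for p in probs if p > 0)


fair = entropy([0.5, 0.5])      # maximum for two outcomes: log2(2) = 1 bit
biased = entropy([0.9, 0.1])    # strictly between 0 and 1 bit
certain = entropy([1.0, 0.0])   # completely biased coin: 0 bits
uniform4 = entropy([0.25] * 4)  # uniform over M = 4 values: log2(4) = 2 bits
```

The uniform distribution reaches the maximum log M, and a distribution that puts all its mass on one value reaches the minimum 0.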

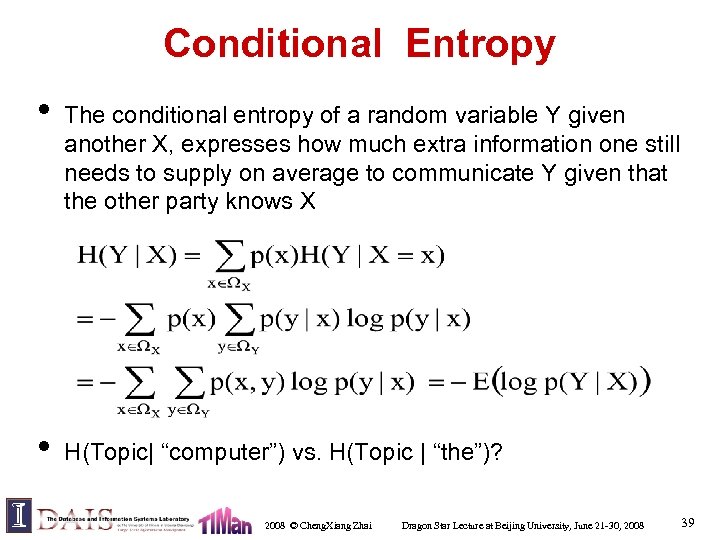

Conditional Entropy
• The conditional entropy of a random variable Y given another X expresses how much extra information one still needs to supply on average to communicate Y given that the other party knows X
• H(Topic | “computer”) vs. H(Topic | “the”)?
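A minimal sketch of the H(Topic | “computer”) vs. H(Topic | “the”) comparison, assuming the standard definition H(Y|X) = −Σx,y p(x, y) log2 p(y|x). Both joint distributions below are invented: two equally likely topics, a word “computer” that is strongly tied to one topic, and a word “the” that appears in every document.

```python
import math
from collections import defaultdict


def conditional_entropy(joint):
    """H(Y|X) in bits from a joint distribution {(x, y): p(x, y)}."""
    p_x = defaultdict(float)
    for (x, _), p in joint.items():
        p_x[x] += p  # marginal p(x)
    # H(Y|X) = -sum_{x,y} p(x, y) log2 p(y|x), with p(y|x) = p(x, y) / p(x)
    return sum(-p * math.log2(p / p_x[x]) for (x, _), p in joint.items() if p > 0)


# x = word present (1) or absent (0); y = topic. Hypothetical numbers.
joint_computer = {
    (1, "computer"): 0.45, (0, "computer"): 0.05,
    (1, "sport"): 0.05, (0, "sport"): 0.45,
}
joint_the = {(1, "computer"): 0.5, (1, "sport"): 0.5}

h_topic_given_computer = conditional_entropy(joint_computer)
h_topic_given_the = conditional_entropy(joint_the)
```

Knowing whether “the” occurs leaves the full 1 bit of topic uncertainty, while knowing whether “computer” occurs leaves less than half a bit.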

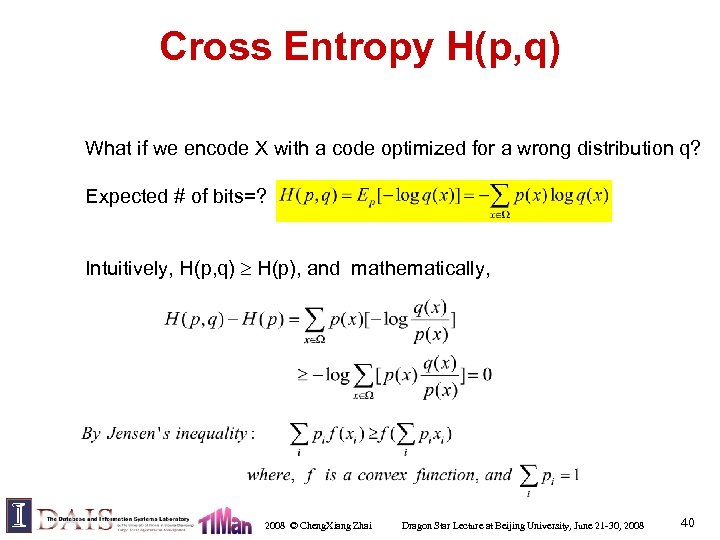

Cross Entropy H(p, q)
What if we encode X with a code optimized for a wrong distribution q? Expected # of bits = ?
H(p, q) = −Σx p(x) log q(x)
Intuitively, H(p, q) ≥ H(p), and mathematically, H(p, q) − H(p) = Σx p(x) log [p(x)/q(x)] ≥ 0

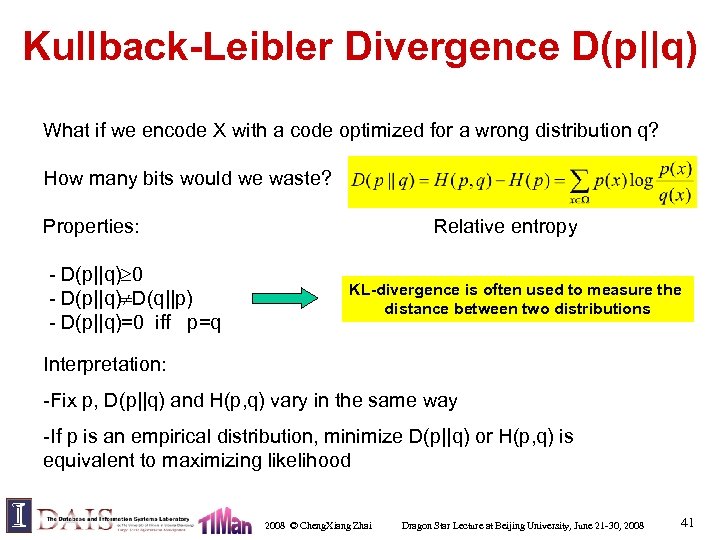

Kullback-Leibler Divergence D(p||q)
What if we encode X with a code optimized for a wrong distribution q? How many bits would we waste?
D(p||q) = H(p, q) − H(p) = Σx p(x) log [p(x)/q(x)] (relative entropy)
Properties:
- D(p||q) ≥ 0
- D(p||q) ≠ D(q||p)
- D(p||q) = 0 iff p = q
KL-divergence is often used to measure the distance between two distributions
Interpretation:
- Fix p, D(p||q) and H(p, q) vary in the same way
- If p is an empirical distribution, minimizing D(p||q) or H(p, q) is equivalent to maximizing likelihood
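The listed properties can be checked on a small example. This sketch uses the standard definitions of cross entropy and KL divergence in bits and assumes q(x) > 0 wherever p(x) > 0; the two distributions are arbitrary illustrative values.

```python
import math


def cross_entropy(p, q):
    """H(p, q) = -sum_x p(x) log2 q(x): expected bits when data from p is
    encoded with a code optimized for q."""
    return sum(-px * math.log2(qx) for px, qx in zip(p, q) if px > 0)


def kl_divergence(p, q):
    """D(p||q) = sum_x p(x) log2(p(x)/q(x)): the bits wasted by using q."""
    return sum(px * math.log2(px / qx) for px, qx in zip(p, q) if px > 0)


p = [0.5, 0.5]  # true distribution (hypothetical)
q = [0.9, 0.1]  # wrong distribution the code was built for (hypothetical)

entropy_p = cross_entropy(p, p)  # H(p, p) is just H(p)
wasted = kl_divergence(p, q)     # D(p||q)
reverse = kl_divergence(q, p)    # D(q||p), generally a different number
```

Here `cross_entropy(p, q)` equals `entropy_p + wasted`, which is the sense in which D(p||q) and H(p, q) vary together when p is fixed.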

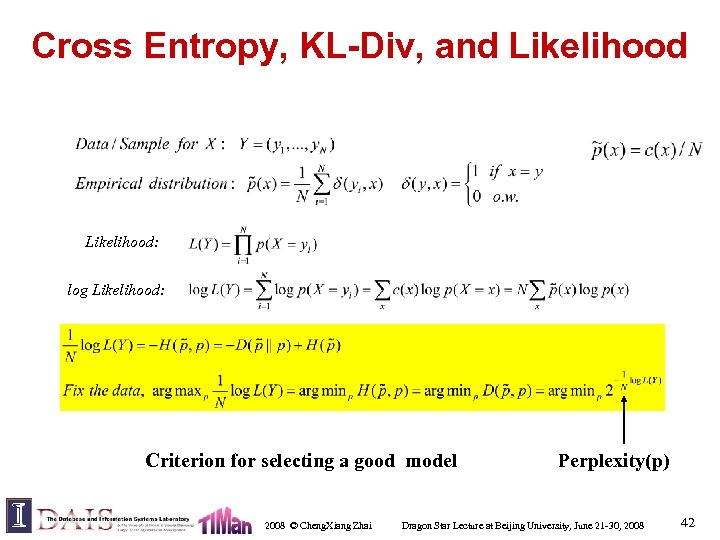

Cross Entropy, KL-Div, and Likelihood
Likelihood and log-likelihood: [formulas in the slide image]
Criterion for selecting a good model: Perplexity(p)
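The link between likelihood, cross entropy, and perplexity can be illustrated numerically. The sketch assumes a unigram model q, the empirical word distribution of the text as p, and the common definition perplexity = 2^H(p, q); the token sequence and model probabilities are made up.

```python
import math
from collections import Counter


def log2_likelihood(tokens, q):
    """log2 of the probability that unigram model q assigns to the tokens."""
    return sum(math.log2(q[w]) for w in tokens)


def empirical_cross_entropy(tokens, q):
    """H(p, q) in bits, where p is the empirical distribution of the tokens."""
    n = len(tokens)
    return sum(-(c / n) * math.log2(q[w]) for w, c in Counter(tokens).items())


def perplexity(tokens, q):
    """Perplexity of q on the tokens, taken here as 2 ** H(p, q)."""
    return 2 ** empirical_cross_entropy(tokens, q)


tokens = "the game the baseball".split()           # hypothetical text
q = {"the": 0.5, "game": 0.25, "baseball": 0.25}  # hypothetical model

loglik = log2_likelihood(tokens, q)
h_pq = empirical_cross_entropy(tokens, q)
ppl = perplexity(tokens, q)
```

The log-likelihood equals −|d| · H(p, q), so a model with higher likelihood on the text has lower cross entropy and lower perplexity.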

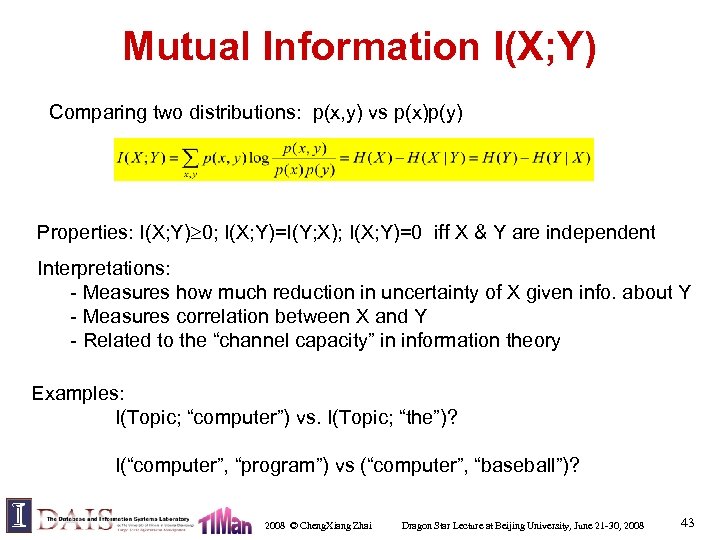

Mutual Information I(X; Y)
Comparing two distributions: p(x, y) vs. p(x)p(y)
I(X; Y) = Σx,y p(x, y) log [p(x, y)/(p(x)p(y))]
Properties: I(X; Y) ≥ 0; I(X; Y) = I(Y; X); I(X; Y) = 0 iff X & Y are independent
Interpretations:
- Measures how much reduction in uncertainty of X given info. about Y
- Measures correlation between X and Y
- Related to the “channel capacity” in information theory
Examples: I(Topic; “computer”) vs. I(Topic; “the”)? I(“computer”; “program”) vs. I(“computer”; “baseball”)?
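A short sketch of mutual information as a comparison of p(x, y) with p(x)p(y), using the standard definition in bits. The two joint distributions are toy examples chosen to hit the extreme cases.

```python
import math
from collections import defaultdict


def mutual_information(joint):
    """I(X;Y) in bits from a joint distribution {(x, y): p(x, y)}."""
    p_x, p_y = defaultdict(float), defaultdict(float)
    for (x, y), p in joint.items():
        p_x[x] += p  # marginal p(x)
        p_y[y] += p  # marginal p(y)
    return sum(
        p * math.log2(p / (p_x[x] * p_y[y]))
        for (x, y), p in joint.items()
        if p > 0
    )


# X and Y independent: p(x, y) = p(x) p(y) everywhere.
independent = {(0, 0): 0.25, (0, 1): 0.25, (1, 0): 0.25, (1, 1): 0.25}
# Y is a copy of X: knowing one removes all uncertainty about the other.
identical = {(0, 0): 0.5, (1, 1): 0.5}

mi_independent = mutual_information(independent)
mi_identical = mutual_information(identical)
```

The independent case gives 0 bits and the fully dependent case gives 1 bit, the entropy of a fair binary variable.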

What You Should Know
• Information theory concepts: entropy, cross entropy, relative entropy, conditional entropy, KL-div., mutual information
  – Know their definitions, how to compute them
  – Know how to interpret them
  – Know their relationships

Essential Background 3: Natural Language Processing

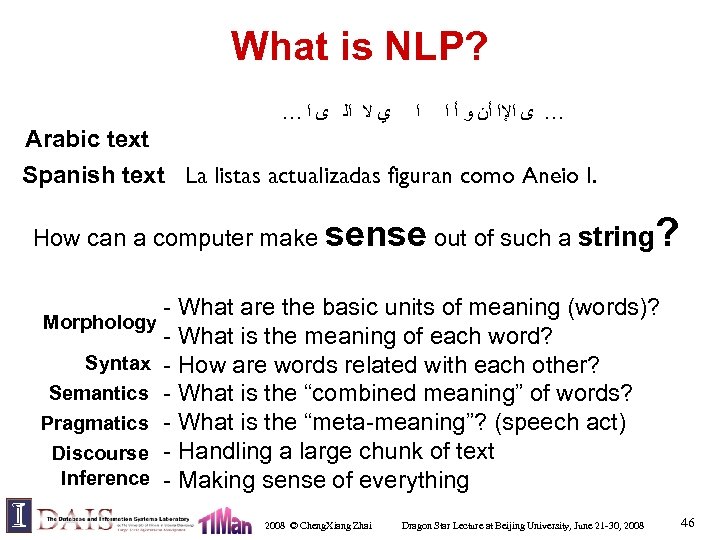

What is NLP?
Arabic text: … ﻱ ﻻ ﺍﻟ ﻯ ﺍ ﺍ … ﻯ ﺍﻹﺍ ﺃﻦ ﻭ ﺃ ﺍ
Spanish text: La listas actualizadas figuran como Aneio I.
How can a computer make sense out of such a string?
- Morphology: What are the basic units of meaning (words)? What is the meaning of each word?
- Syntax: How are words related with each other?
- Semantics: What is the “combined meaning” of words?
- Pragmatics: What is the “meta-meaning”? (speech act)
- Discourse: Handling a large chunk of text
- Inference: Making sense of everything

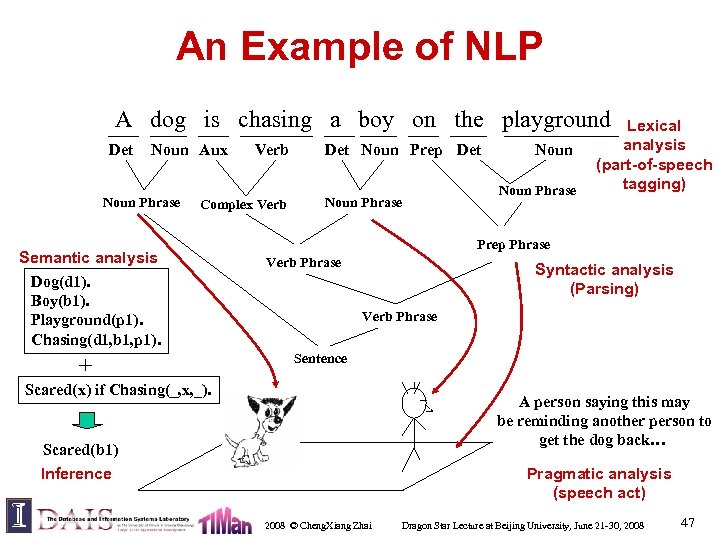

An Example of NLP
A dog is chasing a boy on the playground
Lexical analysis (part-of-speech tagging): Det Noun Aux Verb Det Noun Prep Det Noun
Syntactic analysis (parsing): Noun Phrase, Complex Verb, Noun Phrase, Prep Phrase; Verb Phrase; Verb Phrase; Sentence
Semantic analysis: Dog(d1). Boy(b1). Playground(p1). Chasing(d1, b1, p1).
Inference: + Scared(x) if Chasing(_, x, _). ⇒ Scared(b1)
Pragmatic analysis (speech act): A person saying this may be reminding another person to get the dog back…

If we can do this for all the sentences, then …
BAD NEWS: Unfortunately, we can’t.
General NLP = “AI-Complete”

NLP is Difficult!
• Natural language is designed to make human communication efficient. As a result,
  – we omit a lot of “common sense” knowledge, which we assume the hearer/reader possesses
  – we keep a lot of ambiguities, which we assume the hearer/reader knows how to resolve
• This makes EVERY step in NLP hard
  – Ambiguity is a “killer”
  – Common sense reasoning is pre-required

Examples of Challenges
• Word-level ambiguity: E.g.,
  – “design” can be a noun or a verb (Ambiguous POS)
  – “root” has multiple meanings (Ambiguous sense)
• Syntactic ambiguity: E.g.,
  – “natural language processing” (Modification)
  – “A man saw a boy with a telescope.” (PP Attachment)
• Anaphora resolution: “John persuaded Bill to buy a TV for himself.” (himself = John or Bill?)
• Presupposition: “He has quit smoking.” implies that he smoked before.

Despite all the challenges, research in NLP has also made a lot of progress…

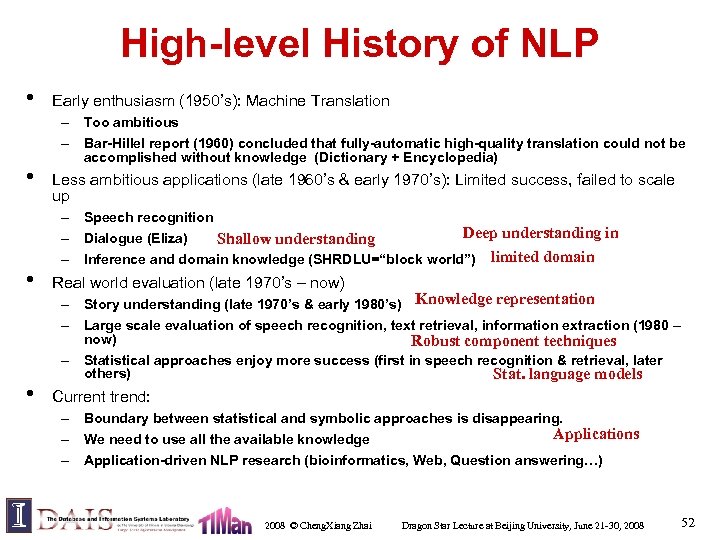

High-level History of NLP
• Early enthusiasm (1950’s): Machine Translation
  – Too ambitious
  – Bar-Hillel report (1960) concluded that fully-automatic high-quality translation could not be accomplished without knowledge (Dictionary + Encyclopedia)
• Less ambitious applications (late 1960’s & early 1970’s): Limited success, failed to scale up
  – Speech recognition
  – Dialogue (Eliza) [Shallow understanding]
  – Inference and domain knowledge (SHRDLU = “block world”) [Deep understanding in limited domain]
• Real world evaluation (late 1970’s – now)
  – Story understanding (late 1970’s & early 1980’s) [Knowledge representation]
  – Large scale evaluation of speech recognition, text retrieval, information extraction (1980 – now) [Robust component techniques]
  – Statistical approaches enjoy more success (first in speech recognition & retrieval, later others) [Stat. language models]
• Current trend:
  – Boundary between statistical and symbolic approaches is disappearing.
  – We need to use all the available knowledge
  – Application-driven NLP research (bioinformatics, Web, Question answering…) [Applications]

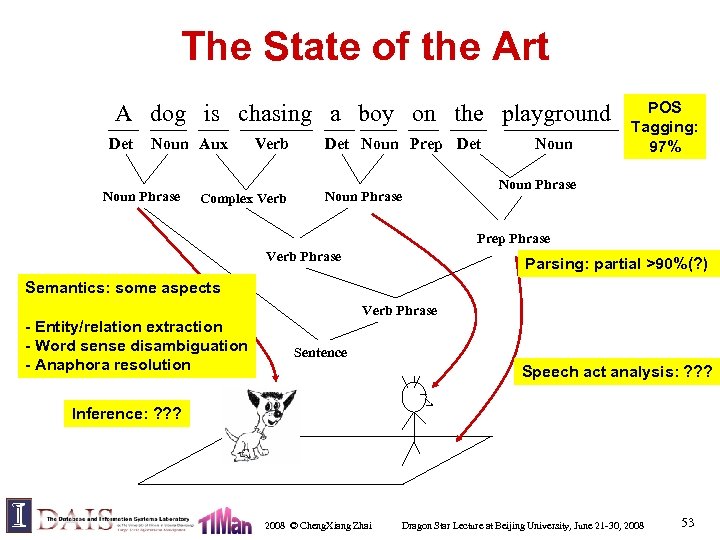

The State of the Art
A dog is chasing a boy on the playground
POS Tagging: 97% (Det Noun Aux Verb Det Noun Prep Det Noun)
Parsing: partial >90%(?) (Noun Phrase, Complex Verb, Noun Phrase, Prep Phrase, Verb Phrase, Verb Phrase, Sentence)
Semantics: some aspects
- Entity/relation extraction
- Word sense disambiguation
- Anaphora resolution
Speech act analysis: ???
Inference: ???

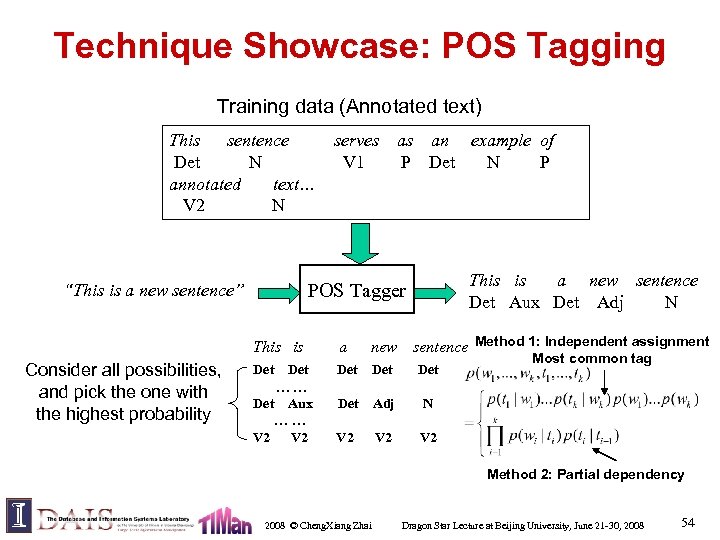

Technique Showcase: POS Tagging
Training data (annotated text): This/Det sentence/N serves/V1 as/P an/Det example/N of/P annotated/V2 text/N …
Input: “This is a new sentence” → POS Tagger → This/Det is/Aux a/Det new/Adj sentence/N
Consider all possibilities, and pick the one with the highest probability, e.g., Det Det Det Det Det; …; Det Aux Det Adj N; …; V2 V2 V2 V2 V2
Method 1: Independent assignment (most common tag)
Method 2: Partial dependency
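Method 1 (independent assignment of the most common tag) can be sketched as below. This is an illustrative baseline, not the lecture's implementation: the function names, the tiny training set, and the fallback tag for unseen words are all assumptions.

```python
from collections import Counter, defaultdict


def train_most_common_tag(tagged_sentences):
    """Map each word to the tag it carries most often in the training data."""
    counts = defaultdict(Counter)
    for sentence in tagged_sentences:
        for word, tag in sentence:
            counts[word.lower()][tag] += 1
    return {word: tags.most_common(1)[0][0] for word, tags in counts.items()}


def tag(words, model, default="N"):
    """Tag each word independently; unseen words get the default tag
    (the default is an assumption of this sketch)."""
    return [model.get(w.lower(), default) for w in words]


# Hypothetical annotated text, using the tag names from the slide.
training = [
    [("This", "Det"), ("sentence", "N"), ("serves", "V1"), ("as", "P"),
     ("an", "Det"), ("example", "N")],
    [("It", "N"), ("is", "Aux"), ("a", "Det"), ("new", "Adj"), ("example", "N")],
]
model = train_most_common_tag(training)
tags = tag("This is a new sentence".split(), model)
```

Each word is tagged without looking at its neighbours, which is what makes the method simple and also what Method 2 is meant to improve on.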

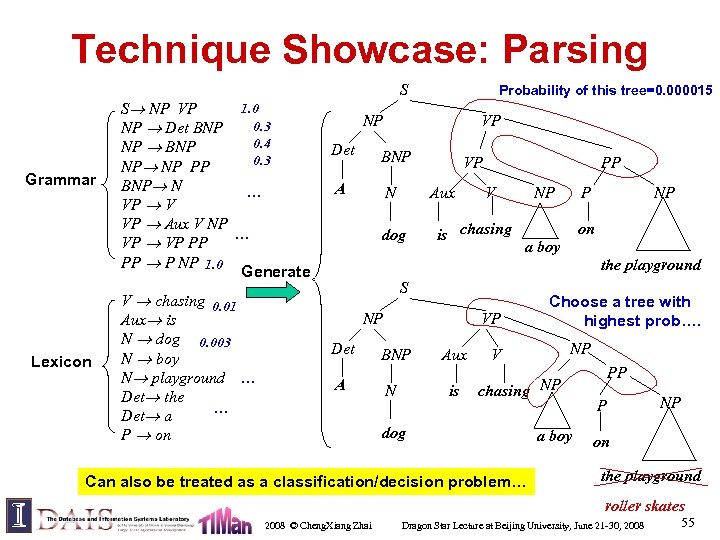

Technique Showcase: Parsing
Grammar:
  S → NP VP (1.0)
  NP → Det BNP (0.3)
  NP → BNP (0.4)
  NP → NP PP (0.3)
  BNP → N
  …
  VP → V
  VP → Aux V NP
  …
  VP → VP PP
  PP → P NP (1.0)
Lexicon:
  V → chasing (0.01)
  Aux → is
  N → dog (0.003)
  N → boy
  N → playground
  …
  Det → the
  …
  Det → a
  P → on
Generate candidate trees for “A dog is chasing a boy on the playground”:
  Tree 1 (probability of this tree = 0.000015): [S [NP [Det A] [BNP [N dog]]] [VP [VP [Aux is] [V chasing] [NP a boy]] [PP [P on] [NP the playground]]]]
  Tree 2: [S [NP [Det A] [BNP [N dog]]] [VP [Aux is] [V chasing] [NP [NP a boy] [PP [P on] [NP the playground]]]]]
Choose a tree with highest prob….
Can also be treated as a classification/decision problem…
[Stray diagram label: roller skates]
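Under a probabilistic grammar, the probability of a tree is the product of the probabilities of the rules it uses. The sketch below shows that computation on a toy grammar; the rule probabilities and the sentence are invented for illustration and are not the values from the slide.

```python
from math import prod  # Python 3.8+


def tree_probability(tree, rule_probs):
    """Probability of a parse tree under a PCFG.

    A tree is (label, children), where children is either a word (str)
    for a lexical rule or a list of subtrees.
    """
    label, children = tree
    if isinstance(children, str):  # lexical rule, e.g. N -> dog
        return rule_probs[(label, (children,))]
    rhs = tuple(child[0] for child in children)
    return rule_probs[(label, rhs)] * prod(
        tree_probability(child, rule_probs) for child in children
    )


# Toy grammar and lexicon (hypothetical probabilities).
rule_probs = {
    ("S", ("NP", "VP")): 1.0,
    ("NP", ("Det", "N")): 0.5,
    ("VP", ("V",)): 0.25,
    ("Det", ("a",)): 0.5,
    ("N", ("dog",)): 0.5,
    ("V", ("barks",)): 0.5,
}
tree = ("S", [
    ("NP", [("Det", "a"), ("N", "dog")]),
    ("VP", [("V", "barks")]),
])
p_tree = tree_probability(tree, rule_probs)
```

A parser would compute this score for every candidate tree of a sentence and keep the one with the highest probability.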

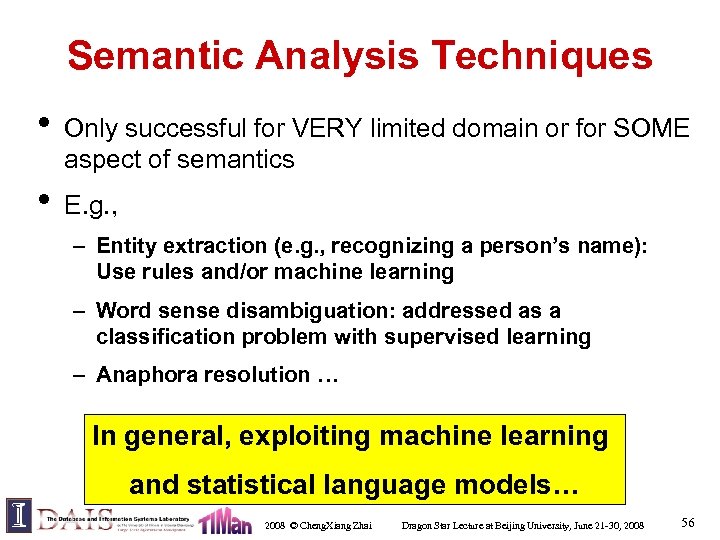

Semantic Analysis Techniques
• Only successful for VERY limited domain or for SOME aspect of semantics
• E.g.,
  – Entity extraction (e.g., recognizing a person’s name): Use rules and/or machine learning
  – Word sense disambiguation: addressed as a classification problem with supervised learning
  – Anaphora resolution
  – …
In general, exploiting machine learning and statistical language models…

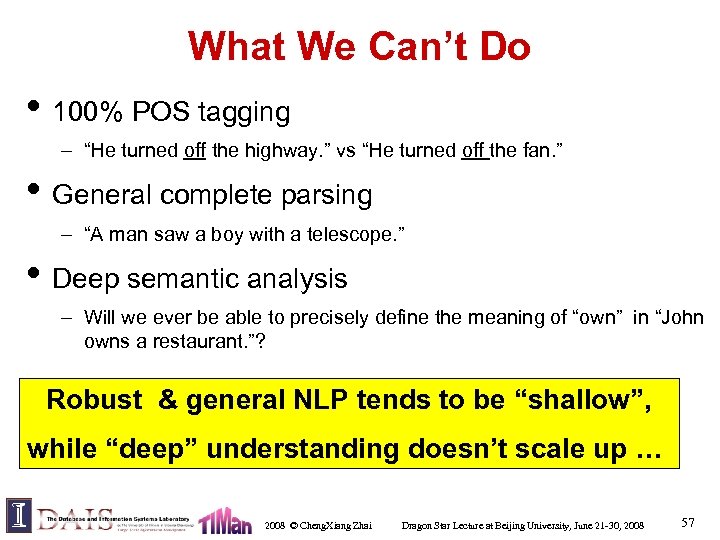

What We Can’t Do
• 100% POS tagging
  – “He turned off the highway.” vs. “He turned off the fan.”
• General complete parsing
  – “A man saw a boy with a telescope.”
• Deep semantic analysis
  – Will we ever be able to precisely define the meaning of “own” in “John owns a restaurant.”?
Robust & general NLP tends to be “shallow”, while “deep” understanding doesn’t scale up …

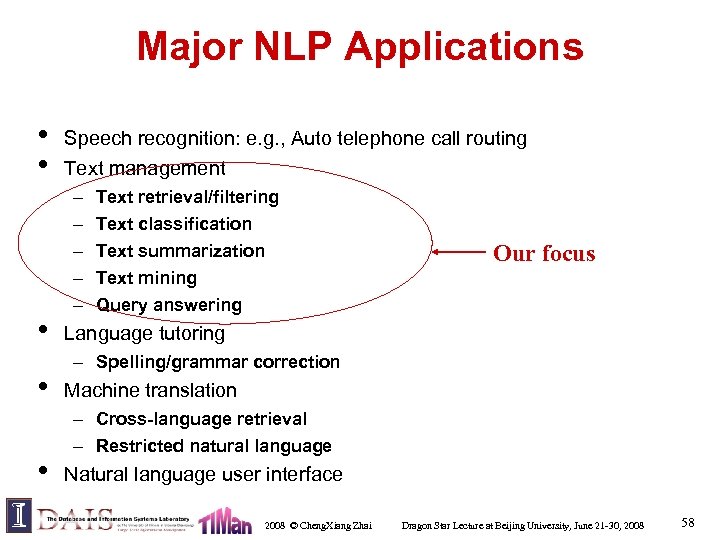

Major NLP Applications
• Speech recognition: e.g., auto telephone call routing
• Text management (our focus)
  – Text retrieval/filtering
  – Text classification
  – Text summarization
  – Text mining
  – Query answering
• Language tutoring
  – Spelling/grammar correction
• Machine translation
  – Cross-language retrieval
  – Restricted natural language
• Natural language user interface

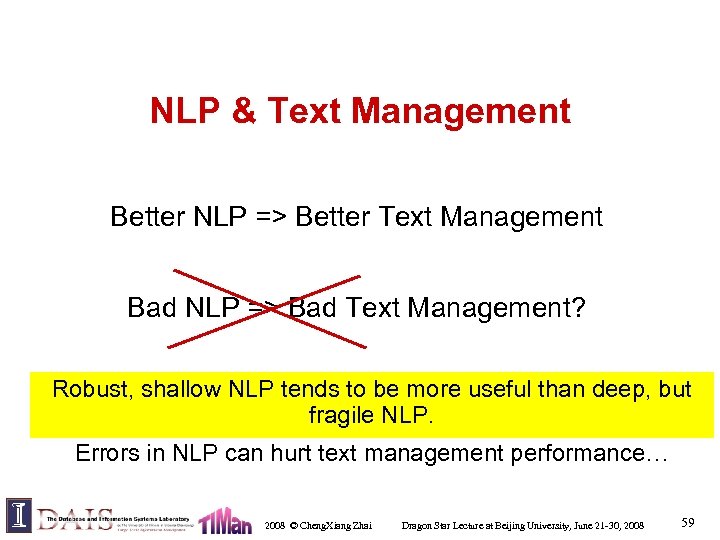

NLP & Text Management
Better NLP => Better Text Management
Bad NLP => Bad Text Management?
Robust, shallow NLP tends to be more useful than deep, but fragile NLP.
Errors in NLP can hurt text management performance…

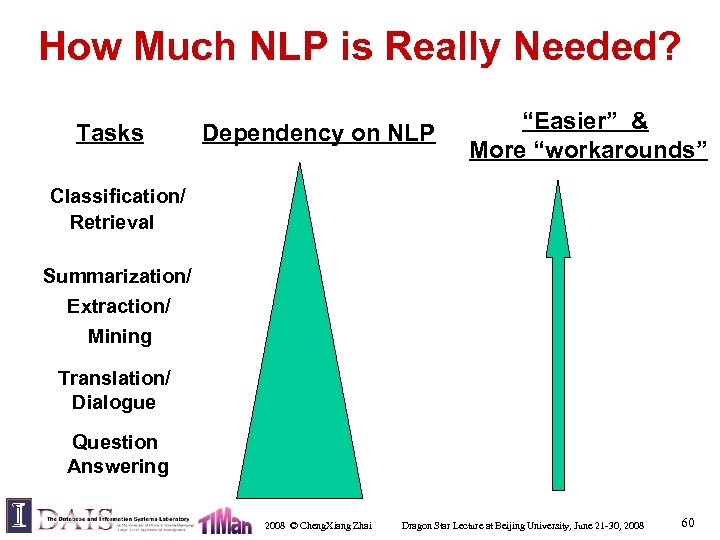

How Much NLP is Really Needed?
Tasks, ordered by increasing dependency on NLP (tasks earlier in the list are “easier” and have more “workarounds”):
- Classification/Retrieval
- Summarization/Extraction/Mining
- Translation/Dialogue
- Question Answering

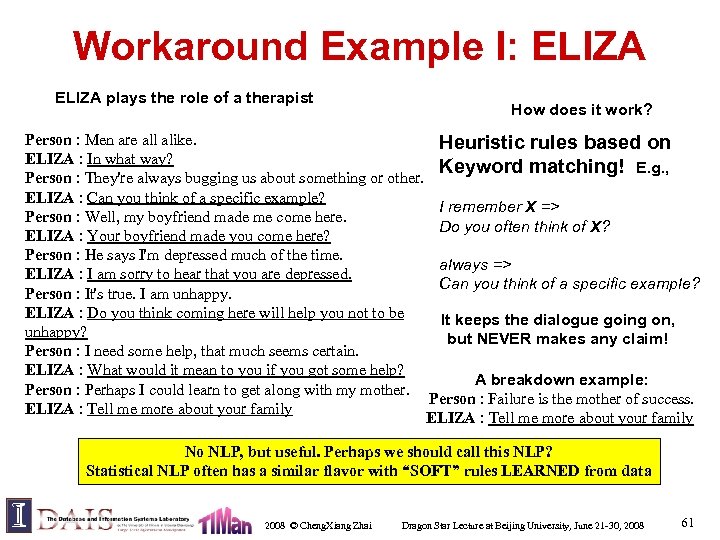

Workaround Example I: ELIZA
ELIZA plays the role of a therapist
Person: Men are all alike.
ELIZA: In what way?
Person: They're always bugging us about something or other.
ELIZA: Can you think of a specific example?
Person: Well, my boyfriend made me come here.
ELIZA: Your boyfriend made you come here?
Person: He says I'm depressed much of the time.
ELIZA: I am sorry to hear that you are depressed.
Person: It's true. I am unhappy.
ELIZA: Do you think coming here will help you not to be unhappy?
Person: I need some help, that much seems certain.
ELIZA: What would it mean to you if you got some help?
Person: Perhaps I could learn to get along with my mother.
ELIZA: Tell me more about your family
How does it work? Heuristic rules based on keyword matching! E.g.,
- I remember X => Do you often think of X?
- always => Can you think of a specific example?
It keeps the dialogue going on, but NEVER makes any claim!
A breakdown example:
Person: Failure is the mother of success.
ELIZA: Tell me more about your family
No NLP, but useful. Perhaps we should call this NLP?
Statistical NLP often has a similar flavor with “SOFT” rules LEARNED from data
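The keyword-matching idea can be sketched with a few regular-expression rules. This is a toy illustration, not the original ELIZA program: the first two rules follow the examples on the slide, the “mother” rule is inferred from the dialogue, and the fallback reply is an assumption.

```python
import re

# (pattern, reply template) pairs, tried in order.
RULES = [
    (re.compile(r"\bI remember (.+)", re.IGNORECASE), "Do you often think of {0}?"),
    (re.compile(r"\balways\b", re.IGNORECASE), "Can you think of a specific example?"),
    (re.compile(r"\bmother\b", re.IGNORECASE), "Tell me more about your family"),
]


def respond(utterance, fallback="Please go on."):
    """Return the reply of the first rule whose keyword pattern matches."""
    for pattern, template in RULES:
        match = pattern.search(utterance)
        if match:
            # Strip trailing punctuation from captured text before reuse.
            groups = [g.rstrip(".!?") for g in match.groups()]
            return template.format(*groups)
    return fallback  # hypothetical default when no keyword matches


reply_always = respond("They're always bugging us about something or other.")
reply_breakdown = respond("Failure is the mother of success.")
reply_remember = respond("I remember my first bike.")
```

The breakdown case shows the limit of the approach: the rule fires on the keyword “mother” even though the sentence has nothing to do with family.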

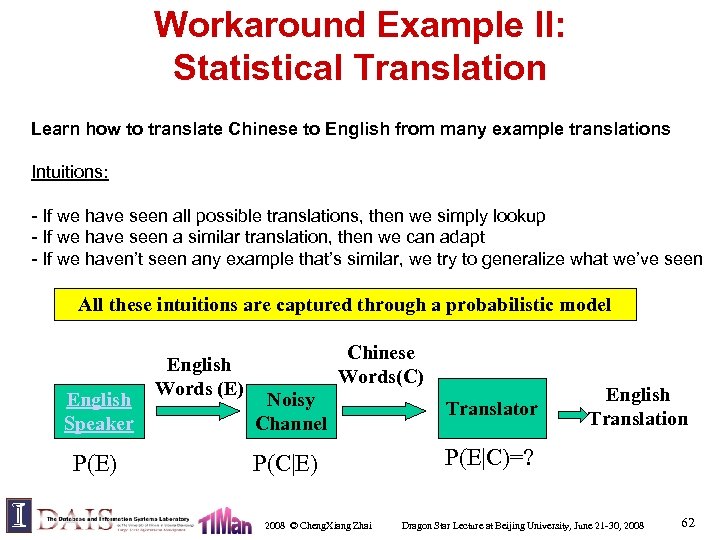

Workaround Example II: Statistical Translation
Learn how to translate Chinese to English from many example translations
Intuitions:
- If we have seen all possible translations, then we simply look up
- If we have seen a similar translation, then we can adapt
- If we haven’t seen any example that’s similar, we try to generalize what we’ve seen
All these intuitions are captured through a probabilistic model
[Diagram: English Speaker, P(E) → English Words (E) → Noisy Channel, P(C|E) → Chinese Words (C) → Translator, P(E|C) = ? → English Translation]
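In the noisy-channel view, the translator can pick the English sentence E that maximizes P(E|C) ∝ P(C|E)P(E). The sketch below applies that rule to a handful of candidates; the candidate list and both probability tables are invented, and a real system would estimate them from example translations.

```python
def translate(chinese, candidates, language_model, channel_model):
    """Pick the English candidate E maximizing P(E) * P(C|E).

    language_model[e] is P(E); channel_model[(c, e)] is P(C|E).
    Missing entries are treated as probability 0.
    """
    return max(
        candidates,
        key=lambda e: language_model.get(e, 0.0) * channel_model.get((chinese, e), 0.0),
    )


chinese = "信息检索"
candidates = ["information retrieval", "retrieval information", "message search"]

# Hypothetical probabilities for illustration only.
language_model = {
    "information retrieval": 0.02,
    "retrieval information": 0.0001,
    "message search": 0.001,
}
channel_model = {
    (chinese, "information retrieval"): 0.5,
    (chinese, "retrieval information"): 0.5,
    (chinese, "message search"): 0.3,
}
best = translate(chinese, candidates, language_model, channel_model)
```

The channel model alone cannot separate the first two candidates; the language model P(E) breaks the tie in favour of fluent English word order.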

So, what NLP techniques are most useful for text management?
Statistical NLP in general, and statistical language models in particular
The need for high robustness and efficiency implies the dominant use of simple models (i.e., unigram models)

What You Should Know
• NLP is the basis for text management
  – Better NLP enables better text management
  – Better NLP is necessary for sophisticated tasks
• But
  – Bad NLP doesn’t mean bad text management
  – There are often “workarounds” for a task
  – Inaccurate NLP can even hurt the performance of a task
• The most effective NLP techniques are often statistical with the help of linguistic knowledge
• The challenge is to bridge the gap between NLP and applications

Roadmap
• Today’s lecture
  – Course overview
  – Essential background (prob & stat, info theory, NLP)
• Next two lectures: overview of IR
  – Basic concepts
  – Evaluation
  – Brief history
  – Basic models
  – …
